Abstract

In the era of digital education, the rapid growth and disordered distribution of learning resources present new challenges for online learning, and most exercise recommendation systems lack targeted guidance and personalization. To address these problems in the recommendation of English reading, writing and translation exercises in higher education, this paper proposes an algorithm that combines an improved fuzzy cognitive diagnosis model with a multi-head attention mechanism to predict students’ performance on future recommended exercises. First, three factors (student response time, response duration, and knowledge gap parameters) are considered, and the cognitive attributes of English knowledge are determined from both subjective and objective items, so as to construct an improved fuzzy cognitive diagnosis model. Second, the multi-head attention mechanism is used to allocate appropriate attention weights to students’ current knowledge state based on their past knowledge states. Finally, the performance of the algorithm is verified on experimental data.

Introduction

In the era of digital education, intelligent learning models under the framework of “Internet Plus Education” have garnered widespread attention, with adaptive learning becoming the new norm for online education. However, the rapid growth and disorderly distribution of learning resources pose challenges to online learning. Addressing issues such as learning disorientation, insufficient resource recommendations, and unclear learning outcomes is crucial for enhancing the efficiency and quality of online education. Many existing assessment systems require students to select practice test questions, leading to the “data disorientation” problem. Students often choose exercises based on subjective perception without targeted guidance, which significantly affects training outcomes1,2,3,4. Therefore, scientifically diagnosing students’ cognitive states and guiding online education platforms to provide personalized question recommendations has become a research focus in the field of intelligent education.

In the field of educational resource recommendation for English reading, writing and translation evaluation in higher education, several urgent problems remain. First, personalization is insufficient: existing recommendation systems often fail to tailor recommendations to students’ English proficiency, learning style and interests, so resources are poorly matched to learners. Second, the feedback mechanisms of recommendation systems are imperfect, which hinders improvement of recommendation quality. Third, although the cultivation of English ability requires the integration of interdisciplinary knowledge, existing recommendation systems are limited to a single discipline and do not integrate knowledge from multiple perspectives. Finally, existing recommendation systems focus only on the learning status at a single moment, ignoring the continuity and dynamics of the learning process. Solving these problems requires work on several fronts: designing personalized recommendation algorithms, optimizing feedback mechanisms, integrating the laws of students’ cognitive development5,6, and strengthening cross-disciplinary resource integration, so as to improve the quality and effectiveness of exercise recommendation systems.

To address these issues in the recommendation of English reading, writing, and translation exercises in higher education, this paper first introduces the highly interpretable prior parameters of an improved fuzzy cognitive diagnostic model and integrates them with a BiGRU-based model of the student’s historical states. The model combines factors such as students’ response time and response duration to determine the cognitive attributes of English knowledge from both subjective and objective questions. Second, using attention mechanisms, it investigates the impact of students’ historical exercise responses on the prediction of future exercises, assigning attention weights to the historical knowledge states that are related to the student’s current knowledge state. Through these two approaches, the model balances predictive accuracy and interpretability in deep knowledge tracing and describes the changes in learners’ knowledge profiles across continuous time series.

Problem definition

This study aims to develop a personalized recommendation algorithm capable of accurately predicting students’ future exercise performance. Specifically, our objective is to construct a system that dynamically tracks students’ knowledge states and provides personalized exercise recommendations by integrating an improved fuzzy cognitive diagnosis model (MFCD) and a multi-head attention mechanism (MAKT). The system needs to address the following key issues: (1) Accuracy of personalized recommendations: how to improve the prediction accuracy for unattempted exercises by modeling students’ learning processes and knowledge states. (2) Dynamic knowledge tracing: how to capture the dynamic changes in students’ knowledge states and incorporate them into the recommendation system. (3) Model interpretability: how to introduce interpretable parameters (e.g., knowledge gaps, response time) so that the model not only predicts student performance but also provides insights into the learning process.

Related works

Knowledge tracing models

Currently, knowledge tracing methods have become the mainstream approach for predicting student performance7,8,9,10,11,12. Based on their ability to continuously track changes in students’ knowledge levels at specific learning stages, knowledge tracing can be divided into static knowledge tracing models and dynamic knowledge tracing models.

Static knowledge tracing models refer to cognitive diagnostic models. Among the models widely used in education, traditional cognitive diagnostic models can be divided into continuous and discrete cognitive diagnostic models, according to whether they represent students’ knowledge levels as continuous or discrete quantities. Continuous cognitive diagnostic models are represented by Item Response Theory (IRT), which posits that learners’ latent traits manifest as continuous cognitive ability parameters. Compared to cognitive diagnostic models, which can only diagnose in a single assessment scenario, dynamic knowledge tracing models excel at handling time series of response records and can perform continuous dynamic diagnosis13,14,15. As an early representative achievement in the field of knowledge tracing, Bayesian Knowledge Tracing (BKT) has attracted considerable attention from researchers due to its strong statistical interpretability. Many scholars have proposed improvements to enhance the model’s performance: Hawkins16 borrowed the concept of collaborative filtering from recommendation systems, introducing similarity parameters between predicted items and historical items, thereby improving the performance of the BKT model. Agarwal17 proposed the MS-BKT model, which uses dynamically updated weight parameters instead of static learning rates, allowing the model to focus on the impact of frequently occurring knowledge points on tracing results. In real educational scenarios, a single item may assess multiple knowledge concepts. To address the issue that BKT cannot capture the correlation between knowledge points, Kaser et al.18 designed a dynamic Bayesian knowledge tracing model that first models the predecessor and successor relationships of knowledge point nodes, embedding knowledge point information into Bayesian networks to enhance tracking performance.
Compared to Bayesian networks, Recurrent Neural Networks (RNN) have significant advantages in representing high-dimensional, continuous features and are better suited to modeling complex learning processes. Piech et al.19 were the first to use an RNN to predict students’ response sequences on practice questions, introducing the concept of deep knowledge tracing (DKT). However, the memory capacity of an RNN is limited and cannot meet the needs of long sequence data. To address this issue, Zhang et al.20 proposed a knowledge tracing method based on dynamic key-value memory networks. Some researchers optimized variable-length LSTM networks to learn long-term dependencies between sequences21. In addition, Liu et al.22 introduced cosine attention mechanisms and knowledge point feature modules, using one-hot encodings or text features of knowledge points as knowledge point features, leading to a knowledge tracing model that is aware of the practice questions. Furthermore, some scholars23 were the first to apply the Transformer model to the field of knowledge tracing, effectively alleviating data-related cold-start problems. Choi et al.24 added multi-head attention mechanisms in the self-attention layers corresponding to practice exercises and response results, which helps capture the complex interactions between learners and practice questions. Existing research indicates that traditional recommendation systems exhibit significant shortcomings in interdisciplinary integration3 and dynamic feedback mechanisms15. For instance, while the DKT model19 is capable of tracking knowledge states, it fails to capture long-sequence dependencies20. Similarly, although fuzzy cognitive diagnosis models (FuzzyCDF) support the diagnosis of subjective questions, they overlook temporal factors22.

Fuzzy cognitive diagnostic model

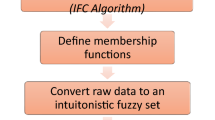

The FuzzyCDF model formalizes the student response process as a four-layer cognitive model, starting from the latent traits of students and employing fuzzy set theory to express their knowledge levels. It models the mastery of objective and subjective items using the conjunctive and compensatory assumptions from educational psychology, respectively. The four hierarchical layers are: the latent ability of each student, the student’s proficiency in the knowledge points assessed by the test items, the student’s mastery degree of the test items, and the student’s scoring rate on the test items. The FuzzyCDF model uses collected real test results and employs the Markov Chain Monte Carlo (MCMC) algorithm to estimate the unknown parameters within this four-layer structure. In the exercise system, each knowledge point k corresponds to a fuzzy set \((M,\mu _k)\), where M is the student set and \(\mu _k\) is the membership function of students in the fuzzy set of knowledge point k. The FuzzyCDF model assumes that the proficiency \(\alpha _{mk}\) of student m in knowledge point k is the student’s membership degree in the corresponding fuzzy set, and the calculation formula borrows from the two-parameter logistic model used in IRT, as shown below:

\[\alpha _{mk}=\mu _k(m)=\frac{1}{1+\exp \left[ -1.7\,a_{mk}\left( \theta _m-d_{mk}\right) \right] }\]

The proficiency \(\alpha _{mk}\) of student m in knowledge point k depends on the student’s latent ability \(\theta _m\) and on the discrimination \(a_{mk}\) and difficulty \(d_{mk}\) of the knowledge point. The coefficient 1.7 is an empirical constant chosen to minimize the maximum difference between the normal distribution function and the logistic distribution function. Proficiency is therefore determined by two aspects: the student’s latent ability and the attributes of the knowledge point. The value calculated by this formula lies in the range [0, 1], with higher values indicating higher proficiency in the knowledge point. A student’s mastery of an item is also influenced by the interaction between the knowledge points required to answer it, and this interaction differs between question types. Objective exercises only have right or wrong answers, and students can usually answer correctly only when they are proficient in all the knowledge points tested in that question. Therefore, the interaction between knowledge points in objective exercises is generally considered to be conjunctive. In contrast, subjective exercises do not have a unique answer: the answers are open-ended and students’ scores depend on their specific responses, so even students who have not mastered all the required skills still receive partial credit for the skills they have mastered. Therefore, the interaction between knowledge points in subjective exercises is generally considered to be compensatory. Although dynamic knowledge tracing models (e.g., DKT, C&RM-MAKT) excel in long-sequence prediction, their hidden states lack interpretability7 and fail to account for the influence of response time and knowledge gaps8.
Additionally, traditional fuzzy cognitive models (e.g., FuzzyCDF), while capable of integrating subjective question scoring, struggle to jointly model knowledge associations between subjective and objective questions13. In contrast, the proposed MFCD-MAKT in this paper achieves a balance between dynamic diagnosis and cross-question association by introducing temporal weights, knowledge gap parameters, and multi-head attention mechanisms.
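
The proficiency layer and the two interaction types can be illustrated with a minimal Python sketch. It assumes the two-parameter logistic form with the 1.7 scaling constant described above, and it follows the usual FuzzyCDF convention of taking the fuzzy intersection (minimum) for conjunctive objective items and the fuzzy union (maximum) for compensatory subjective items. The function names and the example values are hypothetical and chosen only for illustration.

```python
import math

def proficiency(theta, a, d):
    """Membership degree of a student in one knowledge point's fuzzy set.

    theta: latent ability of the student
    a:     discrimination of the knowledge point for this student
    d:     difficulty of the knowledge point for this student
    """
    return 1.0 / (1.0 + math.exp(-1.7 * a * (theta - d)))

def mastery_objective(profs):
    """Conjunctive interaction: fuzzy intersection, taken here as the minimum."""
    return min(profs)

def mastery_subjective(profs):
    """Compensatory interaction: fuzzy union, taken here as the maximum."""
    return max(profs)

# Hypothetical student and an item that examines two knowledge points.
theta = 0.5
points = [(1.2, 0.0), (0.8, 1.0)]  # (discrimination, difficulty) pairs
profs = [proficiency(theta, a, d) for a, d in points]

print("proficiencies:", [round(p, 3) for p in profs])
print("objective mastery:", round(mastery_objective(profs), 3))
print("subjective mastery:", round(mastery_subjective(profs), 3))
```

Under the conjunctive rule the weakest knowledge point limits the mastery of an objective item, whereas under the compensatory rule the strongest one carries a subjective item, which mirrors the partial-credit argument in the text.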

Modified fuzzy cognitive diagnostic model

The personalized exercise recommendation method based on improved fuzzy cognitive diagnosis proposed in this paper consists of three parts. The first part is student cognitive modeling based on the improved fuzzy cognitive diagnosis model (MFCD), which analyzes and diagnoses students’ cognitive status to obtain their true mastery level of the exercises, and then extracts students’ individual learning characteristics from this mastery level as the prior for the probabilistic matrix factorization step. The second part is the exercise performance prediction algorithm that integrates the improved fuzzy cognitive diagnosis parameters with multi-head attention mechanisms, and it has two sub-steps: first, the similarity between students and the similarity between exercises are calculated; second, probabilistic matrix factorization is performed by jointly considering students’ answer information, the knowledge points of the exercises, students’ cognitive diagnosis information, student similarity, and exercise similarity to predict students’ exercise scores. The third part recommends exercises to students based on the predicted scores from the second part and the exercise difficulty.

To enhance the interpretability of the model, we integrate the Fuzzy Cognitive Diagnosis Model (FuzzyCDF) with multi-head attention mechanisms. The FuzzyCDF explicitly quantifies students’ knowledge proficiency through membership functions, enabling intuitive visualization of their mastery levels across distinct knowledge concepts. Concurrently, the multi-head attention mechanism captures intricate dependencies between students’ historical learning trajectories and their current knowledge states, thereby providing granular explanations for recommendation decisions. Specifically, the model improves interpretability through the following mechanisms. (1) Temporal weights and knowledge gap parameters: by incorporating temporal decay factors and knowledge gap parameters, the model dynamically adjusts the influence of historical learning interactions on current knowledge states, thereby aligning recommendations with real-world learning progression. (2) Multi-head attention: this mechanism not only identifies correlations between students’ mastery of diverse knowledge concepts but also reveals latent inter-concept relationships, offering educators actionable insights into students’ cognitive structures. (3) Explicit cognitive diagnostic parameters: the integration of interpretable parameters, such as knowledge mastery levels, exercise similarity metrics, and concept association weights, renders model outputs transparent and pedagogically actionable. For instance, knowledge gap parameters explicitly highlight deficiencies in prerequisite skills, while attention weights quantify the relevance of historical interactions to current predictions.

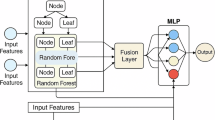

This hybrid framework bridges the gap between data-driven adaptability and educational interpretability, ensuring that recommendations are both statistically robust and pedagogically meaningful. This paper proposes a modified fuzzy cognitive diagnostic model, shown in Fig. 1, which enables the joint modeling of subjective and objective items. It posits that a student’s performance on objective items is governed by the conjunctive relationship among the knowledge points, while performance on subjective items is governed by the compensatory relationship among those knowledge points. This model demonstrates diagnostic effectiveness that surpasses that of traditional cognitive diagnostic models. Furthermore, students’ answering records contain additional factors that are not used by the fuzzy cognitive diagnostic model but still significantly affect the diagnosis of students’ mastery of knowledge points. This paper introduces the following three factors on top of the fuzzy cognitive diagnostic model.

Fig. 1 Architecture of the modified fuzzy cognitive diagnosis model.

The effect of students’ response time on cognitive diagnosis

Historical response data that lies further from the current moment contributes less to the model and may even have a negative impact. Considering the time at which students responded therefore helps assess the reference value of each response record within the cognitive diagnostic model. Integrating this factor brings the model closer to reality and thereby improves its accuracy. The weight calculation formula for different response times of students is designed as follows:

Let \(\Delta {t_{mn}}\) denote the interval between the current diagnostic time and the time at which student m answered item n. \({T_m}\) is the length of time student m has been using the system, i.e., the interval between the current diagnostic time and the student’s registration time. Since response time is recorded at the item level, this weight is incorporated into the second layer of the fuzzy cognitive diagnostic model, specifically into the formula for the student’s mastery degree of the test items. Using the conjunctive and compensatory relationships among knowledge points for objective and subjective items, respectively, and integrating the student response time, the formula for calculating the mastery degree \({\eta _{nm}}\) of student m on objective item n is as follows:

This formula applies the conjunctive and compensatory relationships between knowledge points that the fuzzy cognitive diagnostic model assumes for objective and subjective questions, respectively. Similarly, the formula for calculating the mastery degree \({\eta _{nm}}\) of student m on subjective exercise n is as follows:
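
As a rough sketch of the time-weighting idea described in this subsection (not the paper’s exact formula), the following Python function maps the interval \(\Delta t_{mn}\) and the usage time \(T_m\) to a weight in [0, 1] that shrinks as a response record gets older. Both the linear form \(1-\Delta t_{mn}/T_m\) and the exponential alternative are assumptions made purely for illustration, as is the function name `time_weight`.

```python
import math

def time_weight(delta_t, total_time, kind="linear"):
    """Illustrative time-decay weight for one response record.

    delta_t:    time since the student answered the item (same unit as total_time)
    total_time: time since the student registered in the system
    kind:       "linear" -> 1 - delta_t / total_time   (assumed form)
                "exp"    -> exp(-delta_t / total_time) (assumed alternative)
    """
    if total_time <= 0:
        raise ValueError("total_time must be positive")
    # Clamp the ratio so that clock skew cannot push the weight outside [0, 1].
    ratio = min(max(delta_t / total_time, 0.0), 1.0)
    if kind == "linear":
        return 1.0 - ratio
    if kind == "exp":
        return math.exp(-ratio)
    raise ValueError("kind must be 'linear' or 'exp'")

# A record from 10 days ago counts more than one from 50 days ago
# for a student who registered 100 days ago.
print(time_weight(10.0, 100.0), time_weight(50.0, 100.0))
```

Either variant satisfies the qualitative requirement in the text that older records contribute less; which decay shape fits real data best would have to be checked empirically.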

The effect of students’ response duration on cognitive diagnosis

The time a student spends answering an item can reflect their proficiency with that item and hence their mastery of the knowledge points it assesses. The response-duration weight is designed as follows:

The left-hand side of the equation is the response-duration weight of student m on test question n. The maximum time taken to answer question n is denoted by \(\max {C_n}\), and the time taken by student m to answer question n is denoted by \({C_{mn}}\). Let \({R_{mn}}\) represent the scoring rate of the response, calculated as the student’s score divided by the full score of the test item. If a student answers incorrectly, indicating a lack of mastery of the assessed knowledge point, the response duration is considered irrelevant regardless of its length, and the weight is 0. Only when a student scores on the item can the response duration reflect their proficiency in the knowledge points evaluated by the item. When characterizing student abilities, students with a shorter response duration are assumed to have a higher mastery level of the knowledge points assessed by the test item than students with a longer response duration.
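
The behaviour described above can be sketched in a few lines of Python. The specific form used here, scoring rate multiplied by the relative time saved with respect to the slowest response, is an assumption for illustration and is not taken from the paper; it only reproduces the two stated properties (zero weight for unscored answers, larger weight for faster scored answers). The name `duration_weight` is hypothetical.

```python
def duration_weight(duration, max_duration, score_rate):
    """Illustrative response-duration weight for one (student, item) pair.

    duration:     time the student took on the item (C_mn)
    max_duration: longest time any student took on the item (max C_n)
    score_rate:   obtained score divided by the full score (R_mn), in [0, 1]
    """
    if max_duration <= 0:
        raise ValueError("max_duration must be positive")
    if score_rate <= 0:
        # An unscored answer says nothing about speed-based proficiency.
        return 0.0
    # Relative time saved compared with the slowest response (assumed form).
    speed = (max_duration - min(duration, max_duration)) / max_duration
    return score_rate * speed

print(duration_weight(30.0, 120.0, 1.0))   # fast and fully correct
print(duration_weight(90.0, 120.0, 1.0))   # slow and fully correct
print(duration_weight(30.0, 120.0, 0.0))   # fast but unscored
```

Note that under this assumed form the slowest scored response also receives a weight of 0; a small offset could be added if that is undesirable.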

Introducing knowledge gap factors into cognitive diagnostic module

Knowledge gap is a crucial factor that affects the assessment of students’ knowledge level. Through deep analysis of students’ performance in different fields, cognitive diagnosis can accurately identify students’ understanding deficiencies of specific knowledge points. These deficiencies are not limited to knowledge that students have not mastered, but also include their misunderstandings and errors in knowledge application. Once these knowledge gaps are identified, the cognitive diagnostic model can develop personalized learning paths for each student’s weaknesses.

In this paper, the model defines the learner’s mastery level of an item as the knowledge level corresponding to the weakest knowledge point assessed by that item. The more important knowledge point k is for answering exercise j, and the lower learner i’s mastery of knowledge point k, the more strongly knowledge point k constitutes a knowledge gap. The formula for the knowledge gap parameter is as follows:

The parameter \({q_{kj}}\) describes the association between exercises and knowledge points and indicates whether exercise j examines knowledge point k; \({j_k}\) indicates the importance of knowledge point k in answering exercise j. The parameter \({l_{ijk}}\) is the knowledge gap parameter, and the mastery parameter of the exercise depends on the maximum value of \({l_{ijk}}\) over the examined knowledge points.
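
A minimal Python sketch of this idea is given below. It assumes, for illustration only, that the gap of knowledge point k on exercise j is the product of the association indicator \(q_{kj}\), the importance of k for j, and the learner’s lack of proficiency (one minus the proficiency); the paper’s exact expression may differ. The function names and the example numbers are hypothetical.

```python
def knowledge_gaps(q_row, importance, proficiency):
    """Gap value l_ijk for every knowledge point k of one exercise j.

    q_row:       1 if the exercise examines knowledge point k, else 0
    importance:  importance of knowledge point k for this exercise
    proficiency: learner's proficiency in knowledge point k, in [0, 1]
    """
    return [q * w * (1.0 - a) for q, w, a in zip(q_row, importance, proficiency)]

def weakest_point(gaps):
    """Index and value of the largest gap, i.e. the limiting knowledge point."""
    k = max(range(len(gaps)), key=gaps.__getitem__)
    return k, gaps[k]

# Hypothetical exercise that examines knowledge points 0 and 1 but not 2.
q_row = [1, 1, 0]
importance = [0.6, 0.4, 0.9]
proficiency = [0.9, 0.3, 0.1]

gaps = knowledge_gaps(q_row, importance, proficiency)
k, gap = weakest_point(gaps)
print("gaps:", [round(g, 3) for g in gaps])
print("weakest knowledge point:", k, "gap:", round(gap, 3))
```

In this example knowledge point 2 has the lowest proficiency but is not examined by the exercise, so it does not count as a gap; knowledge point 1 is the limiting one.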

Bi-GRU knowledge tracing model incorporating multi-head attention mechanisms

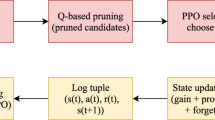

Deep models, especially on large-scale datasets, outperform traditional cognitive diagnostic models; they require less training time and exhibit stronger robustness. However, deep models lack the interpretability advantages of traditional models. Generally, deep models treat the neurons in the hidden layers of deep networks as the knowledge states of students, which makes the students’ knowledge states too abstract and inconsistent with objective educational principles. In addition, existing deep models suffer from a cold-start problem: they cannot reveal the correlation and similarity between exercises that have never been attempted by any student and exercises that have been answered in the past. To address these issues, this section proposes an improved fuzzy cognitive diagnosis and multi-head attention knowledge tracing model (MFCD-MAKT), as shown in Fig. 2. This model incorporates the interpretable parameters of MFCD and extends the student’s knowledge state vector to a knowledge state matrix to accommodate these parameters. It uses a GRU network to track each knowledge state vector, thereby enhancing the reliability of the tracking results.

Dynamic knowledge tracing is a key task in the context of smart teaching and learning. In essence, the dynamic knowledge tracing problem is a time series prediction problem. Suppose that in an online education scenario the number of students is I, the number of exercises stored in the online course’s exercise bank is T, and the number of knowledge points examined in the course is K. The set of students can be represented as \(U=\{u_1,u_2,\cdots ,u_i,\cdots ,u_I\}\); the set of exercises can be represented as \(E=\{e_1,e_2,\cdots ,e_t,\cdots ,e_T\}\); and the set of knowledge points can be represented as \(Z=\{z_1,z_2,\cdots ,z_k,\cdots ,z_K\}\). The record of exercises that a student has answered in the education system can be expressed as \(R=\{(e_1,r_1),(e_2,r_2),\cdots ,(e_T,r_T)\}\), in which \(r_t=1\) means that the student answered the \(t_{th}\) exercise correctly and \(r_t=0\) means that the student answered the \(t_{th}\) exercise incorrectly. The dynamic knowledge tracing model uses extrinsic, explicit learning process data to predict student performance on exercises that have not yet been answered, and to diagnose changes in students’ knowledge mastery over time as they work through the exercises.

Fig. 2 Structure of MFCD-MAKT.

Parameter training based on the MFCD model

One of the basic tasks of knowledge tracing is to capture the learner’s knowledge state as it changes in the course of answering exercises. A student’s initial knowledge state is extremely important for subsequent state changes. Therefore, instead of randomly initializing the student’s initial state in the deep model, the algorithm proposed in this section uses the MFCD to diagnose the student’s knowledge level parameters after the first n exercises have been completed. To improve the interpretability of the student’s knowledge state, MFCD-MAKT introduces the exercise-knowledge level parameter, which is explicitly interpretable in the cognitive diagnostic model, into the knowledge tracing process. To accommodate this parameter, MFCD-MAKT expands the student’s knowledge state vector into a knowledge state matrix whose column vectors represent the mastery vectors of the corresponding knowledge points. The parameters \(\theta ,\Psi ,\Omega \) and the parameters \(\alpha ,\mu \) are the unknown parameters to be solved in the model. From the relationship between the parameters in the C&RM in Fig. 2, it can be seen that only the parameters \(\theta ,\Psi ,\Omega \) need to be estimated, after which the unknown parameters \(\alpha ,\mu \) can be computed successively. Here \(\theta \) is the ability vector of all learners, i.e., \(\theta =(\theta _1,\theta _2,\cdots ,\theta _I)\); \(\Psi \) is the knowledge characteristic matrix of the K knowledge points, i.e., \(\Psi =(\psi _1,\psi _2,\cdots ,\psi _K)\), and the feature vector of a single knowledge point k is \(\psi _k= \begin{bmatrix} \omega _{\theta k},\omega _{\eta k},d_{\theta k1},d_{\eta k1},d_{\theta k2},d_{\eta k2} \end{bmatrix}\). The feature vector of a single exercise t in \(\Omega \) is \(\omega _t=[s_t,g_t,\mu _t]\).
Here \(s_t\) is the carelessness (slip) parameter of exercise t, indicating the probability that students answer the exercise incorrectly even though they have mastered it; \(g_t\) is the guessing parameter of exercise t, indicating the probability that students answer the exercise correctly even though they have not mastered it; and \({\mu _t}\) is the parameter describing the degree to which exercise t examines each knowledge point:

In this paper, the Markov Chain Monte Carlo (MCMC) parameter estimation algorithm is used to estimate the parameters \(\theta ,\Psi ,\Omega \). The MCMC algorithm, originally applied to physics problems, is a dynamic computer simulation technique whose basic idea is to construct, by sampling, a Markov chain that simulates the joint posterior distribution of the parameters to be estimated. The MCMC algorithm reduces the complexity of parameter estimation, can handle multi-dimensional models with several classes of parameters, and offers high estimation accuracy and good stability. According to the Bayesian formula, the conditional posterior distributions of the parameters \(\theta ,\Psi ,\Omega \) are calculated as follows:

Step 1: Randomly initialize the parameters \({\theta ^1},{\Psi ^1},{\Omega ^1}\).

Step 2: Repeat the following process for N iterations.

(a) Sample a candidate \({\Psi ^*}\) based on the full conditional distribution of the parameter \({\Psi }\), and assign \({\Psi ^*}\) to \(\Psi ^{n+1}\) with probability \(P({\Psi ^n},{\Psi ^*})\); otherwise assign \(\Psi ^n\) to \(\Psi ^{n+1}\).

(b) Sample a candidate \({\theta ^*}\) based on the full conditional distribution of the parameter \({\theta }\), and assign \({\theta ^*}\) to \(\theta ^{n+1}\) with probability \(P({\theta ^n},{\theta ^*})\); otherwise assign \(\theta ^n\) to \({\theta ^{n + 1}}\).

(c) Sample a candidate \({\Omega ^*}\) based on the full conditional distribution of the parameter \({\Omega }\), and assign \({\Omega ^*}\) to \(\Omega ^{n + 1}\) with probability \(P({\Omega ^n},{\Omega ^*})\); otherwise assign \({\Omega ^n}\) to \(\Omega ^{n + 1}\).
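
The two steps above follow the general pattern of a Metropolis-Hastings sampler that updates one parameter block at a time. The sketch below shows that pattern in Python on a toy problem. It assumes a symmetric Gaussian random-walk proposal, so that the acceptance probability reduces to the standard ratio of posterior densities, and it replaces the model’s real posterior with independent unit-variance Gaussians; each parameter block is reduced to a single scalar. All names and target values are hypothetical.

```python
import math
import random

# Toy stand-in for the joint log-posterior (up to an additive constant).
TARGET_MEANS = {"psi": 1.0, "theta": -1.0, "omega": 0.5}

def log_posterior(params):
    return -0.5 * sum((params[name] - mu) ** 2 for name, mu in TARGET_MEANS.items())

def metropolis_within_gibbs(n_iter, step=1.0, seed=0):
    rng = random.Random(seed)
    # Step 1: initial values (fixed at zero here for reproducibility).
    current = {"psi": 0.0, "theta": 0.0, "omega": 0.0}
    chain = []
    # Step 2: N sweeps, each updating the blocks in the order (a), (b), (c).
    for _ in range(n_iter):
        for name in ("psi", "theta", "omega"):
            proposal = dict(current)
            proposal[name] = current[name] + rng.gauss(0.0, step)
            log_ratio = log_posterior(proposal) - log_posterior(current)
            # Accept the candidate with probability min(1, exp(log_ratio));
            # otherwise the current value is carried over to the next state.
            if log_ratio >= 0.0 or rng.random() < math.exp(log_ratio):
                current = proposal
        chain.append(dict(current))
    return chain

chain = metropolis_within_gibbs(6000)
kept = chain[1000:]  # discard burn-in samples
for name in ("psi", "theta", "omega"):
    estimate = sum(state[name] for state in kept) / len(kept)
    print(name, "posterior mean estimate:", round(estimate, 2))
```

After burn-in, the sample averages approximate the posterior means, which is how point estimates of \(\theta ,\Psi ,\Omega \) would be read off the chain in practice.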

The exercise similarity calculation combining multi-head attention mechanisms

Most existing similarity calculations are based on distance metrics. Inspired by the multi-head attention mechanism in the Transformer model, this paper embeds a multi-head attention mechanism to calculate the similarity of exercises. The exercise similarity computed by MFCD-MAKT is obtained by combining multiple self-attention heads that learn different subspace representations of the queries, keys, and values corresponding to the exercise vectors, in order to capture both short-range and long-range dependencies within the sequence. In this paper, we use the following equations to compute the query vector \(q_t^{(n)}\), the key vector \(k_t^{(n)}\), and the value vector \(v_t^{(n)}\) corresponding to the vector \(x_t\) at the \(n_{th}\) attention head: \(q_t^{(n)}=x_tW_n^{(q)},k_t^{(n)}=x_tW_n^{(k)},v_t^{(n)}=x_tW_n^{(v)}\), where \(W_n^{(q)},W_n^{(k)},W_n^{(v)}\) are the projection matrices of the query vectors, the key vectors, and the value vectors, respectively, in the \(n_{th}\) attention head, which project their respective vectors to the corresponding subspace. The self-attention is computed using the following equation for the scaled dot-product operation.

The deep knowledge tracing model MFCD-MAKT proposed in this section uses a three-head attention mechanism to learn the similarity information of the exercises in three subspaces, \(sim_t^{(1)},sim_t^{(2)},sim_t^{(3)}\). The attention weights learned in the different subspaces are concatenated to obtain the similarity \(sim_t\) between exercise t and the exercise to be predicted under the multi-head attention mechanism:

where \(W^{(0)}\) is the weight parameter of the neural network layer applied to the concatenated weights. Since the exercise similarity parameter incorporating the multi-head attention mechanism is optimized specifically for the exercise prediction task, it has a stronger representational capability, because it mines the similarity information of exercises in multiple subspaces from the semantic features of the exercises.
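
The similarity computation can be sketched in plain Python as follows. Each head projects the exercise to be predicted into a query and every historical exercise into a key, scores them with the scaled dot product, and normalizes the scores with a softmax; the three per-head weights of each historical exercise are then combined by an output weight vector that plays the role of \(W^{(0)}\). This is an illustrative sketch under several assumptions: the projection matrices are random rather than trained, the value projection is omitted because only the attention weights are used as similarities, and the output weights are fixed to a plain average.

```python
import math
import random

def project(x, W):
    """Row vector x (length d) times matrix W (d x d_k)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def softmax(scores):
    top = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def head_similarity(history, target, W_q, W_k):
    """Attention weights of one head: softmax(q . k_t / sqrt(d_k)) over t."""
    q = project(target, W_q)
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, project(x_t, W_k))) / scale
              for x_t in history]
    return softmax(scores)

def multi_head_similarity(history, target, heads, w_out):
    """Combine the per-head weights of each historical exercise with w_out."""
    per_head = [head_similarity(history, target, W_q, W_k) for W_q, W_k in heads]
    return [sum(w * sims[t] for w, sims in zip(w_out, per_head))
            for t in range(len(history))]

rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

d, d_k, T = 4, 2, 5  # embedding size, head size, number of past exercises
history = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(T)]
target = [rng.gauss(0.0, 1.0) for _ in range(d)]
heads = [(rand_matrix(d, d_k), rand_matrix(d, d_k)) for _ in range(3)]
w_out = [1.0 / 3.0] * 3  # stand-in for the learned layer W^(0)

sim = multi_head_similarity(history, target, heads, w_out)
print([round(s, 3) for s in sim])
```

Because the similarity is computed from exercise vectors rather than from response histories, the same code applies to an exercise that no student has attempted yet, which is the cold-start property the model relies on.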

The student state modelling incorporating exercise similarity and cognitive diagnostic parameters

The student state modelling network incorporates the parameters \(\mu =(\mu _1,\mu _2,\cdots ,\mu _t,\cdots ,\mu _T)\) and the similarity vector \(sim=(sim_1,sim_2,\cdots ,sim_t,\cdots ,sim_T)\), which improves the interpretability of the students’ knowledge state while also improving prediction performance. \(\mu _t=\left( \mu _t^{(1)},\mu _t^{(2)},\cdots ,\mu _t^{(k)},\cdots ,\mu _t^{(K)}\right) ^T\) collects the weights of exercise t on each knowledge point k, and is introduced as an a priori parameter in the modelling of students’ state. The parameter \(\mu _t^{(k)}\) is multiplied with the exercise vector spliced with the answer result information, as shown in the following equation.

The exercise vector \(\tilde{x}_t^{(k)}\), which incorporates information about the parameter of the degree of knowledge points examined, is used as an input to the GRU model, and shown as:
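A minimal sketch of this input construction follows, assuming a single unidirectional GRU cell whose parameters are shared across knowledge points; the function names, the dimensions, and the gate convention \(h_t=(1-z)\odot h_{t-1}+z\odot \tilde{h}_t\) are illustrative assumptions rather than the configuration used in MFCD-MAKT. The exercise vector is spliced with the answer result, scaled by \(\mu _t^{(k)}\), and fed to the recurrent update of knowledge point k.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def affine(w, x, u, h, b):
    """Compute w @ x + u @ h + b for list-based matrices and vectors."""
    return [
        sum(wi * xi for wi, xi in zip(w_row, x))
        + sum(ui * hi for ui, hi in zip(u_row, h))
        + bi
        for w_row, u_row, bi in zip(w, u, b)
    ]


def gru_step(x, h, params):
    """One GRU update; params maps 'z', 'r', 'c' to (W, U, b) triples."""
    wz, uz, bz = params["z"]
    wr, ur, br = params["r"]
    wc, uc, bc = params["c"]
    z = [sigmoid(a) for a in affine(wz, x, uz, h, bz)]            # update gate
    r = [sigmoid(a) for a in affine(wr, x, ur, h, br)]            # reset gate
    gated_h = [ri * hi for ri, hi in zip(r, h)]
    cand = [math.tanh(a) for a in affine(wc, x, uc, gated_h, bc)]  # candidate state
    return [(1 - zi) * hi + zi * ci for zi, hi, ci in zip(z, h, cand)]


def weighted_input(x_t, r_t, mu_tk):
    """Exercise vector spliced with the answer result, scaled by mu_t^(k)."""
    return [mu_tk * v for v in x_t + [float(r_t)]]


def track_states(xs, rs, mus, params, hidden):
    """Return one hidden state per knowledge point (a K x hidden matrix)."""
    n_kp = len(mus[0])
    states = [[0.0] * hidden for _ in range(n_kp)]
    for x_t, r_t, mu_t in zip(xs, rs, mus):
        for k in range(n_kp):
            states[k] = gru_step(weighted_input(x_t, r_t, mu_t[k]), states[k], params)
    return states


rng = random.Random(1)
d, hidden = 3, 2


def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


# Random weights and zero biases, used only to make the example runnable.
params = {
    g: (rand_matrix(hidden, d + 1), rand_matrix(hidden, hidden), [0.0] * hidden)
    for g in "zrc"
}
xs = [[0.2, -0.1, 0.4], [0.5, 0.3, -0.2]]   # two exercise vectors
rs = [1, 0]                                  # answer results
mus = [[0.8, 0.0], [0.6, 0.0]]               # second knowledge point is never examined
states = track_states(xs, rs, mus, params, hidden)
print(states)
```

Because the input is scaled by \(\mu _t^{(k)}\), a knowledge point that an exercise does not examine receives a zero input for that step, which is what ties each row of the state matrix to one knowledge point.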

The prediction output of student performance results

In the online prediction phase, the attention-based text similarity calculation of the exercises mitigates the cold-start problem of new exercises. The parameter \(\mu _{T + 1}\) obtained from model training ensures the interpretability of the prediction results, where \(\mu _{T + 1}^{(k)}\) denotes the degree to which the exercise \(T+1\) to be predicted examines knowledge point k. The prediction vector \(p _{T + 1}\) is obtained by multiplying each \(\mu _{T + 1}^{(k)}\) by the student’s mastery level of the corresponding knowledge point, as shown:

The prediction vectors are spliced with the exercise feature vectors and input into the two-layer neural network to get the prediction results, and the specific implementation of the neural network is shown:

where \(W_1,b_1\) are the weight and bias parameters of the first hidden layer of the neural network, and \(W_2,b_2\) are the weight and bias parameters of the second hidden layer. The loss function is the cross-entropy loss, and the objective function is optimized using the Adam optimizer. \(r_t\) is the actual performance of the student on the \(t\)-th exercise, where a value of 0 means the answer is wrong and a value of 1 means it is correct. \({\tilde{r}}_t\) is the predicted probability that the student answers the \(t\)-th exercise correctly.
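The prediction step and the loss can be sketched as follows. This is an illustration under assumptions: the mastery level is represented as one scalar per knowledge point, the hidden activation is taken to be tanh and the output activation a sigmoid, and all names and example values are hypothetical. The Adam update itself is not shown.

```python
import math


def dense(w, b, x):
    """Fully connected layer: w @ x + b."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]


def predict(mu_next, mastery, features, w1, b1, w2, b2):
    """Predicted probability of a correct answer on exercise T+1."""
    p_next = [m * s for m, s in zip(mu_next, mastery)]      # p_{T+1}
    spliced = p_next + features                              # splice with exercise features
    hidden = [math.tanh(v) for v in dense(w1, b1, spliced)]  # first layer
    logit = dense(w2, b2, hidden)[0]                         # second layer
    return 1.0 / (1.0 + math.exp(-logit))


def cross_entropy(r, r_hat, eps=1e-12):
    """Mean binary cross-entropy between actual results r and predictions r_hat."""
    return -sum(
        y * math.log(max(p, eps)) + (1 - y) * math.log(max(1 - p, eps))
        for y, p in zip(r, r_hat)
    ) / len(r)


mu_next = [0.7, 0.0, 0.3]        # how strongly exercise T+1 examines each knowledge point
mastery = [0.9, 0.4, 0.2]        # student's mastery level per knowledge point
features = [0.1, -0.3]           # exercise feature vector (illustrative)
w1 = [[0.5, 0.1, -0.2, 0.3, 0.0], [0.2, -0.4, 0.6, 0.1, 0.2]]
b1 = [0.0, 0.1]
w2 = [[1.0, -0.5]]
b2 = [0.05]
r_hat = predict(mu_next, mastery, features, w1, b1, w2, b2)
print(round(r_hat, 3), round(cross_entropy([1], [r_hat]), 3))
```

Because \(p _{T + 1}\) is zero for knowledge points the exercise does not examine, the prediction depends only on the mastery levels that the exercise actually tests.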

Analysis of computational efficiency and memory consumption

Computational complexity analysis

The MFCD-MAKT model comprises three core modules: MFCD, a BiGRU-based knowledge state tracking network, and a multi-head attention mechanism. Below is a detailed analysis of the computational complexity for each module:

The MFCD module employs Markov Chain Monte Carlo (MCMC) for parameter estimation. For N iterations and a parameter dimension of D, its time complexity is \(O(N \cdot D^2)\). The time-weight adjustments \(\omega _{mm}\) and the knowledge gap parameters \(\eta _{ij}\) incur additional overhead that is linear in the student interaction sequence length T. For an input sequence of length T, the BiGRU layer has a time complexity of \(O(T \cdot H^2)\), where H denotes the hidden state dimension. A multi-head attention mechanism with h heads and embedding dimension d has a per-head complexity of \(O(T^2 \cdot d/h)\), giving \(O(T^2 \cdot d)\) over all heads. The total time complexity of MFCD-MAKT is therefore \(O(T^2 \cdot d + T \cdot H^2 + N \cdot D^2)\), which is dominated by the multi-head attention term for long interaction sequences, since that term is quadratic in T. Compared to baseline models, MFCD-MAKT introduces moderate computational overhead due to the attention mechanism but achieves significantly superior prediction accuracy.
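To make the three terms concrete, the short sketch below evaluates them for given sizes and reports which one is largest. Constant factors are ignored and all sizes are assumed values chosen for illustration, not settings reported for MFCD-MAKT. Comparing the first two terms shows that the attention term exceeds the BiGRU term only once \(T > H^2/d\).

```python
def time_terms(T, d, H, N, D):
    """Leading-order operation counts for the three modules (constants ignored)."""
    return {
        "attention": T * T * d,   # O(T^2 * d)
        "bigru": T * H * H,       # O(T * H^2)
        "mcmc": N * D * D,        # O(N * D^2)
    }


def dominant(terms):
    """Name of the largest term."""
    return max(terms, key=terms.get)


short = time_terms(T=200, d=64, H=128, N=1000, D=50)
long = time_terms(T=1000, d=64, H=128, N=1000, D=50)
print(dominant(short), short)
print(dominant(long), long)
```

With these assumed sizes the crossover lies at \(T = H^2/d = 256\): below it the recurrent layer is the larger cost, and above it the quadratic attention term takes over.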

Memory consumption analysis

The MFCD module requires storage for \(O(K \cdot D)\) parameters, where K is the number of knowledge points and D is the feature dimension. The BiGRU layer, with hidden state dimension H, has a parameter count of \(O(H^2)\). For the multi-head attention mechanism, the parameter count scales as \(O(h \cdot d^2)\). The attention weights \(\text {sim}_t\) and the knowledge state matrices \(\mu _t\) occupy \(O(T \cdot K)\) memory per student. For N students (N here denotes the number of students rather than MCMC iterations) and T interactions, the memory footprint therefore scales as \(O(N \cdot T \cdot K)\). While manageable for moderate-scale datasets, this demand poses challenges in large-scale scenarios.
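A back-of-the-envelope estimate of the \(O(N \cdot T \cdot K)\) term is sketched below. The value K = 16, the 4-byte float size, and the optional `partitions` argument (splitting students evenly across devices) are assumptions made for illustration only.

```python
import math


def footprint_bytes(n_students, seq_len, n_kp, bytes_per_value=4, partitions=1):
    """Bytes needed to hold one value per (student, interaction, knowledge point)."""
    per_device_students = math.ceil(n_students / partitions)
    return per_device_students * seq_len * n_kp * bytes_per_value


small = footprint_bytes(1_000, 50, 16)                    # 1000 students, 50 interactions
large = footprint_bytes(100_000, 1_000, 16)               # 100K students, long sequences
split = footprint_bytes(100_000, 1_000, 16, partitions=8)
print(small / 1e6, "MB;", large / 1e9, "GB;", split / 1e9, "GB per device")
```

Under these assumptions the footprint grows from a few megabytes to several gigabytes, which illustrates why the state matrices become the limiting factor at scale.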

Experiments and analysis

This study selects reading, writing and translation as the content for cognitive diagnostic assessment in the university English programme. Following a new round of reform, the examination has shifted from focusing on language knowledge to focusing on comprehension, judgment and logical thinking. English reading comprehension refers to the learner’s ability, as a reader, to construct meaning from the materials read by using a variety of knowledge (including linguistic and non-linguistic knowledge) and strategies while reading and processing written materials. English writing competence refers to the learner’s ability to express information by means of written language. English translation ability covers both written translation and interpretation; in our language testing system, more emphasis is placed on the examination of written translation. Translation ability includes communicative language ability in the two languages involved as well as translation strategy ability, which requires identifying, generalizing, analyzing and judging the source language text, and carrying out inter-language transformation on the basis of comprehensive understanding.

Determination of cognitive attributes

Identifying cognitive attributes of knowledge is a critical step in cognitive diagnosis, as different types of English test items assess different cognitive attributes. In this study, experts from the School of Foreign Languages were invited to collaboratively determine the cognitive attributes for college-level English reading comprehension, translation, and writing tasks. The cognitive attributes for reading items are defined as shown in Table 1, while the definitions for writing items are presented in Table 2, and the cognitive attributes for translation items are outlined in Table 3. The Q-matrix for the items is annotated on the test platform.

Dataset preparation

The data used in the experiment are real records generated by student practice in the English classes at our university taught by the experts who took part in determining the cognitive attributes of the test exercises. The dataset contains the response scores of 1000 students on 50 reading, writing and translation test questions: 38 reading comprehension questions (objective questions), and 6 writing exercises and 6 translation exercises (subjective questions). Behavior data were also collected from the students, comprising three features: the time at which the student registered on the system, the time at which the student completed each test question, and the length of time the student took to complete it.

Evaluation metrics

To verify the accuracy of the fusion recommendation model, the generic evaluation metrics of precision (PRE), recall (Recall) and F1 value are used. Students’ correct answers are regarded as positive samples and students’ incorrect answers as negative samples. The calculation formulas are as follows:

In the formulas, TP is the number of exercises that students answer correctly and that the model predicts as correct; TN is the number that students answer incorrectly and that the model predicts as incorrect; FP is the number that students answer incorrectly but that the model predicts as correct; and FN is the number that students answer correctly but that the model predicts as incorrect. To further evaluate the difference between the algorithm’s predicted scores and the students’ actual scores, the mean absolute error (MAE) and root mean square error (RMSE) are also used as evaluation metrics, with smaller values indicating higher model accuracy. The calculation formulas are as follows:

In the above equations, I denotes the number of students in the experiment, J denotes the number of test exercises in the experiment, \(P_{ij}\) denotes the true score of student i on test question j, and \(r_{ij}\) denotes the score of student i on test question j predicted by the model.
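The five metrics can be computed as in the following sketch, which uses the standard definitions: precision = TP/(TP+FP), recall = TP/(TP+FN), F1 as their harmonic mean, and MAE and RMSE averaged over all I × J student-question pairs. The function names and the toy data are illustrative assumptions.

```python
import math


def classification_metrics(actual, predicted):
    """Precision, recall and F1 with correct answers (1) as the positive class."""
    tp = sum(1 for a, p in zip(actual, predicted) if a == 1 and p == 1)
    fp = sum(1 for a, p in zip(actual, predicted) if a == 0 and p == 1)
    fn = sum(1 for a, p in zip(actual, predicted) if a == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def error_metrics(true_scores, predicted_scores):
    """MAE and RMSE over all (student, question) pairs of two I x J score matrices."""
    diffs = [
        p - r
        for p_row, r_row in zip(true_scores, predicted_scores)
        for p, r in zip(p_row, r_row)
    ]
    mae = sum(abs(d) for d in diffs) / len(diffs)
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mae, rmse


actual = [1, 1, 1, 0, 0, 1]        # 1 = answered correctly
predicted = [1, 0, 1, 1, 0, 1]     # 1 = predicted to be answered correctly
pre, rec, f1 = classification_metrics(actual, predicted)

P = [[1.0, 0.0], [0.5, 1.0]]       # true scores P_ij for 2 students x 2 questions
R = [[0.8, 0.2], [0.5, 0.6]]       # predicted scores r_ij
mae, rmse = error_metrics(P, R)
print(pre, rec, f1, round(mae, 3), round(rmse, 3))
```

In the toy example there are three true positives, one false positive and one false negative, so precision, recall and F1 all equal 0.75.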

Comparison results

In this subsection, five representative dynamic and static knowledge tracking models are selected to design the comparison experiments, and the five comparison benchmark methods considered are DINA24, FuzzyCDF25, DKT26, My-FuzzyCDF27 and C&RM-MAKT28. The descriptions and parameter settings of the benchmark methods are as follows:

DINA: The higher-order DINA model describes students’ cognition and the answering process at four levels. Compared with the discrete DINA model, it extends the hierarchical architecture of cognitive diagnosis by taking into account the relationship between the learner’s competence characteristics at the knowledge level.

FuzzyCDF: The fuzzy cognitive diagnosis method incorporates fuzzy set theory into the modelling of students’ knowledge mastery level, which is represented in the cognitive diagnostic model as a degree of membership in a fuzzy set. At the same time, FuzzyCDF uses fuzzy operations to model the cognitive-answer process from cognitive psychology, making it possible to model subjective exercises.

My-FuzzyCDF: a fuzzy cognitive diagnostic model that incorporates the factors of students’ response time and length of response, which has more accurate diagnostic results with improved precision, recall, and F1 value compared to other cognitive diagnostic models. The number of iteration rounds (epochs) is 100, the batch size is set to 16, and the learning rate is 0.002.

DKT: A recurrent neural network model that pioneered dynamic knowledge tracking. DKT treats the neurons in the hidden layer of the neural network as the knowledge mastery level of the students, and can track the change of the learners’ knowledge state over a long period of time. In the experiment, the number of iteration rounds (epochs) is set to 100, the batch size is set to 16, and the learning rate is set to 0.002.

C&RM-MAKT: A deep diagnostic model that incorporates cognitive diagnostic parameters and a multi-head attention mechanism to improve the interpretability of the model. The number of iteration rounds (epochs) is 100, the batch size is set to 16, and the learning rate is 0.002.

The experimental results are shown in Table 4.

Table 4 shows that the DINA model, due to its static diagnostic nature, yields relatively low PRE values (0.601-0.706), while the DKT model, due to the abstraction of its hidden states, achieves a Recall of only 0.525-0.583. In contrast, the MFCD-MAKT model, by integrating temporal weights and knowledge gap parameters, significantly improves dynamic diagnostic accuracy, with PRE reaching 0.883-0.901.

For the PRE metric, the MFCD-MAKT model achieved scores of 0.883, 0.894, and 0.901 for objective exercises, and 0.782, 0.790, and 0.804 for subjective exercises at the 50%, 60%, and 70% proportions, respectively. Compared to the other models, MFCD-MAKT performs best for every exercise type and proportion. In terms of the Recall metric, the MFCD-MAKT model also demonstrates the best performance: the Recall values are 0.585, 0.631, and 0.644 for objective exercises, and 0.525, 0.572, and 0.586 for subjective exercises at the 50%, 60%, and 70% proportions. For example, at the 50% proportion of objective questions, MFCD-MAKT obtains a Recall of 0.585, while the second-best model, C&RM-MAKT, obtains 0.553. For the F1 metric, the MFCD-MAKT model scores 0.701, 0.726, and 0.773 for objective exercises, and 0.664, 0.712, and 0.733 for subjective exercises at the 50%, 60%, and 70% proportions, respectively. For instance, at the 50% proportion of objective questions, MFCD-MAKT reaches an F1 of 0.701, while the second-best model, C&RM-MAKT, reaches 0.668.

Based on the data in the table, the improved model not only leads in the PRE index, but also occupies a clear advantage in the comprehensive indicators of Recall and F1. This further indicates that the improved fuzzy cognitive diagnosis module and the introduction of the multi-head attention mechanism module better capture the dynamic changes of students’ knowledge status, improve the prediction accuracy of the model, enhance the model’s ability to pay attention to the relevance and importance of different knowledge points, and improve the overall performance of the model.

The experiment randomly selected 40% to 90% of each learner’s learning record as the training datasets. Figure 3 shows the fit of each model on the datasets. C&RM-MAKT and the algorithm proposed in this paper, MFCD-MAKT, outperform the other methods on the MAE metric. When the proportion of the training set is increased from 40% to 90%, in terms of MAE metrics, MFCD-MAKT varies within a range of 1.51%, while DINA, FuzzyCDF, My-FuzzyCDF, DKT, and C&RM-MAKT vary within a range of 3.37%, 3.65%, 15.39%, 1.87%, and 1.63%, respectively; for RMSE metrics, MFCD-MAKT fluctuates within a range of 1.90%, while DINA, FuzzyCDF, My-FuzzyCDF, DKT, and C&RM-MAKT vary within a range of 2.41%, 2.79%, 15.60%, 2.44%, and 2.18%, respectively.

When the training set ratio falls below 60%, My-FuzzyCDF, due to the lack of an attention mechanism, exhibits an RMSE fluctuation as high as 15.60%. In contrast, MFCD-MAKT, by leveraging multi-head attention to capture semantic associations among questions, maintains RMSE fluctuations at a stable 1.90%, demonstrating its robustness to the cold-start problem.

MAE/RMSE results comparison.

In the recommendation of English test questions, the MFCD-MAKT method, which combines fuzzy cognitive diagnosis parameters with a multi-head attention mechanism, outperforms the other methods in terms of precision (PRE) and recall, and therefore provides more accurate and effective recommendation results in the English test scenario. When the proportion of the training set is high, the difference between the recommendation results of MFCD-MAKT and those of the other algorithms, including the traditional DKT algorithm, is small. As the proportion of the test set increases, the experimental results show that the gap between MFCD-MAKT and the other algorithms widens rapidly. This demonstrates the advantage of the proposed algorithm: introducing fuzzy cognitive diagnostic parameters into the deep network allows it to mine deeper information about student state characteristics, so that it can more accurately grasp the true learning level of learners and achieve personalized practice and accurate recommendations.

In current higher education, the training of English reading, writing, and translation needs to integrate interdisciplinary knowledge (e.g., cultural adaptation A15, critical thinking A16). However, traditional recommendation systems struggle to meet these demands because of disciplinary fragmentation. This paper quantifies cross-knowledge dependencies through the knowledge gap parameters (Equations 8–9) and combines them with the multi-head attention mechanism (Equations 18–19) to achieve semantic association of questions, providing a feasible solution for the cultivation of complex competencies (Figs. 4, 5, 6).

Model interpretability

To assist educators in better understanding the model’s decision-making process, the model’s outputs are visualized through three interpretable diagrams: student cognitive state evolution graph, knowledge point proficiency radar chart, and exercise recommendation rationale network. Below are the dataset design and data requirements for generating each visualization:

Dataset design

- Student ID: Unique identifier for each student.
- Exercise ID: Unique identifier for each exercise.
- Knowledge point ID: Unique identifier for each knowledge point.
- Response time: Timestamp indicating when a student started an exercise.
- Response duration: Time taken by the student to complete the exercise.
- Score: Binary score (0: incorrect, 1: correct).
- Recommended exercises: List of recommended exercises generated by the model.
- Mastery level: Continuous value (0-1) quantifying the student’s proficiency in a knowledge point.
- Time: Timestamp of exercise completion for temporal analysis.

Visualization specifications

Student cognitive state evolution graph.

The cognitive state evolution graph illustrates the changes in mastery levels of various knowledge points among different learners across multiple time points. In this graph, the x-axis represents time points (from 1 to 6), and the y-axis represents the Mastery Level of knowledge points, ranging from 0 to 1. Each color corresponds to a distinct Knowledge Point ID, while different line styles denote different Learner IDs. Through this visualization, several key insights can be observed:

Mastery level trends over time: The progression of mastery levels for individual learners across different knowledge points can be tracked. For instance, certain learners demonstrate a steady improvement in their mastery of specific knowledge points over time, whereas others may consistently struggle with particular concepts.

Individual differences: Significant variations exist in the mastery levels of different learners concerning the same knowledge point. Some learners achieve mastery of specific knowledge points more rapidly than others. This insight helps identify which learners may require additional support or intervention.

Difficulty of knowledge points: The overall mastery levels across all learners also reveal the relative difficulty of different knowledge points. Certain knowledge points exhibit higher average mastery levels, while others are consistently challenging for most learners. This information is crucial for educators to allocate teaching resources effectively and focus on areas where learners require more attention.

This graph holds significant value for personalized education, as it provides a clear visualization of individual learning trajectories and identifies specific areas where learners may need additional support. Such insights enable educators to tailor their teaching strategies, offering more targeted interventions and enhancing the overall quality of education.
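The data preparation behind such a graph can be sketched as follows, assuming records of the form (learner ID, knowledge point ID, time point, mastery level). The record layout, function names and sample values are hypothetical; plotting is left out so that the sketch depends only on the standard library.

```python
from collections import defaultdict


def mastery_series(records):
    """Group records into one time-ordered series per (learner, knowledge point)."""
    series = defaultdict(list)
    for learner, kp, time_point, mastery in records:
        series[(learner, kp)].append((time_point, mastery))
    return {key: sorted(points) for key, points in series.items()}


def average_mastery(records):
    """Mean mastery per knowledge point over all learners and time points."""
    totals = defaultdict(lambda: [0.0, 0])
    for _, kp, _, mastery in records:
        totals[kp][0] += mastery
        totals[kp][1] += 1
    return {kp: total / count for kp, (total, count) in totals.items()}


records = [
    ("S1", "KP1", 2, 0.5),
    ("S1", "KP1", 1, 0.3),
    ("S2", "KP1", 1, 0.7),
    ("S1", "KP2", 1, 0.2),
]
series = mastery_series(records)    # one line per (learner, knowledge point)
averages = average_mastery(records)  # a low average suggests a harder knowledge point
print(series[("S1", "KP1")], averages)
```

Each entry of `series` corresponds to one line in the graph, while `averages` supports the comparison of relative difficulty across knowledge points.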

The knowledge mastery graph.

The knowledge mastery graph illustrates learners’ understanding of each knowledge point, aiding educators in identifying areas that require improvement. This graph uses a polar scatter plot format to display the distribution of mastery levels across various knowledge points among different learners. In the graph, the radial distance represents the Mastery Level, the angle denotes the Knowledge Point ID, and different colors correspond to different learner identifiers. Each subplot corresponds to an individual learner, enabling a detailed view of their mastery across all knowledge points.

Overall mastery levels can be assessed by examining each subplot, revealing noticeable variations in how learners grasp different knowledge points. Some display high proficiency across most points, while others show distinct weaknesses in specific areas. Relative mastery across knowledge points is evident by comparing an individual’s performance across different points, highlighting strengths and weaknesses. For instance, a learner may excel in KP1 and KP3 but struggle with KP4 and KP6.

Comparing subplots reveals mastery level disparities among learners. Some consistently perform well, while others require additional focus to enhance their understanding. This tool aids educators in comprehensively understanding learners’ mastery, enabling tailored learning plans that address individual weaknesses and improve overall educational outcomes.

Recommended practice basis diagram.

The recommended practice basis diagram is a network graph illustrating the relationships between knowledge points and exercises. In the diagram, nodes represent Knowledge Point IDs and Exercise IDs, while the width of the edges indicates the strength of the association between knowledge points and exercises, determined by the mastery level. Wider edges signify that a knowledge point has a greater influence on the corresponding exercise. Through this diagram, the following observations can be made:

Associations between knowledge points and exercises: The graph reveals varying degrees of association between each knowledge point and multiple exercises. Certain knowledge points are highly correlated with many exercises, indicating that these are critical knowledge points requiring focused mastery. The edge width reflects the importance and influence of a knowledge point on an exercise. Educators can leverage these associations to recommend exercises best suited to a student’s current mastery level. For example, for knowledge points with lower mastery levels, exercises with stronger associations and moderate difficulty can be selected for targeted practice. By integrating a student’s mastery levels with the associations between knowledge points and exercises, personalized exercise recommendations can be generated. For instance, if a student demonstrates low mastery of KP1, exercises strongly associated with KP1 can be recommended to help them improve efficiently. This diagram not only visualizes the complex relationships between knowledge points and exercises but also provides educators with a scientific basis to recommend exercises more accurately, enhancing learning efficiency and outcomes.
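One possible way to turn these associations into a recommendation list is sketched below. The scoring rule (mastery gap times association strength, summed over weak knowledge points), the 0.5 threshold and all identifiers are assumptions chosen for illustration, not the rule used by MFCD-MAKT.

```python
def recommend(mastery, association, threshold=0.5, top_n=2):
    """Rank exercises by how strongly they target the student's weak knowledge points.

    mastery: {knowledge point: mastery level in [0, 1]}
    association: {knowledge point: {exercise: association strength}}
    """
    scores = {}
    for kp, level in mastery.items():
        if level >= threshold:
            continue                      # only weak knowledge points drive recommendations
        gap = 1.0 - level
        for exercise, strength in association.get(kp, {}).items():
            scores[exercise] = scores.get(exercise, 0.0) + gap * strength
    ranked = sorted(scores, key=lambda ex: (-scores[ex], ex))
    return ranked[:top_n]


mastery = {"KP1": 0.2, "KP3": 0.9, "KP4": 0.4}
association = {
    "KP1": {"E1": 0.9, "E2": 0.3},
    "KP3": {"E3": 0.8},
    "KP4": {"E2": 0.7, "E4": 0.5},
}
recommended = recommend(mastery, association)
print(recommended)
```

In this example the student is weak on KP1 and KP4, so E1 (strongly tied to KP1) ranks first and E2 (tied to both weak points) second, while E3 is never proposed because KP3 is already mastered.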

Through these three graphical representations, educators gain a comprehensive understanding of a student’s learning progress, knowledge point mastery, and the rationale behind exercise recommendations. These visuals offer intuitive and valuable insights, empowering educators to design more scientific and personalized teaching strategies, thereby improving educational quality and student learning outcomes.

Efficiency and scalability experiments

To evaluate computational efficiency, experiments were conducted on datasets of varying scales (ranging from 1K to 100K students). Table 5 compares the training time and memory consumption of the MFCD-MAKT model against baseline models.

As shown in Table 5, MFCD-MAKT exhibits higher training time and memory consumption than simpler models such as DINA, but achieves significantly higher accuracy (PRE: 0.883 vs. 0.706). The quadratic complexity of the attention mechanism limits its scalability for \(T > 10^3\). To address these scalability challenges, the following optimizations can be applied:

Distributed training: Partition student interaction sequences across multiple GPUs/TPUs using data parallelism. For example, dividing N students into P partitions reduces per-device memory demand to \(O(N/P \cdot T \cdot K)\).

Model compression: Apply pruning techniques to remove redundant attention heads or BiGRU units with minimal performance impact, and adopt 8-bit integer quantization of the parameters to reduce memory at a small cost in accuracy.

Approximate attention computation: Replace full attention with sparse attention or locality-sensitive hashing (LSH), reducing complexity from \(O(T^2)\) to \(O(T \log T)\).
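As an illustration of the sparse-attention idea, the sketch below uses a causal sliding window, in which each position attends only to itself and the `window` most recent positions. This is a simpler technique than LSH and is named here only as an example: its cost is \(O(T \cdot w)\) for window size w, which matches \(O(T \log T)\) only if w grows like \(\log T\). All names and sizes are assumptions.

```python
import math


def local_attention_weights(queries, keys, window):
    """For each position t, softmax over the keys at positions [t - window, t] only."""
    rows = []
    for t, q in enumerate(queries):
        start = max(0, t - window)
        scale = math.sqrt(len(q))
        scores = [
            sum(a * b for a, b in zip(q, keys[s])) / scale for s in range(start, t + 1)
        ]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        rows.append({start + i: e / total for i, e in enumerate(exps)})
    return rows


def score_count(seq_len, window):
    """Number of query-key scores evaluated for a sequence of length seq_len."""
    return sum(min(t, window) + 1 for t in range(seq_len))


vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
weights = local_attention_weights(vectors, vectors, window=1)
print(weights[3])
print(score_count(1000, 32), "scores with a window of 32,",
      score_count(1000, 999), "with full causal attention")
```

For a sequence of 1000 interactions, a window of 32 evaluates roughly 6% of the scores that full causal attention needs, at the price of ignoring dependencies older than the window.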

Conclusion

Facing the weak interpretability of the hidden states of deep knowledge tracing models and the neglect of the impact of historical exercises on prediction results, this paper proposes a prediction algorithm that combines cognitive diagnostic theory and multi-head attention mechanism. First, time series data is transformed into low-dimensional continuous real-valued vectors. Secondly, a fuzzy cognitive diagnostic model is used to integrate students’ response time and duration to evaluate students’ mastery of knowledge points and skill levels. In addition, in the modeling of exercise response processes, a knowledge gap parameter is introduced to characterize the degree to which specific knowledge points restrict students’ responses to exercises. By using interpretable parameters trained by the fuzzy cognitive diagnostic model to model students’ learning states, the knowledge state vector is expanded into a knowledge state matrix from the perspective of model mechanisms. Finally, the proposed model uses a multi-head attention mechanism to calculate the influence of historical sequential data on prediction results. Experimental results show that this recommendation method achieves relatively good performance in recommending English reading, writing, and translation training exercises. To enhance the interpretability of the model, we will provide visualizations of the model’s outputs, including students’ mastery levels of different knowledge points and the influence weights of historical exercise responses. These visualizations will help educators better understand the model’s decision-making process and apply it in real-world teaching scenarios.

Although this study primarily focuses on recommendations for English reading, writing, and translation exercises, the proposed approach combining the improved fuzzy cognitive diagnosis model (MFCD) and the multi-head attention mechanism (MAKT) exhibits strong generalization. The core of this method lies in providing personalized recommendations by modeling students’ learning processes and dynamic knowledge states, which is not only applicable to the English discipline but can also be extended to other subjects requiring personalized learning paths, such as mathematics and science. For instance, in mathematics exercises, knowledge gap parameters and response time factors can similarly be used to assess students’ mastery of mathematical concepts. Future work will focus on evaluating the scalability and adaptability of the proposed model across different educational contexts, including diverse subjects (e.g., mathematics, physics) and student populations (e.g., high school, university). Additionally, we plan to test the model’s performance on large-scale datasets to further validate its computational efficiency and predictive accuracy in real-world educational settings.

Data availability

The datasets generated and/or analyzed during the current study are not publicly available due to privacy concerns and the absence of consent from all participants to share their data publicly. However, the data are available from the corresponding author upon reasonable request.

References

Kang, D. & Seo, S. Personalized smart home audio system with automatic music selection based on emotion. Multimed. Tools Appl. 78(3), 3267–3276 (2019).

Shi, Y. & Yang, X. A personalized matching system for management teaching resources based on collaborative filtering algorithm. Int. J. Emerg. Technol. Learn. 15(13), 207–220 (2020).

Tiwari, S., Kumar, S., Jethwani, V., Kumar, D. & Dadhich, V. Pntrs: Personalized news and tweet recommendation system. J. Cases Inf. Technol. 24(3) (2022).

Luo, J. & Duan, X. Intelligent recommendation of personalised tourist routes based on improved discrete particle swarm. Int. J. Comput. Sci. Eng. 25(6), 598–606 (2022).

Rodriguez, A., Leonor, G., Florez, R. & Edith, E. The use of legal cases as a way to enhance law students’ cognitive and communicative competences in the English classes. Argentinian J. Appl. Linguist. 8(1), 7–28 (2020).

Wang, J., Jiang, F. & Fang, X. Perspectives on policing education and careers: insights from undergraduate students of China’s police academies. Hum. Soc. Sci. Commun. 11(1), 755 (2024).

Ghodai, A. & Wang, Q. Deep graph memory networks for forgetting-robust knowledge tracing. IEEE Trans. Knowl. Data Eng. 35(8), 7844–7855 (2023).

Zhang, W., Gong, Z., Luo, P. & Li, Z. DKVMN-KAPS: Dynamic key-value memory networks knowledge tracing with students’ knowledge-absorption ability and problem-solving ability. IEEE Access 12, 55146–55156 (2024).

Scruggs, R. et al. How well do contemporary knowledge tracing algorithms predict the knowledge carried out of a digital learning game. Educ. Technol. Res. Dev. 71(3), 901–918 (2023).

Nakagawa, H., Iwasawa, Y. & Matsuo, Y. Graph-based knowledge tracing: Modeling student proficiency using graph neural networks. Web Intell. 19, 1–2 (2021).

Su, W. et al. An xgboost-based knowledge tracing model. Int. J. Comput. Intell. Syst. 16(1), 13 (2023).

Lai, Z., Wang, L. & Ling, Q. Recurrent knowledge tracing machine based on the knowledge state of students. Expert Syst. 38(8), 12782 (2021).

Wang, F. et al. Dynamic cognitive diagnosis: An educational priors-enhanced deep knowledge tracing perspective. IEEE Trans. Learn. Technol. 16(3), 306–323 (2023).

Quatresooz, F., Demey, S. & Oestges, C. Tracking of interaction points for improved dynamic ray tracing. IEEE Trans. Veh. Technol. 70(7), 6291–6301 (2021).

Huang, Z., Liu, Q., Chen, Y. & Wu, L. Learning or forgetting? A dynamic approach for tracking the knowledge proficiency of students. ACM Trans. Inf. Syst. 38(2), 19 (2020).

Hawkins, W. & Heffernan, N. Using similarity to the previous problem to improve Bayesian knowledge tracing. In Proceedings of the EDM. London. 136–140 (2019).

Agarwal, D., Baker, S. & Muraleedharan, A. Dynamic knowledge tracing through data driven recency weights. In The 13th International Conference on Educational Data Mining. Morocco. 725–729 (2020).

Käser, T., Klingser, S. & Schwing, A. Dynamic Bayesian networks for student modeling. IEEE Trans. Learn. Technol. 10(4), 450–462 (2017).

Piech, C., Bassen, J. & Huang, J. Deep knowledge tracing. Adv. Neural Inf. Process. Syst. 3(3), 19–23 (2015).

Zhang, J., Shi, X. & King, I. Dynamic key-value memory networks for knowledge tracing. In Proceedings of the 26th International Conference on World Wide Web. Perth. 765–774 (2017).

Sun, X., Zhao, X. & Li, B. Dynamic key-value memory networks with rich features for knowledge tracing. IEEE Trans. Cybern. 52(8), 8239–8245 (2022).

Liu, J., Huang, Z. & Yin, Y. Ekt: Exercise-aware knowledge tracing for student performance prediction. IEEE Trans. Knowl. Data Eng. 33(1), 100–115 (2019).

Zhang, X., Zhang, J. & Lin, N. Sequential self-attentive model for knowledge tracing. In Proceedings of the International Conference on Artificial Neural Networks. Bratislava. 318–330 (2021).

Choi, Y., Lee, Y. & Cho, J. Towards an appropriate query, key, and value computation for knowledge tracing. In Proceedings of the Seventh ACM Conference on Learning. Brisbane. 341–344 (2020).

Agarwa, L., Baker, R. & Muraleedharan, A. Dynamic knowledge tracing through data driven recency weights. In The 13th International Conference on Educational Data Mining. Morocco. 725–729 (2020).

De, L. & Song, H. Simultaneous estimation of overall and domain abilities: A higher-order IRT model approach. Appl. Psychol. Meas. 33(8), 620–639 (2020).

Reckase, M. & Mckinley, R. Some latent trait theory in a multidimensional latent space. Estimation 53(7), 165–176 (1982).

Luo, Z. Exercise Recommendation for Personalized and Precise Teaching in Moocs Algorithm Research and Implementation. (Hunan University, 2023).

Author information

Contributions

Yixuan Zhang wrote the main manuscript text, and Yanyi Wang made the simulation, prepared the figures and tables.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhang, Y., Wang, Y. A personalized recommendation algorithm for English exercises incorporating fuzzy cognitive models and multiple attention mechanisms. Sci Rep 15, 11531 (2025). https://doi.org/10.1038/s41598-025-96489-3
