Abstract

The rapid development of photovoltaic (PV) energy and its growing penetration in power systems have made accurate and robust PV power forecasting a critical challenge. However, the strong stochasticity, nonlinearity, and heterogeneity of PV output, driven by complex environmental conditions, hinder the performance of conventiona l forecasting methods. To address these issues, this study proposes a novel hybrid framework that integrates TimeGAN-based data augmentation, extended LSTM (xLSTM), and Transformer networks for probabilistic and accurate PV power prediction. First, TimeGAN is employed to synthesize realistic PV time series data, effectively capturing temporal correlations while preserving the irradiance–temperature dependency, thus mitigating the limitations of scarce or imbalanced historical datasets. Second, a hybrid xLSTM-Transformer architecture is developed, where the matrix memory-enhanced xLSTM module focuses on local feature extraction, and the Transformer module models long-range dependencies via self-attention mechanisms. Finally, the proposed model is validated using real-world operation data from the State Grid of China. Experimental results demonstrate that the proposed framework significantly improves prediction accuracy under realistic operating conditions. Compared with conventional LSTM and Transformer baselines, the proposed TimeGAN–xLSTM–Transformer model achieves a reduction of approximately 48.1% in RMSE and 44.1% in MAE, highlighting its superior capability in capturing both short-term fluctuations and long-term temporal dependencies of photovoltaic power generation. This research contributes to advancing intelligent PV forecasting technologies by leveraging data generation, temporal modeling, and attention mechanisms, offering theoretical and practical support for the reliable integration of PV in renewable-dominated power systems.

Similar content being viewed by others

Introduction

To achieve the dual-carbon goals of carbon peaking and carbon neutrality, China is accelerating the construction of a new power system with renewable energy as its core. Among renewable sources, solar energy plays a particularly important role due to its wide availability, environmental friendliness, and scalability. As a clean and sustainable energy source, photovoltaic (PV) power generation not only reduces environmental pollution but also serves a broad range of economic and public service needs, especially in a geographically vast country like China. Driven by supportive national policies, PV installations across China have experienced rapid growth.

On the one hand, the increasing penetration of PV systems has made them a vital component of the electricity supply structure, significantly affecting grid operations and reserve planning. Accurate and reliable PV power forecasting has thus become a key enabling technology for enhancing system efficiency and improving renewable energy integration. However, the chaotic nature of atmospheric systems introduces considerable uncertainty and randomness into solar power output, complicating prediction tasks. Moreover, as more influencing factors are incorporated into PV prediction models, both internal time-series dependencies and complex, fluctuating meteorological inputs must be accounted for. These data are characterized by strong nonlinearity, non-stationarity, and heteroscedasticity, making the modeling of input–output mappings particularly challenging. The increasing heterogWith the rapid advancement of renewable energy integration and the construction of new-type power systems, both domestic and international scholars have conducted extensive studies on short-term forecasting of renewable energy output. Forecasting methodologies are primarily classified into point prediction, interval prediction, and random scenario (probabilistic distribution) prediction. Point prediction aims to provide a deterministic estimate for a future time step and commonly utilizes methods such as numerical weather prediction, wavelet neural networks, and least squares support vector machines1,2,3. In contrast, interval prediction outputs a range of possible values for uncertain variables, partially reflecting their stochastic nature. Methods including Gaussian processes and deep learning frameworks have been widely employed for this purpose4,5,6, offering improved robustness yet still susceptible to deviations caused by random fluctuations.

Random scenario prediction, also known as probabilistic distribution forecasting, models the possible realizations of uncertain variables by estimating their probability distributions over time. This approach is particularly vital for handling the high variability and intermittency of renewable sources such as photovoltaic (PV) and wind power. It addresses the limitations of point and interval forecasts in capturing the true distributional characteristics of uncertainty. For example, Zhang7 uses Quantile Regression (QR) to generate short-term stochastic scenarios of PV output, while Huang8 further improves this with a quantile convolutional neural network. Similarly, He9 applies a Gaussian quantile-kernel density estimation model to capture wind power distributions, and Fan10 utilizes the swing door algorithm along with fuzzy c-means clustering to classify patterns of wind power fluctuation and predict short-term stochastic scenarios. Despite these advances, QR and swing door-based methods are heavily reliant on large-scale historical datasets, limiting their generalizability in data-scarce environments.

To mitigate data dependency, generative adversarial networks (GANs) have been introduced for renewable energy prediction tasks. References11,12 demonstrate that GAN-based models can generate wind power distributions resembling historical data, facilitating reliable scenario generation even with limited samples. However, standard GAN architectures fall short in modeling temporal dependencies intrinsic to PV outputs. To address this, Time-series Generative Adversarial Networks (TimeGAN) have been proposed as a novel solution. Leveraging the inherent time correlations in PV data, TimeGAN effectively captures both static and temporal features to generate synthetic data that align with the real probabilistic distribution. The application of TimeGAN in PV forecasting has shown promising results, with visualization and empirical evaluation confirming its ability to generate realistic output scenarios.

In parallel, deep recurrent models such as LSTM and GRU have shown strong capabilities in learning temporal dependencies within time-series data. LSTM models have been successfully applied in various domains: for instance, Ehsan et al13. used LSTM to model water absorption behavior in composites, while Zhang et al.14 proposed a multilevel LSTM integrated with ultrasonic detection for defect identification. Jian et al15. combined BiLSTM with attention and transfer learning to predict composite fatigue life with high accuracy. To further enhance long sequence learning, an extended LSTM (xLSTM) was proposed16,17, incorporating deeper network structures and advanced gating mechanisms. While LSTM and GRU models are adept at capturing long-range dependencies, they often underperform in extracting localized features or modeling non-stationary transitions across multi-stage processes, such as the evolution of damage in complex systems.

To overcome these limitations, hybrid neural architectures have emerged, combining different model strengths. Recent works have explored combinations such as CNN-LSTM18 and LSTM-Transformer19, achieving improved accuracy in sequence prediction tasks. In this context, integrating TimeGAN for data generation, xLSTM for long-term temporal modeling, and Transformer for global attention-based feature extraction offers a promising composite framework for accurate, probabilistic, and robust photovoltaic power forecasting under complex and uncertain conditions.eneity and volume of multi-source data further complicate analysis and modeling tasks.

Recent studies have increasingly emphasized the importance of hybrid learning frameworks and data-driven integration strategies in renewable energy systems and complex prediction tasks. At the planning and system level, city-scale photovoltaic deployment and spatial optimization have been investigated to support large-scale PV integration under urban constraints21. From the perspective of short-term operation, advanced deep learning architectures incorporating spatiotemporal modeling and attention mechanisms have demonstrated improved forecasting robustness for photovoltaic clusters under highly variable conditions20. In parallel, learning-based approaches have been widely adopted in power-electronics-dominated energy systems to enhance dynamic control performance and reliability22,23. Beyond the energy domain, recent advances in multi-model fusion learning show that combining heterogeneous predictors and leveraging complementary outputs can significantly improve overall prediction accuracy and generalization capability24. These studies collectively indicate that integrating data augmentation, multi-scale temporal modeling, and fusion-based learning is a promising direction, while existing methods still face challenges in handling data scarcity and complex temporal dependencies—issues explicitly addressed by the proposed TimeGAN–xLSTM–Transformer framework.

(1) This study introduces a TimeGAN-driven data augmentation strategy to address the data scarcity and imbalance issues commonly encountered in photovoltaic (PV) forecasting. By leveraging the temporal modeling capability of TimeGAN, we synthetically generate diverse and realistic PV time series while preserving the intrinsic correlation patterns between key environmental variables such as irradiance and temperature. This enhanced dataset improves the generalization capacity of downstream prediction models under varied operating conditions.

(2) This study propose a novel hybrid architecture that integrates extended Long Short-Term Memory (xLSTM) networks with Transformer modules. The xLSTM component incorporates a matrix-based memory structure (mLSTM), enabling efficient parallel processing and enhanced local feature extraction across multi-stage temporal patterns. Meanwhile, the Transformer submodule contributes global contextual awareness through self-attention mechanisms, thereby effectively capturing long-range dependencies and complex interactions within the PV generation sequences.

(3) This study validate the proposed TimeGAN-xLSTM-Transformer framework using real-world operation data obtained from the State Grid of China. Experimental results demonstrate that our method significantly outperforms traditional machine learning and deep learning baselines in terms of prediction accuracy and robustness. The empirical evaluation confirms the framework’s applicability and reliability in practical PV power forecasting scenarios under complex and uncertain environmental conditions.

Methodology

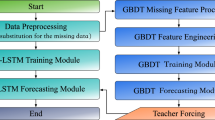

To comprehensively capture the intrinsic temporal dynamics and uncertainty in photovoltaic (PV) power generation data, this study proposes a multi-component framework that processes historical PV time series from three key perspectives: data augmentation, temporal pattern learning, and dependency modeling, as shown in Fig. 1.

The multi-component framework of TimeGAN-xLSTM-Transformer.

First, a TimeGAN-based data augmentation module is employed to address the data sparsity and imbalance issues, especially under extreme fluctuation scenarios. By integrating autoregressive modeling, generative adversarial learning, and temporal dynamics embedding, TimeGAN generates synthetic PV time series that preserve both statistical properties and temporal dependencies of real-world data, thereby enriching the training dataset. Second, an xLSTM network is utilized to extract temporal features across multiple timescales. The model integrates convolutional feature extraction and memory mechanisms, enabling it to simultaneously capture localized fluctuations and long-term trends in PV output. This ensures a more accurate reflection of dynamic variations caused by environmental and operational factors. Finally, a Transformer module is introduced to model complex interdependencies and potential nonlinear evolution patterns within the PV power sequences. Its self-attention mechanism allows for flexible weighting of relevant time steps, facilitating a deeper understanding of how contextual changes affect future outputs.

TimeGAN based synthetic data generation for photovoltaic time series

During the model training process, the scarcity of extreme fluctuation scenarios in photovoltaic (PV) data poses a significant challenge. Training prediction models directly on such limited data may lead to underfitting and insufficient generalization. To address this issue, a time-series data augmentation model based on Generative Adversarial Networks (GANs) is proposed, aiming to generate synthetic PV power sequences that share similar distributions with the original extreme fluctuation scenarios. Reference extends the traditional GAN framework by incorporating the inherent temporal dynamics of sequential data and introduces the TimeGAN model. TimeGAN consists of four key components: an embedding network, a recovery network, a sequence generator, and a sequence discriminator, as illustrated in Fig. 2. The embedding and recovery networks form an autoencoding component, while the generator and discriminator constitute the adversarial component. These two parts are trained jointly, enabling TimeGAN to simultaneously learn meaningful representations, generate realistic sequences, and capture temporal dependencies.

TimeGAN model structure.

Let \(\:{s}_{t}\) denote static features and \(\:{x}_{t}\) denote time-series features. The embedding function \(\:e\) maps them into latent representations \(\:{h}_{t}\):

where \(\:{e}^{S}:S\to\:H\) and \(\:{e}^{X}:H\times\:X\to\:H\) are the static and temporal embedding networks, respectively.

The recovery function \(\:r\) maps the latent representation back to the feature space:

The generator creates latent codes from randomly sampled inputs \(\:{z}^{S},{z}_{t}\) :

The discriminator operates in the latent space using a bidirectional RNN and feedforward classifier to distinguish real from generated data:

where \(\:{u}_{t}\) encodes contextual information via forward and backward hidden states.

To ensure accurate reconstruction of input data, the reconstruction loss is defined as:

The unsupervised adversarial loss that guides the generator and discriminator is:

A supervised loss is introduced to align the next-step prediction from real and synthetic latent sequences:

TimeGAN jointly optimizes reconstruction, adversarial, and supervised losses to effectively model the dynamics of time-series data and enhance data quality. Its novelty lies in simultaneously learning both global and conditional stepwise distributions, enabling realistic and temporally coherent sequence generation.

xLSTM based Multi-Scale Temporal feature extraction

In this study, the Extended Long Short-Term Memory (xLSTM) network is employed to capture both short-term local and long-term global temporal dependencies within PV features. The xLSTM extends the conventional LSTM by incorporating two additional components: the short-term memory module (sLSTM) and the multi-scale memory module (mLSTM), as shown in Fig. 3.

xLSTM model structure.

Unlike conventional LSTM architectures that rely on a single vector-based cell memory, the extended LSTM (xLSTM) introduces enhanced memory structures and multi-scale temporal modeling mechanisms. In particular, the matrix-based memory LSTM (mLSTM) replaces the traditional scalar memory with a matrix-form representation, enabling richer state transitions and improved parallel processing capability. This design allows xLSTM to more effectively capture complex temporal patterns, non-stationary dynamics, and stage-wise variations in photovoltaic power output, which are difficult to model using standard LSTM networks.

The standard LSTM update equations are as follows:

Where \(\:{f}_{t}\), \(\:{i}_{t}\) and \(\:{o}_{t}\) represent the forget, input, and output gates, \(\:{C}_{t}\) is the cell state, and \(\:{h}_{t}\) is the hidden state. \(\:\sigma\:\) denotes the sigmoid function and \(\:tanh\) is the hyperbolic tangent.

sLSTM Module: The sLSTM module strengthens the model’s responsiveness to transient fluctuations in AE signals, such as the early formation and growth of micro-cracks. It improves the control over memory updates, enabling precise modeling of short-term signal patterns.

mLSTM Module: The mLSTM module captures long-term dependencies by processing the input at multiple temporal scales \(\:i\). Each scale has a dedicated LSTM unit with the following equations:

The final output of xLSTM is a fusion of hidden states from all scales, providing a unified representation that captures both fine-grained and long-term signal characteristics.

Transformer based Temporal dependency modeling

In this study, a hybrid model integrating xLSTM and Transformer is proposed to enhance temporal feature representation and global dependency modeling. The xLSTM network is first employed to extract fine-grained temporal features, which are then linearly projected via a fully connected layer to match the input dimensionality of the Transformer. To compensate for the Transformer’s lack of inherent temporal structure, positional encoding is added to the projected features. The Transformer architecture, composed of an encoder-decoder structure as shown in Fig. 4, utilizes multi-head self-attention, residual connections, and layer normalization to effectively capture long-range dependencies. In the decoder, masked attention ensures causality by preventing access to future positions, and information from the encoder guides the decoding process through cross-attention. The final prediction is generated via a softmax output layer. The attention mechanism is central to the Transformer’s strength, enabling the model to assign relevance-based weights across time steps, while residual connections and layer normalization ensure stable gradient flow and efficient training. The integrated feed-forward network further enhances representational capacity by transforming each sequence element independently.

Transformer general structure.

Transformer’s self-attention mechanism can be formulated as Eq. (10):

Where \(\:A\) represents the attention mechanism; \(\:S\) represents the Softmax function that calculates the attention weights; \(\:K,Q,V\) calculates the matrix of the attention mechanism, the keys of the matrix and the values, respectively; \(\:{d}_{k}\) is the dimension of the keys.

The multiple attention mechanism can be expressed as Eq. (11):

Where \(\:{M}_{h}\) is the multi-head attention mechanism; \(\:C\) is the connection mechanism between the attention; \(\:{h}_{i}\) is denoted as the i-th attention mechanism; \(\:{W}_{o}\) is the linear transformation weight matrix after the connection of the multi-head attention mechanism; \(\:{W}_{Qi}\),\(\:{W}_{Ki}\),\(\:{W}_{Vi}\) are the linear transformation weight matrix.

Experiment results

This study employed historical operation data from a distributed photovoltaic (PV) cluster in the State Grid of China, incorporating temperature, humidity, irradiance, and actual power output as input features for TimeGAN-based time-series data generation. The dataset covers a continuous operating period of approximately 180 days, with a sampling interval of 15 min, resulting in 96 time steps per day and a total of over 17,000 data points. The recorded variables include photovoltaic power output, ambient temperature, humidity, and solar irradiance, providing a comprehensive representation of real-world PV operating conditions. Through t-SNE and PCA visualization techniques, the generated data were evaluated both locally and globally. The t-SNE projection results (Fig. 5) revealed that the synthetic samples closely overlapped with real data in the low-dimensional space, demonstrating TimeGAN’s capability to accurately preserve local data structure. Similarly, the PCA-based global projection (Fig. 6) showed that the main variance directions of generated data aligned well with the original data, confirming the model’s effectiveness in capturing the overall data distribution.

Graph of t-SNE results.

Graph of PCA results.

Quantitative evaluations further validated the augmentation quality. The Discriminative Score was 0.154, indicating minimal distributional discrepancy between generated and real data. The Predictive Score reached 0.061, close to the original dataset, reflecting the ability of TimeGAN to maintain meaningful temporal dependencies. Using these synthetic sequences, a backpropagation neural network was trained and tested alongside a model trained on the original dataset. The specific hyperparameter settings for the proposed model are listed in Table 1.

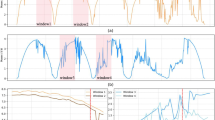

The xLSTM–Transformer hybrid architecture proposed in this study successfully combined local feature extraction with global temporal pattern recognition. The xLSTM module, enhanced by a matrix memory structure (mLSTM), captured stage-specific features of PV output under fluctuating environmental inputs. Concurrently, the Transformer module’s self-attention mechanism enabled modeling of long-range dependencies across sequences. Under identical hyperparameter settings, the proposed hybrid model achieved a Mean Absolute Percentage Error (MAPE) of 2.726%. These findings underscore the model’s robustness and superiority in capturing both short- and long-term dynamics of PV generation. A comparison was conducted between the xLSTM-Transformer method and the traditional LSTM and Transformer methods. The forecasting curves are visualized in Fig. 7, and the numerical results are presented in Table 2.

Forecasting curve of xLSTM-Transformer.

To further validate the effect of TimeGAN-based data augmentation, we trained two identical backpropagation neural network models using the original dataset and the augmented dataset, respectively, and tested both on the same test set. As illustrated in Figure 8 and compared in Table 3, the model trained on TimeGAN-augmented data achieved a MAPE of 2.726%, while the model trained on the original data yielded a significantly higher MAPE of 9.423%. This indicates that the synthetic sequences generated by TimeGAN are not only structurally consistent with real data but also substantially enhance the learning effectiveness of downstream models. The results confirm the practical value of TimeGAN in expanding training samples and improving generalization in photovoltaic forecasting tasks.

Comparison of model predictions before and after data enhancement of the training set.

Discussion

The results of this study highlight the effectiveness of integrating data augmentation and hybrid deep learning architectures for photovoltaic power forecasting. Compared with traditional sequence models, such as standalone LSTM or Transformer networks, the proposed framework benefits from the complementary strengths of xLSTM in capturing multi-scale temporal patterns and Transformer in modeling long-range dependencies. In addition, the incorporation of TimeGAN significantly enhances model generalization by enriching training data with realistic synthetic samples, particularly under extreme fluctuation scenarios.

Compared with recent studies on photovoltaic forecasting and hybrid learning frameworks, the proposed approach demonstrates competitive or superior performance while maintaining a relatively simple and interpretable architecture. Nevertheless, several limitations remain. First, the current framework is validated on data from a single photovoltaic cluster, and its scalability to multi-site or large-scale systems requires further investigation. Second, external uncertainty sources such as numerical weather prediction errors are not explicitly modeled. Future work will focus on extending the framework to multi-site cooperative forecasting, incorporating probabilistic weather forecasts, and deploying the proposed method in real-time power system operation environments.

Conclusion

This study proposed a novel hybrid TimeGAN–xLSTM–Transformer framework for photovoltaic power forecasting under complex and uncertain environmental conditions. By integrating TimeGAN-based data augmentation with multi-scale temporal feature extraction and attention-based dependency modeling, the proposed approach effectively addresses data scarcity, nonlinear dynamics, and long-range temporal dependencies inherent in photovoltaic power generation. Experimental results based on real-world operational data demonstrate that the proposed framework significantly outperforms conventional LSTM and Transformer baselines in terms of RMSE, MAE, and MAPE. The findings confirm that combining generative modeling with hybrid sequence learning provides a robust and accurate solution for photovoltaic power forecasting, offering practical value for renewable energy integration and power system operation.

Data availability

All data generated or analysed during this study are included in this published article.

References

He, Y. & Li, H. Probability density forecasting of wind power using quantile regression neural network and kernel density Estimation. Energy. Conv. Manag. 164, 374–384 (2018).

Sun, G. & Jiang, C. Wind power prediction by cascade cluster method and wavelet neural network. Acta Energiae Solaris Sinica. 42 (3), 56–62 (2021).

Wang, X. et al. VMD-GRU based short-term wind power forecast considering windspeed fluctuation characteristics. J. Electr. Power Sci. Technol. 36 (4), 20–28 (2021).

Xue, H. et al. Using of improved models of Gaussian processes in order to regional wind power forecasting. J. Clean. Prod. 262, 121391 (2020).

Niu, D. et al. Point and interval forecasting of ultrashort-term wind power based on a data-driven method and hybrid deep learning model. Energy 254, 124384 (2022).

Liao, Q. S. et al. Distributed photovoltaic net load forecasting in new energy power systems. J. Shanghai Jiao Tong Univ. 55 (12), 1520–1531 (2021).

Zhang, D. et al. A novel combined model for probabilistic load forecasting based on deep learning and improved optimizer. Energy 264, 126172 (2023).

Huang, Q. & Wei, S. Improved quantile convolutional neural network with two- stage training for dailyahead probabilistic forecasting of photovoltaic power. Energy. Conv. Manag. 220, 113085 (2020).

He, Y. et al. Short-term power load probability density forecasting based on GLRQ- stacking ensemble learning method. Int. J. Electr. Power Energy Syst. 142, 108243 (2022).

Fan, H. et al. Fluctuation pattern recognition based ultra-short-term wind power probabilistic forecasting method. Energy 266, 126420 (2023).

Yoon, J., Jarrett, D. & Van, M. Timeseries generative adversarial networks. Advances in Neural Information Processing Systems, 32, (2019). (2019).

Dutta, R., Chakrabartl, S. & Sharma, A. Topology tracking for active distribution networks. IEEE Trans. Power Syst. 36 (4), 2855–2865 (2021). (2021).

E. Yousefi. et al. A novel long-term water absorption and thickness swelling deep learning forecast method for corn husk fiber-polypropylene composite. Case Studies in Construction Materials. 17, e01268 (2022).

Zhang, F. et al. Ultrasonic lamination defects detection of carbon fiber composite plates based on multilevel LSTM. Struct. 327, 117714 (2024).

Y. Jian. et al. A novel bidirectional LSTM network model for very high cycle random fatigue performance of CFRP composite thin plates. International Journal of Fatigue. 190, 108627 (2025).

Li, W. et al. Chinese named entity recognition based on multi-level representation learning. Sci. 14 (19), 9083 (2024).

Cao, W. et al. Semi-supervised intracranial aneurysm segmentation via reliable weight selection. Vis Comput. 41, 5421–5433 (2025).

Ladjal, B. et al. Hybrid deep learning CNN-LSTM model for forecasting direct normal irradiance: a study on solar potential in Ghardaia, Algeria. Sci. Rep. 15, 15404 (2025).

Cao, K., Zhang, T. & Huang, J. Advanced hybrid LSTM-transformer architecture for real-time multi-task prediction in engineering systems. Sci. Rep. 14, 4890 (2024).

Yang, M. et al. T.Ultra-short-term prediction of photovoltaic cluster power based on Spatiotemporal convergence effect and Spatiotemporal dynamic graph attention network. Renew. Energy. 255, 123843 (2025).

Wei, T. et al. City-scale roof-top photovoltaic deployment planning. Appl. Energy. 368, 123461 (2024).

Wang, S. et al. A thyristor-based solid-state DC circuit breaker with a three-winding coupled inductor. IEEE Trans. Power Electron. 40 (4), 6192–6202 (2025).

Zeng, Z. & Goetz, S. M. A general interchanged interleaving carriers for eliminating DC/low-frequency Circulating currents in multiparallel three-phase power converters. IEEE Trans. Power Electron. 39 (10), 12323–12335 (2024).

Scarrica, V. M. & Staiano, A. Learning from outputs: improving multi-object tracking performance by tracker fusion. Technologies 12 (12), 239 (2024).

Funding

Inner Mongolia Power Company 2025 Science and Technology Project “Research and Application of Key Technologies for Distributed PV Hosting Capacity Assessment and Voltage Active Support in Distribution Networks for New-Type Power Systems” (Project No.: 2025-3-9).

Author information

Authors and Affiliations

Contributions

B.S.C. and J.Z.S. conceived the study, developed the methodology, and wrote the original manuscript. C.H.Z. contributed to the manuscript’s design, review, and editing. Y.H.W., H.W.N., and X.D.Y. processed the experimental datasets and conducted the formal analysis. G.D.W. and B.L. supported the research implementation and validation. All authors reviewed and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Chu, B., Shu, J., Zhao, C. et al. A hybrid TimeGAN–xLSTM–Transformer framework for photovoltaic power forecasting under complex environmental conditions. Sci Rep 16, 8782 (2026). https://doi.org/10.1038/s41598-026-36073-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-026-36073-5