Abstract

Intelligent behavior necessitates an adaptive integration of feedback. It is well-known that animals asymmetrically learn from positive and negative feedback. While asymmetrical learning is a robust behavioral effect, the latent computations behind how animals represent their environments and use this to differentially weight wins and losses is poorly understood. Here we tested whether and how uncertainty and reward history modulate the weights placed on wins and losses using a behavioral data set collected in rats. We propose a reinforcement learning model that integrates uncertainty history via an unsigned average reward prediction error and a separate subjective reward history component. We showed that in a dynamic probabilistic reversal learning task with blocks of variable reward predictability, ongoing estimation of uncertainty history and reward history both distinctly influenced rats’ sensitivity to wins and losses. In more predictable environments, and under low uncertainty levels, i.e., when rats were certain in making ‘correct’ choices, rats weighted wins more than losses, as indicated by a higher win-stay, and lower lose-shift probability. This asymmetrical learning strategy enabled rats to remain with the correct action, while discounting the influence of rare losses. Further, male rats were more impacted by their uncertainty history when making win-stay decisions compared to females. Hence, we found sex-specific contributions of these latent computations in modulating behavior. We overall demonstrate that asymmetrically weighting wins and losses could form an important behavioral strategy when adapting to ongoing changes in reward and uncertainty history.

Similar content being viewed by others

Introduction

Triumph and defeat are inevitable consequences of an ever-changing environment. Intelligent behaviors necessitate an adaptive integration of these differentially valanced outcomes. It is well-known that animals do not learn symmetrically from wins and losses, but that they generally learn more from positive outcomes, compared to negatives outcomes1,2,3,4,5,6. Previous work has reasoned that in several contexts, this positivity bias is advantageous to the animal as it makes them less susceptible to unreliable stimuli in their environments which in turn helps them maximize rewards4,6,7. However, the environment is constantly changing and requires that the animal keep track of these changes and adapt behavior accordingly. This therefore raises the question of which facets of the changing environmental statistics are relevant to the animal when differentially integrating positive and negative outcomes to adapt behavior.

According to optimal foraging theory8,9,10,11, animals track changing reward histories, and use this to make their stay and switch behaviors12. Such adaptations allow animals to remain in richer environments. Other work suggests that animals track the changing uncertainties, defined as the variability/predictability of the environmental structure13, which could also produce adaptive behaviors by gating learning in proportion to outcome reliability14,15,16. However, it is yet to be determined if animals adapt to the changing uncertainty and reward history by asymmetrically weighting wins and losses. It is also currently unclear if uncertainty and reward history play interactive roles or are independently used to adapt these learning strategies. Hence, our overall aim was to investigate whether and how reward history and uncertainty history are dynamically used to influence an animal’s sensitivity to wins and losses. We addressed this aim by using a dynamic probabilistic reversal learning task with blocks of varying reward predictability (i.e., stochasticity). There is also increasing evidence for sex differences in reward guided behaviors in rodents and humans17,18,19,20,21,22, however, it is less understood if distinct influence of uncertainty and reward history states may underlie these behavioral differences. Hence, we tested for the possibility of divergent impact of these environmental statistics on males and females, and whether this may underlie some behavioral differences.

We used computational modelling of behavioral data within the reinforcement learning framework to address these questions23. At the heart of these algorithms is the notion that a surprising outcome generates a learning signal. This learning signal is termed a reward prediction-error (RPE). Importantly, how much an animal learns from or weights their RPE is determined by the learning rate, which is often a fixed value. Previous work has expanded on the standard reinforcement learning models in an attempt to capture how this learning rate parameter may be dynamically adjusted to capture the differential weighting of RPEs or learning based on the experienced environmental statistics that included aspects of uncertainty and reward rate12,13,15,16,24,25,26,27,28. These models greatly increased understanding of uncertainty and reward history gating of learning. However, a critical, yet missing aspect in these models is that the uncertainty history and reward history each may differently influence how much weight is placed on negative and positive feedback, and this additional component could provide mechanistic insights in explaining adaptive behaviors.

To compute an animal’s subjective estimate of their reward history, we used a global reward state (GRS) computation, reflecting the animal’s trial-by-trial estimate of their environmental richness12,26. This term captures recency weighted reward history, unlike standard reward averages. Further, to compute an estimate of the animal’s represented uncertainty levels, we computed an unsigned average RPE (avgRPE). The concept of an unsigned avgRPE possibly reflecting an animal’s uncertainty state was introduced by Soltani and Izquierdo13; however, it is yet to be empirically determined how avgRPE may bias an animal’s sensitivity to wins and losses. We hypothesized that the unsigned avgRPE and the GRS computations together may enable subjects to represent relevant facets of their changing environmental statistics, which can then be used to asymmetrically (or symmetrically) gate learning from positive and negative outcomes to adapt behavior.

Our results show that, as expected, subjects do learn more from wins than losses in the probabilistic reversal learning procedure. However, the underlying reasons for this asymmetry vary depending on the distinct subjective reward rates and reward uncertainties present in the environment. Rats used their uncertainty history to down-weight the influence of unreliable losses, and increase the influence of reliable wins, particularly when their environment had a clearer pattern (i.e., less stochasticity). On the other hand, rats were more sensitive to wins when they were in a rich environment (i.e., high reward history state), irrespective of environmental stochasticity (i.e., predictability of rewards) – overall rendering rats less likely to switch from an advantageous action. Further, both males and females showed similar influence of reward and uncertainty histories when weighting losses, however, males were more influenced by uncertainty history, compared to females, when making decisions based on wins. Reward history and uncertainty state also interacted to shape decision strategies based on wins, but specifically in males, and in an environment with low stochasticity. These findings together demonstrate that ongoing estimations of uncertainty and reward history differentially impact asymmetrical learning strategies. Because asymmetrical learning strategies change based on highly relevant environmental statistics reflecting likelihood and magnitude of reward, these results suggest that asymmetrical learning is not a maladaptive bias but may form a part of an animal’s adaptive behavioral strategy.

Results

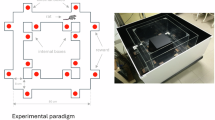

To address our hypotheses, we analyzed data from our previous study (Cheng et al.29 ; only the rats not exposed to ethanol were analyzed here) where we had trained water-restricted Long-Evans rats (n = 14; 9 males and 5 females) on a dynamic probabilistic reversal learning (dynaPRL) paradigm that had blocks of varying reward probability contrasts between the two actions (Fig. 1a and b). A total of 396 sessions were analyzed across 14 rats. There were three different reward probability block types that varied in their reward stochasticity. In the first block type, action one yielded a reward 80% of the time, and action two was rewarded 10% of the time. This was a high contrast block (low stochasticity), as the reward probability difference between the two actions was high. The second block type was a low contrast block (high stochasticity), where action one had a 60% reward probability, and action two was at 30%. Lastly, there was a block with no contrast, with action one and two each having a 45% reward probability (Fig. 1b; also see Methods for details on the task). These block types were switched within session every 15–30 trials, and male and female rats overall adapted to these reversals by the 6th trial (Fig. 1c). Such a design enabled us to investigate how rats used unsigned avgRPE and GRS to differentially weight wins and losses when the environment has a somewhat clear pattern (i.e., in the high contrast block) and when there is a higher level of stochasticity in the environment (i.e., the low contrast and no contrast block). Importantly, we refer to the lack of reward (i.e., omissions) as a loss and this is conceptually distinct from losses defined as a negative outcome (i.e., punishments).

Sex dependent asymmetrical learning behaviors and computations. (a) Reward choice under changing reward probabilities within the DynaPRL task in which rats initiated trials via magazine entry, following which two levers (left and right) are inserted, and rats made their decision. Outcomes were cued with a clicker noise if rewarded or white noise if unrewarded. The reward was ~ 33μL of a 10% sucrose solution. (b) There were three distinct block types based on their reward probability contrasts. The high contrast block had a p(reward|A1) = 0.8 and p(reward|A2) = 0. 1. The low contrast block had a p(reward|A1) = 0.6 and p(reward|A2) = 0.3. The block with no contrast had a 0.45 probability of reward for both actions. (c) the reversal curve plotted based on p(perseveration) (i.e., likelihood of choosing the previous lever) score for each trial, across all block types. Males and females both reverse by the 6th trial, with females showing a lower probability of repeating the previous best choice (p(perseveration) score) from 5th trial onwards compared to males (green dots are post-hoc tests showing a significant difference between males and females). (d) Overall win-stay and lose-shift probabilities, across all block types. Both sex groups win-stay more and lose-shift less in our task, however males had a higher p(WS) and a lower p(LS) compared to females. Each dot represents an individual rat, and error bars are standard error of the mean. (e) Asymmetrical value updating model where chosen and unchosen wins and losses had distinct learning and value decay rates as free parameters (f–i) Posterior densities from the hyperparameter of sex-group differences for the four value updating parameters from the reinforcement learning model in e. Rightward shifts (above 0) indicate higher parameter values for males compared to females, and the opposite for leftward (below 0) values. Horizontal lines below represent 80–95% highest density interval. Males overall had a higher learning rate from positive (alpha +) and negative (alpha-) outcomes, compared to females. Males also had a higher value decay rate for unchosen action when the chosen action’s outcome was negative (1-gamma-), but similar value decay rates for the unchosen action when the chosen action’s outcome was positive (1-gamma +). Abbreviations: dBF = directed Bayes Factor; p(R|A1) = probability of reward given action 1 was taken; p(R|A2) = probability of reward given action 2 was taken. * p < 0.05.

Rats were more sensitive to wins and less sensitive to losses, and this asymmetrical learning effect was stronger in males compared to females

We first asked whether rats generally had different sensitivities to wins and losses in our task, and whether this effect was sex-specific. To study these win/loss sensitivities, we calculated the overall probability of win-stays (WS) and lose-shifts (LS) for each rat. Rats overall had a higher WS probability compared to the LS probability (Fig. 1d, main effect; F(1775) = 10,604.41; p = 2.2e−16), indicating that rats were more sensitive to wins compared to losses in our task. Second, there was an interaction between the WS/LS factor and sex (F(1775) = 121.22, p = 2.2e−16). Subsequent t-tests showed that males had a higher WS probability (p = 0.004, Cohen’s d = 0.91), but a lower LS probability (p = 0.02, Cohen’s d = 0.70), compared to females. This result suggests that males had a greater sensitivity to wins and a lesser sensitivity to losses, compared to females.

To further probe the different win and loss sensitivities in males and females, and the possible computational processes underlying these differences, we used a reinforcement learning model that had four key parameters for updating action value from wins and losses (Fig. 1e). The first two were learning rates that updated values for chosen actions from wins and losses (α + and α − , respectively). The next two were value decay rates for the unchosen actions when the chosen outcome was a win or loss (1− γ+ and 1− γ-, respectively). Since we were specifically interested in comparing the parameter estimates between males and females, we used group-level hierarchical model fitting29,30. Here, the best fit of each rat’s parameters was determined by the group-level (male or female) hyperparameters. The key hyperparameter was the group mean difference, and this gave a posterior density function indicating whether the parameters of interest (learning and value decay rates from wins and losses) were different between the two sex groups, and in which direction (see Methods). We found that males had a right-skewed density function for the group mean difference hyperparameter for the learning rate from wins (α +), indicating that males updated more from wins compared to females. The strength of this rightward shift was quantified using directed Bayes Factor (dBF), where males were approximately 11 times more likely (dBF = 11.31) to have a higher α + than a lower α + , compared to females (Fig. 1f). Males were also more likely to have a higher learning rate from negative outcomes (α-; dBF = 4.47; Fig. 1g). However, despite having higher learning rates, males had a higher value decay rate from losses (dBF = 20.98; Fig. 1i), compared to females, with almost no sex differences in value decay rate for positive outcomes (dBF = 1.04; Fig. 1h). These results collectively suggest that a higher value decay from negative outcomes in males may explain their increased sensitivity to wins, but reduced sensitivity to losses, despite having a higher learning rate for both outcomes. This interpretation is consistent with our findings that males have a higher win sensitivity and lower loss sensitivity based on the WS/LS analysis (Fig. 1d). Further, simulations with the estimated parameters also captured the WS/LS behaviors and the sex differences from Fig. 1d (see Figure S3). Lastly, latency data between the two sex groups was also analyzed (see Figure S4; supplementary materials). Interestingly, males were faster than females to initiate the current trial if the previous trial was a loss (Figure S4c). Hence, a loss did not reduce the motivation to initiate the next trial in males as much as it did for females. This result is consistent with our finding that males were less sensitive to losses (i.e., had a lower LS probability) compared to females.

Asymmetrical learning strategies enabled rats to remain with the ‘better’ high reward probability action by increasing win sensitivity and decreasing loss sensitivity from these actions

Given that rats asymmetrically learnt from wins and losses in our task, and that this effect was sex-specific (Fig. 1), we next asked whether rats used asymmetrical learning as part of their strategy to better adapt to the reversals. More specifically, we asked whether rats treated wins and losses differently in the trials immediately post-reversal (early phase; first 6 trials), where they had to learn the new action contingencies, versus the late phase where these action contingencies were most likely learnt (extended from the 7th trial until the end of the block). We found that rats had a higher WS probability in the late phase (p = 0.0001, Cohen’s d = 1.33), compared to early, with no differences in LS probabilities between phases (p = 0.95, Cohen’s d = 0.01), see Fig. 2a. Rats were therefore more sensitive to wins in the late phase of a given block. We reasoned that developing a strategy of increasing the WS probability in the late phase may serve the rat to remain with the ‘better’ high reward probability action, while also reducing the chances of incorrectly switching to the ‘worse’ low reward probability action based on rare losses. If this was indeed the case, we should find that the better actions (compared to worse actions) have a higher WS probability and a lower LS probability in the late phase. We therefore calculated WS and LS probabilities separately for ‘better’ and ‘worse’ actions (Fig. 2b left) in the late phase. We found that better actions did have a higher WS probability compared to worse actions (main effect of action type: F(1,36) = 57.53, p = 5.68e−09; Fig. 2c and d). Further, better actions also had a lower LS probability (F(1,36) = 436.29, p = 2.20e−12; Fig. 2e and f). Such a strategy could increase the likelihood of rats persisting with the better option.

Using asymmetrical learning strategies following reversals. (a) Rats were more likely to win-stay in the late phase of a given block (7th trial until end of the block), compared to the early phase (first 6 trials of a block). (b) To discern win and loss sensitivity between action types, we separately calculated the WS and LS probabilities for ‘better’ actions (which had a higher probability of reward), compared to ‘worse’ actions (which had a lower probability for reward). To better visualize if wins and losses were weighted asymmetrically between the two action types, we subtracted the WS and LS probabilities from the ‘better’ actions, compared to the worse. This subtraction gave an action difference score, where the further from zero, the more asymmetrically wins and losses are weighted between the two action types. In the high (c) and low contrast (d) blocks, both males and females were more likely to win-stay from better actions compared to worse (p = 0.0001, Cohen’s d = 3.23 and p = 0.01, Cohen’s d = 0.998, respectively). On the right of c and d are the action difference scores, also suggesting that better actions had a higher WS probability compared to worse. In the high (e) and low contrast (f) blocks, both males and females were less likely to lose-shift from better actions compared to worse (p = 0.0001, Cohen’s d = 8.73 and p = 0.0001, Cohen’s d = 2.93, respectively). On the right of (e) and (f) are the action difference scores, also suggesting that better actions had a lower lose-shift probability compared to worse. For specifically the high contrast blocks, males were less likely to lose-shift from better actions, compared to females (p = 0.0006, Cohen’s d = 3.30), indicating a divergence in the use of asymmetrical learning strategies between the two groups.

However, there was also a block and action type interaction for WS (F(1,36) = 16.07, p = 0.0003) and LS behaviors (F(1,36) = 107.46, p = 2.36e-12). Rats were overall more likely to use the asymmetrical learning strategy of increasing WS probability and reducing LS probability from better actions in the high contrast blocks (Fig. 2c–f). This pattern was further reflected in the action difference scores (Fig. 2b) adjacent to the probability figures (Fig. 2c–f), where a greater difference from zero indicates more asymmetry in WS/LS probabilities between the two actions. The high contrast blocks had a larger difference in WS (F(1,13) = 22.65, p = 0.0004) and LS (F(1,13) = 175.92, p = 6.24e−09) probability between the two actions which suggest that this asymmetrical learning strategy was used more when the environment had a clearer structure.

Further, there was a main effect of sex for WS behaviors (F(1,12) = 7.23, p = 0.02). Females had a lower WS probability than males, except for the ‘worse’ actions in the high contrast block (Fig. 2c). However, males and females had no significant differences in their action difference scores for WS behaviors in high (p = 0.26, Cohen’s d = 0.68; Fig. 2c, right) and low contrast blocks (p = 0.93, Cohen’s d = 0.05; Fig. 2d, right). This result indicates that while females did generally have a lower WS probability than males, they used the asymmetrical learning strategy of increasing the WS probability from better actions at a similar level to males. On the other hand, for LS behaviors, there was a sex and action type interaction (F(1,36) = 15.65, p = 0.0003). Males used the strategy of reducing their LS probabilities from better actions more so than females (p = 0.0006, Cohen’s d = 3.30; Fig. 2e, right), specifically in the high contrast block. Therefore, specifically for LS behaviors and in the high contrast block, males and females diverged in the use of their asymmetrical learning strategies between the two action types.

Our analyses here focused on win-stay and lose-shift behaviors; however, these behaviors could be biased by the overall stay/switch probabilities, irrespective of the outcome. Hence, we also compared win-stay and lose-stay probabilities (see Figure S5; supplementary materials). Subjects were generally more likely to win-stay compared to lose-stay, indicating sensitivity to the outcome. Further, previous work31,32 used information theory to combine win-stay and lose-shift probabilities into a single measure capturing reward-dependent strategies. One advantage of this metric is that it accounts for the overall probability of win and thereby better reflects strategy variability. We have also conducted these analyses (see Figure S6; supplementary materials) and find that males overall had a more consistent reward-dependent strategy compared to females.

In sum, males and females were more likely to WS, but less likely to LS from the better action in the late phase of both high and low contrast blocks. This result supports our interpretation that an asymmetrical learning strategy may serve the rat to remain with the ‘better’ high reward probability action, while also reducing the chances of being incorrectly influenced by the rare losses. As might be expected, we found that this asymmetrical learning strategy is used most in the high contrast block where there is a clearer task structure, and it is easier for the rat to dissociate the two actions.

Using unsigned avgRPE as a proxy for the rats’ represented uncertainty state, and the global reward state as a proxy for represented reward rate

Our behavioral results have thus far suggested that rats used asymmetrical learning strategies to adapt to the reversals in our tasks (Figs. 1 and 2). Importantly, the use of these strategies was strongly modulated by the overall task structure. Rats would WS more and LS less when the optimal strategy to obtain reward was clearer, and when they had likely discerned the high reward probability action in the late phase of a block. We therefore asked what latent aspects of the task structure contributed to this observed behavior, using their choice and outcome histories. We used the unsigned avgRPE computation to represent uncertainty level and the GRS computation as a proxy for reward history (see Methods).

First, we aimed to establish whether the unsigned avgRPE state could reflect an aspect of the animal’s uncertainty levels. Previous uncertainty models used the current trial’s unsigned RPE, without the avgRPE component, to reflect an animal’s uncertainty state12,26. These models assume that the previous trial’s RPE does not explicitly influence the animal’s overall decision through their respective uncertainty calculations. We began by asking whether the previous trial’s RPE had any weight in the animal’s WS/LS decisions. To answer this, we fit a standard RL model, and calculated the k-value, which determines how much weight an animal placed on the current trial RPE relative to the previous trial RPE (see Eq. 13) to make their WS/LS decisions. Here, a k of 1 would indicate that the rat placed complete weight on the current trial RPE, however a k of 0.8 would indicate that the rat placed a weight of 0.8 on the current trial RPE and 0.2 on the previous trial RPE (Fig. 3a, also see Methods for details).

Modelling the uncertainty state as unsigned avgRPE and the reward rate as the global reward state computations. (a) Calculating the weight placed on the current trial RPE relative to the previous trial’s RPE (k-value) when making win-stay and lose-shift decisions. A value of 1 is complete weight on only the current trial RPE, with a value of below 1 contained some weight on the previous trial’s RPE. (b) The k-value with the highest correlation with win-stay and lose-shift behaviors was below 1, indicating that rats were influenced by the previous trial’s RPE when making their WS and LS decisions. (c) All rats had a k-value of below 1, indicating that they were all influenced by their previous RPE when making WS/LS decisions. Additionally, males used their previous trial’s RPE more than females to make their LS decision p = 0.0001, Cohen’s d = 2.68). (d) Bayesian Inference Criterion (BIC) score for the standard RL model which does not include an avgRPE component, compared to another model that does. The model which includes the concept of an avgRPE explained choice data better than the standard RPE model. (e) the block with no reward contrast has the highest levels of unsigned avgRPEs, and lower for the low contrast block, with the high contrast block having the lowest levels of unsigned avgRPEs. There was a significant main effect of block (p = 3.80e−10) (f) The global reward state (significant main effect of block; p = 1.56e−10) represents the same trend observed in empirical reward rate (significant main effect of block; p = 2.2e−16) shown in g. Abbreviations: avgRPE = average RPE, BIC = Bayesian information criterion, GRS = global reward state. * p < 0.05.

We found that a k of close to 0.80 and 0.68 produced the strongest correlation with WS and LS behaviors, respectively (Fig. 3b and c). These k-values suggest that rats use a combination of their current and previous trials’ RPE to make their WS and LS decisions in this task. There was also a main effect of sex (F(1,12) = 13.71, p = 0.003) and an interaction between WS/LS factor and sex (F(1,12) = 8.82, p = 0.01). We found that male rats weighted their previous trial RPE more so than females to make their LS decision (p = 0.0001, Cohen’s d = 2.68). This result is consistent with males having an overall lower LS probability, possibly suggesting that males may also need the previous trial’s RPE to be low or negative to make the decision to LS on the current trial.

To validate whether rats are using their RPE histories, and not just their current trial’s RPE to make decisions in this task, we fit the avgRPE RL model (see Methods) to their behavior. We found that the avgRPE model fit the data better than a standard RL model (Fig. 3c). This result suggests that a model where rats use their RPE histories captures the choice data better than a model where rats only use the current trial RPE to make their decision. Due to this better fit, we used the trial-by-trial avgRPE for subsequent analyses.

We next used this unsigned avgRPE to ask if it could be used as a proxy for the rat’s represented uncertainty state. To answer this, we examined the unsigned avgRPEs for the three different contrast blocks, with the idea that the high contrast block should have the least uncertainty (lowest levels of unsigned avgRPEs) and the block with no contrast should have the highest level of uncertainty (highest levels of unsigned avgRPEs). As can be seen in Fig. 3e, we found that the different block types had different levels of unsigned avgRPEs (significant main effect of block; F(2,24) = 61.15, p = 3.80e−10). Consistent with the notion that unsigned avgRPE may indicate uncertainty levels, the high contrast block had the lowest unsigned avgRPEs, followed by the low contrast block, with the block with no contrast having highest unsigned avgRPEs.

Next, we examined whether the GRS could be used as a proxy for the animal’s reward history in this task. We found consistent results between the modelled GRS (Fig. 3f; significant main effect of block; F(2,24) = 66.80, p = 1.56e−10) and the standard average reward (Fig. 3g; significant main effect of block; F(2,24) = 386.50, p = 2.2e−16), across the different block types. As expected, the high contrast block had the highest reward rate and GRS, suggesting that the GRS could be used as an animal’s trial-by-trial estimate of their environmental richness in this task. The GRS, like the standard reward averages, calculates reward history of a few trials back, however, unlike the reward rate, the GRS exponentially discounts previous rewards. Hence, recent rewards have a higher influence on the GRS calculation, which is not the case for the standard reward average calculation. The GRS model also fits the choice data better than a model that uses standard reward averages of the current and previous trial (see Figure S7; supplementary materials).

Uncertainty and reward history differentially influenced rats’ sensitivity to wins and losses, in a sex and block specific manner

Thus far, we found that rats used sex- and block-specific asymmetrical learning strategies in our task (Fig. 1 and 2) and have demonstrated that unsigned avgRPE and GRS computations may represent aspects of uncertainty and environment richness levels, respectively (Fig. 3). We next asked how avgRPE and GRS influence win and loss sensitivity, specifically for the ‘better’ high reward probability actions in the late phase, where rats were more sensitive to wins and less to losses. To answer this, we correlated trial-by-trial WS and LS, with their associated trial-by-trial unsigned avgRPEs and GRS computations (see Fig. 4a for an example of the changing trial-by-trial GRS and unsigned avgRPE computations and Fig. 4b for an illustration of the analysis; see Methods for further details).

Correlations with win-stay and lose-shift behaviors from the ‘better’ high reward probability actions in the late phase, with the unsigned avgRPE and the GRS. (a) an example rat’s changing trial-by-trial GRS and unsigned avgRPE trace based on the two actions and wins and losses. The grey shade highlights trials with several wins in a row, where GRS is increasing and unsigned avgRPE is decreasing. The second shade (red) highlights a series of losses, first three losses are from action 1 where GRS and unsigned avgRPE is decreasing, however, when the rat decided to shift, and take action 2 but still got a loss, the GRS continued to decrease, but unsigned avgRPE increased as this loss likely caused a negative RPE, making the rat more uncertain on the ‘correct’ action. (b) Illustrative summary of the correlational analyses presented in (c and d). (c) Unsigned avgRPE is negatively correlated with win-stay behaviors, suggesting rats were more likely to WS from better actions when uncertainty was low. This correlation was stronger in high contrast blocks, compared to low. Interestingly, females were less influenced by the uncertainty state when making WS decision, compared to males. (d) Rats were more likely to lose-shift from the better action when they were in a high uncertainty state, and this correlation was stronger for high contrast blocks. (e) WS behaviors positively correlated with the GRS state irrespective of the block type for males, but for females, the GRS influenced WS decisions more in the high contrast block, compared to the low contrast block. (f) GRS had a negative correlation with lose-shift behaviors, and this correlation was stronger in the low contrast blocks. Therefore, both males and females, were more likely to LS from better actions when they were in a low GRS state, especially when in a low contrast block. Abbreviations: |avgRPE|= unsigned average RPE, GRS = global reward state. * p < 0.05.

WS behavior was inversely correlated with unsigned avgRPE for the ‘better’ action in the late phase, and this inverse relationship was stronger for the high contrast block (main effect of block; F(1,12) = 288.94, p = 9.23e−10). Rats were therefore more sensitive to wins when they were in a low uncertainty state, i.e., environments with low stochasticity. There was also a significant main effect of sex (F(1,12) = 10.62, p = 0.0068) and a sex by block interaction (F(1,12) = 6.51, p = 0.025), where males had a larger negative correlation between unsigned avgRPE and WS for the high (p = 0.0014, Cohen’s d = 4.70; Fig. 4c) and low contrast block (p = 0.041, Cohen’s d = 2.69). Therefore, the uncertainty state influenced males more so than females when making WS decisions. Next, we found that indeed rats were more likely to WS when they had a high GRS (i.e., when they were in a rich environment; Fig. 4e), however, there were no significant differences based on block type for males (p = 0.24, Cohen’s d = 0.58). Interestingly, females had a stronger correlation with GRS and WS in the high contrast block, compared to the low contrast block (p = 0.0042, Cohen’s d = 2.23). Hence, GRS influenced females’ decisions to WS in a block specific manner, but males were influenced by the GRS similarly irrespective of the block type. This is in sharp contrast with the unsigned avgRPE correlations with WS behaviors, showing distinct block and sex differences. Thus, the detailed examination of trial-by-trial behavioral choices in relation to our measures of uncertainty and reward histories show that estimates of uncertainty and reward density have distinctive contributions to WS behavior in this task.

LS behaviors had a positive correlation with unsigned avgRPE for these ‘better’ actions, and more so in the high contrast block, compared to low (main effect of block type; F(1,12) = 76.28, p = 1.51e = 06). This positive correlation suggested that rats were more likely to maladaptively lose and shift away from the better action when their represented uncertainty state was high. In contrast, LS behaviors had a negative correlation with the GRS, and more so in the low contrast block compared to the high contrast block (main effect of block type F(1,12) = 48.07, p = 1.57e−05). This negative correlation suggested that rats were more likely to shift away from the ‘better’ action when the reward history was low, and this was especially the case for low contrast blocks. Lastly, we did not observe sex differences in the GRS and unsigned avgRPE’s influence with LS behaviors. We further analyzed matching behavior between the two sex groups and found that local rewards influenced males and females’ choices similarly (see Supplementary materials Figure S2). Therefore, while overall behaviors based on rewards were generally similar between males and females, they diverged in the use of latent uncertainty and reward history computations when making WS but not LS decisions.

In sum, rats were most sensitive to wins, and least to losses from the ‘better’ high reward probability actions when their represented uncertainty was low. Further, the uncertainty state was most relevant in making WS/LS decisions when there was less stochasticity in the environment (i.e., the high contrast block). In contrast, the GRS was more relevant for LS behaviors when stochasticity was high (i.e., the low contrast block). These results suggest that uncertainty computations modulate behaviors more when the environment has a clearer structure, but when a clear structure is not determined, rats are more likely to simply rely on their reward histories.

Further, there were key sex differences in the influence of GRS and uncertainty histories on WS but not LS decisions. The uncertainty state impacted males’ decisions to win-stay more heavily than females. Both males and females were similarly influenced by the GRS and uncertainty when making LS decisions, however, for WS decisions, the GRS influenced females in a block dependent manner. By tracking the animal’s trial-by-trial uncertainty and reward history states, we revealed district influence of these computations on their sensitivity to wins and losses, and ultimately their behavioral strategies when adapting to changing reward contingencies in our task.

The uncertainty state and the GRS interact to asymmetrically weight wins in a block and sex specific manner

The GRS and uncertainty states differentially modulated win and loss sensitivity from the ‘better’ high reward probability actions (Fig. 4) and this effect was dependent on the block type and sex. However, it is possible that these two computations may interact, or they may be independent computations when used to modulate win/loss sensitivities. To test this, we grouped all the ‘better’ actions in the late phase (separately for the high and low contrast blocks) as high and low unsigned avgRPE state and GRS, based on a median split. We then calculated the WS and LS probabilities based on four sub-groups: 1) high unsigned avgRPE and high GRS, 2) low unsigned avgRPE and low GRS, 3) high GRS and low unsigned avgRPE, and 4) low GRS and high unsigned avgRPE. For each of these four sub-groups, we calculated the WS and LS probabilities. There were very few LS trials in the late phase of the better action, especially for the high contrast block, and as a result we focused this analysis on the WS probability’s interaction between GRS and uncertainty states only.

For males (Fig. 5a), there was a main effect of the sub-group type (F(3,56) = 19.21, p = 1.09e-08), block type (F(1,36) = 8.39, p = 0.005) and a sub-group by block type interaction (F(3,56) = 3.77, p = 0.016). For the low contrast block, males would WS more when the GRS was high, irrespective of the uncertainty state. This result is consistent with our previous analysis in low contrast blocks where that GRS and WS have a strong correlation, but conversely, the uncertainty state and WS have a weaker correlation (Fig. 4c and e). However, for the high contrast blocks, males did not reduce their WS behaviors when the GRS and uncertainty were low (p = 0.0001, Cohen’s d = 2.04). Instead, for these high contrast blocks, males required both a low GRS and a high unsigned avgRPE state to reduce their WS probability (p = 0.0001, Cohen’s d = 2.40). This result indicates that the unsigned avgRPE and GRS states may interact when modulating WS behaviors specifically in high contrast blocks in males. Conversely, females (Fig. 5b) had a main effect of sub-group type (F(3,28) = 8.50, p = 0.0004), but no main effect of block (F(1,28) = 0.61, p = 0.44) or a block by sub-group type interaction (F(3,28) = 0.23, p = 0.89). Generally, a low GRS state alone, irrespective of uncertainty state, reduced the WS probability in females, and a high GRS increased this WS probability. Hence, females used the GRS more so than unsigned avgRPE to modulate their WS behaviors, which is also consistent with females having a lower correlation with unsigned avgRPE and WS behaviors, compared to males (Fig. 4c).

Block and sex specific interactive effects between unsigned avgRPE and GRS for win-stay behaviors from the high reward probability actions in the late phase. (a) For the low contrast blocks, males would generally WS according to the GRS, irrespective of the unsigned avgRPE state. However, for the high contrast block, males showed an interaction between the two computations, where they needed both, a low GRS and a high unsigned avgRPE to reduce their WS probability. (b) Females did not show this interaction effect, irrespective of the block type. Females were generally more likely to WS when the GRS was high, and less likely to WS when the GRS was low, irrespective of the unsigned avgRPE state.

Collectively, these results suggest that environmental stochasticity and sex play a role in shaping strategies involving the dynamic use of GRS and uncertainty states when modulating the weight placed on wins.

Discussion

Our primary aim was to investigate whether animals adapted to the changing levels of uncertainty and reward history by asymmetrically weighting their wins and losses. First, we established that rats indeed used asymmetrical learning strategies to adapt to the reversals in our task (Fig. 1 and 2). By modelling the latent ongoing trial-by-trial uncertainty and reward history estimates (Fig. 3), we found that these computations had distinct impact on rats’ WS/LS strategies (see Fig. 4). Specifically, reward history, operationalized as the GRS, had an influence on rats’ decision to WS, irrespective of environmental stochasticity for males, but not females. However, uncertainty history, operationalized as avgRPE, influenced an animal’s WS and LS behaviors particularly in environments with low stochasticity (i.e., high predictability of reward). Interestingly, uncertainty history influenced male rats’ decision to WS more than females. Further, uncertainty and reward history states interacted to modulate win-sensitivity specifically in male rats and in an environment with low stochasticity (Fig. 5). These results provide deeper insight into the latent computations behind the ubiquitous phenomena of asymmetrical learning and goes further to suggest that asymmetrical learning is not always a maladaptive bias but could form a part of an animal’s behavioral strategy to adapt (see Fig. 6 for a schematic summary of these findings).

A schematic summary of uncertainty history and reward history computations when used to asymmetrically modulate win and loss sensitivities for ‘better’ actions. (a) Both males and females LS more when the magnitude of the GRS is low, and WS more when the GRS magnitude is high. Further, both sex groups are more likely to WS when uncertainty is low, and more likely to LS when uncertainty is high. However, under the same uncertainty state, females are less likely to WS compared to males. (b) both sex groups put equal weight on the GRS computation when making WS decisions, however, the block type (i.e., environmental stochasticity) influenced the weight placed on GRS for females but not males. Under high and low stochasticity, both sex groups put less weight on uncertainty computations (compared to GRS) when making WS decisions. However, males put more weight on their uncertainty computations to make WS decisions, compared to females. (c) Both sex groups put more weight on the GRS in an environment with high stochasticity when making LS decisions. Further, both sex groups put more weight on uncertainty computations, compared to the GRS when in environments with low stochasticity. Hence, when there is more structure in the environment the uncertainty state influences LS decisions more than GRS, but conversely, when there is high stochasticity, rats rely more on their reward histories for make LS decisions.

Rats adapted their behavioral strategy by increasing their win sensitivity but reducing their loss sensitivity in the later phases of the block (Fig. 2), specifically for the ‘better’ action. Our interpretation here is that rats are using an exploitative strategy in the later phases, where they are more likely to have discerned which action is the high reward probability action (i.e., the ’better’ action). Similarly, previous work has found that animals adopt an exploitative strategy in the later phases of a block or experiment2,33,34. And consistent with previous work12, we found a positive correlation between the represented reward state (GRS) and the WS probability, and conversely, a negative correlation between GRS and LS. The positive correlation between WS and GRS can be interpreted under the optimal forging theory35,36, where a rich environment (i.e., a high GRS) should make the animal more likely to stay following a rewarded choice. Our results add that this increased win-sensitivity strategy is more likely used when the environment has a clearer structure (i.e., less stochasticity, in the high contrast blocks).

However, GRS did not fully explain behavior in this task. Interestingly, we found that the represented uncertainty levels (i.e., unsigned avgRPE) differentially modulated WS and LS behaviors in our task. The represented uncertainty state had a negative correlation with WS, but a positive correlation with LS, specifically for the ‘better’ actions. Therefore, when the animal was in a low uncertainty state, they were more likely to stay following a rewarded choice from the better action. However, when that same choice was unrewarded, they were less likely to shift. The Mackintosh15 learning model proposes that animals gate learning in proportion to the reliability of the outcome. In the context of a low uncertainty state, a loss from this better action may be considered noise or unreliable, since this is when they are most certain they are making the ‘correct’ choice. As a result, the animal may down-weight this loss and consequently shift less. The unsigned avgRPE may be a possible computation that enables an animal to mark a loss as unreliable and thereby update less from this.

We also found key sex differences. Male rats were overall more exploitative (based on a high WS and low LS probability) than females. In contrast, previous work found that female mice made more exploitative choices, acquiring a 2-armed restless bandit task in fewer sessions than male mice18,19. These discrepancies may be due species differences (mice in their experiments versus rats in the current study) but could also be due to the reversal learning nature of our task, which was not the case for the previous two tasks. Another possibility is that we did not focus on task acquisition, but on strategy once the task was learnt. Future work disambiguating the sex-dependent strategies used for task acquisition versus performance may help address these discrepancies, particularly in tasks with reversals.

Consistent with our findings, prior work by Harris and colleagues37 using a visual stimulus-based learning procedure found that WS (and WS from better actions) was higher in the high contrast condition (90% reward probability for selecting stimuli 1, and 30% for stimuli 2) compared to the low contrast condition (70% and 30% for stimuli 1 and 2, respectively). However, they did not find sex differences in WS behaviors, whereas we found that males were more likely to WS compared to females. In addition to the difference in spatial (current study) versus stimulus-based decisions, we exposed subjects to a relatively volatile environment, with reversals every 15–30 trials. Harris and colleagues37 did not have within sessions reversals, making for a relatively stable environment. Hence, these differences in environmental volatility may play a role in how males and females adapt their WS strategies.

Further work by Aguirre et al.17 found that in deterministic reversal learning, but not probabilistic, males had a faster value decay of unchosen actions. Here, we add that both sex groups had similar value decays when the chosen outcome was positive, but males had a higher value decay when the chosen outcome was negative (Fig. 1g and h). Collectively, these results suggest that sex differences in behaviors are significantly modulated by the task type, volatility, as well as the phase of the task (acquisition verses expert phase). We further sought to understand the possible latent computations behind differential win and loss sensitivities between the sex groups. Computational modelling suggested that the reward and uncertainty histories were used similarly to make LS decisions, however, the two groups diverged in the use of these latent computations when modulating WS decisions. Previous work has found that females are generally more risk averse than males21,38,39,40. Our finding that females use uncertainly history differently to males when making WS decisions is consistent with this previous work, overall showing sex differences in decision-making under probabilistic environments.

Here, we sought to understand how reward and uncertainty histories could modulate the weight placed on wins and losses and thereby produce adaptive behaviors. Hence, neural circuits known to encode aspects of trial history may be relevant modulators of WS/LS behaviors in our task. The orbitofrontal cortex (OFC) encodes aspects of uncertainty41,42 and value43, including reward histories44. Further, the anterior cingulate cortex (ACC) plays a role in modulating learning35,45,46 and decision strategies47,48 by encoding environmental statistics, including trial and choice histories49,50 and unsigned reward prediction-errors51,52. Hence, OFC and ACC may represent trial outcome histories, and these representations may then influence the weights on the current wins and losses (i.e., reward prediction-error signals) within the striatum53,54 and ventral tegmental area55 (VTA). In agreement, previous work has found that the medial prefrontal cortex (which includes the ACC) modulates the weight placed on reward prediction-errors in the VTA when the environment has uncertainty, but not when the environment is deterministic56.

In sum, we found that indeed animals do not meet with wins and losses the same. However, this asymmetry is a part of their behavioral strategy to adapt. We found that uncertainty and reward history may underlie this common phenomenon of asymmetrical learning, allowing the animal to adapt appropriately to the changing environment. While we show that asymmetrical learning can be used adaptively, there are cases where this process can go awry and produce decision-making pathologies, including addiction57,58,59, depression60, post-traumatic stress disorder61,62 and obsessive compulsive disorder63,64. Our results have implications for better understanding these decision-making pathologies and suggest that a misalignment of uncertainty and reward history computations may contribute to some aspects of maladaptive asymmetrical learning symptoms. Further, while it is known that reward history and uncertainty computations produce adaptive behaviors, we add that asymmetrically modulating wins and losses may be one possible mechanism by which this is achieved. Hence, future models of learning and decision-making that incorporate this additional computation may help better explain decision-making processes.

Methods

Subjects

We used a total of 28 Long-Evans rats (n = 18 males, n = 10 females; 10 weeks old upon arrival, Envigo). They were all single housed and kept in a constant temperature and humidity-controlled environment, with a 12-h light/dark cycle (light cycle starting 7am, and dark cycle starting at 7 pm). All experiments were conducted during their light cycle. The study is reported in accordance with ARRIVE guidelines.

Ethics statement

Experimental procedures were approved by the Johns Hopkins University Animal Care and Use Committee and are in agreement with recommendations in the Guide for the Care and Use of Laboratory Animals (Institute of Laboratory Animal Resources, Commission on Life Sciences, National Research Council, 2011).

Behavioral task

Initial training

Behavioral training and testing were done in a customized operant chamber (Med Associates). During both, training and testing periods, rats were water restricted while maintaining at least 90% of their ad-libitum weight. The first step of training involved learning to enter the reward magazine to collect a reward (100μL of 10% sucrose with tap water). Rewards were delivered randomly in the magazine, but with an average interval of 60 s. Next, rats learnt to initiate a trial through this magazine entry, which then triggered the insertion of two levers at the same wall as the magazine, with one lever to the left, and the second to the right of the magazine. At this stage, pressing any lever delivered a reward in the magazine with 100% probability. Following a successful completion of lever press training, rats performed a simple reversal learning task. Here, pressing one of the two levers led to a reward with 100% probability, and the other lever a 0% probability of reward. An omission (i.e., non-reward) was defined as a loss. The reward probabilities for the levers were reversed (the previously rewarded lever press now became the unrewarded press, and vice versa for the previously unrewarded lever press) randomly after passing a performance threshold of 0.75 (calculated as an exponential moving average of the previous 8 trials – set a priori). Hence, the reward probabilities reversed following a successful acquisition of the correct lever press to obtain a reward. Here, the reversal probability after reaching the threshold was set at 10%, and if a reversal did not happen within 20 trials of surpassing the performance threshold, a reversal was forced. This deterministic reversal learning training lasted between 1 and 3 days. Following this, the probabilistic reversal learning task was started (see below).

Probabilistic reversal learning (PRL) task

The PRL task consisted of one of the two levers having a 70% probability of being rewarded given a press of this lever, and the second lever being 10% reward probability given a press of this lever. The reversal rules were identical to the deterministic reversal learning task described above. The trial was signaled as ready to be initiated by a onset of the magazine light, and when the rat entered the magazine, the two levers inserted after a variable delay (100–1000 ms, with randomly selected intervals of 100 m). If rats did not press a lever within 30 s, this was termed as an invalid trial, and this trial was excluded from further analysis (less than 1% of trials for all rats). Immediately upon lever press, rewarded trials were indicated by two clicker sounds (0.1 s interval, generated by MED Associates, ENV-135 M), with unrewarded trials cued by 0.5 s of white noise (generated by MED Associates, ENV-225SM). Reward consisted of ~ 33μL of the sucrose solution, delivered in the magazine via a syringe pump (MED Associates, PHM-100). A rewarded trial was termed complete after the first exit by the rat from the magazine, having collected the reward. An unrewarded trial ended after the white noise cue presentation. The intertrial interval was 2.5 s. All rats received a total of 15 2-h sessions. See Fig. 1 for the basic task structure and Figure S1 (supplementary materials) for the data from this task.

Dynamic probabilistic reversal learning (DynaPRL) task

Of the 28 rats from the PRL task, half of these (n = 14, 9 males and 5 females) advanced to training on the dynaPRL task. Only half the number of rats were used for analysis in the dynaPRL task here as the other half of rats were used for an ethanol procedure in another study29. For our current study, we only analyzed rats with no ethanol exposure. There were no further rat exclusions in all analyses reported here. We analyzed a total of 396 sessions across 14 rats. The overall task structure of the dynaPRL task is identical to the PRL task, with three important differences. First, the reward probabilities of the two actions were different. The dynaPRL task consisted of three block types (distinguished by their reward probability, given the lever press). First, one block type had a 45% reward probability if either of the two levers were pressed (termed a no action contrast (NC) block). The second block type was a 60% reward probability for one lever press, and 30% for the other (termed low action contrast (LC) block). The third block type was 80% reward probability after pressing one lever, and 10% for pressing the other (termed high action contrast (HC) block). Importantly, each block diverged in the difference – i.e., the contrast – between the two reward probabilities, hence labeled as the high, low and no contrast blocks (Fig. 1b). The second difference between the PRL and dynaPRL tasks were the conditions for block transition. Here, a block switched every 15–30 trials, with the exception of when rats made at least four consecutive incorrect choices in the blocks with an action contrast (LC and HC blocks). The block transitions occurred randomly, but with two rules imposed, 1) neutral blocks were not repeated, and 2) the position of the lever with the higher reward probability was not repeated. The third key difference between the two tasks was that the dynaPRL task also had a reward magnitude manipulation, but only in the HC and LC block types. Specifically, after 12 trials within a block had passed, in 40% of these trials, the reward was either halved (16.5μL) or doubled (66μL). We did not observe a significant difference in the probability of stay and switch behaviors based on these reward magnitude manipulations (see Figure S8; supplementary materials). Consequently, these trials were also included in our analyses. Rats performed an average of ~ 30 sessions (396 sessions total across all 14 rats), with each session lasting 2 h.

Analyses

Model-independent analyses

All the model-independent analyses were focused on calculating the win-stay (WS) and lose-shift (LS) probabilities. An action was termed WS if that action was rewarded, and then was subsequently repeated. An action was termed LS if an action was not rewarded, and the subsequent action was changed. In general, WS probabilities were calculated as the number of WSs, divided by the number of wins in the trials of interest. Similarly, the LS probability was calculated as the number of LSs, divided by the number of losses, in the trials of interests.

In subsequent analyses we calculated the WS and LS probabilities separately for early and late phases of a given block (Fig. 2). The early phase included the first 6 trials after a block transition, and the late phase extended from the 7th trial until the end of the block. Therefore, here, we calculated the total number of WSs or LSs in a given phase of a given block, and divided by the number of wins or loses within that block/phase. For example, when calculating the WS probabilities of the early phase of the HC block, we first calculated the number of WSs in the first six trials of this block for every session. We then summed all the number of WSs from every session in the early phase of this HC block. Next, we calculated the number of wins in the early phase of every high contrast block, in every session. This was then summed. Therefore, to calculate the probability of WS in the early phase of the high contrast block, we divided the number of WSs here, divided by the total number of wins here. The same analysis was used for the other block types, and for the late phase.

We also did an analysis where WS and LS probabilities were calculated separately given the action type (high or low reward probability action), the block type (HC, LC and no contrast) (Fig. 2). Here, a similar principle was applied as on the above analyses, but this time also taking into account the action type. For example, the probability of WS from the high reward probability action in the early phase of the high contrast block was calculated as following. For each session, we first calculated the number if WSs given the high reward probability action was made, and in the early phase of the HC block. We then summed this across all sessions, to get the total number of WSs given the high reward probability action was made in this block and phase type. Next, we calculated the number of wins from this high reward probability action in this phase and block type, of a given session, and then summed this across all sessions. The WS probability was therefore calculated as the number of WSs from the high reward probability action in the early phase of the HC block, divided by the number of wins from this action/phase and block type. The probability of WS and LS was calculated similarly for all the block, phase and action types.

Lastly, we calculated the p(preservation) (i.e., the probability of persisting the with same choice) pre- and post-reversal to asses adaptation to the reversals in our task (Fig. 1c). This p(preservation) score was calculated trial-by-trial using the ratio of correct actions (i.e., probability of the correct choice) and the total number of blocks. However, in the no contrast block, there is no ‘correct’ choice, and we therefore randomly selected one of the actions as ‘correct’ for the calculation. There were five trials in the pre-reversal stage, and twelve trials post-reversal.

Model dependent analyses

Models used

We used four different models in our analyses. These models were all used as analytical tools to understand asymmetrical learning processes from the empirical data, as supposed to mechanistic instantiations of cognitive hypotheses65. The first model was a standard reinforcement learning (RL) model which consisted of the learning rate (α) and the inverse temperature (β) as free parameters. Here, the trial-by-trial reward prediction error (RPE) was calculated as:

where t is the trial, R is the reward and V is the value. The value was then updated as the following:

We used a softmax function mechanism to calculate the choice probability of the two actions, dependent on the value of the two competing actions:

where \(p\left(a,t\right)\) is the probability of taking action a, at trial t, and β is the inverse temperature (where low values give close to random action selection, and higher values increases the probability of selecting the action with the highest values).

The second model used was the average RPE (avgRPE) RL model. Here, we calculated the RPE as in Eq. 1 above, and using the RPE from here, we calculated the exponential-like avgRPE as below:

where \(\alpha\) is the learning rate and, hW is the history weight which was a free parameter, bounded between 0 and 0.75. A high hW indicated more weight placed on the previous avgRPE, and conversely, a low value here indicated a lower weight placed on the previous avgRPE. The value was then updated as below:

Importantly, if hW is equal to 0 , the model becomes the classic RL model (model 1). The choice probability calculation used was identical to the classic RL model as above, using the softmax action selection equation (Eq. 3). This avgRPE RL model therefore had three free parameters (α, β and hW).

The third model used was the global reward state model (GRS), also used by12. Here, the reward trace (R-trace) was calculated as an exponential moving average of the rewards as below:

Here \({\alpha }_{R}\) is the weight placed on the reward history, and was bounded between 0 and 1. Using this R-trace, the RPE was then calculated as below:

Here, \({w}_{R}\) was the weight placed on the R-trace calculation and was bounded between -1 and 1. The softmax action selection mechanism was used (Eq. 3), and the value was updated as in Eq. 2 above, however, this time using the RPE from Eq. 7. Overall, this third GRS model had 4 free parameters (α, β, \({w}_{R}\) and \({\alpha }_{R}\)).

The fourth model was a five parameter RL model, used specifically to understand general asymmetrical learning differences between the two sex groups. This model had two learning rate parameters (one for learning from positive outcomes; α + , and a second for learning from negative outcomes; α-). The next two free parameters were value decay rates from the unchosen actions (one decay rate for the unchosen positive outcome; γ + , and second for unchosen negative outcome; γ-). The 5th free parameter was the inverse temperature (β), used to select an action based on softmax action selection (Eq. 3). The value in this model was updated as following:

At trial t, if the chosen action is A1, and this chosen action was rewarded (R = 1), the value of the chosen action (A1), and unchosen action (A2) was updated as:

If the chosen action (A1) was unrewarded (R = 0) at trial t, the value was instead updated as:

Lastly, previous work66 has suggested that asymmetrical learning effects may be statistical artifacts due to the preservation tendencies (i.e., repeating the same choice irrespective of the outcome) if choice history is not modelled. We also ran this model with the choice history term added and found that our asymmetrical learning effects still held (see Figure S9, supplementary materials).

Model fitting and comparisons

All models, except the five parameter RL model, were fit on a session level, using the MTLAB (2024b) function fmincon, to find parameters that maximized the log-likelihood. Each session fit was repeated 10 times, and the parameters that yielded the highest log-likelihoods were then used for further analyses. Model comparison was done based on the Bayesian Information Criterion (BIC) score, calculated on a session level as:

where nLL is the negative log likelihood, nParams is the number of free parameters, and nObs is the number of observations.

Given that the five-parameter RL model had a relatively large number of free parameters and it specifically was used to compare the parameter estimates between the two sex groups, we used hierarchical model fitting and comparisons at the group-level such that the likelihood of over-fitting due to noise between individual rats is reduced30. Here, we constructed group-level (male and female) hyperparameters that then modulated the estimates for the five parameters for each rat in a given group. This procedure was done using the Matlab Stan interface (https://mc-stan.org/users/interfaces/matlab-stan). We estimated the best fitted model parameters using the overall choice and outcome data for each rat, per session. More specifically, we drew consecutive samples from the posterior density of the model parameters using Hamiltonian Markov chain Monte Carlo – which gave the density estimates for the best fitted model parameters per rat. Importantly, each rat had the parameter estimates for their five free parameters, all of which were drawn from the group-level hyperparameters (group mean (μ), group mean difference (δ), and variance (σ). In order to asses the magnitude of the differences between the parameter estimates between groups, we used the directed Bayes Factor (dBF) – which calculated the ratio of proportion of the group difference hyperparameter (δ) above zero, versus the proportion that is below zero. The larger the dBF magnitude, the greater the group differences between the parameter estimates of interest. We have previously used this model fitting approach, see29 for more details on this method. See Figures S10 and S11 (supplementary materials) for parameter recovery of the models used, and their relevant parameters.

Model-dependent correlation analyses with WS-LS behaviors

To discern whether rats only use the current trial RPE or a combination of the current and previous trial’s RPE in order to make their WS/LS decisions (Fig. 3b) , we used the equation below:

where wRPE is the weighted RPE based on the current and previous trial RPE. The RPEs here were estimated based on the classic RL model (see above). The k-value determined how much weight to place on the current trial RPE relative to the previous trial. A k of 1 would only place the weight on the current trial RPE, however a k of 0.8 would place a weight of 0.8 on the current trial RPE and 0.2 on the previous trial RPE. We therefore used Eq. 13, with k of between 1 and 0.5, in increments of 0.025, to generate trial-by-trial, wRPEs. After generating wRPEs with different ks, we used binomial logistic regression to correlate all the wRPEs (that were z-scored) with the rats’ WS and LS behaviors to determine the k-value that produced the strongest correlation (i.e., beta weight).

Statistics

All statistical analyses were done using a linear mixed effect model, using RStudio 4.1.2 (function “lmer”). In each case we input the factors of interest for that analysis (main effects and interactions), as specified in the results section for each analysis. Further, to account for the variability due to individual rats, we used the rat ID as a random effect in all our liner mixed effect models. The effect size was calculated as the Partial eta-squared (ηp2), with 0.01 indicating a small effect size, 0.06 indicating a medium effect size, and 0.14 indicating a large effect size. Where the linear mixed effect models yielded significant results, post-hoc analyses were done using t-test comparisons (function “emmeans”), adjusted for multiple comparisons using the Tukey method. To determine the effect size of the t-tests, we used Cohen’s d, where 0.2 indicated a small effect size, 0.5 indicated a medium effect size, and 0.8 indicated a large effect size.

Data availability

Data is available in the Open Science Framework repository via the following link- https://osf.io/34nq6/overview?view_only=2d9c8f0c4c224910bafbaaff056dd784

References

Farashahi, S., Donahue, C. H., Hayden, B. Y., Lee, D. & Soltani, A. Flexible combination of reward information across primates. Nat. Hum. Behav. 3, 1215–1224 (2019).

Jin, F. et al. Dynamics learning rate bias in pigeons: Insights from reinforcement learning and neural correlates. Animals 14, 489 (2024).

Lefebvre, G., Lebreton, M., Meyniel, F., Bourgeois-Gironde, S. & Palminteri, S. Behavioural and neural characterization of optimistic reinforcement learning. Nat. Hum. Behav. 1, 1–9 (2017).

Lefebvre, G., Summerfield, C. & Bogacz, R. A normative account of confirmation bias during reinforcement learning. Neural Comput. 34, 307–337 (2022).

Ohta, H. et al. The asymmetric learning rates of murine exploratory behavior in sparse reward environments. Neural Netw. 143, 218–229 (2021).

Palminteri, S. & Lebreton, M. The computational roots of positivity and confirmation biases in reinforcement learning. Trends Cogn. Sci. 26, 607–621 (2022).

Cazé, R. D. & Van Der Meer, M. A. A. Adaptive properties of differential learning rates for positive and negative outcomes. Biol. Cybern. 107, 711–719 (2013).

Behrens, T. E. J., Woolrich, M. W., Walton, M. E. & Rushworth, M. F. S. Learning the value of information in an uncertain world. Nat. Neurosci. 10, 1214–1221 (2007).

Charnov, E. L. Optimal foraging, the marginal value theorem. Theor. Popul. Biol. 9, 129–136 (1976).

Garrett, N. & Daw, N. D. Biased belief updating and suboptimal choice in foraging decisions. Nat. Commun. 11(1), 3417 (2020).

Wittmann, M. K. et al. Predictive decision making driven by multiple time-linked reward representations in the anterior cingulate cortex. Nat. Commun. 7(1), 12327 (2016).

Wittmann, M. K. et al. Global reward state affects learning and activity in raphe nucleus and anterior insula in monkeys. Nat. Commun. 11, 3771 (2020).

Soltani, A. & Izquierdo, A. Adaptive learning under expected and unexpected uncertainty. Nat. Rev. Neurosci. 20, 635–644 (2019).

Le Pelley, M. E., Mitchell, C. J., Beesley, T., George, D. N. & Wills, A. J. Attention and associative learning in humans: An integrative review. Psychol. Bull. 142, 1111–1140 (2016).

Mackintosh, N. J. A theory of attention: Variations in the associability of stimuli with reinforcement. Psychol. Rev. 82, 276–298 (1975).

Pearce, J. M. & Hall, G. A model for Pavlovian learning: Variations in the effectiveness of conditioned but not of unconditioned stimuli. Psychol. Rev. https://doi.org/10.1037/0033-295X.87.6.532 (1980).

Aguirre, C. G. et al. Dissociable contributions of basolateral amygdala and ventrolateral orbitofrontal cortex to flexible learning under uncertainty. J. Neurosci. 44, (2024).

Chen, C. S. et al. Divergent strategies for learning in males and females. Curr. Biol. 31, 39–50 (2021).

Chen, C. S., Knep, E., Han, A., Ebitz, R. B. & Grissom, N. M. Sex differences in learning from exploration. Elife 10, e69748 (2021).

Cox, J. et al. A neural substrate of sex-dependent modulation of motivation. Nat. Neurosci. 26, 274–284 (2023).

Lei, H. et al. Sex difference in the weighting of expected uncertainty under chronic stress. Sci. Rep. 11, 8700 (2021).

Van den Bos, R., Harteveld, M. & Stoop, H. Stress and decision-making in humans: performance is related to cortisol reactivity, albeit differently in men and women. Psychoneuroendocrinology 34, 1449–1458 (2009).

Sutton, R. S., Barto, A. G. Reinforcement Learning: An Introduction. IEEE Trans. Neural Netw. 9, (1998).

Berns, G. S., McClure, S. M., Pagnoni, G. & Montague, P. R. Predictability modulates human brain response to reward. J. Neurosci. https://doi.org/10.1523/jneurosci.21-08-02793.2001 (2001).

d’Acremont, M., Fornari, E. & Bossaerts, P. Activity in inferior parietal and medial prefrontal cortex signals the accumulation of evidence in a probability learning task. PLoS Comput. Biol. 9, e1002895 (2013).

Grossman, C. D., Bari, B. A. & Cohen, J. Y. Serotonin neurons modulate learning rate through uncertainty. Curr. Biol. 32, 586–599 (2022).

Simoens, J., Verguts, T. & Braem, S. Learning environment-specific learning rates. PLOS Comput. Biol. 20, e1011978 (2024).

Silvetti, M., Vassena, E., Abrahamse, E. & Verguts, T. Dorsal anterior cingulate-brainstem ensemble as a reinforcement meta-learner. PLOS Comput. Biol. 14, e1006370 (2018).

Cheng, Y., Magnard, R., Langdon, A. J., Lee, D. & Janak, P. H. Chronic ethanol exposure produces sex-dependent impairments in value computations in the striatum. Sci. Adv. 11, eadt0200 (2025).

Carpenter, B. et al. Stan: A probabilistic programming language. J. Stat. Softw. 76, 1 (2017).

Trepka, E. et al. Entropy-based metrics for predicting choice behavior based on local response to reward. Nat. Commun. 12, 6567 (2021).

Woo, J. H. et al. Mechanisms of adjustments to different types of uncertainty in the reward environment across mice and monkeys. Cogn. Affect. Behav. Neurosci. 23, 600–619 (2023).

Mah, A., Golden, C. E. M. & Constantinople, C. M. Dopamine transients encode reward prediction errors independent of learning rates. Cell Rep. 43, 114840 (2024).

Trudel, N. et al. Polarity of uncertainty representation during exploration and exploitation in ventromedial prefrontal cortex. Nat. Hum. Behav. 5, 83–98 (2021).

Kolling, N. et al. Value, search, persistence and model updating in anterior cingulate cortex. Nat. Neurosci. 19, 1280–1285 (2016).

Wallis, J. D. & Rushworth, M. F. S. Chapter 22 - Integrating benefits and costs in decision making. In Neuroeconomics 2nd edn (eds Glimcher, P. W. & Fehr, E.) 411–433 (Academic Press, San Diego, 2014).

Harris, C. et al. Unique features of stimulus-based probabilistic reversal learning. Behav. Neurosci. 135, 550 (2021).

Hales, C. A., Hwang, A., Hathaway, B. A. & Winstanley, C. A. Divergent effects of win-paired cues on learning from timeout penalties in female and male rats. Physiol. Behav. 299, 114979 (2025).

Truckenbrod, L. M., Cooper, E. M. & Orsini, C. A. Cognitive mechanisms underlying decision making involving risk of explicit punishment in male and female rats. Cogn. Affect. Behav. Neurosci. 23, 248–275 (2023).

Wheeler, A.-R., Truckenbrod, L. M., Boehnke, A., Kahanek, P. & Orsini, C. A. Sex differences in sensitivity to dopamine receptor manipulations of risk-based decision making in rats. Neuropsychopharmacology 49, 1978–1988 (2024).

Romero-Sosa, J. L., Yeghikian, A., Wikenheiser, A. M., Blair, H. T. & Izquierdo, A. Neural coding of choice and outcome are modulated by uncertainty in orbitofrontal but not secondary motor cortex. Nat. Commun. 16, 8931 (2025).

Zhang, Q. & Zhou, J. Adaptive reward representations integrate expected uncertainty signals in orbitofrontal cortex. Sci. Adv. 11, eadv9590 (2025).

Neurons in the orbitofrontal cortex encode economic value | Nature. https://www.nature.com/articles/nature04676.

Riceberg, J. S. & Shapiro, M. L. Orbitofrontal cortex signals expected outcomes with predictive codes when stable contingencies promote the integration of reward history. J. Neurosci. 37, 2010–2021 (2017).

Elston, T. W., Kalhan, S. & Bilkey, D. K. Conflict and adaptation signals in the anterior cingulate cortex and ventral tegmental area. Sci. Rep. 8, 11732 (2018).

O’Reilly, J., Schüffelgen, U. & Cuell, S. Dissociable effects of surprise and model update in parietal and anterior cingulate cortex. Proc. Of http://www.pnas.org/content/110/38/E3660.short (2013).

Walton, M. E., Croxson, P. L., Behrens, T. E. J., Kennerley, S. W. & Rushworth, M. F. S. Adaptive decision making and value in the anterior cingulate cortex. Neuroimage 36, T142–T154 (2007).

Kennerley, S. W., Walton, M. E., Behrens, T. E. J., Buckley, M. J. & Rushworth, M. F. S. Optimal decision making and the anterior cingulate cortex. Nat Neurosci 9, 940–947 (2006).

Bernacchia, A., Seo, H., Lee, D. & Wang, X.-J. A reservoir of time constants for memory traces in cortical neurons. Nat. Neurosci. 14, 366–372 (2011).

Powell, N. J. & Redish, A. D. Representational changes of latent strategies in rat medial prefrontal cortex precede changes in behaviour. Nat. Commun. 7, 1–11 (2016).

Hyman, J. M., Holroyd, C. B. & Seamans, J. K. A novel neural prediction error found in anterior cingulate cortex ensembles. Neuron 95, 447–456 (2017).

Holroyd, C. B. & Coles, M. G. H. The neural basis of human error processing: Reinforcement learning, dopamine, and the error-related negativity. Psychol. Rev. 109, 679 (2002).

Takahashi, Y. K., Langdon, A. J., Niv, Y. & Schoenbaum, G. Temporal specificity of reward prediction errors signaled by putative dopamine neurons in rat VTA depends on ventral striatum. Neuron 91, 182–193 (2016).

Activity in human ventral striatum locked to errors of reward prediction | Nature Neuroscience. https://www.nature.com/articles/nn802.

Schultz, W., Dayan, P. & Montague, P. R. A neural substrate of prediction and reward. Science 275, 1593–1599 (1997).

Starkweather, C. K., Gershman, S. J. & Uchida, N. The medial prefrontal cortex shapes dopamine reward prediction errors under state uncertainty. Neuron https://doi.org/10.1016/j.neuron.2018.03.036 (2018).

Kalhan, S., Redish, A. D., Hester, R. & Garrido, M. I. A salience misattribution model for addictive-like behaviors. Neurosci. Biobehav. Rev. 125, 466–477 (2021).