Abstract

In this paper, we construct a novel iterative scheme for approximating fixed points of generalized \((\alpha ,\beta )\)-nonexpansive mappings in the setting of a real Banach space. The proposed scheme not only generalizes but also unifies and extends several well-known fixed point iterative processes available in the literature. We establish both weak and strong convergence results under appropriate conditions. Furthermore, a comparative analysis of the rate of convergence is carried out using a carefully chosen numerical example, with the outcomes demonstrated through both tabular and graphical illustrations. In addition to convergence properties, we derive a data dependence result, offering insights into the stability of the proposed scheme with respect to perturbations in the underlying mapping. We further prove that the scheme satisfies \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability criteria, thereby enhancing its robustness in practical applications. To demonstrate the applicability of our results, we apply the analysis to a SEIR epidemic model governed by a Caputo-type fractional differential equation, showcasing the utility of the proposed method in the context of real-world dynamical systems. Our findings contribute to the advancement of fixed point theory and its applications in mathematical modeling, offering a flexible and powerful tool for analyzing complex nonlinear problems.

Introduction

Let \(\mathbb {E}\) be a subset of a uniformly convex Banach space \(\mathbb {X}\). A mapping \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) is called a generalized \((\alpha ,\beta )\)-nonexpansive mapping if there exist \(\alpha ,\beta \in [0,1)\) with \(\alpha +\beta <1\) such that, for all \(x,y\in \mathbb {E}\),

The class of generalized \((\alpha ,\beta )\)-nonexpansive mappings is broad and includes many well-known nonexpansive-type mappings, such as:

1. Nonexpansive mappings: \(\Vert \mathscr {G}x-\mathscr {G}y\Vert \le \Vert x-y\Vert\).

2. Suzuki nonexpansive mappings [1]:

$$\frac{1}{2}\Vert x-\mathscr {G}x\Vert \le \Vert x-y\Vert \implies \Vert \mathscr {G}x-\mathscr {G}y\Vert \le \Vert x-y\Vert .$$

3. Generalized \(\alpha\)-nonexpansive mappings [2]:

$$\Vert \mathscr {G}x - \mathscr {G}y\Vert \le \alpha \Vert x-\mathscr {G}y\Vert + \alpha \Vert y-\mathscr {G}x\Vert + (1-2\alpha )\Vert x-y\Vert .$$

4. Reich–Suzuki nonexpansive mappings [3]:

$$\tfrac{1}{2}d(x,\mathscr {G}x)\le d(x,y) \implies d(\mathscr {G}x,\mathscr {G}y) \le \alpha d(\mathscr {G}x,x) + \alpha d(\mathscr {G}y,y) + (1-2\alpha )d(x,y).$$

Hence, generalized \((\alpha ,\beta )\)-nonexpansive mappings provide a unified framework that extends these important classes.

Remark 1.1

When \(\alpha = 0\), the definition reduces to Suzuki’s condition (C). However, the converse does not hold (see [3]). Thus, the class of generalized \((\alpha ,\beta )\)-nonexpansive mappings properly contains the Suzuki nonexpansive mappings.

Fixed point theory, a central component of nonlinear analysis, provides essential tools for establishing the existence and uniqueness of solutions to a wide range of mathematical problems. Its applications are especially prominent in the study of fractional differential equations (FDEs), which model complex phenomena involving memory effects and non-local interactions [4,5,6,7,8,9]. A standard approach for analyzing FDEs is to convert them into equivalent integral equations using operators such as the Riemann–Liouville or Caputo fractional integrals. Once in integral form, fixed point theorems can be applied to study existence, uniqueness, and approximation of solutions [10].

Beyond abstract analysis, fixed point theory plays an important role in modeling real-world systems, including epidemic processes. In SEIR-type models, population dynamics are described through compartments: susceptible (S), exposed (E), infected (I), and recovered (R), together with extensions that incorporate additional groups such as vaccinated individuals (see [11,12,13]). Incorporating fractional derivatives enhances these models by capturing memory effects and historical dependencies, making them suitable for diseases with long incubation periods, complex transmission mechanisms, or sustained immunity.

Fixed point methods ensure the existence and uniqueness of solutions to these fractional-order epidemic models and support the development of iterative schemes for approximating compartment populations over time. Such numerical techniques enable simulation and forecasting of disease dynamics and evaluation of intervention strategies (see, e.g., [14,15,16,17,18,19,45]), providing a quantitative foundation for public health decision-making.

Fixed point theory also finds applications in other emerging areas, including fractional-order complex-valued neural networks [20], orthogonal interpolative iterative mappings [21], multivalued nonlinear dominated mappings [22], modeling in partial differential equations [23], and multivalued operators involving nonlinear contractions (see, e.g., [24,25,45]).

The aim of this paper is to introduce a new fixed point iterative scheme that converges faster than existing schemes in the literature for the class of generalized \((\alpha ,\beta )\)-nonexpansive mappings in uniformly convex Banach spaces. We establish weak and strong convergence, \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability, and data dependence results. The rate of convergence is demonstrated through a numerical example comparing several iterative methods. Applications are provided to the approximation of boundary value problems via Green’s functions and to a fractional-order SEIR epidemic model. These results extend and generalize various related findings in the literature.

The paper is organized as follows. Section “Preliminaries” collects the preliminaries. Section “Main results” presents the main results, including convergence, stability, rate of convergence, and data dependence. Section “Numerical example and rate of convergence” provides a numerical example. Section “Application to the SEIR epidemic model” discusses the application to the SEIR epidemic model. Section “Conclusion” concludes the paper.

Preliminaries

Fixed point iterative schemes are essential numerical tools for approximating solutions to equations for which exact analytical solutions are difficult or impossible to obtain. These schemes aim to find a point \(x\) such that \(\mathscr {G}(x) = x\), where \(\mathscr {G}\) is a given function or operator. The iterative approach typically starts with an initial guess \(x_0\) and generates a sequence \(\{x_n\}\) using a recurrence relation, often of the form \(x_{n+1} = \mathscr {G}(x_n)\), that converges to the fixed point under suitable conditions. The development of fixed point iterative schemes has its roots in Banach’s Contraction Mapping Principle (1922), which provided a rigorous foundation for convergence under contraction conditions in complete metric spaces. This principle led to the classical Picard iteration [26], widely used in solving ordinary differential equations. Over time, more general iterative schemes have been developed. Modern advances in fixed point iterative schemes focus on improving convergence rates, enhancing robustness, and applying them to nonlinear and non-compact operators. These developments are particularly significant in functional analysis, optimization, and numerical solutions of integral and differential equations.
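The Picard recurrence \(x_{n+1} = \mathscr {G}(x_n)\) described above can be sketched in a few lines of Python. This is only an illustration: the function name `picard` and the demo contraction `G_demo` (with fixed point 0.5) are assumptions introduced here and do not come from the paper.

```python
from typing import Callable, List


def picard(G: Callable[[float], float], x0: float, n_steps: int) -> List[float]:
    """Return [x_0, x_1, ..., x_N] with x_{k+1} = G(x_k)."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(G(xs[-1]))
    return xs


def G_demo(x: float) -> float:
    # Hypothetical contraction on [0, 1] with constant 1/2; its fixed point is 0.5.
    return 0.5 * x + 0.25


iterates = picard(G_demo, x0=0.0, n_steps=30)
print(iterates[-1])  # close to the fixed point 0.5
```

For a contraction with constant 1/2 the error is halved at every step, which is the baseline behavior that multi-step schemes try to improve on.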

Some of these iterative schemes are outlined below. For instance, in 2011, Sahu and Petrusel [27] introduced the S iteration scheme defined as

for \(\{\alpha _n\}\) being a real sequence.

Gürsoy et al. [28] introduced an iterative scheme called the Picard-S scheme in 2014, defined as follows:

Also in 2014, Abbas and Nazir [29] introduced a three-step iterative process called the AK iterative scheme, defined as follows:

for \(\{\alpha _n\}\), \(\{\beta _n\}\), \(\{\gamma _n\}\in (0,1)\).

In 2018, Ullah and Arshad [30] introduced the M iterative scheme as follows:

where \(\{\alpha _n\}\) is a real sequence in [0, 1]. The scheme was used to prove weak and strong convergence theorems for Suzuki generalized nonexpansive mappings in the framework of uniformly convex Banach spaces.

Furthermore, Abdeljawad et al. [31] in 2021 defined the JA iterative scheme as follows:

where \(\{\alpha _n\}, \{\beta _n\}\in [0,1]\) are sequences of real numbers.

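Motivated by the question of whether there exists a robust iterative scheme that extends and generalizes the schemes outlined above for approximating the fixed points of generalized \((\alpha ,\beta )\)-nonexpansive mappings, we introduce the following new fixed point iterative scheme:

$$\begin{aligned} x_0&\in \mathbb {E},\\ w_n&=\mathscr {G}x_n,\\ z_n&=(1-\beta _n)x_n+\beta _n\mathscr {G}w_n,\\ y_n&=\mathscr {G}\!\left[ (1-\alpha _n)\mathscr {G}w_n+\alpha _n\mathscr {G}z_n\right] ,\\ x_{n+1}&=\mathscr {G}y_n. \end{aligned}$$ (7)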

The steps of (7) are motivated as follows:

- The choice of \(x_0\) initiates the iteration from an arbitrary point of the space.

- The point \(w_n=\mathscr {G}x_n\) represents a direct application of the operator, providing a first-level approximation to the fixed point.

- The convex combination \(z_n=(1-\beta _n)x_n+\beta _n\mathscr {G}w_n\) blends the current iterate with a more advanced evaluation of \(\mathscr {G}\), introducing stability and allowing better control of the convergence path through the sequence \(\{\beta _n\}\).

- The term \(y_n=\mathscr {G}\!\left[ (1-\alpha _n)\mathscr {G}w_n+\alpha _n\mathscr {G}z_n\right]\) introduces a second convex combination, this time between two transformed points, followed by another application of \(\mathscr {G}\). This step accelerates convergence by incorporating deeper information about the operator’s behavior.

- Finally, \(x_{n+1}=\mathscr {G}y_n\) generates the next iterate, ensuring that each step remains anchored to the operator \(\mathscr {G}\) and systematically pushes the sequence toward the fixed point set.
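The four steps listed above translate directly into code. The following Python sketch follows the step descriptions for \(w_n\), \(z_n\), \(y_n\) and \(x_{n+1}\) on the real line. The function name `new_scheme`, the constant parameter sequences, and the demo operator (the cosine, which is nonexpansive on \([0,1]\) and maps it into itself) are assumptions made for illustration and are not taken from the paper.

```python
import math
from typing import Callable, List, Sequence


def new_scheme(
    G: Callable[[float], float],
    x0: float,
    alphas: Sequence[float],
    betas: Sequence[float],
) -> List[float]:
    """Return [x_0, ..., x_N] produced by the four-step scheme described above."""
    xs = [x0]
    x = x0
    for a_n, b_n in zip(alphas, betas):
        w = G(x)                                # w_n = G x_n
        Gw = G(w)                               # reused in z_n and y_n
        z = (1.0 - b_n) * x + b_n * Gw          # z_n = (1 - beta_n) x_n + beta_n G w_n
        y = G((1.0 - a_n) * Gw + a_n * G(z))    # y_n = G[(1 - alpha_n) G w_n + alpha_n G z_n]
        x = G(y)                                # x_{n+1} = G y_n
        xs.append(x)
    return xs


# Demo operator (assumption of this sketch): cos maps [0, 1] into itself.
N = 60
iterates = new_scheme(math.cos, 0.0, [0.5] * N, [0.5] * N)
residual = abs(math.cos(iterates[-1]) - iterates[-1])
print(iterates[-1], residual)
```

Each pass through the loop costs five evaluations of the operator, so any comparison with one-step methods such as Picard iteration should account for the work per iteration as well as the iteration count.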

Lemma 2.1 [32]

Let \(\{\mu _n\}\) be a nonnegative sequence for which there exists \(n_0\in \mathbb {N}\) such that, for all \(n\ge n_0\), the following inequality is satisfied:

$$\mu _{n+1}\le (1-\varphi _n)\mu _n+\varphi _n\wp _n,$$

where \(\varphi _n\in (0,1)\) for all \(n\in \mathbb {N}\), \(\sum\nolimits _{n=0}^{\infty }\varphi _n=\infty\) and \(\wp _n\ge 0\) for all \(n\in \mathbb {N}\). Then,

$$0\le \limsup _{n\rightarrow \infty }\mu _n\le \limsup _{n\rightarrow \infty }\wp _n.$$

Definition 2.1 [33]

A mapping \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) with \(\mathcal {F}(\mathscr {G})\ne \emptyset\) is said to satisfy condition (I) if there exists a nondecreasing function \(h:[0,\infty )\rightarrow [0,\infty )\) such that \(h(0)=0\) and \(h(s)>0\) for all \(s>0\), and

where

denotes the distance from \(x\) to the fixed point set \(\mathcal {F}(\mathscr {G})\).

Proposition 2.1 [34]

Let \(\mathscr {G}\) be a generalized \((\alpha ,\beta )\)-nonexpansive mapping on a subset \(\mathbb {E}\) of a Banach space \(\mathbb {X}\). Then,

1. if \(\mathscr {G}\) has at least one fixed point, then for all \(\varpi ^*\in \mathcal {F}(\mathscr {G})\) and all \(x\in \mathbb {E}\),

$$\begin{aligned} \Vert \mathscr {G}\varpi ^*-\mathscr {G}x\Vert \le \Vert \varpi ^*-x\Vert ; \end{aligned}$$

2. for all \(x,y\in \mathbb {E}\),

$$\begin{aligned} \Vert x-\mathscr {G}y\Vert \le \Big (\frac{3+\alpha +\beta }{1-\alpha -\beta }\Big )\Vert x-\mathscr {G}x\Vert +\Vert x-y\Vert ; \end{aligned}$$ (8)

3. if \(\mathbb {X}\) satisfies Opial’s condition, then for any sequence \(\{x_n\}\subseteq \mathbb {E}\), the conditions \(x_n\rightharpoonup \varpi ^*\) and \(\Vert x_n-\mathscr {G}x_n\Vert \rightarrow 0\) imply \(\mathscr {G}\varpi ^*=\varpi ^*\).

Lemma 2.2 [35]

Let \(\mathbb {X}\) be a uniformly convex Banach space and \(\{\alpha _n\}_{n=0}^{\infty }\), \(\{\beta _n\}_{n=0}^{\infty }\) and \(\{\gamma _n\}_{n=0}^{\infty }\) be sequences of numbers such that \(0<a\le \alpha _n\le b<1\), \(n\ge 1\), for \(a, b\in \mathbb {R}\). Let \(\{s_n\}_{n=0}^{\infty }\) and \(\{t_n\}_{n=0}^{\infty }\) be sequences in \(\mathbb {X}\) such that \(\limsup \nolimits _{n\rightarrow \infty }\Vert s_n\Vert \le \lambda\), \(\limsup \nolimits _{n\rightarrow \infty }\Vert t_n\Vert \le \lambda\) and \(\limsup \nolimits _{n\rightarrow \infty }\Vert \alpha _ns_n+(1-\alpha _n)t_n\Vert =\lambda\) for some \(\lambda \ge 0\). Then, \(\lim \nolimits _{n\rightarrow \infty }\Vert s_n-t_n\Vert =0\).

Definition 2.2 [36]

A Banach space \(\mathbb {X}\) is said to satisfy the Opial condition [37] if for each sequence \(\{x_n\}\) in \(\mathbb {X}\), converging weakly to \(p\in \mathbb {X}\), we have

for all \(q\in \mathbb {X}\) such that \(p\ne q\).

Lemma 2.3 [38]

If \(\rho \in [0,1)\) is a real number and \(\{\epsilon _n\}_{n=0}^{\infty }\) is a sequence of positive numbers such that \(\lim \nolimits _{n\rightarrow \infty }\epsilon _n=0\), then for any sequence of positive numbers \(\{s_n\}_{n=0}^{\infty }\) satisfying \(s_{n+1}\le \rho s_n+\epsilon _n~(n=0,1,2,\ldots )\), we have \(\lim \nolimits _{n\rightarrow \infty }s_n=0\).
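A quick numerical check can make Lemma 2.3 concrete. The sketch below takes the extreme case where the inequality holds with equality, \(s_{n+1}=\rho s_n+\epsilon _n\). The specific choices \(\rho =0.5\), \(\epsilon _n=1/(n+1)\), and \(s_0=1\) are assumptions made here for illustration only.

```python
rho = 0.5      # contraction factor in [0, 1) (illustrative choice)
s = 1.0        # s_0 > 0 (illustrative choice)
history = [s]

for n in range(10_000):
    eps_n = 1.0 / (n + 1)   # positive perturbation with eps_n -> 0
    s = rho * s + eps_n     # worst case of s_{n+1} <= rho * s_n + eps_n
    history.append(s)

print(history[10], history[100], history[-1])
```

The sequence does not decay geometrically, because it can never fall below the current perturbation, but it still tends to zero at roughly the speed of \(\epsilon _n/(1-\rho )\).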

Lemma 2.4 [39]

Let \(\{m_n\}_{n=0}^\infty\) and \(\{\epsilon _n\}_{n=0}^\infty\) be sequences of nonnegative numbers and \(\delta \in [0,1)\) such that

$$m_{n+1}\le \delta m_n+\epsilon _n,\quad n\ge 0.$$

If \(\sum\nolimits _{n=0}^{\infty }\epsilon _n<\infty\), then \(\sum\nolimits _{n=0}^{\infty }m_n<\infty\).

Definition 2.3 [40]

Let \(\alpha>0\), \(0<\alpha <1\), \(n\in \mathbb {N}\). The Caputo fractional derivative of order \(\alpha\) of a function x(t) is defined as

The construction of novel iterative processes is important not only for theoretical enrichment of fixed point theory but also for addressing practical problems where robustness and stability are critical. In particular, the convergence behavior (weak and strong), efficiency in terms of rate of convergence, and sensitivity to data perturbations are central aspects that determine the effectiveness of any new scheme. The incorporation of \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability further ensures that the scheme remains reliable under perturbations, an essential property for applications in real-world dynamical systems.

The significance of the present work lies in developing an iterative process that unifies and extends several established methods, while also demonstrating enhanced stability and convergence properties. Beyond its theoretical contributions, the proposed scheme is applied to a fractional-order SEIR epidemic model, illustrating its relevance in analyzing nonlinear models arising in epidemiology. Thus, the results presented here bridge the gap between abstract fixed point theory and practical applications, underscoring both the mathematical depth and applied value of the approach.

Main results

In this section, we present the core findings of the paper. We establish both weak and strong convergence theorems for the proposed iterative scheme in the setting of uniformly convex Banach spaces. Furthermore, we analyze the rate of convergence to highlight the efficiency of the scheme in comparison with existing methods via a numerical example. The data dependence of the scheme is also studied, ensuring robustness of the results under perturbations. Finally, we investigate \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability properties, which confirm the stability of the scheme under various perturbative conditions. These results collectively demonstrate the versatility and effectiveness of the iterative process in fixed point approximation.

Weak and strong convergence

Lemma 3.1

Assume \(\mathbb {E}\) is a nonempty closed convex subset of a Banach space \(\mathbb {X}\). Let \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) be a generalized \((\alpha ,\beta )\)-nonexpansive mapping with \(\mathcal {F}(\mathscr {G})\ne \emptyset\). If \(\{x_n\}\) is a sequence generated by the new iterative scheme (7), then \(\lim \nolimits _{n \rightarrow \infty }\Vert x_n-\varpi ^*\Vert\) exists for each \(\varpi ^*\in \mathcal {F}(\mathscr {G})\).

Proof

Let \(\varpi ^*\in \mathcal {F}(\mathscr {G})\). By part (1) of Proposition 2.1 and (7), we obtain the following estimates at every step:

Therefore, \(\{\Vert x_n - \varpi ^*\Vert \}\) is bounded and nonincreasing, so \(\lim \nolimits _{n \rightarrow \infty } \Vert x_n - \varpi ^*\Vert\) exists for every \(\varpi ^* \in \mathcal {F}(\mathscr {G})\). This completes the proof. \(\square\)

Lemma 3.2

Let \(\mathscr {G}: \mathbb {E} \rightarrow \mathbb {E}\) be a mapping that satisfies the conditions of the class of generalized \((\alpha ,\beta )\)-nonexpansive mappings on a nonempty closed convex subset of a uniformly convex Banach space \(\mathbb {X}\). Let \(\{x_n\}\) be a sequence generated by the new iterative scheme (7). Then \(\mathcal {F}(\mathscr {G}) \ne \emptyset\) if and only if \(\{x_n\}\) is bounded and \(\lim \nolimits _{n \rightarrow \infty } \Vert \mathscr {G}x_n - x_n\Vert = 0\).

Proof

Assume that \(\mathcal {F}(\mathscr {G})\ne \emptyset\) and let \(\varpi ^*\in \mathcal {F}(\mathscr {G})\). Then, by Lemma 3.1, \(\lim \nolimits _{n \rightarrow \infty }\Vert x_n - \varpi ^*\Vert\) exists and \(\{x_n\}\) is bounded. For a real value \(m\), we set

From Lemma 3.1 and (10), we have the following:

and

By hypothesis (1) of Proposition 2.1, we have

From the proof of Lemma 3.1, we have that

Again, from hypothesis (1) of Proposition 2.1, alongside Eq. (10), we have

From (13) and (16), it follows that

such that

and we have

This gives

hence,

So that,

Combining (12) and (18), we have

Again,

This gives

hence,

Furthermore,

Combining (11) and (20), we have

From (21), we have

so that if the following estimate holds without loss of generality,

(for any arbitrary \(\mu _n\in (0,1)\)), then, by applying Lemma 2.2, we have

Conversely, we show that the fixed point set \(\mathcal {F}(\mathscr {G}) \ne \emptyset\) whenever \(\{x_n\}\) is bounded with

To show this, let \(\varpi ^* \in \mathcal {A}(\mathbb {E}, \{x_n\})\), where \(\mathcal {A}(\mathbb {E}, \{x_n\})\) denotes the asymptotic center of \(\{x_n\}\) relative to \(\mathbb {E}\). Applying condition (2) of Proposition 2.1, we have

It follows that \(\mathscr {G} \varpi ^* \in \mathcal {A}(\mathbb {E}, \{x_n\})\). Since \(\mathcal {A}(\mathbb {E}, \{x_n\})\) is a singleton, it follows that \(\mathscr {G} \varpi ^* = \varpi ^*\), so \(\mathcal {F}(\mathscr {G})\) is nonempty. Hence, the lemma is proved. \(\square\)

The next theorem is the weak convergence result.

Theorem 3.1

Let \(\mathbb {E}\) be a closed convex subset of a uniformly convex Banach space \(\mathbb {X}\), and let \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) be a generalized (\(\alpha ,\beta\))-nonexpansive mapping. Suppose \(\mathcal {F}(\mathscr {G}) \ne \emptyset\) and \(\{x_n\}\) is a sequence generated by the new fixed point iterative scheme (7). If \(\mathbb {X}\) satisfies Opial’s condition, then \(\{x_n\}\) converges weakly to a fixed point of \(\mathscr {G}\).

Proof

Since every uniformly convex Banach space is reflexive, \(\mathbb {X}\) is reflexive. The sequence \(\{x_n\}\) is bounded, as shown in Lemma 3.2. Hence, there exists a weakly convergent subsequence \(\{x_{n_j}\}\) with weak limit \(s_1\). By Lemma 3.2, \(\lim \nolimits _{j \rightarrow \infty }\Vert \mathscr {G}x_{n_j} - x_{n_j}\Vert = 0\).

By condition (3) of Proposition 2.1, \(s_1\) is a fixed point of \(\mathscr {G}\). It remains to show that \(s_1\) is the weak limit of \(\{x_n\}\). Suppose, on the contrary, that \(s_1\) is not the weak limit of \(\{x_n\}\). Then we can find another subsequence \(\{x_{n_k}\}\) of \(\{x_n\}\) that converges weakly to a limit \(s_2\) with \(s_1 \ne s_2\). By the same argument, \(s_2\) is a fixed point of \(\mathscr {G}\) by condition (3) of Proposition 2.1. By Lemma 3.1 and Opial’s condition (9), we have

The strict inequality \(\lim \nolimits _{n \rightarrow \infty } \Vert x_n - s_1\Vert < \lim \nolimits _{n \rightarrow \infty } \Vert x_n - s_1\Vert\) is a contradiction, which arose from the assumption that \(s_1\ne s_2\). Hence \(s_1 = s_2\), so \(s_1\) is the only weak limit of the sequence \(\{x_n\}\). Therefore, the sequence \(\{x_n\}\) converges weakly to a fixed point of \(\mathscr {G}\). This completes the proof. \(\square\)

Here we now present the strong convergence results in different forms.

Theorem 3.2

Let \(\mathbb {X}\) be a uniformly convex Banach space and \(\mathbb {E}\) a nonempty compact convex subset of \(\mathbb {X}\). Suppose \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) is a generalized \((\alpha , \beta )\)-nonexpansive mapping with \(\mathcal {F}(\mathscr {G}) \ne \emptyset\). If \(\{x_n\}_{n=0}^\infty\) is a sequence generated by the new iterative scheme (7), then \(\{x_n\}_{n=0}^\infty\) converges strongly to a fixed point \(\varpi ^*\in \mathcal {F}(\mathscr {G})\).

Proof

Since \(\mathbb {E}\) is compact, we can find a subsequence \(\{x_{n_p}\}\) of the sequence \(\{x_n\}\) that converges to a point \(r \in \mathbb {E}\) (i.e., \(x_{n_p} \rightarrow r\)). We show that \(x_{n_p} \rightarrow \mathscr {G}r\), so that \(r\) is a fixed point of \(\mathscr {G}\), and that \(r\) is a strong limit of the sequence \(\{x_n\}\). To this end, we apply Lemma 3.2 and obtain

Applying condition (2) of Proposition 2.1, we have

Hence, \(r=\mathscr {G}r\), so \(r\) is a fixed point of \(\mathscr {G}\).

By Lemma 3.1, \(\lim \nolimits _{n\rightarrow \infty } \Vert x_{n+1} - r\Vert\) exists. Since the subsequence \(\{x_{n_p}\}\) converges to \(r\), this limit is zero, so \(\{x_n\}\) converges strongly to \(r\). Hence, the proof is complete. \(\square\)

The next theorem is a strong convergence result that does not need \(\mathbb {E}\) to be compact.

Theorem 3.3

Let \(\mathscr {G}\) be a generalized \((\alpha , \beta )\)-nonexpansive mapping on a closed convex subset \(\mathbb {E}\) of a Banach space \(\mathbb {X}\). If the fixed point set \(\mathcal {F}(\mathscr {G}) \ne \emptyset\) and \(\{x_n\}\) is a sequence generated by the new iterative scheme (7), then \(\{x_n\}\) converges strongly to some fixed point of \(\mathscr {G}\) if \(\liminf \nolimits _{n\rightarrow \infty }\rho (x_n, \mathcal {F}(\mathscr {G})) = 0\), where \(\rho (\cdot ,\cdot )\) denotes a distance function or metric.

Proof

Let \(\varpi ^* \in \mathcal {F}(\mathscr {G}) \ne \emptyset\) be a fixed point. From Lemma 3.1, it has already been established that the sequence \(\{\Vert x_n - \varpi ^*\Vert \}\) has a well-defined limit as \(n \rightarrow \infty\). Consequently, the limit of \(\rho (x_n, \mathcal {F}(\mathscr {G}))\) also exists. Now, assume that

Our goal is to construct a Cauchy sequence within \(\mathcal {F}(\mathscr {G})\). To do this, we define a sequence \(\{p_k\} \subseteq \mathcal {F}(\mathscr {G})\) and a corresponding subsequence \(\{x_{n_k}\} \subseteq \{x_n\}\), ensuring that

If \(\{p_k\}\) is a nonincreasing sequence, then it follows that

By applying the triangle inequality, we obtain

Substituting the bounds, we get

Thus, \(\{p_k\}\) is a Cauchy sequence in \(\mathcal {F}(\mathscr {G})\). Assuming that \(\mathcal {F}(\mathscr {G})\) is closed in \(\mathbb {E}\), the sequence \(\{p_k\}\) converges to some \(\varpi ^* \in \mathcal {F}(\mathscr {G})\). Consequently, we obtain

Therefore, the subsequence \(\{x_{n_k}\}\) converges strongly to \(\varpi ^*\). Since Lemma 3.1 guarantees the existence of \(\lim \nolimits _{n\rightarrow \infty } \Vert x_n - \varpi ^*\Vert\), it follows that the entire sequence \(\{x_n\}\) converges strongly to \(\varpi ^*\), a fixed point of \(\mathscr {G}\). Therefore, the proof is complete. \(\square\)

The strong convergence result using condition (I) is given as follows.

Theorem 3.4

Assume that \(\mathscr {G}: \mathbb {E} \rightarrow \mathbb {E}\) is a generalized \((\alpha , \beta )\)-nonexpansive mapping on a convex closed subset \(\mathbb {E}\) of a uniformly convex Banach space \(\mathbb {X}\). Assume that \(\mathscr {G}\) satisfies condition (I). If \(\mathcal {F}(\mathscr {G})\ne \emptyset\) and \(\{x_n\}\) is a sequence generated by the new iterative scheme (7), then \(\{x_n\}\) converges strongly to some fixed point of \(\mathscr {G}\).

Proof

From Lemma 3.2, it has been shown that

Since \(\mathscr {G}\) satisfies condition (I), it follows that

From (23), we have

Since \(\mu : [0, \infty ) \rightarrow [0, \infty )\) is a nondecreasing function with \(\mu (0) = 0\) and \(\mu (h)>0\) for all \(h \in (0, \infty )\), we can infer from \((23)\) that

Hence the hypotheses of Theorem 3.3 are fulfilled, and \(\{x_n\}\) converges strongly to some fixed point of \(\mathscr {G}\). \(\square\)

The following example illustrates Theorem 3.4.

Example 1

Let \(\mathbb {X}=\mathbb {R}\) with the usual norm and consider the closed convex subset \(\mathbb {E}=[0,1]\). Define \(\mathscr {G}:\mathbb {E}\rightarrow \mathbb {E}\) by

$$\mathscr {G}x=\tfrac{1}{4}x+\tfrac{1}{4}.$$

Clearly, \(\mathscr {G}\) maps \(\mathbb {E}\) into itself. We verify that \(\mathscr {G}\) is a generalized \((\alpha ,\beta )\)-nonexpansive mapping for suitable \(\alpha ,\beta \in [0,1)\) with \(\alpha +\beta <1\). Indeed, for any \(x,y\in \mathbb {E}\) we have

$$\Vert \mathscr {G}x-\mathscr {G}y\Vert =\tfrac{1}{4}\Vert x-y\Vert \le \Vert x-y\Vert ,$$

and hence the defining inequality of generalized \((\alpha ,\beta )\)-nonexpansiveness holds (take for instance \(\alpha =\beta =0\)).

Next, the set of fixed points of \(\mathscr {G}\) is

$$\mathcal {F}(\mathscr {G})=\{x\in [0,1]: x=\mathscr {G}x\}.$$

Solving \(x=\tfrac{1}{4}x+\tfrac{1}{4}\) yields \(x=\tfrac{1}{3}\). Thus \(\mathcal {F}(\mathscr {G})=\{\tfrac{1}{3}\}\), which is nonempty.

Let \(\{x_n\}\) be the sequence generated by the new iterative scheme (7) with arbitrary initial value \(x_0\in [0,1]\). By Theorem 3.4, the sequence \(\{x_n\}\) converges strongly to the unique fixed point \(\tfrac{1}{3}\) of \(\mathscr {G}\).

Hence, this example illustrates the applicability of the theorem in a nontrivial setting.
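The behavior claimed in Example 1 can be checked with exact rational arithmetic. The sketch below uses the mapping whose fixed point equation \(x=\tfrac{1}{4}x+\tfrac{1}{4}\) is solved in the example, and applies the four steps of the scheme. The constant parameters \(\alpha _n=\beta _n=\tfrac{1}{2}\), the starting point \(x_0=1\), the helper name `scheme_step`, and the candidate function \(h(s)=\tfrac{3}{4}s\) for condition (I) are assumptions chosen here for illustration.

```python
from fractions import Fraction


def G(x: Fraction) -> Fraction:
    # Mapping of Example 1: G x = x/4 + 1/4, with fixed point 1/3.
    return x / 4 + Fraction(1, 4)


def scheme_step(x: Fraction, a: Fraction, b: Fraction) -> Fraction:
    """One pass of the four-step scheme with parameters alpha_n = a, beta_n = b."""
    w = G(x)
    Gw = G(w)
    z = (1 - b) * x + b * Gw
    y = G((1 - a) * Gw + a * G(z))
    return G(y)


p = Fraction(1, 3)          # fixed point
a = b = Fraction(1, 2)      # illustrative constant parameters
x = Fraction(1)             # illustrative starting point
errors = [abs(x - p)]
for _ in range(6):
    x = scheme_step(x, a, b)
    errors.append(abs(x - p))

# Error contraction factor per iteration (exact, since G is affine).
ratios = [errors[k + 1] / errors[k] for k in range(6)]

# Condition (I) with the candidate h(s) = 3s/4: |x - Gx| = (3/4) |x - 1/3|.
samples = [Fraction(k, 10) for k in range(11)]
condition_I_holds = all(abs(t - G(t)) >= Fraction(3, 4) * abs(t - p) for t in samples)

print(float(errors[-1]), ratios[0], condition_I_holds)
```

Because the mapping is affine, the error is multiplied by the same constant at every iteration, so for this example the scheme converges linearly with a small rate constant rather than with a higher order.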

Data dependence result

The data dependence result is given as follows;

Theorem 3.5

Suppose \(\mathcal {S}\) is an approximate operator of \(\mathscr {G}\) satisfying the generalized \((\alpha ,\beta )\)-nonexpansive mapping. Assume \(\{x_n\}_{n=0}^\infty\) is a sequence generated by the new iterative scheme (7) for \(\mathscr {G}\) and define the sequence \(\{\bar{x}_n\}\) generated by the iterative scheme

corresponding to the approximate operator \(\mathcal {S}\), where \(\{\alpha _n\}\) and \(\{\beta _n\}\) are real sequences in [0, 1] such that \(\frac{1}{2}\le \alpha _n\) for all \(n\in \mathbb {N}\) and \(\sum\nolimits _{n=0}^{\infty }\alpha _n=\infty\). If \(\mathscr {G}\varpi ^*=\varpi ^*\) and \(\mathcal {S}\mu ^*=\mu ^*\) such that \(\lim \nolimits _{n \rightarrow \infty }\Vert \bar{x}_n-\mu ^*\Vert =0\), then we have \(\Vert \varpi ^*-\mu ^*\Vert \le \frac{9\epsilon }{1-\delta }\) where \(\epsilon>0\) is a constant.

Proof

Since \(\alpha _n,\beta _n\in (0,1)\) and \(\frac{1}{2}\le \alpha _n\), we have \(\alpha _n\beta _n<1\) and \(1-\alpha _n\le \alpha _n\), so that

Let \(\mu _n:= \Vert x_n - \bar{x}_n\Vert\), \(\varphi _n:= \alpha _n (1-\delta )\) and \(\wp _n:= \frac{9\epsilon }{1-\delta }\).

From Lemma 2.1, it follows that \(0\le \limsup \nolimits _{n \rightarrow \infty } \Vert x_n - \bar{x}_n\Vert \le \limsup \nolimits _{n \rightarrow \infty } \frac{9\epsilon }{1-\delta }\).

From the strong convergence result, it is clear that \(\lim \nolimits _{n \rightarrow \infty } \Vert x_n - \varpi ^*\Vert = 0\).

Since, by hypothesis, \(\lim \nolimits _{n \rightarrow \infty } \Vert \bar{x}_n - \mu ^*\Vert = 0\), we have

This completes the proof. \(\square\)
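The bound \(\Vert \varpi ^*-\mu ^*\Vert \le \frac{9\epsilon }{1-\delta }\) can be illustrated numerically. The sketch below rests on several assumptions that are not stated in the text: \(\delta\) is read as a contraction constant of \(\mathscr {G}\), \(\epsilon\) as a uniform bound on \(\Vert \mathscr {G}x-\mathcal {S}x\Vert\), the operator is the affine map of Example 1, and the approximate operator is that map shifted by \(\epsilon\). The helper name `scheme_limit` is likewise hypothetical.

```python
from typing import Callable


def scheme_limit(T: Callable[[float], float], x0: float,
                 n_steps: int = 50, a: float = 0.5, b: float = 0.5) -> float:
    """Run the four-step scheme for operator T and return the last iterate."""
    x = x0
    for _ in range(n_steps):
        w = T(x)
        Tw = T(w)
        z = (1.0 - b) * x + b * Tw
        y = T((1.0 - a) * Tw + a * T(z))
        x = T(y)
    return x


delta = 0.25   # assumed contraction constant of G
eps = 1e-3     # assumed uniform distance between G and S


def G(x: float) -> float:
    return delta * x + 0.25


def S(x: float) -> float:
    # Hypothetical approximate operator: |G(x) - S(x)| = eps for every x.
    return G(x) + eps


fp_G = scheme_limit(G, 1.0)    # approximates the fixed point of G
fp_S = scheme_limit(S, 1.0)    # approximates the fixed point of S
gap = abs(fp_G - fp_S)
bound = 9.0 * eps / (1.0 - delta)
print(gap, bound)
```

In this affine setting the two fixed points differ by exactly \(\epsilon /(1-\delta )\), so the estimate holds with room to spare; the constant 9 is an artifact of the proof technique rather than a sharp value for this example.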

\(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability results

The following theorems indicate the \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability results.

Theorem 3.6

Let \(\mathbb {X}\) be a Banach space and \(\mathscr {G}: \mathbb {E}\rightarrow \mathbb {E}\) a generalized \((\alpha , \beta )\)-nonexpansive mapping with a fixed point \(\varpi ^*\in \mathcal {F}(\mathscr {G})\). If the sequence \(\{x_n\}_{n=0}^\infty\) generated by the iterative scheme (7) converges to the fixed point \(\varpi ^*\), then the iterative scheme (7) is \(\mathscr {G}\)-stable.

Proof

Let \(\{s_n\}_{n=0}^\infty\) be an arbitrary sequence in \(\mathbb {E}\), and write the new iterative scheme (7) as \(x_{n+1} = f(\mathscr {G}, x_n)\), which converges to a unique fixed point \(\varpi ^*\).

Set \(\epsilon _n = \Vert s_{n+1} - f(\mathscr {G}, s_n)\Vert\). We show that \(\lim \nolimits _{n \rightarrow \infty } \epsilon _n = 0\) if and only if \(\lim \nolimits _{n \rightarrow \infty } \Vert s_n - \varpi ^*\Vert = 0\).

Suppose \(\lim \nolimits _{n \rightarrow \infty } \epsilon _n = 0\).

Next,

but,

and,

Putting (32) and (33) in (30), we have

From Lemma 2.3, we have

Conversely, assume that \(\lim \nolimits _{n \rightarrow \infty } \Vert s_n - \varpi ^*\Vert = 0\), then

Taking the limit as \(n \rightarrow \infty\) on both sides and using the fact that \(\lim \nolimits _{n \rightarrow \infty } \Vert s_n - \varpi ^*\Vert = 0\), we obtain \(\lim \nolimits _{n \rightarrow \infty } \epsilon _n = 0\).

Therefore the iterative scheme (7) is \(\mathscr {G}\)-stable. \(\square\)
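The stability statement can be explored numerically by perturbing each step of the scheme. In the sketch below, \(s_{n+1}\) is obtained from \(f(\mathscr {G}, s_n)\) by adding a shrinking error, so that \(\epsilon _n=\Vert s_{n+1}-f(\mathscr {G},s_n)\Vert \rightarrow 0\), and the distance of \(s_n\) to the fixed point is monitored. The mapping is the one from Example 1; the perturbation size \(0.1/(n+1)\), the constant parameters, and the helper name `f` applied to floats are assumptions of this illustration.

```python
from typing import Callable, List


def f(G: Callable[[float], float], s: float, a: float = 0.5, b: float = 0.5) -> float:
    """One pass of the four-step scheme, written as x_{n+1} = f(G, x_n)."""
    w = G(s)
    Gw = G(w)
    z = (1.0 - b) * s + b * Gw
    y = G((1.0 - a) * Gw + a * G(z))
    return G(y)


def G(x: float) -> float:
    # Mapping of Example 1, with fixed point 1/3.
    return 0.25 * x + 0.25


fixed_point = 1.0 / 3.0
s: List[float] = [1.0]      # arbitrary starting point in [0, 1]
eps: List[float] = []       # eps_n = |s_{n+1} - f(G, s_n)|

for n in range(200):
    exact_next = f(G, s[-1])
    perturbed_next = exact_next + 0.1 / (n + 1)   # illustrative error, tends to 0
    eps.append(abs(perturbed_next - exact_next))
    s.append(perturbed_next)

print(eps[-1], abs(s[-1] - fixed_point))
```

The perturbed sequence tracks the fixed point to within roughly the size of the most recent error, which is the qualitative content of the equivalence between \(\epsilon _n\rightarrow 0\) and \(s_n\rightarrow \varpi ^*\).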

Next, we consider the almost \(\mathscr {G}\)-stability result.

Theorem 3.7

Let \(\mathbb {X}, \mathbb {E}\) and \(\mathscr {G}\) be the same as used in Theorem 3.6 with \(\mathscr {G}\) being a mapping in the class of generalized \((\alpha , \beta )\)-nonexpansive mapping and \(\mathcal {F}(\mathscr {G}) \ne \emptyset\). Let \(\sum\nolimits _{n=0}^{\infty }\epsilon _n<\infty\) implies \(\lim \nolimits _{n \rightarrow \infty }\Vert x_n-\mu ^*\Vert =0\). Then the iterative scheme (7) is almost \(\mathscr {G}\)-stable.

Proof

Let \(\{s_n\}_{n=0}^\infty\) be an approximate sequence of \(\{x_n\}_{n=0}^\infty\) in \(\mathbb {E}\). Assume that the iterative scheme (7) is represented as \(x_{n+1} = f(\mathscr {G}, x_n)\), which converges to a fixed point \(\varpi ^* \in \mathcal {F}(\mathscr {G})\), and set \(\epsilon _n = \Vert s_{n+1} - f(\mathscr {G}, s_n)\Vert\) for all \(n \in \mathbb {N}\). Our aim is to prove that \(\sum\nolimits _{n=0}^\infty \epsilon _n < \infty\) implies \(\lim \nolimits _{n \rightarrow \infty } \Vert s_n - \varpi ^*\Vert = 0\).

Let \(\sum\nolimits _{n=0}^\infty \epsilon _n < \infty\), then using (7), we have

Set \(m_n = \Vert s_n - \varpi ^* \Vert .\)

So that,

Since \(\sum\nolimits _{n=1}^{\infty } \epsilon _n < \infty\), then by Lemma 2.4, we obtain \(\sum _{n=1}^{\infty } m_n < \infty\). It follows that \(\lim _{n \rightarrow \infty } m_n = 0\), that is,

Hence, the proof is complete. \(\square\)

Numerical example and rate of convergence

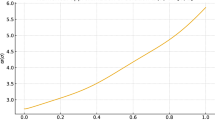

The rate of convergence of the proposed scheme, in comparison with existing iterative methods in the literature, is illustrated through numerical examples. The results are presented in both tabular and graphical forms, providing a quantitative assessment of the comparative performance of the iterative methods. Furthermore, Example 2 analytically demonstrates how the conditions of a generalized (\(\alpha\),\(\beta\))-nonexpansive mapping can be guaranteed.

Example 2

Define \(\mathscr {G}: [0,1] \rightarrow [0,1]\) by

$$\mathscr {G}(x)={\left\{ \begin{array}{ll} 1-x, &{} 0\le x<\frac{1}{8},\\ \frac{x+7}{8}, &{} \frac{1}{8}\le x\le 1. \end{array}\right. }$$

We shall prove that \(\mathscr {G}\) is a generalized \((\alpha ,\beta )\)-nonexpansive mapping for some \(\alpha , \beta \ge 0\) with \(\alpha + \beta < 1\). We shall divide the proof into three cases.

Case 1: If \(0 \le x, y < \frac{1}{8}\), then we have \(\mathscr {G}(x) = 1-x\) and \(\mathscr {G}(y) = 1-y\). Thus,

We choose \(\alpha = \frac{1}{4}, \beta = \frac{1}{4}\) such that

Case 2: If \(x, y \ge \frac{1}{8}\), then \(\mathscr {G}(x) = \frac{x+7}{8}\) and \(\mathscr {G}(y) = \frac{y+7}{8}\), so that

We compute

Since \(|x - \mathscr {G}(y)| = |x - \frac{y+7}{8}| \le |x - y|\), and similarly for other terms, we conclude that

Case 3: If \(x < \frac{1}{8}\) and \(y \ge \frac{1}{8}\), then \(\mathscr {G}(x) = 1-x\) and \(\mathscr {G}(y) = \frac{y+7}{8}\). We have

Using a similar analysis with \(\alpha = \frac{1}{4}, \beta = \frac{1}{4}\), we establish

Thus, \(\mathscr {G}\) is a generalized \((\frac{1}{4}, \frac{1}{4})\)-nonexpansive mapping.

Remark 4.1

The restriction of \(\mathscr {G}\) to the unit interval \([0,1]\) is fundamental. This interval is invariant under \(\mathscr {G}\) and contains its unique fixed point, \(\varpi ^* = 1\). This makes \([0,1]\) the natural domain for studying the convergence of the sequences generated by the iterative schemes considered in this paper.
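The invariance of \([0,1]\) and the fixed point \(\varpi ^* = 1\) can be checked numerically. The following is a minimal sketch that uses the two branches of \(\mathscr {G}\) stated in Cases 1 and 2 and iterates the plain Picard scheme \(x_{n+1} = \mathscr {G}(x_n)\); it is not the proposed scheme (7). The function names, the tolerance, and the starting points are illustrative assumptions.

```python
def G(x: float) -> float:
    """Mapping of Example 2, using the branches stated in Cases 1 and 2."""
    return 1.0 - x if x < 0.125 else (x + 7.0) / 8.0


def picard(x0: float, tol: float = 1e-12, max_iter: int = 100) -> list:
    """Plain Picard iteration x_{n+1} = G(x_n); NOT scheme (7) of the paper.

    Returns the full list of iterates, starting with x0.
    """
    history = [x0]
    x = x0
    for _ in range(max_iter):
        x_next = G(x)
        history.append(x_next)
        if abs(x_next - x) < tol:  # stop once successive iterates agree
            break
        x = x_next
    return history


if __name__ == "__main__":
    # Hypothetical starting points, one from each branch of G.
    for x0 in (0.05, 0.5):
        hist = picard(x0)
        print(f"x0={x0}: {len(hist) - 1} iterations, last iterate {hist[-1]!r}")
```

For \(x \ge \frac{1}{8}\) the error satisfies \(1 - \mathscr {G}(x) = \frac{1-x}{8}\), so the Picard error shrinks by a factor of 8 per step once the iterates leave the first branch.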

Example 3

Let \(\mathbb {E}=[0,1]\) be a closed and convex subset of \(\mathbb {X}\). The mapping \(\mathscr {G}:[0,1]\rightarrow [0,1]\) is defined as

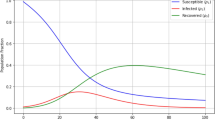

and is clearly a generalized \((\alpha ,\beta )\)-nonexpansive mapping. We iterate this mapping numerically to compare the rate of convergence of our iterative scheme with that of other existing schemes in the literature. The results are shown in Table 4 and Figs. 3 and 4.

Remark 4.2

1. The convergence order \(p\) is reported as zero in Table 5 when the classical asymptotic formula

$$p = \lim _{n\rightarrow \infty } \frac{\log |e_{n+1}/e_n|}{\log |e_n/e_{n-1}|}$$

cannot be reliably evaluated due to the rapid attainment of machine precision or premature stagnation of the error sequence.

2. The rate constant \(r\) is shown as zero when the estimated limit

$$r = \lim _{n\rightarrow \infty } \frac{|e_{n+1}|}{|e_n|^{p}}$$

becomes numerically insignificant or undefined because the errors fall below the floating-point tolerance.

3. The numerical results for Example 2 are presented in Tables 1 and 2, and Figs. 1 and 2. Correspondingly, the results for Example 3 are reported in Table 4 and Figs. 3 and 4.

4. Furthermore, to compare the computational efficiency, convergence order, and rate constants of the different schemes, we include Table 3 for Example 2 and Table 5 for Example 3.
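The two formulas in items 1 and 2 can be evaluated from a finite error sequence as sketched below. This is an illustrative estimator, not the code used to produce the tables: the function name `estimate_order`, the threshold `floor`, and the sample error sequences are assumptions. Returning `None` corresponds to the situations in which the tables report zero.

```python
import math


def estimate_order(errors, floor=1e-15):
    """Estimate (p, r) from the last usable triple (e_{n-1}, e_n, e_{n+1}).

    p = log|e_{n+1}/e_n| / log|e_n/e_{n-1}|,  r = |e_{n+1}| / |e_n|**p.
    Returns None when no triple is usable (errors at the floating-point
    floor, or a stagnating error sequence).
    """
    errs = [abs(e) for e in errors]
    estimates = []
    for e_prev, e_cur, e_next in zip(errs, errs[1:], errs[2:]):
        if min(e_prev, e_cur, e_next) <= floor:
            continue  # at or below the assumed floating-point floor
        denom = math.log(e_cur / e_prev)
        if denom == 0.0:
            continue  # stagnation: e_n == e_{n-1}
        p = math.log(e_next / e_cur) / denom
        r = e_next / e_cur ** p
        estimates.append((p, r))
    return estimates[-1] if estimates else None


if __name__ == "__main__":
    # Hypothetical linearly convergent errors: e_{n+1} = e_n / 8.
    linear = [0.5 * 8.0 ** (-n) for n in range(12)]
    print("linear sequence:", estimate_order(linear))
    # Errors that are identically zero cannot be used.
    print("degenerate sequence:", estimate_order([0.0, 0.0, 0.0]))
```

In practice the estimate is only meaningful while the errors are well above machine precision, which is why the sketch discards triples that touch the floor instead of forcing a value.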

3D Plots corresponding to Table 4.

Application to the SEIR epidemic model

The SEIR (Susceptible–Exposed–Infectious–Recovered) model is a fundamental epidemiological framework used to study the transmission dynamics of infectious diseases. It refines the classical SIR model by incorporating an Exposed (E) compartment, accounting for the latent period between infection and the onset of infectiousness. This makes it particularly useful for diseases like COVID-19, measles, influenza, and Ebola, where exposed individuals do not immediately transmit the pathogen [41, 42, 43].

In the SEIR model, the population N(t) is partitioned into four compartments: the susceptible class S(t), the exposed class E(t), the infected or infectious class I(t), and the recovered class R(t), where t is the time variable. The dynamics of the SEIR model can be represented as a system of integer-order differential equations:

where the total population is \(N = S + E + I + R \le N_0\) (with \(N_0\) the initial population). Equation (36) is subject to the initial conditions S(0), E(0), I(0), and R(0). The parameters are defined as follows:

\(\Upsilon =\) Per-capita birth rate

\(\mu =\) Per-capita natural death rate

\(\alpha =\) Disease-induced average fatality rate

\(\beta =\) Probability of disease transmission per contact times the number of contacts per unit time

\(\delta =\) Rate of progression from exposed to infectious (such that \(\frac{1}{\delta }\) is the latent period)

\(\gamma =\) Recovery rate of infectious individuals (such that \(\frac{1}{\gamma }\) is the infectious period)
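To make the role of these parameters concrete, the sketch below integrates a commonly used SEIR system with vital dynamics using a classical fourth-order Runge–Kutta step. The right-hand side is an assumption chosen to be consistent with the parameter list above; Eq. (36) itself is not reproduced here, so the exact form in the paper may differ. It is the integer-order model only, not the Caputo system, and all parameter values and names (`seir_rhs`, `rk4_step`, `simulate`) are hypothetical.

```python
def seir_rhs(state, birth, mu, alpha, beta, delta, gamma):
    """Assumed integer-order SEIR right-hand side (a common textbook form).

    birth stands for Upsilon; the other names follow the parameter list.
    """
    S, E, I, R = state
    N = S + E + I + R
    infection = beta * S * I / N  # new infections per unit time
    return (
        birth * N - mu * S - infection,
        infection - (mu + delta) * E,
        delta * E - (gamma + mu + alpha) * I,
        gamma * I - mu * R,
    )


def rk4_step(state, h, **params):
    """One classical Runge-Kutta (RK4) step of size h."""
    def shifted(k, scale):
        return tuple(s + scale * ki for s, ki in zip(state, k))

    k1 = seir_rhs(state, **params)
    k2 = seir_rhs(shifted(k1, h / 2), **params)
    k3 = seir_rhs(shifted(k2, h / 2), **params)
    k4 = seir_rhs(shifted(k3, h), **params)
    return tuple(
        s + h / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(state0, t_end, h, **params):
    """Return the list of states at t = 0, h, 2h, ..., t_end."""
    steps = int(round(t_end / h))
    trajectory = [tuple(state0)]
    for _ in range(steps):
        trajectory.append(rk4_step(trajectory[-1], h, **params))
    return trajectory


if __name__ == "__main__":
    # Hypothetical values; with birth == mu and alpha == 0 the total N is conserved.
    params = dict(birth=0.01, mu=0.01, alpha=0.0, beta=0.5, delta=0.2, gamma=0.1)
    traj = simulate((990.0, 0.0, 10.0, 0.0), t_end=50.0, h=0.1, **params)
    print("final (S, E, I, R):", traj[-1])
    print("final total population:", sum(traj[-1]))
```

Under this assumed form, summing the four equations gives \(\frac{dN}{dt} = (\Upsilon - \mu )N - \alpha I\), which is a quick consistency check on any implementation.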

Remark 5.1 [12]

The SEIR model (36) will reduce to the classical SIR model if \(\Upsilon =\mu =0\) and \(\delta =\infty\). Furthermore, if \(\Upsilon\) and \(\mu \ne 0\), then the model is considered an endemic SIR model.

To generalize the classical ODE model (36) so that it captures memory effects (particularly for diseases with long incubation periods, such as HIV and tuberculosis) and the non-local dynamics of disease spread, we extend (36) to a non-integer order system of Caputo type. We replace \(\frac{d}{dt}\) with \({}^{C}\mathscr {D}^{\alpha }\) for \(\alpha \in (0,1)\), and the fractional-order SEIR model is given by:

where \({}_0^{C}\mathscr {D}_t^{\alpha }\) denotes the Caputo fractional derivative of order \(\alpha \in (0, 1)\), subject to the initial conditions:

To achieve our aim of proving the existence of the solution of the system (37), we use the following transformed representation: Let

So that the accompanying initial condition becomes:

Consequently, (37) becomes:

with initial conditions:

Suppose by transformation,

such that:

Thereby reducing (39) to:

Equation (40) is the fractional differential equation of Caputo type.

To show the existence of a solution of (40), we rewrite (40) as its equivalent integral equation:

where \(\nu (\alpha ) = \frac{1-\alpha }{\Gamma (\alpha )}\) and \(\hslash (\alpha ) = \frac{\alpha }{\Gamma (\alpha )}\).

Suppose that

is the Banach space of all continuous functions \(g : [0,T] \rightarrow \mathbb {R}^4\), endowed with the norm

where \(\Vert \cdot \Vert\) denotes the Euclidean norm in \(\mathbb {R}^4\). The following lemma is used in proving the main theorem of this section.

Lemma 5.1 [13]

Assume that the following conditions hold:

(\(C_1\)) there exists a constant \(L_H>0\) such that

$$\begin{aligned} |H(t,g_1(t))-H(t,g_2(t))|\le L_H|g_1-g_2| \end{aligned}$$

for all \(g_1, g_2\in \mathbb {H}\) and \(t\in [0,T]\), and

(\(C_2\)) \([\nu (\alpha )+T\hslash (\alpha )]L_H<1\).

Then (40) has a unique solution.
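Condition (\(C_2\)) is straightforward to check numerically once \(\alpha\), \(T\) and \(L_H\) are known. The sketch below evaluates \([\nu (\alpha )+T\hslash (\alpha )]L_H\) with \(\nu (\alpha ) = \frac{1-\alpha }{\Gamma (\alpha )}\) and \(\hslash (\alpha ) = \frac{\alpha }{\Gamma (\alpha )}\) as defined above. The function names and the sample values of \(\alpha\), \(T\) and \(L_H\) are illustrative assumptions, not values taken from the paper.

```python
import math


def nu(alpha: float) -> float:
    """nu(alpha) = (1 - alpha) / Gamma(alpha)."""
    return (1.0 - alpha) / math.gamma(alpha)


def hbar(alpha: float) -> float:
    """hbar(alpha) = alpha / Gamma(alpha)."""
    return alpha / math.gamma(alpha)


def contraction_constant(alpha: float, T: float, L_H: float) -> float:
    """Left-hand side of condition (C_2): [nu(alpha) + T * hbar(alpha)] * L_H."""
    return (nu(alpha) + T * hbar(alpha)) * L_H


def satisfies_c2(alpha: float, T: float, L_H: float) -> bool:
    """True when condition (C_2) holds for the given data."""
    return contraction_constant(alpha, T, L_H) < 1.0


if __name__ == "__main__":
    # Hypothetical data: fractional order, time horizon, Lipschitz constant.
    for alpha, T, L_H in [(0.9, 1.0, 0.5), (0.9, 10.0, 0.5)]:
        c = contraction_constant(alpha, T, L_H)
        print(f"alpha={alpha}, T={T}, L_H={L_H}: constant={c:.4f}, "
              f"(C_2) holds: {satisfies_c2(alpha, T, L_H)}")
```

Because the constant grows linearly in \(T\), the condition restricts the length of the time interval for a given Lipschitz constant \(L_H\).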

Theorem 5.1

Suppose that conditions \((C_1)\) and \((C_2)\) of Lemma 5.1 hold, and that \(\{\alpha _n\}, \{\beta _n\} \subset (0,1)\) are arbitrary sequences of real numbers such that \(\sum\nolimits _{k=0}^\infty \alpha _k\beta _k = \infty\). Then (40) has a unique solution \(\varpi ^*\), and the sequence generated by the new iterative scheme (7) converges to \(\varpi ^*\).

Proof

Suppose that \(\{x_n\}\) is a sequence generated by the new iterative scheme (7). Let \(\mathscr {G}: \mathbb {H} \rightarrow \mathbb {H}\) be an operator defined by

Here, we aim to show that the sequence \(x_n\) converges to the fixed point \(\varpi ^*\) as \(n \rightarrow \infty\).

From (7), (41) and the hypotheses of Lemma 5.1, we have,

Finally, using (7) and (44), we have

From condition (\(C_2\)) of Lemma 5.1, we have that \([\nu (\alpha )+T\hslash (\alpha )]L_H<1\), as such, the following is deduced,

By induction, we obtain

Recall that \(\alpha _k, \beta _k \in (0, 1)\) for all \(k \in \mathbb {N}\), so by condition \((C_2)\) of Lemma 5.1 we have

Since \(\sum\nolimits _{k=0}^{\infty } \alpha _k\beta _k = \infty\) and \(1 - x \le e^{-x}\) for all \(x \in [0,1]\), we infer that

Hence \(\lim \nolimits _{n \rightarrow \infty } \Vert x_n - \varpi ^*\Vert = 0\), which completes the proof. \(\square\)
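The last step of the proof rests on the elementary bound \(\prod _{k}(1-a_k) \le \exp (-\sum _{k} a_k)\) for \(a_k \in [0,1]\), whose right-hand side tends to zero when the series diverges. The sketch below illustrates this numerically. The sequence \(a_k = \frac{1}{k+2}\) is a hypothetical stand-in for the terms that appear in the proof (which involve \(\alpha _k\beta _k\) and a constant from the displayed estimates), and the function name is an assumption.

```python
import math


def product_and_bound(terms):
    """Return (prod(1 - a_k), exp(-sum(a_k))) for a_k in [0, 1]."""
    product = 1.0
    total = 0.0
    for a_k in terms:
        product *= 1.0 - a_k
        total += a_k
    return product, math.exp(-total)


if __name__ == "__main__":
    # Hypothetical divergent series: a_k = 1 / (k + 2), so sum(a_k) = infinity.
    for n in (10, 100, 1000, 10000):
        terms = [1.0 / (k + 2) for k in range(n)]
        product, bound = product_and_bound(terms)
        print(f"n={n}: product={product:.3e}, exp(-sum)={bound:.3e}")
```

For this particular choice the product telescopes to \(\frac{1}{n+1}\), so both the product and its exponential bound can be seen to decay to zero as \(n\) grows.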

Remark 5.2

The fractional SEIR system is recast as a fixed point problem for an integral operator, to which the iterative scheme (7) is applied. By repeatedly applying the operator to an initial guess, the scheme produces a sequence that converges to the solution of the fractional model.

Conclusion

In this study, we introduced a new fixed point iterative scheme for generalized \((\alpha ,\beta )\)-nonexpansive mappings in Banach spaces. The proposed scheme effectively generalizes and extends several existing iterative methods in the literature, including Picard and those defined in (2), (3), (4), (5), and (6), thereby demonstrating its broad applicability and improved convergence behavior. We rigorously established both weak and strong convergence theorems under suitable conditions and provided a comparative rate of convergence analysis through a numerical example, supported by both graphical and tabular representations.

Additionally, we examined the data dependence of the iterative scheme and proved its \(\mathscr {G}\)-stability and almost \(\mathscr {G}\)-stability, thereby confirming the robustness of the method in addressing perturbations and iterative uncertainties. Two significant applications were also presented to highlight the utility of the developed scheme: one involving the solution of nonlinear boundary value problems via Green’s function, and another applying the scheme to a fractional-order SEIR epidemic model formulated through the Caputo fractional derivative.

These results underscore the theoretical and practical significance of the new iterative method in fixed point theory and nonlinear analysis. Future research directions may include:

1. Further generalization of the proposed scheme to multivalued or non-self mappings;

2. Applications of the scheme to optimal control problems and machine learning frameworks;

3. Extending the convergence analysis to more generalized metric or modular function spaces;

4. Investigating stochastic variants of the scheme for uncertain and data-driven systems;

5. Exploring hybridization of the method with numerical optimization techniques for broader computational efficiency.

Open questions. While the results in this paper establish a solid foundation, several intriguing questions remain open for exploration:

- Can the proposed scheme be adapted to settings where the Banach space is replaced by a modular, probabilistic, or fuzzy normed space?

- How does the scheme behave under high-dimensional or infinite-dimensional optimization problems, such as those arising in functional data analysis?

- Is it possible to establish explicit error estimates and bounds for the rate of convergence beyond asymptotic comparisons?

- Can the stability results be extended to cover broader perturbation classes, such as stochastic noise or adversarial disturbances?

- How effectively can the scheme be integrated into computational platforms for real-time simulation of dynamical systems, such as epidemic or control models?

Data availability

No datasets were generated or analysed during the current study.

References

Suzuki, T. Fixed point theorems and convergence theorems for some generalized nonexpansive mappings. J. Math. Anal. Appl. 340(2), 1088–1095. https://doi.org/10.1016/j.jmaa.2007.09.023 (2008).

Pant, R. & Shukla, R. Approximating fixed points of generalized \(\alpha\)-nonexpansive mappings in Banach spaces. Numer. Funct. Anal. Optim. 38(2), 248–266 (2017).

Pant, R. & Pandey, R. Existence and convergence results for a class of nonexpansive type mappings in hyperbolic spaces. Appl. Gen. Topol. 20(1), 281–295. https://doi.org/10.4995/agt.2019.11057 (2019).

Longhi, S. Fractional Schrödinger equation in optics. Opt. Lett. 40, 1117–1120. https://doi.org/10.1364/OL.40.001117 (2015).

Srivastava, T., Singh, A. P. & Agarwal, H. Modeling the under-actuated mechanical system with fractional order derivative. Progr. Fract. Differ. Appl. 1, 57–64 (2015).

Xuan, C. F., Jin, C. & Wei, H. Anomalous diffusion and fractional advection–diffusion equation. Acta Phys. Sin. 53, 1113–1117 (2005).

Kilbas, A. A., Srivastava, H. M. & Trujillo, J. J. Theory and Applications of Fractional Differential Equations Vol. 204 (Elsevier Science B.V., 2006). https://doi.org/10.1016/S0304-0208(06)80001-0.

Podlubny, I. Fractional Differential Equations, vol. 198 of Mathematics in Science and Engineering (Academic Press, 1999).

Okeke, G. A., Udo, A. V., Alharthi, N. H. & Alqahtani, R. T. A new robust iterative scheme applied in solving a fractional diffusion model for oxygen delivery via a capillary of tissues. Mathematics 12(9), 1339. https://doi.org/10.3390/math12091339 (2024).

Okeke, G. A., Udo, A. V., Alqahtani, R. T. & Hussain, N. A faster iterative scheme for solving nonlinear fractional differential equation of the Caputo type. AIMS Math. 8(12), 28488–28516. https://doi.org/10.3934/math.20231458 (2023).

Barakat, M. A., Hyder, A. A. & Almoneef, A. A. A novel HIV model through fractional enlarged integral and differential operators. Sci. Rep. 13(1), 7764. https://doi.org/10.1038/s41598-023-34280-y (2023).

Carcione, J. M., Santos, J. E., Bagaini, C. & Ba, J. A simulation of a COVID-19 epidemic based on a deterministic SEIR model. Front. Public Health 8, 230. https://doi.org/10.3389/fpubh.2020.00230 (2020).

Hussain, A., Baleanu, D. & Adeel, M. Existence of solution and stability for the fractional order novel coronavirus \((nCoV-2019)\) model. Adv. Differ. Equ. 2020, 384. https://doi.org/10.1186/s13662-020-02845-0 (2020).

Belgaid, Y., Helal, M., Lakmeche, A. & Venturino, E. A mathematical study of a coronavirus model with the Caputo fractional-order derivative. Fractal Fract. 5, 87. https://doi.org/10.3390/fractalfract5030087 (2021).

Ilhem, G. & Kouche, M. Stability analysis of a fractional-order SEIR epidemic model with general incidence rate and time delay. Math. Meth. Appl. Sci. 46(9). https://doi.org/10.1002/mma.9161 (2023).

Okeke, G. A., Udo, A. V. & Alqahtani, R. T. An efficient iterative scheme for approximating the fixed point of a function endowed with condition \((B_{\gamma ,\mu })\) applied for solving infectious disease models. Mathematics 13, 562. https://doi.org/10.3390/math13040562 (2025).

Okeke, G. A., Udo, A. V. & Alqahtani, R. T. Novel method for approximating fixed point of generalized \(\alpha\)-nonexpansive mappings with applications to dynamics of a HIV model. Mathematics 13, 550. https://doi.org/10.3390/math13040550 (2025).

Alam, K. H., Rohen, Y. & Tomar, A. Approximating the solutions of fractional differential equations with a novel and more efficient iteration procedure. J. Supercomput. 81, 1084. https://doi.org/10.1007/s11227-025-07562-7 (2025).

Alam, K. H. & Rohen, Y. Convergence of a refined iterative method and its application to fractional Volterra–Fredholm integro-differential equations. Comput. Appl. Math. 44, 2. https://doi.org/10.1007/s40314-024-02964-4 (2025).

Panda, S. K., Vijayakumar, V., Agarwal, R. P. & Rasham, T. Fractional-order complex-valued neural networks: Stability results, numerical simulations and application to game-theoretical decision making. Discrete Contin. Dyn. Syst. 18(9), 2622–2643. https://doi.org/10.3934/dcdss.2025071 (2025).

Nazama, M., Rashamb, T. & Agarwal, R. P. On orthogonal interpolative iterative mappings with applications in multiplicative calculus. Filomat 38(14), 5061–5082. https://doi.org/10.2298/FIL2414061N (2024).

Rasham, T., Panda, S. K., Basendwah, G. A. & Hussain, A. Multivalued nonlinear dominated mappings on a closed ball and associated numerical illustrations with applications to nonlinear integral and fractional operators. Heliyon 10(14). https://doi.org/10.1016/j.heliyon.2024.e34078 (2024).

Alam, K. H., Rohen, Y. & Singh, S. S. Analysis of a refined iterative method with a new setting and applications to various models of partial differential equations. Numer. Algor. https://doi.org/10.1007/s11075-025-02159-w (2025).

Rasham, T. et al. On dominated multivalued operators involving nonlinear contractions and applications. AIMS Math. 9(1), 1–21. https://doi.org/10.3934/math.2024001 (2024).

Alam, K. H., Dolai, A., Rohen, Y., Panday, S. & Mani, S. On Picard-CR iterations involving weak perturbative contraction operators and application to reversible chemical reactions. Appl. Math. Comput. 512. https://doi.org/10.1016/j.amc.2025.129744 (2026).

Picard, E. Memoire sur la theorie des equations aux derivees partielles et la methode des approximations successives. J. Math. Pures Appl. 6, 145–210 (1890).

Sahu, D. R. & Petrusel, A. Strong convergence of iterative methods by strictly pseudocontractive mappings in Banach spaces. Nonlinear Anal. TMA 74(17), 6012–6023 (2011).

Gürsoy, P. & Karakaya, V. A Picard-S hybrid iteration method for solving a differential equation with retarded argument. arXiv:1403.2546v2 [math.FA] (2014).

Abbas, M. & Nazir, T. A new faster iteration process applied to constrained minimization and feasibility problems. Mat. Vesnik 66, 223–234 (2014).

Ullah, K. & Arshad, M. Numerical reckoning fixed points for Suzuki’s generalized nonexpansive mapping via new iteration process. Filomat 32(1), 187–196. https://doi.org/10.2298/FIL1801187U (2018).

Abdeljawad, T., Ullah, K. & Ahmad, J. Iterative algorithm for mappings satisfying \((B_{\gamma ,\mu })\) condition. J. Funct. Spaces https://doi.org/10.1155/2020/3492549 (2021).

Şultuz, ŞM. & Grosan, T. Data dependence for Ishikawa iteration when dealing with contractive-like operators. Fixed Point Theory Appl. 2008, 1–7. https://doi.org/10.1155/2008/242916 (2008).

Senter, H. F. & Dotson, W. G. Jr. Approximating fixed points of nonexpansive mappings. Proc. Am. Math. Soc. 44, 375–380. https://doi.org/10.2307/2040440 (1974).

Ullah, K., Ahmad, J. & de la Sen, M. On generalized nonexpansive maps in Banach spaces. Computation 8, 61. https://doi.org/10.3390/computation8030061 (2020).

Schu, J. Weak and strong convergence to fixed points of asymptotically nonexpansive mappings. Bull. Aust. Math. Soc. 43, 153–159. https://doi.org/10.1017/S0004972700028884 (1991).

Goebel, K. & Kirk, W. A. Topics in Metric Fixed Point Theory (Cambridge University Press, 1990). https://doi.org/10.1017/CBO9780511526152.

Opial, Z. Weak convergence of the sequence of successive approximations for nonexpansive mapping. Bull. Am. Math. Soc. 73, 591–597. https://doi.org/10.1090/s0002-9904-1967-11761-0 (1967).

Berinde, V. On the stability of some fixed procedure. Bul. Ştiinţ. Univ. Baia Mare Ser. B Mat. Inform. 18(1), 7–14 (2002).

Berinde, V. Summable almost stability of fixed point iteration procedures. Carpathian J. Math. 19, 81–88 (2003).

Milici, C., Drăgănescu, G. & Machado, J. T. Introduction to Fractional Differential Equations, volume 25 of Nonlinear Systems and Complexity (Springer, 2019).

Li, Q. et al. Early transmission dynamics in Wuhan, China, of novel coronavirus–infected pneumonia. N. Engl. J. Med. 382(13), 1199–1207 (2020).

Browder, F. E. Nonexpansive nonlinear operators in a Banach space. Proc. Natl. Acad. Sci. U.S.A. 54(4), 1041–1044. https://doi.org/10.1073/pnas.54.4.1041 (1965).

Kirk, W. A. A fixed point theorem for mappings which do not increase distances. Am. Math. Monthly 72(9), 1004–1006. https://doi.org/10.2307/2313345 (1965).

Aoyama, K. & Kohsaka, F. Fixed point theorem for \(\alpha\)-nonexpansive mapping in Banach spaces. Nonlinear Anal. 74, 4387–4391. https://doi.org/10.1016/j.na.2011.03.057 (2011).

Okeke, G. A., Ofem, A. E. & Işik, H. A faster iterative method for solving nonlinear third-order BVPs based on Green’s function. Bound. Value Probl. 2022, 103. https://doi.org/10.1186/s13661-022-01686-y (2022).

Acknowledgements

The authors wish to thank the editor and the reviewers for their useful comments and suggestions.

Funding

This work was supported and funded by the Deanship of Scientific Research at Imam Mohammad Ibn Saud Islamic University (IMSIU) (grant number IMSIU-DDRSP2602).

Author information

Contributions

GAO and AVU conceptualized the study. GAO, RTA and AY carried out the methodology. NHA, RTA and AY validated the results. NHA, RTA and AY carried out data curation. GAO supervised the project. GAO and AVU wrote the main manuscript. All authors reviewed the manuscript.

Ethics declarations

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Alharthi, N.H., Okeke, G.A., Udo, A.V. et al. Novel iterative method for the approximation of fixed point of a class of generalized (\(\alpha ,\beta\))-nonexpansive mapping with applications to seir epidemic model. Sci Rep 16, 11833 (2026). https://doi.org/10.1038/s41598-026-38884-y

DOI: https://doi.org/10.1038/s41598-026-38884-y