Abstract

Accurate pain assessment in animals is crucial for ensuring animal welfare and guiding veterinary interventions. Traditional pain evaluation relies on the scoring of pain behaviours by veterinarians, which can be influenced by observational variability and individual expertise. Interest in artificial intelligence (AI) tools is growing, and the question of whether AI can outperform humans in animal pain recognition is only beginning to be explored. This study is the first to address cattle pain recognition in this context. Namely, we compare the performance of machine learning models with that of trained veterinarians in recognizing pain in cattle using video-based analysis. Our results show that machine learning models achieve high accuracy in pain classification and demonstrate performance comparable to trained veterinarians, with some advantages in video-based assessments. These findings highlight the potential of machine learning to enhance pain assessment in veterinary medicine, offering a scalable and more objective tool for improving animal welfare.


Introduction

Farm animal welfare is a growing concern in society. As pain impairs both animal welfare and productivity1,2, its accurate recognition is essential for decision-making regarding analgesic treatment.

Pain assessment remains a fundamental challenge in both human and veterinary medicine, due to pain being an internal experience with subjective emotional components. In human research, the gold standard for establishing ground truth in pain assessment is self-reporting, which allows individuals to articulate their own experiences of pain3. This method is widely regarded as reliable, minimally invasive, and ethically sound for both clinical and experimental settings.

Due to the infeasibility of self-reporting for animals, state-of-the-art pain evaluation methodologies predominantly rely on observer-dependent subjective measures, including facial expression scoring and behavioral observation by qualified professionals. These approaches may be limited by inter-observer variability and may be influenced by confounding factors such as gender, fatigue, etc.4,5,6.

The veterinary community frequently acknowledges challenges in pain recognition and severity assessment, with numerous practitioners reporting insufficient training in pain evaluation and management protocols7,8. The inability to accurately quantify pain may compromise effective chronic pain treatment9.

In the context of cattle, pain detection presents distinct challenges10. As prey animals, cattle have evolved sophisticated mechanisms to conceal signs of pain, resulting in more subtle and challenging behavioral indicators. Pain in bovine species is frequently underestimated11, leading to suboptimal pain management protocols that adversely affect welfare, productivity, and overall health outcomes.

Current assessment tools include the UNESP-Botucatu Cattle Pain Scale (UCAPS12,13) and the Calf Grimace Scale (CGS14), both validated for orchiectomy, and the Cow Pain Scale (CPS15), validated for both orchiectomy12 and clinical pain15. A recent study sought to validate the Bovine Pain Scale16, a combination of the UCAPS, the CPS and a few additional behaviors, by applying it across a wider range of surgical procedures and diverse anesthetic and analgesic protocols in hospitalized cattle. Because results were suboptimal for sensitivity and construct validity, the authors suggested that the scale requires further refinement. Consequently, bovine pain assessment remains a challenge, particularly because these animals are kept in varied environments, such as individual hospital stalls, group housing, and open pasture, and are subject to diverse sources of pain. Although these scales serve specific contexts effectively, their large-scale use in some commercial farming settings remains impractical. To address this issue, da Silva et al. developed an artificial intelligence (AI) algorithm incorporating a reduced set of UCAPS behaviors17. Its decision-tree logic identified only two key behaviors, activity and locomotion, that may be used as behavioral red flags to optimize bovine pain diagnosis in large-scale applications.

Another possible approach to optimizing pain diagnosis with AI is automatic pain detection. Automated approaches leveraging deep learning and computer vision offer promising alternatives by enhancing objectivity and scalability in pain detection. Recent developments in AI and deep learning have enabled automated pain detection systems across species. Computer vision-based AI models have been applied successfully to pain recognition in companion animals, including cats18, dogs19, and rabbits20, as well as livestock species such as sheep21 and horses22. These systems analyze facial landmarks, postural configurations, and other image-based indicators, achieving high accuracy in differentiating between pain and non-pain states while minimizing reliance on subjective assessments23. AI-driven models can analyze video sequences to detect pain-related movements, postures, and expressions, potentially capturing subtle indicators that elude human observers. Machine learning techniques facilitate the extraction of complex behavioral patterns and may thereby reduce inter-observer variability and enhance assessment reliability. Notably, in some studies these automated systems have already outperformed human observers, with higher accuracy and lower assessment variability across several species and pain conditions21,23. This investigation proposes a novel deep learning model for automated pain detection in cattle using video-based analysis. By processing spatial and temporal behavioral features, the model aims to identify pain-related expressions and postures accurately. Using a dataset of labeled videos, we evaluate the model’s capacity to improve pain recognition accuracy.
Our approach contributes to the evolving field of AI-driven veterinary diagnostics and has potential implications for pain management practices in the livestock industry. Following the methodological framework proposed in Feighelstein et al.21, this study compares deep learning model performance with veterinarian assessments using pain scales in cattle. While Feighelstein et al.21 focused exclusively on static facial images of sheep, our video-based approach enables the analysis of temporal behavioral dynamics and subtle pain indicators across many frames of each recording, which may improve classification performance.

Methods

The study protocol was approved by the Ethical Committee for the Use of Animals in Research of the School of Veterinary Medicine and Animal Science, São Paulo State University (UNESP) (approval number 0147/2018). Human ethics approval was not applicable. The bulls were part of another experiment investigating the influence of testicular warming followed by castration, which was used to validate two pain scales12. In keeping with the three Rs (replacement, reduction and refinement), the in-person perioperative pain assessments and video recordings of these animals, collected in previous prospective clinical studies, were reused in the present study.

All methods were performed in accordance with the relevant guidelines and regulations and in accordance with the COSMIN24,25 and ARRIVE26 guidelines adapted to the experimental design.

Animals received intravenous xylazine (0.05 mg/kg) as premedication, followed by induction of anesthesia with intravenous diazepam (0.05 mg/kg) and ketamine (2.5 mg/kg), and maintenance with isoflurane. Additionally, flunixin meglumine (1.1 mg/kg) was administered intramuscularly, and xylazine (0.05 mg/kg diluted in 20 mL of 0.9% saline) was administered via sacrococcygeal epidural at induction of anesthesia. The total duration of anesthesia was 5 h 43 min ± 32 min. Before surgery, the testicles were subjected to thermal warming at progressively increasing temperatures from 34 to 40 °C, each maintained for 45 min, as part of the experimental protocol. Subsequently, a bilateral scrotal incision was made to perform orchiectomy using the closed technique. Postoperative analgesia was provided with morphine (0.1 mg/kg, intramuscularly) and, when necessary, dipyrone as rescue analgesia. Flunixin meglumine was administered daily for three days after surgery.

Video data collection

The dataset used in this study consists of video recordings of approximately 3 min each of Bos taurus (Angus) and Bos indicus (Nelore) bulls, obtained from a previous study12. A total of 20 bulls (10 Nelore and 9 Angus) were initially recorded at five different time points. However, footage from one animal was omitted because it received a different postoperative treatment protocol, and videos of two additional animals were excluded due to insufficient illumination during nighttime recording. Consequently, the final dataset included 17 bulls, resulting in 34 valid videos (17 bulls × 2 time points, M1 and M2), each with a duration of 3 min. Examples of frames extracted from the dataset are depicted in Fig. 1.

Examples of frames from dataset: left: Bos taurus (Angus), right: Bos indicus (Nelore).

The recordings were conducted at the following time points:

- M0: 48 hours before surgery and prior to fasting.
- M1: Preoperative, before sedation, during fasting (48 h after M0).
- M2: Three hours after sternal recumbency following surgery, before analgesia.
- M3: One hour after intramuscular administration of morphine (0.1 mg/kg) as postoperative analgesia.
- M4: 24 hours after surgery, prior to the final administration of analgesics.

The videos were recorded using a Canon PowerShot SX50 HS camera (Oita, Japan) mounted on a tripod positioned 1–2 m outside the animal enclosure. Each recording was conducted without interruption or editing to ensure the preservation of natural behavior.

For pain identification with the deep learning model, only data from time points M1 and M2 were used, for both the in-person assessments and the videos. Accordingly, two ground-truth classes were defined: No Pain (preoperative, M1) and Pain (immediate postoperative, M2). These time points were chosen to capture behavioral changes indicative of pain, with M1 serving as a baseline and M2 reflecting the acute pain response after surgery. The video data were used to train and validate the model, enabling an objective assessment of bovine pain behaviors.

Pain recognition by humans

The first human assessment method was the Bovine Grimace Scale (BGS), a proposed facial expression scoring system based on the facial action units of the Bovine Pain Face27, complemented by action units from the “Sheep Grimace Scales”28,29 and the “Horse Grimace Scale”30. The BGS evaluates five distinct facial regions using a three-point scoring system (0 = absent, 1 = moderately present, 2 = distinctly present). The evaluated features are orbital tightening, tension above the eyes, masseter muscle tension, ear position (assessed from both frontal and lateral views), labial-mandibular configuration, and changes in nostril or muzzle shape. The total pain score is the sum of the individual scores across these seven assessment items, with a maximum attainable score of 14 (a score of 2 for each item, counting frontal and lateral ear position separately) (see Table 1).
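The BGS total described above is a plain sum over seven items scored 0 to 2. A minimal sketch, assuming hypothetical item keys (the names below are illustrative and are not taken from Table 1):

```python
# Hypothetical keys for the seven BGS assessment items described in the text.
BGS_ITEMS = (
    "orbital_tightening",
    "tension_above_eyes",
    "masseter_tension",
    "ear_position_frontal",
    "ear_position_lateral",
    "labial_mandibular",
    "nostril_muzzle",
)


def bgs_total(scores: dict) -> int:
    """Sum the seven BGS item scores (each 0, 1 or 2); the maximum total is 14."""
    missing = set(BGS_ITEMS) - set(scores)
    if missing:
        raise ValueError(f"missing BGS items: {sorted(missing)}")
    total = 0
    for item in BGS_ITEMS:
        value = scores[item]
        if value not in (0, 1, 2):
            raise ValueError(f"{item} must be scored 0, 1 or 2, got {value!r}")
        total += value
    return total


# Example: a bull scored 2 on orbital tightening and 1 on both ear views.
example = dict.fromkeys(BGS_ITEMS, 0)
example.update(orbital_tightening=2, ear_position_frontal=1, ear_position_lateral=1)
print(bgs_total(example))  # 4
```

Validating each item against the 0/1/2 range catches data-entry errors before totals are compared with the cut-off.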

A specific limitation of this study design was the absence of a pre-study training phase for the BGS. Unlike the validated UCAPS (described below), the BGS had not been validated at the time of data collection and no standardized training dataset was available, so observers could not be trained in its use beforehand. The BGS cut-off score separating “pain” from “no pain” was calculated following the methodology used in previous UCAPS studies12,13. Time points M1 (pre-surgery, pain-free bulls) and M2 (post-surgery, pain) were used to determine the cut-off value. Before scoring the scales, observers assessed each bull and stated whether, based on their clinical experience, they would administer rescue analgesia. The cut-off point was determined using the Youden Index (YI = Sensitivity + Specificity − 1), identifying the threshold that maximized the combination of sensitivity (true positives: number of bulls at M2 judged to require analgesia / total number of bulls) and specificity (true negatives: number of bulls at M1 judged not to require analgesia / total number of bulls). Based on this calculation, the cut-off score was 5, with a sensitivity of 0.76, a specificity of 0.85, and a Youden Index of 0.62.
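The Youden-based cut-off search can be sketched as follows. This is an illustration under stated assumptions: a total score at or above the candidate cut-off is read as “pain”, M2 observations are treated as the positive class and M1 observations as the negative class, and the scores in the example are invented toy values, not study data.

```python
def youden_cutoff(pain_scores, no_pain_scores):
    """Return (cut_off, sensitivity, specificity, youden_index) maximizing J.

    Assumption: a total score >= cut_off is classified as "pain".
    pain_scores: scores at M2 (positive class); no_pain_scores: scores at M1.
    """
    candidates = sorted(set(pain_scores) | set(no_pain_scores))
    best = None
    for cut_off in candidates:
        sensitivity = sum(s >= cut_off for s in pain_scores) / len(pain_scores)
        specificity = sum(s < cut_off for s in no_pain_scores) / len(no_pain_scores)
        j = sensitivity + specificity - 1  # Youden index
        if best is None or j > best[3]:  # strict ">" keeps the lowest cut-off on ties
            best = (cut_off, sensitivity, specificity, j)
    return best


# Toy scores (not study data).
m2 = [6, 7, 5, 4, 8]
m1 = [1, 2, 0, 5, 3]
cut, sens, spec, j = youden_cutoff(m2, m1)
print(cut, sens, spec, round(j, 2))  # 4 1.0 0.8 0.8
```

Only observed score values are tried as candidates, which is sufficient because sensitivity and specificity change only at those values.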

The main assessment instrument, used as the ‘gold standard’ reference for comparison with deep learning, was the UNESP-Botucatu Cattle Pain Scale (UCAPS), a validated behavioral scoring system12,13 designed for postoperative pain evaluation in cattle. It covers several behavioral categories: locomotion, interactive behavior, appetite, and miscellaneous behavioral indicators. Each item is scored on an ordinal scale with three descriptive levels, where 0 represents normal behavior and 1 or 2 indicate pain-related behavioral changes. The maximum possible score is 10 points, and the established threshold for analgesic intervention is 4 points12. This pain scoring instrument12,13 is published in open-access journals under a Creative Commons license (for the UCAPS: http://creativecommons.org/publicdomain/zero/1.0/). UCAPS behaviours may be assessed at https://animalpain.org/en/home-en/ and in the Vetpain application, available for Android (https://play.google.com/store/apps/details?id=com.vetpain.app) and iOS (https://apps.apple.com/ca/app/vetpain/id6462712970).

To ensure validated human scoring for a fair comparison with machine learning performance, the UCAPS dataset scores were obtained from our previous study31, which detailed the observer training protocol. This protocol included an initial comprehensive review of the UCAPS scale items and usage guidelines, during which videos exemplifying each behavior were reviewed and discussed to promote scoring consistency using an online platform (https://animalpain.org/en/boisdor-en/). This was followed by a second training session, in which observers scored ten randomized videos depicting the perioperative period of surgical castration in one Nelore and one Angus bull. After each video, their scores were compared and discussed whenever discrepancies occurred.

Two independent anesthetists scored the BGS (in person) and the UCAPS (both in person and from videos recorded simultaneously). Blinding differed between these modalities. For real-time (in-person) assessments, observers were present during the procedure and were therefore aware of the surgical time point (unblinded). In contrast, video-based assessments were performed six months later by the same observers, who were blinded to the time points, and the order of video observation was randomized. Each evaluator reviewed the videos individually on a separate computer, in the same randomized order, and after watching each video completed data collection following the same sequence used during the real-time assessments.


Inter-observer reliability (kappa) indicated reasonable agreement for the BGS (0.375; 95% confidence interval 0.094–0.655)32, good reliability for real-time UCAPS (0.765; 95% confidence interval 0.550–0.980), and reasonable agreement for video-based UCAPS (0.330; 95% confidence interval 0.013–0.646). Each observer performed each scoring only once, in a single phase.
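The text reports kappa values without naming the variant. As one plausible choice, unweighted Cohen’s kappa for two observers’ Pain/No Pain labels can be sketched as below; the labels are hypothetical and the function name is illustrative.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(counts_a[label] * counts_b[label] for label in counts_a) / n**2
    if p_chance == 1:
        return float("nan")  # undefined: both raters used one identical label throughout
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical labels (1 = Pain, 0 = No Pain), not study data.
observer_1 = [1, 1, 0, 0, 1, 0, 1, 0]
observer_2 = [1, 0, 0, 0, 1, 0, 1, 1]
print(cohen_kappa(observer_1, observer_2))  # 0.5
```

Here the observers agree on 6 of 8 items (0.75), chance agreement is 0.5, so kappa is (0.75 − 0.5) / (1 − 0.5) = 0.5.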

A total of 68 observations were collected (17 bulls × 2 time points (M1 and M2) × 2 observers). To move from scoring to recognition (Pain/No Pain classes), each score was dichotomized using the appropriate cut-off point: 5 for the BGS (derived as described above) and 4 for the UCAPS13.

In summary, the two human scores, referred to as BGS and UCAPS, are obtained by (i) aggregating the observers’ scores for each assessment and (ii) converting them to a binary Pain/No Pain label using the appropriate cut-off points.
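Step (ii) can be expressed as a small lookup. The cut-offs (5 for BGS, 4 for UCAPS) come from the text; treating a score equal to the cut-off as “Pain” is an assumption, and because the aggregation rule in step (i) is not specified, this sketch starts from an already aggregated total score.

```python
# Cut-off points reported in the text; the ">=" comparison is an assumption.
CUTOFFS = {"BGS": 5, "UCAPS": 4}


def to_pain_class(scale: str, total_score: float) -> str:
    """Dichotomize a total scale score into "Pain" or "No Pain"."""
    if scale not in CUTOFFS:
        raise KeyError(f"unknown scale {scale!r}; expected one of {sorted(CUTOFFS)}")
    return "Pain" if total_score >= CUTOFFS[scale] else "No Pain"


print(to_pain_class("UCAPS", 4), to_pain_class("BGS", 4))  # Pain No Pain
```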

Pain recognition by machine

Pain detection pipeline: sampling rate: one frame per second; preprocessing: cropping animal faces.

For the pain recognition pipeline, we implemented an approach analogous to the methodology described by Feighelstein et al.20. To enhance practical deployment feasibility in commercial farming environments, we simplified our processing pipeline by removing two computationally intensive components: the Grayscale Short-Term Stacking (GrayST) preprocessing technique and the iterative frame selection retraining phase. The GrayST method, which replaces RGB channels with temporal grayscale frames, was eliminated to reduce preprocessing complexity, while the retraining phase–which selectively fine-tuned models using high-confidence frames–was removed to avoid dependency on iterative model updates and mitigate potential overfitting concerns given our limited dataset size (17 bulls). This streamlined approach prioritizes real-time classification capabilities and computational efficiency, making the system more accessible for veterinary applications where technical expertise and specialized hardware may be limited while maintaining robust performance and reducing the risk of model overspecialization. The computational framework is illustrated in Fig. 2 and delineated comprehensively below.

Illustrative examples of original and cropped frames extracted from the experimental dataset. The original frame (1) undergoes a precise facial region cropping process (2).

1. Frame sampling and cropping. Following the methodology of Feighelstein et al.20, we sampled one frame per second from each video; the original recordings were captured at 30 frames per second. To isolate the bull’s head, each frame was cropped around the detected head region. Examples of an original frame and the corresponding cropped frame are shown in Fig. 3, panels (1) and (2), respectively. To obtain a precise crop of the animal’s face, a bounding box was manually annotated on the first frame of each video; the remaining frames were then cropped using Segment Anything (SAM) video segmentation33.

2. Frame embedding. For embedding, we used the DINO ViT-B/16 encoding approach34, which maps each image to a 768-dimensional embedding vector. DINO is pre-trained in a self-supervised manner on large-scale image datasets, using knowledge distillation to learn robust visual representations without explicit labels. ViT-B/16 is a variant of the Vision Transformer (ViT) architecture: “ViT” stands for Vision Transformer, while “B/16” denotes the Base configuration operating on 16 × 16 pixel patches. To ensure comparability across individuals, the extracted embeddings were Z-score normalized per animal, standardizing the feature distributions within each subject.

3. Model training. For classification, we used a Stochastic Gradient Descent (SGD) classifier35 with a hinge loss function, which corresponds to a linear Support Vector Machine (SVM)36. The model is optimized with an adaptive learning rate initialized at \(\eta _0 = 1.47 \times 10^{-4}\) and an L1 penalty, which promotes sparsity in the feature weights. A small regularization strength (\(\alpha = 3.98 \times 10^{-5}\)) balances model fit against overfitting. The classifier is trained for a maximum of 10,000 iterations with a convergence tolerance of 0.001.

4. Video aggregation. Video-level predictions were obtained by majority voting (similarly to37) across all sampled frames within each 3-min recording, which helps the classification account for temporal variability in pain expression.
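Steps 1, 3 and 4, together with the per-animal normalization of step 2, can be sketched with NumPy and scikit-learn. This is a minimal sketch under assumptions, not the authors’ code: DINO embedding and SAM-based cropping are replaced by precomputed 768-dimensional vectors (random stand-ins here), the stated hyperparameters are mapped onto scikit-learn’s `SGDClassifier` arguments, and the tie-breaking rule of the majority vote is an assumption. All function names are hypothetical.

```python
import numpy as np
from sklearn.linear_model import SGDClassifier


def sampled_frame_indices(n_frames: int, fps: int = 30) -> list:
    """Step 1: indices of one frame per second from a video recorded at `fps`."""
    return list(range(0, n_frames, fps))


def zscore_per_animal(embeddings: np.ndarray, animal_ids: np.ndarray) -> np.ndarray:
    """Step 2 (normalization): standardize every feature within each animal."""
    out = np.empty(embeddings.shape, dtype=float)
    for animal in np.unique(animal_ids):
        rows = animal_ids == animal
        mean = embeddings[rows].mean(axis=0)
        std = embeddings[rows].std(axis=0)
        out[rows] = (embeddings[rows] - mean) / np.where(std > 0, std, 1.0)
    return out


def make_classifier(seed: int = 0) -> SGDClassifier:
    """Step 3: linear SVM (hinge loss) trained by SGD with the stated hyperparameters."""
    return SGDClassifier(
        loss="hinge",
        penalty="l1",
        alpha=3.98e-5,
        learning_rate="adaptive",
        eta0=1.47e-4,
        max_iter=10_000,
        tol=1e-3,
        random_state=seed,
    )


def video_vote(frame_predictions: np.ndarray) -> int:
    """Step 4: majority vote over frame labels (1 = Pain, 0 = No Pain).

    Assumption: an exact tie is resolved as Pain.
    """
    return int(np.mean(frame_predictions) >= 0.5)


# Usage with random stand-in embeddings: 2 animals x 2 videos x 180 frames (3 min at 1 fps).
rng = np.random.default_rng(0)
animal_ids = np.repeat([0, 1], 360)
labels = np.tile(np.repeat([0, 1], 180), 2)  # first video No Pain, second Pain
features = rng.normal(size=(720, 768)) + labels[:, None]  # shift Pain frames
features = zscore_per_animal(features, animal_ids)
clf = make_classifier().fit(features[animal_ids == 0], labels[animal_ids == 0])
frame_pred = clf.predict(features[animal_ids == 1])
print([video_vote(frame_pred[:180]), video_vote(frame_pred[180:])])
```

Because normalization uses only each animal’s own unlabeled embeddings, a held-out animal can be standardized without access to its labels.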

Performance metrics

We evaluate the performance of the ML pipeline (and compare it with human performance) using standard metrics commonly used in the literature: accuracy, precision, recall, F1, sensitivity, and specificity38. Our primary comparison metric is the F1 score, the harmonic mean of precision and recall, which accounts for both false positives and false negatives. This is particularly important for pain assessment, where both missing actual pain (false negatives) and identifying pain when it is absent (false positives) have significant clinical implications.
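All of these metrics follow from the confusion-matrix counts. A minimal sketch, with a hypothetical function name; as an illustrative check, 16 true positives, 17 true negatives, 0 false positives and 1 false negative over 34 videos reproduce the video-level accuracy (97.06%) and F1 score (0.9697) reported for the machine, with perfect precision and specificity. These counts are inferred arithmetically from the reported values rather than taken from the study’s tables.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall (= sensitivity), specificity and F1; 1 = Pain."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "sensitivity": recall,
        "specificity": specificity,
        "f1": f1,
    }


# 17 Pain and 17 No Pain videos; one Pain video misclassified as No Pain.
y_true = [1] * 17 + [0] * 17
y_pred = [1] * 16 + [0] + [0] * 17
m = binary_metrics(y_true, y_pred)
print(round(m["accuracy"], 4), round(m["f1"], 4))  # 0.9706 0.9697
```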

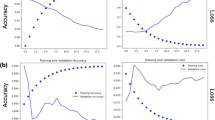

We further use leave-one-subject-out cross-validation, with no subject overlap, as the validation method39. Given the relatively small number of bulls (n = 17) in the dataset, this stricter validation method is more appropriate40,41. In our case, this means that we repeatedly train on 16 subjects and test on the remaining subject. By keeping the subjects used for training, validation and testing separate, we enforce generalization to unseen subjects and ensure that individual-specific features cannot be exploited for classification.

For each test subject in the leave-one-subject-out cross-validation scheme, predictions were generated at the video level. Performance metrics were calculated per frame or per video and then averaged across all frames or all videos in the dataset, respectively, providing an estimate of classification performance.
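The leave-one-subject-out loop with video-level aggregation can be sketched with scikit-learn’s `LeaveOneGroupOut`, grouping frames by animal. This is a minimal, hypothetical sketch: frame embeddings, labels and identifiers are assumed to be NumPy arrays, each video is assumed to carry a single label, ties in the majority vote are resolved as Pain by assumption, and any scikit-learn classifier can be passed in (the synthetic example uses a default `SGDClassifier`).

```python
import numpy as np
from sklearn.base import clone
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import LeaveOneGroupOut


def loso_video_predictions(X, y, animal_ids, video_ids, estimator):
    """Train on all animals but one, then predict the held-out animal's videos.

    X: (n_frames, n_features) embeddings; y: frame labels (1 = Pain, 0 = No Pain);
    animal_ids, video_ids: per-frame identifiers.
    Returns {video_id: (true_label, predicted_label)}.
    """
    results = {}
    for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=animal_ids):
        model = clone(estimator).fit(X[train_idx], y[train_idx])  # fresh model per fold
        frame_pred = model.predict(X[test_idx])
        test_videos = video_ids[test_idx]
        for video in np.unique(test_videos):
            in_video = test_videos == video
            true_label = int(y[test_idx][in_video][0])  # one label per video
            voted = int(frame_pred[in_video].mean() >= 0.5)  # majority vote
            results[video] = (true_label, voted)
    return results


# Synthetic example: 4 animals x 2 videos (No Pain, Pain) x 10 frames, 5 features.
rng = np.random.default_rng(1)
animals = np.repeat(np.arange(4), 20)
y = np.tile(np.repeat([0, 1], 10), 4)
videos = np.array([f"bull{a}_{'M2' if lab else 'M1'}" for a, lab in zip(animals, y)])
X = rng.normal(size=(80, 5)) + 4.0 * y[:, None]
res = loso_video_predictions(X, y, animals, videos, SGDClassifier(random_state=0))
accuracy = np.mean([t == p for t, p in res.values()])
print(len(res), accuracy)
```

Cloning the estimator in every fold guarantees that no weights learned from the test animal in an earlier fold leak into later ones.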

Statistical analysis

For statistical analysis of performance, we compared areas under the receiver operating characteristic curve (AUCs) using the DeLong test42. The AUC is an index of classification performance that ranges from 0 to 1. Discriminative accuracy is considered low for AUC values between 0.50 and 0.70, moderate between 0.70 and 0.90, and high when above 0.9043. Data were analyzed using Jamovi software (https://www.jamovi.org; version 2.3.28.0; Jamovi project (2023)), using Test ROC from the psychoPDA module (version 1.0.5) in R.
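To reproduce an AUC comparison outside Jamovi, the paired DeLong test can be sketched in NumPy and SciPy. This is a from-scratch illustration of the DeLong variance estimator for two correlated AUCs, not the psychoPDA implementation; the function names and example scores are invented. When both classifiers output binary Pain/No Pain decisions, as the majority-voted machine and the dichotomized human scores do, each AUC reduces to (sensitivity + specificity) / 2.

```python
import numpy as np
from scipy.stats import norm


def _psi(pos_scores, neg_scores):
    """Mann-Whitney kernel: 1 if positive > negative, 0.5 if tied, 0 otherwise."""
    diff = pos_scores[:, None] - neg_scores[None, :]
    return (diff > 0).astype(float) + 0.5 * (diff == 0)


def delong_paired_test(y_true, scores_a, scores_b):
    """Two-sided DeLong test for two correlated AUCs computed on the same cases.

    Returns (auc_a, auc_b, z, p_value).
    """
    y_true = np.asarray(y_true).astype(bool)
    aucs, v10, v01 = [], [], []
    for scores in (np.asarray(scores_a, float), np.asarray(scores_b, float)):
        psi = _psi(scores[y_true], scores[~y_true])  # shape (n_pos, n_neg)
        aucs.append(psi.mean())
        v10.append(psi.mean(axis=1))  # structural components of positive cases
        v01.append(psi.mean(axis=0))  # structural components of negative cases
    n_pos, n_neg = len(v10[0]), len(v01[0])
    s10 = np.cov(np.vstack(v10))  # 2x2 covariance over positive cases
    s01 = np.cov(np.vstack(v01))  # 2x2 covariance over negative cases
    contrast = np.array([1.0, -1.0])
    var = contrast @ s10 @ contrast / n_pos + contrast @ s01 @ contrast / n_neg
    if var <= 0:
        raise ValueError("zero variance: the two AUCs cannot be compared")
    z = (aucs[0] - aucs[1]) / np.sqrt(var)
    p_value = 2 * norm.sf(abs(z))
    return aucs[0], aucs[1], z, p_value


# Invented scores for 3 Pain (1) and 3 No Pain (0) cases.
y = [1, 1, 1, 0, 0, 0]
machine = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]
human = [0.9, 0.2, 0.7, 0.3, 0.8, 0.1]
print(delong_paired_test(y, machine, human))
```

The covariance terms are what distinguish this paired test from comparing two independent AUCs: both classifiers are scored on the same animals, so their errors are correlated.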

Results

Table 2 compares the performance metrics of human scoring based on UCAPS and BGS with those of the machine, showing the classification performance of the pain pipeline on the dataset using images and videos. Table 3 compares the performance metrics of the machine and of human scoring based on UCAPS and BGS across different assessment methods and cut-off values. The deep learning model achieved high classification performance, with the machine using videos reaching an F1 score of 0.9697. This value exceeded the F1 scores of real-time BGS assessment (F1 = 0.8000 for cut-off = 5) and video-based UCAPS assessment (F1 = 0.7467), and was comparable to real-time, in-person UCAPS assessment (F1 = 0.8857; difference of 0.0840; p = 0.052 for the corresponding AUC comparison).

Table 3 presents the AUC comparison between machine and veterinary assessments. A DeLong test indicates that the machine performs comparably to real-time UCAPS assessment (AUC difference = 0.089, p = 0.052), while showing better diagnostic capacity than real-time BGS assessment (AUC difference = 0.162, p < 0.01) and video-based UCAPS assessment (AUC difference = 0.250, p < 0.001).

Discussion

This investigation presents a novel deep learning framework for automated pain recognition in cattle using video analysis. Without any specialized tuning, the model performed comparably to trained veterinarians assessing in person, achieving an accuracy of 97.06% and an F1 score of 0.9697.

The superior discriminative capacity of our machine learning pipeline relative to video-based scoring with the UNESP-Botucatu Cattle Pain Scale (UCAPS) and to real-time BGS (F1 scores of 0.7467 and 0.8000, respectively) illustrates the potential of artificial intelligence to overcome inherent limitations of human assessment protocols. These findings corroborate observations previously reported in sheep by Feighelstein et al.21, where analogous AI systems outperformed trained evaluators. Our video-based approach may offer advantages over static image analysis because it aggregates evidence across many frames of each recording, which may capture subtle pain indicators that are not apparent in any single frame.

The performance comparison shows that the model achieves results comparable to real-time UCAPS veterinary assessment (AUC difference = 0.089, p = 0.052) while showing moderate advantages over real-time BGS (AUC difference = 0.162, p < 0.01) and video-based UCAPS assessments (AUC difference = 0.250, p < 0.001), suggesting that automated systems can complement traditional assessment methods.

Remarkably, as in sheep21, the machine performed comparably to or better than trained human assessment of the full-body behavioral repertoire (UCAPS). The machine learning approach achieved perfect precision (100%) and specificity (100%) for video-based assessment, indicating no false positive classifications in our dataset. This suggests high reliability in identifying true negative cases (animals without pain), which is crucial for avoiding unnecessary interventions and reducing economic costs in commercial settings. The superior performance of machine learning compared with the BGS was unsurprising. The BGS has not yet been validated, and the observers had not received prior training in its use. Our BGS reliability results (kappa = 0.375, 95% confidence interval 0.094 to 0.655) were markedly lower than those reported for a trained observer using the Calf Grimace Scale14 (ICC > 0.8), and training has been reported to improve inter-observer reliability when scoring the Horse44, Rat45 and Feline Grimace Scales46. The need for training highlights a potential advantage of detecting pain with machine learning. The superior performance of in-person UCAPS assessments compared with video-based evaluations may be attributed to expectation bias, as observers were aware of the time points during real-time assessments but were blinded to them during video evaluations.

Our findings address critical challenges in bovine pain assessment. As prey animals, cattle naturally conceal pain indicators11, making detection challenging for human observers. The automated system’s ability to capture subtle behavioral changes represents a significant advancement for animal welfare monitoring. The high accuracy achieved across different breeds (Bos taurus and Bos indicus) suggests robustness to phenotypic variations, including color (white in Nelore bulls and black in Angus bulls), essential for diverse agricultural settings.

The video-based approach is likely to offer practical advantages over existing methods. Unlike traditional pain scales, which require trained evaluators and face limitations in commercial farming environments47, our automated system provides a scalable solution that minimizes human presence and reduces assessment variability. Analyzing entire recordings rather than single images may also enable detection of dynamic pain indicators, providing a more comprehensive evaluation than static assessments.

One possible limitation of this study is that fasting the bulls for 48 h before M1 might have produced stress and confounded pain assessment. The choice of M1, rather than M0, as the baseline is supported by our previous validation study on this dataset12, in which statistical analysis revealed no significant difference in pain scores between M0 (48 h before surgery, before fasting) and M1 (immediately before surgery, after fasting). We therefore considered that M1 provides a valid baseline that more closely reflects a “real-life” scenario, in which animals have had time to adapt to their surroundings and are usually under preoperative food restriction. Other limitations include the relatively small sample size (17 bulls, 34 videos), which may limit generalizability and calls for validation in larger, more diverse populations. Additionally, the binary classification (pain/no pain) simplifies the complex nature of pain experiences; future work should explore graded pain assessment to distinguish between mild, moderate, and severe pain. Another limitation is that we cannot rule out that the model detects residual effects of sedation or anesthesia on postoperative behavior. However, given that anesthesia lasted approximately 6 h and the first postoperative assessment (M2) took place 3 h after the animals achieved sternal recumbency, residual sedation is unlikely to have persisted at that time. A future study could investigate whether sedation confounds AI-based pain diagnosis, as has been described for the Feline Grimace Scale48. Finally, pain diagnosis may be confounded by other behavioral states, as fear, aggression, or distress may lead to false-positive or false-negative interpretations, as reported in cats49.
A potential bias in animal pain assessment is the influence of the observer’s presence, which may lead to underestimation of pain in animals that are actually in pain and overestimation in those that are not, as reported in rabbits50,51 and horses52. In the present study, both the BGS and UCAPS assessments and the video recordings were conducted in the presence of the observers.

While these results demonstrate the promising potential of deep learning for automated cattle pain assessment, the limitations imposed by our relatively small dataset of 17 bulls must be acknowledged. The machine learning model’s advantage over human expert assessment, which was statistically significant for video-based UCAPS assessment, and its comparable performance to real-time UCAPS assessment should be interpreted with caution regarding broader generalizability. The consistent performance of automated assessment relative to two pain evaluation methods (the validated UCAPS and the not yet validated BGS) and different assessment formats (in-person vs video-based) suggests robust performance within the study parameters, but validation on larger, more diverse populations will be essential to confirm the applicability of these findings to varied cattle populations, environmental conditions, and clinical contexts. Importantly, the machine learning approach performed comparably to real-time veterinary assessment (p = 0.052), suggesting that automated systems can achieve diagnostic accuracy similar to that of trained professionals under optimal conditions. These results highlight the strength of the deep learning model for automated pain detection within the study constraints, with higher F1 scores than human assessment on both pain scales, whether the human assessment was conducted in person or via video analysis. These results represent an advance over previous automated pain detection systems in livestock, with our video-based approach achieving very high performance for pain classification23. The perfect precision and specificity are particularly noteworthy, as they indicate the system’s reliability in avoiding false positive diagnoses.

The methodology employed has potential applications beyond pain assessment. This framework could be adapted to detect other welfare concerns in livestock, including disease, stress, and emotional states. Integration with existing monitoring systems could enable continuous welfare surveillance in commercial settings, facilitating early intervention and improving herd health management. However, an important consideration in interpreting our findings is the potential gap between controlled experimental settings and real-world farming conditions. The data used in this study were collected under relatively standardized conditions, with known surgical procedures, consistent video quality, and a narrow range of environmental variables. Such settings may not fully capture the complexity of commercial agricultural environments, where lighting, background activity, and animal handling practices vary widely. Additionally, it is difficult to fully disentangle pain detection from the detection of similar affective or behavioral states. This highlights the need for follow-up studies in more ecologically valid contexts, including graded pain intensities and varied management protocols, with validation across different camera types and levels of observer presence.
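One way to structure the cross-condition validation called for above is a leave-one-group-out scheme: every clip from one condition (for example one farm or one camera type) is held out together, so the test score reflects a condition the model never saw during training. The sketch below is a minimal, library-free Python illustration under that assumption; it is not the evaluation protocol used in this study, and the `leave_one_group_out` function and the camera-type labels are hypothetical.

```python
from collections import defaultdict
from collections.abc import Hashable, Iterator, Sequence


def leave_one_group_out(
    groups: Sequence[Hashable],
) -> Iterator[tuple[Hashable, list[int], list[int]]]:
    """Yield (held_out_group, train_indices, test_indices) for each distinct group.

    All samples sharing a group label fall on the same side of the split,
    so no condition appears in both the training and the test fold.
    """
    by_group: dict[Hashable, list[int]] = defaultdict(list)
    for idx, g in enumerate(groups):
        by_group[g].append(idx)
    for held_out, test_idx in by_group.items():
        train_idx = [i for i, g in enumerate(groups) if g != held_out]
        yield held_out, train_idx, test_idx


# Hypothetical metadata: one camera-type label per video clip.
camera_type = ["fixed", "fixed", "handheld", "smartphone", "handheld", "fixed"]
for held_out, train_idx, test_idx in leave_one_group_out(camera_type):
    print(held_out, train_idx, test_idx)
```

Passing animal identifiers as the group labels instead keeps all clips of a given bull on one side of the split. scikit-learn offers equivalent splitting as `sklearn.model_selection.LeaveOneGroupOut`; the standalone version only makes the grouping logic explicit.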

In conclusion, our findings demonstrate that the video-based AI system achieves performance comparable to that of trained veterinarians in real-time assessment, with advantages over veterinarians’ video-based evaluations for both facial (BGS) and behavioral (UCAPS) scoring. While prior studies have shown promising results in companion and small livestock species, this is the first study to address video-based, AI-driven pain recognition in cattle with comparisons against both facial and behavioral scoring. These findings have significant implications for veterinary medicine, animal welfare science, and livestock management practices. Future research should focus on extending the model to detect gradations of pain intensity. This will require studies with larger sample sizes, diverse breeds, varied housing environments and management conditions, and a broader range of pain etiologies, including but not limited to neuropathic and inflammatory pain, rather than only nociceptive pain.

Data availability

The data used in this study are available from the corresponding author upon reasonable request.

References

Anil, L., Anil, S. S. & Deen, J. Pain detection and amelioration in animals on the farm: Issues and options. J. Appl. Anim. Welfare Sci. 8, 261–278 (2005).

Green, L., Hedges, V., Schukken, Y., Blowey, R. & Packington, A. The impact of clinical lameness on the milk yield of dairy cows. J. Dairy Sci. 85, 2250–2256 (2002).

Labus, J. S., Keefe, F. J. & Jensen, M. P. Self-reports of pain intensity and direct observations of pain behavior: When are they correlated? Pain 102, 109–124 (2003).

Robinson, M. E. & Wise, E. A. Gender bias in the observation of experimental pain. Pain 104, 259–264 (2003).

Contreras-Huerta, L. S., Baker, K. S., Reynolds, K. J., Batalha, L. & Cunnington, R. Racial bias in neural empathic responses to pain. PLoS ONE 8, e84001 (2013).

De Sario, G. D. et al. Using AI to detect pain through facial expressions: A review. Bioengineering 10, 548 (2023).

Weber, G., Morton, J. & Keates, H. Postoperative pain and perioperative analgesic administration in dogs: Practices, attitudes and beliefs of Queensland veterinarians. Aust. Vet. J. 90, 186–193 (2012).

Williams, V., Lascelles, B. & Robson, M. Current attitudes to, and use of, peri-operative analgesia in dogs and cats by veterinarians in New Zealand. N. Z. Vet. J. 53, 193–202 (2005).

Bell, A., Helm, J. & Reid, J. Veterinarians’ attitudes to chronic pain in dogs. Vet. Rec. 175, 428 (2014).

Tschoner, T., Mueller, K. R., Zablotski, Y. & Feist, M. Pain assessment in cattle by use of numerical rating and visual analogue scales: A systematic review and meta-analysis. Animals 14, 351 (2024).

Steagall, P. V., Bustamante, H., Johnson, C. B. & Turner, P. V. Pain management in farm animals: Focus on cattle, sheep and pigs. Animals 11, 1483. https://doi.org/10.3390/ani11061483 (2021).

Tomacheuski, R. M. et al. Reliability and validity of UNESP-Botucatu cattle pain scale and cow pain scale in Bos taurus and Bos indicus bulls to assess postoperative pain of surgical orchiectomy. Animals 13, 364 (2023).

Oliveira, F. et al. Validation of the UNESP-Botucatu unidimensional composite pain scale for assessing postoperative pain in cattle. BMC Vet. Res. 10, 200. https://doi.org/10.1186/s12917-014-0200-0 (2014).

Farghal, M. et al. Development of the calf grimace scale for pain and stress assessment in castrated Angus beef calves. Sci. Rep. https://doi.org/10.1038/s41598-024-77147-6 (2024).

Gleerup, K. B., Andersen, P. H., Munksgaard, L. & Forkman, B. Pain evaluation in dairy cattle. Appl. Anim. Behav. Sci. 171, 25–32 (2015).

Tomacheuski, R. M. et al. Bovine pain scale: A novel tool for pain assessment in cattle undergoing surgery in the hospital setting. PLoS ONE 20, e0323710 (2025).

da Silva, G. V. et al. Behavioral red flags for optimizing castration-induced acute pain diagnosis in cattle. Res. Vet. Sci. 182, 105468 (2025).

Feighelstein, M. et al. Explainable automated pain recognition in cats. Sci. Rep. 13, 8973 (2023).

Zhu, H., Salgirli, Y., Can, P., Atilgan, D. & Salah, A. A. Video-based estimation of pain indicators in dogs. Preprint at arXiv:2209.13296 (2022).

Feighelstein, M. et al. Deep learning for video-based automated pain recognition in rabbits. Sci. Rep. 13, 14679 (2023).

Feighelstein, M. et al. Comparison between AI and human expert performance in acute pain assessment in sheep. Sci. Rep. 15, 626 (2025).

Pessanha, F., Salah, A. A., van Loon, T. & Veltkamp, R. Facial image-based automatic assessment of equine pain. IEEE Trans. Affect. Comput. 14, 2064–2076 (2022).

Broomé, S. et al. Going deeper than tracking: A survey of computer-vision based recognition of animal pain and emotions. Int. J. Comput. Vision 131, 572–590 (2023).

Gagnier, J. J., Lai, J., Mokkink, L. B. & Terwee, C. B. COSMIN reporting guideline for studies on measurement properties of patient-reported outcome measures. Qual. Life Res. 30, 2197–2218 (2021).

Mokkink, L. B. et al. COSMIN risk of bias checklist for systematic reviews of patient-reported outcome measures. Qual. Life Res. 27, 1171–1179 (2017).

Percie du Sert, N. et al. The ARRIVE guidelines 2.0: Updated guidelines for reporting animal research. PLOS Biol. 18, e3000410. https://doi.org/10.1371/journal.pbio.3000410 (2020).

Gleerup, K. B., Andersen, P. H., Munksgaard, L. & Forkman, B. Pain evaluation in dairy cattle. Appl. Anim. Behav. Sci. 171, 25–32. https://doi.org/10.1016/j.applanim.2015.08.023 (2015).

McLennan, K. M. et al. Development of a facial expression scale using footrot and mastitis as models of pain in sheep. Appl. Anim. Behav. Sci. 176, 19–26 (2016).

Häger, C. et al. The sheep grimace scale as an indicator of post-operative distress and pain in laboratory sheep. PLoS ONE 12, e0175839 (2017).

Dalla Costa, E. et al. Development of the horse grimace scale (HGS) as a pain assessment tool in horses undergoing routine castration. PLoS ONE 9, e92281 (2014).

Tomacheuski, R. M. et al. Real-time and video-recorded pain assessment in beef cattle: Clinical application and reliability in young, adult bulls undergoing surgical castration. Sci. Rep. 14, 15257 (2024).

Streiner, D. L., Norman, G. R. & Cairney, J. Health measurement scales: A practical guide to their development and use, 5th edn (Oxford University Press, 2015).

Ravi, N. et al. SAM 2: Segment anything in images and videos. Preprint at arXiv:2408.00714 (2024).

Caron, M. et al. Emerging properties in self-supervised vision transformers. In ICCV (2021).

Bottou, L. Online algorithms and stochastic approximations. In Saad, D. (ed.) Online learning and neural networks (Cambridge University Press, Cambridge, UK, 1998). Revised Oct. 2012.

Cortes, C. & Vapnik, V. Support-vector networks. Mach. Learn. 20, 273–297. https://doi.org/10.1023/A:1022627411411 (1995).

Boneh-Shitrit, T. et al. Explainable automated recognition of emotional states from canine facial expressions: The case of positive anticipation and frustration. Sci. Rep. 12, 22611 (2022).

Feighelstein, M. et al. Automated recognition of pain in cats. Sci. Rep. 12, 9575 (2022).

Refaeilzadeh, P., Tang, L. & Liu, H. Cross-validation. In Encyclopedia of Database Systems (eds Liu, L. & Özsu, M. T.) 532–538 (Springer, 2009).

Broomé, S., Gleerup, K. B., Andersen, P. H. & Kjellström, H. Dynamics are important for the recognition of equine pain in video. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 12667–12676 (2019).

Andresen, N. et al. Towards a fully automated surveillance of well-being status in laboratory mice using deep learning: Starting with facial expression analysis. PLoS ONE 15, e0228059 (2020).

DeLong, E. R., DeLong, D. M. & Clarke-Pearson, D. L. Comparing the areas under two or more correlated receiver operating characteristic curves: A nonparametric approach. Biometrics 44, 837–845 (1988).

Fischer, J. E., Bachmann, L. M. & Jaeschke, R. A readers’ guide to the interpretation of diagnostic test properties: Clinical example of sepsis. Intensive Care Med. 29, 1043–1051 (2003).

Dai, F., Leach, M., MacRae, A. M., Minero, M. & Dalla Costa, E. Does thirty-minute standardised training improve the inter-observer reliability of the horse grimace scale (HGS)? A case study. Animals 10, 781. https://doi.org/10.3390/ani10050781 (2020).

Leung, V. & Pang, D. Influence of rater training on inter- and intrarater reliability when using the rat grimace scale. J. Am. Assoc. Lab. Anim. Sci. https://doi.org/10.30802/AALAS-JAALAS-18-000044 (2019).

Robinson, A. R. & Steagall, P. V. Effects of training on feline grimace scale scoring for acute pain assessment in cats. J. Feline Med. Surg. https://doi.org/10.1177/1098612X241275284 (2024).

Tomacheuski, R. M., Monteiro, B. P., Evangelista, M. C., Luna, S. P. L. & Steagall, P. V. Measurement properties of pain scoring instruments in farm animals: A systematic review using the COSMIN checklist. PLoS ONE 18, e0280830 (2023).

Watanabe, R. et al. The effects of sedation with dexmedetomidine-butorphanol and anesthesia with propofol-isoflurane on feline grimace scale scores. Animals 12, 2914 (2022).

Buisman, M., Hasiuk, M. M. M., Gunn, M. & Pang, D. S. J. The influence of demeanor on scores from two validated feline pain assessment scales during the perioperative period. Vet. Anaesth. Analg. 44, 646–655. https://doi.org/10.1016/j.vaa.2016.09.001 (2017).

Pinho, R. H., Leach, M. C., Minto, B. W., Rocha, F. D. L. & Luna, S. P. L. Postoperative pain behaviours in rabbits following orthopaedic surgery and effect of observer presence. PLoS ONE 15, e0240605 (2020).

Pinho, R. H. et al. Effects of human observer presence on pain assessment using facial expressions in rabbits. J. Am. Assoc. Lab. Anim. Sci. 62, 81–86 (2023).

Torcivia, C. & McDonnell, S. In-person caretaker visits disrupt ongoing discomfort behavior in hospitalized equine orthopedic surgical patients. Animals 10, 210 (2020).

Funding

The research was funded by the SNSF-ISF binational project Switzerland – Israel (grant number 1050/24); by the São Paulo Research Foundation (FAPESP), thematic project grant numbers 2017/12815-0 and 2018/02006-6; and by Brazil’s National Council for Scientific and Technological Development (CNPq), PhD scholarship grant number 140349/2018-9.

Author information

Contributions

RT and SL acquired the data. GE, NS and MF conceived the experiment(s). MF conducted the experiment(s). MF, DL and AZ analyzed and/or interpreted the results. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Feighelstein, M., Tomacheuski, R.M., Elias, G. et al. Comparing the performance of deep learning video-based models and trained veterinarians in cattle pain assessment. Sci Rep 16, 9318 (2026). https://doi.org/10.1038/s41598-026-39604-2

DOI: https://doi.org/10.1038/s41598-026-39604-2