Abstract

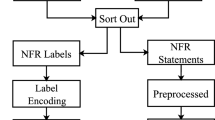

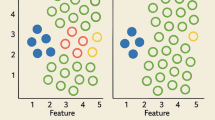

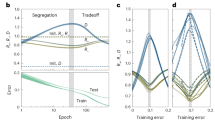

With the growing importance of data privacy and regulatory compliance, machine unlearning has become a critical requirement in deep learning. However, existing approaches often require access to the original training data, incur substantial computational costs, or compromise performance on retained data. To address these limitations, we propose a novel unlearning framework that integrates label encoding fine-tuning with class weight masking, enabling efficient and selective forgetting of specific classes. In particular, we introduce Negative-Hot Label Encoding (NHLE), which suppresses the discriminability of target classes in the feature space, thereby weakening their representations. Our method requires only a small number of samples from the forgotten classes for iterative fine-tuning. Extensive experiments on multiple visual datasets show that the proposed framework achieves near-zero classification accuracy on forgotten data, while reducing accuracy on retained data by no more than 0.035.
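The abstract does not spell out the encoding or the mask, so the following is only a minimal sketch of one plausible reading, not the authors' implementation. It assumes that a negative-hot target places a negative value (here `-1`) at the index of a forgotten class where a one-hot target would place `1`, and that class weight masking zeroes the final-layer weights of forgotten classes and pushes their bias to a large negative value. The function names, `neg_value`, and `fill` are hypothetical and chosen for illustration.

```python
import numpy as np

def negative_hot(labels, num_classes, forget_classes, neg_value=-1.0):
    """Build target vectors (assumed form of NHLE, not confirmed by the paper).

    Retained-class samples get an ordinary one-hot target; samples of a
    forgotten class get `neg_value` at their own class index instead of 1.
    """
    targets = np.zeros((len(labels), num_classes), dtype=np.float32)
    for i, y in enumerate(labels):
        targets[i, y] = neg_value if y in forget_classes else 1.0
    return targets

def mask_class_weights(W, b, forget_classes, fill=-1e9):
    """Mask the final linear layer for forgotten classes (assumed form).

    W has shape [num_classes, feature_dim] and b has shape [num_classes].
    Forgotten-class rows are zeroed and their bias is set to `fill`, so
    their logits can no longer win the argmax. Copies are returned.
    """
    W, b = W.copy(), b.copy()
    idx = list(forget_classes)
    W[idx] = 0.0
    b[idx] = fill
    return W, b

# Usage: forget class 2 in a 3-class problem.
targets = negative_hot([0, 2], num_classes=3, forget_classes={2})
W_masked, b_masked = mask_class_weights(np.ones((3, 4)), np.zeros(3), {2})
logits = W_masked @ (-np.ones(4)) + b_masked
predicted = int(np.argmax(logits))  # never equals a forgotten class index
```

Under these assumptions, the targets would drive fine-tuning on the few forgotten-class samples, while the mask guarantees at inference time that a forgotten class is never predicted.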

Data availability

The datasets used in this study are all publicly available: CIFAR-10 and CIFAR-100 [https://www.cs.toronto.edu/~kriz/cifar.html], SVHN [http://ufldl.stanford.edu/housenumbers/], Fashion-MNIST [https://github.com/zalandoresearch/fashion-mnist], and MNIST [http://yann.lecun.com/exdb/mnist/].

Funding

This work is supported in part by the Science and Technology Innovation 2030 Major Project (Grant No. 2022ZD0211603) and the Beijing Natural Science Foundation – Joint Funds of the Haidian Original Innovation Project (Grant No. L232056).

Author information

Contributions

J.W. conceived the experiments, Z.J. and Y.Z. conducted the experiments, J.W. and H.B. analysed the results, and J.W. wrote the original draft. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, J., Bie, H., Jing, Z. et al. Feature-indistinguishable machine unlearning via negative-hot label encoding and class weight masking. Sci Rep (2026). https://doi.org/10.1038/s41598-026-40379-9

DOI: https://doi.org/10.1038/s41598-026-40379-9