Abstract

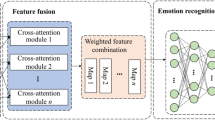

Advances in human emotion recognition (HER) have drawn considerable attention for applications in security, intelligent customer service, healthcare, education, human-robot interaction (HRI), and adaptive system training. This research designs a novel Multi-Module Neural Networks (MMNNs) architecture for practical HER, the main purpose of which is to identify human emotions. To do so, the model incorporates MobileNetV3, Vision Transformer (ViT), RegNet and SE-ResNeXt into a deep ensemble classification structure. To improve HER performance, MMNNs are integrated with Transfer Learning (TL) to train the CNNs, and the MMNNs classification model is trained by combining features from the four CNN models using feature pooling. The key novelty of the model is the DEtection TRansformer (DETR), which enhances the CNN learning block. It consists of a CNN that learns a low-dimensional feature representation, an encoder-decoder transformer, and a simple Feed Forward Network (FFN) that outputs the final detection prediction, which ultimately boosts face recognition efficiency and accuracy. The MMNNs results are validated on AffectNet, CK+ and a custom-made dataset (CMD), achieving accuracies of 91.07%, 87.03% and 96.98%, respectively; data augmentation further increases these to 95.09%, 89.15% and 98.13%, respectively.
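The feature-combination step described in the abstract can be illustrated with a minimal sketch. It assumes that "feature pooling" means global average pooling of each backbone's feature map followed by concatenation, which the abstract does not confirm. The emotion labels, channel counts, random feature values and untrained linear classifier are all hypothetical stand-ins, not the authors' implementation.

```python
import math
import random

# Hypothetical label set; the abstract does not list the emotion classes.
EMOTIONS = ["angry", "happy", "neutral", "sad"]


def global_average_pool(feature_map):
    """Collapse a feature map given as [channels][positions] to one value per channel."""
    return [sum(channel) / len(channel) for channel in feature_map]


def fuse_features(feature_maps):
    """Pool each backbone's feature map, then concatenate the pooled vectors."""
    fused = []
    for feature_map in feature_maps:
        fused.extend(global_average_pool(feature_map))
    return fused


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(fused, weights, biases):
    """Apply one linear layer followed by softmax over the emotion classes."""
    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(logits)


rng = random.Random(0)

# Stand-ins for the outputs of four backbones (e.g. MobileNetV3, ViT, RegNet,
# SE-ResNeXt). The channel counts and values are made up for illustration.
channel_counts = [3, 4, 2, 5]
feature_maps = [
    [[rng.random() for _ in range(6)] for _ in range(channels)]
    for channels in channel_counts
]

fused = fuse_features(feature_maps)

# Untrained, randomly initialised classifier head (assumption: a single linear layer).
weights = [[rng.uniform(-1.0, 1.0) for _ in fused] for _ in EMOTIONS]
biases = [0.0] * len(EMOTIONS)

probs = classify(fused, weights, biases)
predicted = EMOTIONS[probs.index(max(probs))]
```

In this sketch the fused vector length equals the sum of the backbones' channel counts, so adding or removing a backbone only changes the input width of the classifier head.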

Data availability

The dataset used in this research is available at [https://github.com/123456789khalid/Human-Emotion-HE-.git](https://github.com/123456789khalid/Human-Emotion-HE-.git).

Abbreviations

- MMNNs: Multi-Module Neural Networks
- HER: Human Emotion Recognition
- HRI: Human Robot Interaction
- HCI: Human Computer Interaction
- ER: Emotion Recognition
- DNNs: Deep Neural Networks
- CNNs: Convolutional Neural Networks
- TL: Transfer Learning
- DETR: DEtection TRansformer
- FFN: Feed Forward Network
- CMD: Custom-Made Dataset
- IIMT Lab: Institute of Intelligent Manufacturing Technology, Laboratory
- ViLT: Vision and Language Transformers
- NMS: Non-Maximal Suppression
- DACL: Deep Attention Centre Loss
- STN: Spatial Transformation Network
- FER: Facial Expression Recognition
- MLP: Multilayer Perceptron
- RNNs: Recurrent Neural Networks
- LSTM: Long Short-Term Memory
- BDBNs: Boosted Deep Belief Networks
- DCNN: Deep Convolutional Neural Network
- DTN: Deep Temporal Network
- DSN: Deep Spatial Network
- SE: Squeeze-and-Excitation
- GRU: Gated Recurrent Units
- IRB: Inverted Residual Block
- CK+: Extended Cohn-Kanade

Acknowledgements

The authors extend their appreciation to Umm Al-Qura University, Saudi Arabia for funding this research work through grant number: 26UQU4290339GSSR01.

Funding

This research work was funded by Umm Al-Qura University, Saudi Arabia under grant number: 26UQU4290339GSSR01.

Author information

Contributions

Khalid Zaman, Ammad Ul Islam and Sayyed Mudassar Shah contributed equally to this work and are co-first authors.

Ethics declarations

Competing interests

The authors declare no competing interests.

Written informed consent was obtained from all participants for the publication of identifiable images in all figures in this manuscript. The authors confirm that informed consent was obtained from all subjects and/or their legal guardian(s) prior to their participation in the study.

Compliance with Guidelines and Regulations

All methods involving human participants and/or human tissue samples were carried out in accordance with the relevant ethical guidelines and regulations. The study was approved by the Institute of Intelligent Manufacturing Technology, Shenzhen Polytechnic University, Shenzhen, Guangdong 518000, China, with the approval number “Supported by the Post-Doctoral Foundation Project of Shenzhen Polytechnic University (Grant No. 6024331021K)”. All participants provided informed consent prior to inclusion in the study.

Approval by institutional committee

We have provided details of the institution that approved the experimental protocols, including the name of the committee and any relevant approval numbers.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zaman, K., Islam, A.U., Zengkang, G. et al. A novel multi-module neural networks strategy of human emotion recognition in the human-robot interaction. Sci Rep (2026). https://doi.org/10.1038/s41598-026-40798-8

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-026-40798-8