Abstract

Safe flight operation requires visual scanning across multiple displays in a cockpit, which collectively represent the state of the aircraft and supporting automation. Trust is a crucial factor that drives human-automation interaction, and recent work has suggested a relationship between an operator’s visual attention and automation trust. One index that captures the predictability of eye movements between different areas of interest is gaze transition entropy. The current work reanalyzed data from Sato et al., which examined eye movement patterns and trust in automation associated with the system monitoring task of the Multi-Attribute Task Battery. Results showed credible positive correlations between the entropy measures and performance-based trust, but not process- or purpose-based trust. Specifically, higher levels of performance-based trust were associated with more random eye movements. Gaze transition entropy may provide a new window into the relationship between visual attentional resource allocation and automation trust in a multitasking workspace.

Introduction

Modern aircraft require pilots to not only perform supervisory tasks on dynamic digital avionics gauges, but also to interact with advanced automated systems1,2,3. Such multitasking environments involving imperfect automation can compete for the pilot’s limited visual attention4,5, likely increasing workload and in turn compromising the ability to process and integrate safety-critical information. Trust in automation has been shown to be an important factor in aviation settings6,7, as prior work has shown that delegating pilots’ tasks to trustworthy automation can reduce their task demand and improve pilot performance8.

Trust can be defined as “an attitude that an agent will help achieve an individual’s goals in a situation characterized by uncertainty and vulnerability”9. In the context of human-automation interaction, Lee and See9 further propose three informational bases of human-automation trust: performance-based trust denotes observable behaviors of automation (i.e., what the automation does), process-based trust describes the underlying algorithms that control behaviors of automation (i.e., how the automation works), and purpose-based trust refers to the automation designer’s intent (i.e., why the automation was designed). Laboratory-based experiments using attention-demanding tasks have shown that after initial interactions with unfamiliar automation, participants report an increase in performance-based trust but smaller or no increases in process- or purpose-based trust10,11,12,13. Additionally, Politowicz14 reported that operators who had more accurate mental models of how an automated system worked indicated higher process-based trust levels, yet similar effects were not observed on performance- or purpose-based trust ratings. These studies provide some empirical evidence for dissociation of the three trust bases, which can develop differentially9.

Using eye movements as a measure of visual attention allocation, controlled laboratory experiments have begun to demonstrate intricate relationships between trust and attention in dynamic environments10,11,12. For example, using the Multi-Attribute Task Battery (MATB-II)15, Sato et al.1 collected eye movement data, performance data, and trust ratings from participants who performed a compensatory tracking task and a system monitoring task supported by a 70% reliable signaling system. Participants were intermittently interrupted to perform a communication task, which required entering a call sign in response to auditory stimuli. Notably, both performance-based and process-based trust were rated lower when the tracking task demanded more visual attention, as confirmed by the percent dwell time (PDT) of their eye fixations. Although previous research has used PDT as a measure of attentional resources10,16, it does not capture gaze transitions between areas of interest (AOIs).

Cui and colleagues17 reanalyzed Sato et al.’s1 eye movement data and demonstrated the utility of gaze transition entropy (GTE) and stationary gaze entropy (SGE)18. Briefly, GTE quantifies the observed randomness in gaze transitions between AOIs, indicating gaze dispersion and visual exploration during a task. In contrast, SGE measures the uncertainty of fixation locations, representing general gaze dispersion in space while assuming a specific level of scanning efficiency. Cui et al.17 showed that the more difficult tracking task reduced both GTE and SGE, collectively demonstrating that participants’ attention was more focused.

Numerous studies have indicated that multiple eye movement metrics correlate with automation trust11,19,20,21,22,23,24. Hergeth et al.20 demonstrated that monitoring frequency (i.e., the number of glances toward the automated driving system) was negatively correlated with automation trust at the dispositional, situational, and learned levels. This aligns with more recent findings showing that other gaze-based measures negatively correlate with automation trust11,23. For example, Sato et al.11 reported in a meta-analysis that PDT on an automated task was negatively correlated with performance-based trust but not with process- or purpose-based trust. Likewise, Ries et al.23 found that the proportion of gaze allocated to the automation was negatively correlated with automation trust, which was measured using a single-item trust scale. Collectively, prior research indicates that people monitor automation less frequently when they report higher levels of automation trust, and that a variety of eye movement metrics show negative correlations with different trust measures. Recent work has also examined the relationship between gaze transition entropy measures and automation trust24. Zhang et al.24 reported that GTE decreased and SGE increased during the trust breach phase. This suggests that participants adopted more predictable scanning patterns while distributing their gaze more evenly across AOIs when they distrusted the automation. However, Foroughi et al.19 found that participants who detected false alarms exhibited high GTE and low automation trust. It remains unclear how gaze transition entropy measures correlate with different facets of automation trust.

To examine relationships between gaze entropy measures (i.e., GTE, SGE) and the different dimensions of automation trust, we reanalyzed data from Sato et al.1. Trust was measured using both Jian et al.’s25 trust scale and Chancey et al.’s26 trust scale, with the latter targeting performance-based, process-based, and purpose-based trust. The GTE and SGE scores were normalized and submitted to separate Bayesian multiple regression analyses to predict trust. Based on Zhang et al.’s24 finding, we hypothesized that automation trust would be negatively associated with GTE but positively associated with SGE. In other words, as automation trust increases, participants would adopt a more predictable scanning pattern and a more evenly distributed gaze across AOIs. Furthermore, Sato et al.1 found that eye movement behavior was associated with performance-based trust, but not with process- or purpose-based trust. Therefore, we also hypothesized that the relationship between gaze transition entropy measures and automation trust would manifest when trust is developed based on the automation’s behavior (i.e., performance-based trust), rather than on the automation’s functionality (i.e., process-based trust) or the system designer’s intention (i.e., purpose-based trust).

Method

Sato et al.1 collected data from 40 undergraduate students (29 females; M = 20.03 years) from the community of Old Dominion University. All participants reported normal or corrected-to-normal visual acuity with normal color perception. Participants were remunerated for their participation. This research complied with the American Psychological Association Code of Ethics and was approved by Old Dominion University’s Institutional Review Board. This research was performed in accordance with relevant guidelines/regulations as documented in https://www.nature.com/srep/journal-policies/editorial-policies#experimental-subjects and with the Declaration of Helsinki. Informed consent was obtained from each participant.

Participants were asked to perform the system monitoring task with assistance of a signaling system (AOI 1), the compensatory tracking task (AOI 2), and the communication task (AOI 3) in the MATB-II environment (see1 for complete description of the tasks). The compensatory tracking task required participants to keep the moving circular cursor within a predefined dotted square by controlling a joystick. The system monitoring task required participants to monitor four vertical gauges, each representing the aircraft’s engine. When one of the fluctuating pointers deviated from the center of the vertical gauge (i.e., engine malfunction), participants were instructed to correct the fluctuating pointer by pressing the key that corresponded to that gauge (F1-F4). The signaling system served as an automated aid that alerted participants to the presence of an engine malfunction, although it occasionally issued a false alarm. A green box indicated the absence of an engine malfunction, whereas a red box indicated the presence of an engine malfunction. When a red box illuminated, participants were instructed to respond to the signaling system’s alert by pressing F6 to turn off the red box followed by F5 to turn on the green box. In each block, the system monitoring task included 28 correct detection events and 12 false alarm events, which occurred at random time points (i.e., 28 of the 40 alerts, or 70%, were valid). A correct detection event occurred when a red box illuminated in the presence of an engine malfunction, whereas a false alarm event occurred when a red box illuminated in the absence of an engine malfunction. The communication task required participants to respond to auditory messages, simulating interactions with an air traffic controller. Specifically, participants were instructed to change the radio frequency based on the information provided in each auditory message.
Each participant completed two 20-minute blocks of the simulation with the difficulty of the compensatory tracking task manipulated to be Easy (0.06 Hz frequency of force function) or Difficult (0.12 Hz frequency of force function). These conditions were blocked, and their order was counterbalanced across participants. Throughout each block, participants’ eye movements were recorded using the Eyelink II system at a sampling rate of 250 Hz. After each block, participants were asked to complete the Chancey et al.26 trust scale and the Jian et al.25 trust scale [see 27].

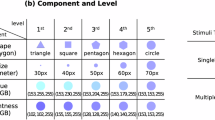

The present study reanalyzed participants’ trust25,26 and gaze entropy measures18. Trust was measured using a modified version of Jian et al.’s25 trust questionnaire and Chancey et al.’s26 trust questionnaire. Jian et al.’s25 questionnaire is a 12-item scale rated on a 7-point Likert scale anchored with 1 = not at all and 7 = extremely, measuring trust and distrust (reverse-coded) in automation, with Cronbach’s alpha = 0.93 [28]. Chancey et al.’s26 questionnaire is a 13-item scale rated on a 12-point Likert scale anchored with 1 = not descriptive and 12 = descriptive. The items were grouped into three informational bases of trust (performance-, process-, and purpose-based trust), with Cronbach’s alpha of 0.97 [29] (Table 1).
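For readers less familiar with the reliability statistic cited above, the following is a minimal sketch of how Cronbach's alpha is conventionally computed from item-level ratings. It is illustrative only: the alpha values in this section come from prior work, and the function name and input layout are hypothetical.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a scale.

    item_scores: one list per questionnaire item, each holding one rating
    per respondent (hypothetical layout chosen for this sketch).
    """
    k = len(item_scores)
    if k < 2:
        raise ValueError("need at least two items")
    # Each respondent's total score across all items.
    totals = [sum(ratings) for ratings in zip(*item_scores)]
    # Sum of the per-item sample variances.
    item_var_sum = sum(variance(item) for item in item_scores)
    # alpha = k / (k - 1) * (1 - sum of item variances / variance of totals)
    return (k / (k - 1)) * (1 - item_var_sum / variance(totals))
```

Reverse-coded items (such as the distrust items) would need to be recoded before being passed to a function like this one.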

Recent work indicated that these two questionnaires measure different facets of human-automation trust27, highlighting the need to administer multiple trust questionnaires. The present study used EyeTracking Metrics Calculator, a Python toolkit for eye-tracking research30, to compute gaze entropy measures from fixation-based transitions between AOIs. The EyeTracking Metrics Calculator computed SGE and GTE by applying Eqs. 1 and 2, respectively, as follows.
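In the standard formulation of Krejtz et al.18, written with the symbols defined in the next paragraph, the two entropies take the form sketched here. The base-2 logarithm and the symbol n (the number of AOIs) are assumptions of this sketch; some formulations restrict the inner sum of Eq. 2 to j ≠ i, and the toolkit's exact normalization is not specified in the text.

```latex
\begin{align}
H_s &= -\sum_{i=1}^{n} P_i \log_2 P_i \tag{1} \\
H_t &= -\sum_{i=1}^{n} P_i \sum_{j=1}^{n} P_{ij} \log_2 P_{ij} \tag{2}
\end{align}
```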

where P_i is the basic probability that a person views the ith AOI and P_ij is the conditional probability that a person shifts their gaze from the ith to the jth AOI. H_s reflects the overall spatial dispersion of gaze. A high H_s implies an equally distributed gaze, whereas a low H_s implies an unequal distribution of gaze. H_t reflects the predictability of gaze transitions. A high H_t implies unpredictable gaze transitions, whereas a low H_t implies predictable gaze transitions (Fig. 1).
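The definitions above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the EyeTracking Metrics Calculator's implementation: the function name is hypothetical, P_i is estimated as the proportion of fixations on each AOI, consecutive fixations on the same AOI count as self-transitions, and both entropies are normalized by log2 of the number of AOIs.

```python
import math
from collections import Counter, defaultdict


def gaze_entropies(aoi_sequence, n_aois):
    """Return (SGE, GTE), each scaled to [0, 1], for a list of fixated AOI labels."""
    if len(aoi_sequence) < 2 or n_aois < 2:
        raise ValueError("need at least two fixations and two AOIs")

    total = len(aoi_sequence)
    # P_i: proportion of fixations that landed on AOI i (assumed estimator).
    p = {aoi: count / total for aoi, count in Counter(aoi_sequence).items()}

    # Count first-order transitions i -> j between consecutive fixations.
    transitions = defaultdict(Counter)
    for src, dst in zip(aoi_sequence, aoi_sequence[1:]):
        transitions[src][dst] += 1

    # Stationary gaze entropy: H_s = -sum_i P_i * log2(P_i)
    h_s = -sum(p_i * math.log2(p_i) for p_i in p.values())

    # Gaze transition entropy: H_t = -sum_i P_i * sum_j P_ij * log2(P_ij)
    h_t = 0.0
    for src, row in transitions.items():
        row_total = sum(row.values())
        row_entropy = -sum(
            (c / row_total) * math.log2(c / row_total) for c in row.values()
        )
        h_t += p[src] * row_entropy

    # Assumed normalization: divide by the maximum entropy for n_aois states.
    h_max = math.log2(n_aois)
    return h_s / h_max, h_t / h_max


# A strictly cyclic scan over three AOIs: gaze is spread evenly (high SGE)
# but every transition is fully predictable (GTE of zero).
sge, gte = gaze_entropies([1, 2, 3] * 3, n_aois=3)
```

The cyclic example illustrates why the two measures can dissociate: an evenly distributed gaze does not by itself imply unpredictable transitions.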

Fig. 1. MATB display with the system monitoring task (AOI 1), the compensatory tracking task (AOI 2), and the communication task (AOI 3).

Results

Bayesian analysis of variance (ANOVA)31 showed decisive evidence that participants in the Easy condition exhibited greater GTE [M = 0.23 vs. 0.19; F(1, 37) = 53.63, B10 = 9.88 × 10^5, η²G = 0.19] and SGE [M = 0.72 vs. 0.58; F(1, 37) = 73.94, B10 = 2.79 × 10^7, η²G = 0.21] than those in the Difficult condition. These findings suggest that, when the compensatory tracking task became more difficult, participants adopted a more focused scanning strategy, characterized by narrower and more predictable eye movements. Note that this analysis was reported previously in Cui et al.17 and is included here for completeness. Bayesian multiple regression analyses were performed for GTE and SGE scores separately. Shapiro-Wilk normality tests on GTE and SGE scores indicated no violation of the normality assumption on the response variable in Bayesian regression. The results indicated that performance-based trust was positively and credibly associated with GTE [t = 2.17, B10 = 5.93] and SGE [t = 2.41, B10 = 5.12]. However, neither SGE nor GTE was credibly associated with process-based trust, purpose-based trust, or Jian et al.’s25 trust [1.06 < B10 < 2.27]. Similar to Sato et al.11, the present results show that both entropy measures correlated only with performance-based trust. Together, the best fitting regression models for GTE and SGE are as follows:

where TPerformance is the participant’s performance-based trust score. To explore whether task difficulty impacts the relationships, we conducted Preacher et al.’s32 slope analysis and visualized the relationships between the gaze entropy measures and performance-based trust. Although the relationship appeared to be moderated under the high task load, the statistical evidence was weak (p > .30).
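As a rough illustration of what a simple-slope probe does (this is neither the authors' analysis nor Preacher et al.'s tool), note that with a binary moderator such as Easy vs. Difficult, the simple slope of entropy on trust within each condition equals the slope of a separate least-squares line fit to that condition's data. The function names and data values below are hypothetical, and the sketch ignores the repeated-measures structure of the design.

```python
from statistics import fmean


def ols_slope(x, y):
    """Least-squares slope of y regressed on x."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has no variance")
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx


def simple_slopes(trust, entropy, condition):
    """Slope of entropy on trust within each level of a categorical moderator."""
    slopes = {}
    for level in sorted(set(condition)):
        xs = [t for t, c in zip(trust, condition) if c == level]
        ys = [e for e, c in zip(entropy, condition) if c == level]
        slopes[level] = ols_slope(xs, ys)
    return slopes


# Made-up values for illustration only; these are not data from the study.
trust = [2.0, 4.0, 6.0, 8.0, 2.0, 4.0, 6.0, 8.0]
entropy = [0.20, 0.22, 0.24, 0.26, 0.18, 0.19, 0.20, 0.21]
condition = ["easy"] * 4 + ["difficult"] * 4

slopes = simple_slopes(trust, entropy, condition)
# The gap between the two slopes corresponds to the trust-by-difficulty
# interaction term in a single regression model with a dummy-coded moderator.
slope_gap = slopes["easy"] - slopes["difficult"]
```

Whether such a gap is credible is a separate inferential question; the sketch only shows how the per-condition slopes are obtained.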

Discussion

Eye movement measures are among the most widely used psychophysiological measures of automation trust21. Recent studies have demonstrated associations between gaze entropy measures (i.e., GTE and SGE) and users’ trust in automation18,24, suggesting that these measures may serve as objective behavioral indicators of automation trust. Building on this work, the present study examined the relationship between gaze entropy measures and the different dimensions of automation trust. Specifically, we reanalyzed Sato et al.’s1 eye movement data to investigate whether performance-, process-, and/or purpose-based trust predicted GTE and SGE.

The results confirmed that performance-based trust predicted GTE and SGE1. These findings indicate that participants’ scanning may have been more random in an attempt to focus on assessing observable behaviors of the automated system, which correlated with higher performance-based trust. Such exploratory scanning patterns may only be necessary for confirming behaviors of the system when appropriate mental models have not yet been established (i.e., the underlying algorithm is not known) and subsequent trust is developing33. Operators with more appropriate mental models of automated systems may show more predictable scanning patterns, where process-based trust may lead to checking the automated system only in specific circumstances14. Results show a steeper regression slope for SGE than for GTE, suggesting a stronger link between performance-based trust and SGE. SGE in general indicates the fundamental predictability structure of gaze behavior once transient effects have settled, and the SGE scores reflect the interplay between system performance, task demands, and scanning strategies after eye movement patterns stabilize. SGE therefore may be a more sensitive summary measure of performance-based trust than GTE. Since operators tend to adopt different strategies to adapt to information environments and stabilize their scanning patterns, future research with a larger sample could examine the relationship between automation trust and the entropy measures at both individual and group levels. Results further support a recent view that Chancey et al.’s26 trust scale and Jian et al.’s25 trust scale measure different aspects of automation trust27. The findings suggest that gaze entropy measures may be more sensitive to changes in automation trust, especially performance-based trust, as measured by Chancey et al.’s26 trust scale than by Jian et al.’s25 trust scale.
Future experimentation with more extensive training with an automated system may reveal how eye movement patterns change as automation trust across the three dimensions evolves over time.

Several limitations exist in this study. First, the cockpit of a modern aircraft typically features numerous displays and gauges comprising more than three AOIs. Thus, real-world gaze transitions in the visual workspace are often more complex than those investigated in the current controlled experiment. Additionally, fidelity of the environment may influence perceived risk, which is crucial for trust in automation to evolve. Second, elaborating on the point above, individual differences in pilot expertise may interact with the quality of their mental models and situation awareness, affecting their eye movements and trust in the automated systems. A larger study of pilots with varying flight hours may lend insight into this issue. Third, although an operator’s mental model of the automation may influence trust and scanning behavior, it is outside the scope of the present study. Our recent work started examining the impact of granularity of mental models on scanning behaviors and automation trust14, and further experimentation is necessary for modeling the relationship among mental models, scanning behaviors, and automation trust. In conclusion, the present study demonstrates that GTE and SGE are associated with performance-based trust, indicating that more exploratory eye movements may be a basis for operators to develop their trust based on their perception of behaviors of the automation.

Data availability

Data will be available upon request to the corresponding author: Yusuke Yamani (yyamani@odu.edu).

References

Sato, T., Jackson, A. & Yamani, Y. Number of interrupting events influences response time in multitasking, but not trust in automation. Int. J. Aerosp. Psychol. 34(4), 208–224. https://doi.org/10.1080/24721840.2024.2311706 (2024).

Billings, C. E. Human-centered aircraft automation: A concept and guidelines Vol. 103885 (National Aeronautics and Space Administration, Ames Research Center, 1991).

Salas, E. & Maurino, D. (eds) Human Factors in Aviation (Academic, 2010).

Wickens, C. D., Helton, W. S., Hollands, J. G. & Banbury, S. Engineering psychology and human performance (Routledge, 2021).

Yamani, Y. & Horrey, W. J. A theoretical model of human-automation interaction grounded in attention allocation policy during automated driving. Int. J. Hum. Factors Ergon. 5, 225–239 (2018).

Geels-Blair, K., Rice, S. & Schwark, J. Using system-wide trust theory to reveal the contagion effects of automation false alarms and misses on compliance and reliance in a simulated aviation task. Int. J. Aviat. Psychol. 23(3), 245–266. https://doi.org/10.1080/10508414.2013.799355 (2013).

Rice, S. Examining single- and multiple-process theories of trust in automation. J. Gen. Psychol. 136(3), 303–322. https://doi.org/10.3200/GENP.136.3.303-322 (2009).

Parasuraman, R. & Byrne, E. A. Automation and human performance in aviation. Principles Pract. Aviat. Psychology, 311–356. (2003).

Lee, J. & See, K. Trust in automation: Designing for appropriate reliance. Hum. Factors J. Hum. Factors Ergon. Soc. 46(1), 50–80. https://doi.org/10.1518/hfes.46.1.50_30392 (2004).

Karpinsky, N. D., Chancey, E. T., Palmer, D. B. & Yamani, Y. Automation trust and attention allocation in multitasking workspace. Appl. Ergon. 70, 194–201. https://doi.org/10.1016/j.apergo.2018.03.008 (2018).

Sato, T., Inman, J., Politowicz, M. S., Chancey, E. T. & Yamani, Y. A meta-analytic approach to investigating the relationship between human-automation trust and attention allocation. In Proceedings of the Human Factors and Ergonomics Society Annual Meeting (HFES, Washington, DC, 2023).

Sato, T., Islam, S., Still, J. D., Scerbo, M. W. & Yamani, Y. Task priority reduces an adverse effect of task load on automation trust in a dynamic multitasking environment. Cogn. Technol. Work 25(1), 1–13. https://doi.org/10.1007/s10111-022-00717-z (2023).

Chancey, E. T. et al. Human-automation trust development as a function of automation exposure, familiarity, and perceived risk: A high-fidelity remotely operated uncrewed aerial system simulation. Cogn. Eng. Decis. Mak. https://doi.org/10.1177/15553434241296573 (2024).

Politowicz, M. S. Visual attention in remote vehicle supervision: Examining the mental models and information bandwidth [Master’s thesis, Old Dominion University]. Old Dominion University ProQuest Dissertations & Theses. (2024). https://digitalcommons.odu.edu/psychology_etds/428

Santiago-Espada, Y., Myer, R. R., Latorella, K. A. & Comstock, J. R. The multi-attribute task battery II (MATB-II) software for human performance and workload research: A user’s guide (NASA/TM-2011-217164) (National Aeronautics and Space Administration, Langley Research Center, 2011).

Salvucci, D. D. & Taatgen, N. A. Threaded cognition: An integrated theory of concurrent multitasking. Psychol. Rev. 115(1), 101 (2008).

Cui, Z. et al. Gaze transition entropy as a measure of attention allocation in a dynamic workspace involving automation. Sci. Rep. 14(1), 23405. https://doi.org/10.1038/s41598-024-74244-4 (2024).

Krejtz, K. et al. Gaze transition entropy. ACM Trans. Appl. Percept. 13(1), 1–20 (2015).

Foroughi, C. K. et al. Near-perfect automation: Investigating performance, trust, and visual attention allocation. Hum. Factors 65(4), 546–561. https://doi.org/10.1177/00187208211032889 (2023).

Hergeth, S., Lorenz, L., Vilimek, R. & Krems, J. F. Keep your scanners peeled: Gaze behavior as a measure of automation trust during highly automated driving. Hum. Factors. 58 (3), 509–519. https://doi.org/10.1177/0018720815625744 (2016).

Kohn, S. C., De Visser, E. J., Wiese, E., Lee, Y. C. & Shaw, T. H. Measurement of trust in automation: A narrative review and reference guide. Front. Psychol. 12, 604977. https://doi.org/10.3389/fpsyg.2021.604977 (2021).

Lu, Y. & Sarter, N. Eye tracking: A process-oriented method for inferring trust in automation as a function of priming and system reliability. IEEE Trans. Hum.-Mach. Syst. 49(6), 560–568 (2019).

Ries, A. J., Aroca-Ouellette, S., Roncone, A. & de Visser, E. J. Gaze-informed Signatures of Trust and Collaboration in Human-Autonomy Teams. Computers Hum. Behavior: Artif. Hum. 100171. https://doi.org/10.1016/j.chbah.2025.10017 (2025).

Zhang, Y., Yadav, A., Hopko, S. K. & Mehta, R. K. In gaze we trust: Comparing eye tracking, self-report, and physiological indicators of dynamic trust during HRI. In Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (pp. 1188–1193) (2024).

Jian, J. Y., Bisantz, A. M., Drury, C. G. & Llinas, J. Foundations for an empirically determined scale of trust in automated systems. International Journal of Cognitive Ergonomics 4, 53–71 (2000).

Chancey, E. T., Bliss, J. P., Yamani, Y. & Handley, H. A. H. Trust and the compliance–reliance paradigm: The effects of risk, error bias, and reliability on trust and dependence. Hum. Factors. 59(3), 333–345. https://doi.org/10.1177/0018720816682648 (2017).

Yamani, Y. et al. Multilevel confirmatory factor analysis reveals two distinct human–automation trust constructs. Hum. Factors. 67(2), 166–180. https://doi.org/10.1177/00187208241263774 (2025).

Gutzwiller, R. S. et al. Positive bias in the ‘Trust in Automated Systems Survey’? An examination of the Jian et al. (2000) scale. In Proceedings of the Human Factors and Ergonomics Society Annual Meeting (Vol. 63, No. 1, pp. 217–221) (SAGE Publications, 2019).

Sato, T. Exploring the effects of task priority on attention allocation and trust towards imperfect automation: A flight simulator study [Master’s thesis, Old Dominion University]. Old Dominion University ProQuest Dissertations & Theses. (2020). https://digitalcommons.odu.edu/psychology_etds/357

Jundi, H. EyeTracking metrics calculator. GitHub Repository. (2019). https://github.com/Husseinjd/EyeTrackingMetrics

Rouder, J. N., Morey, R. D., Speckman, P. L. & Province, J. M. Default Bayes factors for ANOVA designs. J. Math. Psychol. 56(5), 356–374 (2012).

Preacher, K. J., Curran, P. J. & Bauer, D. J. Computational tools for probing interactions in multiple linear regression, multilevel modeling, and latent curve analysis. J. Educ. Behav. Stat. 31, 437–448 (2006).

Chancey, E. T. & Politowicz, M. S. Designing and Training for Appropriate Trust in Increasingly Autonomous Advanced Aerial Mobility Operations: A Mental Model Approach (Version 1) (Langley Research Center, 2020). NASA Technical Memorandum-20205003378.

This research did not receive funding.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Yamani, Y., Jackson, A., Sato, T. et al. Gaze transition entropy and automation trust in a multitasking workspace. Sci Rep 16, 11122 (2026). https://doi.org/10.1038/s41598-026-41338-0
