Abstract

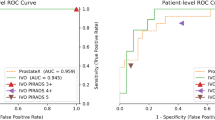

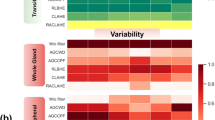

Prostate MRI segmentation is critical for accurate diagnosis and treatment planning but remains challenging: the peripheral zone has thin, irregular boundaries, the central gland has homogeneous textures, and both vary across imaging protocols. To address these challenges, we propose ProSeg, a deep learning framework built around a specialized ProSeg block that integrates two complementary processes: (1) anisotropic convolutions for precise peripheral zone boundary delineation and (2) cross-slice attention mechanisms for robust central gland texture modeling. Extensive evaluations on the Promise12 and Prostate158 datasets demonstrate ProSeg’s state-of-the-art performance, achieving Dice scores of 84.31% (peripheral zone) and 57.92% (central gland) on Promise12, and 83.15% (peripheral zone) and 56.38% (central gland) on Prostate158, significantly outperforming existing methods. ProSeg’s consistent accuracy across diverse protocols highlights its clinical potential for reliable prostate zonal segmentation in real-world settings.
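For readers less familiar with the metric, the per-zone Dice scores reported above measure the overlap between a predicted mask P and a reference mask G as 2|P ∩ G| / (|P| + |G|). The following is a minimal sketch of that computation and not the authors' evaluation code. The label encoding (0 = background, 1 = peripheral zone, 2 = central gland), the toy label maps, and the convention of returning 1.0 when a label is absent from both maps are all assumptions made for illustration.

```python
from typing import Sequence


def dice_score(pred: Sequence[int], target: Sequence[int], label: int) -> float:
    """Dice coefficient for one label over two flattened label maps."""
    if len(pred) != len(target):
        raise ValueError("pred and target must contain the same number of voxels")
    pred_count = sum(1 for p in pred if p == label)
    target_count = sum(1 for t in target if t == label)
    overlap = sum(1 for p, t in zip(pred, target) if p == label and t == label)
    denom = pred_count + target_count
    # Assumed convention: a label missing from both maps counts as a perfect match.
    return 1.0 if denom == 0 else 2.0 * overlap / denom


# Hypothetical label encoding, chosen only for this example.
PZ, CG = 1, 2

# Toy flattened label maps standing in for a segmented MRI volume.
target = [0, 1, 1, 2, 2, 2, 0, 0]
pred = [0, 1, 0, 2, 2, 1, 0, 0]

pz_dice = dice_score(pred, target, PZ)  # 1 shared voxel, 2 predicted, 2 reference
cg_dice = dice_score(pred, target, CG)  # 2 shared voxels, 2 predicted, 3 reference
print(f"PZ Dice: {pz_dice:.2%}, CG Dice: {cg_dice:.2%}")
```

Computing the score separately per label, as here, is what allows the two zones to be reported separately. Evaluation pipelines also differ in whether they average Dice per case or pool voxels over the whole dataset, and this sketch does not assume either.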

Data availability

The data supporting the findings of this study are publicly available. The Promise12 dataset is available at: https://promise12.grand-challenge.org/. The Prostate158 dataset is available at: https://github.com/kbressem/prostate158.

Author information

Contributions

J.Q. and Y.Y. conceived the study. J.Q. developed the methodology, implemented the software, performed validation and formal analysis, conducted the investigation, curated the data, and prepared the original draft. Y.Y. supervised the project, administered the research, and edited the manuscript. All authors reviewed and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Qin, J., Yang, Y. ProSeg: multi-scale context fusion for high-precision prostate segmentation in MRI. Sci Rep (2026). https://doi.org/10.1038/s41598-026-43589-3

DOI: https://doi.org/10.1038/s41598-026-43589-3