Abstract

Scientific research on social parameters for prognosis after traumatic brain injury (TBI) is evolving, yet results remain heterogeneous, and predictors for risk stratification are lacking. To understand how social parameters are linked to cognitive outcomes after TBI, we applied machine learning (ML) algorithms using cohort-level data from 30 published studies including 2,364 participants with TBI (72% male; 55% mild, 45% moderate-severe injury). We extracted and harmonised longitudinal data following the PROGRESS-Plus framework, and used the data as predictors of rate of change in cognition post-TBI. We developed random forest, gradient boosting (GB), and extreme GB predictive models, accounting for time from injury to baseline assessment, time between assessments, and country-level structural indicators. We used mean absolute error and root mean squared error to evaluate model performance, and Shapley Additive Explanations analysis for explanatory model predictions. Results highlighted time interval, country-level structural indicators, age, and variation in education as key predictors for rate of change for both injury severities. Sensitivity analyses for predicting rate of change in executive function and learning and memory confirmed the robustness of the results. Our work contributes to novel ML meta-research for understanding prognosis and advancing precision in predicting cognitive outcomes after TBI.

Similar content being viewed by others

Introduction

Research on the course and prognosis of cognitive function following traumatic brain injury (TBI), which refers to structural and/or physiological disruption of brain function due to an external force1, is rapidly developing but remains characterized by extensive inter-patient and intra-patient heterogeneity. Adverse outcomes are attributed to greater injury severity and advanced age, highlighting the challenges in care and poor prognosis for these patients2,3. Recently, the view of TBI of mild severity is progressing through evidence towards a condition with long-term consequences for cognition4,5 rather than a singular event. Furthermore, it has been proposed that TBI should not be seen as a random event and accident, but rather as a health status transition on a continuum, where the magnitude of social adversities and comorbidities increase the risk of injury severity, adverse course, and expedited2,6,7,8,9 cognitive decline, across injury severities10,11. With this in mind, recent research has increasingly posed social equity hypotheses and investigated the role of social parameters in recovery course and long-term outcomes. However, the challenge remains that social parameters are intertwined with injury-related and environmental risks, age-related parameters, and broader social adversities, forming a vastly complex network of associations11,12. Capturing and disentangling these associations on a time continuum presents a substantial challenge for traditional hypothesis-driven research, which typically assumes linear relationships and examines a limited number of variables13,14. As a result, important sources of variability in TBI prognosis remain insufficiently characterised in scientific research, creating a knowledge gap in research, policy, and practice14.

Emerging advances in computational and machine learning (ML) approaches offer a timely opportunity to address this gap by enabling more systematic evaluation of variable importance and the complex interplay of social and demographic parameters in shaping cognitive trajectories after TBI, increasing the confidence in the prognosis of cognitive course after TBI. Here, we present a new analysis of data from 30 published longitudinal cohort studies characterising change in cognitive test performance with 2,364 participants with TBI (72% male, mean age 32 years; 55% mild, 45% moderate/severe TBI)15. We leveraged advancements in computational and ML approaches, with the aim to: (1) develop and validate predictive models of longitudinal cognitive change after TBI using harmonised social, demographic, and injury-related data from published cohort studies; (2) compare the predictive performance of multiple ML approaches (random forest, gradient boosting, and extreme gradient boosting) in modelling standardised rates of cognitive change following TBI, stratified by injury severity, and (3) identify and interpret the relative importance of social and demographic parameters in predicting cognitive trajectories after TBI utilizing the PROGRESS-Plus framework16. PROGRESS stands for social parameters, namely Place of residence, Race/ethnicity/culture/language, Occupation, Gender/sex, Religion, Education, Socioeconomic status, Social capital, and Plus stands for contextual characteristics that may influence outcomes (i.e., age, injury severity, etc.). Through this approach, we sought to move beyond traditional injury-related predictors and elucidate how social profiles of study samples shape longitudinal cognitive course after TBI. This approach to characterizing heterogeneity in cognitive outcomes will equip researchers and policymakers with actionable evidence for understanding and interpreting heterogeneity in cognitive outcomes, and informing more equitable, data-driven prediction.

Results

We included 30 published studies17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46 and 43 cohorts within them (i.e., Mandleberg et al. provided eight cohorts31, Dikmen et al. provided three cohorts20, and Kontos et al.25, Macciochi et al.29, and Covassin et al.19 provided two cohorts, each), which provided a total of 218 cognitive outcome data points collected on at least two time points after injury. The data set comprised 2,364 adults (72% male) with TBI, of which 57% corresponded to data of participants with mild TBI, with the remaining data points representing moderate (4.6%), moderate-severe TBI (24%) and severe TBI (16%). Sample size of cohorts varied, ranging from 1027 to 40430 overall. For mild TBI, sample size ranged from 1229 to 15820, and for moderate-severe TBI it ranged from 1027 to 40430.

To mitigate the influence of imbalance in our data in injury severity categories and to preserve clinically meaningful distinctions, moderate, moderate-severe, and severe injuries were combined into a single ‘moderate–severe’ category, and models were trained separately for mild and moderate-severe TBI.

Data extraction process

After combining moderate and severe injury severity with moderate-severe data points, the final dataset resulted in 121 data points for mild, and 97 data points for moderate-severe TBI (Supplement Table 1).

Published data spanned five decades, with the majority (87%) published after the year 2000. Data points represented nine countries, all of high- and middle-income, across global regions. Most cohorts originated from the USA (53%), followed by New Zealand and the UK (9.6% each), China (6.9%), Norway (5.0%), South Korea (4.6%), Brazil (4.1%), and Canada and Australia (3.7%, each) (Table 1). To strengthen the use of country of study origin as a proxy for place of residence, we used country of English language dominance instead of country of study origin in the modelling process, categorizing it under place of residence. Of the nine countries represented by data points, four were classified as English language dominant (i.e., Australia, Canada, New Zealand, UK, USA). Reporting of study sample characteristics varied across cohorts, regardless of country of study origin. Gender/sex composition of study samples was reported in all cohorts, and after harmonisation proportion of males in samples ranged from 0.4443 to 117,25(Table 1).

Cohorts’ education was reported using a variety of formats. After harmonisation to years of formal education, mean education levels ranged from 7.118,38 to 14.743 years across cohorts; 1126,27 to 14.743 years for mild, and 7.118,38 to 13.1721 years for moderate-severe TBI cohorts. Education SDs (within study cohorts) ranged from 1.1136 to 4[18,38 years, 1.529 to 418,38 years for mild, and 1.1136 to 3.340 years for moderate-severe TBI cohorts.

Other PROGRESS-Plus variables (i.e., race/ethnicity, occupation, social capital, and socioeconomic status) were represented by a limited number of data points and were not used in data analysis (Supplement Table 1).

Of the Plus parameters, age and age SD were available for all data points. The pooled mean age across studies ranged from 18.817 to 43.6841 years, 18.817 to 43.6841 years for mild TBI and 25.237 to 4134 years for moderate-severe TBI cohorts.

The nature of our research questions raises the issue of time zero bias. In longitudinal studies, testing starts at a defined point, called zero-time47,48. Designated time zero (i.e. baseline or first assessment) varied, ranging from ~ 12 h17 to ~ 10 years post-injury23. The last follow-up was defined as the final assessment time point at which cognitive test scores were reported within each cohort. Last follow-up assessments ranged from five days19 to ~ 20 years23 post-TBI (Supplement Table 1), with the mean number of days from injury to baseline assessment being 11.2 for mild and 348 for moderate-severe TBI cohorts. Time from baseline to last follow-up assessment, referred to as time interval, ranged from four19 to 485123 days with a mean of 446 ± 824 days.

In order to observe rate of change per month as the outcome, we converted time-related variables to the common metric of months by dividing by 30.4, the average number of days in a month (Supplement Table 1).

The distribution of data points across cognitive test domains, overall and by injury severity, was as follows, from highest to lowest: executive function (118, 55% mild), learning and memory (105, 49% mild), perceptual-motor (87, 64% mild), information processing speed (85, 72% mild), complex attention (84, 60% mild), language (27, 41% mild), and social cognition (6, 100% mild). We refer the reader to Supplement Table 1 for data used in this synthesis.

The cognitive outcome data for ML was a nominal variable across domains of cognition, standardised as rate of change per month (see Methods section). The rate of change was also calculated for domains of cognition with sufficient data: executive function and learning and memory. (Fig. 1)

Study design and analytical workflow. Schematic representation of the analytical pipeline, illustrating the flow from data acquisition to model interpretation. The seven steps include data collection, extraction, harmonisation, preprocessing, machine-learning model development, model evaluation, and sensitivity analyses, with intermediate and final results displayed at each stage to support overall and model-specific explanations.

The outcome data points were collected at baseline and last follow-up assessments, and formed the foundation for the predictive modelling of cognitive trajectories presented in this study (Supplement Table 1).

From baseline to last follow-up assessment, the median standardised rate of change per month was 0.233 for mild and 0.112 for moderate-severe TBI (Table 1). We refer the reader to the figures for results of the standardised rate of change for mild and moderate-severe TBI for each of the PROGRESS-Plus parameters with available data: gender/sex, age and education overall, and by domains of cognition (i.e. learning and memory, executive function) (Supplement Fig. 1a-i).

Data preprocessing

We created heatmaps with correlation coefficient matrices for mild and moderate-severe TBI to identify associations among PROGRESS-Plus variables (Fig. 2 and Supplement Fig. 2 for country-specific associations). We treated all PROGRESS-Plus variables with complete data coverage as primary predictors and included them in the analyses irrespective of the statistical significance of their association with the rate of change. While country of study origin was available for each cohort, in order to use it efficiently (i.e., considering historical evolution) we used Gender Inequality Index (GII) and Human Development Index (HDI) as contextual measures and effect modifiers in the modelling process. These country-level structural indicators contributed to measurable heterogeneity across mild and moderate-severe TBI cohorts in several key predictors (Fig. 2). Assigned HDI and GII values ranged from 0.68926 to 0.92825,33,35,43 and 0.00942 to 0.26939, respectively. Temporal trends indicated a gradual change in values in cohorts coming from the same country.

Heatmaps of the correlation coefficient matrix, by injury severity (a, mild TBI; b, moderate-severe TBI). Heatmaps of the correlation coefficient matrix for each of the parameters’ association with the outcome, rate of change (bivariate associations). Red signifies a positive correlation, while blue represents a negative correlation. The intensity of the color reflects the magnitude of the correlation coefficient, with more vibrant shades indicating stronger correlations. Specifically, shades tending towards red represent coefficients approaching 1, while those leaning towards blue represent coefficients approaching − 1.

Along with PROGRESS-Plus parameters, we also evaluated whether time-related variables (i.e., time from injury to baseline assessment and time between baseline and last follow up assessment) were associated with the rate of change across injury severity cohorts (Fig. 3 and Supplement Fig. 3). Time from injury to baseline assessment and time between baseline assessment and last follow-up varied (Table 2) and were negatively and positively associated with the rate of change in mild and moderate-severe TBI, respectively. We retained these variables as covariates in models.

Feature contributions across machine-learning models (a, mild TBI; b, moderate-severe TBI). Ranking of feature contributions for prediction of the rate of change in cognitive outcomes in Gradient Boosting, Random Forest, and XGBoost models. The colour scale reflects the strength of positive associations, with darker red indicating the strongest positive correlations and darker blue indicating the weakest positive correlations. Intermediate shades represent gradations in effect size, with colour intensity corresponding to the magnitude of the correlation coefficient.

Machine learning modelling

Results of three supervised ML algorithms, Random Forest (RF), Gradient Boosting (GB) and extreme GB (XGBoost), including both overall cognitive rate of change and domain-specific outcomes, respectively, can be seen in Figs. 3 and 4, for mild and moderate-severe TBI, respectively.

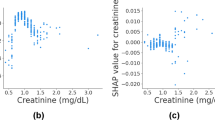

SHAP summary of feature impact on predictive models’ output (a-c mild TBI; d-f moderate-severe TBI). SHAP summary plots illustrating the influence of input variables on model predictions for Gradient Boosting (a, d), Random Forest (b, e), and XGBoost (c, f) models. The colour of each point indicates the value of the feature, with red corresponding to higher values and blue to lower values. The position along the x-axis reflects whether the feature increases or decreases the prediction in the model output. Features are ranked on the y-axis based on their overall importance, with those contributing most to the model’s predictions appearing at the top.

Feature importance heatmaps of each of the models are presented in Fig. 3a and b, for mild and moderate-severe TBI, respectively. For mild TBI, across the three models, the top PROGRESS-Plus feature was age, followed by education SD and the contextual parameter of GII. For moderate-severe TBI cohorts, the top PROGRESS-Plus feature was age SD and time related variables.

Model evaluation and interpretation

Model performance metrics are summarised in Table 2. Across all algorithms, predictive accuracy was comparable, with XGBoost slightly better in mean absolute error (MAE), and RF slightly better in root mean squared error (RMSE) in mild and moderate-severe TBI cohorts (Table 2).

The pattern of tuned hyperparameters (Table 3) across algorithms suggested different levels of structure in data in both mild and moderate-severe TBI. We observed that RF for both injury severity strata captured very shallow trees (i.e., maximum depth = 2) with the complexity in predictive signals explained by low-order interactions. The GB model favoured deeper individual trees (i.e., maximum depth of 9 and 6 for mild and moderate-severe TBI, respectively) where each successive tree focused on residual errors, allowing more complex interaction within a single tree compared to RF. The mild TBI model highlighted a very low learning rate (i.e., 0.01), with deeper trees and more iterations. The moderate-severe model used a higher learning rate (i.e., 0.1) with fewer trees and shallower depths. The XGBoost model, across mild and moderate-sever TBI strata, selected deeper trees using additional regularization to tolerate deeper depths without overfitting. The learning rate remained small, and overall complexity was determined by interactions with a number of boosting runs (Table 3). To support interpretation beyond hyperparameters, we examined variable influence and marginal effects (e.g., partial dependence/Shapley Additive Explanations) to understand how each algorithm captured non-linear relationships and interactions in the data (Fig. 4).

For prediction, the Shapley Additive Explanations (SHAP) ranking showed that across models, time interval, GII, education (picked by RF), and age emerged as the strongest predictors of standardised rate of change for mild TBI. For moderate-severe TBI cohorts, baseline assessment, time interval, age, and GII (picked by RF) emerged as the strongest predictors of standardised rate of change across models (Fig. 4).

Internal validation and sensitivity analyses

To ensure the robustness of the results, we ran several sensitivity analyses. First, we performed 5-fold cross-validation to verify generalisability across folds (Table 4). For both injury severities, XGBoost showed slightly superior performance for standardised rate of change across data points. The effect of all the primary predictors, covariates, and the effect modifiers remained the same (Supplement Figs. 4 and 5).

Second, we re-ran analyses using a subset of data on executive function and learning and memory domains of cognition, which had relatively balanced data between mild and moderate-severe TBI cohorts, confirmed our hypothesis (i.e., that PROGRESS-Plus parameters would exert a more pronounced effect on domain-specific cognitive outcomes than on overall cognition, stronger for mild than moderate-severe TBI). Results showed that all the values of relative contributions for PROGRESS-Plus and time variables varied across models, however they remained largely the same as in the main analysis (Supplement Figs. 6 and 10 for executive function and learning and memory TBI cohorts, respectively). The top three features for prediction of rate of change in executive function domain in mild TBI cohorts identified by the SHAP analyses were age, time interval, and education SD. These results were similar to the main analysis, with the exception of GII whose predictive value was reduced compared to the main analysis. In moderate-severe TBI cohorts, the top three predictive features remained as time interval, time from injury to baseline assessment, and age SD (Supplement Fig. 7).

The top three consistent features for prediction of rate of change in the learning and memory domain in mild TBI cohorts identified by the SHAP analyses were education SD, time interval, and GII. These results were similar to the main analysis, with the exception of age whose predictive value was reduced compared to the main analysis. In moderate-severe TBI cohorts, the top three predictive features remained as time interval, time from injury to baseline assessment, and age SD, similar to the main analysis (Supplement Fig. 11). Model performance for both domains remained similar to that of the main analysis (Supplement Table 4, Table 4).

The results of sensitivity analyses using 5-fold cross-validation as an internal validation approach to evaluate the robustness and generalizability of model estimates for specific domains of cognition are presented in Supplement Figs. 8, 9,12,and 13.

Third, we conducted analyses with the targeted elimination of multicollinear age and education SD parameters provided further validity regarding the impact of time interval, age, and GII in mild TBI cohorts. In moderate-severe TBI, time related variables were further validated (Supplement Figs. 14 and 15).

Finally, by adding sample size of cohorts to the models, we observed that all important parameters remained the same as in main analysis for mild TBI cohorts. However, in moderate-severe TBI cohorts, sample size emerged as an important feature across models, as well as a predictor of rate of change in SHAP analysis, slightly reducing the value of GII and age SD on the model output (Supplement Figs. 16 and 17).

Discussion

In this study, we report innovative explainable ML research using published longitudinal data on the course of cognition after TBI, investigating PROGRESS-Plus characteristics of study samples as predictors of rate of change in cognition after injury. The results support the importance of considering social parameters in post-injury outcomes, and the ability of ML to explain heterogeneity49 in the course of cognition overall and by cognitive domain following TBI of equal severity. Our study has research, clinical, and policy implications.

We found that among the most important predictors of cognitive change after TBI in published research across domains and injury severities were age, time-related parameters (i.e., time from injury to baseline assessment and time interval from baseline to last follow-up assessment), and country-level structural indicators. In the sensitivity analyses, the additional parameter of number of participants in the cohort emerged as an important predictor for the rate of change. These parameters have been discussed in longitudinal research for a long period of time50, and were also cited as key parameters that, if not strongly endorsed in analytical models, make research findings less likely to be true51. ML models were able to capture these variables, which solidified the vision that supervised ML52,53 can contribute significantly to human knowledge and expertise, and discussion concerning scientific validity.

Given the pronounced variation in age and age SD across cohorts, the discussion brings attention to how researchers deal with age in their analyses in longitudinal studies. This is true for both mild and moderate-severe TBI. Age-related deterioration affects all people, and is reflected across domains of cognition, reported for both mild and moderate-severe TBI cohorts1,54,55. The mechanisms that mediate age-related processes and changes reflected in the test performance of cohorts with TBI included in this study are not entirely clear; however, these processes are likely to be influenced by injury severity (Supplement Fig. 1b, e, f). We found a higher rate of change in cognitive outcomes in moderate–severe TBI (0.23) than in mild TBI (0.11) (Table 1), highlighting the value of how machine learning in capturing temporal effects in predicting outcomes. Our results also align with the random damage theories56,57, which situate around the disturbed balance between ongoing damage and repair that occurs in the process of natural aging, reflected in cognitive test performance. When the process is disturbed by brain injury, the balance of ongoing damage and repair is further dysregulated and, therefore, was picked up by ML as a key predictor of the rate of change, to a greater extent in moderate-severe TBI than mild (Supplement Fig. 1b, e, f). The age of research participants, and the SD of the cohort, are, therefore expected to be seen, as it would affect the processes of both natural ageing and constrict recovery after injury, which is not expected to be uniform in participants of various ages. Age variability within cohorts, reflected by larger age standard deviations, indicates broader age dispersion among participants. Such dispersion may correspond to heterogeneity in age-related multimorbidity, particularly among older participants in the cohort, which is known to increase with age. Nonetheless, comorbidity was not consistently reported in the studies included in our dataset, limiting our ability to examine their potential importance. Future research should consider this limitation and systematically report health status of research participants within Plus parameters, as these factors are implicated in injury severity and external cause of injury10. This is important because several longitudinal studies have uncovered that the age-specific strain caused by the onset of TBI in the presence of comorbidity is associated with cognitive outcomes, regardless of TBI severity1,6,9. Future research should investigate age and age-related effects precisely to understand their predictive role in prognosis.

Time-related variables (i.e., time from injury to baseline assessment and time interval between baseline and last follow-up assessment, Supplement Table 2), emerged as important predictors of rate of change, and therefore emphasize the critical role of time in prognostic research. We observed that the rate of cognitive change after TBI differed between mild and moderate-severe cohorts (Table 1). This finding aligns with prior research and with current evidence defining severe TBI as a risk factor for long-term cognitive decline58,59. This study provides evidence that time affects rate of change across executive function and learning and memory domains of cognition, but we did not have sufficient data to test the effect on other cognitive domains (i.e., language, perceptual motor, complex attention, information processing speed, social cognition). Because our prior systematic review1,60 and observational studies35,61 brought attention to the fact that the course of cognition and recovery were not uniform and were dependent on the baseline assessment, future research should consider the implications of time after injury and baseline assessment in prognosis of cognitive domain-specific risks following injury.

Our results on relevance of baseline assessments (i.e., time zero) in prognosis were especially pronounced in moderate-severe TBI cohorts, with the mean baseline assessment conducted at around one year post injury, with great heterogeneity between cohorts (Table 1). In mild TBI, the mean baseline assessment was less than two weeks, with more homogeneity among samples (Table 1). At the point of baseline assessment, many participants in the mild TBI cohort may have reached a recovery plateau, which may not be the case for moderate-severe TBI, and therefore the time effects were not as pronounced in ML prediction for mild as in moderate-severe cohorts. Our results in sensitivity analyses confirmed the robustness of time effects, which underscore a critical need for coordinated research and policy efforts that explicitly integrate discussion on timing of research concerning prognosis based on injury severity, as this would impact scientific evidence with policy and practice implications. This is especially important as in both our prior hypothesis-driven approaches, we faced challenges in characterizing these temporal effects. Given that recovery occurs in parallel with processes of aging, these processes may reinforce one another with differing speed based on time that emerged from injury. Our results underscore that time is not merely a methodological detail, but a determinant of predictive accuracy and scientific interpretation. Future research should consider the benefits of ML for the prediction of outcomes after TBI with greater precision to time.

Country-level indicators also warrant discussion. We systematically integrated heterogeneous datasets across multiple published cohorts on all published longitudinal research concerning the course of cognition after TBI. We found that ML captured GII as a predictor of the rate of change across several models in both injury severities in both bivariate and multivariate ML models. The positive correlation between GII and rate of change was consistent with previous reports of the important role of gender equality in heath outcomes62. These results are consistent with recent observations showing that trust in relationships is associated with outcomes in advanced clinical conditions, including stroke63 and dementia64. In countries with social constraints on education and development, captured via GII and reflected in economic imbalances and restricted decision making, the macro-level relational environment may impose strains on both family and community relationships, impacting trust. While this may be perceived as far-fetched, results bring attention to the critical role of macro-level social parameters within existing data hierarchies and raise fundamental questions about their relative importance compared with person-level predictors. Greater emphasis on social pathways in future research will be essential to elucidate the mechanisms through which these parameters exert their effects on cognitive recovery.

Our correlation matrices results indicate that PROGRESS-Plus parameters characterising study samples, including whether the country of study origin is predominantly English speaking, gender/sex, education, and age are associated with rate of change. However, when these variables were used in ML, in consideration with other parameters (i.e., time effects and country-level structural indicators) their predictive values were not as strong as those of other parameters, except education and age. We have observed implications of the country-level structural indicators expressed by GII in results of our past systematic review8, where when we positioned results along the GII continuum, differences between male and females across outcomes and countries of publication started to dissipate. The GII is a composite, time-varying indicator of a country’s gender inequality level, incorporating measures of educational attainment, labour force participation, maternal mortality, adolescent fertility, and parliamentary representation65. Therefore, when the index was used in ML models, the predictive effect of cohorts’ education and education SD were diminished, and several of our models featured the GII as a salient predictor of the rate of cognitive change after TBI, in both mild and moderate-severe samples. While it has been previously suggested that cognitive outcomes after TBI differ between people of different gender/sex and education level, our results are the first to highlight that the importance of these parameters are affected by broader social and structural contexts. This has important research, clinical, and policy implications, particularly in ongoing debates regarding the long-term cognitive consequences of milder forms of TBI, and the cumulative contributions of biological sex, gender, education, and injury severity to TBI outcomes. Our ML results also bring attention to the intersectionality reflected in the GII, which may also have implications for those who participated in research included in the dataset, and therefore was captured by ML as a prognostic parameter after TBI. Evidence increasingly shows that the characteristics of research participants in TBI studies are not truly reflective of TBI populations, where racialized, less educated, and more disadvantaged communities are less like to participate in research but are also disproportionately affected by TBI and its adverse consequences15,66,67. Future prognostic research and policy should diversify research samples and strive for systematic inclusion of diverse groups of people in TBI research. Overcoming barriers to research remains a priority66,67.

Our sensitivity analyses identified cohort sample size as an important variable in predicting the rate of change. It has long been recognized that statistical power is related to effect size, and that findings are more likely to be true in scientific fields characterized by larger effects51. We observed that in moderate-severe TBI, the rate of change was half that observed in mild TBI cohorts (Table 1). In longitudinal studies where effects are large, such as in moderate-severe TBI, results may be more susceptible to spurious associations arising from limited sample sizes. To elaborate, if the majority of true PROGRESS-Plus characteristics influencing (i.e., associated with) the rate of change is also associated with cohort sample size, these variables may be downgraded in importance among the most important predictive parameters, when sample size was added to ML models. Based on these results, we consider ML to be a valuable and informative tool and screening mechanism for meaningful associations in published TBI research concerning the course of cognition. Its application is particularly valuable given the availability of extensive data and metadata, which can be accessed at relatively low cost.

The purpose of our research was to delineate whether social parameters associated with rate of change of cognition using data from published TBI cohorts, and to provide information on whether the characteristics of longitudinal study samples play a role in prognosis. We applied three ML approaches to build prognostic models concerning rate of cognitive change in both mild and moderate-severe injury severity samples. All three ML approaches converge on the conclusion that social and structural characteristics of published cohorts are prognostically meaningful for cognitive change after TBI. Models for mild TBI strata were more heterogeneous and prone to social influences compared to moderate-severe TBI strata, highlighted in the complexity reflected in the hyperparameters of boosting models (GB and XGBoost). Nonetheless, all models indicated that sample compositions are determining prognostic patterns and therefore should be treated with greater care in future research. Results of ML models not only delineated four PROGRESS-Plus characteristics (i.e., country of English language dominance as place of residence, gender/sex, education, and age) of cohorts as important determinants of prognosis, but also exposed critical gaps in the current prognostic evidence base by showing who contributes to longitudinal research and which social variables are measured in cohort studies on prognosis and which are not (i.e., religion/spirituality, socioeconomic status, occupation, social capital), constraining what can be known about the value of these parameters to the course of cognition and prognosis after TBI. Therefore, ML approaches as a methodological tool in research is of great value not only for improving prognostic modelling and meta-research, but also to bring attention to how methodological choices made by researchers about sampling68, anchored time scale (i.e., time zero and elapsed time)69, and cognitive measures1,60 that actively shaped the current best state of knowledge available to clinicians and policymakers.

Our results should be interpreted in light of several methodological limitations. First, we restricted our analysis to the cohorts’ observational window between first and last available assessments. Several included cohorts (Supplementary Table 1) reported data from multiple assessment points; however, these timepoints were highly inconsistent in the timing of assessment with regards to time zero and time since injury70. When harmonised across studies, there was insufficient overlapping data to support ML modelling at different discrete time intervals (Supplement Table 1). While we adjusted results for time from injury to the first assessment (i.e. time zero) and for the interval between assessments, we acknowledge that our approach approximates change as linear between time zero and the final assessment. However, recovery following injury does not follow a linear course; as early changes are situated within natural recovery processes after injury71. As such, our estimates should be interpreted as average rates of change across cohorts with different observational windows from injury time. As additional longitudinal datasets become available, future work will be better positioned to apply ML approaches that explicitly model nonlinear patterns and time-varying effects across a wide range of recovery phases. Second, because of heterogeneous and inconsistent reporting of study participants’ social profiles in the dataset of published studies, we needed to develop and implement rigorous data harmonisation processes to maximise the ability to examine all available PROGRESS-Plus characteristics in the dataset prior to application of ML-based prognostic modelling. Despite our data harmonisation efforts, the dataset exhibited substantial missingness across several PROGRESS-Plus parameters, including race/ethnicity, socioeconomic status, occupation, religion/spirituality, and social capital. Although data augmentation using synthetic data generation is sometimes employed to mitigate this issue in ML, implementation of this approach was not possible in the present study, as these PROGRESS-Plus parameters were sparsely reported, and the available data was less than 22% for occupation and less than 5% for all other parameters after data harmonisation (Table 1). At such low levels of representation, synthetic data generation would have relied on insufficient underlying distributions, increasing the risk of amplifying noise, introducing artificial patterns, and reinforcing existing biases. For these reasons, we restricted model training to four parameters with adequate representation: the country of study origin through country of English language dominance, gender/sex, education, and age. Future research should consider this limitation and commit to a standardised reporting of PROGRESS-Plus characteristics to enable equitable and robust ML-based prognostic modelling using published data. Further investigations are also needed to refine the role of PROGRESS-Plus parameters as predictors of outcomes when considering structural level parameters. Finally, we used the binary variable English-language dominance as a controlling variable in the ML modelling. This was because all measures that were used to assess cognition were developed in English. Even when translated or culturally adapted, these measures may not achieve full construct and linguistic equivalence when applied and used within non-English-speaking countries, potentially introducing systematic differences in test performance. Because our dataset included samples from multiple countries, including those in which English is not the primary language, we sought to account for potential language-related measurement bias. Although country-level stratification would have been preferable, the number of data points per country was insufficient to support adequately powered analyses. Therefore, to retain statistical power while still addressing potential linguistic effects, we operationalized a binary English-dominance variable (English-speaking vs. non-English-speaking study context). We acknowledge that this binary classification simplifies substantial cultural and linguistic heterogeneity; however, it represents a pragmatic approach to mitigating potential measurement bias given available data.

Our research and results provide a foundation for advancing prediction models that systematically evaluate how study sample representativeness affect TBI prognosis. We employed three ML models, including the RF, the GB, and the XGBoost. Employing SHAP in the present study provided additional insights into model performance and predictive value of each parameter in the presence of others, in both mild and moderate-severe injury severity study samples. The usability of the models in predicting outcomes using published data depends on data quality. We recommend that future researchers and who will apply our process and work with published data dedicate significant time to data extraction, pre-processing and standardization prior to machine learning model input. In addition, users are expected to have at least some machine learning expertise and be familiar with the variables in the dataset, as well as with the principles of modelling. While this may limit direct use by non-technical personnel, it ensures reliable and accurate predictions for research and policy applications. Future enhancements in prognosis research that can be used in machine learning require the systematic collection, reporting, and integration of social parameters in research to enable more equitable clinical and policy decision-making.

Methods

We conducted and reported our research following the Transparent Reporting of a multivariable prediction model for Individual Prognosis or Diagnosis – Artificial Intelligence (TRIPOD-AI) guidelines for prediction model development and validation72. We shared all research-related files in an open data repository on the Open Science Framework (OSF)73, in alliance with the FAIR Guiding Principles74. The complete dataset is available upon reasonable request from the corresponding author.

Data sources and description

The dataset used in this work was developed by our research team using data from our prior systematic reviews1,60 on the course and predictors of cognitive outcomes after TBI. We updated this dataset with new evidence that emerged since the original searches16. The flow diagram of the study selection is depicted in Fig. 1. In brief, the data covers evidence that emerged from five databases: Embase, Medline, Scopus, and Cochrane Central Register for Controlled Trials, and PsycINFO. We searched the databases from inception until April 8, 2024 for peer-reviewed English-language longitudinal studies reporting raw cognitive test scores at two or more time points in adults diagnosed with TBI. Only studies that provided data on the course of cognition over at least two data points after TBI were included. The protocol, PROSPERO registry, and the systematic reviews that provided specifics on study methodology and the dataset are published15,70,75.

Our research concerned data on TBI diagnosis, injury severity and cause of injury data, and PROGRESS-Plus parameters of each study sample (country of recruitment, race/language/ethnicity, occupation, gender/sex, religion/spirituality, education, socioeconomic status, social capital, age, and other contextual parameters), and cognitive test scores at baseline and last follow-up assessments75. Each cognitive test in a given cohort was treated as a unit of analysis.

Dataset preparation and machine learning workflow

Figure 1 represents dataset preparation and machine learning workflow, outlining seven steps: (i) data collection; (ii) data extraction; (iii) data harmonisation; (iv) data preprocessing; (v) machine learning algorithms; (vi) model evaluation; and (vii) sensitivity analyses, which allowed generation of overall and model-specific explanations.

Data collection

Study selection followed predefined inclusion and exclusion criteria. Eligible studies included those that reported longitudinal data on standardised cognitive tests and provided sufficient injury-related data of study sample characteristics to support data harmonisation and modelling. For more information, please refer to published studies1.

Data extraction

Two independent researchers extracted data using standardised, previously published extraction forms70. Extracted variables included demographic characteristics (age, sex/gender, race/ethnicity), social parameters (place of residence, occupation, education, and social capital), and contextual brain injury-related parameters (injury severity, mechanism of injury, comorbidities, and time from injury to baseline or first assessment, also known as time zero in prognosis studies47). We also extracted data on time from injury to baseline assessment, and calculated the time interval between baseline and last follow-up cognitive assessments. Data was extracted from the main text and supplementary materials of each published study by two independent researchers. Extraction accuracy and reliability were ensured through double extraction, cross-checking, and resolution of discrepancies by consensus.

Data harmonisation process

We considered using ML-based solutions for data harmonisation of several variables76,77,78. However, we faced significant challenges in finding scientific guidance on the application of standardised ML data harmonisation in practice. We found that each harmonisation case was a unique process and necessitated a series of context-dependent decisions through iterative team discussions where we evaluated several harmonisation options at once (i.e., flexible harmonisation79,80.

We first created a library of terms based on all extracted data (Supplement Table 2) that defined variable names, operational definitions, categories, and units to ensure consistency across included cohorts. The core team discussed each variable in the table and evaluated different variables under the same section header, jointly deciding how to incorporate these updates into the harmonised protocol template. We then harmonised data to reconcile different operationalisations of similar concepts (i.e., gender/sex composition of study samples, reported in a variety of data formats, known as syntax) as well as semantics (i.e., intended meanings of words such as young adults, primary schooling, etc.), conceptual schema (i.e., structured and unstructured data extracted from tables and raw text, respectively), and measurement differences (e.g. when the same concept was reported with different measurements, including injury severity, cognitive domain, etc.). Finally, we transformed data to numeric values in order to prepare the data for ML (Supplement Table 3). We structured extracted social and contextual elements using the PROGRESS-Plus framework. The categorical parameters of study samples were transformed to continuous variables where feasible: gender/sex composition was transformed to proportion men/males, and education level was transformed to education in years. Additionally, we transformed age-related parameters to continuous age and age standard deviation of study samples. Country of study origin (where recruitment took place) was transformed to country of English language dominance as a binary variable (0, 1).

Cognitive outcome scores of standardised tests at baseline and last follow-up and the domains of cognition they reflect were represented as mean and standard deviation, and binary values, respectively.

To ensure quality, data harmonisation and transformation processes were conducted by two independent researchers. We documented the process and decisions (Supplement Table 3) made for future data users in compliance with the FAIR standards74.

Data preprocessing

We conducted data preprocessing. This step included scaling of continuous variables, systematic assessment of outliers, and correction for imbalances across injury severity groups based on visual plots and correlation matrices between predictors and the outcome.

When time was reported categorically or as ranges, we used midpoint values. For cohorts reporting multiple follow-up time points1, the final available follow-up assessment was used for data harmonisation.

We calculated time SD for each data point, considering cohort sample size and reported outcome score, and score SDs in order to preserve the variance structure across included cohorts. We then calculated rate of change per month as the outcome for longitudinal harmonisation of data points. For each data point, the rate of change was computed by dividing the difference between outcome values at baseline and last follow-up by the elapsed time between assessments using the following formula:

where YFU denotes the cognitive test score at the last follow-up assessment, YBL denotes the cognitive test score at baseline, and T denotes the mean follow-up interval measured in days. The follow-up interval was converted to months by dividing by 30 to harmonise time scales across studies.

Class imbalance

We observed that imbalance in our data occurred within injury severity categories. To mitigate the influence of this imbalance on model performance and to preserve clinically meaningful distinctions, we stratified analyses by injury severity rather than applying resampling-based class-imbalance methods. Moderate, moderate–severe, and severe injuries were combined into a single ‘moderate–severe’ category, and models were trained separately for mild and moderate–severe TBI.

Missing data

We made a priori decision to restrict ML analyses to complete cases, where only data points with complete information on the variables required for ML modelling were included in the final analytic sample81,82,83. This decision was made because many variables expressed structurally missing data reporting (Table 2) rather than missing at random.

Machine learning modelling

We applied three supervised ML algorithms84 to mild and moderate-severe injury severity cohorts: random forest (RF)85,86, gradient boosting (GB)87,88, and extreme gradient boosting (XGBoost)89, to model cognitive change over time. These models were trained to predict both overall cognitive trajectories (standardised rate of change per month) and domain-specific standardised rate of change using harmonised PROGRESS-Plus variables. To ensure comparability across algorithms and mitigate bias introduced by differences in cohorts’ age, time between injury and baseline assessment, and follow-up times, all models were trained on harmonised rates of change per unit of time, and controlled for time between TBI and baseline assessment and time between assessments.

We considered time from injury to baseline assessment, time between baseline assessment and last follow-up, and country-level structural indicators as potential modifiers of the relationship between primary predictors and cognitive outcomes. This decision was made in order to capture historical evolution of populations’ social parameters by country, including the GII and HDI.

Model evaluation and interpretation

We evaluated model performance using mean absolute error (MAE) and root mean square error (RMSE)90. We then examined feature importance using feature importance heatmaps and the Shapley Additive Explanations (SHAP)91. By observing SHAP values, we evaluated the predictive importance of each feature and how it contributes to the difference between an actual prediction and a mean prediction, analyzing non-linear relationships between PROGRESS-Plus variables and rate of change.

Internal validation and sensitivity analyses

We performed multiple sensitivity analyses. First, we used 5-fold cross-validation as an internal validation approach to evaluate the robustness and generalizability of model estimates. This procedure assessed the stability of the effects of input parameters on cognitive outcomes across injury severity strata.

Second, we repeated analyses to assess the potential effects of PROGRESS-Plus parameters on specific cognitive domains that provided sufficient data and had relatively even distribution between mild and moderate-severe TBI cohorts. We hypothesized a priori that PROGRESS-Plus parameters would exert a more pronounced effect on domain-specific cognitive outcomes than on overall cognition, stronger for mild than moderate-severe TBI, given previously observed variability in rates of change by injury severity within the cohorts1. This allowed us to verify the relative contributions of the important PROGRESS-Plus parameters under a more constrained, cognitive domain-specific dataset. We re-evaluated feature importance using feature importance heatmaps and the SHAP, estimating each predictor’s contribution to standardised rate of cognitive change in cognitive domain-specific cohorts for executive function and learning and memory domains of cognition.

Third, we investigated the model performance by removing features with high correlation coefficients to evaluate the impact of multicollinearity on the model’s performance. By selectively excluding highly correlated features (education and age SD), we aimed to improve the robustness of the model.

Finally, we added sample size of cohorts to the models as in main analysis. This is because the correlation matrix (Supplement Fig. 2) highlighted strong associations, positive and negative, with HDI and GII, respectively. Since these structural indicators emerged a list of important parameters for predicting rate of change, we added sample size to the main analysis and evaluated the internal consistency of predictors.

All analyses, including generation of figures, were performed using Python (version 3)52,53. Figures were created using Python libraries (matplotlib (v3.x), seaborn (v0.x), and wordcloud (v1.x)).

Data availability

The data encoding and harmonisation templates used in this research are available from the public data portal(https:/osf.io/jku23/overview). The full dataset creation plan and underlying analytic code are available from the corresponding author upon request.

References

Mollayeva, T., Mollayeva, S., Pacheco, N., D’Souza, A. & Colantonio, A. The course and prognostic factors of cognitive outcomes after traumatic brain injury: A systematic review and meta-analysis. Neurosci. Biobehav. Rev. 99, 198–250 (2019). https://doi.org/10.1016/j.neubiorev.2019.01.011

Tritt A, et al. Data-driven distillation and precision prognosis in traumatic brain injury with interpretable machine learning. Sci Rep. 13(1):21200. (2023). doi:10.1038/s41598-023-48054-z

Bark, D. et al. Refining outcome prediction after traumatic brain injury with machine learning algorithms. Sci. Rep. 14, 8036 (2024). doi:10.1038/s41598-024-58527-4.

Oldenburg, C., Lundin, A., Edman, G., Nygren-de Boussard, C. & Bartfai, A. Cognitive reserve and persistent post-concussion symptoms—A prospective mild traumatic brain injury (mTBI) cohort study. Brain Inj. 30, 146–155 (2016). doi: 10.3109/02699052.2015.1089598.

Theadom, A. et al. Social cognition four years after mild-TBI: An age-matched prospective longitudinal cohort study. Neuropsychology 33, 560–567 (2019). doi:10.1037/neu0000516.

Hanafy, S., Xiong, C., Chan, V. et al. Comorbidity in traumatic brain injury and functional outcomes: a systematic review. Eur J Phys Rehabil Med. ;57(4):535–550. doi:10.23736/S1973-9087.21.06491-1 (2021).

Büttner, F., Terry, D. P. & Iverson, G. L. Using a likelihood heuristic to summarize conflicting literature on predictors of clinical outcome following sport-related concussion. Clin. J. Sport Med. 31, e476–e483 (2021). doi: 10.1097/JSM.0000000000000825.

Mollayeva, T., Mollayeva, S., Pacheco, N. & Colantonio, A. Systematic review of sex and gender effects in traumatic brain injury: Equity in clinical and functional outcomes. Front. Neurol. 12, 678971 (2021). https://doi.org/10.3389/fneur.2021.678971.

McIntosh, S. J., Vergeer, M. H., Galarneau, J.-M., Eliason, P. H. & Debert, C. T. Factors associated with persisting symptoms after concussion in adults with mild TBI. JAMA Netw. Open 8, e2516619 (2025). doi:10.1001/jamanetworkopen.2025.16619.

Mollayeva, T. et al. Decoding health status transitions of over 200 000 patients with traumatic brain injury from preceding injury to the injury event. Sci. Rep. 12, 5584 (2022). doi: 10.1038/s41598-022-08782-0.

Mollayeva, T., Tran, A., Chan, V., Colantonio, A. & Escobar, M. D. Sex-specific analysis of traumatic brain injury events: Applying computational and data visualization techniques to inform prevention and management. BMC Med. Res. Methodol. 22, 30 (2022). doi: 10.1186/s12874-021-01493-6.

Teterina, A. et al. Gender versus sex in predicting outcomes of traumatic brain injury: A cohort study utilizing large administrative databases. Sci. Rep. 13, 18453 (2023). doi: 10.21203/rs.3.rs-2720937/v1.

Yaniv, D. Trust the process: A new scientific outlook on psychodramatic spontaneity training. Front. Psychol. 9, e2083 (2018). https://doi.org/10.3389/fpsyg.2018.02083.

Hanazaki, N. Local and traditional knowledge systems, resistance, and socioenvironmental justice. J. Ethnobiol. Ethnomed. 20, 5 (2024). doi: 10.1186/s13002-023-00641-0.

Kim, H., Jahed, M., Sant’Ana, T. T. & Mollayeva, T. Cognitive outcomes among adults following traumatic brain injury: A systematic review using the PROGRESS-plus framework. Arch. Phys. Med. Rehabil. 106, e120 (2025). https://doi.org/10.1016/j.apmr.2025.01.312.

Sant’Ana, T. T. et al. A PROGRESS-driven approach to cognitive outcomes after traumatic brain injury: A study protocol for advancing equity, diversity, and inclusion through knowledge synthesis and mobilization. PLoS One 19, e0307418 (2024). doi: 10.1371/journal.pone.0307418.

Bleiberg, J. et al. Duration of cognitive impairment after sports concussion. Neurosurgery 54, 1073–1080 (2004). doi: 10.1227/01.neu.0000118820.33396.6a.

Chen, C.-C., Wei, S.-T., Tsaia, S.-C., Chen, X.-X. & Cho, D.-Y. Cerebrolysin enhances cognitive recovery of mild traumatic brain injury patients: Double-blind, placebo-controlled, randomized study. Br. J. Neurosurg. 27, 803–807 (2013). doi: 10.3109/02688697.2013.793287.

Covassin, T., Stearne, D. & Elbin, R. Concussion history and postconcussion neurocognitive performance and symptoms in collegiate athletes. J. Athl. Train. 43, 119–124 (2008). doi: 10.4085/1062-6050-43.2.119.

Dikmen, S., Machamer, J. & Temkin, N. Mild traumatic brain injury: Longitudinal study of cognition, functional status, and post-traumatic symptoms. J. Neurotrauma 34, 1524–1530 (2017). doi: 10.1089/neu.2016.4618.

Farbota, K. D. et al. Longitudinal diffusion tensor imaging and neuropsychological correlates in traumatic brain injury patients. Front Hum. Neurosci 6:160 (2012). doi: 10.3389/fnhum.2012.00160.

Field, M., Collins, M. W., Lovell, M. R. & Maroon, J. Does age play a role in recovery from sports-related concussion? A comparison of high school and collegiate athletes. J. Pediatr. 142, 546–553 (2003). doi: 10.1067/mpd.2003.190.

Hicks, A. J. et al. Does cognitive decline occur decades after moderate to severe traumatic brain injury? A prospective controlled study. Neuropsychol. Rehabil. 32, 1530–1549 (2022). doi: 10.1080/09602011.2021.1914674.

Kersel, D. A., Marsh, N. V., Havill, J. H. & Sleigh, J. W. Neuropsychological functioning during the year following severe traumatic brain injury. Brain Inj. 15, 283–296 (2001). doi: 10.1080/02699050010005887.

Kontos, A. P. et al. The effects of combat-related mild traumatic brain injury (mTBI): Does blast mTBI history matter? J. Trauma Acute Care Surg. 79, S146–S151 (2015). doi: 10.1097/TA.0000000000000667.

Kwok, F. Y., Lee, T. M. C., Leung, C. H. S. & Poon, W. S. Changes of cognitive functioning following mild traumatic brain injury over a 3-month period. Brain Inj. 22, 740–751 (2008). doi: 10.1080/02699050802336989.

Lee, H. et al. Comparing effects of methylphenidate, sertraline and placebo on neuropsychiatric sequelae in patients with traumatic brain injury. Hum. Psychopharmacol 20, 97–104 (2005). doi: 10.1002/hup.668.

Lu, J., Roe, C., Sigurdardottir, S., Andelic, N. & Forslund, M. Trajectory of functional independent measurements during first five years after moderate and severe traumatic brain injury. J. Neurotrauma 35, 1596–1603 (2018). doi: 10.1089/neu.2017.5299.

Macciocchi, S. N., Seel, R. T. & Thompson, N. The impact of mild traumatic brain injury on cognitive functioning following co-occurring spinal cord injury. Arch. Clin Neuropsychol 28, 684–691 (2013). doi: 10.1093/arclin/act049.

Malec, J. F. et al. Longitudinal Effects of Medical Comorbidities on Functional Outcome and Life Satisfaction After Traumatic Brain Injury: An Individual Growth Curve Analysis of NIDILRR Traumatic Brain Injury Model System Data. J. Head Trauma. Rehabilitation. 34, E24–E35 (2019). doi: 10.1097/HTR.0000000000000459.

Mandleberg, I. A. Cognitive recovery after severe head injury. 3. WAIS verbal and performance IQs as a function of post-traumatic amnesia duration and time from injury. J. Neurol. Neurosurg. Psychiatry. 39, 1001–1007 (1976). doi: 10.1136/jnnp.39.10.1001.

Paré, N., Rabin, L. A., Fogel, J. & Pépin, M. Mild traumatic brain injury and its sequelae: Characterisation of divided attention deficits. Neuropsychol. Rehabil. 19, 110–137 (2009). doi: 10.1080/09602010802106486.

Robertson, K. & Schmitter-Edgecombe, M. Self-awareness and traumatic brain injury outcome. Brain Inj. 29, 848–858 (2015). doi: 10.3109/02699052.2015.1005135.

Sandhaug, M., Andelic, N., Langhammer, B. & Mygland, A. Functional level during the first 2 years after moderate and severe traumatic brain injury. Brain Inj. 29, 1431–1438 (2015). doi: 10.3109/02699052.2015.1063692.

Schmitter-Edgecombe, M. & Robertson, K. Recovery of visual search following moderate to severe traumatic brain injury. J. Clin. Exp. Neuropsychol. 37, 162–177 (2015). doi: 10.1080/13803395.2014.998170.

Snow, P., Ponsford, J. & Douglas, J. & Conversational discourse abilities following severe traumatic brain injury: a follow up study. Brain Inj. 12, 911–935 (1998). doi: 10.1080/026990598121981.

Vanderploeg, R. D., Donnell, A. J., Belanger, H. G. & Curtiss, G. Consolidation deficits in traumatic brain injury: The core and residual verbal memory defect. J. Clin. Exp. Neuropsychol. 36, 58–73 (2014).doi: 10.1080/13803395.2013.864600.

Wang, S. et al. Umbilical cord mesenchymal stem cell transplantation significantly improves neurological function in patients with sequelae of traumatic brain injury. Brain Res. 1532, 76–84 (2013). doi: 10.1016/j.brainres.2013.08.001.

Zaninotto, A. L. et al. Visuospatial memory improvement in patients with diffuse axonal injury (DAI): A 1-year follow-up study. Acta Neuropsychiatr. 29, 35–42 (2017). doi: 10.1017/neu.2016.29.

Prigatano, G. P. et al. Neuropsychological rehabilitation after closed head injury in young adults. J. Neurol. Neurosurg. Psychiatry 47, 505–513 (1984). doi: 10.1136/jnnp.47.5.505.

Sours, C. et al. Associations between interhemispheric functional connectivity and the automated neuropsychological assessment metrics (ANAM) in civilian mild TBI. Brain Imaging Behav. 9, 190–203 (2015). doi: 10.1007/s11682-014-9295-y.

Stenberg, J. et al. Change in self-reported cognitive symptoms after mild traumatic brain injury is associated with changes in emotional and somatic symptoms and not changes in cognitive performance. Neuropsychology 34, 560–568 (2020). doi: 10.1037/neu0000632.

Wylie, G. R. et al. Cognitive improvement after mild traumatic brain injury measured with functional neuroimaging during the acute period. PLoS. One 10, e0126110 (2015). doi: 10.1371/journal.pone.0126110.

Powell, T. J., Collin, C. & Sutton, K. A follow-up study of patients hospitalized after minor head injury. Disabil. Rehabil. 18, 231–237 (1996). doi: 10.3109/09638289609166306.

Barker-Collo, S. et al. Neuropsychological outcome and its correlates in the first year after adult mild traumatic brain injury: A population-based New Zealand study. Brain Inj. 29, 1604–1616 (2015). doi: 10.3109/02699052.2015.1075143.

McCREA, M. et al. Standard regression-based methods for measuring recovery after sport-related concussion. J. Int. Neuropsychol. Soc. 11, 58–69 (2005). doi: 10.1017/S1355617705050083.

Giobbie-Hurder, A., Gelber, R. D. & Regan, M. M. Challenges of guarantee-time bias. J. Clin. Oncol. 31, 2963–2969 (2013). https://doi.org/10.1200/jco.2013.49.5283.

van Rein, N., Cannegieter, S. C., Rosendaal, F. R., Reitsma, P. H. & Lijfering, W. M. Suspected survivor bias in case–control studies: Stratify on survival time and use a negative control. J. Clin. Epidemiol. 67, 232–235 (2014). https://doi.org/10.1016/j.jclinepi.2013.05.011.

Rajkomar, A., Hardt, M., Howell, M. D., Corrado, G. & Chin, M. H. Ensuring fairness in machine learning to advance health equity. Ann. Intern. Med. 169, 866–872 (2018). doi: 10.7326/M18-1990.

Feinstein, A. R. An analysis of diagnostic reasoning. 3. The construction of clinical algorithms. Yale J. Biol. Med. 47, 5–32 (1974). PMID: 4596467.

Ioannidis, J. P. A. Why most published research findings are false. PLoS Med. 2, e124 (2005). https://doi.org/10.1371/journal.pmed.0020124.

Pedregosa, F. Machine learning in python - gradient boosting regressor. Scikit Learn (2011). https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.GradientBoostingRegressor.html

Pedregosa, F. Machine learning in python - extra trees regressor. Scikit Learn (2011). https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.ExtraTreesRegressor.html

Murman, D. The impact of age on cognition. Semin. Hear. 36, 111–121 (2015). doi: 10.1055/s-0035-1555115.

Ayton, A., Spitz, G., Hicks, A. J. & Ponsford, J. Ageing with traumatic brain injury: Long-term cognition and wellbeing. J. Neurotrauma https://doi.org/10.1089/neu.2024.0524. (2025).

Kirkwood, T. B. L. & Austad, S. N. Why do we age?. Nature 408, 233–238 (2000). doi: 10.1038/35041682.

Kirkwood, T. B. L. Evolution of ageing. Nature 270, 301–304 (1977). doi: 10.1038/270301a0.

Chan, V., Mollayeva, T., Ottenbacher, K. J. & Colantonio, A. Sex-specific predictors of inpatient rehabilitation outcomes after traumatic brain injury. Arch. Phys. Med. Rehabil. 97, 772–780 (2016). doi: 10.1016/j.apmr.2016.01.011.

Mollayeva, T., Hurst, M., Escobar, M. & Colantonio, A. Sex-specific incident dementia in patients with central nervous system trauma. Alzheimer’s Dementia: Diagnosis Assess. Disease Monit. 11, 355–367 (2019). doi: 10.1016/j.dadm.2019.03.003.

D’Souza, A. et al. Measuring change over time: A systematic review of evaluative measures of cognitive functioning in traumatic brain injury. Front. Neurol. 8;10:353 (2019). https://doi.org/10.3389/fneur.2019.00353.

Bryant, A. M. et al. Profiles of Cognitive Functioning at 6 Months After Traumatic Brain Injury Among Patients in Level I Trauma Centers. JAMA Netw. Open. 6, e2349118 (2023). doi:10.1001/jamanetworkopen.2023.49118.

Armstrong, K. & Ritchie, C. Research participation in marginalized communities — Overcoming barriers. N. Engl. J. Med. 386, 203–205 (2022). doi: 10.1056/NEJMp2115621.

Marshall, I. J. et al. The effects of socioeconomic status on stroke risk and outcomes. Lancet Neurol. 14, 1206–1218 (2015). doi: 10.1016/S1474-4422(15)00200-8.

Ornish, D. et al. Effects of intensive lifestyle changes on the progression of mild cognitive impairment or early dementia due to Alzheimer’s disease: A randomized, controlled clinical trial. Alzheimers Res. Ther. 16, 122 (2024). doi: 10.1186/s13195-024-01482-z.

Gender Inequality Index (GII). United Nations Development Program: Human Development Reports https://hdr.undp.org/data-center/thematic-composite-indices/gender-inequality-index#/indicies/GII

Cookson, R., Robson, M., Skarda, I. & Doran, T. Equity-informative methods of health services research. J. Health Organ. Manag. 35, 665–681 (2021). doi: 10.1108/JHOM-07-2020-0275.

Castillo, E. G. & Harris, C. Directing research toward health equity: A health equity research impact assessment. J. Gen. Intern. Med. 36, 2803–2808 (2021). doi: 10.1007/s11606-021-06789-3.

Graziano, F., Valsecchi, M. G. & Rebora, P. Sampling strategies to evaluate the prognostic value of a new biomarker on a time-to-event end-point. BMC Med. Res. Methodol. 21, 93 (2021). doi: 10.1186/s12874-021-01283-0.

Kent, P., Cancelliere, C., Boyle, E., Cassidy, J. D. & Kongsted, A. A conceptual framework for prognostic research. BMC Med. Res. Methodol. 20, 172 (2020). doi: 10.1186/s12874-020-01050-7.

Mollayeva, T., Pacheco, N., D’Souza, A. & Colantonio, A. The course and prognostic factors of cognitive status after central nervous system trauma: A systematic review protocol. BMJ Open 7, e017165 (2017). doi: 10.1136/bmjopen-2017-017165.

McCrea, M. A. et al. Functional Outcomes Over the First Year After Moderate to Severe Traumatic Brain Injury in the Prospective, Longitudinal TRACK-TBI Study. JAMA Neurol. 78, 982 (2021). doi: 10.1001/jamaneurol.2021.2043.

Collins, G. S. et al. TRIPOD + AI statement: updated guidance for reporting clinical prediction models that use regression or machine learning methods. BMJ 385, e078378 (2024). https://doi.org/10.1136/bmj-2023-078378

Mollayeva, T., Clayton D., et al. Data Templates in A PROGRESS-Driven Approach to Cognitive Outcomes after Traumatic Brain Injury: Advancing Equity, Diversity, and Inclusion through Knowledge Synthesis and Mobilization. OSF (2026). doi: 10.17605/OSF.IO/5SJVX

Wilkinson, M. D. et al. The FAIR Guiding Principles for scientific data management and stewardship. Sci. Data. 3, 160018 (2016). https://doi.org/10.1038/sdata.2016.18

Mollayeva, T. et al. A systematic review of brain health outcomes after traumatic brain injury through a PROGRESS-Plus lens. PROSPERO (2024). https://www.crd.york.ac.uk/PROSPERO/view/CRD42024547456

Voß, H. et al. HarmonizR enables data harmonization across independent proteomic datasets with appropriate handling of missing values. Nat. Commun. 13, 3523 (2022). https://doi.org/10.1038/s41467-022-31007-x

Adhikari, K. et al. Data harmonization and data pooling from cohort studies: A practical approach for data management. Int. J. Popul. Data Sci. 6;1 https://doi.org/10.23889/ijpds.v6i1.1680 (2021).

Gill, I. S. et al. The DataHarmonizer: A tool for faster data harmonization, validation, aggregation and analysis of pathogen genomics contextual information. Microb. Genom. 9(1):mgen000908 .(2023). https://doi.org/10.1099/mgen.0.000908

Fortier, I., Doiron, D., Burton, P. & Raina, P. Invited commentary: Consolidating data harmonization–How to obtain quality and applicability?. Am. J. Epidemiol. 174, 261–264 (2011). doi: 10.1093/aje/kwr194.

Boyden, J. & Walnicki, D. Leveraging the Power of Longitudinal Data: Insights on Data Harmonisation and Linkage from Young Lives. (2021). https://www.younglives.org.uk/sites/default/files/2023-12/2107_InsightReport_BoydenWalnicki_Accessible.pdf

Löw, N., Hesser, J. & Blessing, M. Multiple retrieval case-based reasoning for incomplete datasets. J. Biomed. Inform. 92, 103127 (2019). https://doi.org/10.1016/j.jbi.2019.103127

Mainzer, R. M. et al. Gaps in the usage and reporting of multiple imputation for incomplete data: Findings from a scoping review of observational studies addressing causal questions. BMC Med. Res. Methodol. 24, 193 (2024). doi: 10.1186/s12874-024-02302-6.

Lee, K. J., Carlin, J. B., Simpson, J. A. & Moreno-Betancur, M. Assumptions and analysis planning in studies with missing data in multiple variables: Moving beyond the MCAR/MAR/MNAR classification. Int. J. Epidemiol. 52, 1268–1275 (2023).doi: 10.1093/ije/dyad008.

Lundberg, S. M. et al. From local explanations to global understanding with explainable AI for trees. Nat. Mach. Intell. 2, 56–67 (2020). doi:10.48550/arXiv.1905.04610

Hu, J. & Szymczak, S. A review on longitudinal data analysis with random forest. Brief Bioinform 24(2):bbad002, (2023). https://doi.org/10.1093/bib/bbad002

Wallace, M. L. et al. Use and misuse of random forest variable importance metrics in medicine: Demonstrations through incident stroke prediction. BMC Med. Res. Methodol. 23, 144 (2023). doi: 10.1186/s12874-023-01965-x.

Lee, Y. W., Choi, J. W. & Shin, E.-H. Machine learning model for predicting malaria using clinical information. Comput. Biol. Med. 129, 104151 (2021). doi: 10.1016/j.compbiomed.2020.104151.

Austin, P. C., Harrell, F. E., Lee, D. S. & Steyerberg, E. W. Empirical analyses and simulations showed that different machine and statistical learning methods had differing performance for predicting blood pressure. Sci. Rep. 12, 9312 (2022). https://doi.org/10.1038/s41598-022-13015-5

Lee, H. S., Kim, J. H., Son, J., Park, H. & Choi, J. Machine learning models for predicting early hemorrhage progression in traumatic brain injury. Sci. Rep. 14, 11690 (2024). https://doi.org/10.1038/s41598-024-61739-3

Robeson, S. M. & Willmott, C. J. Decomposition of the mean absolute error (MAE) into systematic and unsystematic components. PLoS One 18, e0279774 (2023). https://doi.org/10.1371/journal.pone.0279774

Jiang, S., Liang, Y., Shi, S., Wu, C. & Shi, Z. Improving predictions and understanding of primary and ultimate biodegradation rates with machine learning models. Sci. Total Environ. 904, 166623 (2023).https://doi.org/10.1016/j.scitotenv.2023.166623

Acknowledgements

The authors thank Professor Michael Escobar for contribution to the technical discussion on mathematical evolution of the concept; trainees of the BRIDGE Lab (bridgelab.ca) Ashlee Kim, Chuxi Pan, and Mursal Jahed for their support with title and abstract screening and data extraction; and Information Specialists from Library Services at the University Health Network (Jessica Babineau, Emilia Main, and Cynthia Chui) for help in retrieving data for systematic reviews that formed the basis for the data used in this research. This study was funded by the Canadian Institutes of Health Research (CIHR) Brain Health and Reduction of Risk for Age-related Cognitive Impairment SGD #202306BK5-510306-BKS-ADHD-220229, and in part by Canada Research Chair in Neurological Disorders and Brain Health, CRC-2021-00074. This research program was developed with endorsement by patient and public organizations, who reviewed the research program prior to its submission to the funding agency (Canadian Institute of Health Research). The critical need to address PROGRESS-Plus parameters in brain injury research was also endorsed by team members of the SGD #202306BK5-510306-BKS-ADHD-220229. The content is solely the authors’ responsibility and does not necessarily represent the official views of CIHR.

Funding

CIHR Brain Health and Reduction of Risk for Age-related Cognitive Impairment SGD #202306BK5-510306-BKS-ADHD-220229, and Canada Research Chair in Neurological Disorders and Brain Health, CRC-2021-00074.

Author information

Authors and Affiliations

Contributions

TM and TTS conceived the project. TM supervised US and TTS data extraction and harmonisation. TM developed templates for data library encoding and transformation processing. JX and US performed the data analyses with methodological supervision from TM. US created visual display of data. TM wrote the first draft of the manuscript with input from US. All authors contributed to data interpretation and editing of the paper.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

The study protocol was approved by the ethics committees at the clinical (Toronto Rehabilitation Institute-University Health Network) and academic (University of Toronto) institutions. All methods were carried out in accordance with the relevant guidelines and regulations.

Informed consent

This research utilised published data with no access to personal information.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Xu, J., Shaikh, U., Tylinski Sant’Ana, T. et al. Explainable machine learning of PROGRESS-Plus social factors predicts cognitive trajectories after traumatic brain injury. Sci Rep 16, 10629 (2026). https://doi.org/10.1038/s41598-026-44818-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-026-44818-5