Abstract

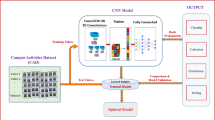

Group activity recognition requires a holistic understanding of individual actions, their spatial relationships, and the surrounding environment. Traditional methods that focus solely on isolated movements often fail to capture the complex inter-player and scene-level dependencies inherent in sports and crowd scenarios. This work develops a group activity recognition model that fuses the poses of individual actors with pose-aligned spatial scene context for relational reasoning. Pose features of individual actors are extracted using mmPose, while the scene-level context is encoded through pose-conditioned spatial feature aggregation rather than explicit semantic segmentation. The extracted pose and scene-context features are combined and used to construct Actor Relation Graphs (ARGs) using Zero-Normalized Cross-Correlation (ZNCC), which improves robustness to variations in appearance and illumination. Graph Convolutional Networks (GCNs) then operate on these graphs to model the relationships between individual actors in a scene and their group activities. In contrast to previous ARG-GCN approaches that rely mainly on appearance features, the proposed framework explicitly combines pose-level and scene-level contextual features into a single relational graph. The model is evaluated on two benchmark datasets, the Collective Activity Dataset (CAD) and the Volleyball Dataset (VD), and achieves classification accuracies of 95.02% and 94.81%, respectively. On a TITAN-XP GPU, the average processing time for a 41-frame video clip is approximately 0.2 s. The results show that combining pose and scene-context features enhances graph-based relational learning and improves recognition accuracy.
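
The graph construction summarised above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names (`fuse_features`, `zncc_matrix`, `row_softmax`, `gcn_layer`), the feature dimensions, the use of concatenation for fusion, the row-wise softmax normalisation, and the ReLU activation are all assumptions made for illustration. The sketch only shows how ZNCC between fused per-actor feature vectors could define the edge weights of a relation graph, and how one graph-convolution step could propagate features over that graph.

```python
import numpy as np


def fuse_features(pose: np.ndarray, scene: np.ndarray) -> np.ndarray:
    """Concatenate per-actor pose and scene-context features (assumed fusion rule)."""
    return np.concatenate([pose, scene], axis=1)


def zncc_matrix(features: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Pairwise zero-normalized cross-correlation between actor feature vectors.

    features: (N, D) array with one row per actor.
    Returns an (N, N) matrix whose entries lie in [-1, 1].
    """
    # Subtract each actor's own mean, then scale each row to unit length.
    centered = features - features.mean(axis=1, keepdims=True)
    norms = np.linalg.norm(centered, axis=1, keepdims=True)
    normalized = centered / (norms + eps)
    # Dot products of the normalized rows are the ZNCC scores.
    return normalized @ normalized.T


def row_softmax(scores: np.ndarray) -> np.ndarray:
    """Turn relation scores into edge weights that sum to 1 per actor (assumption)."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def gcn_layer(adjacency: np.ndarray, features: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """One graph-convolution step: aggregate neighbours, project, apply ReLU."""
    return np.maximum(adjacency @ features @ weight, 0.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    num_actors = 4
    pose = rng.normal(size=(num_actors, 6))    # placeholder pose features
    scene = rng.normal(size=(num_actors, 3))   # placeholder scene-context features
    fused = fuse_features(pose, scene)

    relation_graph = row_softmax(zncc_matrix(fused))
    weight = rng.normal(size=(fused.shape[1], 5))  # random stand-in for learned weights
    updated = gcn_layer(relation_graph, fused, weight)
    print(relation_graph.shape, updated.shape)
```

Because each feature vector is mean-centred and scaled to unit norm before the dot product, the ZNCC score is unchanged when a vector is multiplied by a positive gain or shifted by a constant offset. This is the property usually cited when ZNCC is described as robust to illumination changes.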

Data availability

The Volleyball dataset is publicly available in the “mostafa-saad/deep-activity-rec” repository, [https://github.com/mostafa-saad/deep-activity-rec?tab=readme-ov-file#dataset](https://github.com/mostafa-saad/deep-activity-rec?tab=readme-ov-file#dataset), and the Collective Activity Dataset is publicly available on the Computational Vision and Geometry Lab (CVGL) website at Stanford University, [https://cvgl.stanford.edu/projects/collective/collectiveActivity.html](https://cvgl.stanford.edu/projects/collective/collectiveActivity.html). The datasets used and/or analysed during the current study will be available from the corresponding author on reasonable request.

Author information

Contributions

Tejonidhi M R contributed to the literature survey, problem identification, design, implementation, and preparation of the article. Raghunandan K R guided the work throughout. Uma B co-guided the work. Madhu C K and Vinod A M contributed to drafting the article and to reviewing and fine-tuning its contents.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Tejonidhi, M.R., Raghunandan, K.R., Uma, B. et al. A multi-context fusion-aware graph modelling for group activity recognition using pose-conditioned spatial encoding and actor relations. Sci Rep (2026). https://doi.org/10.1038/s41598-026-46296-1

DOI: https://doi.org/10.1038/s41598-026-46296-1