Abstract

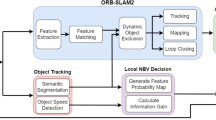

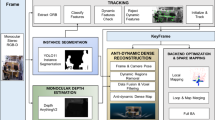

Visual–inertial simultaneous localization and mapping (VI-SLAM) is fundamental to autonomous driving and virtual reality (VR). However, traditional feature-based SLAM systems rely on hand-crafted features and lack dedicated methods for handling dynamic objects, so their performance degrades under challenging conditions such as violent motion, varying illumination, and dynamic environments. To address these issues, we propose SuperDynaSLAM, an enhanced VI-SLAM system that integrates SuperPoint, a deep learning-based feature extractor, with a two-stage dynamic feature point detection method. By replacing the traditional ORB extractor with SuperPoint, SuperDynaSLAM extracts more robust feature points under challenging conditions. Furthermore, by fusing semantic information with geometric constraints, SuperDynaSLAM accurately detects moving objects and removes the associated dynamic feature points. Experiments conducted on multiple datasets demonstrate that SuperDynaSLAM achieves more competitive performance than ORB-SLAM3 and other SLAM systems.
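The fusion of semantic information and geometric constraints can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not specify the two stages, so the code below assumes that stage one flags feature points falling inside a segmentation mask of potentially movable objects, and that stage two confirms motion when a point lies too far from its epipolar line. The function names, the fundamental matrix `F`, the threshold `epi_thresh`, and the AND-style fusion rule are all hypothetical.

```python
import numpy as np

def epipolar_distance(F, pts_prev, pts_curr):
    """Pixel distance from each current point to the epipolar line
    induced by its matched point in the previous frame."""
    ones = np.ones((pts_prev.shape[0], 1))
    x1 = np.hstack([pts_prev, ones])          # homogeneous previous points, (N, 3)
    x2 = np.hstack([pts_curr, ones])          # homogeneous current points, (N, 3)
    lines = x1 @ F.T                          # row i is the line F @ x1_i
    num = np.abs(np.sum(lines * x2, axis=1))  # |x2^T F x1|
    den = np.hypot(lines[:, 0], lines[:, 1])  # normalise by the line's (a, b)
    return num / np.maximum(den, 1e-12)

def static_point_mask(pts_prev, pts_curr, F, semantic_mask, epi_thresh=1.0):
    """Return True for points to keep (assumed fusion rule, not the paper's).

    Stage 1 (semantic): a point is a dynamic candidate if it lies inside
    the mask of potentially movable objects.
    Stage 2 (geometric): a candidate is confirmed dynamic if it violates
    the epipolar constraint by more than epi_thresh pixels.
    """
    h, w = semantic_mask.shape
    cols = np.clip(np.round(pts_curr[:, 0]).astype(int), 0, w - 1)
    rows = np.clip(np.round(pts_curr[:, 1]).astype(int), 0, h - 1)
    candidate = semantic_mask[rows, cols].astype(bool)
    moving = epipolar_distance(F, pts_prev, pts_curr) > epi_thresh
    return ~(candidate & moving)
```

Under this assumed rule, a point on a parked car (inside the mask but consistent with the epipolar geometry) is kept, while a point on a walking pedestrian is removed. Points outside the mask that violate the constraint are left to the usual outlier rejection, which is a design choice of this sketch rather than something stated in the source.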

Data availability

The datasets analysed during the current study are available in the KITTI, EuRoC, VIODE and OpenLORIS-Scene dataset repositories at https://www.cvlibs.net/datasets/kitti/eval_odometry.php, https://projects.asl.ethz.ch/datasets/euroc-mav/, https://github.com/kminoda/VIODE, and https://lifelong-robotic-vision.github.io/dataset/scene.html, respectively.

Code availability

The code for the proposed SuperDynaSLAM is available at https://doi.org/10.5281/zenodo.19142293.

Acknowledgements

This work was supported by the National Natural Science Foundation of China [Grant number 61374197]; the Shanghai Natural Science Foundation of the Shanghai Science and Technology Commission [Grant number 20ZR1437900]; and the Jiangsu Basic Science Foundation of Colleges and Universities (natural science) [Grant number 24KJD520009].

Author information

Contributions

Conceptualization, J.C. and Y.H.; methodology, J.C. and Y.H.; software, J.C.; validation, J.C., Y.H. and L.W.; formal analysis, J.C. and Y.H.; investigation, J.C.; writing - original draft preparation, J.C. and Y.H.; writing - review and editing, J.C., Y.H. and L.W.; project administration, Y.H. and L.W.; funding acquisition, Y.H. and L.W.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Cui, J., Huang, Y. & Wang, L. Enhanced visual-inertial SLAM Using SuperPoint and semantic geometric dynamic feature detection. Sci Rep (2026). https://doi.org/10.1038/s41598-026-46629-0

DOI: https://doi.org/10.1038/s41598-026-46629-0