Abstract

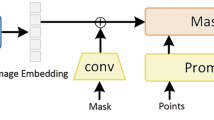

Although MedSAM2 achieves 3D medical image segmentation through a memory attention mechanism, its performance declines significantly when manually designed prompts are replaced by automatically generated ones, particularly for rare cases and multi-object segmentation. Current automatic prompt generation methods often extract prompt cues directly from image features, which typically lack rich spatiotemporal context and semantic information, resulting in suboptimal performance. To overcome these limitations, we propose DSSAM2-LAPG, a dual-stage 3D medical image segmentation network that integrates a Learnable Automatic Prompt-space Generator (LAPG) with memory attention. The LAPG acts as a trainable mapper that transforms raw 3D image features into a semantically rich and spatially aligned prompt embedding space. In the preliminary stage, this mapper uses learnable object tokens (concept embeddings) that interact dynamically with image features to generate coarse but reliable spatial priors \(M_{\text{coarse}}\) and initial semantic prompts. In the refinement stage, a memory attention mechanism integrates these priors with historical context from a support memory to delineate boundaries precisely and ensure 3D consistency. This integrated approach specifically addresses the failure of existing methods to capture 3D context, encode rare-object semantics, and provide instance-aware guidance. Experimental results demonstrate that DSSAM2-LAPG achieves Dice score improvements of 7.2%, 6.0% and 4.1% on the private XYCH-cervical dataset, the public CCTH-Cervical dataset, and the multi-organ BTCV dataset, respectively, compared with the strong baseline MedSAM2, all without requiring any manual prompts. Our code is available at https://github.com/liufangcoca-515/APG/tree/main.
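To make the preliminary stage more concrete, the following is a minimal illustrative sketch of how a set of learnable object tokens could interact with 3D image features to yield a coarse spatial prior. It is not the authors' implementation: the function name `coarse_prior_from_tokens`, the single scaled dot-product cross-attention step, the residual token update, and the sigmoid read-out are all assumptions chosen for illustration, and the tokens are random here rather than trained.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def coarse_prior_from_tokens(features, tokens):
    """Hypothetical sketch of token-to-feature interaction.

    features: (D, H, W, C) array of per-voxel image features.
    tokens:   (K, C) array of object tokens (learned in a real model).
    Returns the updated tokens (K, C) and soft masks (K, D, H, W) in [0, 1].
    """
    d, h, w, c = features.shape
    flat = features.reshape(-1, c)                      # (N, C), N = D*H*W

    # Tokens act as queries over all voxel features (cross-attention).
    attn = softmax(tokens @ flat.T / np.sqrt(c), axis=-1)   # (K, N)
    updated = tokens + attn @ flat                      # residual update, (K, C)

    # Similarity of each voxel to each updated token, squashed to [0, 1].
    logits = np.clip(updated @ flat.T / np.sqrt(c), -50.0, 50.0)
    m_coarse = 1.0 / (1.0 + np.exp(-logits))            # (K, N)
    return updated, m_coarse.reshape(-1, d, h, w)


# Usage with random stand-in data: 3 object tokens, a 4x8x8 feature volume.
rng = np.random.default_rng(0)
feats = rng.standard_normal((4, 8, 8, 16))
toks = rng.standard_normal((3, 16))
prompts, m_coarse = coarse_prior_from_tokens(feats, toks)
print(prompts.shape, m_coarse.shape)  # (3, 16) (3, 4, 8, 8)
```

In this sketch the updated tokens stand in for the initial semantic prompts and the soft masks stand in for \(M_{\text{coarse}}\); the refinement stage with memory attention is not modelled.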

Acknowledgements

This work was supported in part by the National Natural Science Foundation of China under Grants 82472634 and 82505297; in part by the Key Research and Development Program of Hubei Province under Grants 2023BCB128 and 2024BCB055; and in part by the Scientific Research Team Plan of Wuhan Technology and Business University.

Funding

YanDuo Zhang received funding from the National Natural Science Foundation of China under Grant ID 62072350. Hui Xing received funding from the National Natural Science Foundation of China under Grant ID 82472634 and from the Key Research and Development Program of Hubei Province under Grant ID 2024BCB055.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, F., Yang, J., Zhang, Y. et al. Dual-stage 3D medical image segmentation integrating learnable prompt generation and memory attention. Sci Rep (2026). https://doi.org/10.1038/s41598-026-51220-8

DOI: https://doi.org/10.1038/s41598-026-51220-8