Abstract

Although using artificial intelligence (AI) to analyze ultrasound images is a promising approach to assessing thyroid nodule risks, traditional AI models lack transparency and interpretability. We developed a multimodal generative pre-trained transformer for thyroid nodules (ThyGPT), aiming to provide a transparent and interpretable AI copilot model for thyroid nodule risk assessment and management. Ultrasound data from 59,406 patients across nine hospitals were retrospectively collected to train and test the model. After training, ThyGPT was found to assist in reducing biopsy rates by more than 40% without increasing missed diagnoses. In addition, it detects errors in ultrasound reports 1,610 times faster than humans. With the assistance of ThyGPT, the area under the curve for radiologists in assessing thyroid nodule risks improved from 0.805 to 0.908 (p < 0.001). As an AI-generated content-enhanced computer-aided diagnosis (AIGC-CAD) model, ThyGPT has the potential to revolutionize how radiologists use such tools.

Similar content being viewed by others

Introduction

Thyroid nodules are a common endocrine condition, with a prevalence of over 60% in adults and an incidence three times higher in women than in men1,2. Most of these nodules are benign, while only about 7–15% are malignant3,4. In clinical practice, ultrasound (US) imaging and fine-needle aspiration (FNA) biopsy are the primary methods used to assess the risk of thyroid nodules5. However, the diagnostic outcomes of ultrasonography rely heavily on radiologists’ experiences and skills. Even with FNA, precise risk evaluation remains elusive for over 15% of nodules6,7. To a certain extent, these uncertainties in the risk assessment of thyroid nodules have led to a widespread issue of overdiagnosis and overtreatment, such as unnecessary biopsies or invasive surgeries for nodules ultimately determined to be benign8,9,10. This phenomenon not only inflicts significant physical and psychological trauma upon patients but also substantially augments healthcare expenditure11,12. The current situation of overtreatment of thyroid nodules emphasizes the necessity for more refined and accurate risk assessment tools.

Recently, studies have indicated that computer-aided diagnosis (CAD) based on US images and artificial intelligence (AI) models holds promise as an effective complementary solution13,14,15,16. In general, these studies have focused on developing specialized AI models, such as ThyNet, RedImageNet, and DeepThy-Net, to extract latent features from large US image data sets and assess the risk of thyroid nodules17,18,19. These US image-based CAD models have made substantial progress20,21; however, they also have significant shortcomings. First, existing CAD models lack transparency and cannot provide the rationale or decision-making basis behind their diagnoses22,23, creating a gap in understanding between radiologists and CAD models. This “black box” characteristic undermines the confidence of radiologists, patients, and healthcare administrators in the diagnostic results of these CAD models. Second, the outputs of existing CAD models are mostly simple scores or categorical labels24,25,26; therefore, they lack meaningful interaction with radiologists. This “mute box” characteristic renders challenges for radiologists in distinguishing which AI-based diagnoses are accurate and reasonable and which may be erroneous or AI hallucinations. These barriers in communication and comprehension have even led many radiologists to abandon these AI-based CAD methods27.

The above mentioned issues have sparked controversy over the practical role of AI in thyroid nodule risk assessment. To some extent, such controversy has hindered the progress and integration of large data and AI technology in thyroid nodule diagnosis. In comparison with traditional deep learning models that possess only simple pattern recognition capabilities, models with transparent explanatory capabilities—particularly in elucidating diagnostic criteria and earning people’s trust—are currently being explored in CAD development. This necessitates models equipped with natural language interaction capabilities that allow for question-and-answer exchanges with doctors28, fully leveraging the computational advantages of AI and the professional expertise of radiologists.

The emergence of generative large language models (LLMs) has provided an opportunity to address the aforementioned problems29,30. Many recent studies have confirmed that LLMs, represented by ChatGPT, exhibit superior performance in many tasks27,28,29,30. By incorporating the latest LLM technology, we constructed an AI-generated content-enhanced computer-aided diagnosis (AIGC-CAD) model called the generative pre-trained transformer for thyroid nodules (ThyGPT). To train and test ThyGPT effectively, we retrospectively collected 511,620 US images and 49,733 US reports from 59,406 patients, along with 11 diagnostic guidelines, as training and test data. After training, ThyGPT was found to bridge the semantic gap between the US images and diagnostic reports, effectively interact with radiologists, assist in assessing the risk of thyroid nodules, and avoid a large number of unnecessary FNAs. Furthermore, ThyGPT assisted radiologists in identifying errors in diagnostic reports, thereby effectively preventing misdiagnoses and missed diagnoses caused by report inaccuracies.

To the best of our knowledge, ThyGPT is the first multimodal generative pre-trained transformer (GPT) model independently trained on a large-scale thyroid nodule US data set. Moreover, the concept of AIGC-CAD is proposed for the first time in the field of medical image diagnosis. By combining AIGC-CAD techniques, the ThyGPT model can function as an AI copilot model that intelligently interacts with radiologists, automates thyroid nodule risk assessment, provides decision support, and detects potential errors in diagnostic reports. AIGC-CAD systems such as ThyGPT may become the main direction of the next generation of CAD systems, potentially revolutionizing thyroid nodule diagnosis and overall CAD.

Results

Study cohorts and dataset construction

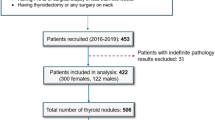

We established three cohorts, upon which we built one training set and two test sets. Table 1 presents the baseline characteristics of the training and test sets. Figure 1 illustrates the relationships between the different cohorts and data sets. The training set consisted of 487,246 ultrasound images from 67,981 thyroid nodules; additionally, we incorporated 48,470 US reports and 11 diagnostic guidelines (listed in Supplementary Table 1) as language training data. To better evaluate model performance, we established two independent external test sets with distinct validation objectives. Test Set 1 comprised 3376 thyroid nodules from 2964 patients, all with definitive surgical pathological confirmation, thereby enabling assessment of ThyGPT’s diagnostic accuracy and its efficacy in reducing unnecessary fine-needle aspiration biopsies. Test Set 2 consisted of 1263 US reports, of which 157 were found to contain various errors. This set was designed specifically to evaluate ThyGPT’s capability in detecting errors in US reports. Given the absence of surgical pathological confirmation in a substantial proportion of those cases with erroneous US reports, Test Set 2 was not used for analyzing unnecessary biopsy reduction. The above US imaging data were obtained from 65 machines. Supplementary Table 2 presents detailed statistical information about these machines. Supplementary Table 3 presents more information on the multicenter data distribution. Figure 2 shows the overall design of the ThyGPT model for assisting in the diagnosis of thyroid nodules. The basic framework used was the LLaMA3 model and transformer architecture.

The main cohort of data was sourced from Centers 1 to 4, which was utilized for building the training set. External Test Cohort 1 consisted of data from Centers 5 to 8, and these data were merged to form Test Set 1. This set was used to evaluate the performance of ThyGPT in assisting radiologists in diagnosing thyroid nodules. External Test Cohort 2 comprised data from Center 9, primarily consisting of 157 erroneous reports. These reports were merged with 1106 correct reports from Center 5 to form Test Set 2. This set was utilized to assess the ability of ThyGPT to automatically detect potential errors in US reports.

a Pre-labeled nodules, guidelines, diagnostic reports, and pathological results were utilized for the training of ThyGPT. b The ThyGPT model was developed by integrating multi-head self-attention mechanisms and the transformer architecture of ThyGPT (For more details, see Supplementary Fig. 1). c Patients undergo US examinations, and radiologists diagnose patients based on the US images, which are simultaneously input into the ThyGPT model for analysis. Radiologists engage in detailed discussions with ThyGPT regarding the condition of the nodules. d ThyGPT engages in discussions with radiologists and answers their questions. The overall performance of ThyGPT is evaluated from different perspectives.

The results were primarily divided into three parts. First, we compared the performance of radiologists in diagnosing thyroid nodules under different conditions, such as unassisted diagnosis, assisted only by the traditional feature heatmaps, and assisted by real-time conversations with ThyGPT. Second, we extracted some typical cases of conversation between radiologists and ThyGPT. Third, we tested ThyGPT’s ability to detect errors in US reports.

Auxiliary diagnostic performance of ThyGPT

The diagnostic performance of ThyGPT was evaluated on Test Set 1, which comprised 2964 patients and 3376 tumors, of which 1601 were malignant. To thoroughly assess ThyGPT’s capability in assisting diagnosis, we conducted a detailed comparison of the diagnostic outcomes when ThyGPT was used by three junior radiologists (with fewer than 5 years of experience) and three senior radiologists (with more than 10 years of experience). To facilitate radiologists in utilizing ThyGPT as an auxiliary diagnostic tool, we established the following usage rules: (1) Initially, radiologists and ThyGPT conduct independent evaluations; (2) when radiologists’ assessment is consistent with ThyGPT’s evaluation, no modification is made; (3) when radiologists’ diagnosis differs from ThyGPT’s evaluation, radiologists query ThyGPT; and (4) ultimately, radiologists decide whether to consider ThyGPT’s diagnostic results and whether to modify their final diagnosis based on the query results.

Figure 3a depicts the receiver-operating characteristic (ROC) curve for ThyGPT, with the diagnostic outcomes of radiologists under different conditions represented as discrete points. This figure shows that referring to traditional feature heatmaps aided in improving the diagnostic performance of radiologists. However, when radiologists engaged in communication with ThyGPT, their diagnostic capability substantially improved, even surpassing the performance of ThyGPT alone.

a The green curve displays the ROC curve of ThyGPT for discriminating benign and malignant nodules. The gray dots represent the independent diagnostic results of radiologists; the blue dots indicate radiologists’ diagnoses assisted by feature heatmaps only; and the orange dots denote radiologists’ diagnoses after discussing with ThyGPT. The circular dots represent junior radiologists; the square dots represent senior radiologists; and the different colored pentagrams represent the mean points of radiologists under different diagnostic modes. We magnified the key area and drew circles centered on the mean point, with the maximum outlier distance as the radius. These circles delineate the coverage ranges of radiologists’ diagnostic results under the different conditions. Compared to independent diagnoses by radiologists (gray) and those assisted by traditional feature heatmaps (blue), the ThyGPT model, which utilized an AI copilot for assistance, exhibited significantly improved diagnostic accuracy (orange). b Histogram distribution of ThyGPT’s predicted probabilities for thyroid nodule malignancy. The horizontal axis represents the predicted scores; the vertical axis denotes the sample count; the pink bins signify benign nodules; and the green bins indicate malignant nodules. c Comparison of confusion matrices in four scenarios: radiologists’ independent diagnosis, ThyGPT’s independent diagnosis, radiologists assisted by feature heatmaps, and radiologists comprehensively assisted by ThyGPT. d ThyGPT assisted radiologists in diagnosis and reduced unnecessary FNAs. The green squares illustrate the proportion of missed FNAs for malignant tumors.

Table 2 presents the specific diagnostic performance under different assistance modalities. The data indicated that without ThyGPT, the average sensitivity and specificity of radiologists on the test set were 0.802 (95% confidence interval [CI]: 0.793–0.809) and 0.809 (95% CI: 0.802–0.817), respectively. After radiologists thoroughly communicated with ThyGPT, the average sensitivity increased to 0.893 (95% CI: 0.887–0.899), and the average specificity increased to 0.922 (95% CI: 0.917–0.927). Considering the p value changes in various evaluation metrics, such as the true positive rate (TPR), true negative rate (TNR), positive predictive value (PPV), negative predictive value (NPV), and F1 score, the average diagnostic capability of junior radiologists reached or approached that of the AI model, while the average diagnostic performance of senior radiologists significantly surpassed that of the AI model.

Figure 3b illustrates the histogram distribution of ThyGPT’s predicted probabilities for thyroid nodule malignancy. The distribution in the figure shows that nodules of different risk levels demonstrated clustering characteristics. Figure 3c depicts the confusion matrices in four scenarios: radiologists’ independent diagnosis, ThyGPT’s independent diagnosis, radiologists assisted by feature heatmaps, and radiologists comprehensively assisted by ThyGPT. The changes in the true positives and true negatives in the confusion matrix indicated that ThyGPT effectively assisted radiologists in assessing the nodule risk.

We further explored how to better use ThyGPT and integrate it into the precision diagnosis and treatment of thyroid nodules. The specific strategy was as follows: (1) High-PPV (H-PPV) nodules: Nodules with a ThyGPT-predicted malignancy score exceeding 0.7 (corresponding to a PPV of exceeding 0.960) are categorized as H-PPV nodules. Detailed information on PPV and NPV under different thresholds is provided in Supplementary Table 4. If ThyGPT identifies a H-PPV nodule and the radiologist reviewing ThyGPT’s results does not raise any objections, bypassing FNA and proceeding directly to surgery can be considered. (2) Moderately suspicious nodules (MSN): Nodules with a ThyGPT-predicted malignancy score between 0.3 and 0.7 are defined as MSNs. In such cases, the radiologist may determine whether to perform FNA based on the ACR guidelines. (3) High-NPV (H-NPV) nodules: Nodules with a ThyGPT-predicted malignancy score below 0.3 (corresponding to an NPV of exceeding 0.975) are categorized as H-NPV nodules. If ThyGPT detects such a nodule and the radiologist raises no objections, follow-up can be considered as an appropriate course of action. In practical applications, the thresholds can be adjusted as needed to modify the expected PPV and NPV.

According to these rules, the proportion of FNAs in the test set decreased from 64.2% to 23.3% (p < 0.001), while the proportion of missed malignant tumors decreased from 11.6% to 5.3% (p < 0.001). The detailed comparison between ACR criteria and ThyGPT-assisted H-PPV approach is presented in Supplementary Table 5. Among 2167 nodules requiring FNA according to ACR criteria, 1459 (67.3%) did not require FNA when applying the above H-PPV and H-NPV rules. In the H-PPV subgroup identified by ThyGPT, there were 903 cases, of which 886 were malignant and 17 were benign, with an accuracy of 98.1%.

Communication between ThyGPT and radiologists

Figures 4, 5 display several representative cases. For the cases shown in Fig. 4, radiologists’ initial judgment was not entirely accurate; however, they modified their diagnoses after consulting and reviewing the ThyGPT analysis. Specifically, for Sample 1 in Fig. 4, the radiologist’s initial diagnosis was a benign nodule with ACR TR category 4, whereas ThyGPT identified the nodule as malignant after analyzing the US image. During the inquiry process, ThyGPT analyzed and explained the relationship between its diagnosis and nodule components to the radiologist. Consequently, the radiologist revised the final diagnosis after considering ThyGPT’s assessment. For Sample 2 in Fig. 4, ThyGPT evaluated the nodule as malignant, highlighting key malignant features present in the solid areas. The radiologist accepted ThyGPT’s assessment and revised the diagnosis following their interactive session.

a Radiologist’s initial diagnosis: ACR TR category 4, likely to be benign. ThyGPT’s assessment: ACR TR category 4, malignant nodule, with a malignancy score of 0.83. The radiologist consulted ThyGPT and updated the diagnosis: ACR TR category 4, likely to be malignant. Final pathological result: a malignant nodule. b Radiologist’s initial diagnosis: ACR TR category 3, likely to be benign. ThyGPT’s assessment: malignancy score 0.86. The radiologist consulted ThyGPT and updated the diagnosis: likely to be malignant. Final pathological result: a malignant nodule.

a Radiologist’s initial diagnosis: ACR TR category 4, likely to be malignant. ThyGPT’s assessment: ACR TR category 4, likely to be benign, with a malignancy score of 0.29. The radiologist consulted ThyGPT and insisted on their initial diagnosis. Final pathological result: a malignant nodule. b Radiologist’s initial diagnosis: ACR TR category 3, likely to be benign. ThyGPT’s first assessment: ACR TR category 4, likely to be malignant, with a malignancy score of 0.75. ThyGPT’s second assessment: likely to be benign, with a malignancy score of 0.21. The radiologist consulted ThyGPT and insisted on their initial diagnosis. Final pathological result: a benign nodule.

Figure 5 presents cases where clinical radiologists correctly diagnosed the nodules, whereas ThyGPT committed errors. Sample 1 in Fig. 5 was a malignant nodule, but ThyGPT assessed it as benign, with a score of 0.29. However, the radiologist found that the detection result was inaccurate, so they prompted ThyGPT to re-detect the nodule. ThyGPT accurately detected the nodule in the second round, but ThyGPT’s auxiliary diagnostic conclusion did not convince the radiologist. Finally, the radiologist maintained the diagnosis of the nodule as likely malignant, and the final pathological result supported the radiologist’s diagnosis. Conversely, Sample 2 in Fig. 5 was a benign nodule, which ThyGPT incorrectly assessed as malignant. The radiologist deemed the heatmap overly concentrated on hyperechoic areas (and thus lacking in reference value), questioned the model’s judgment and instructed the model to recalculate, ignoring the erroneous features in the hyperechoic areas. The model then provided a correct diagnosis following the radiologist’s directive.

Table 3 provide a detailed analysis of missed malignant nodules by ThyGPT, radiologists, and the model-assisted radiologists. Notably, follicular thyroid cancer (FTC) was the most difficult to identify, with radiologists missing 44.7% of cases, compared to the 17.0% missed by ThyGPT. Small nodules (<10 mm), particularly TR3 nodules, spongiform/cystic compositions, and iso/hyperechoic patterns, showed higher miss rates across all methods. However, the integration of ThyGPT with radiologists consistently improved detection rates, reducing missed cases closer to, or even matching, ThyGPT’s standalone performance, suggesting that AI assistance could effectively complement human expertise.

Table 4 shows the overall distribution of diagnostic changes made by different radiologists after using ThyGPT. Based on this data, there is a clear pattern in how radiologists modified their diagnoses when assisted by ThyGPT. Junior radiologists showed a higher alteration rate (11.5%) compared to senior radiologists (9.9%), indicating that they were more likely to change their initial assessments. Importantly, when radiologists did alter their diagnoses, they were highly accurate, with only 0.2% overall wrong alterations compared to 10.5% correct alterations. This indicates that ThyGPT’s assistance led to predominantly beneficial changes in diagnostic decisions, with higher impact on junior radiologists, while maintaining a very low error rate across both experience levels.

We investigated the characteristic distribution of both correct and incorrect diagnostic alterations (see supplementary Table 6), which revealed that TR4 nodules were more prone to diagnostic modifications, whereas other characteristics showed a relatively uniform distribution. In comparison, we found that ThyGPT’s predicted nodule malignancy risk values were highly correlated with radiologists’ incorrect diagnostic alterations. As shown in Fig. 6, 92.7% (38/41) of incorrect alterations occurred between 0.4 and 0.6 (between red dashed lines). This suggests that when ThyGPT predicts nodule malignancy risk probabilities within this range, its own assessment of malignancy risk is in a marginal zone and users should exercise additional caution when considering ThyGPT’s nodule analysis.

a Radiologist’s initial diagnosis: ACR TR category 4, likely to be malignant. ThyGPT’s assessment: ACR TR category 4, likely to be benign, with a malignancy score of 0.43. The radiologist consulted ThyGPT and incorrectly altered the diagnosis as: likely to be benign. Final pathological result: a malignant nodule. b Distribution of predicted malignancy risk values for nodules with incorrectly altered diagnoses by radiologists. c Distribution of predicted malignancy risk values for nodules with correctly altered diagnoses by radiologists.

Detection of errors in diagnostic reports

Test Set 2 was primarily used to evaluate ThyGPT’s ability to detect errors in US reports. This data set included a total of 1263 US reports, 157 of which contained errors. These 157 errors were classified into five categories: 35 omissions, 30 insertions, 33 side confusions, 36 inconsistencies, and 23 other errors. These five categories are the common types of errors found in radiological reports. A more detailed definition of these error categories can be found in Supplementary Table 7. Three senior radiologists and three junior radiologists, as well as ThyGPT, were responsible for detecting these errors. Initially, radiologists reviewed the reports separately to identify any errors. Thereafter, ThyGPT searched for errors in the reports independently. Finally, each radiologist combined their own findings with those from ThyGPT to produce the final result.

Figure 7 shows some samples of erroneous reports, radiologists’ independent judgments, and the outcomes obtained with the assistance of ThyGPT. The error detection rate of ThyGPT was 0.905 (142/157; 95% CI: 0.899–0.910), which exceeded that of all radiologists. Figure 8a presents the error detection rate of radiologists and ThyGPT. With the assistance of ThyGPT, radiologists’ average error detection rate increased from 0.764 (120/157; 95% CI: 0.751–0.779) to 0.962 (151/157; 95% CI: 0.959–0.966), and the p value was less than 0.001. Even junior radiologists exhibited error detection rates approaching those of senior radiologists. Figure 8b illustrates the average error detection rates of radiologists for each error type, as well as their error detection and correction rates aided by ThyGPT. Notably, ThyGPT achieved a 100% error detection and correction rate for side confusion errors.

The first column shows samples of erroneous reports (errors are marked in red). The second column shows the error description and ThyGPT’s automatic detections and corrections. a The report exemplifies a side confusion error. The ThyGPT system successfully detected that the anatomical marker indicated the left thyroid lobe; however, the report text erroneously described findings pertaining to the right thyroid lobe. The feature heatmap generated by ThyGPT highlighted the model’s areas of focus. b The report exemplifies an omission error. The report omitted information regarding the nodule size. ThyGPT’s output provided the correct prompting and the corresponding feature heatmap. c The report exemplifies an inconsistency error. The US images revealed the presence of a halo and internal microcalcifications within the nodule, which contradicted the description in the report. ThyGPT accurately detected this inconsistency and visualized the errors using two heatmaps. For all errors, ThyGPT provided corrections. The third example demonstrates an incomplete correction. After introducing new features, the corresponding ACR TR classification needs to be simultaneously corrected (marked in blue). However, ThyGPT currently lacks the capability to successfully modify such potential cascading errors.

a Detection results of radiologists, ThyGPT, and radiologists aided by ThyGPT in percentages. b Error detection rates of radiologists, ThyGPT, and radiologists aided by ThyGPT for each error type; it also demonstrates the proportion of auto-corrected errors by ThyGPT. The correction results were reviewed by two senior radiologists to confirm whether the corrections were successful.

The average time for ThyGPT to process the reports was 0.031 s, which was shorter than that for radiologists (49.9 s). This processing rate satisfied the requirement for real-time error detection upon report completion. Considering ThyGPT’s average error detection rate of 0.905, employing the model to immediately scan US reports upon their completion could reduce over 90% of report errors in the first instance. More detailed error detection results of the radiologists can be found in Supplementary Fig. 2

Discussion

In this study, we developed ThyGPT, an AIGC-CAD model that integrates generative LLMs with computer vision techniques for thyroid nodule diagnosis and management. Our findings demonstrate that the proposed ThyGPT model can function as an AI copilot model that intelligently interacts with radiologists to enhance their diagnostic performance, reduce unnecessary invasive procedures, assist in the supervision and detection of errors in US examination reports, and ultimately improve the overall quality of care for patients with thyroid nodules.

The key advantage of ThyGPT is its ability to engage in natural language interaction, enabling radiologists to query the model’s rationale and obtain detailed explanations for its diagnoses27,29. This transparency addresses a major limitation of existing CAD models, which often function as “black boxes” and “mute boxes”15,16,17,18,19,20. By allowing radiologists to understand the model’s reasoning, ThyGPT fosters trust and confidence in its diagnoses, potentially increasing the adoption of AI-assisted diagnosis in clinical practice. The results from our independent test sets highlight ThyGPT’s efficacy in improving diagnostic accuracy and reducing unnecessary invasive procedures. Compared to many previous methods16,18, radiologists achieved significantly higher sensitivity and specificity in diagnosing thyroid nodules when they were assisted by ThyGPT. This improvement was observed for both junior and senior radiologists, suggesting that ThyGPT could serve as a valuable tool for radiologists at various levels of experience. The results suggest that junior radiologists may be more receptive to ThyGPT’s recommendations, potentially due to a lack of confidence and experience.

Another key function of ThyGPT lies in its ability to detect errors in ultrasound reports. Currently, some regions employ double-reading systems where senior radiologists review reports to ensure accuracy. However, the reality of radiologists’ heavy workload combined with high-pressure clinical environments makes reporting errors inevitable31,32. Moreover, in underdeveloped regions, the shortage of radiologists impedes the implementation of double-reading systems, resulting in higher error rates in ultrasound reports. These errors may result in patients undergoing redundant examinations or receiving unnecessary treatments. Addressing this challenge requires establishing deep semantic associations between US report textual descriptions and US images. However, early deep learning approaches proved inadequate for this task. In contrast, ThyGPT has demonstrated exceptional cross-modal comprehension capabilities in this study. Through extensive training, ThyGPT can precisely match and align textual descriptions with the semantic features of US images. This cross-modal understanding lays the foundation for further standardization of US reporting and the detection of potential reporting discrepancies. According to our experimental results, ThyGPT can detect over 90% of errors in US reports, operating at a speed 1610 times faster than human review.

To validate whether the model exhibits language-dependent variations in report comprehension and error detection tasks, we employed a multilingual cross-validation (Supplementary Fig. 3). We found that the model showed no significant multilingual variations (p = 0.816). This finding suggests that ThyGPT can serve as a language-agnostic auxiliary tool, supporting medical institutions across different linguistic backgrounds. Furthermore, this multilingual compatibility enables potential applications in international medical collaboration.

Since the inception of deep learning, AI models have continuously improved the diagnostic accuracy for various diseases, including thyroid nodules, with their accuracy even surpassing that of professional medical personnel. In our study, the diagnostic accuracy of ThyGPT was also higher than that of radiologists. However, this finding does not imply that ThyGPT can perform diagnoses independently. On one hand, even the most advanced LLMs can still produce elementary errors such as AI hallucinations. On the other hand, external factors in the medical workflow, such as noise interference, data errors, and operational mistakes, are far beyond the scope of ThyGPT’s understanding. Therefore, ThyGPT still requires supervision from radiologists for their use. One effective approach to implementing ThyGPT is to position it as a copilot tool for radiologists. During diagnosis, ThyGPT can act as an assistive diagnostic tool, actively aiding radiologists in nodule detection and analysis. For challenging cases, radiologists can engage in in-depth interactions with ThyGPT before providing their final judgment. Additionally, during the composition of US reports, it can serve as an intelligent real-time report error-correction tool.

The study has some limitations. ThyGPT demonstrates variable performance across thyroid nodule subtypes, with FTC proving particularly challenging to identify accurately compared to other malignancies. Additionally, the PPV of ThyGPT fluctuates based on the score threshold employed, necessitating careful consideration during clinical application. Another limitation stems from the diversity of ultrasound devices used for data collection, creating image disparities across different brands and even between models from the same manufacturer. Although we implemented essential data augmentation techniques to strengthen the model’s robustness against these variations, their influence on performance remains a notable concern.

In conclusion, as an AIGC-CAD model, ThyGPT offers a paradigm shift in the field of CAD for medical imaging. By integrating generative LLMs with computer vision techniques, ThyGPT can assist radiologists in diagnosing thyroid nodules through an interactive and comprehensible manner, making complex AI analyses accessible and actionable for clinical decision-making. Moreover, ThyGPT also can function as an efficient error-correction assistant for radiologists, rapidly detecting and highlighting mistakes in ultrasound reports. This multimodal, transparent, and interactive approach has the potential to largely enhance diagnostic accuracy and increase the adoption of AI-assisted radiology in clinical practice.

Methods

Data curation

This retrospective, multicenter, diagnostic investigation utilized US data on thyroid nodules from nine hospitals across China. The study was approved by the ethics committees of all participating hospitals, including: Ethics Committee of Zhejiang Cancer Hospital (IRB-2020-287); Ethics Committee of Shenzhen People’s Hospital (IRB-KY-LL-2021026-01); Ethics Committee of Zhejiang Provincial Hospital of Chinese Medicine (IRB-2023-QS-010-02); Ethics Committee of Zhejiang Provincial People’s Hospital (IRB-KT20220140); Ethics Committee of Taizhou Cancer Hospital (IRB-2023001); Ethics Committee of Sun Yat-sen University Cancer Center (IRB-SL-B2021-021-02); Ethics Committee of Shanghai Tenth People’s Hospital (IRB-SHSY-IEC-4.0-18-6801); Ethics Committee of Shaoxing People’s Hospital (IRB-2022-083-Y-01); Ethics Committee of the Affiliated Dongyang Hospital of Wenzhou Medical University (IRB-2023-YX-355). Informed consent was waived due to the retrospective nature of the study. Research was performed in accordance with the Declaration of Helsinki. All data were anonymized, and the model was trained and tested solely within the hospitals’ AI infrastructure.

Cohort definition

Three cohorts were established: one main cohort and two external test cohorts (as illustrated in Fig. 1). Due to the differing testing tasks, the inclusion and exclusion criteria for the main cohort and External Test Cohort 1 differed slightly from those for External Test Cohort 2. The detailed inclusion criteria for the main cohort and External Test Cohort 1 were as follows: (1) patients with thyroid nodules aged ≥18 years; (2) patients who had undergone US examination and had their US images recorded; (3) patients with verified surgical pathological outcomes; and (4) patients who had complete US examination reports available (optional). The exclusion criteria for the main cohort and External Test Cohort 1 were as follows: (1) patients who had received other types of treatment prior to surgery, such as radioiodine (131I) treatment; (2) patients who had incomplete or unqualified US images of thyroid nodules (e.g., nodules too large to be captured in a single image or nodules with missing key images); and (3) patients with incomplete clinical information, such as missing basic patient information or treatment history. The detailed inclusion criteria for External Test Cohort 2 were as follows: (1) patients with thyroid nodules aged ≥18 years; (2) patients who had undergone US examination and had their US images recorded; and (3) patients who had complete US examination reports and whose reports showed errors during QC. The exclusion criteria for External Test Cohort 2 were as follows: (1) patients whose reports were free of textual errors, such as QC issues limited to discrepancies between the diagnostic conclusions and pathological findings, and (2) patients with incomplete or unqualified US images of thyroid nodules (e.g., damaged or missing key images).

Procedures

For image acquisition, patients were placed in the supine position with their neck extended and temporarily refrained from swallowing to fully expose the neck. The examining radiologist scanned the thyroid and stored at least one transverse, longitudinal, or characteristic sectional US image. All radiologists collecting US images were professionally trained, and all data were quality-controlled by at least one senior US radiologist with over 10 years of experience.

In the main cohort and External Test Cohort 1, all thyroid nodules were annotated as either benign or malignant based on the surgical pathological results. External Test Cohort 2 included erroneous reports identified during QC to test the model’s ability to detect errors in US reports. All US images were anonymized, removing any information that could identify patients and thereby protecting patient privacy.

Five senior radiologists independently reviewed the US reports in Test Set 2. These five senior radiologists were then divided into two groups, with the first group consisting of two radiologists responsible for integrating the review results from all five radiologists and confirming the errors and issues found in the reports to form the gold standard test set. The second group of three radiologists participated in the subsequent computer-aided diagnosis and error detection experiments. Additionally, the first group evaluated whether ThyGPT correctly modified the reports.

The ThyGPT model, incorporating techniques such as self-attention mechanisms and transformer architectures, was trained on the pre-labeled US images, diagnostic guidelines, anonymized reports, and nodule pathological data from the training set. Initially, the pre-labeled nodules information, anonymised diagnostic reports, thyroid-related diagnostic guidelines and manually delineated nodule masks or bounding boxes were put into the model for training. Once the training procedure was completed, the relevant parameters of the ThyGPT model were fixed. Then, data from the independent test sets were input into the ThyGPT model for nodule analysis and auxiliary diagnosis. The model will interact with radiologists and provide decision support. The basic framework used in this paper is the LLaMA3 model27.

Ultrasound image preprocessing

Experienced radiologists performed standardized mask annotation, manually delineating the nodule boundaries and regions of interest using a polygon-based annotation tool, Labelme. These masks were used to depict different semantic areas, including the nodule shape, internal echoes, and calcification features. To ensure annotation quality, all masks were verified by two senior radiologists with over 10 years of experience in thyroid ultrasound. Image normalization was performed through a multi-step process. First, all ultrasound images were resized to a standard dimension of 224 × 224 pixels while carefully maintaining the original aspect ratio to prevent distortion of important anatomical features. This standardization was achieved using bicubic interpolation for downsampling and bilinear interpolation for upsampling. The pixel intensity values were then normalized to a range of [0,1] and standardized with a mean of 0 and standard deviation of 1 to reduce the impact of varying ultrasound machine settings and imaging conditions.

To enhance model robustness and prevent overfitting, we implemented comprehensive data augmentation strategies. These included geometric transformations such as rotation (±10°), random cropping (maintaining at least 85% of the original nodule area), and random scaling (80–120% of original size). Additionally, we applied intensity augmentations, including random brightness adjustment (±15%), contrast variation (±10%), and Gaussian noise addition (σ=0.01), to simulate real-world imaging variations.

Statistical analysis

The performance of the model was assessed based on the ROC, AUC, TPR, TNR, PPV, NPV, accuracy, and F1-Score. In addition, we designed a comprehensive performance assessment approach to evaluate the ThyGPT model’s effectiveness, which involved evaluating the performance of radiologists in reading the US images alone, the independent recognition capabilities of the model, and the secondary judgments made by radiologists interacting with the model. The DeLong method was employed to calculate the 95% CIs of the metrics33. All calculations were conducted using the Python programming language.

Data availability

Individual participant data can be made available upon request, directed to corresponding author (D.X.). Once approved by the Institutional Review Board/Ethics Committee of all participating hospitals, deidentified data can be made available through a secured online file transfer system for research purpose only.

Code availability

All the codes used in this manuscript are available at our GitHub repository (github.com/seista131/ThyGPT).

References

Siegel, R. L. et al. Cancer statistics, 2024. CA Cancer J. Clin. 74, 12–49 (2024).

Chen, D. W. et al. Thyroid cancer. Lancet 401, 1531–1544 (2023).

Bray, F. et al. Global cancer statistics 2022: GLOBOCAN estimates of incidence and mortality worldwide for 36 cancers in 185 countries. CA Cancer J. Clin. 74, 229–263 (2024).

Boucai, L. et al. Thyroid cancer: A review. JAMA 5, 425–435 (2024).

Fagin, J. A. et al. Progress in thyroid cancer genomics: A 40-year journey. Thyroid 33, 1271–1286 (2023).

Rossi, E. D. et al. The impact of the 2022 WHO classification of thyroid neoplasms on everyday practice of cytopathology. Endocr. Pathol. 34, 23–33 (2023).

Bhattacharya, S. et al. Advances and challenges in thyroid cancer: The interplay of genetic modulators, targeted therapies, and AI-driven approaches. Life Sci. 332, 122110 (2023).

Pitoia, F. et al. New insights in thyroid diagnosis and treatment. Rev. Endocr. Metab. Dis. 25, 1–3 (2024).

Sun, P. et al. Ultrasound-based nomogram for predicting the aggressiveness of papillary thyroid carcinoma in adolescents and young adults. Acad. Radiol. 31, 523–535 (2024).

Bojunga, J. et al. Thyroid ultrasound and its ancillary techniques. Rev. Endocr. Metab. Dis. 25, 161–173 (2024).

Wang, J. et al. Deep learning models for thyroid nodules diagnosis of fine-needle aspiration biopsy: a retrospective, prospective, multicentre study in China. Lancet Digit. Health 6, 458–469 (2024).

Lee, Y. et al. Improved diagnostic accuracy of thyroid fine-needle aspiration cytology with artificial intelligence technology. Thyroid 34, 723–734 (2024).

Liu, Z. et al. Swin transformer: Hierarchical vision transformer using shifted windows. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR) 1, 9992–10012 (2021).

Zhou, L. et al. Deep learning predicts cervical lymph node metastasis in clinically node-negative papillary thyroid carcinoma. Insights Imaging 14, 222 (2023).

Wang, J. et al. A novel approach to quantify calcifications of thyroid nodules in US images based on deep learning: predicting the risk of cervical lymph node metastasis in papillary thyroid cancer patients. Eur. Radiol. 33, 9347–9356 (2023).

Xu, D. et al. The clinical value of artificial intelligence in assisting junior radiologists in thyroid ultrasound: A multicenter prospective study from real clinical practice. BMC Med 22, 293 (2024).

Peng, S. et al. Deep learning-based artificial intelligence model to assist thyroid nodule diagnosis and management: A multicentre diagnostic study. Lancet Digit. Health 3, 250–259 (2021).

Mei, X. et al. RadImageNet: An open radiologic deep learning research dataset for effective transfer learning. Radiol. Artif. Intell. 4, 210315 (2022).

Yao, J. et al. DeepThy-Net: A multimodal deep learning method for predicting cervical lymph node metastasis in papillary thyroid cancer. Adv. Intell. Syst. 4, 2200100 (2022).

He, K. et al. Deep residual learning for image recognition. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR) 2, 770–778 (2016).

Huang, G. et al. Densely connected convolutional networks. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR) 1, 2261–2269 (2017).

Szegedy, C. et al. Rethinking the inception architecture for computer vision. Proc. IEEE Conf. Comput. Vis. Pattern Recognit. (CVPR) 1, 2818–2826 (2016).

Wang, Z. et al. Localization and risk stratification of thyroid nodules in ultrasound images through deep learning. Ultrasound Med. Biol. 50, 882–887 (2024).

Chen, C. et al. Deep learning to assist composition classification and thyroid solid nodule diagnosis: a multicenter diagnostic study. Eur. Radiol. 34, 2323–2333 (2024).

Liu, Y. et al. The auxiliary diagnosis of thyroid echogenic foci based on a deep learning segmentation model: A two-center study. Eur. J. Radiol. 167, 111033 (2023).

Yao, J. et al. AI diagnosis of Bethesda category IV thyroid nodules. iScience 26, 108114 (2023).

Wu, S. et al. Collaborative enhancement of consistency and accuracy in US diagnosis of thyroid nodules using large language models. Radiology 3, 232255 (2024).

Campbell, D. J. et al. Evaluating ChatGPT responses on thyroid nodules for patient education. Thyroid 34, 371–377 (2024).

Korngiebel, D. M. et al. Considering the possibilities and pitfalls of Generative Pre-trained Transformer 3 (GPT-3) in healthcare delivery. NPJ Digit. Med. 4, 93 (2021).

Jiang, H. et al. Transforming free-text radiology reports into structured reports using ChatGPT: A study on thyroid ultrasonography. Eur. J. Radiol. 175, 111458 (2024).

Stephenson, J. US surgeon general sounds alarm on health worker burnout. JAMA-Health Forum 3, e222299 (2022).

Jeganathan, S. The growing problem of radiologist shortages: Australia and New Zealand’s perspective. Korean J. Radiol. 24, 1043–1045 (2023).

Zou, L. et al. Extending the DeLong algorithm for comparing areas under correlated receiver operating characteristic curves with missing data. Stat. Med. 43, 4148–4162 (2024).

Acknowledgements

This research was supported by the National Key Research and Development Program of China (No. 2022YFF0608403), the National Natural Science Foundation of China (No. 82471990, 82071946),"Pioneer" and "Leading Goose" R&D Program of Zhejiang (No. 2023C04039), Research Program of Zhejiang Provincial Department of Health (No. 2022KY110, 2023XY066, 2025KY717, 2025KY721 and 2023KY066) and Research Program of Zhejiang Cancer Hospital (No. PY2023018). The authors would like to thank all the groups of radiologists from the participating hospitals. We are grateful to Ms. Li Liu for her assistance in organizing the ultrasound data and reports.

Author information

Authors and Affiliations

Contributions

J.C.Y., Y.P.W., Z.K.L. and K.W. contributed equally to this work. J.C.Y., Y.P.W., Z.K.L., K.W., Y.M.Z., P.L. and D.X. contributed to the conceptualization and design of this study. Y.P.W., B.J.F. and Y.H.Z. constructed machine learning and deep learning models. J.C.Y., Z.K.L., K.W., B.J.F., Y.H.Z., C.C., Y.Q.Y., Z.P.W., R.R.R., N.F. and Y.Q.C. handled and mainly analyzed the research data. All authors interpreted the results. J.C.Y., K.W., and L.P.W. wrote the original draft of the paper. J.C.Y., Z.K.L., F.J.D., J.H.Z., X.X.L., X.H., V.W., D.O., W.L., N.F. and Y.D.L. revised the paper. Y.P.W., Z.K.L., K.W., S.S.Z., V.W., N.F., C.Y., Y.M.Z., P.L. and D.X. reviewed clinical evidence of this study. F.J.D., X.X.L., S.S.Z., Y.G., D.O., Y.D.L., L.Y.C. and Y.M.Z. provided the multicenter data. Y.P.W., Y.G., W.L., L.Y.C., C.Y., L.P.W., C.C., Y.Q.Y., Z.P.W., R.R.R. and Y.Q.C. verified the quality of the data. J.H.Z., X.H., Y.M.Z., P.L., and D.X. contributed to the supervision. P.L. and D.X. contributed to the project administration. F.J.D., J.H.Z., X.H. and D.X. contributed to the investigation. J.C.Y., Y.P.W., Z.K.L., K.W., Y.M.Z., P.L. and D.X. had full access to all raw data. All authors critically read and reviewed the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Yao, J., Wang, Y., Lei, Z. et al. Multimodal GPT model for assisting thyroid nodule diagnosis and management. npj Digit. Med. 8, 245 (2025). https://doi.org/10.1038/s41746-025-01652-9

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41746-025-01652-9