Abstract

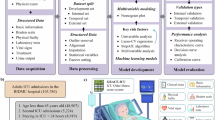

The ELDER-ICU model, a machine learning tool for predicting in-hospital mortality in critically ill older adults (≥65 years), was externally validated across 12 international centers in the US, Austria, South Korea, and China, and three model updating strategies were assessed: recalibration, incremental training, and retraining. The model maintained robust performance in the US and Austrian cohorts (AUROC 0.804–0.864), but AUROC dropped significantly at the Asian sites (South Korea: 0.753; China: 0.698). Incremental training improved performance at most centers, while retraining significantly improved AUROC by 0.066 and 0.076 at the South Korean and Chinese sites, respectively. Isotonic regression and Platt scaling improved calibration across centers. This study shows that the robustness of the ELDER-ICU model varies, and that the effectiveness of model updating strategies differs, across temporal shifts, populations, and clinical practice environments. Rigorous validation and proactive model adaptation are essential before clinical deployment in settings with heterogeneous populations and clinical practices.
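
The two recalibration methods named above can be illustrated with a short, self-contained sketch of how each might be fitted to a frozen model's predicted mortality risks at a new center. It is written in plain Python and is not taken from the ELDER-ICU code repository; the function names (`fit_platt`, `fit_isotonic`, `auroc`, `brier`, `log_loss`, `simulate`) and the simulated, deliberately miscalibrated cohort are hypothetical. Platt scaling is implemented here as logistic recalibration on the logit of the score (Platt's original target smoothing is omitted), and isotonic regression uses the pool-adjacent-violators algorithm.

```python
import bisect
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def logit(p, eps=1e-12):
    """Log-odds of a probability, clipped away from 0 and 1."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))

def fit_platt(scores, labels, iters=50):
    """Logistic recalibration: fit p = sigmoid(a * logit(s) + b) by Newton-Raphson
    on the log-loss, starting from the identity mapping (a=1, b=0)."""
    xs = [logit(s) for s in scores]
    a, b = 1.0, 0.0
    for _ in range(iters):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for x, y in zip(xs, labels):
            p = sigmoid(a * x + b)
            r, w = p - y, p * (1.0 - p)  # residual and Bernoulli variance
            g_a += r * x
            g_b += r
            h_aa += w * x * x
            h_ab += w * x
            h_bb += w
        det = h_aa * h_bb - h_ab * h_ab
        if det <= 1e-12:
            break
        # Newton step: (a, b) -= H^-1 g, with H the 2x2 Hessian
        da = (h_bb * g_a - h_ab * g_b) / det
        db = (h_aa * g_b - h_ab * g_a) / det
        a, b = a - da, b - db
        if abs(da) + abs(db) < 1e-10:
            break
    return lambda s: sigmoid(a * logit(s) + b)

def fit_isotonic(scores, labels):
    """Pool-adjacent-violators: the non-decreasing step function of the score
    that minimises squared error to the 0/1 labels."""
    grouped = {}  # tied scores must share one fitted value
    for s, y in zip(scores, labels):
        tot, cnt = grouped.get(s, (0.0, 0))
        grouped[s] = (tot + y, cnt + 1)
    blocks = []  # each block: [label sum, count, lowest score in the block]
    for s in sorted(grouped):
        tot, cnt = grouped[s]
        blocks.append([tot, cnt, s])
        # Merge backwards while a block's mean is not below its successor's
        while len(blocks) > 1 and (blocks[-2][0] / blocks[-2][1]
                                   >= blocks[-1][0] / blocks[-1][1]):
            tot2, cnt2, _ = blocks.pop()
            blocks[-1][0] += tot2
            blocks[-1][1] += cnt2
    starts = [blk[2] for blk in blocks]
    values = [blk[0] / blk[1] for blk in blocks]

    def predict(s):
        # Value of the last block starting at or below s (first block if none)
        i = bisect.bisect_right(starts, s) - 1
        return values[max(i, 0)]
    return predict

def auroc(labels, probs):
    """P(random positive scores above random negative), ties counted as 1/2."""
    pos = [p for p, y in zip(probs, labels) if y == 1]
    neg = [p for p, y in zip(probs, labels) if y == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def brier(labels, probs):
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)

def log_loss(labels, probs, eps=1e-12):
    return -sum(y * math.log(max(p, eps)) + (1 - y) * math.log(max(1.0 - p, eps))
                for p, y in zip(probs, labels)) / len(labels)

def simulate(n=1000, seed=0):
    """Hypothetical cohort whose frozen model is overconfident and shifted."""
    rng = random.Random(seed)
    scores, labels = [], []
    for _ in range(n):
        z = rng.gauss(-1.5, 1.0)  # true log-odds of in-hospital death
        labels.append(1 if rng.random() < sigmoid(z) else 0)
        scores.append(sigmoid(2.0 * z + 1.0))  # miscalibrated model output
    return scores, labels

if __name__ == "__main__":
    scores, labels = simulate()
    platt, iso = fit_platt(scores, labels), fit_isotonic(scores, labels)
    for name, probs in [("original", scores),
                        ("Platt", [platt(s) for s in scores]),
                        ("isotonic", [iso(s) for s in scores])]:
        print(f"{name:9s} AUROC={auroc(labels, probs):.3f} "
              f"Brier={brier(labels, probs):.4f} log-loss={log_loss(labels, probs):.4f}")
```

When the fitted slope is positive, Platt scaling is a strictly increasing transformation, so it changes calibration but leaves AUROC unchanged; isotonic regression can pool neighbouring scores into ties and may therefore shift AUROC slightly. This is consistent with recalibration targeting calibration while retraining is needed to change discrimination. For brevity the sketch computes metrics on the same data used for fitting; a real evaluation would report them on held-out patients.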

Data availability

The MIMIC and INSPIRE datasets are publicly available under credentialed access on PhysioNet (https://physionet.org/content/mimiciv/, https://physionet.org/content/inspire/1.3/). The SICdb dataset is available for research use upon submission of a data access request via PhysioNet (https://physionet.org/content/sicdb/1.0.8/). The Chinese critical care database is available from the National Genomics Data Center under accession number PRJCA006118. The eICU-CRD-II dataset is not yet publicly available; please contact the corresponding author for access.

Code availability

Code is available at https://github.com/dmj163/ELDER-ICU-Ext-Val.

Acknowledgements

The study was supported by the Beijing Natural Science Foundation (7252298), National Natural Science Foundation of China (82502525 and 62571550), Beijing Municipal Science and Technology Project (Z241100007724003), Project of Drug Clinical Evaluate Research of Chinese Pharmaceutical Association (NO.CPA-Z06-ZC-2021-004), a collaborative scientific project co-established by the Science and Technology Department of the National Administration of Traditional Chinese Medicine and the Zhejiang Provincial Administration of Traditional Chinese Medicine (GZY-ZJ-KJ-24082), Project of Zhejiang University Longquan Innovation Center (ZJDXLQCXZCJBGS2024016), National Institutes of Health (R01 EB017205), DS-I Africa U54 TW012043-01 and Bridge2AI OT2OD032701, and National Science Foundation (ITEST #2148451).

Author information

Contributions

M.D., X.L., W.Y., and S.H. collected data, validated and updated the models, and drafted the paper. J.R. and T.L. edited the paper and checked the results. P.H., C.L., L.C., Z.L., and Z.G. provided expertise on the validation study design. D.C., F.Z., Zho. Z., Zhe. Z., and L.A.C. designed the study and critically reviewed the core content of the paper. All authors contributed to the methodology, results analysis, and discussions of the modeling process. All authors had full access to the datasets used in this study and confirmed the fidelity of the results. All authors had final responsibility for the decision to submit for publication.

Ethics declarations

Competing interests

Zhongheng Zhang is an Editorial Board Member of NPJ Digital Medicine; he was not involved in the peer-review process or in any editorial decisions related to this paper. The remaining authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Duan, M., Liu, X., Yeung, W. et al. Multicenter validation and updating of the ELDER-ICU model for severity assessment in elderly critical illness. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02472-1

DOI: https://doi.org/10.1038/s41746-026-02472-1