Abstract

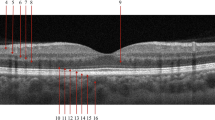

Optical Coherence Tomography (OCT) is essential in ophthalmology for cross-sectional imaging of the retina. Pretrained foundation models facilitate task-specific model development by enabling fine-tuning with limited labeled data. However, current foundation models rely on a single B-scan (usually the central slice), overlooking volumetric context. This research investigates video foundation models to capture full 3D retinal structure and improve diagnostic performance. V-JEPA, a state-of-the-art video foundation model, was benchmarked against retinal foundation models (RETFound, VisionFM) and a natural image foundation model (DINOv2). All were fine-tuned to detect Age-related Macular Degeneration or Glaucomatous Optic Neuropathy using five OCT datasets. V-JEPA consistently equaled or outperformed image-based models, achieving an average AUROC of 0.94 (0.80–0.99), versus 0.90 (0.76–0.98) for the best image model, a statistically significant improvement (p < 0.001). To our knowledge, this is the first application of transformer-based video models to volumetric OCT, highlighting their promise in 3D medical imaging.
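The contrast between single-slice and volumetric input described above can be illustrated with a minimal sketch. It is not the authors' preprocessing pipeline: the array layout `(num_bscans, height, width)`, the function names `central_bscan` and `volume_to_clip`, and the choice of 16 frames are assumptions made only for illustration, and the volume is synthetic.

```python
import numpy as np


def central_bscan(volume: np.ndarray) -> np.ndarray:
    """Return the middle B-scan of a volume shaped (num_bscans, height, width).

    This mimics the single-slice input used by image-based models.
    """
    return volume[volume.shape[0] // 2]


def volume_to_clip(volume: np.ndarray, num_frames: int = 16) -> np.ndarray:
    """Uniformly sample `num_frames` B-scans across the volume.

    The result is a (num_frames, height, width) stack that a video model
    could treat as a clip, with the slice axis playing the role of time.
    If the volume has fewer B-scans than `num_frames`, indices repeat.
    """
    depth = volume.shape[0]
    indices = np.linspace(0, depth - 1, num_frames).round().astype(int)
    return volume[indices]


# Synthetic stand-in for an OCT volume (hypothetical size: 128 B-scans of 64x64).
rng = np.random.default_rng(0)
volume = rng.random((128, 64, 64), dtype=np.float32)

single = central_bscan(volume)
clip = volume_to_clip(volume, num_frames=16)
print(single.shape, clip.shape)  # (64, 64) (16, 64, 64)
```

The single-slice path discards every B-scan except one, whereas the clip keeps evenly spaced slices from the first to the last B-scan, which is the volumetric context the abstract refers to.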

Data availability

We used the following open-access datasets: CirrusOCT (https://zenodo.org/records/1481223), Gamma (gamma.grand-challenge.org), A2A OCT (people.duke.edu/~sf59/RPEDC_Ophth_2013_dataset.htm), and NEH-UT (data.mendeley.com/datasets/8kt969dhx6/2). The HYRD dataset may be made available by the authors for non-commercial academic use, subject to permission from the Hillel Yaffe Medical Center. Please direct such requests to the corresponding author.

Code availability

The source code used in this study is hosted in the GitHub repository https://github.com/aim-lab/oct-fm-slices-to-volumes, which will be made public upon publication. Instructions for running the code are provided in the README.md file.

Acknowledgements

We acknowledge the assistance of ChatGPT, an AI-based language model developed by OpenAI, in editing the manuscript. R.J. and J.B. acknowledge the support of the Zimin Foundation.

Author information

Contributions

Conceptualization: J.B. and R.J. Methodology: J.B., R.J., and T.M. Data curation: E.B. and M.M. Investigation: J.B. and R.J. Funding acquisition: J.B. Writing—original draft: J.B. and R.J. Writing—review & editing: J.B., R.J., E.B., M.M., and T.M.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Judkiewicz, R., Berkowitz, E., Meisel, M. et al. Shifting the retinal foundation models paradigm from slices to volumes for optical coherence tomography. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02496-7

DOI: https://doi.org/10.1038/s41746-026-02496-7