Abstract

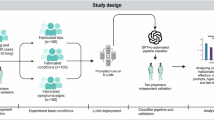

Large Language Models (LLMs) offer promising opportunities to support mental healthcare workflows, yet they often lack the structured clinical reasoning needed for reliable diagnosis and may struggle to provide the emotionally attuned communication essential for patient trust. Here, we introduce WiseMind, a multi-agent framework for psychiatric assessment inspired by the theory of Dialectical Behavior Therapy. By integrating a “Reasonable Mind” agent for evidence-based reasoning and an “Emotional Mind” agent for empathetic communication, WiseMind bridges the gap between instrumental accuracy and humanistic care. The framework uses a structured knowledge graph guided by the Diagnostic and Statistical Manual of Mental Disorders, Fifth Edition (DSM-5) to steer diagnostic inquiry, significantly reducing hallucinations compared with standard prompting methods. Using a combination of virtual standardized patients, simulated interactions, and real human interaction datasets, we evaluate WiseMind across three common psychiatric conditions. WiseMind outperforms state-of-the-art LLM methods both in identifying critical diagnostic nodes and in establishing accurate differential diagnoses. Across 1206 simulated conversations and 180 real user sessions, the system achieves 85.6% top-1 diagnostic accuracy, approaching the diagnostic performance reported for board-certified psychiatrists and surpassing knowledge-enhanced single-agent baselines by 15–54 percentage points. Expert review by psychiatrists further confirms that WiseMind generates responses that are not only clinically sound but also psychologically supportive, demonstrating the feasibility of empathetic, reliable AI agents that conduct psychiatric assessments under appropriate human oversight.
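The abstract describes a knowledge graph that steers diagnostic inquiry and reports top-1 diagnostic accuracy. Because the authors' code is not public, the following is only a toy sketch of those two ideas, not WiseMind's implementation. It assumes a hypothetical graph in which each candidate diagnosis links to symptom nodes and has a simple count threshold, chooses the next question with an assumed heuristic (ask about the unasked symptom shared by the most still-viable diagnoses), and computes top-1 accuracy as the share of sessions whose top-ranked diagnosis matches the reference label. All names (`DiagnosisNode`, `next_question`, `dx_A`, `s1`, and so on) are hypothetical placeholders, and the graph content is not drawn from DSM-5.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiagnosisNode:
    """Hypothetical candidate diagnosis linked to the symptom nodes supporting it."""
    name: str
    symptoms: frozenset
    min_present: int  # confirmed symptoms needed to meet this toy threshold


def confirmed_count(node, answers):
    # answers maps symptom -> True (endorsed) or False (denied); unasked symptoms are absent
    return sum(1 for s in node.symptoms if answers.get(s) is True)


def still_viable(node, answers):
    # A diagnosis stays in play if the symptoms not yet asked about
    # could still bring it up to its threshold.
    unasked = sum(1 for s in node.symptoms if s not in answers)
    return confirmed_count(node, answers) + unasked >= node.min_present


def next_question(graph, answers):
    """Assumed heuristic: return the unasked symptom shared by the most viable diagnoses."""
    counts = {}
    for node in graph:
        if not still_viable(node, answers):
            continue
        for s in node.symptoms:
            if s not in answers:
                counts[s] = counts.get(s, 0) + 1
    if not counts:
        return None  # nothing left to ask
    # Iterate in sorted order so ties resolve alphabetically (max keeps the first maximum).
    return max(sorted(counts), key=counts.get)


def rank_diagnoses(graph, answers):
    """Order diagnoses by the fraction of their threshold already confirmed."""
    return sorted(
        graph,
        key=lambda n: (-confirmed_count(n, answers) / n.min_present, n.name),
    )


def top1_accuracy(predictions, labels):
    """Share of sessions whose top-ranked diagnosis matches the reference label."""
    hits = sum(p == y for p, y in zip(predictions, labels))
    return hits / len(labels)


# Hypothetical placeholder graph (not DSM-5 content).
GRAPH = [
    DiagnosisNode("dx_A", frozenset({"s1", "s2", "s3"}), 2),
    DiagnosisNode("dx_B", frozenset({"s2", "s4", "s5"}), 2),
]

if __name__ == "__main__":
    print(next_question(GRAPH, {}))                          # -> s2 (shared by both)
    print(next_question(GRAPH, {"s2": False, "s1": False}))  # -> s4 (dx_A ruled out)
    print(rank_diagnoses(GRAPH, {"s2": True, "s4": True})[0].name)  # -> dx_B
    print(top1_accuracy(["dx_A", "dx_B", "dx_A"], ["dx_A", "dx_B", "dx_B"]))  # -> 0.6666666666666666
```

A clinically usable graph would also need to encode duration, exclusion, and differential-diagnosis rules, which this threshold-only toy model leaves out.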

Data availability

The datasets generated and/or analyzed during the current study are not publicly available due to requirements stated in the Research Ethics Board (REB) agreement, but are available from the corresponding author upon reasonable request. The simulated interview sessions are available at https://github.com/YWU99u/WiseMind-DDx-Psyc.

Code availability

The underlying code for this study is not publicly available for proprietary reasons. However, a secure test link that exposes the model behavior without revealing proprietary components can be provided by the corresponding author upon reasonable request for academic, non-commercial evaluation.

Acknowledgements

Y.W. gratefully acknowledges the generous financial support provided by the China Scholarship Council under grant ID 202308180002.

Author information

Contributions

Y.W. was the lead contributor, responsible for initiating the research ideation, leading the study design and experimental execution, handling all programming, drafting the initial manuscript, and editing the manuscript. G.W. participated substantially in the research ideation, contributed to the study design, experimental design, and programming, and assisted with manuscript preparation. J.L. conceived and led the research ideation and research design, proposed the system architecture and evaluation strategy, and provided substantial support for manuscript drafting and editing throughout the submission and revision process. Y.Z. contributed substantially to the study design, provided key medical insights and expertise, secured REB approval, and participated in manuscript editing. J.C. served as the Principal Investigator, coordinating the overall study, providing essential research funding, and contributing significantly to manuscript editing. S.Z., L.M., and T.Y. participated in study discussions and contributed to the evaluation process. M.Z. and I.P. participated in discussions of medical insights and contributed to the final evaluation of the system.

Ethics declarations

Competing interests

Authors Y.W., S.Z., Y.Z., and J.C. are employees of and hold equity in Shanghai KeyLinkAI Inc., which is developing commercial applications for the technology described in this manuscript. Additionally, authors Y.W., G.W., J.L., Y.Z., and J.C. are inventors on a pending patent application related to this technology. The remaining authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wu, Y., Wan, G., Li, J. et al. WiseMind: a knowledge-guided multi-agent framework for accurate and empathetic psychiatric diagnosis. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02559-9
