Abstract

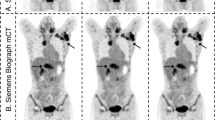

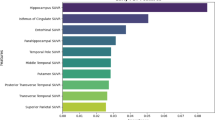

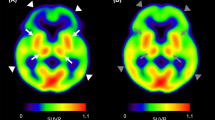

Quantitative PET underpins diagnosis and treatment monitoring in neurodegenerative disease, yet systematic biases between PET-MRI and PET-CT preclude threshold transfer and cross-site comparability. We developed and validated the first unified, anatomically guided deep-learning framework to harmonize PET-MRI quantification to PET-CT standards across multiple tracers and scanner manufacturers. The model learns CT-anchored attenuation representations using a vision transformer autoencoder, aligns MRI features to the CT space via contrastive objectives, and performs attention-guided residual correction. In paired same-day scans (N = 70; 18F-FDG, 18F-florbetaben, and 18F-florzolotau), cross-platform bias fell by >80% while preserving inter-regional biological topology. The framework generalized zero-shot to held-out tracers (18F-florbetapir and 18F-FP-CIT) without retraining. Multicenter validation (N = 420; three sites, four vendors) reduced amyloid Centiloid discrepancies from 23.6 to 4.1 (close to, though slightly above, PET-CT test–retest variability) and aligned tau SUVR thresholds. These results support more consistent cross-platform diagnostic cut-offs and reliable longitudinal monitoring when patients transition between modalities, establishing a practical route to scalable, radiation-sparing quantitative PET in therapeutic workflows.
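The abstract states that MRI features are aligned to the CT space "via contrastive objectives" but does not specify which objective. As an illustration only, the sketch below implements one common choice, an InfoNCE-style loss, in plain Python. The function names `l2_normalize` and `info_nce`, the temperature of 0.1, and the toy two-dimensional feature vectors are all assumptions made for this example and are not taken from the study or its code repository.

```python
import math

def l2_normalize(v):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def info_nce(mri_feats, ct_feats, temperature=0.1):
    """Mean InfoNCE loss; mri_feats[i] and ct_feats[i] form the positive pair.

    Hypothetical helper for illustration: every other CT vector in the
    batch acts as a negative for MRI vector i.
    """
    mri = [l2_normalize(v) for v in mri_feats]
    ct = [l2_normalize(v) for v in ct_feats]
    losses = []
    for i, m in enumerate(mri):
        # Cosine similarity of MRI vector i to every CT vector, scaled by temperature.
        logits = [sum(a * b for a, b in zip(m, c)) / temperature for c in ct]
        # Numerically stable log-sum-exp over the batch.
        max_logit = max(logits)
        log_denom = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
        # Negative log-probability assigned to the matching CT vector.
        losses.append(log_denom - logits[i])
    return sum(losses) / len(losses)

# Toy features (assumed): matched pairs point in the same direction.
aligned = info_nce([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
# Same features, but the CT vectors are swapped so the pairs are mismatched.
swapped = info_nce([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)
```

The loss is small when each MRI feature is closest to its paired CT feature and large when the pairing is violated, which is the property a contrastive alignment step relies on.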

Data availability

The main data supporting the results of this study are available within the article and its Supplementary Information. Individual-level patient data are protected for reasons of patient privacy and are not publicly available; they can be accessed with the consent of the institutions' data management committee. Requests for non-profit use of the images and related clinical information should be sent to C.T.Z. (zuochuantao@fudan.edu.cn). The data management committee will review all requests and grant permission where appropriate. All shared data will be de-identified.

Code availability

The code for this study is available at https://github.com/ZAC0713/Multi-Tracer-PETMR-Uptake-Correction.

Acknowledgements

The computational resources used in this study were provided by the AI4S Initiative and the HPC Platform of ShanghaiTech University. This study was supported by the National Natural Science Foundation of China (grant nos. 82394434, 82272039 and 82021002), STI2030-Major Projects (grant no. 2022ZD0211600) and the Shanghai Science and Technology Program Project (25TS1405000) to C.T.Z.; the National Natural Science Foundation of China (grant no. 82394432) to K.S.; the Shanghai Medical Innovation & Development Foundation (grant no. SMIDF-150-2025A18) to H.Z.; and the Basic Research Talent Development Program of Huashan Hospital, Fudan University (grant no. 2025JC077) to J.W.

Author information

Contributions

J.W., A.C.Z., C.T.Z., and Q.W. participated in conceptualization, methodology, resources, writing of the original draft, supervision and funding acquisition. J.W., A.C.Z., and H.L.H. participated in the conceptualization, methodology, and formal data analysis. J.W., A.C.Z., and H.L.H. participated in the writing of the original draft. J.W., Y.H.Z., H.W.Z., J.L., C.Y.L., Q.X., J.Y.L., M.N., and Y.H.G. collected and organized data. H.W.Z., J.H.J., M.W., K.C.S., M.T., and D.G.S. provided critical comments and reviewed the paper. All authors contributed to the research, editing and approval of the paper.

Ethics declarations

Competing interests

D.G.S. is a consultant and employee of Shanghai United Imaging Intelligence Co., Ltd. The company had no role in designing or performing the study, nor in analyzing or interpreting the data. The other authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, J., Zhong, A., Xu, Q. et al. A unified deep learning framework for cross-platform harmonization of multi-tracer PET quantification in neurodegenerative disease. npj Digit. Med. (2026). https://doi.org/10.1038/s41746-026-02570-0

DOI: https://doi.org/10.1038/s41746-026-02570-0