Abstract

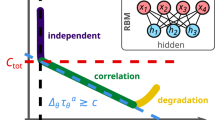

The restricted Boltzmann machine (RBM) is a stochastic neural network capable of solving a variety of difficult tasks including non-deterministic polynomial-time hard combinatorial optimization problems and integer factorization. The RBM is ideal for hardware acceleration as its architecture is compact (requiring few weights and biases) and its simple parallelizable sampling algorithm can find the ground states of difficult problems. However, training the RBM on these problems is challenging as the training algorithm tends to fail for large problem sizes and it can be hard to find efficient mappings. Here we show that multiple, small computational modules can be combined to create field-programmable gate-array-based RBMs capable of solving more complex problems than their individually trained parts. Our approach offers a combination of developments in training, model quantization and efficient hardware implementation for inference. With our implementation, we demonstrate hardware-accelerated factorization of 16-bit numbers with high accuracy and with a speed improvement of 10,000 times over a central processing unit implementation and 1,000 times over a graphics processing unit implementation, as well as a power improvement of 30 and 7 times, respectively.
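
As an illustration of the parallelizable sampling referred to above, the following is a minimal sketch of block Gibbs sampling on a toy RBM. It is not the authors' FPGA implementation. The function names (`sample_layer`, `gibbs_sample`), the weights and the biases are hypothetical values chosen so that the lowest-energy visible state is (1, 1). Because the units within a layer are conditionally independent given the other layer, each call to `sample_layer` could in principle be evaluated for all units at once in hardware.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sample_layer(inputs, weights, biases, rng):
    """Sample all units of one layer given the other layer.

    weights[i][j] couples input unit i to output unit j. The output units
    are conditionally independent, so this loop is parallelizable.
    """
    out = []
    for j, bias in enumerate(biases):
        activation = bias + sum(s * weights[i][j] for i, s in enumerate(inputs))
        out.append(1 if rng.random() < sigmoid(activation) else 0)
    return out


def gibbs_sample(W, a, b, steps, seed=0):
    """Run block Gibbs sampling and count how often each visible state occurs.

    W is the visible-by-hidden weight matrix; a and b are the visible and
    hidden biases (all illustrative, not taken from the paper).
    """
    rng = random.Random(seed)
    n_v, n_h = len(a), len(b)
    W_T = [[W[i][j] for i in range(n_v)] for j in range(n_h)]
    v = [rng.randint(0, 1) for _ in range(n_v)]
    counts = {}
    for _ in range(steps):
        h = sample_layer(v, W, b, rng)    # hidden units given visible units
        v = sample_layer(h, W_T, a, rng)  # visible units given hidden units
        counts[tuple(v)] = counts.get(tuple(v), 0) + 1
    return counts


# Toy model: two visible units coupled to one hidden unit.
counts = gibbs_sample(W=[[6.0], [6.0]], a=[-3.0, -3.0], b=[-3.0], steps=5000, seed=1)
most_visited = max(counts, key=counts.get)
print(most_visited, counts[most_visited])
```

In this sketch the most frequently visited visible state serves as the estimate of the ground state, since low-energy states carry the highest probability under the Boltzmann distribution.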

Data availability

The data to reproduce Figs. 1–4 have been deposited in a public GitHub repository (https://github.com/Saavan/Logic_RBM) and on Zenodo (ref. 62).

Code availability

The code to reproduce data from this work has been deposited in a public GitHub repository (https://github.com/Saavan/Logic_RBM) and on Zenodo (ref. 62).

References

Colwell, R. The chip design game at the end of Moore’s law. In 2013 IEEE Hot Chips 25 Symposium (HCS) 1–16 (IEEE, 2013).

Waldrop, M. M. More than Moore. Nature 530, 144–147 (2016).

Barahona, F. On the computational complexity of Ising spin glass models. J. Phys. A: Math. Gen. 15, 3241–3253 (1982).

Kirkpatrick, S., Gelatt, C. D. & Vecchi, M. P. Optimization by simulated annealing. Science 220, 671–680 (1983).

Lucas, A. Ising formulations of many NP problems. Front. Phys. 2, 1–15 (2014).

Ackley, D. H., Hinton, G. E. & Sejnowski, T. J. A learning algorithm for Boltzmann machines. Cogn. Sci. 9, 147–169 (1985).

Korst, J. H. & Aarts, E. H. Combinatorial optimization on a Boltzmann machine. J. Parallel Distrib. Comput. 6, 331–357 (1989).

Hinton, G. E. Training products of experts by minimizing contrastive divergence. Neural Comput. 14, 1771–1800 (2002).

Tieleman, T. Training restricted Boltzmann machines using approximations to the likelihood gradient. In Proc. 25th International Conference on Machine Learning 1064–1071 (ACM, 2008).

Tieleman, T. & Hinton, G. Using fast weights to improve persistent contrastive divergence. In Proc. 26th Annual International Conference on Machine Learning 382, 1033–1040 (ACM, 2009).

Bojnordi, M. N. & Ipek, E. Memristive Boltzmann machine: a hardware accelerator for combinatorial optimization and deep learning. In 2016 IEEE International Symposium on High Performance Computer Architecture (HPCA) 1–13 (IEEE, 2016).

Cooper, G. F. The computational complexity of probabilistic inference using Bayesian belief networks. Artif. Intell. 42, 393–405 (1990).

Aarts, E. H. & Korst, J. H. Boltzmann machines and their applications. In Lecture Notes in Computer Science (including Subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) 258, 34–50 (Springer, 1987).

Sutton, B., Camsari, K. Y., Behin-Aein, B. & Datta, S. Intrinsic optimization using stochastic nanomagnets. Sci. Rep. 7, 44370 (2017).

Geman, S. & Geman, D. Stochastic relaxation, Gibbs distributions, and the Bayesian restoration of images. IEEE Trans. Pattern Anal. Mach. Intell. 6, 721–741 (1984).

Sutskever, I. & Tieleman, T. On the convergence properties of contrastive divergence. J. Mach. Learn. Res. 9, 789–795 (2010).

Camsari, K. Y., Faria, R., Sutton, B. M. & Datta, S. Stochastic p-bits for invertible logic. Phys. Rev. X 7, 031014 (2017).

Sagi, O. & Rokach, L. Ensemble learning: a survey. WIREs Data Mining Knowl. Discov. 8, e1249 (2018).

Srivastava, N. & Salakhutdinov, R. Multimodal learning with deep Boltzmann machines. Adv. Neural Inf. Process. Syst. 3, 2222–2230 (2012).

Jouppi, N. P. et al. In-datacenter performance analysis of a tensor processing unit. In Proc. 44th Annual International Symposium on Computer Architecture 1–12 (ACM, 2017).

Ly, D. L. & Chow, P. A high-performance FPGA architecture for restricted Boltzmann machines. In Proc. ACM/SIGDA International Symposium on Field Programmable Gate Arrays 73–82 (ACM, 2009).

Kim, S. K., McAfee, L. C., McMahon, P. L. & Olukotun, K. A highly scalable restricted Boltzmann machine FPGA implementation. In 2009 International Conference on Field Programmable Logic and Applications 367–372 (IEEE, 2009).

Kim, S. K., McMahon, P. L. & Olukotun, K. A large-scale architecture for restricted Boltzmann machines. In 2010 18th IEEE Annual International Symposium on Field-Programmable Custom Computing Machines 201–208 (IEEE, 2010).

Han, S., Mao, H. & Dally, W. J. Deep compression: compressing deep neural networks with pruning, trained quantization and Huffman coding. In 4th International Conference on Learning Representations, ICLR 2016—Conference Track Proceedings (ICLR, 2016).

Ullrich, K., Welling, M. & Meeds, E. Soft weight-sharing for neural network compression. In 5th International Conference on Learning Representations, ICLR 2017—Conference Track Proceedings (ICLR, 2019).

Chen, W., Wilson, J. T., Tyree, S., Weinberger, K. Q. & Chen, Y. Compressing neural networks with the hashing trick. In Proc. 32nd International Conference on Machine Learning 37, 2285–2294 (PMLR, 2015).

Dally, W. High-performance hardware for machine learning. NIPS Tutorial 2 (2015).

Cook, S. A. The complexity of theorem-proving procedures. In Proc. Third Annual ACM Symposium on Theory of Computing 151–158 (ACM, 1971).

Karp, R. M. Reducibility among combinatorial problems. In Complexity of Computer Computations 85–103 (Springer, 1972).

Hoos, H. H. & Stützle, T. Stochastic Local Search (Elsevier, 2004).

Ly, D., Paprotski, V. & Yen, D. Neural Networks on GPUs: Restricted Boltzmann Machines. Report No. 994068682 (Univ. of Toronto, 2008).

Han, S. et al. EIE: efficient inference engine on compressed deep neural network. In Proc. 43rd International Symposium on Computer Architecture 243–254 (IEEE, 2016).

Lo, C. & Chow, P. Building a multi-FPGA virtualized restricted Boltzmann machine architecture using embedded MPI. In Proc. 19th ACM/SIGDA International Symposium on Field Programmable Gate Arrays 189–198 (ACM, 2011).

Yamamoto, K. et al. A time-division multiplexing Ising machine on FPGAs. In Proc. 8th International Symposium on Highly Efficient Accelerators and Reconfigurable Technologies 3 (ACM, 2017).

Kim, L. W., Asaad, S. & Linsker, R. A fully pipelined FPGA architecture of a factored restricted Boltzmann machine artificial neural network. In ACM Trans. Reconfigurable Technol. Syst. 7, 5 (ACM, 2014).

Li, B., Najafi, M. H. & Lilja, D. J. An FPGA implementation of a restricted Boltzmann machine classifier using stochastic bit streams. In Proc. 2015 IEEE 26th International Conference on Application-specific Systems, Architectures and Processors (ASAP) 2015, 68–69 (IEEE, 2015).

Li, B., Najafi, M. H. & Lilja, D. J. Using stochastic computing to reduce the hardware requirements for a restricted Boltzmann machine classifier. In Proc. 2016 ACM/SIGDA International Symposium on Field-Programmable Gate Arrays 36–41 (ACM, 2016).

Ly, D. L. & Chow, P. A multi-FPGA architecture for stochastic restricted Boltzmann machines. In 2009 International Conference on Field Programmable Logic and Applications 168–173 (IEEE, 2009).

Borders, W. A. et al. Integer factorization using stochastic magnetic tunnel junctions. Nature 573, 390–393 (2019).

Jiang, S., Britt, K. A., McCaskey, A. J., Humble, T. S. & Kais, S. Quantum annealing for prime factorization. Sci. Rep. 8, 17667 (2018).

Brémaud, P. Markov Chains: Gibbs Fields and Monte Carlo Simulation 253–322 (Springer, 1999).

Yamaoka, M. et al. A 20k-spin Ising chip to solve combinatorial optimization problems with CMOS annealing. IEEE J. Solid-State Circuits 51, 303–309 (2016).

Boyd, J. Silicon chip delivers quantum speeds [news]. IEEE Spectrum 55, 10–11 (2018).

Schneider, C. R. & Card, H. C. Analog CMOS deterministic Boltzmann circuits. IEEE J. Solid-State Circuits 28, 907–914 (1993).

Belletti, F. et al. Janus: an FPGA-based system for high-performance scientific computing. Comput. Sci. Eng. 11, 48–58 (2009).

Ko, G. G., Chai, Y., Rutenbar, R. A., Brooks, D. & Wei, G. Y. FlexGibbs: reconfigurable parallel Gibbs sampling accelerator for structured graphs. In 2019 IEEE 27th Annual International Symposium on Field-Programmable Custom Computing Machines (FCCM) 334 (IEEE, 2019).

Wan, W. et al. 33.1 A 74 TMACS/W CMOS-RRAM neurosynaptic core with dynamically reconfigurable dataflow and in-situ transposable weights for probabilistic graphical models. In 2020 IEEE International Solid-State Circuits Conference—(ISSCC) 2020, 498–500 (IEEE, 2020).

Dridi, R. & Alghassi, H. Prime factorization using quantum annealing and computational algebraic geometry. Sci. Rep. 7, 43048 (2017).

Wang, Z., Marandi, A., Wen, K., Byer, R. L. & Yamamoto, Y. Coherent Ising machine based on degenerate optical parametric oscillators. Phys. Rev. A 88, 063853 (2013).

McMahon, P. L. et al. A fully programmable 100-spin coherent Ising machine with all-to-all connections. Science 354, 614–617 (2016).

Camsari, K. Y., Salahuddin, S. & Datta, S. Implementing p-bits with embedded MTJ. IEEE Electron Device Lett. 38, 1767–1770 (2017).

Salakhutdinov, R. & Hinton, G. Deep Boltzmann machines. In Proc. Machine Learning Research 5, 448–455 (PMLR, 2009).

Salakhutdinov, R. & Larochelle, H. Efficient learning of deep Boltzmann machines. In Proc. Thirteenth International Conference on Artificial Intelligence and Statistics 9, 693–700 (PMLR, 2010).

Savich, A. W. & Moussa, M. Resource efficient arithmetic effects on RBM neural network solution quality using MNIST. In 2011 International Conference on Reconfigurable Computing and FPGAs 2011, 35–40 (IEEE, 2011).

Tsai, C. H., Chih, Y. T., Wong, W. H. & Lee, C. Y. A hardware-efficient sigmoid function with adjustable precision for a neural network system. IEEE Trans. Circuits Syst., II, Exp. Briefs 62, 1073–1077 (2015).

Pervaiz, A. Z., Sutton, B. M., Ghantasala, L. A. & Camsari, K. Y. Weighted p-bits for FPGA implementation of probabilistic circuits. IEEE Trans. Neural Netw. Learn. Syst. 30, 1920–1926 (2017).

Tommiska, M. T. Efficient digital implementation of the sigmoid function for reprogrammable logic. IEE Proc.—Comput. Digit. Tech. 150, 403–411 (2003).

Marsaglia, G. Xorshift RNGs. J. Stat. Softw. 8, 1–6 (2003).

Matsumoto, M. & Nishimura, T. Mersenne twister: a 623-dimensionally equidistributed uniform pseudo-random number generator. In ACM Trans. Model. Comput. Simul. 8, 3–30 (ACM, 1998).

Carreira-Perpiñán, M. A. & Hinton, G. E. On Contrastive Divergence Learning (Univ. of Toronto, 2005).

Preußer, T. B. & Spallek, R. G. Ready PCIe data streaming solutions for FPGAs. In 2014 24th International Conference on Field Programmable Logic and Applications (FPL) 2014, 1–4 (IEEE, 2014).

Patel, S. Saavan/logic_rbm: v1.0.2. Zenodo https://doi.org/10.5281/zenodo.5778006 (2021).

Acknowledgements

This work was supported by ASCENT, one of six centers in JUMP, a Semiconductor Research Corporation (SRC) program sponsored by DARPA.

Author information

Contributions

Model synthesis and analysis was performed by S.P. FPGA programming was performed by S.P. and P.C. The manuscript was co-written by S.P., P.C. and S.S. S.S. supervised the research. All the authors contributed to discussions and commented on the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Electronics thanks the anonymous reviewers for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information: Supplementary Figs. 1 and 2, Tables 1–3 and Discussion.

About this article

Cite this article

Patel, S., Canoza, P. & Salahuddin, S. Logically synthesized and hardware-accelerated restricted Boltzmann machines for combinatorial optimization and integer factorization. Nat Electron 5, 92–101 (2022). https://doi.org/10.1038/s41928-022-00714-0

DOI: https://doi.org/10.1038/s41928-022-00714-0
