Abstract

Diffusion magnetic resonance imaging (dMRI) enables non-invasive investigation of tissue microstructure. The Standard Model (SM) of white matter aims to disentangle dMRI signal contributions from intra- and extra-axonal water compartments. However, due to the model’s high-dimensional nature, accurately estimating its parameters poses a complex problem and remains an active field of research, in which different (machine learning) strategies have been proposed. This work introduces an estimation framework based on implicit neural representations (INRs), which incorporate spatial regularization through the sinusoidal encoding of the input coordinates. The INR method is evaluated on both synthetic and in vivo datasets and compared to existing methods. Results demonstrate superior accuracy of the INR method in estimating SM parameters, particularly in low signal-to-noise conditions. Additionally, spatial upsampling of the INR can represent the underlying dataset anatomically plausibly in a continuous way. The INR is self-supervised, eliminating the need for labeled training data. It achieves fast inference, is robust to noise, supports joint estimation of SM kernel parameters and the fiber orientation distribution function with spherical harmonics orders up to at least 8, and accommodates gradient non-uniformity corrections. The combination of these properties positions INRs as a potentially important tool for analyzing and interpreting diffusion MRI data.


Introduction

Diffusion magnetic resonance imaging (dMRI) is a non-invasive technique for in vivo measurement of water diffusion in tissue using magnetic field gradients. To extract biologically interpretable information, a common approach is to fit a microstructural tissue model to a set of signals acquired with different dMRI acquisition settings1,2,3,4. In the absence of diffusion time dependence, these typically include different combinations of gradient strengths (commonly quantified by the b-value), directions (b-vector), and B-tensor shape5. Microstructural parameters estimated by these models – including compartmental signal fractions and diffusivities – have been shown to be sensitive to changes in brain structure due to diseases such as multiple sclerosis6, Alzheimer’s disease7 and Parkinson’s disease8, and can provide a more fundamental understanding of tissue microstructure in both healthy and pathological tissues9.

The Standard Model of white matter (SM), see ref. 4 for a review, describes the signal arising from white matter by a kernel consisting of three compartments (intra-axonal, extra-axonal, and free water (occasionally omitted)) convolved with a fiber orientation distribution (FOD)10. Compartmental signal fractions and diffusivities can be estimated, alongside the parameters that describe the FOD (usually in the form of a spherical harmonics (SH) series). Nevertheless, the high-dimensional parameter space of the SM complicates the estimation of its parameters, potentially leading to low accuracy, precision, and degeneracy of estimates11. These issues become even more prominent at high noise levels.

Multiple strategies have been employed to fit microstructure models to dMRI data. When the primary goal is to estimate the tissue’s directional structure, a common two-step approach involves first fixing the kernel using a global estimate, followed by solving a linear inverse problem to estimate the fiber orientation distribution (FOD)12,13. In contrast, when the focus is on estimating the kernel parameters, the orientational dependence is factored out by using rotational invariants of the signal14,15,16,17. This last approach is most common for SM parameter estimation.

Estimation of SM parameters has been improved by machine-learning based methods including Bayesian estimators16, neural networks18,19, and fitting cubic polynomials15,20. Importantly, these approaches are commonly supervised machine-learning methods, operating at the voxel level, that are fit by using simulated ground truth parameters and their associated signals – the training dataset. While this can be very effective, the quality of the results depends on biases existing in the training and inference datasets21. Additionally, since the methods operate at the voxel level, they do not make any use of the spatial correlation that is naturally present in anatomy.

Recently, implicit neural representations (INRs) have been introduced to the dMRI domain as a novel self-supervised fitting method, which – rather than on a voxel level – fit models on a continuous space of coordinates and are trained on the dMRI signal directly, without the need for ground truth parameters. INRs have been shown to create noise-robust continuous representations of dMRI datasets of individual subjects, using the spatial correlations present in the data. So far, they have been used to represent the diffusion signal using SH basis functions and to estimate the parameters of (multi-shell multi-tissue) constrained spherical deconvolution (MSMT-CSD)22,23,24. In this previous work, INRs demonstrated potential to improve on voxel-based methods for parameter estimation, especially in noisier acquisitions. Since INRs are spatially regularized continuous representations of the dataset, they can potentially be beneficial when performing downstream tasks which require interpolation, such as microstructure-informed fiber tracking25,26.

Building upon our previous work22,24, we implement INRs to estimate the SM parameters alongside the FODs, and demonstrate the noise-robustness, continuous representation, and applicability of INRs for fitting on both synthetically generated and in vivo dMRI data. Synthetically generated datasets facilitate a quantitative analysis of the model outputs, while in vivo data qualitatively shows the performance in a realistic setting. The INR is compared to two existing machine learning methods for fitting the SM (Standard Model Imaging Toolbox (SMI)15 and a supervised neural network (NN)18), as well as nonlinear least squares (NLLS). Additionally, moving beyond existing methods, the FOD SH-coefficients up to order eight are estimated directly alongside the SM kernel parameters (intra-axonal diffusivity (Di), extra-axonal axial diffusivity (\({D}_{e}^{\parallel }\)), extra-axonal perpendicular diffusivity (\({D}_{e}^{\perp }\)) and intra-axonal fraction (fi)). Thus, in contrast to earlier methods that focused either on the kernel or on the FOD, this approach performs a joint estimation, which can improve accuracy and ameliorate degeneracy27. Moreover, every INR is fit on and represents a dMRI dataset of a single subject and, therefore, does not rely on a large number of training datasets as supervised methods do. Furthermore, it is capable of explicitly correcting for gradient non-uniformities by inputting the effective acquisition protocol (B-tensor) for each coordinate with the spatially varying gradient coil tensor of the scanner28. The latter would become impractical for supervised methods, which would need prohibitively large sets of training data to capture voxel-wise protocol deviations. Altogether, the proposed method provides a flexible, noise-robust, spatially coherent way of fitting the SM, which is self-supervised and, therefore, not biased by training data.

Results

Quantitative comparison on simulated data

Results from experiment 1 are presented in Fig. 1 (signal-to-noise ratio (SNR) = 20). When Gaussian noise is added, the INR method shows superior performance for all SM parameters, achieving the highest Pearson’s correlation coefficient (ρ) and the lowest root mean squared error (RMSE). The brain parameter maps in Fig. 1b show the ability of the INR method to reproduce smooth parameter maps similar to the ground truth, whereas the voxel-wise fitting methods show noisy estimates due to the lack of spatial regularization. The INR method does exhibit minor overestimation of \({D}_{e}^{\parallel }\) in the splenium of the corpus callosum compared to the ground truth.

Fig. 1: a Scatter density plots of ground truth versus parameter estimations of all methods. The titles of the subplots indicate Pearson’s correlation coefficient (ρ) and root mean squared error (RMSE). Every column corresponds to a specific parameter, which is indicated above. b SM parameter maps corresponding to the results in a. Bottom row shows the ground truth (GT).

Parameter estimation on simulated data without noise and with SNR = 50 can be found in the supplementary information section 2 (Figs. S2 and S3), showing a less prominent, but still evident improvement in ρ and RMSE of the INR method compared to other estimation methods for all parameters. Fitting without noise shows that the ground truth generating process is not positively biased towards parameter estimates of the INR.
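The two metrics reported in these comparisons can be computed as in the following minimal sketch. It assumes that the estimated and ground truth values inside the white matter mask have been flattened into one array per parameter; the function names are illustrative and not taken from the authors' code.

```python
import numpy as np

def pearson_rho(estimate, truth):
    """Pearson's correlation coefficient between estimated and true values."""
    e = np.asarray(estimate, dtype=float).ravel()
    t = np.asarray(truth, dtype=float).ravel()
    e_c, t_c = e - e.mean(), t - t.mean()
    return float(np.sum(e_c * t_c) / np.sqrt(np.sum(e_c**2) * np.sum(t_c**2)))

def rmse(estimate, truth):
    """Root mean squared error between estimated and true values."""
    e = np.asarray(estimate, dtype=float).ravel()
    t = np.asarray(truth, dtype=float).ravel()
    return float(np.sqrt(np.mean((e - t) ** 2)))
```

A higher ρ and a lower RMSE both indicate better agreement. A constant offset leaves ρ at 1 while raising the RMSE, which is why the two metrics are reported together.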

Rician noise bias

The correlation plots between the INR with mean squared error (MSE) or Rician loss and the ground truth parameters, alongside the parameter maps for both approaches, can be seen in Fig. 2. For MSE, the Rician bias is visible in the scatter plots and parameter maps as a general over- or underestimation across all voxels. The Rician loss is able to correct the bias, resulting in better correlation with the ground truth. The bias is most significant for Di and \({D}_{e}^{\parallel }\). The bias effect is less prominent when considering SNR = 50, see supplementary information section 3, Fig. S4.

Fig. 2: a Scatter plots for estimation with MSE and Rician loss. Pearson’s correlation coefficient (ρ) and root mean squared error (RMSE) are indicated in each subplot’s title. Every column corresponds to a specific parameter, which is indicated above. b Brain parameter maps of the predictions from a. Difference maps are calculated with the ground truth (GT) parameters.
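The origin of the Rician bias can be illustrated with a small simulation. The sketch below is not the authors' experiment: it assumes a single noise-free signal value relative to S0 = 1, defines the noise level as σ = S0/SNR, and forms the magnitude of a complex signal with independent Gaussian noise on both channels. The mean of the noisy magnitude then lies above the true value, and a mean squared error loss would pull the fit towards that inflated mean.

```python
import numpy as np

def rician_magnitude(signal, sigma, n_samples, rng):
    """Magnitude of a complex signal with Gaussian noise on both channels."""
    real = signal + sigma * rng.standard_normal(n_samples)
    imag = sigma * rng.standard_normal(n_samples)
    return np.hypot(real, imag)

rng = np.random.default_rng(0)
true_signal = 0.1  # assumed strongly attenuated signal, relative to S0 = 1

# Bias of the mean magnitude at the two noise levels used in the experiments.
bias_snr20 = rician_magnitude(true_signal, 1 / 20, 200_000, rng).mean() - true_signal
bias_snr50 = rician_magnitude(true_signal, 1 / 50, 200_000, rng).mean() - true_signal
```

Under these assumptions the bias is positive at both noise levels and clearly larger at SNR = 20 than at SNR = 50, in line with the weaker bias effect reported for SNR = 50.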

Qualitative comparison on in vivo data

The parameter maps for the in vivo dataset for the different methods are shown in Fig. 3. The differences between methods are especially visible in the capsula interna and externa, and the splenium of the corpus callosum. The INR produces maps that are spatially smoother and show a clear (and anatomically plausible) structure, while other methods display higher spatial variability.

Fig. 3: Every row shows all SM parameters for the fitting method indicated at the beginning of that row. All maps have equal scaling.

Estimation of SH order up to l max = 8

The INR outputs for the different SH order (lmax) FODs of the synthetic datasets are visualized in Fig. 4. Qualitative inspection of the FODs shows plausible FOD shapes and directions throughout all datasets and SH orders. Furthermore, there are no notable differences between the FOD estimates for the noiseless and SNR 50 synthetic datasets, indicating noise-robustness in the estimate. Small spurious peaks appear in the SNR 20 synthetic dataset, but the fiber orientations indicated by the larger peaks remain almost identical to those in the other two datasets. In the in vivo dataset the INR produces plausible FOD shapes and directions as well, as visualized in Fig. 5. The backgrounds in Figs. 4 and 5 show no large voxel-wise differences for fi across the different orders. This holds true for all kernel parameter estimates, which is shown in further detail in the supplementary information section 4, Tables S1 and S2.

Fig. 4: Different combinations of lmax (rows) and datasets (columns) are shown, with parameter map fi as background. Fiber orientation distributions are scaled for visibility.

Fig. 5: Fiber orientation distributions (FODs) are shown for increasing lmax with parameter map fi as background. FODs are scaled for visibility.

Effect of gradient non-uniformity correction of SM parameter estimation

Brain parameter maps with and without gradient non-uniformity correction on in vivo data are presented in Fig. 6. Difference maps show a significant effect on Di and \({D}_{e}^{\parallel }\). Corrected maps show the effect at the edges of the brain, mainly in the frontal lobe. This is to be expected as gradient non-uniformity is strongest there. Di and \({D}_{e}^{\parallel }\) show lower values after correction. The influence of the correction is least apparent on fi and the rotational invariant of lmax = 2 (p2). The effect of the gradient non-uniformity correction is similar for both INR and NLLS fitting. Lower diffusivity values at the front and back of the brain appear using both methods, as well as higher p2 values. The parameter fi shows only small differences between the approaches: NLLS shows no effect, while INR shows small corrections throughout the brain. Results of combining Rician bias loss with gradient non-uniformity correction can be found in the supplementary information section 5, Fig. S5.

Fig. 6: The top row shows parameter maps without correction. The middle rows show the effect of gradient non-uniformity correction using the INR method. Bottom rows show the effect of gradient non-uniformity correction on NLLS parameter estimation. The difference maps are computed relative to the parameter estimates obtained with the same method, but without applying gradient non-uniformity correction.

Implicit neural representation for spatial interpolation

The visualizations in Fig. 7 show the comparison between the p2 parameter maps using different methods for upsampling. The linear interpolation maintains the pixelated appearance of the low-resolution data, especially visible in structures that are not aligned with the image grid (a ’staircase-like’ effect). These artifacts are, although less prominent, still visible in cubic interpolation. The INR does not show these artifacts at this resolution, as the underlying continuous representation is less limited by the input resolution.

Fig. 7: A coronal slice (a) and a sagittal slice (b) of the p2 parameter map are shown at the original resolution, and upsampled 8x in every dimension using linear interpolation, cubic interpolation, and the INR.
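Because the INR is a function of continuous coordinates, upsampling amounts to querying it on a denser coordinate grid. The following sketch builds such a grid. The voxel-centre convention and the single-factor scaling to [−1, 1]3 are assumptions made for illustration, and `upsampled_coordinates` is a hypothetical helper, not part of the authors' implementation.

```python
import numpy as np

def upsampled_coordinates(shape, factor):
    """Coordinates of a grid `factor` times denser than `shape`, scaled to [-1, 1]^3."""
    shape = np.asarray(shape, dtype=float)
    # Sub-voxel centres in index units; original voxel i spans [i - 0.5, i + 0.5].
    axes = [(np.arange(int(n * factor)) + 0.5) / factor - 0.5 for n in shape]
    grid = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1).reshape(-1, 3)
    # One common scale for all axes keeps the aspect ratio of the volume.
    centre = (shape - 1) / 2
    return (grid - centre) / (shape.max() / 2)

coords = upsampled_coordinates((4, 6, 2), factor=8)  # shape (32 * 48 * 16, 3)
```

A fitted network would then be evaluated once per row of `coords`, and the outputs reshaped to the upsampled volume.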

Model fitting times

Model fitting times for all INR experiments are shown in Table 1. The main influences on the fitting time are the size of the dataset (number of voxels in the WM mask), the size of the hidden layers, the number of epochs, the number of outputs (determined by lmax), and whether the analytical or numerical integration solution is used. The analytical approach was used in the experiments with the simulated dataset and the numerical approach for the in vivo data. The numbers of white matter voxels included in the simulated data and in vivo data are 60800 and 11266, respectively. Taking these dataset sizes into account, the fitting times in Table 1 indicate that the analytical approach is considerably faster than the numerical approach. The addition of gradient non-uniformity correction also increases fitting time.

Discussion

Implications of the results

In this work, we show how INRs can be used to estimate continuous, noise-robust SM parameter maps of simulated and in vivo datasets and with FODs of different SH orders. The self-supervised, subject-wise nature of the framework prevents training set bias, while the continuous representation allows spatial correlations to improve parameter estimates and reduce the impact of noise. For high SNR levels, the supervised NN method achieves performance metrics close to those of the INR approach; however, it can be significantly more time-consuming due to its reliance on NLLS for generating part of the training data and the need to retrain the model for each specific acquisition protocol (see Supplementary Fig. S3). At higher noise levels (SNR = 20), the INR method clearly outperforms all other methods (see Fig. 1). On in vivo data, the underlying representation shows a more structurally correlated appearance, without large inter-voxel variability. This is further illustrated by upsampling the INR at high resolution. Parameter estimates for FODs up to SH orders of at least eight can be provided alongside the other SM parameters without introducing bias in other parameters as shown in the supplementary information section 4. The self-supervised nature of the method avoids training set bias prevalent in supervised fitting methods.

The proposed hyperparameters np = 5000 and nh = 2048 form a robust setting that yields good representations of dMRI datasets with different sizes, acquisition protocols, and levels of noise. An exploration of these hyperparameters can be found in the supplement of ref. 24. The hyperparameter σ2 provides a convenient way of tuning the model to provide stronger or weaker spatial regularization, as detailed in the supplementary information section 1, which shows results for σ2 = 1 (extremely smooth) up to σ2 = 8 (granular).

Fitting time is a critical factor when applying dMRI microstructure modeling, and various efforts have been made to speed up the computationally heavy nonlinear optimization and enable large-scale population studies19,29,30. INRs circumvent this through their inherent self-supervised multi-layer perceptron (MLP) structure, which allows for efficient, continuous representation of the parameter space without requiring voxel-wise optimization. The INRs are fit on consumer-grade hardware in around 5 up to at most 23 minutes for the scenarios tested, much faster than classic NLLS approaches. Using the analytical integration approach, which is possible when negative bΔ values are excluded from the acquisition protocol, decreases training time considerably. Once the INR is fit to the dataset, the inference time is negligible. For example, an lmax = 2 model with nh = 2048 and np = 5000 can perform inference at one million coordinates in 2.7 seconds and for lmax = 8 in 2.8 seconds, including data transfer times to and from the GPU. However, since INRs require a model to be fit to every individual subject, a supervised learning approach could remain faster for large datasets consisting of many subjects with identical acquisition protocols, despite the considerable amount of training time it requires initially (e.g., 83 minutes on GPU, excluding initial NLLS parameter estimations, for the supervised NN method18).

The method’s ability to incorporate gradient non-uniformity correction in the fitting process provides an advantage over typical supervised methods, for which the training set would be impractically large to capture the spatial variability. This correction is essential as even small non-uniformities can affect parameter maps28,31,32,33, and the availability of high-performance gradient coils suffering from significant gradient non-uniformities is increasing34. To our knowledge, SMI is the only framework that has incorporated gradient non-uniformity correction into the SM fitting process, in the form of PIPE35. This approach uses SVD and linear regression to approximate the exact acquisition settings in each voxel for linear tensor encoding (LTE) acquisitions.
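How a spatially varying gradient coil tensor changes the effective protocol at one coordinate can be sketched as follows. The sketch assumes the commonly used relation B_eff = L B Lᵀ between the nominal B-tensor B and the coil tensor L, and it reduces B_eff to an effective b-value, shape bΔ and symmetry axis by treating it as axially symmetric. All function names are hypothetical, and the way the symmetry axis is chosen is an illustrative choice rather than the authors' implementation.

```python
import numpy as np

def axisymmetric_b_tensor(b, b_delta, u):
    """Nominal B-tensor with b-value b, shape b_delta and symmetry axis u."""
    u = np.asarray(u, dtype=float)
    u = u / np.linalg.norm(u)
    return b * ((1 - b_delta) / 3 * np.eye(3) + b_delta * np.outer(u, u))

def effective_protocol(B, L):
    """Effective (b, b_delta, axis) after applying the coil tensor L to B."""
    B_eff = L @ B @ L.T  # assumed relation between nominal and effective B-tensor
    evals, evecs = np.linalg.eigh(B_eff)
    b_eff = evals.sum()  # the b-value is the trace of the B-tensor
    if b_eff <= 0:
        return 0.0, 0.0, evecs[:, -1]
    # Symmetry axis: the eigenvalue that deviates most from the mean.
    idx = int(np.argmax(np.abs(evals - evals.mean())))
    lam_par = evals[idx]
    lam_perp = np.delete(evals, idx).mean()  # axial-symmetry approximation
    return float(b_eff), float((lam_par - lam_perp) / b_eff), evecs[:, idx]

B = axisymmetric_b_tensor(1000.0, 1.0, [0.0, 0.0, 1.0])     # LTE along z
b_ideal, delta_ideal, _ = effective_protocol(B, np.eye(3))  # ideal gradients
b_dev, _, _ = effective_protocol(B, 1.05 * np.eye(3))       # 5% stronger gradients
```

In this toy case a 5% deviation in gradient strength raises the effective b-value by roughly 10%, since b scales with the square of the gradient amplitude.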

Limitations of the work

A limitation of using simulated data for evaluation is the variety of possible approaches to generating the ground truth. In this work, the intention was to create a ground truth with structurally smooth characteristics assumed to mimic real brain tissue. However, factors such as voxel size play a role and need to be further investigated. The ground truth generated for the synthetic experiments makes use of SMI for generating the underlying parameter maps. This could potentially bias the parameters to be in a range that favors estimation using SMI. We indeed observed that estimation on the noiseless signal showed optimal performance for SMI and NLLS (see supplementary information Fig. S2). The INR method exhibits lower performance on noiseless signals due to its inability to model voxels individually, indicating that the ground truth is not positively biased with respect to the outputs of the INR method. Nevertheless, we have attempted to reduce biases that would benefit a particular method by smoothing the parameter maps and using FODs from a different source (MSMT-CSD). Omitting the smoothing still resulted in the highest performance for INR, see supplementary information section 6 Fig. S8. Another limitation related to the ground truth is that the MGH dataset used in this study contained only LTE acquisitions, which may have led to inaccurate parameter estimates (see discussion at the end of this section). We have investigated the impact of other possible sources of severe bias on creating ground truth parameter maps from the MGH dataset, such as the relatively short diffusion time, noise estimation procedure, and included b-values (see supplementary information section 6, Figs. S6–S8). We found similar overall distributions and linear voxel-wise correlations when using longer diffusion times, noise estimate from repeated b = 0 s mm−2 images, and excluding b = 200 s mm−2 and b > 10,000 s mm−2 images. Nevertheless, creating a ground truth that balances capturing anatomical reality while exerting sufficient control remains an important avenue to further explore.

The comparison experiments across methods were conducted using Gaussian noise, which differs from the noise characteristics of in vivo magnitude MRI data, typically following Rician or non-central Chi distributions. However, with appropriate preprocessing and ideally the availability of phase data, the noise can be transformed to approximate a Gaussian distribution36,37. This makes the use of Gaussian noise still relevant and consistent with previous work in self-supervised learning for dMRI38. The Rician noise experiments reveal biases in the parameter estimates by the INR when using MSE, suggesting that this should be taken into consideration. In this work, we show the promise of correcting for this bias by using a loss function tailored specifically to Rician noise39.

The INR shows a slight overestimation in \({D}_{e}^{\parallel }\) in the splenium of the corpus callosum in the synthetic experiments. This could be due to the ground truth exhibiting less structural coherence in this part, which is especially apparent in the \({D}_{e}^{\perp }\) parameter map. Since the parameters are estimated jointly, this might influence the estimation of \({D}_{e}^{\parallel }\). Potentially, SMI does not suffer from this because it fits the SM voxel-wise and is, therefore, able to produce these combinations of parameters.

The interpretation of the in vivo parameter maps is subjective, as there is no ground truth available. The INR produces more spatially coherent estimates than other methods, showing anatomically plausible structure and physiologically plausible parameter values. This could imply that they more closely resemble the actual underlying tissue, but conclusions should be drawn with caution. For example, compared to the other maps, the INR produces a slightly higher estimate for \({D}_{e}^{\parallel }\) and a slightly lower estimate for \({D}_{e}^{\perp }\). We cannot be certain about which estimate is more accurate. To evaluate the suitability of the in vivo acquisition protocol for fitting the SM, ground truth simulations with this protocol were performed, which can be found in the supplementary information section 7 (Fig. S9).

Additionally, correcting for gradient non-uniformities has a significant impact on the parameter estimates, yet the accuracy remains to be evaluated, although comparison to NLLS in combination with gradient non-uniformity correction shows similar results. While changes in shape due to gradient non-uniformities are taken into account (bΔ), a limitation of the current implementation is that it assumes conservation of B-tensor axial symmetry, an assumption that generally does not hold when gradient non-uniformities and non-LTE encodings are considered35. Assessing the exact impact of this approximation would necessitate implementation of SO(3) convolutions and requires further investigation. Experiment 5 shows that these corrections result in lower estimates for \({D}_{e}^{\parallel }\), which brings \({D}_{e}^{\parallel }\) more in agreement with previous work showing \({D}_{i} > {D}_{e}^{\parallel }\) (in gadolinium based contrast experiments40) and Di ~ 2.3 μm2 ms−1 (in experiments with elaborate acquisition protocols using high diffusion planar tensor encoding41). Any further inconsistency with values of \({D}_{e}^{\parallel }\) for the in vivo dataset could be caused by the inability of the acquisition protocol to discriminate solution branches as a high b-shell (b > 5000 s mm−2) with LTE is lacking11,15,42. To gain more insight into the degeneracies of the INR’s estimation and to enable error quantification, calculating the posterior distribution is required43,44.

Future work

The presented INR method can be extended in future work. This work has focused on including the minimal number of SM parameters to reduce the complexity of the fitting parameter space and to evaluate the method’s performance. Importantly, the framework is fully flexible to fit any biophysical model, by adjusting the forward equation to predict the signal (Fig. 9). For example, the SM implementation can be extended to include relaxation effects, which introduce compartmental T2 as fitting parameters. Further distinction can be made between intra-axonal compartmental T2 and extra-axonal compartmental T245,46, which adds two extra fitting parameters. The contribution of free water can also be introduced as a fitting parameter. However, previous work has shown that the impact of this parameter is small except for voxels around the ventricles15. Adding this parameter could resolve fitting issues around the ventricles for the in vivo data in experiment 3 where high \({D}_{e}^{\parallel }\) and \({D}_{e}^{\perp }\) values are found.

The spatial regularization inherent to INRs in the fitting process can be beneficial for other biophysical models. The presented method fits the Standard Model of white matter, which – as the name suggests – is only applicable for white matter. As a result, we applied a white matter mask, and only used the coordinates that lie inside this mask as input to the INR. This implies that for coordinates outside of the mask, and in different tissue types, INR results are not fit (correctly). Implementation of gray matter models (e.g. as in ref. 47) using INRs avoids the need for fitting solely white matter and can provide whole brain parameter maps.

The combination of estimating SM parameters together with FOD SH up to high lmax values opens up the possibility to combine the microstructural information of SM-estimates and the directional information of the FOD to do microstructure-informed tractography25,26,43,48, and future work could extend the model to estimate fiber-direction specific kernels.

When applying this method to a large number of subjects, for example when doing group analysis, fitting times of the INR might become a limiting factor. The duration of the fitting process for self-supervised learning in combination with dMRI models can potentially be considerably shortened when applying transfer learning49, meta-learning50, continual learning51, or hash-encodings52, which could make on-the-fly fitting of INRs possible. Tractography can also benefit from the continuous representation of the INR in both interpolation computation time and accuracy53.

The application of microstructural information in clinical studies remains untested in this context, but its potential utility for the diagnosis and assessment of various pathophysiological processes could be explored. The INR’s performance remains to be further evaluated in pathology, particularly the effect of spatial regularization on the quantification of small lesions. The encoding frequency variance σ2 can be tuned to accommodate higher frequency changes in the signal. The performance of INRs for sparse, clinically feasible acquisition protocols remains to be investigated and represents a direction for future research38.

Conclusion

Using INRs to fit the SM provides noise-robust, spatially regularized parameter estimates. FODs of SH orders up to at least eight can be estimated alongside the SM kernel parameters. The self-supervised nature of this approach has advantages over existing (supervised) methods, as it prevents training set bias and allows for explicit correction of gradient non-uniformities, within reasonable estimation times.

Methods

Standard Model of white matter

Multiple approaches have been suggested to model white matter dMRI signal as a combination of sticks and anisotropic Gaussian diffusion compartments9,11,16,46,54,55,56. Generalization of this principle without introducing constraints on model parameters has led to a unified framework called the Standard Model of white matter4,14. The Standard Model assumes the measured signal S to be described by the convolution of a kernel \({{{\mathcal{K}}}}(b,{b}_{\Delta },{{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})\) – describing the signal arising from water diffusing within and around a coherent fiber bundle with direction n – with a distribution of fiber populations \({{{\mathcal{P}}}}({{{\boldsymbol{n}}}})\) on the unit sphere:

\[S(b,{b}_{\Delta },{{{\boldsymbol{u}}}})={S}_{0}{\int}_{{{\mathbb{S}}}^{2}}{{{\mathcal{P}}}}({{{\boldsymbol{n}}}})\,{{{\mathcal{K}}}}(b,{b}_{\Delta },{{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})\,{{{\rm{d}}}}{{{\boldsymbol{n}}}},\]

where b is the b-value, bΔ is the B-tensor shape, u describes the first eigenvector of the B-tensor, and S0 is the signal without diffusion weighting. Our implementation of the SM assumes fiber bundles to consist of two compartments, intra-axonal and extra-axonal, that hold different diffusion characteristics. The signal from an axially symmetric tensor (zeppelin) compartment depends on its axial (D∥) and perpendicular (D⊥) diffusivity and is given by the following relation57:

\[{{{{\mathcal{K}}}}}_{{{{\rm{zep}}}}}(b,{b}_{\Delta },{{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}};{D}^{\parallel },{D}^{\perp })=\exp \left[-b{D}^{\perp }-b({D}^{\parallel }-{D}^{\perp })\left(\frac{1-{b}_{\Delta }}{3}+{b}_{\Delta }{({{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})}^{2}\right)\right].\]

The intra-axonal compartment is modeled as a zero-radius stick (i.e. D⊥ = 0) with D∥ = Di, while the extra-axonal compartment is modeled as a zeppelin with axial and perpendicular diffusivity \({D}^{\parallel }={D}_{e}^{\parallel }\) and \({D}^{\perp }={D}_{e}^{\perp }\), respectively. The fraction of the signal occupied by the intra-axonal compartment is given by fi (thus setting the fraction of the extra-axonal compartment to 1 − fi). Summing over the intra-axonal and extra-axonal signal contributions results in the following forward equation for the signal:

\[S(b,{b}_{\Delta },{{{\boldsymbol{u}}}})={S}_{0}{\int}_{{{\mathbb{S}}}^{2}}{{{\mathcal{P}}}}({{{\boldsymbol{n}}}})\left[{f}_{i}\,{e}^{-b{D}_{i}\left(\frac{1-{b}_{\Delta }}{3}+{b}_{\Delta }{({{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})}^{2}\right)}+(1-{f}_{i})\,{e}^{-b{D}_{e}^{\perp }-b({D}_{e}^{\parallel }-{D}_{e}^{\perp })\left(\frac{1-{b}_{\Delta }}{3}+{b}_{\Delta }{({{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})}^{2}\right)}\right]{{{\rm{d}}}}{{{\boldsymbol{n}}}}.\]

Calculation of the integral can follow two approaches. The first approach leverages an analytical expression for the integral, which contains the product of a Legendre polynomial and an exponential term. This term arises when projecting \({{{\mathcal{P}}}}({{{\boldsymbol{n}}}})\) on a SH basis. For a full derivation, see refs. 15,45,58. However, this analytical expression is only valid when bΔ≥0 and \({D}_{e}^{\parallel } > {D}_{e}^{\perp }\) (see ref. 54 for the derivation of this analytical solution). The second approach uses numerical integration to calculate the integral. This approach is able to incorporate negative bΔ but is computationally more demanding than the analytical approach.
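The two-compartment forward model described in this section can be sketched numerically as follows. The sketch uses the standard attenuation of an axially symmetric diffusion tensor measured with an axially symmetric B-tensor, exp[−bD⊥ − b(D∥ − D⊥)((1 − bΔ)/3 + bΔ(n·u)²)], and replaces the integral over the sphere by a weighted sum over a discrete set of fiber directions. That discretization, the function names and the units (b in ms μm−2, diffusivities in μm2 ms−1) are assumptions made for illustration; the authors' analytical SH-based solution is not reproduced here.

```python
import numpy as np

def zeppelin_kernel(b, b_delta, cos_angle, d_par, d_perp):
    """Signal attenuation of an axially symmetric compartment."""
    shape_term = (1 - b_delta) / 3 + b_delta * cos_angle**2
    return np.exp(-b * d_perp - b * (d_par - d_perp) * shape_term)

def sm_signal(b, b_delta, u, fod_dirs, fod_weights,
              d_i, d_e_par, d_e_perp, f_i, s0=1.0):
    """Standard Model signal for a discrete FOD (weighted sum over directions)."""
    u = np.asarray(u, dtype=float)
    u = u / np.linalg.norm(u)
    dirs = np.asarray(fod_dirs, dtype=float)
    dirs = dirs / np.linalg.norm(dirs, axis=1, keepdims=True)
    w = np.asarray(fod_weights, dtype=float)
    w = w / w.sum()  # the FOD is normalized to unit mass
    cos_angle = dirs @ u
    # Stick (zero perpendicular diffusivity) plus zeppelin, weighted by f_i.
    kernel = (f_i * zeppelin_kernel(b, b_delta, cos_angle, d_i, 0.0)
              + (1 - f_i) * zeppelin_kernel(b, b_delta, cos_angle, d_e_par, d_e_perp))
    return float(s0 * np.sum(w * kernel))
```

For a single fiber along z and LTE (bΔ = 1), measuring along the fiber attenuates both compartments strongly, whereas measuring perpendicular to it leaves the stick signal unattenuated. This contrast is what lets the model separate the two compartments.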

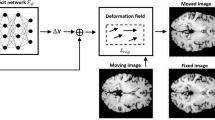

INR network architecture

The purpose of the INR is to map a coordinate vector x to a desired output vector k which represents the underlying dataset at that coordinate, by passing the coordinate through a neural network \({{{{\mathcal{F}}}}}_{\Psi }:{{{\boldsymbol{x}}}}\to {{{\boldsymbol{k}}}}\) with weights Ψ. In our case we map a 3D-coordinate \({{{\boldsymbol{x}}}}\in {{\mathbb{R}}}^{3}\) to a vector of parameters for the SM kernel and FOD, \({{{\boldsymbol{k}}}}=[{D}_{i},{D}_{e}^{\parallel },{D}_{e}^{\perp },{f}_{i},{S}_{0},{p}_{0}^{0},...{p}_{l}^{m}]\) where \({p}_{l}^{m}\) is the coefficient of the SH basis function of order l and phase m. The signal is hence projected onto real SH as in ref. 58. The end result is a representation of a (dMRI) dataset of a single subject by a neural network, from which the parameter maps can be inferred at any x. A ’dataset’ in this manuscript will refer to all dMRI volumes in a single acquisition of a single subject, unless specified otherwise. The implicit neural representation consists of three parts: the spatial encoding, a small MLP, and a number of output layers (Fig. 8). Each of the parts will be discussed in-depth in the upcoming sections.

Fig. 8: a The input coordinates (x) are mapped to a higher-dimensional frequency space (γ). b These values are then forwarded to the multi-layer perceptron (\({{{{\mathcal{M}}}}}_{{\Psi }_{m}}\)). c The output (z) of the MLP is converted to SM parameters (\(\widehat{{{{\boldsymbol{k}}}}}\)).

Spatial encoding

By encoding the input coordinates into a high-dimensional space before passing them to the model, we can greatly increase the representational power of the INR59. We use the Fourier feature encoding described by Tancik et al.59, which was previously used to model MSMT-CSD using INRs24. First, we scale the coordinates x (maintaining the aspect ratio) to lie in the range \({[-1,1]}^{3}\), and then apply the following transformation:

where γ(·) is the Fourier feature encoding, and A is an np × 3 matrix with values sampled from \({{{\mathcal{N}}}}(0,{\sigma }^{2})\). The number of encodings np and the variance σ² are hyperparameters that can be adapted to suit datasets of varying complexity and quality. This process results in an encoded coordinate vector \(\gamma ({{{\boldsymbol{x}}}})\in {[-1,1]}^{{n}_{p}\times 2}\).
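
As a minimal sketch of this encoding step, the snippet below scales coordinates with one common factor (so the aspect ratio is preserved) and stacks cosines and sines of the random projections. It is written in plain Python for readability rather than PyTorch, the function names are hypothetical, and the 2π factor follows the formulation of Tancik et al.59, which is an assumption about the exact transformation used here.

```python
import math
import random


def make_projection(n_p, sigma2, seed=0):
    """Draw the n_p x 3 matrix A with entries from N(0, sigma2)."""
    rng = random.Random(seed)
    sigma = math.sqrt(sigma2)
    return [[rng.gauss(0.0, sigma) for _ in range(3)] for _ in range(n_p)]


def scale_coordinates(coords):
    """Map coordinates into [-1, 1]^3 using a single scale factor for all axes."""
    mins = [min(c[d] for c in coords) for d in range(3)]
    maxs = [max(c[d] for c in coords) for d in range(3)]
    centre = [(lo + hi) / 2.0 for lo, hi in zip(mins, maxs)]
    # Half the longest extent; fall back to 1.0 for a degenerate single point.
    half = max(hi - lo for lo, hi in zip(mins, maxs)) / 2.0 or 1.0
    return [[(c[d] - centre[d]) / half for d in range(3)] for c in coords]


def fourier_features(x, A):
    """Return [cos(2 pi A x), sin(2 pi A x)], a vector of length 2 * n_p."""
    proj = [2.0 * math.pi * sum(a * xk for a, xk in zip(row, x)) for row in A]
    return [math.cos(p) for p in proj] + [math.sin(p) for p in proj]


if __name__ == "__main__":
    voxels = [[0, 0, 0], [10, 20, 5], [5, 10, 2]]  # toy voxel indices
    scaled = scale_coordinates(voxels)
    A = make_projection(n_p=8, sigma2=3.5)
    print(len(fourier_features(scaled[0], A)))
```

A larger σ² spreads the rows of A over higher spatial frequencies, which lets the network represent finer detail but also makes it easier to fit noise.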

Multi-layer perceptron

The MLP \({{{{\mathcal{M}}}}}_{{\Psi }_{m}}\) with weights Ψm (the subscript m denoting the MLP) is the backbone of the INR and is largely responsible for representing the underlying dataset. It consists of four fully-connected layers of equal size nh, chosen according to the complexity of the represented dataset, with ReLU activation functions. The MLP maps the encoded coordinates to a latent vector \({{{{\mathcal{M}}}}}_{{\Psi }_{m}}:\gamma ({{{\boldsymbol{x}}}})\to {{{\boldsymbol{z}}}}\) with \({{{\boldsymbol{z}}}}\in {{\mathbb{R}}}_{+}^{{n}_{h}}\), which serves as the input to the output layers.

Output layers

The final part of the INR architecture maps z to a parameter estimate \(\widehat{{{{\boldsymbol{k}}}}}\) using a separate fully-connected layer, called a ‘head’, for each parameter estimate. For the SM parameters \({\widehat{D}}_{i}\), \({\widehat{D}}_{e}^{\parallel }\), \({\widehat{D}}_{e}^{\perp }\), and \({\widehat{f}}_{i}\), the heads use a sigmoid activation function scaled to fit physiological ranges (Table 2). For \({\widehat{S}}_{0}\), a softplus activation function is used, which ensures positivity without imposing an upper bound60. The SH coefficients of the estimated FOD \(\widehat{{{{\mathcal{P}}}}}({{{\boldsymbol{n}}}})\) require both positive and negative outputs and therefore have no activation function. Together, the output layers provide the mapping \({{{{\mathcal{Q}}}}}_{{\Psi }_{q}}:{{{\boldsymbol{z}}}}\to \widehat{{{{\boldsymbol{k}}}}}\), where \({{{{\mathcal{Q}}}}}_{{\Psi }_{q}}\) denotes the output layers with weights Ψq.
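
The three kinds of head activation can be sketched as follows. This is an illustration only: the bounds in `BOUNDS` are placeholders and not the ranges of Table 2, the head names are hypothetical, and the functions act on scalar pre-activations rather than on batched tensors.

```python
import math

# Placeholder bounds for illustration only (um^2/ms for diffusivities);
# the ranges actually used are those listed in Table 2.
BOUNDS = {
    "D_i": (0.0, 3.0),
    "D_e_par": (0.0, 3.0),
    "D_e_perp": (0.0, 3.0),
    "f_i": (0.0, 1.0),
}


def sigmoid(z):
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0.0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def scaled_sigmoid(z, lo, hi):
    """Squash z into the interval [lo, hi]."""
    return lo + (hi - lo) * sigmoid(z)


def softplus(z):
    """log(1 + exp(z)): non-negative and without an upper bound."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def apply_heads(raw):
    """Turn raw head outputs into bounded SM parameters, S_0 and SH coefficients."""
    out = {name: scaled_sigmoid(raw[name], *BOUNDS[name]) for name in BOUNDS}
    out["S_0"] = softplus(raw["S_0"])
    out["sh"] = list(raw["sh"])  # no activation: coefficients may take either sign
    return out


if __name__ == "__main__":
    raw = {"D_i": 0.0, "D_e_par": 10.0, "D_e_perp": -10.0,
           "f_i": 0.0, "S_0": 0.0, "sh": [0.28, -0.1]}
    print(apply_heads(raw))
```

Bounding the kernel parameters in this way keeps every estimate inside a plausible range throughout optimization, without adding a separate penalty term to the loss.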

Model fitting

Given a set of Nm (capital N denoting a fixed number, as opposed to the lower-case hyperparameters np and nh) measurements \(\{({b}_{i},{({b}_{\Delta })}_{i},{{{{\boldsymbol{u}}}}}_{i})| i\in 1,...,{N}_{m}\}\), measured at coordinates xj ∈ X with \({{{\boldsymbol{X}}}}\subset {{\mathbb{R}}}^{3}\) being the set of all measured coordinates in the dMRI dataset, the estimated signal \(\widehat{S}({b}_{i},{({b}_{\Delta })}_{i},{{{{\boldsymbol{u}}}}}_{i},{{{{\boldsymbol{x}}}}}_{{{{\boldsymbol{j}}}}})\) at coordinate xj is obtained from the model output \({{{{\mathcal{F}}}}}_{\Psi }:{{{\boldsymbol{x}}}}\to \widehat{{{{\boldsymbol{k}}}}}\) by calculating the estimated kernel \(\widehat{{{{\mathcal{K}}}}}(b,{b}_{\Delta },{{{\boldsymbol{n}}}}\cdot {{{\boldsymbol{u}}}})\) using (2) and convolving it with \(\widehat{{{{\mathcal{P}}}}}({{{\boldsymbol{n}}}})\) as in (1). We approximate the desired INR \({{{{\mathcal{F}}}}}_{\Psi }\) with weights Ψ ≔ {Ψm, Ψq} by finding the weights Ψ* that minimize the error (MSE or Rician likelihood loss39, the latter allowing an explicit correction of Rician noise) between the estimated signal \(\widehat{S}\) and the measured signal S:

where \({{{\mathcal{L}}}}\) is either the MSE or the Rician likelihood loss. We include an additional term \({\Lambda }_{{{{{\boldsymbol{x}}}}}_{{{{\boldsymbol{j}}}}}}\), a non-negativity constraint on the FOD at xj, as described by Tournier et al.10. The constraint is calculated by sampling the FOD across the spherical domain and adding the magnitude of any negative values to the loss. The full dMRI dataset is used, without a train/test split, as the goal is for the INR to represent the data, not to predict unseen data. The fitting process is shown in Fig. 9.
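
The non-negativity term can be sketched for a deliberately simplified FOD. The example assumes an axially symmetric FOD with only the l = 0 and l = 2, m = 0 coefficients, averages the negative lobes over samples that are uniform in cos θ, and uses hypothetical function names. The sampling scheme and weighting actually used for the constraint are not specified here and may differ.

```python
import math

Y00 = 0.5 / math.sqrt(math.pi)  # l = 0 spherical harmonic (a constant)


def y20(cos_theta):
    """Zonal l = 2 spherical harmonic as a function of cos(theta)."""
    return 0.25 * math.sqrt(5.0 / math.pi) * (3.0 * cos_theta ** 2 - 1.0)


def fod_amplitude(p00, p20, cos_theta):
    """FOD value in a direction making angle theta with the symmetry axis."""
    return p00 * Y00 + p20 * y20(cos_theta)


def nonneg_penalty(p00, p20, n_samples=181):
    """Mean magnitude of the negative FOD values over the sampled directions."""
    total = 0.0
    for i in range(n_samples):
        # Uniform sampling in cos(theta) covers the sphere evenly for zonal functions.
        cos_theta = -1.0 + 2.0 * i / (n_samples - 1)
        total += max(0.0, -fod_amplitude(p00, p20, cos_theta))
    return total / n_samples


if __name__ == "__main__":
    print(nonneg_penalty(1.0, 0.5))  # FOD stays non-negative: no penalty
    print(nonneg_penalty(1.0, 2.0))  # negative lobe around the equator: penalised
```

Because the penalty is zero wherever the FOD is non-negative, it only influences the fit at coordinates where the SH expansion would otherwise produce negative lobes.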

Fig. 9: The input coordinates x are passed to the INR (architecture shown in Fig. 8) and mapped to a parameter estimate \(\widehat{{{{\boldsymbol{k}}}}}\). Using \(\widehat{{{{\boldsymbol{k}}}}}\), the signal estimate \(\widehat{S}\) is reconstructed following (3). The loss between \(\widehat{S}\) and the measured signal S is used to update the INR weights.

Implementation

The INR is implemented in Python 3.10.10 using PyTorch 2.0.0. An Adam optimizer was used with a learning rate of 10⁻⁴, β1 = 0.9, β2 = 0.999, ϵ = 10⁻⁸, and no weight decay. The hyperparameters were set at np = 5000, nh = 2048, σ² = 3.5 for the SNR 50 synthetic datasets (see section ‘Generation of simulated ground truth data’) and the in vivo dataset (see section ‘In vivo data acquisition’), and σ² = 2.5 for the SNR 20 synthetic datasets. More details about the choice of σ² are given in the supplementary information section 1 (Fig. S1). Each INR was fit for 150 epochs on an NVIDIA RTX 4080 GPU with 16 GB of VRAM, with a batch size of 500. Visualizations of the model output were created using matplotlib 3.8.0 and MRtrix3 3.0.461. Numerical integration of the integral during training is implemented with the torchquad Simpson integrator62.

Comparisons

The performance of the INR model (referred to as the INR method) is compared to three other SM fitting methods, described below. The first is a supervised machine learning approach using the SMI toolbox15 (SMI method) with standard settings. The SMI method requires a noise map, which was determined using MP-PCA denoising63. The second is a supervised deep learning method (referred to as the supervised NN method, introduced in ref. 18), which was trained using a combination of synthetic and data-driven parameter samples. Specifically, the training data consisted of 500,000 samples, with 75% allocated for training and 25% for validation. Half of the training samples were generated by uniformly sampling model parameters, while the other half were derived by applying mutations to NLLS estimates obtained from the target dMRI data. The neural network architecture comprised three hidden layers with 150, 80, and 55 neurons. Gaussian noise was added with SNR 50. For a comprehensive description of all training settings, see ref. 18. Finally, an NLLS approach (NLLS method) was implemented with the MATLAB (MathWorks, Natick, MA, USA) Optimization Toolbox, using lsqnonlin with the Levenberg-Marquardt algorithm and a maximum of 1000 iterations. Two initializations were fitted, after which the solution with the lowest residual norm was chosen. Of the above methods, only the INR has a positivity constraint implemented for the FOD (see section ‘Model fitting’).

Fitting performance across the different methods was evaluated using Pearson’s correlation coefficient ρ and the RMSE on the kernel parameters and the rotational invariant \({p}_{2}=\sqrt{\frac{4\pi }{5}}\sqrt{{\sum }_{m}| {p}_{2m}{| }^{2}}\), where ∣p2m∣² is the squared absolute value of the second-order, m-th phase SH coefficient.
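
These evaluation quantities are straightforward to compute. The sketch below implements p2 exactly as defined above, together with textbook definitions of the RMSE and Pearson’s ρ. The function names and toy inputs are hypothetical, and the analysis in this work relied on SciPy, scikit-learn and NumPy rather than on this code.

```python
import math


def p2_invariant(p2m):
    """Rotational invariant p2 from the five l = 2 SH coefficients."""
    return math.sqrt(4.0 * math.pi / 5.0) * math.sqrt(sum(abs(c) ** 2 for c in p2m))


def rmse(estimate, truth):
    """Root-mean-square error between two equally long sequences."""
    n = len(estimate)
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimate, truth)) / n)


def pearson(estimate, truth):
    """Pearson's correlation coefficient rho."""
    n = len(estimate)
    mean_e = sum(estimate) / n
    mean_t = sum(truth) / n
    cov = sum((e - mean_e) * (t - mean_t) for e, t in zip(estimate, truth))
    var_e = sum((e - mean_e) ** 2 for e in estimate)
    var_t = sum((t - mean_t) ** 2 for t in truth)
    return cov / math.sqrt(var_e * var_t)


if __name__ == "__main__":
    print(p2_invariant([0.0, 0.1, 0.4, -0.1, 0.0]))
    print(rmse([0.4, 0.5, 0.6], [0.45, 0.5, 0.7]))
    print(pearson([0.4, 0.5, 0.6], [0.45, 0.5, 0.7]))
```

Since p2 depends only on the summed squared magnitudes of the l = 2 coefficients, it is unaffected by the orientation of the fibre population, which makes it comparable across voxels and methods.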

Generation of simulated ground truth data

In silico experiments were conducted on simulated data obtained from one brain (subject 011) of the MGH Connectome Diffusion Microstructure Dataset64. The dMRI data were acquired on the 3T Connectome MRI scanner (Magnetom CONNECTOM, Siemens Healthineers) at 2 mm isotropic resolution. The acquisitions with b = [0, 50, 350, 800, 1500, 2400, 3450, 4750, 6000] s mm⁻² and Δ = 19 ms were selected. The lowest 4 b-values were acquired with 32 uniformly distributed diffusion encoding directions, the highest 4 b-values with 64. The dataset also contained 50 b = 0 s mm⁻² volumes. More details about the imaging parameters and processing can be found in ref. 65. The SM was fitted with the SMI toolbox15 to generate a set of realistic SM kernel parameters for fi, Di, \({D}_{e}^{\parallel }\), and \({D}_{e}^{\perp }\), using lmax = 4 and noise bias correction with a sigma map acquired through MP-PCA63. Further settings were 2 compartments (intra- and extra-axonal), 10⁶ training samples, and Nlevels = 1. To enhance the smoothness of the kernel maps, anisotropic diffusion filtering was performed using MATLAB’s imdiffusefilt function with three iterations (N = 3) and minimal connectivity. The SH coefficients plm of the FODs were calculated using MSMT-CSD13 for lmax = [2, 4, 6, 8]. The simulated signals corresponding to these parameters were calculated from the SM signal equation with a published optimized acquisition protocol15: b = [0, 1000, 2000, 8000, 5000, 2000] s mm⁻², number of directions [4, 20, 40, 40, 35, 15], and B-tensor shape bΔ = [1, 1, 1, 1, 0.8, 0]. Directions were optimized by minimizing the electric potential energy on a hemisphere66, after which half of the directions were flipped. The image resolution was kept identical to the original dataset at 2 mm isotropic. Finally, Gaussian or Rician noise was added. The standard deviation of the noise distribution was determined by the mean of the b = 0 s mm⁻² acquisitions and the SNR (20, 50 or ∞ on the b = 0 s mm⁻² images), resulting in a spatially varying standard deviation. These SNR levels are comparable to (50), or below (20), those investigated in previous work15,18. Free water contributions and TE dependence were not considered. Non-white matter voxels were masked out, as their influence on the loss value would decrease the performance of parameter estimation in white matter voxels. A white matter mask was generated with the Freesurfer67 segmentation, which is included in the MGH dataset. Voxels with \({D}_{e}^{\parallel } < {D}_{e}^{\perp }\) were also masked out, as these represent nonphysical behavior.
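
The noise model can be sketched per voxel as follows. The snippet assumes that the standard deviation is the voxel-wise mean of the noiseless b = 0 signals divided by the SNR, and that Rician noise is the magnitude of a complex signal with independent Gaussian noise in the real and imaginary channels. The function names and the toy signal values are hypothetical.

```python
import math
import random


def noise_sigma(b0_signals, snr):
    """Noise standard deviation of one voxel from its mean b = 0 signal."""
    return (sum(b0_signals) / len(b0_signals)) / snr


def add_gaussian(signal, sigma, rng):
    """Signal with additive zero-mean Gaussian noise."""
    return signal + rng.gauss(0.0, sigma)


def add_rician(signal, sigma, rng):
    """Magnitude of the signal after adding complex Gaussian noise."""
    real = signal + rng.gauss(0.0, sigma)
    imag = rng.gauss(0.0, sigma)
    return math.hypot(real, imag)


if __name__ == "__main__":
    rng = random.Random(0)
    sigma = noise_sigma([100.0, 102.0, 98.0], snr=20)
    weak = 2.0  # strongly attenuated signal, e.g. at a high b-value
    gaussian = [add_gaussian(weak, sigma, rng) for _ in range(10000)]
    rician = [add_rician(weak, sigma, rng) for _ in range(10000)]
    # The Gaussian mean stays close to 2.0; the Rician mean is biased upwards.
    print(sum(gaussian) / len(gaussian), sum(rician) / len(rician))
```

The upward bias of the Rician mean at low signal is what the Rician likelihood loss is meant to account for, whereas an MSE loss implicitly treats the noise as zero-mean.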

In vivo data acquisition

The study was approved by the Cardiff University School of Psychology Ethics Committee and written informed consent was obtained from the participant in the study. All ethical regulations relevant to human research participants were followed. One healthy volunteer was scanned on a 3T, 300 mT/m Connectom scanner (Siemens Healthineers, Erlangen, Germany). Imaging parameters and the diffusion acquisition scheme can be found in Table 3. Gradient waveforms for spherical tensor encoding were optimized using the NOW toolbox68. The in vivo data were corrected for Gibbs ringing69, signal drift70, motion and eddy currents71, susceptibility distortions72, and gradient non-uniformity (image distortion and B-matrix correction)28.

Experiment 1: Quantitative comparison on simulated data

All four fitting methods were compared on simulated data. To mimic realistic conditions, Gaussian noise was added during the simulation of the synthetic dataset (SNR = [20,50]). For this experiment, only lmax = 2 was considered, which is the highest SH order the supervised NN method can fit. As the optimized acquisition protocol contains only non-negative bΔ values, the SM forward model was calculated following the analytical approach.

Experiment 2: Rician noise bias

The effect of Rician noise bias was investigated by introducing noise sampled from a Rician distribution (SNR = [20,50]), rather than Gaussian. As in experiment 1, only lmax = 2 was considered. Parameter estimation was performed using both a standard MSE loss and a Rician likelihood loss39, and the resulting estimations were compared to the ground truth. The integral in the SM forward model is calculated with the analytical approach.

Experiment 3: Qualitative comparison on in vivo data

To test the INR on in vivo data, SM parameter estimation was performed on the dataset from section ‘In vivo data acquisition’ using the Rician likelihood loss. The MP-PCA noise map for the SMI fitting was estimated on the unprocessed data with b-values up to 1300 s/mm². Only lmax = 2 was considered. As the acquisition protocol contained negative bΔ values, the SM forward model was calculated following the numerical integration approach. The results are compared to the estimates from the methods in section ‘Comparisons’.

Experiment 4: Estimation of SH order up to lmax = 8

The performance of the proposed method to model higher order FODs is investigated by fitting the INR with SH orders of lmax = [2, 4, 6, 8], for four different datasets. The noiseless synthetic dataset and the SNR 50 and 20 synthetic data provide insight in the accuracy of FOD estimation in noisy data, while the in vivo dataset qualitatively shows the capability of the INR to estimate higher order FODs on realistic datasets.

Experiment 5: Effect of gradient non-uniformity correction on SM parameter estimation

The impact of gradient non-uniformities on SM parameter estimation was assessed using in vivo data. For each voxel, the b-value, bΔ, and b-vectors were recalculated to account for scanner-specific gradient deviations following ref. 28. These corrected effective acquisition parameters were then used to fit the model. This analysis was performed for lmax = 2. The differences between the corrected and uncorrected parameter estimates were subsequently evaluated. Gradient non-uniformity correction was implemented using the MSE loss function and compared to gradient non-uniformity correction with NLLS.
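
A voxel-wise recalculation of this kind can be sketched with the gradient coil tensor formulation of ref. 28, in which a voxel-specific 3 × 3 matrix L relates the nominal to the actual gradient and the effective B-tensor is L B Lᵀ. The code is an assumption-laden illustration: the function names are hypothetical, the example matrix L is made up, and the extraction of an effective bΔ from the corrected B-tensor (which requires an eigendecomposition) is left out.

```python
import math


def matvec(M, v):
    return [sum(M[i][k] * v[k] for k in range(3)) for i in range(3)]


def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def transpose(M):
    return [[M[j][i] for j in range(3)] for i in range(3)]


def nominal_btensor(b, b_delta, u):
    """Axisymmetric B-tensor b * [(1 - b_delta) / 3 * I + b_delta * u u^T]."""
    return [[b * ((1.0 - b_delta) / 3.0 * (i == j) + b_delta * u[i] * u[j])
             for j in range(3)] for i in range(3)]


def effective_btensor(L, B):
    """Effective B-tensor L B L^T at a voxel with gradient coil tensor L."""
    return matmul(matmul(L, B), transpose(L))


def effective_lte(L, b, u):
    """Effective b-value and unit direction for linear tensor encoding."""
    g = matvec(L, u)
    norm2 = sum(c * c for c in g)
    norm = math.sqrt(norm2)
    return b * norm2, [c / norm for c in g]


if __name__ == "__main__":
    L = [[1.05, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.98]]  # made-up deviation
    print(effective_lte(L, 1000.0, [1.0, 0.0, 0.0]))
    B_eff = effective_btensor(L, nominal_btensor(1000.0, 0.0, [0.0, 0.0, 1.0]))
    print(sum(B_eff[i][i] for i in range(3)))  # effective b-value (trace)
```

Because the INR evaluates the forward model separately at each coordinate, voxel-specific acquisition parameters of this kind can be passed to the signal equation without changing the network itself.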

Experiment 6: Implicit neural representation for spatial interpolation

To obtain more detailed insight into the continuous spatial representation of the dataset provided by an INR, the INR fit on the Gaussian noise, SNR 50 synthetic dataset at lmax = 2 was sampled at 8× the original resolution in every dimension, resulting in 0.25 mm isotropic voxels. The parameter map of p2 was visualized in the coronal and sagittal planes for the original-resolution output of the model and for the linear, cubic and INR upsampling.
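
Sampling the fitted INR at a higher resolution only requires a denser set of query coordinates. The sketch below generates sub-voxel centres in units of the original voxel index, assuming a voxel-centre convention in which the upsampled grid covers the same field of view. The function names are hypothetical, and the resulting coordinates would still have to be scaled with the same transformation that was applied to the training coordinates.

```python
from itertools import product


def upsampled_centres(n_voxels, factor):
    """Sub-voxel centres along one axis, in units of the original voxel index."""
    return [(j + 0.5) / factor - 0.5 for j in range(n_voxels * factor)]


def query_grid(shape, factor):
    """Iterate over the (x, y, z) coordinates of the upsampled grid."""
    axes = [upsampled_centres(n, factor) for n in shape]
    return product(*axes)


if __name__ == "__main__":
    voxel_mm, factor = 2.0, 8
    print(voxel_mm / factor)  # spacing of the upsampled grid in mm
    print(sum(1 for _ in query_grid((2, 2, 2), factor)))  # number of query points
```

No retraining is involved: the same network weights are evaluated at the new coordinates, in contrast to linear or cubic interpolation, which operate on the parameter maps at the original resolution.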

Statistics and reproducibility

All statistical analyses were conducted using custom Python scripts. Comparisons between ground truth and estimated SM parameters on simulated data, as well as on in vivo data, were performed using Pearson’s correlation coefficient and RMSE. The analysis workflow relied on SciPy (v1.16.1), scikit-learn (v1.7.1) and NumPy (v2.3.2). No inter-subject statistical analyses were carried out.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.

Data availability

Code availability

The code used to generate the results in this paper is publicly available through GitHub at: https://github.com/tomhend/Standard_model_INR.

References

Alexander, D. C., Dyrby, T. B., Nilsson, M. & Zhang, H. Imaging brain microstructure with diffusion MRI: practicality and applications. NMR Biomed. 32, 1–26 (2019).

Lampinen, B. et al. Probing brain tissue microstructure with MRI: principles, challenges, and the role of multidimensional diffusion-relaxation encoding. NeuroImage 282, 120338 (2023).

Jelescu, I. O., Palombo, M., Bagnato, F. & Schilling, K. G. Challenges for biophysical modeling of microstructure. J. Neurosci. Methods 344, 108861 (2020).

Novikov, D. S., Fieremans, E., Jespersen, S. N. & Kiselev, V. G. Quantifying brain microstructure with diffusion MRI: Theory and parameter estimation. NMR Biomed. 32, 1–53 (2019).

Westin, C.-F. et al. Measurement tensors in diffusion mri: Generalizing the concept of diffusion encoding. In Golland, P., Hata, N., Barillot, C., Hornegger, J. & Howe, R. (eds.) Medical Image Computing and Computer-Assisted Intervention – MICCAI 2014, 209–216 (Springer International Publishing, Cham, 2014).

Alotaibi, A. et al. Investigating microstructural changes in white matter in multiple sclerosis: A systematic review and meta-analysis of neurite orientation dispersion and density imaging. Brain Sci. 11, 1151 (2021).

Parker, T. D. et al. Cortical microstructure in young-onset alzheimer’s disease using neurite orientation dispersion and density imaging. Hum. Brain Mapp. 39, 3005–3017 (2018).

Kim, D.-H., Laun, D. H., Kamesh, N. B. et al. Diffusion microstructure imaging of the substantia nigra in Parkinson’s disease using mean apparent propagator MRI. NeuroImage Clin. 12, 451–459 (2016).

Zhang, H., Schneider, T., Wheeler-Kingshott, C. A. & Alexander, D. C. Noddi: Practical in vivo neurite orientation dispersion and density imaging of the human brain. NeuroImage 61, 1000–1016 (2012).

Tournier, J.-D., Calamante, F. & Connelly, A. Robust determination of the fibre orientation distribution in diffusion MRI: Non-negativity constrained super-resolved spherical deconvolution. NeuroImage 35, 1459–1472 (2007).

Jelescu, I. O., Veraart, J., Fieremans, E. & Novikov, D. S. Degeneracy in model parameter estimation for multi-compartmental diffusion in neuronal tissue. NMR Biomed. 29, 33–47 (2016).

Tournier, J. D., Calamante, F. & Connelly, A. Robust determination of the fibre orientation distribution in diffusion MRI: Non-negativity constrained super-resolved spherical deconvolution. NeuroImage 35, 1459–1472 (2007).

Jeurissen, B., Tournier, J.-D., Dhollander, T., Connelly, A. & Sijbers, J. Multi-tissue constrained spherical deconvolution for improved analysis of multi-shell diffusion MRI data. NeuroImage 103, 411–426 (2014).

Novikov, D. S., Veraart, J., Jelescu, I. O. & Fieremans, E. Rotationally-invariant mapping of scalar and orientational metrics of neuronal microstructure with diffusion MRI. NeuroImage 174, 518–538 (2018).

Coelho, S. et al. Reproducibility of the Standard Model of diffusion in white matter on clinical MRI systems. NeuroImage 257 (2022).

Reisert, M., Kellner, E., Dhital, B., Hennig, J. & Kiselev, V. G. Disentangling micro from mesostructure by diffusion MRI: A Bayesian approach. NeuroImage 147, 964–975 (2017).

Kaden, E., Kelm, N. D., Carson, R. P., Does, M. D. & Alexander, D. C. Multi-compartment microscopic diffusion imaging. NeuroImage 139, 346–359 (2016).

de Almeida Martins, J. P. et al. Neural networks for parameter estimation in microstructural MRI: Application to a diffusion-relaxation model of white matter. NeuroImage 244 (2021).

Diao, Y. & Jelescu, I. Parameter estimation for wmti-watson model of white matter using encoder-decoder recurrent neural network. Magn. Reson. Med. 89, 1193–1206 (2023).

Liao, Y. et al. Mapping tissue microstructure of brain white matter in vivo in health and disease using diffusion MRI. Imaging Neurosci. 2, 1–17 (2024).

Gyori, N. G., Palombo, M., Clark, C. A., Zhang, H. & Alexander, D. C. Training data distribution significantly impacts the estimation of tissue microstructure with machine learning. Magn. Reson. Med. 87, 932–947 (2022).

Hendriks, T., Vilanova, A. & Chamberland, M. Neural spherical harmonics for structurally coherent continuous representation of diffusion MRI signal. In International Workshop on Computational Diffusion MRI, 1–12 (Springer, 2023).

Consagra, W., Ning, L. & Rathi, Y. Neural orientation distribution fields for estimation and uncertainty quantification in diffusion MRI. Med. Image Anal. 93, 103105 (2024).

Hendriks, T., Vilanova, A. & Chamberland, M. Implicit neural representation of multi-shell constrained spherical deconvolution for continuous modeling of diffusion MRI. Imaging Neuroscience 3, imag_a_00501 (2025).

Girard, G. et al. AxTract: Toward microstructure-informed tractography. Hum. Brain Mapp. 38, 5485–5500 (2017).

Reisert, M., Kiselev, V. G., Dihtal, B., Kellner, E. & Novikov, D. S. Mesoft: Unifying diffusion modelling and fiber tracking. In Golland, P., Hata, N., Barillot, C., Hornegger, J. & Howe, R. (eds.) Medical Image Computing and Computer-Assisted Intervention – MICCAI 2014, 201–208 (Springer International Publishing, Cham, 2014).

París, G. et al. Thermal noise lowers the accuracy of rotationally invariant harmonics of diffusion MRI data and their robustness to experimental variations. Magn. Reson. Med. https://onlinelibrary.wiley.com/doi/abs/10.1002/mrm.70035 (2025).

Bammer, R. et al. Analysis and generalized correction of the effect of spatial gradient field distortions in diffusion-weighted imaging. Magn. Reson. Med. 50, 560–569 (2003).

Daducci, A. et al. Accelerated microstructure imaging via convex optimization (amico) from diffusion MRI data. NeuroImage 105, 32–44 (2015).

Harms, R., Fritz, F., Tobisch, A., Goebel, R. & Roebroeck, A. Robust and fast nonlinear optimization of diffusion MRI microstructure models. NeuroImage 155, 82–96 (2017).

Mesri, H. Y., David, S., Viergever, M. A. & Leemans, A. The adverse effect of gradient nonlinearities on diffusion MRI: From voxels to group studies. NeuroImage 205, 116127 (2020).

Mohammadi, S. et al. The effect of local perturbation fields on human DTI: Characterisation, measurement and correction. NeuroImage 60, 562–570 (2012).

Morez, J., Sijbers, J., Vanhevel, F. & Jeurissen, B. Constrained spherical deconvolution of nonspherically sampled diffusion MRI data. Hum. Brain Mapp. 42, 521–538 (2021).

Jones, D. K. et al. Microstructural imaging of the human brain with a ‘super-scanner’: 10 key advantages of ultra-strong gradients for diffusion MRI (2018).

Coelho, S. et al. What if each voxel were measured with a different diffusion protocol? Preprint at https://arxiv.org/abs/2506.22650 (2025).

Eichner, C. et al. Real diffusion-weighted MRI enabling true signal averaging and increased diffusion contrast. NeuroImage 122, 373–384 (2015).

Koay, C. G., Özarslan, E. & Pierpaoli, C. Probabilistic identification and estimation of noise (PIESNO): A self-consistent approach and its applications in MRI. J. Magn. Reson. 199, 94–103 (2009).

Álvaro, P. et al. Optimisation of quantitative brain diffusion-relaxation MRI acquisition protocols with physics-informed machine learning. Med. Image Anal. 94, 103134 (2024).

Parker, C. et al. Rician likelihood loss for quantitative MRI with self-supervised deep learning. NMR Biomed. 38, e70136 (2025).

Kunz, N., da Silva, A. R. & Jelescu, I. O. Intra- and extra-axonal axial diffusivities in the white matter: Which one is faster? NeuroImage 181, 314–322 (2018).

Dhital, B., Reisert, M., Kellner, E. & Kiselev, V. G. Intra-axonal diffusivity in brain white matter. NeuroImage 189, 543–550 (2019).

Coelho, S., Pozo, J. M., Jespersen, S. N., Jones, D. K. & Frangi, A. F. Resolving degeneracy in diffusion MRI biophysical model parameter estimation using double diffusion encoding. Magn. Reson. Med. 82, 395–410 (2019).

Consagra, W., Ning, L. & Rathi, Y. A deep learning approach to multi-fiber parameter estimation and uncertainty quantification in diffusion MRI. Med. Image Anal. 103537 (2025).

Jallais, M. & Palombo, M. Introducing μguide for quantitative imaging via generalized uncertainty-driven inference using deep learning. eLife 13, RP101069 (2024).

Tax, C. M. et al. Measuring compartmental t2-orientational dependence in human brain white matter using a tiltable RF coil and diffusion-t2 correlation MRI. Neuroimage 236, 117967 (2021).

Veraart, J., Novikov, D. S. & Fieremans, E. Te dependent diffusion imaging (teddi) distinguishes between compartmental t2 relaxation times. NeuroImage 182, 360–369 (2018).

Palombo, M. et al. Sandi: A compartment-based model for non-invasive apparent soma and neurite imaging by diffusion MRI. NeuroImage 215, 116835 (2020).

Daducci, A., Dal Palù, A., Lemkaddem, A. & Thiran, J.-P. Commit: Convex optimization modeling for microstructure informed tractography. IEEE Trans. Med. Imaging 34, 246–257 (2015).

Li, Z. et al. DIMOND: DIffusion Model OptimizatioN with Deep Learning. Adv. Sci. (2024).

Tancik, M. et al. Learned initializations for optimizing coordinate-based neural representations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2846–2855 (2021).

Wang, Y. et al. Scarf: Scalable continual learning framework for memory-efficient multiple neural radiance fields. In Computer graphics forum, vol. 43, e15255 (Wiley Online Library, 2024).

Dwedari, M. M. et al. Estimating neural orientation distribution fields on high-resolution diffusion MRI scans. In Linguraru, M. G. et al. (eds.) Medical Image Computing and Computer Assisted Intervention – MICCAI 2024, 307–317 (Springer Nature Switzerland, Cham, 2024).

Spears, T. & Fletcher, P. T. Learning spatially-continuous fiber orientation functions. In 2024 IEEE International Symposium on Biomedical Imaging (ISBI), 1–5 (IEEE, 2024).

Jespersen, S. N., Kroenke, C. D., Østergaard, L., Ackerman, J. J. & Yablonskiy, D. A. Modeling dendrite density from magnetic resonance diffusion measurements. NeuroImage 34, 1473–1486 (2007).

Kroenke, C. D., Ackerman, J. J. & Yablonskiy, D. A. On the nature of the Na+ diffusion attenuated MR signal in the central nervous system. Magn. Reson. Med. 52, 1052–1059 (2004).

Fieremans, E., Jensen, J. H. & Helpern, J. A. White matter characterization with diffusional kurtosis imaging. NeuroImage 58, 177–188 (2011).

Basser, P. J., Mattiello, J. & LeBihan, D. Mr diffusion tensor spectroscopy and imaging. Biophys. J. 66, 259–267 (1994).

Lampinen, B. et al. Towards unconstrained compartment modeling in white matter using diffusion-relaxation MRI with tensor-valued diffusion encoding. Magn. Reson. Med. 84, 1605–1623 (2020).

Tancik, M. et al. Fourier features let networks learn high-frequency functions in low-dimensional domains. Adv. Neural Inf. Process. Syst. 33, 7537–7547 (2020).

Dugas, C., Bengio, Y., Bélisle, F., Nadeau, C. & Garcia, R. Incorporating second-order functional knowledge for better option pricing. Advances in Neural Inform. Process. Syst. 13 (2000).

Tournier, J.-D. et al. MRtrix3: A fast, flexible and open software framework for medical image processing and visualisation. NeuroImage 202, 116137 (2019).

Gómez, P., Toftevaag, H. H. & Meoni, G. torchquad: Numerical integration in arbitrary dimensions with PyTorch. J. Open Source Softw. 6, 3439 (2021).

Veraart, J. et al. Denoising of diffusion MRI using random matrix theory. NeuroImage 142, 394–406 (2016).

Tian, Q. et al. Comprehensive diffusion MRI dataset for in vivo human brain microstructure mapping using 300 mt/m gradients. figshare. Collection https://doi.org/10.6084/m9.figshare.c.5315474.v1 (2022).

Tian, Q. et al. Comprehensive diffusion MRI dataset for in vivo human brain microstructure mapping using 300 mT/m gradients. Sci. Data 9 (2022).

Garyfallidis, E. et al. Dipy, a library for the analysis of diffusion MRI data. Front. Neuroinform. 8, 8 (2014).

Fischl, B. Freesurfer. NeuroImage 62, 774–781 (2012).

Sjölund, J. et al. Constrained optimization of gradient waveforms for generalized diffusion encoding. J. Magn. Reson. 261, 157–168 (2015).

Kellner, E., Dhital, B., Kiselev, V. G. & Reisert, M. Gibbs-ringing artifact removal based on local subvoxel-shifts. Magn. Reson. Med. 76, 1574–1581 (2016).

Vos, S. B. et al. The importance of correcting for signal drift in diffusion MRI. Magn. Reson. Med. 77, 285–299 (2017).

Nilsson, M., Szczepankiewicz, F., van Westen, D. & Hansson, O. Extrapolation-based references improve motion and eddy-current correction of high b-value DWI data: Application in Parkinson’s disease dementia. PLOS ONE 10, 1–22 (2015).

Andersson, J. L., Skare, S. & Ashburner, J. How to correct susceptibility distortions in spin-echo echo-planar images: application to diffusion tensor imaging. NeuroImage 20, 870–888 (2003).

Hendriks, T. et al. Simulated DWI files for experiments of: Implicit neural representations for accurate estimation of the standard model of white matter https://doi.org/10.5281/zenodo.17092773 (2025).

Acknowledgements

We thank Dennis Klomp for input to the manuscript, and Bram Kraaijeveld for the help creating graphics. CMWT is supported by a Vidi grant (21299) from the Dutch Research Council (NWO) and a Sir Henry Wellcome Fellowship (215944/Z/19/Z). Data were provided [in part] by the Human Connectome Project, MGH-USC Consortium (Principal Investigators: Bruce R. Rosen, Arthur W. Toga and Van Wedeen; U01MH093765) funded by the NIH Blueprint Initiative for Neuroscience Research grant; the National Institutes of Health grant P41EB015896; and the Instrumentation Grants S10RR023043, 1S10RR023401, 1S10RR019307. This research was funded in whole, or in part, by the Wellcome Trust [215944]. For the purpose of Open Access, the author has applied a CC BY public copyright license to any Author Accepted Manuscript version arising from this submission.

Author information

Authors and Affiliations

Contributions

Conception: T.H., G.A., A.V., M.C., C.T.; dataset generation: T.H., G.A., C.T.; analysis and interpretation of data: T.H., G.A., E.V., A.V., M.C., C.T.; drafting manuscript: T.H., G.A., M.C., C.T.; revising manuscript: T.H., G.A., E.V., A.V., M.C., C.T.; funding: A.V., M.C., C.T.; supervision: E.V., A.V., M.C., C.T. Every author has approved the submitted version, and is personally accountable for their own contributions.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Biology thanks Jon Haitz Legarreta, Benjamin Towle, and the other anonymous reviewer(s) for their contribution to the peer review of this work. Primary Handling Editors: Sahar Ahmad and Jasmine Pan. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hendriks, T., Arends, G., Versteeg, E. et al. Implicit neural representations for accurate estimation of the Standard Model of white matter. Commun Biol 9, 120 (2026). https://doi.org/10.1038/s42003-025-09399-5

DOI: https://doi.org/10.1038/s42003-025-09399-5