Abstract

Sensitivity to small changes in the environment is crucial for many real-world tasks, enabling living and artificial systems to make correct behavioral decisions. It has been shown that such sensitivity is maximized when a system operates near the critical point of a phase transition. However, proximity to criticality introduces large fluctuations and diverging timescales. Hence, to leverage the maximal sensitivity, it could require impractically long integration periods. Here, we analytically and computationally demonstrate how the optimal tuning of a recurrent neural network is determined given a finite integration time. Rather than maximizing the theoretically available sensitivity, we find networks attain different sensitivities depending on the available time. Consequently, the optimal dynamic regime can shift away from criticality when integration times are finite, highlighting the necessity of incorporating finite-time considerations into studies of information processing.

Similar content being viewed by others

Introduction

Living systems must efficiently encode relevant environmental information while being sensitive to small changes. Increasing evidence suggests that many natural systems tackle this challenge by operating near a critical phase transition1. Signatures of near-critical dynamics have been observed across different scales, from collective behaviors in flocks of birds2 to cellular diversity in stem cell populations3, and in the brain4,5,6,7,8. The proposed advantage of operating near a critical point is that phase transitions endow systems with computational benefits, including elevated sensitivity and correlation9,10, maximized dynamic range11, enhanced information flow12,13,14, optimal input representation15,16, and a diverse spectrum of dynamical responses17.

Operating in the vicinity of a critical phase transition offers significant advantages but comes with inherent challenges. While enhanced sensitivity of critical systems makes them ideal for some tasks, it also increases their vulnerability to noise, further amplified by critical slowing down18,19. A recent example of this is decision-making by integrated Ising models, where operating at a distance from a phase transition allows control of the trade-off between reaction time and error rate20. More generally, such a trade-off can be formulated as an optimization problem with a control parameter λ (in our case, changing the distance to criticality) that regulates both beneficial gain G(λ) and detrimental loss L(λ) with some weighting factor γ, i.e.,

Both gains and losses depend on the particularities of the system. Thus, the optimal tuning of λ, and thereby the optimal distance to criticality, will have to depend on the specific system and requirements of each task21. For example, fish schools balance reaction time and energy cost in their alarmed state22, while neuromorphic computing and artificial networks adjust their state to match memory requirements for optimal functioning23,24. Despite these observations, it remains a challenge to quantitatively assess the trade-off between gain and loss that would determine an optimal distance from criticality.

A famous example of how criticality can assist encoding in the brain is the dynamic range. The dynamic range quantifies the range of continuous input features that can be encoded by the nonlinear firing-rate response of a neuron. It is commonly defined as the logarithmic range of inputs h for which the output is between the 10th and 90th percentile of all outputs11, i.e., \(\Delta =10\,{\log }_{10}({h}_{0.9}/{h}_{0.1})\), selected to exclude responses that would not be distinguishable from the noise floor at low activity and saturation regime at high activity. Examples include encoding of correlations in the visual field25, odor concentration26, and sound level27,28.

Unfortunately, the dynamic range of a single cell is usually much smaller than the dynamic range of perception. This dynamic-range problem can be solved with the emergent properties from recurrent interactions, which were shown to drastically increase the dynamic range as the network approaches criticality11,29,30. Exploiting close-to-critical emergence was also observed in structures with heterogeneous31, modular32, or hierarchical33 organization. However, previous work neglected the emerging close-to-criticality population activity fluctuations that can hinder confidence in discrimination.

In this work, we combine analytical calculations, numerical simulations, and machine-learning approximations to quantify the optimal balance between input discrimination confidence and the sensitivity of a recurrent neural network, controlled by its recurrent interaction strength λ and the timescale T of a leaky readout (Fig. 1). To formalize this optimization problem, we introduce two generalized measures of dynamic range derived from the discriminability of inputs and provide analytical results for the limiting cases of instantaneous readout and infinite integration time. We find that the optimal state, λ*, of the network depends on the required confidence and integration time, with a safety margin from the precise critical point for all finite integration times.

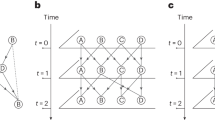

a Illustration of the neural reservoir: a subset of recurrently connected neurons with the largest eigenvalue of the connectivity matrix equal λ receives Poisson spikes with rate h; the output integrates spikes of a subset of neurons with a timescale T and is subject to noise η. b Temporal evolution of output for different λ and T comparing the responses to two different inputs h1 < h2 with mean responses (marked by the arrows on the y-axis) fixed for all panels at the same values with 〈oT〉(h1) < 〈oT〉(h2). Solid lines are the pure output response of the network, and opaque areas include noise. Gray regions highlight the times when the output responses differ from the order of their mean responses. The minimal discrimination error of the two underlying distributions is given by ε, cf. Eq. (3). c Response curve indicating output values to logarithmic input rates. The solid black line shows the mean response, and the gray shading indicates the strength of fluctuations stemming from neural activity and output noise. Output fluctuations are further illustrated as gray distributions on the right (see green line to follow specific input mapped to output distribution). Inputs are called discriminable when their output distributions have a sufficiently small overlap (see blue areas on the right). The first inputs that are discriminable from zero and full activity mark the dynamic range (black dashed lines). From these, we can construct sets of discriminable inputs marked by the black triangles (see text for details).

Results

We consider a random network of probabilistic spiking neurons that can be activated externally and recurrently (see “Methods” section for details). To mimic processing and transmission, only a random subset of neurons receives input, while another random subset of neurons serves as output (Fig. 1a). Input neurons receive uncorrelated, independent Poisson spike trains with a rate h, which represents the input strength. The recurrent interactions are defined by a random sparse connectivity matrix W for which we can control the largest eigenvalue λ and thereby the fluctuations of recurrent activity. A leaky readout integrates output neurons’ activity with timescale T, which can be expressed as a sum of exponential kernels

where \({t}_{i}^{k}\) is the timing of the k-th spike of neuron i. Here, we added a small Gaussian noise \(\eta \sim {{\mathcal{N}}}(0,{\sigma }^{2})\) to be able to technically treat δ-distributed outputs from absorbing states or mean-field solutions in our later analysis, with only a minor effect on typical output distributions. Depending on the parameters of the recurrent interactions, the input ordering would be more or less observable from the output activity, for an external observer or for neurons further up in the processing hierarchy.

For the extreme cases of T → ∞ or T → 0, we can solve the model analytically and obtain closed-form solutions for P(oT∣h). For T → ∞ this can be achieved using a mean-field approach, and for T → 0 we solve a Fokker–Planck equation (see the “Methods” section). For intermediate integration times, 0 < T < ∞, we have to rely on simulations to obtain the output distributions. This intermediate regime is particularly relevant for biological systems, as the intrinsic timescales of the cortical neurons were found to be in the range of about 50–500 ms34,35,36. Comparable timescales can also arise from the slow accumulation of activity signals that inform or trigger synaptic plasticity, for instance, through calcium decay following neural spiking, typically lasting around 500 ms37.

The sensitivity of the system is controlled by the largest eigenvalue λ of the connectivity matrix38. The recurrent network we consider has a critical non-equilibrium phase transition for h → 0 at λc = 132,38, where both sensitivity and correlated fluctuations are strongest. Reducing λ reduces recurrent network fluctuations (Fig. 1b). This generates a trade-off: close to criticality, we expect optimized information processing properties for infinite integration time but simultaneously increased fluctuations in the finite-time output oT(t). In the following, we explore how to quantify this trade-off depending on the available integration time.

As a first step, we illustrate how the recurrent network’s largest eigenvalue and the readout integration time affect the representation of inputs (Fig. 1b). Each panel shows the outputs from two identical copies of the network with given λ and T in response to the two stimuli with rates h1 < h2, which are chosen such that the mean-field outputs 〈oT〉(h2/1) = 0.5 ± Δo/2 are easily distinguishable with 〈oT〉(h2) − 〈oT〉(h1) = Δo = 5σ. Assume the observer’s task is to find which network received the stronger input. This can be solved by deciding that a stronger output indicates a stronger input. We shade gray the times where, following this strategy, the observer will make a mistake. We observe only small fluctuations when λ is far from the critical point (λ = 0.9, left column), irrespective of the integration time (vertical axis), and the inputs can be perfectly assigned from the output. When shifting λ closer to the critical point, fluctuations increase such that with insufficient integration time, the errors appear but can be remedied when the integration time is increased.

To formalize this intuition, we consider the input-output distribution P(oT(t)∣h) to observe an output oT(t) in response to a specific input h at time t. In the following, we will omit the time argument for brevity. Now, if two inputs h1 and h2 were equally likely to be presented, then the overlap between P(oT∣h1) and P(oT∣h2) quantifies the minimal discrimination error39 of an ideal observer:

Computing this error for the stimuli in our example (Fig. 1c), we find that \({{\mathcal{E}}}\) increases with λ and decreases with T, which matches our just-gained intuition. In our example, variability in oT comes from observing stochastic, correlated dynamics (λ) with a finite integration time T plus noise. However, our logic remains the same for other causes of variability.

As a next step, we define a set of discriminable inputs that can be sufficiently well distinguished from observing only the output. We call two inputs ε-discriminable if the overlap of the response distributions generated by the inputs is smaller than an error threshold ε. Formally speaking, a set of ε-discriminable inputs \({{\mathcal{H}}}=\{{h}_{1},{h}_{2},\ldots ,{h}_{{n}_{d}}\}\), with h0 = 0 and \({h}_{{n}_{d}+1}=:{h}_{\infty }\), is a set for which \({{\mathcal{E}}}({h}_{i},{h}_{j})\le \varepsilon\) for all i ≠ j, i, j ∈ [0, nd + 1], where h∞ is an input that generated saturated output 〈oT〉 = 1. Finding the maximal (in the sense of cardinality) set of discriminable inputs is a close-packing problem without a unique solution. To circumvent this complication, we propose the following algorithm: start by finding \({h}_{1}^{{{\rm{left}}}}=\min \{h > {h}_{0}=0:{{\mathcal{E}}}({h}_{0},h)\le \varepsilon \}\), and then proceed by induction to \({h}_{i+1}^{{{\rm{left}}}}=\min \{h > {{h}}_{i}^{{{\rm{left}}}}:{{\mathcal{E}}}({h}_{i}^{{{\rm{left}}}},h )\le \varepsilon \}\), (Fig. 1, see the “Methods” section for more details). We stop at the first i such that \({{\mathcal{E}}}({h}_{i+1}^{{{\rm{left}}}},{h}_{\infty }) > \varepsilon\) and get this way \({n}_{d}^{{{\rm{left}}}}=i\). We repeat the same procedure starting from the right with \({h}_{1}^{{{\rm{right}}}}=\max \{h < {h}_{\infty }:{{\mathcal{E}}}(h,{h}_{\infty })\le \varepsilon \}\) and iterate until \({{\mathcal{E}}}({h}_{i+1}^{{{\rm{right}}}},0) > \varepsilon\) to find \({n}_{d}^{{{\rm{right}}}}\). Our final estimate of the discriminable inputs cardinality is the average \({n}_{d}=1/2({n}_{d}^{{{\rm{left}}}}+{n}_{d}^{{{\rm{right}}}})\).

While this algorithm is numerically straightforward, it comes with a technical challenge: it requires iterative evaluation of P(oT∣h) for continuous values of h. This is not a problem for our analytical solutions, but it becomes intractable for the actual numerical model because each P(oT∣h) is a result of a long simulation. To tackle this, we measure the distribution P(aT∣h) of pure network activity aT(t) for a broad range of h, T, and λ values, notice that they can be well approximated by a Beta distribution Beta(α,β), and train a neural network to learn the parameters (α, β) as a function of (T, h, λ) to interpolate between them (see the “Methods” section).

From the set of ε-discriminable inputs, we can now construct measures for information processing capabilities using finite integration time (Fig. 2). Let us start with the number of ε-discriminable inputs, nd (Fig. 2a). Because the task requires sufficient coupling between the input and output population, nd is very small with λ = 0 and first increases with λ. However, nd exhibits a maximum at a T-dependent subcritical λ < 1, above which it decays for λ → 1. Our numerical results interpolate between the analytical predictions for T → ∞ (solid line) and T → 0, indicating that every finite integration time will have an optimal 0 < \(\lambda \ast\) < 1, while for infinite integration time, nd is bound by the Gaussian noise of the readout.

Discriminating input rates from a noisy output (cf. Fig. 1), we observe that a the number of discriminable inputs, as well as b the dynamic range, are maximal for sub-critical networks (λ < 1). With increasing timescale T (differently colored lines), the maximum locations λ* (specific to the observables, see insets) move closer to the critical point. Analytic solutions for the limits T → 0 and T → ∞ are shown as black dotted and solid lines, respectively. Parameters: N = 104, K = 102, μ = 0.2, ν = 0.2, σ = 10−2, ε = 10−1.

Let us now turn to the dynamic range, which can be naturally generalized to account for fluctuations by choosing as bounds the first and last inputs that can be discriminated from the boundaries. We thus define our dynamic range as

where the \({h}_{1}^{{{\rm{left}}}}\) and \({h}_{1}^{{{\rm{right}}}}\) are the minimal and maximal inputs that can be discriminated from h0 = 0 and h∞, respectively, with error not surpassing ε. Δ depends on the specific choices of the discrimination error ε and the variance of the Gaussian noise σ, which we can be tuned to recover the typical 10–90% bounds of the established dynamic range11 for T → ∞. For finite T, our numerical estimates interpolate well between the analytical bounds (see Supplementary Note 2 for comparison with full readout). Importantly, a finite T results in a substantial reduction of the dynamic range in the vicinity of the critical point, i.e., for λ ≈ 1, but only a slight reduction at small λ. As a result, the dynamic range develops a T-dependent maximum, which is, however, different from the maximum of nd (insets in Fig. 2).

Discussion

Our results establish a connection between sensitivity (governed by the distance to criticality), confidence (capturing the probability of wrong classification), and integration time in a recurrent network of excitatory stochastic neurons. While we primarily focused on discriminability as a function of the distance to criticality for a given integration time, we can also make statements about how T and ε affect the discriminability. For any fixed λ (vertical slice in Fig. 2), we find that both measures of discriminability increase monotonically with T. Also, by construction, the discriminability has to increase monotonically with the discrimination error ε. Still, both T and ε affect the peaks in our measures of discriminability and thereby define the optimal state. For finite T, the optimal balance is achieved by subcritical networks.

We expect that our insight about optimal sensitivity away from criticality will occur similarly in other stochastic systems, where emergent properties near criticality are beneficial for solving tasks in the presence of increasing stochastic fluctuations. On which side of the transition this optimum lies will, however, depend on both the task and the type of phase transition, e.g., absorbing-to-active, transition to chaos, or a bifurcation—see ref. 1 for an overview. For example, in the case of transition to chaos, it was shown that deviations toward the supercritical side, deeper into the chaotic regime, allow for slower integration times and are thus beneficial in the presence of noise40. Additionally, emergent critical fluctuations do not necessarily have to align with the neural population activity; examples include a large dispersion of correlations41 or low-dimensional subspaces8.

Since near-critical dynamics imply a finite autocorrelation time9,10, our results align well with the observation of finite timescales in neurophysiological data42,43,44. While there is clear evidence for sensory integration, many perceptual tasks are solved in short times of less than a second42. This limited temporal integration can be due to non-stationary information rates, temporal correlations (such as in our recurrent dynamics), or leaky integrators (such as in our readout). In our model, the recurrent autocorrelation timescale can be estimated assuming a linear autoregressive representation21,43, yielding \(\tau \approx -\Delta t/ln(\lambda )\). The confidence-dependent optima in Fig. 2 thus correspond to τ ≈ 10 − 100 ms, assuming a timestep Δt = 1 ms, or to τ ≈ 50–500 ms for a timestep Δt = 5 ms that could comprise various propagation delays and raise times. This is consistent with empirical evidence of cortical timescales in the range of 50 ms–1 s34,35,36. Also, it is consistent with the recently observed adaptation to task requirements23,44, which in our case would correspond to a change in ε and T.

On the side of artificial networks, we believe that our results provide a new perspective on the reservoir-computing paradigm with typically memory-less readout signals45. While noise-free continuous formulations, like the echo-state network46, allow for reading out information about the past from standard nonlinear dynamic considerations, any system that comes with noise could benefit from integrating the readout over time, as was demonstrated recently for active particles47. In light of our results, an instantaneous readout would require a larger distance to criticality for optimal discriminability. However, leaky readout units could allow the reservoir to be tuned closer to criticality, thereby benefiting from the edge-of-chaos sensitivity.

To summarize, given a readout with finite integration time, we find maximal discriminability for close-to-critical dynamics. The intuitive reason for our finding is that emergent temporal fluctuations close to a critical phase transition can smear out the signal if they are aligned with the readout, and thereby hinder discrimination. Since the network sensitivity is maximal at criticality, this implies a trade-off between sensitivity and discriminability. Our results thereby add to the hypothesis that living systems need to adjust their state to optimally balance opposing demands depending on the specific processing tasks at hand21,44,48,49.

Methods

Neural network model

We consider a network of N = 104 binary spiking neurons, each described by a state variable si that can be active (si(t) = 1) or inactive (si(t) = 0). Time evolves in discrete steps of Δt. Neurons can be activated by recurrent input from other neurons with probability prec[si(t + Δt) = 1∣s(t)] = f(∑jwijsj(t)), where wij are directed coupling weights (not symmetric) and f(x) is a rectified linear function with f(x) = 0 for x < 0, f(x) = x for 0 < x < 1, and f(x) = 1 for x > 1. The connectivity matrix \(W=({w}_{ij})\) is a sparse matrix with mean degree K = 102, where non-zero edges are selected with probability K/N and diagonal entries are removed. Non-zero weights are set to wij = λ/Ki, where Ki is the indegree of neuron i corresponding to the number of non-zero weights in row i. Thereby, each neuron has the same maximal input of ∑jwij = λ, and λ is the largest eigenvalue of the connectivity matrix W.

In addition to the recurrent activation, a random subset of Nin = μN neurons receives external input. The external input is modeled as a Poisson process with rate h or equivalently as an activation probability pext[si(t + Δt) = 1] = 1 − e−hΔt, which causes neurons to fire independently and irregularly.

The output is defined by an exponential smoothing of the spikes from a random subset of Nout = νN neurons plus Gaussian noise, cf. Eq. (2). Let us denote the pure network output as \({a}^{T}(t)=1/{N}^{{{\rm{out}}}}{\sum }_{i\in {N}^{{{\rm{out}}}}}{\sum }_{\{k| {t}_{i}^{k} < t\}}{e}^{(t-{t}_{i}^{k})/T}\), where the sum goes over all i in the subset of output neurons and all spike times k with \({t}_{i}^{k} < t\). For discrete time steps, these exponential kernels can be implemented as standard exponential smoothing

with cT = 1 − e−Δt/T. Iterative substitution yields a geometric sequence as the discrete realization of the desired exponential function.

Neural-network approximation of output distribution

The stochastic simulations yield \(P\left({a}^{T}| h\right)\) by aggregating all measurements aT(t) after proper equilibration for specific values of h. However, our estimation of discriminable inputs requires a distribution of \(P\left({a}^{T}| h\right)\) for any h that can be achieved using interpolation. To solve this, we first notice that \(P\left({a}^{T}| h\right)\) can be well approximated by a Beta distribution Beta(α, β). We obtain (α, β) as a maximum-likelihood estimate from simulations with parameters θ = (T, h, λ). We scan the parameter space logarithmically in the ranges \(1-\lambda \in \left[1{0}^{-4},1\right]\), \(h\in \left[1{0}^{-6},1{0}^{2}\right]\) and \(T\in \left[1,1{0}^{4}\right]\) and train a dense 3-layer neural network to approximate the functions α(θ) and β(θ), cf. Fig. 3. This exploits the fact that neural networks can act as general function approximators50. Here, we choose three layers with 60 neurons each and a hyperbolic tangent (\(\tanh\)) activation function, following previous approaches to fitting scaling functions51. We found good fits when scaling input and output parameters into the domain [−1, 1]. To ensure that the distribution mean increases monotonously with h (relevant for the discriminable inputs), we further added a regularization term that penalizes deviations of the mean 〈aT〉 = α/(α + β) from the mean-field solution Eq. (9), essentially implementing a physics-informed regularization.

In the first step (a–c), we obtain the distribution P(aT∣h) of pure output activity in a reservoir (with parameter λ) subject to an input h. a For T → ∞, these distributions become δ-distributions that we calculate using our mean-field approximation. b For finite T, we perform many numerical simulations and fit a Beta distribution to the data using maximum likelihood estimates (orange examples obtained for T = 100). We then use these fit results to train a deep neural network as a general function approximation that interpolates the fit parameters (α, β) for all simulation parameters (λ, h, T). c In the limit T → 0, we obtain analytical results by solving the Fokker–Planck equation that are in good agreement with corresponding numerical data for T = 1 (yellow histograms). d In the next step, we obtain the distribution of noisy output responses P(oT∣h) by a convolution of P(aT∣h) with a Gaussian \({{\mathcal{N}}}(0,{\sigma }^{2})\) of small variance σ2. This step allows (i) to connect to previous mean-field results for T → ∞11 and (ii) circumvents numerical intricacies for finite N at the boundaries. The example compares beta distributions from the neural network interpolations (orange) with their corresponding noisy distributions (gray), which mostly differ at the boundaries. e, f In the last step, we determine two sets of discriminable inputs that can be discriminated from reference distributions for vanishing input (left Gaussian distribution, black) and diverging input (right Gaussian distribution, black). For this, we start from the left and right references and perform iterative bisection searches in h to find input values whose response distributions overlap exactly ε with the previous one. The dynamic range is calculated from the smallest and largest inputs (marked green). The number of discriminable inputs is obtained as the average size of the sets. Examples are shown for λ = 0.999, ε = 0.1, σ = 0.01. Example distributions for \(h\in \left[5.6\cdot 1{0}^{-5},1.8\cdot 1{0}^{-3},5.6\cdot 1{0}^{-3},1.8\cdot 1{0}^{-2},3.2\cdot 1{0}^{-1}\right]\).

Mean-field solution for the limit T → ∞

For simplicity, we perform mean-field computation for the case of the read-out population coinciding with the whole network. For T → ∞, we can neglect fluctuations such that the P(aT∣h) becomes a delta distribution at the mean-field activity \({a}^{\infty }=a={lim}_{T\to \infty }1/N{\sum }_{i=1}^{N}1/T{\sum }_{t=1}^{T}{a}_{i}(t)\), cf. Fig. 3a. To estimate the mean activity, we need to separate the network into the part that receives input with Nin = μN neurons and mean activity ain, and the rest of Nrest = (1 − μ)N neurons with mean activity arest, such that the mean activity is a = μain + (1 − μ)arest.

Since each neuron is randomly connected to any other neuron in the network with the same total weight λ = ∑ijwij/N, we can approximate the probability of recurrent activation

After averaging out temporal fluctuations, we find that the mean activity equals the activation probability. For those neurons that can only be excited recurrently, we thus obtain \({a}^{{{\rm{rest}}}}=\overline{{p}^{{{\rm{rec}}}}}\). For those neurons that receive external input, we need to take coalescence into account52 and find \({a}^{{{\rm{in}}}}=1-(1-\overline{{p}^{{{\rm{rec}}}}})(1-{p}^{{{\rm{ext}}}})\). This leaves us with a system of self-consistent equations

that can be solved to yield

Mean-field solution for T → 0

In the limit to continuous time, we can model the probability of neural activation and deactivation as a birth-death process with birth rate Ω+(A) and death rate Ω−(A), where A is the number of active neurons. The time evolution of the probability distribution P(A, t) is then described by the master equation

Using a Kramers–Moyal expansion up to second order53, we obtain the Fokker–Planck equation (see Supplementary Note 1)

with a “drift” term f(A) = Ω+(A) − Ω−(A) and a “diffusion” term g(A) = Ω+(A) + Ω−(A). The solution of the stationary Fokker–Planck equation, \(\frac{d}{dt}P(A,t)=0\), is

which can be solved numerically once birth and death rates are specified.

To specify the birth and death rates, we assume that inactive neurons can create activity by becoming active, while active neurons destroy activity by becoming inactive. If pa is the probability to activate any neuron in the next time step and there are A out of N neurons currently active, then we find

Since the activation probability depends on whether the neuron receives external input or not, we need to distinguish between those neurons that receive input, Nin, and those that can only be activated recurrently, Nrest. While for the latter, we can identify pa = prec, we need to account for coalescence in the former case and obtain pa = 1 − (1 − prec)(1 − pext).

To obtain an expression for prec, we assume a mean-field setting where each connected neuron is described by its mean activity. Then Eq. (6) yields the probability \(\overline{{p}^{{{\rm{rec}}}}}({A}^{{{\rm{in}}}},{A}^{{{\rm{rest}}}})=\lambda \left[{A}^{{{\rm{in}}}}+{A}^{{{\rm{rest}}}}\right]/N\), which depends on the activity in both subsets and thereby couples their Fokker–Planck equations. To solve our Fokker–Planck equations for Nin and Nrest, we decouple this probability by replacing one variable via its mean-field equation as a function of the other. Specifically, we start from Eq. (8) to rewrite \({A}^{{{\rm{in}}}}=\mu \frac{N{p}^{{{\rm{ext}}}}+\lambda (1-{p}^{{{\rm{ext}}}}){A}^{{{\rm{rest}}}}}{1-\mu \lambda (1-{p}^{{{\rm{ext}}}})}\) and get

Similarly, we start from Eq. (7) to rewrite \({A}^{{{\rm{rest}}}}=\frac{(1-\mu )\lambda {A}^{{{\rm{in}}}}}{1-(1-\mu )\lambda }\) and get

We can then independently solve the Fokker–Planck equations for P(Arest) with

as well as P(Ain) with

The solution for the total network activity A = Ain + Arest is obtained by the convolution P(A) = P(Ain)*P(Arest), cf. Fig. 3c.

Data availability

The processed data supporting the findings of this study are available on GitHub at sahelazizpour/Finite-Observation-Dynamic-Range.

Code availability

The simulation code, analysis pipeline, results, and scripts to produce the figures that support the findings of this study are available from GitHub at sahelazizpour/Finite-Observation-Dynamic-Range.

References

Muñoz, M. A. Colloquium: criticality and dynamical scaling in living systems. Rev. Mod. Phys. 90, 031001 (2018).

Cavagna, A. et al. Scale-free correlations in starling flocks. Proc. Natl. Acad. Sci. USA 107, 11865 (2010).

Ridden, S. J., Chang, H. H., Zygalakis, K. C. & MacArthur, B. D. Entropy, ergodicity, and stem cell multipotency. Phys. Rev. Lett. 115, 208103 (2015).

Beggs, J. M. The criticality hypothesis: how local cortical networks might optimize information processing. Philos. Trans. R. Soc. Lond. Math. Phys. Eng. Sci. 366, 329 (2008).

Priesemann, V., Munk, M. H. & Wibral, M. Subsampling effects in neuronal avalanche distributions recorded in vivo. BMC Neurosci. 10, 40 (2009).

Palva, J. M. et al. Neuronal long-range temporal correlations and avalanche dynamics are correlated with behavioral scaling laws. Proc. Natl. Acad. Sci. USA 110, 3585 (2013).

Wilting, J. & Priesemann, V. 25 years of criticality in neuroscience—established results, open controversies, novel concepts. Curr. Opin. Neurobiol. 58, 105 (2019).

Fontenele, A. J., Sooter, J. S., Norman, V. K., Gautam, S. H. & Shew, W. L. Low-dimensional criticality embedded in high-dimensional awake brain dynamics. Sci. Adv. 10, eadj9303 (2024).

Henkel, M., Hinrichsen, H. & Lübeck, S. Non-Equilibrium Phase Transitions Volume 1: Absorbing Phase Transitions (Springer Science & Business Media, 2008)

Täuber, U. C. Critical Dynamics: A Field Theory Approach to Equilibrium and Non-Equilibrium Scaling Behavior (Cambridge University Press, 2014)

Kinouchi, O. & Copelli, M. Optimal dynamical range of excitable networks at criticality. Nat. Phys. 2, 348 (2006).

Boedecker, J., Obst, O., Lizier, J. T., Mayer, N. M. & Asada, M. Information processing in echo state networks at the edge of chaos. Theory Biosci. 131, 205 (2012).

Barnett, L., Lizier, J. T., Harré, M., Seth, A. K. & Bossomaier, T. Information flow in a kinetic ising model peaks in the disordered phase. Phys. Rev. Lett. 111, 177203 (2013).

Meijers, M., Ito, S. & ten Wolde, P. R. Behavior of information flow near criticality. Phys. Rev. E 103, L010102 (2021).

Morales, G. B. & Muñoz, M. A. Optimal input representation in neural systems at the edge of chaos. Biology 10, 702 (2021).

Yang, Z., Liang, J. & Zhou, C. Critical avalanches in excitation-inhibition balanced networks reconcile response reliability with sensitivity for optimal neural representation. Phys. Rev. Lett. 134, 028401 (2025).

Nykter, M. et al. Critical networks exhibit maximal information diversity in structure-dynamics relationships. Phys. Rev. Lett. 100, 058702 (2008).

Dakos, V. et al. Slowing down as an early warning signal for abrupt climate change. Proc. Natl. Acad. Sci. USA 105, 14308 (2008).

Maturana, M. I. et al. Critical slowing down as a biomarker for seizure susceptibility. Nat. Commun. 11, 2172 (2020).

Tapinova, O. et al. Integrated Ising model with global inhibition for decision-making. Proc. Natl. Acad. Sci. USA 122, e2423557122 (2025).

Wilting, J. et al. Operating in a reverberating regime enables rapid tuning of network states to task requirements. Front. Syst. Neurosci. 12, 55 (2018).

Poel, W. et al. Subcritical escape waves in schooling fish. Sci. Adv. 8, eabm6385 (2022).

Cramer, B. et al. Control of criticality and computation in spiking neuromorphic networks with plasticity. Nat. Commun. 11, 2853 (2020).

Khajehabdollahi, S. et al. Emergent mechanisms for long timescales depend on training curriculum and affect performance in memory tasks. in The Twelfth International Conference on Learning Representations (ICLR, 2024)

Britten, K., Shadlen, M., Newsome, W. & Movshon, J. The analysis of visual motion: a comparison of neuronal and psychophysical performance. J. Neurosci. 12, 4745 (1992).

Wachowiak, M. & Cohen, L. B. Representation of odorants by receptor neuron input to the mouse olfactory bulb. Neuron 32, 723 (2001).

Evans, E. F. The dynamic range problem: place and time coding at the level of cochlear nerve and nucleus. in Neuronal Mechanisms of Hearing (eds Syka, J. & Aitkin, L.) 69–85 (Springer, 1981).

Dean, I., Harper, N. S. & McAlpine, D. Neural population coding of sound level adapts to stimulus statistics. Nat. Neurosci. 8, 1684 (2005).

Shew, W. L., Yang, H., Petermann, T., Roy, R. & Plenz, D. Neuronal avalanches imply maximum dynamic range in cortical networks at criticality. J. Neurosci. 29, 15595 (2009).

Gautam, S. H., Hoang, T. T., McClanahan, K., Grady, S. K. & Shew, W. L. Maximizing sensory dynamic range by tuning the cortical state to criticality. PLoS Comput. Biol. 11, e1004576 (2015).

Gollo, L. L. Coexistence of critical sensitivity and subcritical specificity can yield optimal population coding. J. R. Soc. Interface 14, 20170207 (2017).

Zierenberg, J., Wilting, J., Priesemann, V. & Levina, A. Tailored ensembles of neural networks optimize sensitivity to stimulus statistics. Phys. Rev. Res. 2, 013115 (2020).

Galera, E. F. & Kinouchi, O. Physics of psychophysics: large dynamic range in critical square lattices of spiking neurons. Phys. Rev. Res. 2, 033057 (2020).

Murray, J. D. et al. A hierarchy of intrinsic timescales across primate cortex. Nat. Neurosci. 17, 1661 (2014).

Rudelt, L. et al. Signatures of hierarchical temporal processing in the mouse visual system. PLOS Comput. Biol. 20, e1012355 (2024).

Shi, Y.-L., Zeraati, R., Laboratory, I. B., Levina, A. & Engel, T. A. Brain-wide organization of intrinsic timescales at single-neuron resolution. Preprint at https://doi.org/10.1101/2025.08.30.673281 (2025).

Vogelstein, J. T. et al. Spike inference from calcium imaging using sequential Monte Carlo methods. Biophys. J. 97, 636 (2009).

Larremore, D. B., Shew, W. L. & Restrepo, J. G. Predicting criticality and dynamic range in complex networks: effects of topology. Phys. Rev. Lett. 106, 058101 (2011).

Berens, P., Gerwinn, S., Ecker, A. & Bethge, M., Neurometric function analysis of population codes. in Advances in Neural Information Processing Systems, Vol. 22 (eds Bengio, Y., Schuurmans, D., Lafferty, J., Williams, C. & Culotta, A.) (Curran Associates, Inc., 2009)

Toyoizumi, T. & Abbott, L. F. Beyond the edge of chaos: amplification and temporal integration by recurrent networks in the chaotic regime. Phys. Rev. E 84, 051908 (2011).

Dahmen, D., Grün, S., Diesmann, M. & Helias, M. Second type of criticality in the brain uncovers rich multiple-neuron dynamics. Proc. Natl. Acad. Sci. USA 116, 13051 (2019).

Uchida, N., Kepecs, A. & Mainen, Z. F. Seeing at a glance, smelling in a whiff: rapid forms of perceptual decision making. Nat. Rev. Neurosci. 7, 485 (2006).

Wilting, J. & Priesemann, V. Inferring collective dynamical states from widely unobserved systems. Nat. Commun. 9, 2325 (2018).

Zeraati, R. et al. Intrinsic timescales in the visual cortex change with selective attention and reflect spatial connectivity. Nat. Commun. 14, 1858 (2023).

Tanaka, G. et al. Recent advances in physical reservoir computing: a review. Neural Netw. 115, 100 (2019).

Jaeger, H. The “Echo State” Approach to Analysing and Training Recurrent Neural Networks—with an Erratum Note. Technical Report 148 (German National Research Institute for Computer Science, 2001)

Wang, X. & Cichos, F. Harnessing synthetic active particles for physical reservoir computing. Nat. Commun. 15, 774 (2024).

Dahmen, D. et al. Strong and localized recurrence controls dimensionality of neural activity across brain areas. Preprint at https://doi.org/10.1101/2020.11.02.365072 (2022).

Khajehabdollahi, S., Prosi, J., Giannakakis, E., Martius, G. & Levina, A. When to be critical? Performance and evolvability in different regimes of neural ising agents. Artif. Life 28, 458 (2022).

Hornik, K., Stinchcombe, M. & White, H. Multilayer feedforward networks are universal approximators. Neural Netw. 2, 359 (1989).

Dornheim, T. et al. The static local field correction of the warm dense electron gas: an ab initio path integral Monte Carlo study and machine learning representation. J. Chem. Phys. 151, 194104 (2019).

Zierenberg, J., Wilting, J., Priesemann, V. & Levina, A. Description of spreading dynamics by microscopic network models and macroscopic branching processes can differ due to coalescence. Phys. Rev. E 101, 022301 (2020).

Risken, H. and Frank, T. The Fokker–Planck Equation: Methods of Solution and Applications (Springer Science & Business Media, 2012)

Acknowledgements

J.Z. was supported by the Joachim Herz Stiftung. J.Z. and V.P were funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation)—Project-ID 454648639 - SFB 1528 “Cognition of Interaction”. A.L was supported by the Sofja Kovalevskaja Award from the Alexander von Humboldt Foundation. All authors gratefully acknowledge support from the Max Planck Society.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Author information

Authors and Affiliations

Contributions

J.Z. and A.L. designed the project. S.A. and J.Z. wrote the code and performed the simulations. A.L. developed the measure, S.A and J.Z. analyzed the data, and S.A., A.L., and J.Z. calculated the mean-field solution. V.P., J.Z, and A.L discussed the results and wrote the manuscript. All authors contributed to reviewing the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Physics thanks John M. Beggs and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Azizpour, S., Priesemann, V., Zierenberg, J. et al. Finite integration time can shift optimal sensitivity away from criticality. Commun Phys 9, 119 (2026). https://doi.org/10.1038/s42005-026-02584-w

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s42005-026-02584-w