Abstract

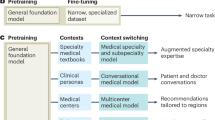

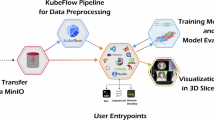

Multimodal artificial intelligence (AI) integrates diverse types of data via machine learning to improve understanding, prediction and decision-making across disciplines such as healthcare, science and engineering. However, most multimodal AI advances focus on models for vision and language data, and their deployability remains a key challenge. We advocate a deployment-centric workflow that incorporates deployment constraints early on to reduce the likelihood of undeployable solutions, complementing data-centric and model-centric approaches. We also emphasize deeper integration across multiple levels of multimodality through stakeholder engagement and interdisciplinary collaboration to broaden the research scope beyond vision and language. To facilitate this approach, we identify common multimodal-AI-specific challenges shared across disciplines and examine three real-world use cases: pandemic response, self-driving car design and climate change adaptation, drawing expertise from healthcare, social science, engineering, science, sustainability and finance. By fostering interdisciplinary dialogue and open research practices, our community can accelerate deployment-centric development for broad societal impact.

Data availability

Source data are provided with this paper. They are also available at https://github.com/multimodalAI/multimodal-ai-landscape, where they will be updated annually.

Acknowledgements

This work was enabled and supported by the Alan Turing Institute. We thank T. Chakraborty and C. Li for inspiring this work, D. A. Clifton for his support and T. Dunstan for contributing to the climate change adaptation section. J.Z. is supported by donations from D. Naik and S. Naik. S.Z. is supported by EPSRC (grant EP/Y017544/1). T.L.v.d.P. was supported by EPSRC (grant EP/Y028880/1). A.V. is supported by UKRI CDT in AI for Healthcare (grant EP/S023283/1). A.G. is supported by the Research Council of Norway (secureIT project 288787). M.Z. is supported by EPSRC (grant EP/X031276/1). C.T. is supported by UKRI CDT in AI-enabled Healthcare (grant EP/S021612/1). R.L. is supported by the Royal Society (grant IEC\NSFC\233558). L.v.Z. is supported by NERC (grant NE/W004747/1). O.T. is supported by UKRI CDT in Application of Artificial Intelligence to the study of Environmental Risks (grant EP/S022961/1). Z.A.S. is supported by Google DeepMind. O.A. is supported by NIHR Barts BRC (grant NIHR203330). T.N.D. is supported by UKRI CDT in Accountable, Responsible and Transparent AI (grant EP/S023437/1). L.F. is supported by MRC (grant MR/W006804/1). N.J. is supported by the EU’s co-funded HE project MuseIT (grant 101061441). M.V. is supported by St George’s Hospital Charity. A.C.-C. is supported by EPSRC (grant EP/Y028880/1). H.W. is supported by MRC (grant MR/X030075/1). C.C. is supported by the Royal Society (grant GS\R2\242355). T.Z. was supported by the Royal Academy of Engineering (grant RF\201819\18\109). G.G.S. is supported by EPSRC (grant EP/Y009800/1). P.H.C. is supported by BHF (grant FS/20/20/34626). H.L. is supported by EPSRC (grant UKRI396). The views expressed in this material are those of the authors and do not necessarily represent the views of their affiliated institutions or funders.

Author information

Contributions

X.L., J.Z., S.Z., T.L.v.d.P., S.T., T.Z., W.K.C., P.H.C. and H.L. conceptualized the manuscript. X.L., J.Z., S.Z., A.G., M.Z., L.v.Z., O.T. and H.L. designed the figures. X.L. and H.L. coordinated the entire project. All authors contributed to writing, resources or editing.

Ethics declarations

Competing interests

G.G.S. is a scientific advisory board member at BioAI Health. P.H.C. provides consulting services to Cambridge University Technical Services for wearable manufacturers. The remaining authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks Girmaw Abebe Tadesse and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary information.

Supplementary Data 1

Statistical data for Supplementary Fig. 1.

Source data

Source Data Figs. 1 and 2

Source data.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Liu, X., Zhang, J., Zhou, S. et al. Towards deployment-centric multimodal AI beyond vision and language. Nat Mach Intell 7, 1612–1624 (2025). https://doi.org/10.1038/s42256-025-01116-5

DOI: https://doi.org/10.1038/s42256-025-01116-5