Abstract

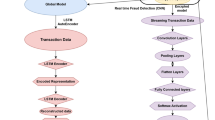

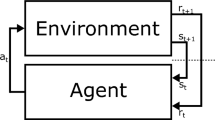

Deep reinforcement learning (DRL) demonstrates significant potential in solving complex control and decision-making problems, but it may inadvertently expose sensitive, environment-specific information, raising privacy and security concerns for computer systems, humans and organizations. This work introduces a privacy-preserving framework using homomorphic encryption and advanced learning algorithms to secure DRL processes. Our framework enables the encryption of sensitive information, including states, actions and rewards, before sharing it with an untrusted processing platform. This encryption ensures data privacy, prevents unauthorized access and maintains compliance with data protection laws throughout the learning process. In addition, we develop innovative algorithms to efficiently handle a wide range of encrypted control tasks. Our core innovation is the homomorphic encryption-compatible Adam optimizer, which reparameterizes momentum values to bypass the need for high-degree polynomial approximations of inverse square roots on encrypted data. This adaptation, previously unexplored in homomorphic encryption-based ML research, enables stable and efficient training with adaptive learning rates in encrypted domains, addressing a critical bottleneck for privacy-preserving DRL with sparse rewards. Evaluations on standard DRL benchmarks demonstrate that our encrypted DRL performs comparably with its unencrypted counterpart (with a gap of less than 10%) while maintaining data confidentiality with homomorphic encryption. This work facilitates the integration of privacy-preserving DRL into real-world applications, addressing critical privacy concerns and promoting the ethical advancement of artificial intelligence.
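The inverse-square-root bottleneck named above can be illustrated numerically. Schemes such as CKKS evaluate only additions and multiplications on ciphertexts, so the 1/sqrt(v) factor in a standard Adam update would have to be replaced by a polynomial. The following minimal sketch runs on plaintext NumPy and does not reproduce the authors' reparameterized optimizer; it only measures how poorly low-degree Chebyshev interpolants track the inverse square root. The interval [1e-3, 1], the chosen degrees and the helper names are illustrative assumptions, not values from the paper.

```python
import numpy as np
from numpy.polynomial import Chebyshev


def inv_sqrt(x):
    """Plaintext reference for the factor Adam applies to the second moment."""
    return 1.0 / np.sqrt(x)


def max_rel_error(degree, lo=1e-3, hi=1.0, samples=10_000):
    """Worst-case relative error of a Chebyshev interpolant of 1/sqrt(x).

    The interval [lo, hi] is an assumed range for the second-moment
    estimate; it is hypothetical and not taken from the paper.
    """
    poly = Chebyshev.interpolate(inv_sqrt, degree, domain=[lo, hi])
    xs = np.linspace(lo, hi, samples)
    exact = inv_sqrt(xs)
    return float(np.max(np.abs(poly(xs) - exact) / exact))


# Compare a few polynomial degrees; a higher degree means more
# multiplicative depth when the polynomial is evaluated on ciphertexts.
errors = {d: max_rel_error(d) for d in (3, 7, 15, 31)}

for degree, err in errors.items():
    print(f"degree {degree:2d}: max relative error {err:.3f}")
```

Because the singularity at zero sits close to the approximation interval, the error shrinks slowly as the degree grows, which is why avoiding the inverse square root altogether is attractive in the encrypted setting.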

Data availability

All data used in this study are publicly available. The Pendulum and CartPole environments are available via GitHub (https://github.com/openai/gym) and the MobileEnv environment is also available via GitHub (https://github.com/stefanbschneider/mobile-env).

Code availability

The software code supporting the findings of the secure SAC algorithm in this study is available via GitHub (https://github.com/hieunch/PPRL) and via Zenodo (https://doi.org/10.5281/zenodo.17038255; ref. 64).

References

Huang, Z., Wu, J. & Lv, C. Efficient deep reinforcement learning with imitative expert priors for autonomous driving. IEEE Trans. Neural Netw. Learn. Syst. 33, 3675–3684 (2022).

Feng, S. et al. Dense reinforcement learning for safety validation of autonomous vehicles. Nature 615, 620–627 (2023).

Hachem, E. et al. Reinforcement learning for patient-specific optimal stenting of intracranial aneurysms. Sci. Rep. 13, 7147 (2023).

Hu, M., Zhang, J., Matkovic, L., Liu, T. & Yang, X. Reinforcement learning in medical image analysis: concepts, applications, challenges, and future directions. J. Appl. Clin. Med. Phys. 24, 13898 (2023).

Lei, Y. et al. New challenges in reinforcement learning: a survey of security and privacy. Artif. Intell. Rev. 56, 7195–7236 (2023).

Mo, K. et al. Security and privacy issues in deep reinforcement learning: threats and countermeasures. ACM Comput. Surv. 56, 152 (2024).

Pan, X. et al. How you act tells a lot: privacy-leaking attack on deep reinforcement learning. In Proc. 18th International Conference on Autonomous Agents and MultiAgent Systems 368–376 (ACM, 2019).

Vietri, G., Balle, B., Krishnamurthy, A. & Wu, S. Private reinforcement learning with PAC and regret guarantees. In Proc. International Conference on Machine Learning 9754–9764 (PMLR, 2020).

Garcelon, E., Perchet, V., Pike-Burke, C. & Pirotta, M. Local differential privacy for regret minimization in reinforcement learning. Adv. Neural Inf. Process. Syst. 34, 10561–10573 (2021).

Chowdhury, S. R. & Zhou, X. Differentially private regret minimization in episodic Markov decision processes. In Proc. AAAI Conference on Artificial Intelligence 36, 6375–6383 (AAAI, 2022).

Qiao, D. & Wang, Y.-X. Near-optimal differentially private reinforcement learning. In Proc. International Conference on Artificial Intelligence and Statistics 9914–9940 (PMLR, 2023).

Jesu, A., Darvariu, V.-A., Staffolani, A., Montanari, R. & Musolesi, M. Reinforcement learning on encrypted data. Preprint at https://doi.org/10.48550/arXiv.2109.08236 (2021).

Knott, B. et al. Crypten: secure multi-party computation meets machine learning. Adv. Neural Inf. Process. Syst. 34, 4961–4973 (2021).

Tan, S., Knott, B., Tian, Y. & Wu, D. J. CryptGPU: fast privacy-preserving machine learning on the GPU. In Proc. IEEE Symposium on Security and Privacy (SP) 1021–1038 (IEEE, 2021).

Rathee, D., Bhattacharya, A., Gupta, D., Sharma, R. & Song, D. Secure floating-point training. In Proc. 32nd USENIX Security Symposium 6329–6346 (USENIX Association, 2023).

Wang, S. et al. Privacy-aware estimation of relatedness in admixed populations. Brief. Bioinform. 23, 473 (2022).

Li, W. et al. COLLAGENE enables privacy-aware federated and collaborative genomic data analysis. Genome Biol. 24, 204 (2023).

Nandakumar, K., Ratha, N., Pankanti, S. & Halevi, S. Towards deep neural network training on encrypted data. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops 40–48 (IEEE, 2019).

Al Badawi, A., Hoang, L., Mun, C. F., Laine, K. & Aung, K. M. M. Privft: private and fast text classification with homomorphic encryption. IEEE Access 8, 226544–226556 (2020).

Kim, M., Jiang, X., Lauter, K., Ismayilzada, E. & Shams, S. Secure human action recognition by encrypted neural network inference. Nat. Commun. 13, 4799 (2022).

Nguyen, C. et al. Encrypted data caching and learning framework for robust federated learning-based mobile edge computing. IEEE/ACM Trans. Netw. 32, 2705–2720 (2024).

Cheon, J. H., Kim, A., Kim, M. & Song, Y. Homomorphic encryption for arithmetic of approximate numbers. In Proc. 23rd International Conference on the Theory and Applications of Cryptology and Information Security 409–437 (Springer, 2017).

Cheon, J. H., Han, K., Kim, A., Kim, M. & Song, Y. Bootstrapping for approximate homomorphic encryption. In Proc. 37th Annual International Conference on the Theory and Applications of Cryptographic Techniques 360–384 (Springer, 2018).

Cheon, J. H., Han, K., Kim, A., Kim, M. & Song, Y. A full RNS variant of approximate homomorphic encryption. In Proc. 25th International Conference on Selected Areas in Cryptography 347–368 (Springer, 2019).

Kim, M., Lee, D., Seo, J. & Song, Y. Accelerating HE operations from key decomposition technique. In Proc. Annual International Cryptology Conference 70–92 (Springer, 2023).

Lee, S., Lee, G., Kim, J. W., Shin, J. & Lee, M.-K. HETAL: efficient privacy-preserving transfer learning with homomorphic encryption. In Proc. International Conference on Machine Learning 19010–19035 (PMLR, 2023).

Crockett, E. A low-depth homomorphic circuit for logistic regression model training. In Proc. 8th Workshop on Encrypted Computing & Applied Homomorphic Cryptography (ACM, 2020).

Jin, C., Ragab, M. & Aung, K. M. M. Secure transfer learning for machine fault diagnosis under different operating conditions. In Proc. International Conference on Provable Security 278–297 (Springer, 2020).

Liu, X., Deng, R. H., Choo, K.-K. R. & Yang, Y. Privacy-preserving reinforcement learning design for patient-centric dynamic treatment regimes. IEEE Trans. Emerg. Top. Comput. 9, 456–470 (2019).

Park, J., Kim, D. S. & Lim, H. Privacy-preserving reinforcement learning using homomorphic encryption in cloud computing infrastructures. IEEE Access 8, 203564–203579 (2020).

Haarnoja, T. et al. Soft actor-critic: off-policy maximum entropy deep reinforcement learning with a stochastic actor. In Proc. 35th International Conference on Machine Learning 1861–1870 (PMLR, 2018).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. In Proc. 3rd International Conference on Learning Representations (ICLR, 2015).

Schulman, J., Wolski, F., Dhariwal, P., Radford, A. & Klimov, O. Proximal policy optimization algorithms. Preprint at https://doi.org/10.48550/arXiv.1707.06347 (2017).

Mnih, V. et al. Asynchronous methods for deep reinforcement learning. In Proc. International Conference on Machine Learning 1928–1937 (PMLR, 2016).

Lyubashevsky, V., Peikert, C. & Regev, O. On ideal lattices and learning with errors over rings. In Proc. 29th Annual International Conference on the Theory and Applications of Cryptographic Techniques 1–23 (Springer, 2010).

Li, B. & Micciancio, D. On the security of homomorphic encryption on approximate numbers. In Proc. Annual International Conference on the Theory and Applications of Cryptographic Techniques 648–677 (Springer, 2021).

Al Badawi, A. et al. OpenFHE: open-source fully homomorphic encryption library. In Proc. 10th Workshop on Encrypted Computing & Applied Homomorphic Cryptography 53–63 (ACM, 2022).

Boemer, F., Kim, S., Seifu, G., Souza, F. & Gopal, V. Intel HEXL: accelerating homomorphic encryption with Intel AVX512-IFMA52. In Proc. 9th Workshop on Encrypted Computing & Applied Homomorphic Cryptography 57–62 (ACM, 2021).

Albrecht, M. R., Player, R. & Scott, S. On the concrete hardness of learning with errors. J. Math. Cryptol. 9, 169–203 (2015).

Raffin, A. et al. Stable-baselines3: reliable reinforcement learning implementations. J. Mach. Learn. Res. 22, 12348–12355 (2021).

Keller, M. & Sun, K. Secure quantized training for deep learning. In Proc. International Conference on Machine Learning 10912–10938 (PMLR, 2022).

Mnih, V. et al. Human-level control through deep reinforcement learning. Nature 518, 529–533 (2015).

Roy, S. S., Turan, F., Järvinen, K., Vercauteren, F. & Verbauwhede, I. FPGA-based high-performance parallel architecture for homomorphic computing on encrypted data. In Proc. IEEE International Symposium on High Performance Computer Architecture 387–398 (IEEE, 2019).

Turan, F., Roy, S. S. & Verbauwhede, I. HEAWS: an accelerator for homomorphic encryption on the Amazon AWS FPGA. IEEE Trans. Comput. 69, 1185–1196 (2020).

Kim, S. et al. BTS: an accelerator for bootstrappable fully homomorphic encryption. In Proc. 49th Annual International Symposium on Computer Architecture 711–725 (ACM, 2022).

Geelen, R. et al. BASALISC: programmable hardware accelerator for BGV fully homomorphic encryption. IACR Trans. Cryptogr. Hardw. Embed. Syst. 2023, 32–57 (2023).

Hao, M. et al. Iron: private inference on transformers. Adv. Neural Inf. Process. Syst. 35, 15718–15731 (2022).

Pang, Q., Zhu, J., Möllering, H., Zheng, W. & Schneider, T. BOLT: privacy-preserving, accurate and efficient inference for transformers. In Proc. IEEE Symposium on Security and Privacy 4753–4771 (IEEE, 2024).

Lee, E. et al. Low-complexity deep convolutional neural networks on fully homomorphic encryption using multiplexed parallel convolutions. In Proc. International Conference on Machine Learning 12403–12422 (PMLR, 2022).

Mouchet, C., Troncoso-Pastoriza, J., Bossuat, J.-P. & Hubaux, J.-P. Multiparty homomorphic encryption from ring-learning-with-errors. Proc. Priv. Enhancing Technol. 2021, 291–311 (2021).

Rakhsha, A., Radanovic, G., Devidze, R., Zhu, X. & Singla, A. Policy teaching via environment poisoning: training-time adversarial attacks against reinforcement learning. In Proc. International Conference on Machine Learning 7974–7984 (PMLR, 2020).

Rakhsha, A., Zhang, X., Zhu, X. & Singla, A. Reward poisoning in reinforcement learning: attacks against unknown learners in unknown environments. Preprint at https://doi.org/10.48550/arXiv.2102.08492 (2021).

Cascudo, I. et al. Verifiable computation for approximate homomorphic encryption schemes. In Proc. Annual International Cryptology Conference 643–677 (Springer, 2025).

Santriaji, M. H., Xue, J., Zhang, Y., Lou, Q. & Solihin, Y. DataSeal: ensuring the verifiability of private computation on encrypted data. In Proc. IEEE Symposium on Security and Privacy 2378–2394 (IEEE, 2025).

Gentry, C. A Fully Homomorphic Encryption Scheme (Stanford Univ., 2009).

Brakerski, Z., Gentry, C. & Vaikuntanathan, V. (Leveled) fully homomorphic encryption without bootstrapping. ACM Trans. Comput. Theory 6, 13 (2014).

Chillotti, I., Gama, N., Georgieva, M. & Izabachene, M. Faster fully homomorphic encryption: bootstrapping in less than 0.1 seconds. In Proc. 22nd International Conference on the Theory and Application of Cryptology and Information Security 3–33 (Springer, 2016).

Kim, A., Song, Y., Kim, M., Lee, K. & Cheon, J. H. Logistic regression model training based on the approximate homomorphic encryption. BMC Med. Genomics 11, 23–31 (2018).

Ramachandran, P., Zoph, B. & Le, Q. V. Searching for activation functions. In Proc. 6th International Conference on Learning Representations (ICLR, 2018).

Misra, D. Mish: A self-regularized non-monotonic activation function. In Proc. 31st British Machine Vision Conference (BMVA, 2020).

Adams, R. A. & Fournier, J. J. Sobolev Spaces (Elsevier, 2003).

Remes, E. Sur le calcul effectif des polynômes d’approximation de Tchebycheff. C. R. Acad. Sci. Paris 199, 337–340 (1934).

Bossuat, J.-P., Mouchet, C., Troncoso-Pastoriza, J. & Hubaux, J.-P. Efficient bootstrapping for approximate homomorphic encryption with non-sparse keys. In Proc. Annual International Conference on the Theory and Applications of Cryptographic Techniques 587–617 (Springer, 2021).

hieunch. hieunch/PPRL: v1.0.0. Zenodo https://doi.org/10.5281/zenodo.17038255 (2025).

Acknowledgements

This research was partially funded by the Australia–Vietnam Strategic Technologies Centre and the Australian Government through the Australian Research Council’s Discovery Projects funding scheme (project DE210100651, T.H.D.). M.K. was supported by the National Human Genome Research Institute grant number R01HG012604; the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (numbers 2021R1C1C1010173 and RS-2023-00211649); Basic Science Research Program through the NRF funded by the Ministry of Education (2020R1A6A1A06046728); and Korea Basic Science Institute (National Research Facilities and Equipment Center) grant funded by the Ministry of Education (2023R1A6C101A009).

Author information

Contributions

T.H.D. conceived the original idea, which was subsequently refined and expanded by C.-H.N. and D.N.N. to develop the system model, initial framework and approach. M.K. and K.L. made substantial contributions to further enhance the solution and finalize the framework. All authors had key roles in designing the testing scenarios, conducting experiments and analysing the results. Additionally, all authors actively participated in drafting the manuscript and approved the final version for submission.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Machine Intelligence thanks the anonymous reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

Supplementary Notes 1 and 2, Supplementary Fig. 1, Supplementary Tables 1–3 and Supplementary References.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Nguyen, CH., Dinh, T.H., Nguyen, D.N. et al. Empowering artificial intelligence with homomorphic encryption for secure deep reinforcement learning. Nat Mach Intell 7, 1913–1926 (2025). https://doi.org/10.1038/s42256-025-01135-2

DOI: https://doi.org/10.1038/s42256-025-01135-2