Abstract

Machine learning and high-throughput experimentation have greatly accelerated the discovery of mixed metal oxide catalysts by leveraging their compositional flexibility. However, the lack of established synthesis routes for solid-state materials remains a significant challenge in inorganic chemistry. An interpretable machine learning model is therefore essential, as it provides insights into the key factors governing phase formation. Here, we focus on the formation of single-phase Fe2(ZnCo)O4, synthesized via a high-throughput co-precipitation method. We combined a kernel classification model with an application of global SHapley Additive exPlanations (SHAP) analysis to pinpoint the experimental features most critical to single phase synthesizability by interpreting the contributions of each feature. Global SHAP analysis reveals that precursor and precipitating agent contributions to single-phase spinel formation align closely with established crystal growth theories. These results not only underscore the importance of interpretable machine learning in refining synthesis protocols but also establish a framework for data-informed experimental design in inorganic synthesis.

Similar content being viewed by others

Introduction

Mixed metal oxides (MMOs) exhibit excellent catalytic activity in reactions such as the oxygen evolution reaction1,2,3 and hydrogen evolution reaction4,5. MMOs can be composed of a wide selection of metal ions, ranging from earth-abundant alkali and transition metals to noble metals6,7. typically in various oxidation states, allowing for tunable structures and properties for specific applications. However, this flexibility of the design comes with challenges, as the vast number of possible combinations makes it difficult to identify compositions that show both good thermodynamic stability and can be successfully synthesized. To address this, machine learning (ML) or artificial intelligence (AI) has been frequently coupled with high-throughput experiments (HTE) to accelerate the discovery and optimization of targeted materials8,9. With ML and HTE, scientists have not only made significant advances in automated experimentation, but also in autonomous experimentation, utilized to accelerate several aspects of material discovery10,11,12,13. Previous work has demonstrated significantly acceleration to the down-selecting of potential material candidates with enhanced properties, leading to more efficient experimentation and exploration compared to traditional means14,15.

Building a universally applicable model for synthesizability is challenging due to the limited dataset and the broad variability in synthesis methods. The limited data available often restricts the ability of machine learning models to generalize across different material spaces16,17, especially when dealing with new compounds or structures not covered in the training data18,19. Consequently, it can be more practical to develop localized models that guide experimentalists in specific systems. Therefore, the most effective approach for synthesizing a particular material could be to create a surrogate model anchored in local experimental data, which raises a second challenge: the dataset would still be small and imbalanced, even with the use of automated high-throughput experiments to generate it20. Moreover, while ML can recommend promising materials with targeted compositions or structures, the synthesis route often remains unspecified for experimentalists21,22. The core issue lies in the fact that researchers tend to rely on theoretical phase diagrams, leaving little guidance for designing practical, feasible routes to single-phase compounds23,24.

This paper aims to tackle the challenges above by integrating kernel methods and explainable AI with real-world experiments, enabling the handling of small, imbalanced datasets while also guiding the development of practical, feasible synthesis routines. Traditionally, theoretical and ML approaches have been developed primarily for problems with assumed linear settings25. Few examples include calibration in spectroscopy26, predictive control and optimization27, and estimation of coefficient of thermal expansion28. In practice, however, these methods may not be applicable to complex real-world chemical systems, where the relationships between the process variables are non-linear29. Kernel methods30,31,32,33,34 solve the nonlinearity problem by using a simple linear transformation manner. The key idea is to project the sparse data onto a higher-dimensional space, where linear methods are more applicable35 and chances of over-fitting are less likely36. Kernel methods are performed in two successive steps: First, the training data in the input space is mapped onto a higher dimensional feature space, where sometimes even unknown features are induced by the kernel37. In the second step, a linear method is applied to find a linear relationship in that feature space in a regression or a classification setting. Since everything is formulated in terms of kernel-evaluations, there is no need for any explicit calculations in the high-dimensional feature space38.

Herein, we leverage kernel learning and the SHapley Additive exPlanations (SHAP) to interpret the influence of synthesis conditions for the single-phase formation of a ternary spinel system Fe2(ZnCo)O4. Specifically, all samples are synthesized using a Chemspeed automation platform for better reproducibility and precious parameter control across the synthesis space. A kernel classification model is trained with the sparse independent experimental conditions including reagent concentrations, the amount of reagents, the reagent addition rate, and reagent addition order as features for single-phase synthesis. Through this, we aim to generate a synthesizability model. With the synthesizability model, we introduce a global SHAP analysis to interpret the positive-negative contributions of each feature for single-phase predictions. This novel application of SHAP allows for rapid in-silico navigation of the experimental parameter space, as well as identifying critical conditions to spinel synthesizability. Surprisingly, our results indicate that the amount of reagents plays an important role in the high-throughput co-precipitation synthesis route. This hints that the preferred conditions for material synthesis can cause environmental hazards by generating a certain amount of waste, especially for industrial production.

This manuscript is organized as follows: Section 2 presents the experimental results and exploration of the AI-suggested synthesis space. Section 3 follows with a discussion on the implications of our findings. Finally, Section 4 provides details of our methodology, including the HTE synthesis and XRD measurement, kernel learning model, and performance evaluation metrics.

Results and Discussion

Synthesis method and parameter control

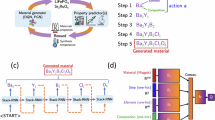

Figure 1 demonstrates the workflow from synthesis and characterization to Kernal-method modeling and SHAP analysis. The targeted Fe2(ZnCo)O4 spinel powders were prepared by co-precipitation method using the Chemspeed platform (Swing XL model). The detailed synthesis and parameter control are listed in Section 4.1. Initially, a comprehensive list of experimental features was developed on the basis of the mechanism for crystal growth process, with strong consideration of pH, ionic strength, and precipitant quotients. To mitigate multicollinearity and reduce chances of overfitting-especially given the sparse experimental data - five key synthesis parameters were selected via feature importance (see Table 1). This was done by calculating the absolute correlation matrix for the generated experimental dataset using the Pearson’s correlation coefficient. A predefined threshold of 0.55 was then used to identify pairs of highly linearly correlated features. This threshold was a hyperparameter tuned to maximize the LOOCV AUC. When a pair of features was highly correlated (exceeding the threshold), the one feature that showed the larger average absolute correlation with all remaining features was discarded to ensure feature independence. The absolute correlation matrix of the initial experimental features set can be found in the SI (Fig. S2). The K2CO3 concentration and the metal precursor concentration represent the precursor concentrations prior to the precipitation reaction. Given the correlation between pH and K2CO3 concentration, pH was disregarded as an input feature. All solutions are prepared from an initial stock solution, diluted accordingly to reach the desired concentrations. The metal precursor amount is calculated beforehand and imported into the platform for control. With these three parameters, all the necessary information for each precursor solution can be determined, including the volume of the highest concentrated precursors and the volume of water for dilution. The adding rate can be regulated by Chemspeed, and precipitation order is handled by experimental protocol design. It should be noted here that the normal precipitation order refers to adding the precipitating agents into metal precursor, while the other order is defined as reverse precipitation order. Figure 2 shows the distribution of each parameter from the conducted experiments.

Fe2(ZnCo)O4 spinel samples are prepared by HT-synthesis using co-precipitation method on Chemspeed automated platform with a series of post-treatment. The final product is suspended in ink for HT-XRD measurement. The XRD results in single-phase classification are used as training data for the synthesizability model. Parts of the figure is created in BioRender.

A Metal precursor concentration, (B) adding rate, (C) metal amount, (D) K2CO3 concentration, and (E) precipitation order. The total number of experiment samples is 79, with only 17 resulting in the desired single-phase solution.

High-throughput XRD results are used to label ground-truth data as single-phase or multi-phase. For our definition of single-phase, we refer to a majority spinel phase as only observed in the XRD pattern. However, it is still possible that there is an undetectable secondary phase in the structure, given the limitations of our instrument. The other phase (or phases) can be one or a combination of Fe2O3, ZnO, or CoO metal oxide phases (Fig. S1). This sparse dataset is significantly skewed toward the undesired multiphase solution, making it essential to employ kernel methods to assess how each feature positively contributes to achieving a single-phase solution.

Kernel classification of the ternary spinel

A kernel learning model is used to classify the phase of the binary spinel, as described in method section “Kernel learning”. By leveraging kernel-based learning approaches, the proposed classifier constructs a more refined and flexible decision boundary within the latent feature space. The kernel transformation not only captures subtle variations among data points but also helps mitigate overfitting, which is especially crucial given our limited sample size (70) and the heavy class imbalance skewed towards the undesired negative class (multiphase solution). Under these conditions, the kernel method helps preserve the integrity of the minority class representations while ensuring robust generalization and improved classification performance. This generalization is assessed via leave-one-out cross-validation (LOOCV) with five independent features Fig. 3A, as shown in the confusion matrix in Fig. 3B. Notably, the LOOCV accuracy and the area under the curve (AUC) are 0.843 and 0.836, respectively, demonstrating the high efficacy of the classifier for synthesizability prediction. Specifically, model evaluation on test data shows only 8 false negatives and 3 false positives, showing the robustness of the model in identifying actual positive (single-phase) experiments with a relatively high recall (0.824). Figure 3C presents the calibration curve (reliability diagram), demonstrating how the predicted probabilities of the model align with the observed frequency of the single phase outcomes. The “zigzagging” trend, rather than a perfectly smooth line, is primarily due to having very few samples in each bin as a result of LOOCV. Despite this, the overall trend clearly demonstrates that higher predicted probabilities consistently correspond to higher proportions of actual single-phase experiments, confirming the model’s ability to meaningfully rank experimental outcomes. The lack of predictions above 0.7 reflects the classifier’s tendency toward moderate probability estimates in a constrained data setting. Rather than assigning near-certain predictions, the model remains conservative, which reduces the upper range of predicted probabilities. This conservative approach is preferable for our application, as it ensures caution and reliability when dealing with inherently uncertain data.

A Absolute correlation matrix of the selected experimental features using Pearson’s correlation coefficient. Other features with correlation > = 0.55 are considered highly correlated, thus, disregarded. B Confusion matrix for the binary classification of the solution’s phase. The leave-one-out cross-validation (LOOCV) accuracy and AUC are, 0.843 and 0.836, respectively. C The calibration curve shows that the model’s predicted probabilities align with observed frequencies of single-phase outcomes, with some natural zigzagging due to sparse bin populations in LOOCV.

We capitalize on the model’s high recall to systematically explore the design space for the desired single-phase solutions. To guide this exploration, we employ an uncertainty sampling acquisition policy that prioritizes experiments based on the model’s confidence. Specifically, we generate a comprehensive set of 43,200 candidate experiments by taking the Cartesian product of the relevant set of 5 experimental parameters (see Section 4.3.2 in Methods). The uncertainty of each candidate is quantified as the absolute difference from a probability of 0.5, with higher values representing predictions closer to the decision boundary, enabling us to focus on parameter combinations where the model is most certain. This strategy ensures that limited experimental resources are directed toward the most informative samples, accelerating the discovery and validation of stable single-phase regions.

Additionally, we leverage SHAP (SHapley Additive exPlanations) values to gain deeper insights into each parameter’s positive contribution toward achieving a single-phase solution. This interpretative step is a novel component of our approach, offering valuable guidance on which parameters most strongly promote single-phase formation, thus, further refining our exploration strategy for identifying stable single-phase regions.

Model interpretability and feature contribution in the synthetic design space

We use SHAP values to measure the contribution of each feature by comparing the classifier’s prediction with and without that feature, then averaging these contributions over all possible coalitions in the synthetic design space. This process yields both local and global interpretations of model behavior. While the local interpretation reveals how a particular feature influences the prediction for an individual data instance (see Fig. S5 in SI), the global interpretation identifies which features have the greatest overall impact on the model’s predictions across all generated synthetic experiments39,40.

Formally, for each feature i and data instance x, the local SHAP value ϕi(f; x) is defined as:

where N is the full set of features, S ⊆ N⧹{i} is a subset of features excluding i, and fx(S) is the model’s prediction for instance x when using only features in S.

Meanwhile, the global SHAP value \({\overline{\phi }}_{i}(f)\) for feature i is obtained by averaging the local SHAP values over all samples \(x\in {{\mathcal{D}}}\) in the synthetic design space:

where \({{\mathcal{D}}}\) is the complete set of generated samples and \(| {{\mathcal{D}}}|\) is the total number of samples in that space.

Figure 4 illustrates global SHAP values for individual features as contour plots spanning the full synthetic design space. Although SHAP values are computed locally for each instance, once a contour map covering the entire range of two features is produced, it effectively aggregates these values across all samples, providing a global perspective. Notably, the precipitation order feature is not plotted in the contour plot SHAP analysis due to its binary nature. Although precipitation order is widely recognized as an important factor in the co-precipitation method, the experimental data in Figure 2E show that both normal and reverse orders can yield single-phase spinel. However, in both cases, the majority of experiments result in multiphase products. This suggests that while precipitation order can influence specific outcomes, its overall impact on achieving single-phase spinel across the explored parameter space is less consistent and generally weaker than continuous variables such as metal concentration or addition rate.

The phase of the binary spinel is inferred by the kernel classifier, and the global SHAP value offers a comprehensive view of how each feature combination positively influences single-phase formation in alignment with theoretical and experimental expectations. Metal concentration SHAP values (A) and adding rate SHAP values (B) in adding rate versus metal concentration contour plots fit our expectation. Within the K2CO3 concentration range of 0.15–0.3 M, all samples exhibit at least one secondary phase, creating a missing region (marked with red) with very few single-phase outcomes (C). Metal concentration SHAP values (D) and K2CO3 concentration SHAP values (E) in metal concentration versus K2CO3 concentration contour plots behave differently.

Here, we provide a detailed discussion on the pairwise contour plots of the global SHAP value for each feature in the synthetic space, where the phase of the binary spinel is inferred by our kernel model. This analysis offers insights into how these interpretative results align with existing theoretical understanding and experimental expectations. These plots ultimately reveal key regions in the synthetic design space, where certain parameter combinations most strongly favor single-phase formation.

Adding rate and metal concentration

Global SHAP values for adding rate and metal precursor concentration align with chemists’ intuition. As shown in Fig. 4A and B, in the projection of metal concentration and adding rate, both parameters prefer smaller values (yellow regions) to form single-phase spinel materials, favouring steady and stable reaction conditions. In contrast, higher metal precursor concentrations and adding rate preferably result in multiple-phase existence of the final precipitants. Figure 4A shows that the SHAP value of metal precursor concentration has the highest positive contribution in the region where metal precursor concentration is lower than 0.5 M and adding rate between 2 and 8 ml per min. In the top region of Fig. 4A, where metal concentration is above 2 M, the metal concentration feature exhibits a negative effect on the targeted phase generation. This result aligns with the experimental observation (Fig. 2) that only multiple phases form at metal concentration higher than 1.5 M. Here an adding rate of 3 ml per min is equal to a dropwise every second, which is commonly used in traditional co-precipitation method. However, the contribution of adding rate is quite independent compared to other parameters. The contour plot (Fig. 4B) of adding rate contribution shows clear steps from 1 to 8 ml per min. Furthermore, the quantitative comparison between those two features’ contribution should not be neglected. The highest positive SHAP value from adding rate (0.4) and metal concentration (0.09) have different orders of magnitude, indicating the direct role of adding rate in the formation of single phases.

The amount of reagents

The amounts of reagents have a significant impact on the single-phase formation as observed in Fig. 4C. The amounts of reagents in this analysis refers to the metal amount parameter, as K2CO3 amount is consistently set to 1.5 times the metal amount to ensure complete reaction and is exclued from the feature correlation analysis41. We should note that the metal amount and metal concentration are two distinct features. The metal amount represents the total metal cations in each sample, not the concentration of the prepared metal salt solution. Figure 4C shows the metal amount contribution contour profile in the metal amount versus K2CO3 concentration dimension. The highest SHAP value of 0.85 indicates the pivotal role of reagent amounts, especially compared with the highest SHAP value 0.144 from metal concentration. See (Fig. 4D), where metal concentration SHAP values are plotted against the same secondary parameter K2CO3 concentration. The highest SHAP value from metal precursor amount in Fig. 4C suggest this parameter is crucial in the region where the K2CO3 concentration stays between 0.18 and 0.25 M and the metal amount is lower than 0.3 mmol. However, the distribution from experimental data (Fig. 2) indicates there is no single-phase generated when K2CO3 concentration is in the range from 0.15 to 0.3 M. The influence of K2CO3 concentration will be further discussed in the next section. The amounts of reagents are usually not considered in traditional experiments, but SHAP analysis results identify its important role in high-throughput synthesis. The sample volume and reaction scale for high-throughput synthesis are usually limited by the modular and protocol design. Studies have shown that different reaction scales can lead to changes in products in Suzuki-Miyaura reaction42. Considering the stochastic nucleation process, it is understandable that the nucleation induction probability depends on the reactor scale43.

K2CO3 concentration

We use K2CO3 as the precipitating agent in the co-precipitation synthesis method41. Within the range of 0.15 to 0.3 M concentration, all 12 samples have at least one secondary phase according to XRD phase identification. Therefore, we call this region a missing region due to the low probability of a successful single-phase synthesis. The distribution of other parameters in this missing region are shown in Fig. S3 in SI. The distributions of other parameters in the missing region fall within the same range as the rest of the data (Fig. 2), allowing us to rule out improper condition selection as the cause. Notably, this absence of single-phase outcomes occurs in the middle of the concentration range rather than at the extremes, making it particularly intriguing. To further verify that this was not a result of biased experimental conditions-such as coincidentally combining this K2CO3 range with other known unfavorable conditions (e.g., high metal precursor concentration)-we conducted 31 additional experiments (Fig. S4). The additional experimental conditions were selected to rule out the negative influence of other parameters on single-phase formation with low metal concentration (0.4, 1.2 M), small metal amount (0.3, 0.8 mmol) and low adding rate (2, 4 ml/min), which are otherwise favorable for single-phase formation according to the SHAP analysis. The K2CO3 concentrations of additional experiments all focus on the “missing region" (0.16, 0.2, 0.24, 0.28 M). Although only 5 additional single-phase samples are fabricated in this region, the single-phase ratio (9.1%) is still more than two times lower than the other regions (26.9%). We used these additional experiments to assess the calibration of our kernel model (Fig. 5). Of the 31 predictions made by the kernel model, 20 aligned with the ground truth, while 11 did not. Figure 5A shows the distribution of these disagreements (errors) across uncertainty levels, separated by class type. Uncertainty was calculated as the distance from 0.5 probability, with higher values indicating predictions closer to the decision boundary. We set the high uncertainty threshold at the 66th percentile (0.47). Overall, 54.5% of model errors occurred in regions of high uncertainty, suggesting reasonable calibration. For multi-phase samples, all disagreements (100%) occurred in high uncertainty regions, indicating the model appropriately expresses low confidence when it misclassifies multi-phase samples as single-phase. In contrast, single-phase misclassifications showed lower uncertainty, suggesting an area for potential model improvement, especially by the addition of more single-phase samples, thus, reducing the class imbalance in the training data. Figure 5B visualizes the model’s predictions of the new experiments from the missing region in the probability-uncertainty space. It is observed that most multi-phase errors (purple diamonds) cluster in the high uncertainty region near the decision boundary, while correct predictions (green circles and blue squares) generally exhibit higher confidence (probabilities much further from 0.5). The observed pattern confirms that the model’s expressed uncertainty correlates with error likelihood, particularly for multi-phase samples.

The high uncertainty threshold was set at the 66th percentile (0.47) A Distribution of kernel model’s disagreements (errors) in the missing region by uncertainty level and class. B Probability vs uncertainty of all samples in the missing region. 100% of the multi-phase errors (FP) occur in the high uncertainty region, while most correct predictions (TP/TN) cluster in the low uncertainty regions with higher confidence (probabilities further from 0.5), demonstrating appropriate model calibration.

The additional results confirm our initial assumption regarding the interesting influence of K2CO3 in co-precipitation synthesis. Figure 4E clearly shows that K2CO3 concentration in the missing region has only negative contributions regardless of the metal precursor concentration. As a weak base, K2CO3 can slow down the reaction, enabling more kinetic control, similar to the usage of a strong base NaOH with a complexation agent ammonia to synthesize dense and spherical hydroxides, as demonstrated for Li-ion battery cathode materials44. K2CO3 can provide hydroxide ion source and also buffer pH to ensure consistent pH. However, it has been reported that metal hydroxide particle growth occurs with dissolution even in a continuous stirred tank reactor (CSTR)44,45,46. Each crystallization and dissolution for different metal cations has a different equilibrium constant, generating a complex net of reactions. For K2CO3 as the precipitating agent, the net reaction includes the dissociation of potassium carbonate, hydrolysis of carbonate, followed by bicarbonate, and the simultaneous growth and dissolution of precipitants. (Table 2). Here Mn+ represents all metal cations in both A and B sites (Fe3+, Co2+ and Zn2+). It is reasonable to assume that with the presence of K2CO3 at a certain pH range favors a secondary phase metal hydroxide formation since the precipitations start to form at different pH for each metal cation species44.

Crystal nucleation and growth

Findings from the global SHAP analysis can be corroborated through the underlying thermodynamics and kinetics of crystal growth theory. Crystal growth theory has been established since Burton, Cabrera and Frank proposed their basic theory (i.e., BCF theory)47,48. BCF theory is widely applied in crystal growth studies from vapor, solution and melt systems, and provides a microscopic description on the mechanism of crystal nucleation, growth, and precipitation. For solution-based synthesis, the precipitate or crystal growth can be separated into two stages: nucleation and growth. The first step, nucleation, occurs rapidly as an induced supersaturation of the solution. Metastable polymorphs and metaphases form rapidly during this process due to the non-equilibrium state, and are dependent on the supersaturation ratio governed by the addition rate of K2CO3 and concentration of metal hydroxides. Our conclusions from the SHAP analysis aligns with this theory, with slower addition of K2CO3 resulting in a higher probability of single-phase growth, in agreement with slow K2CO3 addition resulting in a low supersaturation ratio favoring fewer nucleation sites. The second stage is the growth process. After the precipitate forms, metal cations in the solution are continuously absorbed onto the nuclei while some ions diffuse from the surface of nuclei, reaching a dynamic equilibrium. One metric used to describe the interaction between the particle and supernatant is zeta potential ζ, which depends on pH, ionic strength and ion properties49. For single-phase materials, a high zeta potential is preferred for controlled growth states for the increased repulsive electrostatic interactions50. SHAP analysis results align with the role of zeta potential in the crystal growth process. The missing region in the middle range of K2CO3 concentration may result from a low zeta potential since both low and high pH values can significantly increase the zeta potential (Fig. 4E)51. Similarly, lower precursor concentration with lower ionic strength can prompt a thicker double layer around the particles and lead to higher zeta potential, making the overall crystal nucleation and growth process easier to control. This explains the highest SHAP values only appear in the low metal concentration region (Fig. 4D).

We have discussed how each experimental parameter contributes to the prediction results in above SHAP analysis. All the parameters are related to the thermodynamics and kinetics in the precipitate formation process. Our SHAP value analysis qualitatively aligns with expectations of BCF theory. However, our SHAP analysis also reveals that there is a high degree of complexity. From the results, we can conclude that the complexity of mixed metal oxide synthesis systems rise as the number of metal cations and competing metastable phases increase. Computational studies including machine learning have made it possible to identify and downselect promising materials, but the difficulty of synthesizing the predicted composition or structure still exists in real-life laboratories. Therefore, we believe that surrogate synthesizability models focusing on material nucleation and growth should be developed with effective and generalized descriptors. Additionally, due to the inherent nature and randomness of crystal growth, we propose that synthesizability should be modeled as a probabilistic outcome. This study provides a preliminary inspiration for the synthesizability model pipeline for the community.

Limitations in high-throughput synthesis

During the synthesis exploration process, we notice several limitations and challenges in high throughput synthesis that need attention from us and the broader community. Questions including the flexibility of experiments, reaction considerations and chemical considerations have been thoroughly discussed previously52. Here, we use our high-throughput synthesis as an example to discuss additional layers to this question.

The reaction scale depends on the reactor size from given automation platform. It limits both the parameters we can change and the range within which we can adjust them. In our experiments, the maximum total volume of two precursor solutions is limited to 13 ml with 2 ml extra volume reaction space, since the modules in our Chemspeed platform require the usage of 15 ml tubes in each well. The total volume constrains the grid search space of the experimental parameters, in both the search parameter number and range of each parameter. The theoretical parameter space should have six dimensions including the two reagents’ concentrations and amounts (from which their volumes can be calculated), adding rate and precipitation order. The total volume limitation reduces one dimension in the parameter space. Therefore, we ultimately use a searching space with five dimensions. The range of volume also restricts other parameters’ searching range. If the reagent concentrations are too diluted, their volume can easily go over the total volume limit of our experimentation. Also, the volume limit regulates the amount of final products, which can cause trouble for continuous characterization measurement.

Our results further demonstrate that the reagent amount proves to be an important feature in resulting the final product phase (Fig. 4). As discussed in the previous section, it is understandable that a smaller reaction amount can favor one phase formation in a fixed reactor space. When the nucleation process is considered as a stochastic event, the probability of forming nuclei can be modeled by a Poisson process, which depends on the volume of available space53. The high-throughput synthesis reactor can be considered as a closed system with a fixed amount of reagents. Theoretical studies have shown that crystallization in a closed system would go through a bulk metastable phase43. Compared with open systems in inorganic chemistry synthesis, a flow chemistry setup like a continuous stirred tank reactor can be hard to achieve for high-throughput experiments. The fixed reactor size and closed system design constrain how much we can explore experimental conditions with high-throughput experimentation. Here we want to emphasize that this Poisson-based interpretation is conceptual. Experimental validation by systematically varying reactor volume is beyond the scope of this study. Our empirical observations are consistent with this model, but direct experimental tests of volume-dependent nucleation probability remain a task for future work.

Conclusion

In this study, Fe2(ZnCo)O4 spinel samples were synthesized via a co-precipitation method on the Chemspeed automation platform, and their phases were verified using high-throughput X-ray diffraction. By systematically varying five experimental parameters to explore single-phase formation conditions, we integrated a kernel classification model with a novel application of global SHAP analysis to pinpoint the experimental features most critical to synthesizability. These findings reveal that reagent amounts exert a stronger influence than precursor concentrations and underscore the advantages of combining automated experimentation with interpretable machine learning. The global SHAP analysis is aligned with the classic BCF theory as K2CO3 plays an importance role in both crystal nucleation and growth stages by influencing phase stability and growth thermodynamics. SHAP analysis highlights the complexity of single-phase formation amid competing phases. Our approach offers a promising guidance for high-throughput inorganic material synthesis, aligning both theoretical insights and practical expectations.

Methods

HT synthesis

Fe2(ZnCo)O4 spinel was synthesized using Chemspeed Swing XL platform with co-precipitation method as shown in Fig. 1. Firstly, highly concentrated metal precursor stock solutions (2M) and precipitating agent K2CO3 (1M) were manually prepared and placed in Chemspeed platform. Metal precursors including ferric chloride (99+%, Thermo Scientific), zinc nitrate (98%, Sigma-Aldrich)and cobalt nitrate (≥97.0%, Sigma-Aldrich) were used and mixed with the fixed ratio of 4:1:1. Potassium carbonate (Certified ACS, Fisher Chemical) was used as a precipitating agent to adjust pH for the formation of mixed metal hydroxides/oxides. The amount of total metal precursor and the amount of K2CO3 were always kept at a ratio of 1:1.541. Secondly, both metal precursors and K2CO3 solutions were diluted to different concentrations respectively. The diluted solutions were drawn from the bottom of each tube and then dispensed into the tube for complete dilution. Both reagents were also moved to shaking zone for a 5-minute shaking with 100 rpm. Thirdly, the precipitating agents (or metal precursors) were added at controlled rates into metal precursors (or precipitating agents) to form precipitants while shaking at 100 rpm. The precipitant-solution mixtures were shaking for another 10 min and then aging for 8 h in the Chemspeed. Finally, the precipitant-solution mixed samples were centrifuged and rinsed with DI water for three cycles. All the above operations were carried out by Chemspeed platform with five parameters controlled (Table 1). After automated synthesis, the powders were tried at 80∘ in a vacuum oven and then transferred in a box furnace for 2h annealing at 1000∘. After ball milling treatment, 0.006 ml nafion (5%), 0.03 ml isopropanol and 0.06 ml water were added for each 1 mg fine powder to prepare inks for high-throughput XRD measurement.

HT-XRD measurement

The inks in section “HT synthesis” were drop cast on silicon wafers for high-throughput XRD measurement. X-ray diffraction (XRD) measurements were conducted using a Bruker D8 Discover diffractometer, equipped with a Cu microfocus X-ray source (λ = 0.15418 nm) operating at 50 kV and 1000 μA with a 0.4 mm slit (Fig. S1 in the supporting information). XRD data were collected in the 2θ range from 5∘ to 80∘, with a step size of 0.04∘ and a dwell time of 1.2 seconds per increment. The diffraction patterns were recorded in 1D mode at ambient temperature, with the detector aperture set to 62 mm x 20 mm. The Si wafer with samples was placed on the measuring stage, and the coordinates of the first five samples were determined using the overhead camera integrated into the diffractometer. A script was then employed to apply translation and 2D rotation to calculate the remaining samples’ coordinates.

Kernel learning

With a kernel, data can be nonlinearly mapped from original input space \({{{\mathcal{R}}}}^{D}\) onto a feature space \({{{\mathcal{R}}}}^{F}\), with input and feature dimension D and F, respectively. For transformation \(\phi :{{{\mathcal{R}}}}^{D}\to {{{\mathcal{R}}}}^{F}\), a kernel function is defined as

where 〈⋅〉F is the inner product. This means that a kernel is required to work on scalar products of type xTy that can be translated into scalar products ϕ(x)Tϕ(y) in the feature space. On the other hand, as long as F is an inner product space, the explicit representation of ϕ is not necessary and the kernel function K(x, y) can be directly evaluated as54. This is also known as the kernel trick33, removing the need for the explicit evaluation of the computationally expensive transformation ϕ. Interestingly, many algorithms for regression and classification can be reformulated in terms of the kernelized dual representation, where the kernel function arises naturally32. The transformation can be done implicitly by the choice of the kernel. In specific, the kernel encodes a real valued similarity between inputs (i.e., experimental conditions) x and y. The similarity measure is defined by the representation of the system which is then used in combination with linear or non-linear kernel functions such as Gaussian, Laplace, polynomial and sigmoid kernels. Alternatively, the similarity measure can be encoded directly into the kernel, leading to a wide variety of kernels in the chemical domain55. In this setting, the defined binary kernel function needs to be non-negative, symmetric and point-separating (i.e. \(\langle x,x{\prime} \rangle =0\ \iff \ x=x{\prime}\)). Given the numerical experimental features, we define the distance (d) between the parameter choices in experiments x and y as the l2 norm (Euclidean distance):

Here, xi and yi represent the values of the i-th parameter in experiments x and y, respectively, and n is the total number of experimental parameters.

Kernel-based support vector classifier

We used a support vector classifier (SVC) with a custom distance-based kernel (Eq. (4)) to predict single-phase spinel formation, and evaluated its performance using leave-one-out cross-validation (LOOCV). At each LOOCV iteration, one sample experiment was reserved for testing, while the remaining samples formed the training set. We first scaled each feature into the range [0, 1] using a transformation. Then the pairwise Euclidean distances among the training samples (Xtrain) and between each test sample and the training set were calculated. These distance matrices replaced the original input features, allowing the SVC to learn a classification boundary based on the distance profiles in the higher dimensional space. At inference, we obtained both a class label (single-phase vs. multi-phase) and the decision-function score. The latter is the absolute distance from the decision boundary, which was normalized to serve as an uncertainty measure.

Synthetic design space exploration

We defined a parameter grid to systematically explore the experimental design space. The experimental variables include metal concentration, which takes values from 0.2 to 3.0 in increments of 0.2; metal amount, ranging from 0.2 to 2.0 with a step of 0.2; K2CO3 concentration, varying from 0.1 to 0.45 in steps of 0.05; rate, an integer parameter spanning from 1 to 18; and precipitation order, a binary parameter (0 or 1). The Cartesian product of these parameters yields 43200 unique experimental conditions. For each sample point, our kernel classifier is applied to estimate the uncertainty, measured as the deviation from a probability of 0.5, where higher values signify predictions that are nearer to the decision boundary. This approach enables efficient in-silico navigation of the high-dimensional design space, while providing quantitative uncertainty estimates for the phase predictions.

Performance metrics

To evaluate the performance of the binary classifier, we use two common metrics: accuracy and the area under the receiver operating characteristic (AUROC). Accuracy is calculated as the proportion of correct predictions (true positives and true negatives) out of the total number of predictions:

where TP is the number of true positives, TN is the number of true negatives, FP is the number of false positives, and FN is the number of false negatives. AUROC measures the ability of the classifier to rank positive instances higher than negative ones, accounting for different discrimination thresholds. Formally, it is given by the integral of the true positive rate (TPR) as a function of the false positive rate (FPR):

where \({{\rm{TPR}}}=\frac{TP}{TP+FN}\) and \({{\rm{FPR}}}=\frac{FP}{TN+FP}\).

Data availability

All data used to produce results in this study are publicly available in the following GitHub repository: https://github.com/AccelerationConsortium/gremlin.

Code availability

All used to produce results in this study are publicly available in the following GitHub repository: https://github.com/AccelerationConsortium/gremlin.

References

Xu, Y. et al. Rational design of metal oxide catalysts for electrocatalytic water splitting. Nanoscale 13, 20324–20353 (2021).

Tyndall, D. et al. Understanding the effect of mxene in a tmo/mxene hybrid catalyst for the oxygen evolution reaction. npj 2D Mater. Appl. 7, 15 (2023).

Li, H., Liu, H., Qin, Q. & Liu, X. Balair double mixed metal oxides as competitive catalysts for oxygen evolution electrocatalysis in acidic media. Inorg. Chem. Front. 9, 702–708 (2022).

Faid, A. Y., Barnett, A. O., Seland, F. & Sunde, S. Nicu mixed metal oxide catalyst for alkaline hydrogen evolution in anion exchange membrane water electrolysis. Electrochim. Acta 371, 137837 (2021).

Choi, H. et al. Boosting eco-friendly hydrogen generation by urea-assisted water electrolysis using spinel m 2 geo 4 (m= fe, co) as an active electrocatalyst. Environ. Sci.: Nano 8, 3110–3121 (2021).

Gu, X.-K., Camayang, J. C. A., Samira, S. & Nikolla, E. Oxygen evolution electrocatalysis using mixed metal oxides under acidic conditions: Challenges and opportunities. J. Catal. 388, 130–140 (2020).

Gawande, M. B., Pandey, R. K. & Jayaram, R. V. Role of mixed metal oxides in catalysis science-versatile applications in organic synthesis. Catal. Sci. Technol. 2, 1113–1125 (2012).

Zhou, P. et al. Machine learning accelerates the screening of efficient metal-oxide catalysts for photocatalytic water splitting. Mater. Res. Bull. 179, 112956 (2024).

Jia, X. & Li, H. Machine learning enabled exploration of multicomponent metal oxides for catalyzing oxygen reduction in alkaline media. J. Mater. Chem. A 12, 12487–12500 (2024).

Chang, J. et al. Efficient closed-loop maximization of carbon nanotube growth rate using Bayesian optimization. 10, 9040 (2020).

MacLeod, B. P. et al. Self-driving laboratory for accelerated discovery of thin-film materials. Sci. Adv. 6, eaaz8867 (2020).

Lu, J.-M., Pan, J.-Z., Mo, Y.-M. & Fang, Q. Automated intelligent platforms for high-throughput chemical synthesis. Artif. Intell. Chem. 2, 100057 (2024).

Zeni, C. et al. A generative model for inorganic materials design. Nature 639, 624–632 (2025).

Pyzer-Knapp, E. O. et al. Accelerating materials discovery using artificial intelligence, high performance computing and robotics. npj Comput. Mater. 8, 1–9 (2024).

Maqsood, A., Chen, C. & Jacobsson, T. J. The future of material scientists in an age of artificial intelligence. Adv. Sci. 11, 2401401 (2024).

Steinmann, S. N., Wang, Q. & Seh, Z. W. How machine learning can accelerate electrocatalysis discovery and optimization. Mater. Horiz. 10, 393–406 (2023).

Sutton, C. et al. Identifying domains of applicability of machine learning models for materials science. Nat. Commun. 11, 4428 (2020).

Jain, A. Machine learning in materials research: developments over the last decade and challenges for the future. Curr. Opin. Solid State Mater. Sci. 33, 101189 (2024).

Bartel, C. J. et al. A critical examination of compound stability predictions from machine-learned formation energies. npj Comput. Mater. 6, 97 (2020).

Choubisa, H. et al. Crystal site feature embedding enables exploration of large chemical spaces. Matter 3, 433–448 (2020).

Chen, J. et al. Navigating phase diagram complexity to guide robotic inorganic materials synthesis. Nat. Synth. 3, 606–614 (2024).

Mannan, S., Bihani, V., Krishnan, N. A. & Mauro, J. C. Navigating energy landscapes for materials discovery: Integrating modeling, simulation, and machine learning. Mater. Genome Eng. Adv. 2, e25 (2024).

Jansen, M. A concept for synthesis planning in solid-state chemistry. Angew. Chem. Int. Ed. 41, 3746–3766 (2002).

Sun, W. et al. The thermodynamic scale of inorganic crystalline metastability. Sci. Adv. 2, e1600225 (2016).

Hofmann, T., Schölkopf, B. & Smola, A. J. Kernel methods in machine learning. Ann. Stat. 36, 1171–1220 (2008).

Workman Jr, J. J. A review of calibration transfer practices and instrument differences in spectroscopy. Appl. Spectrosc. 72, 340–365 (2018).

Qin, X., Lysecky, S. & Sprinkle, J. A data-driven linear approximation of HVAC utilization for predictive control and optimization. IEEE Trans. Control Syst. Technol. 23, 778–786 (2014).

Chew, A. K., Afzal, M. A. F., Chandrasekaran, A., Kamps, J. H. & Ramakrishnan, V. Designing the next generation of polymers with machine learning and physics-based models. Mach. Learn.: Sci. Technol. 5, 045031 (2024).

Nelles, O. Nonlinear dynamic system identification. In Nonlinear System Identification, 547–577 (Springer, 2001).

Schölkopf, B. et al. Learning with kernels: support vector machines, regularization, optimization, and beyond (MIT Press, 2002).

Shawe-Taylor, J. et al. Kernel methods for pattern analysis (Cambridge University Press, 2004).

Muller, K.-R., Mika, S., Ratsch, G., Tsuda, K. & Scholkopf, B. An introduction to kernel-based learning algorithms. IEEE Trans. Neural Netw. 12, 181–201 (2001).

Soentpiet, R. et al. Advances in kernel methods: support vector learning (MIT Press, 1999).

Czekaj, T., Wu, W. & Walczak, B. About kernel latent variable approaches and SVM. J. Chemom.: A J. Chemometr. Soc. 19, 341–354 (2005).

Cover, T. M. Geometrical and statistical properties of systems of linear inequalities with applications in pattern recognition. IEEE Trans. Electron. Comput. 326–334 (1965).

Cao, D.-S. et al. Exploring nonlinear relationships in chemical data using kernel-based methods. Chemometr. Intell. Lab. Syst. 107, 106–115 (2011).

Mika, S., Ratsch, G., Weston, J., Scholkopf, B. & Mullers, K.-R. Fisher discriminant analysis with kernels. In Neural Networks for Signal Processing IX: Proc. 1999 IEEE Signal Processing Society Workshop (cat. no. 98th8468), 41–48 (IEEE, 1999).

Braun, M. L., Buhmann, J. M. & Müller, K.-R. On relevant dimensions in kernel feature spaces. J. Mach. Learn. Res. 9, 1875–1908 (2008).

Lundberg, S. M. & Lee S.-I. A unified approach to interpreting model predictions. Adv. Neural Inf. Process. 30 (2017).

Lundberg, S. M. et al. From local explanations to global understanding with explainable ai for trees. Nat. Mach. Intell. 2, 56–67 (2020).

Biesuz, M., Spiridigliozzi, L., Dell’Agli, G., Bortolotti, M. & Sglavo, V. M. Synthesis and sintering of (mg, co, ni, cu, zn) o entropy-stabilized oxides obtained by wet chemical methods. J. Mater. Sci. 53, 8074-8085 (2018).

Schmink, J. R., Bellomo, A. & Berritt, S. Scientist-led high-throughput experimentation (hte) and its utility in academia and industry. Aldrichimica Acta 46, 71–80 (2013).

Sun, W. & Ceder, G. Induction time of a polymorphic transformation. Cryst. Eng. Comm. 19, 4576–4585 (2017).

Mugumya, J. H. et al. Synthesis and theoretical modeling of suitable co-precipitation conditions for producing nmc111 cathode material for lithium-ion batteries. Energy Fuels 36, 12261–12270 (2022).

Van Bommel, A. & Dahn, J. Analysis of the growth mechanism of coprecipitated spherical and dense nickel, manganese, and cobalt-containing hydroxides in the presence of aqueous ammonia. Chem. Mater. 21, 1500–1503 (2009).

Wang, D., Belharouak, I., Koenig, G. M., Zhou, G. & Amine, K. Growth mechanism of ni0. 3mn0. 7co3 precursor for high capacity li-ion battery cathodes. J. Mater. Chem. 21, 9290–9295 (2011).

Burton, W.-K., Cabrera, N. T. & Frank, F. The growth of crystals and the equilibrium structure of their surfaces. Philos. Trans. R. Soc. Lond. Ser. A, Math. Phys. Sci. 243, 299–358 (1951).

Uwaha, M. Introduction to the bcf theory. Prog. Cryst. Growth Charact. Mater. 62, 58–68 (2016).

Duan, J., Wang, J., Guo, T. & Gregory, J. Zeta potentials and sizes of aluminum salt precipitates–effect of anions and organics and implications for coagulation mechanisms. J. Water Process Eng. 4, 224–232 (2014).

Adair, J., Suvaci, E. & Sindel, J. Surface and colloid chemistry (2001).

Serrano-Lotina, A. et al. Zeta potential as a tool for functional materials development. Catal. Today 423, 113862 (2023).

Christensen, M. et al. Automation isn’t automatic. Chem. Sci. 12, 15473–15490 (2021).

Kashchiev, D., Verdoes, D. & Van Rosmalen, G. Induction time and metastability limit in new phase formation. J. Cryst. Growth 110, 373–380 (1991).

Boser, B. E., Guyon, I. M. & Vapnik, V. N. A training algorithm for optimal margin classifiers. In Proc. Fifth Annual Workshop on Computational Learning Theory, 144–152 1992).

Schütt, K. T. et al. Machine learning meets quantum physics. Lecture Notes in Physics (2020).

Acknowledgements

This research was undertaken thanks in part to funding provided to the University of Toronto’s Acceleration Consortium from the Canada First Research Excellence Fund: Grant number - CFREF-2022-00042. The authors also gratefully acknowledge the partial financial support from Materials for Clean Fuel (MCF) Challenge program at National Research Council of Canada (NRC). The authors acknowledge support from the Alliance for AI-Accelerated Materials Discovery (A3MD). The authors acknowledge that Fig. 1 is partially created in BioRender. Liu, Y. (2025) https://BioRender.com/o88ttzd.

Author information

Authors and Affiliations

Contributions

Y.L. performed the experiments and analyzed the results. M.A. performed machine lerning in the work. Y.L. and M.A. drafted the manuscript. R.B. and J.H. supervised the project and provided critical revisions to the manuscript. All authors reviewed and approved the final version of the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Materials thanks Shengwei Deng and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Primary Handling Editors: Jet-Sing Lee. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Liu, Y., Ansari, M., Black, R. et al. Kernel learning assisted synthesis condition exploration for ternary spinel. Commun Mater 6, 145 (2025). https://doi.org/10.1038/s43246-025-00867-3

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s43246-025-00867-3