Abstract

Earth system models, or simulators, are foundational for projecting climate change impacts, but their computational expense limits the number and diversity of simulations available. Machine learning-based emulators, statistical surrogates trained on simulator outputs, can replicate components of climate models at orders-of-magnitude lower cost, enabling ensembles and interpolation across scenarios. We argue that the next phase of climate modeling hinges on closer collaboration between simulator and emulator communities. We outline three priorities: (1) co-design of simulators and emulators so that experimental design, diagnostics, and data products support training, evaluation, and targeted simulation; (2) shared, machine learning-ready benchmarks with data partitions and metrics that emphasize physical fidelity; and (3) treating emulators as reliable software components with interfaces, documentation, and deployment pathways for sensitivity analyses, scenario exploration, and uncertainty decomposition. This perspective envisions emulators not as statistical shortcuts, but core tools that accelerate the pace of climate science.

Similar content being viewed by others

Introduction

As climate change intensifies, so does the need for fast, reliable, and probabilistic projections of future climate, especially for impacts assessments. Climate simulators or Earth system models are complex physical models that represent interactions between Earth’s atmosphere, oceans, land, and ice. They are foundational tools for understanding future climate1,2. These simulators produce the projections that inform policy decisions and shape the global response to climate change3. Despite their increasing accuracy and physical consistency over the past decades, these models are computationally intensive, often requiring weeks or even months to produce a projection for a single climate scenario4,5,6. This high computational cost limits their utility and places discretion of how they are applied to a limited few specialists. Their expense leads to sparsely populated projections–only handfuls of futures are considered with only moderately-sized ensembles to sample a highly chaotic underlying weather system. Many of the questions that scientists and decision-makers have are left unaddressed4,7.

Climate emulators offer a promising alternative–or better yet, complement–to this computational bottleneck8,9,10. In this perspective, we focus specifically on emulators designed for use as surrogate models for Earth system components: statistical or machine learning-based models trained to reproduce the output of climate simulators given the same inputs. By learning from existing simulations, emulators approximate the behavior of a limited set of measures from complex models, but do so millions to billions of times faster with high accuracy and flexibility11,12,13. Emulators are enabling scientists to quickly run experiments14, produce extensive ensembles13,15, and supplement missing projections16,17. Emulators are transforming the relationship that scientists (and some other stakeholders) have with climate models: what was once a static set of hard-won results from a simulator can now be probed and queried for new scientific insights.

Over the past decade, climate emulation has evolved from simple approximations (e.g., reduced-order, simplified-physics) to complex, machine learning (ML)-based emulators [e.g.,18]. Advances in model architectures, training techniques, and open-source tools have transformed climate emulation, enabling more accurate emulation and greater adaptability across climate tasks while maintaining computational efficiency19. Machine learning has proven effective in modeling both space and time, capturing high-dimensional nonlinear relationships of many Earth systems components1,19.

The rise of ML-based emulators is part of a broader shift in climate science from process-based modeling toward data-driven insights. Traditional climate models are effective because they are rooted in physical principles and decades of model development, model-versus-observation evaluation, and model intercomparison, including CMIP20,21. Yet limited resolution and approximate physical interactions will always limit our ability to gain new insight from these models alone5,22. Emulators, in contrast, are shifting the bottleneck of scientific discovery from computation to training data–potentially drawn from both simulators and observations. The result is a dynamic relationship between simulators and emulators: simulators generate data that trains emulators, and emulators, in turn, help target where simulation efforts are most needed.

This paper offers a present perspective on how to rewire climate modeling for the age of artificial intelligence (AI) and machine learning23, particularly using ML emulators. Our central focus is a vision for the next phase of climate modeling, based on our own experiences in building and improving simulators and emulators. We argue that the future of climate modeling will depend not only on the development of better models, but on better integration between simulators and emulators, between data scientists and climate scientists, and between communities in hosting and sharing data. In this vision, emulators are not only a way to accelerate scenario and impacts exploration, but also a way to make simulators more interactive: what was once a static set of hard-won simulator outputs can become a tool that scientists can probe, stress-test, and iterate on to generate and evaluate new hypotheses. We propose that the co-design of simulators and emulators will allow for more robust, accurate, and interactive climate analysis. We emphasize the need for benchmark datasets, open-sourced deployment, and a shared data infrastructure to unify community efforts in developing better climate models. This perspective does not focus on specifics of ML architectures, but rather provides a vision of how the use of ML emulators by climate scientists can be integrated into existing modeling workflows through shared data standards, benchmarks, and software practices, enabling broader participation and more effective scientific analysis. By reframing emulators not just as statistical shortcuts but as tools for scientific acceleration, we aim for a next stage of climate modeling: faster, more flexible, and better equipped to inform decisions (Box 1).

Machine learning in climate emulation

Machine learning in climate emulation

Machine learning has become central to climate emulation due to its ability to capture high-dimensional, nonlinear relationships between simulator inputs and outputs. Traditional emulation approaches, such as linear pattern scaling24 and reduced order models6,25 remain essential for their simplicity, accuracy, transparency, and ease of interpretation, particularly when training data is limited. Gaussian Process (GP) emulators, a type of statistical ML emulator, are also widely used, offering a flexible, probabilistic framework that naturally quantifies uncertainty26,27, although they can be computationally intensive for very large or high-dimensional datasets. Deep learning-based approaches offer flexibility and computational efficiency while maintaining the ability to model complex phenomena, resulting in fast and accurate approximations of spatio-temporal dynamics28. Unlike GP models, most deep learning architectures do not inherently provide uncertainty estimates or interpretability, although ensemble, Bayesian, and dropout-based methods can approximate uncertainty and improve transparency when designed carefully. In this perspective, we primarily use “ML emulators” to refer to deep learning-based approaches, while noting that many of the considerations discussed here, such as training data requirements, evaluation, and integration with simulators, also apply to statistical machine learning methods, including Gaussian Processes.

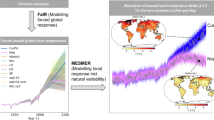

ML-based emulators substantially expand the scale and scope at which established forms of climate analysis can be performed, as rapid, low-cost projections of complex physical processes are possible. These tools allow researchers to run thousands of perturbation experiments to quantify sensitivity to different forcings, perform probabilistic forecasts, and rigorously explore scenario and model uncertainties for high-dimensional and complex tasks13,29. They also allow for a better understanding and decomposition of uncertainty stemming from various sources, including model parameters, emulator architectures, and forcing pathways, by enabling large ensembles and systematic perturbation experiments. This capability is illustrated in Fig. 1, which uses a large ensemble of potential volcanic futures13, to quantify the additional uncertainty in global temperature projections attributable to volcanism. Similar approaches can be applied to other forcing uncertainties, structural model differences, and internal variability. While traditional emulation approaches including reduced-complexity and spatial climate emulators have long enabled sensitivity studies and scenario exploration [e.g.,30,31,32], ML-based emulators build on this foundation, extending these capabilities to higher-dimensional and complex settings, capturing spatiotemporal structure and dependencies that were previously impractical to represent.

Figure 11 from 13 showcasing the capability of emulators to decompose global mean surface temperature projection uncertainties beyond what is plausible with large simulator ensembles. Panels a, b show the EBM-KF emulator uncertainty projections for temperature change, which adds the ability to quantify uncertainty due to volcanic activity in addition to the uncertainties quantified by the equivalent simulator projections from the CMIP621 projections in Panels c, d75,76. Source: Nicklas, J.M., Fox-Kemper, B., Lawrence, C.: Efficient Estimation of Climate State and Its Uncertainty Using Kalman Filtering with Application to Policy Thresholds and Volcanism. Journal of Climate 38(5), 1235-1270 (2025). ⓒAmerican Meteorological Society. Used with permission.

The success of ML in climate emulation is demonstrated by a growing number of specialized models that achieve state-of-the-art performance. ACE11 effectively emulates high-dimensional weather and climate fields from the FV3GFS weather simulator33 using a deterministic neural operator-based architecture, and improves upon baseline models in both accuracy and conservation of natural laws. Emulators, such as CPMGEM34,35 and ISEFlow36, accurately emulate components of the Earth system. Other emulators, such as BGC-UNet37, DiffESM38, and emulators presented in ClimART39, all showcase similar results: ML emulators routinely perform well when working with large complex datasets, often achieving higher accuracy, faster inference, and better generalization than other methods in a large range of tasks.

Key challenges remain. ML models typically fail to generalize beyond their training distributions, especially when extrapolating to previously unseen forcings, extreme events, or overshoot scenarios40. Interpretability and physical consistency are crucial concerns, as ML emulators are often black boxes, in need of verification that they obey the physical laws they are meant to emulate. Hybrid modeling seeks to address this, incorporating physical conservation laws13, dimensional analysis41, and constraints directly into the architectures or loss functions42,43, but these approaches are not yet standard practice.

The emergence of ML emulators is a methodological and structural shift that compels scientists to rethink what models are, how they are used, and who can run experiments with them. The full potential of emulators will be achieved not just through technical advancement, but through the intentional co-design of simulators, emulators, and observations, the democratization and standardization of data, and the improvement of emulator software for integration into workflows across disciplines.

Vision: climate modeling in the AI era

Emulators, combined with the long-standing challenges in climate modeling, present an opportunity to reimagine the design, development, and deployment of climate models. We outline three key developments soon to be essential for climate modeling in the AI era: (1) the co-design of simulators and emulators as part of an integrated modeling ecosystem, (2) the development of shared data infrastructure and standardized benchmarks to support reproducibility and scalability, and (3) the software improvements for deeper integration of emulators into core scientific workflows, enabling interactive and decision-relevant climate analysis. Each of the three shifts will accelerate and advance discovery in climate science through interoperability, transparency, and collaboration across disciplinary boundaries.

Simulator and emulator co-design

As emulators and simulators improve, there is ample opportunity for strategic coordination and shared design. Today, emulators and simulators are often developed independently, advancing in parallel toward the same broad goals. While these separate efforts have enabled progress within each domain, they miss opportunities to accelerate insight. Emulators rely on simulation outputs for model training, and simulators can benefit from the rapid experimentation, uncertainty quantification for comparison to observations, complementary data assimilation approaches, integration with new ML-based analysis tools, and scenario analysis that emulators can provide. By moving toward the co-design of emulators and simulators, both communities can accelerate scientific discovery, improve model performance, and build a more unified climate modeling ecosystem.

Co-design refers to the intentional and collaborative development of both simulators and emulators, with each informing the architecture, data outputs, and experimental protocols of the other. Rather than solely developing emulators downstream from simulators using static simulator outputs, co-design encourages alignment around shared workflows, experimental setups, and data sharing, allowing both communities to benefit from earlier integration.

One of the clearest opportunities for co-design lies in the careful and intentional design of a simulation experimental protocol. Because of their high computation cost, simulators are often run under a sparse set of scenarios chosen to answer specific scientific questions or to inform policy decisions20,21,44,45,46,47. While these simulation choices are grounded in valid scientific goals, greater coordination could allow them to simultaneously support more robust emulators. For example, while scenarios like SSP5-8.5 may be considered unlikely from a policy perspective [e.g., refs. 48,49], high-end emissions simulations are invaluable for developing emulators that are more robust up toward these upper bounds. Through intentional co-design, simulation protocols could be optimized not only to address scientific and political priorities, but also to ensure broad and balanced coverage of the climate response. Emulators can more effectively “fill in the gaps” or impute between scenarios or provide higher frequency or resolution, enabling accurate interpolation (rather than extrapolation) across socioeconomic pathways. In turn, this could reduce the need for densely populated ensembles of middle-of-the-road simulations or high-resolution redundancy, alleviating storage and compute demands while still supporting robust, decision-relevant insights.

Emulators can also play a critical role in “bringing it all together” by expanding the scope of available scenarios and reducing the data and computational burdens associated with traditional simulators in scenario selection and design50. As discussed by ref. 18, emulators can efficiently enhance output for impacts assessment, and the emulators described here can extend between the limited scenario sets of CMIP simulators, providing high-frequency, multi-variable outputs and enabling exploration of hypothetical or policy-relevant futures within the agreed-upon experiment protocols. They can also support ScenarioMIP-style design by rapidly screening candidate pathways and diagnostics to prioritize the small set of additional simulator runs that most reduce uncertainty in decision-relevant outcomes. When paired with targeted simulator experiments designed to improve emulator fidelity, which can be specified by the emulator itself, the simulator-emulator co-development process can make climate modeling both broader and more efficient while supporting more inclusive and policy-relevant science.

Progress in emulation depends on continued advances in physical Earth system modeling. Co-design, therefore, involves not only aligning data and workflows between emulators and simulators but also maintaining active development of process representations, parameterizations, and resolution within simulators. Each improvement in simulator fidelity produces new, richer training data for emulators, while emulator analyses can identify where simulator refinements will most reduce uncertainty or computational cost. This iterative exchange ensures that both communities advance together, linking process-level understanding with data-driven exploration.

As shown in Fig. 2, the co-design of simulators and emulators has the potential to create a mutually beneficial feedback loop where fast emulator outputs inform simulator development and carefully curated simulator outputs promote accurate emulators. By analyzing emulator outputs, simulator developers can diagnose model sensitivities and structural shortcomings, learning more about their own models without incurring high computational costs. Emulators sharpen plans for scenario projection experiments by identifying regions of input space that require denser sampling and avoiding redundancy. However, this feedback loop should be treated as conditional on where the emulator is reliable: co-design should include routine checks for out-of-distribution inputs and regime shifts (e.g., forcing pathways or extremes not represented in training), and the use of calibrated uncertainty or diagnostics to flag when targeted simulator runs are needed to verify and extend the emulator’s training set.

A conceptual overview of simulator-emulator co-design. Blue arrows indicate information and resources that simulators typically provide to emulators, and orange arrows indicate what emulators can provide back to simulators. Some arrows are bidirectional, reflecting iterative feedback loops where both systems inform and refine one another (e.g., experimental design, data processing, and scientific analysis). This mutual exchange enables more targeted experiments, improved emulator training, and more efficient and informative climate modeling workflows.

Emulators also support efficient parameter estimation through efficient ensembling and sensitivity testing, avoiding overtuning to observations. Furthermore, emulators excel at decomposing sources of uncertainty, such as uncertainty arising from scenarios, parameters, or the emulator itself, revealing where simulator improvements will most reduce predictive uncertainty13,51,52. This uncertainty analysis enables developers to prioritize research efforts on processes that matter most for decision-relevant outputs. Large observational data, such as from recent satellites, such as SWOT53, is difficult to compare to simulators due to limited data transfer between sites–however, comparing an emulator to both the simulator and the observations requires little data transfer. Taken together, this co-design approach will accelerate both emulator accuracy and simulator fidelity, enabling a more integrated and efficient climate modeling ecosystem.

Shared data infrastructure and benchmarking

The future of climate emulation relies on both the quality and accessibility of available data. The data produced by climate simulators is a valuable resource, not just for understanding the Earth system but for enabling the broader scientific community to build new tools and capabilities. As interest in ML-based emulators grows, there is an opportunity to increase the value of simulator outputs by making them more accessible and standardized for downstream tasks, including emulator training, evaluation, and deployment.

Many simulator datasets are publicly available, but even small differences in file structure, variable naming, metadata conventions, and units make them difficult to use for ML workflows, especially when combining models across intercomparison projects. By improving accessibility through clear documentation, formatting consistency, and intuitive tooling for access, these datasets can serve an even broader community of users, expanding their scientific impact. The CMIP7 Fast Track Data Request process20 is nearly complete, and the Earth System Grid Federation has released a new system for sharing CMIP data; ease of machine learning was not a major aspect of either process.

Great strides are already being made to expand the usability of simulator outputs. CMIP has a long history in standardizing data outputs with common data formats and data sharing54. Projects like the Earth System Grid Federation (ESGF) MetaGrid55 have made CMIP data more accessible by providing interfaces to CMIP projections based on standardized variable names, experiments, and model runs. Ice sheet modelers in ISMIP46, as well as the ice sheet emulator Emulandice27, have also demonstrated the power of well-structured, accessible datasets, as their work made it possible for new emulator developers, such as ourselves, to quickly enter the field. However, there is still room to build on these efforts to make simulator outputs more directly usable for machine learning applications. As advocated by CMIP, these should include consistent CF-compliant variable naming and timestamp conventions56, clearly declared units and coordinate systems, and metadata schemas that support automatic parsing57. Model intercomparison projects should implement validation scripts or schema tests that check that submitted model outputs conform to these standards before accepting model runs, and organize working groups to ensure that these standards are met. Establishing such standards would reduce friction in emulator development and lower the barrier to entry, helping expand participation, attract new researchers to climate science, and drive faster iteration of climate emulators.

Benchmarking will be equally vital to transforming the capabilities of current emulators. A benchmark refers to a publicly available dataset accompanied by a clearly defined task, standardized data splits (e.g., training, validation, and testing), and a set of evaluation metrics58. Benchmarks serve as a shared reference point that allows consistent and reproducible comparison between architectures and methods. Emerging efforts, such as ClimateBench59, ClimART39, ClimateSet60, and the CMIP Rapid Evaluation Framework61 represent early steps to creating shared data benchmarks. However, the number of available benchmarks remains small and often lacks sufficient simulations for robust ML training. For example,62 found that simple linear pattern-scaling models achieved comparable or superior performance to ML approaches on ClimateBench, illustrating that limited training data can lead ML emulators to overfit and that traditional methods remain valuable alongside newer ML techniques. In order to create better emulators, we will need more high-quality benchmarks that evolve over time, incorporating more observations, newer generations of models, and different sources of projection uncertainties.

Unlike weather forecasting, which focuses on a small set of well-defined tasks and has globally consistent data sharing of observations, climate emulation spans a wide and diverse range of scientific objectives, from temperature and precipitation projections to ice sheet dynamics, ocean heat transport, and biogeochemical cycles. As such, a single universal benchmark is unlikely to fulfill the needs of the broader community. Instead, we propose a more distributed approach: each emulator development effort should make its training data public and benchmark-quality. This means clearly defining the predictive task, providing predetermined, standardized train-test splits, and documenting relevant input variables and forcings. Crucially, benchmark evaluation metrics must be chosen carefully, as they capture the important aspects of performance beyond prediction error. Depending on the emulation task, benchmarks may also include tests of generalization or extrapolation, assessments of energy or mass conservation, or evaluations of model behavior during extreme or out-of-sample events such as major volcanic eruptions or strong El Niño episodes. Benchmarks that combine machine learning rigor (e.g., withheld test sets, reproducibility, code baselines) with physical relevance will accelerate emulator innovation and enable more confident adoption in both research and policy applications63.

Trust in emulators can be built through the sharing of data as well–other groups' examination of the data will identify inconsistencies or opportunities for improvement, much as climate model intercomparisons do now. As more research groups format training datasets as benchmarks, the field should move toward a shared framework for storing and accessing each benchmark, even across different emulation tasks. For example, in seismology applications, SeisBench64 is a shared repository of ML-ready seismic datasets maintained by the academic community that focuses on several core tasks (e.g., phase picking, event detection65), but is packaged in a common format with unified data and model interfaces and evaluation tools. A similar approach in climate emulation would allow researchers to submit and share their training data to foster meaningful comparison between emulators, while benefiting from the accessibility of a common interface.

Climate science and data science bring complementary strengths to this effort. Data science emphasizes reproducibility and systematic evaluation, using practices like version control, withheld test sets, and modular pipelines. Climate science has long prioritized interpretability, documentation, and transparency for societal trust-building and relevance. A shared data infrastructure that draws from both traditions would not only accelerate emulator development but also support more trustworthy, transparent, and decision-relevant climate tools.

Emulators as software

Investing in climate emulators as software – modular, accessible, and production-ready – will broaden their impact in climate science and encourage adoption by more scientists and researchers. Many existing emulators remain research prototypes, lacking standardized packaging, interfaces, and documentation. As such, they remain disconnected from production workflows, as there is little incentive for simulator developers to engage with developed emulators if they are not easy to use.

Transitioning emulator development from research prototypes to software and adopting best practices for scientific computing and software66 would dramatically increase the usefulness of emulators throughout climate sciences. Projects like scikit-learn67 or xarray68 offer a useful blueprint: intuitive APIs, modular code, unit tests, clear documentation, and community contribution guidelines have made it a standard tool across disciplines. Emulator development should be similar, being distributed as installable Python packages with minimal setup, standardized input formats, and clear examples. Containerization (e.g., via Docker) and cloud-friendly execution can further reduce friction by ensuring reproducible environments and consistent performance across computing systems, simplifying deployment across institutions and infrastructures. The primary goal of this shift is to make emulators usable by climate scientists who are not ML specialists, through accessible interfaces, clear documentation, and reproducible workflows rather than direct engagement with emulator architectures or training procedures.

Several recent efforts in both weather and climate emulators illustrate this vision. Google’s GraphCast emulator43, NVIDIA’s FourCastNet69, and AI2’s ACE11 are not only high-performing models but also examples of functional, modular packages with pretrained weights, intuitive APIs, and clear documentation. These systems have enabled researchers with non-ML or software engineering expertise to use these emulators out of the box, increasing the number of scientists able to use them70,71. While these examples come from industry groups that have dedicated engineering teams, the underlying principles are broadly applicable. Even modest improvements in packaging and documentation, such as clearly defined model inputs and outputs, example usage scripts, and basic installation instructions, can substantially decrease the amount of effort required to use and deploy a trained emulator.

With robust packaging and accessibility, emulators can be integrated directly into existing climate workflows, allowing rapid testing of scenarios, efficient sensitivity analyses, and targeted exploration of model uncertainty. Their usability would expand beyond emulator developers, empowering a broader range of scientists, policymakers, and even educators to run experiments and probe climate behavior. Overall, climate projections should be able to be produced by simulators, simulators with emulators embedded or stand-alone emulators. To provide useful information to decision makers, however, insight will be required on how to interpret and combine the different data sources.

A suite of accurate, well-documented emulators could form the foundation of a new kind of ensemble, one that explores both scenario uncertainty and emulator architecture uncertainty. FACTS72, for example, is a single software framework that integrates multiple sea level projection models under a common interface, allowing users to compare and ensemble sea level projections across methods and scenarios. While reduced-complexity and spatial climate emulators intercomparisons are being developed, such as RCMIP73 and FASTMIP74, there is currently no equivalent effort for process- or component-level emulators that replicate individual Earth system components. As emulators become software products, a similar framework should be created to not only quantify uncertainty between emulator methods on the same task but also to emulate multiple Earth systems simultaneously, as is done in coupled climate simulators. We envision such a framework as an Emulator Intercomparison Project (EIP) focused initially on component-level emulators, with the potential to expand across emulator classes as specific emulation tasks require. Much like model intercomparison projects have shaped traditional climate modeling, this framework could help standardize evaluation, identify blind spots, and build trust in emulator predictions while being easy to use and accessible to non-experts.

Conclusions

Machine learning-based emulators represent a structural shift in climate science: they are changing how scientists interact with climate models, turning previously static climate simulators into interactive tools that can be probed and tested to gain new scientific insights. As emulators become more accurate, flexible, and widely adopted, their role is changing from auxiliary tools to core components of the modeling workflow. We propose three key priorities for transforming the use and capabilities of emulators in climate science: (1) the co-design of simulators and emulators to allow more effective and efficient model development, (2) the creation of shared data infrastructure and benchmarks to support reproducibility and lower the barrier to entry, and (3) the development of emulators as reliable, well-documented software that can easily integrate into scientific and policy workflows. Although this perspective emphasizes component-level, machine learning-based climate emulators, the broader vision outlined here—including co-design, evolving benchmarks, and software integration—extends to other model and emulator paradigms used across climate science. The integration of machine learning emulators into climate modeling represents a pivotal evolution in climate science, one that redefines who can pose scientific questions, expands the scope of inquiry, and accelerates our ability to act on scientific insight.

Data availability

No data or code were used in this article.

Code availability

No data or code were used in this article.

References

Eyring, V. et al. Pushing the frontiers in climate modelling and analysis with machine learning. Nat. Clim. Change 14, 916–928 (2024).

Bordoni, S., Kang, S., Shaw, T. A., Simpson, I. & Zanna, L. The futures of climate modeling. npj Clim. Atmos. Sci. 8, 99 (2025).

IPCC. Climate Change 2021: The Physical Science Basis. Contribution of Working Group I to the Sixth Assessment Report of the Intergovernmental Panel on Climate Change (Cambridge University Press, Cambridge, United Kingdom and New York, NY, USA, 2021).

Schneider, T. et al. Harnessing AI and computing to advance climate modelling and prediction. Nat. Clim. Change 13, 887–889 (2023).

Bauer, P., Stevens, B. & Hazeleger, W. A digital twin of Earth for the green transition. Nat. Clim. Change 11, 80–83 (2021).

Tebaldi, C., Snyder, A. & Dorheim, K. STITCHES: creating new scenarios of climate model output by stitching together pieces of existing simulations. Earth Syst. Dyn. Discuss. 2022, 1–58 (2022).

Holden, P. B., Edwards, N. R., Hensman, J. & Wilkinson, R. D. ABC for Climate: Dealing with Expensive Simulators. In Sisson, S. A., Fan, Y. & Beaumont, M. (eds.) Handbook of approximate Bayesian computation, 569–595 (Chapman and Hall/CRC, Boca Raton, FL, 2018).

Hartin, C. A., Patel, P., Schwarber, A., Link, R. P. & Bond-Lamberty, B. A simple object-oriented and open-source model for scientific and policy analyses of the global climate system - Hector v1.0. Geosci. Model Dev. 8, 939–955 (2015).

Nicholls, Z. R. et al. Reduced Complexity Model Intercomparison Project Phase 1: introduction and evaluation of global-mean temperature response. Geosci. Model Dev. 13, 5175–5190 (2020).

Kikstra, J. S. et al. The IPCC Sixth Assessment Report WGIII climate assessment of mitigation pathways: from emissions to global temperatures. Geosci. Model Dev. 15, 9075–9109 (2022).

Watt-Meyer, O. et al. ACE: A fast, skillful learned global atmospheric model for climate prediction. In Tackling Climate Change with Machine Learning Workshop, Conference on Neural Information Processing Systems (NeurIPS) (2023).

Watt-Meyer, O. et al. ACE2: accurately learning subseasonal to decadal atmospheric variability and forced responses. npj Clim. Atmos. Sci. 8, 205 (2025).

Nicklas, J. M., Fox-Kemper, B. & Lawrence, C. Efficient estimation of climate state and its uncertainty using Kalman filtering with application to policy thresholds and volcanism. J. Clim. 38, 1235–1270 (2025).

Wu, E. et al. Applying the ACE2 emulator to SST green’s functions for the E3SMv3 global atmosphere model. JGR: Machine Learning and Computation. 2, e2025JH000774 (2025).

Guan, H., Arcomano, T., Chattopadhyay, A. & Maulik, R. LUCIE: A Lightweight Uncoupled ClImate Emulator with long-term stability and physical consistency for O(1000)-member ensembles. In Machine Learning for Earth System Modeling Workshop, International Conference on Machine Learning (ICML) (2024).

Mathison, C. et al. A rapid-application emissions-to-impacts tool for scenario assessment: Probabilistic Regional Impacts from Model patterns and Emissions (PRIME). Geosci. Model Dev. 18, 1785–1808 (2025).

Kitsios, V., O’Kane, T. J. & Newth, D. A machine learning approach to rapidly project climate responses under a multitude of net-zero emission pathways. Commun. Earth Environ. 4, 355 (2023).

Tebaldi, C., Selin, N., Ferrari, R. & Flierl, G. Emulators of Climate Model Output. Annual Review of Environment and Resources. 50 (2025).

De Burgh-Day, C. O. & Leeuwenburg, T. Machine learning for numerical weather and climate modelling: a review. Geosci. Model Dev. 16, 6433–6477 (2023).

Dunne, J. P. et al. An evolving Coupled Model Intercomparison Project phase 7 (CMIP7) and Fast Track in support of future climate assessment. EGUsphere 2024, 1–51 (2024).

Eyring, V. et al. Overview of the Coupled Model Intercomparison Project Phase 6 (CMIP6) experimental design and organization. Geosci. Model Dev. 9, 1937–1958 (2016).

Balaji, V. et al. Are general circulation models obsolete? Proc. Natl. Acad. Sci. 119, e2202075119 (2022).

Wang, H. et al. Scientific discovery in the age of artificial intelligence. Nature 620, 47–60 (2023).

Tebaldi, C. & Arblaster, J. M. Pattern scaling: Its strengths and limitations, and an update on the latest model simulations. Clim. Change 122, 459–471 (2014).

Beusch, L., Gudmundsson, L. & Seneviratne, S. I. Emulating Earth system model temperatures with MESMER: from global mean temperature trajectories to grid-point-level realizations on land. Earth Syst. Dyn. 11, 139–159 (2020).

Lee, L., Carslaw, K., Pringle, K., Mann, G. & Spracklen, D. Emulation of a complex global aerosol model to quantify sensitivity to uncertain parameters. Atmos. Chem. Phys. 11, 12253–12273 (2011).

Edwards, T. L. et al. Projected land ice contributions to twenty-first-century sea level rise. Nature 593, 74–82 (2021).

Bocquet, M. Surrogate modeling for the climate sciences dynamics with machine learning and data assimilation. Front. Appl. Math. Stat. 9, 1133226 (2023).

Van Katwyk, P., Fox-Kemper, B., Seroussi, H., Nowicki, S. & Bergen, K. J. A Variational LSTM Emulator of Sea Level Contribution From the Antarctic Ice Sheet. J. Adv. Model. Earth Syst. 15, e2023MS003899 (2023).

Gasser, T. et al. Historical co2 emissions from land use and land cover change and their uncertainty. Biogeosciences 17, 4075–4101 (2020).

Nicholls, R. J. et al. A global analysis of subsidence, relative sea-level change and coastal flood exposure. Nat. Clim. Change 11, 338–342 (2021).

Quilcaille, Y., Gasser, T., Ciais, P. & Boucher, O. Cmip6 simulations with the compact earth system model oscar v3.1. Geosci. Model Dev. 16, 1129–1161 (2023).

Zhou, L. et al. Toward Convective-Scale Prediction within the Next Generation Global Prediction System. Bull. Am. Meteorol. Soc. 100, 1225–1243 (2019).

Addison, H., Kendon, E., Ravuri, S., Aitchison, L. & Watson, P. A. Machine learning emulation of a local-scale UK climate model. In Tackling Climate Change with Machine Learning workshop at NeurIPS 2022 (2022).

Addison, H., Kendon, E., Ravuri, S., Aitchison, L. & Watson, P. A. Machine learning emulation of precipitation from km-scale regional climate simulations using a diffusion model. arXiv preprint arXiv:2407.14158 (2024).

Van Katwyk, P., Fox-Kemper, B., Nowicki, S., Seroussi, H. & Bergen, K. J. Iseflow v1.0: A flow-based neural network emulator for improved sea level projections and uncertainty quantification. EGUsphere 2025, 1–32 (2025).

Wu, B., Zheng, S., Li, S. & Wang, S. Neural emulator based on physical fields for accelerating the simulation of surface chlorophyll in an Earth System Model. Ocean Model. 102491 (2025).

Bassetti, S., Hutchinson, B., Tebaldi, C. & Kravitz, B. DiffESM: Conditional Emulation of Temperature and Precipitation in Earth System Models With 3D Diffusion Models. J. Adv. Model. Earth Syst. 16, e2023MS004194 (2024).

Cachay, S. R., Ramesh, V., Cole, J., Barker, H. & Rolnick, D. ClimART: A Benchmark Dataset for Emulating Atmospheric Radiative Transfer in Weather and Climate Models. In Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, vol. 1 (2021).

Beucler, T. et al. Climate-invariant machine learning. Sci. Adv. 10, eadj7250 (2024).

Perezhogin, P., Zhang, C., Adcroft, A., Fernandez-Granda, C. & Zanna, L. A stable implementation of a data-driven scale-aware mesoscale parameterization. J. Adv. Model. Earth Syst. 16, e2023MS004104 (2024).

Smith, C. J. et al. Fair v1. 3: a simple emissions-based impulse response and carbon cycle model. Geosci. Model Dev. 11, 2273–2297 (2018).

Lai, C.-Y. et al. Machine Learning for Climate Physics and Simulations. Ann. Rev. Condens. Matter Phys. 16 (2024).

van Vuuren, D. et al. The Scenario Model Intercomparison Project for CMIP7 (ScenarioMIP-CMIP7). EGUsphere 2025, 1–38 (2025).

O’Neill, B. C. et al. The scenario model intercomparison project (ScenarioMIP) for CMIP6. Geosci. Model Dev. 9, 3461–3482 (2016).

Nowicki, S. et al. Experimental protocol for sea level projections from ISMIP6 stand-alone ice sheet models. Cryosphere 14, 2331–2368 (2020).

Jones, C. D. et al. C4MIP – the coupled climate–carbon cycle model intercomparison project: experimental protocol for CMIP6. Geosci. Model Dev. 9, 2853–2880 (2016).

Hausfather, Z. An assessment of current policy scenarios over the 21st century and the reduced plausibility of high-emissions pathways. Dialogues Clim. Change 2, 26–32 (2025).

Hausfather, Z. & Peters, G. P. Emissions–the ‘business as usual’ story is misleading. Nature 577, 618–620 (2020).

Jones, C. G. et al. Bringing it all together: science priorities for improved understanding of Earth system change and to support international climate policy. Earth Syst. Dyn. 15, 1319–1351 (2024).

Yang, Q., Elsaesser, G. S., Van Lier-Walqui, M. & Eidhammer, T. A simple emulator that enables interpretation of parameter-output relationships, applied to two climate model PPEs. J. Adv. Model. Earth Syst. 17, e2024MS004766 (2025).

Hourdin, F. et al. Toward machine-assisted tuning avoiding the underestimation of uncertainty in climate change projections. Sci. Adv. 9, eadf2758 (2023).

Fu, L.-L. et al. The surface water and ocean topography mission: A breakthrough in radar remote sensing of the ocean and land surface water. Geophys. Res. Lett. 51, e2023GL107652 (2024).

Durack, P. J. et al. The Coupled Model Intercomparison Project (CMIP): Reviewing project history, evolution, infrastructure and implementation. EGUsphere 2025, 1–74 (2025).

Cinquini, L. et al. The Earth System Grid Federation: An open infrastructure for access to distributed geospatial data. Future Gener. Comput. Syst. 36, 400–417 (2014).

Hassell, D., Gregory, J., Blower, J., Lawrence, B. N. & Taylor, K. E. A data model of the climate and forecast metadata conventions (cf-1.6) with a software implementation (cf-python v2.1). Geosci. Model Dev. 10, 4619–4646 (2017).

Juckes, M. et al. The CMIP6 Data Request (DREQ, version 01.00.31). Geosci. Model Dev. 13, 201–224 (2020).

Dueben, P. D. et al. Challenges and benchmark datasets for machine learning in the atmospheric sciences: Definition, status, and outlook. Artif. Intell. Earth Syst. 1, e210002 (2022).

Watson-Parris, D. et al. ClimateBench v1. 0: A benchmark for data-driven climate projections. J. Adv. Model. Earth Syst. 14, e2021MS002954 (2022).

Kaltenborn, J. et al. ClimateSet: A Large-Scale Climate Model Dataset for Machine Learning. In Thirty-seventh Conference on Neural Information Processing Systems Datasets and Benchmarks Track (2023).

Hoffman, F. M. et al. Rapid evaluation framework for the CMIP7 assessment fast track. EGUsphere 2025, 1–57 (2025).

Lütjens, B., Ferrari, R., Watson-Parris, D. & Selin, N. E. The impact of internal variability on benchmarking deep learning climate emulators. J. Adv. Model. Earth Syst. 17, e2024MS004619 (2025).

Ullrich, P. A. et al. Recommendations for comprehensive and independent evaluation of machine learning-based Earth system models. J. Geophys. Res.: Mach. Learn. Comput. 2, e2024JH000496 (2025).

Woollam, J. et al. SeisBench-a toolbox for machine learning in seismology. Seismol. Res. Lett. 93, 1695–1709 (2022).

Münchmeyer, J. et al. Which picker fits my data? A quantitative evaluation of deep learning based seismic pickers. J. Geophys. Res.: Solid Earth 127, e2021JB023499 (2022).

Wilson, G. et al. Best practices for scientific computing. PLOS Biol. 12, e1001745 (2014).

Pedregosa, F. et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Hoyer, S. & Hamman, J. xarray: N-D labeled arrays and datasets in Python. J. Open Res. Softw. 5 (2017).

Kurth, T. et al. FourCastNet: Accelerating Global High-Resolution Weather Forecasting Using Adaptive Fourier Neural Operators. PASC '23: Proceedings of the Platform for Advanced Scientific Computing Conference, 13. (ACM, New York, NY, 2023).

Duncan, J. P. C. et al. Application of the AI2 climate emulator to E3SMv2’s global atmosphere model, with a focus on precipitation fidelity. J. Geophys. Res.: Mach. Learn. Comput. 1, e2024JH000136 (2024).

Kent, C. et al. Skilful global seasonal predictions from a machine learning weather model trained on reanalysis data. npj Clim. Atmos. Sci. 8, 314 (2025).

Kopp, R. E. et al. The Framework for Assessing Changes To Sea-level (FACTS) v1.0: a platform for characterizing parametric and structural uncertainty in future global, relative, and extreme sea-level change. Geosci. Model Dev. 16, 7461–7489 (2023).

Nicholls, Z. R. J. et al. Reduced complexity model intercomparison project phase 1: introduction and evaluation of global-mean temperature response. Geosci. Model Dev. 13, 5175–5190 (2020).

Windisch, M. et al. Fastmip-coordinating experiments of regional emulators for intercomparison and fast regional projections. In AGU Fall Meeting Abstracts, vol. 2024, GC01–113 (2024).

Hawkins, E. & Sutton, R. The potential to narrow uncertainty in regional climate predictions. Bull. Am. Meteorol. Soc. 90, 1095–1108 (2009).

Lehner, F. et al. Partitioning climate projection uncertainty with multiple large ensembles and CMIP5/6. Earth Syst. Dyn. 11, 491–508 (2020).

Lam, R. et al. Learning skillful medium-range global weather forecasting. Science 382, 1416–1421 (2023).

Nguyen, T., Brandstetter, J., Kapoor, A., Gupta, J. K. & Grover, A. ClimaX: A foundation model for weather and climate. In Proceedings of the 40th International Conference on Machine Learning (ICML), vol. 202 of Proceedings of Machine Learning Research (PMLR), 25904–25938 https://proceedings.mlr.press/v202/nguyen23a.html (2023).

Kochkov, D. et al. Neural general circulation models for weather and climate. Nature 632, 1060–1066 (2024).

Holden, P. B. & Edwards, N. R. Dimensionally reduced emulation of an AOGCM for application to integrated assessment modelling. Geophys. Res. Lett. 37 (2010).

Castruccio, S. et al. Statistical Emulation of Climate Model Projections Based on Precomputed GCM Runs. J. Clim. 27, 1829–1844 (2014).

Geoffroy, O. et al. Transient Climate Response in a Two-Layer Energy-Balance Model. Part II: Representation of the Efficacy of Deep-Ocean Heat Uptake and Validation for CMIP5 AOGCMs. J. Clim. 26, 1859–1876 (2013).

Mitchell, T. D. Pattern Scaling: An Examination of the Accuracy of the Technique for Describing Future Climates. Clim. Change 60, 217–242 (2003).

Kravitz, B. & Snyder, A. Pangeo-Enabled ESM Pattern Scaling (PEEPS): A customizable dataset of emulated Earth System Model output. PLOS Clim. 2, e0000159 (2023).

Nath, S., Lejeune, Q., Beusch, L., Schleussner, C.-F. & Seneviratne, S. I. MESMER-M: an Earth system model emulator for spatially resolved monthly temperature. Earth Syst. Dyn. Discuss. 2021, 1–38 (2021).

Carzon, J. et al. Statistical constraints on climate model parameters using a scalable cloud-based inference framework. Environ. Data Sci. 2, e24 (2023).

Baker, E., Harper, A. B., Williamson, D. & Challenor, P. Emulation of high-resolution land surface models using sparse Gaussian processes with application to JULES. Geosci. Model Dev. 15, 1913–1929 (2022).

Ming, D., Williamson, D. & Guillas, S. Deep Gaussian process emulation using stochastic imputation. Technometrics 65, 150–161 (2023).

Cachay, S. R., Henn, B., Watt-Meyer, O., Bretherton, C. S. & Yu, R. Probabilistic Emulation of a Global Climate Model with Spherical DYffusion. In Conference on Neural Information Processing Systems (NeurIPS) (2024).

Bi, K. et al. Accurate medium-range global weather forecasting with 3D neural networks. Nature 619, 533–538 (2023).

Dheeshjith, S. et al. Samudra: An AI Global Ocean Emulator for Climate. Geophys. Res. Lett. 52, e2024GL114318 (2025).

George, T. M., Manucharyan, G. E. & Thompson, A. F. Deep learning to infer eddy heat fluxes from sea surface height patterns of mesoscale turbulence. Nat. Commun. 12, 800 (2021).

Bolton, T. & Zanna, L. Applications of Deep Learning to Ocean Data Inference and Subgrid Parameterization. J. Adv. Model. Earth Syst. 11, 376–399 (2019).

Palmer, M. D., Harris, G. R. & Gregory, J. M. Extending CMIP5 projections of global mean temperature change and sea level rise due to thermal expansion using a physically-based emulator. Environ. Res. Lett. 13, 084003 (2018).

Tang, G., Nicholls, Z., Norton, A., Zaehle, S. & Meinshausen, M. Synthesizing global carbon–nitrogen coupling effects – the MAGICC coupled carbon–nitrogen cycle model v1.0. Geosci. Model Dev. 18, 2193–2230 (2025).

Gasser, T., Guivarch, C., Tachiiri, K., Jones, C. & Ciais, P. The compact Earth system model OSCAR v2.2: description and first results. Geosci. Model Dev. 10, 271–319 (2017).

Acknowledgements

PV is supported by the National Science Foundation Graduate Research Fellowship Program under Grant 2040433. BFK was supported by the Equitable Climate Futures initiative at Brown University and by NSF RISE-2425380. HH was supported by the Met Office Hadley Centre Climate Programme funded by DSIT.

Author information

Authors and Affiliations

Contributions

P.V. conceived the study, formed the perspective, and led the writing of the manuscript. K.J.B. and B.F.K. contributed to the development of the conceptual framework and provided feedback on the perspective. H.H. and B.F.K. provided expertise on Earth system modeling, CMIP processes, and policy-relevant climate assessment, and contributed to manuscript revision. P.V. and K.J.B. contributed to the framing of machine learning and emulator development, and to critical discussion of benchmarks and software practices. All authors discussed the ideas presented and contributed to revising the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Earth and Environment thanks Peter Challenor, Yann Quilcaille, and Shruti Nath for their contribution to the peer review of this work. Primary Handling Editor: Nandita Basu. [A peer review file is available].

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Van Katwyk, P., Fox-Kemper, B., Hewitt, H.T. et al. Rewiring climate modeling with machine learning emulators. Commun Earth Environ 7, 107 (2026). https://doi.org/10.1038/s43247-026-03238-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s43247-026-03238-z