Abstract

Magnetic resonance imaging-guided acoustic trapping is expected to manipulate drug carriers (e.g., microbubbles) within the body, potentially improving carrier concentration at tumor sites and thereby enhancing targeted therapy outcomes. However, accurate trap generation remains challenging due to complex wave propagation through multiple tissue materials. Moreover, respiration-induced tissue motion imposes stringent requirements on computational efficiency for rapid phase updates. Here we propose a machine learning-based model and a closed-loop control scheme to modulate phase patterns rapidly. The model delivers precise time-of-flight prediction (mean err. ≤ 0.24 μs) within 26 ms for 196 transducer elements. In proof-of-concept experiments, computer vision feedback permits fast (about 15 frames per second) position adjustment of a trapped polystyrene ball (Ø2.7 mm). This control scheme helps lessen the ball’s spatial drift induced by time-varying multi-medium environments. These experiments on robotic manipulation support our model’s potential for future magnetic resonance imaging-guided targeted therapy.

Similar content being viewed by others

Introduction

Acoustic traps are sophisticated forms of ultrasound interference, achieved through the precise steering of phased array. Its focal-1, twin-1,2, vortex-2,3, and bottle-shaped2,4 acoustic structure can exert acoustic radiation force to enable contact-free robotic manipulation of sub-wavelength (<λ) objects2. Beyond this operational capability, acoustic traps feature excellent biocompatibility and deep penetration in biological tissues1, thereby positioning it as a non-invasive robot manipulator in life sciences5,6. In lab-based works, acoustic traps have been applied for cell selection7, organism localization (e.g., a shrimp larva)8, and bacteria cluster enrichment9. Subsequently, researchers proposed integrating acoustic traps with magnetic resonance imaging (MRI) for targeted therapy1,2. Specifically, acoustic traps are expected to allow robotic localization of drug carriers (e.g., microbubbles) around tumor tissues under hemodynamic (see Fig. 1a), and MRI provides guidance during this procedure. Unlike alternative contact-free manipulation approaches (e.g., electrokinetic tweezing, and magnetic swimming1), ultrasound exhibits distinct compatibility in magnetic resonance (MR) environments2. Thus, MRI-guided acoustic trapping is envisioned to improve carrier concentration in targeted therapy1. This proof-of-concept technique must rely on the visualization of both microbubbles and acoustic interference, ensuring their alignment with the target. As of now, microbubbles containing MR imageable materials have been successfully tracked in preclinical applications10. Regarding acoustic interference, MR scans recently visualized focal-shaped acoustic structures by detecting ultrasound pulse-induced micro-scale tissue displacements11. In addition to visualization, sensing array position and orientation is necessary, as it critically affects trap generation. It also provides feedback to robotic actuation and prevents errors from manual positioning. Currently, both radiofrequency (RF) circuits (wired to MR scanner) and optical fiber Bragg gratings (FBGs) achieve sensing in MR. However, the connecting cables may induce RF heating, posing a risk of tissue overheating, and the rigid registration of FBGs challenges their alignment with MRI, introducing localization errors. To address this need, a previous study12 reported a compact wireless tracking coil that can generate a high-contrast spot in MR images (see Fig. 1a). Multiple such coils (≥3) embedded in the array base can rapidly (~28 fps12) reflect array’s three-dimensional (3D) pose, which provides fast feedback to control system. Such coils also can be attached to abdominal walls for monitoring nonlinear respiration-induced motion, offering feedback for the formation of the acoustic trap. Accredited to these advancements, MRI-guided acoustic trapping presents promising prospects and deserves study for targeted therapy.

a Proof-of-concept of MRI-guided acoustic trapping for tumor treatment. MRI visualizes the acoustic trap and tracks drug carriers within organs. MR-based coils (Ø3 mm × 5 mm12) can be used to produce high-contrast spots for precise array positioning and abdomen motion monitoring. The twin trap localizes microbubbles around the tumor tissues for targeted therapy. b Overview of our hardware architecture. The flat 2D phased array consists of 196 (14 × 14) elements. A chamber filled with mixed gases is moved by a XY-table, constituting a time-varying multimedia with the air. A stereo camera module tracks three marks attached to the front wall, offering real-time vision feedback. c–e Examples of PS ball manipulation along the H-, K-, U-shaped trajectories. These pink balls present the positions of the PS ball during its stepwise movement. The larger indicate a 2 mm step in Y direction, and the smaller indicate a 0.5 mm step on in X direction with an optional 0.7 mm step in Y direction for K- trajectory and an optional 1.0 mm step in Y direction for U- trajectory.

Currently, established methodologies for acoustic robotic manipulation in a singular medium (e.g., air2,13,14 or water3,8,15) are available. By electronically steering the phase of individual transducer elements, the acoustic structure and its position can be flexibly and accurately modified2. Nonetheless, reproducing this robotic manipulation within multi-medium environments presents substantial challenges. The primary reason is the variations in the medium’s properties (e.g., heterogeneity, interface effects and layered structure), which induce phase distortion along the wavefront, i.e., phase aberration11. As a result, the acoustic trap inevitably shifts away from the predefined target or even fails to form entirely. Reviewing these existing algorithms, only four, namely, iterative backpropagation (IB)16, holographic acoustic element framework (HAEF)17, time reversal-based method (TRM)18 and learning-based method1,19, made preliminary attempts in multi-medium environments. Both IB and HAEF employ phase iteration to construct holographic acoustic tweezers in the target area2,13. Yet, neither has accounted for phase aberrations in their current implementations20. Thus far, IB only enabled acoustic trap generation in a mouse’s skin-fold chamber model (tissue thickness <1 mm)16, where the medium irregularity is negligible. IB now is not capable of resolving the acoustic field in large-volume medium1. Regarding HAEF, its phase iteration necessitates the prior knowledge of ultrasound beam’s pathway distance between elements and target point2. However, in multi-medium environments, HAEF’s accuracy of distance computation is compromised due to complex refraction1. To increase precision, a hydrophone was placed at the target for phase calibration17, producing a twin trap for robotic manipulation after beams passed through a 30-mm-thick porcine. This approach requires invasive placement and is thus not suitable for non-invasive therapy. In contrast, the widely used TRM18,21 can correct strong phase distortion with notable precision. This method calculates the beams’ time of flight (ToF) between the target and transducer elements, instead of iterating phase. In practice, TRM mainly relies on numerical methods (e.g., k-Wave toolbox18, finite element analysis22), which mesh the medium model into a massive amount of voxels. This implies that TRM has a severe computational complexity1. Hence, the reported works only used stationary medium (e.g., macaque skull18, resin baffle18, and kidney stones21), maintaining a fixed medium design after extensive pre-calculations. Currently, TRM still cannot support rapid phase update in response to time-varying media (e.g., respiration-induced tissue motions). Given the future medical scenarios, TRM cannot compensate for the respiration-induced tissue motion, thus failing to maintain the robust microbubble trapping at the target site.

Apart from the above analytical and numerical methods, learning-based approaches are increasingly being applied to phase prediction. Previously reported convolutional neural networks19,23 have proven the efficacy and efficiency (~200 ms) to create dynamic holographic acoustic fields for free-contact robotic manipulation. Additionally, another study1 addressed dataset sampling strategy and learning model architecture. The trained model accurately computed phase to produce a twin tweezer and focal spot in two-dimensional (2D) MRI slices. These works1,19,23 demonstrate that learning-based method offers two key advantages: first, they do not rely on prior knowledge of medium properties1, focusing solely on the learning mapping between inputs (e.g., target position) and outputs (e.g., ToF); second, the models eliminate the need for mesh- or voxel-based computation and facilitate the rapid predictions, considerably surpassing these existing methods in computation efficiency. However, these learning-based works have so far been limited to the singular medium19,23 or 2D heterogeneous environments (i.e., MRI slices)1. Worse yet, all reported models are only validated in stationary medium, without attempts made in time-varying multi-medium environments.

To propel this proof of concept, we propose a machine learning model to facilitate acoustic trapping in time-varying multi-medium environments. In our proof-of-concept validation, the multimedia comprise air, a sealed chamber filled with mixed gases (12% oxygen, 44.8% nitrogen and 43.2% carbon dioxide), and one layer of polyethylene (PE) plastic wrap (see Fig. 1b). While the chamber is moved, this medium model becomes time-varying, simulating the respiration-induced tissue motion. To adapt to such time-varying multi-medium environments, a stereo camera module is used to monitor this movement to provide computer vision feedback. By combining vision feedback with a learning model, we design a closed-loop control framework to allow for rapid phase update for robust acoustic trapping. This achievement has not been demonstrated or reported before. The proposed model is trained using multi-layer perceptron regressor, inversely mapping from the given target position and chamber position to the ToF patterns. In this work, we utilize the time-reversal principle for dataset collation, and use the trained model to modulate a flat 14 × 14 array. The proof-of-concept experiments achieve stable acoustic levitation and accurate manipulation of a polystyrene (PS) ball along predefined trajectories (see Fig. 1c–e). These results highlight acoustic traps’ potential for robotic manipulation of microbubbles in future MRI-guided targeted therapy. Our key work contributions are listed as follows:

-

1)

Development of a learning-based, mesh-free model for phase-modulation in the 3D multi-medium environment, allowing the accurate generation of acoustic traps at the target position.

-

2)

Design of a closed-loop control framework which combines fast ToF estimator and vision feedback, enabling rapid phase update (up to 15 frames per second) for robust trapping in time-varying environments.

-

3)

Proof-of-concept validation for the proposed machine learning-facilitated acoustic trapping is conducted, including robust levitation and accurate manipulation of a PS ball. These experimental results verify the ToF prediction accuracy and computation efficiency, in support of the clinical potential for microbubble manipulation.

Methods

This section presents details of control framework, model training, and hardware setup, by designing rapid and accurate generation of acoustic traps in multi-medium environments. To facilitate the reproducibility, all related designed files are publicly available on our public GitHub repository (see Data Availability Statement), including printed circuit board’s layout of phased-array, FPGA code (Verilog HDL) for actuating transducers, k-Wave projects for dataset collection, Python scripts for model training, and Arduino code for gas monitoring and XY-table actuation. Here, we claim that we used two large language models (ChatGPT and DeepSeek) to refine the English writing.

Acoustic trap model

The acoustic field of acoustic traps usually manifests as exterior high pressure converging towards the low-amplitude interior region. Specifically, the twin tweezer’s two high-pressure control points converge towards the in-between low-pressure area. Similarly, the bottle trap’s surrounding area converges towards the low-pressure center, forming a potential well structure. Such pressure gradients would exert acoustic radiation force, denoted by F, to trap objects. F can be calculated from the gradient of the Gor’kov potential U24:

where \(a\) is the radius of the trapped objects; \(\left\langle \cdot \right\rangle\) denotes the time average; \({c}_{0}\), \({\rho }_{0}\) and \({\kappa }_{0}\) are the acoustic speed, density and compressibility of host medium (air in our study), respectively; \({\rho }_{p}\) and \({\kappa }_{p}\) are the density and compressibility of particles (PS bead in our study), respectively; \(p\) is the pressure, and \({{{\mathbf{u}}}}\) is the velocity of objects; \(\phi\) is acoustic contrast factor. In this work, the PS bead exhibits a positive acoustic contrast factor and is therefore trapped at the pressure node of the acoustic field. Theoretically, this pressure node can be characterized as a local maximum of the Gor’kov Laplacian2. This method generally requires phase iteration, which is challenging to converge in complex environments. In practice, TRM is widely used to solve for the key parameter, ToF, in time domain. The ToF results are then processed in the time-reversed order to manage the delay time of activating transducer elements. The resulting beams can be focused at the desired points by assuming the reciprocity of the ultrasound travel paths18. Then, by adding the fixed signature phase patterns, the focal beam can be transferred to unique acoustic structures, such as twin-, bottle-, and vortex-shaped traps2. Here, we employed TRM as the principle for collecting datasets in time-varying multi-medium environments and then built a learning model to predict ToF rapidly. The predicted results enabled real-time phase modulation of the transducer array facilitating robust object manipulation in time-varying environments.

Control framework overview

The control framework consists of two flows as illustrated in Fig. 2, i.e., rapid phase prediction (red arrows) and vision feedback (blue arrows). It can realize stable levitation and robotic manipulation of a white PS bead (Ø2.7 mm) in the 3D time-varying multi-medium environments.

It has two flows, where the red arrows present the rapid ToF (φ) prediction, and the blue present the vision feedback. The machine learning-empowered ToF predictor is the core module of first flow, which computes the ToF between elements and target position (p). In the vision feedback, the chamber is moved by a 2-axis step actuation along the prescribed path. By tracking the chamber, the time-varying medium environment (m) is quantified. Meanwhile, the vision feedback also tracks the PS ball position (p*) for metrics evaluation. When error exceeds the threshold (e), the learning model will be triggered to update the phase patterns (Ψ).

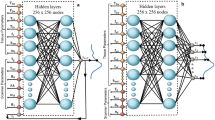

The first flow’s core module is machine learning-empowered ToF predictor. It predicted the ToF between target and transducer elements, rather than directly calculating phase. This learning model featured two input variables, including the target position (p) and medium parameter (m). Specifically, the medium parameter only included the chamber position. The material properties within the chamber were not required as inputs for the learning model. Here, m was represented by the positions of three circular markers on the front wall (see Fig. 1b). Their circular profiles were detected using Hough transform25, and their 3D positions were then calculated via stereo matching, enabling the specification of the chamber position. Thus, the 3D coordinates of target and markers constituted 12 input nodes. The output layer comprised n (=196 in our study) nodes, corresponding to the total number of transducer elements. The output results, i.e., ToF (φ), were processed in the time-reversed order to derive an intermediate phase delay. By HAEF, this intermediate phase, combined with a fixed holographic signature, can form the final phase patterns (Ψ) of twin, vortex or bottle trap2. This proposed model architecture shows a step forward toward medical potential compared to reported convolutional neural networks19,23. Those previous models discretized the acoustic field into a pressure amplitude distribution image for convolutional calculations to predict phase hologram. To ensure model accuracy, a small 2D acoustic field (50 mm × 50 mm) required collecting a massive number of data pairs (e.g., 9000 in ref. 19 and 21,000 in ref. 23). Even worse, in multi-medium environments, it is challenging to predefine acoustic fields that can ensure convergence of convolutional calculations. In contrast, our model combined multi-layer perceptron regressor with time-reversal principle, effectively forming a physics-informed neural network. This approach can achieve accurate predictions with a much smaller sample size, thereby saving a large amount of sampling time. The second flow primarily provided low-latency vision feedback (~30 frames per second) to support the online prediction. Considering the prediction time (~30 ms per inference), the control framework finally provided phase updates at a maximum rate of 15 frames. When the chamber was moved by an XY-table, a stereo camera module measured the 3D coordinates of the chamber’s markers. Alongside tracking the chamber, the camera also measured PS ball position (p*) to evaluate the spatial error relative to the target. When the error fell below the threshold (e), the trained model predicted a new phase pattern, guiding the PS bead to the next designated point. Conversely, the control framework applied an offset to the target position and then re-predicted ToF, iteratively updating the phase patterns until the PS bead converged toward the target.

Dataset collection and model training

In future medical scenarios, the dataset will be acquired using MR imaging. In this preliminary work, we used the k-Wave toolbox26 to collect raw data for model training. In the k-Wave computation, the time-reversal principle was applied to calculate ToF between the target position and transducer elements. The efficacy of k-Wave in 3D environments has been demonstrated18. Thus, our focus was on the sampling strategy that effectively balances computational expense and sample size. In our prior work1 on 2D acoustic traps, a threshold spacing (1.5 mm) was identified between two adjacent samples. In this work, we aimed to extend this strategy for 3D sampling and then verify its effectiveness. Considering that chamber continuously moved within repetitive vertical (4 mm) and horizontal (7 mm) ranges, its position was discretized at 1 mm increments along both the X and Y axes. As shown in Fig. 3, the chamber positions were discretized into 40 distinct positions during dataset collection. Each chamber position was represented by the positions of three circular markers. When the top-left marker was used as reference, the two chamber boxes in Fig. 3 represented two representative positions corresponding to movements (0, 2) and (1, 1), respectively. A rectangular region of interest (ROI) was defined inside the box with a size of 10 mm (on X-axis direction) by 14 mm (on Y-axis direction). In the Z-axis direction, the ROI plane was positioned at the midpoint of the chamber. This ROI’s spatial position was fixed and did not move along with the chamber’s movement. Within such a ROI, 63 samples were acquired, and each sample (denoted by “×”) was spaced 1.5 mm in both directions. As the chamber moved and this dataset acquisition process was repeated, a total of 2520 samples were acquired. In k-Wave computation, each sample served as a virtual sound source to emit beams, and all transducer elements acted as receivers to pick up signals. Finally, ToF data was extracted from these signals.

The chamber continuously moves within repetitive vertical (4 mm) and horizontal (7 mm) ranges, with its position discretized at 1 mm increments along both the X and Y axes during dataset collection. The two boxes represent two representative positions corresponding to movements (0, 2) and (1, 1), respectively. In the region of interest, 63 samples spaced 1.5 mm apart serve as virtual sound source, emitting sound beams. All transducers act as receivers, picking up signals from sound sources.

After finishing the dataset collection, a multi-layer perceptron regressor27 was employed to build the inverse mapping from the target position and medium parameter to ToF. Target position was denoted as \({{{\bf{p}}}}=\left[{p}_{1},{p}_{2},{p}_{3}\right]\in {{\mathbb{R}}}^{3}\), the medium parameter as \({{{\bf{m}}}}=\left[{m}_{1},{m}_{2},{m}_{3}\right]\in {{\mathbb{R}}}^{9}\), and ToF as φ = \(\left[{\varphi }_{1},{\varphi }_{2},{\cdots ,\varphi }_{n}\right]\in {{\mathbb{R}}}^{n}\). In the training process, out of \({N}_{0}\) samples, 70%, 15% and 15% were randomly assigned for model training, validation, and hold-out testing, respectively. The dataset of target position, chamber position and ToF were

and

respectively. The given input [P, M] was standardized (zero mean, unit variance) using scikit-learn’s StandardScaler, and the output \({{{\boldsymbol{\eta }}}}\) can be directly predicted via this learning mapping. This mapping is expressed as

The model was trained using scikit-learn with L2-loss and ReLU activation functions. In its hidden layers, a total of \(v\) (=370 in this study) nodes were symmetrically distributed across 5 hidden layers: 20, 90, 150, 90, and 20 neurons respectively. All the dataset collection and model training were conducted on a computer equipped with an Intel i9-12900KS central processing unit and 64 GB of random-access memory without graphics processing unit acceleration.

Design of sealed chamber and gas monitoring

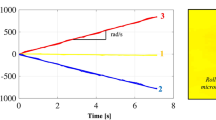

The chamber was a bottom-opening acrylic cuboid box (220 mm × 193 mm × 125 mm). This sole opening was covered with a layer of stretched plastic wrap (~9.4 µm) to isolate the inner mixed gases from the outside air. The acoustic impedance mismatch between the air (417 rayls) and mixed gases (453 rayls) resembled that between fat (1.38 × 106 rayls28) and water (1.5 × 106 rayls3). Thus, plastic wrap, air and mixed gases effectively formed three-layer multi-medium environments. This thin plastic wrap allowed beams to penetrate inside the chamber. Regarding the chamber’s spatial arrangement and its movement range, we referred to current ultrasound treatment scenarios (e.g., MR-guided focused ultrasound29), where patients generally lie in the prone position with transducers placed under the MRI table (see Fig. 1a). The average magnitudes of respiration-induced tissue motion in craniocaudal direction and anteroposterior direction are 7.4 mm and 3.8 mm, respectively30. Hence, the chamber was suspended above our array and underwent repetitive vertical (4 mm) and horizontal (7 mm) movement to simulate the tissue motion. To investigate plastic wrap’s influence on the acoustic field, we carried out a finite element analysis (FEA) using COMSOL Multiphysics (COMSOL Inc, Stockholm, Sweden). In this FEA, eight transducers (Ø10 mm) were spaced 0.5 mm apart and driven at 40 kHz. A 9.4-μm-thick plastic wrap layer was placed 10 cm above these elements, with a density of 920 kg m−³ and acoustic speed of 2000 m s−1. The host medium was air, surrounded by 5-mm-thick perfectly matched layers. The entire geometry was meshed using free triangular elements (minimum size: 1 μm in the wrap layer). As shown in Fig. 4a, b, the solo difference in geometry model lay in the presence of one layer of polyethylene plastic wrap (9.4 µm). Despite a severe amplitude attenuation of 62%, a twin trap was reproduced above the plastic wrap, with the spacing between its two control points expanding from 9.6 mm (~1.1λ) to 13.2 mm (~1.5λ). Besides, both control points were displaced by 1 mm along the Y-axis, which was attributed to the effect of plastic wrap. Then, a 10-mm-wide rubber ring was used to tightly secure the plastic wrap, preventing gas leak and ensuring composition stability. Unlike the low acoustic impedance mismatch among the tissue materials (e.g., muscle, fat, and protein), the mismatch between gases and smooth acrylic walls is notable. This can cause unwanted high reflections31, thereby disrupting the generated trap. Thus, a layer of wool felt (8 mm thick) and a layer of sponge (30 mm thick) were sequentially attached to the top, left, back, and right walls of the chamber, respectively. These materials can reduce unwanted reflection. A compact gas sensor 4MZ-HH (Huashenke Tech, Zhengzhou, China) was embedded inside sponge to measure O2 volume fractions in real time. Then, pure liquid CO2 packaged in an aluminum can, was gradually gasified and released into a 100 mL syringe via a pressure regulator, and then manually injected into the chamber. This process gradually decreased O2 and N2 volume fractions until O2 reduced to 12%. This stage can last for 309 s, as shown in Fig. 4c, providing sufficient time for experimental operations. At this stage, the density (ρ) and acoustic speed (c) of the mixed gases were computed as follows32,

where \(P\), \(T\), and \({{{\rm{R}}}}\) (=8.314 J K−1⋅mol−1 in our case) are the pressure, temperature, and universal gas constant of the mixed gases, respectively. \(P\) and \(T\) are measured using BMP388 sensor (Bosch Sensortec, Reutlingen, Germany). The heat ratio (\(\gamma\)) and molar mass (\(M\)) of gas mixture are determined by the volume fractions (\({V}_{k}\)) and the inherent properties (\({\gamma }_{k}\) and \({M}_{k}\)) of each gas component (k = 1 to N, with N = 3 in our case). As a result, the density is 1.491 kg m−3, and the acoustic speed is 303 m s−1. In our k-Wave computation, these gas parameters resulted in the shortest pathway’s ToF being about 20 μs (~λ) longer than that in pure air. This difference, apart from the acoustic impedance mismatch, further highlighted the distinct properties between such mixed gases and air. Moreover, a metal net (10 mm × 30 mm, grid space = 2.5 mm) was placed 10 mm above the plastic wrap. Its area covers approximately three transducers located beneath it. Through a hole (Ø4 mm) on the front wall, the PS bead can be delivered and positioned on the metal net using a long needle (Ø0.2 mm). Note that neither the mesh nor the bead will be placed inside the body in medical scenarios. The metal wire diameter (Ø0.4 mm) was far smaller than wavelength (8.6 mm) to minimize its influence on beam propagation. However, the grid hole is not much smaller than the wavelength, and it is likely to affect the formed acoustic field, especially when the acoustic trap is close to the metal mesh.

a A baseline twin trap generated in air. b A twin trap generated with beams passing through a single layer of polyethylene plastic wrap (thickness = 9.4 µm). The normalized pressure (NP) at two control points decreases from 1 to 0.38, and the high-pressure region was markedly reduced. The spacing between two control points increased from 9.6 mm to 13.2 mm, and they shifted by 1 mm along Y-axis. c Oxygen volume fraction monitoring. After CO₂ injection, the O₂ concentration stabilizes at 12.0% and 12.1% for 309 s and 510 s, respectively.

Electronic circuit design

This section describes the details of driver circuits for steering phased array and motors. The array consisted of 196 MA40S4S elements (Murata Ltd., Kyoto, Japan). Each was driven by 17.2-volt square pulses at the frequency of 40 kHz. These pulses were generated by 98 MOSFET TC4427 (Microchip Tech Inc., Chandler, USA) and 2 Cyclone III FPGA (Intel, Santa Clara, USA). The triggering time of individual pulse was predicted by the proposed learning model and transmitted to FPGA via a USB-UART port at a baud rate of 460,800. Two stepping motors of XY-table CT28 (Haijiejiachuang Ltd, Beijing, China) were controlled by an Arduino microcontroller (Arduino, Ivrea, Italy) and 2 DM420 digital driver (Yisheng Tech, Shenzhen, China). Figure 5 shows the overall hardware platform.

The stereo camera module is temporarily removed to provide a clearer view of the entire system.

Closed-loop control scheme

The closed-loop control was designed to counteract time-varying environments and plastic wrap. The medium model directly affected the ToF computation. The plastic wrap film was even not considered in the k-Wave computation for dataset collection, as its thickness (~9.4 µm) was much smaller than the grid size (728 µm). Our FEA results (see Fig. 4a, b) only proved that the film, placed at a specified position (30 mm above the array), caused the twin tweezer to deviate from the target position by 1 mm in Y-axis. While the film was moved (see Supplementary Movie 4), this spatial drift of the trapped PS ball reached up to 6.4 mm, which cannot be disregarded. Hence, a compensation mechanism was required to improve the trapping accuracy.

Here, the stereo camera module (Pixel XYZ Ltd., Wuhan, China) tracked the position of the PS ball (p*) and provided vision feedback to the control framework. By calculating the deviation (d) and comparing it with the predefined threshold (e), the trained model accordingly re-predicted a new ToF (φ) to correct this spatial drift. This closed-loop control framework was described as follows:

where j is the index of update steps; \({\mathbb{N}}\) is the maximum number of movement steps. Note that \(H\left(\cdot \right)\) was trained using the datasets that excluded the plastic wrap, but d included the deviation component induced by plastic wrap. This means that Eq. (4) cannot fully correct the shifted PS bead back to the target position, and instead, a small deviation remains.

Results and discussion

Model sensitivity analysis

Given the extensive computation time for dataset collection in k-Wave, our sample size is relatively small. Currently, no universal strategy has been reported for sampling in 3D multi-medium environments. Therefore, model sensitivity has to be analyzed to quantify the relationship between prediction accuracy and sample size. This analysis helps identify an optimal sample size, thereby reducing computational costs and providing a reference for future works.

In the defined ROI, we obtained a total of 2520 training samples (sample spacing s = 1.5 mm) while considering the chamber movement. By progressively increasing s to 3.0 mm, 4.5 mm, 6.0 mm, and 7.5 mm, four additional training datasets with smaller sample sizes were extracted from the initial dataset. Their sample sizes reduce to 31.7%, 14.3%, 9.5% and 6.3%, respectively. After training the models using these five datasets, their mean errors of ToF prediction were calculated individually using the hold-out testing samples. As shown in Fig. 6, when s is 1.5 mm, the error is 0.024 μs, and the ToF prediction accuracy reaches 99.6%. At the larger spacing of 4.5 mm, despite a tenfold increase in prediction error (0.24 μs), the prediction accuracy remains high at 99.3%. However, it substantially drops to 88.0% when s reaches 7.5 mm. Notably, at 6.0 mm, the accuracy exhibits obvious fluctuations with diminished reliability. These results suggest that spacing s = 4.5 mm (~0.5λ) achieves an optimal trade-off, effectively reducing the sample size by nearly an order of magnitude while maintaining high prediction precision.

The sample size varied from 6.3% to 100%, corresponding to sample spacing (s) ranging from 7.5 mm to 1.5 mm. When s is 4.5 mm, the ToF error increased by an order of magnitude.

Computational efficiency

Considering the physiological constraints, high-speed computation is required to shorten the procedure time in future ultrasound treatment. Specifically, patients cannot remain still on the MRI bed for a long time. Moreover, respiration continuously induces periodic tissue motion. The high computational efficiency is also demanded to adapt to time-varying multi-medium environments, avoiding the off-target trapping in future targeted therapy.

We quantified both the model training time and inference time. To ensure high accuracy, only three datasets sampled at s ≤ 4.5 mm (i.e., 1.5 mm, 3.0 mm and 4.5 mm) were used for the evaluation. Regarding training time, all three models were trained within 3 minutes (see Fig. 7a). When using the dataset with the smallest sample size, the training time was under 1 minute. This implies that patients will not experience prolonged waiting time after dataset collection. Then, the trained model can complete predictions for 100 targets within 2591 ms (see Fig. 7b). Estimation for a single target only costed 25.68 ms. In contrast, the numerical method (i.e., k-Wave) consumed 371,605.2 ms and 36,618,630 ms to calculate 1 and 100 targets in our dataset collection process, respectively, which would be unacceptable in future clinical scenario. Our model presents a six-order-of-magnitude acceleration. This efficiency advances the realization of robot-assisted MRI-guided ultrasound therapy.

a Training time of the proposed learning-based model. Larger sample sizes cost longer training time, but the maximum time does not exceed 3 min. b Inference time of the trained model compared to numerical mothed, with batch sizes ranging from 1 to 100.

Note that this comparison does not include precomputation cost (e.g., training time, sampling time). Precomputation time is usually longer than that required by numerical solvers; however, once trained, the model enables real-time prediction to accommodate highly dynamic physiological motions, which is difficult for numerical solvers to match. Moreover, our current model only delivered the prediction for 196 transducer elements. When scaling up to larger commercial arrays (e.g., ExAblate InSightec® with 1024 elements), the increased dataset size will likely lead to a substantial increase in precomputation cost for model training. Consequently, the trained model’s inference time also will rise greatly, and the speedup over k-Wave would decrease from the reported six orders of magnitude. Another important factor is the transducer position, which is currently not incorporated as an input feature in our model. However, in the practical scenario, the features should be incorporated, as positions directly influence the formation of acoustic traps. Especially for large-volume tumors, the array positions may need to be adjusted to cover the whole ROI, and the adjusted positions are fed back into the model for phase update. Undoubtedly, adding such features will also increase training time. For example1, when only incorporating the positions of eight transducers, the training time ranged from several seconds to ten of seconds, which is far longer than the sub-second time we reported here. If array dimension increases to 196 or even 1024 elements, the training time is anticipated to expand substantially. Training on the scale of tens of minutes or several hours is unsuitable for clinical use. Therefore, improving the training efficiency of our model in adapting to large-scale arrays is a critical challenge for clinical translation.

Accuracy of robotic manipulation

To validate the prediction accuracy of our model, we used the acoustic trap to levitate a PS ball at an expected position, thereby calculating spatial error. Multiple representative target points, distributed along H-, K- and U-shaped trajectories, were selected for a comprehensive evaluation. Note that these three predefined trajectories were not completely designed to match the letter shapes. In addition, all trajectories were planned on the XY-plane (stereo camera system) without any motion along the Z-axis. All target points’ Z-coordinate values were equal to Z-coordinate of array center. This design mainly considered that the structures of the array and chamber made the X- and Y-axis movements physically equivalent in terms of horizontal manipulation. As a result, it simplified the experimental process and avoided redundant tests.

Through rapid ToF prediction and phase updates, the PS bead was navigated through all predefined target points. For clearer visualization, their actual predefined paths are shown as yellow dashed boxes in Fig. 8a–c, where they were divided into three (Fig. 8a), five (Fig. 8b), and five (Fig. 8c) numbered segments, respectively. The actual positions of trapped PS bead are represented using pinks balls with each path comprising 30 (Supplementary Movie 1), 31 (Supplementary Movie 2), and 23 points (Supplementary Movie 3), respectively. Larger pink balls correspond to larger movement steps (i.e., 2 mm in Y direction), while smaller pink balls indicate smaller steps (i.e., 0.5 mm in X direction, optionally 0.7 mm for Figs. 8b and 1.0 mm for Fig. 8c in Y direction). The red dashed line indicates if the PS bead enters the upper region of the metal net. In all three cases, the magnitude of spatial deviation markedly increases after the bead passes the red line. Moreover, their respective maximum deviations also occur on the right side of the red line. Among them, the maximum deviation occurs at the 23rd step in H-shaped trajectory, most likely due to metal net’s impact on the adjacent segment 2, which leads to the accumulation of errors. In contrast, the more complex K-shaped trajectory has a smaller maximum error, mainly because PS ball gradually moves away from the metal net starting in segment 3. Overall, the metal mesh primarily affects the nearby acoustic field, with a more pronounced influence on the horizontal movements. It will not be used in real medical applications, and as such, this impact will not exist.

To clearly demonstrate manipulation accuracy, the PS bead positions are marked with pink balls along three (a), five (b), and five (c) segments. Larger pink balls represent larger movement steps (i.e., 2 mm in Y direction), while smaller pink balls indicate smaller steps (i.e., 0.5 mm in X direction, optionally 0.7 mm for (b) and 1.0 mm for (c) in Y direction). The red dashed line indicates if the PS bead enters the upper region of the metal net. d Spatial error of PS ball manipulation along three prescribed trajectories. The maximum spatial error between target and real position is 2.328 mm, which is approximately a quarter of the wavelength (8.6 mm).

We used the data from 84 movement steps to calculate their mean Euclidean distance error (\(\delta\)) and root mean square error (\(\sigma\)) as follows:

where \({x}_{t}\), \({y}_{t}\) and \({z}_{t}\) are the coordinates of targeted positions; \({\hat{x}}_{t}\), \({\hat{y}}_{t}\) and \({\hat{z}}_{t}\) are the measured coordinates of the trapped PS bead; t is the index of points. Since the PS bead did not move along the Z-axis, \({z}_{t}\) was assumed to be equal to \({\hat{z}}_{t}\). The resulting δ was 1.002 mm, reflecting the prediction accuracy of the learning-based model for robotic manipulation. \(\sigma\) was 1.135 mm, representing the robustness of the manipulation process. When \(\sigma\) exceeds δ, it indicates the presence of larger outliers during manipulation. Their Euclidean errors are presented in Fig. 8d, with a maximum error of 2.328 mm occurring in the H-shaped trajectory, which is approximately a quarter of the wavelength. The minimum error approaches 0.2 mm. Note that these errors also are influenced by the transducers’ performance and mechanical assembly quality. Currently, there is no established medical standard for evaluating the precision of acoustic trapping. Given that clinical-stage focused ultrasound systems regard 2.3 ± 0.9 mm as a fair criterion33, we tentatively adopt this baseline for evaluation. Although the achieved manipulation accuracy in our work can fulfill the focused ultrasound’s precision requirement, preliminarily demonstrating the potential of learning-empowered acoustic robotic manipulation, a considerable gap still exists before clinical translation. Achieving sub-millimeter precision will require further validation at higher acoustic frequencies.

Closed-loop control for robust levitation

The closed-loop control is designed to compensate for spatial drift between the levitating position and target point, which can counteract perturbations induced by time-varying environments and plastic wrap. Here, the movement ranges of the mixed gases were 4 mm in vertical direction and 7 mm in horizontal direction, matching the amplitude of respiration-induced tissue motion30. The chamber followed a linear trajectory from the lower-right to the upper-left. By coordinating the motor drive speeds in both directions, the chamber simultaneously reached its maximum displacements along the X- and Y-axes, and then returned to its initial position in reverse.

Figure 9 compares the spatial drift of the PS ball before and after implementing closed-loop control, and Supplementary Movie 4 provides a demonstration. The results were based on the real-time 3D position coordinates measured by the stereo camera. We used the drift on XY-plane (stereo camera system) to present the bead’s spatial drift. The drift on Z-axis was disregarded, because its fluctuation amplitude was confined to within 2.04%. This result was consistent with our hardware setup, where the chamber was not moved on Z-axis. Figure 9a, b respectively, present the actual X- and Y-coordinate of the trapped bead, with the horizontal axis representing the frame index. This analysis is divided into three stages: stage I features stable levitation; stages II and III exhibit time-varying environment-induced spatial drift. Stage II, without closed-loop control, serves as the baseline. Figure 9a shows that the time-varying chamber only slightly increased the deviation range along X-axis, fluctuating from –0.6 mm to 0.5 mm. This deviation did not trigger the drift correction on X-axis, indicating that the effects of plastic wrap and the mixed gases are negligible. In contrast, as shown in Fig. 9b, the bead experiences a much more pronounced drift in Y-axis. In stages II and III, the floating path of the bead repeatedly presents a w-shape. Its five apexes are defined as feature points, i.e., FP.1, 2, 3, 4 and 5. The full-width-at-half-maxima (FWHM) at FP.2 and FP.5 is used to quantify the bead’s off-target time. The relevant results are listed in Table 1. Regarding drift magnitude, the reduction is noted at FP.1, 3, and 4; however, at FP.2 and FP.5, drift magnitudes are either not reduced or only marginally increased. This occurrence is primarily attributed to high drift velocities and the computation latency in our control framework (≤15 fps), both of which prevent immediate correction. This explanation can be corroborated by the FWHM results in Table 1. Compared to stage II without closed-loop control, the FWHM values in stage III are reduced by at least 50%, with some reaching up to 83.3%. This demonstrates that this control leads to a substantial reduction in the bead’s off-target time, although a few peak drifts persist. In Fig. 9c, d, two representative frame sequences are supplemented to show the bead spatial drift, illustrating scenarios without and with closed-loop control, respectively. In each sequence, the time interval between two selected frames is 167 ms. Conversely, without closed-loop control, the ball remains off-target for over four frames since it reaches the maximum deviation; however, with control, the ball is promptly repositioned to target area’s boundary in the subsequent frame. These experimental results demonstrate that our closed-loop control framework can effectively compensate for time-varying multi-medium environments, thereby improving the robustness of acoustic robotic trapping. In future clinical applications, MR-based position tracking markers12 will be attached to the patient’s abdominal skin, which can provide rapid (~28 fps) feedback of tissue motions to the learning models for phase updates. These achieved capabilities are pivotal for future medical scenarios, particularly in adapting to respiration-induced tissue motion.

a Graph illustrating the PS ball’s drift in X-axis (stereo camera coordinate system). Its position in X-axis is almost unaffected by the chamber movement, fluctuating within the range of –0.6 mm to +0.5 mm around target point (27.4 mm). b Graph illustrating the PS ball’s drift on Y-axis. Stage I depicts the ball levitated at the target position. In stage II and III, the chamber movements trigger the spatial drift. Only stage III activates the closed-loop control. In these two stages, the floating path of the ball presents a w-shape. Its five apexes are defined as feature points, i.e., FP.1, FP.2, FP3, FP.4 and FP.5. The full width at half maxima (FWHM) at FP.2 and FP.5 is used to quantify the ball’s off-target time. The results show that the control can reduce the drift amplitude or off-target time. c A representative frame sequence on ball spatial drift without closed-loop control. d A representative frame sequence on ball spatial drift with closed-loop control.

Apart from evaluating the correction of peak drift and the reduction in off-target time, we also assessed the errors in correction, specifically the deviations between the corrected PS bead positions and target position (x = 27.40 mm, y = 5.75 mm). Six typical corrections are presented in Table 2. The deviations mainly occurred along the Y-axis, with the maximum error reaching –1.18 mm. This major error was primarily caused by the inaccurate accounting of plastic wrap’s effect in Eq. (4). Considering that the wrap mainly served to separate the mixed gases from air, but would not exist in future medical scenarios, we did not further investigate its influence to eliminate this range of errors.

Conclusion

In the letter, we presented a learning-based model and a closed-loop control scheme to generate the non-invasive acoustic robotic manipulator in 3D time-varying multi-medium environments. Initially, we identified a sample spacing of 0.5λ as an optimal threshold. This threshold can balance sample size against prediction accuracy in 3D environment. Based on this sampling strategy, the trained model achieved high precision for ToF prediction with a mean error of 0.24 µs. These precise ToF results enabled accurate phase modulation of 196 transducer elements within 26 ms simultaneously. Such computational efficiency represented a six-order-of-magnitude improvement over the conventional numerical method (i.e., k-Wave). For the closed-loop control, we utilized computer vision feedback to iteratively update phase patterns. In our case, this scheme effectively adapted to the time-varying environments, ensuring that the trapped PS ball remains robust levitation around the target position. This compensation mechanism shows great potential for adapting to respiration-induced tissue motion, thereby maintaining accurate alignment of acoustic traps with tumor vessels. Therefore, the combination of the proposed learning-based model and closed-loop control holds promise for modulating robot-assisted MRI-guided ultrasound therapy.

However, this work only serves as a preliminary validation of learning-based acoustic trapping for microbubble manipulation. In the future, improvements in both model prediction and the vision algorithm are required to achieve a higher phase update rate. This would help mitigate the spatial drift of acoustic traps in highly dynamic physiological motions. Meanwhile, we will design more advanced hardware equipped with higher-power transducers, suitable for experiments in water and at higher frequencies (e.g., 1 MHz), aiming to validate the learning-based model and control framework in biomimetic/biological media. Our current work only uses three types of materials with linear movements. This medium model does not adequately reflect the complexity and non-linear motion of real human tissues. In addition, RGB cameras are not compatible with MR environment, lacking the capability to monitor patients’ tissue motions. Moreover, they are unable to sense the position of acoustic traps within the body. Therefore, MRI capable of visualizing both non-linear tissue motions and ultrasound interferences will play a crucial role in advancing acoustic trapping’s clinical procedures. More advanced MRI sequences (e.g., MR-acoustic radiation force imaging) have been successfully developed to detect the acoustic field patterns of focused ultrasound formed within living animals34. Looking ahead, acoustic traps formed inside the human body are likely to be spatially localized with a faster acquisition rate. This expected rate should be faster than 15 Hz to match the phase update for acoustic trapping, ensuring adaptability to highly dynamic physiological motions. This promising technique will enable reliable data acquisition in clinical scenarios without any invasive procedures, ultimately achieving learning-based acoustic trapping’s practical feasibility.

Data availability

The detailed data sets are available at a public GitHub repository (https://github.com/mengjwu/acoustictrap3D).

Code availability

The source code is available at a public GitHub repository (https://github.com/mengjwu/acoustictrap3D).

References

Wu, M. & Liao, W. Machine learning-empowered real-time acoustic trapping: an enabling technique for increasing MRI-guided microbubble accumulation. Sensors 24, 6342 (2024).

Marzo, A. et al. Holographic acoustic elements for manipulation of levitated objects. Nat. Commun. 6, 8661 (2015).

Lo, W.-C., Fan, C.-H., Ho, Y.-J., Lin, C.-W. & Yeh, C.-K. Tornado-inspired acoustic vortex tweezer for trapping and manipulating microbubbles. Proc. Natl. Acad. Sci. 118, e2023188118 (2021).

Zhou, Q., Zhang, J., Ren, X., Xu, Z. & Liu, X. Multi-bottle beam generation using acoustic holographic lens. Appl. Phys. Lett. 116, 133502 (2020).

Ozcelik, A. et al. Acoustic tweezers for the life sciences. Nat. Methods 15, 1021–1028 (2018).

Tang, T., Shen, C. & Huang, L. Propagation of acoustic waves and determined radiation effects on axisymmetric objects in heterogeneous medium with irregular interfaces. Phys. Fluids 36, 012023 (2024).

Baudoin, M. et al. Spatially selective manipulation of cells with single-beam acoustical tweezers. Nat. Commun. 11, 4244 (2020).

Yang, Y. et al. 3D acoustic manipulation of living cells and organisms based on 2D array. IEEE Trans. Biomed. Eng. 69, 2342–2352 (2022).

Hammarström, B., Laurell, T. & Nilsson, J. Seed particle-enabled acoustic trapping of bacteria and nanoparticles in continuous flow systems. Lab Chip 12, 4296 (2012).

Rizzitelli, S. et al. Sonosensitive theranostic liposomes for preclinical in vivo MRI-guided visualization of doxorubicin release stimulated by pulsed low intensity non-focused ultrasound. J. Control. Rel. 202, 21–30 (2015).

Dai, J. et al. Learning-based efficient phase-amplitude modulation and hybrid control for MRI-guided focused ultrasound treatment. IEEE Robot. Autom. Lett. 9, 995 (2024).

Cheung, C. L. et al. Omnidirectional monolithic marker for intra-operative MR-based positional sensing in closed MRI. IEEE Trans Med. Imaging 43, 439–448 (2024).

Marzo, A. & Drinkwater, B. W. Holographic acoustic tweezers. Proc. Natl. Acad. Sci. 116, 84–89 (2019).

Tang, T. & Huang, L. Soundiation: a software in evaluation of acoustophoresis driven by radiation force and torque on axisymmetric objects. J. Acoust. Soc. Am. 152, 2934–2945 (2022).

Tang, T., Shen, C. & Huang, L. Acoustic rotation of non-spherical micro-objects: Characterization of acoustophoresis and quantification of rotational stability. J. Sound Vib. 554, 117694 (2023).

Yang, Y. et al. In-vivo programmable acoustic manipulation of genetically engineered bacteria. Nat. Commun. 14, 3297 (2023).

Cao, H. X. et al. Holographic acoustic tweezers for 5-DoF manipulation of nanocarrier clusters toward targeted drug delivery. Pharmaceutics 14, 1490 (2022).

Yang, Y. et al. Self-navigated 3D acoustic tweezers in complex media based on time reversal. Research 2021, 9781394 (2021).

Zhong, C., Jia, Y., Jeong, D. C., Guo, Y. & Liu, S. AcousNet: a deep learning based approach to dynamic 3D holographic acoustic field generation from phased transducer array. IEEE Robot. Autom. Lett. 7, 666–673 (2022).

Schoen, S. & Arvanitis, C. D. Heterogeneous angular spectrum method for trans-skull imaging and focusing. IEEE Trans. Med. Imaging 39, 1605–1614 (2020).

Wu, F., Thomas, J. L. & Fink, M. Time reversal of ultrasonic fields. Il. Experimental results. Phys. Rev. Appl. 39, 567–578 (1992).

Ley, M. W. & Bruus, H. Three-dimensional numerical modeling of acoustic trapping in glass capillaries. Phys. Rev. Appl. 8, 024020 (2017).

Zhong, C. et al. Real-time acoustic holography with physics-based deep learning for robotic manipulation. IEEE Trans. Autom. Sci. Eng. 21, 1–10 (2023).

Gor’kov, L. P. On the forces acting on a small particle in an acoustical field in an ideal fluid. J. Dokl. Akad. Nauk SSS 140, 88–91 (1961).

Wu, M. et al. A method to detect circle based on Hough transform. In Proceedings of the First International Conference on Information Sciences, Machinery, Materials and Energy, 2013–2016, (Atlantis Press, 2015).

Treeby, B. E. & Cox, B. T. k-Wave: MATLAB toolbox for the simulation and reconstruction of photoacoustic wave fields. J. Biomed. Opt. 15, 021314 (2010).

Rumelhart, D. E., Hinton, G. E. & Williams, R. J. Learning representations by back-propagating errors. Nature 323, 533–536 (1986).

Acorda, J. A., Yamada, H. & Ghamsari, S. M. Evaluation of fatty infiltration of the liver in dairy cattle through digital analysis of hepatic ultrasonograms. Vet. Radiol. Ultrasound. 35, 120–123 (1994).

Tempany, C., MacDonold, N., Stewart, E. A., & Hynynen, K. Tumor Ablation: Principles and Practice (Springer, 2005).

Gierga, D. P. et al. Quantification of respiration-induced abdominal tumor motion and its impact on IMRT dose distributions. Int. J. Radiat. Oncol. Biol. Phys 58, 1584–1595 (2004).

Im, K. & Park, Q.-H. Omni-directional and broadband acoustic anti-reflection and universal acoustic impedance matching. Nanophotonics 11, 2191–2198 (2022).

Suchenek, M. & Borowski, T. Measuring sound speed in gas mixtures using a photoacoustic generator. Int. J. Thermophys. 39, 11 (2018).

Schoen, S. Jr et al. Towards controlled drug delivery in brain tumors with microbubble-enhanced focused ultrasound. Adv. Drug Deliv. Rev. 180, 114043 (2022).

Auboiroux, V. et al. ARFI-prepared MRgHIFU in liver: simultaneous mapping of ARFI-displacement and temperature elevation, using a fast GRE-EPI sequence. Magn. Reson. Med. 68, 932–946 (2012).

Acknowledgements

This work is supported in part by National Natural Science Foundation of China (Grant No. 12504533), Guangdong Basic and Applied Basic Research Foundation (Grant No. 2023A1515110927) and Guangdong University Featured Innovation Project (Grant No. 2025KTSCX129); in part by Shaanxi Provincial Department of Education (Grant No. 24JS024). We sincerely thank Prof. Huang Lixi for his valuable suggestions on the experiments. We are also grateful to Prof. Kwok Ka-Wai and Dr. Dai Jing for their early insights that contributed to the development of the idea.

Author information

Authors and Affiliations

Contributions

Mengjie Wu is with Department of Mechanical Engineering, The University of Hong Kong, Hong Kong SAR, China. M. Wu conceptualized the study, designed the learning model and data analysis, developed the stereo vision algorithm, designed the chamber and printed circuit board, conducted the COMSOL study and manipulation experiments, and drafted and edited the manuscript. Xiaohan Li is with School of Information and Control Engineering, Xi’An University of Architecture and Technology, Xi’An, China. X. Li designed the stereo vision algorithm, recommended camera modules, and revised the manuscript. Tianquan Tang is with Department of Mechanical Engineering, The University of Hong Kong, Hong Kong SAR, China, and also with School of Mechatronic Engineering and Automation, Foshan University, Foshan, China. T. Tang is the corresponding author (tianquan@connect.hku.hk). T. Tang conceptualized the study, designed the chamber, advised on experiments, and edited the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Engineering thanks Gordon Dobie and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Primary Handling Editors: [Liangfei Tian] and [Philip Coatsworth]. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wu, M., Li, X. & Tang, T. Machine learning-facilitated real-time acoustic trapping in time-varying multi-medium environments toward magnetic resonance imaging-guided microbubble manipulation. Commun Eng 5, 52 (2026). https://doi.org/10.1038/s44172-026-00600-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s44172-026-00600-z