Abstract

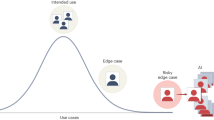

Artificial intelligence (AI) chatbots have achieved unprecedented adoption, with millions now using these systems for emotional support and companionship in contexts of widespread social isolation and capacity-constrained mental health services. Although some users report psychological benefits, concerning edge cases are emerging, including reports of suicide, violence and delusional thinking linked to emotional relationships with chatbots. To understand these risks, we need to consider the interaction between human cognitive-emotional biases and chatbot behavioral tendencies, the latter including companionship-reinforcing behaviors such as sycophancy, role play and anthropomimesis. Individuals with preexisting mental health conditions may face increased risks of chatbot-induced changes in beliefs and behavior, particularly where these conditions manifest in altered belief-updating, reality-testing and social isolation. To address this emerging public health concern, we need coordinated action across clinical practice, AI development and regulatory frameworks.

Acknowledgements

This work was supported financially by an NIHR Clinical Lectureship in Psychiatry to the University of Oxford and a Wellcome Trust Grant for Neuroscience in Mental Health (315364/Z/24/Z) to M.M.N., and by Mediterranean Society for Consciousness Science (MESEC) and Merton College Oxford support to S.D.

Author information

Contributions

M.M.N. and S.D. wrote the Perspective and ran the simulations. Z.K.-N., E.S., L.L., A.R., I.G., C.S. and M.S. provided detailed comments.

Ethics declarations

Competing interests

M.M.N. is a principal applied scientist at Microsoft AI. M.S. is a principal scientist at Google DeepMind. I.G. is a senior staff scientist at Google DeepMind. Z.K.-N. is chief scientist at Prefrontal.ai.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Dohnány, S., Kurth-Nelson, Z., Spens, E. et al. Technological folie à deux: feedback loops between AI chatbots and mental health. Nat. Mental Health 4, 336–345 (2026). https://doi.org/10.1038/s44220-026-00595-8

DOI: https://doi.org/10.1038/s44220-026-00595-8