Abstract

Tsunami science is moving from standalone physics simulations to integrated forecasting and risk-governance frameworks. This review synthesizes advances in multi-source observations, signal detection, propagation and inundation modeling, physics–AI hybrid prediction, and real-time warning workflows. It further discusses uncertainty communication, decision support, and pathways for linking forecast systems with resilient coastal planning and community preparedness.

Similar content being viewed by others

Introduction

Tsunamis are among the most destructive natural hazards, capable of causing massive damage and loss of life within a very short time1,2,3,4,5 (Fig. 1). Unlike many other natural hazards, tsunamis are complex phenomena generated by a wide range of seismic and non-seismic processes, including submarine earthquakes, volcanic eruptions, landslides, and meteorological disturbances6,7,8. Their inherent unpredictability, together with sudden energy release and rapid propagation, makes tsunami prediction particularly challenging9. The central difficulty of tsunami forecasting lies not only in the severe time constraints and high uncertainty, but also in the grave consequences that can arise from forecast errors. Because tsunami waves travel extremely fast in the deep ocean, the window for issuing reliable warnings is often only minutes to hours, leaving very limited tolerance for mistakes.

Circle size is proportional to the source moment magnitude for earthquake-triggered tsunamis; colors indicate the Soloviev–Imamura tsunami intensity scale. Gray denotes events for which intensity cannot be determined due to missing wave-height data. Green solid lines represent major plate boundaries. Adapted from ref. 5, with permission.

Fundamentally, the challenges of tsunami forecasting stem from the tight coupling among time constraints, uncertainty, and the real-world consequences of forecast-driven decisions. Tsunami waves can traverse vast ocean basins at high speeds, which requires forecasting to be performed in real time or near real time10,11. This is especially critical for near-field tsunamis (e.g., those triggered by offshore earthquakes), where the time between hazard onset and the arrival of the first wave at the coast is typically only a few minutes to tens of minutes. Such a narrow window severely limits opportunities for human intervention and evacuation, placing extremely high demands on both timeliness and accuracy. Meanwhile, tsunami prediction is intrinsically uncertain. On the one hand, the complexity of the ocean environment and seafloor topography, along with variations in earthquake magnitude, focal mechanism, and epicentral location, makes it difficult to fully and precisely characterize tsunami generation and propagation12,13. On the other hand, non-seismic tsunamis (e.g., those induced by submarine landslides and volcanic activity)14,15,16 further amplify uncertainty because their triggering mechanisms remain incompletely understood and their timing and scale are difficult to anticipate. In addition, tsunami forecasting is a high-stakes task: forecast outcomes have profound implications for public safety17 and socioeconomic stability18,19. Timely and reliable warnings can provide precious time for coastal evacuation and save lives; conversely, false alarms may disrupt normal operations, trigger unnecessary evacuations, and lead to substantial economic losses. Even more critically, missed warnings (i.e., failure to issue an alert when a tsunami is approaching) can be catastrophic, as they deprive communities of the opportunity to prepare and take protective actions.

In recent years, the limitations of the traditional “earthquake–physics model–warning” paradigm have become increasingly evident. The 2011 Great East Japan Earthquake and Tsunami, for example, exposed inherent deficiencies in existing forecasting systems: although the tsunamigenic earthquake was detected early, the arrival time and magnitude of the tsunami were not predicted with sufficient accuracy, ultimately contributing to catastrophic outcomes19. Similarly, the 2018 Sulawesi tsunami in Indonesia20,21 highlighted the major challenge of forecasting tsunamis generated by non-seismic processes such as submarine landslides—events that often lack clear seismic triggers and whose generation and amplification mechanisms are not yet fully understood, while related monitoring and warning technologies remain underdeveloped22. Comparable issues have repeatedly surfaced in other major events. During the 2004 Indian Ocean tsunami23,24, the absence of a robust regional warning system meant that coastal nations received little effective warning despite the enormous earthquake magnitude, resulting in more than 200,000 fatalities and underscoring systemic gaps in monitoring networks and information dissemination25. The 1998 Papua New Guinea tsunami26, widely considered to have been primarily landslide-triggered, had strong local impacts and short propagation distance yet caused exceptionally severe nearshore damage, revealing deficiencies in identifying small-scale but highly destructive tsunami sources27. More recently, the tsunami triggered by the 2022 Hunga Tonga–Hunga Ha’apai submarine volcanic eruption11 further demonstrated that volcanic activity and atmosphere–ocean coupling can produce complex tsunami responses on a global scale, exceeding the scope of earthquake-centric forecasting frameworks28. Collectively, these events indicate that current tsunami forecasting systems still face fundamental limitations in source characterization, multi-source trigger identification, and the coupling of complex processes. Consequently, there is an urgent need to move beyond a single earthquake-driven forecasting paradigm toward a more data-intensive, mechanism-inclusive, and uncertainty-aware integrated framework.

Over the past two decades, tsunami forecasting has undergone major transformations, reshaping the field. With breakthroughs in data acquisition, ocean observing systems, and real-time communications, global monitoring networks have expanded at an unprecedented pace29,30,31. Traditional approaches often relied on sparse, cable-based sensors, leading to delayed or inaccurate forecasts. The current data-driven era is changing this landscape in multiple ways. Large-scale observing facilities such as Deep-ocean Assessment and Reporting of Tsunamis (DART) enable continuous, real-time monitoring of wave heights and seafloor pressure, providing high-frequency data support for early tsunami detection and tracking32. Meanwhile, advances in satellite remote sensing have extended observational coverage across space and time: satellite altimeters, radar, and synthetic aperture radar (SAR) can capture sea-surface height anomalies and deformation signals on a global scale, especially in the open ocean and other observation-sparse regions33. Stable, high-speed communications have further reduced latency between observation, analysis, and warning issuance, gradually shifting tsunami forecasting from reliance on fragmented and delayed observations toward near-real-time or real-time responses driven by multi-source data streams34. This fundamental change in the data environment makes it possible, for the first time, to acquire and jointly analyze seismic, oceanic, and satellite observations in a synchronized manner, laying a critical foundation for improving forecast accuracy, timeliness, and operational usefulness.

Physics-based tsunami models remain scientifically indispensable for describing wave generation, propagation, and inundation processes and continue to serve as a key pillar of forecasting systems32,35,36,37,38. However, as warning needs evolve toward higher timeliness, more complex scenarios, and more explicit decision orientation, these models increasingly reveal limitations in computational efficiency, uncertainty representation, and adaptability to non-typical tsunami sources. To achieve faster, more accurate, and probabilistically informative forecasts, research and operational communities have begun to systematically incorporate data-driven techniques, integrating machine learning and artificial intelligence methods into traditional workflows39,40. This transition is not simply about replacing physics-based models with data-driven ones; rather, it aims to establish a new forecasting paradigm that fuses multi-source real-time observations, physical constraints, and statistical learning. The core goal is not only to improve predictive accuracy, but also to build an uncertainty-aware decision-support framework that can serve both second-to-minute real-time response and longer-term risk assessment and coastal planning (Fig. 2).

Intelligent real-time response tsunami forecasting and risk management system.

With the introduction of machine learning, artificial intelligence, and advanced data-analytic techniques, tsunami forecasting is transitioning from a static, rigid deterministic computational framework toward a dynamic modeling system that integrates multi-source real-time observations, adaptive modeling, and probabilistic risk assessment. Such systems can not only deliver near-real-time predictions shortly after event onset, but also provide probabilistic forecasts through uncertainty quantification, expressed across confidence levels and risk tiers, thereby offering decision-relevant information to support warning issuance, evacuation planning, and emergency resource allocation. Against this backdrop, this review first surveys the rapid evolution of data ecosystems for tsunami forecasting, including in situ ocean observations, seismic and geodetic measurements, satellite remote sensing, and emerging non-traditional data streams, and analyzes their key characteristics and challenges in terms of spatiotemporal resolution, uncertainty, and operational availability. It then synthesizes the progression of data-analysis methodologies from traditional signal processing to machine learning and deep learning, with particular emphasis on their roles in weak-signal detection, early triggering, and learning under rare-event regimes. Next, the review systematically compares the capabilities and limitations of physics-based models, purely data-driven approaches, and physics–AI hybrid models for tsunami prediction. Building on this foundation, it evaluates how real-time forecasting systems, risk assessment frameworks, and AI-enabled decision-support tools translate model outputs into actionable information. Finally, from an interdisciplinary perspective spanning science, policy, and society, the review discusses issues of communication, governance, and ethics in the use of forecasting information, and outlines future directions centered on digital twins, foundation models, and adaptive resilience. Through this systematic synthesis, the review aims to provide a unified analytical framework for understanding the ongoing paradigm shift in tsunami forecasting, identifying key technical bottlenecks, and advancing deeper integration between predictive science and risk-reduction practice.

Tsunami-relevant data acquisition

Tsunami forecasting and risk management depend heavily on real-time, high-accuracy data drawn from diverse sources. Over the past several decades, technological advances have led to an explosive growth in both the variety and volume of tsunami-relevant data available to researchers27,41,42,43,44,45. However, substantial challenges remain in effectively integrating and analyzing these data streams due to technical bottlenecks such as data heterogeneity, noise contamination, and the stringent requirements of real-time processing. This section systematically reviews the key data sources underpinning tsunami forecasting, delineates their distinctive strengths, and examines the central challenges associated with their operational use in prediction.

In situ ocean observations

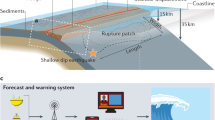

In situ ocean observations constitute one of the central pillars of tsunami monitoring and forecasting systems. Their distinctive value lies in the ability to directly and continuously record key physical processes occurring within the ocean—capabilities that cannot be fully replaced by land-based measurements or remote-sensing approaches. The in situ instruments most widely deployed in current tsunami observing networks include Deep-ocean Assessment and Reporting of Tsunamis (DART) buoys45, coastal tide gauges46, and ocean-bottom pressure sensors47, with a schematic of a modern tsunami monitoring system shown in Fig. 3. DART systems are typically deployed in deep-ocean settings, where ocean-bottom pressure sensors detect extremely subtle changes in the water-column pressure and thereby infer sea-surface conditions, enabling early identification of transoceanic tsunami waves during the initial stages of propagation48,49,50. Coastal tide gauges, in contrast, record the rapid sea-level fluctuations induced as tsunamis reach nearshore regions51,52. Ocean-bottom pressure sensors can capture pressure anomalies associated with passing tsunami waves at high temporal resolution in deep-water environments, providing critical evidence for characterizing wave dynamics and improving process understanding53,54.

Tsunami monitoring system.

A major advantage of in situ ocean observations is their high accuracy and real-time capability. These sensors directly measure fundamental physical quantities such as pressure and sea level, yielding data with clear physical meaning that provide essential evidence for tsunami detection, source inversion, and wave-propagation analysis. For example, Mizutani et al.47 used near-fault ocean-bottom pressure (OBP) recordings and successfully isolated tsunami signals from OBP data within tens of seconds after the earthquake, enabling rapid tsunami identification. Adriano et al.51 jointly assimilated observations from coastal tide gauges and offshore buoy stations to invert the tsunami source of the 2017 Mexico Mw 8.2 earthquake, substantially improving the stability and precision of estimated source parameters. In addition, Rabinovich and Eblé55 provided a systematic assessment of deep-ocean bottom-pressure observations across multiple transoceanic tsunami events, highlighting that DART systems can deliver crucial observational evidence during the early propagation stage and thus constitute a key data stream for operational tsunami early warning. Beyond event detection and source characterization, in situ observations play an important role in the calibration and validation of numerical simulations and forecasting models, thereby enhancing the overall reliability of both physics-based and data-driven approaches. Tanioka53, for instance, leveraged data from Japan’s S-net ocean-bottom pressure sensor network to improve near-field tsunami forecasting methods, demonstrating that dense in situ measurements are indispensable for model calibration and for achieving higher predictive accuracy. Owing to their near-real-time data transmission, in situ observing systems have become an integral component of tsunami warning architectures, providing rapid observational confirmation of tsunami occurrence and enabling continuous tracking of tsunami evolution following generation.

However, the global deployment of in situ observing networks continues to face multiple challenges. Despite the growing number of tsunami monitoring instruments in recent years, their spatial distribution remains highly uneven: sensors are concentrated primarily in traditionally high-risk regions such as the circum-Pacific, while vast deep-ocean and remote areas still lack effective coverage. In their systematic synthesis of global deep-ocean tsunami observations, Rabinovich and Eblé55 noted that the existing DART network exhibits substantial observational gaps in several ocean basins, which constrain the comprehensive capture of transoceanic tsunami propagation processes. Moreover, offshore and seafloor observing systems are costly to deploy, maintain, and operate over the long term, imposing stringent requirements on technical capacity and logistical support. Heidarzadeh and Gusman48 pointed out that installing and servicing deep-ocean bottom-pressure sensors depends on specialized vessels and complex subsea operations, making it difficult to rapidly scale dense observing networks worldwide. During sustained operation, instruments are also vulnerable to degradation from ocean currents, biofouling, and structural aging, which can reduce data quality or lead to observational outages.

In summary, although in situ ocean observing networks still face constraints related to spatial coverage and operational cost, their foundational role in tsunami monitoring and early warning remains irreplaceable. By directly measuring ocean-bottom pressure and sea-level variations, in situ observations provide the most immediate and physically robust basis for real-time estimation of tsunami wave heights, arrival times, and propagation characteristics. This high-precision, near-real-time information is particularly critical in the early post-generation phase, where it enables warning systems to rapidly verify event authenticity and supplies essential data for subsequent propagation analyses and risk assessment. As observing networks continue to expand and are increasingly integrated with numerical models and advanced data-processing methods, in situ observations will remain a central supporting component of future tsunami early warning architectures.

Seismic and geodetic data

Seismic and geodetic data constitute a foundational component of tsunami forecasting systems, playing a critical role in identifying and characterizing earthquake sources that generate the most destructive tsunamis. Seismic waveform observations can rapidly provide key parameters—such as magnitude, focal depth, and epicentral location—and therefore serve as the primary basis for assessing tsunamigenic potential. For example, Satake56 noted that following the 2011 Tohoku Mw 9.0 earthquake, global seismic networks delivered magnitude estimates and focal mechanism solutions within minutes, supplying the initial triggers for tsunami warning. However, reliance on seismic magnitude alone remains insufficient for accurately characterizing the true rupture scale and damaging potential of megathrust events. As an essential complement, Global Navigation Satellite System (GNSS) measurements can record coseismic ground displacements with high precision, providing direct constraints on fault slip behavior and, critically, vertical deformation—factors that govern the magnitude of initial sea-surface uplift or subsidence. Studies by Minson et al.57 and Zielke et al.58, based on landmark events such as the 2011 Tohoku earthquake, demonstrate that incorporating GNSS coseismic displacement data can effectively mitigate the magnitude-saturation problem inherent to seismic waveforms during very large earthquakes. This substantially improves the robustness of source inversion and yields more reliable constraints for constructing the initial tsunami wave field.

In recent years, advances in seafloor geodetic observing technologies have enabled more direct and higher-resolution measurements of offshore and far-field seafloor deformation induced by earthquakes, thereby substantially reducing uncertainty in source-parameter inversion. Compared with conventional approaches that rely primarily on land-based seismic and geodetic observations, seafloor measurements can record coseismic displacements much closer to the source region, providing stronger constraints on rupture extent, slip distribution, and vertical deformation. For example, Japan’s offshore GNSS-A seafloor geodesy system, together with seafloor observing networks such as S-net and DONET—comprising ocean-bottom seismometers and pressure sensors—provides critical observations of source processes and seafloor deformation for near-field tsunami forecasting, and has successfully captured coseismic seafloor deformation and associated bottom-pressure changes during multiple near-field earthquake events59.

These observations indicate that seafloor geodetic data not only compensate for the limited observational capability of onshore GNSS in offshore and remote regions but also reduce the dependence of source inversions on prior model assumptions. From the perspective of tsunami forecasting, such direct constraints on seafloor deformation are particularly valuable. Coseismic vertical displacement of the seafloor is a key determinant of initial sea-surface uplift or subsidence; accurately resolving this quantity can markedly improve the robustness of initial tsunami wave-field construction for near-field events. The study of Tanioka53 further showed that incorporating S-net bottom-pressure observations significantly reduced uncertainties in predicted tsunami arrival times and wave heights, underscoring the distinctive advantages of seafloor geodetic observations for near-field tsunami early warning.

A key advantage of seismic and geodetic data lies in their ability to support rapid source inversion in the source region, which constitutes one of the core components of modern tsunami warning and forecasting systems. Qiu et al.60 proposed a new two-step hybrid postseismic modeling approach that integrates real-time seismic and geodetic observations; such physics-informed insights provide a critical foundation for robust and rapid characterization of tsunamigenic sources. The local tsunami warning strategy T-LarmS proposed by Melgar et al.61 leverages regional seismic and GNSS observations to obtain rapid source information within minutes after an earthquake. This information is then used to estimate seafloor deformation and to drive tsunami propagation simulations, producing actionable warning maps and estimates of maximum expected amplitudes. These results demonstrate that seismic and geodetic data can provide essential, rapid constraints on tsunami sources for warning purposes.

By jointly analyzing seismic waveforms and crustal displacement fields, it is possible to infer fault geometry, slip distribution, and rupture extent within minutes of earthquake occurrence, and to use the inversion results as initial conditions for numerical tsunami propagation models62. Moreover, the high level of maturity of global and regional seismic monitoring networks makes seismic data one of the most reliable and commonly used triggering information sources in most operational tsunami warning systems today.

At the global scale, geodetic observations related to earthquakes and tsunamis primarily rely on four major Global Navigation Satellite Systems (GNSS) recognized by the United Nations: the United States’ GPS, Russia’s GLONASS, the European Union’s Galileo, and China’s BeiDou system. These systems differ in terms of coverage, signal design, and service focus, and together form the foundation of the current multi-source GNSS observation framework. Table 1 provides a brief summary of the basic characteristics of the major satellite positioning systems. However, earthquake and geodetic data still exhibit clear limitations in addressing the diverse mechanisms that trigger tsunamis. Non-seismic tsunamis caused by submarine landslides, volcanic eruptions, or multi-process coupling often lack clear seismic signals, making it difficult for earthquake-triggered warning mechanisms to identify them effectively63,64,65,66,67. In addition, although terrestrial seismic and GNSS networks are becoming increasingly dense, geodetic observations in oceanic regions—especially in the deep ocean—remain relatively sparse. This limitation hampers the detailed characterization of small-magnitude earthquakes, complex rupture processes, and nearshore seismic sources. Therefore, future tsunami forecasting systems need to integrate seismic and geodetic data with ocean observations, remote sensing data, and data-driven approaches in order to compensate for shortcomings in spatial coverage and mechanism identification.

Satellite remote sensing

Satellite remote sensing provides a synoptic and continuous observational perspective over the ocean and coastal zones, offering a distinct advantage for compensating for the limited spatial coverage of in situ observing networks. Satellite altimetry, in particular, can retrieve sea surface height (SSH) variations on a global scale, thereby enabling the detection of transoceanic tsunami waves in open-ocean regions where in situ observations are sparse or unavailable68,69. Although tsunami amplitudes in the deep ocean are typically small, their spatially coherent signatures can, under favorable observing conditions, be captured by satellite systems at large spatial scales. Event-level evidence further demonstrates this capability. For the 26 December 2004 Sumatra–Andaman tsunami, satellite altimeters (TOPEX/Poseidon and Jason-1) captured tsunami-related sea-surface height anomalies along-track in the open ocean, providing a direct benchmark for model–data comparison70. More recently, SWOT (Surface Water and Ocean Topography) observed the 2025 Kamchatka tsunami in swath mode, revealing the tsunami wave pattern with near-2D spatial context that is particularly valuable for validating forecasting models and motivating future real-time hazard assessment workflows.

In nearshore and onshore regions, satellite remote sensing plays a particularly important role in characterizing the impacts of tsunamis. Interferometric Synthetic Aperture Radar (InSAR), as a high-precision satellite remote sensing technique, can retrieve large-scale, high-resolution surface deformation fields (including subsidence and uplift) by processing the phase interference of two or more SAR images71,72,73. In coastal and terrestrial areas, this capability makes InSAR a crucial tool for depicting tsunami-related crustal deformation and the tectonic displacement processes closely associated with tsunami generation mechanisms.

First, during large tsunami events, significant coastal uplift or subsidence occurs in nearshore areas due to earthquake rupture, which constitutes one of the key physical mechanisms governing tsunami wave generation and propagation. For example, in the 2011 Mw 9.0 Tohoku-oki tsunami event off the Pacific coast of northeastern Japan, researchers successfully reconstructed the coseismic surface deformation field across hundreds of kilometers of the Japanese mainland using InSAR based on ALOS/PALSAR satellite data74. This deformation field included pronounced horizontal displacement and vertical subsidence along the coast of the Oshika Peninsula. These surface deformations directly reflect the generation of tsunami waves triggered by the earthquake and the associated release of energy in the ocean. Compared with traditional GPS monitoring methods, InSAR not only provides more spatially continuous deformation data but also achieves higher spatial resolution, enabling more precise characterization of tsunami wave spectra variations and propagation characteristics over broader areas. Consequently, InSAR has become an important tool for studying tsunamis and their impacts, offering notable advantages especially for dynamic monitoring in tsunami source regions and far-field areas.

Second, multi-temporal InSAR techniques (such as SBAS-InSAR and PS-InSAR) have advantages in time-series analysis, allowing quantitative inversion of coseismic and postseismic deformation processes and thereby revealing tsunami-induced surface dynamic evolution. For instance, studies of earthquake-induced terrestrial deformation have extracted millimeter-scale subsidence and uplift patterns over large source regions using Sentinel-1 data75, providing constraints for constructing accurate fault slip models and surface stress change analyses. These techniques are equally applicable to deformation analysis in tsunami source zones and coastal regions, enabling InSAR to capture not only the instantaneous deformation associated with the main shock but also the subsequent post-event land deformation evolution. This, in turn, facilitates linking surface deformation with complex processes such as tsunami wave propagation and geotechnical responses.

Optical remote sensing imagery can be used for the rapid assessment of tsunami-induced coastal inundation extent, infrastructure damage, land-use change, and shoreline evolution, and thus offers irreplaceable advantages for characterizing tsunami impacts in nearshore and onshore regions. In the immediate aftermath of a disaster, optical imagery can provide a spatially continuous chain of surface evidence, enabling rapid quantification of tsunami impacts throughout the entire process—from water intrusion to abrupt changes in land cover, damage to built-up areas, and geometric reshaping of the coastline. Ramírez-Herrera and Navarrete-Pacheco76 combined high-resolution optical imagery with digital elevation models (DEMs) to rapidly invert tsunami inundation distances and run-up heights across representative coastal geomorphic units, and validated the results through field surveys. Their work demonstrates that optical satellites can provide first-hand constraints for emergency mapping and subsequent numerical modeling when transportation infrastructure is damaged, and on-site access is limited. At broader regional scales, medium-resolution optical data enable rapid, cross-border or cross-basin reconnaissance of tsunami damage. Belward et al.77 conducted a systematic scan of the extensive coastline affected by the 2004 Indian Ocean tsunami using pre- and post-event MODIS imagery, identifying coastal zones of significant surface change and quantifying the order of magnitude of areas with “severely damaged or lost land.” This study shows that medium-resolution optical sensors can rapidly and consistently map extremely large impacted coastal belts after a tsunami, providing baseline data for cross-regional emergency response coordination and loss assessment. Beyond inundation extent, optical imagery is also highly sensitive to infrastructure damage and surface functional degradation. In the case of the 2018 Sulawesi earthquake and tsunami in Indonesia, Hu et al.78 used Sentinel-2 and Maxar WorldView-3 imagery to rapidly delineate tsunami-affected areas through pre- and post-event differenced spectral indices and threshold-based frameworks. They further examined the spatial correspondence between tsunami impacts, low-lying urban areas, and damage to coastal built-up zones, emphasizing the critical need to acquire imagery before cleanup and reconstruction alter the surface, as physical evidence of damage can disappear rapidly.

Collectively, these studies demonstrate that optical remote sensing not only indicates “where the water reached,” but also extracts spatial patterns closely related to engineering damage by integrating multiple cues, such as changes in surface reflectance and texture, vegetation and bare land transitions, and the loss or deformation of roads, levees, and buildings. These capabilities provide traceable evidence for subsequent vulnerability curve development, exposure statistics, and recovery assessment. Together, InSAR and optical remote sensing data form a critical information foundation for post-tsunami damage assessment and reconstruction analysis, offering essential support for impact quantification and long-term recovery planning.

Furthermore, tsunamis in nearshore areas often trigger long-term shoreline reshaping and sustained land-use transitions. Long-term optical image time series make it possible to distinguish between post-disaster transient erosion and multi-year human reconstruction and natural recovery. Ghadamode et al.79 selected the South Andaman coast affected by the 2004 tsunami as a case study and used multi-temporal imagery to quantitatively analyze shoreline changes, linking them with regional socioeconomic development. Their results emphasize that post-disaster expansion of built-up areas and ecological degradation can reshape future tsunami risk exposure patterns. Therefore, the key contribution of optical remote sensing lies not only in providing a “post-disaster snapshot,” but more importantly in supporting multi-scale quantification of tsunami impacts through repeatable spatiotemporal evidence—ranging from hour-to-day scale mapping of inundation and damage, to year-to-decade scale analyses of shoreline and land-use evolution and risk redistribution.

However, due to limitations such as satellite revisit cycles, data acquisition latency, and time-consuming processing workflows, satellite observations often cannot be delivered within the short time window required for tsunami early warning. In addition, there is an inherent trade-off between spatial and temporal resolution in satellite data: high-resolution SAR or optical imagery is typically difficult to acquire at high frequency, while altimetry data offer broad coverage but limited temporal sampling. Consequently, satellite remote sensing is better suited as an important complement to tsunami forecasting systems, to be used in synergy with in situ ocean observations, seismic data, and data-driven approaches, thereby fully leveraging its unique strengths in large-scale observation and post-disaster assessment.

Emerging and non-traditional data streams

In addition to conventional ocean observations, seismic measurements, and satellite data, a range of emerging and non-traditional data sources are increasingly being explored to complement tsunami monitoring and assessment systems. Among these, submarine cable observations based on Distributed Acoustic Sensing (DAS) have attracted substantial attention in recent years80. By converting telecommunication fiber-optic cables into dense virtual sensor arrays, DAS enables continuous, real-time monitoring of pressure variations, vibrations, and acoustic signals, and can be used to detect tsunami-wave propagation and seafloor deformation processes. Because submarine cables often span extensive areas of the seafloor, DAS systems have considerable potential to increase observational density in both offshore and nearshore domains, particularly in regions where deploying conventional instruments is challenging.

Beyond fiber-optic sensing, shipborne and civilian sensors are also regarded as valuable complementary data sources81,82. Some vessels can record pressure, motion, or navigation-related measurements during routine operations; after appropriate screening and processing, these observations may provide additional in situ information for tsunami monitoring. Although such data are not specifically designed for tsunami observation, their wide spatial coverage and large volume confer potential utility, particularly in regions with dense maritime traffic. In recent years, social media and crowdsourced information have likewise attracted attention as non-traditional data streams83,84,85. Eyewitness reports, images, and videos posted on social platforms can provide rapid situational awareness in the early stages following a tsunami, and may support impact assessment and emergency response, especially when official observations are delayed or disrupted. However, these data remain limited by a lack of structure and predictability, and their use in quantitative forecasting is still relatively constrained.

Although emerging and non-traditional data sources show considerable promise, their operational use in tsunami forecasting still faces substantial challenges. Issues of data quality and reliability are particularly salient: crowdsourced information is often noisy and unevenly distributed, underscoring the need for robust validation and filtering mechanisms. Moreover, differences among data sources in data formats, spatiotemporal resolution, and processing pipelines introduce additional technical complexity for integration with existing warning architectures. Consequently, these novel data streams can contribute meaningfully to tsunami forecasting and risk management only after rigorous quality control, appropriate assimilation, and coordinated fusion with conventional observing systems.

Core data challenges and implications for data-driven models

Although tsunami monitoring technologies have advanced substantially over recent decades, several fundamental data-related challenges persist and continue to constrain forecasting accuracy and the reliability of operational systems. One of the most pervasive issues is the prevalence of noise and strong non-stationarity in tsunami-relevant observations. Signals recorded by oceanographic and geophysical sensors are often influenced by a combination of background wind waves, tides, meteorological disturbances, and instrumental noise. This is particularly problematic during tsunami generation and the early propagation stage, when tsunami signatures are frequently obscured by complex background variability and are difficult to separate reliably. Moreover, tsunami dynamics are inherently transient and rapidly evolving, which clearly violates the stationarity assumptions underlying many conventional signal-processing and statistical modeling approaches. Consequently, tsunami forecasting requires analytical frameworks that can robustly extract weak signals from noisy, time-varying data streams under near-real-time constraints.

Another key challenge arises from the pronounced multiscale nature and spatiotemporal heterogeneity of tsunami data. Relevant observations range from high-frequency, local measurements acquired by in situ sensors to low-frequency, transoceanic-scale information provided by satellite remote sensing, with substantial disparities across data sources in sampling rate, spatial coverage, latency, and uncertainty characteristics. This heterogeneity makes the joint interpretation and effective fusion of multi-source observations particularly complex. Maintaining physical consistency across scales and developing forecasting models that can operate coherently in a cross-scale manner remain central challenges faced by both physics-based and data-driven approaches.

At the same time, tsunami forecasting is characterized by a structural tension in which “small-sample” and “big-data” regimes coexist. Although modern observing systems continuously generate massive, high-dimensional data streams, the number of informative samples that actually contain key tsunami signals remains extremely limited because destructive tsunami events are inherently rare. This class imbalance poses a major challenge for machine learning, which typically relies on large and representative training datasets to achieve robust generalization. Models trained on a small number of historical events are prone to overfitting and may perform poorly when confronted with atypical or compound tsunami scenarios. Accordingly, there is a pressing need for modeling strategies that explicitly account for data scarcity and uncertainty, including physics-constrained learning, transfer learning, synthetic data generation, and uncertainty-aware modeling.

Overall, the tsunami data ecosystem is inherently highly complex, encompassing multiple observational data streams that differ substantially in spatial coverage, resolution, latency, and reliability. In situ ocean measurements, seismic and geodetic observations, and satellite remote sensing remain the core pillars of tsunami detection and forecasting, providing complementary information across the source process, wave propagation, and impact assessment. Meanwhile, emerging data sources—including submarine fiber-optic sensing, shipborne observations, and crowdsourced information—are progressively extending observational frontiers and creating new opportunities for regions and scenarios that are difficult to capture with conventional monitoring infrastructures. However, greater diversity of data sources and expanding data volumes do not automatically translate into commensurate improvements in predictive capability. Effective tsunami forecasting increasingly depends on the rigorous integration of heterogeneous data, strict control of data quality and reliability, and the capacity to extract actionable information and deliver decision support within extremely constrained time windows. Against this backdrop, tsunami science is shifting from a focus on whether data can be acquired to how key information can be extracted from complex data streams to support decisions—thereby providing the scientific basis for advanced data-analytic methods and physics–AI hybrid forecasting frameworks.

Tsunami signal detection and feature extraction

With the growing availability of ocean observations, seismic monitoring, and satellite data, the informational basis for tsunami forecasting has undergone a qualitative leap. However, raw data streams are often contaminated by noise, dominated by redundancy, and populated with irrelevant features; they must therefore be processed and analyzed effectively in order to extract meaningful signals. This section systematically delineates the methodological transition from traditional signal-processing techniques to state-of-the-art approaches such as machine learning and deep learning, which enable high-density information extraction and convert raw tsunami-related observations into actionable decision-relevant evidence. As data complexity and volume continue to rise, modern analytical methods are providing a powerful impetus for improving the accuracy of tsunami detection, classification, and forecasting.

Classical signal processing approaches

Classical signal-processing techniques have long served as a core component of tsunami detection and early warning systems, providing fundamental tools for identifying tsunami-related signatures from oceanic and seismic observations that are often heavily contaminated by noise. The primary objective of these methods is to enhance the signal-to-noise ratio and extract discriminative features from time series—capabilities that are particularly critical for real-time operational systems requiring rapid and reliable responses. By exploiting differences in frequency content, temporal structure, and scale-dependent characteristics, classical approaches can, to a certain extent, separate tsunami signals from background wind waves, tides, and seismic noise86,87,88

In practice, spectral analysis is commonly used to characterize the low-frequency components of tsunami waves and to contrast them with short-period processes such as wind-driven waves. After mapping time-domain observations into the frequency domain via Fourier-based transforms, anomalous frequency components can be identified and quantified more directly. Building on this, low-pass or band-pass filters suppress noise outside target bands to emphasize spectral energy associated with tsunami propagation, and have been incorporated into relatively mature automated detection workflows for tide gauges, bottom-pressure sensors, and seismic monitoring pipelines86,88,89,90,91.

However, tsunami signals exhibit pronounced non-stationarity and transient behavior, and reliance on fixed frequency bands alone often fails to capture their full temporal evolution. Consequently, time–frequency methods (e.g., wavelet transforms) have been employed to decompose signals across multiple scales, enabling more effective detection of weak and short-lived sea-level or pressure anomalies embedded within complex backgrounds92,93,94,95,96. Although classical signal processing offers operational robustness and interpretability, its dependence on pre-defined frequency bands, thresholds, and hand-crafted features limits adaptability to complex, non-stationary, and out-of-distribution tsunami scenarios, motivating an increasing shift toward more flexible data-driven methodological families.

Machine learning for tsunami signal identification

As tsunami-related data streams continue to expand in type, dimensionality, and scale, reliance on traditional signal processing alone has become insufficient to cope with the complexity and diversity of modern observations. Against this backdrop, machine learning methods have increasingly been introduced for automated identification and classification of tsunami-related signals, making it feasible to distinguish potential tsunamigenic events from non-tsunami disturbances under near-real-time constraints. Compared with procedural strategies based on fixed thresholds or pre-defined frequency bands, learning-based models can infer discriminative decision boundaries from labeled data and offer stronger representational capacity for feature fusion and exploitation of cross-station consistency—advantages that are particularly valuable in warning frameworks integrating multi-source observations and network (array) structure information97.

In many application paradigms, conventional machine learning typically follows a “representation-then-discrimination” pipeline: discriminative statistical and time–frequency representations are first engineered from tide-gauge/bottom-pressure records, seismic observations, or electromagnetic–ionospheric measurements (e.g., spectral amplitudes, signal duration, energy distribution, and phase/amplitude perturbations), and classification or regression models are then used to identify “tsunami vs. non-tsunami” cases or to assign risk levels. A substantial body of work focuses primarily on signal extraction and representation, yet nevertheless yields operationally useful feature sources for subsequent machine-learning modeling. For instance, frameworks that characterize the transfer function between offshore forcing and coastal response in the frequency domain provide a stable pathway for constructing spectral features86. Event-level statistics such as maximum amplitude and signal duration offer interpretable indicators for discrimination and uncertainty characterization89. Time–frequency features of tsunami-related electromagnetic signals, together with noise-separation strategies, provide direct guidance for feature engineering from geomagnetic-perturbation data93. Likewise, detection of phase and amplitude perturbations in very low frequency (VLF) observations offers a reusable quantification scheme for features linking ionospheric responses to tsunami events94,95. Accordingly, conventional machine learning can be viewed, methodologically, as an automated extension that fuses and discriminates over these interpretable feature sets86,89,93,94,95.

It is important to emphasize that the performance of supervised recognition hinges critically on the quality, coverage, and representativeness of labeled data. Meanwhile, tsunami signals are tightly coupled to the source process, propagation pathway, and local site response, making the “limited training coverage → weak generalization to rare or atypical events” problem particularly acute. Existing studies show that different source parameterizations and modeling assumptions can substantially affect the consistency between simulations and observations, thereby increasing uncertainty in cross-event transfer98. Moreover, tsunami waveforms are highly sensitive to earthquake rupture characteristics, which further elevates predictive risk under out-of-distribution scenarios99. Against this backdrop, incorporating more physically diagnostic early-stage signals into learning frameworks and performing near-real-time recognition and estimation that explicitly leverages the spatiotemporal structure of monitoring networks has emerged as a promising direction for methodological evolution97,98,99.

Deep learning for spatiotemporal feature learning

Conventional machine learning for tsunami-signal recognition often depends on hand-crafted feature engineering, which makes it difficult to adequately capture, within a unified framework, the high-dimensional, nonlinear, and spatiotemporally coupled characteristics exhibited by multi-source observations. Deep learning, by contrast, offers a plausible alternative: through end-to-end representation learning, it can map raw or minimally processed measurements directly into hierarchical feature representations, enabling a more systematic characterization of structural information such as cross-station coherence, propagation dynamics, and multimodal associations97.

From a methodological perspective, deep models for tsunami early warning typically need to handle both spatial dependencies—such as network topology, propagation-path correlations, and cross-sensor synergy—and temporal evolution, including the gradual emergence of weak early-stage signatures and the subsequent dynamics of wave propagation (Fig. 4). Arias et al. incorporated near-real-time analysis of prompt elastogravity signals (PEGS) into the Peruvian warning system and trained a graph neural network to learn the spatiotemporal structure of PEGS across a broadband sensor network for rapid magnitude estimation and enhanced warning performance. This work illustrates the feasibility of deep models along the “network spatiotemporal structure recognition → near-real-time decision support” chain97.

Adapted from ref. 213, with permission.

At the same time, operationalizing deep approaches remains constrained by practical realities. On the one hand, the rarity of destructive tsunami events limits training-set size and exacerbates class imbalance. On the other hand, model interpretability and cross-regional generalization are still challenged by variations in source processes and changing observational conditions. Prior studies indicate that differences in source modeling can alter the correspondence structure between simulations and observations98, and that tsunami signals are highly sensitive to rupture characteristics99, implying that fitting to empirical data distributions alone is insufficient to robustly cover atypical scenarios. Accordingly, integrating anomaly detection and rare-event learning with the injection of physical information has become a key direction for deep learning to converge toward practical tsunami warning systems.

Anomaly detection and rare event learning

One of the fundamental challenges in tsunami forecasting stems from the combination of low event frequency and extreme impact: positive samples available for model training are scarce, while tsunami signatures in both open-ocean and nearshore observations often exhibit a “low-amplitude, high-background” profile and are easily obscured by tides, wind waves, ocean circulation, and seismic noise. This dual constraint—rarity plus low signal-to-noise ratio—limits the practical effectiveness of supervised learning methods that rely on large volumes of labeled data in operational warning contexts, and has motivated a shift toward methodological families that emphasize detecting anomalous deviations and improving robustness under small-sample regimes87,88,93,94,95,97.

From a methodological standpoint, anomaly detection is centered on learning normality from abundant background data and then identifying potential anomalies based on the degree of deviation. In the context of tsunami monitoring, this paradigm aligns naturally with signal-extraction tasks across multiple observation modalities. For instance, extracting tsunami signatures from DART records, satellite altimetry, or tide-gauge time series is essentially a problem of detecting anomalous fluctuations embedded in strong background variability87,100. Frequency-domain responses of coastal sea-level records to offshore forcing—characterized via transfer functions—can provide an interpretable baseline for testing “normal response versus anomalous deviation”86. Likewise, the low-frequency responses of seismic stations to tsunami arrival can vary substantially across events, suggesting that detectability assessments should be formulated in terms of departures from a learned background model88,90.

For electromagnetic and ionospheric observations, tsunami-related responses typically manifest as weak perturbations; consequently, time–frequency features and noise-separation strategies offer a direct source of feature spaces for anomaly detection92,93,94. Taken together, this body of work provides transferable building blocks for anomaly detection—linking observable quantities, background noise structure, and deviation criteria—that can be adapted across data types and operational settings86,87,88,90,93,94,95,97,100.

At the level of rare-event learning, research typically converges on three classes of strategies. First, mechanistic and numerical models are used to compensate for limited sample coverage by generating synthetic scenarios or performing data augmentation to expand the effective training distribution. Second, transfer learning across regions or sensor modalities is employed to improve robustness. Third, physically informed priors are incorporated into learning frameworks to enhance sensitivity to extreme scenarios. Differences in source modeling can substantially alter the correspondence structure between simulations and observations, underscoring the need for more systematic scenario generation and sample expansion to improve generalization98. The strong sensitivity of tsunami signals to rupture characteristics implies that limited historical records cannot exhaust the space of tsunamigenic mechanisms, further highlighting the importance of sensitivity experiments and synthetic scenario coverage99. Studies on hydroacoustic and acoustic–gravity signals provide mechanistic foundations for constructing libraries of earlier observable signatures, which can be leveraged to enhance warning performance under rare-event regimes101,102,103. Therefore, anomaly detection and rare-event learning should not be viewed as “patchwork fixes,” but rather as essential methodological support for real-world warning systems operating in environments characterized by low sample size, high stakes, and high uncertainty97,98,99,101,102,103.

Furthermore, tsunami “anomalies” are rarely confined to a single observational channel; rather, they are distributed across sensor systems in multi-physical and multiscale forms. In sea-surface/bottom-pressure and tide-gauge records, anomalies typically manifest as low-frequency elevations relative to background tides and sea state, long-period oscillations, or impulsive wave trains, which can be characterized using frequency-domain response modeling and statistical indicators86,87,89,100. In onshore or seafloor seismic records, anomalies may appear as low-frequency responses consistent with theoretical arrival times, dispersive energy packets, or long-duration signals, providing complementary detectability when sea-level observations are sparse or ambiguous88,90,91. In hydroacoustic and acoustic–gravity observations, anomalies can present as faster-arriving acoustic perturbations, offering an additional information channel for earlier detection101,102,103,104. In geomagnetic measurements, anomalies are expressed as weak magnetic-field perturbations synchronous with tsunami propagation and their associated time–frequency structure, yielding computable feature spaces for anomaly discrimination105,106,107. In ionospheric VLF observations, anomalies emerge as detectable deviations in phase and amplitude induced by tsunami propagation, providing indirect evidence for long-range, cross-domain monitoring94,95,108. In ocean-radar measurements, anomalies may be reflected in changes to time–distance patterns and spectral characteristics of radial current fields that correspond to propagation or resonance processes96.

Accordingly, for practical warning systems, a more viable route is often not qualitative detection based on a single signal, but the development of transferable, fusion-ready, rare-event-oriented detection and decision frameworks centered on cross-modal anomaly representations, in coordination with physically informed priors to enhance robustness under extreme scenarios97.

Tsunami propagation and inundation models

Developing accurate tsunami forecasting models has long been a central objective in tsunami science. Traditionally, physics-based models grounded in fluid dynamics and earthquake source mechanics have served as the primary tools for tsunami prediction. However, with the rapid growth of available data and advances in computational capability, data-driven models have emerged as a highly promising alternative. By leveraging machine learning (ML) and artificial intelligence (AI), these approaches learn patterns from historical observations and enable fast, flexible, near-real-time forecasting. This section examines the strengths and limitations of major tsunami modeling paradigms—from purely physics-based approaches to purely data-driven models—and analyzes how emerging hybrid methods can integrate the advantages of both.

Purely physics-based models

Physics-based models have long been the foundation of tsunami prediction systems, using fundamental principles of fluid mechanics, oceanography, and seismology to mechanistically describe the generation, propagation, and coastal impacts of tsunamis. When the horizontal movement of water particles significantly exceeds the vertical scale, the vertical acceleration can be considered negligible compared to gravity, and the pressure distribution is assumed to be hydrostatic109,110. With these assumptions, the depth-averaged Navier-Stokes equations simplify to a shallow water model. The conservation equations for mass and momentum in tensor form are expressed as follows:

Where, the indices i and j follow the Einstein summation convention. The coordinate system is set in the XY plane, where \({x}_{j}\) represents the Cartesian coordinates, \(g=9.81{\rm{m}}/{\rm{s}}\) is the gravitational acceleration, \({\text{h}}\) is the total water depth, \({u}_{j}\) is the depth-averaged velocity, \(\nu\) is the kinematic viscosity, and \({F}_{i}\) represents the external force term. If the wind shear stress and Coriolis force are negligible, \({F}_{i}\) is defined as:

Where, \(\rho\) denotes the density, \({z}_{b}\) represents the bottom bed elevation, and \({\tau }_{{bi}}\) is the bed shear stress in the i-direction, which is calculated based on the depth-averaged velocities as follows:

Where, Cb is the bed friction coefficient, which is estimated as:

Where, \({C}_{z}\) is the Chezy coefficient, which is determined using the Manning equation:

Where, \(n\) is Manning’s coefficient.

Shallow-water models used to predict tsunami travel times, wave heights, and nearshore inundation extents have been shown to be highly reliable in earthquake-tsunami scenarios where source conditions are relatively well constrained. For example, national warning agencies routinely employ shallow-water-equation solvers to simulate tsunami propagation from the source to the coast, estimate arrival times and wave amplitudes, and infer potential inundation zones. Empirically, when earthquake source parameters are reasonably well characterized, shallow-water models can deliver dependable forecasts.

In recent years, the application of the shallow-water equations to tsunami modeling has advanced markedly. To improve accuracy and numerical stability for high-Reynolds-number flows (as typified by tsunami waves), researchers have developed a range of numerical schemes and model-optimization strategies. Sato et al.111 investigated numerical simulation of the shallow-water equations for high-Reynolds-number flows using a multiple-relaxation-time lattice Boltzmann method (MRT-LBM) and applied it to real-world tsunami cases. Although the lattice Boltzmann method (LBM) has attracted attention in computational fluid dynamics due to its locality and algorithmic efficiency, it can suffer from numerical instabilities under high-Reynolds-number conditions. The authors therefore introduced a subgrid-scale (SGS) model to improve the stability of the MRT-LBM formulation, enhancing its applicability to tsunami simulations.

Khan and Kevlahan explored data assimilation in the two-dimensional shallow-water equations, focusing on recovering initial conditions for tsunami simulations through variational data assimilation. They showed that, with an appropriate configuration of observational data, initial states of the 2D shallow-water system can be effectively reconstructed, and they validated the approach through numerical experiments112. Arpaia et al. proposed a new efficient discretization method for shallow-water equations on spherical geometry, applying it to tsunami and storm-surge simulations. Using a covariant-basis projection approach, they simplified a discontinuous Galerkin (DG) scheme and introduced a well-balanced correction, further improving numerical stability and computational efficiency113.

Regarding bottom friction, Tinh et al. developed a new numerical approach that incorporates a wave-friction factor into conventional one-dimensional shallow-water models. Compared with traditional steady-friction formulations, the proposed method yields more accurate estimates of tsunami-induced bed shear stress, leading to more realistic simulation outcomes114. In addition, Kwon et al. examined tsunami-mitigation configurations using a fifth-order weighted essentially non-oscillatory (WENO) scheme coupled with an immersed boundary method (IBM). By simulating arrays of column-like structures, they proposed more effective layout designs to reduce tsunami impacts on nearshore buildings115.

Physics-based models are advantageous in terms of scientific credibility and interpretability. By strictly adhering to governing physical laws (e.g., conservation of mass and momentum), their predictions have clear physical meaning and are therefore easier to analyze and explain. For example, physics-based simulations can explicitly track tsunami propagation pathways, energy distribution, and interactions with bathymetry and coastal topography—capabilities that are essential for understanding tsunami mechanisms and for verifying model fidelity116. They also enable scenario analysis and uncertainty assessment by allowing initial conditions to be systematically varied, for instance by perturbing earthquake source parameters or changing the volume of a submarine landslide to evaluate how tsunami impact patterns respond under different conditions.

Nevertheless, purely physics-based models exhibit notable limitations. One major constraint is computational efficiency: high-fidelity simulations often require fine spatial meshes and small time steps, making real-time computation across global or very large regional domains highly challenging. In more complex settings—particularly in deep-ocean environments or regions with highly intricate seafloor topography—fully three-dimensional numerical models can explicitly represent nonlinear wave interactions, depth-dependent effects, and bottom-friction processes, thereby providing high-accuracy, multiscale representations of tsunami dynamics. However, such models are computationally demanding and typically require high-performance computing resources, which substantially restricts their use in real-time warning applications117.

Overall, existing studies indicate that tsunami simulation accuracy and efficiency can be substantially improved by optimizing numerical schemes for the shallow-water equations, incorporating data assimilation, and integrating multiple model-enhancement strategies. Future work should further investigate how these approaches can be extended to more complex topographies and flow regimes in order to address the challenges encountered in realistic hazard simulations. While physics-based models offer irreplaceable advantages in scientific credibility and interpretability, they remain limited in terms of computational efficiency, sensitivity to uncertainties in source parameters, and adaptability to complex or non-seismic tsunami sources. These limitations have become increasingly pronounced under contemporary demands for rapid response and large-area warning, motivating the exploration of new forecasting paradigms.

Purely data-driven models

Unlike physics-based models, purely data-driven forecasting approaches do not explicitly solve governing equations. Instead, they leverage historical observations or pre-generated scenario datasets and use machine learning algorithms to rapidly map input data—such as seismic waveforms, ocean-bottom pressure records, and tide-gauge observations—onto key tsunami parameters including wave height, arrival time, and potential inundation extent, thereby meeting the stringent timeliness requirements of early warning118,119,120,121. Figure 5 illustrates the overall workflow of data-driven tsunami forecasting, highlighting the basic logic of parallel multi-model prediction and model fusion. For example, Sarika and Milton118,119,120,121 trained a classification model on earthquake P-wave features to rapidly identify potentially tsunamigenic events in the early phase following an earthquake, demonstrating the computational efficiency and deployability of data-driven pipelines within minute-scale warning windows. Lee et al.’s TRRF-FF data-driven response-function model, once trained and calibrated, can predict the spatial distribution of near-field coastal run-up at a sub-second timescale, providing an efficient alternative for rapid near-field forecasting121.

Data-driven tsunami forecasting models.

Beyond directly predicting key tsunami parameters, data-driven approaches are often extended to impact assessment and risk characterization. One line of work couples rapid predictions of waveforms or intensity measures with coastal exposure and vulnerability information, thereby providing more operationally actionable impact estimates during the warning phase. Another line integrates data-driven prediction with the optimization of observing networks and sensor configurations to improve forecast stability and information gain under resource constraints119,120.

For example, Iwabuchi et al. employed Gaussian process regression with automatic relevance determination (ARD) to quantify the contributions of individual ocean-bottom pressure sensors to estimating maximum coastal tsunami heights, providing evidence of differential sensor effectiveness and highlighting the potential of data-driven methods to partially mitigate “black-box” concerns and enhance interpretability119. Purnama et al. coupled deep-learning-based waveform prediction with metaheuristic optimization (e.g., genetic algorithms) to simultaneously improve predictive efficiency and optimize buoy placement, offering a framework for the joint design of “model–observation” components in operational warning systems120. In addition, for atypical tsunami sources (e.g., landslide-generated tsunamis), empirical or statistical regression relationships can also be viewed as part of the data-driven paradigm, providing rapid first-order estimates when mechanisms are uncertain or real-time simulation is infeasible. For instance, studies that derive predictive formulas for dominant wave periods of landslide-generated waves based on experimental and event data can complement rapid assessment under such scenarios122.

Nevertheless, purely data-driven models still exhibit significant limitations. Beyond the commonly noted issues of interpretability, the practical limits of purely AI-based tsunami forecasting are closely tied to operational deployment conditions. Model skill can drop sharply when input patterns deviate from training distributions, such as cross-basin transfer, atypical rupture processes, or compound coastal conditions. Performance is also uneven for rare high-impact events because class imbalance tends to bias training toward more frequent, moderate cases. In addition, good aggregate scores may mask physically implausible local predictions (e.g., inconsistent coastal gradients or unstable arrival-time ordering) if mechanism-level checks are absent. Data-stream fragility is another major concern: missing, delayed, or asynchronous sensor inputs can propagate errors through end-to-end pipelines. Finally, model drift after sensor upgrades, coastline changes, or long-term environmental shifts can silently degrade reliability if continual monitoring is not implemented. Therefore, operational AI forecasting should be coupled with data-quality gates, out-of-distribution detection, mechanism-consistency screening, periodic recalibration, and fallback strategies to maintain decision reliability in real warning workflows. Their performance depends strongly on the size and representativeness of the training data, yet destructive tsunami events are relatively rare in real-world observations, which can lead to insufficient generalization across regions, source types, or extreme scenarios118,119,120,121. Moreover, the internal mechanisms and physical consistency of some deep-learning models are difficult to interpret directly, which increases concerns about credibility and auditability in high-stakes warning decisions. Consequently, interpretability and robustness need to be strengthened through approaches such as feature-importance analysis, physics constraints, and/or hybrid modeling, and uncertainty quantification119,120,123. For example, Buenrostro et al. used a convolutional neural network to predict intensity measures—such as run-up, inundation area, and wave height—from slip and coseismic deformation data, demonstrating the potential of “learning-based surrogate models” to replace computationally expensive inundation simulations. However, the acquisition of training data and the transferability of such models across scenarios still require careful evaluation123.

Hybrid physics–AI models

To address the high computational cost and limited responsiveness of purely physics-based models, as well as the shortcomings of purely data-driven models in physical consistency and generalization, physics–artificial intelligence (Physics–AI) hybrid models are increasingly emerging as a major direction in tsunami forecasting. These approaches aim to combine the interpretability of physical mechanisms with the rapid inference capability of data-driven models. On the one hand, they incorporate constraints or feasibility checks that enforce physical consistency during learning or inference; on the other hand, they use deep learning to replace or accelerate computationally expensive numerical components. In doing so, Physics–AI hybrids seek to reconcile “speed” with “trustworthiness” in real-world warning settings121,124,125.

A typical pathway for hybrid modeling is to embed AI-based predictions within a physics-consistency framework for constraint or validation. Rather than relying solely on statistical correlations, this paradigm requires that predictions remain physically feasible with respect to key variables and dynamical relationships—for example, by satisfying the mass- and momentum-conservation constraints implied by shallow-water dynamics (with the shallow-water equations and their numerical solution framework serving as the physical foundation)126,127. In practice, Sabah and Shanker combined ANN-based predictions with physics-based validation to conduct tsunami hazard forecasting and enhance credibility across the Indo-Pacific region, illustrating the value of the “AI prediction + physical consistency checking” hybrid paradigm for regional-scale risk assessment124.

Another key line of development is to use machine learning as a surrogate for high-fidelity physics-based models. In this paradigm, large training libraries are first generated via numerical simulations, and AI is then used to rapidly approximate propagation or inundation processes that would otherwise be computationally expensive, thereby substantially reducing forecast latency. Focusing on the 2018 Anak Krakatau volcano-flank-collapse tsunami, Juanara and Lam adopted a coupled strategy of “physics-based simulations for database generation + LSTM-based waveform forecasting,” enabling the prediction of subsequent tsunami evolution from a short initial waveform window and demonstrating the potential of hybrid models for non-seismic tsunami scenarios125. For near-field warning, Lee et al.121 developed and calibrated a run-up response-function model based on a precomputed ensemble of simulations, achieving sub-second prediction of alongshore near-field run-up distributions and illustrating the advantage of “physics-consistent simulation backgrounds + data-driven fast mapping.” Earlier response-function approaches for rapid far-field forecasting, as well as efficient inversion/prediction schemes based on precomputed Green’s functions, can likewise be viewed as extensions of this broader “precomputation–fast mapping” hybrid logic128,129.

In parallel, combining data assimilation with AI acceleration allows hybrid models to dynamically update forecasts as new observations arrive, improving both timeliness and accuracy. Melgar and Bock showed that integrating high-rate GPS with strong-motion and other land-based data (supplemented by offshore pressure sensors and GPS buoys) can improve source inversion and enhance tsunami inundation and run-up predictions, providing important support for operational multi-source data assimilation130. Within tsunami warning workflows, practices such as extracting source coefficients from deep-ocean bottom-pressure data (e.g., DART) and using them to drive operational forecast models, as well as inferring sources from multiple measurement types to initialize tsunami simulations, further exemplify an integrated “observation–inversion–physics-based forecasting” pathway131,132. Real-time event assessment and near-field forecasting studies also indicate that rapid updating and uncertainty control are critical for improving warning effectiveness133. On the computational side, parallel numerical computing for high-resolution near-field inundation forecasting (e.g., parallel implementations of TUNAMI-N2 coupled with source inversion) provides engineering support for “faster physics updates” and can complement data-driven rapid-estimation modules134.

Overall, Physics–AI hybrid models offer a more favorable compromise among predictive accuracy, computational efficiency, and physical consistency, but their development and training are highly complex. They require careful balancing across multiple factors, including multi-source data quality control, the strength of imposed physical constraints, cross-scale consistency (deep-ocean propagation to nearshore inundation), and robustness under extreme scenarios. Particularly important is the establishment of systematic validation frameworks—benchmarking against controlled physical experiments and multiple numerical models—to avoid failure modes or error accumulation under complex topography and extreme boundary conditions. Comparative studies integrating laboratory experiments and numerical models can provide direct references for validation design and error diagnostics in hybrid modeling135. Moreover, substantial epistemic uncertainty remains in rapid near-field inversion and forecasting: how to strike an auditable trade-off between “speed” and “trustworthiness” remains a key challenge that hybrid frameworks must confront on the path toward operational deployment136.

As summarized in Table 2, a practical deployment strategy is to orchestrate models by decision stage rather than selecting a single “best” method. In strict near-field timelines, low-latency estimators and surrogates/ROMs are suitable for rapid first alerts, while subsequent minutes should be used for rolling uncertainty updates, data assimilation, and consistency checks against physics-based baselines. For far-field transoceanic assessment, physics-based ensembles remain the most robust option for extrapolation across sources and regions, with surrogates primarily used to accelerate repeated scenario evaluation. In data-scarce or mechanism-uncertain settings, conservative uncertainty reporting and explicit exposure of multi-model disagreement are preferable to single deterministic outputs, because model divergence itself is often decision-relevant for risk grading137,138,139,140.

The three paradigms show distinct operational trade-offs. When viewed from an operational perspective, physics-based, purely data-driven, and physics–AI hybrid models represent three complementary ways to trade off physical fidelity, computational latency, and implementation complexity for the same forecasting targets such as arrival time, maximum wave height, and inundation depth. Physics-based models remain the most transparent and mechanism-consistent option, because their outputs can be traced to explicit source assumptions, bathymetry/topography, boundary conditions, and solver settings. This traceability is essential for high-fidelity inundation mapping and for auditable decision support, but it often comes with substantial runtime cost, particularly when fine meshes, wetting–drying treatments, and ensemble-style uncertainty analyses are required.

Purely data-driven models shift most computational burden to the offline stage and therefore offer very low inference latency in real-time use. This makes them attractive for strict near-field timelines, where a rapid first-pass estimate and early screening can be more valuable than a delayed high-fidelity solution. However, their reliability is strongly conditional on the representativeness of the training data and on the stability of the learned mapping under distribution shift. Performance can degrade when applied across regions, under rare or extreme source configurations, or when observation quality and coverage differ from the conditions implicitly assumed during training. For operational adoption, these limitations imply that data-driven outputs should be accompanied by explicit robustness evaluation, well-designed validation splits, and calibrated uncertainty reporting rather than treated as deterministic substitutes for physics.

Physics–AI hybrid approaches occupy the middle ground by combining faster turnaround with stronger physical plausibility than purely black-box pipelines. In practice, hybrids can be implemented in several ways, including using learning-based components for rapid source estimation or fast mapping from observations to coastal responses, while retaining physics-based propagation and inundation modules where physical constraints and interpretability are most critical. This design logic can deliver a pragmatic compromise for routine operations, but it also increases pipeline complexity and integration cost. Hybrids therefore require careful end-to-end verification, including ablation-style checks to demonstrate the contribution of the physical constraints, and systematic benchmarking against controlled experiments and multiple numerical models to prevent error accumulation under complex topography and boundary conditions.