Abstract

Sound Event Localization and Detection (SELD) is a critical capability for environmental acoustic intelligence, enabling systems to jointly identify what sounds are active and where they originate. This technology is foundational for applications ranging from smart-city monitoring and autonomous systems to immersive media. Modern SELD research is dominated by deep learning approaches that leverage multi-channel audio, explicit spatial representations, and unified output formats that resolve complex and densely polyphonic scenes. This review provides a comprehensive synthesis of the field, charting its progress from foundational concepts to the state-of-the-art. We systematically analyze key methodological advancements, spatially-informed feature engineering, and the sophisticated data augmentation pipelines that underpin top-performing systems on public benchmarks. This review also highlights emerging opportunities for future research such as distance-aware 3-D SELD and the advancement of data-efficient learning paradigms. By translating these challenges into concrete research directions, this work aims to accelerate the progress of SELD toward robust, field-ready environmental intelligence tools.

Introduction

In today’s digital age, the proliferation of low-cost sensors and inter-connected devices has created an unprecedented volume of audio data, capturing the rich, multifaceted soundscapes of daily life. These acoustic environments are dense with information, offering valuable cues about human activities, social interactions, mechanical operations, and potential environmental hazards1. While humans possess an innate ability to discern not only the nature of a sound but also its spatial origin2,3 and approximate distance4, manually analyzing this vast and continuous stream of audio is fundamentally impractical. This challenge has catalyzed a paradigm shift toward intelligent, automated systems for interpreting our acoustic world, a field we term Environmental Acoustic Intelligence. The technical foundation for this intelligence is the domain of computational auditory scene analysis2, which seeks to imbue machines with human-like auditory perception.

A cornerstone of Environmental Acoustic Intelligence is the field of Sound Event Localization and Detection (SELD), a critical technology that automates the process of identifying what sounds are present in an environment and where they originate. It accomplishes this by fusing two complementary tasks into a single, cohesive framework: Sound Event Detection (SED) and Direction-of-Arrival Estimation (DOAE). The first task, SED, addresses the “what” by identifying the class of a sound event (e.g., “car horn” or “music”) and its precise onset and offset times. In contrast, DOAE addresses the “where” by estimating the spatial origin of a sound source relative to the recording device, typically in terms of azimuth and elevation. While useful in isolation, integrating these two capabilities allows SELD systems to provide a comprehensive spatiotemporal understanding of an auditory scene5. Unlike traditional methods, which are often limited to localizing specific source types such as speech or drone noises6, SELD distinguishes itself by its ability to simultaneously detect and localize a diverse array of overlapping sound events.

As illustrated in Fig. 1, a SELD system can monitor a complex acoustic environment, such as a busy street, and concurrently identify a blaring alarm on the right while tracking pedestrian activity on the left. This ability to generate a comprehensive spatiotemporal understanding of an acoustic scene is invaluable for a wide range of applications; incorporating SELD can enhance situational awareness in autonomous robotics7, enable smarter surveillance systems in dense urban environments8,9,10, and create deeply immersive experiences in virtual and augmented reality5. Despite these benefits, however, computationally replicating the proficiency of the human auditory system in parsing such complex scenes remains a formidable scientific challenge2,3.

Fig. 1: In a complex acoustic scene, a SELD system simultaneously identifies multiple distinct sound events and estimates their corresponding spatial origins relative to the listener. This capability facilitates a comprehensive understanding of the auditory environment.

While substantial progress has been made in the independent domains of SED and DOAE, their integration into a single framework introduces unique complexities. Specifically, SELD not only inherits the challenges of its constituent tasks but also confronts the critical data association problem: accurately linking multiple concurrent sound events to their respective spatial origins5. This associative challenge is exacerbated in real-world settings characterized by overlapping sources, reverberation, and dynamic background noise11.

In recent years, the advent of deep learning has driven remarkable progress in the field, with deep learning methods setting new standards on many benchmark tasks3,12. This rapid evolution has led to a diverse and increasingly specialized body of work, creating a clear need for a comprehensive synthesis to map the current state-of-the-art. This review addresses this need by providing the first holistic survey of the deep learning-centric methodologies that define modern SELD research. We systematically outline methodological breakthroughs and persistent limitations across the entire SELD pipeline, including advances in neural network architectures, benchmark datasets, feature engineering strategies, and training paradigms.

This comprehensive, task-specific scope distinguishes our work from previous literature. Most prior work has surveyed the constituent sub-tasks in isolation, providing valuable in-depth analyses of either SED13,14,15 or DOAE6,16. While some reviews have addressed the joint SELD problem, their scope has been limited. For instance, some offer only a brief overview of SELD while maintaining a primary focus on DOAE17 or a narrow selection of early model architectures18. Perhaps the most relevant prior work is the overview by Politis et al.19, which provided a foundational analysis of the first Detection and Classification of Acoustic Scenes and Events (DCASE) challenge task on SELD in 2019. However, the field has advanced at a remarkable pace since that publication, with significant breakthroughs in model architectures, feature engineering, and output formats. In contrast, this review synthesizes these recent, multi-faceted developments across the entire SELD pipeline to provide a current and comprehensive map of the state-of-the-art.

By offering insights into both the state-of-the-art and ongoing challenges, we aim to guide researchers towards meaningful future directions, ultimately facilitating the development of more robust and deployable SELD systems for enhanced environmental acoustic intelligence. Although promising efforts are beginning to address multi-modal20 and 3-D SELD with distance estimation21, these fields are still in their formative stages and therefore lie beyond the scope of this review.

Sound event localization and detection

At its core, SELD is a machine listening framework designed to emulate the human capacity for parsing complex acoustic environments. To achieve this, SELD systems must simultaneously answer two fundamental questions: what sound events are occurring, and where are they originating? This is accomplished by integrating two traditionally separate yet interdependent tasks into a unified model: polyphonic SED and high-resolution DOAE.

The first task, SED, entails understanding the temporal and semantic aspects of an auditory scene. Its objective is to identify the class of each active sound event and determine its precise onset and offset times. While SED can be monophonic, where the most dominant event is identified, real-world applications demand polyphonic capability, where multiple event classes can be detected simultaneously within the same time frame22. Polyphonic SED, while more challenging, is essential, as natural acoustic environments are rarely composed of isolated, sequential sounds13,15.
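The difference between frame-wise activity and onset/offset events can be made concrete with a minimal sketch. It assumes a detector has already produced per-frame class probabilities; the function name `frames_to_events`, the 0.1 s hop, and the 0.5 threshold are illustrative choices and are not taken from any of the reviewed systems.

```python
from typing import Dict, List, Tuple


def frames_to_events(
    activity: Dict[str, List[float]],
    hop_seconds: float = 0.1,   # assumed frame hop, for illustration only
    threshold: float = 0.5,     # assumed decision threshold
) -> List[Tuple[str, float, float]]:
    """Turn frame-wise class probabilities into (label, onset, offset) events."""
    events = []
    for label, probs in activity.items():
        onset = None
        for i, p in enumerate(probs):
            active = p >= threshold
            if active and onset is None:
                onset = i                      # event starts at this frame
            elif not active and onset is not None:
                events.append((label, onset * hop_seconds, i * hop_seconds))
                onset = None                   # event ended before this frame
        if onset is not None:                  # event still active at the end
            events.append((label, onset * hop_seconds, len(probs) * hop_seconds))
    return sorted(events, key=lambda e: (e[1], e[0]))


# Polyphonic toy scene: both classes are active in frames 1 and 2.
scene = {
    "car horn": [0.1, 0.9, 0.8, 0.2, 0.1, 0.1],
    "music":    [0.7, 0.8, 0.9, 0.9, 0.6, 0.2],
}
events = frames_to_events(scene)
```

Because each class is thresholded independently, overlapping events fall out naturally; a monophonic detector would instead keep only the single most probable class per frame.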

The second task, DOAE, also commonly known as Sound Source Localization, provides the spatial context for an acoustic scene. Using multi-channel audio, DOAE methods estimate the spatial origin of a sound source, typically represented by its azimuth (left-right) and elevation (up-down) angles relative to the recording device5,17. By itself, DOAE can determine the location of acoustic activity but offers little information about the identity of the sound source, perfectly complementing the semantic information provided by SED.
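As a small illustration of the azimuth/elevation representation, the sketch below converts between angles and a Cartesian unit vector and measures the angle between two directions. The axis convention (x forward, y left, z up, azimuth counter-clockwise) and all function names are assumptions made for this example; individual datasets may define their axes differently.

```python
import math


def doa_to_unit_vector(azimuth_deg, elevation_deg):
    """Map (azimuth, elevation) in degrees to a unit vector (x, y, z)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (
        math.cos(el) * math.cos(az),
        math.cos(el) * math.sin(az),
        math.sin(el),
    )


def unit_vector_to_doa(x, y, z):
    """Inverse mapping: unit vector back to (azimuth, elevation) in degrees."""
    azimuth = math.degrees(math.atan2(y, x))
    elevation = math.degrees(math.atan2(z, math.hypot(x, y)))
    return azimuth, elevation


def angular_error_deg(doa_a, doa_b):
    """Great-circle angle in degrees between two (azimuth, elevation) pairs."""
    va = doa_to_unit_vector(*doa_a)
    vb = doa_to_unit_vector(*doa_b)
    dot = sum(a * b for a, b in zip(va, vb))
    dot = max(-1.0, min(1.0, dot))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(dot))
```

Working with unit vectors avoids the wrap-around at ±180° azimuth, which is one reason Cartesian targets are a convenient choice for regression.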

While SED and DOAE offer valuable insights independently, their integration within the SELD framework unlocks a much richer understanding of the environment. The central challenge of SELD is not merely executing these tasks in parallel, but correctly linking each detected sound with its precise spatial origin. This becomes particularly difficult in acoustically complex scenes with multiple overlapping sound sources. This complete spatiotemporal representation is critical for a growing number of applications across industrial, commercial, and scientific domains19,23. These applications demonstrate the core value of Environmental Acoustic Intelligence, where raw audio is translated into actionable, spatially-aware insights. For instance:

- Public Safety and Surveillance: Accurately linking detections of “gunshots” or “alarms” with precise spatial coordinates can significantly enhance public safety monitoring and policing efforts24,25. Urban security systems can dispatch responders more effectively without relying on cameras, which may be cost-prohibitive and raise privacy concerns8.

- Autonomous Vehicles: Autonomous vehicles typically rely on visual or LiDAR sensors for context, which can fail in low light or poor weather. Incorporating SELD enables vehicles to detect and localize non-line-of-sight warning sounds such as sirens or horns, substantially improving situational awareness and decision-making in complex traffic scenarios26.

- Biodiversity Monitoring: Integrating SELD can automate non-invasive acoustic surveys of wildlife. By detecting and localizing animal calls, these systems can help track populations over vast habitats, greatly reducing the need for costly and labor-intensive manual fieldwork27.

Initial research into SELD treated the task as a modular pipeline, often combining separate algorithms for each sub-task. For instance, early systems first performed event detection using machine learning methods such as Hidden Markov Models (HMMs)28,29 or Support Vector Machines30. Parametric methods, such as the Steered Response Power with Phase Transform (SRP-PHAT), then handled localization28,29. While foundational, these early frameworks struggled to associate detected sound events with their corresponding DOAs31 and were generally incapable of scaling to complex scenarios with overlapping sound sources5.

The challenge of parsing these acoustic scenes has been met with the transformative power of deep learning3. Deep neural networks (DNNs) are exceptionally well-suited to the task, as they can learn the intricate spectral and spatial patterns directly from multi-channel audio. This has led to the development of robust end-to-end solutions that perform well even in challenging acoustic conditions5, making deep learning the dominant framework for SELD. Accordingly, this review focuses on deep learning-based methods for SELD, given their demonstrated prowess in localization and detection32.

A pivotal catalyst in this research domain has been the DCASE challenges33, which introduced a dedicated SELD task in 2019. The DCASE community has been instrumental in fostering a collaborative research ecosystem, providing widely adopted public datasets31,34,35, open-source baseline systems5,21, and unified evaluation metrics19.

Table 1 provides a timeline that summarizes key methodological breakthroughs that have defined contemporary SELD research. Furthermore, the confluence of community-driven benchmarking and deep learning techniques has given rise to a generic SELD pipeline, as depicted in Fig. 2. The process typically involves five key stages:

1. Data Collection: Acquiring multi-channel audio recordings paired with precise temporal and spatial annotations for all sound events of interest.

2. Feature Extraction: Transforming raw audio signals into robust time-frequency (e.g., log-Mel spectrograms) and spatial representations (e.g., intensity vectors). Data augmentation is often applied at this stage to increase the diversity of the training set.

3. Neural Network Inference: Training a DNN, commonly a Convolutional Recurrent Neural Network (CRNN), to jointly predict event activity probabilities and their corresponding DOAs.

4. Output Formatting: Structuring the raw predictions of the network into a human-readable format that provides frame-by-frame classifications of sound events alongside their estimated spatial coordinates.

5. Evaluation: Systematically assessing model performance using established evaluation metrics to guide iterative improvements and benchmark against the state-of-the-art.

Fig. 2: Multi-channel audio is recorded with detailed annotations of event types and spatial origins. After converting the raw signals into time-frequency and spatial features, potentially with data augmentation to improve model generalization, a neural network infers both event labels and DOA coordinates on a frame-by-frame basis. Final predictions are benchmarked using standardized metrics, informing iterative improvements for real-world readiness.

Deep learning

Deep learning is the driving force behind modern SELD systems. As a subfield of machine learning, it enables models to learn complex, high-dimensional patterns directly from raw or minimally processed data12, making it particularly suited to the intricacies of audio analysis. The success of deep learning in SELD has been fueled by advancements in computational power, the availability of large-scale datasets, and the development of innovative network architectures that have revolutionized fields from medical imaging36,37 to materials analysis38. This section introduces the foundational concepts and neural network architectures that underpin current SELD systems.

Fundamentally, deep learning relies on artificial neural networks, which draw inspiration from the structure of the human brain39. These networks consist of multiple interconnected layers of “neurons”, where each layer applies a learnable transformation to its inputs. By stacking these layers, the network progressively extracts increasingly abstract features, learning a rich data hierarchy. While early neural networks were relatively shallow, researchers discovered that increasing network depth and incorporating specialized modules allowed models to capture far more intricate patterns40, leading to the highly expressive and accurate models seen today.

Different classes of specialized architectures have emerged, each tailored to specific data structures12,38,41. For tasks involving grid-like data, such as time-frequency representations of audio (spectrograms), Convolutional Neural Networks (CNNs) are especially effective42. In a CNN, learnable filters “slide” across the input spectrograms to capture local patterns, such as spectral shape and temporal patterns. This property makes CNNs a highly effective front-end for SELD, ideal for extracting the foundational spectral cues needed for event detection and localization.

In contrast, Recurrent Neural Networks (RNNs) are designed to model sequential data and temporal dependencies. By maintaining internal “memory” or hidden states across time steps, RNNs excel at capturing sequential patterns in time-series data. Variants such as Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRUs) are particularly adept at preserving long-range temporal information12. This temporal modeling capacity is crucial for SELD, where understanding the onset, duration, and co-occurrence of events over time is essential for accurate detection and localization.

Recognizing the need to model both local features and long-term temporal structure, hybrid architectures that combine CNN and RNN components have become commonplace in SELD. In particular, the CRNN has become the dominant architecture for the task, stacking convolutional layers to capture spectral and spatial features, followed by recurrent layers to model their temporal dynamics43,44,45. More recently, attention-based models such as the Transformer46 and its audio-specialized variants (e.g., Conformer47) have emerged as compelling alternatives. These systems use self-attention mechanisms to capture global dependencies, often demonstrating superior performance in modeling long-range context in audio-related tasks.

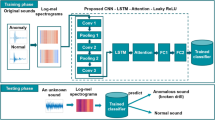

In modern SELD research, CRNN-based architectures continue to form the foundation for many competitive systems48. Fig. 3 illustrates a generic CRNN pipeline adapted from the first publicly available SELD baseline proposed by Adavanne et al.5. The system begins by converting multi-channel audio into time-frequency representations. The convolutional layers then act as a powerful feature extractor, identifying localized spectro-temporal patterns and creating a sequence of feature vectors (one for each time frame). These vectors are then fed into the recurrent layers, which integrate contextual information across time to produce enriched sequential representations. Finally, fully connected output layers interpret these time-distributed representations to yield frame-wise predictions for both event activity and corresponding spatial location. This architectural blueprint has served as a springboard for a wide range of more complex and powerful SELD systems.

Fig. 3: Multi-channel audio is transformed into time-frequency representations (e.g., spectrograms). Convolutional layers extract local spectro-temporal features, which are then reshaped into sequential feature vectors. RNN layers process these vectors to capture and integrate temporal dependencies, enriching each vector with context from adjacent frames. Finally, fully connected layers use these refined, time-distributed representations to generate both classification and localization outputs.

Audio formats

Accurately localizing sound sources hinges on decoding spatial cues such as inter-channel time delays, level differences, and phase relationships. These cues are physically encoded in the sound waves arriving at multiple points in space and are inherently lost in single-channel recordings6. This makes multi-channel audio an indispensable prerequisite for any state-of-the-art SELD system49. While numerous multi-channel configurations exist, SELD research has predominantly centered on two principal four-channel recording formats: First-Order Ambisonics (FOA) and tetrahedral or multi-channel microphone arrays (MIC).

The FOA audio format provides a holistic representation of the sound field by employing a mathematical technique known as spherical harmonic decomposition to represent the complete 360° acoustic scene surrounding the microphone50. In practice, a spherical microphone array, such as the Eigenmike em32 shown in Fig. 4, captures sound from multiple directions. An encoding matrix then transforms these raw signals into the four-channel FOA format, often labeled W, X, Y, and Z. The omnidirectional W channel represents the sound pressure, or what a single microphone would hear, while the three figure-of-eight X, Y, and Z channels represent the Cartesian particle velocity45. This provides a physically meaningful description of the sound field that has proven highly effective for deep learning models. Consequently, SELD systems utilizing the FOA format consistently achieve state-of-the-art performance on public benchmarks32,51.
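To make the roles of the four channels concrete, the sketch below encodes a single mono sample arriving as an ideal plane wave into W, X, Y, and Z. This is a simplification: real recordings are obtained by applying an encoding matrix to the capsule signals, and Ambisonic normalization and channel ordering vary between conventions. The unit gains, the W, X, Y, Z ordering, and the function name `encode_foa` are assumptions made for illustration.

```python
import math


def encode_foa(sample, azimuth_deg, elevation_deg):
    """Idealised plane-wave FOA encoding of one sample (assumed unit gains).

    W is omnidirectional; X, Y, Z are figure-of-eight patterns whose positive
    lobes point forward, left, and up respectively (assumed axis convention).
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample
    x = sample * math.cos(az) * math.cos(el)
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    return w, x, y, z


# A source directly to the left excites W and Y, but not X or Z.
w, x, y, z = encode_foa(1.0, azimuth_deg=90.0, elevation_deg=0.0)
```

The direction of the source is therefore carried by the relative gains of X, Y, and Z with respect to W, which is the property that FOA-based spatial features exploit.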

Fig. 4: The Eigenmike em32, a spherical microphone array that is a standard recording device in SELD research32,65. Its 32 internal capsules allow for the capture of a high-resolution sound field, which is then typically encoded into the FOA audio format. Alternatively, a subset of four tetrahedrally arranged capsules can be selected to provide raw audio in the MIC audio format.

The MIC format, by contrast, is a more direct, hardware-level approach. It retains the raw, unprocessed signals from microphone capsules placed at the vertices of a known geometry, typically a regular tetrahedron. Lacking an explicit spherical harmonic conversion, the MIC format captures spatial information purely through the raw inter-channel phase and level differences. By eliminating the FOA encoding stage, the MIC setup simplifies the front-end processing pipeline and can reduce computational latency, making it an attractive choice for resource-constrained applications52. Furthermore, because they operate directly on raw signals, MIC-based systems are not tied to specific array geometries and can support more flexible arrangements, facilitating highly adaptable, real-world deployments.

While FOA and MIC remain the most studied formats, interest is rapidly expanding to other geometries driven by practical applications. This trend signals a move to bring SELD from controlled laboratory settings into everyday devices. For instance, binaural recordings, captured from microphones placed at the ears of a human or dummy head, closely approximate human spatial hearing and align with the growing demand for immersive audio in wearable devices and virtual reality53,54,55. Moreover, circular arrays56,57,58 and microphones embedded in common devices such as mobile phones59 are also being actively investigated as practical and cost-effective solutions for scalable outdoor surveillance and monitoring platforms8.

Each recording format offers a distinct trade-off between representational richness, computational cost, and hardware practicality. The choice of format, therefore, depends heavily on the target application, intended device, and available resources. Regardless of the specific arrangement, however, multi-channel audio remains the essential foundation for capturing the spatial characteristics necessary for effective SELD.

Available datasets

The performance of deep learning models fundamentally depends on extensive, strongly labeled training data. For SELD, this necessitates datasets with precise temporal annotations (onsets and offsets) for each sound event, coupled with their corresponding DOAs. While the curation of such datasets is a labor-intensive process, they are the cornerstone of model development, benchmarking, and validation. Table 2 presents a comprehensive overview of widely used SELD datasets, summarizing key attributes such as recording format, signal-to-noise ratios (SNRs), and total training duration.

Synthetic vs. real-world data

The landscape of SELD datasets can be broadly categorized by data origin into either synthetic or real-world datasets. Early research relied almost exclusively on synthetic mixtures generated by convolving isolated audio recordings with Room Impulse Responses (RIRs). These RIRs can be derived from acoustic simulations to create fully synthetic datasets, or from in-situ measurements in real-rooms to create hybrid datasets60. This synthesis paradigm offers precise control over all acoustic parameters, including event types, locations, and SNRs. However, synthetic data often fails to capture the full acoustic complexity and subtle nuances of real sound scenes, which can lead to a performance gap when models are deployed in real-world conditions61.
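The synthesis paradigm can be sketched in a few lines, assuming NumPy is available. A dry (isolated) recording is convolved with one RIR per microphone channel, and background noise is scaled to a chosen SNR. The toy RIRs below consist of a direct path plus one reflection and are purely illustrative; real pipelines use simulated or measured responses, and the function names are hypothetical.

```python
import numpy as np


def spatialize(dry, rirs):
    """Convolve a mono signal with one RIR per channel.

    dry:  array of shape (n_samples,)
    rirs: array of shape (n_channels, rir_length)
    Returns an array of shape (n_channels, n_samples + rir_length - 1).
    """
    return np.stack([np.convolve(dry, h) for h in rirs])


def scale_noise_to_snr(signal, noise, snr_db):
    """Scale noise so that the signal-to-noise power ratio equals snr_db."""
    signal_power = np.mean(signal ** 2)
    noise_power = np.mean(noise ** 2)
    gain = np.sqrt(signal_power / (noise_power * 10.0 ** (snr_db / 10.0)))
    return gain * noise


rng = np.random.default_rng(0)
dry = rng.standard_normal(1000)          # stand-in for an isolated recording

rirs = np.zeros((4, 64))                 # toy four-channel RIRs (assumed)
for ch in range(4):
    rirs[ch, 2 * ch] = 1.0               # direct path, delayed per channel
    rirs[ch, 2 * ch + 20] = 0.5          # one attenuated reflection

wet = spatialize(dry, rirs)
noise = scale_noise_to_snr(wet, rng.standard_normal(wet.shape), snr_db=20.0)
mixture = wet + noise
```

Every parameter here (source signal, RIR, SNR) is set explicitly, which is the control the paragraph above refers to; the same simplicity is also why such mixtures may miss nuances of real scenes.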

In contrast, fully real-world datasets are captured directly in authentic acoustic environments with genuine sound sources. These datasets represent the gold standard for model generalization, as they contain the natural acoustic variability and sound event activity that models will ultimately face in deployment. The primary challenge, however, lies in the extensive and costly manual annotation needed to obtain the accurate spatiotemporal labels required for model training32.

Key benchmark datasets

A series of notable benchmark datasets has been instrumental in steering the direction and progress of SELD research. Starting in 2019, the Tampere University (TAU) datasets marked the beginning of standardized SELD benchmarking. The initial TAU Spatial Sound Events (TSSE) 2019 dataset featured stationary sources in hybrid acoustic scenes31. This was succeeded by the TAU-NIGENS Spatial Sound Events (TNSSE) 2020 and 2021 datasets, which increased realism by introducing moving sound sources34 and unknown directional interferences35, respectively, pushing models to handle more dynamic and cluttered sound scenes.

Parallel to these efforts, the Learning 3D Audio Sources (L3DAS) Challenge provided its own series of dedicated SELD datasets. The L3DAS21 and L3DAS22 corpora were hybrid datasets focused on FOA audio within a single office environment62,63. The final L3DAS23 dataset explored multi-modal SELD by introducing simulated visual data, extending the task into audio-visual contexts64.

A critical shift towards greater realism was signaled by the introduction of manually annotated real-world datasets. Early examples include the SECL-UMons56 and AVECL-UMons58 datasets, which provided real-world audio and audio-visual recordings, respectively. However, they were limited by discrete source angles and the use of a 2-D circular microphone array, which prevented elevation estimation.

This gap was decisively addressed by the Sony-TAU Realistic Spatial Soundscapes (STARSS) datasets. The STARSS22 dataset provided the first large-scale, real-world SELD dataset featuring genuine human actors recorded in a diversity of real rooms65, forming the basis for the DCASE 2022 Challenge. Subsequently, the extended STARSS23 dataset enriched this by including synchronized 360° video recordings32, further advancing audio-visual SELD research20. These comprehensive, real-world datasets have been invaluable in bridging the gap between controlled synthetic experiments and the complexities of natural acoustic environments.

Growing diversity of recording setups

Recent data collection efforts reflect a growing trend toward exploring more diverse and practical recording conditions beyond stationary indoor arrays. This is crucial for deploying SELD systems on consumer devices for various real-world applications. For instance, Nagatomo et al.54 proposed the WearableSELD dataset, which captured spatial RIRs using microphones distributed across a head-and-torso simulator and various wearable items (e.g., earphones, glasses). Furthering this, Yasuda et al.55 introduced the 6 Degrees of Freedom (6DoF) dataset, which incorporated motion-tracking data from wearable sensors, simulating truly dynamic scenarios where both the user and the sound sources can move.

The DCASE Challenge 2025 signals another pivotal shift by focusing on SELD using stereo audio, moving away from the specialized four-channel FOA and MIC formats66. For this task, the FOA audio from the STARSS23 dataset is converted into a stereo format using mid-side conversion53. This initiative directly addresses the need to make SELD technology more accessible and applicable to the vast ecosystem of consumer electronics, which predominantly feature stereo microphone systems.
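A generic mid-side conversion can be sketched as follows, treating the omnidirectional W channel as the mid signal and the left-right Y channel as the side signal. Whether the challenge uses exactly this scaling is an assumption; the sketch only illustrates the principle, and the helper `encode_wy` is a hypothetical idealised encoder for a source in the horizontal plane.

```python
import math


def foa_to_stereo(w, y):
    """Mid-side decoding: left = W + Y, right = W - Y (assumed unit scaling)."""
    left = [wi + yi for wi, yi in zip(w, y)]
    right = [wi - yi for wi, yi in zip(w, y)]
    return left, right


def encode_wy(samples, azimuth_deg):
    """Hypothetical ideal W and Y channels for a horizontal plane-wave source."""
    gain = math.sin(math.radians(azimuth_deg))
    return list(samples), [s * gain for s in samples]


tone = [0.0, 0.5, 1.0, 0.5]
left_source = foa_to_stereo(*encode_wy(tone, 90.0))   # source fully to the left
front_source = foa_to_stereo(*encode_wy(tone, 0.0))   # source straight ahead
```

In this idealised setting a source on the left appears only in the left channel, while a frontal source is identical in both. Because only W and Y are used, the X and Z information is discarded, so sources mirrored front-to-back yield the same stereo signal.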

Despite these innovations, data collection has predominantly focused on indoor environments, leaving outdoor settings comparatively unexplored67. The UNS-Exterior Spatial Sound Events (UNS-ESSE2023) dataset is a notable exception, explicitly tailored for outdoor urban scenes, albeit with a limited number of event classes57. This highlights a significant opportunity for future research to create datasets that capture the unique acoustic challenges of outdoor applications.

Collectively, the evolution of these datasets charts a clear trajectory: from carefully parameterized synthetic scenes toward large-scale, and potentially multi-modal, recordings of everyday acoustic environments. The selection of an appropriate corpus is therefore a critical decision, dictated by the intended deployment domain, target microphone geometry, and the degree of realism required for robust model evaluation.

Input features

The design of input features directly influences the ability of a SELD model to accurately detect and localize sound events. Although some studies have explored end-to-end learning directly from raw audio waveforms68, the predominant and most successful approach in SELD involves transforming the raw multi-channel audio into structured, informative representations49. This explicit feature engineering serves two primary purposes: it reduces the dimensionality of the input, making learning more computationally efficient, and it embeds physically meaningful acoustic and spatial cues into the representation. Consequently, this process is integral to the success of state-of-the-art systems. Feature sets are typically composed of two key components: a foundational time-frequency spectral representation and explicit directional cues.

Foundational time-frequency representations

Time-frequency representations, or spectrograms, are the standard foundation for audio analysis in SELD due to their robustness in characterizing acoustic signals. A spectrogram visualizes the distribution of the energy of the signal across time and frequency. Initial SELD research employed multi-channel magnitude and phase spectrograms, which effectively preserve both the energy fluctuations of events and the raw spatial phase relationships between microphone channels5,62. These early representations are computationally efficient and relatively invariant to specific microphone array geometries44, enhancing model generalizability.

Contemporary approaches, however, have largely converged on using perceptually-inspired representations that better align with human auditory processing, with the most widely adopted being the log-Mel spectrogram. The Mel scale mimics human hearing by allocating higher resolution to lower frequencies, where human perception is more sensitive, while also compressing higher frequencies. This results in lower-dimensional features, which reduces computational complexity and can improve model generalization by focusing on perceptually relevant frequency bands69. Nevertheless, this non-linear frequency scaling presents a critical trade-off: spatial cues from different narrow bands are merged into a single Mel band, potentially hindering DOAE performance in scenarios with multiple overlapping sources51.
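The trade-off can be illustrated by computing how many linear STFT bins fall inside each Mel band. The sketch assumes the commonly used HTK-style Mel formula, 64 bands between 50 Hz and 12 kHz, a 24 kHz sampling rate, and a 1024-point FFT; none of these settings are taken from the reviewed systems.

```python
import math


def hz_to_mel(f_hz):
    """HTK-style Hz-to-Mel mapping (one of several conventions in use)."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_band_edges(n_bands, f_min, f_max):
    """Return n_bands + 2 edge frequencies, equally spaced on the Mel scale.

    Triangular band k spans edges[k] to edges[k + 2].
    """
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_bands + 1)
    return [mel_to_hz(m_min + i * step) for i in range(n_bands + 2)]


n_bands = 64
edges = mel_band_edges(n_bands, f_min=50.0, f_max=12000.0)
bin_hz = 24000.0 / 1024   # spacing of linear STFT bins (assumed settings)
widths_in_bins = [(edges[k + 2] - edges[k]) / bin_hz for k in range(n_bands)]
```

Under these assumptions the lowest band covers only a few STFT bins while the highest covers dozens, so the phase or intensity cues of many narrow bands are pooled into one value at high frequencies.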

Incorporating explicit directional cues

For high-resolution localization, the implicit spatial information contained within multi-channel spectrograms is often insufficient. The contemporary approach is therefore to augment the foundational spectral representations with explicit directional features designed to embed spatial information. The choice of these spatial features is typically dictated by the audio recording format.

For FOA recordings, Intensity Vectors (IVs) are a common choice. As proposed by Perotin et al.45, these vectors are derived from the Ambisonic channels and describe the directional flow of acoustic energy at each time-frequency bin. Because the DOA of a sound source is associated with the inverse of this energy flow, IVs provide an effective and physically-grounded spatial cue.
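For a single time-frequency bin, an intensity-style feature can be sketched as the real part of the conjugated W channel multiplied by X, Y, and Z. The example uses an idealised plane-wave encoding with unit gains, which is an assumption. Sign conventions also differ: with physical particle velocity, energy flows away from the source and the DOA is the negated vector, whereas the Ambisonic channels assumed here already have their positive lobes pointing toward the source, so no negation is applied.

```python
import math


def intensity_vector(w, x, y, z):
    """Unit-normalised Re(conj(W) * [X, Y, Z]) for one complex STFT bin."""
    iv = [(w.conjugate() * ch).real for ch in (x, y, z)]
    norm = math.sqrt(sum(v * v for v in iv))
    return [v / norm for v in iv] if norm > 0.0 else iv


def iv_to_doa(iv):
    """Convert an intensity vector to (azimuth, elevation) in degrees."""
    ix, iy, iz = iv
    azimuth = math.degrees(math.atan2(iy, ix))
    elevation = math.degrees(math.atan2(iz, math.hypot(ix, iy)))
    return azimuth, elevation


# One bin of a source at azimuth 60 degrees, elevation 20 degrees.
az, el = math.radians(60.0), math.radians(20.0)
s = complex(0.3, 0.4)                       # arbitrary complex source spectrum
w = s
x = s * math.cos(az) * math.cos(el)
y = s * math.sin(az) * math.cos(el)
z = s * math.sin(el)

est_azimuth, est_elevation = iv_to_doa(intensity_vector(w, x, y, z))
```

The source phase cancels in the product with the conjugate of W, which is why the feature depends on direction rather than on the spectral content of the event. With several overlapping sources in the same bin, the vector points to an energy-weighted mixture of directions instead.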

Conversely, for MIC recordings, features based on the Generalized Cross-Correlation with Phase Transform (GCC-PHAT) are widely used. The GCC-PHAT is a robust estimator of the time difference of arrival (TDoA) between pairs of microphones, providing an effective geometric cue for inferring sound source direction19,70. However, these phase-based features are susceptible to degradation from noise and reverberation in real-world environments, a key limitation that has prompted the development of more advanced spatial features51.
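A minimal GCC-PHAT sketch for one microphone pair is given below, assuming NumPy. The cross-spectrum is whitened so that only phase remains, and the peak of the resulting correlation gives the delay in samples. The function name, the small constant added for numerical stability, and the noise test signal are illustrative assumptions; SELD systems typically compute this per frame and use the correlation sequence itself as a feature.

```python
import numpy as np


def gcc_phat(sig, ref, max_lag):
    """Return (correlation over lags -max_lag..max_lag, estimated lag).

    A positive lag means `sig` is delayed relative to `ref`.
    """
    n = len(sig) + len(ref)                  # zero-pad to avoid circular wrap
    sig_spec = np.fft.rfft(sig, n=n)
    ref_spec = np.fft.rfft(ref, n=n)
    cross = sig_spec * np.conj(ref_spec)
    cross /= np.abs(cross) + 1e-12           # phase transform (PHAT) weighting
    cc = np.fft.irfft(cross, n=n)
    # Reorder so index 0 is lag -max_lag and index max_lag is lag 0.
    cc = np.concatenate((cc[-max_lag:], cc[: max_lag + 1]))
    lag = int(np.argmax(cc)) - max_lag
    return cc, lag


rng = np.random.default_rng(0)
ref = rng.standard_normal(2048)                       # reference microphone
sig = np.concatenate((np.zeros(5), ref[:-5]))         # same signal, 5 samples late
correlation, estimated_lag = gcc_phat(sig, ref, max_lag=16)
```

Dividing the lag by the sampling rate gives the TDoA in seconds. In reverberant or noisy conditions additional peaks appear in the correlation, which is the degradation mentioned above.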

Recent advances have focused on creating sophisticated feature sets that contain rich spectral and spatial information. This trend is best exemplified by the development of the Spatial Cue-Augmented Log-Spectrogram (SALSA) feature set51 and its variants, which represent significant advancements in SELD-based feature engineering:

- SALSA: The original SALSA feature set combines log-linear spectrograms with advanced spatial cues derived from an eigenvector-based analysis of the spatial covariance matrix. This provides a rich, high-fidelity representation of an acoustic scene51.

- SALSA-Lite: To improve computational efficiency for real-time applications, the same authors introduced a lightweight version, SALSA-Lite49. It replaces the costly eigen-decomposition with normalized inter-channel phase differences (NIPDs), achieving a significant speedup while preserving strong localization performance.

- SALSA-Mel: Building on this, Huang et al.69 adapted SALSA-Lite for the Mel scale, resulting in the SALSA-Mel. This version further reduces dimensionality and computational load, making it highly suitable for resource-constrained edge applications.
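To illustrate the kind of spatial cue that SALSA-Lite relies on, the sketch below computes a normalized inter-channel phase difference relative to the first microphone. The scaling by -c / (2πf) follows our reading of the NIPD definition and should be checked against ref. 49; the function name, the speed of sound, and the synthetic delay are assumptions for this example.

```python
import numpy as np

def nipd(stft, freqs_hz, c=343.0):
    """stft: complex array (M, T, F); freqs_hz: array (F,).
    Returns a real array (M-1, T, F): phase difference to channel 0,
    scaled by -c / (2*pi*f) so that a pure delay maps to a constant (metres)."""
    ref = stft[0]
    phase = np.angle(np.conj(ref)[None] * stft[1:])
    safe_f = np.where(freqs_hz > 0, freqs_hz, 1.0)
    # The 0 Hz bin carries no phase information, so its scale is set to zero.
    scale = np.where(freqs_hz > 0, -c / (2.0 * np.pi * safe_f), 0.0)
    return phase * scale[None, None, :]

# Toy check: channel 1 is channel 0 delayed by tau seconds.
rng = np.random.default_rng(0)
freqs = np.linspace(0.0, 4000.0, 65)
tau = 1e-4                                   # small enough to avoid phase wrapping
ch0 = rng.standard_normal((5, 65)) + 1j * rng.standard_normal((5, 65))
ch1 = ch0 * np.exp(-2j * np.pi * freqs * tau)[None, :]
feat = nipd(np.stack([ch0, ch1]), freqs)
print(feat[0, 0, 1], 343.0 * tau)
```

For a pure delay the result is approximately the same value in every bin above 0 Hz, which is the frequency normalization the name refers to. Above the spatial aliasing frequency the raw phase wraps and this simple relation no longer holds.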

The continual refinement of input features, from generic spectrograms to these sophisticated representations, highlights a pivotal evolutionary path in the field. The overarching goal is to design input representations that are rich in semantic and spatial information, robust to real-world acoustic conditions, and computationally efficient. Feature engineering remains a critical step in developing practical and reliable SELD systems capable of real-world deployment.

Model architectures

DNN architectures are the computational backbone of SELD, tasked with translating high-dimensional input features into a structured, spatiotemporal understanding of an acoustic scene. The design of these architectures has progressed from a foundational blueprint toward more powerful and flexible models that mirror broader trends in artificial intelligence. While the CRNN remains a highly effective and dominant framework48, the field has seen a clear progression towards deeper models, more sophisticated attention mechanisms, and the adoption of large-scale pre-training paradigms.

Foundational SELDNet

The first deep learning-based method for SELD, introduced by Hirvonen3, framed the task as a classification problem over a discrete spatial grid, using a simple CNN for detection and localization. The contemporary approach builds upon this work and was standardized by the seminal SELDNet architecture proposed by Adavanne et al.5. SELDNet established a new paradigm by framing the task as a joint classification and regression multi-task learning problem. This pivotal shift enabled the continuous, high-resolution estimation of sound event activities and their corresponding locations.

The SELDNet architecture is a classic CRNN, a hybrid design that became the blueprint for many subsequent systems. As illustrated in Fig. 5 (Left), its structure is logically composed of two primary modules. First, a CNN front-end is employed to effectively extract local patterns from input features. Next, an RNN module, typically implemented with bi-directional GRUs (biGRUs), processes the sequence of feature vectors from the CNN, which is vital for accurately tracking event onsets and offsets. Finally, the output layer of the network diverges into two heads: one performing multi-label classification to identify active sound events, and another performing regression to estimate their corresponding Cartesian coordinates.

(Left) The SELDNet architecture, adapted from Adavanne et al.5. It consists of convolutional layers, biGRU recurrent modules, and fully-connected layers to produce frame-by-frame event activity probabilities and corresponding DOA Cartesian coordinates. (Right) Block diagram of the popular ResNet-Conformer hybrid architecture, adapted from ref. 74, used by many top-performing systems.

The influence of SELDNet is evident, as it became a de facto baseline for numerous benchmarks, including DCASE Challenges31,32,34,35,65 and other public datasets54,62,63,64. Subsequent research has built on this foundation by incorporating more sophisticated input features and attention mechanisms to further refine accuracy and robustness71,72.

Deeper backbones and advanced attention

As SELD research matured, efforts turned to enhancing the feature extraction and temporal modeling capacities of the CRNN framework. This evolution has proceeded along two main axes: increasing the complexity of the convolutional backbone and advancing the sophistication of the temporal modeling component.

A prominent trend has been the replacement of shallow CNN front-ends with deeper, more powerful Residual Networks (ResNets)73,74,75. By introducing skip connections, ResNet-based architectures mitigate the vanishing gradient problem40, enabling the successful training of much deeper models. This allows the network to learn a more expressive hierarchy of features from the audio input, improving its discriminative power17. Another complementary approach is the incorporation of advanced attention-based mechanisms within the feature extraction backbones, such as the integration of Squeeze-and-Excite blocks (SqEx)76, Attentional Feature Fusion (AF), and Multi-scale Channel Attention Mechanisms (MS-CAM)77.

Concurrently, the choice of temporal modeling modules has shifted from purely recurrent models toward attention-based architectures. Attention mechanisms enable a model to dynamically weigh the importance of different segments of a sequence, focusing on frames most relevant to the task. For instance, the Transformer46, with its self-attention mechanism, proved highly effective at capturing long-range dependencies without the sequential processing limitations of RNNs. This has led to the development of audio-specialized variants, most notably the Conformer architecture47, which combines convolution (for local patterns) with self-attention (for global context). The fusion of a ResNet front-end with Conformer modules, depicted in Fig. 5 (Right), has become a state-of-the-art backbone74, consistently adopted by many top-performing systems78,79,80,81,82.
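The frame-weighting idea behind self-attention can be sketched in a few lines. This is a single-head scaled dot-product attention over a sequence of frame embeddings, with randomly initialised (hypothetical) projection matrices; it omits the multi-head structure, positional encoding, and convolution module that a full Transformer or Conformer block would add.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """x: (T, D) frame embeddings; w_q, w_k, w_v: (D, D_h) projections.
    Returns the attended sequence (T, D_h) and the (T, T) attention weights."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over time
    return weights @ v, weights

rng = np.random.default_rng(0)
x = rng.standard_normal((6, 8))                     # 6 frames, 8-dim embeddings
w_q, w_k, w_v = (rng.standard_normal((8, 4)) for _ in range(3))
out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.sum(axis=-1))
```

Each output frame is a weighted sum over all frames, so a distant frame can contribute directly instead of being relayed step by step through a recurrent state.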

Beyond adopting general-purpose attention, researchers have developed mechanisms tailored to the multi-faceted nature of SELD inputs83,84,85. Since features for SELD contain information across channel, time, and frequency axes, specialized attention modules have been proposed to model these dimensions independently75,86. For example, Shul et al.87 proposed a channel-spectro-temporal attention mechanism that applies separate attention modules to each dimension, achieving strong performance while significantly reducing model complexity, a key advantage for real-time inference on edge devices88.

Large-scale pre-training

A transformative trend in modern SELD research is the adoption of large-scale pre-training89. Instead of training a model from scratch on limited, task-specific SELD data, this paradigm involves a two-stage process: first, a large model is pre-trained on a massive, general-purpose dataset; then, the knowledge of this model is transferred to the target SELD task90. This approach allows the model to learn a rich and generalizable representation of audio that serves as a powerful starting point for fine-tuning, often leading to significant performance improvements91,92.

Early examples included using large-scale pre-trained audio neural networks (PANNs)73, which were trained on the extensive AudioSet dataset for audio tagging1. More recent work has explored pre-training Transformer-based models, such as the Audio Spectrogram Transformer (AST)93,94, using self-supervised methods on millions of unlabeled audio clips. These pre-trained models can then be used to augment SELD systems with high-level information about the acoustic scene95. However, these models generally use single-channel audio and cannot be directly applied to the multi-channel SELD problem. Recent work by He et al.48 has begun addressing this problem by proposing methods to adapt these single-channel models for the multi-channel SELD task.

A task-specific pre-training strategy has recently emerged with pre-trained SELD Networks (PSELDNets)89. These PSELDNets can also be seen as the SELD version of the previous PANNs73 meant for audio tagging tasks, highlighting a pivotal step forward in environmental audio intelligence. The PSELDNets are pre-trained on massive, synthetically generated SELD datasets before being fine-tuned on smaller, real-world datasets. This approach has been shown to achieve state-of-the-art results by effectively transferring knowledge from diverse, large-scale data sources directly to the SELD problem.

Modular strategies

While integrated, end-to-end models dominate SELD research, a noteworthy alternative is the use of modular strategies. These approaches decouple the joint SELD task, typically by focusing on the SED and DOAE problems independently. For instance, Cao et al.70,96 employed a two-stage process where a SED model is trained first, and its learned weights are transferred to a second DOAE model with an identical architecture. Similarly, Nguyen et al.97,98 trained SED and DOAE models independently before using a specialized sequence-matching or alignment network to associate their outputs.

Although modular architectures can be effective, particularly where the complexity of detection and localization differs greatly59, they introduce significant complexity into the training pipeline. The need to manage, train, and fuse multiple models makes them less practical for seamless deployment. Consequently, the vast majority of state-of-the-art systems favor unified, end-to-end architectures that learn to detect and localize jointly, simplifying both the training and implementation process.

Data augmentation

High-quality SELD datasets are often limited in size and class variability32,65, which can cause models to overfit the training data and fail to generalize to unseen conditions. Data augmentation methods mitigate these challenges by synthetically expanding the training data through controlled variations. Consequently, data augmentation is critical for improving model robustness and is a cornerstone of most high-performing SELD systems99. Table 3 summarizes several common data augmentation methods specific to SELD.

The central challenge in SELD-based data augmentation is to enhance data variability without corrupting the critical spectral content and spatial cues of sound events74. For instance, a naive transformation that modifies one microphone channel differently from another can destroy the inter-channel relationships crucial for localization100.

Augmenting the spectral-temporal domain

This category includes methods that operate directly on the time-frequency representation of the audio, often adapted from the image and speech processing domains101.

For instance, masking techniques such as SpecAugment102 and Cutout103 introduce random, rectangular-shaped masks in the time and frequency dimensions of the spectrogram. This forces the model to learn more robust and distributed representations, making it more resilient to the partial loss of information. For SELD, it is generally considered imperative that the same mask is applied coherently across all microphone channels to preserve the integrity of the spatial cues74. This constraint becomes especially critical for formats where inter-channel information is more pronounced, such as stereo or binaural recordings100.
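The channel-coherence constraint can be sketched as follows. The function draws one time stripe and one frequency stripe and applies them identically to every channel; filling with zeros, using a single stripe per axis, and the parameter names are simplifying assumptions rather than the settings of any cited system.

```python
import numpy as np

def coherent_mask(feat, max_t, max_f, rng):
    """feat: array (C, T, F). Zero one time stripe and one frequency stripe,
    using the same stripe positions for all C channels. Returns a copy."""
    _, t, f = feat.shape
    out = feat.copy()
    t_w = int(rng.integers(0, max_t + 1))    # stripe widths, possibly zero
    f_w = int(rng.integers(0, max_f + 1))
    t0 = int(rng.integers(0, t - t_w + 1))   # stripe start positions
    f0 = int(rng.integers(0, f - f_w + 1))
    out[:, t0:t0 + t_w, :] = 0.0
    out[:, :, f0:f0 + f_w] = 0.0
    return out

rng = np.random.default_rng(0)
feat = np.ones((4, 50, 32))                  # 4 channels, 50 frames, 32 bands
masked = coherent_mask(feat, max_t=10, max_f=8, rng=rng)
mask = masked == 0.0
print(all(np.array_equal(mask[0], mask[c]) for c in range(4)))
```

Because the slice `out[:, ...]` spans the channel axis, no channel can be masked differently from another, so inter-channel level and phase relations are untouched in the surviving bins.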

Techniques such as Frequency Shifting51 and FilterAugment104 modify the spectral content of the audio. Frequency Shifting simulates pitch variations by shifting or reflecting frequency bands, while FilterAugment simulates the spectral coloration from diverse acoustic environments by applying random gain offsets to frequency bands105. Such methods encourage models to learn frequency-invariant features rather than focusing on a few dominant bands. However, the efficacy of these methods has been mixed80, highlighting the delicate balance between enhancing spectral diversity and inadvertently distorting critical spatial information, especially in real-world audio.

Augmenting the spatial domain

This class of techniques is particularly effective for SELD as they can directly create additional directional training samples from existing recordings without altering the underlying sound events or reverberation characteristics. For instance, SpatialMixup106 applies selective directional gains to the audio signals, simulating variations in loudness from different directions and creating more diverse spatial scenarios.

For Ambisonics recordings, spatial rotation is a highly effective method where mathematical rotation matrices are applied to the FOA channels107. This simulates rotating the entire sound field to a new orientation, effectively expanding the dataset several fold. Building on this concept, Wang et al.74 proposed the Audio Channel Swapping (ACS) method, a more general approach that works for both FOA and MIC audio formats. The ACS method systematically swaps or permutes microphone channels according to the physical symmetry of the recording array while adjusting the ground truth labels accordingly. For a tetrahedral array, this can generate up to eight unique directional configurations from a single recording while preserving the reverberation of the recording environment80.
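A minimal sketch of azimuthal sound-field rotation for FOA signals is shown below, together with the matching label update. The channel order (W, X, Y, Z), the plane-wave encoding in the toy check, and the function names are assumptions for illustration; datasets that use a different channel order or normalization need the rotation applied to the corresponding channels.

```python
import numpy as np

def rotate_foa_azimuth(foa, phi_rad):
    """foa: array (4, ...), channels assumed ordered (W, X, Y, Z).
    Rotates the sound field by phi_rad about the vertical axis."""
    w, x, y, z = foa
    cos_p, sin_p = np.cos(phi_rad), np.sin(phi_rad)
    return np.stack([w, cos_p * x - sin_p * y, sin_p * x + cos_p * y, z])

def rotate_label_azimuth(az_rad, phi_rad):
    """Apply the same rotation to an azimuth label, wrapped to [-pi, pi)."""
    return (az_rad + phi_rad + np.pi) % (2.0 * np.pi) - np.pi

def encode_plane_wave(s, az_rad, el_rad):
    """Toy FOA encoder used only for the check below (assumed convention)."""
    return np.stack([s,
                     s * np.cos(az_rad) * np.cos(el_rad),
                     s * np.sin(az_rad) * np.cos(el_rad),
                     s * np.sin(el_rad)])

rng = np.random.default_rng(0)
s = rng.standard_normal(256)
az, el, phi = np.deg2rad(30.0), np.deg2rad(10.0), np.deg2rad(90.0)
rotated = rotate_foa_azimuth(encode_plane_wave(s, az, el), phi)
new_az = rotate_label_azimuth(az, phi)
expected = encode_plane_wave(s, new_az, el)
print(np.allclose(rotated, expected), np.rad2deg(new_az))
```

For a 90° rotation the matrix reduces to X' = -Y and Y' = X, that is, a channel swap with a sign flip. This is the sense in which channel-swapping schemes can be viewed as a discrete subset of rotations, under the conventions assumed here.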

Augmentation via mixture and synthesis

More sophisticated augmentation methods synthesize entirely new and realistic multi-channel audio clips. Mixture-based methods, for example, create new audio samples by mixing existing ones108. The Mixup method, proposed by Zhang et al.109, linearly interpolates the waveforms and labels of two samples, which helps to regularize the network and smooth decision boundaries. Mixing two different monophonic samples can also create artificial polyphonic sound events, improving the ability of the model to detect overlapping sound sources110. SpecMix, as proposed by Kim et al.101, performs a similar operation in the time-frequency domain by mixing patches of two different spectrograms. Because this method only swaps designated time-frequency patches, it avoids the wholesale magnitude averaging of waveforms that can obscure class-discriminative cues.
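The interpolation performed by Mixup can be sketched as below. The labels here are multi-hot class-activity vectors, which is an assumption for this example; how DOA targets should be combined for mixed clips is not specified above and is left out. Drawing the mixing weight from a Beta(alpha, alpha) distribution follows the usual Mixup formulation.

```python
import numpy as np

def mixup(x1, y1, x2, y2, alpha, rng):
    """Linearly interpolate two multi-channel waveforms and their labels.
    x1, x2: arrays (C, N); y1, y2: activity vectors (K,)."""
    lam = float(rng.beta(alpha, alpha))      # mixing weight in [0, 1]
    x = lam * x1 + (1.0 - lam) * x2
    y = lam * y1 + (1.0 - lam) * y2
    return x, y, lam

rng = np.random.default_rng(0)
x1, x2 = np.ones((4, 100)), np.zeros((4, 100))
y1, y2 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
x_mix, y_mix, lam = mixup(x1, y1, x2, y2, alpha=0.4, rng=rng)
print(round(lam, 3), y_mix)
```

The same weight is applied to every channel of both clips, so the spatial cues of each source are scaled but not distorted.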

Other synthesis methods include the Multi-Channel Simulation (MCS)74 and Impulse Response Simulation (IRS)99 frameworks. The MCS method isolates target events via beamforming to obtain source spectra and corresponding spatial covariance matrices. New multi-channel examples are then synthesized by randomly recombining spectra with spatial matrices. The IRS framework improves on the previous MCS method by eliminating directional interferences from the extracted target events. Subsequently, the IRS method replaces the empirically derived spatial covariance matrices with simulated RIRs, resulting in interference-free directional sound event examples.

Augmentation chains and adaptive policies

In practice, state-of-the-art systems rarely rely on a single augmentation method. Instead, they often employ augmentation chains: sequential pipelines that apply multiple transformations to improve robustness and generalization82,106. For instance, the top-ranking submission in the DCASE Challenge 2022 utilized a four-stage data augmentation strategy combining spatial (ACS), synthesis (MCS, Mixup), and masking-based (SpecAugment) operations74.

However, designing these complex pipelines requires significant manual effort and parameter tuning111, which has motivated a recent push towards adaptive strategies. For instance, Zhang et al.81 introduced an Automated Audio Data Augmentation (AADA) framework that allows the network to automatically learn optimal augmentation parameters rather than relying on fixed ones. Such advancements in learnable and adaptive augmentation strategies hold immense promise for reducing the manual effort needed to improve the generalization of SELD models.

Output formats

The output format of a SELD model is a critical architectural component that defines how the system represents its understanding of an acoustic scene. An effective format must not only encode what sounds occur and where they originate but must also be robust to multiple, overlapping same-class sound sources. The design of these formats has evolved from conceptually simple representations to sophisticated structures designed to overcome this fundamental challenge. This progression, illustrated in Fig. 6, directly reflects the growing capability of SELD systems to parse realistic and complex acoustic environments.

(Top Left) The conventional class-wise two-branch design5 outputs separate SED probabilities and DOA coordinates for each class. (Top Right) The class-wise ACCDOA format112 integrates detection and localization into a single 3-D vector per class, where the vector’s direction encodes the DOA and its magnitude encodes event activity. (Bottom Left) The track-wise two-branch design113 replicates the SED and DOA outputs across multiple tracks to handle overlapping sound events of the same class. (Bottom Right) The multi-ACCDOA format116 extends ACCDOA to multiple tracks, combining a unified representation with multi-track functionality. Active sound events are denoted by green boxes.

Class-wise, two-branch approaches

Initial SELD architectures, as exemplified by SELDNet5, adopted a straightforward and intuitive output format. This approach, as depicted in Fig. 6 (top-left), uses two parallel network branches to produce a class-wise output. In this design, a SED branch performs multi-label classification, yielding a vector of activity probabilities for each known sound class. Simultaneously, a parallel DOAE branch performs regression, predicting a corresponding 3-D Cartesian coordinate vector (x, y, z) for each class, representing its location on a unit sphere.
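A minimal sketch of how such a class-wise, two-branch output might be decoded for one frame is given below. The 0.5 activity threshold, the azimuth/elevation convention, and the function name are assumptions for illustration.

```python
import math

def decode_two_branch(sed_probs, doa_xyz, threshold=0.5):
    """sed_probs: per-class activity probabilities for one frame.
    doa_xyz: per-class (x, y, z) predictions for the same frame.
    Returns a list of (class_index, azimuth_deg, elevation_deg) for active classes."""
    events = []
    for cls, (p, (x, y, z)) in enumerate(zip(sed_probs, doa_xyz)):
        if p < threshold:
            continue                          # class judged inactive
        azimuth = math.degrees(math.atan2(y, x))
        elevation = math.degrees(math.atan2(z, math.hypot(x, y)))
        events.append((cls, azimuth, elevation))
    return events

frame_sed = [0.9, 0.1, 0.7]
frame_doa = [(0.0, 1.0, 0.0), (1.0, 0.0, 0.0), (1.0, 0.0, 1.0)]
print(decode_two_branch(frame_sed, frame_doa))
```

Note that the output holds at most one direction per class, which is the structural reason this format cannot represent two simultaneous events of the same class.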

While conceptually intuitive, this class-wise format suffers from a critical limitation: it can only represent a single active instance of a sound class at any given time. This makes it fundamentally incapable of resolving common scenarios where multiple sounds of the same type occur, such as two people speaking simultaneously from different directions. Furthermore, training separate classification and regression branches with distinct loss functions introduces complexity and can hinder stable convergence72.

Toward a unified representation

To address the challenges of the two-branch approach, Shimada et al.112 proposed the Activity-Coupled Cartesian DOA (ACCDOA) format, depicted in Fig. 6 (top-right). Instead of separating detection and localization outputs, ACCDOA combines them into a single 3-D vector for each sound class. In this representation, the direction of the vector encodes the DOA, while its magnitude represents the event’s activity probability. For the ground truth, a vector of unit length signifies an active event, while a zero-length vector signifies inactivity.
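The encoding and decoding described above can be sketched as follows. The 0.5 magnitude threshold and the function names are assumptions for this example; the threshold is a tunable choice in practice.

```python
import numpy as np

def accdoa_encode(activity, unit_doa):
    """activity: (C,) array of 0/1 flags; unit_doa: (C, 3) unit vectors.
    Returns (C, 3) targets: unit length when active, zero when inactive."""
    return activity[:, None] * unit_doa

def accdoa_decode(vectors, threshold=0.5):
    """vectors: (C, 3) predictions. A class is active when its vector norm
    exceeds the threshold; the normalised vector gives the DOA."""
    norms = np.linalg.norm(vectors, axis=-1)
    active = norms > threshold
    directions = vectors / np.maximum(norms, 1e-12)[:, None]
    return active, directions

activity = np.array([1.0, 0.0])
unit_doa = np.array([[0.0, 1.0, 0.0], [1.0, 0.0, 0.0]])
target = accdoa_encode(activity, unit_doa)

# A hypothetical network output: class 0 slightly short of unit length.
pred = np.array([[0.1, 0.8, 0.0], [0.2, 0.0, 0.1]])
active, directions = accdoa_decode(pred)
print(target, active)
```

Since the target itself carries activity in its length, a single regression loss on `pred` against `target` trains detection and localization together.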

This tight coupling of detection and localization confers several advantages. First, by learning a single representation, the model is encouraged to develop more coherent spatial and semantic features. Second, it simplifies the training objective to a single regression loss on the 3-D vector, enhancing training stability and eliminating the need to balance multiple loss functions. Finally, model complexity is reduced by only using a single branch, yielding a more lightweight system. These benefits have positioned ACCDOA as a foundational concept in contemporary SELD frameworks.

Overcoming polyphonic scenarios

While ACCDOA solved the multi-task learning problem, it did not resolve the single-instance limitation of class-wise output formats. Effectively handling simultaneous occurrences of an identical sound class remained a persistent challenge in SELD35,65. The solution was the development of multi-track or track-wise output formats82,97,113,114, central to models such as the Event-Independent Networks (EINV)113 and the enhanced EINV2114. This paradigm, as illustrated in Fig. 6 (bottom-left), restructures the output layer to predict a fixed number of independent “tracks”. Each track acts as a potential sound source slot, with its own associated SED and DOAE outputs. A model with three tracks, for instance, can theoretically detect and localize up to three concurrent instances of the same event class. The number of tracks is typically determined by the maximum polyphony of the dataset110.

This solution, however, introduces the challenge of permutation ambiguity. Since the tracks are interchangeable and there is no inherent correct ordering for multiple identical sources, the model’s predicted order may not match the arbitrary order of the ground-truth annotation. To resolve this, track-wise models are trained using Permutation-Invariant Training (PIT)115. At each training step, PIT dynamically solves a combinatorial assignment problem, finding the optimal permutation between the predicted and ground-truth tracks that minimizes the loss function. This allows the network to learn effectively without being penalized for an arbitrary output ordering.
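The assignment step can be illustrated with a brute-force sketch that tries every track permutation and keeps the cheapest one. Mean squared error, the flat per-track vectors, and the function names are assumptions for this example; exhaustive search costs N! evaluations, which is acceptable only for the small track counts typical of SELD.

```python
from itertools import permutations

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)

def pit_loss(pred_tracks, target_tracks):
    """Return (loss, perm) where perm[i] is the target track matched to
    predicted track i under the permutation with the lowest mean loss."""
    n = len(pred_tracks)
    best_loss, best_perm = None, None
    for perm in permutations(range(n)):
        loss = sum(mse(pred_tracks[i], target_tracks[j])
                   for i, j in enumerate(perm)) / n
        if best_loss is None or loss < best_loss:
            best_loss, best_perm = loss, perm
    return best_loss, best_perm

# Two same-class sources annotated in the opposite order to the prediction.
pred = [[0.0, 1.0, 0.0], [1.0, 0.0, 0.0]]
target = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
print(pit_loss(pred, target))
```

A fixed-order loss would penalise this prediction heavily even though both sources are localized correctly; the permutation search removes that penalty.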

The synthesis: multi-ACCDOA

The logical culmination of these developments was the unification of the ACCDOA concept with the track-wise paradigm. Shimada et al.116 extended the original ACCDOA format with multi-track support, creating the multi-ACCDOA format as shown in Fig. 6 (bottom-right). This strategy duplicates the ACCDOA vector representation across multiple tracks for each class, enabling the network to represent and distinguish concurrent events of the same class from different directions. To handle the associated permutation ambiguity, the authors also introduced the Auxiliary Duplicating PIT (ADPIT) framework, a specialized training strategy for this format.

This integrated approach combines the representational effectiveness of ACCDOA with the polyphonic understanding of track-wise formats. Consequently, the multi-ACCDOA output format has become the foundational output strategy for state-of-the-art SELD systems designed for highly complex and polyphonic acoustic environments.

Alternative classification-based approaches

While most modern SELD systems employ regression-based output formats, an alternative paradigm frames the entire task as a classification problem. The first deep learning-based approach for SELD by Hirvonen3 pioneered this concept by treating localization as a multi-class classification task. In this work, the listening space was divided into a discrete set of spatial sectors (e.g., eight azimuth directions). Each unique combination of a sound event class and location (e.g., “speech” at 45°) was treated as a distinct class.

More recently, this idea has evolved into more sophisticated location-oriented frameworks that treat the listening area as a 2-D grid and predict event activity at each grid point. For instance, Kim et al.117 proposed AD-YOLO, inspired by the “You Only Look Once” object detection algorithm118. In this method, AD-YOLO assigns grid cells the responsibility of detecting nearby sound sources, producing predictions that combine class probabilities and DOA coordinates for each cell. Similarly, Zhang et al.119 proposed the Spatial Mapping and Regression Localization for SELD (SMRL-SELD) framework, which considers each grid cell as either containing a specific sound event class or background noise, using a novel regression loss to guide localization. By shifting from an event-oriented to a location-oriented perspective, these hybrid classification-based approaches offer an effective alternative for handling complex polyphonic scenarios.

Evaluation and benchmarks

Quantifying the performance of a SELD system is uniquely challenging, as it requires a methodology that can simultaneously assess the accuracy of both event detection and localization. The evaluation metrics for SELD have matured significantly, evolving from separate, task-specific scores to an integrated framework that holistically captures the ability of a system to correctly associate what sound is present and where it originates.

Early decoupled evaluation

Early SELD evaluation, as defined for the inaugural DCASE Challenge 2019 SELD task, treated the two sub-tasks independently. SED performance was measured using standard classification metrics: Error Rate (ER) and F1 score22. Concurrently, DOAE was evaluated with a frame-level DOA Error (DE) and a Frame Recall (FR) metric44.

While informative, this decoupled approach exhibited a critical flaw: it failed to penalize systems for data association errors. A model could, for instance, achieve a low DE by correctly localizing a sound source while misclassifying its event label (e.g., localizing a “dog bark” but labeling it as “speech”). These incorrect associations would not be adequately reflected in the final scores, meaning that the metrics did not fully represent the practical utility and true performance of a SELD system.

Contemporary joint evaluation

Recognizing the inherent interdependence of the tasks, the DCASE 2020 Challenge organizers introduced a standardized, integrated evaluation framework that has since become the community standard19,120. This framework is built upon metrics that jointly consider classification and localization accuracy.

The core of this framework rests on location-dependent detection scores and class-dependent localization scores. In this framework, SED performance is quantified by the location-dependent Error Rate (\({\text{ER}}_{\le {\text{T}}^{\circ }}\)) and F1 score (\({\text{F1}}_{\le {\text{T}}^{\circ }}\)). A detected event is considered a true positive only if its class label is correct and its estimated DOA is within a spatial threshold, T° (typically 20°), of the ground-truth direction. The \({\text{F1}}_{\le {\text{T}}^{\circ }}\) score is calculated from the location-dependent precision and recall metrics. The \({\text{ER}}_{\le {\text{T}}^{\circ }}\) is the sum of substitutions (class errors), deletions (missed events), and insertions (false alarms) tallied between predictions and ground-truth references that are within this spatial threshold, divided by the total number of reference events.
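The definitions above can be sketched in code. The helpers below compute the angular distance between two DOA vectors, apply the class-and-threshold test for a true positive, and evaluate F1 and ER from already tallied counts. The function names and the example counts are assumptions; the matching of predictions to references and the segment-level tallying used in official evaluations are not reproduced here.

```python
import math

def angular_distance_deg(u, v):
    """Great-circle angle in degrees between two 3-D direction vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    cos_angle = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cos_angle))

def is_true_positive(pred_cls, pred_doa, ref_cls, ref_doa, threshold_deg=20.0):
    """Correct class and DOA within the spatial threshold."""
    return (pred_cls == ref_cls
            and angular_distance_deg(pred_doa, ref_doa) <= threshold_deg)

def f1_score(tp, fp, fn):
    """F1 from location-dependent true positives, false positives, false negatives."""
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2.0 * precision * recall / (precision + recall)

def error_rate(substitutions, deletions, insertions, n_ref):
    """ER = (S + D + I) / N, with N the number of reference events."""
    return (substitutions + deletions + insertions) / n_ref

ref = (1.0, 0.0, 0.0)
near = (math.cos(math.radians(10.0)), math.sin(math.radians(10.0)), 0.0)
far = (0.0, 1.0, 0.0)
print(is_true_positive(3, near, 3, ref), is_true_positive(3, far, 3, ref))
```

In this toy case the prediction 10° away counts as a true positive, while the one 90° away does not even though its class label is correct. Because ER is normalised by the number of reference events, it can exceed 1 when insertions are frequent.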

Concurrently, DOAE performance is quantified by the class-dependent Localization Error (\({\text{LE}}_{\text{CD}}\)) and Localization Recall (\({\text{LR}}_{\text{CD}}\)). The \({\text{LE}}_{\text{CD}}\) score measures the average angular distance between predicted and ground-truth DOAs for correctly classified events. The \({\text{LR}}_{\text{CD}}\) represents the per-class recall, calculated as the fraction of ground-truth events that are correctly detected for the sound event class. Crucially, these localization metrics are computed only for correctly classified sound events, thereby measuring the localization performance exclusively on successfully detected sounds.

To provide a single, comprehensive score for ranking systems, the overall SELD error (\({{\mathcal{E}}}_{{\rm{SELD}}}\)) aggregates these four interdependent metrics as an equal-weight average, with the localization error normalized by 180°:

$${{\mathcal{E}}}_{{\rm{SELD}}}=\frac{1}{4}\left[{\text{ER}}_{\le 2{0}^{\circ }}+\left(1-{\text{F1}}_{\le 2{0}^{\circ }}\right)+\frac{{\text{LE}}_{\text{CD}}}{18{0}^{\circ }}+\left(1-{\text{LR}}_{\text{CD}}\right)\right]$$

An effective SELD system aims to minimize \({\text{ER}}_{\le 2{0}^{\circ }}\), \({\text{LE}}_{\text{CD}}\), and \({{\mathcal{E}}}_{{\rm{SELD}}}\), while maximizing \({\text{F1}}_{\le 2{0}^{\circ }}\) and \({\text{LR}}_{\text{CD}}\). This joint evaluation methodology ensures that modern systems are optimized to correctly link the what and where, which is the true goal of SELD.
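As a worked example, the sketch below evaluates the aggregate score under the assumption that it is the equal-weight average commonly used in DCASE evaluations: the error rate, one minus the F1 score, the localization error divided by 180°, and one minus the localization recall. The function name and the input values are hypothetical.

```python
def seld_error(er, f1, le_deg, lr):
    """Aggregate SELD error, assuming an equal-weight average of four terms.
    er: location-dependent error rate; f1: location-dependent F1 in [0, 1];
    le_deg: class-dependent localization error in degrees;
    lr: class-dependent localization recall in [0, 1]."""
    return (er + (1.0 - f1) + le_deg / 180.0 + (1.0 - lr)) / 4.0

# Hypothetical system: ER = 0.5, F1 = 0.5, LE = 18 degrees, LR = 0.7.
score = seld_error(er=0.5, f1=0.5, le_deg=18.0, lr=0.7)
print(round(score, 3))
```

Under this assumption a perfect system scores 0. An 18° localization error contributes only 0.1 to the sum before averaging, so detection errors tend to dominate the aggregate for systems of this kind.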

Current state-of-the-art

Table 4 provides an overview of top-performing SELD systems benchmarked on the STARSS23 dataset32, which also serves as the basis for the recent DCASE 2023 Challenge task on SELD. Analysis of these leading systems reveals several clear trends that define the current state-of-the-art.

As evidenced by the DCASE baseline results, a performance advantage is observed for the FOA format over raw MIC signals, a trend also noted in other studies32,48,51,65. This is likely attributed to the rich, physically meaningful spatial information explicitly encoded in the FOA channels, enabling the extraction of more effective input features. Consequently, all top-performing systems listed in Table 4 use the FOA audio format. However, because MIC-based systems utilize raw microphone signals, they are more adaptable to a wider range of microphone geometries52. A substantial research opportunity therefore exists to bridge this performance gap for practical, real-world deployment.

Architecturally, there is a clear shift away from the classic CRNN. The leading systems predominantly utilize powerful feature extraction backbones, such as ResNets, combined with advanced attention-based mechanisms, such as Conformers, for temporal modeling. Furthermore, achieving state-of-the-art performance now extends beyond model architecture to encompass complex training and inference strategies. Nearly all top-performing systems utilize methods such as large-scale pre-training on massive datasets48,89,95, model ensembling to combine outputs from multiple models78,121,122, or custom post-processing techniques such as output averaging and test-time augmentation to refine final predictions78,79,87.

While these advanced techniques achieve impressive results on benchmarks, they also highlight a growing gap between benchmark performance and practical, real-world deployability. These state-of-the-art systems are often computationally immense, with large model sizes and ensemble configurations that are generally too slow and resource-intensive for real-time inference on edge devices78,121,122. This underscores a crucial challenge for the field: the development of systems that are not only accurate but also computationally efficient enough for practical application.

Current challenges and emerging opportunities

Despite substantial progress, the transition of SELD systems from controlled benchmarks to robust, real-world deployment is impeded by significant challenges. These challenges, however, also define the most promising areas for future research. This section outlines these limitations and the corresponding opportunities for scientific and technological advancement.

Handling dense polyphony and source overlap

A primary obstacle for SELD is the sheer complexity of real-world acoustic scenes. High degrees of polyphony, particularly involving multiple instances of the same sound class, can obscure spectral cues and introduce ambiguity in spatial estimation, making it increasingly difficult to distinguish and localize a large number of simultaneous sources11. While modern track-wise output formats enable models to handle a fixed number of concurrent sources116, they are inherently limited and fail when this capacity is exceeded or when sources originate from spatially similar locations.

This limitation presents an opportunity to evolve SELD from a detection and localization framework into a more comprehensive acoustic scene decomposition paradigm. Future research could focus on models that dynamically estimate the number of active sources rather than relying on a fixed, predetermined number of output tracks. One promising avenue is the integration of source separation modules as a pre-processing step to disentangle mixed signals before the primary SELD task95,123. A complementary approach is the exploration of “location-oriented” frameworks117,119. By assigning class probabilities to a spatial grid, these methods can theoretically detect and localize an arbitrary number of concurrent events, offering a potential solution to the same-class polyphony limitation without being constrained by a predefined number of tracks.

Toward full 3-D spatial awareness

The evolution from traditional 2-D SELD (azimuth, elevation) to comprehensive 3-D spatial awareness, which includes accurate distance estimation, represents a critical next step for the field16,21. Robust distance estimation is essential for unlocking richer spatial intelligence in applications such as virtual and augmented reality, immersive audio experiences, and robotics navigation. Notably, distance-aware systems are increasingly prevalent, underscoring the demand for such advanced spatial intelligence124,125.

Despite these benefits, integrating accurate distance estimation into SELD systems remains difficult. Estimating distance from audio alone is a fundamentally challenging problem, as distance cues are often entangled with source-specific properties (e.g., loudness) and the acoustic characteristics of the recording environment126. The DCASE 2024 Challenge on 3-D SELD highlighted this difficulty127; despite using large datasets and complex models, most systems struggled to outperform the baseline in distance estimation accuracy128,129,130.

This performance gap highlights that existing SELD features are not optimized for the nuanced task of distance estimation. It presents a clear opportunity to develop physically-motivated input features that explicitly model distance-related acoustic cues126. Recent work has begun to explore cues such as reverberation characteristics and TDoA information131. For instance, Berghi & Jackson90 proposed features using the short-term power of the signal’s autocorrelation (stpACC) to capture information about early reflections. Similarly, Yeow et al.132 jointly modeled coherence and direct-path energy to create a robust distance cue. This trend toward specialized, physics-informed features is a key pathway for achieving true 3-D spatial intelligence.
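As a rough illustration of the TDoA cue mentioned above, the sketch below estimates the delay between two microphone signals from the peak of their time-domain cross-correlation. This is a deliberately simple stand-in: practical systems more often use generalized cross-correlation variants such as GCC-PHAT, and the code does not reproduce the feature extraction of any cited work. The function name, the test signals, and the sampling rate are assumptions.

```python
def estimate_tdoa(x1, x2, fs, max_lag):
    """Estimate the delay of x2 relative to x1, in seconds.

    A positive value means the sound reaches the second microphone later.
    Uses the peak of the plain cross-correlation over lags in
    [-max_lag, max_lag] samples.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for n, a in enumerate(x1):
            m = n + lag
            if 0 <= m < len(x2):
                score += a * x2[m]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag / fs


# Hypothetical signals: the same short pulse, delayed by 3 samples at mic 2.
fs = 16000
pulse = [1.0, 0.5, 0.25]
x1 = [0.0] * 10 + pulse + [0.0] * 19
x2 = [0.0] * 13 + pulse + [0.0] * 16
tdoa = estimate_tdoa(x1, x2, fs, max_lag=8)
```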

Real-time processing and edge deployment

A major hurdle is the gap between the computationally intensive models that achieve state-of-the-art results on academic benchmarks and the stringent requirements of real-time, on-device deployment. Top-performing systems often rely on massive, complex architectures, such as large ResNet-Conformer ensembles74, which are prohibitive for real-time inference on resource-constrained edge devices such as wearable sensors or autonomous robots23,54. In addition, the computational overhead of multi-channel feature extraction and the inherent latency of deep models add to the barriers to deployment52,88.

This creates a pressing need for research in efficient artificial intelligence for acoustics. The opportunity lies in developing lightweight SELD models that maintain high accuracy while operating under strict power and latency budgets. Promising research directions include the design of streamlined network architectures, such as replacing recurrent layers with temporal convolutional networks (TCNs). The SELD-TCN network proposed by Guirguis et al.133 was a pioneering example, demonstrating substantially faster inference speeds. Subsequently, Brignone et al.134 proposed the QSELD-TCN network, which leverages the parameter efficiency of quaternions to reduce both model size and computational cost.
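The appeal of TCNs can be illustrated with a minimal sketch of a stack of dilated 1-D convolutions, whose temporal context grows with the sum of the dilations while every output frame can be computed in parallel. The causal zero-padding, the all-ones kernels, and the dilation schedule below are illustrative assumptions and do not reproduce the SELD-TCN or QSELD-TCN configurations.

```python
def dilated_conv1d(x, kernel, dilation):
    """Causal dilated 1-D convolution with implicit zero left-padding."""
    k = len(kernel)
    out = []
    for t in range(len(x)):
        acc = 0.0
        for i, w in enumerate(kernel):
            idx = t - (k - 1 - i) * dilation  # the last tap sees the current frame
            if idx >= 0:
                acc += w * x[idx]
        out.append(acc)
    return out


def receptive_field(kernel_size, dilations):
    """Number of input frames that influence one output frame of the stack."""
    return 1 + (kernel_size - 1) * sum(dilations)


# Hypothetical stack: kernel size 3 with exponentially growing dilation.
dilations = [1, 2, 4, 8]
signal = [1.0] + [0.0] * 39  # unit impulse
for d in dilations:
    signal = dilated_conv1d(signal, [1.0, 1.0, 1.0], d)
span = sum(1 for v in signal if v != 0.0)  # frames reached by the impulse
```

With four layers the stack covers `receptive_field(3, dilations)` frames, so long temporal context is obtained without the sequential dependency of a recurrent layer.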

Future research can also investigate advanced model compression techniques such as network pruning, quantization, and knowledge distillation. In knowledge distillation135, a compact “student” model is trained to replicate the output of a much larger, high-performance “teacher” model, effectively transferring its knowledge into an efficient form121. Approaches from related domains, such as acoustic scene classification136, demonstrate that real-time processing is feasible with such targeted architectural optimization.
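The distillation objective described above can be sketched as a temperature-softened Kullback-Leibler divergence between teacher and student class distributions. This is the generic classification form of knowledge distillation, shown under the assumption that the models emit class logits; SELD systems with regression-style outputs might instead use a distance between student and teacher output vectors. The temperature value and logits are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(
        pt * math.log(pt / ps)
        for pt, ps in zip(p_teacher, p_student)
        if pt > 0.0
    )
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return temperature ** 2 * kl


# Hypothetical logits for three sound classes.
teacher = [4.0, 1.0, 0.5]
loss_close = distillation_loss([3.5, 1.2, 0.4], teacher)
loss_far = distillation_loss([0.5, 1.0, 4.0], teacher)
```

In practice this term is usually combined with the ordinary supervised loss on labeled data, with a weighting that is itself a tunable assumption.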

Achieving robustness in unseen environments

When transitioning from synthetic or controlled training data to real-world acoustic environments, SELD models often exhibit significant performance degradation132,137. While synthetic data enables precise control over acoustic conditions, it often fails to capture the full complexity of natural environments61. Real-world scenarios are characterized by pervasive background noise, complex reverberation, and moving sources, all of which can cause a mismatch between the training and testing feature distributions, thereby compromising SELD performance69,138.

The challenge of robustness creates vital research opportunities in domain adaptation. Rather than simply training on more diverse data, the goal is to create models capable of dynamic adaptation. For instance, Yasuda et al.139 proposed a framework that uses measured echo signals to adapt a SELD model to unknown environments. Similarly, Hu et al.137 proposed META-SELD, a framework that applies Model-Agnostic Meta-Learning (MAML)140 to enable SELD models to adapt quickly to new acoustic environments using only a few samples. Building on this, Hu et al.141 later proposed environment-adaptive META-SELD, an enhanced framework that incorporates selective memory and environment-specific representations to mitigate conflicts between diverse acoustic environments.
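The mechanics of MAML-style adaptation can be illustrated on a toy problem. In the sketch below each "environment" is a one-parameter linear regression task, the inner loop takes one gradient step on a few support samples, and the outer loop updates the shared initialization using the loss after adaptation. The scalar model, task definitions, and learning rates are assumptions chosen for readability; this is the generic MAML update, not the META-SELD implementation.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def mse_hess(xs):
    """Second derivative of the same loss with respect to w."""
    return sum(2.0 * x * x for x in xs) / len(xs)


def maml_step(w, tasks, inner_lr=0.1, outer_lr=0.05):
    """One meta-update of the shared initialization w."""
    meta_grad = 0.0
    for (xs_s, ys_s), (xs_q, ys_q) in tasks:
        # Inner loop: adapt to the task with one step on its support set.
        w_adapted = w - inner_lr * mse_grad(w, xs_s, ys_s)
        # Outer gradient via the chain rule: d(w_adapted)/dw = 1 - lr * hessian.
        jacobian = 1.0 - inner_lr * mse_hess(xs_s)
        meta_grad += mse_grad(w_adapted, xs_q, ys_q) * jacobian
    return w - outer_lr * meta_grad / len(tasks)


# Two hypothetical environments, modelled as regression slopes 1.0 and 3.0.
tasks = []
for slope in (1.0, 3.0):
    support = ([1.0, 2.0], [slope * 1.0, slope * 2.0])
    query = ([0.5, 1.5], [slope * 0.5, slope * 1.5])
    tasks.append((support, query))

w = 0.0
for _ in range(500):
    w = maml_step(w, tasks)

# Few-shot adaptation to the second environment from the learned initialization.
w_env = w - 0.1 * mse_grad(w, *tasks[1][0])
```

The learned initialization sits between the two task optima, so a single inner step on two samples moves the model most of the way toward either environment.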

Beyond fixed taxonomies

A fundamental limitation of most SELD models is their reliance on a fixed, predefined list of sound classes. This makes them ill-suited for long-term, real-world deployment, where systems must adapt to novel sounds and evolving user needs. This has catalyzed research into more flexible and scalable paradigms, such as class-incremental learning. Pandey et al.142 proposed a class-incremental learning framework that enables SELD systems to incorporate new sound classes as additional information becomes available, without requiring complete retraining from scratch. Such methods address the challenge of “catastrophic forgetting” and will be essential for creating systems that can evolve throughout their operational lifetime.

Extending these concepts, the paradigm of open-vocabulary SELD has emerged, which aims to create systems not confined to any fixed class list. For instance, Shimada et al.143 proposed an embed-ACCDOA model that jointly predicts a spatial location and a semantic embedding for each sound event. By leveraging the knowledge of contrastive language-audio pre-training (CLAP) models144, these systems allow users to define target sound events using natural language text prompts at inference time. This represents a monumental step towards truly user-centric, scalable, and flexible SELD systems, enabling more open-ended and descriptive understandings of our acoustic environments.
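The inference-time matching step in such a system can be sketched as a similarity search between the embedding predicted for a localized event and the embeddings of user-supplied text prompts. In the sketch below, the three-dimensional vectors are hypothetical placeholders for the outputs of a text encoder, and the cosine-similarity rule, the 0.5 threshold, and all names are assumptions for illustration, not the published embed-ACCDOA procedure.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def match_prompt(event_embedding, prompt_embeddings, min_similarity=0.5):
    """Return (label, similarity) of the best prompt, or (None, similarity)."""
    best_label, best_sim = None, -1.0
    for label, emb in prompt_embeddings.items():
        sim = cosine(event_embedding, emb)
        if sim > best_sim:
            best_label, best_sim = label, sim
    if best_sim < min_similarity:
        return None, best_sim  # no prompt describes this event well enough
    return best_label, best_sim


# Hypothetical text-prompt embeddings (placeholders for real encoder outputs).
prompts = {"dog barking": [1.0, 0.0, 0.0], "door slam": [0.0, 1.0, 0.0]}

# Hypothetical model output for one event: a direction and a semantic embedding.
event = {"azimuth_deg": 30.0, "elevation_deg": 0.0, "embedding": [0.9, 0.1, 0.0]}
label, similarity = match_prompt(event["embedding"], prompts)
unmatched, _ = match_prompt([0.0, 0.0, 1.0], prompts)
```

Because the class list lives entirely in `prompts`, new target sounds can be added at inference time by embedding a new text description, with no change to the localization output.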

Advancing data-efficient learning paradigms

The high cost of curating large-scale, strongly-labeled datasets remains a fundamental bottleneck for SELD, challenging model generalization in real-world deployments48,61. While data augmentation techniques are effective74,99,107, there is a growing need for learning paradigms that can substantially improve model robustness while reducing the reliance on labeled data.

This has motivated research into semi-supervised and self-supervised learning, which leverage large volumes of readily available unlabeled audio to learn powerful representations. For instance, Santos et al.145 pre-trained a wav2vec-style encoder146 directly on unlabeled FOA recordings, showing substantial performance gains when fine-tuned on a small amount of labeled data. Similarly, Nozaki et al.147 proposed a source-aware spatial self-supervised learning method that uses blind source separation to inherently separate and localize sound sources, reducing the need for extensive labeled data during fine-tuning.

A parallel and highly promising direction is the development of zero- and few-shot learning frameworks, which demonstrate that SELD systems can potentially learn to detect and localize sound events with minimal or even no labeled examples for those specific classes143,148. Together, these data-efficient learning paradigms are crucial for overcoming the annotation bottleneck and unlocking the full potential of SELD for scalable deployment.

Conclusion

SELD has matured into a vibrant field, propelled by advancements in deep learning. This review has charted its progress: from foundational CRNNs to sophisticated attention-based architectures, and from generic spectrograms to specialized, spatially-aware input features. Concurrently, systematic refinements in data augmentation and output formats have enabled the handling of complex polyphonic scenes. Collectively, these advancements have significantly enhanced the ability of computational systems to parse acoustic scenes, bringing the goal of Environmental Acoustic Intelligence closer to reality.

Despite this progress, a critical gap persists between benchmark performance and the demands of practical, real-world deployment. State-of-the-art models are often too computationally intensive for low-latency inference on edge devices. Furthermore, overlapping same-class sources, complex acoustic environments, and the limited availability of large-scale, strongly-labeled datasets continue to challenge current methods. These challenges, however, directly highlight the most promising avenues for future research, which include exploring open-vocabulary learning, incorporating 3-D distance estimation, and creating adaptive models that can generalize to unseen environments.

Moving forward, the advancement of SELD will hinge on a multi-faceted effort. Coordinated benchmarking initiatives, such as the DCASE Challenges, will remain indispensable for steering research efforts and ensuring rigorous, comparative evaluation. As the field addresses these frontiers, SELD will transition from a promising academic pursuit into a versatile and widely deployed technology. In doing so, it will not only enhance how machines hear but will form a critical component of holistic machine perception, enabling systems to understand and interact with their environments – achieving unprecedented Environmental Acoustic Intelligence.

Data availability

No datasets were generated or analysed during the current study.

References

Gemmeke, J. F. et al. Audio set: an ontology and human-labeled dataset for audio events. In 2017 IEEE international conference on acoustics, speech and signal processing (ICASSP), 776–780 (IEEE, 2017).

Virtanen, T., Plumbley, M. D. & Ellis, D. Computational analysis of sound scenes and events (Springer, 2018).

Hirvonen, T. Classification of spatial audio location and content using convolutional neural networks. In Audio Engineering Society Convention 138 (Audio Engineering Society, 2015).

Risoud, M. et al. Sound source localization. Eur. Ann. Otorhinolaryngol. Head. Neck Dis. 135, 259–264 (2018).

Adavanne, S., Politis, A., Nikunen, J. & Virtanen, T. Sound event localization and detection of overlapping sources using convolutional recurrent neural networks. IEEE J. Sel. Top. Signal Process. 13, 34–48 (2018).

Jekateryńczuk, G. & Piotrowski, Z. A survey of sound source localization and detection methods and their applications. Sensors 24, 68 (2023).

He, W., Motlicek, P. & Odobez, J.-M. Deep neural networks for multiple speaker detection and localization. In 2018 IEEE International Conference on Robotics and Automation (ICRA), 74–79 (IEEE, 2018).

Tan, E.-L., Karnapi, F. A., Ng, L. J., Ooi, K. & Gan, W.-S. Extracting urban sound information for residential areas in smart cities using an end-to-end IoT system. IEEE Internet Things J. 8, 14308–14321 (2021).

Kotus, J., Lopatka, K. & Czyzewski, A. Detection and localization of selected acoustic events in acoustic field for smart surveillance applications. Multimed. Tools Appl. 68, 5–21 (2014).

Bello, J. P. et al. SONYC: a system for monitoring, analyzing, and mitigating urban noise pollution. Commun. ACM 62, 68–77 (2019).

Nguyen, T. N. T. et al. What makes sound event localization and detection difficult? Insights from error analysis. In Proceedings of the 6th Detection and Classification of Acoustic Scenes and Events 2021 Workshop (DCASE 2021), 120–124 (Barcelona, Spain, 2021).

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Chan, T. K. & Chin, C. S. A comprehensive review of polyphonic sound event detection. IEEE Access 8, 103339–103373 (2020).

Mohmmad, S. & Sanampudi, S. K. Exploring current research trends in sound event detection: a systematic literature review. Multimed. Tools Appl. 83, 84699–84741 (2024).

Mesaros, A., Heittola, T., Virtanen, T. & Plumbley, M. D. Sound event detection: a tutorial. IEEE Signal Process. Mag. 38, 67–83 (2021).

Desai, D. & Mehendale, N. A review on sound source localization systems. Arch. Comput. Methods Eng. 29, 4631–4642 (2022).

Grumiaux, P.-A., Kitić, S., Girin, L. & Guérin, A. A survey of sound source localization with deep learning methods. J. Acoust. Soc. Am. 152, 107–151 (2022).

Mohmmad, S. & Sanampudi, S. K. A parametric survey on polyphonic sound event detection and localization. Multimed. Tools Appl. 84, 22083–22120 (2024).

Politis, A., Mesaros, A., Adavanne, S., Heittola, T. & Virtanen, T. Overview and evaluation of sound event localization and detection in DCASE 2019. IEEE/ACM Trans. Audio Speech Lang. Process. 29, 684–698 (2020).

Berghi, D., Wu, P., Zhao, J., Wang, W. & Jackson, P. J. Fusion of audio and visual embeddings for sound event localization and detection. In ICASSP 2024-2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 8816–8820 (IEEE, 2024).

Krause, D. A., Politis, A. & Mesaros, A. Sound event detection and localization with distance estimation. In 32nd European Signal Processing Conference (EUSIPCO), 286–290 (Lyon, France, 2024).

Mesaros, A., Heittola, T. & Virtanen, T. Metrics for polyphonic sound event detection. Appl. Sci. 6, 162 (2016).

Shabbir, A. et al. Enhancing smart home environments: a novel pattern recognition approach to ambient acoustic event detection and localization. Front. Big Data 7, 1419562 (2025).

Svatos, J. & Holub, J. Impulse acoustic event detection, classification, and localization system. IEEE Trans. Instrum. Meas. 72, 1–15 (2023).

Park, J., Cho, Y., Sim, G., Lee, H. & Choo, J. Enemy spotted: In-game gun sound dataset for gunshot classification and localization. In 2022 IEEE Conference on Games (CoG), 56–63 (IEEE, 2022).

Banchero, L., Vacalebri-Lloret, F., Mossi, J. M. & Lopez, J. J. Enhancing road safety with AI-powered system for effective detection and localization of emergency vehicles by sound. Sensors 25, 793 (2025).

Kojima, R., Sugiyama, O., Suzuki, R., Nakadai, K. & Taylor, C. E. Semi-automatic bird song analysis by spatial-cue-based integration of sound source detection, localization, separation, and identification. In 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 1287–1292 (IEEE, 2016).

Butko, T., Pla, F. G., Segura, C., Nadeu, C. & Hernando, J. Two-source acoustic event detection and localization: Online implementation in a smart-room. In 2011 19th European Signal Processing Conference, 1317–1321 (IEEE, 2011).