Abstract

This study examines how recorded soundscapes and the visual field influence participants’ emotional responses in an urban environment in Okinawa, Japan. Despite a breadth of research on soundscapes and well-being, and despite the topic’s importance for the design of restorative spaces, very little work has addressed sensory congruence and well-being for natural vs. urban soundscapes in a controlled environment. This study asks: how do natural and synthetic soundscapes differentially influence emotional responses, and how does coloured lighting, in combination with these soundscapes, modulate such responses? We report results from an immersive environmental installation in a pedestrian tunnel. The acoustic variables were natural sounds, comprising local recordings of wildlife and natural phenomena, and synthetic sounds generated from digital waveforms and filters. Coloured lighting, ranging from warm to cool colours, was presented in conjunction with the sounds. Participants recorded their responses on a tablet-based interface using an emotional circumplex, and the data were analysed quantitatively. The findings show that natural sounds played in the tunnel, in combination with particular colours, significantly affected participants’ emotional well-being. These results have important implications for the design and consideration of sound in urban environments.

Introduction

This paper presents the initial findings of an interdisciplinary research programme conducted by Okinawa Institute of Science and Technology Graduate University (OIST)’s Sonic Lab, Sony CSL Japan, and UCL’s Bartlett School of Architecture.

Soundscapes and their perceived impact on well-being are a popular subject for architects and urban planners, especially since R. Murray Schafer argued that more thought should be given to how our environments sound alongside their visual characteristics. Considerable research has sought to establish the health-related effects of the sonic qualities of soundscapes1. Key findings suggest that natural environments and their associated soundscapes can enhance mood, cognition and restorative well-being2. The literature largely focuses on sound in isolation; few studies take multisensory approaches, and fewer still consider how cross-modal perception shapes the effect of soundscapes on well-being. There is a recognised need to explore ‘multisensory experiences’3, yet there is scarce evidence on how colour affects the efficacy of soundscapes for well-being. The outcomes of this paper are essential for the development of urban environments, interiors and spaces for people, especially where pre-recorded or simulated soundscapes are being considered as a means of promoting well-being and human comfort.

Urban sound, and especially environmental noise, is an increasing health problem, with established links between noise and cardiovascular, cognitive and sleep-related outcomes. Tens of millions of people are exposed to harmful noise levels, which calls for significant public health intervention4. The impact of noise on the cardiovascular system is significant, even when isolated from air pollution5. A 2006 review of epidemiological studies found that traffic noise is linked to high blood pressure, heart disease and other cardiovascular risks6. Environmental noise exposure causes stress reactions, hypertension and oxidative stress, all of which link chronic noise to heart disease7.

Whereas urban soundscapes and environmental noise are clearly detrimental to health and well-being, the link between natural soundscapes and improved well-being is well documented. A systematic review of the psychological and physiological effects of soundscapes found consistent health benefits, including stress reduction8. These benefits are, however, most prevalent in largely open settings such as green spaces and natural parks, especially as measured by WHO-5 Well-Being scores1,9.

The consistent element in the studies of health benefits of soundscapes is that natural soundscapes support emotional, cognitive and physical health. Bio-diverse soundscapes have been shown to support both human well-being and ecosystem health10. Individual natural elements have been singled out as beneficial, such as birdsong and bird-related soundscapes, enhancing psychological restoration11.

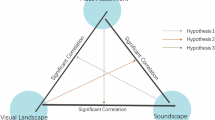

Natural environments are, by their nature, multisensory spaces, and their impact on the senses should be considered in the round, with visual influence being as important as sound in shaping emotional response. Multisensory congruence has been studied in cars12, between colour and sound attributes such as pitch and loudness13, and for musical instrument timbre, especially the impact of colour on the perception of tone14. These studies suggest that sound cannot be understood in isolation, and that visual context is critical to models of perceived soundscape pleasantness15. However, work on multimodal congruence in soundscapes is limited to a few studies. For urban and man-made environments, the effect of visual context on the soundscape has been examined in residential areas, looking at how architectural conditions influence soundscape evaluation16,17. The visual environment, especially colour, has been shown to have a statistically significant effect on noise annoyance judgments, especially in terms of loudness18.

As for the experimental approach, studying responses to soundscapes in more natural conditions, away from rigid laboratory settings, is proving to be valuable. One interactive public installation has been used to observe how people react to the soundscape19. Another recent study used a combination of four public installations and written surveys to explore how the sounds used, the venues, and other factors present at each venue contribute to the subjects’ perception of valence and well-being20. However, how soundscapes and colours interact to influence emotional state remains unexplored in this context.

Thus, despite the breadth of study into the visual field, sound, soundscapes and well-being, there is a paucity of focused research into the impact of colour, and of colour temperature in particular, on the efficacy of recorded soundscapes. The work outlined here is a controlled study into how the colour temperature of the visual environment affects emotional responses to both synthetic and natural soundscapes. The focus was on the following questions:

1. How do natural and synthetic recorded soundscapes differ in their influence on emotional responses in an urban environment?

2. How does lighting (warm vs cool colour temperatures) interact with soundscapes to shape these responses?

Results

Overview of the data acquired

The experiment consisted of 25 days of audiovisual presentations to investigate the effect of synthetic versus natural sounds on the emotional states of participants in an urban environment (Fig. 1A). During this period, the study collected a total of 3365 responses from the two survey stations combined. The number of responses was largely consistent from day to day, although with a slight decline over time and minor deviations on holidays (Fig. 1B). Over the course of a day, the response frequencies fluctuated with the traffic in the tunnel (Fig. 1C). Comparable numbers of responses were obtained across stimulus conditions (Fig. 1D).

Fig. 1. A Matrices representing acoustic stimuli presented for every 20-minute slot for blue and red visual environments (top and bottom, respectively); categories of sound presented are colour-coded as shown in the legend; white squares indicate that the stimulus was presented in the other colour environment. B Number of responses collected per day. Data from the two survey stations are combined. Data from workdays (Monday–Friday) are shown in black; red points correspond to data from holidays (Saturday, Sunday, and national holidays). C Number of responses per 20-minute slot for workdays (black) and holidays (red). Mean and SD shown. D Responses obtained from the whole experimental period for each sound category in conjunction with a specific visual environment.

Fig. 2. A All responses on Russell’s circumplex survey for the three sound categories. The vertical and horizontal axes correspond to valence and activation components. B Average vectors for the data in (A) with the same colour coding. The range of directions corresponds to the 95% confidence interval, obtained by the bootstrap method. C Magnitude of the average vectors for the three sound categories, with 95% confidence interval obtained by bootstrap. P < 0.001, one-way ANOVA for bootstrapped samples.

Effect of synthetic vs. natural sound on the emotional states

The data collected above were first used to assess whether the participants’ emotional states depended on the sound categories, regardless of what visual stimuli were present. To this end, the two-dimensional coordinates that the participants entered on the circumplex survey for the natural sound, synthetic sound, and no sound conditions were compared. On average, the responses tended to have positive valence and positive activation levels for all categories. This result is evident in the greater density of points in the upper right quadrants (Fig. 2A). The direction of the resultant vector did not differ statistically across the three sound categories (Fig. 2B). However, the responses to the synthetic sounds were less coherent overall than those to the natural sounds and no sound conditions, manifesting in the smaller magnitude of the resultant vector for the synthetic sounds (Fig. 2C; average vector lengths = 56 pixels [95% confidence interval: 48.50, 64.79], 30.70 pixels [23.09, 38.98], and 56.30 pixels [49.01, 63.68] for the no sound, synthetic, and natural sound categories, respectively). In summary, the synthetic sounds used in our study reduced the coherence of participants’ emotional responses, indicating a negative impact on their emotional well-being.
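The vector averaging and bootstrap confidence interval described above can be sketched as follows. This is a minimal illustration, not the authors' MATLAB analysis: the function names, the percentile bootstrap, the number of resamples, and the example coordinates are all assumptions, as is the convention that x is valence and y is activation, measured in pixels from the centre of the circumplex.

```python
import math
import random

def mean_vector(points):
    """Vector-average (x, y) responses; return (direction in degrees, length)."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    return math.degrees(math.atan2(my, mx)), math.hypot(mx, my)

def bootstrap_length_ci(points, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the length of the mean vector."""
    rng = random.Random(seed)
    lengths = []
    for _ in range(n_boot):
        # Resample responses with replacement, keeping the sample size fixed.
        resample = [rng.choice(points) for _ in points]
        lengths.append(mean_vector(resample)[1])
    lengths.sort()
    low = lengths[int((alpha / 2) * n_boot)]
    high = lengths[int((1 - alpha / 2) * n_boot) - 1]
    return low, high

# Made-up responses (assumed: x = valence, y = activation, pixels from centre).
responses = [(120, 80), (60, 150), (-40, 30), (200, 10), (90, -20), (30, 60)]
direction, length = mean_vector(responses)
low, high = bootstrap_length_ci(responses)
print(f"direction = {direction:.1f} deg, length = {length:.1f} px")
print(f"95% bootstrap interval for length: [{low:.1f}, {high:.1f}]")
```

A short mean vector arises when responses point in many directions and cancel out, which is why the text reads its length as a measure of coherence.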

Congruence of sound and visual environment appears specifically for natural sounds

To further understand the difference between responses to natural and synthetic sounds, the analysis was separated by individual scenes within categories, as well as by the hues of the visual environment. Again, the quality and strength of emotional tendencies were analysed using the circumplex coordinates. For the synthetic sounds, none of the acoustic scenes produced a positive effect for any visual condition, indicating that the adverse influence of the synthetic sounds was general for our stimulus sets. For the natural sounds, in contrast, certain juxtapositions of acoustic scenes and hues produced a marked increase in valence. The enhancement in valence was observed for the ocean scene in the blue environment (Fig. 3A, B; difference in average vector lengths (cool − warm) = 58.73 pixels; C.I. for null distribution = [−59.20, 50.11]). For the rainy scene with frog calls, a significant increase in valence was observed preferentially in the red visual environment (difference in average vector lengths (cool − warm) = −65.68 pixels; C.I. for null distribution = [−56.22, 48.83]). Overall, our results suggest that multimodal stimulus presentations are beneficial for emotional well-being, but the effect is specific to the combination of acoustic scene and colour.
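The comparison above sets an observed difference in average vector length (cool minus warm) against a null interval. One way to build such an interval is the label shuffling described in the Methods, sketched below. The function names, the two-sided percentile interval, and the toy data are assumptions for illustration; only the 10,000 shuffles come from the paper.

```python
import math
import random

def vector_length(points):
    """Length of the vector average of a list of (x, y) responses."""
    n = len(points)
    return math.hypot(sum(x for x, _ in points) / n,
                      sum(y for _, y in points) / n)

def shuffled_difference_ci(cool, warm, n_shuffles=10000, alpha=0.05, seed=0):
    """Observed (cool - warm) length difference and its label-shuffled null interval."""
    rng = random.Random(seed)
    observed = vector_length(cool) - vector_length(warm)
    pooled = list(cool) + list(warm)
    null = []
    for _ in range(n_shuffles):
        # Shuffling the pooled responses reassigns colour labels at random.
        rng.shuffle(pooled)
        null.append(vector_length(pooled[:len(cool)])
                    - vector_length(pooled[len(cool):]))
    null.sort()
    low = null[int((alpha / 2) * n_shuffles)]
    high = null[int((1 - alpha / 2) * n_shuffles) - 1]
    return observed, (low, high)

# Toy data: coherent responses under "cool", mutually cancelling under "warm".
cool = [(100, 100)] * 10
warm = [(100, 0), (-100, 0)] * 5
observed, (low, high) = shuffled_difference_ci(cool, warm, n_shuffles=2000)
print(observed, low, high)
```

An observed difference falling outside the null interval, as reported for the ocean and rain scenes, is then read as a colour effect for that scene.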

Fig. 3. A Responses on the emotional circumplex survey separated by individual acoustic scenes for the synthetic category (left) and natural category (right), and further distinguished by the visual environment (red vs. blue hues). Thick lines are the average vectors. B Summary data showing the magnitude of the average vectors for each sound-colour combination. Dots correspond to the observed average vector length, with the range of the 95% confidence interval obtained by bootstrap resampling. *Significant at an alpha level of 0.05. p = 0.018 and 0.02 for the effect of colour on emotional responses to natural coral and wave sounds and rain and frog sounds, respectively.

Discussion

The scope of the experiment was broad: we examined how visual environments shape the perception of natural and synthetic soundscapes, as well as their effects on well-being. Colour influenced participants’ emotional responses to natural sounds, though the strength of the effect varied by sound type. The study took place in an uncontrolled public space, which allowed a large number of responses to be collected without behavioural constraints but introduced limitations. These included slight modulation of the tunnel acoustics due to the absorptive effect of participants, potential sample bias (most participants were local users familiar with the space), and a lack of complete control over environmental variables. Both the strengths and weaknesses of these conditions are considered in interpreting the results.

The specificity of the observed colour–sound relationships suggests that congruence enhances the recognisability of scenes21. Participants may have drawn on personal associations, such as linking the warmth of sodium lighting with familiar night-time natural sounds like frogs, creating comfort through resonance with lived experience. Strong pairings emerged between land-based phenomena and warm colours, and aquatic sounds with cool colours. Recognition of scenes may thus be bound to emotional memory and cultural context, particularly as participants were local to Okinawa. This raises the question of whether the effects are site-specific or generalisable, requiring further research.

A further limitation was the reliance on self-reported data at the end of the experience, which may have introduced noise, as emotional states are influenced by many factors. Collecting paired data at both entry and exit could reduce this. Exploring responses across demographic variables—age, gender, cultural background, and familiarity with the site—would also clarify whether the effects are universal or group-specific.

In nature, soundscapes evolve continuously without exact repetition, and this variability may sustain engagement. Synthetic sounds, designed to replicate natural ones at the same SPL, produced unexpectedly negative reactions. Their similarity and slight deviation from natural patterns may have created an “uncanny valley” effect. From a predictive coding perspective, such near-matches may generate error signals, as the brain expects truly natural acoustic patterns. Natural sounds are generated by thousands of distributed micro-events, which are difficult to replicate without immense computational power. Future work could refine synthetic sounds to reduce these inconsistencies or lower amplitudes to perceptual thresholds, potentially achieving effects closer to natural soundscapes. Such findings align with Attention Restoration Theory (ART22) and Stress Recovery Theory (SRT23), which emphasise the cognitive and emotional benefits of natural stimuli, though from different perspectives—ART on replenishing attention, and SRT on emotional and physiological recovery. Both highlight the multisensory integration underlying well-being.

The influence of colour on these outcomes was clear. However, our experiments were limited to warm and cool tones, primarily red and blue. Further work should expand the colour space to include secondary and interstitial colours (orange, purple), as well as variations in saturation, brightness, and contrast. Tests should compare abstract single-colour stimuli with more naturalistic palettes, such as forests and fields, and examine whether effects persist beyond exposure. Studies in other cultural contexts are also needed to test whether associations are universal or culturally constructed.

The multisensory effects observed suggest integration is more complex than simple addition. Pairings like “ocean sounds with blue” benefited from semantic congruence, while counterintuitive combinations such as “rain with red colour” likely drew strength from cultural associations. In Japanese culture, for instance, red symbolises both danger and protection, potentially amplifying the restorative impact of rain sounds through feelings of security.

These insights have implications for restorative urbanism and salutogenic design, particularly in artificial environments where sensory cues must be carefully considered for their impact on long-term well-being. They may inform urban planning policies that recommend specific colour–sound pairings for different functions and help design inclusive spaces for those with sensory impairments.

Finally, the strong preference for natural over synthetic sounds supports both the biophilia hypothesis and sound object recognition theory: people are comforted not just by natural stimuli, but also by the ability to correctly identify them. Synthetic sounds, by obscuring source recognition, created cognitive dissonance and discomfort.

Overall, the study highlights how colour, soundscape, and light interact to influence well-being. Since artificial light always carries a colour temperature—from warm reds and yellows to cool blues and greens—its role alongside sound requires closer study in both urban and interior design contexts.

In summary, this study investigated the influence of audiovisual stimuli on the emotions of passersby in an everyday environment: a public passageway. There were three main findings. First, natural sounds, such as birdsong and ocean waves, consistently guided people’s emotions in a positive direction. Second, synthetic sounds mimicking them did not produce the expected effect but rather had a negative impact, lacking emotional coherence. The third and most significant finding was that specific sound-colour combinations markedly enhanced emotions; namely, the ‘ocean sound and blue colour’ and ‘rain sound and red colour’ combinations significantly increased positive emotional valence compared to other pairings.

The findings of this study offer important implications for the design of public spaces. By not just introducing natural elements into sterile environments like tunnels and waiting rooms, but by designing ‘meaningful sensory combinations’ rooted in the local culture and people’s experiences, these spaces can be transformed into ‘restorative environments’ that actively enhance well-being. Future research is expected to further explore the universality and cultural dependency of the effects of audiovisual experiences on emotion by using objective physiological indicators in addition to self-reports and by conducting similar experiments in different cultural contexts.

Methods

Research design

The experiment was set up as a public installation, “Oto-Iro” (“Sound-Colour” in Japanese), which was a month-long sound and video presentation held in a 100-metre tunnel of OIST (Fig. 4). The tunnel serves as a gallery for visitors as well as the entrance for the university. The experiment aimed to test the influence of natural vs. synthetic sounds, colours, and their juxtaposition on the emotional states of passersby in a non-laboratory setting. The choice of the space allowed repeated presentations of specific stimulus combinations to overlapping groups of participants, the majority of whom were commuters. The procedures have been approved by OIST’s Human Subject Research Ethics Committee.

Fig. 4. A Plan and section of the tunnel showing speakers and projection panels. B, C Photographs of the immersive environmental installation, for the “red” condition (B) and “blue” condition (C).

Variables and experimental conditions

Acoustic stimuli comprised natural and synthetic categories, in addition to no sound presentation. Natural scenes generally comprise different facets, yet the number of stimuli that could be presented during the experiment was limited. Previous studies found that the beneficial effect of natural sounds depends on whether the sounds are recognised24. With these considerations, the natural acoustic stimuli comprised mixtures of recordings from the local terrestrial scene (Ryukyu scops owl hoots against the background of night insects), ocean scene (gentle waves against the background of coral sounds), and rainy scene (frog sounds against the background of rainfall sounds). Natural sounds were recorded using the following equipment. Microphones: Sennheiser MKH416, Sennheiser MKH 8040, and AQUARIAN hydrophone. Recorders: ZOOM F6 and ZOOM H8. Format: 44.1 kHz/24 bit. The owl sound was recorded by OIST’s Okinawa Environmental Observational Network.

The synthetic sounds mimicked the natural sounds, but were synthesised from filtered white noise and basic waveforms. These sounds were modelled in Max/MSP, and each iteration of a sound was modulated using simple triggers and envelopes (attack, decay, sustain and release). The sounds were sequenced using random pattern generators to simulate the periodicity found in the natural source files. These were output as .WAV files at 44.1 kHz/24 bit.

Sound textures were analysed using C1 correlation (Fig. 5), which quantifies the degree of similarity in amplitude modulations across different acoustic frequency channels of a sound (McDermott & Simoncelli, 2011)24. Specifically, it captures correlations between modulation bands that are tuned to the same temporal modulation rate but lie in different cochlear frequency channels (52–8844 Hz in 30 bands). For each sound file (WAV files, sampled at 44.1 kHz, 16 bits per sample), the magnitude of signals in cochlear channels and their modulation were computed in MATLAB using the function melSpectrogram. Then, the pair-wise correlation of the modulation envelopes across cochlear channels was computed at the modulation frequency of 4.78 Hz using the MATLAB function corrcoef.
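The final step of this analysis, the pair-wise correlation of modulation envelopes across channels, can be illustrated with a small standard-library sketch. It assumes the envelopes have already been extracted and band-limited around the modulation rate of interest; the cochlear filtering done with melSpectrogram in the paper is not reproduced, and the toy channels and function names are hypothetical.

```python
import math

def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def cross_channel_correlation(envelopes):
    """Matrix of pair-wise correlations between per-channel modulation envelopes."""
    k = len(envelopes)
    return [[pearson(envelopes[i], envelopes[j]) for j in range(k)]
            for i in range(k)]

# Toy envelopes sampled at 100 Hz for 4 s, all modulated at 4.78 Hz.
rate_hz = 4.78
times = [i / 100 for i in range(400)]
channel_a = [math.sin(2 * math.pi * rate_hz * t) for t in times]
channel_b = [0.5 * math.sin(2 * math.pi * rate_hz * t) for t in times]  # co-modulated with a
channel_c = [math.cos(2 * math.pi * rate_hz * t) for t in times]        # quarter cycle out of phase

c1 = cross_channel_correlation([channel_a, channel_b, channel_c])
for row in c1:
    print(["%.2f" % value for value in row])
```

Channels whose envelopes rise and fall together give entries near 1, whereas channels modulated out of phase give entries near 0.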

Fig. 5. Colours correspond to the magnitude of the C1 correlation (see Methods) for each pair of cochlear channels. P < 0.001, one-way ANOVA for bootstrapped samples.

The sound and colour conditions were paired exhaustively, resulting in 14 specific combinations (3 natural sounds × 2 hues; 3 synthetic sounds × 2 hues; no sound × 2 hues), and presented in 20-minute blocks. The tunnel environment was darkened, and the main source of light was the projected images, whose colours comprised red vs. blue hues. The stimuli were presented from 8:00 to 18:00 every day, including weekends. As commuters typically arrive at around the same time every day, stimuli were randomly permuted across days for each 20-minute slot of the day to ensure that all combinations were matched in frequency for a given time of day. The tunnel was open to the public, so the exact acoustic parameters could change slightly depending on occupancy and the density of participants. See Fig. 4 for visual representations of the tunnel.
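The counting and randomisation described above can be sketched as follows. This is one plausible reading of the procedure, not the authors' scheduling code: the scene labels and function name are hypothetical, the 30 slots per day are derived here from 8:00 to 18:00 in 20-minute blocks, and the 25 days come from the Results.

```python
import itertools
import random

SCENES = ("terrestrial", "ocean", "rain")  # hypothetical labels for the three scenes
SOUNDS = (["none"]
          + [f"natural_{scene}" for scene in SCENES]
          + [f"synthetic_{scene}" for scene in SCENES])
HUES = ["red", "blue"]
COMBINATIONS = list(itertools.product(SOUNDS, HUES))  # 7 sound conditions x 2 hues = 14

def build_schedule(n_days=25, slots_per_day=30, seed=0):
    """Return schedule[day][slot]: each slot's combinations are permuted across days."""
    rng = random.Random(seed)
    schedule = [[None] * slots_per_day for _ in range(n_days)]
    for slot in range(slots_per_day):
        # Whole cycles through all combinations, plus a random subset for the remainder,
        # so every combination occurs almost equally often at this time of day.
        column = COMBINATIONS * (n_days // len(COMBINATIONS))
        column += rng.sample(COMBINATIONS, n_days % len(COMBINATIONS))
        rng.shuffle(column)
        for day in range(n_days):
            schedule[day][slot] = column[day]
    return schedule

schedule = build_schedule()
print(len(COMBINATIONS), "combinations;", len(schedule), "days x", len(schedule[0]), "slots")
```

Under these assumptions each combination appears once or twice in any given slot over the 25 days, which keeps stimulus frequency matched for commuters who pass at a fixed time.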

Acoustic stimuli were presented via 15 speakers controlled through an audio interface (MOTU 24Ao). Visual stimuli comprised abstract images, with warm reds and yellows, or cool blues and greens. These were presented via 20 projectors (25,600 pixels by 720 pixels in total) on spaced display panels spanning 90 metres by 1 metre. Audio and visuals were controlled simultaneously by a media processing programme built with TouchDesigner running on a Windows 11 machine (AMD GPU WX9100, Ryzen 7 3700X CPU).

The acoustic conditions within the tunnel are reported in Table 1.

Data collection

Human Ethics: Ethics permission was granted by the Okinawa Institute of Science & Technology Human Subjects Research Review Committee. Approval Date: December 12th 2024, Reference (HSR-2024-017). The approval granted by the Human Subjects Review Board aligns with the “Ethical Guidelines for Life Science and Medical Research involving Human Subjects” set by the Japanese government, which itself is based on the Belmont Report and the Declaration of Helsinki.

Consent to participate: There was no personal information associated with the data collected. All passersby were clearly notified before entering the premises of the presence of the camera and the procedure to anonymize the data. If, however, they did not want to participate in the study, they were able to take alternative routes of entry into the building.

Participants’ emotional states were assessed using Russell’s circumplex, a 2-dimensional coordinate system where one axis corresponds to valence and the other to arousal25. The survey was implemented as a MATLAB (R2024a) script on a workstation running Windows 11. Participants were prompted by signage to volunteer, whenever possible, to report their states using touchscreen survey stations installed at the two ends of the tunnel. All instructions were included on the panel. Upon touching the screen (IODATA Mobile Monitor, multi-touch, 21.5 inches, Full HD ADS panel), a point appeared briefly at the coordinate entered as visual feedback, followed by an acknowledgement page. The time and coordinates were automatically appended to a table and stored as a text file.

Data processing and analysis

The tables from the survey stations were imported into MATLAB for analysis. Data from two stations were combined for analysis. Coordinates for each condition were indexed based on the timestamps. For each category, the coordinates entered were vector-averaged to give the direction and strength. These resultant directions corresponded to the quality of emotional states, and the magnitudes to the coherence of the emotional state. Circular statistical methods26 were used for the statistical analysis of these summary measures. Null distributions were obtained by random shuffling of the labels repeated 10,000 times, for both the vector direction and the magnitude of the resultant vector.
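Indexing responses to conditions by timestamp, as described above, could look like the following sketch. The data layout (a list of timestamped coordinates and a day-by-slot schedule of condition labels), the function names, and the example dates are assumptions for illustration; only the 8:00 to 18:00 window and the 20-minute slots come from the paper.

```python
from datetime import datetime

def slot_index(timestamp, start_hour=8, end_hour=18, slot_minutes=20):
    """Map a timestamp to its slot of the day, or None outside presentation hours."""
    minutes = (timestamp.hour - start_hour) * 60 + timestamp.minute
    if minutes < 0 or timestamp.hour >= end_hour:
        return None
    return minutes // slot_minutes

def label_responses(responses, schedule, first_day):
    """Group (x, y) coordinates by the condition scheduled when each was entered.

    responses: list of (datetime, x, y); schedule[day][slot] gives a condition label.
    """
    grouped = {}
    for timestamp, x, y in responses:
        day = (timestamp.date() - first_day).days
        slot = slot_index(timestamp)
        if slot is None or not 0 <= day < len(schedule):
            continue  # entered outside the scheduled presentations
        grouped.setdefault(schedule[day][slot], []).append((x, y))
    return grouped

# Hypothetical one-day schedule and responses (dates are placeholders).
toy_schedule = [["ocean/blue", "rain/red"] + ["none/red"] * 28]
toy_responses = [
    (datetime(2025, 1, 6, 8, 5), 10, 20),
    (datetime(2025, 1, 6, 8, 25), 30, 40),
    (datetime(2025, 1, 6, 18, 30), 50, 60),  # after 18:00, so it is dropped
]
print(label_responses(toy_responses, toy_schedule, datetime(2025, 1, 6).date()))
```

The grouped coordinates can then be vector-averaged per condition to obtain the direction and magnitude summaries used in the analysis.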

Participant demographics and selection criteria

Participation was voluntary and anonymous. Employees of OIST commuting to and from work, as well as public visitors, were the target demographics. All entries during the experimental period were included in the study.

Data availability

The data that support the plots within this paper and other findings of this study are available from the corresponding authors upon request. No code was generated.

References

1. Aletta, F., Oberman, T. & Kang, J. Associations between positive health-related effects and soundscapes perceptual constructs: a systematic review. Int. J. Environ. Res. Public Health 15, 2392 (2018).

2. Ratcliffe, E. Sound and soundscape in restorative natural environments: a narrative literature review. Front. Psychol. 12, 570563 (2021).

3. Qu, S. & Ma, R. Exploring multi-sensory approaches for psychological well-being in urban green spaces: evidence from Edinburgh’s diverse urban environments. Land 13, 1536 (2024).

4. Hammer, M. S., Swinburn, T. K. & Neitzel, R. L. Environmental noise pollution in the United States: developing an effective public health response. Environ. Health Perspect. 122, 115–119 (2014).

5. Stansfeld, S. A. Noise effects on health in the context of air pollution exposure. Int. J. Environ. Res. Public Health 12, 12735–12760 (2015).

6. Babisch, W. Transportation noise and cardiovascular risk: updated review and synthesis of epidemiological studies indicate that the evidence has increased. Noise Health 8, 1–29 (2006).

7. Munzel, T., Gori, T., Babisch, W. & Basner, M. Cardiovascular effects of environmental noise exposure. Eur. Heart J. 35, 829–836 (2014).

8. Kong, P. R. & Han, K. T. Psychological and physiological effects of soundscapes: a systematic review of 25 experiments in the English and Chinese literature. Sci. Total Environ. 929, 172197 (2024).

9. Buxton, R. T., Pearson, A. L., Allou, C., Fristrup, K. & Wittemyer, G. A synthesis of health benefits of natural sounds and their distribution in national parks. Proc. Natl Acad. Sci. USA 118, e2013097118 (2021).

10. Francis, C. D. et al. Acoustic environments matter: synergistic benefits to humans and ecological communities. J. Environ. Manag. 203, 245–254 (2017).

11. Ratcliffe, E., Gatersleben, B. & Sowden, P. T. Bird sounds and their contributions to perceived attention restoration and stress recovery. J. Environ. Psychol. 36, 221–228 (2013).

12. Kim, T., Choi, K. & Suk, H.-J. Affective responses to chromatic ambient light in a vehicle. arXiv 2209.10761 (2022).

13. Spence, C. & Di Stefano, N. Coloured hearing, colour music, colour organs, and the search for perceptually meaningful correspondences between colour and sound. Iperception 13, 20416695221092802 (2022).

14. Reymore, L. & Lindsey, D. T. Color and tone color: audiovisual crossmodal correspondences with musical instrument timbre. Front. Psychol. 15, 1520131 (2024).

15. Ooi, K., Watcharasupat, K. N., Lam, B., Ong, Z. T. & Gan, W. S. in 2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) 1–5 (IEEE, 2023).

16. Zhou, Z., Ye, X., Chen, J., Fan, X. & Kang, J. Effect of visual landscape factors on soundscape evaluation in old residential areas. Appl. Acoust. 215, 109708 (2024).

17. Zhang, X., Ba, M., Kang, J. & Meng, Q. Effect of soundscape dimensions on acoustic comfort in urban open public spaces. Appl. Acoust. 133, 73–81 (2018).

18. Kitapci, K. & Akbay, S. Audio-visual interactions and the influence of colour on noise annoyance evaluations. Acoust. Aust. 49, 293–304 (2021).

19. Fraisse, V. et al. Exploring sound installation reactivity: acoustic niches in public space. Proc. Meet. Acoust. 58, 022002 (2025).

20. Fraisse, V., Tarlao, C. & Guastavino, C. Shaping city soundscapes: in situ comparison of four sound installations in an urban public space. Landsc. Urban Plan. 251, 105173 (2024).

21. Speer, M. E., Bhanji, J. P. & Delgado, M. R. Savoring the past: positive memories evoke value representations in the striatum. Neuron 84, 847–856 (2014).

22. Kaplan, S. The restorative benefits of nature: toward an integrative framework. J. Environ. Psychol. 15, 169–182 (1995).

23. Ulrich, R. S. et al. Stress recovery during exposure to natural and urban environments. J. Environ. Psychol. 11, 201–230 (1991).

24. Van Hedger, S. C. et al. The aesthetic preference for nature sounds depends on sound object recognition. Cogn. Sci. 43, e12734 (2019).

25. Russell, J. A. A circumplex model of affect. J. Pers. Soc. Psychol. 39, 1161–1178 (1980).

26. Fisher, N. I. in Statistical Analysis of Circular Data (ed. Fisher, N. I.) 257–268 (Cambridge Univ. Press, 1993).

Acknowledgements

We thank Nicholas M. Luscombe (Project Leader, COI-NEXT) for helping initiate the study and supporting its participation in the Bioconvergence Center of Innovation, OIST’s Okinawa Environmental Observation Network of Environmental Science and Informatics Section for providing the Ryukyu Owls sound from its archive and Cassondra George for the curation, Communication and Public Relations and Buildings and Facilities Management teams for help with the tunnel installation, Gail Tripp for advice on human experiments, and Natalia Koshikina and Sayori Gordon for administrative assistance. We are also grateful to Maho Hayashi and Junichi Shimizu from Sony Corporation for the technical assistance. The experiment system was built with the Spatial Sound XR system by Sony Corporation. This project was supported by funding from the Japan Science and Technology Agency’s Bio-convergence Centre of Innovation and OIST Graduate University.

Contributions

P.B., I.F., S.K. & N.L. conceptualised the study. I.F. & P.B. wrote the main manuscript text. I.F. prepared the figures. I.F. curated the data. N.L. recorded the natural soundscapes. S.N. & S.K. undertook the technical installation on site. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Fukunaga, I., Kasahara, S., Luscombe, N. et al. The impact of visual-auditory interactions on well-being: colour and soundscapes in urban spaces. npj Acoust. 2, 12 (2026). https://doi.org/10.1038/s44384-026-00048-7
