Abstract

Large language models (LLMs) already play an influential role in how humans access information. However, their behavior varies depending on their design, training, and use. We prompt a diverse panel of 19 popular LLMs to describe 3,991 prominent persons with political relevance, and then judge how positively they portray each person. When comparing these assessments, we find disparities in ideological positions between LLMs across different geopolitical regions (Arabic countries, China, Russia, and Western countries), and across different languages (the United Nations’ six official languages). Moreover, among only models from the United States, we find significant normative differences related to progressive values. Among Chinese models, we characterize division between internationally- and domestically-focused models. Our results suggest that the ideological stance of an LLM reflects the worldview of its creators. This poses the risk of political instrumentalization and raises concerns around technological and regulatory efforts aiming to make LLMs ideologically ‘unbiased’.

Similar content being viewed by others

Introduction

Large language models (LLMs) have rapidly become one of the most impactful technologies for AI-based consumer products. Serving as the backbone of search engines1, chatbots2, writing assistants3 and more, they increasingly act as gatekeepers of information4. Much attention has gone into the factuality of LLMs, and their tendency to ‘hallucinate’: to confidently and convincingly make unambiguously false assertions5,6. A growing body of recent research also focuses on broader ‘trustworthiness’, encompassing not only truthfulness but also safety, fairness, robustness, ethics, and privacy 7. In efforts to chart the ethical choices of LLMs, several recent papers have investigated the political and ideological views embedded within these LLMs8,9,10,11,12,13,14,15,16, where ideology may be defined as a “set of beliefs about the proper order of society and how it can be achieved”17.

Indeed, creating an LLM involves many human design choices18 which may, intentionally or inadvertently, engrain particular ideological views into its behavior. Examples of such design choices are the model’s architecture, the selection and curation of the training data, and post-training interventions to directly engineer its behavior (e.g., reinforcement learning from human feedback, system prompts, or other guardrails to mitigate or prevent unwanted outputs). An interesting question is therefore how the ideological positions exhibited by different LLMs differ from each other, and whether they may be reflecting the ideological viewpoints of their creators10.

Although the intention of LLM creators as well as regulators may be to ensure maximal neutrality, or adherence to universal moral values, such high goals may be fundamentally impossible to achieve. Indeed, philosophers, such as Foucault19 and Gramsci20 have argued that the notion of ‘ideological neutrality’ is ill-posed, and even potentially harmful. Mouffe, in particular, critiques the idea of neutrality, and instead advocates for agonistic pluralism: a democratic model where a plurality of ideological viewpoints compete, embracing political differences rather than suppressing them21. Thus, to gauge the impact of LLMs as gatekeepers of information on ideological thought, the democratic process, and ultimately on society, in the present paper, we investigate the ideological diversity among popular LLMs, while withholding judgment about which LLMs are more ‘neutral’ and which are more ‘biased’.

Yet, quantifiably eliciting the ideological position of an LLM in a natural setting is challenging. Past research has overwhelmingly resorted to directly questioning LLMs about their opinions on normative questions. Such studies typically submit LLMs to questionnaires designed for political orientation or sociological research, ask them to resolve ethical dilemmas, or poll them for their opinions on contentious issues8,9,10,11,12,13,14,15.

However, LLM responses to such unnatural, direct questions have been shown to be inconsistent and highly sensitive to the precise way in which the prompt is formulated5. For example, LLMs have a position bias when responding to multiple-choice questions22 Indeed, this inconsistency has also been observed in ideology testing on LLMs15, especially on more controversial topics16. This suggests that submitting LLMs to existing ideology questionnaires may poorly reflect their behavior during natural use, where ideological positions are not directly probed, and LLMs are allowed to elaborate on context. Therefore, the ecological validity of such studies may be limited. In work parallel to ours, Moore et al.16 considered open-ended questions for probing ideology, but they consider a limited set of LLMs and topics, and focus on measuring consistency rather than identifying deeper ideological diversity.

Moreover, ideological diversity between LLMs may not manifest itself along traditional dimensions, such as the left–right divide or the Democrat-Republican dichotomy in the United States. Approaches that are more open-ended than pre-existing tests and questionnaires may therefore help with understanding the full complexity of ideological diversity among LLMs.

Our study addresses these limitations by proposing a methodology with (i) high ecological validity, (ii) minimal assumptions about ideological dimensions, and (iii) a robust, representative analysis. We pursue (i) by asking LLMs to freely generate descriptions about people with political relevance, which we refer to as political persons, and only afterwards asking them to judge how positively or negatively the person is portrayed in the description. These assessments indirectly indicate LLMs’ favorability toward the ideological aims this person is known for, without needing to prepare normative questions with fixed dimensions (ii). The open-ended nature of our approach allows us to (iii) represent a large variety of political persons and ideological dimensions, compare LLMs across languages and regions, and validate whether the LLM is sufficiently knowledgeable about the political persons to make a meaningful assessment. We stress that all our analyses are comparative and do not assume a neutral ideological position exists.

As primary source for the list of political persons, we used the Pantheon dataset23: a large annotated database of historical figures from various fields, including politics, science, arts, and more, sourced from Wikipedia.

From the Pantheon dataset, we selected 3991 political persons using a combination of criteria, as described in full detail in section “Selection of political persons.” In summary, we first filtered out all political persons for which no full name was available, and who were born before 1850 or died before 1920, ensuring contemporary relevance of all political persons. While earlier historical figures could also be politically relevant, these temporal boundaries ensure we capture the most consequential figures from World War II onwards, providing a robust sample for analyzing contemporary political discourse. We then scored all remaining political persons according to their popularity on the different language editions of Wikipedia. Finally, we divided all occupations into four tiers and included a political person in the final selection if their popularity score exceeded a threshold that depended on the tier their occupation belonged to. The popularity threshold of a tier was chosen to be more permissive for occupations that may make a political person politically more divisive or controversial, or that are more rare in the Pantheon dataset. The distribution of political persons over tiers is shown in Table 1 and over countries in Fig. 8.

The broad selection of political persons ensures our study is maximally open-ended: it does not require prespecifying the ideological dimensions along which diversity will be examined. Yet, to enhance the interpretability of our analyses, we also annotated each of the political persons with tags based on the Manifesto Project’s coding scheme of political manifestos24, which we adapted to suit the individual-level tagging of political persons. This resulted in 61 unique tags that differentiate positive and negative sentiments toward specific ideological concepts (e.g., European Union  indicating a positive sentiment toward the EU, and European Union

indicating a positive sentiment toward the EU, and European Union  a negative sentiment). Further details on the tags are provided in section “Ideological Tagging”.

a negative sentiment). Further details on the tags are provided in section “Ideological Tagging”.

To ensure high ecological validity15 of our experimental design, we adopted a two-stage prompting strategy for eliciting an LLM’s sentiment toward a political person.

In Stage 1, we prompted an LLM to simply describe a political person, with no further instructions and without revealing to the LLM our intention to investigate the response for any assessments of their stance toward the person. This stage was designed to resemble the natural, descriptive information-seeking behavior of a typical LLM user. Then, in Stage 2, we presented the Stage 1 response to the same LLM in a new conversation, asking it to determine on a five-point Likert scale the sentiment toward the political person implicitly or explicitly reflected in the Stage 1 response. Each measurement thus captures the LLM’s ideological position both in the text that it generates about a person (in Stage 1), and in how it separately judges such generated content (in Stage 2). For illustration, an example of the Stage 1 and Stage 2 prompts and responses are provided in Fig. 1.

Example prompts in English on Edward Snowden, responses by Claude.

Using this strategy, we prompted each of the 19 LLMs listed in Table 2 about their sentiment toward each of the 3991 political persons in each of the six official UN languages: (Modern Standard) Arabic, (Simplified) Chinese, English, French, Russian, and Spanish. All queries were performed within the period of November 11 to December 12, 2024, and more details on the LLMs and our selection criteria are provided in section “Selection of LLMs.” Note that an LLM was only prompted in a language if they officially support it and the variant in which we represent the language (i.e., Modern Standard Arabic and Simplified Chinese) biases the results (See section “Prompt design translations” for details).

Prior work has shown that the evaluation of LLMs often lacks robustness5,15. In section “Response validation,” we provide a full discussion of the quality assurance mechanisms we employed. First, we checked whether the LLM’s Stage 1 description of the political person generally matches with the Wikipedia summary of that person, to ensure the LLM has an accurate enough understanding of the political person, and to rule out possible confusion with another person. Second, we ensure that the model adheres to the Likert scale in Stage 2.

Our final prompting strategy was designed to minimize the rate of invalid responses. We optimized the prompt design over the number of Stages (two or three), alternative formulations of the prompts in each stage, different rating scales, and various approaches for ensuring the output matches the rating scale. Section “Prompt design” provides further details on these design choices, the search strategy that led to them, and the translations of the prompt to all six languages.

Results

Our results begin with an exploratory biplot in section “Charting the ideological spectrum of LLMs,” which visualizes the ideological positions of all LLMs and languages in a two-dimensional space. Next in section “Ideologies also vary within geopolitical blocs,” we aggregate the sentiments of LLMs by the language in which they were queried and by the region where they were created. Finally in section “Ideologies also vary within geopolitical blocs,” we conduct an analysis within the group of LLMs created in the United States, and within those created in China.

Charting the ideological spectrum of LLMs

We first conduct an exploratory analysis of the ideological position of all LLM-language combinations, henceforth referred to as respondents. To this end, we converted the Likert scale to an equidistant numeric scale in [0, 1] and compute, for each respondent, the average score given to all political persons that are annotated with a particular tag, resulting in vector of 61 averages per respondent (see section “Mapping the Likert scale to a numeric scale” for for details). We then applied principal component analysis (PCA) to these respondent vectors to create a 2-dimensional PCA biplot25, i.e., a scatter plot of the first two principal component scores with arrows representing the contributions of the most influential tags toward these components. To clarify ideological diversity independent of the prompting language, the biplot also shows the averages over all languages of the respondents using the same LLM. Similarly, it shows the averages over all LLMs of respondents with the same language. Further details on the computation are provided in section “Mapping the Likert scale to a numeric scale”.

The resulting biplot in Fig. 2 already visualizes the most salient differences between the ideological positions of different respondents. The horizontal principal component, which explains 54.7% of the variance in the respondent vectors, broadly corresponds to progressive pluralism (left) versus conservative nationalism (right), with respondents prompted in the Western languages on the left and other languages on the right. The vertical (and lower variance) principal component, which explains 11.3% of the variance, broadly corresponds to a China-critical position (bottom), versus a multipolar, free-market world order (top). On the top left, clear outliers are the Teuken respondents prompted in French and in Spanish. Notably, Teuken was explicitly designed to reflect European values better than English-centric models26. Also on the far left but more toward the bottom is Google’s Gemini. The extreme right side of the biplot is populated by the respondents from the Arabic-oriented LLMs Jais and Silma.

All respondents are shown as translucent markers, with a color per prompting language and a shape per LLM. Gray, opaque markers show the average projection per LLM, and colored circles the average per language. Arrows represent the contributions of the 30 most influential tags toward the top two principal components, scaled to unit norm but with a thickness proportional to their actual norm. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

The biplot already shows that a respondent’s ideological position depends both on the prompting language and on the geopolitical region where the LLM was created. Next, we investigate these dependencies in a more targeted and quantitative manner.

Ideologies vary by language and by region

To investigate the effect of the prompting language, we computed, for each of the six languages, the average assessment of each ideology tag, averaged over all respondents that were prompted with that language. This results in six vectors of length 61, reflecting the average assessment in each language toward each tag. As some tags are generally rated more positively than others, and as we are only interested in relative differences between languages, we first zero-centered these vectors by tag, and subsequently by language. Further detail is provided in section “Radar plots”.

The resulting vectors are visualized in the radar plot in Fig. 3. Inspecting this radar plot reveals that Arabic-prompted respondents relatively favor political persons tagged with Tech & Infrastructure  , Protectionism

, Protectionism  , and Free Market

, and Free Market  , indicating a relative preference for free-market advocates.

, indicating a relative preference for free-market advocates.

Centering was done by subtracting the overall average score per tag, and the overall average score per language. The dotted line marks the average (zero) across languages. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

Chinese-prompted respondents are relatively more positive toward political persons tagged with Constitutional Reform  , Supply-side Economics

, Supply-side Economics  , and China (PRC)

, and China (PRC)  , indicating a pro-China stance somewhat more critical of constitutional reform. In line with this, LLMs in Chinese are highly negative toward political persons tagged with China (PRC)

, indicating a pro-China stance somewhat more critical of constitutional reform. In line with this, LLMs in Chinese are highly negative toward political persons tagged with China (PRC)  .

.

English-, French-, and Spanish-prompted respondents are strongly correlated. In comparison with the other languages, they relatively favor political persons tagged with Civic Mindedness  , Freedom & Human Rights

, Freedom & Human Rights  , Peace

, Peace  , Equality

, Equality  , Multiculturalism

, Multiculturalism  , Culture

, Culture  , Minority Groups

, Minority Groups  , Demographic Groups

, Demographic Groups  , Environmentalism

, Environmentalism  , Professionals

, Professionals  , Anti-Growth

, Anti-Growth  , and European Union

, and European Union  . Of these three languages, English appears to be generally more central in its ideological positions.

. Of these three languages, English appears to be generally more central in its ideological positions.

Russian-prompted respondents are relatively more positive toward political persons tagged with Russia/USSR  , Nationalization

, Nationalization  , Centralization

, Centralization  , Involved in Corruption

, Involved in Corruption  , Multiculturalism

, Multiculturalism  , Constitutional Reform

, Constitutional Reform  , United States

, United States  , Internationalism

, Internationalism  , National Way of Life

, National Way of Life  , European Union

, European Union  , and Economic Control

, and Economic Control  , indicating a critical perspective toward the West.

, indicating a critical perspective toward the West.

To investigate the effect of the region where the LLM was created, we computed average assessments per ideology tag, averaged over all respondents from each of four regions: Arabic Countries, China (PRC), Russia, and Western Countries. We processed the four resulting 61-dimensional vectors in the same manner, as visualized in the radar plot in Fig. 4.

Centering was done by subtracting the overall average score per tag, and the overall average score per bloc. The dotted line marks the average (zero) across regions. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

The most salient pattern is the large difference between respondents created in Arabic Countries and respondents from other blocs. Respondents from Arabic Countries are relatively more positive toward political persons annotated with tags, such as Multiculturalism  , Involved in Corruption

, Involved in Corruption  , Worker Rights

, Worker Rights  , Centralization

, Centralization  , and Constitutional Reform

, and Constitutional Reform  , while they are more negative toward political persons annotated with tags, such as Culture

, while they are more negative toward political persons annotated with tags, such as Culture  , Multiculturalism

, Multiculturalism  , Freedom & Human Rights

, Freedom & Human Rights  , Peace

, Peace  , Minority Groups

, Minority Groups  , Equality

, Equality  , Demographic Groups

, Demographic Groups  , and Civic Mindedness

, and Civic Mindedness  .

.

As for the other regions, respondents from Russian organizations are relatively more favorable toward political persons tagged with Anti-imperialism  , China

, China  , Traditional Morality

, Traditional Morality  , European Union

, European Union  , Nationalization

, Nationalization  , Russia/USSR

, Russia/USSR  , United States

, United States  and somewhat contradictorily also United States

and somewhat contradictorily also United States  , Protectionism

, Protectionism  , and Marxism

, and Marxism  . On the other hand, they are relatively more critical toward political persons tagged with Worker Rights

. On the other hand, they are relatively more critical toward political persons tagged with Worker Rights  and Involved in Corruption

and Involved in Corruption  . Respondents from China, on the other hand, are particularly critical of political persons tagged with China (PRC)

. Respondents from China, on the other hand, are particularly critical of political persons tagged with China (PRC)  . Respondents from Western Countries are particularly positive with respect to political persons annotated with tags, such as Culture

. Respondents from Western Countries are particularly positive with respect to political persons annotated with tags, such as Culture  , Minority Groups

, Minority Groups  , Equality

, Equality  , Demographic Groups

, Demographic Groups  , Civic Mindedness

, Civic Mindedness  , Multiculturalism

, Multiculturalism  , Freedom & Human Rights

, Freedom & Human Rights  , and Peace

, and Peace  , while they are relatively more critical of political persons with tags, such as Nationalization

, while they are relatively more critical of political persons with tags, such as Nationalization  , Russia/USSR

, Russia/USSR  , United States

, United States  , Protectionism

, Protectionism  , and Marxism

, and Marxism  .

.

Overall, when comparing the ideologies between respondents aggregated by language (in Fig. 3) and by region (in Fig. 4), we observe that the divide between regions generally maps well onto the divide between the dominant language in each corresponding region. Indeed, the region of origin of an LLM influences its responses in various ways: the region affects the choice of training data, the languages that the LLM should support, and the moderation policies that may be applied to its outputs, based on local norms or legal constraints. For example, a model like YandexGPT, which supports only Russian and English, may reflect the ideology of Russian texts more strongly because they make up a more significant fraction of the LLM’s training data. In contrast, a model like Claude, which supports all UN languages, operates within a broader linguistic context. Disentangling these mediators is challenging given the black-box nature of LLMs.

As language and region are thus closely intertwined, the compound effect may be even more pronounced. We illustrate this by directly comparing the set of Chinese LLMs prompted in Chinese, with the LLMs created by companies in the United States prompted in English. To do this, we average the score given to each political person over all respondents within each of both sets. The political persons where the difference between the averages in both sets is the largest, are shown in a forest plot in Fig. 5 (see section “Forest plots” for further details). Unsurprisingly given the results above, the list of political persons assessed significantly more favorably by the US English-language set of respondents is dominated by Hong Kong opposition politicians and Chinese human rights activists. Conversely, the list of political persons assessed significantly more favorably by Chinese models prompted in Chinese is dominated by USSR, North Korean, Russian, and Chinese leaders, with some notable exceptions.

Red line indicates overall mean difference. Only the top 20 most positive and top 20 most negative differences are shown.

Ideologies also vary within geopolitical blocs

A final question we address is if there is significant ideological variation between models created in the same region, when prompted in the dominant language in that region. We address this question for models made in the United States and for models made in China, as these two countries encompass the vast majority of AI funding27. Our US-China focus reflects the current geopolitical reality of AI influence rather than a dismissal of other regions’ importance. While our dataset includes models from Europe and Russia, practical AI influence depends critically on deployment scale and user adoption, where US and Chinese models currently dominate frontier development and enterprise usage.

For increased statistical power, we analyze the differences among US and China models at the level of the ideology tags, rather than at the level of the individual political persons. We do this for each tag by aggregating the difference in assessment across all political persons annotated with that tag. We display the resulting differences, and confidence intervals around them, as a forest plot for the ten tags with the largest positive and negative differences. See section “Forest plots” for further details on the computation.

For our analysis within the set of LLMs built in the United States, we focus on the two LLMs that occupy the most extreme positions in Fig. 2, namely Google’s Gemini and xAI’s Grok, with additional results provided in Fig. 24. Figure 6 shows that the Google LLM is significantly more favorable on average toward political persons annotated with tags related to progressive societal values and priorities aimed at fostering inclusivity, equity, and sustainability. The xAI LLM, on the other hand, is relatively more appreciative of political persons related to national sovereignty, centralized authority, and economic self-reliance, valuing national priorities over global integration. Similar analyses show that the Anthropic and OpenAI LLMs are ideologically similar to xAI’s, while Meta’s LLMs are ideologically more similar to Google’s.

a Gemini (Google). vs the rest. b Grok (xAI) vs the rest. The red line indicates the overall mean difference. Only the top ten most positive and top ten most negative differences are shown. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

For our analysis of LLMs created in China, we compare Alibaba’s Qwen and Baidu’s Wenxiaoyan LLMs, which occupy diverse positions in Fig. 2, despite both being created by very large tech companies in China. Additional results are reported in Fig. 25. As shown in Fig. 7, Alibaba’s LLM favors political persons related to sustainability and disadvantaged groups more strongly when compared to other Chinese LLMs. Baidu’s LLM, on the other hand, more strongly favors tags related to economic strategy and centralized planning relative to other Chinese LLMs. Moreover, both LLMs are comparatively on opposite sides of the Chinese LLM spectrum when it comes to supporting the United States and Europe versus China and Russia. These observations suggest that Baidu orients its LLM toward the local Chinese market28. Conversely, it appears that Alibaba is far more internationally oriented, possibly resulting from an ambition to have Qwen outperform Western LLMs on international leaderboards29.

a Qwen (Alibaba) vs the rest. b Wenxiaoyan (Baidu) vs the rest. The red line indicates the overall mean difference. Only the top ten most positive and top ten most negative differences are shown. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

Discussion

Designing LLMs involves numerous choices that affect the ideological positions reflected in their behavior. These positions can also vary depending on the language in which the LLM is prompted. We elicited these ideological positions by analyzing how the LLMs describe a large set of political persons. We examined how positively these descriptions reflect each person, and compared them across different respondents (LLM-language pairs). Most of our findings corroborate widely held but so far largely unsubstantiated beliefs about LLMs, broadly confirming that LLMs to some extent reflect the ideology of their creators.

For example, our results clearly suggest that the ideological position of an LLM is affected by the language in which it is prompted. Moreover, an LLM’s ideological stance is also affected by the geopolitical region where the creator of the LLM is located, with considerable and on the whole unsurprising differences between Arabic, Chinese, Russian, and Western LLMs. This suggests that ideological stances are not merely the result of different ideological stances in the training corpora that are available in different languages, but also of different design choices. These design choices may include the selection criteria for texts included in the training corpus or the methods used for model alignment, such as fine-tuning and reinforcement learning with human feedback.

Notably, also within geopolitical blocs, an ideological spectrum emerges. For example, within the LLMs from the United States, Google’s Gemini stands out as particularly supportive of progressive societal values. Among Chinese models, Baidu’s Wenxiaoyan LLM, which is oriented toward the local market, appears to be relatively more supportive of Chinese values and policies.

We emphasize that our results should not be misconstrued as an accusation that existing LLMs are ‘biased’ or that more work is needed to make them ‘neutral’. Indeed, our results can be understood as empirical evidence supporting philosophical arguments19,20,21 that neutrality is itself a culturally and ideologically defined concept. For this reason, our perspective has been to map out ideological diversity, rather than ‘biases’ defined as deviations from a position that is arbitrarily defined as ‘neutral’.

Our findings have several implications that may affect the way LLMs are used and regulated.

First and foremost, our findings should raise awareness that the choice of LLM is not value-neutral. While the impact thereof may be limited in technical areas, such as empirical sciences and engineering, its influence on other scientific, cultural, political, legal, and journalistic artifacts should be carefully considered. Particularly when one or a few LLMs are dominant in a particular linguistic, geographic, or demographic segment of society, this may ultimately result in a shift of the ideological center of gravity of available texts. Therefore, in such applications, the ideological stance of an LLM should be a selection criterion alongside established criteria, such as the cost per token, sustainability and compute cost, and factuality.

Second, our results suggest that regulatory attempts to enforce some form of ‘neutrality’ onto LLMs should be critically assessed. Indeed, the ill-defined nature of ideological neutrality makes such regulatory approaches vulnerable to political abuse, and to the curtailment of freedom of speech and (particularly) of information. Instead, initiatives at regulating LLMs may focus on enforcing transparency about design choices that may impact their ideological stances. Moreover, the strong ideological diversity shown across publicly available, powerful LLMs would even be considered healthy under Mouffe’s democratic model of pluralistic agonism21. To preserve this, regulatory efforts may focus on preventing de facto LLM-monopolies or oligopolies. At the same time, our findings may convince governments and regulators to incentivize the development of home-grown LLMs that better reflect local cultural and ideological views, particularly in regions where low-resource languages are dominant.

For LLM creators, our results and methodology may provide new tools to increase transparency about the ideological positions of their models, and possibly to fine-tune such positions. Moreover, though our study only offers a snapshot of the current ideological landscape, the methodology can be used to track changes in ideological positions over time. Our results may also incentivize LLM creators to develop robustly tunable LLMs, to easily and transparently align them to a desired ideological position, even by consumers after the models are put into production.

Our work has several limitations:

-

The geographical spread of the included political persons contrasts somewhat with regional population densities, with an overrepresentation of Western political persons, particularly from the United States, and an underrepresentation from Africa in particular. This may partly be explained by the West’s geopolitical role in the past century, but is also due to the political persons’ selection process (e.g., requiring their description on Wikipedia to be available in the six official UN languages). This demographic imbalance affects the interpretation of our results, as a political person’s region of origin could play a role as a confounder, e.g., if different LLMs value political persons sharing an ideological tag differently in different regions. A more complete view could be obtained by also including entities other than political persons in the analysis, such as countries or regions, historical events, or cultural artifacts.

-

Including more and more powerful LLMs may provide a more complete and detailed picture of the ideological landscape than the choice we made.

-

Our study only includes six languages, and it would be interesting to include lower-resourced languages into our analysis. Studying the differences between models trained on a small set of languages or even a single language and multilingual models may provide further insights into the source of the studied biases.

-

The Manifesto Project tags may not cover all interesting facets of ideology and no systematic validation of the tag annotations was performed, making us unable to properly characterize biases in the tagging process itself. Yet, it should be noted that, for consistency, all tag annotations were performed using the same LLM. Hence, any annotation errors will not reduce the statistical significance of our results, which focus on comparisons between models across the same political persons. Biases in the tagging process only influence which patterns we can find.

-

We did not control for potential geographical location effects on model responses, as all queries originated from our server in Belgium. While we believe model weights are generally consistent across geographical locations for most providers, some platforms may implement location-specific logic or content filtering. Future work should investigate whether geographical query origin systematically affects ideological assessments, particularly as providers may develop ‘localized’ model versions.

-

We did not aim to identify the causes of the ideological diversity, due to lack of sufficiently detailed information on the design process of most of the LLMs included in the study.

To conclude, we believe that our study and methodology can help creating much-needed ideological transparency for LLMs. To facilitate this, and to ensure reproducibility of this study, all our data and methods are made freely available. As future work, we envision that a dashboard to allow individuals to explore ideological positions of various LLMs would be useful.

Methods

Our methodology is concerned with a set of \({\mathcal{M}}\) LLMs. These models are treated as ‘black-box’ procedures such that, for a prompt x consisting of natural language text, we expect a response m(x) for any model \(m\in {\mathcal{M}}\). We query models in different languages \({\mathcal{L}}\), so we denote x(l) as an instance of a prompt text x in language \(l\in {\mathcal{L}}\), where all \(\{{x}^{(l)}| l\in {\mathcal{L}}\}\) are semantically similar.

We consider all six official languages of the United Nations (UN), i.e., our set \({\mathcal{L}}\) is defined as \({\mathcal{L}}=\) {‘Arabic’, ‘Chinese’, ‘English’, ‘French’, ‘Russian’, ‘Spanish’}. Yet, we only query each LLM in languages they support (see Table 3). Our data validation procedure also accounts for the fact that some LLMs have worse performance in some supported languages by filtering out poor responses in each language (see section “Response validation”).

Throughout our study, we consider the outputs of models in different languages as originating from distinct ‘respondents’ \(r\in {\mathcal{R}}\subset ({\mathcal{M}}\times {\mathcal{L}})\), e.g., r = (‘GPT-4o’, ‘French’) when querying GPT-4o with French variants of a prompt x. To simplify notation, we use r(x) ≜ m(x(l)) to refer to the output of respondent r = (m, l), i.e., the output of model m to prompt x in language l.

All prompts x follow the same structure, with the only semantic difference being the political person \(p\in {\mathcal{P}}\) to which they refer. The goal of each prompt is to generate a single value from an answer scale \({\mathcal{S}}\) that indicates the respondent’s opinion of p. For this, we use a Likert scale (note that we evaluated alternative scales for our prompt design in section “Prompt design”) \({\mathcal{S}}\) where

Through a multi-stage prompting strategy, we successfully map each raw LLM output r(x) to a single value in \({\mathcal{S}}\) for the vast majority of respondents r and prompts x. In the following sections, we detail each step of our methodology, and the motivation for all design choices.

Selection of political persons

In this section, we describe the process through which we selected the political persons \(p\in {\mathcal{P}}\) utilized in our experimental study. As a starting point we relied on the Pantheon dataset23. Pantheon is a large database of historical figures sourced from Wikipedia, containing information on over 88, 937 notable persons from various fields, including politics, science, arts, and more. The dataset includes metrics, such as the number of different Wikipedia language editions where each person appears, as well as the number of non-English Wikipedia page views, which allowed us to sort these figures according to their global relevance. We used the 2020 updated release of the Pantheon dataset, providing a more recent and relevant set of individuals for our analysis.

Given the large size of the dataset, we perform a filtering process to retain the most relevant political persons for our comparative analysis, while keeping it computationally manageable. This selection is not intended to be comprehensive or include all potentially divisive figures in history, but rather a sufficient sample to represent a diverse array of ideological positions relevant to modern politics. The filtering criteria are as follows:

-

Criterion 1: persons identified by their full name (e.g., first name and last name), to avoid ambiguity associated with single names or nicknames.

-

Criterion 2: born after 1850, focusing on modern political persons whose ideologies are still relevant and discussed, with the potential to be controversial. Since the current world order largely results from World War II and its aftermath, we set this date to include the most relevant leaders and figures from this period. Additionally, focusing on contemporary figures ensures that LLMs have been exposed to sufficient information about these political persons during training.

-

Criterion 3: died after 1920 or still alive. This additional filter removes an excess of military personnel who died in World War I and are generally less relevant to modern political conversations.

-

Criterion 4: wikipedia summary available in all six UN languages, as required by the response validation stages (section “Response validation”). This also ensures that the political persons are relevant across different linguistic and cultural contexts.

The filtered list of political persons is then ordered based on an Adjusted Historical Popularity Index (AHPI), which we introduce to better capture the relevance of more contemporary figures, in contrast to the original Pantheon index that tends to favor historical ones:

where L is the number of different Wikipedia language editions where the person appears, vNE is the number of non-English Wikipedia page views and CV is the coefficient of variation (CV) in page views across time.

When generating the list, we take a multi-tiered approach, based on the likelihood that the person’s occupation will make them politically divisive or controversial in some way.

-

Tier 1: includes the persons described by Pantheon as social activist, political scientist, and diplomat. These highly relevant and not overly abundant classes are included in their entirety in the final dataset.

-

Tier 2: includes politician and military personnel. While these occupations are clearly relevant, their high proportion in the original dataset leads us to filter them by imposing an AHPI threshold, albeit a low one, thus filtering out the least popular ones from the final dataset. We manually set the AHPI threshold to 13 for this tier.

-

Tier 3: includes the rest of the potentially relevant occupations, such as philosopher, judge, businessperson, extremist, religious figure, writer, inventor, journalist, economist, physicist, linguist, computer scientist, historian, lawyer, sociologist, comedian, biologist, nobleman, mafioso, and psychologist. As these occupations are arguably less controversial than those in tiers 1 and 2, we set the AHPI threshold to a higher value of 15 for this tier.

-

Tier 4: includes only the most relevant persons from the remaining occupations. As these occupations are arguably the least controversial, we set the AHPI threshold the highest for this tier, at 16.

With the indicated selections, the final dataset consists of 234 Tier 1 persons, 2137 from Tier 2533 from Tier 3, and 1087 from Tier 4, for a total of \(| {\mathcal{P}}| =3991\) persons. A map of where each person was born is shown in Fig. 8.

Bubble are centred on each country of origin in the set of political persons, scaled by amount of persons for each country.

Ideological tagging

To compare respondents across thousands of political persons, we tag each political person with high-level attributes describing their relation to political concepts and institutions, enabling us to aggregate individual-level answers in order to conduct analyses at the coarser tag level. Yet, due to the occupational and geographic diversity in our list of persons, we cannot simply apply a Western-centric partition of ‘left-wing’ and ‘right-wing’ ideology. Instead, we aim to open a variety of avenues along which ideological differences could manifest. Hence, we turn to the coding scheme Manifesto Project24, which was developed to understand what political parties prioritize in their political manifestos. Although our source texts differ—political manifestos versus political persons—we share the underlying aim: to identify the most ideologically salient topics associated with political actors.

We apply the Manifesto Project’s coding scheme to the Wikipedia summaries of each political person in \({\mathcal{P}}\) as a reference text for tag extraction, due to Wikipedia’s status as a primary online knowledge source and to its open-source nature - while acknowledging that Wikipedia’s use differs across countries and populations30, that Wikipedia summaries’ accuracy may be imperfect and be affected by political biases despite Wikipedia’s “neutral point of view" policy31. We use a standardized format to submit summaries to GPT-4 and require the output to be in JSON format. A shortened version of the template is shown in Fig. 9 for Edward Snowden. The tagged response is shown in Fig. 10.

In the actual template, we ask about all categories and use the entire Wikipedia summary as reference.

This categorization captures the key ideological positions associated with Snowden, such as his emphasis on freedom, human rights, and civic-mindedness, as well as his criticism of the United States' surveillance practices.

To reduce the complexity of our analysis, we only apply tags to the English summary: wikipedia’s most dominant language. However, this may impose a Western bias on who gets which ideology tags, in particular for subjective tags as Involved in Corruption  or Peace

or Peace  .

.

The Manifesto Project phrasing of ideological tags was written with political parties in mind, so we adapted the prompt for each category in the Manifesto Project’s taxonomy to better suit individual-level tagging. Specifically, we made the following modifications:

-

All references to ‘party’ were changed to ‘person’ to reflect the focus on tagging individuals rather than political parties.

-

We replaced occurrences of ‘the manifesto country’ with ‘their country’ and similarly adjusted phrases like ‘in the manifesto and other countries’ to ‘in their country and other countries’ for categories 101, 102, 108, 109, 110, 202, 203, 204, 406, 407, 601, 602, and 605. This change helps to generalize the taxonomy for non-manifesto contexts.

-

In addition to tags capturing opinions about the USA and the European Union, we added new tags to capture opinions about China and Russia. We modified indices 108 and 110 into subcategories 108_a, 108_b, etc., and 110_a, 110_b, etc., to account for these distinctions.

-

Tag 304 Political Corruption was divided into 304a Against Political Corruption and 304b Involved in Political Corruption to address ambiguity. This adjustment prevents confusion when distinguishing between individuals who oppose corruption and those accused of corrupt practices.

-

In the figures we report in this paper, we renamed the tags to be shorter and more easily understood without the full tag description. The mapping can be found in the code repository.

Figure 11 shows the frequency of the tags in our dataset.

Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

Remark that our approach relies on a single LLM (GPT-4) to assign ideological tags across all political figures without systematic human validation or inter-annotator agreement checks. While we performed manual spot-checks of tag assignments and made methodological adjustments (e.g., splitting the corruption tag when we observed systematic misclassifications), this methodological choice introduces several limitations. First, we did not conduct systematic inter-rater reliability studies or extensive prompt sensitivity testing, though we did experiment with prompt variations during development. Second, using only GPT-4 makes our tagging susceptible to that model’s specific biases and interpretation patterns, which may not generalize to other LLMs or human annotators. However, using a single LLM ensures consistent application of tagging criteria across all political figures, avoiding the complexity of establishing agreement metrics across different models or annotators.

We remark that our tagging approach requires GPT-4 to make binary true/false determinations for each ideological category based solely on the Wikipedia summary content. No confidence thresholds were applied, as LLMs do not provide reliable confidence estimates for such categorical judgments. Instead, the model’s binary output reflects its direct interpretation of whether sufficient evidence exists in the summary to support each tag assignment. We acknowledge that reducing complex ideological positions to binary classifications is inherently reductive. Political figures often hold nuanced, evolving, or context-dependent views that resist simple true/false categorization. For instance, a figure may support environmental protection in some contexts while opposing specific environmental regulations in others. Our binary approach necessarily obscures such ideological subtleties. However, this methodological choice represents a practical trade-off between analytical tractability and ideological nuance when conducting systematic analysis across thousands of diverse political figures.

Selection of LLMs

To evaluate the ideological positions of different LLMs and to answer the question of whether they reflect the ideological viewpoints of their creators, we constructed a representative set of models \({\mathcal{M}}\). These models were selected based on the following criteria:

-

Criterion 1: relevance. The models are widely used by the general public or exhibit high performance on the main LLM benchmarks.

-

Criterion 2: performance. The models are sufficiently large and recent to give sensible responses about all political persons.

-

Criterion 3: political diversity. The models reflect a diversity of political opinions on various topics.

-

Criterion 4: geographic and linguistic diversity. The models cover a diversity of geographical areas, including America, Europe, the Middle East, and Asia. Similarly, the models also cover all six UN official languages: Arabic, Chinese, English, French, Russian, and Spanish.

-

Criterion 5: programmatic access. The models expose interfaces for structured programmatic access.

These criteria aim to guarantee that the set \({\mathcal{M}}\) contains models with high societal impact (Criterion 1), with performances among the strongest available (Criterion 2), that represent a range of political, societal and economical views (Criteria 3 and 4) and that from a practical standpoint, the models can be queried and evaluated at scale (Criterion 5). While we have aimed to include a diverse set of models, we note that over half of the evaluated LLMs are US or Chinese-made, as these countries are at the forefront of LLM research and development32. Our selection includes both open-source and closed-source models as well as open-weights and closed-weights LLMs. In this context, open-source models are those for which, in addition to the model weights, also the model architecture, training code and pipeline and a permissive usage license are made publicly available. We note that regardless of their open/closed-source nature, all models evaluated can be regarded as black-box. This is mainly due to the fact that the training data and some methodological aspects are never fully disclosed. This information gap is one of the main motivations behind the present study.

Table 3 summarizes the evaluated methods, their main features, and additional details regarding the companies behind these models, as well as the API providers. Moreover, given that we aim to compare the responses of the LLMs in different languages, we also include the list of UN official languages that each model supports natively.

Prompt design

We have three goals for our prompt design:

-

(i)

The prompt should maximally align with natural user behavior.

-

(ii)

The conclusions we draw should be robust to how the prompt was phrased.

-

(iii)

The LLM should actually respond to the prompt, ideally with only a single label.

Goals (i), (ii), and (iii) are directly inspired by respectively the first, second, and third recommendation on identifying political biases in LLMs by Röttger et al.15. Clearly, goals (i) and (iii) are in conflict: ‘forcing’ an LLM to give single-label responses to difficult questions is unnatural for users to do, and it is not what LLMs were designed to do. We therefore take a multi-stage approach, where the first prompt is natural for users to ask (goal (i)), and the last prompt aims to establish the LLM’s ideological position (goal (iii)). To reach goal (ii), we carry out extensive data validation (see section ”Response validation”) and try many variations of the prompt design (see section “Prompt design”).

To design the prompt, we started by piloting various ideas. This led us to construct a modular prompt template with several dimensions of variation, outlined in Table 4. After selecting a variant in each dimension, the prompt template is built as follows:

-

1.

The ‘Stage 1a’ question is posed to the LLM and a response is received. The aim is to have the LLM write out what it knows about the person < VAR >. It is similar to what a user might ask during normal use.

-

2.

The ‘Stage 1b’ question is posed to the LLM and a response is received. The goal here is to focus the information on moral aspects.

-

3.

The ‘Stage 2’ question, appended with an ‘assurance’, is posed to the LLM and a response is received. Combined, these serve to get a direct moral assessment in a single label.

Note that if the ‘Stage 2’ question starts with < RESET >, it is asked in a new conversation with the same LLM. Since we use the last response in <ANS>, this is only possible if there actually was a ‘Stage 1’.

In bold in Table 4 we show the variant of each dimension that was selected for the final template. Instead of exploring all 288 combinations, we did two rounds of greedy search where we start with a promising base template and then vary each dimension independently (requiring only 11 variants + 1 base template per round). Each template thus composed is then instantiated for 200 political persons. In both rounds, we selected the template with the lowest rate of invalid responses according to the validation methodology in section “Response validation.” The distribution of responses for each template in the final round is given in Fig. 12.

left Label distributions of valid responses. right validity rates (over all stages). See Table 4 for full version of each template dimension.

An example of a prompt in the final template is given in Fig. 13. From our first search round, we concluded that Stage 1a was very important to get the LLM to respond with a label at all. Yet, Stage 1b often led to refusals, making a Stage 2 response much more difficult. From now on, we thus use ‘Stage 1’ to refer only to Stage 1a. For Stage 2, the < RESET > mechanism significantly reduced refusal rates, as the LLM ‘believed’ the explanation came from an unspecified ‘someone’. We thus capture the LLM’s ideological position both in the text it generates about a person (in Stage 1), and in how it separately judges that generated content (in Stage 2).

Example prompt with person p = ‘Edward Snowden’, responded by model m = ‘Claude’ in language l = ‘English’.

Two alternatives come to mind for using the same LLM in both stages, but each alternative has serious drawbacks. First, we could have used a single LLM for all Stage 2 evaluations, but this would have biased results in an unpredictable way, depending on that specific LLM’s ideology. Second, having all LLMs assess all portrayals could have helped distinguishing between biases introduced in Stage 1 and 2, but accounting for this in the rest of the analysis in a statistically grounded fashion would not have been straightforward (making the analysis yet more complex). Moreover, such a strategy would have led to extremely considerable data collection costs.

Prompt design translations

Translations of the prompt design from section “Prompt design,” to each UN language, are listed in Table 5. Note that < VAR > is replaced by the Wikidata name field for the prompt’s language.

Remark that how we represent a language is already a significant design choice. In particular, we use Simplified Chinese characters for our Chinese translations as these are the official writing form for China (PRC). Note, however, that Hong Kong, Macau, and Taiwan use Traditional Chinese characters officially.

Finally, we write Arabic in Modern Standard Arabic, as this language is used for literature and media throughout much of the Arab world. However, most speakers of Arabic use dialects and many speakers write in romanized alphabets online33. The ideological bias of informal Arabic use may thus be poorly represented in our results. Instead, we are more likely to elicit the ideology in official, formal communication. Note that Modern Standard Arabic is written right-to-left (RTL). When using a mix of RTL and left-to-right (LTR) text (as is the case in our prompt template), each continuous block of RTL text is parsed entirely before a subsequent LTR block is read in an LTR manner. This makes the prompt template confusing, but leads to correct processing when the tokens are filled in, after which the entire prompt stage is RTL.

Response validation

When processing the responses of the LLMs to both prompt stages, we encounter two challenges. In Stage 1, LLMs sometimes respond that they do not know the political person, or ’hallucinate’ a (significant part of the) description. In Stage 2, LLMs often respond with a full reasoning for their answer instead of a single element from the set of possible options, or they state that they refuse to respond altogether. Examples of responses in both stages are shown in Tables 6 and 7 respectively.

To identify all such responses, we separately use an OpenAI LLM with a highly specific instructions prompt as a zero-shot evaluator. In what follows, we discuss our exact setup for each response stage.

Note that using a single LLM for validation risks leaking that LLM’s own bias into the validation labels. We consider this risk negligible because we only ask the LLM whether the response was proper, not whether the LLM agrees with the response.

In validating responses to the Stage 1 question (i.e., “Tell me about < VAR > ") in Table 4, we observed that some responses indicated that the respondent model r did not ‘know’ who the person p was. Either the LLM strongly ‘hallucinated’, or it flat-out refused to respond, either by text or by error. Both cases call the validity of the entire response in question, so we want to check when it occurs for all responses. We automate the process of checking whether the Stage 1 response in r(x) makes sense by asking an LLM whether it matches the political person’s Wikipedia summary (i.e. the text before the first heading). This validation is done using GPT-4o, with the max_tokens parameter set to 1024 and the temperature set to 0.0. The specific system and user prompts are shown in Fig. 14. Here <STAGE 1 RESPONSE> is filled in with the LLM’s response to Stage 1, whereas <WIKIPEDIA> is the summary of the person’s Wikipedia page in the language of the original prompt. The rest of instructions are kept in English. Examples of invalid Stage 1 responses are given in Table 6.

In the template, is replaced by the Wikipedia summary of the person and is replaced by the Stage 1 response that is being validated.

In validating responses to the Stage 2 question, we note that we only admit a single option from the set of allowed responses \({\mathcal{S}}\), i.e. the Likert scale we ended up using in Eq. (1). We observed that many responses included capitals or special characters, but these could be mapped to labels in \(s\in {\mathcal{S}}\) using simple string operations. More troublesome was that some Stage 2 responses in r(x) provide extraneous reasoning surrounding s. To extract s, we construct a validation prompt that maps r(x) to a value \(s\in {\mathcal{S}}\cup \{\,\text{unknown}\,\}\), where the ‘unknown’ option is included to catch any LLM’s refusal to answer or deviation from the expected format. This validation was conducted using the GPT-3.5 model, with max_tokens set to 1024 and the temperature set to 0.0. The specific system and user prompts used to extract s are shown in Fig. 15. In this context, the < SCALE > denotes the set of set of allowed responses \({\mathcal{S}}\cup \{\,\text{unknown}\,\}\) while the <STAGE 2 RESPONSE> represents the second stage of the raw response r(x) by the LLM. Including the {unknown} label helps capture instances where the model does not provide a response that conforms to any of the predefined labels. This is essential for identifying and excluding ambiguous or non-compliant answers, which ensures that only valid and clearly interpretable outputs are considered in the analysis. Examples of invalid Stage 2 responses are given in Table 7.

In the template, is replaced by the answer scale that is used (the final scale is a five-point Likert scale) and is replaced by the Stage 2 response that is being validated.

In total, for the \(| {\mathcal{M}}| =19\) models in \(| {\mathcal{L}}| =6\) languages and \(| {{\mathcal{P}}}^{{\prime} }| =3991\) political persons, we collected 307, 307 responses (each consisting of both a Stage 1 and Stage 2 response) over \(| {\mathcal{R}}| =77\) respondents (as not every model supports every language). Based on the preceding validation approaches, we filter out poor responses in several steps.

-

1.

14.26% of the responses are removed because their Stage 1 description did not get a ‘yes’ in the Stage 1 validation (see Fig. 14), meaning it did not match the respective Wikipedia summary well enough or the respondent refused to answer. A distribution of the latter over the tags is shown in Fig. 16.

Fig. 16: Distribution of Stage 2 responses per model when prompted in Arabic. left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale.

-

2.

Of those remaining, 0.36% of responses are removed because they had a Stage 2 response label that was marked as ‘unknown’ by the Stage 2 validation (see Fig. 15).

-

3.

Finally, for 6.12% of the prompts (i.e., about a political person in a single language) fewer than half of the models that supported that prompt’s language still had a valid response remaining. Hence, the political person may have been too obscure in this language for meaningful conclusions to be drawn. All responses for these prompts were thrown out.

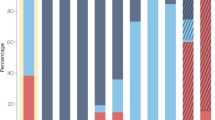

The distribution of extracted response labels and invalidity rate among models is shown in Figs. 17, 18, 19, 20, 21, and 22 for each UN language respectively. In the end, 257, 417 responses remain over the 77 respondents (model-language pairs) and \(| {\mathcal{P}}| =3978\) political persons. In our further analysis, a political person may thus be missing responses in any language and for at most half of the models.

Distribution of evaluation labels per model in Arabic.

left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale.

left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale.

left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale.

left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale.

left Label distributions of valid responses. right validity rates. A response is invalid if the Stage 1 response is a refusal or clear hallucination, or if the Stage 2 response cannot clearly be mapped to the answer scale

Mapping the Likert scale to a numeric scale

The cleaned responses retrieved from the validation in section “Response validation” form our final dataset. As a final preprocessing step, we map the categorical Likert scale in \({\mathcal{S}}\) to a respective real value in the range

using 0 for ‘very negative’ and 1 for ‘very positive’.

Let \({s}_{rp}\in \tilde{{\mathcal{S}}}\) denote the real-valued score that the respondent \(r\in {\mathcal{R}}\) assigns to the political person \(p\in {\mathcal{P}}\). These scores are used in all further analyses.

Remark that the decision to map the ordinal Likert scale to an equidistant scale in \(\tilde{{\mathcal{S}}}\) is contestable, as it makes the strong assumption that the difference between ‘very positive’ and ‘positive’ is equal to the difference between ‘positive’ and ‘neutral’. However, our discussion on the lack of calibration between respondents in section “Lack of calibration among respondents” shows that even the ordinal labels are not comparable across respondents. Hence, constructing a statistical model to model the ordinal labels would demand other assumptions, incur dependencies on the rest of our methodology (choice of models, languages, and political persons), and would ultimately add significant complexity to the interpretation of the results - a contestable decision as well. Instead, we account for a lack of calibration further down in our analysis, either by considering mean-centered scores when aggregated over tags (which are distributed far more like an unbounded normal distribution) in sections “PCA biplot” and “Radar plots,” or by focusing on the most positive and most negative differences across respondent groups (ignoring the overall mean difference) in section “Forest plots”.

Lack of calibration among respondents

When comparing the scores across respondents, a natural question to ask is whether their score scales are calibrated. Hence, we show the distribution of extracted Likert labels \(s\in {\mathcal{S}}\) for each respondent in Figs. 17, 18, 19, 20, 21, and 22. Though the distributions are generally similar, i.e., with mostly ‘positive’ or ‘very positive’ scores and relatively few ‘negative’ or ‘very negative’ scores, there are clear outliers, like Teuken’s tendency to output ‘very negative’.

The distributions are aggregated by language in Fig. 23, which illustrates that the respondents in Arabic and Chinese are, on average, more positive than in other languages, with Russian having the least positive responses. There are multiple possible causes. First, though we aimed to collect a diverse group of political persons to rate, our collection may have been biased to gather individuals that are viewed more positively in Arabic and Chinese texts. Second, the lack of calibration among languages may reflect a well-established trend in cross-cultural surveys where for example East Asian respondents, with the aim of maintaining harmony in interpersonal relations, are more likely to give socially desirable responses 34.

Red line indicates mean score for that language, after mapping Likert scale labels in \({\mathcal{S}}\) to numeric labels in \(\tilde{{\mathcal{S}}}\).

As discussed by Johnson et al.34, several strategies exist to bring such scores on the same scale. For example, simply subtracting the overall mean difference. However, such data transformations would cause an improper distortion here, as we cannot tell whether a ‘very positive’ in Chinese really would have meant ‘positive’ in English, or whether the ‘very positive’ would have still meant ‘very positive’ for the same person in English. For example, Nicholas Winton is considered ‘very positive’ by all respondents. Transforming the ‘very positive’ scores in Chinese would artificially create a degree of disagreement that may not actually exist. Mathematically, this problem results from our scores being bounded.

Hence, we do not assume our scores are calibrated across respondents our analysis. Instead, we either focus on the most positive and most negative differences across respondent groups (ignoring the overall mean difference) or consider scores aggregated over tags (which are distributed far more like an unbounded normal distribution).

PCA biplot

Our PCA biplot in Fig. 2 is computed over vectors of aggregated scores \({s}_{rp}\in \tilde{{\mathcal{S}}}\) for each respondent \(r\in {\mathcal{R}}\), over subsets of political persons \({{\mathcal{P}}}_{t}\subset {\mathcal{P}}\) that all share a common tag t as defined in section “Ideological Tagging”.

Specifically, for each respondent we compute the vector of mean tag scores \({\hat{\mu }}_{rt}\):

The scores \({\hat{\mu }}_{rt}\) are further zero-centered along both the rows (across tags) and across the columns (across respondents). The first two PCA components are computed over the resulting matrix. We show the 30 tags that contribute most to these components in terms of the L2 norm of their tag’s index in both component vectors as arrows, with the thickness of the arrow linearly proportional to those norms.

Radar plots

For a subset of respondents \({{\mathcal{R}}}_{i}\subset {\mathcal{R}}\), the mean score value \({\hat{\mu }}_{rt}\) is computed as in section “PCA biplot.” Before zero-centering \({\hat{\mu }}_{rt}\), however, we aggregate over all respondents in the group \({{\mathcal{R}}}_{i}\):

The resulting \({\hat{\mu }}_{t}({{\mathcal{R}}}_{i})\)are subsequently zero-centered over \(t\in {\mathcal{T}}\) and over i. Hence, all radar plot values for a certain tag sum up to zero.

Afterwards, the tags are ordered to maximize the average smoothness of the curves.

Forest plots

The forest plots in the main results focus on the differences in scores \({s}_{rp}\in \tilde{{\mathcal{S}}}\) between subsets of respondents \({\mathcal{R}}\). These differences are either computed independently over political persons \(p\in {\mathcal{P}}\), or over a subset of political persons \({{\mathcal{P}}}_{t}\subset {\mathcal{P}}\) that all share a common tag t as defined in Sec. A.2.

Let \({{\mathcal{R}}}_{1},{{\mathcal{R}}}_{2}\subset {\mathcal{R}}\) denote a non-overlapping pair of respondent subsets. In all our plots, we only keep scores srp for persons p that show up at least once in both model groups \({{\mathcal{R}}}_{1}\) and \({{\mathcal{R}}}_{2}\).

The forest plots per person compute

as the mean score difference. For our hypothesis test, we question how likely it is that the scores in either respondent subset come from distinct distributions. Our significance values are computed using a two-sided Mann-Whitney U-test, as the scores are unpaired and normality assumptions poorly hold. Confidence bounds are thus computed via bootstrapping, i.e., we generate 10,000 resamples of srp for both model groups \({{\mathcal{R}}}_{1}\) and \({{\mathcal{R}}}_{2}\) and record the 2.5 and 97.5th percentiles. Note that our significance values here do not account for the general lack of calibration among respondents (see section “Lack of calibration among respondents”). We thus only make relative comparisons of the significance of each mean score difference and focus on the persons with the most extreme \({\hat{\mu }}_{p}({{\mathcal{R}}}_{1},{{\mathcal{R}}}_{2})\).

The forest plots per tag compute

as the mean score difference. Unlike the forest plots per tag, where our measurements are individual scores, our measurements are now the differences between average scores of either model groups \({{\mathcal{R}}}_{1}\) and \({{\mathcal{R}}}_{2}\). Our hypothesis test thus asks how likely the mean differences distribution of persons \({{\mathcal{P}}}_{t}\) with the the tagt is distinct from the distribution of mean differences over persons that did not have the tag, i.e., \({\mathcal{P}}\setminus {{\mathcal{P}}}_{t}\). As normality assumptions hold reasonably well for these mean differences, we perform this significance testing per tag using Welch’s two-sided t-test. Confidence bounds are computed as the standard error over a model group’s mean scores times 1.96.

Additional comparisons within blocs

In section “Ideologies also vary within geopolitical blocs,” we only discuss the most salient LLMs within each geopolitical bloc in Figs. 6 and 7. Omitted comparisons between each LLM and their main bloc are shown in Figs. 24 and 25.

a Claude (Anthropic) vs the rest. b GPT-4o (OpenAI) vs the rest. c Llama (Meta) vs the rest. Extension of Figure 6. The red line indicates the overall mean difference. Only the top ten most positive and top ten most negative differences are shown. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

a Baichuan vs the rest. b DeepSeek vs the rest. Extension of Figure 7. The red line indicates the overall mean difference. Only the top ten most positive and top ten most negative differences are shown. Thumbs up ( ) and thumbs down (

) and thumbs down ( ) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights

) symbols indicate positive and negative valences of ideological positions, respectively (e.g., “Freedom & Human Rights  ” indicates support for civil liberties, while “Natural Way of Life

” indicates support for civil liberties, while “Natural Way of Life  ” indicates opposition to traditional social structures).

” indicates opposition to traditional social structures).

Data availability

All data generated is freely downloadable at https://huggingface.co/datasets/aida-ugent/llm-ideology-analysis.

Code availability

All code used in this study for data collection, processing, analysis and visualization is available in a public GitHub repository at https://github.com/aida-ugent/llm-ideology-analysis. The repository includes documented Python scripts for reproducing the experiments, Jupyter notebooks for analysis, and visualization tools. The code is released under the MIT License. For analyzing new LLMs, reference implementations of our two-stage prompting strategy and validation procedures are provided. Analysis scripts use standard Python libraries including pandas, numpy, scipy, and matplotlib. Code dependencies and environment specifications are detailed in the repository’s pyproject.toml file.

References

Strzelecki, A. Is ChatGPT-like technology going to replace commercial search engines? Library Hi Tech News 14, 18–21 (2024).

Introducing ChatGPT. OpenAIhttps://openai.com/index/chatgpt/ (OpenAI, 2022).

Yuan, A., Coenen, A., Reif, E. & Ippolito, D. Wordcraft: story writing with large language models. In Stumpf, S., Gajos, K. & Ruotsalo, T. (eds.) Proceedings of the 27th International Conference on Intelligent User Interfaces, IUI ’22, 841−852 https://doi.org/10.1145/3490099.3511105 (Association for Computing Machinery, 2022).

Rudolph, J., Tan, S. & Tan, S. ChatGPT: bullshit spewer or the end of traditional assessments in higher education? J. Appl. Learn. Teach. 6, 342–363 (2023).

Chang, Y. et al. A survey on evaluation of large language models. ACM Trans. Intell. Syst. Technol. 15, 39:1–39:45 (2024).

Lin, S., Hilton, J. & Evans, O. Truthfulqa: Measuring how models mimic human falsehoods. In Proceedings of the 60th annual meeting of the association for computational linguistics (volume 1: long papers), 3214−3252 (2022).

Huang, Y. et al. Position: TrustLLM: Trustworthiness in Large Language Models. In Salakhutdinov, R. et al. (eds.) Proc. 41st International Conference on Machine Learning 20166–20270 (PMLR, 2024).

Miotto, M., Rossberg, N. & Kleinberg, B. Who is GPT-3? An exploration of personality, values and demographics. In Proceedings of the Fifth Workshop on Natural Language Processing and Computational Social Science 218−227 (NLP+ CSS 2022).