Abstract

Residual-based adaptive strategies are widely used in scientific machine learning yet remain largely heuristic. We introduce a variational framework that formalizes these methods through convex transformations of the residual, where different transformations correspond to distinct objective functionals. For instance, exponential weights target uniform error minimization, while linear weights recover quadratic error minimization. This perspective reveals adaptive weighting as a means of selecting sampling distributions that optimize a primal objective, directly linking discretization choices to error metrics. This principled approach yields three key benefits: it enables systematic design of adaptive schemes, reduces discretization error by lowering estimator variance, and enhances learning dynamics by improving gradient signal-to-noise ratio. Extending the framework to operator learning, we demonstrate substantial performance gains across diverse optimizers and architectures. Our results provide a theoretical perspective for residual-based adaptivity and establish a foundation for principled discretization and training.

Similar content being viewed by others

Introduction

Scientific machine learning (SciML) has emerged as a powerful alternative to traditional numerical methods for solving partial differential equations (PDEs). Here, we consider two of the main approaches in SciML. The first, which includes Physics-Informed Neural Networks (PINNs)1 and their variants, focuses on function approximation, where a representation model is trained to satisfy the governing equations of a specific problem2. The second is operator learning3,4, where a model learns the underlying solution operator itself, allowing it to generate solutions for new boundary conditions, source terms, or parameters almost instantaneously.

At their core, SciML models employ parameterized functions with strong approximation capabilities5,6 to represent the solution of a PDE or a solution map from parameters and boundary data to solutions to PDEs. The problem is thus reduced to an optimization task of finding the parameters that minimizes certain loss function. For example, the loss function for physics-informed methods is usually composed of the PDE residuals and the mismatch with observational data. Compared to traditional numerical method, this optimization-centric approach provides significant flexibility, say, for solving inverse problems that incorporates sparse data or lacks prescribed boundary conditions, or for high-dimensional problems7. However, there is no free lunch: these parameter optimization in SciML are generally high-dimensional and non-convex, making models susceptible to converging to poor local minima. Consequently, tackling the optimization has drawn significant research attention, with efforts including the development of specialized optimizers8,9,10 and methods that simplify the optimization task by explicitly encoding physical constraints, such as the exact imposition of boundary conditions11.

Among the various approaches, one of the most prominent strategies, which addresses both optimization and discretization errors, is to modify the loss function itself. This is typically achieved through adaptive sampling12,13 and weighting schemes14,15,16 that dynamically adjust the training process to focus on regions of the domain that are more difficult to learn. Adaptive sampling methods achieve this by concentrating collocation points in areas where the PDE residual is high13, while adaptive weighting methods assign larger local weights to the same important regions. The strategies for determining these weights are diverse, ranging from direct residual-based schemes to more complex adversarial or augmented Lagrangian formulations14,17,18.

Due to their simplicity and efficiency, weighting/sampling methods based on the residuals are particularly popular16,19,20,21,22,23,24,25,26, as they do not require specialized architectures or additional parameters. Two such examples are residual-based attention (RBA)15 and residual-based adaptive distribution (RAD)13 for residual-based weighting and sampling strategies, respectively. At first glance, these heuristic strategies are conceptually related to importance sampling; however, we remark an essential difference. Standard importance sampling reweights the sample to produce an unbiased estimator of the desired functional with less variance, whereas the schemes used in SciML estimate an entirely different functional.

In this work, we propose a mathematical framework for interpreting and designing new adaptive sampling/weighting schemes. Leveraging variational formulas of general statistical divergences, we show that minimizing the norms (e.g., L2 or L∞) of the residual can be written in a dual form that naturally involves sampling adaptively from distributions tilted by a factor depending on the current residual. Estimates from this new distributions can be realized through either direct sampling or importance weights. We refer to the multipliers obtained from this variational approach as variational residual-based attention (vRBA).

Our framework provides a compelling interpretation for RBA and RAD and shows how the choice of residual-based weights/distribution influences the primal minimization objective. We extend these methods to operator learning with a hybrid strategy employing importance sampling over the function space and importance weighting over the spatial domain, which can be seamlessly integrated into architectures like FNO and DeepONet. The framework yields a twofold benefit: it lowers the discretization error by reducing the variance of the loss estimator, and it improves learning dynamics by enhancing the signal-to-noise ratio of the gradients, leading to faster convergence. Finally, we demonstrate the efficacy of vRBA across a range of challenging PINN and operator learning tasks. Our empirical results show that using vRBA is pivotal to achieve lower errors, providing significant improvements even when paired with state-of-the-art second-order optimizers9 or specialized architectures like TC-UNet27.

Results

A central goal in scientific machine learning (SciML) is to train a parameterized model, u(x; θ) for parameters θ in some parameter space \({\mathcal{T}}\), to solve problems ranging from function approximation to satisfying physical laws described by a differential operator, \({\mathcal{F}}\). The performance of such a model is quantified by a residual function, r(x; θ), which measures the local error at each point x in the domain Ω. The ultimate objective is to find optimal parameters θ* that minimize this residual across the entire domain by optimizing a loss function \({\mathcal{L}}\), typically formulated as the L2 minimization of the residual over the uniform distribution, i.e.,

While effective for simple problems, this L2-based objective is often insufficient for complex PDEs involving multi-scale physics or singularities14. A suggestive reason comes from the perspective of PDE theory: classical solutions to PDEs are in the space of continuously-differentiable functions endowed with the supremum norm. Therefore, minimizing the maximum residual, rather than the average, can be desirable for capturing strong solutions28, i.e.,

This objective can also be (superfluously) expressed as an optimization over the space of probability measures, where the inner maximum is achieved by a measure concentrated at the point of highest error, which reads

For each θ, the optimizer of the inner optimization problem is \({q}^{* }={\delta }_{\{{x}^{* }(\theta )\}}\) where \({x}^{* }(\theta )=\arg \mathop{\min }\limits_{\Omega }r(\cdot ,\theta )\). (The notation δ{x} here refers to the Dirac measure at point x, which is the measure that satisfies ∫Ωf(y)δ{x}(dy) = f(x) for all bounded, measurable \(f:\Omega \to {\mathbb{R}}\).)

vRBA: a generative framework for residual-based adaptive scheme

We introduce a variational framework that solves a certain regularized version of using Φ-divergences29. Rather than fixing the metric a priori, the choice of regularization informs the residual-based adaptive scheme, which we show to correspond to specific error norms (e.g., L∞, variance) in a certain primal formulation. For a chosen potential function \(\Phi :{\mathbb{R}}\to {\mathbb{R}}\), the proposed optimization reads

where \({{\bf{D}}}_{{\Phi }^{* }}\) is the statistical divergence associated with the convex conjugate. This formulation offers a compelling interpretation for adaptive weighting and sampling12,13,14,15,16. The approach builds upon classical variational techniques commonly used in the theory of large deviations 30,31,32. See the supplementary information (SI) for further theoretical details.

Our proposed algorithm for solving (4) follows an intuitive alternative optimization scheme. At each iteration k, the method updates the sampling distribution q, the model parameters θ, and the regularizer ϵ as follows:

The regularization—the main novelty of the framework—is characterized by the choice of sampling distribution q, which is optimal in light of the (generalized) Gibbs variational formula. The Annealing step slowly decreases ϵ → 0, recovering (2). Depending on the choice of potential—in fact, for all choices besides the exponential—a normalization condition would be needed (see (20) and surrounding discussion in the Methods section for more details). Table 1 compiles a list of potentials used in the paper and their corresponding tilt q. See the Methods section for implementation details or SI for theoretical discussions.

The power of this framework lies in its generative capability: by simply choosing the potential function Φ, we can generate and interpret new adaptive approaches. For example, we recovered existing heuristics: the Exponential potential Φ(r) = er targets L∞ minimization and recovers softmax-based attention mechanisms similar to those used in transformers33 and uncertainty quantification34; on the other hand, the quadratic potential Φ(r) = r2 + 1 targets variance minimization and recovers linear weighting schemes like RAD13 and RBA15. In summary, vRBA collects residual-based adaptive schemes into a single interpretable framework that facilitates the design and comparison of numerical schemes.

Extension to operator learning: a hybrid adaptivity strategy

Neural Operators (NOs) are a class of models designed to learn the solution map to a PDE. More specifically, they approximate the solution operator Gθ from an input function, such as a source term f, to a corresponding solution u3,4. Alternatively, they can be formulated as propagators that evolve a solution in time by mapping an initial state u(t0) to a future state u(t0 + Δt) for some Δt > 0.

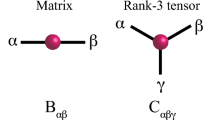

The residual for this task, R(v, x; θ), measures the pointwise error between the model’s prediction Gθ(v)(x) and the true solution \({\mathcal{G}}[v](x)\) for each spatial point x ∈ Ω. The learning task is now defined over a product of the function space \({\mathcal{X}}\) and the spatiotemporal domain ΩY and directly optimizing the objective \({{\mathbb{E}}}_{{q}^{k}}[R]\) is challenging as a single adaptive scheme is ill-suited for this heterogeneous product space. We address this by disintegrating the Radon-Nikodym derivative, which allows us to rewrite the expectation as a nested integral, which reads

The outer integral over the function space \({\mathcal{X}}\) is approximated via importance sampling, where we draw functions v from an adaptive distribution defined by the marginal derivative \(\frac{d{q}^{k}}{dp}(v)\). Concurrently, the inner integral over the spatiotemporal domain ΩY is approximated via importance weighting, where weights Λi,j are derived from the conditional \(\frac{d{q}^{k}}{dp}(x| {v}_{j})\). While this formulation fully accommodates unstructured meshes or point clouds on variable geometries, this division is practically motivated by the constraints of specific architectures. In particular, importance weighting is beneficial for operator learning schemes that are restricted to fixed spatial grids. Moreover, the mathematical framework of vRBA requires little of the underlying domain, enabling operator learning applications in infinite-dimensional function spaces.

vRBA accelerates convergence, achieves higher accuracy and reduces error accumulation

We evaluate the performance of the vRBA framework in both Physics-Informed Neural Network (PINN) and operator learning settings. To demonstrate the generative flexibility of the framework, we test seven distinct schemes: the standard uniform baseline (Φ(r) = r) and six adaptive potentials derived from the proposed framework described in Table 1.

For the PINN benchmarks, we solve the forward problem for the Allen-Cahn and Burgers’ equations and the Korteweg-De Vries (KdV) equations. Crucially, we investigate two distinct regimes for the Burgers’ equation: a standard case (ν = 1/100π) and a “hard” case (ν = 1/1000) featuring extremely sharp gradients, which allows us to evaluate the method’s performance on quasi-discontinuous solutions. To ensure a fair comparison with the current development of PINNs, both our baseline and vRBA-enhanced models incorporate Fourier features to improve expressive capabilities and encode periodic boundary conditions35. Furthermore, to demonstrate that the benefits of vRBA are complementary to advanced optimization techniques, we evaluate its performance on the Allen-Cahn equation using both first-order and state-of-the-art second-order optimizers, such as SSBroyden9.

Similarly, we evaluate vRBA’s performance in the operator learning setting across two primary scenarios. The first scenario involves learning a direct mapping from an input function to the corresponding solution. We apply this approach to the Bubble Growth Dynamics using a DeepONet. To explicitly assess the framework’s capability to handle weak solutions with pure discontinuities, we introduce the Sod Shock Tube (SST) benchmark using an SVD-DeepONet36. The second, more challenging scenario involves learning an operator that propagates a solution forward in time. This approach is often implemented autoregressively, where the model’s output at one timestep becomes the input for the next. We investigate this recursive setting by solving the Navier-Stokes (Kolmogorov flow) and Wave equations with FNO4 and TC-UNet27 architectures, respectively. The governing PDE formulations and specific implementation details for all benchmarks are provided in the Methods section.

Figure 1, Tables 2, and 3 demonstrate that vRBA consistently accelerates convergence and improves accuracy across all benchmarks compared to the baseline. While all adaptive potentials generally outperform uniform sampling, the optimal choice of potential correlates with the physical characteristics of the solution. For problems with smooth but complex structures like the Allen-Cahn and KdV equations, the Exponential potential (Φ(r) = er) tends to yield the best performance. This is most dramatic in the KdV benchmark, where the baseline model fails completely, while the Exponential vRBA model achieves high accuracy. In contrast, for the “hard” Burgers’ case (ν = 1/1000), where the solution develops steep gradients akin to a shock, the Quadratic potential (Φ(r) = r2) outperforms the exponential variants, suggesting that variance reduction may be more stable than aggressive L∞ minimization in the presence of extremely sharp transitions. Finally, in the Sod Shock Tube problem, which features a true discontinuity, the Dual-KL potential (\(\Phi (r)\approx \log r\)) achieves the lowest error, highlighting the benefit of asymmetric or logarithmic penalties for weak solutions. Additionally, we compare the vRBA performance against other state-of-the-art PINN methods. We focus on the Allen-Cahn and Burgers’ equations, which have been considered challenging benchmarks since the introduction of PINNs due to their sharp transitions and complex dynamics. Furthermore, we introduce the Korteweg-De Vries (KdV) equation as an additional “stress test”—a problem known to cause failure in vanilla PINNs, yet possessing a smooth exact solution that enables precise error quantification. Table 4 demonstrates that combining the SSBroyden optimizer with vRBA yields the most accurate result among the compared methods across all benchmarks.

A Relative L2 error convergence for the PINN benchmarks. Different potentials are marked in different colors, while the black line represents the Baseline. vRBA models generally converge faster and to a lower final error. Notably, for the Korteweg-De Vries (KdV) equation, the Baseline fails to converge, whereas vRBA achieves low error. We observe that potentials lacking an exact closed-form solution for the weighting parameter ϵ can exhibit instability: the superexponential \(\Phi (r)={e}^{{r}^{2}}\) becomes unstable due to the sharp gradients in Burgers' equation, and \(\Phi (r)=\cosh (r)\) leads to divergence in the Allen-Cahn equation. B Relative L2 error convergence for the operator learning tasks. For these purely data-driven problems, vRBA models consistently demonstrate accelerated convergence and lower final errors for Bubble Growth Dynamics (DeepONet), the Sod Shock Tube (SVD-DeepONet), Navier-Stokes (FNO), and the Wave Equation (TC-UNet). C, D Mean error norms (solid lines) with standard deviation (shaded areas) evaluated over timoomene for the FNO and TC-UNet models, respectively. The vRBA-enhanced models consistently exhibit a lower mean error, smaller variance, and a significantly slower rate of error accumulation.

Notably, our results reveal that vRBA is beneficial regardless of the optimization strategy. Even when coupled with the standard first-order Adam optimizer, vRBA (\(\Phi ={e}^{{r}^{2}}\)) significantly outperforms not only Adam-based baselines but also recent methods that rely on L-BFGS and complex architectures.

The table highlights that one of the high-performance methods with ADAM combines RBA with complex architectural enhancements. Moreover, the previous state-of-the-art results were achieved using SSBroyden and RAD. As pointed out in the previous sections, RAD and RBA can be identified as specific variations of vRBA with Φ(r) = r2 and specific smoothing configurations. This underscores our main conclusion: the combination of a powerful optimizer with a principled adaptive sampling strategy is the essential factor for achieving the highest accuracy.

Figure 1B indicates that vRBA significantly accelerates convergence for all operator learning tasks. For the direct mapping scenario, Table 2 shows that vRBA reduces the final error for the Bubble Growth Dynamics problem by more than an order of magnitude. While the overall relative L2 error improvements for the autoregressive Navier-Stokes (FNO) and Wave Equation (TC-UNet) tasks appear more modest, this single, aggregated metric does not capture the full performance gain. As their rate of error accumulation governs the long-term performance of these models, Fig. 1C, D reveals an additional advantage: the vRBA-enhanced models exhibit significantly slower error growth and a much smaller standard deviation across the test trajectories, indicating more robust and generalizable long-term predictions.

vRBA captures fine details and promotes uniform error distribution

In addition to the overall error reduction documented in the previous section, a key advantage of vRBA is its ability to capture fine-scale solution features. This improved capability can be analyzed from the theoretical perspective of our framework. When an exponential potential (Φ(x) = ex) is used, the framework attempts to minimize the L∞-norm in the primal formulation, which pressures the model to suppress the largest residuals. On the other hand, when a quadratic potential (Φ(r) = r2) is used, the primal objective corresponds to variance minimization, forcing the model to address high-magnitude outliers. Both mechanisms compel the model to fit the entire solution domain more evenly, leading to a more uniform error distribution.

This effect is observed empirically in Figs. 2 and 3. For the PINN benchmarks Figs. 2 and 3, the baseline model’s error is highly concentrated along specific structures, whereas vRBA produces a more spatially uniform error distribution. This redistribution is particularly effective for the KdV and low viscosity- Burgers (ν = 1/1000) benchmarks (Fig. 2). In these cases, the baseline error spikes uncontrollably at shock fronts and solitons, whereas vRBA helps the optimizer improve mode performance across these sharp transitions.

Columns correspond to the Allen-Cahn (AC), Burgers' (BG, with ν = 1/100π and ν = 1/1000), and Korteweg-De Vries (KdV) equations. The top row shows the reference solution. Rows 2 and 3 display the pointwise absolute error for the baseline and vRBA models, respectively, while rows 4 and 5 show the corresponding PDE residuals. The vRBA framework yields a significantly more uniform error distribution with lower magnitudes, with residuals appearing negligible (dominant blue) across most domains. In the Burgers' benchmarks, vRBA markedly suppresses the high-residual structures observed in the baseline despite the sharp gradients.

The top two rows correspond to the Bubble Growth Dynamics (BGD) problem, while the bottom two rows depict the Sod Shock Tube (SST) problem. To ensure a fair comparison, the columns display the worst-case sample for the baseline, the worst-case sample for vRBA, and a randomly selected sample. In the BGD benchmark, the baseline model (Row 1) fails to resolve the initial high-frequency oscillations. In contrast, the vRBA model (Row 2) faithfully reconstructs these rapid fluctuations, capturing fine-scale details. Similarly, for the SST problem, vRBA (Row 4) yields cleaner shock profiles with significantly reduced oscillations near discontinuities compared to the baseline artifacts (Row 3). Notably, the discontinuities in these problems span several orders of magnitude, ranging from \({\mathcal{O}}(1)\) to \({\mathcal{O}}(1{0}^{-3})\); vRBA remains robust even in this challenging regime, capturing the sharp transitions far more cleanly than the baseline.

This advantage is also evident in the operator learning context, Fig. 3, where the baseline DeepONet fails to resolve high-frequency oscillations in the Bubble Growth Dynamics problem or the sharp discontinuities in the Sod Shock Tube (SST) benchmark (Fig. 3). In the SST case specifically, vRBA captures the shock profile without the severe artifacts seen in the baseline, despite the solution spanning multiple orders of magnitude.

This improved capability for resolving complex features is further demonstrated in the autoregressive operator learning tasks, as shown in Fig. 4. For both the Navier-Stokes (FNO) and Wave Equation (TC-UNet) benchmarks, the reference solutions involve intricate, evolving structures. The baseline models fail to track these details over time, leading to a rapid accumulation of structured, high-magnitude error. In contrast, the vRBA-enhanced models maintain a much lower pointwise error throughout the simulations by successfully capturing the fine-scale dynamics at each timestep, thereby preventing the compounding of errors.

The figure displays a qualitative comparison for a single representative trajectory from the testing dataset for each benchmark. A For the 2D Navier-Stokes benchmark, the baseline FNO fails to resolve the complex vorticity structures, leading to a large and growing Mean Squared Error (MSE). In contrast, the vRBA-enhanced model’s error remains suppressed throughout the simulation. B Similarly, for the 2D Wave Equation, the baseline TC-UNet accumulates significant error as the wave creates intricate interference patterns. The vRBA model successfully captures these fine details, keeping the error exceptionally low. By maintaining a lower pointwise error at every step, vRBA exhibits a much slower rate of error accumulation, which is an esential property for autoregressive tasks.

vRBA reduces discretization error via variance reduction

The total error of a neural network-based PDE solver can be decomposed into approximation, optimization, and discretization components37. The discretization error arises when approximating the continuous loss integral with a finite sum via Monte Carlo sampling. More concretely, for any bounded, measurable functional \(r:\Omega \to {\mathbb{R}}\) such as the residual or its gradient, we can estimate the mean \({{\mathbb{E}}}_{q}[r]\) with n samples by defining an unbiased estimator

Alternatively, if one only has the ability to sample from distribution p instead, importance sampling weight can be used assuming absolute continuity of q with respect to p, and the estimator now reads

In the context of vRBA (and other residual-based adaptive schemes), the distribution qk at the k-th iteration has a normalizing constant in the Radon-Nikodym derivative, \(\frac{d{q}^{k}}{dp}\), which introduces a bias. However, these Monte Carlo estimators are nonetheless consistent and converge according to the central limit theorem

This implies that the discretization error (i.e., \(| {\widehat{r}}^{n}-{{\mathbb{E}}}_{{q}^{k}}[r]|\)) vanishes at a rate of \({\mathcal{O}}(1/\sqrt{n})\), with a magnitude that scales directly with the variance of the estimator. Therefore, if the variance of the reweighted estimator is smaller than that of the standard estimator, such that

then vRBA actively reduces the discretization error at each training step.

Proving such variance reduction is difficult at this level of generality, and we resort to numerical demonstration. Figure 5 shows the evolution of the residual variance during training for both the PINN benchmarks (Fig. 5A) and the operator learning tasks (Fig. 5B). A consistent trend is immediately apparent across all six problems: the vRBA-enhanced models achieve a residual variance that is several orders of magnitude lower than their corresponding baseline models. This result suggests vRBA may effectively induce variance reduction.

The figure displays the evolution of the residual variance Var(r) (top rows) and the infinity norm ∥r∥∞ (bottom rows) during training for (A) the PINN benchmarks and B the operator learning tasks. We compare the baseline against vRBA instantiated with various convex potentials Φ(r), including r2, er, \((1+r)\log (1+r)-r\), \({e}^{{r}^{2}}\), rp, and \(\cosh (r)\). Generally, the vRBA models achieve both lower variance and lower ∥r∥∞ compared to the baseline across both distinct problem classes. Crucially, this variance reduction is established early in training, providing a more stable and accurate loss signal, which explains the accelerated convergence seen in Fig. 1. However, notably in the WF (TC-UNet) benchmark, potentials designed to aggressively minimize ∥r∥∞ do not sufficiently constrain the variance, causing Var(r) to increase during the later stages of training.

Furthermore, Fig. 5 tracks the evolution of the infinity norm ∥r∥∞ in the bottom rows. These results demonstrate that vRBA generally achieves lower ∥r∥∞ values alongside reduced variance, indicating that the method may effectively suppresses extreme outliers and does not merely smooth the average error at the expense of worst-case residuals.

vRBA improves the learning dynamics

Due to the linearity of the gradient operator, the overall update direction, \({\widehat{p}}_{k}\), is equivalent to the average of gradients over different regions of the domain (see Proposition in SI). During training, conflicting gradient directions from different parts of the data coexist. If these component gradients are not well-aligned, the average direction—the direction of update—diminishes, leading to slow convergence or stagnation.

We formalize the above description. From any given partition j, the update direction \({\widehat{p}}_{j}^{k}={\widehat{p}}^{k}+{\epsilon }_{j}^{k}\) can be decomposed into two components: the “signal” \({\widehat{p}}^{k}\), the true update direction from the continuous loss, and the “noise” \({\epsilon }_{j}^{k}\), the deviation of the partition’s gradient from that signal. The Signal-to-Noise Ratio (SNR) measures the ratio of the magnitude of the signal to the root mean square (RMS) magnitude of the noise38,39,40, which reads

The expectation and variance are taken over random partitions Bj of the full dataset. A high SNR indicates that the gradient from any given partition is a faithful representation of the true update direction, leading to a confident and effective optimization step. The full derivation showing how the denominator corresponds to the noise term is provided in the SI.

The evolution of the SNR, shown in Fig. 6 alongside the generalization error (measured as the relative L2 error on the test set), reveals three distinct stages of learning i.e., fitting, transition, and diffusion. This phased progression has been observed across diverse applications such as function approximation, PINNs, and operator learning40,41,42, and it provides a metric to quantify how vRBA improves the training dynamics.

The figure plots the generalization error (top row of each panel) and the Signal-to-Noise Ratio (SNR, bottom row) for (A) the PINN benchmarks and B the operator learning tasks. We compare the baseline against vRBA instantiated with several distinct convex potentials marked with different colors. The three stages of learning are clearly visible in most PINN and DeepONet experiments. After an initial fitting phase (high but decreasing SNR), models enter a low-SNR transition phase where the generalization error stagnates. The key finding is that the vRBA-enhanced models (colored lines) escape the transition phase significantly faster than the baseline (black line). This allows them to enter the diffusion phase, which is marked by a sharp increase in SNR that correlates with a rapid drop in error. This effect is most evident in the first-order Allen-Cahn problem, where vRBA accelerates the entry to diffusion from ~ 50,000 to ~ 10,000 iterations. While the dynamics differ for the second-order optimizers and the FNO architecture, vRBA consistently maintains a higher SNR relative to the baseline. Finally, the late-stage decay in SNR, particularly for FNO and TC-UNet, corresponds precisely with the stagnation of the generalization error, indicating model saturation.

The first stage is the fitting phase, characterized by a high but decreasing SNR. Initially, when the model’s predictions are poor, a consensus on the update direction is easily found. As the training error reduces, the optimal direction becomes less clear, causing the SNR to drop. This behavior is clearly observed in the Allen-Cahn, Burgers’, and DeepONet experiments, and to some extent in the Baseline TC-UNet. The FNO model, however, appears to bypass this phase, with its generalization error improving almost immediately, likely due to the strong structural priors of its Fourier-based architecture.

Next, the model enters the transition phase, a low-SNR exploratory stage where the optimizer searches for an effective update direction to minimize the loss across the entire domain. This phase is noisy, with different data partitions pointing in conflicting directions, and models that get trapped here fail to converge. We observe this phase in the Allen-Cahn, Burgers’, and DeepONet results. For instance, the second-order Allen-Cahn model is temporarily trapped in this phase during its initial 5000 iterations of first-order pre-training. Again, this phase is less distinct for the FNO and TC-UNet, possibly due to their complex architectures.

After the exploratory phase, a successful model enters the productive diffusion phase, marked by a sharp jump in the SNR that correlates with a rapid decrease in generalization error. A key finding is that vRBA significantly accelerates the entry into this stage. This is most evident in the first-order Allen-Cahn benchmark: the baseline model is stuck in the transition phase for nearly 50,000 iterations, while the vRBA models enter diffusion in just 10,000. This provides a mechanistic explanation for claims that standard PINNs cannot solve this problem; often, the models are simply not trained long enough to escape the prolonged transition phase, a challenge vRBA mitigates.

The dynamics of the second-order optimizer also show important distinctions. For the Allen-Cahn problem, which was trapped in the transition phase during pre-training, the switch to the SSBroyden optimizer triggers a sharp initial increase in the SNR as the model finds the optimal direction, followed by a decrease as it converges. In contrast, the Burgers’ model was already in a productive diffusion phase, so the switch to the more efficient optimizer simply causes the SNR to drop.

Finally, the phenomenon of model saturation is particularly evident in the operator learning benchmarks. For both the FNO and TC-UNet models, the late-stage decay in the SNR corresponds precisely with the stagnation of the generalization error. This indicates that the model has “lost the signal”, confirming the SNR’s information as a powerful diagnostic metric for the entire learning process.

Discussion

Residual-based adaptive strategies are widely used in scientific machine learning to accelerate convergence and improve accuracy. Yet, there lacks a mathematical framework that for describing and generating these adaptive schemes. In this work, we introduce a unifying variational framework that formalizes these methods by leveraging the duality of statistical divergences, specifically the Laplace principle and the Gibbs variational formula (and generalizations thereof). We show, for a wide class of residual-based adaptive schemes, that optimization under adaptively tilted distributions can be interpreted as dual to certain primal objective minimization depending on the tilting “potential” function Φ.

Crucially, the power of this framework, which we term vRBA, lies in its generative capability: the choice of the convex potential function Φ dictates the nature of the adaptive scheme. Our analysis demonstrates that previously distinct reweighting methods are, in fact, specific instantiations of this single theoretical perspective. For instance, selecting an exponential potential (Φ(r) = er) targets the minimization of the L∞-norm, recovering exponential distributions akin to attention mechanisms33 but with an adaptive temperature parameter. Similarly, selecting a quadratic potential (Φ(r) = r2 + 1) targets the minimization of the residual variance, recovering the linear weighting schemes utilized in Residual-Based Attention (RBA)15 and Residual-Based Adaptive Distribution (RAD)13. This unification bridges the conceptual gap between sampling and weighting strategies, which are traditionally treated as distinct optimization heuristics. Our analysis reveals that they are alternative computational approaches—one via resampling, the other via importance weighting—of the same underlying variational objective. Beyond unifying existing methods, this framework offers a generative recipe for designing new adaptive schemes tailored to specific physical regimes, such as using logarithmic potentials for sharp transitions or super-exponential potentials for aggressive error suppression. Furthermore, we extend this framework to Operator Learning, demonstrating that these variational principles are generic and apply equally to learning mappings between infinite-dimensional function spaces.

The implications of this variational perspective, however, extend even further. For instance, the formulation proposed in25 can be recovered using quadratic potentials and constructing the distribution using a locally averaged residual. Our framework also enables us to interpret other advanced heuristics. For instance, methods that balance the residual decay rate16, for example, can be viewed as replacing the simple temporal smoothing of the EMA with a more sophisticated, history-aware mechanism for computing the adaptive distribution qk. More broadly, while our analysis focuses on deriving the optimal distribution q analytically, the dual problem also admits an alternative solution strategy: learning the distribution q directly. This insight reframes self-adaptive methods—techniques with learnable weights or auxiliary networks14,17—as implicitly learning this optimal biasing distribution. Our framework thus justifies these pioneering approaches, unifying them under the same variational principles.

Complementing this theoretical foundation, our empirical results indicate that the vRBA framework significantly improves model accuracy across all tested scenarios. For all our benchmarks, this improvement manifests as an enhanced ability to capture fine-scale solution features, leading to a more uniform error distribution. Crucially, this advantage holds for models trained with both standard first-order and state-of-the-art second-order optimizers, confirming that vRBA is a complementary enhancement to advanced optimization techniques. In the operator learning setting, particularly for autoregressive tasks using advanced architectures like FNO and TC-UNet, vRBA achieves a significant reduction in the error accumulation rate, leading to more robust and reliable long-term predictions.

We attribute vRBA’s empirical success to two underlying mechanisms. First, the residual-based adaptation lowers the discretization error by reducing the variance of the loss estimates. At each step, the gradients computed are stable and faithful to the true, continuous loss objective. Consequently, the optimizer can focus on minimizing the actual objective function rather than navigating a noisy loss landscape induced by a high-variance estimator. Second, our results indicate that vRBA improves the learning dynamics as quantified by the Signal-to-Noise Ratio (SNR) of the back-propagated gradients. The SNR measures the reliability of the gradient signal relative to the stochastic noise and is a well-studied metric in the context of stochastic training38. Our empirical analysis reveals that the vRBA-enhanced models consistently exhibit a higher SNR than their baselines across all analyzed benchmarks. Higher SNR correlates with improved training outcomes: vRBA models show significantly shorter transition phases while entering the diffusion phase faster. This effect is particularly pronounced in the PINN and DeepONet experiments.

Despite these advantages, the application of our framework is subject to certain constraints. While vRBA theoretically provides a rich supply of weighting functions by choosing different convex potentials, it requires these potentials to be both convex and increasing. In general, we observe that the framework works robustly for the majority of potentials. However, there are specific cases that can be too aggressive, such as super-exponential potentials (e.g., \(\Phi (r)={e}^{{r}^{2}}\)). This behavior is expected, as these potentials are designed to be extremely strong, imposing severe penalties on high residuals which can be destabilizing in sensitive regimes. Nevertheless, as illustrated in Fig. 1, valid potentials generally outperform the baselines. Furthermore, not all valid potentials yield an exact, closed-form solution for the annealing parameter. As demonstrated in our examples, closed-form solutions are readily available for the Lp family (which includes the linear weights of RBA) and the exponential potentials derived here. However, for other convex potentials, the optimal parameter may not be analytically invertible. While this is not a fundamental bottleneck, since the convexity of the problem allows the parameter to be computed efficiently using Newton’s method with almost null computational overhead, it does necessitate additional implementation effort compared to the closed-form cases.

Another limitation concerns the stochastic nature of the computed weights and the nuances of their application. Similar to adaptive optimizers like Adam43, our method relies on memory terms—specifically an Exponential Moving Average (EMA), to ensure stability in the presence of stochastic noise. Consequently, the adaptive weights are not applied directly or sampled in their raw form. The necessity of this memory term varies by application: as shown in SI, for PINNs trained with robust second-order optimizers, the method can often be applied directly. However, for Operator Learning, the EMA is indispensable both for stability and to efficiently compute the aggregate scores needed to resample within the function space. While this introduces an additional hyperparameter (the decay rate), our sensitivity analysis demonstrates that the model is highly robust, consistently outperforming baselines with decay rates ranging from 0.4 to 0.99. Furthermore, as shown in SI, applying the derived weighting function directly yields significant performance gains. However, we observe that for exponential potentials targeting the L∞-norm, using a small convex combination of the adaptive weights further improves performance. In contrast, for quadratic and logarithmic potentials, this interpolation yields no additional benefit, and direct application is optimal. This distinction is expected: the L∞ optimization is inherently aggressive, even in its dual variational form, and thus benefits from the regularization provided by the convex combination, whereas the variance-reduction objectives are inherently more stable.

Finally, while the SNR analysis provides insights into the learning dynamics for most cases, the behavior of the two operator learning cases, FNO and TC-UNet, presents a more complex scenario. These models exhibit distinct dynamics where the generalization error decreases almost from the start of training, apparently bypassing the distinct fitting and transition phases observed in simpler models. We postulate that this discrepancy arises from the interplay between the SNR and the model’s geometric complexity. Previous studies found an inverse relationship: the geometric complexity tends to increase precisely when the SNR decreases42. This suggests that the low-SNR transition phase is an active period where the model increases its representational capabilities to resolve conflicting gradients. We speculate that because FNO and TC-UNet are sophisticated architectures endowed with strong structural priors, their initial states may be complex enough to facilitate immediate generalization, thereby bypassing these initial low-SNR stages. However, unlike in function approximation, where discrete Dirichlet energy, as introduced in44, serves as a quantifiable metric for geometric complexity, a clear proxy for this property in operator learning is not currently established. As such, fully characterizing these dynamics remains an open question for future work.

Methods

Variational residual-based attention methods

The potential function \(\Phi :{{\mathbb{R}}}_{+}\to {{\mathbb{R}}}_{+}\) is a crucial parameter for vRBA. It determines the form of the sampling distribution and (consequently) the corresponding primal problem. We restrict Φ to be a non-negative, convex, increasing, superliner function; we show two examples and give detailed calculations in the coming sections. The training process starts by sampling N i.i.d. random variables \({\{{X}_{i}\}}_{i=1}^{N}\) uniformly from the spatial domain Ω and calculating the corresponding residuals r(Xi). The general method then involves three steps per iteration:

-

1.

updating the tilted distribution q, which generally proportional to the derivative (or sub-differential) \({\Phi }^{{\prime} }\);

-

2.

updating the model parameters θ via a line search method (first- or second-order);

-

3.

updating the temperature parameter ϵ using an annealing scheme which can depend on Φ.

We elaborate on each step below, providing the implementation specifics for the examples shown in the Results section.

Update the tilted distribution

While the generalized Gibbs variational principle does not typically yield a closed-form update rule for the distribution q, for a fixed potential Φ, one can obtain the optimal tilt by the appropriate annealing schedules (to be discussed in the coming subsection). Under this assumption, the optimal sampling distribution for the next iteration qk+1 is given by

and we evaluate only at the collocation points \({\{{X}_{i}\}}_{i=1}^{N}\). The above form holds for all choices of potential functions while normalization differs. Only when Φ(r) = er, we can immediately deduce that

For other choices of Φ, normalization will be enforced via the choice of ϵk (the annealing parameter) instead, i.e., the proportionality constant in (12) is one.

For both models, the collocation points {Xi} are randomly sampled and the empirical target distribution qk+1 can be prone to spurious fluctuations. To promote stability, especially when using fast-growing potentials like Φ(r) = er, we smooth the target distribution over time using an exponential moving average (EMA). Furthermore, we introduce an additional smoothing mechanism by interpolating between the adaptive distribution qk+1 and the base uniform distribution p. The combined update rule for the importance weights reads

where (γ ∈ [0, 1)] is a memory term and η* is a learning rate. The parameter ϕ ∈ [0, 1] controls the degree of adaptivity; ϕ = 1 corresponds to the fully adaptive case from which the original method is recovered, while smaller values of ϕ increase stability by biasing the distribution towards uniformity. For stability reasons, which becomes particularly important for second-order methods, we have found that normalizing the learning rate as \({\eta }^{* }=\eta /ma{x}_{\varOmega }{q}^{k+1}\) is beneficial. The resulting vector λk+1 can be interpreted as the smoothed, unnormalized distribution that guides the optimization. While it incorporates information from the optimal distribution qk+1, it is not itself a probability mass function as it does not necessarily sum to one.

Update the model parameters

Once the smoothed importance weights \(\{{\lambda }_{i}^{k+1}\}\) have been computed, they can be used to formulate the loss function in two primary ways: by guiding a resampling process or by directly weighting the residuals.

Adaptive Sampling

In this approach, the weights are first normalized to recover a smooth probability mass function (p.m.f.) over the discrete domain Ω

This new distribution \(\overline{q}\) is then used to resample a new set of training points \({\{{X}_{i}\}}_{i=1}^{N}\) from the full collocation set Ω. By focusing the sampling on high-importance regions, the loss can be computed as a standard, unweighted mean-squared error on this new, more challenging set of points

Notably, this framework can recover methods similar to residual-based adaptive sampling13 by setting the potential to Φ(x) = x2 + 1 and the EMA parameters to η* = 1 and γ = 0.

Importance Weighting

Alternatively, the weights can be used directly in an importance weighting scheme. In this case, the training points \({\{{X}_{i}\}}_{i=1}^{N}\) are sampled uniformly from Ω. The weights \(\{{\lambda }_{i}^{k+1}\}\) are then applied directly to the residuals within the loss calculation, creating a weighted objective that reads

One might worry that squaring the weights and residual depart from the procedure outlined previously. By an application of Jensen’s inequality, one can obtain that squaring the residual (and weights when applicable) corresponds to solving a strictly stronger problem with the added benefits of differentiability.

This framework is also general enough to recover other popular methods. For example, with the potential Φ(x) = x2 + 1 and specific choices of EMA parameters, it is possible to recover the traditional residual-based adaptive weights15 and their variations42,45.

Once the objective function \({\mathcal{L}}({\theta }^{k+1})\) is calculated, the model parameters are optimized using a line search algorithm of the form

where αk is the step size, and \({p}^{k}=-{H}_{k}{\nabla }_{\theta }{\mathcal{L}}({\theta }^{k})\) is the update direction which depends on the gradient of the objective function and some symmetric matrix Hk9.

Update the regularization parameter

As alluded to before, the choice of the annealing schedule depends on the choice of potential Φ. There are two cases to be discussed, and the latter has two further subcases.

Case I: Exponential Potential (Φ(x) = e x)

For this choice, there are no requirements on the choice of ϵ thanks to the duality between entropy and free energy. The particular choice we implemented reads

This schedule has several advantages. Using the maximum residual, \(ma{x}_{\varOmega }| r|\), in the numerator provides a dynamic, problem-dependent characteristic scale for the temperature ϵ. This helps to normalize the magnitude of the residuals relative to the magnitude of the solution itself. The logarithmic term in the denominator ensures a slow and stable decay, such that ϵk → 0 as k → ∞, which gradually sharpens the distribution’s focus on the largest residuals. In the context of simulated annealing, logarithmic decay is sufficiently slow to guarantee global convergence [ref. 46, Theorem 1].

Case II: general potentials

For this case, the optimality of the distribution q holds under the normalization condition

For this case, the constraint is satisfied by choosing ϵk to be the normalizing constant. There are two further cases.

1. There are cases when the choice of ϵ can be computed analytically, for example, the polynomials Φ(r) = ∣r∣p + c for any p ∈ (1, ∞) and \(c\in {\mathbb{R}}\). In this case, we can see that

so

2. In many other cases, e.g., \(\Phi (r)=\cosh (r)\), \(\Phi (r)={e}^{{r}^{2}}\), or \(\Phi (r)=(1+r)\log (1+r)-r\), analytic computation might challenging. We calculate ϵk dynamically at each training step using a Newton-Raphson solver. To ensure stability and speed, we derive robust initial guesses based on the asymptotic behavior of each potential: \({\epsilon }_{0}\approx {r}_{\max }/{\text{ln}}(2N)\) for \(\cosh (r)\), \({\epsilon }_{0}\approx {r}_{\max }/\sqrt{{\text{ln}}N}\) for \({e}^{{r}^{2}}\), and \({\epsilon }_{0}\approx \overline{r}/(e-1)\) for the logarithmic potential. Due to the strict convexity of these functions and the accuracy of the initialization, the solver typically converges to machine precision in a few iterations (See Algorithm 1). This dynamic adjustment induces minimal computational overhead relative to the full backpropagation step. In particular, for all the analyzed examples, the inclusion of vRBA involves < 10% of computational overhead from the vanilla models.

Algorithm 1

Newton-Raphson Solver for ϵk

Require: Residual batch r; Potential Φ; Max iterations M = 20; Tolerance δ = 10−8.

Ensure: Optimal scaling parameter ϵ.

1: Compute statistics: \({r}_{\max }\Leftarrow \max (r)\), \(\overline{r}\Leftarrow mean(r)\), N ⇐ length(r).

2: Initialization (Asymptotic Approximation):

3: if \(\Phi (r)=\cosh (r)\) then

4: \(\epsilon \Leftarrow {r}_{\max }/ln(2N)\)

5: else if \(\Phi (r)={e}^{{r}^{2}}\) then

6: \(\epsilon \Leftarrow {r}_{\max }/\sqrt{ln(N)}\)

7: else if \(\Phi (r)=(1+r)\log (1+r)-r\) then

8: \(\epsilon \Leftarrow \overline{r}/(e-1)\)

9: end if

10: \(\epsilon \Leftarrow \max (\epsilon ,\delta )\)

11: Newton-Raphson Loop:

12: for j = 1…M do

13: u ⇐ r/ϵ

14: Compute constraint residual: \(F(\epsilon )\Leftarrow \frac{1}{N}\sum {\Phi }^{{\prime} }(u)-1\)

15: Compute gradient: \({F}^{{\prime} }(\epsilon )\Leftarrow \frac{1}{N}\sum {\Phi }^{{\prime\prime} }(u)\cdot (-u/\epsilon )\)

16: Update: \(\epsilon \Leftarrow \epsilon -F(\epsilon )/({F}^{{\prime} }(\epsilon )-\delta )\)

17: Clamp: \(\epsilon \Leftarrow \max (\epsilon ,\delta )\)

18: end for

19: return ϵ

Physics-informed neural networks (PINNs)

In the Physics-Informed Neural Network (PINN) framework, the goal is to approximate the solution \(\bar{u}(x)\) of a PDE or ODE using a parameterized representation model, u(x; θ). The training objective is to minimize a loss function composed of several residual terms that enforce the problem’s physical and data constraints.

The primary component is the PDE residual, which measures how well the model satisfies the governing equations. It is defined using a differential operator \({\mathcal{F}}\) as:

Additionally, the model must match any available data, which may include boundary conditions, initial conditions, or sparse observations from a known function \(\bar{u}(x)\). This is enforced through a data-fit residual, defined as the pointwise error:

The total loss function, \({\mathcal{L}}\), is a weighted sum of the individual loss terms computed from these residuals. For a problem with governing equations (E), boundary/initial conditions (B), and observational data (D), the total loss is:

where mE, mB, and mD are global weights that balance the contribution of each term. The individual loss functions (\({{\mathcal{L}}}_{E}\), \({{\mathcal{L}}}_{B}\), \({{\mathcal{L}}}_{D}\)) are each computed as described in equation (16) for the adaptive sampling approach or as in equation (17) for the importance weighting. To ensure the update directions induced by the different loss components are balanced, we employ the self-scaling mechanism presented in42.

Global weights

Notice that for first-order optimizers such as ADAM, the update direction for PINNs (i.e., equation (25)) is given by:

where \({\nabla }_{\theta }{{\mathcal{L}}}_{E}\), \({\nabla }_{\theta }{{\mathcal{L}}}_{B}\), and \({\nabla }_{\theta }{{\mathcal{L}}}_{D}\) are the loss gradients which can be represented as high-dimensional vectors defining directions to minimize their respective loss terms. Notice that if the gradient magnitudes are imbalanced, one direction will dominate, which may lead to poor convergence. To address this challenge, we propose modifying the magnitude of the individual directions by scaling their respective global weights. In particular, we fix mE and update the remaining global weights using the rule:

where α ∈ [0, 1] is a stabilization parameter47. This formulation computes the iteration-wise average ratio between gradients, enabling normalized scaling, which, on average, allows us to define a balanced update direction \({\widehat{p}}^{k}\):

Under this approach, all loss components have balanced magnitudes, allowing each optimization step to minimize all terms effectively.

A detailed description of the proposed method presented in Algorithm 2. For the second-order experiments, we follow the general methodology of9, which uses the SSBroyden optimizer after 5k Adam pre-training iterations. The crucial modification in our work is that the sampling distribution is generated by our vRBA framework, rather than the standard RAD formulation used in the reference.

Algorithm 2

vRBA for PINNs

Require: Representation model \({\mathcal{M}}\); Training points XB, XD, XE; Optimizer parameters lr; vRBA parameters \(\eta ,{\lambda }_{ma{x}_{0}},{\lambda }_{cap},{\alpha }_{g},{m}_{E},{\gamma }_{g}\); Iterations per stage Nstage; Total iterations Ntrain; Boolean flags adaptive_weights, adaptive_distribution.

Ensure: Optimized network parameters θ.

1: Initialize network parameters θ0.

2: Initialize weights \({\lambda }_{\alpha ,i}^{0}\Leftarrow 0.1{\lambda }_{max0}\) for each loss component α ∈ {B, D, E}.

3: Initialize sampling p.m.f. \({\overline{q}}_{\alpha }\) to be uniform for each α.

4: k ⇐ 0.

5: while k < Ntrain do

6: \({\lambda }_{max}\Leftarrow \min ({\lambda }_{max0}+k/{N}_{stage},{\lambda }_{cap})\)

7: γk ⇐ 1 − η/λmax

8: for α ∈ {B, D, E} do

9: if adaptive_distribution then

10: Update sampling p.m.f: \({\overline{q}}_{\alpha }^{k}\Leftarrow {{\boldsymbol{\lambda }}}_{\alpha }^{k}/\sum ({{\boldsymbol{\lambda }}}_{\alpha }^{k})\)

11: end if

12: Sample batch \({X}_{\alpha }^{k} \sim {\overline{q}}_{\alpha }^{k}\) from Xα.

13: Compute predictions: \({u}_{\alpha ,i}\Leftarrow {\mathcal{M}}({\theta }^{k},{x}_{\alpha ,i}^{k})\) for each \({x}_{\alpha ,i}^{k}\in {X}_{\alpha }^{k}\).

14: Compute residuals \({r}_{\alpha ,i}^{k}\) using equations (24) or (23).

15: Update tilted distribution \({q}_{\alpha ,i}^{k}\) using equation (12).

16: Apply EMA: \({\lambda }_{\alpha ,i}^{k+1}\Leftarrow {\gamma }^{k}{\lambda }_{\alpha ,i}^{k}+{\eta }^{* }{q}_{\alpha ,i}^{k}\).

17: if adaptive_weights then

18: Compute loss term: \({{\mathcal{L}}}_{\alpha }^{k}\Leftarrow \frac{1}{| {X}_{\alpha }^{k}| }{\sum }_{i}{({\lambda }_{\alpha ,i}^{k+1}{r}_{\alpha ,i}^{k})}^{2}\).

19: else

20: Compute loss term: \({{\mathcal{L}}}_{\alpha }^{k}\Leftarrow \frac{1}{| {X}_{\alpha }^{k}| }{\sum }_{i}{({r}_{\alpha ,i}^{k})}^{2}\).

21: end if

22: Compute gradient \({\nabla }_{\theta }{{\mathcal{L}}}_{\alpha }^{k}\).

23: Update average gradient: \(\parallel {\nabla }_{\theta }{\overline{{\mathcal{L}}}}_{\alpha }^{k}\parallel \Leftarrow {\gamma }_{g}\parallel {\nabla }_{\theta }{\overline{{\mathcal{L}}}}_{\alpha }^{k-1}\parallel +(1-{\gamma }_{g})\parallel {\nabla }_{\theta }{{\mathcal{L}}}_{\alpha }^{k}\parallel\).

24: end for

25: Update global weight: \({m}_{D}^{k+1}\Leftarrow {\alpha }_{g}{m}_{D}^{k}+(1-{\alpha }_{g}){m}_{E}\frac{| | {\nabla }_{\theta }{{\overline{{\mathcal{L}}}}_{E}}^{k}| | }{| | {\nabla }_{\theta }{{\overline{{\mathcal{L}}}}_{D}}^{k}| | }\).

26: Define total update direction: \({p}^{k}\Leftarrow -{m}_{E}{\nabla }_{\theta }{{\mathcal{L}}}_{E}^{k}-{m}_{B}{\nabla }_{\theta }{{\mathcal{L}}}_{B}^{k}-{m}_{D}^{k+1}{\nabla }_{\theta }{{\mathcal{L}}}_{D}^{k}\).

27: Update parameters: θk+1 ⇐ θk + lr ⋅ pk.

28: k ⇐ k + 1.

29: end while

Benchmarks

Allen-Cahn

The Allen-Cahn equation is a widely recognized benchmark in PINNs due to its challenging characteristics. The 1D Allen-Cahn PDE is defined as:

where k = 10−4. The problem is further defined by the following initial and periodic boundary conditions:

Burgers Equation

The Burgers’ equation is defined as

where u represents the velocity field, subject to the dynamic viscosity. In this study we consider two separate cases where \(\nu =\frac{1}{100\pi }\) and \(\nu =\frac{1}{1000}\). The initial conditions are described as follows

defined over the domain Ω = (−1, 1) × (0, 1) and periodic boundary conditions in x.

Korteweg-De Vries (KdV)

The Korteweg-De Vries (KdV) equation is a canonical model for shallow water waves and serves as a rigorous benchmark for PINNs due to the presence of third-order spatial derivatives and nonlinear soliton interactions. The PDE is defined as:

defined over the spatio-temporal domain x ∈ [0, 20] and t ∈ [0, 5]. The problem is closed by the following initial and boundary conditions:

where the boundary functions g0, g1, g2, and g3 are derived by evaluating the analytical solution at the domain boundaries. The exact solution, describing the interaction of two solitons, is given by:

where ζi = x − cit − xi for i = 1, 2. The specific parameters for this benchmark are set to c1 = 6.0, c2 = 2.0, x1 = − 2.0, and x2 = 2.0.

Operator learning

Let \({\mathcal{X}}\) be a space of functions over a domain \({\Omega }_{X}\subset {{\mathbb{R}}}^{{d}_{x}}\), and \({\mathcal{Y}}\) be a space of functions over \({\Omega }_{Y}\subset {{\mathbb{R}}}^{{d}_{y}}\). The operator of interest is

The goal is to learn a parametric model Gθ that approximates \({\mathcal{G}}\). The residual \(R:{\mathcal{X}}\times {\Omega }_{y}\to {{\mathbb{R}}}^{+}\) for this task is defined as the difference between the operator’s prediction and the true solution and reads

where \(v\in {\mathcal{X}}\) is an input function and \({\mathcal{G}}[v]\) is the corresponding true output function evaluated at a point x ∈ ΩY. The training data consists of Nfunc input-output function pairs, \({\{{v}_{j},{\mathcal{G}}[{v}_{j}]\}}_{j=1}^{{N}_{func}}\), where each output function \({\mathcal{G}}[{v}_{j}]\) is evaluated at N discrete points \({\{{x}_{i}\}}_{i=1}^{N}\). The standard loss is an average over both the function instances and the spatial points:

A single importance sampling or weighting scheme is ill-suited for this problem due to the two distinct levels of discretization (in function space and spatial domains). To address this, we propose a mixed strategy: importance weighting is used for the spatial points within each function, while adaptive sampling is used for the functions themselves. This is motivated by the fact that many neural operators have a fixed spatial discretization, making weighting a natural fit, while the function space offers more flexibility for sampling.

The loss function for a batch of bu functions is updated as follows

where the functions \({\{{v}_{j}\}}_{j=1}^{{b}_{u}}\) are sampled from the full set of training functions. The term Λ is a matrix of importance weights, where Λi,j corresponds to point xi for function vj. These weights are constructed from a target p.m.f. matrix Qk constructed based on the choice of potential. For instance when Φ(x) = ex, \({Q}^{k}\in {{\rm{{\mathbb{R}}}}}^{N\times {N}_{{\text{func}}}}\) is defined recursively as follows

Note that each column of the matrix Q (for a fixed function j) is a p.m.f. over the spatial points, focusing attention on high-residual regions for that specific function. The weights are then smoothed over time with an EMA

As in the previous case, we can set the learning rate for stability, for example, by normalizing it as \({\eta }^{* }=\eta /ma{x}_{j}{Q}_{i,j}\). Note that this choice of η* achieves a normalization per function which is consistent with our two-level discretization. This EMA formulation has the useful property of keeping the weights bounded. As described in15, the update rule ensures that the weights are constrained to the interval \({\Lambda }_{i,j}\in (0,\frac{{\eta }^{* }}{1-\gamma })\), which aids in stabilizing the training process.

A key advantage of this framework is that, if η ≠ 1 − γ, we can leverage the imbalance on learned spatial weights, Λi,j, to construct a sampling distribution over the functions themselves. The intuition is that functions with higher overall residuals will naturally accumulate larger Λ values over time. Therefore, we propose the following approach to create a function-level sampling distribution. First, we compute an aggregated importance score sj for each function by summing its spatial weights

These scores are then normalized to create a p.m.f. over the function space:

This distribution \(\overline{q}\) can then be used to sample the most informative functions vj for the next training batch. A detailed description of the proposed method is given in Algorithm 3

Algorithm 3

vRBA for Operator Learning

Require: Neural Operator Gθ; Training data \({\{{v}_{j},{u}_{j}\}}_{j=1}^{{N}_{func}}\); Optimizer parameters lr; vRBA parameters \(\eta ,{\lambda }_{ma{x}_{0}},{\lambda }_{cap},\gamma\); Batch size bu; Update frequency Nupdate; Total iterations Ntrain.

Ensure: Optimized network parameters θ.

1: Initialize network parameters θ0.

2: Initialize weights \({\Lambda }_{i,j}^{0}\Leftarrow 0.1{\lambda }_{max0}\) for all i, j.

3: Initialize function sampling p.m.f. \({\overline{q}}_{j}^{0}\Leftarrow 1/{N}_{func}\) for all j.

4: for k ⇐ 0 to Ntrain − 1 do

5: \({\lambda }_{max}\Leftarrow \min ({\lambda }_{cap},{\lambda }_{max0}+k/{N}_{stage})\)

6: γk ⇐ 1 − η/λmax

7: Sample a batch of bu function indices \({{\mathcal{J}}}_{k} \sim {\overline{q}}^{k}\).

8: Compute the batch residuals: \({R}_{i,j}^{k}\Leftarrow {G}_{{\theta }^{k}}({v}_{j})({x}_{i})-{u}_{j}({x}_{i})\) for \(i\in \{1..N\},j\in {{\mathcal{J}}}_{k}\).

9: Update target distribution \({Q}_{i,j}^{k+1}\) using \(| {R}_{i,j}^{k}|\) (via Eq. (12)).

10: Update weights via EMA: \({\Lambda }_{i,j}^{k+1}\Leftarrow {\gamma }^{k}{\Lambda }_{i,j}^{k}+{\eta }^{* }{Q}_{i,j}^{k+1}\) for \(j\in {{\mathcal{J}}}_{k}\).

11: Compute weighted loss for the batch: \({{\mathcal{L}}}^{k}\Leftarrow \frac{1}{{b}_{u}N}{\sum }_{j\in {{\mathcal{J}}}_{k}}{\sum }_{i=1}^{N}{[{\Lambda }_{i,j}^{k+1}{R}_{i,j}^{k}]}^{2}\).

12: Compute gradient of the loss: \({g}^{k}\Leftarrow {\nabla }_{\theta }{{\mathcal{L}}}^{k}{| }_{\theta ={\theta }^{k}}\).

13: Update parameters: θk+1 ⇐ θk − lr ⋅ gk.

14: if \(k\,(mod\,\,{N}_{update})==0\)then

15: Aggregate scores: \({s}_{j}^{k+1}\Leftarrow {\sum }_{i=1}^{N}{\Lambda }_{i,j}^{k+1}\) for j = 1. . Nfunc.

16: Normalize to form new p.m.f.: \({\overline{q}}_{j}^{k+1}\Leftarrow {s}_{j}^{k+1}/{\sum }_{\ell =1}^{{N}_{func}}{s}_{\ell }^{k+1}\).

17: end if

18: end for

DeepONet

DeepONet consists of two networks - a trunk network and a branch network. The trunk network encodes spatial coordinates and learns a basis in the target function space, while the branch network maps the input function, evaluated at a fixed set of sensors, to coefficients that project onto this learned basis. The resulting dot product yields the output function at each spatial location. This design is rooted in the operator approximation theorem and enables expressive and efficient modeling of nonlinear operators. DeepONet and its variants are widely applied in mechanics, high-speed flows36, materials science, and multi-phase flows48.

SVD-DeepONet

To address the challenges of modeling discontinuous solutions such as shocks, a two-step training strategy49 is often employed to enhance the standard DeepONet architecture. In this approach, the trunk network is trained first to extract a basis, which is then orthonormalized using QR factorization or Singular Value Decomposition (SVD). While QR factorization ensures orthonormality, SVD is frequently preferred because it provides a unique solution and generates a hierarchical set of orthonormal basis functions that allow for physical interpretation of the flow features. Once this optimized basis is established, the branch network is trained in the second stage to map input parameters to the corresponding coefficients. This modification significantly improves the network’s accuracy, efficiency, and robustness, particularly when solving Riemann problems with extreme pressure ratios36.

FNO

FNO learn solution operators by leveraging spectral convolutions in the Fourier domain4. The input function is first lifted to a high-dimensional latent space through pointwise linear transformations. A Fourier transform is applied to these lifted features, enabling convolutional operations to be performed as multiplications in frequency space. High-frequency modes are typically truncated to enforce smoothness, reduce overfitting, and improve training dynamics. The result is then transformed back to physical space via the inverse Fourier transform and projected to the target dimension. The global receptive field of FNOs makes them particularly effective for modeling long-range dependencies in solutions to PDEs, as demonstrated in applications such as weather forecasts, porous media flows, and turbulence.

TC-UNet

Unlike FNOs, TC-UNet27 operates entirely in physical space using local convolutions. The architecture is based on a UNet, a hierarchical fully convolutional neural network that captures multiscale features through successive downsampling and upsampling. TC-UNet uses time conditioning via feature-wise linear modulation (FiLM)50, applied at each level of the hierarchy. This allows the model to adaptively modulate intermediate features based on the time coordinate input, enabling accurate modeling of spatiotemporal dynamics. TC-UNet or UNet-based architectures are particularly well-suited for problems characterized by sharp gradients36 or fine-scale structures51 and are, in general, more robust to spectral bias52 compared to other neural operator architectures.

Benchmarks

Bubble growth dynamics

We study the dynamics of a single gas bubble in an incompressible liquid governed by the Rayleigh-Plesset (R-P) equation48, a nonlinear ordinary differential equation describing the evolution of the bubble radius R(t) under a time-varying pressure field P∞(t). Under isothermal assumptions and negligible temperature variations, the simplified linearized R-P equation reads

where r(t) = R(t) − R0 is the deviation from the initial bubble radius R0, ρL is the liquid density, νL is the kinematic viscosity, γ is the surface tension, and PG0 is the initial gas pressure inside the bubble.

We generate a dataset by numerically solving equation (45) for 1000 independent realizations of the forcing function Δp(t), which is constructed as a product of a Gaussian random field and a smooth ramp function, following the procedure in48. Specifically, the pressure field is modeled as

where k(t1, t2) is a squared exponential kernel with correlation length ℓ, and s(t) is a smooth ramp used to induce a sharp initial pressure drop.

The data were split into training, validation, and testing subsets in the ratio 80:10:10. Each simulation yields a trajectory of the bubble radius R(t), sampled over a fixed time window with initial condition R(0) = R0, \(\mathop{R}\limits^{^\circ }(0)=0\). All simulations assume periodic boundary conditions and are performed with parameters corresponding to the physical properties of water at room temperature.

High-pressure sod-shock tube

We consider the one-dimensional Riemann problem governed by the compressible Euler equations of gas dynamics. This system describes the conservation of mass, momentum, and energy in an inviscid flow and is given by the hyperbolic system

where the vector of conservative variables U and the flux vector F(U) are defined as

Here, ρ denotes the fluid density, u the velocity, and p the pressure. The total energy E is related to the pressure by the equation of state for an ideal gas, \(\rho E=\frac{p}{\gamma -1}+\frac{1}{2}\rho {u}^{2}\), with the specific heat ratio set to γ = 1.4.The system is subject to discontinuous initial conditions consisting of two constant states, UL and UR, separated by a diaphragm at x = xc. In this study, we specifically focus on the High-Pressure Ratio (HPR) regime, characterized by extreme pressure jumps (up to a ratio of 1010) across the discontinuity1. The dataset is generated by varying the initial left-state pressure pL while keeping the right-state parameters fixed, utilizing an exact Riemann solver to provide the ground truth solutions at a final time tf.While the system involves three primitive variables, we restrict the neural operator to map the initial pressure parameter pL exclusively to the density field ρ(x, tf). The density profile is particularly challenging and representative as it uniquely exhibits all three fundamental wave structures inherent to the Riemann problem: the rarefaction wave, the contact discontinuity, and the shock wave.

Navier-Stokes Equations- Kolmogorov’s flow

We consider the two-dimensional unsteady Navier-Stokes equations in vorticity formulation, modeling an incompressible, viscous fluid on the periodic domain (x, y) ∈ (0, 2π)2. The system is driven by a Kolmogorov-type external forcing, as previously studied in53, and is governed by:

with viscosity ν = 10−3, and the source term defined as

where χ = 0.1. The Laplacian Δ acts in two spatial dimensions, ω denotes the vorticity, and u is the velocity.

Initial conditions ω0(x, y) are sampled from a Gaussian random field with zero mean and covariance operator \({\mathcal{N}}(0,{7}^{3/2}{(-\Delta +49I)}^{-5/2})\). To generate the data, we employ a Fourier-based pseudo-spectral solver introduced in4. The simulation output consists of 1000 spatiotemporal vorticity realizations, each on a 512 × 512 spatial grid, subsequently downsampled to 128 × 128 for downstream learning tasks.

We partition the dataset into training, validation, and testing subsets in an 80:10:10 ratio. A neural operator model \({\mathcal{G}}\) is trained to predict evolution of the vorticity field by learning the mapping from the initial condition at t = 0 to the interval [t ∈ (0, 50]).

For the 2D Navier-Stokes problem, we train a Fourier Neural Operator (FNO) to learn the mapping from an initial vorticity field ω0(x, y) to the full spatiotemporal solution ω(x, y, t).

Wave Equation

We investigate the propagation of acoustic waves governed by the linear wave equation in heterogeneous media. In 2D, the governing equation is given by:

where u(x, t) represents the acoustic pressure at spatial location x = (x, y), c(x) is the spatially varying wave speed, and Δ denotes the Laplacian operator. We assume fully reflective (homogeneous Dirichlet) boundary conditions throughout the domain.

For the spatially varying wave speed, we set \(c(x,y)=1+\sin (x)\sin (y)\). The initial pressure profile u0(x) is modeled as a localized Gaussian source centered at a point xc, i.e.,

with xc sampled randomly on the spatial grid. We solve this system numerically using a second-order finite difference method on a grid with a spatial resolution of 64 × 64 and generate 1000 simulations corresponding to different realizations of u0. The dataset is partitioned into training, validation, and test sets in the ratio 80:10:10. We train a neural operator \({\mathcal{G}}\) to learn the mapping u(x, 0) ↦ u(x, t) for all [t ∈ (0, 2]).

In our final operator learning example, we train a Time-Conditioned U-Net (TC-UNet) to learn the solution operator for the 2D wave equation, mapping an initial pressure profile u0(x) to the full wave propagation over time u(x, t).

vRBA hyperparameter selection

This section details the selection of the hyperparameters introduced by the vRBA framework. The present formulation does not require extensive problem-specific tuning; in fact, all hyperparameters discussed below were held constant across every benchmark presented in this paper. Unless otherwise stated, we utilize a consistent set of vRBA hyperparameters: an Exponential Moving Average (EMA) memory of γ = 0.999, an EMA learning rate of η = 0.01, and a smoothing factor of ϕ = 1.0.

Annealing schedule

For the exponential potential, the convex duality between entropy and free energy warrants any choice of annealing schedule. We observe the parallel between vRBA and simulated annealing, which is provably convergent under sufficiently low temperature decay, e.g., [ref. 46, Theorem 1]. Inspired by such theoretical results, our annealing schedule (see Eq. (19)) follows a logarithmic decay in the number of iterations scaled by a universal constant, which we took to be one (i.e., c = 1) in all our examples.

For the general potential-dependent case, there are generically two cases. For potentials such as \(\cosh (r)\), \({e}^{{r}^{2}}\), and \((1+r)\log (1+r)-r\) where the appropriate ϵ is not easily found via analytic calculations, the annealing parameter is determined dynamically at each iteration by solving the optimality condition via Newton’s method. On the other hand, for the polynomial (rp, for p > 1) potentials, the formulation remains effectively constant throughout training. Consequently, these parameters are governed by theoretical or numerical optimality conditions rather than manual hyperparameter selection.

EMA learning rate

Here, the values of λ are bounded in the interval (0, η*/(1 − γ)), implying a maximum importance score of \({\lambda }_{\max }={\eta }^{* }/(1-\gamma )\). These parameters were originally introduced in the RBA framework15, and we utilize the exact same values established in that work. We note that the use of Exponential Moving Averages (EMA) to stabilize stochastic estimates is a standard practice in machine learning, most notably in the Adam optimizer43, where it is essential for convergence stability. Subsequent analyses in related studies, such as the sensitivity analysis performed for standard RBA (Φ(r) = r2) in KKANs42, have investigated the effect of \({\lambda }_{\max }\) on convergence. That study demonstrated that while initializing with a low bound (\({\lambda }_{\max }\approx 1\)) yields suboptimal results due to insufficient attention, and overly aggressive bounds (\({\lambda }_{\max } > 20\)) can slightly degrade performance, there exists a broad, robust plateau of optimal performance for \({\lambda }_{\max }\in [5,20]\). The configuration used in this paper targets \({\lambda }_{\max }\approx 10\), which lies in this optimal regime. Thus, the selection of η* is not a new free parameter requiring tuning, but is a fixed value inherited from previous empirical evidence.

EMA memory parameter

Similar to the learning rate, we inherit the memory parameter from the previous studies that introduced the RBA framework15. To further validate the robustness of the proposed method, we performed a sensitivity analysis in the Supplementary Information. Our results indicate that our framework is quite robust to this value. For operator learning, γ cannot be zero since we need to collect the λ scores to sample the function space (see Eq. (43)); however, our results indicate that γ ∈ [0.4, 0.999] outperforms the baseline. On the other hand, for PINNs with second-order optimizers, it is even possible to train without any memory, significantly outperforming the baselines. Nevertheless, the values used in this study, inherited from previous studies, lead to the best results in our benchmarks.

Stabilization parameter

The stabilization parameter ϕ governs the convex combination of the adaptive distribution q and a uniform prior pu. We primarily utilized pure adaptivity by setting ϕ = 1.0 for all PIML examples, while adopting a slight regularization for Operator Learning benchmarks. A sensitivity analysis provided in SI confirms that the method is robust to this choice, as vRBA consistently outperforms the baseline even in the absence of regularization. Notably, the inclusion of the uniform prior yields performance gains specifically for potentials targeting the L∞ norm, such as the exponential variants, by mitigating their aggressive nature; for variance-minimizing potentials, the sensitivity to ϕ is negligible.

SSBroyden optimizer

This section details our custom JAX implementation of the Self-Scaled Broyden (SSBroyden) optimizer, which was used for all second-order optimization experiments. The original method, proposed by Urbán et al.9, relies on modified SciPy routines that are CPU-bound and not directly portable to a JAX-native, GPU-accelerated workflow.