Abstract

Prolonged exposure to natural factors and human activities has caused severe brick missing damages in many Great Wall defensive forts, weakening their load-bearing structures. Given the Great Wall’s vast scale, remote locations, and complex terrain, there is an urgent need for a method to quickly identify and locate such damages to support daily monitoring and maintenance. This study proposes a computer vision-based two-phase automatic damage detection and localization method for ancient city walls. In phase one, an Improved-YOLOv5n object detection network, trained on 1197 UAV images, integrates attention mechanisms and knowledge distillation to enhance small target detection, achieving a mean average precision of 74.5%. In phase two, a genetic algorithm-optimized multi-threshold OTSU segmentation and image processing are used to localize damages and extract edge locations, aiding subsequent modeling. The findings of this study can provide a time-efficient, high-accuracy and non-destructive solution for routine structural safety assessments of ancient city walls.


Introduction

Ancient city walls are large-scale defensive structures composed of walls and auxiliary military facilities such as defensive forts. Among them, the Great Wall is the most representative example and stands as an important symbol of ancient Chinese civilization, possessing significant historical, artistic, and scientific value. Compared to other historical masonry structures, such as churches, castles, or residences, the Great Wall is unique in terms of scale, structural design, and geographic location. Firstly, in terms of scale, the Great Wall is the largest linear heritage site in the world, spanning several provinces and totaling 21,196 km in length1. Secondly, in terms of structural design, the Great Wall was designed as a functional military fortification, emphasizing defensive performance rather than decorative elements. The spatial layout of its associated defensive forts is relatively simple, with a more streamlined structure. Thirdly, in terms of geographic location, the construction of the Great Wall spans a variety of natural landscapes2,3, including mountains, deserts, grasslands, and hills, many of which are in remote and scarcely inhabited regions. Considering the Great Wall’s massive scale and often inaccessible locations, conservation monitoring faces significant logistical challenges, resulting in delayed interventions and substantial structural damage in multiple sections. For instance, in the Beijing section of the Great Wall, only 7.02% (about 25,861 m) is well-preserved, while 61.5% (about 226,379 m) is severely damaged4.

Traditional architectural heritage inspection relies on manual, non-automated visual checks. This approach is often costly and time-consuming, and human limitations make it difficult to inspect hard-to-reach locations5. Given the Great Wall’s enormous scale and often inaccessible locations, manual visual inspection cannot meet daily monitoring and maintenance needs, making automated methods necessary. In recent years, computer vision methods have gained traction in civil engineering and manufacturing. These techniques can offer detection accuracy surpassing that of traditional visual inspection while being flexible, efficient, and capable of real-time operation. Additionally, unmanned aerial vehicle (UAV) technology has matured, enabling coverage of vast areas and acquisition of high-resolution images, even in hard-to-reach locations. Therefore, this paper uses UAVs for large-scale automated detection and proposes a computer vision-based algorithm for identifying and locating typical damages on ancient city walls.

In this paper, automatic damage detection and localization using computer vision are divided into two aspects: object detection and image processing methods. Object detection involves identifying and determining the position and category of objects of interest in images. Traditional object detection is divided into three stages: region selection, feature extraction, and classification, with relatively low detection speed and accuracy6. Currently, object detection algorithms using convolutional neural networks (CNNs) have become mainstream and are widely applied in the field of building health monitoring7,8,9,10. CNN-based object detection algorithms are typically categorized into two-stage and single-stage methods. Single-stage algorithms perform object localization and recognition simultaneously, resulting in faster inference speeds while maintaining good accuracy, which makes them more suitable for real-time detection. Notable examples include the YOLO (You Only Look Once) series11, from v1 to v8, and SSD (Single Shot MultiBox Detector)12.

In recent years, CNN-based object detection algorithms have been applied to damage detection in masonry heritage. For example, a Faster R-CNN model based on the ResNet101 framework was used to identify spalling and efflorescence on the masonry walls of the Forbidden City13, with the detection conducted from a handheld mobile perspective. Ali et al. also trained a Faster R-CNN model to identify surface brick damage in the Wat Phra Si Sanphet Temple14. Haciefendioglu et al. combined ResNet50, Grad-CAM and K-means clustering to detect cracks in historical masonry structures15. Specific studies have also targeted the Great Wall. For instance, Wang et al. established a cloud platform capable of large-scale data collection and damage detection, covering spalling, severe spalling, efflorescence, and cracks, with the detection box size set to a single brick16. Li et al. established a dataset based on the Shanhaiguan Great Wall, comprising 361 images representing typical surface damages, including chalking, plants, ubiquinol, and cracking17.

Although these existing CNN-based techniques can effectively replace manual labor for masonry heritage damage detection, they are often limited to specific visual areas such as single locations, and primarily focus on surface damage18. For the daily maintenance of large-scale ancient city walls, surface damage detection alone cannot meet timeliness requirements. Consequently, there is an urgent need for a method that can rapidly identify damage over a large area.

While an object detection algorithm can detect the position of damage in an image, it cannot recover real physical information, so image processing methods centered on image segmentation and image measurement are introduced in this study. Traditional image segmentation algorithms include threshold-based segmentation, region-based segmentation, edge-based segmentation, clustering-based segmentation, and segmentation methods based on specific theories such as mathematical morphology19. Threshold-based methods and edge detection remain widely adopted for segmenting building structures in images with cluttered backgrounds20. Combining the Sobel edge detection method with an adaptive threshold approach can eliminate noise during crack detection more effectively than using the threshold approach alone21. Ding et al. used a single-threshold image segmentation method followed by the Canny operator to efficiently extract cracks on the surface of a wooden structure22. Xia et al. used the Sobel edge detection operator to recognize building surface cracks and the OTSU method to segment brick spalling on building facades23. Yang et al. proposed an improved algorithm combining dilation and the Canny algorithm to detect cracks on tunnel surfaces24.

However, due to the complex background interference in UAV-captured images, especially for brick missing areas on ancient city walls, single-algorithm segmentation approaches are often inadequate. Therefore, an effective combination of multiple segmentation algorithms is essential. After segmentation, image measurement quantifies information such as the size, shape, and position of objects in the image. Image measurement is usually realized by the targeting method, i.e., using objects of known physical dimensions in the image to calculate the relationship between pixel size and true size and thus quantify the damage22,25,26. For example, Xia et al. calculated pixel calibration values from known face brick dimensions to obtain spalling areas as well as crack lengths23. In practical applications, it is necessary to consider multiple factors such as image quality, noise, and limitations of the capturing device.

Despite recent progress, existing research has the following limitations:

(1) Most automated methods target surface-level defects using close-range imagery, which remains labor-intensive and time-consuming. This approach does not meet the demands of large-scale monitoring in remote areas like the Great Wall.

(2) Current datasets of ancient city wall damage mainly cover surface damage types. However, owing to its long service time, the Great Wall suffers from extensive brick missing, i.e., the direct loss of the defensive forts’ walls, and research on this type of damage remains lacking.

(3) Existing image processing methods for building damage identification are applicable to building surface damage, which typically features more homogeneous backgrounds, whereas a single image segmentation method faces challenges in segmenting UAV images with complex backgrounds. In addition, the edges of brick missing areas are usually very irregular, making it necessary to propose a comprehensive localization method to obtain the precise location of these edges.

To address the above limitations, this study proposes a computer vision-based two-phase automatic detection and localization method for brick missing damages of large-scale ancient city walls. Firstly, UAVs are used to capture high-resolution images of the Great Wall from a wide perspective. The images include the entire defensive fort, trails, and natural background, and the brick missing damages in them are annotated to construct the corresponding dataset. Secondly, to meet the timeliness requirements for the daily detection of large-scale ancient city walls, the YOLOv5n object detection algorithm, which has a smaller model size and faster detection speed, is selected for detection. Targeted improvements are made to enhance its detection accuracy for small targets in the complex background of UAV images. Finally, to address the challenges in segmenting and localizing brick missing damage under complex UAV backgrounds, a hybrid process combining genetic algorithm-based multi-threshold image segmentation with complementary image processing techniques is proposed. This process obtains the position of the brick missing damage on the defensive fort, facilitating subsequent refined modeling.

The structure of this study is as follows: Section “Methods” describes the framework of the proposed methodology, including the process of dataset establishment, the improvements to the object detection network, and the principles of the key image processing algorithms. Section “Results” presents the performance of the proposed object detection network, the ablation test results, and the segmentation and localization results for brick missing damage. Finally, Section “Discussion” summarizes the research findings, highlights the advantages of the proposed methodology, and identifies limitations as well as future research directions.

Methods

Overall methodological framework

Focusing on ancient city walls, a two-phase automatic detection and localization method is proposed for typical damages. The framework of this method is illustrated in Fig. 1 and has the following characteristics: (1) A field inspection of the ancient city wall, exemplified by the Great Wall, was conducted. High-resolution images of the defensive forts were captured from different angles using UAV aerial photography, and brick missing damage was subsequently annotated. This process resulted in a damage dataset comprising 246 defensive forts and 1197 images. (2) An Improved-YOLOv5n model was trained to detect brick missing damage on the ancient city wall. The original YOLOv5n was extended with an attention mechanism, improving the detection of small targets in the UAV view. Additionally, a knowledge distillation method enables the lightweight YOLOv5n model, which has fewer network layers, to learn richer feature representations, achieving detection accuracy comparable to the more complex YOLOv5s while maintaining its original inference speed. (3) An image processing pipeline, mainly consisting of image segmentation and image measurement methods, was proposed to obtain the position of brick missing damage. Within it, the OTSU multi-threshold image segmentation algorithm based on a genetic algorithm proved effective for UAV photographs with complex backgrounds.

The blue dashed boxes represent the three stages of the proposed method. Stage I (Inspection): sample defensive forts used for dataset construction. Stage II (Phase 1: detection): the input dataset is processed by Improved-YOLOv5n to identify brick missing locations. Stage III (Phase 2: localization): three key steps for brick missing edge localization, including bounding box cropping, image segmentation and image measurement.

Damage inspection

The ancient city wall, represented by the Great Wall, primarily consists of the wall body, defensive forts and wall platforms situated on top of or around the wall, etc. The well-preserved sections of the Great Wall, mainly built during the Ming Dynasty (1368–1644), were constructed using primarily bricks27. In the Ming Great Wall system, the Beijing sections are among the most well-preserved and representative. This study investigates the sections of the Great Wall in Miyun and Yanqing districts, where defensive forts are most densely distributed, and conducts on-site research in these areas. This section of the Great Wall is surrounded by trees or fields, making it highly susceptible to the effects of natural climate. Moreover, due to the surrounding terrain being predominantly steep and remote, it is rarely accessed by humans, resulting in delayed conservation monitoring.

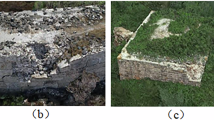

According to on-site research, the Great Wall is severely damaged, with widespread brick loss observed on the top surfaces and wall bodies of many forts. This brick missing damage has led to the direct loss of structural load-bearing components, adversely affecting the structure’s load-bearing capacity and stability. In this study, brick missing is annotated on the parts of the wall or top surface where bricks are missing. Figure 2 illustrates the appearance of brick missing and its corresponding annotation range. The green box represents the annotated bounding box, which contains the damaged wall, the damaged edges, and the background. The distinctive characteristic of this type of damage lies in the irregularity of its edges. It should be noted that wide-angle UAV photography introduces challenges including complex backgrounds and high levels of noise. To address these, improvements were made to both the object detection network and image segmentation method.

The green bounding box indicates the actual brick missing location, with dashed leader lines connecting to magnified detail views.

In this study, images were collected using a DJI Phantom 4 with a wide-angle perspective. The collected images have a high resolution of 5472 × 3648 pixels. A total of 1197 images, which include defensive forts, trails, and natural backgrounds and exhibit typical brick missing damage, were selected for the subsequent dataset construction. Typical brick missing damages were annotated using the deep learning image annotation software Labelme. The dataset includes 246 defensive forts, of which 244 are located in the Miyun district and 2 in the Yanqing district. Each fort was photographed from multiple UAV angles without overlapping viewpoints, ensuring that damage observed from one angle is not redundant with another. For example, brick missing observed from the front of a fort cannot be represented in an image taken from the back. Images and annotations of a fort photographed from three sides are shown in Fig. 3, with the green boxes indicating the annotation boxes.

a Front, b Side 1, c Side 2.

The images were randomly shuffled and divided into training, validation, and test sets in an 8:1:1 ratio, giving 957 training images, 120 validation images, and 120 test images. Because the amount of data is limited and damage conditions vary between defensive forts, forts with more typical and severe brick missing damage contribute a greater number of images, so images of the same fort can appear in more than one subset. Spatial overlap was therefore assessed by viewing angle: if the angular difference between images of the same fort in the training and test sets exceeds 90 degrees, the images are not considered spatially overlapping; if the angular difference is less than 90 degrees, they are considered spatially overlapping. In the end, the training and validation sets contain a total of 240 defensive forts, while the test set contains 32 defensive forts, of which 4 have spatial overlap between the training and test images. The spatial distribution of the defensive forts in the dataset is illustrated in Fig. 4. Additionally, to enhance the diversity and quantity of the dataset, the YOLOv5 detection network employed various data augmentation strategies during training, including random rotation, translation, perspective transformation, flipping, and mosaic augmentation (random scaling, cropping, and splicing of four images).

The names above the black circles indicate the administrative districts: Miyun District, Huairou District, and Yanqing District. The symbols indicating the Great Wall and forts are explained in the box at the lower left.
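The 8:1:1 split and the 90-degree overlap rule described above can be sketched as follows. This is a minimal illustration, not the authors' script: the image identifiers, the random seed, and the idea that each image carries a viewing angle in degrees are hypothetical, and an angular difference of exactly 90 degrees (which the text does not specify) is treated here as non-overlapping.

```python
import random

def split_dataset(items, ratios=(8, 1, 1), seed=0):
    """Shuffle items and split them into train/val/test by the given ratios."""
    items = list(items)
    random.Random(seed).shuffle(items)
    total = sum(ratios)
    n_train = len(items) * ratios[0] // total        # floor of the training share
    n_val = round(len(items) * ratios[1] / total)    # rounded validation share
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]                   # remainder goes to the test set
    return train, val, test

def spatially_overlapping(angle_a_deg, angle_b_deg, limit_deg=90.0):
    """Apply the 90-degree rule to two viewing directions of the same fort."""
    diff = abs(angle_a_deg - angle_b_deg) % 360.0
    diff = min(diff, 360.0 - diff)                   # smallest angle between views
    return diff < limit_deg

# Hypothetical identifiers standing in for the 1197 UAV images.
image_ids = [f"img_{i:04d}" for i in range(1197)]
train, val, test = split_dataset(image_ids)
print(len(train), len(val), len(test))
```

With 1197 images this reproduces the reported subset sizes of 957, 120, and 120.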

Damage detection network

The YOLO series algorithms are widely recognized for their efficiency in one-stage target detection: they combine region generation, feature extraction, and classification, and complete the detection in a single forward propagation. This makes YOLO faster than two-stage algorithms and particularly suitable for large-scale applications such as routine monitoring of the Great Wall, where timely detection of newly emerged damage is essential for early intervention.

YOLOv5, a well-developed and robust version within the YOLO series, is known for its fast detection speed and high accuracy. It offers five versions categorized by network size and depth: YOLOv5x, l, m, s, and n. Among them, the x network has the highest complexity, while the n network is the lightweight version. To meet the demand for rapid detection, YOLOv5n is selected for improvement. Figure 5 illustrates the architecture of the Improved-YOLOv5n proposed in this study.

The white dashed boxes contain the network input and output. The gray dashed boxes enclose the key network architecture (including backbone, neck, and head). The rounded rectangle boxes represent different network layers. The stacked gray rectangles indicate feature maps, with lower values showing their dimensions (width × height × channels). The red cubes denote prediction heads, with lower values displaying feature map dimensions (width × height) × prediction data dimensions.

The input of the network consists of three components: data augmentation, adaptive anchor calculation, and image adaptive scaling28, which enable effective preprocessing of the dataset. The backbone of the network, which is responsible for feature extraction, primarily consists of CBS (Conv + BatchNorm + SiLU), C3 (cross-stage partial network with 3 convolutions), and SPPF (spatial pyramid pooling-fast) modules. C3 modules enhance training stability through feature splitting and residual connections, while SPPF modules achieve efficient multi-scale feature fusion via repeated 5 × 5 pooling operations. The neck of the network, designed to better fuse and extract the features delivered by the backbone, adopts the FPN-PAN structure. FPN (feature pyramid network) uses top-down connections to enable the network to focus on multi-scale information, and PAN (path aggregation network) addresses the issue of blurred target information after features pass through multiple layers of the FPN. The FPN-PAN feature pyramid structure enables the object detection network to understand the image more comprehensively by fusing multi-scale feature information at different layers, thereby improving the object detection performance.

Integration of attention mechanism

Due to the large viewing angle of the UAV, the target brick missing damages often appear small in the images, while the background occupies a large proportion, leading to a reduction in the number of effective channels for object detection in multi-channel detection29. Therefore, SEAttention is introduced to assign different weights to different feature channels, allowing the model to focus more effectively on target damages.

The SEAttention operation primarily consists of two phases: squeeze and excitation. In the squeeze phase, the feature map undergoes spatial dimension reduction to generate a 1 × 1 × C vector, where C represents the number of channels in the feature map. Subsequently, in the excitation phase, this vector is transformed into channel weights. Finally, the weight vector (1 × 1 × C) is multiplied with the corresponding channels of the feature map. This process ensures that each channel is assigned a corresponding weight, thereby enhancing the model’s ability to detect small targets. In our experiments, adding the SEAttention module in front of the C3 block in the neck yielded improved performance. The modified network architecture with this attention mechanism is illustrated in Fig. 5.
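The squeeze, excitation, and scaling steps can be illustrated with a small dependency-free sketch. It assumes the common squeeze-and-excitation layout (global average pooling, two fully connected layers without biases, ReLU, then sigmoid); the reduction ratio `r`, the random weights, and the toy feature map are hypothetical, and an actual network would implement this as a trained PyTorch module rather than with nested lists.

```python
import math
import random

def se_attention(feature_map, w1, w2):
    """Reweight the channels of a C x H x W feature map given as nested lists.

    w1: (C // r) x C weights of the first fully connected layer (assumed layout)
    w2: C x (C // r) weights of the second fully connected layer (assumed layout)
    """
    # Squeeze: global average pooling turns each channel into one scalar.
    squeezed = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]
    # Excitation: FC -> ReLU -> FC -> sigmoid gives one weight per channel.
    hidden = [max(0.0, sum(w * s for w, s in zip(row, squeezed))) for row in w1]
    weights = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    ]
    # Scale: multiply every value in a channel by that channel's weight.
    scaled = [
        [[value * weight for value in row] for row in channel]
        for channel, weight in zip(feature_map, weights)
    ]
    return scaled, weights

rng = random.Random(0)
C, H, W, r = 4, 3, 3, 2   # toy sizes, chosen only for illustration
fmap = [[[rng.random() for _ in range(W)] for _ in range(H)] for _ in range(C)]
w1 = [[rng.uniform(-1, 1) for _ in range(C)] for _ in range(C // r)]
w2 = [[rng.uniform(-1, 1) for _ in range(C // r)] for _ in range(C)]
scaled, weights = se_attention(fmap, w1, w2)
print(len(weights), all(0.0 < w < 1.0 for w in weights))
```

Each channel receives a single weight between 0 and 1, so channels dominated by background can be suppressed relative to channels that respond to the damage.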

Model distillation

While YOLOv5n achieves faster detection speed due to fewer network layers, it typically underperforms in detection accuracy compared to larger models such as YOLOv5s and YOLOv5x. To enhance YOLOv5n’s detection performance while maintaining its fast inference speed, this paper employs a knowledge distillation approach. This method enables the lightweight model to achieve performance close to that of the complex model30.

Knowledge distillation is a technique in which a complex model, termed the teacher model, guides the training of a smaller target model, termed the student model. In image deep learning tasks, feature maps play a crucial role by capturing different levels of features from the input image, ultimately forming a complete understanding of the image. Compared with those of a student model, the feature maps generated by a teacher model provide a more accurate understanding of the image. Channel-wise knowledge distillation31 is a representative type of feature distillation. By applying softmax normalization to each channel of the teacher model’s feature map, the probability distribution of each channel is obtained, allowing for the identification of the relative importance of each position in the image. The student model can enhance its detection capability by learning this importance. In UAV image recognition, where targets are small and background noise dominates, feature distillation is well suited because it simultaneously highlights target locations and amplifies foreground features.
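The per-channel softmax and the matching of teacher and student distributions can be sketched as below. This is a simplified illustration of a channel-wise distillation loss based on the Kullback-Leibler divergence, not the training code used in this study: the temperature `tau`, the toy feature maps, and the assumption that teacher and student maps already have the same number of channels (in practice an adaptation layer is typically needed) are all hypothetical.

```python
import math

def spatial_softmax(channel, tau=1.0):
    """Turn one H x W channel into a probability distribution over positions."""
    flat = [v / tau for row in channel for v in row]
    peak = max(flat)                        # subtract the max for stability
    exps = [math.exp(v - peak) for v in flat]
    total = sum(exps)
    return [e / total for e in exps]

def channel_wise_kd_loss(teacher, student, tau=1.0):
    """Mean KL(teacher || student) over the channels of two C x H x W maps."""
    loss = 0.0
    for t_channel, s_channel in zip(teacher, student):
        p = spatial_softmax(t_channel, tau)
        q = spatial_softmax(s_channel, tau)
        # Positions with zero teacher probability contribute nothing.
        loss += sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return (tau ** 2) * loss / len(teacher)

# Toy single-channel maps: the teacher highlights the bottom-right position.
teacher = [[[0.0, 0.0], [0.0, 4.0]]]
student_far = [[[4.0, 0.0], [0.0, 0.0]]]
loss_same = channel_wise_kd_loss(teacher, teacher)
loss_far = channel_wise_kd_loss(teacher, student_far)
print(loss_same, loss_far > loss_same)
```

The loss vanishes when the student reproduces the teacher's spatial distribution and grows when the student attends to a different position, which is what pushes the student toward the teacher's notion of where the target lies.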

In this study, YOLOv5s with SEAttention was used as the teacher model and YOLOv5n with SEAttention was used as the student model for knowledge distillation. The recognition performance of the student model was improved, resulting in the final Improved-YOLOv5n, as shown in Fig. 6.

The left-side teacher model is YOLOv5s integrated with SEAttention, while the right-side student model is YOLOv5n with SEAttention. The dashed box in the middle represents the feature map knowledge transfer process. The dataset section at the bottom indicates that both teacher and student networks share the same dataset during knowledge distillation.

Damage localization process

Determining the location of brick missing on the defensive forts is crucial for the subsequent modeling and preventive protection of the forts. This paper proposes an image-based damage localization method consisting of three key stages: perspective transformation matrix acquisition, brick missing edge extraction, and coordinate transformation between edge locations and defensive fort corner points. The localization process of this study is shown in Fig. 7.

(1) Firstly, a camera calibration method32 is used to remove camera distortion and ensure positioning accuracy. Subsequently, perspective transformation is conducted by marking the target plane, enabling the extraction of the four corner points of the target plane and the perspective transformation matrix.

(2) A key objective is to obtain the precise location of the clear edge of brick missing damage. The UAV image is initially processed using Improved-YOLOv5n for detection. Once the target brick missing detection box is acquired, a multi-threshold segmentation algorithm based on a genetic algorithm is applied to segment the cropped target box image. The segmented sub-images then undergo morphological processing to determine absolute edge point coordinates in the original image space. Specifically, binary segmentation is first performed using a threshold recommended by the multi-threshold segmentation algorithm; opening and closing operations are then used to eliminate the voids and noise between the target and the background33; finally, the Canny operator34 is applied for edge detection to obtain the locations of the damage edges.

(3) After the positions of the corner points and of the edge points relative to the original image are obtained, the perspective transformation matrix is applied to convert both sets of points, thus eliminating perspective distortion. The edge position of the missing brick relative to its corresponding plane can then be determined. Repeating the above operation on multiple images yields the positions of multiple partial edges of the brick missing area relative to the plane where they are located. Through curve aggregation, the complete edge position of the brick missing area is finally obtained.

The light gray rounded rectangle represents the input image. The blue rectangles indicate the key processing steps. The yellow parallelogram denotes the perspective transformation matrix. The orange parallelograms represent the target points' coordinates relative to the original image. The dark gray rounded rectangle indicates the partial brick missing edge positions on the target plane. The red rounded rectangle shows the final position of the complete brick missing edge on its respective plane.
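The binary segmentation and morphological cleaning in step (2) can be sketched without external libraries as follows. Two simplifications are assumed: a 3 × 3 structuring element with zero padding at the image border, and a simple boundary extraction (foreground pixels removed by erosion) standing in for the Canny operator. The gray values, the threshold interval, and the detection box offset `(box_x0, box_y0)` are hypothetical.

```python
def binarize(gray, low, high):
    """Mark pixels whose gray value lies in [low, high] as foreground (1)."""
    return [[1 if low <= v <= high else 0 for v in row] for row in gray]

def _morph(mask, keep):
    """Apply a 3 x 3 structuring element; keep is all (erode) or any (dilate)."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [
                mask[j][i] if 0 <= j < h and 0 <= i < w else 0
                for j in range(y - 1, y + 2)
                for i in range(x - 1, x + 2)
            ]
            out[y][x] = 1 if keep(window) else 0
    return out

def erode(mask):
    return _morph(mask, all)

def dilate(mask):
    return _morph(mask, any)

def opening(mask):
    """Erosion then dilation: removes small isolated foreground specks."""
    return dilate(erode(mask))

def closing(mask):
    """Dilation then erosion: fills small holes inside the foreground."""
    return erode(dilate(mask))

def edge_points(mask, box_x0, box_y0):
    """Boundary pixels of the mask, shifted into original-image coordinates."""
    inner = erode(mask)
    return [
        (x + box_x0, y + box_y0)
        for y, row in enumerate(mask)
        for x, v in enumerate(row)
        if v and not inner[y][x]
    ]

# Toy 7 x 7 crop: a 3 x 3 dark region plus one isolated noise pixel.
gray = [[200] * 7 for _ in range(7)]
for y in range(2, 5):
    for x in range(2, 5):
        gray[y][x] = 130
gray[0][6] = 130
cleaned = closing(opening(binarize(gray, 127, 132)))
edges = edge_points(cleaned, box_x0=100, box_y0=50)
print(len(edges))
```

Adding the box offset converts edge coordinates from the cropped detection box back to the original image, which is the coordinate frame required by the subsequent perspective transformation.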

In this section, the three key image processing methods involved in the aforementioned localization process are introduced: the multi-threshold image segmentation algorithm based on a genetic algorithm, the perspective transformation method, and the edge curve aggregation method.

Multi-threshold image segmentation

The OTSU algorithm, also known as the maximum inter-class variance method, is commonly used for adaptive thresholding during binary image segmentation35. Because of the complex background of UAV photographs, directly applying a single-threshold OTSU segmentation algorithm will miss the boundary of the brick missing area. Therefore, a genetic algorithm is introduced to segment images with multiple thresholds.

Multi-threshold image segmentation first converts the image into a grayscale map whose pixel values lie in the grayscale range 0 to L−1. Subsequent computations are performed on the image grayscale values, with the goal of segmenting the image into n + 1 threshold intervals using the set of thresholds \([\,{T}_{1},\,{T}_{2},\ldots ,{T}_{{\rm{n}}}]\). The cumulative interclass variance over the threshold intervals is shown in Eq. (1), where \({\omega }_{i}(\,{T}_{i})\) denotes the probability of occurrence of pixels with grayscale values from \({T}_{i-1}\) to \({T}_{i}\), \({\mu }_{i}(\,{T}_{i})\) is the grayscale mean value of the threshold interval from \({T}_{i-1}\) to \({T}_{i}\), and \(\mu\) is the grayscale mean value of the whole image:

The maximum interclass variance is defined in Eq. (2):
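For reference, under the definitions above and assuming the standard multi-level OTSU formulation, Eqs. (1) and (2) can be written in the following form. The boundary values \({T}_{0}=0\) and \({T}_{n+1}=L-1\) are an assumption introduced here so that the sum covers all n + 1 intervals.

```latex
\sigma^{2}(T_{1}, T_{2}, \ldots, T_{n})
  = \sum_{i=1}^{n+1} \omega_{i}(T_{i}) \left( \mu_{i}(T_{i}) - \mu \right)^{2}
  \tag{1}

(T_{1}^{\ast}, T_{2}^{\ast}, \ldots, T_{n}^{\ast})
  = \mathop{\arg\max}_{0 < T_{1} < T_{2} < \cdots < T_{n} < L-1}
    \sigma^{2}(T_{1}, T_{2}, \ldots, T_{n})
  \tag{2}
```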

When the interclass variance \({\sigma }^{2}(\,{T}_{1},\,{T}_{2},\ldots ,{T}_{{\rm{n}}})\) reaches its maximum value, the optimal set of thresholds for OTSU multi-threshold segmentation, \((\,{T}_{1}^{\ast },\,{T}_{2}^{\ast },\ldots ,{T}_{{\rm{n}}}^{\ast })\), is obtained. The core idea of the OTSU algorithm is thus to find the optimal set of thresholds, which aligns with the fundamental principle of genetic algorithms36. In this paper, each chromosome (individual) represents a unique set of thresholds. Through the process of natural evolution simulated by the genetic algorithm, individuals in the population continuously evolve toward threshold combinations of higher fitness through operations such as selection, crossover, and mutation.
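A minimal sketch of this search is given below, using the interclass variance as the fitness function. The specific operators (truncation selection with elitism, one-point crossover, single-gene mutation) and all hyperparameters are illustrative assumptions rather than the configuration used in this study, and the three-peak histogram is synthetic.

```python
import random

def between_class_variance(hist, thresholds):
    """OTSU fitness: sum of w_i * (mu_i - mu)^2 over the threshold intervals."""
    total = sum(hist)
    mu = sum(g * c for g, c in enumerate(hist)) / total
    bounds = [0] + [t + 1 for t in sorted(thresholds)] + [len(hist)]
    variance = 0.0
    for lo, hi in zip(bounds[:-1], bounds[1:]):
        count = sum(hist[lo:hi])
        if count == 0:
            continue                          # empty interval contributes nothing
        w = count / total
        mu_i = sum(g * hist[g] for g in range(lo, hi)) / count
        variance += w * (mu_i - mu) ** 2
    return variance

def ga_multi_otsu(hist, n_thresholds, pop_size=30, generations=60,
                  mutation_rate=0.2, seed=0):
    """Search for thresholds that maximise the interclass variance."""
    rng = random.Random(seed)
    levels = range(1, len(hist) - 1)

    def fitness(chromosome):
        return between_class_variance(hist, chromosome)

    population = [sorted(rng.sample(levels, n_thresholds))
                  for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        parents = population[:pop_size // 2]          # truncation selection
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_thresholds) if n_thresholds > 1 else 0
            child = a[:cut] + b[cut:]                 # one-point crossover
            if rng.random() < mutation_rate:          # mutate one threshold
                child[rng.randrange(n_thresholds)] = rng.choice(levels)
            children.append(sorted(child))
        population = parents + children
    return max(population, key=fitness)

# Synthetic histogram with three well-separated gray-level clusters.
hist = [0] * 256
for start in (40, 120, 200):
    for g in range(start, start + 21):
        hist[g] = 100
best = ga_multi_otsu(hist, n_thresholds=2)
print(best, between_class_variance(hist, best))
```

Because the parents are carried over unchanged into the next generation, the best fitness in the population never decreases from one generation to the next.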

As shown in Fig. 8, the damage edge of a brick missing area is taken as an example. The single-threshold image segmentation method, with a recommended threshold of 142, fails to completely separate the bricks on the ground in the background from the bricks on the wall, resulting in considerable noise (Destination 1). In contrast, the multi-threshold method adaptively splits the image into multiple threshold intervals according to the gray values on both sides of the brick missing edge. The ground bricks’ gray value reaches a minimum of 132, so selecting a threshold greater than 132 for binary segmentation fails to properly separate the bricks in this region. Consequently, a threshold interval of 127 to 132 is adopted for segmentation, with results shown in Destination 2. The selected threshold interval clearly separates the two sides of the brick missing edge, demonstrating the advantage of multi-threshold image segmentation for guiding the subsequent binary segmentation.

The upper-right part of the figure (Destination 1) displays the result of the single-threshold segmentation method, while the lower-right part (Destination 2) presents the final result of the multi-threshold segmentation method. The dashed box contains the exemplary output of multi-threshold segmentation method, where the seven recommended thresholds (110, 127, 132, 140, 170, 176, 223) are represented by distinct colors and their positions are marked on both the histogram and segmented image.

Perspective transformation

UAV-acquired images often suffer from perspective distortion, as the camera typically captures the scene at an oblique angle rather than perpendicular to the surface. Therefore, perspective correction is necessary for accurate positioning. This study employs the target method, selecting objects with known geometric properties in the image, i.e., the plane of the defensive fort where the brick missing is located, as a target to guide the transformation of the brick missing edges23.

The transformed target point is denoted as \({[X,Y,Z]}^{T}\), the original point before transformation as \({[x,y,1]}^{{\rm{T}}}\), and the perspective transformation matrix as M. The perspective transformation formula can be expressed as Eq. (3):
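With the notation above, and assuming the usual homogeneous form of a planar perspective transformation, Eq. (3) can be written as follows. The element names \({m}_{ij}\) are introduced here for illustration; the rectified plane coordinates are obtained as \((X/Z,\,Y/Z)\).

```latex
\begin{bmatrix} X \\ Y \\ Z \end{bmatrix}
= M \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}
= \begin{bmatrix}
    m_{11} & m_{12} & m_{13} \\
    m_{21} & m_{22} & m_{23} \\
    m_{31} & m_{32} & m_{33}
  \end{bmatrix}
  \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}
\tag{3}
```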

From the coordinates of the four corner points on the defensive fort, \({[X,Y,Z]}^{T}\) and \({[x,y,1]}^{T}\) can be derived. This allows for the calculation of the perspective transformation matrix M, which can then be used to transform the target points. The process of image transformation is shown in Fig. 9.

The top-left section shows the original morphology of the target defensive wall in the original image. After applying the perspective transformation matrix (M) indicated by the red arrow, the bottom-right section displays the rectified morphology of the target defensive fort wall in the destination image with perspective distortion removed.
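A minimal sketch of this step is given below, assuming that \(M\) is a 3 × 3 matrix normalized so that its last entry is 1 and that the rectified plane coordinates are obtained as \(X/Z\) and \(Y/Z\). The corner coordinates and function names are hypothetical and serve only as an illustration; the eight unknowns of \(M\) are solved from the four corner correspondences by plain Gaussian elimination.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - s) / aug[r][r]
    return x


def perspective_matrix(src, dst):
    """Return the 3x3 matrix M (last entry fixed to 1) mapping src to dst."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def transform_point(m, point):
    """Apply [X, Y, Z]^T = M [x, y, 1]^T and return (X / Z, Y / Z)."""
    x, y = point
    X = m[0][0] * x + m[0][1] * y + m[0][2]
    Y = m[1][0] * x + m[1][1] * y + m[1][2]
    Z = m[2][0] * x + m[2][1] * y + m[2][2]
    return X / Z, Y / Z


# Hypothetical pixel corners of a wall plane and its rectified rectangle.
SRC = [(100, 50), (400, 80), (420, 300), (90, 330)]
DST = [(0, 0), (300, 0), (300, 200), (0, 200)]

M = perspective_matrix(SRC, DST)
edge_point = transform_point(M, (250, 180))  # any edge pixel on the plane
```

Once \(M\) is known, the same `transform_point` call can be applied to every extracted edge pixel to place it in the rectified plane.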

Edge curve aggregation

Given the extensive spatial range of brick-missing areas and the complexity of the background, it is often impossible to clearly split background and edges in one image. Accordingly, it is necessary to extract the edges in multiple images and then aggregate the extracted edge curves to get the complete brick missing location. The goal of the aggregation operation is to maximize the area of the brick missing to improve the reliability of the structural analysis.

Figure 10 shows the curve aggregation process for three curves, assuming that the brick missing area lies to the left of each curve. The upper-left corner (\(A\)) and the lower-left corner (\(B\)) of the plane define the initial brick missing area (\({S}_{0}\)). The curve points (\({p}_{i}\)) are then traversed from top to bottom; at each step, the point (\({p}_{i+1}\)) that yields the largest increment in the brick missing area (\({S}_{i}\)) is selected, finally giving the aggregated curve with the largest brick missing area (\({S}_{f}\)).

The upper black rectangle represents the target wall surface. Within the dashed box, the blue, yellow, and green curves correspond to three partial edge curves of the target brick missing area awaiting aggregation. The dark blue dashed line guides to the magnified aggregation process below. The lower solid black line represents the enlarged dashed box area, while the black dotted line indicates omitted brick missing regions.
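The aggregation idea can be sketched under simplifying assumptions: each partial curve is stored as a mapping from image row to the horizontal position of the edge, the rows have unit height, and the brick missing area lies to the left of the edge. Under these assumptions, selecting the point with the largest area increment in each row reduces to keeping the rightmost edge position. The data and function names below are hypothetical.

```python
def aggregate_curves(curves):
    """Merge partial edge curves, keeping in each row the edge position
    that gives the largest brick missing area to its left."""
    merged = {}
    for curve in curves:
        for row, x in curve.items():
            if row not in merged or x > merged[row]:
                merged[row] = x
    return dict(sorted(merged.items()))


def missing_area(curve):
    """Area to the left of the curve, assuming rows of unit height."""
    return sum(curve.values())


# Three hypothetical partial curves, each as {row: edge x-position}.
curves = [
    {0: 5, 1: 6, 2: 4},
    {1: 7, 2: 3, 3: 8},
    {3: 2, 4: 9},
]

aggregated = aggregate_curves(curves)
area = missing_area(aggregated)
```

The aggregated curve covers every row seen in at least one image, which matches the stated goal of maximizing the brick missing area used in the structural analysis.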

Results

The experiments in this study were implemented in the PyTorch framework. The detection network was trained on a computer equipped with an Intel(R) Core(TM) i5-12400F (CPU) and NVIDIA GeForce RTX 3090 Ti (GPU).

Evaluation metrics for Improved-YOLOv5n

To evaluate the performance of the Improved-YOLOv5n object detection model, Precision (\(P\)), Recall (\(R\)), and mean Average Precision at IoU (Intersection over Union) with threshold of 0.5 (\(mAP@0.5\)) are employed as evaluation metrics.

Precision (\(P\)) is the proportion of detections predicted as positive that are correct, as shown in Eq. (4):

\(P=\frac{{T}_{P}}{{T}_{P}+{F}_{P}}\) (4)

Recall (\(R\)) is the proportion of target objects that are detected, as shown in Eq. (5):

\(R=\frac{{T}_{P}}{{T}_{P}+{F}_{N}}\) (5)

where \({T}_{P}\) denotes the number of positive examples correctly categorized as positive, \({F}_{N}\) denotes the number of positive examples incorrectly categorized as negative, \({T}_{N}\) denotes the number of correctly categorized negative examples, and \({F}_{P}\) denotes the number of negative examples incorrectly categorized as positive.
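As a small worked illustration of these two definitions, the sketch below computes Precision and Recall from counts of true positives, false positives, and false negatives. The counts are invented for the example and are not results from this study.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from detection counts.

    tp: detections matching a ground-truth object
    fp: detections matching no ground-truth object
    fn: ground-truth objects that were not detected
    """
    precision = tp / (tp + fp) if (tp + fp) > 0 else 0.0
    recall = tp / (tp + fn) if (tp + fn) > 0 else 0.0
    return precision, recall


# Hypothetical counts: 8 correct detections, 2 false alarms, 4 misses.
p, r = precision_recall(tp=8, fp=2, fn=4)
```

Here 8 of the 10 detections are correct, so the precision is 0.8, while 8 of the 12 targets are found, so the recall is about 0.667.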

The IoU threshold affects the values of Precision and Recall: with a threshold of 0.5, a detection whose IoU with the ground truth is greater than 0.5 is counted as a positive case, and otherwise as a negative case. The \(mAP@0.5\) (mean average precision) is the area under the Precision-Recall (\(P\)-\(R\)) curve averaged over all detection categories at a threshold of 0.5, as shown in Eqs. (6) and (7), where \(C\) stands for the number of categories. In this paper, there is only one category, “brick missing”, so the value of \(C\) is 1. Since \(P\) and \(R\) trade off against each other, \(mAP\) balances the two and reflects the detection performance of the model more objectively.

\(AP={\int }_{0}^{1}P(R)dR\) (6)

\(mAP=\frac{1}{C}\mathop{\sum }\limits_{i=1}^{C}A{P}_{i}\) (7)
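The computation of average precision for a single category can be sketched as follows. This is an illustrative simplification, not the evaluation code used in this study: it assumes boxes in (x1, y1, x2, y2) form, greedy matching of detections to unmatched ground-truth boxes in order of confidence, and an all-point interpolation of the \(P\)-\(R\) curve. The example boxes are invented.

```python
def iou(a, b):
    """Intersection over Union of two boxes (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def average_precision(detections, ground_truths, iou_thr=0.5):
    """Area under the P-R curve for one category.

    detections: list of (confidence, box); ground_truths: list of boxes.
    """
    if not ground_truths:
        return 0.0
    matched, flags = set(), []
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best, best_j = 0.0, None
        for j, gt in enumerate(ground_truths):
            if j not in matched and iou(box, gt) > best:
                best, best_j = iou(box, gt), j
        is_tp = best_j is not None and best > iou_thr
        if is_tp:
            matched.add(best_j)
        flags.append(is_tp)
    precisions, recalls, tp = [], [], 0
    for k, flag in enumerate(flags, start=1):
        tp += flag
        precisions.append(tp / k)
        recalls.append(tp / len(ground_truths))
    # Make precision non-increasing in recall before integrating.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_r = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_r) * p
        prev_r = r
    return ap


# Invented example: two ground-truth boxes and three detections.
gts = [(0, 0, 10, 10), (20, 20, 30, 30)]
dets = [(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 31))]
ap = average_precision(dets, gts)
m_ap = ap / 1  # C = 1, so mAP equals the single-category AP
```

Because there is only one category here, the mean over categories is the AP itself.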

Damage detecting results

For training parameter configurations, the adaptive moment estimation (Adam) optimizer was utilized for parameter optimization. The initial learning rate was set to 0.001, with a momentum factor of 0.937 and a weight decay coefficient of 0.0005. A batch size of 4 was employed, and the training epochs were determined by implementing an early stopping mechanism.
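The reported settings can be collected in a small configuration object together with an early stopping helper, as sketched below. The patience value and the choice of monitored metric are hypothetical, since neither is reported here. In YOLOv5-style training code the momentum value is commonly passed to Adam as its first beta coefficient; whether that applies to this study is likewise an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainConfig:
    optimizer: str = "Adam"
    initial_lr: float = 0.001
    momentum: float = 0.937       # reported momentum factor
    weight_decay: float = 0.0005
    batch_size: int = 4
    patience: int = 50            # hypothetical; not reported in the paper


class EarlyStopping:
    """Stop when the monitored metric has not improved for `patience` epochs."""

    def __init__(self, patience):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, metric):
        """Record one epoch's metric; return True if training should stop."""
        if metric > self.best:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


config = TrainConfig()
stopper = EarlyStopping(patience=2)
# Invented validation scores, used only to show the stopping behaviour.
decisions = [stopper.step(v) for v in [0.50, 0.60, 0.55, 0.58]]
```

In this toy run the metric peaks at the second epoch, and training stops after two further epochs without improvement.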

Both the training and validation losses gradually converge as the training epochs increase, reflecting the fitting and generalization capabilities of the model. The smoothed loss curve is shown in Fig. 11a.

a Training convergence diagram, b the PR curve.

The model’s \(P\) is 0.917, \(R\) is 0.77, and \(mAP@0.5\) reaches 0.745 on the dataset of ancient city wall damages. The PR curve is shown in Fig. 11b.

Figure 12 shows the detection results of the model on the defensive forts used for the test, with defensive forts 1 to 6 ordered by increasing damage. The red boxes indicate the locations of brick missing detected by Improved-YOLOv5n. As can be seen, the algorithm proposed in this paper demonstrates good regional recognition performance, overcoming challenges such as the small size of target objects in UAV images, complex natural backgrounds (e.g., changes in tree colors due to seasonal variations), and the varying degrees of damage that result in different fort shapes. It successfully identifies brick missing damages.

a Defensive fort 1, b Defensive fort 2, c Defensive fort 3, d Defensive fort 4, e Defensive fort 5 and trails, f Defensive fort 6.

However, the recognition results also include omissions and misdetections. Figure 13 shows the defensive forts with missed or false detections, where the green box represents the ground truth label and the red box indicates the algorithm’s detected result. Due to the irregular morphology of brick missing damages, a rectangular box cannot accurately delimit their extent during human labeling. Consequently, the algorithm cannot completely cover extensive brick missing, resulting in partial detections. As shown in Fig. 13a, the algorithm recognizes the presence and location of the brick missing, but not its full extent. In addition, where defensive forts have suffered severe damage and collapse, the brightness difference between the brick missing areas and the collapsed parts is small, making them difficult to distinguish. As a result, collapsed areas are often misidentified as brick missing, leading to false positives, as shown in Fig. 13b.

a Omission, b Misdetection.

To further illustrate the advantages of the improved model for detecting ancient city wall damages, comparative experiments were conducted under identical hyper-parameter settings against Faster R-CNN, YOLOv5x, YOLOv5s, YOLOv5n, and YOLOv8s. The \(mAP@0.5\) values during training are compared in Fig. 14. Firstly, when the number of training epochs is less than 50, the detection accuracy of Improved-YOLOv5n is lower than that of YOLOv5x, which benefits from a larger number of parameters and a deeper network structure. However, because knowledge distillation allows it to learn more feature knowledge, Improved-YOLOv5n achieves higher detection accuracy after 250 epochs. The lower final accuracy of YOLOv5x can be attributed to its larger number of parameters, which is not well matched to the scale of the brick missing dataset, so its advantages are not fully realized. Secondly, although YOLOv8s reaches the highest detection accuracy within the first 100 epochs, it converges rapidly and improves little afterwards, and its performance in the later stages is inferior to that of Improved-YOLOv5n. Thirdly, the detection accuracy of Improved-YOLOv5n is also higher than that of the two-stage algorithm Faster R-CNN. In the early stages, YOLO’s lower computational complexity and faster forward propagation allow it to update weights quickly and converge faster. Although the accuracy of Faster R-CNN improves steadily in the later stages, YOLOv5 still maintains a high detection ability for small targets in this study’s UAV perspective dataset, as it integrates various data augmentation strategies.

Each marked polyline represents the improvement in mAP@0.5 of individual neural networks as training epochs advance.

Model size is evaluated by computational cost and number of parameters. Computational cost is measured in giga floating-point operations (GFLOPs). The number of parameters counts the trainable parameters of the model and reflects its complexity. Detection speed is assessed in Frames Per Second (FPS), the number of images that the model can process per second; a higher FPS value signifies faster detection. The results in Table 1 show that Improved-YOLOv5n retains the small model size and fast inference speed of YOLOv5n while achieving the best detection accuracy. These findings demonstrate that Improved-YOLOv5n offers superior overall performance in the automatic detection of brick missing damages on ancient city walls.
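A generic way to estimate FPS is to time repeated inference calls after a short warm-up, as in the sketch below. This is not the benchmarking procedure used for Table 1: the `infer` callable and the input list are placeholders, and on a GPU the device would also need to be synchronized before reading the clock.

```python
import time


def measure_fps(infer, inputs, warmup=2):
    """Estimate frames per second of `infer` over `inputs`.

    A few warm-up calls are made first so that one-off start-up costs
    are excluded from the timed loop.
    """
    for x in inputs[:warmup]:
        infer(x)
    start = time.perf_counter()
    for x in inputs:
        infer(x)
    elapsed = max(time.perf_counter() - start, 1e-12)  # guard against zero
    return len(inputs) / elapsed


# Placeholder "model": any callable that processes one image.
def dummy_infer(image):
    return sum(image)


frames = [[i] * 1000 for i in range(50)]
fps = measure_fps(dummy_infer, frames)
```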

Ablation studies

To clarify the role of each improvement component, ablation studies were performed, with results shown in Table 2. Two modified versions of Improved-YOLOv5n were tested: “Model 1”, which excludes the SEAttention module, and “Model 2”, which omits knowledge distillation. The accuracy of “Model 1” is lower than that of Improved-YOLOv5n, showing that SEAttention improves the detection of small targets in UAV views and thus contributes to detection accuracy. The accuracy of “Model 2” is also lower than that of Improved-YOLOv5n, showing that knowledge distillation brings the detection accuracy of YOLOv5n closer to that of the larger model and improves the learning ability of the model.
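To make the knowledge distillation component more concrete, the sketch below computes a temperature-softened distillation loss in the general form introduced by Hinton et al.30. The exact loss used for Improved-YOLOv5n is not restated in this section, so the loss form, the temperature value, and the example logits are all assumptions made for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened outputs, scaled by T squared."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


# Invented logits for a teacher and a smaller student network.
teacher = [2.0, 0.5, -1.0]
student = [1.0, 0.8, -0.5]
loss = distillation_loss(student, teacher)
```

The loss is zero when the student reproduces the teacher's softened outputs and grows as the two distributions diverge, which is the signal that pulls the smaller network toward the larger one.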

Brick missing localizing results

To demonstrate the effectiveness of the proposed brick missing localization method, we take Defensive Fort 3 in Fig. 12 as an example. One specific damage region, referred to as brick missing 0, located on a side wall, was selected for detailed analysis. Its position in the plane is illustrated in Fig. 15, showing the left side of this brick missing edge constitutes the wall loss area, while the right side represents the residual wall structure after damage.

The black line delineates the complete wall boundary, the red markings indicate the positions of missing bricks on the wall surface, and warm gray identifies the remaining wall areas.

Figure 16 shows the localization process of the example brick missing 0, which includes both image segmentation and image measurement. The image segmentation process is as follows. The multi-threshold segmentation algorithm based on the genetic algorithm is first applied to the original image to obtain the recommended segmentation threshold interval, i.e., 127 ~ 132. The threshold is then set to 130 for binary segmentation, which yields a clearer brick missing boundary, with the ground bricks on the left segmented into white and the wall on the right segmented into black. However, some of the background bricks are still segmented into black, resulting in noise, and there are white voids within the right-hand wall section. Morphological opening and closing operations effectively eliminate these artifacts, yielding a clear brick missing edge.

The upper blue dashed box contains the image segmentation process, while the lower red dashed box encloses the image measurement process.

The Canny operator is then used to detect the edges of the clear boundary and obtain the coordinates of the boundary position. The image measurement process is as follows: the plane in which the brick missing is located is identified, the coordinates of its four corner points are obtained and used to compute the perspective transformation matrix, and this matrix is applied to the corner points and edge positions to obtain the position of the brick missing in the plane. Since the edge of a brick missing is only partially visible in any one image, the position curves of the edge of brick missing 0 from multiple images are combined by the edge curve aggregation operation, finally giving the complete edge that maximizes the area of brick missing 0.
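The morphological clean-up in the segmentation process can be illustrated with a minimal sketch of binary opening and closing. It assumes a 3 × 3 square structuring element and a binary image stored as nested lists of 0 and 1, with neighborhoods clipped at the image border; the structuring element actually used in this study is not stated here, and the function names are hypothetical.

```python
def _filter3x3(img, reducer):
    """Apply min or max over each pixel's 3x3 neighborhood (clipped at borders)."""
    h, w = len(img), len(img[0])
    return [
        [
            reducer(
                img[j][i]
                for j in range(max(0, y - 1), min(h, y + 2))
                for i in range(max(0, x - 1), min(w, x + 2))
            )
            for x in range(w)
        ]
        for y in range(h)
    ]


def erode(img):
    return _filter3x3(img, min)


def dilate(img):
    return _filter3x3(img, max)


def opening(img):
    """Erosion then dilation: removes small isolated white specks."""
    return dilate(erode(img))


def closing(img):
    """Dilation then erosion: fills small black holes in white regions."""
    return erode(dilate(img))


# A white speck on a black background, and a black hole in a white region.
speck = [[1 if (y, x) == (2, 2) else 0 for x in range(5)] for y in range(5)]
hole = [[0 if (y, x) == (2, 2) else 1 for x in range(5)] for y in range(5)]

speck_removed = opening(speck)
hole_filled = closing(hole)
```

Which operation removes which artifact depends on the polarity of the binary image, so the order and targets should be checked against the segmented result before reuse.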

Similarly, the other four brick missing damages of Defensive Fort 3, which caused direct loss of parts of the wall or the top, were localized. Additionally, the damage locations can serve as the basis for subsequent structural modeling. Since the defensive forts of the Great Wall are relatively regular in shape and have a rectangular layout, it is relatively straightforward to obtain the length, width, and height of the structure through field surveying. After a rectangular prism is constructed from the length, width, and height, the model, excluding the base, consists of five planes. The acquired brick missing location data are applied to the corresponding planes, so that the damaged regions can be removed and the damaged fort model generated. Figure 17 presents the localization results and the damage-assisted modeling outcomes, which demonstrate that the localization method can effectively locate brick missing damages with irregular edges and significantly assist in constructing the physical model.

The top four images show the actual views of the defensive fort, with black lines outlining the wall surfaces, red markings indicating brick missing regions, and warm gray representing the remaining areas after excluding brick missing sections. The middle section presents four schematic diagrams of brick missing locations identified by the proposed localization algorithm. The bottom section presents the 3D model constructed in modelling software, assisted by the derived brick missing positions.

Discussion

This paper proposes a two-phase method for the automatic detection and localization of brick missing damages on ancient city walls, combining an object detection algorithm with image processing procedures. Phase 1 automatically detects brick missing damages, while Phase 2 localizes the edges of each brick missing damage in its plane. The key conclusions are:

(1) A large-view dataset of brick missing damages on ancient city walls, containing 246 defensive forts and 1197 images, has been established for the Great Wall. Unlike surface damage datasets, its images include whole defensive forts, trails and natural backgrounds, thus offering strong support for routine structural health monitoring of large-scale ancient city walls from a UAV perspective.

(2) An Improved-YOLOv5n object detection network for automatic damage detection of ancient city walls is proposed. To address the small target objects and complex backgrounds of aerial images, SEAttention is introduced to make the network focus on small targets. In addition, knowledge distillation is adopted to bring the detection performance of YOLOv5n, which has fewer layers, closer to that of YOLOv5s, enabling the model to improve detection accuracy while maintaining detection speed. The mAP@0.5 of the Improved-YOLOv5n network reaches 74.5% and its FPS reaches 768.1, outperforming Faster R-CNN, YOLOv5x, YOLOv5s, YOLOv5n and YOLOv8s in terms of overall performance, which can support automatic damage detection in the daily monitoring of ancient city walls.

(3) A brick missing localization method is proposed, which obtains the position of the brick missing in the plane where it is located. The method comprises multi-threshold image segmentation based on a genetic algorithm, binary segmentation, opening and closing operations, Canny edge detection, perspective transformation and edge curve aggregation. It enables accurate segmentation and localization in UAV images with complex backgrounds, and can guide subsequent structural modeling of defensive forts.

The automatic detection results on test defensive forts indicate that Improved-YOLOv5n can detect brick missing damages on defensive forts with varying levels of damage under complex background conditions. Comparison experiments also demonstrate that Improved-YOLOv5n has better overall performance in both detection accuracy and speed. However, there are some limitations in this part of the study.

Firstly, due to insufficient data and the large variation in damage levels across different defensive forts, the number of images for each fort differs, which results in overlap between the forts used for training and those used for testing in the dataset. This overlap may reduce the algorithm’s generalization ability. Secondly, the algorithm has omissions and misdetections. For extensive brick missing damage requiring multiple rectangular boxes to cover, some areas are missed in the detection box. For severely collapsed defensive forts, the completely collapsed remnant bricks are easily misdetected as brick missing damage. To address these limitations, further improvements are required.

Regarding the data volume issue, future work will focus on the less-represented Yanqing section of the Great Wall, conducting more in-depth field research to collect additional images of defensive forts, thus expanding the dataset. This will help reduce the spatial overlap between training and testing defensive forts and enhance the algorithm’s generalization capability. Moreover, algorithm improvements will consider the adoption of more advanced data augmentation strategies and bounding box optimization techniques to enhance detection accuracy.

The algorithm proposed in this paper is based on computer vision and image processing procedures, which has low cost and can be migrated to the daily protection and monitoring of other types of architectural heritage. For example, in the case of detecting cracks in timber-structured heritage buildings, by collecting images of heritage buildings with timber structure cracks, performing annotation and targeted algorithm improvements, the model can learn the typical damage features of timber structure cracks, thus enabling automatic damage detection and early warning. However, research has shown that the model’s detection performance is highly dependent on the amount of data. When transferring the algorithm to other scenarios, it is essential to collect a large amount of image data during the early stages to ensure the model’s generalization ability. Additionally, due to the lack of depth information in the images, future work should incorporate more advanced equipment, such as oblique UAVs and LiDAR, to perform 3D quantification of the damage to masonry heritage.

Data availability

Data will be made available on request.

Code availability

Code will be made available on request.

References

Wang, H. Y. et al. Quantitative evaluation method of structural safety for the Great Wall hollow defensive forts under gravity loads. Int. J. Archit. Herit. 17, 1736–1756 (2023).

He, D. et al. Spatial distribution characteristics of the Ming Great Wall heritages in Beijing. J. Arid Land Resour. Environ. 36, 184–191 (2022).

Shen, Y. et al. Characteristics of the site selection and the layout of the Great Wall of the Ming dynasty from a military perspective: Xiaohekou section as an example. Front. Archit. Res. 9, 541–555 (2020).

Li, X. J. et al. Research on the damage of the Great Wall of Ming Dynasty in Beijing by remote sensing. Sci. China Ser. E-Technol. Sci. 51, 195–202 (2008).

Mishra, M. & Lourenco, P. B. Artificial intelligence-assisted visual inspection for cultural heritage: state-of-the-art review. J. Cult. Herit. 66, 536–550 (2024).

Chen, W. et al. A review of object detection: datasets, performance evaluation, architecture, applications and current trends. Multimed. Tools Appl. https://doi.org/10.1007/s11042-023-17949-4 (2024).

Chen, H., Chen, Z. & Yu, H. Enhanced YOLOv5: an efficient road object detection method. Sensors 23, https://doi.org/10.3390/s23208355 (2023).

Jiang, Y. Q., Pang, D. D. & Li, C. D. A deep learning approach for fast detection and classification of concrete damage. Autom. Constr. 128, https://doi.org/10.1016/j.autcon.2021.103785 (2021).

Kang, S. H. et al. Enhanced non-maximum suppression for the detection of steel surface defects. Mathematics 11, https://doi.org/10.3390/math11183898 (2023).

Sun, Y. et al. DSMFFNet: depthwise separable multiscale feature fusion network for bridge detection in very high resolution satellite images. Geocarto Int. https://doi.org/10.1080/10106049.2022.2146 (2022).

Redmon, J. et al. You only look once: unified, real-time object detection. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 779–788 (IEEE Comp Soc; Comp Vis Fdn, 2016).

Liu, W. et al. SSD: single shot multibox detector. In Proceedings of the 14th European Conference on Computer Vision (ECCV), Vol. 9905, 21–37 Lecture Notes in Computer Science (LNCS) (Springer, 2016).

Wang, N. N. et al. Automatic damage detection of historic masonry buildings based on mobile deep learning. Autom. Constr. 103, 53–66 (2019).

Ali, L., Khan, W. & Chaiyasarn, K. Damage detection and localization in masonry structure using faster region convolutional networks. Int. J. Geomate 17, 98–105 (2019).

Haciefendioglu, K., Altunisik, A. C. & Abdioglu, T. Deep learning-based automated detection of cracks in historical masonry structures. Buildings 13, https://doi.org/10.3390/buildings13123113 (2023).

Wang, N. N. et al. Novel system for rapid investigation and damage detection in cultural heritage conservation based on deep learning. J. Infrastruct. Syst. 25, https://doi.org/10.1061/(asce)is.1943-555x.0000499 (2019).

Li, Q. et al. Non-destructive testing research on the surface damage faced by the Shanhaiguan Great Wall based on machine learning. Front. Earth Sci. 11, https://doi.org/10.3389/feart.2023.1225585 (2023).

Soleymani, A., Jahangir, H. & Nehdi, M. L. Damage detection and monitoring in heritage masonry structures: systematic review. Constr. Build. Mater. 397, https://doi.org/10.1016/j.conbuildmat.2023.132402 (2023).

Tewari, P. & Surbhi, P. Evaluation of some recent Image segmentation methods. In Proceedings of the 3rd International Conference on Computing for Sustainable Global Development, 3741–3747 (INDIACom, 2016).

Deng, J. H. et al. Review on computer vision-based crack detection and quantification methodologies for civil structures. Constr. Build. Mater. 356, https://doi.org/10.1016/j.conbuildmat.2022.129238 (2022).

Talab, A. M. A. et al. Detection crack in image using Otsu method and multiple filtering in image processing techniques. OPTIK 127, 1030–1033 (2016).

Ding, Y. W. et al. Innovative computer vision-based full-scale timber element cracks detection, stitching, and quantification. Struct. Health Monit. https://doi.org/10.1177/14759217241258682 (2024).

Xia, Z. Q. et al. Computer vision-based two-step inspection method for spalling and cracks of building facades. J. Build. Struct. 44, 207–216 (2023).

Yang, H. & Xu, X. Y. Intelligent crack extraction based on terrestrial laser scanning measurement. Meas. Control 53, 416–426 (2020).

Xu, X. J. & Zhang, X. N. Crack detection of reinforced concrete bridge using video image. J. Cent. South Univ. 20, 2605–2613 (2013).

Adhikari, R. S., Moselhi, O. & Bagchi, A. Image-based retrieval of concrete crack properties for bridge inspection. Autom. Constr. 39, 180–194 (2014).

Shi, J. S. et al. Comparative study on material properties of ancient fired clay bricks of China. Case Stud. Constr. Mater. 19, e02463 (2023).

Luo, S. et al. Aircraft target detection in remote sensing images based on Improved YOLOv5. IEEE Access 10, 5184–5192 (2022).

Hu, J. et al. Squeeze-and-excitation networks. In Proceedings of the 31st IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 7132–7141 (IEEE, CVF, IEEE Comp Soc, 2018).

Hinton, G., Vinyals, O. & Dean, J. Distilling the knowledge in a neural network. arXiv https://doi.org/10.48550/arXiv.1503.02531 (2015).

Shu, C. Y. et al. Channel-wise knowledge distillation for dense prediction. arXiv https://doi.org/10.48550/arXiv.2011.13256 (2021).

Zhang, Z. Y. Flexible camera calibration by viewing a plane from unknown orientations. In The Proceedings of the Seventh IEEE International Conference 666–673 (IEEE, 1999).

Jones, R. & Svalbe, I. The design of morphological filters using multiple structuring elements. 2. open(close) and close(open). Pattern Recognit. Lett. 13, 175–181 (1992).

Canny, J. A computational approach to edge-detection. IEEE Trans. Pattern Anal. Mach. Intell. 8, 679–698 (1986).

Singh, S. et al. Improving the segmentation of digital images by using a modified Otsu’s between-class variance. Multimed. Tools Appl. 82, 40701–40743 (2023).

Peng, Z. B. et al. Multi-threshold image segmentation of 2D OTSU inland ships based on improved genetic algorithm. PLoS ONE 18, https://doi.org/10.1371/journal.pone.0290750 (2023).

Acknowledgements

This research was supported by the National Natural Science Foundation of China (Grant No. 52078111).

Author information

Contributions

S. Y. Ma conducted data processing, code writing, experiments, and manuscript drafting. Q. Chun reviewed and revised the manuscript and provided funding. C. W. Zhang contributed to the conceptualization of the research and revised the manuscript. D. Q. Li identified the research problem. F. Zhai provided the original data. Y. Yuan revised the manuscript and offered suggestions for language improvement. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Ma, S., Chun, Q., Zhang, C. et al. Automatic damage detection and localization of ancient city walls—a case study of the Great Wall. npj Herit. Sci. 13, 174 (2025). https://doi.org/10.1038/s40494-025-01749-0

DOI: https://doi.org/10.1038/s40494-025-01749-0