Abstract

In this paper, we present Opti3D, a unified enhancement–reconstruction framework addressing three key challenges in 3D digitization of cultural artifacts: low-light conditions, non-invasive data acquisition, and high computational complexity. First, we designed a low-light image enhancement network integrating residual attention and multi-scale illumination enhancement modules, which significantly improves image contrast and detail fidelity while suppressing noise artifacts. Second, we introduced an end-to-end pipeline built on the existing DUSt3R approach. This approach reduces matching complexity from \(O({N}^{2})\) to \(O(N)\), enabling high-precision global geometric reconstruction without precise calibration or reliance on ordered image sequences. Finally, we introduced a pixel gradient scaling strategy into the 3D Gaussian Splatting algorithm to achieve ultra-photorealistic rendering of fine details. Extensive experiments demonstrate that Opti3D outperforms current mainstream methods, offering an efficient, scalable solution for heritage preservation.

Similar content being viewed by others

Introduction

Digital preservation of cultural heritage serves as a cornerstone for perpetuating human civilization’s memory, particularly for fragile and movable artifacts. High-precision 3D reconstruction technology provides a non-destructive alternative for archeological research, virtual restoration, and public exhibition, effectively mitigating irreversible damage1. However, traditional 3D reconstruction methods face three critical technical bottlenecks due to stringent data acquisition constraints.

First, many cultural artifacts are situated in environments with low or uneven lighting—such as dimly lit museum galleries or cave temples—which significantly compromise image quality and signal-to-noise ratio (SNR)2. Insufficient illumination often results in blurred or noisy images, leading to failures in feature matching and the loss of fine texture details during 3D reconstruction. In the absence of adequate lighting, passive photogrammetric systems struggle to capture the subtle surface geometries of artifacts reliably, thereby degrading the performance of multi-view stereo reconstruction.

Second, the digitization of cultural heritage must adhere to strict non-invasive protocols, imposing substantial constraints on data acquisition. Artifacts cannot be physically modified (e.g., by attaching markers) or relocated unnecessarily; in many cases, such as with cave murals, they must remain in situ. Conventional Structure-from-Motion (SfM) and Multi-View Stereo (MVS) pipelines typically require precise camera calibration and highly controlled acquisition setups3, which are incompatible with conservation practices. In particular, photogrammetric surveys often avoid using strong artificial lighting or external sensors that may damage fragile pigments. Therefore, calibration-free or highly flexible capture methods are essential to comply with heritage preservation standards.

Third, computational complexity remains a critical barrier to scalability4. High-resolution 3D reconstruction typically requires processing hundreds of images, and classical incremental SfM involves computationally intensive procedures—such as exhaustive pairwise feature matching and global bundle adjustment. Despite recent algorithmic advances, the processing of large-scale image sets remains time-consuming and memory-intensive. This bottleneck becomes particularly acute when digitizing extensive collections or capturing artifacts from multiple viewpoints.

Recent advances in deep learning offer promising solutions to these challenges. In low-light enhancement, Retinex theory-driven decomposition-enhancement frameworks have improved visual quality by decoupling illumination and reflectance components5. Yet, existing methods often overlook the physical reflectance properties of cultural materials, causing over-enhancement artifacts such as glaze highlight distortion and pigment color shifts6. For 3D reconstruction, implicit representation methods like Neural Radiance Fields (NeRF)7 excel in novel view synthesis but struggle to recover fine surface details (e.g., pottery cracks, inscription engravings) with voxel-based rendering, while requiring extensive calibrated views. Emerging calibration-free algorithms (e.g., DUSt3R8) and explicit Gaussian representations (3D Gaussian Splatting, 3DGS9) show potential in generic scenarios, but their applicability to heritage digitization remains systematically unverified.

To situate our contributions in context, we briefly review prior work in low-light image enhancement, SfM and 3D scene representation.

Early Retinex theory-based methods relied on variational optimization to separate illumination and reflectance components but struggled with complex light-material interactions due to handcrafted priors10. With the rise of deep learning, data-driven enhancement approaches have become mainstream. Retinex-Net11 adopted a two-stage decomposition-enhancement framework using encoder-decoder networks. However, its decomposition lacks physically interpretable constraints, leading to high-frequency detail loss in reflectance maps. End-to-end networks like Zero-DCE12 map low-light to normal-light images via learnable curves, achieving efficiency but failing to address non-uniform illumination on artifact surfaces (e.g., localized shadows and highlights). MAT-Net13 improved enhancement through material classification but required manual labeling and neglected heritage-specific materials (e.g., glazes, textiles). Recent studies integrate physical constraints into learning frameworks. PALR-RTV14 balances denoising and detail retention via local low-rank priors and relative total variation, yet induces block discontinuity artifacts. Xu et al.15 employed structure-aware GANs but generated structural artifacts in extremely dark regions with high computational costs. Wu et al.16 reformulated Retinex decomposition as implicit prior regularization but lacked explicit decoupling constraints under extreme low light. Zhao et al.17 suppressed noise via low-frequency feature fusion but suffered from cross-sensor generalization and texture oversmoothing. Zou et al.18 proposed wavelet-based decomposition (LFSSBlock/HFEBlock), but their high-frequency recovery depended heavily on low-frequency reconstruction accuracy and lacked computational optimization. While these methods excel in specific aspects, they fail to balance precision and robustness.

Current 3D reconstruction methods primarily follow three paradigms: hardware-driven systems, implicit radiance fields (NeRF), and explicit radiance fields (3DGS). Given the prohibitive costs and invasiveness of hardware solutions, we focus on radiation field-based approaches.

Implicit Radiance Fields: NeRF’s success has spurred numerous improvements. Mip-NeRF36019 achieves state-of-the-art accuracy in complex scenes via conical sampling and scene parameterization but requires dozens of training hours. GARF20 jointly optimizes camera poses and scenes with Gaussian-activated MLPs, yet slow convergence limits practicality. Acceleration efforts focus on spatial data structures—e.g., sparse voxel grids21; alternative encoding schemes—codebooks22 and hash tables23; and MLP capacity adjustment. Instant-NGP24 accelerates computation via hash grids and lightweight MLPs, while Plenoxels25 replaces neural networks with sparse voxel grids. Despite faster rendering, these methods sacrifice empty-space representation and image quality under acceleration.

Explicit Radiance Fields: 3D Gaussian Splatting models scenes as differentiable Gaussian ellipsoids, enabling real-time rendering and geometric optimization. While surpassing NeRF in detail reconstruction and training efficiency, 3DGS performance heavily depends on SfM initialization quality. Motion blur or low-texture inputs cause Gaussian particle overfitting or under-convergence.

Given implicit methods’ memory and efficiency bottlenecks, our framework adopts explicit radiance fields to balance computational economy and heritage digitization demands.

SfM aims to jointly estimate camera poses and 3D scene structure from multi view images26, forming a core component of 3DGS. Classical SfM pipelines (e.g., COLMAP27) involve feature extraction, pairwise matching, and incremental reconstruction, but suffer from: (1) outlier accumulation in RANSAC based solvers28 under low overlap; (2) prohibitive computational and memory costs for large image sets29; and (3) degeneracies under pure rotational motion or weak textures, causing bundle adjustment to converge to poor local minima30. Recent innovations attempt to reimagine SfM: VGGSfM31 employs a fully differentiable network for direct pose–structure regression; detector free SfM32 replaces keypoint detection and matching with learned modules; SfM TTR33 uses wide baseline sparse clouds for test time self supervision; RTSfM34 integrates vocabulary tree retrieval with hierarchical weighted local BA for real time online SfM; and planar marker assisted SfM35 uses planar markers and rotation consistency checks to filter ambiguous views, enhancing robustness in complex scenes. While these methods offer incremental improvements, they largely retain pairwise matching or multi stage workflows, failing to break the bottleneck. Attempts to accelerate retrieval with global descriptors—AP GeM36 and NetVLAD37—introduce coarse granularity and expensive keypoint matching. DUSt3R leverages a single Transformer pass to estimate two view geometry and camera parameters, stitching local reconstructions via gradient descent, but remains computationally prohibitive for large image collections.

To address these gaps, we propose a synergistic enhancement-reconstruction framework for cultural relics, with three key contributions:

-

(1)

We propose a deep learning-based low-light image enhancement method that significantly improves the quality of cultural relic images, providing more reliable input data for 3D reconstruction.

-

(2)

We adapt and extend the DUSt3R into a complete SfM pipeline (DUSt3R-SfM), an end‑to‑end pipeline that automatically constructs a global geometry model without manual calibration, simplifying traditional reconstruction workflows.

-

(3)

A pixel gradient scaling strategy integrated into 3DGS, dynamically adjusting Gaussian kernel coverage to optimize depth-varying detail rendering and artifact suppression.

Methods

Overview

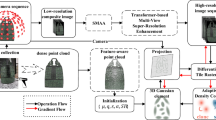

This study focuses on 3D reconstruction of cultural relics under low-light and non-uniform illumination environments, proposing a novel solution named Opti3D. The algorithm takes multi-view image sets of static scenes as input. The technical framework achieves breakthroughs through three key designs:

First, a low-light enhancement network with texture-preserving characteristics is constructed to improve image visibility while effectively suppressing noise. Second, an end-to-end DUSt3R-SfM module is adopted to overcome the limitations of traditional SfM techniques, enabling joint efficient prediction of point cloud data and camera parameters. Finally, an optimized 3DGS algorithm with pixel gradient dynamic scaling is introduced to adaptively regulate Gaussian primitive distributions for ultra-photorealistic rendering. The complete Opti3D architecture is illustrated in Fig. 1.

The pipeline comprises three main stages: low-light image enhancement, intermediate DUSt3R-SfM reconstruction, and final 3D Gaussian Splatting rendering.

Improved Retinex-Net

The Retinex theoretical model can be expressed by mathematical Eq. (1). In Eq. (1), \(S(x,y)\) represents the image signal to be observed or received by the camera; \(I(x,y)\) represents the illumination component of the ambient light; \(R(x,y)\) represents the reflection component of the target object carrying the image detail information.

Figure 2 illustrates the Retinex-Net model, which exhibits inherent limitations in feature representation and multi-scale information processing:

-

(1)

The traditional decomposition network employs equal-weight feature learning, making it difficult to effectively distinguish noise from valid features.

-

(2)

The enhancement network tends to generate localized over-/under-exposure when handling complex illumination distributions.

The upper part of the figure illustrates the overall Retinex-Net architecture. We propose enhancements to two core sub-networks within this framework, namely: the Decom-Net with Residual Attention Module, and the Multi-Scale Illumination Enhancement Module. The Decom-Net decomposes the input low-light image into reflectance and illumination components, where the Residual Attention Module improves spatial feature extraction and preserves fine details. The illumination map is then refined through the multi-scale enhancement module, which progressively fuses global and local information to achieve balanced brightness correction. Meanwhile, noise present in the reflectance component is also suppressed during this step. Finally, the adjusted illumination and reflectance are recombined to generate the enhanced output.

To overcome these issues, we introduce a dual improvement strategy—Residual Attention‑Enhanced Feature Decoupling in the decomposition stage and Multi‑Scale Illumination Correction in the enhancement stage.

In Decom-Net, we integrate a Residual Attention Module38 (Fig. 2) to bolster feature extraction. The residual connections preserve deep information flow and mitigate gradient vanishing, while the attention mechanism adaptively focuses on salient image regions, suppressing redundant noise and enhancing fine details. Concretely, the first layer applies residual attention to extract low-level features; subsequent convolutional layers refine these features under residual attention guidance. Intermediate layers employ 3 × 3 convolutions with ReLU activations to capture mid-level representations. Final layers project features into reflection and illumination spaces via 3 × 3 convolutions, with outputs constrained to [0,1] via Sigmoid. This architecture enables Decom-Net to more accurately recover both local details and global structure in low-light images, providing a robust foundation for subsequent enhancement.

In Enhance-Net, we propose a Multi-Scale Illumination Enhancement Module (Fig. 2) to leverage information across different resolutions and avoid detail loss or uneven brightness inherent to single-scale methods. The module consists of three branches:

Branch 1 downsamples the input twice via bilinear interpolation to 1/4 resolution, expanding the receptive field to capture global illumination distributions. The output is a coarse, globally balanced brightness map.

Branch 2 upsamples Branch 1’s output to 1/2 resolution and merges it with the corresponding downsampled input, refining brightness under global context. Though finer details are still developing, this branch ensures overall luminance consistency.

Branch 3 merges Branch 2’s intermediate result with the original full-resolution input via a four-dimensional invariant convolution layer, recovering fine details lost during sampling.

By hierarchically fusing global and local information, our multi-scale strategy achieves balanced illumination across the entire image while preserving intricate textures, avoiding the over-exposure or detail erasure typical of mono-scale approaches.

DUSt3R-SfM

Traditional SfM pipelines suffer from multi-stage error accumulation, quadratic matching complexity \(O({N}^{2})\), and failures under pure rotational motion. While DUSt3R provides end-to-end pose estimation and point-cloud reconstruction for image pairs, its pairwise processing still incurs \(O({N}^{2})\) complexity on large scenes and cannot ensure global geometric consistency. To overcome these limitations, we introduce DUSt3R-SfM, which leverages Aggregated Selective Match Kernel (ASMK)39 retrieval and Farthest Point Sampling (FPS)40 to build a sparse scene graph, reducing complexity to linear \(O(N)\) and significantly enhancing the accuracy of the initial point cloud. Although often mentioned together, ASMK and FPS serve fundamentally different roles. ASMK is a feature aggregation and image retrieval method that encodes local descriptors into compact global representations by selectively aggregating residuals. It enables efficient and robust view selection from large-scale, unstructured image sets. In contrast, FPS is a spatial subsampling algorithm used to select a uniformly distributed subset of points from a 3D point cloud, helping to reduce redundancy while preserving geometric coverage. In our pipeline, ASMK is used for image-pair selection in feature space, while FPS operates in 3D space to sparsify reconstruction outputs. The two components complement each other in different stages of the pipeline.

DUSt3R is formalized as

where \(Enc(I)\to F\) is a Siamese Vision Transformer (ViT) encoder mapping an image \(I\) to a feature map \(F\), and \(Dec({F}^{n},{F}^{m})\) is a Siamese decoder regressing a per-pixel depth map \(X\), local feature descriptors \(D\), and their confidence scores.

As shown in Fig. 3, DUSt3R-SfM begins by treating the frozen features from the pre‑trained DUSt3R encoder as local descriptors and using the ASMK39 to rapidly construct a co-visibility graph \(G=(V,E)\). Here, each vertex \(I\in V\) denotes an image, and each edge \(e=(n,m)\in E\) links potentially overlapping image pairs. For every edge, DUSt3R executes pairwise local 3D reconstruction and matching. A final global alignment step fuses these local reconstructions into a unified point cloud in world coordinates, followed by estimation of camera poses, intrinsics, and depth maps.

Given an unconstrained image collection, we first construct a sparse view graph using efficient image retrieval based on features extracted by a frozen DUSt3R encoder. Then, for each selected image pair (edge), we perform local 3D reconstruction and feature matching using the same frozen DUSt3R decoder. Finally, a global optimization step integrates all local reconstructions into a globally consistent sparse point cloud.

ASMK generates the similarity matrix \(S\in {[0,1]}^{N\times N}\) without extra spatial verification via three steps:

-

(1)

Feature whitening: normalize the bag-of-features FFF from the encoder;

-

(2)

Codebook quantization: assign features to a k-means–derived codebook;

-

(3)

Residual aggregation & binarization: aggregate and binarize per-codebook residuals into a high-dimensional sparse binary representation.

To significantly reduce the number of image pairs to be processed while maintaining \(G\) as a single connected component, we employ FPS: first, select \({N}_{a}\) keyframes based on \(S\) to form a densely connected core; then, connect each remaining image to its nearest keyframe and its top \(k\) neighbors. The resulting graph has \(O({N}_{a}^{2}+(k+1)N)=O(N)\) edges—far fewer than the \(O({N}^{2})\) of exhaustive matching.

Improved 3DGS

The reconstruction quality of 3DGS is highly dependent on the quality of the initial point cloud. Existing adaptive density control schemes typically use the mean gradient magnitude across observable views to assess point-cloud density. However, in under-modeled regions, sparse SfM point clouds often initialize Gaussian ellipsoids with excessively large axes, producing blur artifacts and spike-like distortions.

To address this defect, we introduce a pixel-gradient scaling strategy. When computing the mean gradient for the i Gaussian, we weight each view’s contribution by the number of pixels that the Gaussian covers, instead of using a simple average. This approach dynamically accounts for the number of pixels each Gaussian covers in every view, thereby effectively encouraging the growth of large‑scale Gaussians. Conversely, for small‑scale Gaussians—whose pixel coverage varies minimally across views—the weighted averaging has little effect on their gradients and incurs no additional memory overhead.

Concretely, for Gaussian i at view k, let its projections in camera, image, and NDC coordinates be \(\left({\mu }_{c,x}^{i,k},{\mu }_{c,y}^{i,k},{\mu }_{c,z}^{i,k}\right),\left({\mu }_{p,x}^{i,k},{\mu }_{p,y}^{i,k},{\mu }_{p,z}^{i,k}\right),\left({\mu }_{ndc,x}^{i,k},{\mu }_{ndc,y}^{i,k},{\mu }_{ndc,z}^{i,k}\right)\). Its 2D covariance matrix \({\sum }_{2D}^{i,k}=\left(\begin{array}{cc}\begin{array}{l}{a}^{i,k}\\ {b}^{i,k}\end{array} & \begin{array}{l}{b}^{i,k}\\ {c}^{i,k}\end{array}\end{array}\right)\) defines a support radius \({R}_{k}^{i}\) covering 99% of the probability mass:

When the image width is W and the height is H, the viewpoint k will participate in the calculation only when Gaussian i satisfies the conditions of Eq. (4) at the same time:

In standard 3DGS, a Gaussian splits or clones based on the mean NDC-coordinate gradient magnitude across its participating views, using threshold \({\tau }_{pos}\) = 0.0002. Specifically, if

where \({M}^{i}\) is the number of views contributing to Gaussian \(i\) over 100 adaptive-density iterations and \({L}_{k}\) is the gradient magnitude at view \(k\), the Gaussian is split into two.

We assign an NDC coordinate gradient weight based on the number of pixels it covers to each Gaussian under each viewpoint. Since the pixels near the projection center contribute significantly to the gradient, when the projection center of a large-scale Gaussian is at the edge of the screen, even though the viewpoint is involved in the calculation, its NDC coordinate gradient is still small, further lowering the average gradient and inhibiting the splitting or cloning of the Gaussian. Therefore, we use the average gradient calculation weighted by the number of pixels, as shown in Eq. (6), to more reasonably drive the splitting and cloning of the Gaussian.

Where \({m}_{k}^{i}\) denotes the number of pixels covered by Gaussian i in view k, and \(\frac{\partial {L}_{k}}{\partial {\mu }_{ndc,x}^{i,k}}\), \(\frac{\partial {L}_{k}}{\partial {\mu }_{ndc,y}^{i,k}}\) represent the gradient of Gaussian i in the x and y directions of NDC space at viewpoint k, respectively.

In addition, floating objects near the camera occupy more pixels on the screen due to their closer distance—the number of pixels is inversely proportional to the square of the distance—which makes them obtain too high a gradient priority in the NDC space, making them prone to misoptimization and generating artifacts. To this end, we introduce a spatial scaling factor based on the squared distance to adaptively scale the gradient to balance the optimization weights of different depth regions. Finally, the Gaussian split and clone decision is given by Eq. (7).

Where \(f(i,k)\) is the scaling factor, whose value is determined by the radius, and radius \(r\) is calculated using Eq.(8):

Where \({C}_{i}\) represents the coordinates of the camera at view \(j\) in the world coordinate system, and there are \(N\) viewpoints in total. We scale the gradient of the NDC coordinates of each Gaussian \(i\) at the view \(k\), where the scaling factor \(f(i,k)\) is calculated by Eq.(9):

Among them, \({\mu }_{c,z}^{i,k}\) is the \(z\) coordinate of Gaussian\(i\) in the camera coordinate system under view \(k\), indicating the depth of this Gaussian from the viewpoint, \({\gamma }_{depth}\) is a manually set hyperparameter of 0.37, and the \(clip\) function constrains the final value to the range of [0,1].

Simplifying the formula, \({p}_{i}\) represents the number of pixels participating in the Gaussian calculation from this viewpoint, \({g}_{i}\) represents the gradient of the NDC coordinates of the Gaussian, and \(f\) represents the scaling factor. The final determination of whether the Gaussian undergoes “splitting” or “cloning” can be intuitively represented by Fig. 4.

The left figure shows the standard 3DGS strategy, where splitting is driven by unweighted gradient magnitudes in normalized device coordinate (NDC) space. This often leads to premature splitting of small Gaussians and under-optimization of large ones. The right figure visualizes our improved method, which weights NDC gradient contributions by the number of pixels each Gaussian covers per view. This results in more balanced splitting decisions, especially for large Gaussians projected at screen peripheries.

Results

Experimental setup

All components of our framework are implemented in Python using the PyTorch library. All experiments are run on a high-performance workstation equipped with an Intel® Xeon® Platinum 8352 V CPU (2.10 GHz) and an NVIDIA GeForce RTX 4090 GPU (24 GB).

To validate the effectiveness of our enhanced Retinex-Net, we benchmark against two widely adopted low-light image pairs: the LOL dataset (Wei et al.) and the SID dataset (Chen et al.). Decom-Net uses 3 × 3 convolutions with 64 feature channels throughout (except output convolutions, which map 64 → 3 channels for reflectance and illumination). Enhance-Net’s multiscale convolutions similarly use 3 × 3 kernels with 64 channels. The 4D invariant convolution in Enhance-Net has a 3 × 3 × 3 × 3 kernel (64 input, 64 output). All ReLU activations operate on 64-channel feature maps. Each module is trained independently with a batch size of 8. Training patches of size 128×128 are randomly cropped from paired low-/normal-illumination images. The initial learning rate is set to 2 × 10⁻⁴ and decayed to 1 × 10⁻⁶ via a cosine annealing schedule41. We evaluate using peak signal-to-noise ratio (PSNR), structural similarity index (SSIM), and learned perceptual image patch similarity (LPIPS)—where higher PSNR/SSIM and lower LPIPS indicate superior enhancement quality.

We also assess DUSt3R-SfM reconstruction performance. Due to the absence of dedicated datasets for artifact reconstruction, we curate a Cultural-Relics dataset by filtering and merging ShapeNet, ETH3D, Mip-NeRF360, and Palace Museum collections—removing categories with large geometric discrepancies (e.g., firearms, yachts) and retaining artifacts such as porcelain, ancient furniture, and handicrafts. To assess generalization in complex outdoor scenes, we also employ the Tanks & Temples dataset, which features challenging textures (e.g., trains, tanks, sculptures) captured in real-world environments.

We use the publicly available DUSt3R checkpoint. For sparse scene graph construction, we set the number of anchor images \({N}_{a}\) = 20 and nearest-neighbor non-anchor images \(k\) = 10. Global optimization is performed with the Adam optimizer42, a learning rate of 0.08, 400 iterations, and a weight decay of 1.5, using a cosine learning rate scheduler (no additional decay). We report:

Average Translation Error (ATE): The normalized mean error obtained by aligning estimated poses to ground truth via FlowMap43 and Procrustes analysis44.

Relative Rotation/Translation Accuracy (RRA@τ / RTA@τ): Averaged per image pair with angular threshold τ45.

Registration Success Rate (Reg.): Percentage of successfully registered images, averaged over all scenes.

We further evaluate our enhanced 3DGS. The same Cultural-Relics and Tanks & Temples datasets are used to evaluate our enhanced 3DGS. We again employ PSNR, SSIM, and LPIPS as performance metrics. Simultaneously, DOVER + +46 is employed to comprehensively evaluate the reconstruction outcomes. DOVER + + is a full-reference video quality evaluator focusing on esthetic vs. technical quality. Training is fixed at 30,000 iterations, mirroring the original 3DGS loss configuration, with the SSIM loss weight set to 0.2. Optimizer parameters are: feature learning rate 0.0025, opacity learning rate 0.05, scale learning rate 0.005, and rotation learning rate 0.001.

Results and evaluation

First, we quantitatively compare our enhanced Retinex‑Net against state‑of‑the‑art low‑light enhancement methods on the LOL and SID datasets. Results are shown in Table 1.

LOL: Our approach attains an SSIM of 0.846, markedly higher than Retinexformer’s 0.821. Although its PSNR of 24.33 dB is slightly below Retinexformer’s 25.17 dB, it still ranks second best. Moreover, the LPIPS is reduced to 1.37—surpassing all comparison methods—indicating superior structural fidelity and perceptual quality. Notably, this performance is achieved with only 21.38 G FLOPs and 3.89 M parameters—representing a favorable balance between accuracy and efficiency.

SID: Our method establishes the best performance with an SSIM of 0.692 and a PSNR of 24.96 dB, corresponding to improvements of 11.1% and 11.6% over the next‑best method (Retinexformer), respectively. While its LPIPS score remains on par with Retinexformer, the combination of high SSIM and PSNR confirms its strong perceptual consistency under extreme low-light conditions.

On the LOL dataset, Opti3D exhibits slightly lower PSNR than Retinexformer, but achieves higher SSIM and lower LPIPS, indicating better structural preservation and perceptual quality. While PSNR measures pixel-wise accuracy, SSIM captures luminance and structural consistency, and LPIPS is more aligned with human perceptual similarity. For downstream SfM tasks, structural and textural consistency are more critical than absolute brightness fidelity. Although Retinexformer improves PSNR, it may introduce over-smoothing or lose fine details. In contrast, Opti3D retains sharper textures and more coherent luminance transitions, resulting in better multi-view consistency and more reliable 3D reconstruction.

In terms of complexity, our model operates with fewer FLOPs than Retinex-Net (136.47 → 21.38 G), and significantly fewer parameters than KinD (3.89 → 8.02 M), while outperforming both in nearly all evaluation metrics. Compared to Retinexformer, which is more compact (15.57 G FLOPs), our model introduces modest computational overhead, yet yields substantially higher SSIM and comparable LPIPS, indicating enhanced structural awareness with small cost.

Importantly, our algorithm excels at balancing pixel‑level accuracy (SSIM/PSNR) with perceptual consistency (LPIPS), particularly under the complex illumination conditions of the SID dataset, thus demonstrating robust enhancement of real low‑light images. Figure 5 provides a visual comparison: existing methods typically exhibit color distortion (KinD), overexposure or residual noise (Retinex‑Net), and blurring or unnatural artifacts (Retinexformer). By contrast, our method significantly improves visibility in low‑contrast and dark regions, effectively suppresses noise without introducing new artifacts, and faithfully preserves color authenticity.

Our method effectively enhances the visibility and preserves the color.

We further conduct an ablation study on SID (Table 2), isolating the Residual Attention Module (RAM) and the Multi-Scale Light Enhancement Module (MSLEM):

When solely activating RAM, SSIM/PSNR improved by 4.9%/8.9% respectively, while LPIPS decreased by 9.2%, demonstrating that residual attention effectively enhances feature discriminability and perceptual quality. With MSLEM independently enabled, SSIM/PSNR increased by 12.1%/21.2%, validating the critical role of multi-scale fusion in global illumination balancing and local detail preservation. The joint deployment of both modules achieved peak performance across all metrics, revealing the complementary nature of RAM and MSLEM in “structural fidelity” and “visual authenticity,” ultimately leading to a performance breakthrough.

To quantify the contribution of each branch in our MSLEM, we conducted a component-wise ablation study, as shown in Table 3.

Activating only Branch 1, which performs global illumination normalization at 1/4 resolution, yields modest improvements in SSIM ( + 0.012) and PSNR ( + 0.76 dB), confirming its role in correcting coarse luminance imbalance.

Introducing Branch 2 alongside Branch 1 enhances global-local integration and leads to further gains ( + 0.032 SSIM and +1.67 dB PSNR over Branch 1), demonstrating the value of mid-level refinement under global context.

Alternatively, combining Branch 1 and Branch 3 prioritizes fine-detail restoration and achieves lower LPIPS (1.45) among partial configurations, indicating perceptual benefit from high-frequency fusion even in the absence of mid-scale features.

The full configuration delivers the strongest results across all metrics (SSIM: 0.638, PSNR: 22.67, LPIPS: 1.43), validating that hierarchical fusion from coarse to fine scales is critical for achieving both structural fidelity and perceptual consistency in extreme low-light conditions.

These results justify the inclusion of all three branches in the final design, showing that each component contributes complementary benefits while collectively enabling superior enhancement performance.

Second, we evaluated the impact of image overlap on SfM output quality using the Cultural-Relics and Tanks & Temples datasets (high-overlap reconstruction scenarios). By uniformly subsampling original images, we constructed four subsets containing 50, 100, 200 frames and the full dataset. Experimental results demonstrate that DUSt3R-SfM maintains stable performance across all subset sizes and significantly outperforms COLMAP, FlowMap, and VGGSfM. Notably, the latter two methods exhibit severe performance degradation in small-scale scenarios and fail catastrophically when processing large-scale image collections.

As shown in Table 4, COLMAP exhibits clear quadratic scaling—from 310.5 s at 50 views to 14,338.3 s at full scale—due to exhaustive pairwise feature matching and global bundle adjustment. In contrast, our method grows nearly linearly with input size, completing the full scene in 2254.2 s, with consistent 100% registration and the lowest ATE across all scales. This confirms that our ASMK-based sparse view graph avoids unnecessary matching and dramatically improves scalability.

To further validate performance on unconstrained image collections, we tested selected scenes from ETH-3D (a photogrammetric dataset with 13 scenes, ≤76 images per scene). Table 5 presents detailed per-scene comparisons of RRA@5/RTA@5 metrics, showing DUSt3R-SfM’s substantial average superiority over all competitors. Notably, FlowMap fails to process non-sequential imagery, while COLMAP underperforms due to its dependency on high overlap—highlighting traditional methods’ limitations for truly unconstrained data.

DUSt3R-SfM achieves linear complexity and global consistency through sparse scene graphs and local Transformer-based reconstruction, without relying on sequential frames or high overlap rates. Its superior performance across both constrained subsets and unstructured collections validates exceptional generalizability and scalability. Table 5.

Finally, we conducted comprehensive evaluations of our enhanced 3DGS algorithm against InstantNGP, Mip-NeRF360, vanilla 3DGS, and SAGS47 on Cultural-Relics and Tanks & Temples datasets. Metrics included SSIM, PSNR, LPIPS, training time (Train), and GPU memory usage (Mem).

Our improved 3DGS achieves dual breakthroughs in reconstruction precision (0.832 SSIM, 28.68 PSNR on Cultural-Relics) while maintaining competitive LPIPS (0.223). For Tanks & Temples, it attains 0.874 SSIM and 0.183 LPIPS, excelling in detail preservation despite a marginally lower PSNR (22.97 vs. SAGS’s 23.89). Table 6.

We treated the sequence of rendered views from our reconstruction as a “user-generated video” and computed its DOVER + + score. As shown in Table 7, our method achieves the highest DOVER + + scores on both the CulturalRelics (0.81) and Tanks&Temples (0.84) datasets, indicating superior visual coherence and esthetic fidelity. These results are consistent with our improvements in photometric and geometric fidelity, and further validate the advantage of our view-consistent pipeline.

Qualitative results are presented in Fig. 6. As shown, our method more accurately reconstructs fine geometric details; for instance, intricate surface textures and sharp artifact boundaries—such as inscriptions and relief patterns—are rendered with greater clarity compared to competing methods. In contrast, baseline approaches exhibit noticeable blurring and geometric artifacts, including missing carvings and excessively smoothed surfaces. The output generated by our method shows a closer visual alignment with the ground truth. These qualitative findings are consistent with our quantitative improvements, underscoring that the integration of global structural priors and pixel-level refinements in Opti3D yields reconstructions that are superior both perceptually and numerically.

We show comparisons of ours to previous methods and the corresponding ground truth images from test views. Differences in quality are highlighted by insets.

To quantify the impact of different design choices, we conducted ablation experiments on the Cultural-Relics dataset, with results summarized in Table 8.

When DUSt3R‑SfM is enabled alone, SSIM improves by 4.8%, PSNR by 2.6%, LPIPS decreases by 6.6% and DOVER + + improves by 5.0%, indicating that the global geometric reconstruction module significantly enhances structural fidelity of the scene.

When Pixel Gradient Scaling is enabled alone, SSIM and PSNR increase by 1.0% and 2.1%, respectively, while LPIPS decreases by 4.5% and DOVER + + rises to 0.76, validating the necessity of pixel-level dynamic gradient weighting for detail preservation.

When both components are combined, SSIM and PSNR improve by 7.4% and 4.9% over the baseline, LPIPS decreases by 8.2%, and DOVER + + reaches 0.81. This superlinear gain highlights the complementary nature of structural and pixel-level optimizations: the global framework provides semantic anchors, while pixel modulation reinforces local consistency, together forming a closed-loop optimization of “structure-constrained, detail-refined” enhancement.

It is worth noting that when Pixel Gradient Scaling is used alone, the improvements in SSIM/PSNR ( + 1.0%/ + 2.1%) and DOVER + + ( + 3.0%) are significantly lower than those achieved by DUSt3R‑SfM alone ( + 4.8%/ + 2.6% /+5.0%), indicating that in the absence of reliable 3D structural priors, pixel-level adjustments alone offer limited benefits for overall reconstruction quality. In contrast, the superlinear improvements observed when both modules are used (SSIM improves by 2.0%/5.0% over each individual module, respectively, and DOVER + + increases by 3–5 points) underscore the necessity of cross-level architectural design—where global structure modeling sets the upper bound for reconstruction accuracy, and pixel-level optimization serves to approach that bound.

Discussion

This paper proposes the Opti3D framework, which addresses three major challenges in the digital reconstruction of cultural artifacts—low-light conditions, non-intrusive data acquisition, and high computational complexity—through a unified optimization strategy.

First, we design a low-light image enhancement network that integrates residual attention and multi-scale illumination enhancement, effectively improving image contrast and detail fidelity. Second, we introduce DUSt3R SfM, which leverages pretrained DUSt3R encoder features, ASMK-based retrieval, and farthest point sampling to reduce the complexity of SfM matching from \(O({N}^{2})\) to \(O(N)\), enabling global geometric reconstruction without precise calibration. Finally, we incorporate a Pixel Gradient Scaling strategy into the 3DGS pipeline, which dynamically reweights NDC gradients to significantly suppress blurring and needle-like artifacts, achieving photorealistic detail reconstruction.

Extensive experiments on datasets including LOL/SID, Cultural Relics, Tanks & Temples, and ETH 3D demonstrate that Opti3D achieves better performance in both image enhancement and 3D reconstruction tasks, validating the accuracy, robustness, and scalability of the proposed method.

While our matching framework demonstrates linear complexity and strong efficiency on datasets with hundreds to low-thousands of images, scalability to large-scale collections (e.g., >10k images) remains an open challenge. In practice, cultural heritage digitization often operates within manageable scales due to capture constraints and preservation protocols. Nevertheless, large-scale SfM introduces not only increased matching costs but also significant memory and global optimization overhead (e.g., bundle adjustment). Although our ASMK-based sparse scene graph construction avoids exhaustive pairwise comparisons and supports scalable matching, we acknowledge that memory footprint and wall-clock runtime under such large-scale regimes have not been evaluated in this work. Future extensions may include hierarchical or distributed matching strategies to address these limits, especially for use cases beyond cultural heritage domains.

Future work will focus on three directions: (1) incorporating multispectral data and physical reflectance models to better handle glazed surfaces and pigments; (2) developing lightweight networks for real-time deployment on edge devices; and (3) exploring the fusion of multi-source data such as laser scans and drone imagery to support large-scale cultural heritage digitization. We believe these extensions will further accelerate the real-world application of digital preservation technologies for cultural artifacts.

Data availability

The datasets used and/or analysed during the current research are available from the corresponding author on reasonable request.

References

Wen, K. Literature review of digital protection of material cultural heritage. Environ. Soc. Gov. 1, 9–15 (2024).

Munkberg, J. et al. Extracting triangular 3D models, materials, and lighting from images. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 8280–8290 (2022).

Gao, M. Research on cultural relic restoration and digital presentation based on 3D reconstruction MVS algorithm: A case study of Mogao Grottoes’ Cave 285. In Proc. Int. Conf. Image, Algorithms and Artificial Intelligence (ICIAAI) 744–754 (Atlantis Press, 2024).

Niu, Y. et al. Overview of image-based 3D reconstruction technology. J. Eur. Opt. Soc. -Rapid Publ. 20, 18 (2024).

Cai, Y. et al. Retinexformer: One-stage Retinex-based transformer for low-light image enhancement. In Proc. IEEE/CVF Int. Conf. Comput. Vis. 12504–12513 (2023).

Rasheed, M. T., Guo, G., Shi, D., Khan, H. & Cheng, X. An empirical study on Retinex methods for low-light image enhancement. Remote Sens 14, 4608 (2022).

Gao, K. et al. NeRF: Neural radiance field in 3D vision, a comprehensive review. arXiv preprint arXiv:2210.00379 (2022).

Wang, S., Leroy, V., Cabon, Y., Chidlovskii, B. & Revaud, J. DUSt3R: Geometric 3D vision made easy. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 20697–20709 (2024).

Wu, T. et al. Recent advances in 3D Gaussian splatting. Comput. Vis. Media 10, 613–642 (2024).

Parihar, A. S. & Singh, K. A study on Retinex-based method for image enhancement. In Proc. Int. Conf. Inventive Syst. Control (ICISC) 619–624 (IEEE, 2018).

Feng, W., Wu, G., Zhou, S. & Li, X. Low-light image enhancement based on Retinex-Net with color restoration. Appl. Opt. 62, 6577–6584 (2023).

Mi, A., Luo, W., Qiao, Y. & Huo, Z. Rethinking zero-DCE for low-light image enhancement. Neural Process. Lett. 56, 93 (2024).

Zhang, B., Chen, Y., Rong, Y., Xiong, S. & Lu, X. MATNet: A combining multi-attention and transformer network for hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 61, 1–15 (2023).

Du, S. et al. Low-light image enhancement and denoising via dual-constrained Retinex model. Appl. Math. Model. 116, 1–15 (2023).

Xu, X., Wang, R. & Lu, J. Low-light image enhancement via structure modeling and guidance. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 9893–9903 (2023).

Wu, W. et al. URetinexNet: Retinex-based deep unfolding network for low-light image enhancement. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 5891–5900 (2022).

Zhao, M. et al. Low-light stereo image enhancement and de-noising in the low-frequency information enhanced image space. Expert Syst. Appl. 265, 125803 (2025).

Zou, W., Gao, H., Yang, W. & Liu, T. Wave-Mamba: Wavelet state space model for ultra-high-definition low-light image enhancement. In Proc. ACM Int. Conf. Multimedia 1534–1543 (2024).

Barron, J. T., Mildenhall, B., Verbin, D., Srinivasan, P. P. & Hedman, P. Mip-NeRF 360: Unbounded anti-aliased neural radiance fields. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 5470–5479 (2022).

Shi, Y. et al. GARF: Geometry-aware generalized neural radiance field. arXiv preprint arXiv:2212.02280 (2022).

Chen, Z., Funkhouser, T., Hedman, P. & Tagliasacchi, A. MobileNeRF: Exploiting the polygon rasterization pipeline for efficient neural field rendering on mobile architectures. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 16569–16578 (2023).

Takikawa, T. et al. Variable bitrate neural fields. In ACM SIGGRAPH Conf. Proc. 1–9 (2022).

Müller, T., Evans, A., Schied, C. & Keller, A. Instant neural graphics primitives with a multiresolution hash encoding. ACM Trans. Graph. 41, 1–15 (2022).

Chen, Y., Wu, Q., Harandi, M. & Cai, J. How far can we compress Instant-NGP-based NeRF? In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 20321–20330 (2024).

Fridovich-Keil, S. et al. Plenoxels: Radiance fields without neural networks. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 5501–5510 (2022).

Hartley, R. Multiple View Geometry in Computer Vision (Cambridge Univ. Press, 2003).

Sattler, T. et al. Benchmarking 6DoF outdoor visual localization in changing conditions. In Proc. IEEE Conf. Comput. Vis. Pattern Recognit. 8601–8610 (2018).

Fischler, M. A. & Bolles, R. C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Commun. ACM 24, 381–395 (1981).

Campos, C. et al. ORB-SLAM3: An accurate open-source library for visual, visual–inertial, and multimap SLAM. IEEE Trans. Robot. 37, 1874–1890 (2021).

Mildenhall, B. et al. Local light field fusion: Practical view synthesis with prescriptive sampling guidelines. ACM Trans. Graph. 38, 1–14 (2019).

Wang, J., Karaev, N., Rupprecht, C. & Novotny, D. VGGSfM: Visual geometry grounded deep structure-from-motion. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 21686–21697 (2024).

He, X. et al. Detector-free structure-from-motion. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 21594–21603 (2024).

Izquierdo, S. & Civera, J. SfM-TTR: Using structure-from-motion for test-time refinement of single-view depth networks. In Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. 21466–21476 (2023).

Zhao, Y. et al. RTSfM: Real-time structure-from-motion for mosaicing and DSM mapping of sequential aerial images with low overlap. IEEE Trans. Geosci. Remote Sens. 60, 1–15 (2021).

Jia, Z., Rao, Y., Fan, H. & Dong, J. An efficient visual SfM framework using planar markers. IEEE Trans. Instrum. Meas. 72, 1–12 (2023).

Revaud, J., Almazán, J., Rezende, R. S. & Souza, C.R. Learning with average precision: Training image retrieval with a listwise loss. In Proc. IEEE/CVF Int. Conf. Comput. Vis. 5107–5116 (2019).

Arandjelovic, R., Gronat, P., Torii, A., Pajdla, T. & Sivic, J. NetVLAD: CNN architecture for weakly supervised place recognition. In Proc. IEEE Conf. Comput. Vis. Pattern Recognit. 5297–5307 (2016).

Zhu, K. & Wu, J. Residual attention: A simple but effective method for multi-label recognition. In Proc. IEEE/CVF Int. Conf. Comput. Vis. 184–193 (2021).

Tolias, G., Avrithis, Y. & Jégou, H. To aggregate or not to aggregate: Selective match kernels for image search. In Proc. IEEE Int. Conf. Comput. Vis. 1401–1408 (2013).

Eldar, Y., Lindenbaum, M., Porat, M. & Zeevi, Y. The farthest point strategy for progressive image sampling. IEEE Trans. Image Process. 6, 1305–1315 (1997).

Loshchilov, I. & Hutter, F. SGDR. Stochastic gradient descent with warm restarts, (2016). arXiv preprint arXiv:1608.03983.

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014).

Smith, C., Charatan, D., Tewari, A. & Sitzmann, V. FlowMap: High-quality camera poses, intrinsics, and depth via gradient descent. arXiv preprint arXiv:2404.15259 (2024).

Luo, B. & Hancock, E. R. Procrustes alignment with the EM algorithm. In Int. Conf. Comput. Anal. Images Patterns 623–631 (Springer, 1999).

Wang, J., Rupprecht, C. & Novotny, D. PoseDiffusion: Solving pose estimation via diffusion-aided bundle adjustment. In Proc. IEEE/CVF Int. Conf. Comput. Vis. 9773–9783 (2023).

Wu, H. et al. Exploring video quality assessment on user generated contents from aesthetic and technical perspectives. In Proc. IEEE/CVF Int. Conf. Comput. Vis. 20144–20154 (2023).

Song, X. et al. SA-GS: Scale-adaptive Gaussian splatting for training-free anti-aliasing. arXiv preprint arXiv:2403.19615 (2024).

Acknowledgements

This work was supported by the 2024 Open Research Project of the Key Laboratory for Digital Art Creation in Higher Education Institutions of Yunnan Province (Yunnan Arts University) [grant numbers 2024KFKT01].

Author information

Authors and Affiliations

Contributions

Conceptualization, Methodology, Writing—original draft, Writing—review & editing, J.H.; Software, Writing—review & editing, Writing—original draft, Q.J. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

He, J., Jia, Q. Opti3D for low light enhancement and calibration free 3D digitization of cultural relics. npj Herit. Sci. 13, 357 (2025). https://doi.org/10.1038/s40494-025-01944-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s40494-025-01944-z