Abstract

The use of TRECs/KRECs in allogeneic HSCT (alloHSCT) has been limited by the lack of standard technical platforms that allow comparison and validation of results between centers. We quantified absolute TRECs/KRECs in sequential samples collected prospectively (pre-transplant and at 1, 3, 6 and 12 months post-transplant) in 374 alloHSCT procedures for hematological malignancies using LightCycler 480/TREC-KREC-ACTB (Roche Diagnostics). After a prompt decrease following transplant, KRECs recover as early as 3 months post-transplant, whereas TRECs recovery takes up to 1 year (p < 0.001). KRECs are not associated with outcomes. However, higher pre-transplant TRECs are strongly associated with reduced non-relapse mortality (NRM) and increased overall survival (OS), and remain independent in multivariate analysis (HR 0.37, p = 0.001, and HR 0.51, p < 0.001, respectively). In addition, faster TRECs recovery, measured sequentially at 1, 3, 6 and 12 months after alloHSCT, is associated with better OS. Furthermore, landmark analyses showed that early survivors with higher TRECs levels at 6 and 12 months after alloHSCT had significantly better subsequent long-term survival, independent of graft-versus-host disease (GVHD) and other clinical factors in multivariate analysis (HR 0.33, p = 0.016, and HR 0.13, p < 0.001, respectively). TRECs levels pre-transplant and at 6 and 12 months post-transplant provide novel, measurable biomarker data associated with long-term alloHSCT outcomes.

Introduction

Allogeneic hematopoietic stem cell transplantation (alloHSCT) remains a standard of care for patients with hematological malignancies [1, 2]. Long-term survival after alloHSCT has improved over time, primarily through a better understanding of patient and transplant factors associated with outcomes, and through improved management of transplant complications [3, 4]. Beyond clinical factors and improved management, in the current era of personalized medicine there is growing interest in, and need for, biomarkers that are independently associated with alloHSCT recipients’ outcomes and may predict their long-term survival [5, 6].

T cell receptor excision circles (TRECs) and kappa-deleting recombination excision circles (KRECs) are stable circular DNA by-products of T- and B-cell receptor gene rearrangement. Because they are not replicated during mitosis, they are diluted with each cell division, so their frequency reflects T and B lymphocyte neogenesis in primary lymphoid organs rather than peripheral expansion [7,8,9,10,11]. Primary lymphoid reconstitution is essential for successful immune recovery after alloHSCT [12,13,14]. Thus, over the past two decades, many studies have explored the measurement of TRECs and KRECs in alloHSCT [15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31,32]. Unfortunately, most of these studies were carried out in relatively small numbers of patients, with assorted samples collected at different time points and quantified with diverse methodologies, in the absence of standardized TRECs/KRECs technical platforms. This limited comparability among studies, led to inconsistent results, and prevented further development of the role of TRECs/KRECs in alloHSCT.
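
The dilution principle described above can be made concrete with a toy model. The sketch below is purely illustrative and rests on simplifying assumptions (synchronous divisions, no cell death, each non-replicated circle passed intact to one daughter cell); the function name `trec_positive_fraction` is hypothetical and not part of the study.

```python
def trec_positive_fraction(n_divisions: int, starting_cells: int = 1) -> float:
    """Fraction of cells carrying an excision circle after n synchronous divisions.

    Toy model (assumption): every cell divides once per round, no cells die,
    and each excision circle, which is not replicated, ends up in exactly one
    daughter cell. Only the dilution principle is illustrated here.
    """
    if n_divisions < 0:
        raise ValueError("n_divisions must be non-negative")
    total_cells = starting_cells * 2 ** n_divisions
    circle_carrying_cells = starting_cells  # circles are never copied
    return circle_carrying_cells / total_cells


for n in range(5):
    print(f"after {n} divisions: {trec_positive_fraction(n):.4f} of cells are TREC-positive")
```

Under these assumptions the TREC-positive fraction halves with every division, which is why peripheral homeostatic expansion lowers TRECs per cell while new thymic output raises it.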

Our objective here is to measure TRECs/KRECs in a relatively large population of alloHSCT recipients using a standardized commercial platform in prospectively collected sequential samples, and to ascertain their value as biomarkers to predict long-term outcomes after alloHSCT.

Materials, subjects and methods

Study design, population and samples

This retrospective study includes all consecutive alloHSCT procedures in patients with hematological malignancies at Hospital Universitario Puerta de Hierro Majadahonda between 1999 and 2017. All patients signed informed consent forms for treatment, samples and data collection. The study was approved by the IRB (PI 66/19). DNA samples from peripheral blood mononuclear cells were collected prospectively and sequentially pre-transplant and at 1, 3, 6 and 12 months (m) after transplant. Samples were preserved under optimal conditions, and the concentration and quality of DNA were confirmed in all samples prior to inclusion and testing in this study.

TRECs and KRECs measurement

Absolute quantification of TRECs and KRECs was carried out using the Roche Diagnostics LightCycler® 480 and the TREC-KREC-ACTB Protocol (Version 1.0, 25/09/2018; Roche Diagnostics, Barcelona, ES). Briefly, we used 96-well (semi-skirted) plates containing lyophilized primers and probes for simultaneous qPCR detection of the TREC, KREC and ACTB targets. The TRECs primers, together with a specific LNA hydrolysis probe labelled with FAM (530 nm), amplify and detect a 100 bp fragment within a specific locus of the T-lymphocyte excision circles (VDJ genes). For KRECs, a 105 bp fragment within the excision circle locus of the kappa elements of B lymphocytes (VDJ genes) is amplified and detected with an LNA hydrolysis probe labelled with LC610 (610 nm). Finally, as a quality control for genomic DNA and amplification, an 83 bp fragment within a specific locus of the ACTB (β-actin) gene is detected with a set of primers and an LNA hydrolysis probe labelled with the fluorophore LC670 (660 nm, equivalent to Cy5).

Results are expressed in TRECs/KRECs units per reaction (U/R; reaction = 5 µL DNA).
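
In generic qPCR practice, absolute quantities are obtained by interpolating each sample's quantification cycle (Cq) on a standard curve fitted to a dilution series of known quantity. The sketch below illustrates only that generic approach: the calibration actually built into the TREC-KREC-ACTB protocol is not described here, and the dilution series, Cq values and helper names are hypothetical.

```python
def fit_standard_curve(log10_units, cq_values):
    """Least-squares fit of Cq = slope * log10(units) + intercept."""
    n = len(log10_units)
    mean_x = sum(log10_units) / n
    mean_y = sum(cq_values) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_units)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_units, cq_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def units_per_reaction(cq, slope, intercept):
    """Interpolate a sample Cq on the standard curve (units per reaction)."""
    return 10 ** ((cq - intercept) / slope)


def amplification_efficiency(slope):
    """E = 10^(-1/slope) - 1; a slope of about -3.32 corresponds to ~100%."""
    return 10 ** (-1 / slope) - 1


# Hypothetical 10-fold dilution series (log10 units per 5 uL reaction) and Cq values.
standards_log10 = [1, 2, 3, 4, 5]
standards_cq = [33.0, 29.7, 26.4, 23.1, 19.8]

slope, intercept = fit_standard_curve(standards_log10, standards_cq)
print(f"slope={slope:.2f}, efficiency={amplification_efficiency(slope):.1%}")
print(f"sample at Cq 28.05 -> {units_per_reaction(28.05, slope, intercept):.0f} U/R")
```

A fixed reaction volume (5 µL of DNA here) is what makes per-reaction units comparable across samples, provided DNA concentration and quality are controlled, as the ACTB target is intended to do.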

Endpoints and variables

The primary endpoint was alloHSCT recipient overall survival (OS), with a follow-up horizon of five years after transplantation. Non-relapse mortality (NRM), with relapse as a competing event, was included as a secondary endpoint. Landmark analyses were performed at 6 and 12 months after transplant to explore the impact of TRECs/KRECs levels measured at those time points on the long-term OS of early transplant survivors. The study case report form (CRF) included all data on patient and transplant characteristics, complications, outcomes, and TRECs/KRECs levels at the different time points. The original dataset was pre-processed to derive the analysis variables and to identify possible outliers; eighteen TRECs and/or KRECs values above a threshold of 10⁵ U/R were considered outliers and removed.
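
The outlier rule above and the log10 transformation used in the later models can be sketched as follows. This is a minimal illustration assuming values in U/R; the handling of zero or missing values (returned as missing, since log10(0) is undefined) and the helper name `clean_and_log10` are assumptions, not the study's published pre-processing code.

```python
import math

OUTLIER_THRESHOLD_UR = 1e5  # values above 10^5 U/R are treated as outliers


def clean_and_log10(values):
    """Drop outliers (> 10^5 U/R), then log10-transform the remaining values.

    Assumption: only strictly positive values are log-transformed; zero or
    missing measurements are kept as None because log10(0) is undefined.
    Returns (transformed values, number of outliers removed).
    """
    kept, removed = [], 0
    for v in values:
        if v is not None and v > OUTLIER_THRESHOLD_UR:
            removed += 1
            continue
        kept.append(math.log10(v) if v is not None and v > 0 else None)
    return kept, removed


# Hypothetical TRECs values (U/R) at one time point.
trecs_12m = [38.8, 402.0, 5.06, 250000.0, None, 0.0]
log_values, n_removed = clean_and_log10(trecs_12m)
print(n_removed, log_values)
```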

Statistical analyses

Statistical analyses and definitions used in our study are consistent with the EBMT Statistical Guidelines [33]. Only right-censored data were present in this study: all time-to-event variables were available and there were no truncation effects. Primary and secondary outcomes were coded as binary event indicators (No = 0, Yes = 1), paired with time-to-event variables expressed in days after transplantation. Other categorical variables were treated as factors and compared against the outcomes in a preliminary exploratory analysis with the Chi-squared or Fisher’s exact test (provided by the compareGroups R package). For the survival analyses, OS over time was estimated and plotted with Kaplan-Meier curves, and differences between groups were assessed when needed with the Mantel-Cox log-rank test (survival R package). For the secondary outcome NRM, we used the Fine-Gray approach for competing risks analysis [34], applying the cmprsk R package [35]. We also used Cox proportional hazards models to obtain hazard ratios (HR) for all categorical variables of interest, and competing risks models to obtain subdistribution hazard ratios (SHR). Based on the exploratory analyses and the HR and SHR results, we built several Cox regression models using the log10TRECs and log10KRECs values pre-transplant and at 1, 3, 6 and 12 months post-transplant, adjusting for categorical variables that were significant in univariable analyses. From these models, we computed log10TRECs and log10KRECs cut point thresholds to split cases into two categories, high or low TRECs/KRECs values. These thresholds were computed with the cutpointr R package, using options that maximize classification accuracy. This package provides robust methods to identify optimal cut points and allows the direction of the relationship between the class and outcome variables to be specified.
In addition to the statistical results, cut points were evaluated visually with the plot_metric function. All analyses were done with R (The R Project for Statistical Computing, https://www.r-project.org/; version 4.0.5), including the following R packages: compareGroups (v 4.5.1); survival (v 3.2.13); cmprsk (v 2.2-11); cutpointr (v 1.1.1); survminer (v 0.4.9); survMisc (v 0.5.5); ezfun (v 0.1.3); splitstackshape (v 1.4.8) and ggplot2 (v 3.3.5). For conversion between days and months in time-to-event variables, a ratio of 30.5 days per month was used. In patients with more than one alloHSCT, data on prior transplants were censored on the day of the second or third alloHSCT infusion. P-values < 0.05 were considered statistically significant. We assumed non-informative censoring, as well as sufficient follow-up time (60 months) and number of events to ensure adequate statistical power. Other assumptions were assessed for each kind of test, and none were significantly violated.
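
The idea behind an accuracy-maximizing cut point, as requested from cutpointr above, can be illustrated with a brute-force search over candidate thresholds. This is a simplified stand-alone sketch, not cutpointr's implementation (which offers further options such as bootstrapping); the binary outcome coding (1 = alive at the horizon), the direction (higher log10TRECs predicting survival) and the data are hypothetical assumptions.

```python
def best_cutpoint(marker, alive):
    """Return (cutpoint, accuracy) maximizing accuracy of the rule
    'predict survival when marker >= cutpoint'.

    Candidate cut points are the observed marker values. When several
    candidates tie on accuracy, the lowest one is kept.
    """
    n = len(marker)
    best_cut, best_acc = None, -1.0
    for c in sorted(set(marker)):
        true_pos = sum(1 for m, a in zip(marker, alive) if m >= c and a)
        true_neg = sum(1 for m, a in zip(marker, alive) if m < c and not a)
        acc = (true_pos + true_neg) / n
        if acc > best_acc:
            best_cut, best_acc = c, acc
    return best_cut, best_acc


# Hypothetical log10TRECs values and outcome (1 = alive, 0 = dead at the horizon).
log10_trecs = [-1.2, -0.5, 0.1, 0.3, 0.8, 1.1, 1.5, 1.9, 2.4, 2.6]
alive = [0, 0, 0, 1, 0, 1, 1, 1, 1, 1]
cut, acc = best_cutpoint(log10_trecs, alive)
print(f"cut point {cut} (10^{cut} = {10 ** cut:.2f} U/R), accuracy {acc:.2f}")
```

Because such a cut point is chosen to fit the data at hand, it tends to be optimistic in the same sample, which is the reason thresholds derived this way need external validation.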

Results

Patient and transplant characteristics

The study comprises 374 alloHSCT procedures in 350 recipients with hematological malignancies, including 21 second and three third transplants; the median age at transplant was 45 years (range 16–68), and 59.6% of recipients were male. The main indication for alloHSCT was acute leukemia/myelodysplastic syndromes (AL/MDS, 69.8%), and the most frequent donor type was matched siblings (52.9%), with cord blood accounting for nearly a third of transplants (30.5%). Other characteristics are presented in Table 1.

TRECs and KRECs levels and recovery after transplant

We analyzed the kinetics of TRECs and KRECs recovery in alloHSCT recipients pre-transplant and at various time points during the first year after transplant (Fig. 1). TRECs levels dropped from pre-transplant levels during the first 3 months after transplantation, started recovering thereafter, and peaked at 1 year after transplantation: median U/R (interquartile range, IQR) was 20 (5.42–120) pre-transplant, 5.28 (0.04–46.9) at 1 m, 4.72 (0.01–39) at 3 m, 13 (1.61–137) at 6 m, and 38.8 (5.06–402) at 12 m (p < 0.001). KRECs levels recovered faster, rising above baseline values as early as 3 months after transplant and remaining stable thereafter: 5.55 (0.01–266) pre-transplant, 9.74 (0.4–106) at 1 m, 553.5 (28.85–5690) at 3 m, 810 (91.5–4930) at 6 m, and 704.5 (51.6–2820) at 12 m (p < 0.001).
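
Summaries such as "20 (5.42–120)" are medians with 25th–75th percentiles. The minimal sketch below shows how such a summary can be computed; the input values are hypothetical, and the choice of `method="inclusive"` (linear interpolation between order statistics, which matches R's default `quantile()` type 7) is an assumption about how the quartiles were obtained.

```python
import statistics


def median_iqr(values):
    """Return (median, Q1, Q3) of a sample.

    method="inclusive" interpolates linearly between order statistics, which
    corresponds to R's default quantile() (type 7); whether the study used
    this definition is an assumption.
    """
    q1, med, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return med, q1, q3


def format_median_iqr(values, digits=3):
    """Format as 'median (Q1–Q3)' with `digits` significant figures."""
    def fmt(x):
        return f"{x:.{digits}g}"
    med, q1, q3 = median_iqr(values)
    return f"{fmt(med)} ({fmt(q1)}–{fmt(q3)})"


# Hypothetical U/R values from a handful of samples at one time point.
example = [0.04, 1.2, 5.28, 9.7, 46.9, 120.0]
print(format_median_iqr(example))
```

Medians and IQRs suit these data because TRECs/KRECs values are strongly right-skewed, as the large gap between the medians and the upper quartiles shows.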

Fig. 1. Violin plots showing the distribution of TRECs (a) and KRECs (b) values pre-transplant and at the sequential post-transplant time points of the study. Horizontal black lines mark medians and quartiles; red lines mark arithmetic means.

KRECs levels, patient and transplant characteristics, and outcomes

Pre-transplant KRECs levels were associated with age (354 U/R in patients <30 years vs 27.1 U/R in those >60 years; p < 0.001) and with the underlying malignancy, being higher in patients with AL/MDS than in those with multiple myeloma or chronic lymphoproliferative disorders (183 vs 106 vs 44.2 U/R, respectively; p = 0.002) (Table 2). They were not associated with sex, donor type, intensity of the conditioning regimen or other patient or transplant characteristics. Moreover, we did not find any association between KRECs levels, either pre-transplant or at any post-transplant time point, and alloHSCT outcomes.

Pre-transplant TRECs levels and patient and transplant characteristics

Pre-transplant TRECs levels were highest in the youngest patients and lowest in the oldest patient group (26.4 U/R in patients <30 years vs 5.06 U/R in those >60 years; p < 0.001; Table 2). Pre-transplant TRECs levels were also lower in patients with AL/MDS than in those with multiple myeloma or chronic lymphoproliferative disease (8.89 vs 19.9 vs 21.5 U/R, respectively; p = 0.001). In addition, pre-transplant TRECs appeared to be higher in patients who were to receive a matched related transplant than in those receiving other donor types (17.9 vs 7.52 U/R, p < 0.001), but no associations were found between TRECs levels and other characteristics such as sex or type of conditioning.

Pre-transplant TRECs levels and transplant outcome

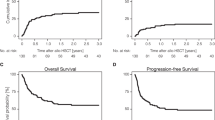

Patients with higher pre-transplant TRECs levels had significantly higher OS in univariate analysis (72.7% vs 38.2% at 2 years and 64.7% vs 35.3% at 5 years, p = 0.005; Table 3 and Fig. 2a), consistent with a lower cumulative incidence of NRM (21.2% vs 44.1%, p = 0.044).
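
OS percentages at fixed horizons, such as those above, are read off Kaplan-Meier curves. The study used the R survival package; the stand-alone sketch below only illustrates the product-limit calculation itself on hypothetical follow-up data (times in months, event = 1 for death, 0 for censoring), with deaths counted before censorings at tied times, the usual convention.

```python
def kaplan_meier(times, events):
    """Product-limit estimate; returns (time, survival) steps at death times."""
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    steps = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        # Everyone with follow-up >= t is at risk at t, including those censored at t.
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            survival *= 1 - deaths / n_at_risk
            steps.append((t, survival))
        n_at_risk -= removed
    return steps


def survival_at(steps, horizon):
    """Survival probability at `horizon` from the right-continuous step function."""
    s = 1.0
    for t, value in steps:
        if t > horizon:
            break
        s = value
    return s


# Hypothetical follow-up in months (event 1 = death, 0 = censored).
times = [3, 5, 5, 8, 12, 12, 20, 24, 30, 60]
events = [1, 1, 0, 1, 0, 1, 0, 1, 0, 0]
steps = kaplan_meier(times, events)
print(f"OS at 24 months: {survival_at(steps, 24):.3f}")
```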

Fig. 2. Kaplan-Meier overall survival curves according to pre-transplant log10TRECs values above (yellow) or below (red) the threshold cut point (thrTRECs ≥1.4), compared with the Mantel-Cox log-rank test and shown separately for the whole population (a), for transplants from matched related donors (b), and for other donor types, including cord blood, haploidentical and unrelated donors (c). Yellow and red shaded bands are 95% confidence intervals.

This association between higher pre-transplant TRECs levels and higher OS was also confirmed when analyzed separately for transplants from matched related donors (81.7% vs 55.2% at 2 years and 69.2% vs 49.9% at 5 years, p = 0.015; Fig. 2b) and from other donor types, including cord blood, haploidentical and unrelated donors (63.6% vs 37.9% at 2 years and 55.4% vs 32.8% at 5 years, p = 0.008; Fig. 2c).
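
Group comparisons such as these rely on the Mantel-Cox log-rank test. The sketch below computes the two-group statistic and its chi-square (1 df) p-value from first principles; it is illustrative only, uses hypothetical data, and is not the survival package's `survdiff` code.

```python
import math


def logrank_test(times_a, events_a, times_b, events_b):
    """Two-sample log-rank test. Returns (chi-square statistic, p-value), 1 df."""
    pooled = [(t, e, 0) for t, e in zip(times_a, events_a)] + \
             [(t, e, 1) for t, e in zip(times_b, events_b)]
    event_times = sorted({t for t, e, _ in pooled if e})
    observed_minus_expected = 0.0
    variance = 0.0
    for t in event_times:
        n_a = sum(1 for ti, _, g in pooled if ti >= t and g == 0)
        n_b = sum(1 for ti, _, g in pooled if ti >= t and g == 1)
        d_a = sum(1 for ti, e, g in pooled if ti == t and e and g == 0)
        d = sum(1 for ti, e, _ in pooled if ti == t and e)
        n = n_a + n_b
        # Observed minus expected deaths in group A at this event time.
        observed_minus_expected += d_a - d * n_a / n
        if n > 1:
            # Hypergeometric variance of d_a.
            variance += d * (n_a / n) * (n_b / n) * (n - d) / (n - 1)
    chi2 = observed_minus_expected ** 2 / variance
    # Chi-square (1 df) upper tail: P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Hypothetical example: group A dies early, group B later.
chi2, p = logrank_test([1, 2], [1, 1], [3, 4], [1, 1])
print(f"chi2 = {chi2:.3f}, p = {p:.3f}")
```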

In multivariate analysis, after adjusting for patient age and sex, underlying disease (AL/MDS vs others), type of alloHSCT donor (HLA-identical siblings vs others), conditioning regimen (myeloablative vs others) and graft-versus-host disease (GVHD, grades 0–1 vs 2–4), higher pre-transplant TRECs levels remained independently associated with increased OS (HR 0.51 [95% CI 0.35–0.73], p < 0.001) and lower NRM (HR 0.37 [95% CI 0.21–0.66], p = 0.001). Patient age (HR 0.54 [95% CI 0.36–0.79], p = 0.002) and type of donor (HR 0.52 [95% CI 0.31–0.85], p = 0.01) were also independently associated with OS.
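
Because hazard ratios (and subdistribution hazard ratios) are estimated on the log scale, a reported 95% CI can be used to back-calculate an approximate standard error and Wald p-value: SE = [ln(upper) − ln(lower)] / (2 × 1.96). The sketch below applies this standard back-calculation to the OS and NRM estimates reported above; the results are approximations (the published intervals are rounded), not the study's own computations, and the helper name is hypothetical.

```python
import math


def wald_from_ci(hr, lower, upper, z_crit=1.959964):
    """Approximate SE of log(HR), z statistic and two-sided p from a 95% CI."""
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(hr) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return se, z, p


reported = {
    "OS, pre-transplant TRECs": (0.51, 0.35, 0.73),
    "NRM, pre-transplant TRECs": (0.37, 0.21, 0.66),
}
for label, (hr, lo, hi) in reported.items():
    se, z, p = wald_from_ci(hr, lo, hi)
    print(f"{label}: SE(log HR)={se:.3f}, z={z:.2f}, p~{p:.4f}")
```

The point estimate should also lie close to the geometric mean of the interval bounds (for example, √(0.35 × 0.73) ≈ 0.51), which is a second quick check on transcribed estimates.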

Recovery of TRECs levels after transplant, patient outcomes and landmark analyses in early survivors

Beyond the impact of pre-transplant TRECs levels on OS described above, the prospective collection of sequential samples in our study allows a more detailed analysis of how TRECs recovery after transplant affects long-term outcomes. Faster TRECs recovery, reflected by higher TRECs levels at each of the 1-, 3-, 6- and 12-month time points after alloHSCT, was associated with better OS (Table 3). We therefore carried out landmark analyses at 6 and 12 months to assess the impact of TRECs levels on the long-term outcomes of patients surviving to those time points. Higher TRECs levels in survivors at 6 and 12 months after alloHSCT were associated with significantly better long-term survival. At 6 months, TRECs values above the threshold cut point (thrTRECs ≥0.1) were associated with better OS 1, 3 and 5 years later (82.4% vs 56.7%, 73.9% vs 43.7%, and 72.4% vs 38.9%, respectively; p < 0.001). At 12 months, TRECs values above the threshold cut point (thrTRECs ≥0.4) were likewise associated with better OS 1, 3 and 5 years later (93% vs 59.7%, 85.6% vs 47.6%, and 83.4% vs 47.6%, respectively; p < 0.001; Fig. 3a). Furthermore, the association between TRECs levels and long-term survival remained independent in multivariate analysis both at 6 months (HR 0.33 [95% CI 0.13–0.81], p = 0.016) and at 12 months (HR 0.13 [95% CI 0.04–0.42], p < 0.001), after adjusting for other clinical factors including GVHD (Fig. 3b, c).
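
A landmark analysis conditions on being alive at the landmark time and restarts the clock there. The sketch below selects 6- or 12-month landmark survivors and re-expresses their follow-up from the landmark, converting days to months with the 30.5 days/month ratio stated in the Methods. The record structure, field names and the administrative censoring at 60 months after transplant are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

DAYS_PER_MONTH = 30.5  # conversion ratio stated in the Methods


@dataclass
class Patient:
    """Hypothetical per-transplant record; field names are illustrative."""
    followup_days: float                # transplant to death or last contact
    died: bool                          # True if follow-up ended in death
    trecs_at_landmark: Optional[float]  # log10TRECs measured at the landmark


def landmark_cohort(patients: List[Patient], landmark_months: float,
                    horizon_months: float = 60) -> List[Tuple[float, bool, Optional[float]]]:
    """Keep patients still followed beyond the landmark and reset the time origin.

    Returns (months from landmark, death observed, TRECs) tuples. Follow-up is
    censored at `horizon_months` after transplant (assumed 5-year horizon).
    """
    landmark_days = landmark_months * DAYS_PER_MONTH
    horizon_days = horizon_months * DAYS_PER_MONTH
    cohort = []
    for p in patients:
        if p.followup_days <= landmark_days:
            continue  # died or censored by the landmark: not an early survivor
        end = min(p.followup_days, horizon_days)
        event = p.died and p.followup_days <= horizon_days
        cohort.append(((end - landmark_days) / DAYS_PER_MONTH, event, p.trecs_at_landmark))
    return cohort


# Hypothetical records: only the 2nd and 3rd patients are 6-month landmark survivors.
patients = [Patient(100, True, None), Patient(400, True, 0.8),
            Patient(2000, False, -0.2), Patient(183, False, 0.5)]
for row in landmark_cohort(patients, landmark_months=6):
    print(row)
```

Restarting the clock at the landmark avoids immortal-time bias: a TRECs value measured at 6 or 12 months can only exist for patients who survived that long, so it must not be credited with survival time before the measurement.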

Fig. 3. Kaplan-Meier survival curves of early survivors at 12 months after transplant (landmark analysis) according to 12-month log10TRECs values above (yellow) or below (red) the threshold cut point (thrTRECs ≥0.4), compared with the Mantel-Cox log-rank test and shown separately for the whole population (a) and for cases with (b) and without (c) graft-versus-host disease. Yellow and red shaded bands are 95% confidence intervals.
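
The thrTRECs cut points used in these survival analyses (≥1.4 pre-transplant, ≥0.1 at 6 months and ≥0.4 at 12 months) are on the log10 scale. Back-transforming them, assuming they apply to log10 of TRECs in U/R as described in the Methods, gives the corresponding raw values; the helper below is an illustration, not part of the study's code.

```python
def log10_threshold_to_units(log10_threshold: float) -> float:
    """Convert a log10TRECs cut point back to TRECs units per reaction (U/R)."""
    return 10 ** log10_threshold


thresholds = {
    "pre-transplant (thrTRECs >= 1.4)": 1.4,
    "6-month landmark (thrTRECs >= 0.1)": 0.1,
    "12-month landmark (thrTRECs >= 0.4)": 0.4,
}
for label, thr in thresholds.items():
    print(f"{label}: {log10_threshold_to_units(thr):.2f} U/R")
```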

Discussion

This is one of the first, and the largest, studies to measure TRECs and KRECs sequentially in alloHSCT recipients. It shows that sequential quantification of TRECs in alloHSCT recipients using a standardized commercial platform provides novel, measurable biomarker data associated with patient and transplant characteristics and outcomes. Pre-transplant TRECs levels are independently associated with NRM and OS and may complement and inform decision-making in transplant candidates, which is currently based only on clinical factors. Furthermore, TRECs levels measured at 6 and 12 months may provide a novel objective tool to predict the long-term outcome of early survivors after alloHSCT.

Few studies have explored the role of KRECs in alloHSCT [28, 32]. Apart from describing early KRECs recovery after transplant, similar to our own findings [28], these studies have not shown any association between KRECs levels and key transplant outcomes [32]. Consistent with this, KRECs do not appear to be a suitable candidate biomarker to predict alloHSCT survival and patient outcomes.

Many prior studies have explored the role of TRECs in alloHSCT, primarily focusing on their association with patient and transplant characteristics, with differences among studies and often inconclusive results [20,21,22,23,24,25,26,27,28,29,30,31]. In line with most prior studies, our data show an association between pre-transplant TRECs levels and patient age; beyond this, we did not find associations with sex or other relevant factors, and the associations found with factors such as the underlying malignancy or the type of transplant are most likely surrogates of other features of these patient subgroups. Some of these studies also explored the association of TRECs with hard endpoints and patient outcomes. Clave et al. and Sairafi et al. identified associations of higher TRECs levels pre-transplant and at 3 months post-transplant, respectively, with improved NRM and OS [26, 27]. Others showed associations between pre- and post-transplant TRECs levels and the risk of disease relapse in some subgroups of malignancies, but without an overall impact on OS and long-term outcomes [29, 31]. As described above, our data show a strong association between TRECs levels, both pre-transplant and at every time point of their post-transplant recovery, and long-term patient outcomes. This impact, on both NRM and OS, remains independent of other key factors such as age, sex, type of disease, conditioning, type of transplant or GVHD in multivariate analysis.

Beyond differences in patient and transplant characteristics among these TRECs studies, their major limitations thus far are that most were carried out in relatively small numbers of patients, with assorted samples at different time points rather than samples collected prospectively and sequentially, and with diverse methodologies in the absence of a standardized TRECs/KRECs technical platform. Measurement methods have included semiquantitative PCR [7, 16], hybridization probes [9, 23], and TaqMan probes [11, 24, 27, 28]. The cell populations used for DNA extraction for TRECs quantification have also varied among studies, including CD3+ cells [23, 27], CD4+/CD8+ cells [7, 25], and peripheral blood mononuclear cells [16, 24]. So have the units in which results are reported: TRECs/µg of DNA [16, 23], TRECs/µL of blood [20], and TRECs per cell count [24], further complicating comparability among studies and the reproducibility of their results. The use of a broadly available standardized commercial platform, such as LightCycler 480/TREC-KREC-ACTB, will likely help overcome previous inconsistencies and facilitate further development and validation of TRECs as biomarkers to predict long-term outcomes in alloHSCT recipients.

Beyond pre-transplant TRECs levels, our samples collected prospectively at 1, 3, 6 and 12 months confirm that TRECs measured sequentially after transplant are strongly associated with long-term OS. Based on this strong signal linking TRECs recovery and patient survival, we carried out landmark analyses of the impact of TRECs levels in early survivors on their long-term outcomes. Only one recent study, by Söderström et al. [32], has reported landmark analyses of TRECs in alloHSCT, showing, in a group of 90 alloHSCT recipients with AML, an association of OS with TRECs levels measured at 12 months but not at 6 months. As presented above, our data show that early transplant survivors at 6 and 12 months with higher TRECs levels at those time points had significantly better subsequent long-term OS. In addition, in our series this effect is independent of GVHD and other clinical factors in multivariate analyses. This finding is particularly remarkable because the field of alloHSCT currently lacks such a tool to estimate survival beyond the first year post-transplant, and it identifies TRECs as a potential biomarker to predict long-term OS in early alloHSCT survivors.

Our study has some limitations. Despite the impact of age and intensive chemotherapy on thymic involution [18, 19], studies have shown that thymic function is not completely eliminated and that, under immunosuppressive stress conditions such as HIV infection and alloHSCT, the remaining thymic tissue can contribute to immune recovery [7, 16]. While these findings support the role of TRECs and their relationship with immune recovery and outcomes after alloHSCT, our study was not designed to assess immune recovery parameters that might have helped connect our TRECs findings with transplant outcomes. In addition, the TRECs/KRECs cut point thresholds used in this study were derived from the data in this series and may not be optimal when applied to external data sets. Thus, for the purpose of the work presented here, the log10TRECs and log10KRECs cut points are intended only to improve stratification between high and low TRECs or KRECs values for the survival curves and HR estimations in this series.

In summary, our study shows that, while KRECs do not seem to be a good candidate biomarker in alloHSCT, TRECs have a strong impact on patient outcomes, independent of other clinical factors. Pre-transplant TRECs could potentially improve patient assessment beyond current clinical factors, and post-transplant TRECs at 6 and 12 months could provide novel biomarker data to predict long-term survival in early transplant survivors. The use of a standardized commercial platform should help overcome prior inconsistencies in this field. Future work will aim to prospectively examine the relationship between TRECs dynamics and immune reconstitution, explore the potential impact of donor-related variables, including donor TRECs levels, and ultimately pursue external validation, ideally in a prospective multicenter setting, using the standardized LightCycler 480/TREC-KREC-ACTB platform.

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.

References

Passweg JR, Baldomero H, Chabannon C, Basak GW, de la Camara R, Corbacioglu S, et al. Hematopoietic cell transplantation and cellular therapy survey of the EBMT: monitoring of activities and trends over 30 years. Bone Marrow Transpl. 2021;56:1651–64. https://doi.org/10.1038/s41409-021-01227-8.

Snowden JA, Sánchez-Ortega I, Corbacioglu S, Basak GW, Chabannon C, de la Camara R, et al. Indications for haematopoietic cell transplantation for haematological diseases, solid tumours and immune disorders: current practice in Europe, 2022. Bone Marrow Transpl. 2022;57:1217–39. https://doi.org/10.1038/s41409-022-01691-w.

Penack O, Peczynski C, Mohty M, Yakoub-Agha I, Styczynski J, Montoto S, et al. How much has allogeneic stem cell transplant-related mortality improved since the 1980s? A retrospective analysis from the EBMT. Blood Adv. 2020;4:6283–90. https://doi.org/10.1182/bloodadvances.2020003418.

Styczyński J, Tridello G, Koster L, Iacobelli S, van Biezen A, van der Werf S, et al. Death after hematopoietic stem cell transplantation: changes over calendar year time, infections and associated factors. Bone Marrow Transpl. 2020;55:126–36. https://doi.org/10.1038/s41409-019-0624-z.

Karaesmen E, Rizvi AA, Preus LM, McCarthy PL, Pasquini MC, Onel K, et al. Replication and validation of genetic polymorphisms associated with survival after allogeneic blood or marrow transplant. Blood. 2017;130:1585–96. https://doi.org/10.1182/blood-2017-05-784637.

Paczesny S. Biomarkers for posttransplantation outcomes. Blood. 2018;131:2193–204. https://doi.org/10.1182/blood-2018-02-791509.

Douek DC, McFarland RD, Keiser PH, Gage EA, Massey JM, Haynes BF, et al. Changes in thymic function with age and during the treatment of HIV infection. Nature. 1998;396:690–5. https://doi.org/10.1038/25374.

Haynes BF, Hale LP, Weinhold KJ, Patel DD, Liao HX, Bressler PB, et al. Analysis of the adult thymus in reconstitution of T lymphocytes in HIV-1 infection. J Clin Investig. 1999;103:453–60. https://doi.org/10.1172/JCI5201.

van Zelm MC, Szczepanski T, van der Burg M, van Dongen JJ. Replication history of B lymphocytes reveals homeostatic proliferation and extensive antigen-induced B cell expansion. J Exp Med. 2007;204:645–55. https://doi.org/10.1084/jem.20060964.

Livak F, Schatz DG. T-cell receptor α locus V(D)J recombination by-products are abundant in thymocytes and mature T-cells. Mol Cell Biol. 1996;16:609–18. https://doi.org/10.1128/MCB.16.2.609.

Hazenberg MD, Verschuren MC, Hamann D, Miedema F, van Dongen JJ. T cell receptor excision circles as markers for recent thymic emigrants: Basic aspects, technical approach, and guidelines for interpretation. J Mol Med. 2001;79:631–40. https://doi.org/10.1007/s001090100271.

Storek J, Geddes M, Khan F, Huard B, Helg C, Chalandon Y, et al. Reconstitution of the immune system after hematopoietic stem cell transplantation in humans. Semin Immunopathol. 2008;30:425–37. https://doi.org/10.1007/s00281-008-0132-5.

Cavazzana-Calvo M, Andre-Schmutz I, Dal Cortivo L, Neven B, Hacein-Bey-Abina S, Fischer A. Immune reconstitution after haematopoietic stem cell transplantation: obstacles and anticipated progress. Curr Opin Immunol. 2009;21:544–8. https://doi.org/10.1016/j.coi.2009.08.001.

Bemark M, Holmqvist J, Abrahamsson J, Mellgren K. Translational mini-review series on B cell subsets in disease. Reconstitution after haematopoietic stem cell transplantation—revelation of B cell developmental pathways and lineage phenotypes. Clin Exp Immunol. 2012;167:15–25. https://doi.org/10.1111/j.1365-2249.2011.04469.x.

Mackall CL, Fleisher TA, Brown MR, Andrich MP, Chen CC, Feuerstein IM, et al. Age, thymopoiesis, and CD4+ T-lymphocyte regeneration after intensive chemotherapy. N Engl J Med. 1995;332:143–9. https://doi.org/10.1056/NEJM199501193320303.

Douek DC, Vescio RA, Betts MR, Brenchley JM, Hill BJ, Zhang L, et al. Assessment of thymic output in adults after haematopoietic stem-cell transplantation and prediction of T-cell reconstitution. Lancet. 2000;355:1875–81. https://doi.org/10.1016/S0140-6736(00)02293-5.

Weinberg K, Blazar BR, Wagner JE, Agura E, Hill BJ, Smogorzewska M, et al. Factors affecting thymic function after allogeneic hematopoietic stem cell transplantation. Blood. 2001;97:1458–66. https://doi.org/10.1182/blood.V97.5.1458.

Storek J, Joseph A, Espino G, Dawson MA, Douek DC, Sullivan KM, et al. Immunity of patients surviving 20 to 30 years after allogeneic or syngeneic bone marrow transplantation. Blood. 2001;98:3505–12. https://doi.org/10.1182/blood.v98.13.3505.

Loeffler J, Bauer R, Hebart H, Douek DC, Rauser G, Bader P, et al. Quantification of T-cell receptor excision circle DNA using fluorescence resonance energy transfer and the LightCycler system. J Immunol Methods. 2002;271:167–75. https://doi.org/10.1016/s0022-1759(02)00337-x.

Chen X, Barfield R, Benaim E, Leung W, Knowles J, Lawrence D, et al. Prediction of T-cell reconstitution by assessment of T-cell receptor excision circle before allogeneic hematopoietic stem cell transplantation in pediatric patients. Blood. 2005;105:886–93. https://doi.org/10.1182/blood-2004-04-1405.

Clave E, Rocha V, Talvensaari K, Busson M, Douay C, Appert ML, et al. Prognostic value of pretransplantation host thymic function in HLA-identical sibling hematopoietic stem cell transplantation. Blood. 2005;105:2608–13. https://doi.org/10.1182/blood-2004-04-1667.

Jiménez M, Martínez C, Ercilla G, Carreras E, Urbano-Ispízua A, Aymerich M, et al. Reduced-intensity conditioning regimen preserves thymic function in the early period after hematopoietic stem cell transplantation. Exp Hematol. 2005;33:1240–8. https://doi.org/10.1016/j.exphem.2005.06.016.

Jiménez M, Martínez C, Ercilla G, Carreras E, Urbano-Ispízua A, Aymerich M, et al. Clinical factors influencing T-cell receptor excision circle (TRECs) counts following allogeneic stem cell transplantation in adults. Transpl Immunol. 2006;16:52–9. https://doi.org/10.1016/j.trim.2006.02.006.

Przybylski GK, Kreuzer KA, Siegert W, Schmidt CA. No recovery of T-cell receptor excision circles (TRECs) after non-myeloablative allogeneic hematopoietic stem cell transplantation is correlated with the onset of GvHD. J Appl Genet. 2007;48:397–404. https://doi.org/10.1007/BF03195239.

Sugita J, Iwao N, Tanaka J, Kato N, Shiratori S, Wakasa K, et al. T cell receptor excision circle levels in CD94-expressing CD8 T Cells during graft-versus-host disease. Leuk Lymphoma. 2008;49:1306–10. https://doi.org/10.1080/10428190802146086.

Clave E, Busson M, Douay C, Peffault de Latour R, Berrou J, Rabian C, et al. Acute graft-versus-host disease transiently impairs thymic output in young patients after allogeneic hematopoietic stem cell transplantation. Blood. 2009;113:6477–84. https://doi.org/10.1182/blood-2008-09-176594.

Sairafi D, Mattsson J, Uhlin M, Uzunel M. Thymic function after allogeneic stem cell transplantation is dependent on graft source and predictive of long-term survival. Clin Immunol. 2012;142:343–50. https://doi.org/10.1016/j.clim.2011.12.001.

Mensen A, Ochs C, Stroux A, Wittenbecher F, Szyska M, Imberti L, et al. Utilization of TREC and KREC quantification for the monitoring of early T- and B-cell neogenesis in adult patients after allogeneic hematopoietic stem cell transplantation. J Transl Med. 2013;11:188. https://doi.org/10.1186/1479-5876-11-188.

Uzunel M, Sairafi D, Remberger M, Mattsson J, Uhlin M. T-cell receptor excision circle levels after allogeneic stem cell transplantation are predictive of relapse in patients with acute myeloid leukemia and myelodysplastic syndrome. Stem Cells Dev. 2014;23:1559–67. https://doi.org/10.1089/scd.2013.0588.

Gaballa A, Sundin M, Stikvoort A, Abumaree M, Uzunel M, Sairafi D, et al. T cell receptor excision circle (TREC) monitoring after allogeneic stem cell transplantation; a predictive marker for complications and clinical outcome. Int J Mol Sci. 2016;17:1705. https://doi.org/10.3390/ijms17101705.

Mikhael NL, Elsorady M. Clinical significance of T cell receptor excision circle (TREC) quantitation after allogenic HSCT. Blood Res. 2019;54:274–81. https://doi.org/10.5045/br.2019.54.4.274.

Söderström A, Vonlanthen S, Jönsson-Videsäter K, Mielke S, Lindahl H, Törlén J, et al. T cell receptor excision circles are potential predictors of survival in adult allogeneic hematopoietic stem cell transplantation recipients with acute myeloid leukemia. Front Immunol. 2022;13:954716. https://doi.org/10.3389/fimmu.2022.954716.

Iacobelli S, EBMT Statistical Committee. Suggestions on the use of statistical methodologies in studies of the European Group for Blood and Marrow Transplantation. Bone Marrow Transpl. 2013;48:S1–37. https://doi.org/10.1038/bmt.2012.282.

Fine JP, Gray RJ. A proportional hazards model for the subdistribution of a competing risk. J Am Stat Assoc. 1999;94:496–509. https://doi.org/10.1080/01621459.1999.10474144.

Austin P, Fine J. Practical recommendations for reporting Fine-Gray model analyses for competing risk data. Stat Med. 2017;36:4391–400. https://doi.org/10.1002/sim.7501.

Acknowledgements

The authors would like to acknowledge other staff members of the HSCT Program and the Cytogenetics and Molecular Biology Laboratory at our Department for their support in conducting this study, Dr. Carlos Vilches (Spanish National Transplant Organization) for his expert supervision of the immunological aspects of this work, and the Spanish Centro para el Desarrollo Tecnológico y la Innovación (CDTI) for funding this project.

Funding

Funded by the Centro para el Desarrollo Tecnológico y la Innovación (CDTI) of the Spanish Ministry of Science, Innovation and Universities (Project # IDI-20180259).

Author information

Contributions

CDM designed the study, collected the data, performed the laboratory analyses, interpreted the results, and wrote the manuscript. FB performed the statistical analysis and reviewed the results. RA collected the samples and reviewed the final manuscript. GB interpreted the results and reviewed the final manuscript. LE participated in the analysis and reviewed the final manuscript. CMP contributed to the study design and reviewed the final manuscript. MEM participated in the analysis and reviewed the final manuscript. LN collected the samples and reviewed the final manuscript. IS participated in the analysis and reviewed the final manuscript. ASP contributed to the study design and reviewed the final manuscript. RD designed the study, interpreted the results, and reviewed the final manuscript. All authors read and approved the final version of the manuscript.

Ethics declarations

Competing interests

FB, CMP and ASP are employees of Roche Diagnostics (Barcelona, Spain), whose LightCycler® 480/TREC-KREC-ACTB platform was used to measure TRECs/KRECs in this study. CDM’s work was partly funded by CDTI Project # IDI-20180259. No other authors have any relevant conflicts of interest to disclose for the work presented here.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

de Miguel, C., Briansó, F., Alonso, R. et al. Sequential quantification of T-cell receptor excision circles (TRECs) and K-deleting recombination excision circles (KRECs) and overall survival after allogeneic HSCT. Bone Marrow Transplant 61, 179–186 (2026). https://doi.org/10.1038/s41409-025-02744-6

DOI: https://doi.org/10.1038/s41409-025-02744-6