Abstract

The rapid advancement of artificial intelligence has enabled breakthroughs in diverse fields, including autonomous systems and medical diagnostics. However, conventional deterministic neural networks struggle to capture uncertainty, limiting their reliability when handling real-world data, which are often noisy, imbalanced, or scarce. Bayesian neural networks address this limitation by representing weights as probabilistic distributions, allowing for natural uncertainty quantification and improved robustness. Despite their advantages, hardware-based implementations face significant challenges due to the difficulty of independently tuning both the mean and variance of weight distributions. Herein, we propose a 3D ferroelectric NAND-based Bayesian neural network system that leverages incremental step pulse programming technology to achieve efficient and scalable probabilistic weight control. The page-level programming capabilities and intrinsic device-to-device variations enable gaussian weight distributions in a single programming step, without structural modifications. By modulating the incremental step pulse programming voltage step, we achieve precise weight distribution control. The proposed system demonstrates successful uncertainty estimation, enhanced energy efficiency, and robustness to external noise for medical images.

Introduction

Rapid advancements in artificial intelligence (AI) have catalyzed the evolution of various machine learning paradigms. Among these, artificial neural networks (ANNs) have significantly enhanced learning efficiency and generalization capabilities, achieving breakthroughs in applications such as image recognition, autonomous driving, healthcare diagnostics, and weather prediction1,2,3,4,5,6. However, the growing complexity of ANN models has led to an exponential increase in the computational demand, exposing the inherent limitations of the traditional von Neumann architecture, particularly its memory and processing bottlenecks. To address these challenges, hardware-accelerated neural networks that employ non-volatile memory (NVM) have emerged as compelling alternatives. By integrating data storage and parallel computation through vector-matrix multiplication (VMM) operations that leverage Kirchhoff’s current law for input voltages and stored conductances directly within the memory array, these architectures can overcome the inefficiencies of conventional designs and offer a more scalable and energy-efficient solution for next-generation AI workloads7,8,9,10,11,12.

As synaptic elements in neural networks, NVMs should not only store weight information but also represent analog values for precise computations through complex VMM operations, requiring a multilevel capability to encode analog weights, stable retention, and robust endurance13,14,15. In this regard, hafnium oxide (HfO₂)-based ferroelectric field-effect transistors (FeFETs) have gained significant attention for their low programming voltage, fast switching speed, high scalability, stable multilevel states, robust reliability, and CMOS compatibility16,17,18,19,20,21,22 among various NVM technologies, such as memristors23,24,25,26,27, ferroelectric devices28,29,30,31,32,33,34,35, magnetic random-access memory (MRAM)36,37,38, and flash memory39,40,41,42,43. Therefore, the implementation of hardware neural networks using ferroelectric-based synaptic devices has attracted significant attention from both academia and industry.

Existing neural networks lack robust uncertainty quantification and fail to provide reliable assessments of their predictions44,45. These models often exhibit overconfidence and produce incorrect predictions with high certainty, which poses significant risks in high-reliability applications such as healthcare and autonomous driving46. Furthermore, real-world data frequently contains noise or deviates from the training distribution, making it challenging for conventional models to deliver accurate and reliable predictions47. In hardware-based neural networks, additional challenges arise from phenomena such as endurance, retention, and drift, which can alter the weight values over time, leading to significant performance degradation48,49.

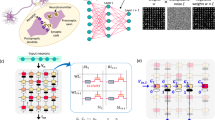

To address these challenges, Bayesian neural networks (BNNs) have been developed by applying Bayes’ theorem to conventional neural networks. Unlike traditional models, which represent weights as fixed values, BNNs model weights as probability distributions, enabling quantitative uncertainty evaluation (Fig. 1a)50,51,52,53. This capability makes BNNs particularly suitable for applications in safety-critical domains, such as autonomous driving, robotics, and medical diagnosis, where understanding and managing uncertainty are essential. As the posterior distribution is computationally intractable, Bayesian methods typically approximate it using techniques such as variational inference (VI)54,55 and Markov chain Monte Carlo (MCMC) sampling56,57. BNNs can instantiate conventional neural networks by sampling weight values from these distributions. During the training, multiple networks were generated by sampling M times from the BNN. When the same input data are provided to these networks, a predictive distribution of the output is obtained. Unlike traditional networks that primarily focus on fitting data, BNNs inherently model uncertainty, mitigate overfitting, and perform well even in scenarios with limited data. In addition, their probabilistic framework allows them to assess uncertainty, rendering them effective at identifying and classifying previously unseen images.

a Applications of Bayesian neural networks (BNNs). BNNs model uncertainty in neural networks, making them suitable for critical tasks such as medical data analysis, self-driving vehicles, robotics, and unseen data detection, where reliability and robustness are essential. b Schematic of 3D FeNAND array for BNN system. c Mapping BNNs on 3D FeNAND arrays. The NAND structure enables simultaneous programming of the same word lines (WLs), reducing programming time, and current summation is achieved through bit lines (BLs). d Incremental step pulse programming (ISPP) method to adjust cells to the target state. Inhibit voltage is applied to the BLs of cells that exceed the target current to prevent further state changes. e Method for regulating the standard deviation of weight distribution using ISPP. Voltage steps and pulse widths in ISPP are adjusted to control σ. The final weight distribution is obtained by subtracting µ cells, which have very narrow dispersion achieved through the program-and-verify method, and σ cells represented by grouping multiple cells.

To implement a BNN in hardware where weights are represented as probability distributions, an effective method for realizing these weight distributions is essential. Recent studies have proposed various approaches for expressing the synaptic weight distributions of BNNs in hardware58,59,60,61,62,63. The inherent stochasticity of NVMs aligns well with the probabilistic nature of the synapses in BNNs. For instance, cycle-to-cycle variability has been utilized by performing repeated program-and-erase cycles, repeated read operations, and combining multiple cells to represent the distribution of a single synapse58,59,60,61. In addition, random telegraph noise (RTN) induced by traps has been explored as a method for generating weight distributions62. Another approach involves directly mapping the weights sampled M times in the software to M hardware arrays63. However, these methods face several challenges. Achieving the desired distribution through repeated programming and erasing of a single cell is time-consuming and inefficient. Furthermore, the proposed architectures require substantial design modifications or the addition of peripheral circuits to accommodate the vast number of parameters required for modern deep learning, which increases hardware complexity. Additionally, RTN is inherently difficult to control and often results in excessively narrow distributions during repeated read operations, which poses considerable challenges to the efficient and practical realization of BNNs in hardware systems.

In this study, we demonstrate the practical implementation of BNNs in a commercial 3D NAND architecture without any structural changes (Fig. 1b). By utilizing ferroelectric materials, which enable low-voltage operation and the programming of multiple cells sharing a word line (WL) simultaneously through the NAND structure, the proposed approach achieves significant improvements in time/area efficiency and reliability (Fig. 1c). Leveraging page-level programming of 3D ferroelectric NAND-type memory (FeNAND) and device-to-device variation, the BNN weight distribution is implemented in a single process. The incremental step pulse programming (ISPP) technique, which is a standard programming method in NAND flash memory, is employed to generate multiple states, creating Gaussian weight distributions without the need for additional circuitry. The wide-ranging standard deviation (σ) of these distributions is simply controlled by adjusting the ISPP voltage step (Vstep) (Fig. 1d). The program voltage is incrementally increased by Vstep until all the cells in the WL reach the target current. The cells that reach the target current first have an inhibitory voltage applied to stabilize their state and prevent further changes. Moreover, the mean (μ) is fine-tuned using a program-and-verify method, ensuring that cells are programmed until the cell current reaches the target current value (Fig. 1e). Therefore, the mean and dispersion of the distribution of multiple cells sharing a WL can be finely adjusted using only an ISPP program-and-verify operation without multiple programming and erasing iterations of a single cell. The final weight distribution is determined by subtracting G− from G+, reflecting the weight’s inherent distribution. This allows for the representation of a wide range of distributions, from narrow to broad, by adjusting Vstep. To validate this approach, we fabricated a 24×12 HfZrO₂ (HZO)-based FeNAND array.
Experimental results confirmed that both σ and μ of the weight distribution can be precisely controlled by varying Vstep and the target current. Additionally, to verify the compatibility with the 3D NAND structure, the weight distributions trained in the software were mapped onto the FeNAND arrays. Inference operations were then performed using the proposed NAND-based BNN framework and evaluated using SPICE simulations, thereby confirming both the feasibility and practicality of the implementation. The proposed system offers the most compatible and reliable solution for hardware BNNs currently available in the memory industry.

Results

Device characteristics

Figure 2a shows a schematic of the method for emulating biological synapses using FeFETs. In the biological brain, the strength of synaptic connections between presynaptic and postsynaptic neurons is updated based on the degree of interaction between neurons in response to external stimuli. This process can be replicated by utilizing the partial polarization of the FeFETs. The extent of polarization in the ferroelectric layer domains varies according to the magnitude of the external programming pulse applied to the gate of the FeFET, thereby enabling the simulation of the synaptic connection strength. Figure 2(b-1) and (b-2) show the top optical image of the fabricated 24×12 HZO-based FeNAND array. The array structure consists of 24 WLs intersecting 12 bit lines (BLs) and source lines (SLs), forming a total of 288 memory cells. Rows can be selected via WLs, whereas BLs connect to multiple cell drains, allowing selective read and write operations through column selection. Figure 2c shows the cross-sectional TEM image of a single FeNAND cell, where a 1.18 nm SiO₂ gate oxide layer is deposited on the Si channel, and a 6.63 nm HZO layer serves as the ferroelectric layer. The WL consists of a TiN layer with a thickness of 100 nm (see the Methods section and Supplementary Fig. 1 for the detailed fabrication process). Supplementary Fig. 3 shows the energy-dispersive X-ray spectroscopy (EDS) results of the FeFET gate stack. Figure 2d shows the current (I) measured through the FeFET gate as a function of the applied voltage (V) based on the PUND measurements. Figure 2e shows the leakage-removed polarization-switching current density (J) versus voltage (left axis) and its integration (polarization: P) over time (right axis). The HZO layer shows remnant polarization (2Pr) exceeding 50 μC/cm², confirming the robust polarization switching capability of the fabricated FeNAND cells.
The unclosed loop is attributed to partial domain switching caused by domain pinning centers, such as oxygen vacancies or dopants, which may render some domains slower to flip. However, from the perspective of BNN performance, these minor non-idealities do not undermine the key advantage of our system: it reliably supports the statistical weight distributions needed for Bayesian inference.

a Schematic demonstrating the method of emulating biological synapses using FeFETs. b-1 Top optical image of the fabricated 24×12 HfZrO₂ (HZO)-based FeNAND array. b-2 The array structure consists of 24 word lines (WLs) intersecting with 12 bit lines (BLs) and source lines (SLs), forming a total of 288 memory cells. c Cross-sectional TEM image of a single FeNAND cell. d Measured current (I) through the FeFET gate as a function of applied voltage (V) based on PUND measurements, verifying the ferroelectric properties of the HZO layer. e Leakage-removed polarization switching current versus voltage (right axis) and polarization versus voltage (left axis). f Pristine-state DC IV double-sweep characteristics of the 24×12 FeNAND array. g I–V curves of 30 randomly selected cells after program and erase operations. The program state was induced by applying a 5.5 V program voltage for 2 μs, while the erase state was achieved using a −5 V erase voltage for 5 μs, with both BLs and SLs grounded. h Program and erase speeds of the fabricated FeNAND device. i Retention characteristics of four distinct states programmed by applying specific pulses to the WL (State 1: 5 V, 2 μs; State 2: 3.8 V, 2 μs; State 3: 3.2 V, 2 μs; and State 4: −5 V, 5 μs). j ID-VGS characteristics over 10⁶ seconds at room temperature show negligible Vth drift, together with ID versus time for FeNAND cells in different states. k Endurance characteristics of the fabricated FeNAND device under repeated programming and erasing. A 5.5 V program pulse and a −5 V erase pulse, each applied for 2 μs, are repeatedly cycled. l ID-VGS curves obtained when programming the FeNAND cell using the ISPP scheme. m Multilevel conductance states were obtained by performing iterative tuning cycles for eight distinct target levels. The circle represents the average conductance value for each target level.

Figure 2f shows the pristine-state DC IV double-sweep characteristics of the 24×12 FeNAND array. FeFETs with NAND architecture exhibit variations in both erase and program operations owing to process parameters, including dielectric properties, ferroelectric layer thickness, and doping concentration. The step-like signal observed when the order changes is attributed to the B1500A measurement setup. Supplementary Fig. 4a shows the pristine-state DC IV single-sweep characteristics of the 24×12 FeNAND array. Supplementary Fig. 4b shows current maps corresponding to each WL and BL. Similar to the double-sweep results, device-to-device variations are observed. Although such variations typically degrade the memory system accuracy, they can be beneficially exploited as weight uncertainty representations in BNNs through appropriate operation schemes. Fig. 2g shows the I–V curves of 30 randomly selected cells after the program-and-erase operations. The program state is induced by applying a 5.5 V program voltage (VPGM) for 2 μs, while the erase state is achieved using a −5 V erase voltage (VERS) for 5 μs, with both BLs and SLs grounded. During the read operation, the voltage applied to the unselected cells (Vpass) was fixed at 2 V. The rationale behind this choice is reported in Supplementary Note 1 (Determination of Vpass value for read operations). Additionally, the inhibition operation during the program and erase processes is discussed in Supplementary Note 2 (Inhibition process of the FeNAND arrays during program and erase operations). In Fig. 2g, the threshold voltage (Vth) decreases in the program state and increases in the erase state compared to the initial state, confirming that the memory state of the FeNAND cell can be effectively stored based on the polarization state of the HZO layer. Figure 2h shows the programming and erasing speeds of the fabricated FeNAND device. 
Under grounded BL and SL conditions, the threshold voltage shift (ΔVth) is measured based on the magnitude of VPGM and VERS and the applied pulse width. The FeNAND device shows low-voltage programming (<5 V) and fast switching speeds (<10 μs), attributed to the excellent switching characteristics of the HZO layer.

Figure 2i shows the retention characteristics of four distinct states programmed by applying specific pulses to the WL (State 1: 5 V, 2 μs; State 2: 3.8 V, 2 μs; State 3: 3.2 V, 2 μs; and State 4: −5 V, 5 μs). Figure 2i shows the ID-VGS characteristics over 10⁶ seconds at ambient temperature (25 °C), with negligible Vth drift. Figure 2j plots the stability of ID over time, further confirming that the fabricated FeNAND device possesses retention properties suitable for use as synaptic memory cells in hardware-based neuromorphic systems. Figure 2k presents the endurance characteristics of the fabricated FeNAND device under repeated programming and erasing conditions. A 5.5 V (2 μs) program pulse and a −5 V (5 μs) erase pulse are repeatedly cycled. Even after 10⁷ endurance cycles, minimal Vth degradation is observed, confirming the robust endurance and reliability characteristics of the devices. Figure 2l shows the ID-VGS curves obtained when programming the FeNAND cell using the ISPP scheme. In the ISPP scheme, the programming start voltage (Vstart) is set to 3.00 V, the voltage increment per pulse (Vstep) is 0.15 V, and the final programming voltage (Vend) is 5.25 V. As the ISPP pulses are applied, Vth of the FeNAND cell gradually decreases, demonstrating that successful conductance modulation is achievable through the ISPP scheme. Supplementary Fig. 9 shows the process of achieving different target current values (a: 50 nA and b: 80 nA) using the ISPP scheme. Figure 2m shows the ID changes in the FeNAND cells under repeated ISPP and erase pulses, demonstrating stable and consistent ID shifts. These results verify that the FeNAND cells can be effectively used for memory and synaptic applications in hardware-based neuromorphic systems.
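As a small worked example of the ISPP parameters quoted above, the following sketch enumerates the pulse amplitudes of one ramp. It assumes that a pulse is applied at every step from Vstart to Vend inclusive, which the text does not state explicitly; the function name `ispp_schedule` and the use of integer millivolts are illustrative choices, not part of the reported setup.

```python
def ispp_schedule(v_start_mv: int, v_step_mv: int, v_end_mv: int) -> list[float]:
    """Return the program-pulse amplitudes (in volts) of one ISPP ramp.

    Assumption: pulses are applied at every step from Vstart up to and
    including Vend. Voltages are passed in integer millivolts so that
    steps such as 0.15 V do not accumulate floating-point error.
    """
    if v_step_mv <= 0 or v_end_mv < v_start_mv:
        raise ValueError("need v_step_mv > 0 and v_end_mv >= v_start_mv")
    return [mv / 1000 for mv in range(v_start_mv, v_end_mv + 1, v_step_mv)]


# Values quoted in the text: Vstart = 3.00 V, Vstep = 0.15 V, Vend = 5.25 V.
pulses = ispp_schedule(3000, 150, 5250)
print(len(pulses), pulses[0], pulses[-1])  # 16 3.0 5.25
```

Under this assumption the ramp contains (5.25 − 3.00)/0.15 + 1 = 16 pulses; halving Vstep would roughly double the pulse count, which is the programming-time cost of a finer step.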

Control µ and σ in FeNAND array

In this section, we propose a scheme that simultaneously controls the conductance and variation of FeNAND cells by adjusting Vstep and Vend. Figure 3a shows the ID-Vend graph with Vstart fixed at 3.00 V and varying Vstep values. As Vend increases, the magnitude of the final applied voltage increases, resulting in an increase in ID. In addition, for the same Vend, a smaller Vstep leads to a larger ID owing to the application of a greater number of pulses. Therefore, by selecting an appropriate Vend corresponding to the applied Vstep, the desired ID level can be achieved. Figure 3b demonstrates that different target IDs (10, 50, and 100 nA) were successfully attained for 100 FeNAND cells under varying Vstep conditions ((b-1): 0.05 V, (b-2): 0.10 V, and (b-3): 0.20 V), indicating precise control over the mean current. Read operations were carried out at 0.4 V in the subthreshold region, which minimizes disturbances from pass-resistor effects during on-state reads and ensures a high on/off ratio. Interestingly, even for the same target current, the variability among the cells increased as the magnitude of Vstep increased. As Vstep increases, more cells experience large shifts in a single pulse, and excessive overshoots in some cells result in a wider overall distribution. Conversely, a smaller Vstep can suppress these shifts and narrow the distribution, but it requires significantly longer programming times. Moreover, previous studies on charge-trap flash-based 3D NAND architectures have shown that the expected number of over-programmed cells increases with the applied ISPP voltage. These studies modeled the behavior mathematically, showing that a larger Vstep increases the voltage increment per programming step, which leads to more frequent trapping events, a higher probability of over-programming, and therefore a larger expected number of over-programmed cells64,65,66. Supplementary Fig.
10 illustrates how cells reach a given target current as VPGM increases. It also shows that once certain cells surpass the target current, applying an inhibit voltage (2.7 V) prevents any further changes in their state. Supplementary Fig. 11 shows the retention characteristics measured at intermediate current levels. These characteristics were evaluated over 10⁴ seconds in 10 devices, with programming performed in 10 nA increments from 10 nA to 100 nA. Reliable retention was observed for the entire duration at each programmed current level, indicating that the weight state remains stable. Supplementary Fig. 12 shows the ID histograms of 100 cells programmed with different Vstep values (0.05, 0.1, 0.15, and 0.2 V) for all target IDs. Despite consistent mean ID values close to the target, increasing Vstep (from 0.05 to 0.20 V) results in broader standard deviations (from 7.64×10⁻¹⁰ to 2.59×10⁻⁹), validating the modulation of σ through Vstep adjustments. Since the ISPP scheme applies Vinhibit solely to cells that exceed the target current and performs no further trimming, the resulting distribution is right-skewed around the intended μ. Figure 3c shows current variations of 100 FeNAND cells programmed with varying target currents (10, 20, 50, 80, and 100 nA) and Vstep values, confirming increased dispersion with larger Vstep. Figure 3d shows the dependence of ID standard deviation on both Vstep and target current, indicating consistent increases in σ with larger Vstep values, regardless of the target current level. To investigate the effect of programming pulse width, Supplementary Fig. 13 shows the relationship between Vstep, μID, and σID for pulse widths of 20 and 100 µs, respectively. Regardless of the pulse width, an increasing trend in σID is observed with increasing Vstep. Therefore, by proposing a scheme that adjusts Vstep and Vend, we demonstrate that μID and σID can be independently controlled within a FeNAND cell array.
Importantly, this ISPP scheme has already been utilized in commercial NAND cells, offering the significant advantage of not requiring additional circuitry or changes in operational modes. Figure 3e shows the difference between the target μID and the measured μID for five different FeNAND arrays (each array consists of 288 cells) through the control of Vend in the proposed ISPP scheme. It is confirmed that programming could be successfully achieved over a wide μID range (10–130 nA). Figure 3f shows the difference between the target σID and the measured σID for five different FeNAND arrays (each array consists of 288 cells) through the control of Vstep in the proposed ISPP scheme. In off-chip BNN implementations, verifying cycle-to-cycle consistency is essential. Supplementary Fig. 14 shows the weight distribution on the same 100 FeNAND devices over five programming cycles at target currents of 50 nA and 100 nA. Both μ and σ remained highly stable, varying by less than 10% across all five cycles, and identical trends were observed in different FeNAND arrays, confirming the robustness of the proposed scheme. If the distribution of devices produced via ISPP changes over the endurance period, it will affect the network’s performance. Supplementary Fig. 15 shows the retention characteristics for three states (50, 80, 100 nA), measured over 10⁴ seconds after applying 10³ cycles of cycling stress. Experimentally, it was verified that all three states exhibited stable retention, with less than a 10% loss over 10⁴ seconds. Supplementary Figs. 16 and 17 show retention measurements at 55 °C and 85 °C, respectively; see Supplementary Note 4 for further details. The mean error in Supplementary Fig. 11 is caused by the inherent right‑skew of ISPP, not retention; retention‑induced drift is <5% over 10⁴ seconds and therefore negligible during the sub‑millisecond ISPP window.
Figure 3g presents the controllable range of μID and σID achieved by adjusting Vend and Vstep in the proposed ISPP scheme. The ability to adjust over a wide range demonstrates the potential for enhancing the expressive power of BNN models.
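The link between Vstep and σ described above can be illustrated with a toy Monte Carlo model of ISPP with verify-and-inhibit. This is a minimal sketch and not the measured device behavior: the exponential current model, the parameter `slope`, the per-cell crossing voltage `v_cross`, and its Gaussian spread are all hypothetical stand-ins for device-to-device variation.

```python
import numpy as np


def ispp_program(v_cross, i_target, v_start, v_step, v_end, slope=0.2):
    """Toy ISPP with verify-and-inhibit for cells sharing one word line.

    v_cross : per-cell program voltage (V) at which the cell would exactly
              reach i_target (hypothetical device-to-device variation)
    slope   : assumed exponential sensitivity of read current to VPGM (V)
    """
    current = np.zeros_like(v_cross)
    inhibited = np.zeros(v_cross.shape, dtype=bool)
    # Number of pulses that fit between v_start and v_end (inclusive).
    n_pulses = int(np.floor((v_end - v_start) / v_step + 1e-9)) + 1
    for k in range(n_pulses):
        v_pgm = v_start + k * v_step
        active = ~inhibited
        # Assumption: the read current depends only on the latest pulse amplitude.
        current[active] = i_target * np.exp((v_pgm - v_cross[active]) / slope)
        # Verify: cells at or above the target are inhibited and stop changing.
        inhibited |= current >= i_target
        if inhibited.all():
            break
    return current, inhibited


rng = np.random.default_rng(0)
# 100 cells with hypothetical variation in the voltage needed to reach target.
v_cross = np.clip(rng.normal(4.0, 0.25, size=100), 3.1, 5.3)

sigma_by_step = {}
for v_step in (0.05, 0.10, 0.20):
    i_d, done = ispp_program(v_cross, 50e-9, 3.0, v_step, 5.5)
    sigma_by_step[v_step] = i_d.std()
    print(f"Vstep={v_step:.2f} V  mean={i_d.mean():.3e} A  "
          f"sigma={i_d.std():.3e} A  all inhibited={done.all()}")
```

In this toy model every cell stops at the first pulse that takes it past the target, so the overshoot is bounded by one step. A larger Vstep therefore widens the spread and skews it toward currents above the target, in line with the right-skew noted in the text; the mean also shifts upward, which is why a separate fine-tuning of μ is needed.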

a ID-Vend graph with Vstart fixed at 3.00 V and varying Vstep values. b Different target IDs (10 nA, 50 nA, and 100 nA) are successfully attained for 288 FeNAND cells under varying Vstep conditions ((b-1): 0.05 V, (b-2): 0.10 V, (b-3): 0.20 V). c ID histograms of 100 cells programmed with different Vstep values (0.05, 0.1, 0.15, and 0.2 V) while maintaining a target current of 10 nA. d Current variations of 100 FeNAND cells programmed with varying target currents (10, 20, 50, 80, and 100 nA) and Vstep values, confirming increased dispersion with larger Vstep. e Difference between the target μID and the measured μID for five different FeNAND arrays (each array consists of 288 cells) through the control of Vend in the proposed ISPP scheme. f Difference between the target σID and the measured σID for five different FeNAND arrays (each array consists of 288 cells) through the control of Vstep in the proposed ISPP scheme. g Controllable range of μID and σID achieved by adjusting Vend and Vstep in the proposed ISPP scheme.

Validation of 3D FeNAND Design Feasibility through SPICE Simulation

SPICE simulations were performed using pretrained weights mapped onto the fabricated array to validate the implementation of a BNN on the 3D FeNAND structure. The ability of the 3D NAND structure to program cells simultaneously enhances time efficiency. Unlike planar NAND structures, the 3D NAND architecture enables VMM operations through a BL. Figure 4a shows a BNN implemented on the 3D FeNAND architecture trained to classify the two types of patterns, with the dataset comprising 20 training examples with 4×2 pixel dimensions (see Supplementary Fig. 19 for the entire dataset). The input data were binarized into 0 and 1, and the classification was determined based on whether a shaded pattern appeared in the upper or lower portions of the image. The parameters used in more detailed software simulations are shown in Supplementary Table 2.

a–c Schematic illustration of up and down pattern classification and weight distribution mapping in a BNN using 3D FeNAND. In the system, binary input pulses are applied to the string select line (SSL), and current is summed through BLs. The summed current is processed by the neuron and activation circuits before being passed as input to the next layer. To maximize the efficient use of cells, multiple layers were mapped onto a single WL. d Processing step of tuning to the desired state using the program-and-verify method for µ cells. Set pulses are applied when the current is below the target, and reset pulses when it exceeds the target. This process repeats until the value meets the specified error margin. e Transferred distribution of weight1 in the FeNAND array. G+ and G- values are determined based on the sign of each weight’s target current and its σ. f Distribution of weight1 trained in software and its transferred distribution in the FeNAND array (G+ – G-). g Measured transfer curves and SPICE-fitted curves using the BSIM model. h Output predictive distribution results for the test image obtained from both software and SPICE. i Output result obtained by averaging the predictive distribution. The results obtained from software simulation align with the inference results fitted and conducted through SPICE.

Depending on the input, the string select line (SSL) is configured to apply either a pulse or ground (GND), while a read voltage (0.4 V, 100 µs) is applied to the WL containing the transferred weights. Vpass (2 V) is applied to all the other unselected WLs. The current flowing through each string is summed along the BL, making VMM operations essential for BNN inference. The detailed VMM operation in NAND structure is reported in Supplementary Note 3 (Vector Matrix Multiplication operation in 3D FeNAND architecture). Each cell serves as a synaptic device to store the individual weight values. The cumulative currents from multiple BLs collectively represent the weight distribution required for the BNN, ensuring the robust execution of its probabilistic computations. For negative weight distributions, weights were divided into G+ and G-, and the final output was calculated using a subtractor. This output passes through the neuron and activation circuits, serving as the input for the subsequent layers.
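The read operation described above can be sketched numerically. The example assumes an idealized array in which each selected cell contributes its read current to its BL only when its SSL input is 1, and ignores pass-cell resistance and other non-idealities; the function names and the current values (in nA) are hypothetical.

```python
import numpy as np


def bitline_currents(ssl_inputs, cell_currents):
    """Sum string currents along each bit line (idealized VMM).

    ssl_inputs    : (n_ssl,) binary inputs, 1 = read pulse, 0 = GND
    cell_currents : (n_ssl, n_bl) read current of the selected-WL cell
                    in the string addressed by (SSL, BL)
    """
    x = np.asarray(ssl_inputs, dtype=float)
    return x @ np.asarray(cell_currents, dtype=float)


def differential_output(ssl_inputs, i_plus, i_minus):
    """Subtract the G- bit-line currents from the G+ bit-line currents."""
    return bitline_currents(ssl_inputs, i_plus) - bitline_currents(ssl_inputs, i_minus)


# Hypothetical 3-input, 2-output example with currents in nA.
x = [1, 0, 1]
i_plus = [[50.0, 10.0], [20.0, 80.0], [100.0, 10.0]]
i_minus = [[10.0, 50.0], [10.0, 10.0], [10.0, 100.0]]
out = differential_output(x, i_plus, i_minus)
print(out)  # [ 130. -130.]
```

Strings whose SSL input is 0 contribute nothing, so only the first and third rows enter the sums: (50 + 100) − (10 + 10) = 130 nA for the first output and (10 + 10) − (50 + 100) = −130 nA for the second.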

In a 3D NAND flash structure, the cells in each string are connected in series, allowing only one WL to be read simultaneously. Previous studies have mapped one network layer per WL, which led to significant inefficiency when the network size was small. To address this, multiple layers were mapped onto a single WL depending on the network size (Fig. 4b). Figure 4c illustrates the overall system process of the BNN implemented using 3D FeNAND arrays. The architecture stored weight distribution parameters (µ and σ) in each layer of WL. The combined currents were processed through subtractor circuits and ADCs, followed by activation and neuron circuits before being propagated to the next layer. To provide input to the subsequent layer, the output is converted using a DAC. This optimization reduces the hardware overhead and improves the efficiency of the FeNAND array, making it more suitable for larger and more complex neural network architectures.

The synaptic weights for pattern classification were first trained using a Python-based neural network simulation. In BNNs, synaptic weights are modeled as probability distributions defined by the posterior distribution p(W | D). Using VI, the posterior was approximated as a Gaussian distribution. During training, the μ and σ of the weights were optimized through backpropagation (see the Methods section for BNN training). The training was conducted over 100 epochs, ensuring alignment with the measured μ and σ of the device. As training progressed, the loss gradually decreased, and test accuracy reached 100% (Supplementary Fig. 23a). During inference, sampling was performed 50 times (M = 50) to obtain a predictive distribution of the output.
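The inference step, in which M = 50 weight samples yield a predictive distribution, can be sketched as follows. This is an illustration under stated assumptions rather than the authors' training code: the single 8×2 layer, the softmax output, and the values of `mu` and `sigma` are hypothetical, chosen only to show how sampling from Gaussian weights produces a spread of outputs.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over the last axis."""
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def predictive_distribution(x, mu, sigma, n_samples=50, rng=None):
    """Sample n_samples weight matrices W ~ N(mu, sigma^2) and run each one.

    Returns an array of shape (n_samples, n_out): one output per sampled network.
    """
    rng = np.random.default_rng() if rng is None else rng
    w = rng.normal(mu, sigma, size=(n_samples,) + mu.shape)
    logits = np.einsum("i,mio->mo", x, w)
    return softmax(logits)


rng = np.random.default_rng(0)
# Hypothetical 8-input, 2-class layer: upper pixels vote for class 0,
# lower pixels for class 1.
mu = -np.ones((8, 2))
mu[:4, 0] = 1.0
mu[4:, 1] = 1.0
sigma = np.full((8, 2), 0.3)

x_up = np.array([1, 1, 1, 1, 0, 0, 0, 0], dtype=float)  # pattern in upper half
probs = predictive_distribution(x_up, mu, sigma, n_samples=50, rng=rng)
print("mean prediction:", probs.mean(axis=0))
print("std of prediction:", probs.std(axis=0))
```

The mean over the 50 rows plays the role of the averaged prediction, while the row-to-row spread is the quantity a BNN uses to express its uncertainty.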

The μ and σ of the trained synaptic weights were transferred to the fabricated FeNAND array (see the Methods section for mapping synaptic weight distributions to the FeNAND array). Sixteen synaptic distributions were mapped to G+ and G- based on the weight sign. To match the σ of the target synaptic distribution, the ISPP voltage step was adjusted, whereas the cells representing μ were precisely tuned to the target conductance using a program-and-verify method (Fig. 4d). The tuning process was terminated when the conductance reached an error margin of 5×10⁻¹⁰ of the target value. Figure 4e, f and Supplementary Fig. 21 display the overall 16 weight distributions obtained from the software and their counterparts implemented on the FeNAND array. Figure 4e shows the G+ and G- distributions for weight 1, derived from the FeNAND array. G+ and G- values were determined based on the sign of each weight. Figure 4f compares the weight distribution calculated from the BL current differences (G+ – G-) with the values obtained by Monte Carlo sampling in software. This comparison demonstrated that the distributions obtained from the FeNAND array closely matched the software-generated distributions, validating the accuracy and reliability of the proposed implementation.
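The program-and-verify tuning of the μ cells can be sketched as a simple closed loop. The cell response used here is a hypothetical toy model (each pulse removes a noisy fraction of the remaining error), and the tolerance is interpreted as an absolute margin of 5×10⁻¹⁰ A, which is an assumption about the units; the names `program_and_verify`, `gain`, and `noise` do not come from the paper.

```python
import numpy as np


def program_and_verify(i_start, i_target, tol=5e-10, gain=0.5, noise=0.1,
                       max_pulses=200, rng=None):
    """Tune one cell toward i_target with set/reset pulses.

    A set pulse (current below target) raises the current and a reset pulse
    (current above target) lowers it. Toy assumption: each pulse closes a
    fraction `gain` of the remaining error, with multiplicative noise.
    Returns the final current and the number of pulses applied.
    """
    rng = np.random.default_rng() if rng is None else rng
    i_cell = i_start
    for n_pulses in range(max_pulses + 1):
        error = i_target - i_cell
        # Verify step: stop as soon as the cell is inside the error margin.
        if abs(error) <= tol:
            return i_cell, n_pulses
        # error > 0 acts as a set pulse, error < 0 as a reset pulse.
        i_cell += gain * error * (1.0 + noise * rng.standard_normal())
    raise RuntimeError("target not reached within max_pulses")


rng = np.random.default_rng(0)
i_final, n_used = program_and_verify(50e-9, 80e-9, rng=rng)
print(f"final current = {i_final:.3e} A after {n_used} pulses")
```

The loop terminates on the verify check rather than after a fixed number of pulses, which is what gives the μ cells a much narrower dispersion than cells programmed by a single ISPP ramp.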

The inference process was validated using SPICE simulations to confirm the consistency of the 3D FeNAND structures. To ensure proper connectivity with the fabricated FeNAND array, each string consisted of 24 cells, with inputs provided through eight SSLs and 50 BLs allocated per output node, resulting in 100 BLs. The measured transfer characteristics were modeled using the BSIM model in SPICE to verify the reliability of the simulation (see the Methods section for the detailed fitting process). As illustrated in Fig. 4g, both states were accurately fitted, demonstrating the precision of the SPICE model. The weight distributions were transferred to the 3D FeNAND array using MATLAB for SPICE fitting (see Methods for detailed MATLAB fitting procedures). After successfully transferring all weight distributions, inference was conducted on the test images. Figure 4h shows the predictive distribution results obtained from the software and SPICE simulations, and Fig. 4i shows the average values derived from the sampled predictive distributions. Supplementary Fig. 22 shows the inference processes for both simulation and measurement. In the simulation, inference was conducted by sampling from a learned ideal Gaussian weight distribution. In the measurement, a dispersion was generated using the σ value obtained from the software as the target. Subsequently, an additional BL was applied to fine-tune the μ. The adjusted parameters were then fitted to the SPICE simulation to perform the inference. The predictive distributions from the software closely matched those produced through SPICE inference, exhibiting consistent trends across all test images (see Supplementary Fig. 23b–d for additional test images. b and c present the predictive distributions for different images, and d illustrates the average values of the downward patterns). 
The agreement between the measured and simulated data validates the accuracy of the weight-transfer process and highlights the feasibility of the proposed hardware-based classification framework, demonstrating its efficiency and reliability.

Medical data analysis using 3D FeNAND-based Bayesian neural networks

BNNs possess a distinctive capability to quantify the uncertainty in their predictions, making them particularly advantageous for medical data analysis, where diagnostic precision is critical. Biomedical data obtained using medical devices are inherently vulnerable to various sources of external noise, including limited instrument resolution, calibration inaccuracies, and patient movement during data collection. These factors introduce significant challenges to accurate data analysis and obscure critical diagnostic information. Therefore, evaluating the uncertainty in noisy data is essential for making reliable clinical decisions. By effectively assessing the uncertainty level, medical professionals can account for potential inaccuracies and provide more precise diagnoses, ultimately improving patient outcomes through informed clinical judgment.

In this study, the performance of the 3D FeNAND-based BNN system was evaluated using a chest X-ray dataset (Fig. 5a). Chest X-rays are a critical tool in medical diagnostics, providing a noninvasive method to assess lung health by detecting structural abnormalities and signs of disease67. They play a vital role in identifying various respiratory conditions, including lung opacities, viral infections, and COVID-19-related complications (lower panel of Fig. 5a). In particular, the diagnosis of pneumonia relies heavily on chest X-ray images, as they can reveal fluid-filled alveoli and blocked bronchioles caused by inflammation and mucus buildup (right panel of Fig. 5a). In a healthy lung, the alveoli remain air-filled and facilitate efficient gas exchange, whereas the bronchioles remain clear, ensuring smooth airflow (left panel of Fig. 5a). However, when pneumonia or similar conditions occur, these structures are compromised, resulting in breathing difficulties and reduced oxygen supply. The ability of chest X-rays to detect such critical features makes them indispensable for diagnosing and managing respiratory diseases. Figure 5b shows a schematic representation of the disease diagnosis system utilizing a 3D FeNAND-based BNN trained for medical data analysis. The chest X-ray dataset and the training and inference processes of the BNN are described in the Methods section.

a Chest X-ray dataset with four classes: normal, COVID, lung opacity, and viral pneumonia. The dataset includes both healthy and diseased lung images, highlighting critical diagnostic features for classification. b Schematic of the 3D FeNAND-based BNN system for medical data analysis. The system utilizes probabilistic inference for robust and reliable disease diagnosis. c Classification accuracy and loss curves for standard ResNet (deterministic prediction) and BNN (probabilistic prediction). Both networks achieve high accuracy as training progresses. d Classification accuracy of standard ResNet and BNN for various external noise levels. As the external noise level increases, the performance of the standard ResNet significantly degrades, while the BNN maintains higher accuracy due to its uncertainty-aware predictions. e Prediction counts for a specific class with noisy chest X-ray inputs. The standard ResNet misclassifies frequently with high confidence, while the BNN makes more accurate predictions with reduced overconfidence. f ROC curves for the standard ResNet and BNN (σ/μ = 0.1). The BNN achieves a higher AUC, demonstrating superior classification performance under noisy conditions. g PR curves for the standard ResNet and BNN (σ/μ = 0.1). The BNN outperforms the standard ResNet with a higher AUC, indicating better precision–recall trade-offs. h Calibration curves for the standard ResNet and BNN (σ/μ = 0.1). The BNN shows better calibration, aligning predicted probabilities closer to actual outcomes, while the standard ResNet exhibits overconfidence.

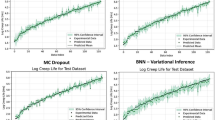

Figure 5c illustrates the classification accuracy and loss of the deterministic (standard ResNet) and probabilistic (BNN) prediction methods within the same ResNet-101 architecture (see Supplementary Fig. 25 for details). In both approaches, the classification accuracy increased as training progressed, ultimately reaching approximately 96%. Supplementary Fig. 26 presents the confusion matrix of the BNN, which validates the reliable and accurate predictive performance of the network. Although both networks demonstrated comparable performance under noise-free conditions, the performance gap became significant as the external noise levels increased (Fig. 5d). The classification accuracy of the standard ResNet degrades significantly owing to its deterministic predictions and inherent inability to account for uncertainties. In contrast, BNNs employing probabilistic predictions exhibit robust resilience against external noise, maintaining higher classification accuracy through uncertainty-aware inference. This resilience arises from the ability of the BNN to model uncertainty, enabling adaptive decision-making under noisy conditions by considering multiple possible representations of the input data, unlike the fixed-point estimates used in standard ResNet models. This highlights the intrinsic advantages of BNNs in handling noisy data environments. Figure 5e depicts the prediction counts for a specific class when randomly selected noisy chest X-ray data were fed into both the standard ResNet and BNN. Despite receiving chest X-ray data from healthy individuals, the standard ResNet frequently misdiagnoses the data as lung opacity, owing to its susceptibility to external noise. This limitation is further exacerbated by unwarranted confidence in these incorrect predictions. In contrast, the BNN, which employs probabilistic predictions, significantly increases the frequency of correct diagnoses. 
This behavior highlights the reduced overconfidence of the BNN and its ability to express prediction uncertainty in the presence of external noise.

Beyond classification accuracy, various evaluation metrics, such as receiver operating characteristic (ROC), precision–recall (PR), and calibration curves, provide deeper insights into model behavior, particularly under uncertain and noisy conditions. These metrics capture different aspects of predictive performance, including discrimination ability, class imbalance handling, and confidence calibration, enabling a more comprehensive assessment of the reliability and robustness of the networks (see the Methods section for more details). Figure 5f shows the ROC curves for the standard ResNet and BNN under an external noise level (σ/μ) of 0.1. The ROC curve offers a comprehensive evaluation of the model performance by plotting the true positive rate (TPR) against the false positive rate (FPR) across various classification thresholds. The area under the curve (AUC) quantifies the overall discriminative ability of the model. A higher AUC indicates better performance, reflecting the capacity of the model to distinguish between different classes, even when subjected to noisy input data. The BNN demonstrates a larger AUC than the standard ResNet, confirming its superior robustness and uncertainty-aware predictive capability. Figure 5g shows the PR curves for the standard ResNet and BNN under an external noise level (σ/μ) of 0.1. The PR curve is particularly valuable for imbalanced datasets, where positive cases are rare, because it evaluates the tradeoff between precision (positive predictive value) and recall (sensitivity). A higher PR AUC indicates more reliable detection of relevant cases with fewer false positives. The BNN consistently achieved a higher PR AUC, reflecting its better handling of uncertain data by reducing false-positive predictions. Figure 5h depicts the calibration curves for the standard ResNet and BNN under an external noise level (σ/μ) of 0.1. 
The calibration curve compares the predicted probabilities with the actual observed frequencies, indicating how well the model’s predicted confidence aligns with reality. A perfectly calibrated model aligns with a diagonal line where the predicted probabilities match the actual likelihoods. The standard ResNet demonstrates signs of overconfidence, predicting high probabilities even when incorrect, whereas the BNN demonstrates a more reliable calibration by producing less overconfident and better-calibrated predictions, reflecting its ability to appropriately express uncertainty. Furthermore, the distribution of the mean and standard deviation values of the synaptic weights in the trained BNN was within the range of the measured characteristics of FeNAND (Supplementary Fig. 27). This alignment indicates that the 3D FeNAND-based BNN system can be effectively trained to approximate an ideal model, enabling optimal performance by leveraging the inherent device characteristics. The integration of 3D FeNAND technology with the probabilistic inference capabilities of BNNs enables scalable and energy-efficient medical data analysis with enhanced reliability, even in the presence of external noise. In off-chip trained BNNs, retention loss can affect classification accuracy. To evaluate this impact, we incorporated the retention characteristics (see Supplementary Fig. 11) into our simulations. After 10⁴ seconds, an average retention loss of 5 % was observed across all states; we then extrapolated these data to 10⁵ seconds to cover a broader timescale. Supplementary Fig. 28 shows the performance degradation of the BNN system due to the retention loss, confirming that strong retention characteristics yield only a ∼3 % accuracy drop after 10⁵ seconds. Should further retention loss lead to greater accuracy degradation, the excellent cycle-to-cycle stability of our FeNAND allows for a simple refresh operation to restore the programmed weight distributions.

Discussion

In this study, we demonstrated the hardware implementation of a BNN using a 3D FeNAND architecture based on HZO. By leveraging the ISPP technology, a widely adopted technique in conventional NAND flash memory, we achieved precise and efficient weight distribution control without requiring additional circuitry. The intrinsic device-to-device variations of FeNAND, combined with its page-level programming capability, enabled the generation of Gaussian-distributed weights in a single operation, eliminating the need for iterative program-erase cycles. Furthermore, by modulating the Vstep of the ISPP technology, we established a tunable weight distribution spanning a broad range, surpassing previous studies in flexibility and applicability (see Supplementary Table 1). In addition, our FeNAND-based BNN implementation achieves better power efficiency than SRAM-based or other CTF-based NAND flash approaches (see Supplementary Note 6 for detailed power calculations).

To validate the feasibility of our approach in the 3D FeNAND, pre-trained weight distributions were accurately mapped onto the FeNAND array using a custom algorithm and verified through SPICE simulations. The integration of 3D FeNAND technology with probabilistic inference capabilities of BNNs enabled scalable and energy-efficient medical data analysis, enhancing robustness and reliability under noisy conditions. Notably, compared to deterministic models, our FeNAND-based BNN system exhibited superior resilience to external noise, maintaining high classification accuracy through uncertainty-aware inference. These findings establish 3D FeNAND as a promising platform for hardware-based BNNs, bridging the gap between probabilistic computing and non-volatile memory technology while paving the way for next-generation AI hardware optimized for uncertainty-aware deep learning applications.

Methods

Fabrication process of HZO-based FeNAND

A non-volatile HZO-based MFIS FeNAND device was fabricated on a 100 nm-thick silicon-on-insulator (SOI) wafer. First, Si etching was performed to create BLs with a width of 1 µm through patterning. Subsequently, a 1 nm-thick SiO2 layer and a 6 nm-thick HZO layer (Hf:Zr = 2:1) were deposited via atomic layer deposition (ALD). A 100 nm-thick TiN layer was then deposited using sputtering, followed by patterning to form WLs with a length of 5 µm (line spacing = 500 nm). Source and drain implantation (P⁺, dose: 1 × 10¹⁵) was conducted using a gate patterning mask. To crystallize the HZO layer and activate the dopant, rapid thermal annealing (RTA) was performed at 650 °C for 30 s. The device fabrication was completed using back-end-of-line processing. For further details, please refer to Supplementary Fig. 1.

Electrical measurements

The ferroelectric properties of the fabricated HZO-based FeFETs were investigated using a probe station and a semiconductor parameter analyzer (Keithley 4200-SCS). DC ID-VGS measurements were conducted using a semiconductor parameter analyzer (Keysight B1500A) equipped with a source measurement unit (SMU). A B1500A equipped with a waveform generator/fast measurement unit (B1530A, WGFMU) module and a high-voltage semiconductor pulse generator unit (B1525A, SPGU) was used for the pulse measurements. To characterize the fabricated array, a custom-made probe card (B1500A) and switching matrix (Keysight E5250A) were used. Each input was distributed across multiple channels via the switching matrix and controlled using LabVIEW. The overall experimental setup for the device measurements is shown in Supplementary Fig. 2. All the electrical measurements and characterizations were performed in ambient air at room temperature.

BNN training and inference process

The training of a BNN is based on Bayes’ theorem, in which the posterior distribution p(W | D) combines the prior belief p(W) with the training dataset D to identify the most probable models. Mathematically, Bayes’ theorem is expressed by Eq. (1):

\[ p\left(W|D\right)=\frac{p\left(D|W\right)\,p\left(W\right)}{p\left(D\right)} \tag{1} \]

where \(p\left(D|W\right)\) represents the likelihood, \(p\left(W\right)\) the prior, and \(p\left(D\right)\) the evidence. The posterior distribution determines the weights of the network and captures the uncertainty inherent in the training process. In BNNs, calculating the exact posterior distribution is often intractable. Therefore, methods such as VI or Markov chain Monte Carlo (MCMC) sampling are used to approximate it. VI approximates the posterior \(p\left(W|D\right)\) with a simpler, computationally tractable distribution \(q\left(W|\theta \right)\). Typically, the variational distribution is modeled as a Gaussian distribution, where the variational parameters \(\theta=(\mu,\sigma )\) represent the mean and standard deviation. Unlike traditional neural networks that optimize single weight values, these parameters are optimized to model a distribution over weights and capture the uncertainty in the network. The optimization is performed by minimizing the Kullback–Leibler (KL) divergence, which measures the dissimilarity between the distributions \(q\left(W|\theta \right)\) and \(p\left(W|D\right)\).
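As a minimal sketch of the Gaussian variational family described above, the following Python snippet draws a weight through the reparameterization W = μ + σ·ε and evaluates the closed-form KL divergence between two Gaussians. The standard-normal prior and the function names are assumptions made for illustration. The KL term to the prior is the regularizer that appears in the usual evidence lower bound, which is a common way of making the minimization of KL(q ‖ p(W | D)) tractable; it is not necessarily the exact objective used in this work.

```python
import math
import random

def sample_weight(mu, sigma, rng):
    """Reparameterized draw W = mu + sigma * eps with eps ~ N(0, 1)."""
    return mu + sigma * rng.gauss(0.0, 1.0)

def kl_gaussian(mu_q, sigma_q, mu_p=0.0, sigma_p=1.0):
    """Closed-form KL( N(mu_q, sigma_q^2) || N(mu_p, sigma_p^2) ).
    The N(0, 1) default prior is an assumption for this sketch."""
    return (math.log(sigma_p / sigma_q)
            + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2.0 * sigma_p ** 2)
            - 0.5)

rng = random.Random(0)
draws = [sample_weight(0.3, 0.1, rng) for _ in range(20000)]
empirical_mean = sum(draws) / len(draws)   # should be close to mu = 0.3

kl_same = kl_gaussian(0.0, 1.0)     # identical distributions: KL is zero
kl_narrow = kl_gaussian(0.3, 0.1)   # narrow posterior far from the prior: KL > 0
```

Because both μ and σ enter the sampled weight through differentiable operations, gradients of the loss can flow back to them, which is what allows backpropagation to optimize a distribution rather than a point value.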

During training, the parameters were optimized using the Bayes by Backprop algorithm, a form of backpropagation adapted for BNNs. Inference in Bayesian models involves multiple steps, in which weights are sampled from the posterior distribution for each step. The network output was then obtained by averaging the predictions across these samples, typically using Monte Carlo sampling with N iterations, as shown in Eq. (3).

\[ p\left(y|x,D\right)\approx \frac{1}{N}\sum_{i=1}^{N}p\left(y|x,{W}_{i}\right) \tag{3} \]

where x and y denote the test input and output, respectively, N is the number of Monte Carlo samples used to approximate the posterior predictive distribution, D represents the training data, and \({W}_{i}\,\) denotes the weight sampled from the posterior distribution.
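The Monte Carlo averaging described above can be sketched in Python for a single Bayesian linear layer followed by a softmax. The two-class toy weights, the layer shape, and the function names are hypothetical; only the sample-then-average structure and the use of 50 samples follow the text.

```python
import math
import random

def softmax(z):
    """Numerically stable softmax over a list of logits."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def mc_predict(x, mu, sigma, n_samples, rng):
    """Approximate p(y | x, D) by averaging softmax outputs over sampled weights."""
    n_out = len(mu)
    samples = []
    for _ in range(n_samples):
        logits = []
        for j in range(n_out):
            # One fresh weight draw per connection and per Monte Carlo sample.
            w = [rng.gauss(mu[j][k], sigma[j][k]) for k in range(len(x))]
            logits.append(sum(wk * xk for wk, xk in zip(w, x)))
        samples.append(softmax(logits))
    mean = [sum(s[j] for s in samples) / n_samples for j in range(n_out)]
    return mean, samples

rng = random.Random(1)
mu = [[1.0, -1.0], [-1.0, 1.0]]       # toy weight means (assumed)
sigma = [[0.1, 0.1], [0.1, 0.1]]      # toy weight standard deviations (assumed)
mean_probs, all_probs = mc_predict([1.0, 0.0], mu, sigma, 50, rng)
```

The list of per-sample outputs is the predictive distribution, and its mean is the final prediction; the spread across samples is what later feeds the uncertainty estimates.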

Mapping synaptic weight distribution to FeNAND arrays

Supplementary Fig. 8 illustrates the overall off-chip training process of the BNN. After training the software, the synaptic weight distributions were transferred to an FeNAND array for off-chip processing. Upon completion of training, each weight was represented by its µ and σ. To map the software-trained weight range to the device’s range, a scaling factor was applied to both the µ and σ.
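A minimal sketch of this scaling step is given below, assuming a single linear factor shared by µ and σ and a sign-based assignment to the G+ or G- branch. The function names, the weight range, and the conductance range are hypothetical values chosen for illustration and are not taken from the measured devices.

```python
def map_to_conductance(mu_w, sigma_w, w_abs_max, g_range):
    """Scale a software weight distribution (mu_w, sigma_w) to device conductance.
    w_abs_max and g_range are assumed calibration constants."""
    scale = g_range / w_abs_max            # the same factor is applied to mu and sigma
    g_mu = abs(mu_w) * scale
    g_sigma = sigma_w * scale
    branch = "G+" if mu_w >= 0 else "G-"   # branch chosen by the weight sign
    return branch, g_mu, g_sigma

def effective_weight(g_plus, g_minus, w_abs_max, g_range):
    """Recover the software-domain weight from the differential pair G+ - G-."""
    return (g_plus - g_minus) * w_abs_max / g_range

branch, g_mu, g_sigma = map_to_conductance(-0.5, 0.1, 1.0, 1e-6)
w_back = effective_weight(0.0, g_mu, 1.0, 1e-6)   # maps back to about -0.5
```

Using one factor for both parameters keeps the ratio σ/µ unchanged, so the shape of the trained distribution is preserved after mapping.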

In addition, the number of programmed BLs was adjusted based on the number of samples used for inference. To represent negative weights, the devices for σ and µ were separated, and weight distribution was expressed as the difference between them. The process of transferring the weight distribution to FeNAND is as follows: First, all cells connected to the selected WL are erased by applying −5.5 V for 100 µs. After erasing, the current at the read voltage (0.4 V) was recorded. VPGM was initially set at 3 V and increased incrementally in predefined steps. For σ transfer, the process continued until the conductance of all cells connected to the WL exceeded the target conductance value or reached the maximum VPGM (6 V). Owing to the NAND structure, cells on the same WL may change their state because of VPGM. To prevent further changes in their state, Vinhibit was applied to the BL of cells that exceeded the target conductance (Supplementary Fig. 10).
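The σ-transfer loop above can be illustrated with a toy simulation. The cell response used here (conductance rising linearly once VPGM exceeds a per-cell threshold drawn from a Gaussian) is a hypothetical stand-in for the real FeNAND behavior, and the gain, threshold spread, and target are assumed values. Only the control flow follows the text: start at 3 V, step up to at most 6 V, and inhibit each cell once it reaches the target.

```python
import random
import statistics

def ispp_program(n_cells, g_target, v_step, rng,
                 v_start=3.0, v_max=6.0, gain=1e-6):
    """Toy ISPP: raise V_PGM in steps and inhibit each cell once it reaches g_target.
    The response g = gain * max(0, V - vth) is a hypothetical device model."""
    vth = [rng.gauss(4.0, 0.2) for _ in range(n_cells)]   # device-to-device variation
    g = [0.0] * n_cells                                   # erased state
    inhibited = [False] * n_cells
    v = v_start
    while v <= v_max + 1e-9 and not all(inhibited):
        for i in range(n_cells):
            if inhibited[i]:
                continue                    # V_inhibit on this BL: state is frozen
            g[i] = gain * max(0.0, v - vth[i])
            if g[i] >= g_target:
                inhibited[i] = True
        v += v_step
    return g

rng = random.Random(2)
fine = ispp_program(50, 0.5e-6, 0.05, rng)    # small voltage step
coarse = ispp_program(50, 0.5e-6, 0.4, rng)   # large voltage step
sigma_fine = statistics.pstdev(fine)
sigma_coarse = statistics.pstdev(coarse)
```

In this toy model, a larger step lets cells overshoot the target by a wider margin before they are inhibited, so the spread of the programmed conductances grows with the step size, which is the qualitative knob used for σ control.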

For μ tuning, precise control was achieved by recalculating the error after each application of the programming voltage, with programming and erasing repeated iteratively until the conductance fell within the specified margin (5 × 10⁻¹⁰). If the value was smaller than the target value, the program voltage was gradually increased. Conversely, if the target was exceeded, the erase voltage was incrementally increased to bring the value within the error margin. This approach ensures that the entire synaptic weight distribution is transferred to the FeNAND array simultaneously rather than transferring individually sampled weights.
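The program-and-verify loop for μ can be sketched as follows. The pulse response (a conductance change that grows linearly with each consecutive pulse in the same direction) is an assumed toy model standing in for a gradually increasing program or erase voltage, and the base step of 2 × 10⁻¹⁰ is a hypothetical value; only the margin of 5 × 10⁻¹⁰ comes from the text.

```python
def tune_mean(cell_g, g_target, margin=5e-10, dg_base=2e-10, max_pulses=1000):
    """Program-and-verify: apply a pulse, re-read, and recompute the error each step.
    A higher voltage on consecutive same-direction pulses is modeled (hypothetically)
    as a linearly growing conductance change."""
    n_same = 0       # consecutive pulses in the current direction
    direction = 0    # +1 = program, -1 = erase
    for pulse in range(max_pulses):
        error = cell_g - g_target
        if abs(error) <= margin:
            return cell_g, pulse              # verified within the margin
        new_dir = 1 if error < 0 else -1      # below target: program; above: erase
        n_same = n_same + 1 if new_dir == direction else 1
        direction = new_dir
        cell_g += direction * dg_base * n_same
    raise RuntimeError("target not reached within max_pulses")

g_final, n_pulses = tune_mean(0.0, 3e-8)
```

Restarting from the smallest step whenever the direction flips keeps the correction after an overshoot small, which is what lets the loop settle inside a narrow margin.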

SPICE Fitting

The measured I–V transfer curve was fitted using the SPICE Berkeley Short-Channel IGFET Model (BSIM4) (Level = 54). Various parameters of the BSIM model, such as the threshold voltage, subthreshold swing, and gate-induced drain leakage, were adjusted to accurately fit the program and erase states (Fig. 4g). The SPICE-based 3D FeNAND simulation accurately reflects the actual weight distribution transferred from the software-trained weights. To properly match the µ and σ of each weight distribution obtained from FeNAND, a MATLAB-based SPICE fitting algorithm was developed.

The algorithm runs SPICE in MATLAB and dynamically adjusts the threshold voltage of the SPICE model. To ensure precise and efficient current fitting, the threshold voltage was adjusted based on the calculated error magnitude. When the difference between the read voltage current of the model and experimentally measured current was large, a larger voltage adjustment was applied. Conversely, when the difference was small, the adjustment was reduced. The fitting process was terminated when the error fell below a predefined threshold (Algorithm 1). Empirical, skewed conductance histograms were embedded unchanged in the SPICE model to quantify their effect on network accuracy.

Algorithm 1

Measured current distribution for SPICE simulation

1: Margin: Allowed current error

2: GM : Measured FeNAND current

3: Vs: Applied Voltage

4: imax: Maximum number of iterations

5: G: Fitted FeNAND current

6: G ← RESET

7: i ← 0

8: Vs ← Vinit

9: while i < imax do

10: i ← i + 1

11: if |G − GM | ≤ 5 × 10⁻¹⁰ then

12: END

13: else if |G − GM | > 0.1 × |GM | then

14: Vs ← Vs ∓ 0.2

15: else if 0.05 × |GM | < |G − GM | < 0.1 × |GM | then

16: Vs ← Vs ∓ 0.015

17: else

18: Vs ← Vs ∓ 0.005

19: end if

20: end while
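Algorithm 1 can be written as a short Python sketch. The exponential `model_current` used in the example is a hypothetical stand-in for a SPICE run, and re-evaluating the model inside the loop is an assumption, since the pseudocode leaves that step implicit. The three step sizes (0.2, 0.015, 0.005) and the margin follow the listing; the ∓ sign is resolved by moving the voltage down when the fitted current is too high and up when it is too low.

```python
import math

def fit_current(g_measured, model_current, v_init=0.0,
                margin=5e-10, i_max=200):
    """Adjust v until model_current(v) matches g_measured within margin.
    model_current is assumed to increase monotonically with v."""
    v = v_init
    for i in range(1, i_max + 1):
        g = model_current(v)                  # assumed: re-simulate at each step
        err = g - g_measured
        if abs(err) <= margin:
            return v, g, i
        sign = -1.0 if err > 0 else 1.0       # lower v if current is too high
        if abs(err) > 0.1 * abs(g_measured):
            v += sign * 0.2                   # coarse step for large error
        elif abs(err) > 0.05 * abs(g_measured):
            v += sign * 0.015                 # medium step
        else:
            v += sign * 0.005                 # fine step near the target
    return v, model_current(v), i_max

def toy_model(v):
    """Hypothetical current model used only for this example."""
    return 1e-8 * math.exp(v / 2.0)

v_fit, g_fit, n_iter = fit_current(1e-7, toy_model)
```

With fixed step sizes, convergence depends on the slope of the model: if one coarse step changes the current by more than the width of the ±10% band, the loop can bounce between the two sides of the target, so the toy model here was chosen to be shallow enough to avoid that.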

Chest X-ray dataset

The chest X-ray dataset consisted of 16,932 training and 2,116 validation images, each a grayscale image with a resolution of 224 × 224 pixels. The dataset is organized into four distinct classes: one representing healthy individuals (“Normal”) and three corresponding to specific respiratory conditions (“COVID,” “Lung opacity,” and “Viral pneumonia”). This multiclass structure allowed for a comprehensive evaluation of the ability of the proposed BNN system to distinguish between normal and diseased lungs.

Evaluation metrics

The 3D FeNAND-based BNN system was evaluated using three performance metrics: ROC, PR, and calibration curves. These metrics offer a comprehensive assessment of the predictive capabilities of a network by capturing different aspects of performance, particularly in the context of uncertain and noisy medical data.

1) ROC curve: The ROC curve visualizes the tradeoff between TPR and FPR across varying classification thresholds. The TPR represents the proportion of actual positive cases that are correctly identified, whereas the FPR indicates the proportion of actual negative cases that are incorrectly classified as positive. An ideal model maximizes the TPR while minimizing the FPR. The ROC AUC quantifies the overall discriminative ability of the model. An AUC approaching 1 indicates superior performance in distinguishing between classes, even in the presence of external noise. Because the chest X-ray dataset used in this study included four classes, individual ROC curves were generated for each class, and a single representative result was obtained using macro-averaging, in which the per-class AUC scores are averaged across all classes.

2) PR curve: The PR curve evaluates the relationship between the precision (the proportion of correctly predicted positive samples among all predicted positives) and recall (the proportion of correctly predicted positives among all actual positive samples). This metric is particularly useful in datasets with class imbalance because it focuses on the positive class and avoids distortion by a large number of negative samples, which is a common limitation of ROC curves. A higher PR AUC value indicates superior positive class detection and fewer false positives. Similar to the ROC analysis, individual PR curves were generated for each of the four dataset classes, and macro-averaging was applied to aggregate the results into a single PR curve.

3) Calibration curve: The calibration curve evaluates the alignment between the predicted probabilities and actual observed frequencies, providing insight into the reliability of the model in representing uncertainty. A perfectly calibrated model aligns along the diagonal line (y = x) where the predicted and actual probabilities match exactly. Deviations from this diagonal indicate calibration errors. Overconfidence occurs when the model predicts probabilities higher than the actual likelihood, resulting in points below the diagonal. In contrast, underconfidence occurs when the model predicts probabilities lower than the actual likelihood, placing points above the diagonal.
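The metrics above can be illustrated with a small, dependency-free Python sketch covering the one-vs-rest ROC AUC with macro-averaging and the binning behind a calibration curve. The function names, the five-bin setting, and the toy predictions are assumptions for illustration; PR curves would follow the same one-vs-rest and macro-averaging pattern.

```python
def roc_auc(scores, labels):
    """Area under the ROC curve for one binary (one-vs-rest) problem,
    using the trapezoidal rule over all score thresholds."""
    pairs = sorted(zip(scores, labels), key=lambda p: -p[0])
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    tp = fp = 0
    prev_tpr = prev_fpr = 0.0
    auc = 0.0
    i = 0
    while i < len(pairs):
        s = pairs[i][0]
        while i < len(pairs) and pairs[i][0] == s:   # process tied scores together
            tp += pairs[i][1]
            fp += 1 - pairs[i][1]
            i += 1
        tpr, fpr = tp / n_pos, fp / n_neg
        auc += (fpr - prev_fpr) * (tpr + prev_tpr) / 2.0
        prev_tpr, prev_fpr = tpr, fpr
    return auc

def macro_auc(probs, y_true, n_classes):
    """Average the one-vs-rest AUC over all classes (macro-averaging)."""
    aucs = []
    for c in range(n_classes):
        scores = [p[c] for p in probs]
        labels = [1 if y == c else 0 for y in y_true]
        aucs.append(roc_auc(scores, labels))
    return sum(aucs) / n_classes

def calibration_points(confidences, correct, n_bins=5):
    """Return (mean confidence, observed accuracy) for each non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    return [(sum(c for c, _ in b) / len(b), sum(o for _, o in b) / len(b))
            for b in bins if b]

# Toy three-class predictions (assumed data); the last sample is misranked.
probs = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.2, 0.2, 0.6], [0.6, 0.1, 0.3]]
y_true = [0, 1, 2, 1]
macro = macro_auc(probs, y_true, 3)
points = calibration_points([0.95, 0.9, 0.55], [1, 0, 1])
```

In the calibration example, the top bin has a mean confidence above 0.9 but an observed accuracy of 0.5, so its point lies below the diagonal, which is the overconfident case described above.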

Uncertainty estimation in MNIST images

Unlike conventional neural networks, where weights are fixed values, a BNN represents weights as distributions. Through multiple Monte Carlo samplings, BNNs provide predictive distributions for the output values. In BNNs, total uncertainty, or predictive uncertainty, is quantified by combining the aleatoric uncertainty inherent in the data with the epistemic uncertainty, which reflects the model’s uncertainty regarding its parameters. The total uncertainty expressed in Eq. (5) can be applied to classification or entropy calculations using the SoftMax values of the predictive distributions63.

where M denotes the number of sampling iterations, N represents the number of output classes, and y refers to the output values from the SoftMax function. The total uncertainty is composed of the sum of the aleatoric and epistemic uncertainties.

Aleatoric and epistemic uncertainties represent distinct types of information, making it essential to distinguish them. Aleatoric uncertainty arises from the inherent properties or ambiguity of the data itself and occurs in situations where a specific input can simultaneously support multiple outcomes. By contrast, epistemic uncertainty originates from unknown situations and is primarily caused by data that the model has not encountered during training.
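A commonly used entropy-based decomposition can make this distinction concrete. In the sketch below, the total uncertainty is the entropy of the mean SoftMax output over the Monte Carlo samples, the aleatoric part is the mean of the per-sample entropies, and the epistemic part is their difference. This is a standard formulation offered as an assumption; it is not necessarily identical to the expression used in this work, and the function names and toy inputs are hypothetical.

```python
import math

def entropy(p):
    """Shannon entropy (natural log) of a probability vector."""
    return -sum(v * math.log(v) for v in p if v > 0.0)

def decompose_uncertainty(samples):
    """samples: M SoftMax vectors (one per Monte Carlo draw), each of length N.
    Returns (total, aleatoric, epistemic) under the entropy decomposition."""
    m = len(samples)
    n = len(samples[0])
    mean_p = [sum(s[c] for s in samples) / m for c in range(n)]
    total = entropy(mean_p)                            # entropy of the mean prediction
    aleatoric = sum(entropy(s) for s in samples) / m   # mean per-sample entropy
    epistemic = total - aleatoric                      # disagreement between samples
    return total, aleatoric, epistemic

# Samples that agree: uncertainty is purely aleatoric.
t_agree, a_agree, e_agree = decompose_uncertainty([[0.9, 0.1]] * 4)

# Confident samples that contradict each other: mostly epistemic.
t_dis, a_dis, e_dis = decompose_uncertainty([[0.99, 0.01], [0.01, 0.99]] * 2)
```

When every sample returns the same distribution, the epistemic term vanishes; when individually confident samples disagree, as is typical for inputs unlike the training data, the epistemic term dominates.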

Supplementary Fig. 16 presents the uncertainty density distribution results obtained using the Modified National Institute of Standards and Technology (MNIST) images. The network consists of two convolutional layers followed by two fully connected layers (256–256–10), reflecting the range of µ and σ of the hardware. Each convolutional layer uses a 5 × 5 kernel with stride 1 and is followed by average pooling. The first convolutional layer produces 6 feature maps, and the second takes those 6 channels as input and outputs 16 feature maps. The network was trained using VI, and predictions were made with 50 sampling iterations. The uncertainty was calculated for three distinct cases: Correct, Unseen, and Incorrect. To compute the epistemic uncertainty, Fashion MNIST data were incorporated into the test dataset. Supplementary Fig. 24 presents the calculated aleatoric and epistemic uncertainties for the correct predictions (dark cyan), incorrect predictions (red), and unseen data (purple). In both cases, the uncertainty values for the correct images were mostly below 0.4, indicating low uncertainty. In contrast, for the unseen images, the uncertainty values were generally above 0.5, indicating significantly higher uncertainty. These results demonstrate that the BNN can assess uncertainty and recognize novel, previously unseen images.

Data availability

All relevant data are available within the article and in the Supplementary Information. The data generated in this study are provided in Source Data files. All other data are available from the corresponding author upon request. Source data are provided with this paper.

Code availability

All relevant codes used for the simulation are available in the article and Supplementary Information. All other codes are available from the corresponding authors on request.

Change history

14 August 2025

In the acknowledgments section of this paper funding sources were omitted. The original article has been corrected.

References

LeCun, Y., Bengio, Y. & Hinton, G. Deep learning. Nature 521, 436–444 (2015).

Shah, A. et al. A comprehensive study on skin cancer detection using artificial neural network (ANN) and convolutional neural network (CNN). Clin. eHealth https://www.keaipublishing.com/en/journals/clinical-ehealth/ (2023).

Mall, P. K. et al. A comprehensive review of deep neural networks for medical image processing: Recent developments and future opportunities. Healthc. Anal., 100216 https://doi.org/10.1016/j.health.2023.100216 (2023).

Kurani, A., Doshi, P., Vakharia, A. & Shah, M. A comprehensive comparative study of artificial neural network (ANN) and support vector machines (SVM) on stock forecasting. Ann. Data Sci. 10, 183–208 (2023).

Chouard, T. & Venema, L. Machine intelligence. Nature 521, 435 (2015).

Atakishiyev, S., Salameh, M., Yao, H. & Goebel, R. Explainable artificial intelligence for autonomous driving: A comprehensive overview and field guide for future research directions. IEEE Access https://doi.org/10.48550/arXiv.2112.11561 (2024).

Ielmini, D. & Wong, H.-S. P. In-memory computing with resistive switching devices. Nat. Electron. 1, 333–343 (2018).

Sebastian, A., Le Gallo, M., Khaddam-Aljameh, R. & Eleftheriou, E. Memory devices and applications for in-memory computing. Nat. Nanotechnol. 15, 529–544 (2020).

Misra, J. & Saha, I. Artificial neural networks in hardware: A survey of two decades of progress. Neurocomputing 74, 239–255 (2010).

Aguirre, F. et al. Hardware implementation of memristor-based artificial neural networks. Nat. Commun. 15, 1974 (2024).

Prezioso, M. et al. Training and operation of an integrated neuromorphic network based on metal-oxide memristors. Nature 521, 61–64 (2015).

Burr, G. W. et al. Neuromorphic computing using non-volatile memory. Adv. Phys. X 2, 89–124 (2017).

Kim, K., Song, M. S., Hwang, H., Hwang, S. & Kim, H. A comprehensive review of advanced trends: from artificial synapses to neuromorphic systems with consideration of non-ideal effects. Front. Neurosci. 18, 1279708 (2024).

Rao, M. et al. Thousands of conductance levels in memristors integrated on CMOS. Nature 615, 823–829 (2023).

Zhao, M., Gao, B., Tang, J., Qian, H. & Wu, H. Reliability of analog resistive switching memory for neuromorphic computing. Appl. Phys. Rev. 7, https://doi.org/10.1063/1.5124915 (2020).

Shin, W. et al. Self‐curable synaptic ferroelectric FET arrays for neuromorphic convolutional neural network. Adv. Sci. 10, 2207661 (2023).

Mikolajick, T., Park, M. H., Begon‐Lours, L. & Slesazeck, S. From ferroelectric material optimization to neuromorphic devices. Adv. Mater. 35, 2206042 (2023).

Schroeder, U., Park, M. H., Mikolajick, T. & Hwang, C. S. The fundamentals and applications of ferroelectric HfO2. Nat. Rev. Mater. 7, 653–669 (2022).

Jerry, M. et al. Ferroelectric FET analog synapse for acceleration of deep neural network training. 2017 IEEE International Electron Devices Meeting (IEDM). IEEE https://doi.org/10.1109/IEDM.2017.8268338 (2017).

Koo, R. H. et al. Polarization pruning: reliability enhancement of hafnia‐based ferroelectric devices for memory and neuromorphic computing. Adv. Sci. 11, 2407729 (2024).

Mulaosmanovic, H. et al. Ferroelectric field-effect transistors based on HfO2: a review. Nanotechnology 32, 502002 (2021).

Ali, T. et al. High endurance ferroelectric hafnium oxide-based FeFET memory without retention penalty. IEEE Trans. Electron Devices 65, 3769–3774 (2018).

Yao, P. et al. Fully hardware-implemented memristor convolutional neural network. Nature 577, 641–646 (2020).

Kim, S. et al. Memristive architectures exploiting self-compliance multilevel implementation on 1 KB crossbar arrays for online and offline learning neuromorphic applications. ACS Nano 18, 25128–25143 (2024).

Duan, X. et al. Memristor‐based neuromorphic chips. Adv. Mater. 36, 2310704 (2024).

Lu, C. et al. Self-rectifying all-optical modulated optoelectronic multistates memristor crossbar array for neuromorphic computing. Nano Lett. 24, 1667–1672 (2024).

Zhong, Y. et al. Dynamic memristor-based reservoir computing for high-efficiency temporal signal processing. Nat. Commun. 12, 408 (2021).

Kim, J. et al. Analog reservoir computing via ferroelectric mixed phase boundary transistors. Nat. Commun. 15, 9147 (2024).

Li, Q. et al. High-performance ferroelectric field-effect transistors with ultra-thin indium tin oxide channels for flexible and transparent electronics. Nat. Commun. 15, 2686 (2024).

Gong, T. et al. First demonstration of a Bayesian machine based on unified memory and random source achieved by 16-layer stacking 3D Fe-diode with high noise density and high area efficiency. 2023 International Electron Devices Meeting (IEDM). IEEE (2023).

Chen, L. et al. Ultra-low power Hf0.5Zr0.5O2 based ferroelectric tunnel junction synapses for hardware neural network applications. Nanoscale 10, 15826–15833 (2018).

Garcia, V. & Bibes, M. Ferroelectric tunnel junctions for information storage and processing. Nat. Commun. 5, 4289 (2014).

Kim, I.-J., Kim, M.-K. & Lee, J.-S. Highly-scaled and fully-integrated 3-dimensional ferroelectric transistor array for hardware implementation of neural networks. Nat. Commun. 14, 504 (2023).

Kim, M.-K., Kim, I.-J. & Lee, J.-S. CMOS-compatible ferroelectric NAND flash memory for high-density, low-power, and high-speed three-dimensional memory. Sci. Adv. 7, eabe1341 (2021).

Lee, G. H. et al. Ferroelectric field-effect transistors for binary neural network with 3-D NAND architecture. IEEE Trans. Electron Devices 69, 6438–6445 (2022).

Bhatti, S. et al. Spintronics based random access memory: a review. Mater. Today 20, 530–548 (2017).

Xu, N. et al. STT-MRAM design technology co-optimization for hardware neural networks. 2018 IEEE International Electron Devices Meeting (IEDM). IEEE (2018).

Jung, S. et al. A crossbar array of magnetoresistive memory devices for in-memory computing. Nature 601, 211–216 (2022).

Kim, J. et al. Demonstration of in‐memory biosignal analysis: novel high‐density and low‐power 3D flash memory array for arrhythmia detection. Adv. Sci. 11, 2308460 (2024).

Lee, S.-T. & Lee, J.-H. Neuromorphic computing using NAND flash memory architecture with pulse width modulation scheme. Front. Neurosci. 14, 571292 (2020).

Guo, X. et al. Fast, energy-efficient, robust, and reproducible mixed-signal neuromorphic classifier based on embedded NOR flash memory technology. 2017 IEEE International Electron Devices Meeting (IEDM). IEEE (2017).

Wang, P. et al. Three-dimensional NAND flash for vector–matrix multiplication. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 27, 988–991 (2018).

Song, M. S. et al. Kernel mapping methods of convolutional neural network in 3D NAND flash architecture. Electronics 12, 4796 (2023).

Kabir, H. D., Khosravi, A., Hosen, M. A. & Nahavandi, S. Neural network-based uncertainty quantification: A survey of methodologies and applications. IEEE Access 6, 36218–36234 (2018).

Gawlikowski, J. et al. A survey of uncertainty in deep neural networks. Artif. Intell. Rev. 56, 1513–1589 (2023).

Kristiadi, A., Hein, M. & Hennig, P. Being Bayesian, even just a bit, fixes overconfidence in ReLU networks. International Conference on Machine Learning, 5436–5446 (PMLR, 2020).

Abdar, M. et al. A review of uncertainty quantification in deep learning: techniques, applications and challenges. Inf. Fusion 76, 243–297 (2021).

Boniardi, M. et al. Statistics of resistance drift due to structural relaxation in phase-change memory arrays. IEEE Trans. Electron Devices 57, 2690–2696 (2010).

Park, J. et al. Intrinsic variation effect in memristive neural network with weight quantization. Nanotechnology 33, 375203 (2022).

Blundell, C., Cornebise, J., Kavukcuoglu, K. & Wierstra, D. Weight uncertainty in neural network. International Conference on Machine Learning, 1613–1622 (PMLR, 2015).

Neal, R. M. Bayesian learning for neural networks. Vol. 118 (Springer Science & Business Media, 2012).

MacKay, D. J. A practical Bayesian framework for backpropagation networks. Neural Comput. 4, 448–472 (1992).

Jospin, L. V., Laga, H., Boussaid, F., Buntine, W. & Bennamoun, M. Hands-on Bayesian neural networks—A tutorial for deep learning users. IEEE Comput. Intell. Mag. 17, 29–48 (2022).

Shridhar, K., Laumann, F. & Liwicki, M. A comprehensive guide to Bayesian convolutional neural network with variational inference. Preprint at https://arxiv.org/abs/1901.02731 (2019).

Tzikas, D. G., Likas, A. C. & Galatsanos, N. P. The variational approximation for Bayesian inference. IEEE Signal Process. Mag. 25, 131–146 (2008).

Andrieu, C., De Freitas, N., Doucet, A. & Jordan, M. I. An introduction to MCMC for machine learning. Mach. Learn. 50, 5–43 (2003).

Hoffman, M. D. & Gelman, A. The No-U-Turn sampler: adaptively setting path lengths in Hamiltonian Monte Carlo. J. Mach. Learn. Res. 15, 1593–1623 (2014).

Sebastian, A. et al. Two-dimensional materials-based probabilistic synapses and reconfigurable neurons for measuring inference uncertainty using Bayesian neural networks. Nat. Commun. 13, 6139 (2022).

Lin, Y. et al. Uncertainty quantification via a memristor Bayesian deep neural network for risk-sensitive reinforcement learning. Nat. Mach. Intell. 5, 714–723 (2023).

Dalgaty, T., Esmanhotto, E., Castellani, N., Querlioz, D. & Vianello, E. Ex situ transfer of Bayesian neural networks to resistive memory‐based inference hardware. Adv. Intell. Syst. 3, 2000103 (2021).

Oh, S. et al. Bayesian neural network implemented by dynamically programmable noise in vanadium oxide. 2023 International Electron Devices Meeting (IEDM). IEEE (2023).

Lin, Y. et al. Bayesian neural network realization by exploiting inherent stochastic characteristics of analog RRAM. 2019 IEEE International Electron Devices Meeting (IEDM). IEEE (2019).

Bonnet, D. et al. Bringing uncertainty quantification to the extreme-edge with memristor-based Bayesian neural networks. Nat. Commun. 14, 7530 (2023).

Yang, G. et al. Improved ISPP scheme for narrow threshold voltage distribution in 3-D NAND flash memory. Solid-State Electron. 202, 108607 (2023).

Park, S.-H. et al. Reliability improvement in vertical NAND flash cells using adaptive incremental step pulse programming (A-ISPP) and incremental step pulse erasing (ISPE). IEEE Trans. Electron Devices 71, 1834–1838 (2024).

Park, C. et al. Investigation of program efficiency overshoot in 3D vertical channel NAND flash with randomly distributed traps. Nanomaterials 13, 1451 (2023).

Wang, G. et al. A deep-learning pipeline for the diagnosis and discrimination of viral, non-viral and COVID-19 pneumonia from chest X-ray images. Nat. Biomed. Eng. 5, 509–521 (2021).

Acknowledgements

This work was supported in part by the National Research Foundation of Korea (NRF) grant funded by the Korea Government Ministry of Science and ICT (MSIT) under Grant RS-2023-00260527 (MS Song, D.K.), in part by the National Research Foundation of Korea (NRF) grant funded by the Korea Government (MSIT) (RS-2024-00441473, D.K.), in part by the Nano & Material Technology Development Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Science and ICT (RS-2024-00444182, MS Song, D.K.), and by the Samsung Advanced Institute of Technology (Grant No. IO240408-09521-01, D.K.).

Author information

Contributions

MS Song conducted the research, performed device fabrication, experiments, electrical measurements, and simulations, and wrote the manuscript. R.H.K. performed electrical measurements and analyses and wrote the manuscript. J.K. performed the simulations and wrote the manuscript. C.H.H. fabricated the devices. J.Y. supported the electrical measurements. J.H.K. supported power estimation simulations. S.Y., D.H.C., and S.K. supervised the analysis. W.S. supported the simulations. D.K. directed the study and drafted the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Saptarshi Das, Amritanand Sebastian, Huaqiang Wu and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Song, M., Koo, RH., Kim, J. et al. Ferroelectric NAND for efficient hardware bayesian neural networks. Nat Commun 16, 6879 (2025). https://doi.org/10.1038/s41467-025-61980-y

DOI: https://doi.org/10.1038/s41467-025-61980-y
