Abstract

The human eye is highly advanced but limited by color blindness and poor adaptation to changing light. Artificial photodetectors attempt to mimic vision but often require complex processing to overcome these limitations. Thus, developing photodetectors that complement human vision is crucial to overcoming these limitations. Here, we report a CuInP2S6-based photodetector array with tunable photoresponse for in-sensor image processing, directly complementing human vision. Through ionic and electronic tuning, the photodetector shows both positive and negative correlations with light intensity and wavelength. It enhances signal-to-background ratio by 880% and suppresses noise by 1,170 times, allowing effective detection of weak signals under strong illumination. Moreover, taking advantage of the distinct photoresponse to red and green light, the photodetector could improve the contrast between red and green patterns up to 43%, offering potential aid for red-green color blindness. This work presents a vision-enhancing photodetector capable of compensating for human visual deficiencies without external computation.

Introduction

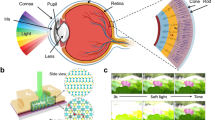

The human eye possesses over a hundred million visual cells (cones and rods), making it an excellent photodetector that provides 80% of the information humans receive1,2,3. For a long time, researchers have built photodetectors that attempt to emulate human vision with high sensitivity and pixel resolution. However, human vision also has inherent shortcomings. For example, ~8% of males suffer from color blindness due to deficiencies in light-sensing cones (Fig. 1a), which causes many inconveniences in their daily lives4,5, and no effective treatment strategy is available so far. Moreover, because cone cells are responsible for daylight vision and rod cells for night vision, the two cannot function simultaneously. This not only lengthens the adaptation period required for transitioning between dark and bright conditions but also hinders the human eye’s ability to detect faint light signals when exposed to intense illumination6,7. This phenomenon, known as glare, is often encountered when a driver is exposed to strong oncoming headlights, making it difficult to observe the road conditions (Fig. 1b). Conventional man-made photodetectors function similarly to the human eye8,9, but none of them can overcome these deficiencies of human vision. Although advanced computer algorithms can provide clearer images by resolving weak signals and detecting colors that the human eye cannot perceive through post-processing of detector images, this working mode still faces significant challenges in processing efficiency, computational power, and energy consumption as the amount of detector data surges10.

a The schematic of the retina structure of the human eye with different color cones, and a comparison of colorful objects and their protanopia view due to the deficiency in red cones. b The cone cells work under bright conditions, while the rod cells work under dim conditions; a weak signal cannot be observed when facing the bright headlights since cones and rods cannot work simultaneously. c CIPS photodetector array and its microscopic molecular structure under photon illumination. d Multiple relationships between photocurrent and light intensity lead to the in-sensor image transformations, including logarithmic transformation, piecewise transformation, and inverse transformation. e Distinct photoresponse of CIPS photodetector for red, green, and blue light, which results in distinguishable photoresponse for color blindness individuals. The gradient color in the plot represents light intensity, with darker colors indicating higher light intensity.

Many recent efforts have addressed the challenges of massive data transmission and processing between detectors and processors11,12,13,14,15,16. The concept of near-sensor and in-sensor computing has been proposed to reduce redundant data movement between the sensing and processing units17. Such devices have shown advantages in specific situations: a computational event-driven vision sensor can selectively capture dynamic motion and convert it into programmable, informative spiking signals18, and an in-memory sensing and computing device can realize image recognition without any memory or computing units19. While this approach shows significant potential for future applications, it still struggles to overcome the shortcomings of human vision, which requires more complicated image transformation and processing.

Here we demonstrate a photodetector array made with the two-dimensional ionic conducting material, CuInP2S6 (CIPS). By controlling the ionic conductivity, the CIPS detector exhibits tunable photoresponse that varies with light wavelength and intensity, demonstrating both positive and negative correlation with light intensity. This photoresponse enables functionalities beyond the conventional human vision, such as enhanced supervision performance. The tunable photoresponse behavior of CIPS photodetector enables the image transformations within the sensing device itself, which can be applied for object enhancement and noise reduction in low signal scenarios against strong backgrounds. It is used to highlight and identify vehicles and people under low-light or glare conditions, demonstrating its potential application in advanced driver assistance systems. All image processing is completed within the detector array, independent of any subsequent data processing unit, showing its capability for in-sensor detection and processing. Moreover, considering the distinct photoresponse to red and green light in image processing, the CIPS photodetector can assist individuals with red-green color blindness. Thus, the CIPS photodetector array shows its potential for complex in-sensor image processing and color blindness assistance, representing a substantial advancement in artificial vision technology.

Results

Ionic-electronic photodetector based on CuInP2S6 material

CIPS is a van der Waals layered material, and the Cu ions are weakly bonded with the (P2S6)4− in the molecular structure (Fig. 1c), resulting in the ionic conducting behavior through Cu+ ions20,21,22,23,24. Many works have studied the ion transport behavior in CIPS to make an ionic memristor25,26,27,28,29. Here, we fabricated two-terminal photodetector devices with a 20 µm channel based on 43 nm CIPS thin flakes (“Methods” and Supplementary Note 1). Due to the presence of ionic conduction, the device exhibits a nontrivial photoresponse behavior in contrast to a conventional semiconductor. Specifically, it shows tunable relationships between photocurrent and light intensity, shifting from positive correlation to negative correlation (Fig. 1d), which is distinct from the human eye and conventional photodetector. This characteristic allows for in-sensor image transformations during detection. The device can achieve various types of image transformations, including logarithmic, piecewise, and inverse transformations based on relationships between photocurrent and light intensity. These capabilities enable the object enhancement and intensity inversion, facilitating advanced in-sensor image processing and functionalities beyond the conventional human vision. Additionally, the photoresponse of the CIPS photodetector can be tuned by varying the wavelength of incident light, as shown in Fig. 1e. The device exhibits a positive photoresponse for red light (638 nm), and a negative photoresponse for green and blue light (520 nm and 450 nm, respectively). This wavelength-dependent behavior enables the photodetector to differentiate between red and green color shades, providing valuable assistance for individuals with color blindness.

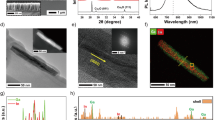

The intrinsic photoelectric effect of the CIPS semiconductor can be observed only when the ionic conductivity is suppressed at low temperature or under low bias voltages. Due to the highly insulating nature of CIPS material30,31,32, the CIPS photodetector exhibits an extremely low dark current of ~10 fA, with a large photo-to-dark current ratio at 405 nm of up to 10⁴, as shown in Supplementary Fig. 2. It shows fast photoresponse from visible to UV light with a photoresponsivity reaching 28 mA·W−1 at 254 nm. Such an intrinsic photoelectric response is easily disturbed by the ionic conductivity, which gives rise to a tunable photoresponse for light with varying intensities and wavelengths. To systematically elucidate the underlying photoresponse mechanism of the CIPS photodetector, we tested the photoconductivity of the device under various conditions (“Methods” and Supplementary Note 1). Figure 2a–c shows the conductivity measurements of three identical CIPS devices made from one large CIPS flake under different light conditions, together with the microscopic characterization of the conducting channel. Device 1 exhibits an extremely low (0.05 pA) and stable current when only a 5 V bias voltage is applied in the dark (Fig. 2a). The corresponding energy-dispersive spectroscopy (EDS) mapping of device 1 shows a uniform distribution of Cu, suggesting that the applied electric field (~4.6 kV·cm−1) is not strong enough to help the Cu ions overcome the hopping barrier in CIPS. In contrast, the conductivity of device 2 gradually increases under 405 nm light illumination (P = 1 mW·cm−2) at the same bias voltage as device 1 (Fig. 2b). The corresponding EDS mapping shows a clear accumulation of Cu near the grounded electrode in device 2, indicating the emergence of ionic conductivity in CIPS. This is consistent with photon-promoted ionic conductivity as reported before24,27. Raman spectroscopy and atomic force microscopy measurements of the device also indicate the activation of ionic conductivity after light illumination (see Supplementary Figs. 4 and 5).

The I–t curve, EDS mapping, line profile of Cu elements for three identical CIPS photodetectors under 5 V bias voltage, device 1 in the dark (a), device 2 under 405 nm light illumination with an intensity of 1 mW·cm−2 (b), device 3 under 405 nm light illumination with an intensity of 30 mW·cm−2 (c). All scale bars represent 5 µm. d Three distinct photoresponse processes in the CIPS photodetector, including intrinsic photoelectric effect (I), photon-assisted ionic conductivity under weak light (II), and recombination of photogenerated electrons and ions under intense light (III). e The photoresponse under 405 nm light pulses with an intensity of 1 mW·cm−2 and 4 mW·cm−2 at 300 K. f The photoresponse under 450 nm, 520 nm, and 638 nm light pulses with an intensity of 40 mW·cm−2. g The net photocurrent (It−I0) under varying light wavelengths with an intensity of 60 mW·cm−2 after 12 s of illumination. h Schematic of photoresponse origin for photons with energy (Ehν) above and below bandgap (Eg). EC: conduction band; EV: valence band; WS: hopping barrier height for Cu+ ions.

Strikingly, when a stronger light (30 mW·cm−2) illuminates device 3, the initial increase in conductivity is followed by an inhibition (Fig. 2c), and the current ultimately does not reach the level of device 2. Meanwhile, no accumulation of Cu can be observed in the EDS mapping of device 3. Note that the other elements (In, P, S) are also homogeneously distributed, as shown in the EDS mapping (Supplementary Fig. 6). These results suggest that stronger light suppresses the ionic conductivity of the CIPS channel. A similar phenomenon was observed in an early report on the superionic conductor Ag13I9W2O8 by Scott et al.33. The decrease in conductivity under illumination is attributed to the recombination of conducting ions and photogenerated electrons34, which not only reduces the concentration of conducting ions but also blocks the ion-conducting channels with neutralized metal atoms35.

Therefore, three distinct processes can be observed in the CIPS photodetector under illumination, as illustrated in Fig. 2d. First, the intrinsic photoelectric effect exists in CIPS because of its moderate bandgap of 2.6–2.9 eV36,37. The conductivity can be regulated by the photon-generated electrons and holes. This phenomenon can be clearly distinguished when ionic conductivity is frozen upon cooling down to 240 K38 (Supplementary Figs. 3 and 7). Second, photon illumination can also promote the hopping of ions between potential wells, leading to increased ionic conductivity, as demonstrated in Fig. 2b. Third, under strong illumination, photogenerated electrons can recombine with Cu+ ions, leading to a reduction in ionic conductivity, as shown in Fig. 2c. The negative photoconductivity can be described by the kinetic process hν + Cu+ → e− + h+ + Cu+ → h+ + Cu0, which occurs when photogenerated electrons neutralize a sufficient number of Cu+ ions, thereby causing a decrease in conductivity (Supplementary Note 1). To further support the working mechanism, we also conducted numerical simulations of the photoresponse behavior under weak and strong light, which reproduce well the different trends of the photocurrent shown in Fig. 2b, c. The detailed simulation is presented in Supplementary Note 1 and Supplementary Fig. 9.
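The competition between processes II and III can be illustrated with a toy rate model. This is a minimal sketch and not the simulation of Supplementary Note 1: it assumes first-order kinetics in which both ion activation and ion neutralization scale linearly with light intensity, and the rate constants `k_act` and `k_rec` are hypothetical values chosen only to reproduce the qualitative trends of Fig. 2b, c.

```python
import numpy as np

def mobile_ion_fraction(t, intensity, k_act=0.05, k_rec=0.005):
    """Toy model: fraction of Cu+ ions that are mobile after time t (s).

    Assumed (hypothetical) kinetics, written in terms of the dose D = I * t:
      bound ions  -> mobile ions       at rate k_act * I   (process II)
      mobile ions -> neutralized Cu0   at rate k_rec * I   (process III)
    The closed-form solution of these two first-order steps is returned.
    """
    dose = intensity * np.asarray(t, dtype=float)
    prefactor = k_act / (k_act - k_rec)
    return prefactor * (np.exp(-k_rec * dose) - np.exp(-k_act * dose))

t = np.linspace(0.0, 12.0, 121)                   # seconds
weak = mobile_ion_fraction(t, intensity=1.0)      # 1 mW cm^-2, as for device 2
strong = mobile_ion_fraction(t, intensity=30.0)   # 30 mW cm^-2, as for device 3

print("weak light rises monotonically:", bool(np.all(np.diff(weak) > 0)))
print("strong light peaks at t = %.1f s, then decays" % t[strong.argmax()])
```

In this sketch the weak-light curve keeps rising over the 12 s window, whereas the strong-light curve peaks early and ends below the weak-light value, which mirrors the behavior described for devices 2 and 3.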

As shown in Fig. 2e, the CIPS photodetector shows enhanced conductivity at an intensity of 1 mW·cm−2 and reduced conductivity under 4 mW·cm−2 light pulses, showing a transition from enhancement to suppression of the conductivity with increased light intensity at 300 K. This contrasts with the photoresponse behavior at 240 K, where both the photocurrent and dark current remain stable under light pulses with varying intensities (Supplementary Fig. 10). Furthermore, the photoresponse of the CIPS photodetector is also influenced by light wavelength. Figure 2f shows increased conductivity under 638 nm light pulses, while decreased conductivity occurs under 450 nm and 520 nm light pulses. The photocurrent after 12 s of illumination for wavelengths from 405 to 980 nm reveals a clear transition between positive and negative photoresponse (Fig. 2g and Supplementary Fig. 11). The distinct behavior is closely related to the different photoconducting processes associated with varying incident photon energy (Fig. 2h). Photons with energy below the bandgap but above the activation energy of Cu ions (0.62 eV)24,38 can enhance conductivity by promoting ion hopping. In contrast, photons with energy above the bandgap not only enhance the ionic conductivity but also generate electron-hole pairs due to the photoelectric effect. The recombination of these photon-generated electrons with Cu+ ions leads to the observed negative photoresponse.
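To relate the wavelengths used here to the energy scales quoted in the text, the photon energy can be computed from E = hc/λ. The sketch below is only a unit conversion: the activation energy (0.62 eV) and bandgap range (2.6–2.9 eV) are the values quoted above, while the function and variable names are illustrative.

```python
PLANCK_EV_NM = 1239.84          # h*c expressed in eV·nm
ACTIVATION_EV = 0.62            # Cu+ activation energy quoted in the text
BANDGAP_RANGE_EV = (2.6, 2.9)   # CIPS bandgap range quoted in the text

def photon_energy_ev(wavelength_nm):
    """Photon energy in eV for a vacuum wavelength given in nm."""
    return PLANCK_EV_NM / wavelength_nm

def position_vs_bandgap(energy_ev):
    """Report where an energy lies relative to the quoted bandgap range."""
    low, high = BANDGAP_RANGE_EV
    if energy_ev < low:
        return "below"
    return "within" if energy_ev <= high else "above"

energies = {wl: photon_energy_ev(wl) for wl in (405, 450, 520, 638, 980)}
for wl, e in energies.items():
    print(f"{wl} nm: {e:.2f} eV, "
          f"above activation energy: {e > ACTIVATION_EV}, "
          f"{position_vs_bandgap(e)} the quoted bandgap range")
```

All five wavelengths exceed the Cu+ activation energy. Because the quoted bandgap is a range, and 520 nm light lies slightly below it while still giving a negative response in Fig. 2f, the sketch reports positions relative to the range rather than imposing a sharp threshold.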

In-sensor Image processing with CuInP2S6 photodetector

As mentioned above, the photoresponse of the CIPS detector depends on the light intensity: increasing the light intensity leads to a positive-to-negative photoresponse transition. We systematically investigated the dependence of the photocurrent on light intensity for 405 nm light, as shown in Fig. 3a. When the intensity is weaker than 4 mW·cm−2, only a positive photoresponse is observed as a function of illumination duration. However, when the light intensity is stronger than 4 mW·cm−2, a clear transition from positive to negative photoresponse can be seen. Therefore, multiple relationships between the photocurrent and light intensity can be obtained by choosing different accumulation times, as shown in Fig. 3b and Supplementary Fig. 12. The detector can show not only the typical positive correlation, as human eyes and most photodetectors do, but also a negative correlation and a parabolic correlation. These functions are widely used for image transformations in computational post-analysis algorithms39,40,41, indicating that the CIPS photodetector can perform image processing during capture without the assistance of computing units. To verify the image processing capability, we fabricated a 5 × 10 CIPS photodetector array as shown in Fig. 3c (“Methods” and Supplementary Note 2). The CIPS photodetector array exhibits good uniformity, as can be seen from its dark current mapping and thickness distribution histogram (Fig. 3d and Supplementary Fig. 13). Meanwhile, we found that the photocurrent remains almost constant within a specific thickness range, which contributes to the uniform photoresponse observed across the CIPS photodetector array, as shown in Supplementary Fig. 14. We then took an image of a designed object using the CIPS photodetector array (Supplementary Fig. 15). The raw object image “FD” and the photocurrent map taken by the CIPS detector are shown in Fig. 3e. In the raw image, the character “D” is obscured by strong background interference, and only the character “F” is visible. In contrast, the photocurrent map taken by the CIPS photodetectors clearly shows both “FD” characters by inverting the intensity and removing the high-noise background, resulting in a significantly improved signal-to-noise ratio (Supplementary Fig. 16 and Supplementary Movie 1). These results demonstrate that the CIPS photodetector can be used to detect hidden information through in-sensor image processing.

a Photoresponse under 405 nm light at varying intensities, with the color bar indicating the net photocurrent. b Correlation between photocurrent and light intensity at different accumulation times, showing positive, parabolic, and negative correlations at 0.5 s, 5 s, and 10 s, respectively. c Optical and SEM images of the CIPS photodetector array consisting of 5 × 10 pixels. The scale bar in the optical image represents 50 µm, while that in the SEM image represents 20 µm. d Dark current map and corresponding histogram of the CIPS photodetector array. e Designed object image “FD” and its corresponding photocurrent map obtained from CIPS photodetector. f LTP and LTD behavior of CIPS photodetector under light pulses of 1 mW·cm−2 and 10 mW·cm−2, respectively. g Workflow of a CNN network, and the recognition accuracy of the raw image and processed image incorporating the LTP and LTD data for weight updating.

Furthermore, the conductivity of the device can be regulated by laser pulses, as shown in Fig. 3f and Supplementary Fig. 19, leading to so-called long-term potentiation (LTP) and long-term depression (LTD) behavior. This allows the CIPS device to be used for all-optical neuromorphic computing as well (Supplementary Note 2). We compared the performance of the CIPS photodetector with other all-optical modulation devices based on 2D materials (Supplementary Table 1). The CIPS photodetector exhibits a simple architecture and advantages in multi-state capability and low non-linearity factor (0.04 for the LTP and 1.52 for the LTD, Supplementary Fig. 20). Taking advantage of the LTP and LTD properties, we demonstrate the potential application of the CIPS photodetector in neuromorphic computing by constructing a convolutional neural network (CNN)42,43 (Fig. 3g). Customized datasets of two characters similar to the “FD” image were established as training and test datasets (“Methods” and Supplementary Note 2). The recognition accuracy for the raw images and for the images processed through the CIPS detector is strikingly different. After 100 epochs, the accuracy for the raw images is about 45%, while that for the processed images exceeds 99%. These results demonstrate the effectiveness of image processing with the CIPS photodetector in achieving high recognition accuracy, together with its application in all-optical neuromorphic computing (Supplementary Fig. 22). We also tested the performance of the CIPS photodetector with varying channel lengths; even when the channel length was reduced to 2 µm, the device still exhibited a tunable photoresponse, meeting the requirements for device miniaturization (Supplementary Fig. 23).

We further tested the in-sensor image transformations utilizing the multiple relationships between photocurrent and light intensity. Figure 4a shows an image in which the signal information has been obscured by artificially added random noise. The signal-to-noise ratio (SNR) of the raw image is only 0.7, making the signal unrecognizable. Conventional image processing for such scenes involves capturing the image, storing it in a memory device, and processing it in a computation unit using special transformation functions (Fig. 4b)44,45. This approach requires significant data transfer between sensing and processing units, leading to low processing efficiency and high energy consumption. In contrast, the CIPS photodetector can perform image processing directly during detection, as illustrated in Fig. 4c. Figure 4d, e shows the processed results of the noisy image from Fig. 4a obtained with these two approaches. Figure 4d displays the image processed by a computer using a logarithmic transformation with the function s = −log (r−b), where s represents the processed pixel intensity, r represents the raw pixel intensity, and b is a constant. Figure 4e shows the simulated result from the CIPS photodetector after 10 s of illumination. The noise level is clearly reduced after processing by the CIPS photodetector, with results comparable to the computer-based transformation. The SNR of the image processed by the CIPS photodetector reaches ~820, a 1170-fold improvement compared with the raw image.
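The computer-based reference transformation s = −log(r − b) can be sketched in a few lines. This is a minimal illustration rather than the authors' processing code: the value of `b`, the rescaling of the output to [0, 1] for display, and the sample pixel values are assumptions. The last lines only check the arithmetic linking the quoted SNR values.

```python
import numpy as np

def neg_log_transform(r, b):
    """Apply s = -log(r - b) and rescale the result to [0, 1] for display.

    r: raw pixel intensities; b: constant that must be smaller than every
    pixel value so that the logarithm is defined (assumed choice).
    """
    r = np.asarray(r, dtype=float)
    if np.any(r - b <= 0):
        raise ValueError("b must be smaller than every pixel value")
    s = -np.log(r - b)
    span = s.max() - s.min()
    return (s - s.min()) / span if span > 0 else np.zeros_like(s)

raw = np.array([[10.0, 200.0], [250.0, 255.0]])   # illustrative gray values
processed = neg_log_transform(raw, b=-1.0)
print(processed)   # the darkest raw pixel becomes the brightest, and vice versa

# Arithmetic check on the quoted figures: 0.7 x 1170 is about 820.
snr_raw, fold = 0.7, 1170
print(round(snr_raw * fold))
```

The transformation is monotonically decreasing in r, so it inverts the intensity order while compressing the bright end of the range, which is the behavior the negative-correlation regime of the detector is compared against.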

a Example of a highly noisy image where the signal object is completely obscured by noise, with a signal-to-noise (SNR) ratio of ~0.7. b Conventional image processing workflow: capturing the image with a camera, storing it in a memory device, and processing it using transformation functions in a computation unit. c In-sensor image processing workflow using the CIPS photodetector: performing image transformations directly during detection. Processed image using the computational algorithm (d) and CIPS photodetector (e). f Image transformations using the CIPS photodetector: the raw image, captured by a charge-coupled device camera in a dark scene, with signal area outlined by a white-dashed box, and the background area by a green-dashed box. The signal-to-background intensity ratio (SBR) increases from 0.14 to 1.24 as accumulation time increases from 0.5 to 10 s, dramatically enhancing visibility of objects in the dark. g Histogram of raw and processed images at different illumination times (t = 0.5 s, 5 s, and 10 s).

Furthermore, we explored the potential application of the CIPS photodetector in commonly encountered real-life scenarios, such as dark and glaring situations, where human vision fails to detect weak signals. As shown in Fig. 4f, the raw image shows a dark scene captured by a charge-coupled device camera without any computational processing. Weak details, such as people and cars, are not visible in the raw image. By utilizing the relationship between photocurrent and light intensity in the CIPS photodetector, we simulated the detector’s performance at different moments. The results clearly show a ~880% enhancement in the signal-to-background ratio (SBR), improving from 0.14 to 1.24, making previously hidden objects significantly more pronounced. At longer illumination times, the intensity of the image can even be inverted (Supplementary Fig. 24 and Supplementary Movie 1). The corresponding histograms of the images further confirm the transformations occurring during processing by the CIPS photodetector (Fig. 4g). These findings demonstrate the strong capability of CIPS detectors for detecting faint signals against a strong background, which could be particularly useful for autonomous driving systems in challenging conditions such as darkness and glare (Supplementary Fig. 25). Moreover, the recognition accuracy of the processed images is significantly improved compared to the raw images when tested using a standard object recognition algorithm (YOLO v3) (“Methods” and Supplementary Note 3).
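As an illustration of how an SBR such as the one above could be evaluated, the sketch below takes the ratio of the mean intensity in a signal box to that in a background box. The definition (ratio of region means), the box format, and the synthetic image are assumptions made for illustration; the text does not give the exact formula used.

```python
import numpy as np

def signal_to_background_ratio(image, signal_box, background_box):
    """Ratio of mean intensities in two rectangular regions.

    Each box is (row_start, row_stop, col_start, col_stop); this region-mean
    definition is an assumption, not taken from the paper.
    """
    r0, r1, c0, c1 = signal_box
    signal = image[r0:r1, c0:c1].mean()
    r0, r1, c0, c1 = background_box
    background = image[r0:r1, c0:c1].mean()
    return signal / background

# Synthetic example: dim signal region (left) next to a bright background.
img = np.full((4, 8), 100.0)
img[:, :4] = 14.0
sbr = signal_to_background_ratio(img, (0, 4, 0, 4), (0, 4, 4, 8))
print(f"SBR of synthetic image: {sbr:.2f}")

# Ratio of the quoted SBR values after and before processing.
ratio = 1.24 / 0.14
print(f"1.24 / 0.14 = {ratio:.2f}")
```

The quoted values correspond to a ratio of about 8.9 between the processed and raw SBR.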

Color-blindness assistance with CuInP2S6 photodetector

The photoresponse of the CIPS detector also varies with light wavelength, which enables the processing of colored images. Figure 5a shows the photoresponse for different colored lights under varying intensities over time, revealing clear differences between red (638 nm) and green (520 nm) light. The photocurrent difference increases with illumination time for the same incident light intensity (Fig. 5b). Taking advantage of such differences, the 5 × 10 CIPS detector array was used to capture images of color-blindness test objects, as illustrated in Fig. 5c (see “Methods” and Supplementary Note 4). As shown in Fig. 5d, the raw image with colored “FD” characters is visible to individuals with normal vision but indistinguishable to those with protanopia. However, when the “FD” image is captured using the CIPS detector array, the red channel intensity is enhanced while the green channel is suppressed due to their distinct photoresponse (Supplementary Fig. 29). This adjustment improves the intensity contrast between the red and green patterns by 43%, making the image distinguishable for individuals with protanopia (Fig. 5e). Additionally, a simulated image of a red-green color blindness test card “69” was created using the device’s photoresponse curve, as shown in Fig. 5f–h (Methods). The simulation reveals that the test number “69” becomes distinguishable for individuals with protanopia when detected by the CIPS photodetector. These results underscore the CIPS detector’s capability to assist individuals with color blindness, particularly those with protanopia.
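The effect of an opposite response to red and green light on pattern contrast can be illustrated numerically. The sketch below is not the authors' analysis: it uses the Michelson contrast as an assumed contrast measure, and the channel gains and input intensities are hypothetical numbers that only stand in for "red enhanced, green suppressed".

```python
def michelson_contrast(a, b):
    """Michelson contrast between two non-negative intensities."""
    return abs(a - b) / (a + b)

def detector_response(red, green, red_gain=1.2, green_gain=0.8):
    """Hypothetical wavelength-dependent response: red up, green down."""
    return red * red_gain, green * green_gain

raw_red, raw_green = 0.50, 0.45   # nearly equal intensities, hard to tell apart
before = michelson_contrast(raw_red, raw_green)
after = michelson_contrast(*detector_response(raw_red, raw_green))

print(f"contrast before: {before:.3f}, after: {after:.3f}")
```

With these placeholder gains the contrast between the two patterns increases, which is the qualitative mechanism described above; the 43% figure in the text comes from the measured photocurrent maps, not from this toy calculation.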

a The photoresponse for 450 nm (blue), 520 nm (green), and 638 nm (red) light with varying intensities. The color bar represents the net photocurrent. b The photocurrent difference between red and green as a function of accumulation time. c Schematic diagram of detecting the color-blindness pattern “FD” with a CIPS photodetector array. d, e The designed target image “FD” and its photocurrent map captured by the 5 × 10 CIPS detector array after 3 s illumination. The protanopia vision simulation demonstrates that due to the contrast change between the green and red channels, the letters “FD” become distinguishable for individuals with color blindness. f–h Simulated detector image for the color blindness test card “69” after an accumulation time of 10 s, based on the different photoresponse behavior for red and green light. The normal vision view where the number is clearly visible (f); The protanopia view where the number is indistinguishable (g); The protanopia view after CIPS detector processing where the number becomes distinguishable (h).

Discussion

In this work, we demonstrated a supervisory photodetector based on the ionic conductor CIPS. The photodetector exhibits nontrivial photoresponse to light of varying wavelengths and intensities by modulating the electronic and ionic conductivity of CIPS. This results in a variable relationship between photocurrent and light intensity, transitioning from a conventional positive correlation to a negative correlation. This characteristic enables the image transformations and processing by the photodetector itself without any additional computational unit. It can effectively enhance faint signals against a strong background, such as vehicles and people under low-light or glare conditions, complementing the shortcomings of human vision. Furthermore, the CIPS photodetector shows distinct photoresponse behavior for red and green light, aiding individuals with protanopia by improving the contrast between red and green patterns while capturing. A 5 × 10 CIPS photodetector array was created to validate these capabilities, showing significantly improved SBR and reduced noise levels, consistent with simulations of the image processing capabilities of the CIPS detector. These findings represent a substantial advancement in the development of in-sensor image processing technologies for visual assistance. The CIPS photodetector’s ability to autonomously transform and process images, coupled with its wavelength-selective response, overcomes limitations of conventional photodetectors and opens avenues for applications in color vision correction. In the future, further optimization to enhance the performance of CIPS photodetectors, the growth of high-quality, large-area, uniform CIPS thin films, and the fabrication of large-scale photodetector array imaging devices will be of great significance in advancing their practical applications.

Methods

CuInP2S6 photodetectors fabrication and characterization

CIPS flakes with a size from tens to hundreds of micrometers and a thickness of ~40 nm were mechanically exfoliated from the bulk crystal and transferred onto the Si substrate with a 285 nm SiO2 layer. The large-scale CIPS flakes were first patterned into squared arrays with a size of 150 × 150 μm2 by the standard electron beam lithography (EBL) process, and then etched by the reactive ion etching (RIE, Trion T2). The Cr/Au (10/60 nm) electrodes were fabricated through the thermal evaporation method at a deposition rate of 0.5 Å·s−1 following the standard EBL process. In the photodetector array, each unit has a channel length of 8 μm, and the distance between pixels is ~9 μm.

The thickness of the CIPS flakes and photodetector array was characterized by atomic force microscopy (Bruker Dimension Edge). Scanning electron microscopy images and energy-dispersive spectroscopy maps of the different elements were acquired with the Axia Chemi SEM at a voltage of 10 kV. Electrical and optoelectronic measurements were conducted using semiconductor parameter analyzers (Keithley 4200A-SCS and Keysight B1500A) with a Lakeshore probe station. Optical fiber-tailed laser diodes with different light wavelengths were used as the incident light for the photoresponse measurements.

Image processing with CuInP2S6 photodetectors

The detector images with 5 × 10 pixels in Fig. 3 were captured by the 5 × 10 photodetector array, where the pre-designed object image “FD” was used as the input signal. The incident light intensity for each detector pixel is proportional to the gray value of the corresponding pixel in the raw image. In the color blindness test image, the incident intensity for red, green, and blue light is proportional to the pixel intensity in the red, green, and blue channels of the raw image (Fig. 5d). The obtained photocurrent of the detector array is then displayed as the detector image.

The raw image (photograph of the vehicles) in Fig. 4f was captured with a Nikon D7500 camera at night without using any built-in vision-enhancement algorithms. The detector-processed images at different moments were simulated from the relationship between the photocurrent and light intensity at varying times, as shown in Fig. 3b, with the gray value of the raw image converted proportionally into light intensity. The detector-processed image for the color-blindness test image in Fig. 5 was simulated in a similar way, taking into account the photoresponse behavior for different light wavelengths. The protanopia view of the images was obtained with the built-in protanopia visual mode in Adobe Illustrator software with CMYK color mode. Standard color-blind test pictures were obtained from the website: https://uutool.cn/color-blindness/.
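The simulation procedure described in this section (gray value to light intensity, then light intensity to photocurrent through a response curve at a fixed accumulation time) can be sketched as a lookup with interpolation. The calibration points below are placeholder values, not measured data, and `max_intensity` as well as the function name are assumptions.

```python
import numpy as np

# Hypothetical response curve for ONE accumulation time (placeholder values).
CAL_INTENSITY = np.array([0.0, 2.0, 4.0, 10.0, 20.0, 40.0])   # mW cm^-2
CAL_CURRENT = np.array([0.0, 0.8, 1.0, 0.6, 0.3, 0.1])        # arbitrary units

def simulate_detector_image(gray, max_intensity=40.0):
    """Map 8-bit gray values to simulated photocurrent.

    Step 1: gray value -> light intensity (proportional mapping).
    Step 2: light intensity -> photocurrent (linear interpolation of the
            calibration curve for the chosen accumulation time).
    """
    gray = np.asarray(gray, dtype=float)
    intensity = gray / 255.0 * max_intensity
    return np.interp(intensity, CAL_INTENSITY, CAL_CURRENT)

raw = np.array([[0, 26, 255], [64, 128, 13]], dtype=np.uint8)
processed = simulate_detector_image(raw)
print(processed)
```

Repeating the lookup with a different curve for each accumulation time would give the time series of processed images; with a non-monotonic curve such as the placeholder above, a dim pixel can end up brighter than a saturated one.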

Convolutional neural network and object recognition

Based on the LTP and LTD properties of the CIPS photodetector, we constructed a CNN to test image recognition. The CNN consists of an input layer, a convolution layer, and a fully connected layer. Customized datasets of two characters similar to the “FD” image were established as training and test datasets. A total of 4800 different 5 × 10 pixel grayscale images, encompassing eight categories including “FD”, were randomly generated. Each image has grayscale values distributed from 0 to 255, with noise included, and the test set of 480 images was completely different from, and more complex than, the training set. The CNN adjusted its weights based on the LTP/LTD curves obtained from the CIPS photodetector, and it was compared with full-precision simulation results (Supplementary Note 2). We obtained the recognition accuracy as a function of epoch on the test set before and after image processing, and the results of 500 recognition runs were averaged to minimize the influence of chance. The recognition of objects in images was conducted with a popular object recognition algorithm, the You Only Look Once version 3 (YOLO v3) network (Supplementary Note 3 and Supplementary Fig. 27).
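For orientation, the forward pass of a network with the layer structure described above (5 × 10 input, one convolution layer, one fully connected layer, eight output categories) can be sketched in NumPy. The kernel size, number of filters, ReLU activation, softmax output, and random weights are all assumptions, and the LTP/LTD-based weight update is not modelled here.

```python
import numpy as np

def conv2d_valid(image, kernels):
    """'Valid' 2D cross-correlation of one image with a stack of kernels."""
    n_k, kh, kw = kernels.shape
    h, w = image.shape
    out = np.empty((n_k, h - kh + 1, w - kw + 1))
    for k in range(n_k):
        for i in range(h - kh + 1):
            for j in range(w - kw + 1):
                out[k, i, j] = np.sum(image[i:i + kh, j:j + kw] * kernels[k])
    return out

def forward(image, kernels, fc_weights, fc_bias):
    """Input -> convolution + ReLU -> fully connected -> softmax."""
    features = np.maximum(conv2d_valid(image, kernels), 0.0)
    logits = fc_weights @ features.ravel() + fc_bias
    exp = np.exp(logits - logits.max())   # subtract max for numerical stability
    return exp / exp.sum()

rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(5, 10)) / 255.0        # 5 x 10 grayscale input
kernels = rng.normal(scale=0.1, size=(4, 3, 3))            # assumed: 4 filters, 3 x 3
fc_weights = rng.normal(scale=0.1, size=(8, 4 * 3 * 8))    # 8 output categories
fc_bias = np.zeros(8)

probs = forward(image, kernels, fc_weights, fc_bias)
print(probs.shape, probs.sum())
```

In a hardware-aware simulation, each weight update would be constrained to the discrete conductance states of the measured LTP/LTD curves instead of using full-precision gradient steps.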

Data availability

The Source Data underlying the figures of this study are available at https://doi.org/10.6084/m9.figshare.28870310. All raw data generated during the current study are available from the corresponding authors upon request.

Code availability

The source codes used for simulation and data plotting are available from the corresponding authors upon request.

References

Masland, R. H. The neuronal organization of the retina. Neuron 76, 266–280 (2012).

Euler, T. et al. Retinal bipolar cells: elementary building blocks of vision. Nat. Rev. Neurosci. 15, 507–519 (2014).

Pandiyan, V. P. et al. The optoretinogram reveals the primary steps of phototransduction in the living human eye. Sci. Adv. 6, eabc1124 (2020).

Crameri, F., Shephard, G. E. & Heron, P. J. The misuse of colour in science communication. Nat. Commun. 11, 5444 (2020).

Mancuso, K. et al. Gene therapy for red-green colour blindness in adult primates. Nature 461, 784–787 (2009).

Takemura, Y., Ito, M., Shimizu, Y., Okano, K. & Okano, T. Adaptive light: a lighting control method aligned with dark adaptation of human vision. Sci. Rep. 10, 11204 (2020).

Tikidji-Hamburyan, A. et al. Retinal output changes qualitatively with every change in ambient illuminance. Nat. Neurosci. 18, 66–74 (2015).

Liu, W. et al. Self-powered and broadband opto-sensor with bionic visual adaptation function based on multilayer γ-InSe flakes. Light Sci. Appl. 12, 180 (2023).

Liao, F. et al. Bioinspired in-sensor visual adaptation for accurate perception. Nat. Electron. 5, 84–91 (2022).

Graves, C. E. et al. In‐memory computing with memristor content addressable memories for pattern matching. Adv. Mater. 32, 2003437 (2020).

Zhang, Z. et al. All-in-one two-dimensional retinomorphic hardware device for motion detection and recognition. Nat. Nanotechnol. 17, 27–32 (2022).

Mennel, L. et al. Ultrafast machine vision with 2D material neural network image sensors. Nature 579, 62–66 (2020).

Huang, H. et al. Fully integrated multi-mode optoelectronic memristor array for diversified in-sensor computing. Nat. Nanotechnol. 20, 93–103 (2025).

Li, T. et al. Reconfigurable, non-volatile neuromorphic photovoltaics. Nat. Nanotechnol. 18, 1303–1310 (2023).

Huang, P.-Y. et al. Neuro-inspired optical sensor array for high-accuracy static image recognition and dynamic trace extraction. Nat. Commun. 14, 6736 (2023).

Chen, K. et al. Organic optoelectronic synapse based on photon-modulated electrochemical doping. Nat. Photonics 17, 629–637 (2023).

Zhou, F. & Chai, Y. Near-sensor and in-sensor computing. Nat. Electron. 3, 664–671 (2020).

Chen, J. et al. Optoelectronic graded neurons for bioinspired in-sensor motion perception. Nat. Nanotechnol. 18, 882–888 (2023).

Wu, G. et al. Ferroelectric-defined reconfigurable homojunctions for in-memory sensing and computing. Nat. Mater. 22, 1499–1506 (2023).

Qi, L., Ruan, S. & Zeng, Y. J. Review on recent developments in 2D ferroelectrics: Theories and applications. Adv. Mater. 33, 2005098 (2021).

Brehm, J. A. et al. Tunable quadruple-well ferroelectric van der Waals crystals. Nat. Mater. 19, 43–48 (2020).

Zhou, Z. et al. Unconventional polarization fatigue in van der Waals layered ferroelectric ionic conductor CuInP2S6. Nat. Commun. 14, 8254 (2023).

Zhou, J. et al. 2D ferroionics: Conductive switching mechanisms and transition boundaries in van der Waals layered material CuInP2S6. Adv. Mater. 35, e2302419 (2023).

Zhong, Z. et al. Robust threshold-switching behavior assisted by Cu migration in a ferroionic CuInP2S6 heterostructure. ACS Nano 17, 12563–12572 (2023).

Chen, J. et al. Mimicking neuroplasticity via ion migration in van der Waals layered copper indium thiophosphate. Adv. Mater. 34, e2104676 (2022).

Wu, Y., Duong, N. T., Chien, Y. C., Liu, S. & Ang, K. W. A dynamic memory for reservoir computing utilizing ion migration in CuInP2S6. Adv. Electron. Mater. 10, 2300481 (2024).

Liu, Y. et al. CuInP2S6-based electronic/optoelectronic synapse for artificial visual system application. Adv. Funct. Mater. 34, 2306945 (2024).

Ci, W., Wang, P., Xue, W., Yuan, H. & Xu, X. Engineering ferroelectric-/ion-modulated conductance in 2D vdW CuInP2S6 for non-volatile digital memory and artificial synapse. Adv. Funct. Mater. 34, 2316360 (2024).

Sun, Y. et al. Internal ion transport in ionic 2D CuInP2S6 enabling multi-state neuromorphic computing with low operation current. Mater. Today 66, 9–16 (2023).

Wu, J. et al. High tunnelling electroresistance in a ferroelectric van der Waals heterojunction via giant barrier height modulation. Nat. Electron. 3, 466–472 (2020).

Liu, F. et al. Room-temperature ferroelectricity in CuInP2S6 ultrathin flakes. Nat. Commun. 7, 1–6 (2016).

Zhong, Z. et al. Reconfigurable logic circuits and rectifier based on two-terminal ionic homojunctions. Device 3, 100712 (2025).

Scott, J. F. & Melnick, B. M. Superionic conductors as fast, repetitive opening switches. In Proc. Space Structures, Power, and Power Conditioning Vol. 871, 153–156 (SPIE, 1988).

Dang, Q. et al. Bias-free driven ion assisted photoelectrochemical system for sustainable wastewater treatment. Nat. Commun. 14, 8413 (2023).

Kawashima, T. et al. Argon-plasma-controlled optical reset in the SiO2/Cu filamentary resistive memory stack. Appl. Phys. Lett. 112, 213505 (2018).

Rao, R. et al. Investigating strain between phase-segregated domains in Cu-deficient CuInP2S6. Chem. Mater. 35, 8020–8029 (2023).

Ma, R.-R. et al. High-speed ultraviolet photodetectors based on 2D layered CuInP2S6 nanoflakes. Appl. Phys. Lett. 117, 131102 (2020).

Zhou, S. et al. Anomalous polarization switching and permanent retention in a ferroelectric ionic conductor. Mater. Horiz. 7, 263–274 (2020).

Yang, Y. et al. In-sensor dynamic computing for intelligent machine vision. Nat. Electron. 7, 225–233 (2024).

Ye, H.-S., Zhou, N.-R. & Gong, L.-H. Multi-image compression-encryption scheme based on quaternion discrete fractional Hartley transform and improved pixel adaptive diffusion. Signal Process. 175, 107652 (2020).

Monga, V., Li, Y. & Eldar, Y. C. Algorithm unrolling: Interpretable, efficient deep learning for signal and image processing. IEEE Signal Process. Mag. 38, 18–44 (2021).

Wright, L. G. et al. Deep physical neural networks trained with backpropagation. Nature 601, 549–555 (2022).

Dai, Y. et al. Interfacial magnetic spin Hall effect in van der Waals Fe3GeTe2/MoTe2 heterostructure. Nat. Commun. 15, 1129 (2024).

Anubha Pearline, S., Sathiesh Kumar, V. & Harini, S. A study on plant recognition using conventional image processing and deep learning approaches. J. Intell. Fuzzy Syst. 36, 1997–2004 (2019).

Ashtiani, F., Geers, A. J. & Aflatouni, F. An on-chip photonic deep neural network for image classification. Nature 606, 501–506 (2022).

Acknowledgements

This work was supported by the National Key Research and Development Program of China (grant No. 2021YFA1200700 (J.W.)), the National Natural Science Foundation of China (grants No. 12204109 (H.H.) and 12274090 (W.S.)), the Science and Technology Innovation Plan of Shanghai Science and Technology Commission (grant No. 21JC1400200 (H.H.)), and the Natural Science Foundation of Shanghai (grant No. 22ZR1406300 (W.S.)). Part of the experimental work was carried out in the Fudan Nanofabrication Laboratory.

Author information

Contributions

Z.Z., Y.Z., X.C., J.Z., Q.Y., and X.L. fabricated the devices and conducted the optoelectronic characterization. Z.Z., Y.Z., Y.C., H.S., W.S., and H.H. analyzed the experimental data. T.L., X.M., J.W., and J.C. advised on the experiments and data analysis. Z.Z., Y.Z., and H.H. wrote the manuscript based on inputs from all the other authors. H.H. and J.W. conceived this project.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Communications thanks Giovanni Matrone and the other anonymous reviewer(s) for their contribution to the peer review of this work. A peer review file is available.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhong, Z., Zhuang, Y., Cheng, X. et al. Ionic-electronic photodetector for vision assistance with in-sensor image processing. Nat Commun 16, 7096 (2025). https://doi.org/10.1038/s41467-025-62563-7

DOI: https://doi.org/10.1038/s41467-025-62563-7
