Abstract

Grid cells, with hexagonal spatial firing patterns, are thought critical to the brain’s spatial representation. High-speed movement challenges accurate localization as self-location constantly changes. Previous studies of speed modulation focus on individual grid cells, yet population-level noise covariance can significantly impact information coding. Here, we introduce a Gaussian Process with Kernel Regression (GKR) method to study neural population representation geometry. We show that increased running speed dilates the grid cell toroidal-like representational manifold and elevates noise strength, and together they yield higher Fisher information at faster speeds, suggesting improved spatial decoding accuracy. Moreover, we show that noise correlations impair information encoding by projecting excess noise onto the manifold. Overall, our results demonstrate that grid cell spatial coding improves with speed, and GKR provides an intuitive tool for characterizing neural population codes.

Similar content being viewed by others

Introduction

In navigation, it is crucial that the brain forms a certain internal representation of the external space1. Grid cells are widely regarded as an essential component of internal spatial representation2,3. Their hexagonal spatial firing patterns are thought to form a coordinate system of external space4 and support the downstream hippocampal spatial representations (e.g., place cells)5,6,7,8,9. Yet maintaining precise spatial coding is particularly challenging at high running speeds, when self-location changes rapidly10. The effect of running speed modulation on grid cell population coding remains unclear.

Previous literature offers dual possible predictions about running speed modulation on grid cell codes. On the one hand, speed may support grid cell spatial coding. Running speed is known to mostly increase grid cell firing rates11,12,13. Rats running at high speeds (10 cm/s to 50 cm/s) are also known to have more medial entorhinal cortex (MEC) cells coding spatial information than when running at a low speeds (2 cm/s to 10 cm/s)10. On the other hand, speed signals disrupt the phase differences between pairs of grid cells14. Increasing speed may also lead to larger input noise (possibly from the medial septum11,15 or speed cells16,17), causing larger noise error to accumulate over time, thus degrading spatial coding fidelity18,19,20,21.

While these previous studies provide insights into speed modulations of grid cells, their analyses were limited to individual or pairs of grid cells11,12,13,14 (although decoding analysis has been performed on MEC cell population10). Neurons in the brain represent information through their collective population activity. Population noise covariance can significantly impact information coding22,23,24,25,26,27,28. Grid cells’ activities are especially known to be tightly coupled and change coherently14,29. To study the speed modulation of grid cell code, it is important to analyze simultaneously recorded grid cell population activities, including the effect of noise covariance. Yet such a study is still lacking.

Because neural data is intrinsically high-dimensional, inferring the noise-covariance matrix can be difficult. A standard approach for discretely valued information is to compute the sample covariance across trial responses24. To handle continuously varying stimuli (e.g., orientations of static grating stimuli), one typically first bins continuous parameters, then collected repeated trials at each bin30,31,32,33. From these trial-based data, the noise covariance can be estimated using the sample-covariance estimator or, more recently, via a Wishart-process model34.

However, discretizing continuous stimuli and collecting trial-based data can be impractical for two main reasons. First, high-dimensional inputs—such as natural images—require an exponentially large set of discretized values27. Second, many naturalistic experimental paradigms (e.g., navigation tasks) lack repeated trials31,33,35. A study on retinal representations of natural images addressed these challenges by substituting retinal data with convolutional neural network (CNN) units, explicitly formulating the noise covariance27. However, this approach relies on the observed similarities between retinal neurons and CNN units36. There’s a trend in neuroscience to move beyond trial-based experiments, towards trial-free naturalistic experiments31,33. Yet, to our knowledge, it remains challenging to reliably estimate noise covariance from high-dimensional neural data in naturalistic tasks without repeated trials.

In this paper, we introduce Gaussian Process with Kernel Regression (GKR), a method for inferring both the smooth mean (manifold) and noise covariance from high-dimensional neural data, including recordings from naturalistic tasks. The study of manifolds and noise covariance falls within the framework of information geometry27,37. We applied GKR to simultaneously recorded grid cell activities35. We found that: (1) Running speed both dilates the grid cells’ toroidal-like manifold and increases noise; (2) Nevertheless, the effect of manifold dilation outpaces the effect of noise increase, as indicated by the overall higher Fisher information at increasing speeds, and further supported by improved spatial coding accuracy at higher speeds; (3) Furthermore, compared to hypothetical independently firing grid cells, we found that noise correlations in real grid cells “shape “ the noise structure such that more noise is projected onto the manifold surface, indicating that noise correlation in grid cells is information-detrimental. Overall, our results indicate that running speed enhances grid cell spatial coding through geometric modulations. GKR provides a useful tool to interpret noisy neural data from an intuitive information geometry perspective.

Results

Grid cell population spatial coding accuracy improves with increasing speed

We analyzed grid cell recordings from Gardner et al.35, obtained as rats foraged in an open-field (OF). The dataset included approximately 60–200 simultaneously recorded grid cells per module, with the exact number varying by rat, recording day, and module (see Methods). The experiment comprised nine configurations, denoted using a notation system, for example, “R1M2” refers to rat R on day 1, specifically from grid cell module 2. Grid cells within the same module shared a similar spatial period but differed in phase. Raw spiking data were converted into firing rates using a kernel averaging (see Methods), with example rate maps shown in Fig. 1A and Supplementary Fig. 1.

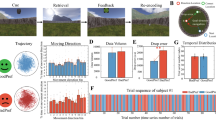

A Top: Rats were performing open-field foraging (OF) tasks with grid cell activity collected (data from Gardner et al.35). Nine experimental configurations were analyzed, varying across rats, recording days, and grid cell modules. For example, the configuration “R1M2” represents rat “R”, day 1, and grid cell module 2. Each experimental configuration was subsampled, generating 50 sampled datasets \({{{\mathscr{D}}}}_{s}\), ensuring a similar number of data points across different speed values (see text and Methods). Bottom: Example rate maps of grid cells in R1M2; with additional examples provided in Supplementary Fig. 1. B SCA quantifies the fidelity of grid cell spatial coding. Random locations were sampled within the open field, and for each location (star), two conjugate boxes were defined, separated by a fixed distance of 10 cm. Neural activity within these boxes was collected and classified using logistic regression (circles and crosses). The SCA is defined as the average classification accuracy across all sampled locations (see Methods). C SCA as a function of the rat’s speed. Each dot represents the SCA computed from a sampled dataset \({{{\mathscr{D}}}}_{s}\) at one speed bin (see Methods, fifty sampled \({{{\mathscr{D}}}}_{s}\) with eight speed bins = four hundred data points). The solid line and error band show the best-fitting line and 95% confidence interval (CI), estimated using Bayesian linear ensemble averaging (BLEA, see text and Methods). D SCA-speed slopes of different experimental configurations. Dots and error bars represent mean and 95% CI of the slopes estimated using BLEA (panel C shows the case of R1M2). Numbers above error bars are linear models’ r-squared values. Asterisks below the x-axis denote the statistical significance of the slope computed from the original datasets \({{{\mathscr{D}}}}_{s}\) compared to label-shuffled data (two-sided, Bayesian method, see Methods). ***p < 0.001; **p < 0.01; *p < 0.05; NS no significance. Source data are provided as a Source Data file.

We analyzed the rats’ behavioral data and found a predominance of low-speed movement, resulting in a concentration of observations in the slow-speed range (Supplementary Fig. 2). Since the primary goal of this study is to compare grid cell representation properties across different speeds, it is essential to control nuisance factors—specifically, ensuring an equal number of data points across speed conditions. To achieve this, we randomly sampled data within discrete speed bins (bin width = 5 cm/s, see Methods) to create a balanced dataset, Ds, with an equal number of data points per bin. We generated fifty such \({{{\mathscr{D}}}}_{s}\) datasets per experimental configuration to estimate uncertainty.

One straightforward approach to evaluate the quality of neural spatial coding is by decoding location information from neural states. Good decoding performance indicates good neural spatial coding. We designed a locally linear classification accuracy to evaluate the quality of spatial coding, formally referred to as spatial coding accuracy (SCA) (Fig. 1B, see Methods). Specifically, at each speed bin value, several locations were randomly sampled. For each sampled location, we created two conjugate boxes centered near the sampled location but positioned in opposite directions, separated by a fixed distance of 10 cm. Data from these two boxes were collected, relabeled as class 1 and class 2, and then split into training and test sets. A logistic regression model was then trained to classify the data and evaluated on the test set. The classification accuracy, averaged over all randomly sampled spatial locations, is referred to as the SCA. SCA quantifies how well neural states corresponding to two nearby spatial locations are discriminable.

For each sampled dataset \({{{\mathscr{D}}}}_{s}\), we computed SCA across eight speed bins as described above. Fifty \({{{\mathscr{D}}}}_{s}\)—each yielding eight metric-speed pairs (where metric is SCA in this case)—produced a metric-speed dataset of 400 points (dots in Fig. 1C). A natural idea is to fit a simple linear regression (e.g., least-squares regression) to these 400 points and use the slope to quantify how metric varies with speed. However, this approach is problematic as it assumes all observations are independent, which here is violated: our 400 points come from fifty \({{{\mathscr{D}}}}_{s}\) drawn from the same original dataset D, so they are statistically related. If one were to increase the number of sampled datasets \({{{\mathscr{D}}}}_{s}\), a naïve linear regression would misleadingly drive the slope’s estimation uncertainty toward zero.

To address this, we introduced Bayesian Linear Ensemble Averaging (BLEA, see Methods). BLEA proceeds in two stages: first, it fits a Bayesian linear regression separately to the metric-speed data from each \({{{\mathscr{D}}}}_{s}\), yielding fifty posterior distributions over the regression weights; then it combines these posteriors via Bayesian model averaging38,39. The result is a Gaussian-approximated posterior over the slope (and intercept) together with predictive distributions, from which confidence intervals (CIs), p-values, and other statistical measures can be estimated using a Bayesian framework (see Methods)40.

Applying BLEA to SCA (Fig. 1C, D), we found that SCA increases with speed, with a slope significantly larger than that of the label-shuffled dataset. This result holds across different classifiers used for computing the SCA (support vector machine and perceptron, see Supplementary Fig. 3), indicating better self-location representations in grid cell population at higher speeds.

Gaussian Process with Kernel Regression (GKR) method for fitting manifold and covariance matrices from noisy neural states

What are the underlying neural mechanisms contributing to the improved spatial coding in grid cells? To explore this question, we need a tool to analyze the high-dimensional noisy neural states. A recent popular neural population geometry framework suggests that, instead of analyzing noisy high-dimensional data, it will be more intuitive to use certain methods to extract the data’s underlying smooth manifold along with the noise covariance34,41,42. Wishart process is such a method that can infer the smooth manifold and covariance matrix34. However, the recent implementation of Wishart process requires trial-based experimental paradigm, which forbids this method to be used in broader and complex natural behaving experiments34. The OF task (Fig. 1A) is one of such natural behaving experiments without strict repeated trials.

Therefore, we developed Gaussian Process with Kernel Regression (GKR) method. The main purpose of GKR is to estimate the representation manifold relevant to the (known) labels of interest42. Response fluctuations arising from nuisance latent variables (e.g., emotional states) are captured as a noise covariance term. For example, in this paper, locations and speeds are the labels of interest, while neural response fluctuations due to other factors are treated as “noise,” summarized in the noise covariance matrix.

Here we illustrate the principles of GKR. A dataset (e.g., \({{{\mathscr{D}}}}_{s}\)) contains noisy neural states r whose dimensionality equals the number of neurons; and labels x whose dimensionality equals the number of label variables. A label variable can be stimulus parameters (e.g., grating stimulus’ orientation), latent variables (e.g., internal decision factor) or behavior variables (e.g., x, y locations and speed). GKR assumes that r follows a Gaussian distribution

where μ(x) is assumed to be a smooth-varying mean, also called a manifold in this paper; Σ(x) is a smooth-varying covariance. The combination of manifold and noise covariance is referred to as a statistical manifold of neural response. The goal of GKR is to infer the manifold and covariance from a dataset \(\{{{\bf{r}}},{{\bf{x}}}\}\).

GKR solves this inference problem by two steps (Fig. 2A, see Methods): (1) inferring smooth manifold \({{\mathbf{\mu }}}\left({{\bf{x}}}\right)\) via Gaussian process regression38; (2) inferring the covariance matrix by applying a kernel averaging to residues \({{\bf{r}}}\left({{{\bf{x}}}}_{{{\rm{i}}}}\right)-{{\mathbf{\mu }}}\left({{{\bf{x}}}}_{{{\rm{i}}}}\right)\) (index i represents the i-th data point). Kernel parameters are optimized to maximize data log-likelihood.

A Given neural states \({{\bf{r}}}\) and labels \({{\bf{x}}}\), the goal of GKR is to infer the conditional distribution \(p\left({{\bf{r}}}|{{\bf{x}}}\right)\) as a smooth function of \({{\bf{x}}}\). GKR approximates \(p\left({{\bf{r}}}|{{\bf{x}}}\right)\) as a Gaussian distribution and separates the inference problem into two steps: inferring mean \({{\boldsymbol{\mu }}}\left({{\bf{x}}}\right)\), and inferring noise covariance \(\Sigma \left({{\bf{x}}}\right)\). B Application of GKR to a synthetic dataset. The synthetic dataset consists of \(N\) synthetic neurons with heterogeneous tuning curves to a circular label \(\theta\) ranging from 0 to \(2{{\rm{\pi }}}\). The ground truth \({{\boldsymbol{\mu }}}\left(\theta \right)\) and \(\Sigma \left({{\rm{\theta }}}\right)\) are visualized in the first two principal components plane (via PCA, left panel). Ellipses indicate the principal axes of covariance, with lengths proportional to the eigenvalues. In this example, the dataset consists of 10 neurons and 100 data points, which were used to fit manifolds via GKR, bin averaging, and Ledoit-Wolf (LW) methods, respectively (right panel; see Methods). The default dataset consists of 300 data points and 10 neurons, with the number of data points (C) or neurons varying (D) as indicated by x axes. Dataset was used for estimating geometric metrics. The estimated geometric metrics were compared against ground truth values and evaluated using relative estimation error, defined as the normalized difference between the estimated and true values. Dots and error bars represent the median, first, and third quartiles of relative estimation error across 10 randomly generated synthetic datasets (see Methods). A similar analysis for a 2D synthetic manifold is presented in Supplementary Fig. 4. Source data are provided as a Source Data file.

GKR outperforms empirical estimation methods on synthetic datasets

We evaluated GKR on both a one-dimensional synthetic model (Fig. 2B–D) and a two-dimensional synthetic model (Supplementary Fig. 4). Each model consisted of a ground truth manifold, \({{\mathbf{\mu }}}\left({{\bf{x}}}\right)\), where each component represented a synthetic neural tuning curve, and a covariance matrix, \(\Sigma \left({{\bf{x}}}\right)\). We generated data from these models using a Gaussian distribution (Eq. 1) and applied GKR to infer the manifold and covariance matrix.

For comparison, we also applied bin averaging and the Ledoit-Wolf (LW) method. In bin averaging, we discretized the label space \({{\boldsymbol{x}}}\) into small bins, treating all data within a bin as having the same label. The sample mean and covariance within each bin served as estimates of the inferred manifold and covariance matrix. The LW method builds on bin averaging by incorporating shrinkage regularization to improve covariance estimation (see Methods)43.

Using the inferred manifold and covariance matrix, we computed other geometric quantities, including the Riemannian metric, precision matrix, and Fisher information (see Methods). To assess the inference performance, we compared these inferred quantities to their ground truth values by computing the relative estimation error, defined as the difference between the estimate and the ground truth, normalized by the ground truth. Across various experimental conditions and in both one-dimensional and two-dimensional synthetic datasets, GKR consistently outperformed bin averaging and LW methods (Fig. 2B–D, Supplementary Fig. 4).

Grid cell population activity manifold exhibits a toroidal-like topology

We then applied GKR to the grid cell sampled dataset \({{{\mathscr{D}}}}_{s}\). The inferred manifold is intrinsically three-dimensional, as it is parameterized by three label variables of interest: two spatial locations and one speed (notably, these three labels are largely uncorrelated, see Supplementary Fig. 5). To characterize the fitted manifold’s topology, we conducted a persistent homology analysis (see Methods) and found that the manifold exhibits possibly toroidal topology (Supplementary Figs. 6A, B), consistent with previous findings35.

Next, we examined how spatial locations were represented by grid cells. For each speed value, we defined a speed-slice manifold (SSM) as a cross-section of the full manifold, obtained by fixing speed while varying location (Fig. 3A). To visualize the SSM, we randomly sampled points from the manifold at a fixed speed of 20 cm/s, projected them onto the first six principal components (PCs) using principal component analysis (PCA), and further reduced the dimensionality to three using Uniform Manifold Approximation and Projection (UMAP)44. The resulting visualization (Fig. 3B), along with persistent homology analysis (Supplementary Fig. 6C), suggests that the SSM exhibits possibly toroidal topology.

A A speed-slice manifold is a cross-section of the full manifold, obtained by holding speed constant while varying location. B We visualized an example SSM (speed = 20 cm/s) by first projecting it onto the first six PCs, then further reducing it to three latent dimensions using UMAP44. Color represents the third UMAP component value only for better visualization. The upper and bottom panels show two views of the same SSM. C We also visualized the representation of four fixed spatial positions (i.e., lattice) but varying speed values. D For visualization, the lattice manifold was projected into the first three PCs (upper) and two PCs (bottom) respectively (see Supplementary Fig. 7 for cumulative variance explained ratio). E SSM size was measured in the original high-dimensional space (dimension equals the number of neurons). SSM radius is the average distance from points on the manifold to the manifold center. The lattice area measures the parallelogram area formed by two tangent vectors (i.e., Tan. vec., differentiated along x and y labels respectively, see Methods). Left: Each dot represents the measured quantity from one sampled dataset \({{{\mathscr{D}}}}_{s}\) at one speed bin (see Methods, fifty sampled \({{{\mathscr{D}}}}_{s}\) with eight speed bins = four hundred data points). The solid line and error band show the best-fitting line and 95% CI estimated using BLEA. Right: Dots and error bars represent mean and 95% CI of the slopes estimated using BLEA (left panel shows the case of R1M2). The numbers above error bars are r-squared scores. The texts below x axis tick label represent the significance level whether the slope fitted from original data \({{{\mathscr{D}}}}_{s}\) differs from that fitted from label-shuffled data (two-sided, Bayesian method, see Methods); ***p < 0.001; **p < 0.01; *p < 0.05; NS not significant. Source data are provided as a Source Data file.

Running speed dilates the grid cell toroidal-like speed-slice manifold

A key question is how speed modulates the geometry of the SSM. Direct visualization of SSMs at different speeds is challenging, so we instead examined speed modulation using an example lattice on the SSM. This lattice consists of four spatially adjacent points, centered at the OF center, with an inter-point distance of 2 cm (Fig. 3C). While keeping these four spatial locations fixed, we varied the speed components to construct a lattice manifold. PCA analysis revealed that this manifold is low-dimensional, with three principal components accounting for over 90% of the variance (Supplementary Fig. 7). Based on this, we projected the lattice manifold into three or two dimensions for visualization (Fig. 3D). The results indicate that the lattice expands as speed increases.

In addition to the lattice manifold, we also visualized other manifold slices, including those obtained by fixing the rat’s x-coordinate and using a larger lattice. All visualizations consistently imply that the SSM dilates with increasing speed (Supplementary Fig. 7).

To quantify changes in SSM size, we used two metrics: (1) SSM radius, defined as the average distance from the SSM surface to its center, providing a global measure of SSM size; and (2) Lattice area, computed as the area of a parallelogram whose sides are tangent vectors of the SSM, capturing local manifold surface size. Across all experimental configurations examined, both SSM radius and lattice area increase with running speed, indicating that the grid cell SSM dilates as speed increases (Fig. 3E).

Running speed increases grid cell population noise

SSM dilation intuitively enhances spatial coding. Consider a binary classification task distinguishing two neural state classes corresponding to nearby locations (e.g., Fig. 1B). By increasing the separation between these classes, SSM dilation makes their representations more distinguishable. However, discriminability is not solely determined by distance—noise strength also plays a crucial role. Increased noise in the grid cell population reduces spatial coding accuracy. This raises a question: what’s the effect of running speed on grid cell population noise?

To investigate this, we fitted manifolds and noise covariances for the sampled datasets \({{{\mathscr{D}}}}_{s}\) using GKR. Total noise is the trace of the covariance matrix (Fig. 4A). We found that total noise increases with increasing speed (Fig. 4B, C). Compared to total noise, noise projected onto the manifold may be more relevant to information coding26. Therefore, we projected the covariance matrix onto the tangent plane of the SSM, and computed the trace of the projected covariance matrix as the projected noise. Consistent with total noise, projected noise also increases with speed (Fig. 4B, C).

A Total noise is the trace of the covariance matrix. Projected noise is the trace of the covariance matrix projected onto the SSM tangent plane. B Each dot is the measured quantity from one sampled dataset \({{{\mathscr{D}}}}_{s}\) at a speed value (see Methods, fifty sampled \({{{\mathscr{D}}}}_{s}\) with eight speed bins = four hundred data points). The line and error band show the best linear fitting line and 95% CI using BLEA. C Dots and error bars represent mean and 95% CI of the slopes estimated using BLEA (panel B shows the case of R1M2). The numbers above error bars are r-squared scores. The texts below x axis tick label represent the significance level whether the slope fitted from original data \({{{\mathscr{D}}}}_{s}\) differs from that fitted from label-shuffled data (two-sided, Bayesian method, see Methods); ***p < 0.001; **p < 0.01; *p < 0.05; NS not significant. Source data are provided as a Source Data file.

Fisher information increases with increasing speed, indicating that the effect of speed-slice manifold dilation outpaces the effect of increasing noise

Running speed has opposing effects on spatial coding. On the one hand, it expands the smooth spatial manifold (SSM), pushing neural representations of nearby locations further apart (Fig. 3E), thereby improving spatial coding. On the other hand, it increases grid cell population noise (Fig. 4C), which degrades spatial coding. To assess the overall impact, we examined (linear) Fisher information, a metric that quantifies the local discriminability of neural population representations, defined as \({\left(\partial {{\mathbf{\mu }}}/\partial {{\bf{x}}}\right)}^{T}{\Sigma }^{-1}\left(\partial {{\mathbf{\mu }}}/\partial {{\bf{x}}}\right)\), which incorporates both the noise factor (\(\Sigma\)) and the lattice area factor (lattice area is formed by tangent vectors \(\partial {{\mathbf{\mu }}}/\partial {{\bf{x}}}\)) (Fig. 5A). Fisher information is a commonly used metric for assessing the local discriminability of neural population representations. The total Fisher information, given by the trace of the Fisher information matrix, measures the overall precision of the representation, with higher values indicating better spatial coding45.

A (Linear) Fisher information mathematically combines noise covariance and tangent vectors (which determine lattice area), which is commonly used to measure the local discriminability of information from noisy neural states45. Total Fisher information is the trace of the Fisher information matrix. Each dot represents the measured total Fisher information from one dimensionally reduced sampled dataset \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) (\({{{\mathscr{D}}}}_{s}\) projected into its first six PC subspace) at a specific speed value (fifty sampled \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) with eight speed bins = four hundred data points). The line and error band show the best linear fit and 95% CI using BLEA. B Dots and error bars show the mean and 95% CI of the estimated slopes using BLEA (see Methods, panel A shows the case of R1M2). The numbers above the error bars represent r-squared scores. The texts below x-axis tick labels indicate the significance level of whether the slope fitted from the sampled dataset \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) differs from that of label-shuffled data (two-sided, Bayesian method, see Methods); ***p < 0.001; **p < 0.01; *p < 0.05; NS not significant. C We computed theoretical SCA upper bounds derived from the Fisher information (red, see Methods), and also showed the actual SCA computed directly from data (blue, similar as Fig. 1B, C, but using \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) rather than \({{{\mathscr{D}}}}_{s}\)). Dots and error bands have the same meaning as in (A). D Correlation between upper bounds and SCA. For each experimental configuration, fifty \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) and eight speed bins were used, thus four hundred data points for upper bound and actual SCA, respectively (see C). We then computed the correlation (black dots) between these two sets of four hundred data points, with error bars indicating the 95% CI obtained via Fisher transformation87. The texts below the error bars indicate the significance levels of whether the correlation differs from zero: ***p < 0.001; **p < 0.01; *p < 0.05; NS not significant (Pearson correlation test, two-sided). The results of the same analysis but using the original datasets \({{{\mathscr{D}}}}_{s}\) are similar (Supplementary Fig. 8). Source data are provided as a Source Data file.

It is well known that Fisher information is hard to estimate in a high-dimensional space24. Therefore, besides using the original \({{{\mathscr{D}}}}_{s}\), we also projected \({{{\mathscr{D}}}}_{s}\) into the first six PCs, denoted as \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\). Each \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) was then fed into GKR for fitting a GKR model. GKRs fitted from both \({{{\mathscr{D}}}}_{s}\) and \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) were analyzed identically to double-check our results on Fisher information.

We computed the total Fisher information from the fitted GKRs (see Methods, Supplementary Fig. 8A for \({{{\mathscr{D}}}}_{s}\) and Fig. 5A for \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\)). Slope analyses indicate that Fisher information increases with running speed in both \({{{\mathscr{D}}}}_{s}\) and \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) (Fig. 5B for \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\), Supplementary Fig. 8B for \({{{\mathscr{D}}}}_{s}\), although four out of nine experimental configurations in the results of \({{{\mathscr{D}}}}_{s}\) do not show statistical significance, possibly due to the curse of dimensionality). This increase in Fisher information with running speed suggests that the effect of SSM dilation outweighs that of increasing noise, leading to improved spatial coding at higher speeds, which is qualitatively consistent with the results obtained using SCA (Fig. 1B–D).

Fisher information, derived from a purely bottom-up geometric approach, in fact is intrinsically linked to SCA (Fig. 1B). Specifically, we established a theoretical upper bound for SCA directly from Fisher information (see Methods). This bound is approximately a linear function of the square root of total Fisher information. Therefore, an increase in Fisher information implies a corresponding increase in SCA.

We first tested this theoretical upper bound in synthetic datasets (Supplementary Fig. 9). We showed that the SCA computed directly from the synthetic datasets is well bounded by the theoretical upper bound predicted by the Fisher information. Moreover, the upper bound exhibits trends consistent with actual SCA across different dataset parameters (e.g., number of data points, dimensionality).

We then applied this analysis to grid cell datasets, computing the theoretical SCA upper bounds using Fisher information fitted from GKR. The computed upper bounds are well above the actual SCA values (Fig. 5C, Supplementary Fig. 8C). More importantly, both SCA and its upper bound exhibit similar speed modulation effects. The correlations between the predicted upper bounds and actual SCA are positive (Fig. 5D, Supplementary Fig. 8D).

Overall, Fisher information, derived from the geometric properties of the SSM and noise, quantitatively aligns with decoding performance measured via SCA. Both approaches support the conclusion that grid cell spatial coding improves with increasing running speed.

Results from GKR agree with those from a modified method that does not assume data normality

GKR approximates the distribution \(p\left({{\bf{r}}}|{{\bf{x}}}\right)\) as a normal distribution, which might not always valid (see Methods and Supplementary Fig. 10). To verify the validity of our previous sections’ results, we propose a modified approach—Gaussian process regression with kernel sampling (GKR-S)—that does not assume data normality. GKR-S contains two steps: first, it uses Gaussian process regression to estimate the manifold (as in GKR); second, it resamples from neighboring labels \({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\) to generate pseudo-samples and thereby estimate the conditional distribution (see Methods).

We evaluated GKR-S on a synthetic dataset and found that it performs well only for low-dimensional data (Supplementary Fig. 11A). Therefore, we projected the grid cell datasets onto their first six PCs and applied both GKR-S and GKR to estimate geometric properties (e.g., manifold size, Fisher information etc.). The two methods yielded closely matching results, both revealing better spatial representation at higher running speeds, indicating that, despite its normality assumption, GKR remains a reasonable method to estimate these geometric properties (Supplementary Fig. 11).

Speed modulation effects on grid cell information geometry can be qualitatively reproduced by a simulated independent Poisson Speed-Gain (IPSG) grid cell population

What do these population results imply about individual grid cells? Here, we propose a simple model—the Independent Poisson Speed-Gain (IPSG) grid cell population, which contains three key assumptions: (1) grid cell firings are independent; (2) grid cells are Poisson neurons; and (3) the effect of running speed on individual grid cell firing is a monotonically increasing gain factor11.

Using these assumptions, we derived analytical expressions for manifold size, noise strength, and Fisher information as functions of running speed. Both these analytical results and additional numerical simulations demonstrate that the IPSG can qualitatively reproduce the observed positive speed modulation effects in Figs. 3E, 4C, 5B (Supplementary Fig. 12, see Methods).

Intuitively, in IPSG, increasing speed amplifies each cell’s firing without altering its spatial tuning. Because manifold size scales roughly with firing rate, it too grows with speed (see Methods, Fig. 3E, Supplementary Fig. 12). Poisson-neuron assumption implies that noise (the standard deviation) scales as the square root of the firing rate, so noise increases more slowly than firing rate. As a result, each cell’s signal-to-noise ratio—and hence its Fisher information—rises with speed under the independent-firing assumption (see Methods). More loosely, IPSG suggests that faster running increases grid-cell firing (without disrupting the rate map too much) more than noise and the effects of noise correlations are negligible, explaining the observed speed modulations on the manifold (Figs. 3E, 4C, 5B). Whether this picture holds in real grid-cell data remains unclear. We leave more precise theoretically modeling and validations for future work, while this paper focuses more on data-driven descriptive analysis.

Grid cell activity noise correlation is information-detrimental

Our results indicate that grid cell spatial coding improves at high speeds, based on our analysis of simultaneously recorded grid cell population activity. A key advantage of analyzing population activity, as opposed to individual neural responses, is that it inherently accounts for the effects of noise correlation on spatial coding. Here, we use the term ‘noise correlation’ specifically to refer to the cell-to-cell noise covariance (i.e., between two different cells). Noise correlation can be information-beneficial or information-detrimental, depending on the geometric relation between noise covariance and the information encoding manifold (Fig. 6A, also see a two-neuron toy example as an illustration in Supplementary Fig. 13)24,26. In this section, we explicitly examine how noise correlation influences grid cell spatial coding.

A Noise correlation can either enhance (“information‐beneficial”) or impair (“information‐detrimental”) coding relative to a model of independent firing grid cells (IFGC). B IFGC’s GKR is identical to the GKR fitted from the original sampled dataset \({{{\mathscr{D}}}}_{s}\), except that the off-diagonal elements of the covariance matrix are set to zero (see texts and Methods). Total noise is the trace of the covariance matrix. Left: Each dot represents the total noise from one GKR model fitted to a specific \({{{\mathscr{D}}}}_{s}\) at a given speed (fifty sampled \({{{\mathscr{D}}}}_{s}\) with eight speed bins = four hundred data points). The lines and error bands show the best linear fitting and 95% CI using BLEA. Right: We computed the speed-averaged total noise as the average total noise across all speeds (from 5 cm/s to 45 cm/s) per \({{{\mathscr{D}}}}_{s}\). Since fifty \({{{\mathscr{D}}}}_{s}\) were used, we have fifty speed-averaged total noise values per experimental configuration, allowing fitting a normal distribution. Dots and error bars show the mean and 95% CI of the estimated speed-averaged total noise distributions (see Methods). The texts below each x-axis tick label indicate the significance level of whether the speed-averaged total noise fitted from the original GKR differs from that of IFGC GKR (two-sided, Bayesian method, see Methods). ***p < 0.001; **p < 0.01; *p < 0.05; NS not significant. C, D Same as (B), but measuring projected noise and total Fisher. Statistical testing (Bayesian Methods) on projected noise (or total Fisher) is one-sided—whether the projected noise (or total Fisher) obtained from the original GKR is greater (or smaller) than IFGC GKR (see Methods). E The key idea of SCA is to assess classification accuracy between data points drawn from two spatial boxes. To compute IFGC’s SCA, we generated a “trial‐shuffled” dataset by permuting each cell’s firing activity across all data points within the same box, allowing eliminates intercellular noise correlations. Illustrations are the same as panel B, except showing SCA at different speeds. Statistical testing is one-sided (Bayesian method, see Methods), indicating whether the SCA from the original dataset is smaller than IFGC’s SCA. Source data are provided as a Source Data file.

To explore the role of noise correlation in spatial coding, we compared outcomes from the original grid cell dataset (\({{{\mathscr{D}}}}_{s}\)) with those from a hypothetical population of independent firing grid cells (IFGC)26. A conventional method to eliminate noise correlation is trial shuffling. For neural states recorded under identical conditions (e.g., the same spatial location) across different trials, one can randomly permute the firing profile of each neuron across trials. This shuffling preserves single-cell statistics while effectively disrupting intercellular noise correlation.

Although the OF task does not include repeated trials, the GKR serves as a generative model that can produce multiple data points for each condition. Specifically, for a given condition \({{\boldsymbol{x}}}\), the fitted GKR generates (theoretically) an infinite number of data points drawn from a Gaussian distribution (Eq. 1). After the trial-shuffling procedure is applied to these generated data points, the mean remains unchanged, but the covariance matrix becomes diagonal, with all off-diagonal elements set to zero. Thus, the IFGC’s GKR model is equivalent to the original GKR model with a purely diagonal covariance matrix.

We computed the total noise levels for both the GKR and IFGC GKR models (Fig. 6B). As expected, removing the off-diagonal elements of the covariance matrix does not alter its trace, leaving the overall noise unchanged. However, this manipulation affects the projected noise: the GKR model exhibits a higher projected noise than the IFGC GKR model (Fig. 6C). This finding indicates that cell-to-cell noise correlations in grid cell activity “reshape” the noise structure, directing a larger fraction of noise onto the torus surface. Consistent with this, increasing noise correlation leads to smaller Fisher information (Fig. 6D), underscoring their detrimental impact on information encoding.

To further validate that the correlation is information-detrimental, we employed a top-down decoding approach based on linear classification accuracy of neural states from two spatial boxes (i.e., SCA in Fig. 1B), without using GKR. For the IFGC SCA, we randomly permuted each neuron’s firing rates within each box. This procedure preserves the single-cell statistics while effectively disrupting the noise correlations. Analysis of the permuted data showed that the IFGC SCA exceeded the SCA from the original datasets (Fig. 6E), thereby confirming that noise correlation is information detrimental.

Discussion

Accurate internal spatial representation is essential for navigation, and grid cells are widely regarded as a fundamental component of this process2,3. Previous analyses of speed modulation on grid cell coding have predominantly focused on individual cells or cell pairs11,12,13,14, thereby neglecting the influence of population noise covariance—a factor that can significantly impact coding fidelity26. Here, we developed GKR to study the population coding from an information geometry perspective. We demonstrated that the grid cell manifold expands in size as speed increases. This manifold dilation effect exceeds the increase in noise, as indicated by the higher Fisher information observed at high speeds. Overall, our results favor the hypothesis that increasing running speed increases grid cell spatial coding accuracy. GKR can be a powerful tool to study neural population representation from an intuitive information geometric perspective.

However, GKR has its limitations. First, it does not perform well in very high-dimensional spaces, which may require a larger number of data points (Fig. 2 and Supplementary Fig. 4). This issue may be mitigated by first applying a dimensionality reduction method to the data46. Second, GKR assumes that the data follows a normal distribution. While the normal distribution is a commonly used approximation34,38—GKR may produce unreliable results if the true distribution deviates significantly from normality. It is advisable to perform a normality test (Supplementary Fig. 10), compute test data’s likelihood34, or use an alternative method (e.g., GKR-S, Supplementary Fig. 11) to verify the results. Finally, GKR is only applicable when the data explicitly contains labels. In other words, its purpose is to evaluate the geometric representation properties of known labels of interest. For example, in vision42, navigation, or working memory47 studies, the labels of interest are often defined by stimulus parameters. However, in cases where one aims to evaluate the representation structure of latent variables, it is necessary to first apply latent variable inference methods46,48, and then apply GKR. Overall, future improvements to GKR could focus on enhancing its performance in high-dimensional settings, adapting it for non-normal data, and extending its applicability to scenarios without explicit labels.

We analyzed the population-level properties of grid cell activities, but what are the implications of these findings for individual grid cells? There are two major models of grid cells49: the rate-based model and the oscillatory-interference model. First, in some rate-based models (continuous attractor networks20), running speed serves as an input to the grid-cell network. Faster speeds may elevate firing rates11,12, which can sharpen the spatial rate map, enhance the signal-to-noise ratio, and thus boost population Fisher information (Fig. 5). Although some other rate-based models contain normalization mechanisms implying the opposite—that the population mean firing rate should not change with speed (e.g., self-organizing models50). Secondly, in the oscillatory-interference model, running speed modulates the frequency of membrane potential oscillation which might lead to more accurate spatial fields49 (although a study suggests that MEC theta frequency is modulated by acceleration rather than speed51). Finally, higher running speeds imply more frequent encounters with environmental boundaries, allowing for more frequent corrections in grid coding18. Despite these conjectures, it should be noted that real grid cells are complicated, involving correlated noise. Models based solely on individual grid cells, without accounting for noise correlations, may result in substantial estimation errors, as shown by the pronounced discrepancies between the original data and the IFGC model in Fig. 6. The connection between population results and individual grid cells remains for future exploration.

Rats typically exhibit higher running speeds in novel environments52, as implied by this paper, which might enhance grid coding thus supporting more effective adaptation to novel surroundings. Grid cell representations are known to change in novel environments53,54,55. Some studies suggest that the grid pattern rescales in a novel environment (e.g., Barry et al. 201256); others propose that rats refine their grid coding by learning the environment’s boundaries18,55. These alterations in individual grid cell patterns may reflect corresponding changes in the representation geometry, such as a rescaling of the toroidal structure or localized distortions near environmental boundaries. The effects of environmental modulation on population-level representations remain an open question for future investigation.

Beyond grid cells, how does running speed influence other cell types in the navigation system? Hardcastle et al. found that the spatial decoding accuracy of MEC neurons improves at higher speeds, suggesting that increased speed generally benefits MEC spatial representation10. This aligns with findings that, similar to grid cells, running speed predominantly increases the firing rates of other MEC cell types, including head direction cells, speed cells, and conjunctive cells11,17. However, the modulation effects of running speed on hippocampal cells may be more complex. Grid cells are modeled as a primary feedforward input to the hippocampus8,57, suggesting that running speed should also enhance place cell representation as well. However, this feedforward model is a simplification, as the hippocampus sends feedback projections to the MEC58. Moreover, place cells receive inputs not only from grid cells but also from other sources, such as head direction cells57. These additional mechanistic factors obscure how running speed modulates place cell activity. Indeed, earlier research suggests that the majority of place cells are not strongly modulated by running speed, at least not in an obvious manner59. Nevertheless, beyond speed modulation, movement direction could influence the representational geometry of place cells, as it has been shown to reshape place fields60. Extending geometric analyses to other cell types remains an interesting avenue.

Running speed modulation effects have been widely observed across other brain regions as well61. For example, locomotion primarily suppresses neural activities in the auditory cortex62,63. In contrast, locomotion generally enhances V1 neuron activity64,65, but may turn to suppression after certain high running speeds66. In fact, the effect of locomotion modulation is usually entangled with other modulation factors61,66,67. For example, V1 neural activities are jointly influenced by both animal’s running speed and visual stimuli movement speed65. This influence can be mathematically expressed as a weighted sum of the two speed contributions, with weights varying diversely across neurons. The geometric approach has been shown to be a practically effective method to assist in understanding the diversity of individual neurons from a comprehensive population-level perspective41,68,69. GKR can be a useful tool to understand the diversity of running speed modulations in different brain areas.

One advantage of GKR is its ability to provide detailed inspection of location geometry. Local geometry reveals the intricacies of information coding within a small range of values, which is particularly useful for comparing the representation bias of different information values. Representation bias has been observed in the navigation system60,70. For instance, place and grid cells’ fields tend to shift towards reward locations, which has been interpreted as an overrepresentation of rewarded locations70,71,72,73. From a geometric perspective, overrepresentation implies larger Fisher information, which can be attributed to either local manifold dilation, reduced projected noise, or both (Figs. 3, 4, 5). The concepts of local manifold dilation and reduced noise have been supported in working memory studies: (1) The working memory system may use attractors to reduce noise47,74,75. (2) Recurrent neural networks (RNNs) trained on working memory tasks utilize larger state spaces to represent common values, thus yield improved Fisher information47. In RNNs, manifolds are often observed to be quite simple, usually taking the form of a low-dimensional ring structure47. This simplicity allows the size of the encoding space to be measured using straightforward methods. However, in the actual brain, manifolds can be highly complex and high-dimensional69. The GKR method illustrated in this paper can be particularly helpful in studying the local structure of these complex, high-dimensional manifolds, assisting the analysis of representation bias.

Methods

Experimental data

Experimental data were collected by Gardner et al.35. Rats performed open-field foraging (OF) tasks in a 150 cm wide OF box. Three-dimensional motion capture tracked the rats’ head positions and orientations using five retroreflective markers attached to the implant during recordings. The 3D marker positions were then projected onto the horizontal plane to determine the rats’ 2D positions. Neuropixel probes recorded neural activity in the MEC. Neural activity were then processed using a clustering method to classify neurons into grid cells and non-grid cells35. In total, these procedures yielded nine sets of simultaneously recorded grid cell population activities (i.e., nine experimental configurations): rat ‘R’ day 1 modules 1, 2, 3; rat ‘R’ day 2 modules 1, 2, 3; rat ‘S’ module 1; and rat ‘Q’ modules 1, 2. We used a shorthand notation, e.g., “R1M2”, to represent rat R (“R”) on day 1 (“1”) and grid cell module two (“M2”). Note that “R1” does not necessarily refer to the same day as “S1”. Day labels are used solely to distinguish recordings from the same rat. These processed data are available from Gardner et al. 202235.

Grid cell rate map

The grid cell rate maps shown in Fig. 1A and Supplementary Fig. 1 were computed as follows. Firing rate was estimated by dividing spike counts by 10‐ms time bins and then convolving the result with a Gaussian filter with a standard deviation of 20 ms. To estimate the averaged firing rate at different locations, the OF box (\(150\times 150\) cm) was digitized into small spatial bins of \(3\times 3\) cm. Firing rates at each visited spatial bin were averaged, and those at each unvisited bin were set to 0. To correct the effect of unvisited bins, we created a mask \(({M}_{0})\) with a value of 1 at the visited bins and 0 at unvisited bins. Next, both the firing rate and mask \({M}_{0}\) were spatially convolved with a 2D Gaussian filter with a standard deviation σ = 8.25cm. The convolved firing rate was divided by the convolved \({M}_{0}\) to obtain the final corrected rate map for each cell.

Gridness

Gridness measures how well a grid cell’s rate map conforms to a hexagonal pattern12. Some grid cells’ rate maps have incomplete peaks at the OF box boundaries. To correct this boundary effect, the rate map was first padded by 30 cm on each side. This padding was performed by linearly ramping the firing rate at the edges to zero over the outer 30 cm of the padded area. (implemented using the ‘numpy.pad‘ function in Python, with ‘mode = ‘linear_ramp’‘). Autocorrelating the padded rate map produced an autocorrelation map. The boundary effect of the autocorrelation was corrected by padding with zeros on all sides (implemented using ‘scipy.signal.correlate2d (padded_rate_map, padded_rate_map, mode = ‘same’, boundary = ‘fill’, fillvalue = 0)‘).

The autocorrelation map was masked by two circles centered at the map’s center. The outer circle’s diameter matched the edge length of the autocorrelation map. The inner circle’s area was 15% of the outer circle’s area to filter out center peaks on the map. Only the regions between the two circles were kept; the rest were set to 0. Next, the masked autocorrelation map was correlated with its rotated versions (rotated by 30, 60, 90, 120, and 150 degrees, respectively). A well-defined grid cell should have peak correlation values at 60 and 120 degrees, and valleys at 30, 90, and 150 degrees. Gridness was calculated by subtracting the average valley values (30, 90, and 150 degrees) from the average peak values (60 and 120 degrees).

Data preprocessing

Time was binned in 10‑ms intervals. Spikes count at each time bin was computed, and then divided by 10 ms as an estimate of firing rate. The firing rate was then temporally smoothed using a Gaussian kernel with a standard deviation of 20 ms. To estimate the rat’s speed, velocity was first computed by computing the finite differences of the rat’s positions, i.e., \(\left({{{\bf{p}}}}_{i+1}-{{{\bf{p}}}}_{i-1}\right)/20\) where \({{{\bf{p}}}}_{i}\) is the rat’s position at time bin \(i\). The velocity’s L2 norm is the speed. This procedure provided a feature map indicating grid cell firing rates, with rows representing time bins and columns representing grid cell IDs; and a label with rows representing time bins and three columns indicating \(x\) location, \(y\) location, and speed. Data with speeds lower than 5 cm/s or higher than 45 cm/s were excluded. Grid cells with low gridness (below 0.1) were also excluded. The combined feature map and label are termed as a grid cell dataset, denoted as \({{\mathscr{D}}}\). There are 9 grid cell datasets corresponding to different experimental configurations (different rats, grid cell modules, and different days). The number of grid cells in each dataset is: 113 in R1M1, 132 in R1M2, 51 in R1M3, 140 in R2M1, 153 in R2M2, 62 in R2M3, 96 in S1M1, 81 in Q1M1, 53 in Q1M2.

The speed distribution in \({{\mathscr{D}}}\) is highly biased. It has more data in low-speed region than in the high-speed region (Supplementary Fig. 2). This biased distribution of data may cause potentially biased evaluation. To avoid this, we performed resampling on the dataset as follows. Speeds ranging from 5 cm/s to 45 cm/s were binned into 5 cm/s bins. Data in each speed bin was collected. Let \({N}_{{sp}}^{\min }\) denote the minimum number of data points among all speed bins, we defined \(K\) as the \(\min \{{N}_{{sp}}^{\min },{\mathrm{10,000}}\}\). In each speed bin, we sampled \(K\) data points (without replacement). Sampled data points from different speed bins were combined to create a single sampled dataset, denoted as \({{{\mathscr{D}}}}_{s}\). \({{{\mathscr{D}}}}_{s}\) has a roughly equal amount of data at each speed value. The above sampling procedure was repeated 50 times, resulting in 50 sampled datasets \({{{\mathscr{D}}}}_{s}\) per experimental configuration.

As a baseline comparison, we also shuffled the data \({{\mathscr{D}}}\) by permuting the label timestamps, thereby disrupting the relationship between neural states and labels. This permuted data was then processed using the same sampling procedure as described above, yielding 50 label-shuffled-sampled datasets.

The dimensionality of \({{{\mathscr{D}}}}_{s}\) is the number of grid cell, which can be more than 100. This can pose a challenge in accurately estimating covariance and Fisher information24. Therefore, we also performed PCA on \({{{\mathscr{D}}}}_{s}\), projecting onto the first 6 principal components to obtain \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\). The same projection procedure was applied to the shuffled datasets. These projected datasets were used for estimating Fisher information in Fig. 5, and comparing to GKR-S in Figure 11.

Spatial coding accuracy

A common way to evaluate the quality of neural population representation is to assess how accurately a simple linear classifier can distinguish between neural population representations of two adjacent experimental conditions (e.g., stimulus parameters or locations in this paper)22. In this paper, this type of classification accuracy is referred to as spatial coding accuracy (SCA, Fig. 1B).

For a given sampled dataset \({{{\mathscr{D}}}}_{s}\), we split it into 8 speed-split datasets (SSD) based on speed values. Specifically, data with speed values within \(\left[{v}_{i},{v}_{i}+5{\mbox{cm}}/{\mbox{s}}\right]\) were collected as one SSD, where \({v}_{i}={\mathrm{5,10}},\ldots,40{\mbox{cm}}/{\mbox{s}}\). For each SSD, we randomly sampled 300 spatial locations \({{{\bf{x}}}}_{c}\). For each location \({{{\bf{x}}}}_{c}\), we constructed two adjacent locations \({{{\bf{x}}}}_{\pm }={{{\bf{x}}}}_{c}\pm {{\rm{\delta }}}l\hat{{{\bf{e}}}}\), where \(\hat{{{\bf{e}}}}\) is a unit vector with a random angle, \({{\rm{\delta }}}l=5{\mbox{cm}}\). Each \({{{\bf{x}}}}_{\pm }\) defines a small spatial box, centered at \({{{\bf{x}}}}_{\pm }\) with an edge length of 10 cm. Data from the two boxes were collected. To ensure fair classification, the data from the box with the larger number of data points were subsampled (without replacement) so that both boxes had an equal number of data points. The data from the two boxes were then concatenated. If the total number of data points was less than 50, this \({{{\bf{x}}}}_{c}\) was discarded due to insufficient data. Otherwise, the concatenated data was split into train and test sets (0.67:0.33). A logistic classifier (with an L2 regularization coefficient C = 1, using the scikit-learn package) was then trained on the train set and evaluated on the test set. The classification accuracy averaged across all valid \({{{\bf{x}}}}_{c}\) is defined as the SCA of that speed bin \(\left[{v}_{i},{v}_{i}+5{\mbox{cm}}/{\mbox{s}}\right]\). This procedure was applied to all speed bins, \({{{\mathscr{D}}}}_{s}\) (or \({{{\mathscr{D}}}}_{s}^{\left(6\right)}\) in Fig. 5C), and the label-shuffled dataset.

Besides using logistic regression for classification (Fig. 1), we also computed SCA using perception and support vector machines, see the results in Supplementary Fig. 3.

Bayesian linear ensemble averaging and statistical testing

Metrics considered in this paper include SCA (e.g., Figs. 1C, D, 5C), torus radius, lattice area (e.g., Fig. 3E), total and projected noise (e.g., Fig. 4B, C), and Fisher information (e.g., Fig. 5, Supplementary Figs. 8A, B), etc. For each sampled dataset \({{{\mathscr{D}}}}_{s}\), we computed the metric values at different speed bins, forming a metric-speed dataset consisting of metric value \({t}_{i}\) and the corresponding speed value \({v}_{i}\), where \(i\) indexes the i th data point in the metric-speed dataset. For example, one dot in Fig. 1C is one data point in the SCA-speed dataset (with a corresponding \({{{\mathscr{D}}}}_{s}\)). For convenience, we define \({{{\bf{x}}}}_{{{\boldsymbol{i}}}}=\left({v}_{i},1\right)\), which includes speed and a constant for a bias parameter. Currently, we limit our discussion to one \({{{\mathscr{D}}}}_{s}\), and later we will ensemble results from different \({{{\mathscr{D}}}}_{s}\) by Bayesian model averaging39.

Given a metric-speed dataset from one \({{{\mathscr{D}}}}_{s}\), we used Bayesian linear regression (BLR) to fit the linear relationship between a metric and speed38. The benefit of BLR over ordinary least squares is that BLR naturally provides a way to set the regularization parameter (by setting the prior) and offers the posterior distribution of inferred parameters (e.g., slope), thus enabling a pure Bayesian analysis of the data. We follow the implementation of BLR in Bishop 200638.

In BLR, the relationship between metric and speed is modeled as

where \(\epsilon \sim {{\mathscr{N}}}\left(\epsilon |0,{\beta }^{-1}\right)\), \(\beta\) is a scalar representing precision, and \(y={{{\bf{w}}}}^{T}{{\bf{x}}}\). This equation indicates that the conditional distribution \(p\left(t|{{\bf{x}}},{{\bf{w}}}\right)\) is a Gaussian distribution with mean \(y\) and variance \({\beta }^{-1}\). The prior for parameter \({{\bf{w}}}\) is \({{\bf{w}}}\sim {{\mathscr{N}}}\left({{\bf{w}}}|0,{\alpha }^{-1}{\mathbb{I}}\right)\) where \({\mathbb{I}}\) is a \(2\times 2\) identity matrix, and \(\alpha\) is a scalar. Given the prior and conditional distribution, we can derive the posterior distribution \(p\left({{\bf{w}}}|{{\bf{t}}},X\right)\) and predictive distribution \(p\left({t}_{q}|{{{\bf{x}}}}_{q},{{\bf{t}}},X\right)\), where \({{{\bf{x}}}}_{q}\) is the query label, \({{\bf{t}}}\) and \(X\) are the data points in the metric-speed dataset, \({t}_{q}\) is the prediction. Both posterior and predictive distributions are Gaussian distributions. Hyperparameters \(\alpha\) and \(\beta\) were estimated by maximizing the marginal likelihood \(p\left({{\bf{t}}}|\alpha,\beta,X\right)\) through an iterative method38.

Overall, given a metric-speed dataset (obtained from one sampled dataset \({{{\mathscr{D}}}}_{s}\)), BLR provides the posterior distribution \(p\left({{\bf{w}}}|{{\bf{t}}},X\right)\) and predictive distribution \(p\left({t}_{q}|{{{\bf{x}}}}_{q},{{\bf{t}}},X\right).\) To simplify the notation, the two distributions are written as \(p\left({{\bf{w}}}|{{{\mathscr{D}}}}_{s}\right)\) and \(p\left({t}_{q}|{{{\bf{x}}}}_{q},{{{\mathscr{D}}}}_{s}\right)\), which follow \({{\mathscr{N}}}\left({{\bf{w}}}|{{{\bf{m}}}}_{w,s},{\Sigma }_{w,s}\right)\) and \({{\mathscr{N}}}\left({t}_{q}|{m}_{t,s},{\Sigma }_{t,s}\right)\), respectively.

We aggregate results from sampled datasets \({{{\mathscr{D}}}}_{s}\), i.e., \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\) and \(p\left({t}_{q}|{{{\bf{x}}}}_{q}{,}{{\mathscr{D}}}\right)\), by Bayesian model averaging39. Since each \({{{\mathscr{D}}}}_{s}\) is a random subsampling (under the restriction of an equal number of data points in each speed bin, see Methods: Data Preprocessing) of the \({{\mathscr{D}}}\), \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)={\sum }_{s}p\left({{\bf{w}}}|{{{\mathscr{D}}}}_{s}\right)p\left({{{\mathscr{D}}}}_{s}|{{\mathscr{D}}}\right)={\sum }_{s}p\left({{\bf{w}}}|{{{\mathscr{D}}}}_{s}\right)/B\) where \(B=50\) is the total number of sampled datasets. \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\) is a mixture of Gaussian distributions. For simplicity, we approximate it as a single Gaussian distribution (see details in SI Methods). The mean of the approximated \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\) is

The covariance is

where the first term is the average of covariances, second term represents bias.

Similarly, \(p\left({t}_{q}|{x}_{q}{,}{{\mathscr{D}}}\right)\) can be approximated by a Gaussian distribution with certain mean and covariance matrix (see Supplementary Methods). In fact, the mean and covariance matrix are in forms that, after a Gaussian approximation on \(p\left({t}_{q}|{x}_{q}{,}{{\mathscr{D}}}\right)\), \({t}_{q}\) is still a linear function of \({{\bf{w}}}\), as can be explicitly written as below

where \({{\bf{w}}}\sim {{\mathscr{N}}}\left({{\bf{w}}}{|}{{{\bf{m}}}}_{w},{\Sigma }_{w}\right)\), \({{\boldsymbol{\epsilon }}}\sim {{\mathscr{N}}}\left({{\boldsymbol{\epsilon }}};0,{\sum }_{s}{\beta }_{s}^{-1}/B\right)\), and \({\beta }_{s}\) is the best hyperparameter fitted using an iteration method from a sampled dataset \({{{\mathscr{D}}}}_{s}\) (see above). Overall, we obtained \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\) and \(p\left({t}_{q}|{{{\bf{x}}}}_{{{\boldsymbol{q}}}}{,}{{\mathscr{D}}}\right)\), which are both Gaussian distributions. This overall method pipeline is called Bayesian linear ensemble averaging (BLEA) in this paper. Mathematical details can be found in Supplementary Methods.

\(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\) and \(p\left({t}_{q}|{{{\bf{x}}}}_{q}{,}{{\mathscr{D}}}\right)\) allow us to estimate the confidence interval (CI) and assess statistical significance from Bayesian framework40. First, since the predictive distribution \(p\left({t}_{q}|{{{\bf{x}}}}_{q}{,}{{\mathscr{D}}}\right)\) is a Gaussian, the 95% CI of the prediction (two-tailed) is given by an interval [a, b] such that \(\Phi \left(\left(a-\mu \right)/\sigma \right)=0.025\) and \(\Phi \left(\left(b-\mu \right)/\sigma \right)=0.975\), where \(\Phi \left(\cdot \right)\) is a cumulative distribution function of a standard Gaussian distribution, \(\mu\) and \(\sigma\) are the predictive distribution mean and standard deviation. One example CI can be found in Fig. 1C, which well covers most of data points. 95% CI is also called a credible interval in the Bayesian framework40.

Similarly, knowing the posterior distribution of slope \(p\left({{\bf{w}}}|{{\mathscr{D}}}\right)\), we can also compute its 95% CI. For example, this is illustrated by the error bars in Fig. 1D.

We are interested in whether the slope fitted from \({{\mathscr{D}}}\) is statistically different from that fitted from the label-shuffled dataset (e.g., Fig. 1D). Therefore, we also prepared label-shuffled \({{{\mathscr{D}}}}_{s}\) from \({{\mathscr{D}}}\) (see Methods: Data Preprocessing), and ran the above analysis to obtain their label-shuffled posterior and predictive distributions. Both of the posterior distributions of the original and label-shuffled datasets are Gaussian, hence we defined the slope difference \(d={w}^{{data}}-{w}^{{shuffle}}\), which also follows a Gaussian distribution with a mean equal to the difference between the two slope means and a variance equal to the sum of the two variances. Based on the distribution of \(d\), probability of direction, \({p}_{d}\), can be computed as the maximum of \(P\left(d > 0\right)\) and \(P\left(d < 0\right)\). \({p}_{d}\) represents the probability that \(d\) to be positive or negative (depending on which is the most probable). It directly relates to p-value (from a frequentist framework, two-sided) by \(p=2\times \left(1-{p}_{d}\right)\), where the null hypothesis is that \(d=0\) and alternative hypothesis is that \(d\ne 0\)40. Thus, statistical statements can be made based on the p-values. One-side p value is \({p}_{d}\) or \(1-{p}_{d}\) depending on the one-side direction.

We are also interested in whether the speed-averaged metric computed under the \({{\mathscr{D}}}\) is statistically different from that computed under the hypothetical independent firing grid cell assumption (IFGC, Fig. 6). For each \({{{\mathscr{D}}}}_{s}\), we averaged the metric value across speed values. This gives one \({\bar{t}}_{s}\). Fifty \({\bar{t}}_{s}\) were concatenated and fitted by a Gaussian distribution using the maximum log-likelihood method, as an approximation of \(p\left({\bar{t}}_{s}|{{\mathscr{D}}}\right)\). Therefore, we can use the same method above to determine whether the speed-averaged metric computed under original dataset is statistically different from that computed from the IFGC (Fig. 6B–E).

Bin average and Ledoit-Wolf estimator

We approximate the neural population responses (neural states for short) as a Gaussian distribution:

where \({{\bf{r}}}\in {{{\mathscr{R}}}}^{N}\) represents a neural state containing N neurons, and \({{\bf{x}}}\in {{{\mathscr{R}}}}^{M}\) represents M labels. Labels are defined broadly. They can be stimulus parameters (e.g., grating image orientation, object positions), an agent’s latent state (e.g., latent dynamics factor, emotion), or an agent’s behavioral labels (e.g., agent speed, agent position). \({{\mathbf{\mu }}}\) is the mean of neural state, modeled as a continuous function of the labels. \({{\mathbf{\mu }}}\) is also referred to as a manifold. \({{\boldsymbol{\epsilon }}}\) is white noise with a covariance \(\Sigma \left({{\bf{x}}}\right)\). Given noisy neural states \({{\bf{r}}}\) and corresponding labels \({{\bf{x}}}\), our goal is to infer the smoothly varying manifold \({{\mathbf{\mu }}}\) and covariance \(\Sigma\).

Bin averaging is a straightforward estimation method. This approach divides the entire range of label \({{\bf{x}}}\) into small bins. Data points \({{{\bf{r}}}}_{i}\) within each bin are considered to have the same label \({{{\bf{x}}}}_{i}\). Hence, the manifold can be estimated by sample average \({{\mathbf{\mu }}}\left({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)={\left\langle {{\bf{r}}}\right\rangle }_{{{{\bf{x}}}}_{{{\boldsymbol{i}}}}}\), where \({\left\langle \cdot \right\rangle }_{{{{\bf{x}}}}_{{{\boldsymbol{i}}}}}\) denotes averaging over the data points within bin \({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\). Similarly, the covariance \(\Sigma\) can be estimated by the sample covariance matrix.

However, when the number of data points in each small bin is sparse and the neural state dimensionality is high (i.e., a large number of recorded neurons), bin averaging can lead to unreliable—and sometimes even non-invertible—estimation of the covariance matrix24. To address this, the shrinkage method was proposed. This method is equivalent to adding L2 regularization to the maximum likelihood estimation of the covariance matrix, guiding the estimation towards a more structured assumption (e.g., an identity matrix)43. In particular, this paper uses:

where \(S\) is the sample covariance, \({{\rm{\lambda }}}\) is the shrinkage coefficient estimated by the Ledoit-Wolf (LW) shrinkage algorithm43, \(N\) is the number of neurons, and \({\mathbb{I}}\) is an identity matrix. This algorithm is implemented by a Python function ‘sklearn.covariance.LedoitWolf‘.

Gaussian process with Kernel Regression

One disadvantage of the bin average and LW methods is that the estimation of one bin’s covariance does not use data from adjacent bins. Ideally, the manifold and covariance matrix are smooth over label values. Data in adjacent bins provide certain information about the current bin. Therefore, we developed the Gaussian Process with Kernel Regression (GKR) method to infer smoothly varying manifold and covariance from noisy neural states. GKR has two major steps: (1) inferring the manifold via a Gaussian process, and (2) inferring the covariance matrix. Note that while Gaussian processes have been used in previous studies to infer the firing of individual grid cells76,77, our method, GKR, which is partially based on Gaussian processes, focuses on cell-to-cell statistics.

In step 1, each component of \({{\bf{r}}}\) across all time bins is standardized to have a mean of zero and a variance of one. Denoting the standardized \({{\bf{r}}}\) as \(\widetilde{{{\bf{r}}}}\). \(\widetilde{{{\bf{r}}}}\) then is modeled as \(\widetilde{{{\mathbf{\mu }}}}+{\beta }^{2}{{\mathbf{\eta }}}\), where \({{\mathbf{\eta }}}\) is a standard Gaussian noise, and \(\beta\) is a scalar parameter. The manifold \(\widetilde{{{\mathbf{\mu }}}}\) is modeled as an N-independent Gaussian process written as \(\widetilde{{{\mathbf{\mu }}}}\sim {{\mathscr{G}}}{{{\mathscr{P}}}}^{N}\left(0,{k}_{{{\rm{\mu }}}}\right)\), i.e., with zero mean and a kernel function \({k}_{{{\rm{\mu }}}}:{{{\mathscr{R}}}}^{M}\times {{{\mathscr{R}}}}^{M}\to {{\mathscr{R}}}\) to control the “closeness” of \(\widetilde{{{\mathbf{\mu }}}}\) given two different labels \({{\bf{x}}}\)38. A shared kernel for all components of \(\widetilde{{{\mathbf{\mu }}}}\) is used in this paper. Although the kernel is shared by all components \(\widetilde{{{\mathbf{\mu }}}}\), it has different parameters for different components of the label, i.e., \({k}_{{{\rm{\mu }}}}\left({{\bf{x}}},{{{\bf{x}}}}^{{{{\prime} }}}\right)={\prod }_{i=1}^{M}k\left({x}_{i},{x}_{i}^{{\prime} }\right)+c\), where \({x}_{i}\) is the i th component of a label \({{\bf{x}}}\), and \(c\) is a constant parameter. The kernel for \({x}_{i}\) is

where \({{{\rm{\sigma }}}}_{i}\) and \({l}_{i}\) are parameters. If the \({x}_{i}\) is a circular variable, a sine wrapping is applied:

where \({p}_{i}\) represents the period of the circular variable. Based on all these modeling, the problem of inferring \(\widetilde{{{\mathbf{\mu }}}}\) from noisy data \(\widetilde{{{\bf{r}}}},{{\bf{x}}}\) becomes a classical Gaussian process regression problem, where the parameters \(\{\beta,{l}_{i},{{{\rm{\sigma }}}}_{i},{c}_{i}\}\) are optimized to maximize the log-likelihood of a joint Gaussian distribution for \(\widetilde{{{\mathbf{\mu }}}}\sim {{\mathscr{G}}}{{{\mathscr{P}}}}^{{{\mathscr{N}}}}\left(0,{k}_{{{\rm{\mu }}}}\right)\). Finally, \(\widetilde{{{\mathbf{\mu }}}}\) is unstandardized back to \({{\mathbf{\mu }}}\).

In many scenarios, the label \({{\bf{x}}}\) spans a large continuous range rather than a few discretized values (e.g., possible positions of a rat in a navigation task). In this case, Gaussian process regression requires computing a large kernel matrix, leading to expensive matrix manipulations78. To reduce this, we employed a variational inducing variable method78. It approximates training label values with a smaller set of inducing points \({{\bf{z}}}\), thereby reducing the time complexity. In this paper, inducing points were initialized as a randomly sampled subset of the original training labels (200 inducing points), and were optimized during the optimization of Gaussian process regression. Gaussian process regression with inducing variables method is implemented in the Python GPflow package79.

The above step one infers the manifold \({{\mathbf{\mu }}}\left({{\bf{x}}}\right)\). Step two infers the covariance matrix \(\Sigma \left({{\bf{x}}}\right)\). Define the gram matrix of a point \(\left({{{\bf{r}}}}_{{{\boldsymbol{i}}}},{{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)\) as \(C\left({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right){{\boldsymbol{\equiv }}}\left({{{\bf{r}}}}_{{{\boldsymbol{i}}}}{-}{{\mathbf{\mu }}}\left({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)\right){\left({{{\bf{r}}}}_{{{\boldsymbol{i}}}}{-}{{\mathbf{\mu }}}\left({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)\right)}^{{{\boldsymbol{T}}}}\), we estimate the covariance matrix at \({{\bf{x}}}\) as

where \(i\) sums over all training data points, and \(\eta={10}^{-6}\) is a small number for numerical stability (keeping the covariance invertible even in the first term is small). \({k}_{L}\left({{\bf{x}}},{{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)\) is a weight kernel that represents the contribution of \(C\left({{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)\) in estimating the covariance matrix at label \({{\bf{x}}}\). It is normalized such that \({\sum }_{i}{k}_{L}\left({{\bf{x}}},{{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)=1\). To gain an intuition of this method, consider a simple case where (up to a normalization) \({k}_{L}\left({{\bf{x}}},{{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)=1\) if \(\left|\left|{x}_{i}-x\right|\right| < {{\rm{\delta }}}\) and zero otherwise, step two is simply a sample covariance in a small bin of half-width \({{\rm{\delta }}}\).

Since we assumed covariance is a smooth function over \({{\bf{x}}}\), \(C\left({{{\bf{x}}}}_{i}\right)\) of adjacent \({{{\bf{x}}}}_{i}\) should still contribute to the estimation of \(\Sigma \left({{\bf{x}}}\right)\). Therefore, we used a gradually decaying weight kernel

with \(\kappa\) as a normalization factor ensuring \({\sum }_{i}{k}_{L}\left({{\bf{x}}},{{{\bf{x}}}}_{{{\boldsymbol{i}}}}\right)=1\), and \(L\) is an \(M\times M\) upper triangular matrix, interpreted as the Cholesky decomposition of a semi-positive definite precision matrix \(L{L}^{T}\). Note that the precision matrix has non-diagonal terms, hence the interactions between different label components are considered.

Parameter \(L\) is optimized to maximize the Gaussian log-likelihood of the data

where terms irrelevant to covariance are omitted. Notably, while \(\Sigma\) is the weighted average of the Gram matrices from the training set, the log-likelihood function \({{\mathscr{L}}}\) should be evaluated from the validation set, where we used different indices \(i,j\) to distinguish (Eq. 10 using training set). Setting the log-likelihood function on the training set would result in \(\Sigma \left({{\bf{x}}}\right)\) converging to the Gram matrix \(C\left({{\bf{x}}}\right)\). This can be demonstrated by computing \(\Sigma \left({{\bf{x}}}\right)\) to satisfy the condition \(\partial {{\mathscr{L}}}/\partial \Sigma=0\). Therefore, splitting between training for computing covariance and validation for computing likelihood is necessary.

Overall, we use the following procedure to fit the manifold and covariance from a dataset. In step one, the entire train dataset was used for Gaussian process regression, obtaining a continuous manifold function \({{\mathbf{\mu }}}\). In step two, the train dataset was split into batches, each containing 3000 data points (except for the final batch). Each batch was further split into train and validation sets (0.66:0.33). The train set was used for computing the covariance matrix given an \(L\) (initialized as an identity matrix), and the validation set was used to compute the log-likelihood function. The log-likelihood was maximized by an Adam optimizer (gradient applied on \(L\)). This batch training was repeated for 30 epochs. Finally, with the optimized \(L\), the whole dataset was used for computing covariance (Eq. 10). The computer code for implementing GKR is provided at https://github.com/AgeYY/speed_grid_cell_information.git.

Testing the normality assumption in data

We are interested in whether \(p\left({{\bf{r}}}|{{\bf{x}}}\right)\) follows a normal distribution, as assumed by GKR. We first inspected the case of an example cube that centered at (11 cm, 37 cm, 18 cm/s) with edge lengths of (10 cm, 10 cm, 10 cm/s) in the label space. Data (from a \({{{\mathscr{D}}}}_{s}\) sampled from R1M2) within the cube were collected and projected into their PC1-PC2 plane, as well as PC1 axis and PC2 axis for visualization. Using the projected data, we fitted the optimal 2D and 1D normal distributions using maximum likelihood estimation and overlapped the optimal normal distributions with the projected data for direct visual comparisons. To formally assess normality, we performed Henze–Zirkler test to the projected 2D data, and Shapiro–Wilk tests to the projected 1D data (see Supplementary Fig. 10). This example cubic data shown in Supplementary Fig. 10 does not follow a normal distribution.