Abstract

Mobility impairments from aging, injury, or medical conditions limit independence and social participation. Conventional assistive devices lack adaptability in complex environments. Recent wearable technologies integrating neural sensing, electronics, and co-design offer personalized, responsive mobility support. This perspective focuses on advances in wearable sensing and multimodal fusion for intent recognition, environmental interaction, and adaptive control in exoskeletons, prosthetics, smart wheelchairs, and navigation systems. Emphasizing human-in-the-loop and cognitive–sensorimotor integration, it outlines emerging trends and challenges, promoting intelligent, user-centered solutions to restore function and enhance autonomy, accessibility, and inclusion for individuals with mobility impairments.


Introduction

Approximately 1.3 billion people live with significant disabilities, about 1 in 6 individuals1. Complementing this, data from the United Nations Statistics Division2 indicate that ~58 million people worldwide live with some form of walking or mobility impairment. Assistive technologies have emerged to enable individuals to regain some degree of functional independence. While traditional assistive devices such as wheelchairs, crutches, and prostheses have significantly improved mobility, they often lack the flexibility, adaptability, and comfort required for effective use in dynamic, real-world environments3,4,5,6,7.

Recent advancements, particularly in wearable technologies, are driving a transformative shift in the field of assistive mobility. Modern wearable devices offer an enhanced user experience through their emphasis on adaptability, comfort, and user-centered design. Wearables can dynamically adjust to the user’s needs in real time, offering levels of mobility support that were previously unattainable with conventional approaches8,9,10.

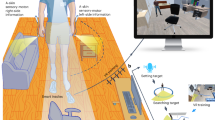

The field of wearable technologies for mobility assistance brings together diverse disciplines, including robotics, computer science, neuroscience, brain-computer interfaces (BCIs), and human-robot interaction. As illustrated in Fig. 1, this interdisciplinary convergence has enabled the seamless integration of wearable sensing modalities, such as electroencephalography (EEG), functional near-infrared spectroscopy (fNIRS), electromyography (EMG), electrooculography (EOG), and inertial motion sensors, into assistive devices. This integration has paved the way for adaptive, user-responsive systems that can dynamically interpret and respond to the user’s physiological and behavioral signals. As these technologies advance, the incorporation of co-creation design, multimodal integration, and human-in-the-loop strategies is poised to drive more user-centered development and enhance the functionality and reliability of assistive devices. Together, these innovations mark a paradigm shift, enabling more effective, intuitive mobility solutions that integrate seamlessly into everyday life.

The figure illustrates primary applications of assisted mobility, i.e., exoskeletons, prosthetics, smart wheelchairs, and non-visual navigation aids, using wearable sensing technologies. These integrated solutions support diverse user needs in mobility and navigation across outdoor environments.

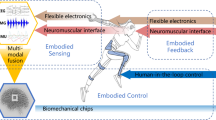

This perspective provides a focused overview of the role of wearable technologies in assisted mobility, encompassing neural, physiological, and kinematic sensing (Fig. 2), and excludes other categories of assistive devices such as stationary rehabilitation systems or sensors that perceive and respond to environmental conditions. Unlike previous reviews11,12,13,14 that broadly survey wearable systems, we emphasize the unique challenges, current progress, and future potential of wearable solutions designed to enhance mobility and independence for individuals with motor impairments. This perspective also argues that the integration of diverse sensing technologies is essential for the continued development of effective assistive mobility technologies.

This diagram highlights key modalities—EEG, fNIRS, EOG, EMG, and motion sensing—and their multimodal integration in supporting real-world applications such as exoskeletons, prosthetics, smart wheelchairs, and non-visual navigation. Sensor placement across the body reflects diverse interaction points tailored to user intent recognition and environmental awareness. Reused with permission from Tang et al.166.

The scope is deliberately focused on lightweight, user-adaptive devices designed for real-time interaction with the human body in real-world environments. These include, but are not limited to, exoskeletons, prosthetics, smart wheelchairs, and non-visual navigation systems. Furthermore, each technology discussed is either already commercialized or shows a well-defined and realistic pathway toward scalable production and market entry. This perspective aims to serve as a practical guide for researchers, engineers, and clinicians engaged in the development and deployment of assistive mobility technologies.

Current landscape of wearable human sensing technologies

Decoding user intent

Effective assistive mobility requires a direct interface with the human nervous system, typically through estimating user intent, so that the device’s actions align with the user’s movement intentions and control feels intuitive and responsive. Intent recognition can be broadly classified into central and peripheral interfacing15. Central interfacing methods interpret cognitive intent by capturing brain activity through signals such as EEG or fNIRS. Peripheral interfacing relies on physiological and motion-based signals, including EMG, eye tracking, EOG, and inertial measurement units (IMUs), to infer user intent from neuromuscular and kinematic cues. These sensing modalities are summarized in Table 115,16,17,18,19,20,21,22,23,24. Other physiological signals, such as electrocardiogram (ECG), photoplethysmography (PPG), heart rate variability, blood pressure, electrodermal activity, breathing, and sweat biomarkers, are generally reactive indicators: they reflect physiological changes resulting from, rather than preceding, the intended motion. They therefore do not directly contribute to intent understanding, but they can provide additional context and form part of a complete closed-loop system in which effective sensing and monitoring are vital. The choice of sensing approach depends on user needs: some applications require brain activity monitoring, while others rely on body signals. Integrating central and peripheral interfaces in wearable systems enhances both accuracy and adaptability; this section outlines the key advantages of each modality and of their integration.

Central interfacing: functional brain imaging

Over the last decade, wearable assistive devices for mobility and performance have increasingly aimed to decode user intent from brain signals25, directly leveraging neural activity for device control. This approach aligns with motor control processes and is supported by various non-invasive neuroimaging modalities based on distinct physiological principles. EEG records electrical activity using scalp electrodes to detect voltage changes from neuronal ionic currents26. Magnetoencephalography, conversely, measures the magnetic fields produced by these currents, offering comparable temporal resolution and improved cortical localization27. Functional magnetic resonance imaging (fMRI) tracks localized changes in cerebral blood flow as indicators of neural activation during tasks28. Lastly, fNIRS, a potential “wearable alternative” to fMRI, quantifies changes in oxygenated and deoxygenated haemoglobin to monitor cortical activity, albeit with lower spatial resolution than fMRI. While magnetoencephalography and fMRI provide high spatial resolution for brain activity mapping, their reliance on expensive, stationary equipment28 and controlled environments29 limits their feasibility for integration with assistive mobility devices such as exoskeletons or smart wheelchairs. In contrast, EEG and fNIRS are lightweight, cost-effective, and readily integrated into wearable form factors, making them more suitable for real-time user intent recognition in assistive mobility systems.

EEG enables users to control assistive devices via BCIs, bypassing the need for physical input. Two widely used paradigms in EEG-based BCIs are motor imagery (MI) and steady-state visual evoked potentials (SSVEP). MI tasks can vary by movement type, laterality, or motor state (e.g., walking vs. standing), offering precise control30. However, MI requires sustained concentration, and prolonged use may induce mental fatigue31. Consequently, monitoring cognitive state is essential for maintaining effective control. Cognitive state can itself be monitored with EEG during MI tasks, with secondary tasks such as mental arithmetic and adaptive feedback employed to sustain attention and assess user engagement32. SSVEP-based BCIs use visual stimuli flickering at distinct frequencies to elicit frequency-specific neural responses. This approach offers rapid command selection with high reliability, minimal user training, and fast response times. For instance, a recent study introduced an augmented reality (AR)-based BCI system using SSVEPs to enable hands-free prosthetic control with eight distinct hand movement modes33. However, because SSVEP-based BCIs rely on continuous visual input, they can cause visual fatigue and limit usability in prolonged tasks such as wheelchair navigation, where eye strain and reduced situational awareness may compromise safety34. Therefore, the selection of control modalities should be tailored to the specific requirements of each application.
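The frequency-tagging principle behind SSVEP control can be illustrated with a short sketch. It scores each candidate flicker frequency by the spectral power of a single EEG channel at that frequency and its harmonics, then selects the strongest. This is a simplified stand-in for the detectors used in practice (canonical correlation analysis is a common choice), and the sampling rate, stimulus frequencies, and the `COMMANDS` mapping are hypothetical values chosen for illustration.

```python
import math


def power_at(signal, fs, freq):
    """Power of `signal` at `freq` Hz via a single-bin discrete Fourier transform."""
    omega = 2 * math.pi * freq / fs
    re = sum(x * math.cos(omega * n) for n, x in enumerate(signal))
    im = sum(x * math.sin(omega * n) for n, x in enumerate(signal))
    return (re * re + im * im) / len(signal)


def classify_ssvep(signal, fs, stimulus_freqs, harmonics=2):
    """Return the stimulus frequency whose fundamental plus harmonics carry the most power."""
    scores = {
        f: sum(power_at(signal, fs, h * f) for h in range(1, harmonics + 1))
        for f in stimulus_freqs
    }
    return max(scores, key=scores.get)


# Hypothetical mapping from flicker frequency (Hz) to a device command.
COMMANDS = {8.0: "left", 10.0: "right", 12.0: "forward", 15.0: "stop"}

if __name__ == "__main__":
    fs = 250  # assumed EEG sampling rate (Hz)
    # Synthetic 2 s trace: a 12 Hz SSVEP response plus a weaker 10 Hz component.
    eeg = [math.sin(2 * math.pi * 12 * n / fs) + 0.3 * math.sin(2 * math.pi * 10 * n / fs)
           for n in range(2 * fs)]
    print(COMMANDS[classify_ssvep(eeg, fs, list(COMMANDS))])  # forward
```

With a 2 s window at 250 Hz, every candidate frequency and harmonic falls on an exact DFT bin, so the weaker 10 Hz component does not leak into the 12 Hz score. Real recordings are noisier and usually call for longer windows or multichannel methods.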

fNIRS effectively detects movement-related cortical activation, characterized by increases in oxygenated haemoglobin in the motor cortex during movement preparation and execution. For example, in individuals with transhumeral amputations, one study combined fNIRS with an artificial neural network to classify six upper-limb motion intentions: elbow extension/flexion, wrist pronation/supination, and hand opening/closing35. fNIRS can also monitor MI and cognitive functions such as attention and decision-making, especially in neurorehabilitation settings like gait training36. Its high spatial specificity makes it well suited to tracking cognitive workload, but its temporal resolution is constrained by the slow hemodynamic response, which reduces its effectiveness for capturing rapid changes.
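The conversion from measured light attenuation to haemoglobin changes is usually based on the modified Beer-Lambert law: for each wavelength λ, ΔOD(λ) = [ε_HbO(λ)·Δ[HbO] + ε_HbR(λ)·Δ[HbR]]·d·DPF(λ), where ε are extinction coefficients, d is the source-detector separation, and DPF is the differential pathlength factor. Measuring at two wavelengths gives two linear equations in the two unknown concentration changes, which the sketch below solves directly. The extinction coefficients, separation, and DPF values in it are placeholders, not calibrated constants.

```python
def mbll_forward(d_hbo, d_hbr, eps, distance_cm, dpf):
    """Optical-density changes predicted by the modified Beer-Lambert law at each wavelength."""
    return tuple(
        (e_hbo * d_hbo + e_hbr * d_hbr) * distance_cm * p
        for (e_hbo, e_hbr), p in zip(eps, dpf)
    )


def mbll_concentrations(d_od, eps, distance_cm, dpf):
    """Invert the two-wavelength modified Beer-Lambert law for (dHbO, dHbR).

    d_od: optical-density changes (dOD_1, dOD_2)
    eps: ((eHbO_1, eHbR_1), (eHbO_2, eHbR_2)) extinction coefficients
    distance_cm: source-detector separation; dpf: (DPF_1, DPF_2)
    """
    # Remove the effective optical path length, leaving eps @ [dHbO, dHbR] = b.
    b1 = d_od[0] / (distance_cm * dpf[0])
    b2 = d_od[1] / (distance_cm * dpf[1])
    (a11, a12), (a21, a22) = eps
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("extinction matrix is singular; use wavelengths with distinct spectra")
    # Cramer's rule for the 2x2 system.
    d_hbo = (b1 * a22 - a12 * b2) / det
    d_hbr = (a11 * b2 - a21 * b1) / det
    return d_hbo, d_hbr


if __name__ == "__main__":
    # Placeholder constants for illustration only, not calibrated values.
    EPS = ((0.6, 1.5), (1.1, 0.8))  # rows: wavelength 1, wavelength 2
    DPF = (6.0, 5.5)
    d_od = mbll_forward(0.02, -0.005, EPS, 3.0, DPF)
    print(mbll_concentrations(d_od, EPS, 3.0, DPF))  # approximately (0.02, -0.005)
```

A round trip through `mbll_forward` recovers the assumed concentration changes; with real data, published extinction spectra and appropriate DPF values would replace the placeholders.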

As previously noted, EEG provides high temporal resolution for real-time detection of movement intent, whereas fNIRS offers superior spatial resolution for localising brain activation. Their complementary strengths make EEG–fNIRS integration a powerful approach to enhance both the accuracy and responsiveness of user intent recognition. Several initial studies combining EEG and fNIRS have explored lower limb motor imagery37 and contributed to an improved understanding of gait and balance38. Diffuse Optical Tomography (DOT), an advanced offshoot of fNIRS that reconstructs 3D hemodynamic responses, further enhances spatial resolution39.

Peripheral interfacing

Indirect methods for inferring user movement intent involve monitoring physiological and physical cues, providing insight into navigational intentions and motor control. Wearable EMG sensors are widely employed in prosthetics and exoskeletons to detect muscle activity40, allowing for intuitive and responsive device control. Eye-tracking techniques and EOG-based systems help determine a user’s intended direction41. Beyond movement-related signals, physiological indicators such as heart rate, heart rate variability, respiration rate, and galvanic skin response can be integrated to assess the user’s physical state and movement readiness42. By capturing real-time physiological and biomechanical data, these indirect methods enhance the adaptability and responsiveness of mobility-assistive wearable devices, enabling more seamless interaction between the user and the assistive device.

EMG captures the electrical signals generated by motor units during skeletal muscle contraction40, providing a reliable means to infer user movement intent. By capturing muscle activation patterns, EMG enables real-time decoding of voluntary movements, facilitating intuitive control of assistive devices such as wearable robotic systems43, wheelchairs44, and exoskeletons45, as illustrated in Fig. 3. The amplitude and frequency content of the recorded signals reflect the degree of muscle activation, enabling the inference of the user’s intention to execute specific movements. Driven by advancements in sensor miniaturization, signal processing algorithms, and material science, EMG systems have evolved to offer greater precision, adaptability, and user comfort46. Notably, innovations such as high-density EMG (HD-EMG)47,48 (Fig. 3e) and stretchable patches49,50 have significantly enhanced the reliability and practicality of electrophysiological sensing in wearable applications.
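A minimal sketch of how EMG amplitude can be turned into an intent trigger: the raw signal is mean-removed, full-wave rectified, and smoothed into an envelope, and movement onset is declared when the envelope stays above a threshold derived from a rest period (mean plus k standard deviations). This is a generic textbook scheme rather than the method of any cited system; the window length, `k`, and `hold` values are hypothetical, and practical systems also band-pass filter the raw signal first.

```python
import statistics


def emg_envelope(samples, window=20):
    """Remove the mean, full-wave rectify, and smooth with a causal moving average."""
    mean = statistics.fmean(samples)
    rectified = [abs(x - mean) for x in samples]
    envelope, acc = [], 0.0
    for i, x in enumerate(rectified):
        acc += x
        if i >= window:
            acc -= rectified[i - window]  # drop the sample leaving the window
        envelope.append(acc / min(i + 1, window))
    return envelope


def detect_onset(envelope, rest_samples, k=3.0, hold=5):
    """First index where the envelope stays above mean + k*SD of the rest period for `hold` samples."""
    rest = envelope[:rest_samples]
    threshold = statistics.fmean(rest) + k * statistics.pstdev(rest)
    run = 0
    for i, value in enumerate(envelope):
        run = run + 1 if value > threshold else 0
        if run == hold:
            return i - hold + 1
    return None


if __name__ == "__main__":
    # Synthetic trace: 200 low-amplitude rest samples followed by 200 active samples.
    rest = [(0.05 if n % 4 < 2 else 0.03) * (1 if n % 2 == 0 else -1) for n in range(200)]
    active = [1.0 if n % 2 == 0 else -1.0 for n in range(200)]
    env = emg_envelope(rest + active, window=20)
    print(detect_onset(env, rest_samples=200))  # 200
```

The `hold` requirement trades a few samples of latency for robustness against isolated spikes; a decoder that estimates continuous effort would use the envelope directly instead of thresholding it.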

a Overview of a lower limb exoskeleton using TFDP, a time-frequency method based on differential pattern analysis for feature extraction64. Reused with permission from Li et al.64. b Wearable BCI mouse system with headband, electrode placement, and schematic control framework66. c Textile-based exomuscle (Myoshirt) for shoulder support using tendon-driven actuation anchored on the thorax and upper arm62. Reused with permission from Georgarakis et al.62. d Soft upper-limb wearable robots including elbow and hand exosuits with remote actuation and IMU-based torque control43. e Stretchable high-density EMG sensor enabling real-time gesture recognition via AI-based processing49. f fNIRS-based system for recognizing upper-limb motion intention via optodes placed on the motor cortex35. g Brain-controlled prosthetic hand platform integrating EEG, AR glasses, and an 8-degree-of-freedom (DOF) prosthesis for real-time control33.

Eye-tracking and EOG are complementary techniques for monitoring ocular movements. Eye-tracking systems employ infrared cameras to detect pupil position and gaze direction, allowing for precise tracking of a user’s visual attention51. Gaze-based control is especially effective in assistive devices like wheelchairs; for instance, a gaze-enabled smart wheelchair has been demonstrated for individuals with severe physical impairments such as amyotrophic lateral sclerosis and quadriplegia52. Eye-tracking has also proven useful in upper-limb prosthetics, with one study showing that gaze-based wrist movement prediction reduced compensatory shoulder and trunk motions53. Commercially available systems such as Tobii Dynavox have already been used in such applications52,54. In contrast, EOG measures the electrical potential differences generated by ocular movement, utilizing electrodes placed around the eyes to capture these bioelectrical signals55. Although EOG-based systems have lower spatial resolution than optical eye-tracking methods, they offer reliable eye movement detection with low computational demand, strong resistance to lighting variations, and easy integration into compact wearable devices56,57. EOG is also often used alongside EEG for neural control applications and has been applied to robotic arms58 and exoskeletons59 to assist stroke survivors with chronic paralysis in performing activities of daily living58.

IMUs measure motion-related parameters, including acceleration, angular velocity, and sometimes magnetic orientation, making them essential for wearable gait analysis and body motion tracking60. They provide continuous kinematic data for precise assessment of joint angles, stride length, gait phases, and balance. IMUs can detect abnormal gait patterns, enabling adaptive control in exoskeletons. IMUs have also been used in prosthetic knees to assess performance in tasks such as treadmill walking, incline/decline and stair navigation, and obstacle crossing, allowing evaluation of gait symmetry, comfort, and functional outcomes in individuals with limb amputations61. IMUs can additionally be integrated with advanced soft wearable systems to provide real-time posture monitoring, balance assessment, and adaptive movement assistance, enhancing mobility for individuals with motor impairments62.
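As an illustration of IMU-based gait analysis, the sketch below locates mid-swing events as prominent peaks in sagittal shank angular velocity, derives stride times from consecutive peaks, and computes a simple left-right symmetry index. It assumes a shank-mounted gyroscope whose positive axis points in the direction of swing, and the 100 deg/s peak threshold is a hypothetical value; clinical algorithms often also detect heel strike and toe off for finer phase segmentation.

```python
import math


def midswing_peaks(gyro, threshold=100.0):
    """Indices of local maxima in sagittal shank angular velocity (deg/s) above `threshold`."""
    return [
        i for i in range(1, len(gyro) - 1)
        if gyro[i] > threshold and gyro[i] > gyro[i - 1] and gyro[i] >= gyro[i + 1]
    ]


def stride_times(peaks, fs):
    """Seconds between consecutive mid-swing events of the same leg."""
    return [(b - a) / fs for a, b in zip(peaks, peaks[1:])]


def symmetry_index(left, right):
    """Percent asymmetry between matched left/right gait parameters (0 = symmetric)."""
    return 200.0 * abs(left - right) / (left + right)


if __name__ == "__main__":
    fs = 100  # assumed IMU sampling rate (Hz)
    # Idealized 1 Hz stride pattern: 300 deg/s peaks standing in for mid-swing.
    gyro = [300.0 * math.sin(2 * math.pi * n / fs) for n in range(4 * fs)]
    peaks = midswing_peaks(gyro)
    print(peaks, stride_times(peaks, fs))  # [25, 125, 225, 325] [1.0, 1.0, 1.0]
    print(symmetry_index(1.1, 0.9))  # left vs right stride time, about 20 %
```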

Multimodality

Single-modal sensing methods, such as EEG, EMG, and IMUs, are widely used for motion intent recognition, yet each has inherent limitations. EEG, particularly in motor-impaired users, enables intent decoding via motor imagery but is susceptible to motion artifacts and environmental noise39, making it less suitable for dynamic tasks like walking. EMG captures neuromuscular activity preceding movement and is effective for proactive control of exoskeletons. However, its reliability can diminish over time due to muscle fatigue, electrode displacement, and skin impedance variability63. IMUs provide valuable kinematic data for estimating posture and joint angles, supporting adaptive locomotion. However, they are prone to drift that accumulates over prolonged use, degrading accuracy, and the sensor fusion needed to correct this drift can add some processing latency16.
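The drift problem, and one classical way sensor fusion mitigates it, can be shown with a complementary filter. Integrating a gyroscope with a small constant bias makes the angle estimate wander without bound, whereas blending the integrated rate with the accelerometer’s gravity-based tilt keeps the error bounded at the cost of a small steady offset. The axis convention, the blending factor `alpha`, and the bias value are assumptions; Kalman-type filters are a common, more elaborate alternative.

```python
import math


def integrate_gyro(rates, dt, angle0=0.0):
    """Dead-reckon a tilt angle (deg) from angular rate alone; any bias accumulates as drift."""
    angle, out = angle0, []
    for w in rates:
        angle += w * dt
        out.append(angle)
    return out


def complementary_filter(rates, accels, dt, alpha=0.98, angle0=0.0):
    """Blend integrated gyro rate (deg/s) with accelerometer tilt from (ax, az) readings in g."""
    angle, out = angle0, []
    for w, (ax, az) in zip(rates, accels):
        accel_angle = math.degrees(math.atan2(ax, az))  # assumed axis convention
        # High-pass the gyro path, low-pass the accelerometer path.
        angle = alpha * (angle + w * dt) + (1 - alpha) * accel_angle
        out.append(angle)
    return out


if __name__ == "__main__":
    dt, n = 0.01, 6000              # 100 Hz for 60 s
    rates = [0.5] * n               # stationary sensor with a 0.5 deg/s gyro bias
    tilt = math.radians(10.0)
    accels = [(math.sin(tilt), math.cos(tilt))] * n
    print(integrate_gyro(rates, dt, angle0=10.0)[-1])                # about 40 deg: drifted
    print(complementary_filter(rates, accels, dt, angle0=10.0)[-1])  # about 10.25 deg
```

With a 0.5 deg/s bias over 60 s, pure integration drifts by about 30°, while the filtered estimate settles near the true 10° tilt with a steady-state offset of α·bias·dt/(1 - α) ≈ 0.25°.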

The features of individual sensing modalities are often complementary—where one underperforms, another can compensate. Multimodal signal fusion leverages this complementarity by integrating diverse sensor inputs to improve accuracy, responsiveness, and robustness in mobility assistance. Numerous studies have demonstrated that combining brain signals (e.g., EEG, fNIRS) with physiological or motion-based signals (e.g., EMG, EOG, IMUs) enhances intent recognition and system reliability. For example, EMG’s rapid response offsets EEG’s latency, while EEG contributes intent-related information when EMG degrades under fatigue. EEG–EMG integration64,65 improves motor intent detection by combining EEG’s early representation of motor planning with EMG’s detailed muscle activation signals, enabling more accurate reconstruction of complex limb movements. Similarly, EEG-EOG fusion42,59 supports eye-gaze-based control using EOG for precise eye movement detection and ocular artifact removal, improving EEG signal quality and command accuracy. IMUs further enhance context-awareness and support differentiation between voluntary movements and external disturbances. When combined with EEG66 or EMG43, IMUs improve motion tracking and control precision in devices such as prosthetics and exoskeletons, particularly in dynamic environments. As noted in Section 2.1.1, EEG-fNIRS fusion also improves classification accuracy and enables cognitive state monitoring, supporting adaptive assistance during rehabilitation67.

Effective sensor fusion techniques are essential to fully exploit the complementary strengths of multimodal signals such as EEG, EMG, EOG, and IMUs. Traditional fusion approaches fall into three categories: data-level37,42, feature-level64,65, and decision-level65. Data-level fusion combines raw signals from multiple modalities, preserving maximum information but often encountering issues like noise amplification and signal misalignment. Feature-level fusion extracts modality-specific features and concatenates them for joint modelling, balancing data richness and complexity but requiring precise feature engineering and synchronization. Decision-level fusion integrates outputs from independent classifiers, offering modularity but limiting cross-modal interaction. While these methods can perform well in controlled settings, they often lack joint optimization across modalities and require improvements in accuracy, sensitivity, and generalizability to meet real-world demands39.
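The difference between feature-level and decision-level fusion can be sketched in a few lines. Feature-level fusion concatenates time-aligned feature vectors from each modality before a single classifier; decision-level fusion combines the class probabilities produced by separate per-modality classifiers, here with a weighted average. The class labels, probabilities, and weights below are hypothetical, and the classifiers themselves are omitted.

```python
def feature_level_fusion(eeg_features, emg_features):
    """Feature-level fusion: concatenate time-aligned per-modality feature vectors."""
    return list(eeg_features) + list(emg_features)


def decision_level_fusion(probs_by_modality, weights):
    """Decision-level fusion: weighted average of each modality's class probabilities."""
    total = sum(weights[m] for m in probs_by_modality)
    classes = next(iter(probs_by_modality.values()))
    fused = {
        c: sum(weights[m] * probs[c] for m, probs in probs_by_modality.items()) / total
        for c in classes
    }
    return max(fused, key=fused.get), fused


if __name__ == "__main__":
    # Hypothetical per-modality classifier outputs for one decision window.
    probs = {"eeg": {"walk": 0.6, "stand": 0.4}, "emg": {"walk": 0.3, "stand": 0.7}}
    print(decision_level_fusion(probs, {"eeg": 0.3, "emg": 0.7})[0])  # stand
    # Down-weight EMG, e.g. when fatigue is suspected to degrade it.
    print(decision_level_fusion(probs, {"eeg": 0.7, "emg": 0.3})[0])  # walk
```

In this toy example, shifting trust away from EMG, as a system might when fatigue makes EMG less reliable, flips the fused decision; the weights offer a modular lever but, as noted above, no joint optimization across modalities.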

Understanding environmental context

Beyond intent detection, environmental perception is essential for assistive mobility devices to adapt to varied terrains, avoid obstacles, and ensure user safety. This capability enables systems like smart wheelchairs, prosthetics and exoskeletons to navigate complex environments while enhancing safety and energy efficiency. A critical aspect of environmental understanding in assistive systems is accurately recognizing user intent within dynamic, task-oriented contexts. This involves not only interpreting physiological signals but also situational cues that reflect the user’s interaction goals. Complementing these approaches, image-based sensing has also proven essential in prosthetic calibration. For example, an image-based calibration system utilizing LED markers and camera-based image analysis enables accurate measurement of joint angles68. This ensures anatomically realistic motion calibration during development, which is critical for enabling precise and coordinated hand function. By supporting individualized and visually validated calibration, such systems contribute directly to improving the dexterity, control accuracy, and task-specific adaptability of upper-limb prostheses.
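Camera-based joint-angle measurement reduces, at its core, to geometry on marker positions. The sketch below computes the angle at a joint from three tracked marker centroids (proximal segment, joint, distal segment) in image coordinates and reports flexion as the deviation from a straight segment pair. It assumes the joint moves roughly in a plane parallel to the image plane; the cited calibration system’s actual processing is not described here and may differ.

```python
import math


def interior_angle(proximal, joint, distal):
    """Angle (deg) at `joint` between the segments to `proximal` and `distal` markers (x, y)."""
    v1 = (proximal[0] - joint[0], proximal[1] - joint[1])
    v2 = (distal[0] - joint[0], distal[1] - joint[1])
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / (math.hypot(*v1) * math.hypot(*v2))
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding just outside [-1, 1]
    return math.degrees(math.acos(cos_theta))


def flexion_angle(proximal, joint, distal):
    """Flexion (deg) as deviation from a fully extended, straight segment pair."""
    return 180.0 - interior_angle(proximal, joint, distal)


if __name__ == "__main__":
    # Hypothetical LED-marker centroids (pixels) for a finger joint.
    print(flexion_angle((100, 200), (150, 200), (200, 200)))  # straight finger: 0 degrees
    print(flexion_angle((100, 200), (150, 200), (150, 250)))  # bent finger: 90 degrees
```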

Another key aspect of environmental understanding is obstacle detection and avoidance, which allows mobility devices to identify potential hazards, such as uneven surfaces, staircases, curbs, or moving objects69. However, not every object should be treated as an obstacle when planning navigation; for example, the user of a mobility device may wish to approach a person or dock at a table to interact70. Technologies such as computer vision (CV)71,72 and red-green-blue-depth (RGB-D) cameras73,74 provide real-time spatial awareness, enabling precise path planning and responsive adjustments to avoid collisions. This is particularly crucial for smart wheelchairs, lower-limb exoskeletons74, and prosthetics73, where safe navigation in crowded or dynamic environments is a primary concern75. For example, one study demonstrated that CV enhances mobility by enabling a robotic companion to track user movement without requiring body-mounted sensors72. Using a 3D vision system to accurately determine the relative position and orientation between the human and the robot, the system provides hands-free, real-time navigation assistance, reducing user effort and promoting independent mobility. Multi-sensor systems combining ultrasonic sensors, passive infrared motion sensors, IMUs, and smartphone-based feedback have also been used to detect obstacles, surface changes, and moving objects, supporting real-time navigation and enhancing mobility for visually impaired users76.
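A minimal sketch of depth-based obstacle detection: the depth image is split into vertical sectors (left, center, right), the nearest valid reading in each is found, and sectors closer than a safety distance are flagged. Zero readings are treated as invalid pixels, following a common RGB-D convention; the sector count, maximum range, and safety distance are hypothetical. Distinguishing interaction targets, such as a table the user wants to dock at, from genuine obstacles would require object recognition beyond this sketch.

```python
def nearest_by_sector(depth, n_sectors=3, max_range=4.0):
    """Nearest valid depth (m) in each vertical sector of a depth image given as rows of floats."""
    width = len(depth[0])
    nearest = [max_range] * n_sectors
    for row in depth:
        for col, d in enumerate(row):
            sector = col * n_sectors // width
            if 0.0 < d < nearest[sector]:  # zero/negative readings are invalid pixels
                nearest[sector] = d
    return nearest


def obstacle_flags(nearest, safety_distance=1.0):
    """True for each sector whose nearest reading is inside the safety distance."""
    return [d < safety_distance for d in nearest]


if __name__ == "__main__":
    # Tiny hypothetical 2 x 6 depth frame (metres); 0.0 marks missing depth.
    frame = [[3.0, 3.0, 0.8, 0.0, 2.5, 2.5],
             [3.5, 2.9, 1.2, 0.9, 0.0, 2.2]]
    nearest = nearest_by_sector(frame)
    print(nearest, obstacle_flags(nearest))  # [2.9, 0.8, 2.2] [False, True, False]
```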

Real-world adoption of wearable technologies for assisted mobility

Exoskeletons for mobility assistance in rehabilitation medicine

Exoskeletons are advanced wearable robotic devices designed to assist individuals with neurological and musculoskeletal disorders, such as stroke, spinal cord injury (SCI), multiple sclerosis, and Duchenne muscular dystrophy77,78,79,80,81,82,83.

One of the primary applications of exoskeletons is neurorehabilitation for motor recovery, particularly in conditions like stroke, SCI, and multiple sclerosis84, which often present with paresis, spasticity, muscle fatigue, and reduced coordination84,85. Exoskeletons enhance motor recovery by enabling intensive, task-specific training that leverages neuroplasticity for motor learning. Commercial lower-limb exoskeletons such as Lokomat, EksoGT, HAL, and Indego support gait training by guiding patients through controlled gait trajectories via actuated hip, knee, or ankle joints86,87,88. These devices incorporate sensors like IMUs and force-sensitive resistors to continuously monitor the patient’s movement intentions and physical condition, allowing real-time, adaptive assistance89,90 (Fig. 4a). For example, when a patient initiates a step but lacks sufficient strength, the device provides only the needed torque, promoting active engagement while avoiding over-reliance91. This iterative training improves coordination, joint stability, gait symmetry, and walking endurance while reducing compensatory patterns and accelerating motor recovery87,92,93. Similarly, upper-limb exoskeletons like ArmeoSpring, MyoPro, and ANYexo 2.0 support rehabilitation of arm and hand movements using adaptive assistance based on muscle activity and biosignals (EEG, EMG), encouraging user participation and functional gains94,95,96,97.
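The behavior described above, in which the device provides only the needed torque, can be sketched as supplying the deficit between what a movement requires and what the user produces, capped at a safe limit. The required-torque reference, the user-torque estimate (which a real device might derive from EMG or joint sensors), the gain, and the 40 N·m cap are all hypothetical; many assist-as-needed controllers in the literature also adapt assistance across repetitions, which is omitted here.

```python
def assist_as_needed(required_torque, user_torque, gain=1.0, max_torque=40.0):
    """Assistive torque (N·m) covering only the user's deficit, capped at `max_torque`.

    Torques are signed positive in the intended movement direction (an assumption).
    """
    deficit = required_torque - user_torque
    return min(gain * max(0.0, deficit), max_torque)


if __name__ == "__main__":
    # A strong, a partial, and a weak voluntary effort against a 30 N·m requirement.
    for user in (35.0, 20.0, 5.0):
        print(user, assist_as_needed(required_torque=30.0, user_torque=user))
```

Clamping the deficit at zero means the device never resists or replaces effort the user already provides, which is the property that encourages active engagement.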

a Exoskeleton using joint moment estimation for task-agnostic support89; b Upper-limb exoskeleton with soft bioelectronics45; c EMG-driven leg prosthesis for biomimetic gait restoration107; d A lightweight robotic leg prosthesis replicating the biomechanics of the knee, ankle, and toe joint108; e Multimodal sensing-based smart wheelchair for health monitoring119; f BCI-controlled wheelchair with adaptive mental-state navigation118; g Visual aid translating depth images to audio for navigation167. Reused with permission from Tang et al.166; h Wearable obstacle avoidance system with multimodal feedback138.

Despite their potential in rehabilitation, exoskeletons face several challenges. Their rigid structures are often mismatched with complex human biomechanics, resulting in discomfort and unnatural movement98,99. Current control systems typically rely on limited inputs such as sEMG or motion capture, hindering accurate, real-time interpretation of user intent100. Other significant limitations include high costs, short battery life, bulky design, and complex maintenance101. Clinical adoption is further constrained by individual differences in disease stage, gender, height, body size, and muscle tone, affecting both usability and therapeutic outcomes102. Future advancement may involve developing soft exoskeletons using flexible biomaterials for enhanced comfort and adaptability103. Advances in artificial intelligence (AI) and personalized control algorithms could improve human–machine coordination104, while miniaturized actuators may enhance portability and extend battery life91. Interdisciplinary collaboration across rehabilitation, neuroscience, and interface design will also be essential for optimizing clinical usability. As regulatory processes evolve and costs decrease, exoskeletons have the potential to facilitate more personalized mobility support.

Prosthetics for sensory-motor restoration

Prosthetics replace missing limbs entirely, assuming both locomotor and sensory roles105. They typically leverage wearable sensing technologies such as EMG, IMUs, and environmental sensors to enable closed-loop, real-time control based on user intent and environmental context106,107,108 (Fig. 4c,d). IMUs and encoders embedded in prosthetic joints enable real-time gait phase detection and adaptive control, improving symmetry and reducing energy cost. Integrated EMG sensors decode user intent for voluntary control of joint movement, supporting both repetitive and complex actions. Environmental sensors such as cameras and LiDAR enhance terrain recognition and obstacle avoidance, improving robustness in real-world use. Integrating soft sensors and biomechatronic components further improves responsiveness, comfort, and long-term usability, making prosthetics a promising direction for next-generation mobility assistance.
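One widely studied control pattern for powered lower-limb prostheses is finite-state impedance control: a gait-phase detector selects a set of stiffness, damping, and equilibrium-angle parameters, and the joint torque follows a spring-damper law. In the sketch below, phase is inferred from a pylon load threshold for simplicity, although, as noted above, devices may also use IMUs and encoders; the `PARAMS` values and the 50 N threshold are hypothetical, not tuned for any user or device.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Impedance:
    stiffness: float    # N·m/deg
    damping: float      # N·m·s/deg
    equilibrium: float  # deg of knee flexion


# Hypothetical per-phase parameters for illustration only.
PARAMS = {
    "stance": Impedance(stiffness=2.0, damping=0.05, equilibrium=5.0),
    "swing": Impedance(stiffness=0.3, damping=0.02, equilibrium=60.0),
}


def gait_phase(axial_load_n, load_threshold_n=50.0):
    """Classify stance vs swing from axial load on the pylon (e.g. from a load cell)."""
    return "stance" if axial_load_n > load_threshold_n else "swing"


def knee_torque(angle_deg, velocity_deg_s, axial_load_n):
    """Spring-damper torque (N·m) using the impedance parameters of the detected phase."""
    p = PARAMS[gait_phase(axial_load_n)]
    return -p.stiffness * (angle_deg - p.equilibrium) - p.damping * velocity_deg_s


if __name__ == "__main__":
    print(knee_torque(15.0, 0.0, 600.0))  # loaded limb: stiff stance resists flexion
    print(knee_torque(30.0, 100.0, 0.0))  # unloaded limb: compliant swing toward 60 deg
```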

Despite these advances, prosthetics still face challenges in achieving stable, high-resolution sensory feedback, particularly during dynamic, real-world tasks109,110. Current limitations include signal processing delays, inconsistent sensor performance over prolonged use (e.g., IMU drift), and the need for frequent user-specific calibration. Non-invasive techniques are often limited by low spatial specificity, while invasive methods face biocompatibility, surgical risk, and long-term reliability concerns. Future directions include the development of soft, lightweight sensors to enhance comfort, machine learning models that generalize across users to reduce training burden, and high-efficiency algorithms to improve real-time responsiveness. Co-designing wearable hardware with multimodal sensor fusion can enable robust, personalized, closed-loop control in daily-life environments.

Smart wheelchair: multimodal biosignal-driven autonomous navigation

Smart wheelchairs expand upon traditional powered wheelchairs to better support individuals with severe motor impairments from conditions such as SCI, progressive neuromuscular disorders, or cognitive disabilities. Unlike conventional wheelchairs, which rely on manual control, smart wheelchairs interpret user intent via physiological signals, reducing effort and improving independence111.

Users control smart wheelchairs through various modalities, including EMG, IMU, EOG, and EEG. Wearable EMG sensors placed on accessible muscles translate muscle activity into directional commands with minimal effort44. For example, Oonishi et al.112 utilized sEMG signals from the wrist and hand dorsum to control forward and backward motion via a threshold-based disturbance observer. Similarly, IMUs can capture gestures or head movements, enabling intuitive navigation. Mogahed et al.113 developed a system for users with quadriplegia that converted head movements into control signals with over 97% accuracy and sub-second response time.
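Head-movement control of the kind described above can be sketched as a mapping from head pitch and roll, estimated by a head-worn IMU, to discrete wheelchair commands, with a dead zone that suppresses small involuntary movements. The sign convention (positive pitch means tilting forward, positive roll means tilting right), the 10° dead zone, and the command names are assumptions; this is not the method of the cited system.

```python
def head_tilt_command(pitch_deg, roll_deg, dead_zone_deg=10.0):
    """Map head pitch/roll (deg) from a head-worn IMU to a discrete wheelchair command."""
    if abs(pitch_deg) < dead_zone_deg and abs(roll_deg) < dead_zone_deg:
        return "stop"  # small movements inside the dead zone are ignored
    if abs(pitch_deg) >= abs(roll_deg):  # the dominant tilt axis wins
        return "forward" if pitch_deg > 0 else "backward"
    return "right" if roll_deg > 0 else "left"


if __name__ == "__main__":
    for pitch, roll in [(2.0, 3.0), (15.0, 5.0), (-20.0, 0.0), (5.0, -18.0)]:
        print(pitch, roll, head_tilt_command(pitch, roll))
```

A practical system would add temporal smoothing and a confirmation gesture before motion starts, so that looking around is not mistaken for a driving command.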

For individuals with severely limited motor function, eye-tracking and BCIs offer non-manual navigation. EOG-based systems detect eye movements and convert them into wheelchair commands, enabling intuitive control. For example, Barea et al. used EOG electrodes to detect horizontal and vertical eye movements, translating them into directional inputs with 95% accuracy41. EEG-based BCIs are also commonly used in smart wheelchair control, enabling users to navigate through mental tasks such as MI114,115 or SSVEP116,117. These methods significantly expand mobility options for individuals with severe motor impairments, highlighting the growing potential of EEG-based smart wheelchairs to foster greater independence and mobility.

Beyond simplifying control, smart wheelchairs integrate real-time physiological monitoring to assess user health and adapt assistance levels accordingly118 (Fig. 4f). Embedded sensors track indicators such as heart rate, respiratory rate, and muscle fatigue119,120. ECG and PPG121 sensors track cardiovascular activity, detecting issues such as arrhythmia or sudden hypotension. sEMG sensors122 assess muscle fatigue, guiding control adjustment to prevent overexertion. Additionally, accelerometers and gyroscopes support fall and seizure detection by recognizing abrupt, abnormal movements and triggering automatic alerts123,124,125,126. In shared control frameworks, the user and the system share control authority so that the user can achieve their goals safely within the environment127. When the sensors detect signs of fatigue or reduced engagement, the system can temporarily increase its autonomy to lessen user effort. This dynamic adjustment minimizes physical and cognitive strain, supporting safer and more sustainable use128. With machine learning integration, these systems are also advancing toward predictive analytics, enabling early detection of health risks and proactive assistance.
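The adaptive shared-control idea can be sketched as linear blending of user and system velocity commands, with the system’s share of authority rising as a fatigue or disengagement index increases. Linear blending is only one arbitration scheme; the fatigue index (assumed to be normalized to [0, 1] from sensor data), the authority bounds, and the (linear, angular) velocity command format are hypothetical.

```python
def autonomy_level(fatigue, low=0.2, high=0.8):
    """System share of control authority for a fatigue/disengagement index in [0, 1]."""
    fatigue = min(max(fatigue, 0.0), 1.0)
    return low + (high - low) * fatigue


def blend(user_cmd, system_cmd, alpha):
    """Linear arbitration of (linear m/s, angular rad/s) commands; alpha is the system's share."""
    return tuple(alpha * s + (1 - alpha) * u for u, s in zip(user_cmd, system_cmd))


if __name__ == "__main__":
    user = (1.0, 0.0)     # user pushes straight ahead
    planner = (0.5, 0.3)  # hypothetical planner output veering around an obstacle
    for fatigue in (0.0, 0.5, 1.0):
        a = autonomy_level(fatigue)
        print(fatigue, round(a, 2), blend(user, planner, a))
```

Keeping `high` below 1 preserves some user authority even at maximum fatigue, which reflects the shared-control goal of assisting rather than taking over.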

Despite encouraging progress, smart wheelchairs still face challenges related to user comfort during extended use, power efficiency, signal reliability, and individualized system calibration119,129,130. Overcoming these limitations is essential for broader adoption.

Non-visual navigation: multisensory fusion for real-time environmental interaction

With ~285 million people worldwide living with vision impairment, including 39 million who are blind131, independent navigation remains a significant challenge. Suitable wearable technologies are needed to deliver real-time spatial awareness and enhance environmental interaction. Modern wearable navigation systems integrate real-time sensing, AI-driven data processing, and intuitive feedback to detect, interpret, and communicate spatial information, improving user safety and enabling more autonomous mobility132 (Fig. 4g,h).

Effective wearable navigation systems typically involve three key processes: environmental perception, real-time data interpretation, and non-visual user feedback. Environmental perception relies on sensors such as red-green-blue-depth (RGB-D) cameras133,134,135,136,137, time-of-flight sensors138,139, acoustic sensors140,141, IMUs142,143,144, electromagnetic sensors145, and light sensors146 to detect objects, movement, and navigation cues such as crosswalks and path boundaries147 (Fig. 4g,h). Real-time interpretation employs algorithms, often AI-based, that rapidly analyze sensor data to determine the user's precise location, obstacle distances, and optimal navigation routes148,149,150. Non-visual feedback, such as auditory signals or haptic (vibrational or tactile) cues, then guides users safely through environments without relying on vision141,151,152. Many systems combine both feedback modalities to remain usable across diverse environments153, for example by relying on haptic cues in noisy urban settings where auditory signals may be less effective154.
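As a rough illustration of how the interpretation and feedback stages might connect, the sketch below maps the nearest obstacle distance to a cue intensity and switches to haptic output when ambient noise is high. The distance limits (0.5 m and 3 m), the 70 dB noise threshold and all function names are hypothetical, and the perception stage is assumed to have already produced obstacle distances.

```python
from __future__ import annotations


def cue_intensity(distance_m: float, near_m: float = 0.5, far_m: float = 3.0) -> float:
    """Map obstacle distance to cue intensity in [0, 1]: the closer, the stronger."""
    if distance_m <= near_m:
        return 1.0
    if distance_m >= far_m:
        return 0.0
    return (far_m - distance_m) / (far_m - near_m)  # linear ramp between the limits


def choose_modality(ambient_db: float, noise_threshold_db: float = 70.0) -> str:
    """Switch to haptic cues when ambient noise is likely to mask auditory ones."""
    return "haptic" if ambient_db >= noise_threshold_db else "audio"


def feedback(obstacle_distances_m: list[float], ambient_db: float) -> tuple[str, float]:
    """Interpretation -> feedback for one sensor frame.

    Takes distances already extracted by the perception stage and returns the
    modality and intensity of the cue for the nearest obstacle.
    """
    modality = choose_modality(ambient_db)
    if not obstacle_distances_m:
        return modality, 0.0  # nothing detected: no cue
    return modality, cue_intensity(min(obstacle_distances_m))


print(feedback([2.0, 1.75, 4.0], ambient_db=80.0))  # noisy street
print(feedback([0.4], ambient_db=45.0))             # quiet indoor corridor
```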

Wearable navigation devices have been validated in real-world applications, with studies reporting fewer navigation errors and greater user confidence133,135,136,137,139,143,144,145,147,155,156,157,158,159,160,161,162. Several commercially available systems are already in use: Sunu Band163 and BuzzClip164 employ sonar-based proximity detection, whereas OrCam MyEye165 uses AI and computer vision to recognize objects, text, and faces and delivers spoken descriptions of the surrounding environment.

Despite technological advancements, current wearable navigation systems still face limitations such as high cost, restricted performance in adverse environmental conditions (e.g., darkness, fog, rain), limited battery life, and a steep learning curve132. Additionally, most devices require precise calibration and consistent connectivity, potentially complicating their use151. Addressing these issues will require ongoing progress in AI137, sensor miniaturization151, and user-centered design166,167. Enhancing real-time scene comprehension and developing predictive navigation models can improve responsiveness by anticipating movement and suggesting timely adjustments. Moreover, adaptive feedback tailored to user preferences and environmental context could significantly improve usability. With these improvements, wearable navigation systems hold the potential to deliver more autonomous and precise mobility solutions, bridging critical gaps left by conventional aids for the visually impaired.
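The simplest form of predictive model extrapolates obstacle motion at constant relative velocity and warns when the predicted time to collision falls below a horizon. The sketch below shows only that baseline, assuming relative positions and velocities are already available from the perception stage; the 0.5 m safety radius, the 3 s horizon and the function names are illustrative assumptions, and learned trajectory predictors could replace the constant-velocity assumption.

```python
import math


def time_to_collision(rel_x: float, rel_y: float, vel_x: float, vel_y: float,
                      radius_m: float = 0.5) -> float:
    """Seconds until an obstacle comes within `radius_m` of the user.

    (rel_x, rel_y): obstacle position relative to the user, in metres.
    (vel_x, vel_y): obstacle velocity relative to the user, in m/s,
    assumed constant over the prediction horizon.
    Returns math.inf when no collision is predicted.
    """
    # Smallest t >= 0 with |p + v t| = radius  ->  a t^2 + b t + c = 0
    a = vel_x ** 2 + vel_y ** 2
    b = 2.0 * (rel_x * vel_x + rel_y * vel_y)
    c = rel_x ** 2 + rel_y ** 2 - radius_m ** 2
    if c <= 0.0:
        return 0.0        # already inside the safety radius
    if a == 0.0:
        return math.inf   # no relative motion
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return math.inf   # paths never come that close
    t = (-b - math.sqrt(disc)) / (2.0 * a)  # earlier root = entry time
    return t if t >= 0.0 else math.inf      # negative: obstacle is moving away


def should_warn(ttc_s: float, horizon_s: float = 3.0) -> bool:
    """Issue an anticipatory cue when a collision is predicted within the horizon."""
    return ttc_s <= horizon_s


# Obstacle 3 m ahead, approaching at 1 m/s.
print(time_to_collision(3.0, 0.0, -1.0, 0.0), should_warn(time_to_collision(3.0, 0.0, -1.0, 0.0)))
```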

Outlook

Current landscape and unmet needs

Ageing-related mobility decline constitutes a societal-scale challenge that complements disease- and injury-related rehabilitation needs. According to the World Population Prospects 2024 Revision, the global population aged 65 and over reached ~809 million in 2023168. Projections further indicate that by 2035 China alone will have over 300 million citizens above 65 years of age, accounting for more than 20% of its population169. These demographic shifts underscore the urgency of addressing ageing alongside clinical rehabilitation. Clinical disorders represent urgent scenarios requiring therapeutic devices, while ageing reflects a broader demographic demand for long-term mobility support. Regulatory frameworks further highlight this distinction: post-stroke exoskeletons are typically subject to stringent medical device approval, whereas fall-prevention sensors or balance-support exoskeletons for older adults may be classified as wellness or assistive devices with fewer regulatory barriers. Importantly, technologies developed for rehabilitation can serve as a foundation for ageing-related applications. For example, a wearable hip exoskeleton validated in post-stroke gait training, where it improved gait parameters and muscle effort, has subsequently been shown to support daily physical activity and gait exercise in older adults. Such shared sensing and control modules can be leveraged to reduce development costs and facilitate broader adoption across populations170,171.

The global burden of mobility-related impairments remains substantial and unevenly distributed. Current solutions, such as robotic exoskeletons, prosthetics, smart wheelchairs, and non-visual navigation systems, have shown significant promise in restoring mobility and autonomy across diverse settings, including rehabilitation clinics and home-based care. However, many of these systems face persistent technical limitations that hinder widespread adoption. Sensor data can be susceptible to distortion and noise10, while limited battery life10,172 makes it challenging to maintain a continuous power supply for long-term monitoring. A further challenge lies in inequalities in sensing accuracy and accessibility across populations, particularly in modalities such as EEG173, PPG174, and ECG175. Participatory co-design is also critical for the successful development and adoption of assistive mobility technologies176. By involving users directly, co-design helps ensure that solutions are usable, sustainable, and aligned with real-world needs176,177. For example, Biggs et al. demonstrated how participatory workshops with blind and low-vision travelers refined non-visual navigation cues to better fit everyday wayfinding practices, underscoring the value of user involvement in shaping technical features178. The Selection–Optimization–Compensation (SOC) framework complements this approach, highlighting that technologies should not only compensate for functional loss but also optimize residual abilities. In exoskeleton research, human-in-the-loop optimization strategies exemplify this principle by iteratively tuning assistance profiles to individual gait dynamics, thereby enhancing both efficiency and safety179. Similarly, navigation systems can be designed to guide users along safer or more accessible routes180,181,182.
Combining co-design with SOC principles has the potential to strengthen user acceptance, destigmatize device use, and expand the role of these systems in supporting both recovery and proactive adaptation.
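Human-in-the-loop optimization, mentioned above for exoskeletons, can be sketched as a loop that proposes assistance parameters, measures a cost on the user, and keeps improvements. Published studies commonly use methods such as CMA-ES or Bayesian optimization; the sketch below swaps in a simpler (1+1) evolution strategy for brevity. The parameter names, the step size and `simulated_cost` (a made-up stand-in for a slow, noisy measurement such as metabolic cost) are all assumptions.

```python
from __future__ import annotations

import random
from typing import Callable, Sequence


def hil_optimize(measure_cost: Callable[[Sequence[float]], float],
                 start: Sequence[float], step: float = 0.1,
                 trials: int = 40, seed: int = 0) -> tuple[list[float], float]:
    """(1+1) evolution strategy over assistance parameters.

    Each trial perturbs the best-so-far parameters, measures the cost on the
    user, and keeps the candidate only if the cost improves.
    """
    rng = random.Random(seed)
    best = list(start)
    best_cost = measure_cost(best)
    for _ in range(trials):
        candidate = [p + rng.gauss(0.0, step) for p in best]
        cost = measure_cost(candidate)  # in practice: one walking bout on the device
        if cost < best_cost:
            best, best_cost = candidate, cost
    return best, best_cost


def simulated_cost(params: Sequence[float]) -> float:
    """Hypothetical stand-in for a measured cost (e.g., metabolic rate).

    A smooth bowl with a made-up optimum at peak torque 0.6 and onset timing 0.5
    (normalised units); real measurements would be noisy and slow to obtain.
    """
    peak_torque, onset_timing = params
    return (peak_torque - 0.6) ** 2 + (onset_timing - 0.5) ** 2 + 1.0


params, cost = hil_optimize(simulated_cost, start=[0.2, 0.2])
print(params, cost)
```

Because each real trial costs the user time and effort, practical implementations favour sample-efficient optimizers and small trial budgets; the greedy acceptance rule here is kept only for readability.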

Contextual metadata, such as environmental conditions, spatial information, and user preferences, can enhance wearable mobility systems by enabling adaptive navigation, informed behavioral adjustments, and improved safety and usability183. For instance, an outdoor navigation system for blind users integrates GPS with cartographic data to deliver spatialized audio cues, demonstrating how environmental and spatial metadata can be translated into real-time, user-friendly guidance184. Beyond environmental data, behavioral and psychological information such as ecological momentary assessment (EMA) can also provide valuable context for system design, supporting the development of more adaptive technologies and facilitating participatory co-design with users185,186.
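To make the GPS-to-audio example concrete, the sketch below computes the great-circle bearing to the next waypoint, expresses it relative to the user's heading, and maps that angle to a left/right stereo pan. It assumes heading comes from a compass or IMU, and the function names are hypothetical. Simple panning cannot distinguish front from back, so this sketch pins targets behind the user to the nearer side; a full spatial-audio renderer (for example, one based on head-related transfer functions) would not need that shortcut.

```python
import math


def initial_bearing(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle initial bearing from point 1 to point 2, in degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return (math.degrees(math.atan2(x, y)) + 360.0) % 360.0


def relative_angle(bearing_deg: float, heading_deg: float) -> float:
    """Signed angle of the waypoint relative to the user's heading, in (-180, 180]."""
    diff = (bearing_deg - heading_deg) % 360.0
    return diff - 360.0 if diff > 180.0 else diff


def stereo_pan(rel_deg: float) -> float:
    """Pan in [-1 (left), 1 (right)]; targets behind are pinned to the nearer side."""
    clamped = max(-90.0, min(90.0, rel_deg))
    return math.sin(math.radians(clamped))


# Hypothetical fix: user facing north, next waypoint due east on the equator.
bearing = initial_bearing(0.0, 0.0, 0.0, 0.001)
pan = stereo_pan(relative_angle(bearing, heading_deg=0.0))
print(bearing, pan)
```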

Beyond the technologies discussed above, other commonly used assistive devices, such as rollators, have not yet been extensively studied in conjunction with wearable sensors187,188. Future advancements that integrate these assistive devices with wearable sensors could enable better monitoring of users’ movement patterns, enhance risk detection, and provide more personalized support, thereby improving their overall effectiveness as mobility aids188.

In addition, each technology faces its own specific issues. Exoskeletons are constrained not only by delays between intention recognition and actuation but also by their weight, bulk, and limited adaptability across diverse daily activities88. Prosthetics suffer from delays in integrating user intention and sensory feedback, as well as challenges in achieving naturalistic multi-degree-of-freedom control189. Non-visual navigation systems require intuitive, low-burden feedback in complex environments and remain sensitive to environmental variability such as lighting or weather166. Smart wheelchairs are restricted by limited command sets, high cognitive demands, and difficulties in operating within dynamic, cluttered environments.

Shared challenges include high power consumption, susceptibility to sensor noise, privacy concerns associated with sensitive data, and the need for extensive user customization and training, which together hinder broader adoption88,166,189. Addressing these issues requires both cross-cutting and device-specific strategies. For example, deep learning and computer vision methods can improve environmental perception for non-visual navigation and smart wheelchairs, enhance intention recognition for exoskeletons and prosthetics, and help filter sensor noise45,190,191. Advances in low-power electronic components such as memristors can substantially reduce energy consumption, and edge processing architectures can protect user privacy by enabling on-device computation without reliance on remote servers192,193,194. Finally, embedding co-creation design principles throughout development can address user customization and training needs, ensuring that systems are not only technically robust but also tailored to everyday practices and user acceptance, thereby optimizing the overall product experience176,177. Together, these advances reflect a paradigm shift in wearables for assisted mobility, from isolated, function-specific devices to intelligent, connected, and user-centric systems.

Additional barriers to real-world use include high costs, short battery life, discomfort, and a lack of widely available commercial products. Addressing usability, affordability, and continuous operation is essential to support broader adoption, especially in low-resource settings.

Although emerging technologies continue to expand what wearable systems can do, and their potential for mobility enhancement is widely recognized, there is no unified framework for evaluating their effectiveness, usability, and long-term impact. Exoskeletons, prostheses, smart wheelchairs, and non-visual navigation systems each rely on their own task-specific evaluation methods, and there is currently no universally accepted standard for assessing system performance. The main difficulty in establishing a unified evaluation framework is the high heterogeneity of user populations and needs. Widely used instruments, such as the Jebsen–Taylor Hand Function Test and the Box and Block Test, provide objective scores on specific functional dimensions and normative data for clinical or research use, but they fall short of capturing requirements across diverse devices and user groups195. For instance, in upper-limb prosthetic rehabilitation, Resnik et al. found that these two tests show variable responsiveness across different levels of amputation195. Moreover, assistive devices are typically highly personalized, with numerous adjustable parameters. Even for a single prosthetic device, the interpretation of performance metrics (e.g., when combining measures of control, gait, and user experience with varying weightings) is still evolving, highlighting the immaturity of internal evaluation frameworks and the challenge of cross-industry harmonization196. Data collection and sharing also face ethical and privacy barriers.
Studies in wearable and digital health repeatedly report users’ reluctance to share sensitive physiological and behavioral data, inconsistencies in policy implementation, and the lack of unified inter-institutional data-sharing agreements197. These constraints directly limit the availability of open datasets and cross-context benchmarks, thereby hindering the development of a standardized framework. Establishing standardized evaluation frameworks is therefore an important direction for future work and requires joint input from researchers, clinicians, and end-users throughout the design and implementation process. Critical questions, such as how to optimize devices for specific types of mobility impairments, how to balance performance with user comfort, and how to ensure the affordability and accessibility of these technologies, remain underexplored.
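The effect of weighting choices on evaluation can be illustrated with a weighted composite score. In the sketch below, the metric names, scores and weights are hypothetical and are not drawn from any cited study; the point is only that two defensible weighting schemes can rank the same two prosthetic configurations in opposite orders, which is one reason a shared framework is hard to agree on.

```python
from __future__ import annotations


def composite_score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of metrics that are assumed to be normalised to [0, 1]."""
    total = sum(weights.values())
    return sum(w * metrics[name] for name, w in weights.items()) / total


# Hypothetical normalised results for two prosthetic configurations
# (illustrative numbers only, not data from any cited study).
config_a = {"control": 0.9, "gait": 0.6, "user_experience": 0.5}
config_b = {"control": 0.6, "gait": 0.7, "user_experience": 0.9}

# Two plausible but different weighting schemes.
performance_weights = {"control": 0.5, "gait": 0.4, "user_experience": 0.1}
experience_weights = {"control": 0.2, "gait": 0.3, "user_experience": 0.5}

for label, weights in [("performance", performance_weights), ("experience", experience_weights)]:
    a, b = composite_score(config_a, weights), composite_score(config_b, weights)
    print(f"{label}: A={a:.2f} B={b:.2f} -> {'A' if a > b else 'B'} ranks first")
```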

Ongoing interdisciplinary research and continued technical improvements will be essential to overcome these barriers. By fostering collaboration across engineering, medicine, and user-centered design, the next generation of wearable assistive technologies is expected to move beyond technical innovation toward greater autonomy, safety, and quality of life for users worldwide.

Human in the loop: challenges in sensorimotor-cognitive integration

Despite the sophistication of embedded sensors and control algorithms, the human brain remains a key limiting factor. Whether the goal is to augment motor capacity (as in exoskeletons), restore lost function (as in prosthetics), or replace it (as in wheelchairs), effective real-world performance depends on the user’s ability to integrate these devices into their existing sensorimotor and cognitive systems, managing novel input–output mappings and adapting to unfamiliar sensorimotor contingencies. Technologies that appear intuitive on paper may fail under real-world conditions in which users must coordinate multiple goals simultaneously. Mid-air haptic systems are one example: they often produce faint signals that demand concentrated attention and therefore underperform in complex everyday contexts198.

This integration is non-trivial. As demonstrated in upper-limb augmentation research using extra robotic digits and limbs, introducing artificial actuators—even in able-bodied users—requires the brain to recruit control strategies and sensory mappings that are not innately available199. In such cases, users must “borrow” neurocognitive, sensory and motor resources from other body parts (e.g., toes controlling a robotic thumb200), leading to what has been termed the resource allocation problem: the cognitive and neural cost of operating a new device without compromising existing function199. For example, controlling a robotic thumb using the toes may impact fundamental lower limb function, as recent evidence shows that both actively using and merely wearing the toe-controlled robotic thumb can lead to measurable declines in balance performance, suggesting competition with the toes’ original role in postural stability201.

Crucially, this challenge is not unique to augmentation202. It generalizes to assistive mobility technologies, where the user must continuously plan, monitor, and adapt their interaction with the device under conditions of physical impairment, environmental uncertainty, and cognitive load. The human sensorimotor system has evolved efficient strategies, such as sensory gating, attenuation203, and active inference204, to reduce redundant input and prioritize error signals205. Artificial feedback systems often bypass these mechanisms, delivering non-adaptive, uniformly salient signals. Even systems designed for simplicity, such as vibrotactile or visual feedback cues, may become counterproductive if they are not congruent with the brain’s filtering and predictive mechanisms. For example, continuous vibrotactile cues can induce sensory overload or desensitization, leading users to ignore the feedback altogether198.
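One simple way to avoid uniformly salient, continuous cues is to gate feedback on the error signal. The sketch below emits a vibrotactile cue only when a tracking error exceeds a threshold, with a refractory interval between cues. It is a hypothetical heuristic, not a model of sensory gating or active inference, and the threshold, refractory length and error values are illustrative assumptions.

```python
from __future__ import annotations


def error_gated_cues(errors: list[float], threshold: float = 0.2,
                     refractory: int = 3) -> list[bool]:
    """Decide, sample by sample, whether to emit a vibrotactile cue.

    A cue fires only when the absolute tracking error exceeds `threshold`, and
    no more than once every `refractory` samples, instead of vibrating continuously.
    """
    cues: list[bool] = []
    last_cue = -refractory  # lets the very first sample fire if needed
    for i, err in enumerate(errors):
        fire = abs(err) > threshold and i - last_cue >= refractory
        if fire:
            last_cue = i
        cues.append(fire)
    return cues


# Hypothetical heading errors (normalised) over seven samples.
errors = [0.1, 0.3, 0.35, 0.4, 0.5, 0.1, 0.3]
cues = error_gated_cues(errors)
print(sum(abs(e) > 0.2 for e in errors), "samples above threshold,", sum(cues), "cues sent")
```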

Paradoxically, the challenge of neurocognitive compatibility for motor interfaces might grow with the sophistication of the wearable interface, such as when artificial systems generate high-dimensional or ambiguous data streams that must be interpreted in real time. Even interfaces designed to be highly intuitive may falter if they approximate—but fail to fully replicate—natural sensorimotor mappings206. For example, near-biomimetic sensorimotor interfaces can produce mismatches between expected and actual sensations, triggering challenges analogous to the “uncanny valley” in artificial vision207.

In addition, temporal delays, whether introduced during sensing, processing, or actuation, pose a critical barrier to seamless integration. The human sensorimotor system relies on tight timing loops, often on the order of tens of milliseconds, to predict and correct movement. For example, delays as short as 50 ms in haptic cues have been shown to distort perceived stiffness and object cohesion208. Beyond disrupting motor control, delays exceeding this threshold can also erode the user’s sense of agency, making actions feel disconnected from intention209. This issue is especially acute for interfaces that depend on slow signal acquisition (or accumulation) and processing, such as EEG or fNIRS, where usable control signals may take hundreds of milliseconds (EEG) to several seconds (fNIRS, given the slow hemodynamic response) to emerge. These lags impair the fluidity of control and can undermine the user’s confidence in, and sense of ownership over, the device’s actions.
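A latency budget makes the timing argument above concrete: stage delays add up, and a sliding-window decoder adds the post-onset evidence it needs plus, in the worst case, one decision interval. In the sketch below, the 50 ms budget reuses the figure cited above purely for illustration, and all stage timings and function names are hypothetical rather than measured values for any particular device.

```python
from __future__ import annotations


def decoder_lag_ms(evidence_ms: float, hop_ms: float, processing_ms: float) -> float:
    """Worst-case delay from intent onset to a decoded command.

    evidence_ms:   post-onset signal the decoder needs before the change is detectable
    hop_ms:        interval between successive decisions of a sliding-window decoder
    processing_ms: feature extraction plus classification time per decision
    """
    # The evidence is complete at onset + evidence_ms; in the worst case this falls
    # just after a decision tick, so the decoder waits one full hop for the next one.
    return evidence_ms + hop_ms + processing_ms


def end_to_end_ms(stages_ms: dict[str, float]) -> float:
    """Delays along the sensing -> decoding -> actuation chain add up."""
    return sum(stages_ms.values())


def meets_budget(stages_ms: dict[str, float], budget_ms: float = 50.0) -> bool:
    """True if the summed loop delay fits within the (illustrative) budget."""
    return end_to_end_ms(stages_ms) <= budget_ms


# Hypothetical stage timings (ms), chosen only to illustrate the arithmetic.
imu_trigger = {"acquisition": 5.0, "decoding": decoder_lag_ms(20.0, 10.0, 5.0), "actuation": 10.0}
eeg_trigger = {"acquisition": 5.0, "decoding": decoder_lag_ms(300.0, 50.0, 20.0), "actuation": 10.0}

for name, stages in [("IMU", imu_trigger), ("EEG", eeg_trigger)]:
    print(name, end_to_end_ms(stages), "ms, within 50 ms:", meets_budget(stages))
```

The decoding term dominates for the slower modality, which is why shortening the evidence window or decision hop often matters more than speeding up actuation.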

Finally, we must acknowledge that neurocognitive integration is context-dependent198. Physiological and cognitive factors such as fatigue, stress, cognitive load, and divided attention can significantly alter how an individual acts and reacts. A haptic cue that is helpful during training in a lab may become irrelevant or even misleading when navigating a crowded, noisy urban environment. The cognitive effort required to modulate neural signals for EEG- and fNIRS-based control may be too costly when users must multitask in everyday settings. Indeed, mobile fNIRS studies show that under dual-task conditions, performance can decline sharply while prefrontal activation plateaus or even drops210. Thus, rather than focusing solely on biomimicry or technical fidelity, future co-creation designs must prioritize technologies that adapt to human cognitive variability and offer flexible, context-sensitive modes of interaction.

Conclusion

This perspective reviews the current status and prospects of the rapidly evolving field of wearable technologies for assisted mobility, with the aim of guiding its future development. In the context of global population ageing and recurring economic slowdowns, there is a clear need for real-world wearable mobility technologies to be more accessible, affordable, inclusive, intelligent, user-centric, and personalized, and to be able to effectively “close the human loop”. Given the rapid progress in wearable technologies, co-creation design, and medical engineering, there is strong reason to believe that meaningful improvements in assisted mobility, and consequently in the independence and quality of life of individuals with severe motor impairments, can realistically be achieved in real-world settings within the next decade.

References

World Health Organization. Disability. World Health Organization https://www.who.int/news-room/fact-sheets/detail/disability-and-health (2023).

United Nations Statistics Division. United Nations Disability Statistics Database. United Nations https://unstats.un.org/unsd/demographic-social/sconcerns/disability/statistics (2018).

Emerson, E., Fortune, N., Llewellyn, G. & Stancliffe, R. Loneliness, social support, social isolation and wellbeing among working age adults with and without disability: cross-sectional study. Disabil. Health J. 14, 100965 (2021).

van Dam, K., Gielissen, M., Bles, R., van der Poel, A. & Boon, B. The impact of assistive living technology on perceived independence of people with a physical disability in executing daily activities: a systematic literature review. Disabil. Rehabil. Assist. Technol. 19, 1262–1271 (2024).

Desideri, L., Salatino, C. & Borgnis, F. Assistive Technology Service Delivery Outcome Assessment: From Challenges to Standards. in The Palgrave Encyclopedia of Disability 1–13 (Springer Nature Switzerland, 2024).

Khoshmanesh, F., Thurgood, P., Pirogova, E., Nahavandi, S. & Baratchi, S. Wearable sensors: At the frontier of personalised health monitoring, smart prosthetics and assistive technologies. Biosens. Bioelectron. 176, 112946 (2021).

Caro, C. C., Costa, J. D. & Cezar da Cruz, D. M. The use of mobility assistive devices and the functional independence in stroke patients. Braz. J. Occup. Ther. 26, 558–568 (2018).

Liu, S., Zhang, J., Zhang, Y. & Zhu, R. A wearable motion capture device able to detect dynamic motion of human limbs. Nat. Commun. 11, 5615 (2020).

Ouyang, W. et al. A wireless and battery-less implant for multimodal closed-loop neuromodulation in small animals. Nat. Biomed. Eng. 7, 1252–1269 (2023).

Iqbal, S. M. A., Mahgoub, I., Du, E., Leavitt, M. A. & Asghar, W. Advances in healthcare wearable devices. npj Flex Electron. 5, 9 (2021).

de Zambotti, M. et al. State of the science and recommendations for using wearable technology in sleep and circadian research. Sleep 47, zsad325 (2024).

Canali, S., Schiaffonati, V. & Aliverti, A. Challenges and recommendations for wearable devices in digital health: Data quality, interoperability, health equity, fairness. PLOS Digital Health 1, e0000104 (2022).

Rebelo, A., Martinho, D. V., Valente-dos-Santos, J., Coelho-e-Silva, M. J. & Teixeira, D. S. From data to action: a scoping review of wearable technologies and biomechanical assessments informing injury prevention strategies in sport. BMC Sports Sci. Med Rehabil. 15, 169 (2023).

Doherty, C., Baldwin, M., Keogh, A., Caulfield, B. & Argent, R. Keeping pace with wearables: a living umbrella review of systematic reviews evaluating the accuracy of consumer wearable technologies in health measurement. Sports Med. 54, 2907–2926 (2024).

Zhang, F. & Huang, H. Source selection for real-time user intent recognition toward volitional control of artificial legs. IEEE J. Biomed. Health Inf. 17, 907–914 (2013).

Gu, C., Lin, W., He, X., Zhang, L. & Zhang, M. IMU-based motion capture system for rehabilitation applications: a systematic review. Biomim. Intell. Robot. 3, 100097 (2023).

Halford, J. J., Sabau, D., Drislane, F. W., Tsuchida, T. N. & Sinha, S. R. American Clinical Neurophysiology Society Guideline 4: Recording clinical EEG on digital media. Neurodiagn. J. 56, 261–265 (2016).

Li, R., Potter, T., Huang, W. & Zhang, Y. Enhancing Performance of a Hybrid EEG-fNIRS System Using Channel Selection and Early Temporal Features. Front. Hum. Neurosci. 11 (2017).

Pinti, P., Scholkmann, F., Hamilton, A., Burgess, P. & Tachtsidis, I. Current Status and Issues Regarding Pre-processing of fNIRS Neuroimaging Data: An Investigation of Diverse Signal Filtering Methods Within a General Linear Model Framework. Front. Hum. Neurosci. 12 (2019).

Merletti, R. et al. Standards for reporting EMG data. J. Electromyogr. Kinesiol. 9, III–IV (1999).

Pashaei, A., Yazdchi, M. R. & Marateb, H. R. Designing a low-noise, high-resolution, and portable four channel acquisition system for recording surface electromyographic signal. J. Med. Signals Sens. 5, 245–252 (2015).

Belkhiria, C., Boudir, A., Hurter, C. & Peysakhovich, V. EOG-based human–computer interface: 2000–2020 review. Sensors 22, 4914 (2022).

VectorNav Technologies LLC. Inertial Navigation Primer: Specifications & Error Budgets — IMU Specifications. VectorNav Technologies LLC https://www.vectornav.com/resources/inertial-navigation-primer/specifications--and--error-budgets/specs-imuspecs.

Inertial Labs, Inc. IMU: Inertial Measurement Units (product page). Inertial Labs, Inc. https://inertiallabs.com/products/imu-inertial-measurement-units/.

Hamid, H. et al. Analyzing classification performance of fNIRS-BCI for gait rehabilitation using deep neural networks. Sensors 22, 1932 (2022).

Alarcao, S. M. & Fonseca, M. J. Emotions recognition using EEG signals: a survey. IEEE Trans. Affect. Comput. 10, 374–393 (2019).

Cohen, D. Magnetoencephalography: detection of the brain’s electrical activity with a superconducting magnetometer. Science 175, 664–666 (1972).

DeYoe, E. A., Bandettini, P., Neitz, J., Miller, D. & Winans, P. Functional magnetic resonance imaging (FMRI) of the human brain. J. Neurosci. Methods 54, 171–187 (1994).

Mellor, S. et al. Real-time, model-based magnetic field correction for moving, wearable MEG. Neuroimage 278, 120252 (2023).

Kueper, N., Kim, S. K. & Kirchner, E. A. Avoidance of specific calibration sessions in motor intention recognition for exoskeleton-supported rehabilitation through transfer learning on EEG data. Sci. Rep. 14, 16690 (2024).

Talukdar, U., Hazarika, S. M. & Gan, J. Q. Motor imagery and mental fatigue: inter-relationship and EEG based estimation. J. Comput. Neurosci. 46, 55–76 (2019).

Apicella, A., Arpaia, P., Frosolone, M. & Moccaldi, N. High-wearable EEG-based distraction detection in motor rehabilitation. Sci. Rep. 11, 5297 (2021).

Zhang, X. et al. A novel brain-controlled prosthetic hand method integrating AR-SSVEP augmentation, asynchronous control, and machine vision assistance. Heliyon 10, e26521 (2024).

Zheng, X. et al. Anti-fatigue performance in SSVEP-based visual acuity assessment: a comparison of six stimulus paradigms. Front. Hum. Neurosci. 14, 301 (2020).

Sattar, N. Y. et al. fNIRS-based upper limb motion intention recognition using an artificial neural network for transhumeral amputees. Sensors 22, 726 (2022).

Chen, J. et al. Simultaneous mental fatigue and mental workload assessment with wearable high-density diffuse optical tomography. IEEE Trans. Neural Syst. Rehabil. Eng. 1–1 https://doi.org/10.1109/TNSRE.2025.3551676 (2025).

Cui, W., Lin, K., Liu, G., Sun, Y. & Cai, J. A wireless integrated EEG–fNIRS system for brain function monitoring. IEEE Sens. J. 24, 2125–2133 (2024).

Tait, P. J. et al. MoBI: Mobile Brain/Body Imaging to Understand Walking and Balance. in Locomotion and Posture in Older Adults 15–38 (Springer Nature Switzerland, Cham, 2024).

Chen, J. et al. fNIRS-EEG BCIs for motor rehabilitation: a review. Bioengineering 10, 1393 (2023).

Reaz, M. B. I., Hussain, M. S. & Mohd-Yasin, F. Techniques of EMG signal analysis: detection, processing, classification and applications. Biol. Proced. Online 8, 11–35 (2006).

Barea, R., Boquete, L., Mazo, M. & López, E. System for assisted mobility using eye movements based on electrooculography. IEEE Trans. Neural Syst. Rehabil. Eng. 10, 209–218 (2002).

Ortiz, M. et al. An EEG database for the cognitive assessment of motor imagery during walking with a lower-limb exoskeleton. Sci. Data 10, 343 (2023).

Noronha, B. et al. Soft, lightweight wearable robots to support the upper limb in activities of daily living: a feasibility study on chronic stroke patients. IEEE Trans. Neural Syst. Rehabil. Eng. 30, 1401–1411 (2022).

Welihinda, D. V. D. S. et al. EEG and EMG-based human-machine interface for navigation of mobility-related assistive wheelchair (MRA-W). Heliyon 10, e27777 (2024).

Lee, J. et al. Intelligent upper-limb exoskeleton integrated with soft bioelectronics and deep learning for intention-driven augmentation. npj Flex. Electron. 8, 11 (2024).

Kim, D., Min, J. & Ko, S. H. Recent developments and future directions of wearable skin biosignal sensors. Adv. Sens. Res. 3, 2 (2024).

Yang, J., Shibata, K., Weber, D. & Erickson, Z. High-density electromyography for effective gesture-based control of physically assistive mobile manipulators. npj Robot. 3, 2 (2025).

Driscoll, N. et al. MXene-infused bioelectronic interfaces for multiscale electrophysiology and stimulation. Sci. Transl. Med. 13, eabf8629 (2021).

Lee, H. et al. Stretchable array electromyography sensor with graph neural network for static and dynamic gestures recognition system. npj Flex. Electron. 7, 20 (2023).

Kim, H. et al. Skin preparation–free, stretchable microneedle adhesive patches for reliable electrophysiological sensing and exoskeleton robot control. Sci. Adv. 10, eadk5260 (2024).

Zhu, L. et al. Wearable near-eye tracking technologies for health: a review. Bioengineering 11, 738 (2024).

Tharwat, M., Shalabi, G., Saleh, L., Badawoud, N. & Alfalati, R. Eye-Controlled Wheelchair. in 5th International Conference on Computing and Informatics, ICCI 2022 97–101 (Institute of Electrical and Electronics Engineers Inc., 2022).

Karrenbach, M., Boe, D., Sie, A., Bennett, R. & Rombokas, E. Improving automatic control of upper-limb prosthesis wrists using gaze-centered eye tracking and deep learning. IEEE Trans. Neural Syst. Rehabil. Eng. 30, 340–349 (2022).

Sunny, M. S. H. et al. Eye-gaze control of a wheelchair mounted 6DOF assistive robot for activities of daily living. J. Neuroeng. Rehabil. 18, 173 (2021).

Ma, J., Zhang, Y., Cichocki, A. & Matsuno, F. A novel EOG/EEG hybrid human–machine interface adopting eye movements and ERPs: application to robot control. IEEE Trans. Biomed. Eng. 62, 876–889 (2015).

Frey, S. et al. GAPses: Versatile smart glasses for comfortable and fully-dry acquisition and parallel ultra-low-power processing of EEG and EOG. IEEE Trans. Biomed. Circuits Syst. 1–11 (2024).

Kosmyna, N., El Adl, K. & Kim, M. Wearable Pair of EEG, EOG and fNIRS Glasses for Cognitive Workload Detection. in 2024 IEEE 20th International Conference on Body Sensor Networks (BSN) https://doi.org/10.1109/BSN63547.2024.10780518 (IEEE, 2024).

Nann, M. et al. Restoring activities of daily living using an EEG/EOG-controlled semiautonomous and mobile whole-arm exoskeleton in chronic stroke. IEEE Syst. J. 15, 2314–2321 (2021).

Pillai, B. M. et al. Lower limb exoskeleton with energy-storing mechanism for spinal cord injury rehabilitation. IEEE Access 11, 133850–133866 (2023).

Manupibul, U. et al. Integration of force and IMU sensors for developing low-cost portable gait measurement system in lower extremities. Sci. Rep. 13, 10653 (2023).

Gao, S. et al. Bioinspired origami-based soft prosthetic knees. Nat. Commun. 15, 10855 (2024).

Georgarakis, A. M., Xiloyannis, M., Wolf, P. & Riener, R. A textile exomuscle that assists the shoulder during functional movements for everyday life. Nat. Mach. Intell. 4, 574–582 (2022).

Al-Ayyad, M., Owida, H. A., De Fazio, R., Al-Naami, B. & Visconti, P. Electromyography monitoring systems in rehabilitation: a review of clinical applications, wearable devices and signal acquisition methodologies. Electronics 12, 1520 (2023).

Li, W. et al. The human–machine interface design based on sEMG and motor imagery EEG for lower limb exoskeleton assistance system. IEEE Trans. Instrum. Meas. 73, 1–14 (2024).

Gordleeva, S. Y. et al. Real-Time EEG–EMG human–machine interface-based control system for a lower-limb exoskeleton. IEEE Access 8, 84070–84081 (2020).

Jiang, Y. et al. Daily assistance for amyotrophic lateral sclerosis patients based on a wearable multimodal brain–computer interface mouse. IEEE Trans. Neural Syst. Rehabil. Eng. https://doi.org/10.1109/TNSRE.2024.3520984 (2024).

Khan, H. et al. Analysis of human gait using hybrid EEG-fNIRS-based BCI system: A review. Front. Hum. Neurosci. 14, 613254 (2021).

Yang, H. et al. A lightweight prosthetic hand with 19-DOF dexterity and human-level functions. Nat. Commun. 16, 955 (2025).

Madake, J., Bhatlawande, S., Solanke, A. & Shilaskar, S. PerceptGuide: a perception driven assistive mobility aid based on self-attention and multi-scale feature fusion. IEEE Access 11, 101167–101182 (2023).

Arditti, S., Habert, F., Saracbasi, O. O., Walker, G. & Carlson, T. Tackling the Duality of Obstacles and Targets in Shared Control Systems: A Smart Wheelchair Table-Docking Example. in 2023 IEEE International Conference on Systems, Man, and Cybernetics (SMC) 4393–4398 https://doi.org/10.1109/SMC53992.2023.10393886 (IEEE, 2023).

Thøgersen, M. B. et al. User based development and test of the EXOTIC exoskeleton: Empowering individuals with tetraplegia using a compact, versatile, 5-DoF upper limb exoskeleton controlled through intelligent semi-automated shared tongue control. Sensors 22, 6919 (2022).

Shen, T., Afsar, M. R., Zhang, H., Ye, C. & Shen, X. A 3D computer vision-guided robotic companion for non-contact human assistance and rehabilitation. J. Intell. Robot. Syst. 100, 911–923 (2020).

Hu, C., Kim, D., Luo, S. & Gionfrida, L. PointGrasp: Point cloud-based grasping for tendon-driven wearable robotic applications. in IEEE RAS International Conference on Robotics and Automation (2024).

Ramanathan, M. et al. Visual Environment perception for obstacle detection and crossing of lower-limb exoskeletons. in IEEE International Conference on Intelligent Robots and Systems 12267–12274 (2022).

Zhang, B. et al. From HRI to CRI: crowd robot interaction—understanding the effect of robots on crowd motion. Int. J. Soc. Robot. 14, 631–643 (2022).

Rahman, M. M., Islam, M. M., Ahmmed, S. & Khan, S. A. Obstacle and fall detection to guide the visually impaired people with real time monitoring. SN Comput. Sci. 1, 219 (2020).

Nizamis, K. et al. Transferrable expertise from bionic arms to robotic exoskeletons: perspectives for stroke and Duchenne muscular dystrophy. IEEE Trans. Med. Robot. Bionics 1, 88–96 (2019).

Nolan, K. J. et al. Intensity Modulated Exoskeleton Gait Training Post Stroke. in Proceedings of the Annual International Conference of the IEEE Engineering in Medicine and Biology Society, EMBS https://doi.org/10.1109/EMBC40787.2023.10340452 (IEEE, 2023).

De Miguel Fernandez, J. et al. Adapted assistance and resistance training with a knee exoskeleton after stroke. IEEE Trans. Neural Syst. Rehabil. Eng. 31, 3265–3274 (2023).

Meijneke, C. et al. Symbitron exoskeleton: design, control, and evaluation of a modular exoskeleton for incomplete and complete spinal cord injured individuals. IEEE Trans. Neural Syst. Rehabil. Eng. 29, 330–339 (2021).

Dunkelberger, N., Berning, J., Dix, K. J., Ramirez, S. A. & O’Malley, M. K. Design, characterization, and dynamic simulation of the MAHI open exoskeleton upper limb robot. IEEE/ASME Trans. Mechatron. 27, 1829–1836 (2022).

Afzal, T. et al. Evaluation of muscle synergy during exoskeleton-assisted walking in persons with multiple sclerosis. IEEE Trans. Biomed. Eng. 69, 3265–3274 (2022).

Postol, N. et al. The metabolic cost of exercising with a robotic exoskeleton: a comparison of healthy and neurologically impaired people. IEEE Trans. Neural Syst. Rehabil. Eng. 28, 3031–3039 (2020).

Cosh, A. & Carslaw, H. Multiple sclerosis: symptoms and diagnosis. InnovAiT: Educ. Inspir. Gen. Pract. 7, 651–657 (2014).

Dietz, V. & Sinkjaer, T. Spastic movement disorder: impaired reflex function and altered muscle mechanics. Lancet Neurol. 6, 725–733 (2007).

Aliman, N., Ramli, R. & Amiri, M. S. Actuators and transmission mechanisms in rehabilitation lower limb exoskeletons: A review. Biomed. Tech. (Berl.) 69, 327–345 (2024).

Weber, L. M. & Stein, J. The use of robots in stroke rehabilitation: A narrative review. NeuroRehabilitation 43, 99–110 (2018).

Siviy, C. et al. Opportunities and challenges in the development of exoskeletons for locomotor assistance. Nat. Biomed. Eng. 7, 456–472 (2022).

Molinaro, D. D. et al. Task-agnostic exoskeleton control via biological joint moment estimation. Nature 635, 337–344 (2024).

Plaza, A., Hernandez, M., Puyuelo, G., Garces, E. & Garcia, E. Lower-limb medical and rehabilitation exoskeletons: A review of the current designs. IEEE Rev. Biomed. Eng. 16, 278–291 (2023).

Htet, Y., Behera, B. & Maria Joseph, F. O. Lower extremity exoskeletons: a systematic review on design, control, and sensing. Eng. Res. Express https://doi.org/10.1088/2631-8695/ada663 (2025).

Bacek, T. et al. BioMot exoskeleton—Towards a smart wearable robot for symbiotic human-robot interaction. in 2017 International Conference on Rehabilitation Robotics (ICORR) 1666–1671 https://doi.org/10.1109/ICORR.2017.8009487 (IEEE, 2017).

Li, G., Li, Z., Su, C. Y. & Xu, T. Active human-following control of an exoskeleton robot with body weight support. IEEE Trans. Cybern. 53, 7367–7379 (2023).

Tang, Q. et al. Research trends and hotspots of post-stroke upper limb dysfunction: a bibliometric and visualization analysis. Front. Neurol. 15, 1449729 (2024).

Gijbels, D. et al. The Armeo Spring as training tool to improve upper limb functionality in multiple sclerosis: a pilot study. J. Neuroeng. Rehabil. 8, 5 (2011).

Chang, S. R. et al. Myoelectric arm orthosis assists functional activities: a 3-month home use outcome report. Arch. Rehabil. Res. Clin. Transl. 5, 100279 (2023).

Zimmermann, Y., Sommerhalder, M., Wolf, P., Riener, R. & Hutter, M. ANYexo 2.0: a fully actuated upper-limb exoskeleton for manipulation and joint-oriented training in all stages of rehabilitation. IEEE Trans. Robot. 39, 2131–2150 (2023).

Romero-Sánchez, F., Menegaldo, L. L., Font-Llagunes, J. M. & Sartori, M. Editorial: Rehabilitation robotics: challenges in design, control, and real applications, volume II. Front. Neurorobot. 18, 1437717 (2024).

Barbareschi, G., Richards, R., Thornton, M., Carlson, T. & Holloway, C. Statically vs dynamically balanced gait: Analysis of a robotic exoskeleton compared with a human. in 2015 37th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC) 6728–6731 https://doi.org/10.1109/EMBC.2015.7319937 (IEEE, 2015).

Dhatrak, P., Durge, J., Dwivedi, R. K., Pradhan, H. K. & Kolke, S. Interactive design and challenges on exoskeleton performance for upper-limb rehabilitation: a comprehensive review. Int. J. Interact. Des. Manuf. (IJIDeM) https://doi.org/10.1007/s12008-024-02090-9 (2024).

Bonnechère, B. Animals as architects: building the future of technology-supported rehabilitation with biomimetic principles. Biomimetics 9, 723 (2024).

Pei, Y. et al. Consumer views of functional electrical stimulation and robotic exoskeleton in SCI rehabilitation: A mini review. Artif. Organs https://doi.org/10.1111/aor.14925 (2024).

Hussain, S. & Ficuciello, F. Advancements in soft wearable robots: a systematic review of actuation mechanisms and physical interfaces. IEEE Trans. Med. Robot. Bionics 6, 903–929 (2024).

Aqabakee, K., Abdollahi, F., Taghvaeipour, A. & Akbarzadeh-T, M.-R. Recursive generalized type-2 fuzzy radial basis function neural networks for joint position estimation and adaptive EMG-based impedance control of lower limb exoskeletons. Biomed. Signal Process. Control 100, 106791 (2025).

Xia, H. et al. Shaping high-performance wearable robots for human motor and sensory reconstruction and enhancement. Nat. Commun. 15, 1760 (2024).

Song, H. et al. Continuous neural control of a bionic limb restores biomimetic gait after amputation. Nat. Med. 30, 2010–2019 (2024).

Tran, M., Gabert, L., Hood, S. & Lenzi, T. A lightweight robotic leg prosthesis replicating the biomechanics of the knee, ankle, and toe joint. Sci. Robot. 7, eabo3996 (2022).

Manz, S. et al. A review of user needs to drive the development of lower limb prostheses. J. Neuroeng. Rehabil. 19, 119 (2022).

Gehlhar, R., Tucker, M., Young, A. J. & Ames, A. D. A review of current state-of-the-art control methods for lower-limb powered prostheses. Annu. Rev. Control 55, 142–164 (2023).

Shu, T., Herrera-Arcos, G., Taylor, C. R. & Herr, H. M. Mechanoneural interfaces for bionic integration. Nat. Rev. Bioeng. 2, 374–391 (2024).

Leaman, J. & La, H. M. A comprehensive review of smart wheelchairs: past, present, and future. IEEE Trans. Hum. Mach. Syst. 47, 486–499 (2017).

Oonishi, Y., Oh, S. & Hori, Y. A new control method for power-assisted wheelchair based on the surface myoelectric signal. IEEE Trans. Ind. Electron. 57, 3191–3196 (2010).

Mogahed, H. S. & Ibrahim, M. M. Development of a motion controller for the electric wheelchair of quadriplegic patients using head movements recognition. IEEE Embed. Syst. Lett. 16, 154–157 (2024).

Mustafa, I. & Mustafa, I. Smart thoughts: BCI based system implementation to detect motor imagery movements. in 2018 15th International Bhurban Conference on Applied Sciences and Technology (IBCAST) 365–371 https://doi.org/10.1109/IBCAST.2018.8312250 (IEEE, 2018).

Xiong, M. et al. A Low-Cost, Semi-Autonomous Wheelchair Controlled by Motor Imagery and Jaw Muscle Activation. in 2019 IEEE International Conference on Systems, Man and Cybernetics (SMC) 2180–2185 https://doi.org/10.1109/SMC.2019.8914544 (IEEE, 2019).

Mahmood, M. et al. Fully portable and wireless universal brain–machine interfaces enabled by flexible scalp electronics and deep learning algorithm. Nat. Mach. Intell. 1, 412–422 (2019).

Mandel, C. et al. Navigating a smart wheelchair with a brain-computer interface interpreting steady-state visual evoked potentials. in 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems 1118–1125 https://doi.org/10.1109/IROS.2009.5354534 (IEEE, 2009).

Hussain, S. A. H. et al. A mental state aware brain computer interface for adaptive control of electric powered wheelchair. Sci. Rep. 15, 9880 (2025).

Hou, L. et al. An autonomous wheelchair with health monitoring system based on Internet of Thing. Sci. Rep. 14, 5878 (2024).

Postolache, O. et al. Treat me well: Affective and physiological feedback for wheelchair users. in 2012 IEEE International Symposium on Medical Measurements and Applications Proceedings 1–7 https://doi.org/10.1109/MeMeA.2012.6226660 (IEEE, 2012).

Ferizoli, R., Karimpour, P., May, J. M. & Kyriacou, P. A. Arterial stiffness assessment using PPG feature extraction and significance testing in an in vitro cardiovascular system. Sci. Rep. 14, 2024 (2024).

Cheng, L., Li, J., Guo, A. & Zhang, J. Recent advances in flexible noninvasive electrodes for surface electromyography acquisition. npj Flex. Electron. 7, 39 (2023).

Jiang, L. et al. SmartRolling: A human–machine interface for wheelchair control using EEG and smart sensing techniques. Inf. Process. Manag. 60, 103262 (2023).

Do Nascimento, L. M. S. et al. Sensors and systems for physical rehabilitation and health monitoring—A review. Sensors 20, 4063 (2020).

Gassara, H. E. et al. Smart wheelchair: Integration of multiple sensors. in IOP Conference Series: Materials Science and Engineering 254 (Institute of Physics Publishing, 2017).

Ma, C., Li, W., Gravina, R. & Fortino, G. Activity recognition and monitoring for smart wheelchair users. in 2016 IEEE 20th International Conference on Computer Supported Cooperative Work in Design (CSCWD) 664–669 https://doi.org/10.1109/CSCWD.2016.7566068 (IEEE, 2016).

Morbidi, F. et al. Assistive robotic technologies for next-generation smart wheelchairs: codesign and modularity to improve users’ quality of life. IEEE Robot. Autom. Mag. 30, 24–35 (2023).

Carlson, T. & Millán, J. d. R. Brain-controlled wheelchairs: a robotic architecture. IEEE Robot. Autom. Mag. 20, 65–73 (2013).

Newaz, A. I., Sikder, A. K., Rahman, M. A. & Uluagac, A. S. A survey on security and privacy issues in modern healthcare systems: Attacks and defenses. ACM Trans. Comput. Healthc. 2, 27 (2021).

Kapeller, A., Felzmann, H., Fosch-Villaronga, E., Nizamis, K. & Hughes, A. M. Implementing ethical, legal, and societal considerations in wearable robot design. Appl. Sci. (Switzerland) 11, 6705 (2021).

Blindness and vision impairment. World Health Organization https://www.who.int/news-room/fact-sheets/detail/blindness-and-visual-impairment (2023).

Xu, P., Kennedy, G. A., Zhao, F. Y., Zhang, W. J. & Van Schyndel, R. Wearable obstacle avoidance electronic travel aids for blind and visually impaired individuals: a systematic review. IEEE Access 11, 66587–66613 (2023).

Barontini, F., Catalano, M. G., Pallottino, L., Leporini, B. & Bianchi, M. Integrating Wearable Haptics and Obstacle Avoidance for the Visually Impaired in Indoor Navigation: A User-Centered Approach. IEEE Trans. Haptics 14, 109–122 (2021).

Zou, W., Hua, G., Zhuang, Y. & Tian, S. Real-time passable area segmentation with consumer RGB-D cameras for the visually impaired. IEEE Trans. Instrum. Meas. 72, 99 (2023).

Hu, X., Song, A., Wei, Z. & Zeng, H. StereoPilot: a wearable target location system for blind and visually impaired using spatial audio rendering. IEEE Trans. Neural Syst. Rehabil. Eng. 30, 1621–1630 (2022).

Zhang, J. et al. Trans4Trans: efficient transformer for transparent object and semantic scene segmentation in real-world navigation assistance. IEEE Trans. Intell. Transp. Syst. 23, 19173–19186 (2022).

Joshi, R. C., Singh, N., Sharma, A. K., Burget, R. & Dutta, M. K. AI-SenseVision: a low-cost artificial-intelligence-based robust and real-time assistance for visually impaired people. IEEE Trans. Hum. Mach. Syst. 54, 325–336 (2024).

Gao, Y. et al. A wearable obstacle avoidance device for visually impaired individuals with cross-modal learning. Nat. Commun. 16, 2857 (2025).

Katzschmann, R. K., Araki, B. & Rus, D. Safe local navigation for visually impaired users with a time-of-flight and haptic feedback device. IEEE Trans. Neural Syst. Rehabil. Eng. 26, 583–593 (2018).

Chandra, M., Jones, M. T. & Martin, T. L. E-Textiles for Autonomous Location Awareness. IEEE Trans. Mob. Comput. 6, 367–380 (2007).

Xiao, J. et al. An assistive navigation framework for the visually impaired. IEEE Trans. Hum. Mach. Syst. 45, 635–640 (2015).

Hajati, N. & Rezaeizadeh, A. A wearable pedestrian localization and gait identification system using Kalman filtered inertial data. IEEE Trans. Instrum. Meas. 70, 2507908 (2021).

Tian, Y., Hamel, W. R. & Tan, J. Accurate human navigation using wearable monocular visual and inertial sensors. IEEE Trans. Instrum. Meas. 63, 203–213 (2014).

Wang, M., Li, L., Liu, J. & Chen, R. Neural network aided factor graph optimization for collaborative pedestrian navigation. IEEE Trans. Intell. Transp. Syst. 25, 303–314 (2024).

Lee, K. M., Li, M. & Lin, C. Y. Magnetic tensor sensor and way-finding method based on geomagnetic field effects with applications for visually impaired users. IEEE/ASME Trans. Mechatron. 21, 2694–2704 (2016).

Cheok, A. D. & Yue, L. A novel light-sensor-based information transmission system for indoor positioning and navigation. IEEE Trans. Instrum. Meas. 60, 290–299 (2011).

Patil, K., Jawadwala, Q. & Shu, F. C. Design and construction of electronic aid for visually impaired people. IEEE Trans. Hum. Mach. Syst. 48, 172–182 (2018).

Bi, H., Liu, J. & Kato, N. Deep learning-based privacy preservation and data analytics for IoT enabled healthcare. IEEE Trans. Ind. Inf. 18, 4798–4807 (2022).

Li, G., Xu, J., Li, Z., Chen, C. & Kan, Z. Sensing and navigation of wearable assistance cognitive systems for the visually impaired. IEEE Trans. Cogn. Dev. Syst. 15, 122–133 (2023).