Abstract

Knowledge of the domain of applicability of a machine learning model is essential to ensuring accurate and reliable model predictions. In this work, we develop a new and general approach for assessing model domain and demonstrate that our approach provides accurate and meaningful domain designation across multiple model types and material property data sets. Our approach assesses the distance between data in feature space using kernel density estimation, where this distance provides an effective tool for domain determination. We show that chemical groups considered unrelated based on chemical knowledge exhibit significant dissimilarities by our measure. We also show that high measures of dissimilarity are associated with poor model performance (i.e., high residual magnitudes) and poor estimates of model uncertainty (i.e., unreliable uncertainty estimation). Automated tools are provided to enable researchers to establish acceptable dissimilarity thresholds to identify whether new predictions of their own machine learning models are in-domain versus out-of-domain.

Introduction

Machine learning (ML), as one component of the larger umbrella of artificial intelligence (AI), is one of the fastest evolving technologies in the world today. In the context of materials science, thousands of papers using ML are now published each year, and the number of publications using ML has been growing exponentially since around 2015 (refs. 1,2). The applications of ML in materials science take many forms, including materials property prediction3, computer vision-based defect detection and microstructure segmentation4,5, assimilation of data and knowledge from publications using natural language processing and large language models6,7, and fitting of ML-based interatomic potentials representing nearly all elements in the periodic table to enable fast and accurate atomistic simulations8.

Useful ML models generally require some form of prediction quality quantification because models can experience significant performance degradation when predicting on data that falls outside the model’s domain of applicability. This performance degradation can manifest as high errors, unreliable uncertainty estimates, or both. Without some estimation of the model domain, one does not know, a priori, whether the results are reliable when making predictions on new test data. More precisely, useful ML models ideally have at least the following three characteristics regarding their prediction quality: (i) accurate prediction, meaning the model has low residual magnitudes, (ii) accurate uncertainty in prediction, meaning the model produces some useful quantification of uncertainty on new predictions (note this requirement does not stipulate that the uncertainties should be small), and (iii) domain classification, meaning the model can reliably determine when predictions are inside a domain (ID) versus outside a domain (OD) of feature space where the model is trustworthy.

Separate from determining whether data are ID or OD are the techniques used in domain adaptation. In certain situations, domain adaptation techniques enable the fine-tuning of a model or data to transform originally OD data into ID data. The objective of domain adaptation is to adapt a model for prediction on a property (denoted as Mprop here) to new data whose distribution may be shifted from training data9,10. There would be no need to identify OD data if domain adaptation were always effective, but adapting models to initially OD data can be a challenging and intricate process. First, many techniques require re-training models, involving a substantial effort in tuning parameters and validating models. Second, once a model is adapted to one target domain, it may still fail on other unknown domains. It is therefore useful to have a method to identify when a model is applied to problematic domains without having to adapt the model. In this work, we develop a domain classification technique that identifies when predictions are likely ID or OD (equivalently ID/OD).

The domain classification problem, at least for materials property prediction and many other similar problems, can be formulated as follows: given a trained model Mprop and the features of an arbitrary test data point, how can we develop a model to predict if the test data point is ID/OD for Mprop? In this work, we frame this challenge as a classic supervised ML problem for categorization. To develop such a model, we need training data with input features and labels, where the labels are ID/OD, as well as some ML modeling approach for making the label prediction. We will denote this ML model for domain as Mdom to distinguish it from Mprop. Note that the labeled training data for Mdom does not necessarily have to match the original Mprop model training data.

There is no unique, universal definition for the domain of an Mprop model, and therefore no unambiguously defined labels for the Mdom training data. In other words, we do not have an absolute ground truth labeling on which to train the Mdom model11,12. This problem can be solved by imposing some reasonable definition of ground truth for ID/OD based on model reliability, as quantified by, e.g., small residual magnitudes and stable predictions under changes in data. In many cases ID/OD data points are described in terms of a region of feature space, in which case Mdom becomes a trivial check if a data point is in the region or not13. Predictions of ID/OD are often checked against chemical intuition of whether data are somehow “similar” to training data, and therefore ID, or not, and therefore OD. Such checks are an effective way of using field-specific knowledge of similarity to provide a ground truth for ID/OD classification.

We are aware of three approaches, developed in refs. 14,15,16, that effectively find a region in feature space where an Mprop model shows performance above some cutoff. Ref. 14 employs threshold values for descriptors to ascertain whether a prediction is ID/OD based on collected prediction errors. While their method for domain classification appears to be effective, several issues regarding complexity and implementation made it difficult for us to implement. Features are prioritized based on their error reduction capacity, and thresholds are assigned to each feature to distinguish between ID and OD points. This requires determining a threshold for each feature based on a cross validation scheme with its own threshold. Also, their approach does not provide a definitive maximum number of features to consider, resulting in ID/OD classifications that vary depending on the number of selected features. In ref. 15, the purpose of the developed technique is to establish a continuous feature space where models yield low errors (i.e., points that are ID) from model predictions via a tradeoff of feature region coverage and error. They are limited by a single continuous region when multiple areas in feature space could provide low error predictions from models. In the case of ref. 16, they gather prediction errors from cross validation, reduce the feature dimension to five using principal component analysis, establish an error threshold of the fifth percentile of errors to define the boundary of a convex hull, and finally measure the distance of predicted points from the convex hull. A convex hull defines a boundary that encompasses a set of points, creating a region that may contain areas where training data are sparse or underrepresented in a model’s feature space. These approaches are potentially very powerful but have limitations. 
For methods that create a single connected region to denote as ID, multiple disjoint regions in feature space that could yield perfectly reasonable predictions from Mprop will be excluded. A method by which non-connected ID regions are established without a single, pre-defined shape would be advantageous (e.g., with kernel density estimation as done in this work). In addition, the sophisticated approaches in these models introduce significant complexity, which makes them challenging to implement. It is therefore useful to revisit simpler approaches that build directly on the intuition that ID regions of feature space are likely to be those regions close to significant amounts of training data16,17,18.

There are many techniques for quantifying closeness in feature spaces, including convex hulls, distance measures, and (probability) density estimates13. While identifying as ID all data within the convex hull of the training data is reasonable, such approaches have the major limitation of potentially including large regions with no training data. For example, the convex hull of points on a circle in a two-dimensional feature space includes the entirely empty middle of the circle as ID. Distance measures are also a reasonable approach to measure closeness. In ref. 19, distance measures from a number of nearest neighbors were used as a dissimilarity score between a point and a model’s training data. They showed that target property prediction errors generally increase with increasing distance, which follows intuition. Nevertheless, distance measures have the limitation that there is no unique measure of distance between two points and no unique single distance of a new point from a set of training data. This creates a vast space of possible ways of measuring two-point distances (e.g., Euclidean, Mahalanobis, etc.) and one-to-N-point distances (closest point distance, closest k-points distance, weighted average of distances, etc.), making it difficult to find a robust method. In general, approaches based on convex hulls or standard distances between two points do not account naturally for data sparsity, and may consider a point near one outlier training data point and a point near many training data points as almost identically likely to be ID.

Kernel density estimation (KDE), and density-based methods in general, offer several advantages vs. other approaches, including (i) a density value that can act as a distance or dissimilarity measure, (ii) a natural accounting for data sparsity, and (iii) trivial treatment of arbitrarily complex geometries of data and ID regions. Techniques utilizing Gaussian processes have comparable advantages. But unlike Gaussian processes, KDE is relatively fast to fit and evaluate, at least for the modest-size data sets that are common in materials science (see the Supplemental Materials for comparisons between KDE and Gaussian process regression). The work in ref. 20 employed KDEs to demonstrate, using a projection of features, that many assessments of machine learning models were in regions where models had a significant number of training data. Their research demonstrated that numerous assessments previously categorized as extrapolation were, upon closer examination, actually instances of interpolation. The authors also showed that model residuals generally increased in regions of the feature space with little to no training data. While the authors employed KDE in their study, they did not utilize it as a means to categorize new predictions as ID/OD. In contrast, our research establishes a definition for ID/OD classification based on prediction errors. Later, we demonstrate how KDE can effectively differentiate between data points that fall within the ID category and those that are considered OD. We therefore focus on KDE, as it provides a natural solution to the issues around topology, distance measures, and data sparsity, and has been shown to have a relationship with prediction errors in the past.

Given the absence of any unique ground truth, as noted above, we explore four different approaches for defining ID/OD. Specifically, we define four domain types, each based on a corresponding ground truth, which are: (i) a chemical domain where test data materials with similar chemical characteristics to the training data are ID, (ii) a residual domain where test data with residuals below a chosen threshold are ID, (iii) another residual domain where groups (i.e., not single cases) of test data with residuals below a chosen threshold are ID, and (iv) an uncertainty domain where groups of test data with differences between predicted and expected uncertainties below a chosen threshold are ID. For each of our four domain types, we assessed our models on sets of test data that were increasingly distinct from the training data. Generally, test cases that had low KDE likelihoods were chemically dissimilar, had large residuals, and had inaccurate uncertainties, just as one would hope for an effective method of domain determination.

Here we summarize the structure of the paper. The Results section starts with some basic details on ML models and our definitions of domain. Four materials property data sets and one commonly used synthetic data set were studied. Various model types including random forest, bagged support vector regressor, bagged neural network, and bagged ordinary least squares models were trained with the aforementioned data sets. We then show the assessment of our domain predictions. We finish with a discussion, methods that show the methodology behind assessing domains and data curation, data resources, code resources, acknowledgements, author contributions, and competing interests. Our findings indicate that KDE likelihoods can provide valuable insights into model applicability domain, enabling effective classification of ID/OD for most data sets and models examined here. Importantly, our approach is expected to be generally applicable to regression-based prediction problems involving tabular data and can be easily applied to other regression tasks.

Results

Our work presents an approach for determining machine learning model applicability domains. The beginning of this section establishes critical terminology and mathematical foundations that are referenced extensively in the remaining results. Having readers understand these concepts before encountering numerical results significantly enhances the impact of the remaining work. Subsequently, we show how these conceptual tools can build reliable models for domain determination based on several criteria including chemistry, residuals of predictions, and quality of uncertainty estimate measures.

Definition of Model Types

We outline here the types of models, their applications in this work, and define the nomenclature for each. First, Mprop is a regression model that uses X to predict a property, y. Second, Mdom is a classification model that predicts domain labels ID/OD given the features of a single data point, \(\vec{x}\). The predicted labels of domain will henceforth be called \(\widehat{ID}\) and \(\widehat{OD}\). Construction of Mdom requires additional models. We define a model called Mdis(X,\(\vec{x}\)) (or just Mdis for short) which returns a dissimilarity score between \(\vec{x}\) and the data X used in training Mprop. Finally, we also build a model for predicting uncertainties in Mprop predictions, Munc, which uses Mprop. The data used to build or train the models of Mprop, Munc, and Mdis will be referred to as In-The-Bag (ITB). Conversely, the data excluded from the training of these models will be termed Out-Of-Bag (OOB). The following subsections provide explanations for how Mprop, Munc, and Mdis combine to generate Mdom. Because of the interdependence of many kinds of models to produce Mdom, a summary of models, their inputs, and their outputs is provided in Table 1.

In some cases, these models are very simple, e.g., just a simple function or a check if a value is above or below a cutoff. We make the choice of defining them as models and denoting them with a variable for two reasons. First, it gives a well-defined symbol for each item, which makes the discussion more precise and compact, although at the cost of more variables. Second, defining each of these relationships as models stresses the fact that the specific models we use in this work could easily be replaced by other models, and these could be much more complex. For example, our domain model Mdom for predicting whether a test data point with features \(\vec{x}\) belongs to the ID domain is a simple check of whether the kernel density value exceeds a cutoff. However, this could be replaced by a more complex ML model based on the KDE or other features. We hope that these definitions will help make the paper clearer and suggest natural ways to improve our approach in the future.

Model for Property Regression (Mprop)

We investigated a range of model types for Mprop including Random Forest (RF), Bagged Support Vector Regressor (BSVR), Bagged Neural Network (BNN), and Bagged Ordinary Least Squares (BOLS). We used bagged versions of all but the RF models (RF is already an ensemble model) as these ensembles were used to generate uncertainty estimates and define the model Munc. Note that Mdis depends only on the features of ITB data, XITB, and does not depend on the form of Mprop or Munc. Two types of errors on property predictions were considered. First, the absolute residual (\(| y-\hat{y}|\)) normalized by the Mean Absolute Deviation of y (MADy) was calculated with Eq. (1) and is denoted \({E}^{| y-\hat{y}| /MA{D}_{y}}\).

Second, we denote the Root Mean Squared Error (RMSE) of predictions from Mprop normalized by the standard deviation of y (σy) by the symbol \({E}^{RMSE/{\sigma }_{y}}\) (see Eq. (2)). In both Eqs. (1) and (2), “E” denotes that it is a type of error, \(\hat{y}\) is a prediction from Mprop, and \(\bar{y}\) is the mean of y. \({E}^{| y-\hat{y}| /MA{D}_{y}}\) can be measured for any individual data point considered, but can be randomly low for a data point known to be OD (i.e., residuals are stochastic). \({E}^{RMSE/{\sigma }_{y}}\) considers residuals for groups of data, so data points with low and high values of \({E}^{| y-\hat{y}| /MA{D}_{y}}\) are included in a statistical measure. Both \({E}^{| y-\hat{y}| /MA{D}_{y}}\) and \({E}^{RMSE/{\sigma }_{y}}\) were used in producing ID/OD labels to train Mdom (see the Defining Ground Truths section).
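As a rough illustration, the two normalized errors described above might be computed as in the sketch below. It assumes that Eq. (1) is the per-point absolute residual divided by the mean absolute deviation of y, and that Eq. (2) is the RMSE divided by the population standard deviation of y; both forms are inferred from the surrounding text. The function names, the example values, and the choice to compute the normalizers from the same y passed in are illustrative assumptions.

```python
import numpy as np


def normalized_abs_residual(y, y_hat):
    """Per-point |y - y_hat| / MAD_y (assumed form of Eq. (1))."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    # Mean absolute deviation of y about its mean.
    mad_y = np.mean(np.abs(y - np.mean(y)))
    return np.abs(y - y_hat) / mad_y


def normalized_rmse(y, y_hat):
    """RMSE / sigma_y for a group of points (assumed form of Eq. (2))."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    rmse = np.sqrt(np.mean((y - y_hat) ** 2))
    # Population standard deviation (ddof=0) is an assumption of this sketch.
    return rmse / np.std(y)


# Hypothetical targets and predictions, for illustration only.
y = np.array([1.0, 2.0, 3.0, 4.0, 10.0])
y_hat = np.array([1.5, 2.0, 2.5, 4.5, 8.0])
per_point_error = normalized_abs_residual(y, y_hat)  # one value per data point
group_error = normalized_rmse(y, y_hat)              # one value for the group
```

Under these assumed forms, predicting the mean of y for every point gives a normalized RMSE of 1 and per-point errors that average to 1.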

Model for Uncertainty Estimates (Munc)

Uncertainty calibration has been extensively studied in both classification and regression settings21,22,23,24,25. Our study utilized uncertainty estimates for regression and used the calibration implementation from Palmer et al.26. In this approach, we started from an ensemble model to acquire individual predictions from each sub-model comprising an ensemble. The mean of predictions from the sub-models is \(\hat{y}\). The standard deviation of predictions, σu, was calculated and then calibrated to yield the calibrated uncertainty estimates, denoted as σc. Repeated 5-fold Cross Validation (CV) was used throughout the study for producing residual and σu values used for calibration.

We borrowed arguments from ref. 27 for assessing the quality of calibrated uncertainty estimates. The mean and variance of a set of z-scores, Z, should be 0 (unbiased) and 1 (unit-scaled), respectively. Each z ∈ Z was calculated by \(z=(y-\hat{y})/{\sigma }_{c}\). We made the assumption that the distribution of Z is standard normal (i.e., had a mean of 0 and a standard deviation of 1) for reliable uncertainty estimates as done in refs. 26 and 28, which is not done in ref. 27. We denote the cumulative distributions of Z and of the standard normal distribution as O(z) and Φ(0, 1), respectively. We calculated how far any O(z) was from Φ(0, 1) via Eq. (3), where Earea is the miscalibration area or error in uncertainties. Other measures of the quality of uncertainty measures exist like sharpness, dispersion, etc. (see ref. 29) that could be used instead. However, we used Eq. (3) because it includes information from σc, y, and \(\hat{y}\). Earea was important for determining the quality of uncertainties in future sections and was used to produce ID/OD labels for training Mdom (see the Defining Ground Truths section).

Earea is a comparison of two statistical quantities and is inaccurate for small sample sizes. However, the comparison is still possible. If a single value is sampled from a standard normal distribution, then the cumulative distribution O(z) will be 0 until that single observation and then become 1. Earea will be non-zero in this case even though the underlying population follows Φ(0, 1), so it does not represent the population of O(z) well. To prevent issues with small sample sizes, we performed multiple splits and binned data as outlined in the Model Assessments section to get many points for statistical comparisons.
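A minimal numerical sketch of the miscalibration area is given below. It assumes Eq. (3) is the integral over z of the absolute difference between O(z) and the standard normal cumulative distribution; the grid-based Riemann sum, the grid limits, and the function name are illustrative choices rather than the implementation used in this work.

```python
import math

import numpy as np


def miscalibration_area(z, step=1e-3):
    """Approximate area between the empirical CDF of z and the standard normal CDF."""
    z = np.sort(np.asarray(z, dtype=float))
    # Integration grid wide enough to cover the observed z-scores and the normal tails.
    lo = min(z[0], -6.0) - 1.0
    hi = max(z[-1], 6.0) + 1.0
    grid = np.arange(lo, hi, step)
    # Empirical CDF O(z): fraction of observations <= each grid value.
    empirical = np.searchsorted(z, grid, side="right") / z.size
    # Standard normal CDF evaluated on the same grid.
    normal = np.array([0.5 * (1.0 + math.erf(g / math.sqrt(2.0))) for g in grid])
    # Simple Riemann sum of |O(z) - Phi(z)|.
    return float(np.sum(np.abs(empirical - normal)) * step)


rng = np.random.default_rng(0)
area_large_sample = miscalibration_area(rng.standard_normal(20000))
area_single_point = miscalibration_area([0.0])
```

In this sketch a single observation gives a clearly non-zero area, whereas a large standard normal sample gives an area close to zero, which illustrates the small-sample issue discussed above.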

Model for Dissimilarity Measures (Mdis)

KDE is a method to approximate a density of points given a finite sampling of points from that density. If KDE is fit to a density distribution normalized to an area of one over a region, then KDE can be used to estimate the probability density (often called a likelihood) of observing points from the distribution at a point in that region. KDE works by first placing a local distribution (kernel) with a thickness (bandwidth) on each observed data point. Overlapping regions of kernels are superimposed, providing an estimate of the overall density, and the approach can be generalized to any number of dimensions30. In our approach, we developed a KDE of XITB whose values approximately give the likelihood of finding a data point at any coordinate in feature space.

To implement KDE, we took the following steps. First, XITB was standardized using scikit-learn’s StandardScaler (i.e., data were rescaled to have a mean of zero and a standard deviation of one for each feature)31. This was done because the KDE implementation in scikit-learn uses a single bandwidth parameter, so all dimensions of XITB should be set to a similar length scale. Then, that single bandwidth parameter was used with the Epanechnikov kernel to construct the KDE of XITB data of Mprop. The bandwidth was estimated automatically using a nearest-neighbors algorithm as implemented in scikit-learn through the sklearn.cluster.estimate_bandwidth function31. One could potentially obtain better results by more carefully optimizing the bandwidth for each data set and model combination studied. However, we opted for a straightforward automated approach to avoid any possible data leakage into our assessment and to keep the method simple to understand and implement. We used the Epanechnikov kernel because it is widely used and is a bounded kernel with a value of zero for any observation outside the bandwidth. This means that the likelihood of far away data will be exactly zero. Other kernels like the Gaussian and exponential kernels are unbounded and have non-zero values for any point. More details for these hyperparameter choices are covered in the Supplemental Materials.

Scikit-learn uses the natural logarithm of likelihoods as the inference outputs of KDE. We converted any logarithmic output to likelihoods by exponentiating the values. Eq. (4) was then employed to transform the likelihood of any inference data point \(\vec{x}\) with respect to the maximum likelihood observed from XITB. The result obtained from Eq. (4) served as our dissimilarity measure, d. It is important to note that \(\vec{x}\) was transformed using the same scaler utilized to build the KDE before obtaining its likelihood with \(KDE(\vec{x})\).

This transformation did not alter the information provided by the KDE and was performed only to produce an easy to interpret number, which ranges from 0 to 1. A d value of 0 corresponds to \(\vec{x}\) being in the region of feature space most densely sampled by XITB (i.e., the peak of the KDE) and a d value of 1 corresponds to \(\vec{x}\) being far from XITB, where the density is zero for our choice of kernel.
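The steps above can be sketched with scikit-learn as follows. The sketch assumes Eq. (4) has the form d = 1 − KDE(x)/max KDE(XITB), which matches the stated range of d. The synthetic data, the function names, and the use of default arguments for estimate_bandwidth are illustrative assumptions and not the settings used in this work.

```python
import numpy as np
from sklearn.cluster import estimate_bandwidth
from sklearn.neighbors import KernelDensity
from sklearn.preprocessing import StandardScaler


def fit_dissimilarity_model(X_itb):
    """Fit the scaler and an Epanechnikov KDE on in-the-bag features."""
    scaler = StandardScaler().fit(X_itb)
    X_scaled = scaler.transform(X_itb)
    # Single bandwidth estimated automatically (default settings assumed here).
    bandwidth = estimate_bandwidth(X_scaled)
    kde = KernelDensity(kernel="epanechnikov", bandwidth=bandwidth).fit(X_scaled)
    # score_samples returns natural-log likelihoods, so exponentiate them.
    max_likelihood = np.exp(kde.score_samples(X_scaled)).max()
    return scaler, kde, max_likelihood


def dissimilarity(X_new, scaler, kde, max_likelihood):
    """Assumed Eq. (4): d = 1 - KDE(x) / max KDE(X_ITB)."""
    likelihood = np.exp(kde.score_samples(scaler.transform(X_new)))
    return 1.0 - likelihood / max_likelihood


rng = np.random.default_rng(0)
X_itb = rng.normal(size=(200, 3))  # synthetic stand-in for training features
scaler, kde, max_likelihood = fit_dissimilarity_model(X_itb)
d_itb = dissimilarity(X_itb, scaler, kde, max_likelihood)
# A point far from all training data, outside every kernel's support.
d_far = dissimilarity(np.full((1, 3), 50.0), scaler, kde, max_likelihood)
```

With a bounded kernel, a point far from all training data has zero likelihood and therefore d = 1. A new point lying between training points could in principle have a higher density than any training point, giving a slightly negative d; this sketch does not clip such values.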

Model for Domain (Mdom)

Mdom is a classifier which relies only on d as an input and produces \(\widehat{ID}/\widehat{OD}\) given a cutoff, dt. Values d < dt result in the label \(\widehat{ID}\) and values d ≥ dt result in the label \(\widehat{OD}\) (Eq. (5)). dt is a value chosen from the interval [0, 1]. Each dt is associated with a specific set of \(\widehat{ID}/\widehat{OD}\) predictions. The effectiveness for a given dt is assessed by precision and recall given a ground truth, which we define based on chemistry, \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), or Earea (see the Defining Ground Truths section for ground truth labeling).

Here, we explain the procedure for training Mdom, which amounts to determining a single value called \({d}_{c}^{t}\). The superscript in \({d}_{c}^{t}\) does not represent exponentiation, instead it denotes a specific value of d used in predictions by Mdom. Note that Mdom can be trained with data from \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), Earea, or chemical information, and each will produce a different Mdom. Assume we have a data set split into ITB and OOB sets. We generated labels on OOB data for training Mdom by following the procedures outlined in the Defining Ground Truths section, which produced the class labels of ID and OD. For each dt, precision and recall were measured by comparing ID/OD and \(\widehat{ID}/\widehat{OD}\) (Eq. (6)). The number of True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN) were acquired, corresponding to when the (ground truth, prediction) are (ID, \(\widehat{ID}\)), (OD, \(\widehat{OD}\)), (OD, \(\widehat{ID}\)), and (ID, \(\widehat{OD}\)), respectively. Note that TN is not used in Eq. (6), but it is included for completeness.
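For illustration, the cutoff rule and the counts defined above can be written as a short sketch. It assumes Eq. (5) is the rule that d < dt gives the predicted ID label and that Eq. (6) is the standard precision TP/(TP+FP) and recall TP/(TP+FN). The function names, the example values, and the convention of returning 0 when a denominator is zero are assumptions.

```python
def predict_in_domain(d_values, d_t):
    """Return True (predicted ID) where d < d_t and False (predicted OD) otherwise."""
    return [d < d_t for d in d_values]


def precision_recall(truth_is_id, pred_is_id):
    """Precision and recall with ID treated as the positive class."""
    pairs = list(zip(truth_is_id, pred_is_id))
    tp = sum(1 for t, p in pairs if t and p)      # (ID, predicted ID)
    fp = sum(1 for t, p in pairs if not t and p)  # (OD, predicted ID)
    fn = sum(1 for t, p in pairs if t and not p)  # (ID, predicted OD)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical OOB dissimilarities and ground truth labels (True = ID).
d_oob = [0.10, 0.30, 0.55, 0.80, 1.00]
truth = [True, True, False, True, False]
pred = predict_in_domain(d_oob, d_t=0.6)
precision, recall = precision_recall(truth, pred)
```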

Mdom learns by selecting \({d}_{c}^{t}\) such that desirable properties are acquired. \({d}_{c}^{t}\) can be selected to maximize the harmonic mean between precision and recall (F1max) or to maximize recall while retaining a precision above a desired value. We used F1max for selecting \({d}_{c}^{t}\) in this study unless explicitly stated otherwise. We performed many splits of data into ITB and OOB sets using the approaches described in the Model Assessments section to obtain a large set of OOB data. We then found a single \({d}_{c}^{t}\) to optimize F1 for all OOB data together. If all the OOB data are OD, then \({d}_{c}^{t}\) is set to less than zero (we effectively use −∞) so that nothing is predicted \(\widehat{ID}\). Conversely, \({d}_{c}^{t}\) is set to greater than 1 (we effectively use +∞) if all OOB data are ID so that all data are predicted \(\widehat{ID}\). Each value of d was acquired from the KDE built from each XITB for each split.
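A minimal sketch of this selection step follows. It scans a grid of candidate cutoffs on [0, 1] and keeps the one with the highest F1. The grid resolution, the tie-breaking rule (smallest cutoff among ties), the example values, and the function names are assumptions made for illustration.

```python
import math


def f1_score_at(d_values, truth_is_id, d_t):
    """F1 of the rule 'predict ID when d < d_t', with ID as the positive class."""
    tp = fp = fn = 0
    for d, is_id in zip(d_values, truth_is_id):
        predicted_id = d < d_t
        if predicted_id and is_id:
            tp += 1
        elif predicted_id:
            fp += 1
        elif is_id:
            fn += 1
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2.0 * precision * recall / (precision + recall)


def fit_cutoff(d_values, truth_is_id, n_grid=101):
    """Pick the cutoff on [0, 1] that maximizes F1 over all OOB points."""
    if not any(truth_is_id):
        return -math.inf  # all OOB data are OD: nothing is predicted ID
    if all(truth_is_id):
        return math.inf   # all OOB data are ID: everything is predicted ID
    candidates = [i / (n_grid - 1) for i in range(n_grid)]
    # max() keeps the first (smallest) candidate among ties.
    return max(candidates, key=lambda d_t: f1_score_at(d_values, truth_is_id, d_t))


# Hypothetical pooled OOB dissimilarities and ground truth labels (True = ID).
d_oob = [0.05, 0.10, 0.20, 0.60, 0.90, 1.00]
truth = [True, True, True, False, False, False]
d_cutoff = fit_cutoff(d_oob, truth)
```

Selecting a cutoff that instead maximizes recall subject to a minimum precision would only change the key used to rank the candidates.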

Deployment of Mdom only relies on \(\vec{x}\) of a single data point and can produce \(\widehat{ID}/\widehat{OD}\) for that point by checking the d produced by Mdis. If \(d < {d}_{c}^{t}\), then the prediction is \(\widehat{ID}\) and \(\widehat{OD}\) otherwise (Eq. (5) when \({d}^{t}={d}_{c}^{t}\)). This describes how someone can use Mdom for domain prediction after training. Note that this prediction does not involve \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), Earea, nor chemical intuition after the learning process is complete. The overall precision and recall on splits used to find \({d}_{c}^{t}\) can be stored and provided at inference along with \(\widehat{ID}/\widehat{OD}\), giving guidance to the user on the confidence they should put in the domain determination.

Why not More Complex Mdis and Mdom?

In this section, we discuss the question “Why use this particular approach to get domain when there are clear opportunities for something potentially more accurate?” The composite function \({M}^{cdom}={M}^{dom}({M}^{dis}(\vec{x}))\) takes a feature vector for a given data point and returns a class value of \(\widehat{ID}/\widehat{OD}\). In our approach, we used Mdis(\(\vec{x}\)) and a simple cutoff as the learned parameter for Mdom. One could easily replace this approach with a full ML model for Mcdom, presumably with much more ability to predict ID/OD. We did not do this for two reasons. First, we were concerned that it would be difficult to avoid overfitting a complex ML model for Mcdom with limited data. The present choice for Mcdom has almost no adjustable parameters and a strong foundation in our understanding that ML models learn best where there is more training data, which protects from overfitting. Second, we wanted to have a simple approach that was easy to use and reproduce by other researchers. We expect that more complex versions of Mcdom will be explored in the future, and we hope our work can provide a baseline for such studies, as our work has yielded a simple but promising method, as shown by our results.

Defining Ground Truths

We highlighted the absence of an absolute, unique definition for data being ID in our Introduction section. As we are proposing Mdom to predict if a data point will be ID and wish to assess its effectiveness, we must provide some precise quantitative ground truth definitions for our OOB data points being ID/OD. We will generally define a ground truth for an observation to be either ID or OD of a model based on privileged information, by which we mean information never seen during the creation of Mprop, Munc, nor Mdis. Chemical differences, residuals, RMSEs, and accuracy of uncertainty estimates are examples of privileged information and are represented in our study by errors denoted as Echem, \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea. Each of these errors has an associated cutoff to provide a ground truth ID/OD labeling. The cutoffs for Echem, \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea are denoted \({E}_{c}^{chem}\), \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}_{c}^{RMSE/{\sigma }_{y}}\), and \({E}_{c}^{area}\), respectively. When \({E}^{i} < {E}_{c}^{i}\,(i\in \{chem,| y-\hat{y}| /MA{D}_{y},RMSE/{\sigma }_{y},area\})\), the ground truth label is ID and OD otherwise (Eq. (7)).

We outline our rationale behind these sets of privileged information and how we used them to produce our ground truth labels in subsequent sections. Note that there are multiple ways one could use different privileged information and define these ground truths, but we feel these form a logical and broad set of ground truths. The key result of this paper is to show that our dissimilarity measure d (see Eq. (4)), determined only from the data features, can predict these ground truth ID/OD categorizations and therefore clearly contains essential aspects of privileged information. We demonstrate this ability of d through the assessments outlined in the Model Assessments section.

Chemical Intuition

Here we define Echem and associated cutoffs. We define Echem as the mismatch between the chemistries used to build Mprop and those seen during deployment of Mprop. \({E}_{c}^{chem}\) implicitly denotes when chemical mismatch (Echem) becomes sufficiently large such that the physics governing materials defined as ID are vastly different from those that are OD. Both Echem and \({E}_{c}^{chem}\) depend on the set of studied materials, their governing physics, and empirical observations. By choosing very similar (e.g., materials selected from ITB data) and very different (e.g., materials with totally different composition, phases, controlling physics, etc.) materials, it is easy to produce data that are mostly ID/OD. For example, an Mprop trained on steel alloys (labeled ID) should not be applicable to polymers (labeled OD). Note that this approach does not require the value for the property being predicted, y. Therefore, we can assess Mdom on any data point for which we can write down the feature vector (\(\vec{x}\)) and have a useful intuition about its chemical similarity to ITB data. We view Mdom being successful at delineating ID/OD based on simple chemical intuition as a necessary, but not sufficient, condition to use KDE for domain determination. In other words, if Mdom struggles to delineate basic intuitive chemical domains, it is likely that the approach is not particularly useful for domain determination. Specifics on chemical groups, which implicitly define \({E}_{c}^{chem}\), are covered in the Data Curation Section.

Normalized Absolute Residuals and RMSE

Here we define the cutoffs \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) and \({E}_{c}^{RMSE/{\sigma }_{y}}\) as the associated error metrics were already defined (see Eqs. (1) and (2)). For an Mprop whose predictions represent the mean of all y (denoted as \(\bar{y}\)), both \({E}^{| y-\hat{y}| /MA{D}_{y}}\) and \({E}^{RMSE/{\sigma }_{y}}\) are 1.0 as calculated from Eqs. (1) and (2). We consider this “predicting the mean” to be a baseline naïve model with respect to which any reasonable Mprop model should perform better. If a fit Mprop yields better predictions than a baseline, we considered those data to be ID, otherwise, those data were considered OD (Eq. (7)). This defines \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\)=\({E}_{c}^{RMSE/{\sigma }_{y}}\)=1. Using either \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) or \({E}_{c}^{RMSE/{\sigma }_{y}}\) as the ground truth cutoff for ID/OD labeling captures the widely invoked idea that ID cases should be predicted better compared to the OD cases. These definitions require residuals and can therefore be assessed on data with X and y for Mprop.
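As an illustration of the baseline argument above, the following minimal sketch computes \({E}^{RMSE/{\sigma }_{y}}\) and applies the cutoff of 1. It assumes σy is the population standard deviation of the same y values being evaluated, which is the form for which predicting \(\bar{y}\) gives exactly 1; the function names `rmse_over_sigma` and `label_domain` are hypothetical and not taken from the released tools.

```python
import math


def rmse_over_sigma(y_true, y_pred):
    """RMSE of the predictions divided by the spread of the observed targets.

    Assumption: sigma_y is the population standard deviation of y_true.
    """
    n = len(y_true)
    mean_y = sum(y_true) / n
    sigma_y = math.sqrt(sum((y - mean_y) ** 2 for y in y_true) / n)
    rmse = math.sqrt(sum((y - p) ** 2 for y, p in zip(y_true, y_pred)) / n)
    return rmse / sigma_y


def label_domain(error, cutoff=1.0):
    """ID only if the model beats the naive 'predict the mean' baseline."""
    return "ID" if error < cutoff else "OD"


if __name__ == "__main__":
    y = [1.0, 2.0, 4.0, 7.0]
    naive = [sum(y) / len(y)] * len(y)      # baseline: always predict the mean
    fitted = [1.1, 2.1, 3.9, 7.2]           # made-up predictions of a fitted model
    print(rmse_over_sigma(y, naive), label_domain(rmse_over_sigma(y, naive)))
    print(rmse_over_sigma(y, fitted), label_domain(rmse_over_sigma(y, fitted)))
```

Because the comparison is strict, a model that only matches the baseline is labeled OD, in line with the requirement that ID data be predicted better than the baseline.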

Errors in Predicted Uncertainties

Here we define the cutoff \({E}_{c}^{area}\) as the associated error metric was already defined (see Eq. (3)). Similar to \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) and \({E}_{c}^{RMSE/{\sigma }_{y}}\), we set \({E}_{c}^{area}\) such that Munc will show better performance than a naïve baseline case. For this case, we assume a baseline Mprop model that provides \(\bar{y}\) as the prediction for all cases and a baseline Munc that provides the standard deviation of the training target values, σy, as the uncertainty for all cases. These baseline predictions can be used in Eq. (3) to measure Earea for any given set of data, and we take this predicted baseline Earea to be \({E}_{c}^{area}\). We defined ID cases as those for which \({E}^{area} < {E}_{c}^{area}\) (OD otherwise). Unlike both \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) and \({E}_{c}^{RMSE/{\sigma }_{y}}\), \({E}_{c}^{area}\) changes depending on the evaluated set of y and ranged from approximately 0.2 to 0.3 in our study. In other words, Eq. (3) does not yield a single number for naïve models.
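The baseline construction can be sketched as follows. Eq. (3) is not reproduced in this section, so the sketch assumes a commonly used form of the miscalibration area: the area between the empirical cumulative distribution of the standardized residuals \(z=(y-\hat{y})/\sigma\) and the standard normal cumulative distribution. That form, the integration limits, and the names `miscalibration_area` and `baseline_cutoff` are assumptions made for illustration only.

```python
import bisect
from statistics import NormalDist, fmean, pstdev


def miscalibration_area(y, y_pred, sigma, z_min=-10.0, z_max=10.0, n_grid=4001):
    """Area between the empirical CDF of z = (y - y_pred) / sigma and the
    standard normal CDF, integrated with the trapezoid rule on a fixed grid.

    Assumption: this is one common definition, not necessarily Eq. (3).
    """
    z = sorted((yi - pi) / si for yi, pi, si in zip(y, y_pred, sigma))
    normal = NormalDist()
    step = (z_max - z_min) / (n_grid - 1)
    area, previous_gap = 0.0, None
    for k in range(n_grid):
        zk = z_min + k * step
        empirical = bisect.bisect_right(z, zk) / len(z)   # fraction of z <= zk
        gap = abs(empirical - normal.cdf(zk))
        if previous_gap is not None:
            area += 0.5 * (previous_gap + gap) * step
        previous_gap = gap
    return area


def baseline_cutoff(y_eval, y_train):
    """Naive baseline: predict the training mean with the training standard
    deviation as the uncertainty for every evaluated point."""
    mean_y, sigma_y = fmean(y_train), pstdev(y_train)
    n = len(y_eval)
    return miscalibration_area(y_eval, [mean_y] * n, [sigma_y] * n)
```

Under this assumed form, the baseline value depends on how far the evaluated y values depart from a normal distribution centered on the training mean, which is consistent with the cutoff varying from one evaluated set to another.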

It is worth relating that this criterion of Earea < \({E}_{c}^{area}\) for a set of data points tells us that Munc is more accurate than the naïve baseline, but not that Mprop has small residuals. If Mprop has high \({E}^{RMSE/{\sigma }_{y}}\) on a set of data points but Munc has accurate estimates of those residuals, then Munc clearly knows significant and useful information about the data, and the data is therefore in some sense ID. This situation can be contrasted with the case where Mprop has high residuals and Munc has very inaccurate estimates of those residuals, in which case Mprop and Munc appear to know nothing about the data, and the data is therefore reasonably considered OD. The extent a quality ID/OD ground truth definition can be defined by just Earea, whether Earea needs to be combined with the other errors considered, or even whether Earea should be excluded entirely from consideration is not established at this point. Regardless, we show that Mdom provides an excellent ability to predict an ID/OD ground truth label based on Earea.

Summary of Ground Truth Definitions

The above detailed definitions of ID/OD can be confusing, so we summarize the idea again here. We considered four intuitive ways of thinking about being ID/OD of a trained model based on intuitive chemical similarity to ITB data, model residuals, model RMSEs, and model uncertainty estimates. For each way of thinking, we defined a score of the closeness of the data to being ID, which we denoted Echem, \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea, respectively. We then used chemical intuition (for chemical dissimilarity) or naïve baseline model behavior (for model residuals and model uncertainty estimates) to define a cutoff (denoted \({E}_{c}^{i}\)) for each corresponding \({E}^{i}(i\in \{chem,| y-\hat{y}| /MA{D}_{y},RMSE/{\sigma }_{y},area\})\) such that it was reasonable to assume that \({E}^{i} < {E}_{c}^{i}\) meant data were ID (Eq. (7)). This allowed us to assign the labels of ID/OD for data so that we could evaluate the utility of d. It is important to realize that these ground truth definitions make extensive use of privileged information (i.e., information not expected to be available during application of the model). However, these ground truth definitions make no direct use of d and d makes no use of the privileged information. The value of our results is that we gain access to ID/OD measures that require privileged information by using d.

We note that while Echem relies on a researcher’s intuition regarding the chemical field and is specific to given fields, definitions relying on \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea are automated numerical approaches and quite general. Therefore, the success of Mdom on methods relying on \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea suggests our approach is not limited to materials and chemistry problems, but that in general d gives valuable access to the domain implications of knowledge of residuals. Note that the way we define \({E}^{RMSE/{\sigma }_{y}}\) and Earea for our automated numerical ground truths requires averaging over groupings based on d, which is covered in the Methods section. Thus, there is a very modest and indirect path by which the nature of d can influence the ground truth categorization based on \({E}^{RMSE/{\sigma }_{y}}\) and Earea. Although we think this effect is modest, it represents a path of data leakage that could potentially lead to bias in the assessments of the approach. To counteract this concern, we have included \({E}^{| y-\hat{y}| /MA{D}_{y}}\), which does not require any binning and is immune to data leakage. This quantity has other limitations as it is quite stochastic, but our success on assessments using the ground truth categorization from \({E}_{c}^{chem}\) and \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) demonstrates that the other successes were not dominantly influenced by data leakage from binning on d. Each error and ground truth definition has a corresponding assessment, and the details are covered in the Methods section. Assessments on each type of error follow the convention of \({A}^{i}(i\in \{chem,| y-\hat{y}| /MA{D}_{y},RMSE/{\sigma }_{y},area\})\).

Aggregate of Assessment Results

Now that the conceptual tools and methodology used in this study are established, we can apply those concepts to various data sets and models. We start with Achem and show that d mostly separated OD materials from ID materials. Although the separation is not perfect in many cases, it provided a clear way to flag OD materials. We then cover more automated numerical methods which do not require a priori knowledge of domains from chemical intuition. We show that d can be used to separate cases with high \({E}^{| y-\hat{y}| /MA{D}_{y}}\) (i.e., data that are OD) and cases with lower \({E}^{| y-\hat{y}| /MA{D}_{y}}\) (i.e., data that are ID). A similar observation was made with the separation of high and lower \({E}^{RMSE/{\sigma }_{y}}\) and Earea groups of data, respectively. We end by providing time scaling and notes of caution for use cases where developed methods may not yield desired behavior. All results can be seen in Table 2.

If the precision is 1 and the recall is low in Table 2, only some of the data are predicted as ID and many ID data are mislabeled as OD. This means that we discard many reliable predictions, but the remaining ID predictions are reliable. If the recall is 1 and the precision is low, then nearly all data are predicted as ID. This is indeed a problem, and the chosen \({d}_{c}^{t}\) should be changed such that a desired precision is reached, as discussed in the Model for Domain (Mdom) section. Although F1max is a standard metric in classification, it summarizes performance at only a single threshold. In addition to F1max, we ask readers to note AUC-Baseline (defined in the Model Assessments section) because it considers multiple values of d, their corresponding precision and recall, and how much improvement over a baseline d provides.
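The trade-off between precision and recall when choosing \({d}_{c}^{t}\) can be sketched with a simple threshold sweep. The sketch assumes that a point is predicted ID when d is strictly below the threshold (so that points at d = 1.00 are rejected when \({d}_{c}^{t}=1.00\)); the function names and the toy data are hypothetical and only illustrate the selection logic.

```python
import math


def scores_at(d, is_id, threshold):
    """Precision, recall, and F1 when points with d < threshold are predicted ID."""
    tp = sum(1 for di, t in zip(d, is_id) if di < threshold and t)
    fp = sum(1 for di, t in zip(d, is_id) if di < threshold and not t)
    fn = sum(1 for di, t in zip(d, is_id) if di >= threshold and t)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def candidate_thresholds(d):
    """Every observed d value, plus infinity so that all points can be ID."""
    return sorted(set(d)) + [math.inf]


def best_f1_threshold(d, is_id):
    """Threshold that maximizes F1 (the F1max operating point)."""
    return max(candidate_thresholds(d), key=lambda t: scores_at(d, is_id, t)[2])


def threshold_for_precision(d, is_id, target):
    """Threshold with the highest recall among those reaching the target
    precision; returns (threshold, recall), or None if the target is unreachable."""
    best = None
    for t in candidate_thresholds(d):
        precision, recall, _ = scores_at(d, is_id, t)
        if precision >= target and (best is None or recall > best[1]):
            best = (t, recall)
    return best


if __name__ == "__main__":
    d = [0.1, 0.2, 0.3, 0.6, 0.9, 1.0, 1.0]                 # toy dissimilarities
    is_id = [True, True, True, False, True, False, False]   # toy ground truth
    print(best_f1_threshold(d, is_id))
    print(threshold_for_precision(d, is_id, 0.95))
```

A stricter precision target pushes the threshold to lower d and discards more reliable predictions, which is the low-recall, high-precision regime described above.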

Because interpreting the data in Table 2 can be difficult due to its size, we have included visualizations of the data in Fig. 1. Fig. 1a through 1d plot the AUC, AUC-Baseline, precision, recall, and F1max scores from the table. Values closer to 1 are better, with decreasing values signaling worsening scores.

The chemical, residual, RMSE, and miscalibration area assessments are shown in (a–d), respectively. For all points shown in these figures, a score closer to 1 is better, with decreasing values being worse. AUC, AUC-Baseline, precision, recall, and F1max are shown by the blue, orange, green, red, and purple markers, respectively. The type of assessment is noted by the subfigure caption.

Relationship Between Chemical Dissimilarity (Echem) and Distance (d) from the Chemical Assessment (Achem)

For all chemical data sets analyzed through Achem, violin plots for d with respect to chemical groups were generated and shown in Fig. 2. A positive result shows that ID materials have lower values of d compared to OD materials. Values to the left are more likely to be observed (i.e., be ID), and values to the right become less likely to be observed (i.e., be OD). All sets of Achem generally show the aforementioned trend. Data are organized based on their median d values, with lower values positioned at the bottom and higher values at the top in Fig. 2. The classification metrics are tabulated in Table 2. Our F1max scores range from 0.71 to 1.00, which are rather high. We show how d is useful for flagging OD materials from predictions in subsequent text. The figures for all precision and recall curves are provided in the Supplemental Materials. Details on chemical groupings and their naming are in the Data Curation section.

Chemical groupings and their dissimilarities are shown for Diffusion (a), Fluence (b), Steel Strength (c), Cuprates (d), Iron-Based (e), and Low-Tc (f) datasets. We show the violin plot for all the d scores separated by chemical groups. The first, second, and third vertical lines within each violin denote the separations between the first, second, third, and fourth quartiles. Values to the left are more likely to be observed compared to values to the right. All violins were forced to have the same width for visual purposes (i.e., the actual number of observations are not reflected by the visual). Green and red violins denote ID and OD groups, respectively. The data set is denoted by the captions.

The violin plot for the Diffusion data is shown in Fig. 2a. Intuitively dissimilar materials to the Original data subset, such as noble gases, are to the very right of the figure. Materials that were mixed across chemical groups (Manual set) also show high dissimilarities compared to the Original set. Conversely, more similar materials like transition metals, alkaline earths, and post transition metals have their median d value closer to the Original subset compared to the aforementioned groups. Lanthanides, however, appear closer to our Original set than expected. If we want 95% of data to come from a trustworthy chemical domain while maximizing recall for the Diffusion data, then the corresponding threshold of \({d}_{c}^{t}=0.45\) can act as a decision boundary. Using this threshold for Mdom, chemical groups such as noble gases, actinides, reactive non-metals, etc. are mostly excluded from \(\widehat{ID}\), but our recall is only 0.20. At a value slightly below 1.00 for \({d}_{c}^{t}\), our F1 score is maximized and can still exclude most noble gases, reactive non-metals, and actinides. At F1max, our recall and precision are 0.80 and 0.64 respectively. One can increase the precision of predictions by choosing a lower value of \({d}_{c}^{t}\) at the cost of recall and vice versa according to the needs of Mdom application.

We have a similar observation for our Fluence data (see Fig. 2b). Note that the Perturbed Original set has its quartiles shifted to the left of the Original data, meaning that values from the Perturbed Original data are predicted by the KDE to be more likely to be observed than test cases from the Original data, which is surprising. This is likely just an anomaly due to the modest size of the data and is not a concern given that both sets of data are ID. The important consideration is that all OD data have d values at or near 1.00. All OD data are far away from ID data. Choosing \({d}_{c}^{t}\approx 0.99\), so that any prediction at or above this threshold is deemed untrustworthy, separates ID/OD essentially perfectly.

As for our Steel Strength data in Fig. 2c, the Perturbed Original data have a median d value further to the right than the Original set, as expected. Most Perturbed Original data from the Steel Strength data are to the left of other OD groups. In other words, OD data are further away than ID data. The Iron-Based-Far data have cases that are closer to the Original data than the Copper-Based and Aluminum-Based sets, although it is not evident from the figure. The observation is reasonable considering most of the Original data are iron based, but generally outside the range of Fe weight percentages and with more constituent elements than in the Iron-Based-Far data. Like our Fluence data, any prediction at or near \({d}_{c}^{t}\approx 1.00\) was deemed to be untrustworthy. But unlike Fluence, ID/OD labels were not separated perfectly. Our recall is 0.98 because of a few FN predictions and the precision is 1.00.

Regarding the Superconductor data, we divided data into Cuprates, Iron-Based, and Low-Tc groups and assessed the behavior where each set was taken as the Original data (see the Model Assessments section) with the results shown in Fig. 2d, e, f, respectively. In all cases, the ID materials in the Original data generally have d values to the left of OD data. F1max scores are not lower than 0.7, which is generally good. Note that we can tune \({d}_{c}^{t}\) (i.e., non-\(F{1}_{max}{d}_{c}^{t}\)) such that the corresponding precision is near or at 1.00 at the cost of a lower recall for these data.

While our method does not flawlessly differentiate all chemical cases, it serves as a robust tool to eliminate a substantial portion of unreliable predictions. Furthermore, our chemical domains, like all the domain definitions in this paper, are approximate, and it is not expected that any method will provide perfect ID/OD predictions. Predicting domain with \({d}_{c}^{t}\) derived from data analysis like those shown in Fig. 2a through 2f required being able to label a large set of data as chemically similar (ID) or dissimilar (OD), which requires extensive domain expertise and is not always feasible. Our other methods for domain determination rely purely on statistics of the trained model for assigning labels for domain, which makes them more practical for deployment. Achem serves as an initial, intuitive, and rudimentary assessment, and passing this assessment was necessary for any domain method to be considered useful for materials. These results show that d effectively provided privileged information regarding the chemical classes and physics that separate materials. We are confident that any positive outcomes observed for \({A}^{| y-\hat{y}| /MA{D}_{y}}\), \({A}^{RMSE/{\sigma }_{y}}\), or Aarea are not solely attributable to numerical artifacts. These readily interpretable results from Achem establish a firm, intuitive, and chemically informed foundation before exploring more general numerical approaches, which we do now.

Relationship Between Absolute Residuals (\({E}^{| y-\hat{y}| /MA{D}_{y}}\)) and Distance (d) from the Residual Assessment (\({A}^{| y-\hat{y}| /MA{D}_{y}}\))

In Fig. 3, we illustrate how \({E}^{| y-\hat{y}| /MA{D}_{y}}\) is related to d for the RF model type. The same set of plots for other model types is offered in the Supplemental Materials, but we summarize their results in Table 2. We observe that \({E}^{| y-\hat{y}| /MA{D}_{y}}\) increases when the likelihood of observing similar points to ITB data decreases (larger d). All AUC-Baseline scores in Table 2 are positive for the relevant assessment, meaning that our d measure gives more information than a naïve guess provided by our baseline. Nearly all F1max scores are above 0.7, which means that d can be used to significantly separate ID and OD points. Only 2 out of 20 measures of F1max fall below 0.7. As an example of application, we can examine Fig. 3a and select \({d}_{c}^{t}=1.00\) for which we reject predictions (i.e., label as \(\widehat{OD}\)) from any Mprop according to Eq. (5). Most points with large \({E}^{| y-\hat{y}| /MA{D}_{y}}\) tend to occur at d=1.00. By selecting a \({d}_{c}^{t}\)=1.00, a user can filter out the majority of those points from a study, thereby saving time by excluding untrustworthy cases. Other data sets in Fig. 3b, c, and d have more OD cases above \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) at lower d compared to the previous example. However, the fraction of OD cases greatly exceeds that of ID cases when d = 1.00, and the fraction of ID cases is higher than that of OD cases when d < 1.00. Therefore, the F1max occurs at \({d}_{c}^{t}\)=1.00, where data have a zero likelihood based on the KDE constructed from ITB data. A similar methodology can be applied for \({d}_{c}^{t}\) values across other data set and model combinations. Essentially, we can select predictions likely to be better than naïve. We note that \({E}^{| y-\hat{y}| /MA{D}_{y}}\) is a statistical quantity that will almost certainly be challenging to predict by d or any other domain method as it is quite stochastic. 
Even if one has a method that was essentially perfect at identifying data that was ID/OD, some residuals would likely be large for ID data and small for OD data by chance. Given this intrinsic limitation of predicting \({E}^{| y-\hat{y}| /MA{D}_{y}}\), we consider the present results quite strong. The statistical quantity \({E}^{RMSE/{\sigma }_{y}}\) is essentially the same information as \({E}^{| y-\hat{y}| /MA{D}_{y}}\), but averaged over many points and therefore less stochastic, and we will see below that \({E}^{RMSE/{\sigma }_{y}}\) is predicted more robustly by d.
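To make the role of the KDE concrete, the following minimal sketch computes a dissimilarity from a Gaussian kernel density built on ITB features. Eq. (4) is not reproduced in this section, so the sketch assumes d = 1 − p(x)/max p(x_ITB), clipped to [0, 1], which is consistent with d = 1.00 corresponding to zero likelihood. The isotropic Gaussian kernel, the fixed bandwidth, and the function names are also assumptions; the study itself relies on the scikit-learn KDE implementation rather than this hand-written loop.

```python
import math


def kde_density(x, train, bandwidth):
    """Unnormalized Gaussian kernel density of point x given training points.

    The normalization constant is omitted because it cancels in the ratio below.
    """
    total = 0.0
    for t in train:
        squared_distance = sum((a - b) ** 2 for a, b in zip(x, t))
        total += math.exp(-0.5 * squared_distance / bandwidth ** 2)
    return total / len(train)


def dissimilarity(x, train, bandwidth=0.5):
    """Assumed form: d = 1 - p(x) / max p(x_ITB), clipped to the range [0, 1]."""
    p_max = max(kde_density(t, train, bandwidth) for t in train)
    d = 1.0 - kde_density(x, train, bandwidth) / p_max
    return min(1.0, max(0.0, d))


def predict_domain(d, threshold):
    """Assumed decision rule: ID if d is strictly below the threshold."""
    return "ID" if d < threshold else "OD"


if __name__ == "__main__":
    itb = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1)]   # toy ITB features
    for point in [(0.05, 0.05), (50.0, 50.0)]:
        d = dissimilarity(point, itb)
        print(point, d, predict_domain(d, threshold=1.0))
```

A point far from all ITB data receives a density that underflows to zero, so its d is exactly 1.00 and it is rejected by a threshold of 1.00.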

The relationship between residuals and dissimilarity is shown for Diffusion (a), Fluence (b), Steel Strength (c), Superconductor (d), and Friedman (e) datasets. The relationship between \({E}^{| y-\hat{y}| /MA{D}_{y}}\) and d for the RF model type is shown. Generally, \({E}^{| y-\hat{y}| /MA{D}_{y}}\) increases with an increase in d. \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) is shown by the horizontal red line, which separates our OD (red) and ID (green) cases. The data set is denoted by the captions.

Classification between ID and OD cases should become increasingly challenging when all considered data points are more similar. We examine the ability of d to discern ID from OD data points when differences between the two are smaller by lowering \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) for the Friedman data using the RF model type for Mprop. All remaining ID and OD points become more similar, as can be seen in Fig. 4a, where many ID and OD points now have the same value of d (i.e., ID and OD points are more on top of each other and are more similar). Note that the labeling of ID/OD is independent of d for \({A}^{| y-\hat{y}| /MA{D}_{y}}\). Re-evaluating data from Fig. 3e for \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}=0.25\), the recall, precision, and \({d}_{c}^{t}\) change from 0.86, 0.93, and 1.00 to 0.73, 0.97, and 0.74, respectively. Mdom becomes more conservative with a lower ground truth by selecting a lower \({d}_{c}^{t}\) but is still a viable model for discerning domain. If \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) is further lowered to 0.01, then the overwhelming majority of points are OD and Mdom’s recall, precision, and \({d}_{c}^{t}\) become 0.72, 0.19, and 0.27, respectively. Mdom becomes imprecise at discerning ID from OD points when they are extremely similar (Fig. 4b).

Lowering \({E}_{c}^{| y-\hat{y}| /MA{D}_{y}}\) has a large impact on how the threshold for Mdom is chosen and its corresponding metrics like precision. These data points, identical to those in Fig. 3e, have been reclassified as ID/OD based on lower ground truth thresholds of 0.25 and 0.01 for a, b, respectively (indicated by the horizontal red line in each figure). ID points are green while OD points are red.

Relationship Between RMSE (\({E}^{RMSE/{\sigma }_{y}}\)) and Distance (d) from the RMSE Assessment (\({A}^{RMSE/{\sigma }_{y}}\))

In Fig. 5, we illustrate how \({E}^{RMSE/{\sigma }_{y}}\) is related to d for the RF model type. The same set of plots for other model types is offered in the Supplemental Materials, but we summarize their results in Table 2. Similar to the results from the previous section, we observed that \({E}^{RMSE/{\sigma }_{y}}\) increased when the likelihood of observing similar points to ITB data decreased (larger d). All AUC-Baseline scores in Table 2 are positive for the relevant assessment, meaning that our d measure gives more information than a naïve guess provided by our baseline. Nearly all F1max scores are 1.00, which means that ID and OD bins were nearly perfectly separated (i.e., only 4 out of 20 were not perfect but still above 0.7). As an example of application, we can examine Fig. 5c and select \({d}_{c}^{t}\)=0.85 for Eq. (5). Three bins at d > 0.85 have \({E}^{RMSE/{\sigma }_{y}}\) above \({E}_{c}^{RMSE/{\sigma }_{y}}\) and are OD. All other bins have \({E}^{RMSE/{\sigma }_{y}}\) below \({E}_{c}^{RMSE/{\sigma }_{y}}\) and are ID. If Mdis yields d=0.8 for a data point, the point falls in a bin that is ID. If Mdis yields d=0.95 for a data point, the point falls in a bin that is OD. A similar methodology can be applied for \({d}_{c}^{t}\) values across other data set and model combinations. We can use d to distinguish points where \({E}^{RMSE/{\sigma }_{y}}\) is expected to be low from those likely to have high \({E}^{RMSE/{\sigma }_{y}}\) (i.e., we can discern predictions likely to be better than a naïve baseline). While it is true that some cases exhibit perfect discrimination between ID and OD classifications, this outcome can be attributed to the specific binning strategies used in our analysis (unlike the results in Fig. 3). The threshold we used to separate these bins is selected to maximize the F1 score (F1max), which can inadvertently lead to overfitting. 
However, it is important to highlight that when examining OOB predictions (i.e., data that were not utilized during model training) the \({E}^{RMSE/{\sigma }_{y}}\) generally increases with respect to the dissimilarity metric d.
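The bin-level labeling described above can be sketched as follows. The actual binning procedure is given in the Methods section and is not reproduced here, so the sketch assumes equal-count bins over the data sorted by d and a single σy supplied by the caller; the function name `bin_rmse_labels` and the toy numbers are hypothetical.

```python
import math


def bin_rmse_labels(d, y, y_pred, sigma_y, n_bins, cutoff=1.0):
    """Sort points by d, split them into equal-count bins, and label each bin
    ID or OD by comparing its RMSE / sigma_y with the cutoff.

    Returns a list of (mean d of the bin, RMSE / sigma_y, label) tuples.
    """
    order = sorted(range(len(d)), key=lambda i: d[i])
    n = len(order)
    results = []
    for b in range(n_bins):
        members = order[b * n // n_bins:(b + 1) * n // n_bins]
        if not members:          # more bins than points: skip empty bins
            continue
        rmse = math.sqrt(sum((y[i] - y_pred[i]) ** 2 for i in members) / len(members))
        error = rmse / sigma_y
        mean_d = sum(d[i] for i in members) / len(members)
        results.append((mean_d, error, "ID" if error < cutoff else "OD"))
    return results


if __name__ == "__main__":
    d = [0.1, 0.2, 0.3, 0.4, 0.9, 1.0]          # toy dissimilarities
    y = [0.0] * 6                               # toy targets
    y_pred = [0.1, -0.1, 0.2, -0.2, 2.0, -3.0]  # toy predictions
    for row in bin_rmse_labels(d, y, y_pred, sigma_y=1.0, n_bins=3):
        print(row)
```

Because bins are formed on d before the labels are assigned, this construction is the indirect path by which d can influence the binned ground truth, in contrast to the per-point residual assessment.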

The relationship between RMSE and dissimilarity is shown for Diffusion (a), Fluence (b), Steel Strength (c), Superconductor (d), and Friedman (e) datasets. The relationship between \({E}^{RMSE/{\sigma }_{y}}\) and d for the RF model type is shown. Generally, \({E}^{RMSE/{\sigma }_{y}}\) increases with an increase in d. \({E}_{c}^{RMSE/{\sigma }_{y}}\) is shown by the horizontal red line, which separates our OD (red) and ID (green) bins. The data set is denoted by the captions.

Relationship Between Miscalibration Area (Earea) and Distance (d) from the Uncertainty Quality Assessment (Aarea)

We now provide a similar analysis for Earea as previously provided for \({E}^{RMSE/{\sigma }_{y}}\). In Fig. 6, we illustrate how Earea and d are related for the RF model type. The same set of plots for the other model types is offered in the Supplemental Materials, but we summarize their results in Table 2. We observed that Earea for bins with small d values tends to be relatively small compared to bins with larger d values for all the data presented in Fig. 6. All AUC-Baseline scores are greater than zero except for two, indicating that two data set and model combinations performed worse than naively predicting the number of ID bins (i.e., only 2 out of 20 results were undesirable). For the most part, our d measure offers substantial insight into the quality of Earea for the reported data. Approximately half (9 out of 20) of the model type and data combinations yield precision and recall scores of 1.00, indicating their ability to perfectly discern points in ID/OD bins. A total of 11 out of the 20 entries report F1max above 0.7, which is relatively good but not nearly as strong as the results from \({A}^{| y-\hat{y}| /MA{D}_{y}}\) and \({A}^{RMSE/{\sigma }_{y}}\). An additional 4 entries were only slightly below 0.7. The only entries with F1max scores worse than naïve were Steel Strength with BNN and Diffusion with BOLS. No data for the entry for Friedman with BOLS was ID, which was the reason for an AUC-Baseline of zero. The quality of Munc affects the ability of Mdom to discern domain, a problem we discuss in detail as case (ii) in the Notes of Caution for Domain Prediction section and in the Supplemental Materials. As an example of application, we can examine Fig. 6b and select \({d}_{c}^{t}\)=0.92 as a threshold to discern between ID/OD bins. If Mdis yields d=0.5 for a point, the point falls in a bin that is ID. If Mdis yields d=0.99 for a point, the point falls in a bin that is OD. Choices for \({d}_{c}^{t}\) can be similarly made for other models. 
These results show that we can usually use d to distinguish points where Earea is expected to be low from those likely to have high Earea (i.e., we can discern predictions likely to be better than a naïve baseline). It is important to note that each bin utilizes the exact same data to calculate \({E}^{RMSE/{\sigma }_{y}}\) and Earea. This implies that bins that were ID based on \({E}_{c}^{RMSE/{\sigma }_{y}}\) but OD based on \({E}_{c}^{area}\) had low absolute residuals but poor uncertainty quantification accompanying the predictions.

The relationship between miscalibration area and dissimilarity is shown for Diffusion (a), Fluence (b), Steel Strength (c), Superconductor (d), and Friedman (e) datasets. The relationship between Earea and d for the RF model type is shown. Generally, Earea increases with an increase in d. \({E}_{c}^{area}\) is shown by the horizontal red line, which separates our OD (red) and ID (green) bins. The data set is denoted by the captions.

Time Complexity of Established Methods

Here we describe the time scaling of our domain method with respect to the number of features and the number of data points. The scikit-learn implementation of KDE can scale as either \({\mathcal{O}}(n\log (n))\) (Ball-Tree algorithm) or \({\mathcal{O}}(\log (n))\) (KD Tree algorithm) depending on the size of training X32,33, where n is the number of data points used in training. However, our method includes pre-clustering, cross validation, the underlying model type for Mprop, and a multitude of other functions which will alter the scaling of the overall codebase. Using the computation times from our assessments (i.e., \({A}^{| y-\hat{y}| /MA{D}_{y}}\), \({A}^{RMSE/{\sigma }_{y}}\), and Aarea combined) with Mprop being an RF model type, the scaling of our assessment with respect to the number of data points appears to be approximately \({\mathcal{O}}({n}^{2})\) (Fig. 7a). A number of functions (constant, logarithmic, linear, logarithmic linear, and quadratic) were fit to the computation time versus number of data points using the curve_fit optimizer from SciPy. The quadratic function fit the data the best, so the empirical scaling is estimated to be approximately \({\mathcal{O}}({n}^{2})\).
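The model comparison behind this empirical scaling estimate can be sketched as follows. The exact functional forms that were fit are not specified in the text, so the sketch assumes each candidate has the form t = a·g(n) + b. Because such models are linear in a and b, the sketch uses closed-form least squares instead of the SciPy curve_fit optimizer used in the study; the timing values are synthetic and the names are hypothetical.

```python
import math

# Candidate growth terms g(n); None marks the constant model t = b.
MODELS = {
    "constant": None,
    "logarithmic": lambda n: math.log(n),
    "linear": lambda n: float(n),
    "log-linear": lambda n: n * math.log(n),
    "quadratic": lambda n: float(n) ** 2,
}


def fit_sse(g, n_values, times):
    """Least-squares fit of t = a * g(n) + b; returns the sum of squared errors."""
    m = len(times)
    mean_t = sum(times) / m
    if g is None:                                   # best constant is the mean
        return sum((t - mean_t) ** 2 for t in times)
    x = [g(n) for n in n_values]
    mean_x = sum(x) / m
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (ti - mean_t) for xi, ti in zip(x, times))
    a = sxy / sxx
    b = mean_t - a * mean_x
    return sum((ti - (a * xi + b)) ** 2 for xi, ti in zip(x, times))


def best_scaling_model(n_values, times):
    """Name of the candidate with the smallest squared error, plus all errors."""
    sse = {name: fit_sse(g, n_values, times) for name, g in MODELS.items()}
    return min(sse, key=sse.get), sse


if __name__ == "__main__":
    n_values = [100, 200, 400, 800, 1600, 3200]
    times = [2e-6 * n * n + 0.5 for n in n_values]   # synthetic quadratic timings
    print(best_scaling_model(n_values, times)[0])
```

Comparing squared errors alone favors whichever shape tracks the measurements most closely, so the conclusion is only as reliable as the range of n covered by the timings.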

a, b show the time scaling of the developed methods as a function of the number of data points and features, respectively. The RF model type was used. Only the Diffusion data was used for (b). The red line is the best fit to the blue data points gathered from completed calculations ((a) is a quadratic fit and (b) is a linear fit).

We can also examine how our methods scale with respect to the number of features. Using the Diffusion data, we re-ran our analysis with the 25 features as discussed in the Diffusion Data Set section. Additionally, we ran the same analysis with 50, 75, 100, 250, 500, 750, and 1000 features to compare the run times. Features were added by feature importance rankings established in the Diffusion Data Set section up to 100. We did not rank the remaining features. Because no feature beyond 25 altered \({E}^{RMSE/{\sigma }_{y}}\) significantly, features beyond 100 were added randomly. With an Mprop of RF and following the methodology used for scaling with respect to the number of data points, we establish that our method scales approximately linearly with the number of features (i.e., \({\mathcal{O}}(n)\), where n here denotes the number of features; Fig. 7b). The maximum number of features is about 1000 and was set by the number of features from our feature generation (covered in the Diffusion Data Set section). Note that the data point and feature scaling assessments were conducted with separate sets of computers. For example, computer set 0 was used for the data point scaling and computer set 1 was used for feature scaling. Every computer in each corresponding set had the same type of hardware, but detailed results may differ significantly with different hardware.

Notes of Caution for Domain Prediction

We have identified four scenarios that could, but do not necessarily, lead to the breakdown of developed models (although others likely exist): (i) the trivial case where OOB y cannot be learned from X (i.e., Mprop breaks), (ii) the case where uncertainties are bad (i.e., Munc breaks), (iii) the case where Bootstrapped Leave-One-Cluster Out (BLOCO) fails to select regions of X that are sufficiently distinct as OOB sets, and (iv) the case where KDE does not provide adequate information about ITB data because of a very large number of features. We will show the first 3 failure modes with easily controlled data generated with Eq. (8) (Friedman and Friedman WithOut Distinct Clusters (FWODC)). BLOCO and FWODC are defined in the Methods section. To show case (i), we shuffled y with respect to X. Mprop could not learn y from X in this scenario. \({E}^{RMSE/{\sigma }_{y}}\) will be above \({E}_{c}^{RMSE/{\sigma }_{y}}\) for all (or nearly all) bins of d. To show case (ii), we used uncalibrated (σu) instead of calibrated (σc) uncertainties. If Munc is not accurate, then there will be no reasonable trend between Earea and d. To show case (iii), we used Uniform Manifold Approximation and Projection (UMAP) to show how insufficient clustering of distinct spaces provided values of d that could not be used to build Mdom. This is a failure in producing ID/OD labels for OOB data. In cases (i)-(iii), the failure is not really a problem with the fundamental domain method, as we now explain. For case (i), it is reasonable to assume an Mprop model has no domain (or, equivalently, all data is OD) if its predictions are poor, and therefore no domain prediction method can reasonably be expected to work. Similarly, an Munc incapable of generating accurate uncertainties as outlined in case (ii) would have most, if not all, data as being OD. For case (iii), we do not separate data with our BLOCO procedure. 
This is not a failure of the fundamental approach, but a failure of our specific strategy for splitting leading to poor generation of ID/OD samples. However, case (iv) would be a true failure of the underlying KDE approach, although we have not seen it occur in our tests.

The RF model type was used for Mprop for cases (i)-(iii) with either Friedman or FWODC data. We start with case (i) using the Friedman data. By shuffling y with respect to X, the results from our assessments of \({A}^{RMSE/{\sigma }_{y}}\) break down (Fig. 8a). Note that all predictions from Mprop are worse than naively predicting \(\bar{y}\) for all points. A \({d}_{c}^{t}\) cannot be established for separating ID/OD cases from \({E}^{RMSE/{\sigma }_{y}}\) since all data are OD. Fig. 8b shows how Mdom fails if Munc fails via case (ii). We use σu instead of σc as the estimates provided by Munc. Errors in our uncertainties are high, and no value of \({d}_{c}^{t}\) separates ID/OD points well (Mdom failure) because no bins exist that are ID. Note that the portion of Mdom trained with \({E}^{RMSE/{\sigma }_{y}}\) data should remain unaffected as long as Mprop functions well.

a shows the assessment of the Friedman data fit with an RF model type where we purposefully shuffled y to acquire an Mprop with no predictive ability. Because X no longer has a strong relationship with y, all data are OD. b shows the relationship between d and Earea when using σu instead of σc for Munc. Because Munc is poor at estimating uncertainties, all data are OD. The UMAP projections of Friedman and FWODC onto two dimensions are shown in c, d, respectively. One sampling of X yields distinct regions in features (left) and the other does not (right). The colors represent the labels for three clusters acquired through agglomerative clustering. e, f show the relationship between d, \({E}^{RMSE/{\sigma }_{y}}\), and Earea for the poorly clustered FWODC data. Note that at least the bin with the highest d for \({A}^{RMSE/{\sigma }_{y}}\) should be OD. We observe that data closest to our XITB for Aarea are marked as OD by \({E}_{c}^{area}\), but should ideally be ID.

Failure case (iii) for our domain methodology stems from a specific failure case of the BLOCO procedure. First, we use the UMAP approach to project X into a two-dimensional space and visualize the cluster labels assigned by agglomerative clustering for Friedman (Fig. 8c) and FWODC (Fig. 8d) data. Each color in the figures represents one of three cluster labels. Because there are intervals of sampling for X that exclude other intervals for Friedman in Fig. 8c, we can cluster subspaces of X that are distinct. If we consider FWODC as shown in Fig. 8d, all data that are OOB by BLOCO are close to each other, which results in clusters that are insufficiently distinct for pseudo-label generation (ID/OD). The X space from Fig. 8c led to the previously seen results on \({A}^{RMSE/{\sigma }_{y}}\) and Aarea (Figs. 5e and 6e). Data used to make Fig. 8d led to the results shown in Fig. 8e and f. For Fig. 8e, we know that data at d=1.00 can produce OD points as seen in Fig. 5e. For Fig. 8f, Earea is much larger for our first bin than that from Fig. 6e. Conversely, Earea is much smaller for our last bin in Fig. 8f compared to Fig. 6e. Unusual trends of Earea with respect to d emerge when the data set lacks diversity (i.e., data that cannot be clustered well).

Finally, we discuss failure mode (iv). KDE is known to have challenges in getting accurate representations for a large number of dimensions34. For models with many features, it is possible that KDE will give a poor representation of the distance to the XITB data and the approach taken in this work will break down (i.e., yield inaccurate \(\widehat{ID}/\widehat{OD}\) labels). Here we explore how the F1max scoring metric for \({A}^{| y-\hat{y}| /MA{D}_{y}}\) is affected by the number of features included in the assessment. We use the exact same tests as used in the feature scaling results described in the Time Complexity of Established Methods section. RF was the model type for Mprop and the data used was the Diffusion data set. As shown in Fig. 9, the smaller feature sets of 25 and 50 features have F1max scores approximately 0.09 higher than those of the larger feature sets. Based on these results, we recommend performing feature selection to reduce the number of features to a modest level. We do not know how many features would cause issues, but these results suggest that 50 or fewer works best, and it is reasonable to assume that including thousands of irrelevant features will further degrade scores. Although this result shows that the established methods work best with a smaller number of relevant features for Mprop, the degradation even with a feature set 20 times larger is not very large. However, the degree of performance degradation with respect to the number of features is likely to depend on the data analyzed and Mprop used.

The F1max score for separating ID points from OD points decreases for larger numbers of features.

In summary, there are three known conditions and a fourth one hypothesized that will lead to a non-functional Mdom. First, Mprop cannot properly predict y from X. Second, Munc fails to provide accurate measures of uncertainty. Third, the data cannot be clustered to produce ID/OD labels. Fourth, the KDE fails to provide a good density due to a large number of features. So long as the aforementioned conditions are not met, Mdom has been found to effectively predict domain in our tests.

Discussion

Our work addresses a significant concern in the deployment of machine learning models: the potential for these models to produce inaccurate or imprecise predictions without warning. We have shown that kernel density estimates provide valuable insights into where in the feature space model performance degrades significantly or where predictions fall outside the model’s domain of applicability. The central idea of our approach is to use kernel density estimation to define a dissimilarity measure d and then classify data as in-domain or out-of-domain based on d. We assessed the approach with a range of machine learning models (random forest, bagged neural network, bagged support vector regressor, and bagged ordinary least squares) on data sets with diverse physical properties and chemistries (dilute solute diffusion in metals, ductile-to-brittle transition temperature shifts in irradiated steel alloys, yield strengths of steel alloys, and superconducting critical temperatures) as well as the synthetic Friedman data set. We demonstrated qualitatively that as d increased, data became more chemically distinct, residual magnitudes increased, and uncertainty estimates deteriorated, validating that d was a powerful descriptor for these critical ways of thinking about the domain. Our quantitative assessment compared our predicted domain categorization to ground truth values based on chemistry, residuals, and uncertainty estimates and generally found good improvement over naïve models and high F1 scores. This approach can be easily applied to many problems and allows categorization of in-domain or out-of-domain for any test data point during inference. The approach can be applied easily through its stand-alone implementation or its implementation in the MAterials Simulation Toolkit - Machine Learning (MAST-ML) package (see Data and Code Availability). 
Researchers can easily use this method to provide automated guardrails for their machine learning models, greatly enhancing their reliable application.

Methods

Software Tools Used

In our study, we utilized several software tools for analysis. We employed MAST-ML to generate and select features, X, across multiple data sets35. Furthermore, we utilized various models and subroutines from scikit-learn31. For neural network (NN) implementations, we relied on Keras36. Visualizations from projecting X onto lower dimensions used the UMAP package37.

Model Assessments

We can assess how well Mdom, or equivalently, categorizing the data based on d (Eq. (5)), can predict ID/OD. Specifically, we evaluate the ability of d obtained from Mdis to predict domain labels by building precision-recall curves on OOB data sets. For each precision-recall curve, a naïve baseline area under the curve (AUC) was constructed by considering a baseline model that predicts ID for every case, which yields a baseline AUC equal to the ratio of ID cases to the total number of cases38. The difference between the true and baseline AUC was used as an assessment metric, which we call AUC-Baseline. AUC was calculated using sklearn.metrics.average_precision_score from scikit-learn31. AUC-Baseline shows how much additional information d provided for domain classification above the naïve baseline model. Any AUC-Baseline above zero indicates domain information retained by d. The values of precision, recall, and F1 were reported at the point on the curve where F1 is maximized (F1max), which evaluates how effectively the ID points were separated from the OD points by the dissimilarity measure d.
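The AUC-Baseline and F1max metrics described above can be sketched as follows. This is an illustrative sketch, not the implementation used in this work: the function name `auc_baseline_and_f1max` and the returned dictionary keys are hypothetical, and the sketch assumes that ID is the positive class and that lower d indicates a more ID-like point, so that the negated d serves as the classifier score.

```python
import numpy as np
from sklearn.metrics import average_precision_score, precision_recall_curve


def auc_baseline_and_f1max(is_id, d):
    """Score how well dissimilarity d separates ID (1) from OD (0) labels."""
    is_id = np.asarray(is_id, dtype=int)
    score = -np.asarray(d, dtype=float)  # assumption: low d means ID-like

    # Area under the precision-recall curve and the naive "all ID" baseline.
    auc = average_precision_score(is_id, score)
    baseline = is_id.mean()  # fraction of ID cases

    precision, recall, thresholds = precision_recall_curve(is_id, score)
    # The final (precision=1, recall=0) point has no threshold; drop it.
    p, r = precision[:-1], recall[:-1]
    f1 = 2 * p * r / np.maximum(p + r, 1e-12)
    best = int(np.argmax(f1))

    return {
        "auc_minus_baseline": auc - baseline,
        "f1_max": f1[best],
        "precision": p[best],
        "recall": r[best],
        "d_threshold": -thresholds[best],  # points with d <= this are called ID
    }


# Toy labels and dissimilarities that separate perfectly at d = 0.3.
result = auc_baseline_and_f1max(
    is_id=[1, 1, 1, 0, 0, 0],
    d=[0.1, 0.2, 0.3, 0.7, 0.8, 0.9],
)
print(result)
```

In this toy case the ID and OD points do not overlap in d, so the precision-recall AUC is 1, the baseline is the ID fraction of 0.5, and F1 reaches its maximum of 1 at a cutoff of d = 0.3.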

Assessing Our Domain Prediction Based on a Ground Truth Determined by Chemical Intuition

In this section, we describe our method to evaluate how d provides information with respect to Echem and call this assessment Achem. We started with a set of ITB data that were used to construct Mprop and additional data with chemically distinct groups. These data are called the Original and non-Original sets, respectively. The non-Original set can be further subdivided into other chemistries and was always treated as OOB. Each case from all data was labeled as ID/OD based on chemical intuition. We wanted to evaluate the ability of d to discern ID/OD from groups of chemistries that are OOB. To do this, we built sets of OOB data that contained ID/OD labels. For every observation in the Original set, i, the following sets of steps were performed: i was taken out of the Original set (i.e., placed in the OOB data), an Mdis model was trained on the remaining Original ITB data, then Mdis was used to calculate d on all OOB data (i.e., i and all cases from the non-Original set). This formed one prediction set. We repeated the procedure for all data points in the Original set and aggregated that data. In other words, we performed Leave-One-Out (LOO) CV on the Original set while treating the non-Original set as an OOB set for every iteration. If there are n cases from the Original set, then there should be n different models and prediction sets by the time the procedure finished. See Fig. 10a for a diagram of the splitting procedure. Violin plots were generated to show the distribution of d values for each chemical group.
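The leave-one-out loop described above can be sketched with scikit-learn's `KernelDensity`. Several parts of the sketch are assumptions made only for illustration: Eq. (5) is not reproduced in this section, so the dissimilarity is taken here to be one minus the query density divided by the maximum density over the ITB data; the Gaussian kernel and the `bandwidth` value are placeholder choices; and the function names `dissimilarity` and `loo_chem_assessment` are hypothetical.

```python
import numpy as np
from sklearn.neighbors import KernelDensity


def dissimilarity(kde, X_itb, X_query):
    """Assumed form of d: 1 - density(query) / max density over ITB data."""
    log_max = kde.score_samples(X_itb).max()
    log_q = kde.score_samples(X_query)
    # Clip the log-ratio at 0 so that d stays within [0, 1].
    return 1.0 - np.exp(np.minimum(log_q - log_max, 0.0))


def loo_chem_assessment(X_original, X_non_original, bandwidth=1.0):
    """LOO over the Original set; the non-Original set is OOB in every split."""
    n = len(X_original)
    d_original = np.empty(n)
    d_non_original = np.empty((n, len(X_non_original)))
    for i in range(n):
        # Move observation i out of the ITB data.
        X_itb = np.delete(X_original, i, axis=0)
        kde = KernelDensity(kernel="gaussian", bandwidth=bandwidth).fit(X_itb)
        # OOB data: the left-out point plus all non-Original cases.
        X_oob = np.vstack([X_original[i:i + 1], X_non_original])
        d = dissimilarity(kde, X_itb, X_oob)
        d_original[i] = d[0]
        d_non_original[i] = d[1:]
    return d_original, d_non_original


# Toy example: a chemically "distinct" group is placed far away in feature space.
rng = np.random.default_rng(0)
X_o = rng.normal(0.0, 1.0, size=(30, 2))
X_n = rng.normal(8.0, 1.0, size=(10, 2))
d_o, d_n = loo_chem_assessment(X_o, X_n)
print(d_o.mean(), d_n.mean())
```

With n Original cases the loop fits n density models and produces n prediction sets, matching the count stated above. The aggregated `d_original` and `d_non_original` arrays are what would feed per-group violin plots.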

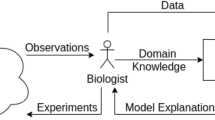

Shown in (a) is the splitting methodology for Achem. The square and circle sets are from LOO CV and a fixed OOB set, respectively. Shown in (b) is the BLOCO splitting methodology. Each shape represents a specific cluster. Clusters were iteratively swapped between ITB and OOB sets. Shown in (c) is the depiction of nested CV. The upper level (L1) was used to produce ITB (blue Train) and OOB (red Test) data from k-fold and BLOCO splits. From each L1 Train, we further divided data in a nested manner (L2). From L2, we calibrated model uncertainties on a set of k-fold splits and produced \({M}_{i}^{unc}\). \({M}_{i}^{prop}\) and \({M}_{i}^{dis}\) were fit to all Train for each split in L1. The predictions of \({M}_{i}^{prop}\), \({M}_{i}^{unc}\) and \({M}_{i}^{dis}\) on each respective L1 Test set produced the OOB data used to measure \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea.

This methodology often produced a large class imbalance between the number of ID and OD cases, which can make interpreting the assessment of the domain classifier difficult. Therefore, we used a resampling procedure to obtain a balanced number of ID and OD data points in the OOB aggregated set. More specifically, if the number of ID cases exceeded the number of OD cases, we randomly sampled a subset of the ID data such that the number of ID cases was equal to the number of OD cases. Conversely, if the number of OD cases surpassed the number of ID cases, we randomly sampled a subset of the OD data to match the number of ID cases. However, if the ID and OD data sets contained an equal number of cases, no subsampling was performed. These samplings of data were then used to assess the ability of d to predict ID/OD with precision and recall.
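A minimal sketch of this balancing step is given below. The function name `balance_id_od` and the `seed` argument are hypothetical, and the sketch assumes sampling without replacement from the majority class.

```python
import numpy as np


def balance_id_od(is_id, seed=0):
    """Return indices of a subsample with equal numbers of ID and OD cases."""
    rng = np.random.default_rng(seed)
    is_id = np.asarray(is_id, dtype=bool)
    id_idx = np.flatnonzero(is_id)
    od_idx = np.flatnonzero(~is_id)
    n = min(len(id_idx), len(od_idx))

    # Subsample whichever class is larger; leave equal-sized classes untouched.
    if len(id_idx) > n:
        id_idx = rng.choice(id_idx, size=n, replace=False)
    if len(od_idx) > n:
        od_idx = rng.choice(od_idx, size=n, replace=False)
    return np.sort(np.concatenate([id_idx, od_idx]))


# Ten ID cases and three OD cases: three ID cases are kept at random.
labels = np.array([1] * 10 + [0] * 3)
keep = balance_id_od(labels)
print(keep, labels[keep])
```

The returned indices can be used to select the matching d values and labels before computing precision and recall.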

Assessing Our Domain Prediction Based on a Ground Truth Determined by Normalized Residuals and Errors in Predicted Uncertainties

In this section we describe our method to evaluate how d provides information with respect to \({E}^{| y-\hat{y}| /MA{D}_{y}}\), \({E}^{RMSE/{\sigma }_{y}}\), and Earea and call these assessments \({A}^{| y-\hat{y}| /MA{D}_{y}}\), \({A}^{RMSE/{\sigma }_{y}}\) and Aarea, respectively. We must generate a set of OOB data that contain ID/OD labels to assess the ability of d to separate ID/OD. To do this, data containing both X and y were split using several methods for CV. First, data were split by 5-fold CV where models were iteratively fit on 4 folds and then predicted \(\hat{y}\), σc, and d on OOB data. Second, data were pre-clustered using agglomerative clustering from scikit-learn31. One cluster was left out for OOB data, and all other ITB clusters were used to train models. Then, models predicted \(\hat{y}\), σc, and d on the OOB data. As in the 5-fold CV methodology, we sequentially left out each cluster, which was similar to the approaches applied in refs. 39 and 40. However, applying this clustering to the original data gave only a limited number of clusters. To generate many more OOB data, we generated a series of bootstrap data sets from clusters and then applied the same leave-one-cluster-out approach. To clarify, we first clustered the data, and then performed bootstrapping separately on each cluster, rather than bootstrapping on all the data and then clustering. We call this a BLOCO approach. We performed BLOCO with 3 and 2 total clusters with the aim of providing a large amount of OOB data that are increasingly dissimilar to ITB data and hopefully OD. See Fig. 10b for an illustration of BLOCO.
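The order of operations in BLOCO (cluster first, then bootstrap within each cluster, then leave each cluster out in turn) can be sketched as below. This is a sketch under stated assumptions rather than the implementation used in this work: the generator name `bloco_splits`, the number of bootstrap rounds, the default clustering settings, and the choice to resample each cluster to its original size are all hypothetical.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering


def bloco_splits(X, n_clusters=3, n_bootstraps=2, seed=0):
    """Yield (itb_idx, oob_idx) index pairs for bootstrapped leave-one-cluster-out."""
    rng = np.random.default_rng(seed)

    # Step 1: cluster the original data once.
    labels = AgglomerativeClustering(n_clusters=n_clusters).fit_predict(X)
    members = [np.flatnonzero(labels == c) for c in range(n_clusters)]

    for _ in range(n_bootstraps):
        # Step 2: bootstrap separately inside each cluster (with replacement).
        boot = [rng.choice(m, size=len(m), replace=True) for m in members]

        # Step 3: leave each bootstrapped cluster out in turn as OOB data.
        for held_out in range(n_clusters):
            itb = np.concatenate(
                [b for c, b in enumerate(boot) if c != held_out]
            )
            yield itb, boot[held_out]


# Toy data with three well-separated groups of 20 points each.
rng = np.random.default_rng(1)
X_toy = np.vstack(
    [rng.normal(loc, 0.5, size=(20, 2)) for loc in (0.0, 5.0, 10.0)]
)
splits = list(bloco_splits(X_toy, n_clusters=3, n_bootstraps=2))
print(len(splits))
```

Because bootstrapping happens inside each cluster, no original observation can appear in both the ITB and OOB sets of a split, which would not be guaranteed if the data were bootstrapped before clustering.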