Abstract

Materials with large spin–orbit torque (SOT) hold considerable significance for many spintronic applications because of their potential for energy-efficient magnetization switching. Unfortunately, most existing materials exhibit an SOT efficiency factor much less than unity, requiring a large current for magnetization switching. The search for new materials that can exhibit an SOT efficiency much greater than unity is a topic of active research, and only a few such materials have been identified using conventional approaches. In this paper, we present a machine learning-based approach using a word embedding model that can identify new results by deciphering non-trivial correlations among various items in a specialized scientific text corpus. We show that such a model can be used to identify materials likely to exhibit high SOT and rank them according to their expected SOT strengths. The model captured the essential spintronics knowledge embedded in scientific abstracts from various materials science, physics, and engineering journals and identified 97 new materials as likely to exhibit high SOT. Among them, 16 candidate materials are expected to exhibit an SOT efficiency greater than unity, and one of them has recently been confirmed experimentally, in quantitative agreement with the model prediction.

Introduction

Materials exhibiting spin–orbit torque (SOT)1,2,3 are of great interest for efficient charge current to spin current conversion that can enable energy-efficient nano-magnet switching in many novel applications3,4,5,6,7,8. In the past decade, a large variety of materials1,2,3 have been explored, exhibiting wide ranges of spin current density to charge current density ratios, known as SOT efficiency factors. However, most of them exhibit an efficiency factor much less than unity, requiring a large current for magnetization switching. There is a growing interest in new materials that can exhibit an SOT efficiency factor much greater than unity, but only a few9,10,11,12,13,14,15 have been identified. While conventional methodologies1,2,3 are often successful in guiding such materials searches, they can be quite expensive when evaluating the large available materials space for SOT. There is an opportunity to use emerging machine learning models to accelerate the search for such materials.

Many materials often get discussed in scientific publications from different domains, which may share similar crystallography or properties as seen in various SOT materials. Such non-trivial connections can get hidden within the overwhelmingly large text due to its heterogeneous format and domain-specific language. Thus, such unstructured published data on diverse materials can be leveraged using natural language processing tools to identify new and non-trivial results. Such tools have been very useful in scientific text mining16,17,18,19,20,21, especially in effectively unifying large text data to extract materials or synthesize information and build knowledge graph structures.

Recently, there has been growing interest in large language models (LLMs)22,23 due to their ability to comprehend and generate human-like text. The significant cost and hardware requirements for training such models24 make it challenging to implement them in specialized research environments25. Source-available LLMs are typically trained on a very large dataset of publicly available data that is often not scientifically peer-reviewed, and a generative model can produce unrealistic results if not carefully used26,27. Thus, the use of such models in scientific research remains an evolving topic. Despite the challenges, a number of recent works have focused on using LLMs in scientific research, with or without fine-tuning, and have shown promising results in property prediction28, inverse design28, molecule-caption translation29, harvesting from low-fidelity calculations30, protein structure prediction31, biomedical text generation32, battery research33, and autonomous scientific experiments34.

Word embedding models35,36,37 can be very useful and efficient for specialized tasks using a tailored dataset while offering scalability and interpretability within a cost-effective computational framework typically available in research laboratories. These models have shown usefulness in identifying new scientific results from large text datasets and have been applied in predicting new materials38,39,40, identifying harmful substances in food41, and disease assessments and therapeutic identification42,43,44,45,46. More recently, transformer-based models have been used to understand research trends and directions47 and to extract information from large corpora of materials48,49 within appropriate contexts.

In this paper, we show that a word embedding model trained with specialized and peer-reviewed scientific literature can identify new results by deciphering hidden correlations among various items within the large text. The trained word embedding model translates text-based knowledge into a high-dimensional vectorial representation50 that allows us to use standard mathematical tools to understand many intrinsic features of the text corpus. Such a model can be useful in domain-specific research for predicting new material candidates and estimating the expected strength of a given phenomenon relative to a reference material.

We show that the model learned key concepts of magnetism and spintronics in an unsupervised manner from scientific abstracts published in various materials science, physics, and engineering journals. We define a function that exploits the similarities among word embeddings and provides rankings based on the likelihood of observing a given phenomenon in a structure consisting of multiple materials. Our model successfully identified and ranked a number of materials in the context of the SOT device structure, and its quantitative projection of the SOT strength is in good agreement with experimental reports on various known materials.

In addition, our model predicted 97 new materials to exhibit SOT, with 16 of them likely to exhibit SOT efficiency factors much greater than unity. Iron silicide is one of these 16 new high SOT efficiency materials, which we have recently demonstrated experimentally15, with the measured SOT efficiency factor in good agreement with the word embedding model projection; however, the data from the iron silicide experiment were not included in the training text corpus. While these materials have been explored in various other contexts within the literature, to our knowledge, they have not been examined in the context of SOT. We further show that known results on a few materials could have been predicted well before their first report appeared in the literature, which indicates that such a methodology can serve as a powerful assistive tool to accelerate materials and device research.

Results

We trained a word embedding model, Word2Vec35 (see Fig. 1), using an unsupervised training approach similar to the report on thermoelectric materials38. Our training dataset contained approximately one million scientific abstracts published between 1970 and 2020 in various peer-reviewed materials science, physics, and engineering journals of the American Physical Society (APS) and the Institute of Electrical and Electronics Engineers (IEEE). We restricted our data collection to publications through 2020 because one of our key validations of the model prediction is based on our experimental demonstration15 of SOT in iron silicide, and to our knowledge there was no discussion of iron silicide in the context of high SOT before 2020. Additionally, several other studies published during the peer-review process of this manuscript, which are not included in our training dataset, directly or indirectly supported several of our predictions. We summarize these studies later in this section. Our trained model also inherited a broad range of knowledge beyond the research presented in this manuscript, which may interest other research domains within the scientific community, and a copy of the model is made available online51. However, in this manuscript, we evaluated the trained model only in the context of magnetism and spintronics. Further details about model training and the training dataset are discussed in the Methods section.

The Word2Vec model consists of a one-hot encoded input layer, a hidden layer, and an output layer that performs negative sampling with n = 15. The trained word embedding model is connected to a postprocessing module, which collects material word embeddings related to a target word and calculates the similarity (Γ) to a phenomenon within a multilayered stack. The final output of the postprocessing module ranks those materials according to Γ.

Materials and devices knowledge encoded in word embeddings

The relationships among the word embeddings within the trained model captured the knowledge encoded in the text corpus. The related words are closer to each other by the cosine similarity. If two word embeddings are related, the cosine similarity is closer to 1. On the other hand, if the word embeddings are unrelated, the cosine similarity is closer to 0. The cosine similarity among various word embeddings can be used to generate a list of materials, phenomena, and applications related to a target word. For example, the target word “spin Hall effect” is closer to the words “spin-charge conversion”, “nonequilibrium spin polarization”, “spin currents”, and “spin–orbit torque”, among others.
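The neighbor lookup described above can be sketched in a few lines of plain Python. This is only an illustration: the three-dimensional vectors are hypothetical toy values, not embeddings from the trained model, and the helper names `cosine_similarity` and `most_similar` are introduced here for the example.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def most_similar(target, embeddings, topn=3):
    """Rank every other word by its cosine similarity to `target`."""
    scores = [
        (word, cosine_similarity(embeddings[target], vec))
        for word, vec in embeddings.items()
        if word != target
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:topn]

# Hypothetical toy vectors, NOT taken from the trained model.
toy_embeddings = {
    "spin Hall effect": [0.9, 0.1, 0.0],
    "spin-orbit torque": [0.8, 0.2, 0.1],
    "spin currents": [0.7, 0.3, 0.0],
    "watt": [0.0, 0.1, 0.9],
}

neighbors = most_similar("spin Hall effect", toy_embeddings)
for word, score in neighbors:
    print(f"{word}: {score:.3f}")
```

In this toy setup the related terms score close to 1 and the unrelated term scores close to 0, mirroring the behavior described for the trained model.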

The word embeddings support analogy operations35,38, which can be useful in connecting a material name to its chemical formula. For example, in the following vector algebraic expression,

\(\text{“platinum”}-\text{“Pt”}+\text{“Ta”}\approx \text{“tantalum”}\)

Here, “platinum” − “Pt” specifies the context in which we are relating a material name, platinum, to its chemical formula, Pt. Then, the resultant vector, after adding the target word “Ta”, is the corresponding material name, tantalum. We can fix the context and keep changing the target chemical formula to get the corresponding material name, as shown below.

Such vector operations can be useful in populating a list of materials by specifying a material's class. For example, in the following expression,

\(\text{“Fe”}-\text{“ferromagnet”}+\text{“semiconductor”}\)

Here, “Fe” − “ferromagnet” sets the context to the chemical formula for a material class, “ + semiconductor” specifies the target materials class, and the resultant vector is close to word embeddings for chemical formulas of different semiconductor materials. Changing the target materials class to “topological insulator” also changes the populated materials list that contains materials known to exhibit topological phases. Note that the topological phases of materials are of great interest for large SOT efficiency factors.

We use a similar method to populate a list of SOT materials later in the manuscript.

Our model has been trained with abstracts from both fundamental science and engineering journals; therefore, word embeddings can relate materials and phenomena to relevant devices and applications. For example, the “tunneling magnetoresistance” phenomenon is used to “read” the bit stored in the magnetic tunnel junction devices in the magnetoresistive random access memory (MRAM) application. Within this context, if we ask about the purpose of the “spin-transfer torque” or “spin–orbit torque” phenomenon using an analogy of the following form, the resultant vector is closest to “write” by the cosine similarity.

\(\text{“read”}-\text{“tunneling magnetoresistance”}+\text{“spin-transfer torque”}\approx \text{“write”}\)

Moreover, the addition of word embeddings corresponding to the two phenomena, “tunneling magnetoresistance” and “spin-transfer torque,” yields a vector closest to “magnetic tunnel junctions,” which is the device that functions based on these phenomena52. Furthermore, if we add the resultant word embedding “magnetic tunnel junctions” with the word “memory,” it yields a vector closest to ‘MRAM,’ which refers to the application that uses magnetic tunnel junctions52.

Note that in some instances, beyond the examples shown here, the closest word embedding to the resultant vector may not be the desirable answer, and the desirable answer may appear as the second or third nearest word embedding. This is mostly due to the nature of the training text corpus, especially how these words have been discussed in the text. However, all the words closer to the resultant vector are relevant and meaningful in the context.
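The analogy operations in this subsection amount to vector addition and subtraction followed by a nearest-neighbor search. The sketch below illustrates this with hypothetical toy vectors that were hand-built so that one axis encodes "name versus formula"; they are not embeddings from the trained model, and the helper name `analogy` is an assumption made for the example.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def analogy(a, b, c, embeddings, topn=3):
    """Rank words by similarity to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    query = [
        x - y + z
        for x, y, z in zip(embeddings[a], embeddings[b], embeddings[c])
    ]
    scores = [
        (word, cosine_similarity(query, vec))
        for word, vec in embeddings.items()
        if word not in (a, b, c)
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:topn]

# Hypothetical toy vectors: axes 0-1 encode the element, axis 2 encodes
# "written as a name" (1) versus "written as a formula" (0).
toy_embeddings = {
    "platinum": [1.0, 0.0, 1.0],
    "Pt": [1.0, 0.0, 0.0],
    "tantalum": [0.0, 1.0, 1.0],
    "Ta": [0.0, 1.0, 0.0],
    "Ir": [0.7, 0.7, 0.0],
}

ranked = analogy("platinum", "Pt", "Ta", toy_embeddings)
print(ranked)
```

Returning a ranked list rather than a single word reflects the caveat above: with a real corpus, the desired answer may sit at the second or third position.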

Word embeddings capture intrinsic figure-of-merits

Most spintronics phenomena relevant for potential device applications are characterized by multilayered stacks that contain different materials. Thus, the cosine similarities among multiple word embeddings related to different elements of the stack can provide insight into how a given combination of materials is correlated with a particular phenomenon. We define the following function to quantify such a similarity (Γ) among multiple word embeddings (\(\vec{x}\)), as given by

Note that Eq. (1) is not a unique choice for a function to calculate the similarity among multiple word embeddings, and other functions may be more appropriate depending on the nature of the structure and underlying physics. We have defined Eq. (1) such that Γ is highest when all word embeddings under consideration (\(\overrightarrow{x}\)) are highly similar; however, Γ will be zero if one of the word embeddings under consideration is independent or dissimilar to the others.

For three word embeddings \(\overrightarrow{x}\in \left\{{\mathcal{A}},{\mathcal{B}},{\mathcal{C}}\right\}\), Eq. (1) reduces to the following expression

where \(\cos ({\mathcal{A}},{\mathcal{B}})\) represents cosine similarity between vectors \({\mathcal{A}}\) and \({\mathcal{B}}\). Such a reduced function can be used to understand the likelihood of a phenomenon \({\mathcal{C}}\) being observed in a bilayer consisting of materials \({\mathcal{A}}\) and \({\mathcal{B}}\). The value of Γ will depend on the similarities among \({\mathcal{A}}\), \({\mathcal{B}}\), and \({\mathcal{C}}\) within the trained word embedding model. If both \({\mathcal{A}}\) and \({\mathcal{B}}\) have a high similarity to \({\mathcal{C}}\) but the similarity between materials \({\mathcal{A}}\) and \({\mathcal{B}}\) is low due to growth issues or interfacial dissimilarities, then overall Γ becomes low.
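To make the qualitative behavior of Γ concrete, the sketch below uses one possible functional form: the product of all pairwise cosine similarities, with negative similarities clipped to zero. This form is an assumption chosen only because it reproduces the properties stated in the text (largest when all embeddings are similar, zero when any one is independent of the others); it should not be read as the definition given in Eq. (1) or Eq. (2). The function name `gamma` and the two-dimensional vectors are likewise illustrative.

```python
import math
from itertools import combinations

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def gamma(vectors):
    """ASSUMED form: product of pairwise cosine similarities, clipped at zero.

    Not the paper's Eq. (1); it only mimics the stated limiting behavior.
    """
    result = 1.0
    for u, v in combinations(vectors, 2):
        result *= max(0.0, cosine_similarity(u, v))
    return result

# Hypothetical toy vectors for material A, material B, and phenomenon C.
phenomenon_c = [1.0, 1.0]

# Case 1: A and B are both similar to C and to each other -> large value.
gamma_aligned = gamma([[1.0, 0.9], [0.9, 1.0], phenomenon_c])

# Case 2: A and B are each similar to C but orthogonal to each other -> zero.
gamma_mismatched = gamma([[1.0, 0.0], [0.0, 1.0], phenomenon_c])

print(gamma_aligned, gamma_mismatched)
```

The second case corresponds to the situation described above, where two materials each relate to the phenomenon but are rarely discussed together.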

Interestingly, within our trained model, the similarity pattern generated from Γ for a given set of materials in the context of a particular phenomenon often showed a good correlation with the pattern generated by experimental measurements on the intrinsic figure-of-merit for that phenomenon. Here, we show an example by applying Eq. (2) for the phenomenon \({\mathcal{C}}\equiv \,\)“oscillatory exchange coupling” typically observed in magnetic multilayers with transition metal spacers53,54,55,56 and utilized as a synthetic-antiferromagnet-based reference layer in magnetic tunnel junctions52. We have calculated Γ from the trained word embedding model with \({\mathcal{A}}\) being word embeddings for various 3d, 4d, and 5d transition metals and \({\mathcal{B}}\) being “Co” (cobalt). Γ is plotted as a function of the atomic numbers of the transition metals as shown in Fig. 2a. For 3d, 4d, and 5d transition metals, Γ increases with the atomic number, peaks at “Cr”, “Ru”, and “Ir”, respectively, and then decreases with the atomic number. The trend observed from Γ in Fig. 2a agrees well with the experimentally measured first antiferromagnetic oscillatory exchange coupling (OEC) peak energy in various Co/transition metals multilayers53,54,55,56, as shown in Fig. 2b.

Word embeddings projecting intrinsic figure-of-merit. a Γ estimated using Eq. (2) from the trained word embedding model, with \({\mathcal{C}}=\,\)‘oscillatory exchange coupling’, \({\mathcal{B}}=\,\)‘Co’ (cobalt), and \({\mathcal{A}}=\,\)3d, 4d, and 5d transition metals. b Experimental reports53,54,55,56 on the first antiferromagnetic oscillatory exchange coupling (OEC) energy peak in various Co/transition metals multilayers.

The agreement observed in Fig. 2 is non-trivial since the estimation of Γ did not involve the experimental values of the first OEC energy peak reported in the text corpus. Γ reflects the similarities among word embeddings: “oscillatory exchange coupling”, “Co”, and various transition metals, the vectorial configurations of which were set during the training based on how those words were used to represent knowledge in the text corpus. Thus, Γ can be very useful in ranking materials or materials stacks according to the target functionality. Note that in a given research domain, materials exhibiting larger figure-of-merits are often discussed in more articles than other materials. Hence, such a correlation pattern partly builds up based on how often a particular materials stack has been discussed in different literature in the context of the phenomenon.

On the other hand, the word embedding model can be useful in identifying the correlation between a material and a phenomenon via patterns hidden within the large text corpus, even when such a correlation has never been directly discussed in the training dataset. In the next subsection, we will show that such indirect correlation build-up can be very useful in predicting new materials for a target functionality; however, all elements of the new results are part of the training dataset. This method of predictive research differs from those employing advanced generative models28,29,30,31,32,33,34, which can generate results that are not explicitly part of their training datasets and do not solely serve the purpose of finding hidden correlations among the elements within the dataset.

Materials word embeddings related to large spin–orbit torques

We apply the trained word embedding model to evaluate various material word embeddings in the context of SOT. We first populated ~2000 word embeddings similar (by the cosine similarity) to the resultant vector of the analogy expression “Fe” − “ferromagnet” + “spin–orbit torque”. We then filtered out the word embeddings that do not correspond to a material to generate the list of materials correlated with SOT (\(\in {\mathcal{A}}\)). Note that the analogy expression used to generate the list of materials is not unique; similar analogy expressions would have provided the same list after thresholding and ranking using Γ as described in the next paragraph.

Next, we calculate Γ for each of these materials word embeddings (\(\in {\mathcal{A}}\)) using Eq. (2) with the phenomenon \({\mathcal{C}}\equiv \,\)“spin–orbit torque” and the other layer \({\mathcal{B}}\) as “CoFeB”, which is typically used to form the conductor/magnet (\(\equiv {\mathcal{A}}/{\mathcal{B}}\)) bilayers for SOT characterization. We then select candidates for SOT by taking materials word embeddings that exhibit a Γ higher than a threshold value. The threshold value of Γ for candidate selection was determined by the minimum value at which the model identified a known SOT material, which in this case is PtTe257 (see Fig. 3). The threshold is ~3% of the calculated Γ for the widely used SOT material platinum58. The calculated Γ values for different candidate materials are shown in Fig. 3a, b. The rationale for selecting the threshold value is that any new candidate that demonstrates a Γ higher than an established SOT material is likely to be significantly correlated with the SOT phenomenon.
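The thresholding step can be sketched as follows. The Γ values are normalized to Pt, and the value 0.03 for PtTe2 follows the ~3% figure quoted above; the entries `candidate_A` and `candidate_B` and their values are hypothetical placeholders, and `select_candidates` is a name introduced for this illustration.

```python
def select_candidates(gamma_by_material, known_sot_materials):
    """Keep materials whose Gamma is at least the lowest Gamma of a known SOT material."""
    threshold = min(
        gamma_by_material[name]
        for name in known_sot_materials
        if name in gamma_by_material
    )
    selected = {
        name: value
        for name, value in gamma_by_material.items()
        if value >= threshold
    }
    ranked = sorted(selected.items(), key=lambda item: item[1], reverse=True)
    return threshold, ranked

# Gamma normalized to Pt. The candidate entries are hypothetical placeholders.
gamma_by_material = {
    "Pt": 1.00,
    "PtTe2": 0.03,
    "candidate_A": 0.40,
    "candidate_B": 0.01,
}
known_sot_materials = {"Pt", "PtTe2"}

threshold, ranked = select_candidates(gamma_by_material, known_sot_materials)
print(threshold, [name for name, _ in ranked])
```

Setting the threshold from the weakest known material keeps the cutoff tied to the corpus itself rather than to an arbitrary number.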

Γ values for various materials are normalized to the reference material Pt.

Some of the SOT materials identified by the word embedding model have already been discussed in the literature, e.g., IrMn59, Pt58, Ta4, Ir60, CuPt61, Cu/Bi2, Bi/Ag62, Bi2Se39, (BiSb)2Te363, SnTe63, WTe264, PtTe257, NbSe265, SrIrO366,67, IrO268, Nb-Ta69, and CoGa70, which are shown as blue bars in Fig. 3. In addition, the model has identified 97 new materials that have a higher similarity with SOT, which are shown as red bars in Fig. 3. Note that we refer to the red bars as new materials because, to the best of our knowledge, they have not been discussed in the literature dataset in the context of SOT. However, these materials have been discussed in the literature in other contexts, and thus, various knowledge on these materials is included in our training dataset. The model identified the blue bars in Fig. 3 via a direct correlation with SOT; however, the red bars were identified via an indirect correlation with SOT, which was built up within the model according to the information available on these materials within the training dataset. ‘FeSi’ (iron silicide) is one of the new materials identified by the model (green bar in Fig. 3), which we have recently experimentally demonstrated to exhibit a high SOT15; however, the experimental data were not included in the training text corpus.

Model projection on known spin–orbit torque materials

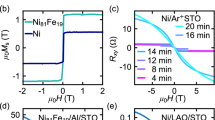

We first separate the known SOT materials (blue bars in Fig. 3) and compare the relative positions of Γ among these materials (see Fig. 4a) with the experimental reports on the spin Hall conductivities on these materials (see Fig. 4b). Spin Hall conductivity is a widely used figure-of-merit to characterize the SOT materials1,2,3. Interestingly, the relative position patterns of Γ obtained from the word embeddings reasonably agree with the relative positions of the spin Hall conductivities in these materials. Such an agreement is non-trivial because Γ only calculates the association among the words based on the context in which they appeared in the text corpus. The calculation of Γ did not take into account the numerical values of the spin Hall conductivity reported in the text.

a Γ vs. material conductivity for known SOT materials (blue bars in Fig. 3). b Experimental reports of spin Hall conductivities in various conductors: IrMn59, Pt58, Ta4, Ir60, CuPt61, Cu/Bi2, Bi/Ag62, Bi2Se39, (BiSb)2Te363, SnTe63, WTe264, PtTe257, NbSe265, SrIrO366,67, IrO268, Nb-Ta69, and CoGa70. FeSi is one of the new materials identified by the model, which we have demonstrated experimentally15. c Neural network projected SOT efficiency, \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\), vs. measured SOT efficiency from the literature, ξSOT. d Timeline analysis of the model projection on SOT efficiency factors as more data is added to the training text corpus.

The model projected a larger Γ for IrMn than for Pt. This relative position of Γ agrees with that obtained from the experimental spin Hall conductivities59 (see Fig. 4a, b). Although SOT in IrMn has been discussed in the past71, the reported strength of the spin Hall conductivity was much lower than in Pt. The experimental report59 of a spin Hall conductivity in IrMn higher than that in Pt is not included in our training dataset. Thus, the model projects a high Γ based on an indirect association between the words ‘IrMn’ and ‘spin–orbit torque’.

Note that our model did not identify ‘W’ (tungsten) as an SOT material, although it has been reported many times in the literature72. One possible explanation is that a majority of the training text-corpus from engineering journals used the symbol “W” to represent watt (unit of power), which in turn reduced the correlation between tungsten and SOT. Another thing to note is that the model identified “Cu/Bi” as an SOT material and the relative position of Γ is in good agreement with the calculated spin Hall conductivity for a Cu/Bi Rashba interface2. However, there is an experimental report of a CuBi alloy that exhibited higher spin Hall conductivity at lower temperatures73, which the model has not picked up.

We exploit the remarkable similarity between the Γ pattern among various materials and the corresponding experimental reports of spin Hall conductivities to quantitatively estimate the neural network (NN) projection of the SOT efficiency factor, \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\), using the following expression

where σ is the charge conductivity of the corresponding material \({\mathcal{A}}\), σPt is the spin Hall conductivity of the reference material Pt, and \(\Gamma (`{\text{Pt}}{\mbox{'}} )\) is the Γ value for the word embedding of ‘Pt’.
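The symbol definitions above suggest one reading of the projection: the spin Hall conductivity of material \({\mathcal{A}}\) is estimated by scaling that of Pt with the ratio \(\Gamma ({\mathcal{A}})/\Gamma (`{\text{Pt}}{\mbox{'}} )\), and the efficiency factor is that estimate divided by the charge conductivity σ, i.e., \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\approx ({\sigma }_{\text{Pt}}/\sigma )\,\Gamma ({\mathcal{A}})/\Gamma (`{\text{Pt}}{\mbox{'}} )\). The sketch below implements this assumed form; it is inferred from the definitions and may differ from the exact Eq. (3). The function name and the numerical inputs are hypothetical, in arbitrary but consistent units.

```python
def projected_sot_efficiency(gamma_material, gamma_pt, sigma_material, sigma_sh_pt):
    """ASSUMED form: xi = (sigma_sh_pt / sigma_material) * (gamma_material / gamma_pt).

    gamma_material, gamma_pt: similarity values for the material and for 'Pt'.
    sigma_material: charge conductivity of the material.
    sigma_sh_pt: spin Hall conductivity of the reference material Pt.
    Both conductivities must share the same units.
    """
    if gamma_pt <= 0 or sigma_material <= 0:
        raise ValueError("gamma_pt and sigma_material must be positive")
    projected_spin_hall_conductivity = sigma_sh_pt * gamma_material / gamma_pt
    return projected_spin_hall_conductivity / sigma_material

# Hypothetical inputs in arbitrary units, for illustration only.
xi_a = projected_sot_efficiency(
    gamma_material=0.5, gamma_pt=1.0, sigma_material=1.0, sigma_sh_pt=4.0
)

# Rescaling both Gamma values by a common factor leaves the result unchanged.
xi_b = projected_sot_efficiency(
    gamma_material=5.0, gamma_pt=10.0, sigma_material=1.0, sigma_sh_pt=4.0
)
print(xi_a, xi_b)
```

Under this form, a poorly conducting material with a moderate Γ can still receive a large projected efficiency, since σ appears in the denominator.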

The NN projected SOT efficiency factors for the blue bars (i.e., known materials) in Fig. 3 are summarized in Table 1 and compared with corresponding reports in the literature. The NN projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) for various known materials are shown in Fig. 4c as a function of their corresponding experimental values reported in the literature. Fig. 4c is represented on a log-log scale to accurately depict the SOT efficiency factors of these materials, which span several orders of magnitude. We show the ideal line y = x in Fig. 4c, where the y-axis represents the NN projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) and the x-axis represents experimental reports, to show how the prediction deviates from the experimental reports. Due to the functional dependence of y = x, the ideal line also appears linear in the log-log representation. Although a few model projections differed from experimental reports by a sizable factor, the model correctly projected a value of the SOT efficiency factor in the same order of magnitude as expected in these materials, see Table 1. Therefore, Fig. 4c serves as the benchmark for the known material subset out of the total candidates identified by the model (see Fig. 3), and we assert that the predictions on the new material candidates reliably project the order of magnitude of SOT expected in those materials.
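The "same order of magnitude" criterion used in this comparison can be made explicit with a base-10 logarithm of the ratio between projected and measured values. The sketch below is one way to express it; the function names and the cutoff of one decade are assumptions made for illustration, and the inputs are hypothetical numbers rather than entries from Table 1.

```python
import math

def log10_deviation(projected, measured):
    """Signed distance, in decades, from the ideal line y = x on a log-log plot."""
    return math.log10(projected / measured)

def same_order_of_magnitude(projected, measured):
    """True when the projection is within a factor of 10 of the measurement."""
    return abs(log10_deviation(projected, measured)) < 1.0

# Hypothetical values: a projection three times the measured value,
# and one that is twenty times the measured value.
within = same_order_of_magnitude(projected=3.0, measured=1.0)
outside = same_order_of_magnitude(projected=20.0, measured=1.0)
print(within, outside)
```

A point on the ideal line has zero deviation, and a factor-of-three overestimate sits about half a decade above it.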

Such quantitative projections from the trained word embedding model are highly data-dependent, especially because they are calculated based on the correlations among word embeddings, which may evolve as more data is added to the training text corpus. In order to understand the stability of such a quantitative projection when more knowledge becomes available every year in the form of published literature, we present a timeline analysis in Fig. 4d. For this analysis, we have trained multiple models by cumulatively adding one more year of literature abstracts to the training text corpus. The x-axis of Fig. 4d corresponds to six models that were trained using journal abstracts starting from 1970 until 2015, 2016, 2017, 2018, 2019, and 2020, respectively. For each of these six models, we have evaluated Γ using Eq. (2) for the blue bars in Fig. 3 and then calculated \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) using Eq. (3), as shown on the y-axis of Fig. 4d.
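The cumulative construction of the training corpora for the timeline analysis can be sketched as below. Only the data-splitting step is shown; training a separate model on each corpus is outside the sketch. The record layout of `(year, text)` pairs and the function name `cumulative_corpora` are assumptions, and the abstracts are placeholders.

```python
def cumulative_corpora(dated_abstracts, start_year, cutoff_years):
    """Map each cutoff year to all abstracts published from start_year up to it."""
    return {
        cutoff: [
            text
            for year, text in dated_abstracts
            if start_year <= year <= cutoff
        ]
        for cutoff in cutoff_years
    }

# Hypothetical placeholder records: (publication year, abstract text).
dated_abstracts = [
    (1998, "abstract a"),
    (2015, "abstract b"),
    (2017, "abstract c"),
    (2020, "abstract d"),
]

# Six corpora ending in 2015, 2016, ..., 2020, each starting from 1970.
corpora = cumulative_corpora(dated_abstracts, 1970, range(2015, 2021))
corpus_sizes = {year: len(texts) for year, texts in corpora.items()}
print(corpus_sizes)
```

Each later corpus contains every earlier one, so differences between successive models reflect only the newly added year of literature.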

The magnitudes of the NN projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) in most of these known materials remained relatively stable beyond 2015, with each successive model incorporating additional data. The projection for Cu/Bi became relatively stable around 2017. The model did not pick up PtTe2 as an SOT material candidate before 2018, and the projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) fluctuates by an order of magnitude from then on, which indicates that more data on PtTe2 is needed for a stable quantitative projection of \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\); however, there is enough data to yield a sizable Γ. The model projection on NbSe2 is reasonably stable beyond 2015; however, the projected value is about three times higher than the experimental report65. Note that the quantitative projection of \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) relies on the relative positions of Γ with respect to the reference word embedding “Pt” and is independent of the absolute value of Γ. Therefore, stability in the predicted order of magnitude of \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) reflects stability in the position of Γ for a candidate relative to that of “Pt”.

Surprisingly, the NN projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) value for FeSi was relatively stable around 2015, although we first measured this material in the context of SOT in 202074, and subsequently we demonstrated a large SOT efficiency factor closer to the model prediction in 202215. Furthermore, the model identified SrIrO3 as an SOT material and correctly projected the efficiency factor based on abstracts from the literature up to 2015; however, the experimental report appeared four years later66,67. Remarkably, the word embedding model could have predicted both of these materials (FeSi and SrIrO3) back in 2015, showing the significant usefulness of such machine learning algorithms in materials and device research.

New materials prediction for large spin–orbit torques

The word embedding model has identified 97 new candidates, shown as red bars in Fig. 3, which, to our knowledge, have not been directly discussed in the context of SOT. Although a few of these candidates have been discussed in the literature in the context of special material phases that are often correlated to SOT, the model correlated most of them to SOT via patterns hidden within the large text corpus. We used Eq. (3) to estimate \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) for these new candidate materials (red bars in Fig. 3), and the distribution of \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) is shown in Fig. 5a. The estimated values of \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) and the corresponding conductivities are listed in Table 2.

a Distribution of the neural network (NN) projected strength of the spin–orbit torque (SOT) efficiency factors, \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\), for the predicted materials. b \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) as a function of their corresponding Γ for the 16 high SOT (\({\xi }_{\,\text{SOT}}^{\text{NN}\,}\ge 1\)) candidates. c Timeline analysis of the prediction on \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) for the 16 high SOT candidates as more data is added to the training dataset.

Among the 97 new materials, 16 are likely to exhibit an SOT efficiency factor close to or larger than unity (see Fig. 5a). These 16 candidates are EuSe, CrSe, DyBa2Cu3O7, MnGe, CrVTiAl, EuTe, EuMnBi2, CrSi, BiSbTeSe2, CrTe, EuFe2As2, NaCo2O4, FeSi, FeGa, TaSe3, and ZrZn2, where EuSe, CrSe, DyBa2Cu3O7, and MnGe are projected to exhibit an SOT efficiency factor >10. The estimated \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) values for these 16 candidates are shown in Fig. 5b as a function of their corresponding Γ values. The Γ values for these candidates range from 10 to 80% of that of Pt; FeSi, EuTe, EuSe, MnGe, CrSi, CrTe, and FeGa have Γ > 50% of that of Pt while exhibiting \({\xi }_{\,\text{SOT}}^{\text{NN}\,} \,> \,1\). We recently measured a high SOT efficiency factor of approximately 2 in one of these 16 candidates, FeSi15, which is in good agreement with the quantitative projection of the word embedding model (see Table 1). Note that neither the measured data on FeSi nor related information was included in the training text corpus. Thus, such an agreement between model prediction and experimental observation demonstrates the quantitative prediction capability of the machine learning approach, which will be useful for application-specific materials search.

In addition to FeSi, the word embedding model predicted SOT in various other silicides, including CrSi, MoSi, CoSi, PdSi, PtSi, NiSi, FeSiAl, LaPt2Si2, Co2FeSi, Co2MnSi, CePt3Si, CaIrSi3, and YbRh2Si2. The model predicts that CrSi will exhibit an efficiency factor ~3 × higher than FeSi, close to a value of ~5.3. Other silicides identified by the model are expected to exhibit SOT efficiencies close to what we observe for transition metals. SOT in silicides could be interesting for SOT device applications, as silicides are integrable within standard CMOS technologies.

To understand the stability of such a quantitative projection for the high SOT candidates, we performed a timeline analysis similar to that done for the known materials in the previous subsection. We used the six models trained with journal abstracts starting from 1970 until 2015, 2016, 2017, 2018, 2019, and 2020, respectively, to calculate the NN projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) using Eq. (3) for the 16 high SOT candidate materials identified by the model. Fig. 5c shows \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) for these materials as a function of accumulated training data over each consecutive year. The predictions for EuSe, CrSe, DyBa2Cu3O7, MnGe, EuTe, and EuFe2As2 have been reasonably stable since 2015. The predictions for CrSi, CrTe, TaSe3, and ZrZn2 became stable between 2016 and 2018. CrVTiAl, EuMnBi2, and BiSbTeSe2 were not identified as SOT candidate materials before 2019, and their projected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) is still fluctuating as more data is added to the training text corpus.

Among the other candidate materials identified by the model, 49 are predicted to exhibit a sufficiently large SOT efficiency factor within the range of \(0.1\le {\xi }_{\,\text{SOT}}^{\text{NN}\,} \,<\, 1\) (see Fig. 5a), of which Co2TiSn, SrMnBi2, NiMn, MnAl, Co2MnGe, MnPt, CeRh3B2, CeRu2Al10, NbSe3, and MoSi are expected to exhibit \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\ge 0.5\). Furthermore, 26 materials are expected to have SOT efficiency factors within \(0.01\le {\xi }_{\,\text{SOT}}^{\text{NN}\,} \,< \,0.1\), and 6 materials are expected to show very weak SOT efficiency factors, \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\, <\, 0.01\). For example, the model identified highly conductive delafossite metals, specifically PdCrO2 and PdCoO2, as SOT materials, although their expected SOT efficiencies are roughly an order of magnitude lower than that of platinum. A detailed list of the candidate materials and their expected \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\) is given in Table 2.
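The four efficiency ranges quoted above partition the 97 candidates. The short sketch below encodes those ranges and checks that the reported counts add up; the helper name `efficiency_bin` and the sample values are hypothetical and are not taken from Table 2.

```python
from collections import Counter


def efficiency_bin(xi: float) -> str:
    """Return the efficiency range, as quoted in the text, that xi falls into."""
    if xi >= 1.0:
        return "xi >= 1"
    if xi >= 0.1:
        return "0.1 <= xi < 1"
    if xi >= 0.01:
        return "0.01 <= xi < 0.1"
    return "xi < 0.01"


# Counts per range as stated in the text.
reported_counts = {
    "xi >= 1": 16,
    "0.1 <= xi < 1": 49,
    "0.01 <= xi < 0.1": 26,
    "xi < 0.01": 6,
}
total = sum(reported_counts.values())  # should match the 97 new candidates

# Illustrative values only (not the projections listed in Table 2).
sample = [12.0, 0.5, 0.05, 0.002, 1.0]
print(total, Counter(efficiency_bin(x) for x in sample))
```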

Some of the materials identified by the model have a relatively straightforward correlation with SOT. The correlation between SOT and BiSbTeSe2 is straightforward, as this material has been discussed as a topological insulator75 in the literature. In particular, many other bismuth-based compounds9,63 have been discussed as hosts for the topological insulator phase, and some of them have experimental reports of a strong SOT. Another candidate material, MnGe, has been discussed in the context of a topological texture76, which suggests a possible link to a strong SOT. Another candidate, CaMnBi2, has been reported to exhibit a nonzero Berry phase77, which is associated with the physical origin of SOT in many materials1,3.

Interestingly, a magnetism-induced topological phase has been discussed for EuAs3 in the literature78; however, the article appeared beyond the time frame of the dataset used to train the model. Similarly, GdPtBi79 and TaSe380 have been discussed to host a topological semi-metallic phase, and such a phase is known to exhibit a strong SOT64; however, these articles were published beyond the dataset collected to train the model. Moreover, there has been a discussion of a Rashba channel formation in GeTe81, which is another intrinsic mechanism for SOT3. An article also discussed a strong field-like SOT in a GeTe/NiFe bilayer82; however, the article also appeared beyond the time frame of the collected dataset used to train the model.

Note that the calculation of the Γ value depends only on the similarities among various word embeddings within the trained model, but the calculation of the NN projection of the SOT efficiency factor also takes into account the corresponding material conductivity (see Eq. (3)). Here, we use the nominal conductivity reported in the literature for these materials (see Table 2 and supplementary information); however, some of the NN projections of SOT efficiency may need to be re-calibrated as conductivity varies in different growth and crystallinity conditions.

t-SNE projection of the possible origin of spin–orbit torque

SOT in nonmagnetic materials can arise from various intrinsic mechanisms1,2,3, e.g., interfacial mechanisms such as a Rashba interface or a topological phase of the material9,63,64, or bulk mechanisms such as the spin Hall effect. Current-induced SOT has also been observed in ferromagnetic materials83,84, where it arises from the spin anomalous Hall effect85, the planar spin Hall effect86, or a magnetization-dependent spin Hall effect87. In addition, large SOT has been discussed in antiferromagnetic phases of materials59,88.

The word embedding model can provide insight into a possible intrinsic mechanism of SOT for the new candidate materials based on the correlation among related word embeddings within the high-dimensional space. To understand such a correlation, we use a dimensionality reduction methodology called t-distributed stochastic neighbor embedding (t-SNE)89, which is a widely used method for visualizing high-dimensional data on a two-dimensional (2D) plane. In a t-SNE plot, the data points corresponding to similar word embeddings tend to cluster together, and dissimilar data points are located farther apart.

To generate a cluster of word embeddings related to a target word, we collected all word embeddings with a cosine similarity ≥0.8 to the target word and used the t-SNE method to project these high-dimensional word embeddings onto a 2D plane. The t-SNE plots were generated using scikit-learn with perplexity = 50, n_components = 2, init = ‘pca’, n_iter = 5000, and random_state = 10. For visual clarity, we represent the cluster for a given target word with a semi-transparent colored region constructed from the boundary data points of the cluster. We have plotted such clusters for the target words “topological insulator”, “topological semimetal”, “spin Hall effect”, “Rashba”, “ferromagnet”, and “antiferromagnet” in Fig. 6, with the x and y axes in arbitrary units. Note that the choice of target words is based on the known intrinsic mechanisms that can give rise to SOT.
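The two steps described above, selecting neighbors by cosine similarity and projecting them with t-SNE, can be sketched as follows. This is an illustration under stated assumptions rather than the authors' code: the function names, the dictionary-based embedding store, and the choice to exclude the target word from its own cluster are all hypothetical. The scikit-learn import is deferred so that the selection step runs without it.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cluster_words(
    embeddings: Dict[str, Sequence[float]], target: str, threshold: float = 0.8
) -> List[str]:
    """Words whose cosine similarity to the target word is >= threshold."""
    target_vec = embeddings[target]
    return [
        word
        for word, vec in embeddings.items()
        if word != target and cosine_similarity(vec, target_vec) >= threshold
    ]


def project_2d(vectors: Sequence[Sequence[float]]):
    """Project vectors to 2D with the t-SNE settings quoted in the text.

    Requires scikit-learn, and more samples than the perplexity (50).
    """
    import inspect

    from sklearn.manifold import TSNE

    # Newer scikit-learn releases name the iteration count `max_iter`;
    # older ones use `n_iter`. Pick whichever this installation accepts.
    params = inspect.signature(TSNE.__init__).parameters
    iter_kw = "max_iter" if "max_iter" in params else "n_iter"
    tsne = TSNE(n_components=2, perplexity=50, init="pca", random_state=10, **{iter_kw: 5000})
    return tsne.fit_transform(vectors)


# Toy 2D "embeddings" (hypothetical values) to exercise the selection step.
toy = {"rashba": [1.0, 0.0], "a": [0.9, 0.1], "b": [0.0, 1.0], "c": [1.0, 1.0]}
print(cluster_words(toy, "rashba"))
```

With a trained model, the vectors of the selected words would be stacked and passed to `project_2d` to obtain the coordinates plotted for each cluster.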

Different colored regions correspond to the data clusters for the target words “topological insulator”, “topological semimetal”, “spin Hall effect”, “Rashba”, “ferromagnet”, and “antiferromagnet”. The black scatter points are the new SOT materials identified by the word embedding model, shown in four groups for visual clarity: a \(0.4 \,<\, {\xi }_{{\rm{SOT}}}^{{\rm{NN}}}\le 50\), b \(0.2 \,<\, {\xi }_{{\rm{SOT}}}^{{\rm{NN}}}\le 0.4\), c \(0.1 \,<\, {\xi }_{{\rm{SOT}}}^{{\rm{NN}}}\le 0.2\), and d \({\xi }_{{\rm{SOT}}}^{{\rm{NN}}}\le 0.1\). The blue scatter points are a few known SOT materials for benchmarking purposes.

We also projected the word embeddings for the new candidate materials using t-SNE to see which candidates overlap with the clusters for different intrinsic mechanisms, as shown in Fig. 6. Such an overlap suggests a possible origin of SOT in these materials; the results are summarized in Table 2. As a benchmark of this methodology, we also projected the word embeddings for the known materials “Pt” and “Ta”, and they overlap with the “spin Hall effect” cluster, which is the known origin of SOT in these transition metals4,58. We also projected the word embeddings for the known topological insulator materials “Bi2Se3” and “Bi2Te3” on the 2D t-SNE plot, and they overlap with the “topological insulator” cluster, consistent with the known intrinsic mechanism.
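One way to make the notion of overlap concrete is to test whether a material's projected point lies inside the region spanned by a cluster's boundary points. The sketch below assumes that this region is the convex hull of the cluster's 2D points, which the text does not state; the function names and the toy coordinates are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive when o -> a -> b turns left."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and _cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and _cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other one.
    return lower[:-1] + upper[:-1]


def inside_hull(point: Point, hull: List[Point]) -> bool:
    """True if point lies inside or on a counter-clockwise convex polygon."""
    n = len(hull)
    return n >= 3 and all(
        _cross(hull[i], hull[(i + 1) % n], point) >= 0 for i in range(n)
    )


# Toy cluster in t-SNE coordinates (arbitrary units, hypothetical values).
cluster = [(0.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0), (1.0, 1.0)]
hull = convex_hull(cluster)
print(inside_hull((1.0, 0.5), hull), inside_hull((3.0, 1.0), hull))
```

Because t-SNE preserves local rather than global structure, such a test would only indicate a qualitative association between a material and a mechanism cluster.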

Note that the t-SNE method effectively preserves the local structure of the data, but may not accurately represent the overall data distribution. As a result, while any overlap of data points and spatial separation of data clusters may indicate a possible qualitative relation, the quantitative distance between two data points may not be meaningful.

Among the 16 candidate materials with \({\xi }_{\,\text{SOT}}^{\text{NN}\,}\ge 1\), EuSe, DyBa2Cu3O7, EuTe, CrTe, FeGa, and FeSi overlap with the “spin Hall effect” cluster (see Fig. 6a), indicating that the spin Hall effect is a possible mechanism in these materials. On the other hand, CrSe, CrSi, BiSbTeSe2, and TaSe3 are expected to exhibit a topological phase based on their overlap with the “topological insulator” and “topological semimetal” clusters, as shown in Fig. 6a. Note that BiSbTeSe2 has been established as a topological insulator75 in the literature. Moreover, TaSe3 has been discussed as a topological semimetal in ref. 80, in agreement with its overlap with the “topological semimetal” cluster (see Fig. 6a); however, the article was published beyond the dataset collected to train the model. MnGe and EuFe2As2 overlap with both the “antiferromagnet” and “ferromagnet” clusters, indicating a possible mechanism similar to that observed in ferromagnetic or antiferromagnetic conductors. Other high SOT candidates such as CrVTiAl, EuMnBi2, NaCo2O4, and ZrZn2 did not overlap with any clusters for known mechanisms, and we leave further evaluation of these candidates for the future.

Other candidate materials likely to exhibit a spin Hall effect-induced SOT include Co2MnGe, MnPt, MoSi, Co-doped ZnO, GeTe, CoZrNb, CuMnAs, Co2FeAl, CoS2, Co2CrAl, GdAl2, FeZr, PdSi, GdPtBi, CeNiSn, NiMnSb, CrGe, RuO2, AuFe, PdNi, NiSi, PtSi, NiGe, FeCu, CuCr, and PdCoO2, as shown in Fig. 6a–d. Note that the Rashba mechanism has been discussed in GeTe81 with a strong field-like SOT in a GeTe/NiFe bilayer82; however, a direct discussion of the spin Hall effect in GeTe is not present in the literature. In addition, a topological Weyl semimetal state in GdPtBi has been discussed79 under hydrostatic pressure. In a recent article90, RuO2 has been discussed as a powerful spin current generator to achieve field-free SOT switching. However, these articles appeared beyond the timeframe of the dataset used to train the model.

Candidate materials that are likely to exhibit a topological semi-metallic phase include SrMnBi2, SrPtAs, LaPt2Si2, CuTe, and EuS/Al. Two of the candidate materials, CaMnBi2 and MnBi, overlapped with both the “topological insulator” and “topological semimetal” clusters, indicating a possible topological phase in these materials. Note that two-dimensional Dirac fermions and a nonzero Berry phase have been discussed for CaMnBi277. Candidates such as CoSi, Cu2MnAl, NbZr, and PtIr overlapped with the clusters for both “topological insulator” and “spin Hall effect”, while other candidates such as Co2TiSn, YCo5, and PtMnSb overlapped with the clusters for both “topological semimetal” and “spin Hall effect”.

Some candidate SOT materials overlapped only with the “antiferromagnet” or “ferromagnet” clusters. Candidate materials such as MnAl, CeRh3B2, CeRu2Al10, FeGe, SmAl2, CoS2, EuAs3, YbPtIn, GdPtBi, NbFe2, YbRh2Si2, RhSn, PtGa, and TiBe2 overlap with the “antiferromagnet” cluster and may exhibit SOT through a mechanism similar to that seen in a few known antiferromagnets59,88. Note that a magnetism-induced topological transition has been discussed78 for EuAs3, where a topological massive Dirac metal phase has been studied in the antiferromagnetic ground state at low temperatures; however, the article appeared beyond the time frame of the dataset used to train our model. On the other hand, TaS2 and FeAs overlap with the “ferromagnet” cluster only. The proper magnetic phase in these materials and the associated mechanisms can be further evaluated using conventional theoretical and experimental methodologies.

Discussion

In conclusion, we present a new approach to identifying materials in the context of a phenomenon, a device, or an application. We used a word embedding model to enable unsupervised training of a neural network using a text corpus containing scientific abstracts collected from various materials science, physics, and engineering journals. The trained word embedding model can learn specialized concepts in science and engineering and quantitatively project the expected strength of an intrinsic figure-of-merit. We applied the model to identify SOT materials and rank them according to their expected SOT strengths. While the model showed reasonably good quantitative agreement with the known SOT materials, it also identified 97 new materials that are likely to exhibit strong SOT. Interestingly, 16 of the new candidate materials are expected to exhibit an SOT efficiency factor much larger than unity, one of which we have recently demonstrated experimentally, in good agreement with the model projection. We further show that a widely used dimensionality reduction technique can provide insight into the expected underlying mechanism of the targeted phenomenon. The method presented here is general and can be applied to different research domains to accelerate application- or device-specific materials search.

Methods

Training dataset preparation

We have collected approximately one million scientific abstracts from various peer-reviewed materials science, physics, and engineering journals published between 1970 and 2020 by the American Physical Society (APS) and the Institute of Electrical and Electronics Engineers (IEEE). Article metadata was obtained from the APS Harvest (harvest.aps.org) and IEEE Xplore (developer.ieee.org) application programming interfaces (APIs), and only the abstract text was extracted and written into a text file without any labeling. Extraneous symbols, specifically markup tags, were removed from the text files. Although the full text of an article contains more technical details of the research, the abstract summarizes the key research findings, often within 200 words. Thus, by restricting ourselves to an abstract-only dataset, we were able to include about one million independent research articles and their key findings within our computational limits.
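As an illustration of the tag-removal step, the sketch below strips markup tags from an abstract with a regular expression. The pattern, the function name `strip_markup`, and the decision to delete tags without inserting a space (so that a formula such as Bi<sub>2</sub>Se<sub>3</sub> collapses to a single token) are assumptions; the exact cleaning rules used for the dataset are not specified in the text.

```python
import re

# Matches anything that looks like an opening or closing markup tag.
TAG_PATTERN = re.compile(r"</?[A-Za-z][^>]*>")


def strip_markup(abstract: str) -> str:
    """Remove markup tags and normalize whitespace in an abstract."""
    without_tags = TAG_PATTERN.sub("", abstract)
    return " ".join(without_tags.split())


raw = "Bi<sub>2</sub>Se<sub>3</sub> is a   <i>topological</i> insulator."
print(strip_markup(raw))
```

Requiring a letter right after the opening bracket keeps inequalities such as "x < 1" from being mistaken for tags.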

We collected the data in chronological order and merged them to create the dataset used to train the word embedding model. We avoided any keyword-based search in collecting manuscript abstracts, as well as pre-processing of any form, to avoid unintentional bias in the training text corpus. The journals were selected to encompass sufficient knowledge of materials, physics, and devices, and all selected journals are peer-reviewed. Note that we restricted our data collection to articles published up to 2020, as there were no discussions of SOT in iron silicide prior to this date and our experimental demonstration15 serves as a key validation of our model.

Word embedding model

We trained a word embedding model, Word2Vec35, using gensim (https://radimrehurek.com/gensim/). We adopted an unsupervised training approach similar to that reported for thermoelectric materials38. We used the skip-gram architecture of the neural network, where the target word is represented as a one-hot encoded vector at the input layer. The skip-gram architecture91 is efficient with larger datasets and predicts context words from a given target word, making it particularly effective at capturing uncommon words within the corpus. The neural network also consists of a single hidden layer and an output layer that performs negative sampling with n = 15. Such a word embedding model represents the words in the training text corpus as high-dimensional vectors, and we set the dimension to 200 according to the nature of the text corpus. Note that a lower dimension may lead to closely spaced vectors that do not capture the relationships in the data. In contrast, a higher dimension may lead to over-fitting that captures noise rather than meaningful patterns. The optimum dimension of the vector is around 200 for the size of our training text corpus. The window size is set to 10. The minimum count threshold is set to 5, which excludes any words that appear fewer than five times in the text corpus. The learning rate is set to 0.01. The number of epochs is set to 30.
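The hyperparameters listed above map onto the keyword arguments of gensim's `Word2Vec` class roughly as sketched below (gensim 4.x argument names). The mapping of the stated learning rate to `alpha`, the one-abstract-per-line corpus file, the whitespace tokenization performed by `LineSentence`, and the function name `train_model` are assumptions for illustration; the text does not describe how the corpus was tokenized.

```python
# Keyword arguments for gensim.models.Word2Vec (gensim 4.x names).
HYPERPARAMETERS = {
    "sg": 1,             # skip-gram architecture
    "negative": 15,      # negative sampling with n = 15
    "vector_size": 200,  # embedding dimension
    "window": 10,        # context window size
    "min_count": 5,      # ignore words with total frequency below 5
    "alpha": 0.01,       # initial learning rate (assumed mapping)
    "epochs": 30,        # number of passes over the corpus
}


def train_model(corpus_path: str):
    """Train a Word2Vec model on a text file with one abstract per line."""
    # Imported here so that the configuration can be inspected without gensim.
    from gensim.models import Word2Vec
    from gensim.models.word2vec import LineSentence

    # LineSentence streams the file and splits each line on whitespace.
    return Word2Vec(sentences=LineSentence(corpus_path), **HYPERPARAMETERS)


print(HYPERPARAMETERS)
```

After training, similarities between word vectors can be queried through the model's `wv` attribute, for example with `model.wv.similarity(word_a, word_b)`.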

The largest training corpus comprises approximately 200 million words, including repeated occurrences of identical words. The model was trained on a computer with moderate specifications, equipped with an Intel(R) Xeon(R) X5550 2.66 GHz CPU and 24 GB RAM. A single model training process took ~20 h using the optimized set of hyperparameters.

Data availability

The journal abstract text data used to train neural networks in this work were collected from the American Physical Society (APS) and the Institute of Electrical and Electronics Engineers (IEEE). The raw data are available at their respective data repositories and can be accessed via their application programming interfaces (APIs), harvest.aps.org and developer.ieee.org, respectively. The trained word embedding model and related instructions are available for download at https://nanohub.org/resources/38611. The data generated by the trained models are incorporated in this article in tabular and graphical forms.

References

Sinova, J., Valenzuela, S. O., Wunderlich, J., Back, C. H. & Jungwirth, T. Spin hall effects. Rev. Mod. Phys. 87, 1213–1260 (2015).

Sayed, S. et al. Unified framework for charge-spin interconversion in spin-orbit materials. Phys. Rev. Appl. 15, 054004 (2021).

Shao, Q. et al. Roadmap of spin-orbit torques. IEEE Trans. Magn. 57, 1–39 (2021).

Liu, L. et al. Spin-torque switching with the giant spin hall effect of tantalum. Science 336, 555–558 (2012).

Manipatruni, S. et al. Scalable energy-efficient magnetoelectric spin-orbit logic. Nature 565, 35–42 (2019).

Sayed, S., Hong, S., Marinero, E. E. & Datta, S. Proposal of a single nano-magnet memory device. IEEE Electron Device Lett. 38, 1665–1668 (2017).

Sato, N., Xue, F., White, R. M., Bi, C. & Wang, S. X. Two-terminal spin-orbit torque magnetoresistive random access memory. Nat. Electron. 1, 508–511 (2018).

Sayed, S., Salahuddin, S. & Yablonovitch, E. Spin-orbit torque rectifier for weak rf energy harvesting. Appl. Phys. Lett. 118, 052408 (2021).

Mellnik, A. R. et al. Spin-transfer torque generated by a topological insulator. Nature 511, 449–451 (2014).

Lesne, E. et al. Highly efficient and tunable spin-to-charge conversion through Rashba coupling at oxide interfaces. Nat. Mater. 15, 1261–1266 (2016).

Khang, N. H. D., Ueda, Y. & Hai, P. N. A conductive topological insulator with large spin hall effect for ultralow power spin-orbit torque switching. Nat. Mater. 17, 808–813 (2018).

DC, M. et al. Room-temperature high spin–orbit torque due to quantum confinement in sputtered BixSe(1−x) films. Nat. Mater. 17, 800–807 (2018).

Shirokura, T., Fan, T., Khang, N. H. D., Kondo, T. & Hai, P. N. Efficient spin current source using a half-heusler alloy topological semimetal with back end of line compatibility. Sci. Rep. 12, 2426 (2022).

York, B. et al. High spin hall angle doped BiSbX topological insulators using novel high resistive growth and migration barrier layers. In 2022 IEEE 33rd Magnetic Recording Conference (TMRC) 1–2 (IEEE, 2022).

Hsu, C.-H. et al. Large spin-orbit torque generated by amorphous iron silicide. Preprint at Research Square 10.21203/rs.3.rs-1946953/v1 (2022).

Huang, S. & Cole, J. M. A database of battery materials auto-generated using ChemDataExtractor. Sci. Data 7, 1–13 (2020).

Raccuglia, P. et al. Machine-learning-assisted materials discovery using failed experiments. Nature 533, 73–76 (2016).

Kim, E. et al. Machine-learned and codified synthesis parameters of oxide materials. Sci. Data 4, 170127 (2017).

Mehr, S. H. M., Craven, M., Leonov, A. I., Keenan, G. & Cronin, L. A universal system for digitization and automatic execution of the chemical synthesis literature. Science 370, 101–108 (2020).

Krenn, M. & Zeilinger, A. Predicting research trends with semantic and neural networks with an application in quantum physics. Proc. Natl Acad. Sci. USA 117, 1910–1916 (2020).

Venugopal, V., Broderick, S. R. & Rajan, K. A picture is worth a thousand words: applying natural language processing tools for creating a quantum materials database map. MRS Commun. 9, 1134–1141 (2019).

Minaee, S. et al. Large language models: a survey. Preprint at arXiv:2402.06196 (2024).

Naveed, H. et al. A comprehensive overview of large language models. Preprint at arXiv:2307.06435 (2024).

Sharir, O., Peleg, B. & Shoham, Y. The cost of training NLP models: a concise overview. Preprint at arXiv:2004.08900 (2020).

Lei, G., Docherty, R. & Cooper, S. J. Materials science in the era of large language models: a perspective. Digit. Discov. 3, 1257–1272 (2024).

Augenstein, I. et al. Factuality challenges in the era of large language models and opportunities for fact-checking. Nat. Mach. Intell. 6, 852–863 (2024).

Garry, M., Chan, W. M., Foster, J. & Henkel, L. A. Large language models (LLMs) and the institutionalization of misinformation. Trends Cogn. Sci. 28, 1078–1088 (2024).

Jablonka, K. M., Schwaller, P., Ortega-Guerrero, A. & Smit, B. Leveraging large language models for predictive chemistry. Nat. Mach. Intell. 6, 161–169 (2024).

Li, J. et al. Empowering molecule discovery for molecule-caption translation with large language models: A ChatGPT perspective. IEEE Trans. Knowl. Data Eng. 36, 6071–6083 (2024).

Ward, L. et al. Machine learning prediction of accurate atomization energies of organic molecules from low-fidelity quantum chemical calculations. MRS Commun. 9, 891–899 (2019).

Lin, Z. et al. Evolutionary-scale prediction of atomic-level protein structure with a language model. Science 379, 1123–1130 (2023).

Luo, R. et al. BioGPT: generative pre-trained transformer for biomedical text generation and mining. Brief. Bioinformatics 23, bbac409 (2022).

Zhao, S. et al. Potential to transform words to watts with large language models in battery research. Cell Rep. Phys. Sci. 5, 101844 (2024).

Boiko, D. A., MacKnight, R., Kline, B. & Gomes, G. Autonomous chemical research with large language models. Nature 624, 570–578 (2023).

Mikolov, T., Sutskever, I., Chen, K., Corrado, G. & Dean, J. Distributed representations of words and phrases and their compositionality. In Proc. 27th International Conference on Neural Information Processing Systems (NIPS'13) 3111–3119 Vol. 2 (Curran Associates Inc., Red Hook, NY, USA, 2013).

Pennington, J., Socher, R. & Manning, C. GloVe: global vectors for word representation. In Proc. 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP) 1532–1543 (Association for Computational Linguistics, 2014).

Bojanowski, P., Grave, E., Joulin, A. & Mikolov, T. Enriching word vectors with subword information. Trans. Assoc. Comput. Linguist. 5, 135–146 (2017).

Tshitoyan, V. et al. Unsupervised word embeddings capture latent knowledge from materials science literature. Nature 571, 95–98 (2019).

He, M. & Zhang, L. Prediction of solar-chargeable battery materials: a text-mining and first-principles investigation. Int. J. Energy Res. 45, 15521–15533 (2021).

Pei, Z., Yin, J., Liaw, P. K. & Raabe, D. Toward the design of ultrahigh-entropy alloys via mining six million texts. Nat. Commun. 14, 54 (2023).

Gavai, A. K. et al. Artificial intelligence to detect unknown stimulants from scientific literature and media reports. Food Control 130, 108360 (2021).

Krämer, A. et al. Mining hidden knowledge: Embedding models of cause-effect relationships curated from the biomedical literature. Bioinform. Adv. 2, vbac022 (2021).

Freeman, T. C. B. et al. A neural network approach for understanding patient experiences of chronic obstructive pulmonary disease (COPD): retrospective, cross-sectional study of social media content. JMIR Med. Inform 9, e26272 (2021).

Tworowski, D. et al. COVID19 drug repository: text-mining the literature in search of putative COVID19 therapeutics. Nucleic Acids Res. 49, D1113–D1121 (2020).

Powell, J. & Sentz, K. Diachronic text mining investigation of therapeutic candidates for COVID-19. Preprint at arXiv:2110.13971 (2021).

Martinc, M. et al. in Discovery Science (eds Appice, A., Tsoumakas, G., Manolopoulos, Y. & Matwin, S.) (Springer International Publishing, 2020).

Choi, J. et al. Deep learning of electrochemical CO2 conversion literature reveals research trends and directions. J. Mater. Chem. A 11, 17628–17643 (2023).

Shetty, P. et al. A general-purpose material property data extraction pipeline from large polymer corpora using natural language processing. npj Comput. Mater. 9, 52 (2023).

Weston, L. et al. Named entity recognition and normalization applied to large-scale information extraction from the materials science literature. J. Chem. Inf. Model. 59, 3692–3702 (2019).

Harris, Z. S. Distributional structure. WORD 10, 146–162 (1954).

Sayed, S., Hannah, K., Hashemi-Asasi, G., Hsu, C.-H. & Salahuddin, S. Supplementary data for “an unsupervised machine learning based approach to identify efficient spin-orbit torque materials”. https://nanohub.org/resources/38611. (2024).

Apalkov, D., Dieny, B. & Slaughter, J. M. Magnetoresistive random access memory. Proc. IEEE 104, 1796–1830 (2016).

Parkin, S. S. P. Systematic variation of the strength and oscillation period of indirect magnetic exchange coupling through the 3d, 4d, and 5d transition metals. Phys. Rev. Lett. 67, 3598–3601 (1991).

Qiu, Z. Q., Pearson, J., Berger, A. & Bader, S. D. Short-period oscillations in the interlayer magnetic coupling of wedged Fe(100)/Mo(100)/Fe(100) grown on Mo(100) by molecular-beam epitaxy. Phys. Rev. Lett. 68, 1398–1401 (1992).

Gökemeijer, N. J., Ambrose, T. & Chien, C. L. Long-range exchange bias across a spacer layer. Phys. Rev. Lett. 79, 4270–4273 (1997).

Smardz, L. Interlayer exchange coupling across quasi-amorphous Ti-Co and Zr-Co spacers. Czech. J. Phys. 52, 209–214 (2002).

Xu, H. et al. High spin hall conductivity in large-area type-ii dirac semimetal PtTe2. Adv. Mater. 32, 2000513 (2020).

Liu, L., Lee, O. J., Gudmundsen, T. J., Ralph, D. C. & Buhrman, R. A. Current-induced switching of perpendicularly magnetized magnetic layers using spin torque from the spin hall effect. Phys. Rev. Lett. 109, 096602 (2012).

Zhou, J. et al. Large spin-orbit torque efficiency enhanced by magnetic structure of collinear antiferromagnet IrMn. Sci. Adv. 5, eaau6696 (2019).

Liu, Y., Zhou, B. & Zhu, J.-G. Field-free magnetization switching by utilizing the spin hall effect and interlayer exchange coupling of iridium. Sci. Rep. 9, 325 (2019).

Ramaswamy, R. et al. Extrinsic spin hall effect in Cu1−xPtx. Phys. Rev. Appl. 8, 024034 (2017).

Niimi, Y. et al. Extrinsic spin hall effects measured with lateral spin valve structures. Phys. Rev. B 89, 054401 (2014).

Wu, H. et al. Room-temperature spin-orbit torque from topological surface states. Phys. Rev. Lett. 123, 207205 (2019).

Shi, S. et al. All-electric magnetization switching and dzyaloshinskii–moriya interaction in WTe2/ferromagnet heterostructures. Nat. Nanotechnol. 14, 945–949 (2019).

Guimarães, M. H. D., Stiehl, G. M., MacNeill, D., Reynolds, N. D. & Ralph, D. C. Spin–orbit torques in NbSe2/permalloy bilayers. Nano Lett. 18, 1311–1316 (2018).

Everhardt, A. S. et al. Tunable charge to spin conversion in strontium iridate thin films. Phys. Rev. Mater. 3, 051201 (2019).

Nan, T. et al. Anisotropic spin-orbit torque generation in epitaxial SrIrO3 by symmetry design. Proc. Natl Acad. Sci. USA 116, 16186–16191 (2019).

Bose, A. et al. Effects of anisotropic strain on spin–orbit torque produced by the dirac nodal line semimetal IrO2. ACS Appl. Mater. Interfaces 12, 55411–55416 (2020).

Lee, M. H. et al. Spin-orbit torques in normal metal/Nb/ferromagnet heterostructures. Sci. Rep. 11, 21081 (2021).

Lau, Y.-C., Lee, H., Qu, G., Nakamura, K. & Hayashi, M. Spin hall effect from hybridized 3d–4p orbitals. Phys. Rev. B 99, 064410 (2019).

Zhang, W. et al. Spin hall effects in metallic antiferromagnets. Phys. Rev. Lett. 113, 196602 (2014).

Pai, C.-F. et al. Spin transfer torque devices utilizing the giant spin hall effect of tungsten. Appl. Phys. Lett. 101, 122404 (2012).

Niimi, Y. et al. Giant spin hall effect induced by skew scattering from bismuth impurities inside thin film CuBi alloys. Phys. Rev. Lett. 109, 156602 (2012).

Hsu, C.-H. et al. Spin-orbit torque generated by amorphous FexSi1−x. Preprint at arXiv:2006.07786 [cond-mat.mes-hall] (2020).

Segawa, K. et al. Ambipolar transport in bulk crystals of a topological insulator by gating with ionic liquid. Phys. Rev. B 86, 075306 (2012).

Fujishiro, Y. et al. Large magneto-thermopower in MnGe with topological spin texture. Nat. Commun. 9, 28012–28018 (2018).

Wang, K. et al. Two-dimensional dirac fermions and quantum magnetoresistance in CaMnBi2. Phys. Rev. B 85, 041101 (2012).

Cheng, E. et al. Magnetism-induced topological transition in EuAs3. Nat. Commun. 12, 6970 (2021).

Zhang, J. et al. Modulation of weyl semimetal state in half-heusler GdPtBi enabled by hydrostatic pressure. N. J. Phys. 23, 083041 (2021).

Saleheen, A. I. U. et al. Evidence for topological semimetallicity in a chain-compound TaSe3. npj Quantum Mater. 5, 53 (2020).

Di Sante, D., Barone, P., Bertacco, R. & Picozzi, S. Electric control of the giant rashba effect in bulk GeTe. Adv. Mater. 25, 509–513 (2013).

Jeon, J. et al. Field-like spin–orbit torque induced by bulk rashba channels in GeTe/NiFe bilayers. NPG Asia Mater. 13, 76 (2021).

Yang, W. L. et al. Determining spin-torque efficiency in ferromagnetic metals via spin-torque ferromagnetic resonance. Phys. Rev. B 101, 064412 (2020).

Davidson, A., Amin, V. P., Aljuaid, W. S., Haney, P. M. & Fan, X. Perspectives of electrically generated spin currents in ferromagnetic materials. Phys. Lett. A 384, 126228 (2020).

Taniguchi, T., Grollier, J. & Stiles, M. D. Spin-transfer torques generated by the anomalous hall effect and anisotropic magnetoresistance. Phys. Rev. Appl. 3, 044001 (2015).

Safranski, C., Montoya, E. A. & Krivorotov, I. N. Spin–orbit torque driven by a planar hall current. Nat. Nanotechnol. 14, 27–30 (2019).

Qu, G., Nakamura, K. & Hayashi, M. Magnetization direction dependent spin hall effect in 3d ferromagnets. Phys. Rev. B 102, 144440 (2020).

Fukami, S., Zhang, C., DuttaGupta, S., Kurenkov, A. & Ohno, H. Magnetization switching by spin–orbit torque in an antiferromagnet–ferromagnet bilayer system. Nat. Mater. 15, 535–541 (2016).

van der Maaten, L. & Hinton, G. Visualizing data using t-SNE. J. Mach. Learn. Res. 9, 2579–2605 (2008).

Li, Z. et al. Fully field-free spin-orbit torque switching induced by spin splitting effect in altermagnetic RuO2. Adv. Mater. 37, 2416712 (2025).

Mikolov, T., Chen, K., Corrado, G. & Dean, J. Efficient estimation of word representations in vector space. Preprint at arXiv:1301.3781 (2013).

Acknowledgements

This work was in part supported by Applications and Systems-Driven Center for Energy-Efficient Integrated NanoTechnologies (ASCENT), one of six centers in the Joint University Microelectronics Program (JUMP), a Semiconductor Research Corporation (SRC) program sponsored by Defense Advanced Research Projects Agency (DARPA) and in part by the US Department of Energy, under Contract No. DE-AC02-05-CH11231 within the NonEquilibrium Magnetic Materials (NEMM) program.

Author information

Contributions

Shehrin Sayed developed the program, trained word embedding models, optimized the hyperparameters, analyzed the models, generated results, benchmarked with experiments, and wrote the manuscript. Hannah Calzi Kleidermacher primarily collected and preprocessed the raw data and optimized the training. Giulianna Hashemi-Asasi used the raw data to train multiple neural networks using optimized hyperparameters and evaluated the models on various contexts. Cheng-Hsiang Hsu primarily worked on validating model projections with experiments. Sayeef Salahuddin supervised the entire project and helped everyone with valuable input. All authors participated in analyzing the results and reviewing the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary information: an unsupervised machine learning based approach to identify efficient spin–orbit torque materials

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Sayed, S., Kleidermacher, H.C., Hashemi-Asasi, G. et al. An unsupervised machine learning based approach to identify efficient spin-orbit torque materials. npj Comput Mater 11, 167 (2025). https://doi.org/10.1038/s41524-025-01626-1

DOI: https://doi.org/10.1038/s41524-025-01626-1