Abstract

Recent advances in machine learning have demonstrated an enormous utility of deep learning approaches, particularly Graph Neural Networks (GNNs) for materials science. These methods have emerged as powerful tools for high-throughput prediction of material properties, offering a compelling enhancement and alternative to traditional first-principles calculations. While the community has predominantly focused on developing increasingly complex and universal models to enhance predictive accuracy, such approaches often lack physical interpretability and insights into materials behavior. Here, we introduce a novel computational paradigm—Self-Adaptable Graph Attention Networks integrated with Symbolic Regression (SA-GAT-SR)—that synergistically combines the predictive capability of GNNs with the interpretative power of symbolic regression. Our framework employs a self-adaptable encoding algorithm that automatically identifies and adjust attention weights so as to screen critical features from an expansive 180-dimensional feature space while maintaining O(n) computational scaling. The integrated SR module subsequently distills these features into compact analytical expressions that explicitly reveal quantum-mechanically meaningful relationships, achieving 23 × acceleration compared to conventional SR implementations that heavily rely on first-principle calculations-derived features as input. This work suggests a new framework in computational materials science, bridging the gap between predictive accuracy and physical interpretability, offering valuable physical insights into material behavior.

Similar content being viewed by others

Introduction

The modern discovery of advanced functional materials demands predictive frameworks that successfully reconcile frequently conflicting requirements: quantum-level precision, generalizable physical insights, and human-interpretable design principles. While conventional computational methods struggle to address this challenge, machine learning (ML) approaches have shown remarkable progress across a wide range of materials property prediction and discovery of novel materials1,2. Specifically, two distinct ML paradigms have emerged in solid state materials research: symbolic regression (SR), offering equation-based interpretability, and graph neural networks (GNNs) excelling in structure-property mapping accuracy. SR reveals fundamental correlations through transparent mathematical descriptors to generate explicit descriptors. These descriptors are expressed as mathematical relationships among various material features, providing actionable insights for rational material design to achieve desired properties. However, the explicit feature combination in SR limits its ability to capture complex relationships among features in high-dimensional spaces. In contrast, GNNs circumvent this limitation through automated learning of hidden material representations via message-passing architectures3,4,5,6, but their black-box nature obscures the underpinning atomic-scale physics driving property variations—a critical barrier for scientific discovery. This inherent trade-off between interpretative clarity (SR) and automated learning capability (GNN) has constrained computational materials science to situation-specific solutions rather than unified and robust discovery platforms.

Over the past decade, remarkable progress has been achieved in advancing both GNNs and SR methodologies within the realm of computational materials science. For GNNs, a diverse array of architectures has emerged, ranging from message passing neural networks (MPNN)7 and crystal graph convolutional neural networks (CGCNN)8, to atomistic line graph neural networks (ALIGNN)9 and Graphformer10,11,12, each pushing the boundaries of accuracy in predicting material properties. Concurrently, substantial efforts have been dedicated to refining GNN components, including feature engineering13,14,15,16, algorithms for updating node and edge attributes17,18,19, and readout functions20, all aimed at enhancing the alignment of graph-based representations with fundamental physical principles. Furthermore, integrating algorithms and strategies from other domains in GNN can improve its performance and has been validated as feasible in specific cases21,22,23,24,25,26,27,28,29,30. On the other hand, SR has found significant success in deriving interpretable mathematical descriptors for specific material systems, such as perovskites, where it has guided the design of materials with optimal band gaps for photovoltaics, enhanced catalytic activity for oxygen evolution reactions, and similar applications31,32,33,34. Despite these advancements, a critical gap remains: the lack of an integrated framework that combines GNNs and SR to simultaneously achieve model enerality, high predictive accuracy, and interpretable descriptor generation. Current approaches in material screening and guided synthesis often prioritize one aspect at the expense of others, highlighting the need for a unified methodology that harmonizes the strengths of both paradigms to accelerate materials discovery and design.

In this work, we present a computational framework that synergistically integrates graph neural networks (GNNs) and symbolic regression (SR) facilitating advancements in the understanding of structure-property relationships in materials science. Our approach introduces a sophisticated feature encoding algorithm within the GNN architecture, wherein each physical quantity feature is assigned independent weight parameters and subsequently aggregated in a high-dimensional latent space. This unique self-adaptable Graph Attention Networks combined with SR (SA-GAT-SR) formulation enables the generation of importance coefficients (ICs) at both atomic and crystallographic levels, facilitating rigorous feature screening and offering unprecedented physical interpretability of deep learning models. The re-weighted feature representations, augmented by the predictive outputs from our GNN module, are then processed through a highly optimized SR framework to extract explicit mathematical descriptors. The analysis of the resulting symbolic expressions derived from our framework, elucidates the fundamental mathematical relationships governing material property variations. This integrated paradigm effectively bridges the gap between the predictive accuracy of deep learning approaches and the interpretability of traditional scientific modeling techniques, thereby offering a robust platform for accelerated materials discovery and design.

Results

Joint paradigm of graph attention network and symbolic regression

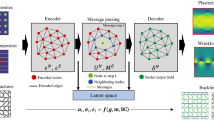

The SA-GAT-SR model is an end-to-end framework that takes crystal structures and associated features as input. Compared to previous works, which are predominately based on deep learning (DL) methods, and aim to develop universal models capable of predicting material properties across diverse systems35,36,37,38, the proposed SA-GAT-SR methodology provides both a mathematical expression and predictive results that offer physical insight. Additionally, the model outputs an importance ranking of the initial features. Figure 1a illustrates the flowchart for training a joint prediction model for specific material systems using the SA-GAT-SR approach, which can be broadly divided into four key steps. The data acquisition stage involves gathering materials from the system of interest to form the high-quality training dataset. To construct node feature vectors, the dataset also requires a large set of corresponding atoms and crystal characteristics including those that construct the best descriptors as much as possible. Subsequently, the feature engineering algorithm respectively converts the material structure information and physical characteristics into a graph representation and nodes, global features and produce the ICs of each characteristics. After that, we obtain the well-trained GNN model and the corresponding GNN-level prediction result, which completes SA-GAT step. According to the ICs and initial prediction results given by pre-stage GNN model, the third level filters atomic and global features based on the specified number of reserved features. The input features of SR module are combined with the reserved features and the GNN module prediction. Finally, in the fourth step, SR module derives a series of expressions based on the recombined features.

a The flowchart of SA-GAT-SR model encompasses four sequential stages: data acquisition (blue), preliminary GNN prediction (yellow), feature screening (pink), and symbolic regression (brown). The combination of GNN prediction and reserved features in the feature screening step is used as the input features of SR module. b The architecture of the GNN module. In the self-adaptable encoding (SAE) algorithm, the raw feature vector consists of scalar properties associated with atoms and unit cells from the crystal structure. The SAE assigns a weight to each characteristic and generates the initial feature vector. The blue and orange circles represent atomic and global node features, respectively. The message-passing layers include stacked node update modules, as illustrated, allowing iterative updating of feature vectors through the GNN architecture.

From the perspective of model architecture, the SA-GAT-SR framework consists primarily of two modules, i.e., the GNN module enhanced by self-adaptable encoding (SAE), and the SR solver module. The GNN module comprises an SAE layer, multiple message-passing and global feature updating layers, and a readout layer. The SAE layer is responsible for performing feature engineering, constructing the initial node, edge, and global feature vectors from atomic, bonding, and crystal cell features, respectively, based on ICs. The message-passing layers operate on the crystal graph, iteratively updating each feature vector. After processing through all the updating layers, the readout layer produces the final output. The output from the GNN module serves as an initial approximation of the final prediction and is fed into the SR module, implemented by the sure independence screening and specifying operator (SISSO) method39,40.

Self-adaptable encoding and screening of initial features

To convert crystal structures into graph representations compatible with GNN, we encode atomic properties as feature vectors, while the connections between atoms correspond to node and edge features, respectively. In CGCNN, one-hot encoding relies solely on atomic number, limiting its ability to capture other physical characteristics of atoms. When predicting target properties across different materials, the sensitivity of the output to input features can vary significantly. Our extensive experiments reveal that feature construction has a far greater impact on predictive performance than the specific algorithms used for feature updating. Therefore, we incorporate a self-attention-based importance-weighting module within the feature engineering process (see Supplementary Note 1).

The initial feature sets, based on physical quantities, are represented as Ra for the atomic level and Rc for the crystal level variables, respectively. Importantly, feature sets can also be constructed for distinct atomic types or other structural units. As shown in the Fig. 1b, the raw feature vector of an atom in the material is denoted as Ra = {r1, r2, r3, . . . , rN}, where N represents the size of the initial feature set. To capture more complex nonlinear relationships among these characteristics, we define a projection function σ( ⋅ ) that contains learnable weight vectors and adaptively embeds each scalar feature into a unique high-dimensional hidden vector. This can be represented as \({h}_{{r}_{i}}=\sigma ({r}_{i}),{h}_{{r}_{i}}\in {{\mathbb{R}}}^{M}\), where M denotes the dimension of feature vector of \({h}_{{r}_{i}}\). The raw hidden feature of Ra that is a vector of length 180, can be represented as \({H}_{a}=\sigma ({R}_{a})=\{{h}_{{r}_{1}},...,{h}_{{r}_{N}}\}\). Each hidden feature vector in Ha represents a unique high-dimensional embedding of its corresponding physical characteristic. Subsequently, to evaluate the importance of each high-dimensional feature, we introduce a learnable matrix Wa. The ICs are computed as the inner product between the weight vector Wa and each \({h}_{{r}_{i}}\), followed by softmax normalization to yield the attention coefficients, which are then used as weights for the hidden feature vectors. To capture the relevance of each feature to the target property, attention weights are assigned and summed to generate the initial node feature vectors in the graph representation. The feature engineering process of node features can be described by:

where the f( ⋅ ) function is used to compute the scale attention of \({h}_{{r}_{i}}\), and the h0 denotes the initial feature vector at layer 0. The node features are calculated by SAE algorithm. The outcome directly depends on the selection of the corresponding initial feature set Ra. However, the calculation method for the global feature differs slightly from that of node features. To obtain the ICs of features from different initial feature sets, we concatenate Ra and Rc to form the initial global feature set. By doing so, we can compute the ICs for each relevant characteristic and the global node feature vector, as outlined in eq (1). This approach enables the comparison of the importance between features derived from different feature sets. For various materials, the global node features integrate both atomic-level characteristics and structural material features, ensuring that the crystal graph is uniquely defined. This integration helps mitigate the risk of over-smoothing during model training, which is a common issue arising when node information is excessively aggregated.

As illustrated in Fig. 1, we can select highly relevant features from the initial feature set using the weight set and preliminary predictions generated by the trained GNN module. Assuming that there are M initial feature sets, each associated with key atoms and structural units, they are denoted as \(\Phi =\{{R}_{a}^{1},\ldots ,{R}_{a}^{M}\}\), along with a unique initial crystal feature set Rc. The size of each initial feature set is not required to be uniform, thereby allowing for the flexible selection of various physical quantities to construct these feature sets. The combined initial global feature set is represented as \(\Psi =\{{R}_{a}^{1},\ldots ,{R}_{a}^{M},{R}_{c}\}\). Subsequently, the ICs for all characteristics can be derived through the SAE process applied to the set Ψ. The ICs set of characteristics in different initial feature sets is defined as A = {a1, . . . , aN} serving as the foundation for subsequent feature screening, where N stands for the number of all characteristics. Based on the ICs sets A, we determine the importance ranking of each feature and select the top-k features from different feature set, which serves as the input for the subsequent SR module. The number of retained features per set can be independently adjusted according to specific requirements. In practice, reducing the number of selected features significantly accelerates the SR process highlighting critical importance of feature screening of GNN module.

The input feature set for the SR module is defined as \(\Omega =\{{S}_{a}^{1},...,{S}_{a}^{M},{g}_{s}\}\), where \({S}_{a}^{i}\) and gs represent the selected features from \({R}_{a}^{i}\) and g, respectively. In this work, the SR process is implemented using the SISSO machine-learning method. Unlike other approaches that rely on Density Functional Theory (DFT) results, which have limited accuracy but fast calculation speed and still demand substantial computational resources, our method uses the GNN predictions as input features to the SR module. These predictions are efficiently generated by the GNN model, thereby relaxing the strict requirement for material datasets to have DFT-based pre-calculated results. In addition to the number of screened features, the dimensionality and complexity of the descriptors significantly influence both the accuracy of the predictions and the computational efficiency of the SR process (see Supplementary Fig. 1). Detailed comparisons, including the effects and trade-offs of higher complexity on model performance and the emergence of overfitting, are provided in Supplementary Note 2. Careful optimization of these parameters is essential to achieving a balance between performance and resource consumption.

Application of the model in single oxide and halogen perovskite materials

In the following computational experiments, we concentrate on predicting multiple properties of single-oxide and single-halogen perovskites with the general formulae ABO3 and ABX3 (X=F, Cl, Br, I) using the JARVIS-DFT41 dataset (version 2021.8.18). This dataset comprises extensive materials and provides a comprehensive set of solid-state properties ideally suited for model training and evaluation. In total, we curated a subset of 689 perovskite materials for our studies. To handle missing labels in the JARVIS dataset, we filtered the perovskite structures to include only those with available computed values for each target property. The hyperparameter configurations of the GNN and SR modules in the SA-GAT-SR model are summarized in Supplementary Table 1. The SR module takes a total of 15 input features, which include the prediction output from the GNN module. To simplify the final expressions and accelerate the SR derivation speed, we set the unified descriptors complexity and expression dimension to 1 and 3 for all prediction tasks, respectively. The train-validation-test split ratio of our SA-GAT-SR and all baseline models is maintained at 85%:10%:5% due to the limited dataset of 689 perovskite samples, enhancing training stability while maintaining sufficient validation and test data. From the extensive set of properties available in this dataset, we select the following key attributes for evaluation: bandgaps corrected by the optimized Becke88 functional with van der Waals interaction (OptB88vdW)42, formation energies, dielectric constants without ionic contributions (ϵx, ϵy, ϵz), Voigt bulk modulus (Kv) and shear modulus (Gv), total energies, and energies above the convex hull (Ehull).

For the initial feature set, we select 7 physical quantities for each element and 9 physical quantities for the crystal, resulting in a combined feature vector comprising 30 features for each crystal43,44. However, since the X site of all ABO3 materials is fixed as oxygen, we focus on screening features from the A site and B site atoms as inputs to the SR module and the number of initial features is 23. The symbols used in the SA-GAT-SR model are summarized in Table 1 and their corresponding detailed information is provided in Supplementary Table 2. The octahedral factor Gμ and tolerance factor Gt are defined as Bir/rX and \(\frac{{A}_{ir}+{r}_{X}}{\sqrt{2}({B}_{ir}+{r}_{X})}\), respectively, which are commonly used for describing perovskite structures, where the rX denotes the ionic radius of oxygen or halogen anion. Both features can, to a certain extent, reflect the stability of the entire crystal structure. Moreover, the ratio of these two features has been shown to exhibit a strong correlation with several functional properties of perovskite materials32. As a result, this ratio is an initial feature in our analysis. Multiple studies have demonstrated that lattice structural distortions in perovskite materials can significantly influence a range of their properties45. To characterize these distortion characteristics, we select a representative BX6 octahedron and compute the mean B-X-B bond angle between its six neighboring octahedra, denoted as ΘBXB. To further enhance the stability and interpretability of the calculated values, we adopt the cosine of ΘBXB as a distortion feature. This cosine-based measure is preferred as it maps the bond angle distortion to a range between -1 and 1, providing a naturally normalized representation of the structural distortion. Further analysis of these distortion features, specifically the evaluation of data redundancy elimination using thresholds of 0.005 and 0.02, is provided in Supplementary Figs. 2 and 3.

The performance of the SA-GAT-SR model on the ABO3 and ABX3 datasets is summarized in Supplementary Tables 3 and 4, respectively. We employ the mean absolute error (MAE) metric to evaluate regression accuracy. Furthermore, to validate the robustness of the model, we combine both datasets and assesse the model’s performance on the merged dataset, with the results presented in Table 2. The merged dataset can be denoted as AB(O∣X)3. For comparison, we also train and test other state-of-the-art models, including Graph Attention Network (GAT)46, CGCNN, and ALIGNN, using the same dataset and identical train-validation-test splits on each dataset (see Supplementary Note 3). All models, including SA-GAT-SR and baselines, were trained and evaluated using the same problem-specific physical features (Table 1), ensuring performance differences stem from model architectures, not input variations. The SA-GAT-SR model integrates a GNN module with an SR module, where the final prediction accuracy largely depends on the performance of the GNN component. To further assess the contribution of each module, we conducted an ablation study to separately evaluate their individual performances. For the SR module, the input feature set remains consistent with that of the SA-GAT-SR model, except for the initial prediction results from the preceding GNN stage. To ensure robust evaluation, we performed multiple random independent experiments based on the same data split and reported the average performance metrics as the final results. In most cases, the SA-GAT-SR model demonstrates superior performance compared to the control group models. The lowest MAE for bandgap (Egap) with SA-GAT-SR is 0.147 eV, which outperforms GAT, CGCNN and ALIGNN by 19.7%, 18.3% and 10.9%, respectively. Furthermore, the full SA-GAT-SR model builds upon this foundation, achieving additional improvements in accuracy. Without the predictions from the GNN module, the MAE for Egap of individual SR module struggles to achieve 0.768 eV accuracy. However, in certain cases, the SR module outperforms the GNN module, which is shown in Supplementary Table 4. The MAE for Ehull using only the SR module is 0.075 eV, outperforming the 0.090 eV achieved by the GNN module alone. All models were trained and evaluated on the same dataset for each property, ensuring fair horizontal comparisons across models. However, varying sample sizes across properties may affect a single model’s performance comparison across properties, though this does not impact model comparisons for individual properties. Given the established importance of Out-of-Distribution (OOD) performance as a critical metric for material discovery, we have conducted a systematic evaluation of the OOD performance of the SA-GAT-SR method, with results and analyses presented in Supplementary Table 5 and Supplementary Note 4. In addition, in order to better demonstrate the generalization of our model, we also conducted systematic experiments on non-perovskite datasets, i.e., MOene dataset47,48,49. MOenes represent an emerging class of MXene-like two-dimensional (2D) materials, characterized by the chemical formula Mn+1OnT2 (n = 1–4), where “O” denotes an oxygen-group element (O, S, Se, or Te), expanding the scope of traditional MXenes to include 2D transition metal oxides. The SA-GAT-SR model demonstrated robust performance in predicting the total energy, work function, bandgap, valence band maximum (VBM), and conduction band minimum (CBM) (see Supplementary Fig. 4, Supplementary Table 6, and Supplementary Note 4), further confirming the generalizability of our framework. To assess space complexity, we analyze the number of trainable parameters in each model. The SA-GAT-SR model contains a total of 6.303M parameters, primarily due to the feature embedding matrices, which scale with the size of the input feature set and are not involved in the model’s training speed. In contrast, ALIGNN comprises only 4.027M parameters. Notably, the trainable core of SA-GAT-SR includes just 3.351M parameters. Moreover, when the hidden feature dimension is set to 128, the parameter count of the core model can be reduced to as few as 1.011M without a significant loss in prediction accuracy.

To evaluate the impact of the GNN module within the SA-GAT-SR framework on the final results, we conducted another ablation study, with the findings summarized in Fig. 2. This experiment primarily investigates the influence of the number of selected features on the SR module’s derivation time and the prediction MAE for formation energy. For the convenience of expression, the reserved features combination of bandgap with the number of n is denoted as BG{n}, where the total number of the input features of SR module is actually n + 1 including the prediction of GNN model. Figure 2a demonstrates that the derivation time increases sharply as the total number of features grows (Supplementary Note 5 and Supplementary Table 7). Notably, when the feature set size reaches 22, the SR derivation time exhibits exponential growth, rendering it impractical to compute descriptors for larger datasets. It should be emphasized that the exponential growth depends solely on the number of features and is independent of the specific types of features selected, making the SAE feature selection method suitable for scenarios involving varying feature sets. In the feature screening process of the SAE module, 16 features are retained as input for the SR module to enhance accuracy, resulting in a derivation time of 32.59 seconds. In contrast, the conventional SR process requires retaining most features to ensure generalization, such as using 28 features, which takes 720.95 seconds. This demonstrates that the SAE algorithm improves the efficiency of SR by a factor of 23 compared to the conventional SR process while simultaneously enhancing accuracy.

a Dependence of the SR derivation time on the number of input features. While keeping the dimensionality and complexity of the final expressions constant, the derivation time shows exponential growth as the number of input features increases. b The bandgap MAE performance of the SA-GAT model with different hidden feature dimensions across three datasets. c, d The performance of all methods-including SA-GAT-SR, SA-GAT, ALIGNN, CGCNN, SR, and GAT-on bandgap and formation energy prediction is evaluated. SA-GAT corresponds to the GNN module within SA-GAT-SR, while SR uses the same feature set as SA-GAT-SR but excludes the GNN-predicted output. e–g Bandgap prediction performance, comparing the SA-GAT-SR model with and without the GNN module across three datasets as the number of reserved features increases. e–g present the results of our study on the ABO3, ABX3 and AB(O∣X)3 datasets, respectively.

To demonstrate the influence of the hidden feature dimension on the performance of SA-GAT model, we conduct a study on the bandgap prediction across three datasets. The results are shown in Fig. 2b. A dimension that is too small can in under-fitting, whereas a dimension that is too large yields only marginal improvements in performance. As the dimension comes to 128, the MAE stabilizes around 0.190 eV, 0.107 eV, and 0.175 eV across three datasets. This indicates that each feature vector of node and edge is sufficiently expressive to capture the characteristics of real atoms and bonds. Additionally, in order to find out the adventage of SA-GAT-SR model against the traditional SR method, we conduct another ablation study. As shown in Fig. 2c, d, the GNN initial approximation method of SA-GAT-SR model has much lower MAE and higher stability compared to traditional method. Withing the SA-GAT-SR framework, the SR process serves as a second-level approximation building upon the prediction results of the GNN module and delivering further improvements in the overall prediction accuracy. In addition to the advantage of making the results more accurate, the screening capability of SAE algorithm can filter out less relevant features and reduce the size of SR input features. As for the prediction of formation energy and bandgap in AB(O∣X)3 dataset, the SA-GAT-SR model achieves lowest MAE of 0.101 and 0.147, respectively. The SA-GAT—GNN module of SA-GAT-SR model also suggests comparable performance compared with CGCNN and ALIGNN achieving MAE of 0.109 and 0.194. As shown in Fig. 2e–g, as the number of reserved feature increases, the feature search space expands accordingly. Therefore, as the number of input features increases, the MAE eventually converges to a fixed value, because the input feature set becomes sufficiently large to encompass all features required for the optimal solution. The speed of MAE convergence can thus serve as a valuable metric for evaluating the quality of the selected features. SR process with the GNN module exhibits stable performance BG14, while simultaneously maintaining a high level of prediction accuracy. Without the GNN module, the expression result in AB(O∣X)3 dataset remains unstable until BG22, while results in ABO3 and ABX3 datasets stabilize earlier but continue to exhibit high MAEs. The performance of the SA-GAT-SR prediction across all target properties is provided in Supplementary Fig. 4.

Despite the fact that the SAE mechanism does not directly enhance the accuracy of the final results, it provides valuable insights by reflecting the importance of each initial feature, thus offering physical interpretation. The ICs for each feature across all prediction tasks are shown in Fig. 3a–c, where the values represent the relative importance ratios. Although the AB(O∣X)3 dataset encompasses all materials in ABO3 and ABX3, the feature distributions differ across the three datasets. Consequently, the relative importance of the same initial feature varies between datasets. Similarly, the IC distributions for different properties are distinct, reflecting the physical significance of different features. In the ABO3 dataset, AQ shows a relatively high contribution to all properties except for bandgap, with IC varying from 0.0556 to 0.0689. In contrast, Gdis exhibits a high IC of 0.0739 for bandgap, indicating that crystal structure distortion significantly influences this property. For the AB(O∣X)3 dataset, Gdis also demonstrates a high IC from 0.1029 to 0.1683 across all properties, except formation energy and total energy.

a–c The ICs derived by the SAE algorithm within the GNN module, where (a–c) correspond to the ICs results for ABO3, ABX3, and AB(O∣X)3, respectively. For each property prediction task, the IC reflects the significance of a specific feature within its feature set. In the figures, EF, Egap, and ET denote formation energy, bandgap, and total energy, respectively, for convenience. d–f Comparison of the GNN model with different three feature embedding algorithms in formation energy prediction on the AB(O∣X)3 dataset. The blue diamonds and red dots represent the results on the training set and testing set, respectively. The GNN model with the fully connected network (FCN) embedding algorithm serves as a baseline, reflecting standard performance. The model with the CGCNN embedding algorithm utilizes one-hot encoding followed by the FCN to generate feature vectors.

To further evaluate the role of the SAE algorithm, we replace the SAE module with a fully connected network (FCN) and the CGCNN one-hot encoding method for feature embedding. Comparative experiments are conducted on the same dataset using identical hyperparameters. The MAE values obtained using the FCN, CGCNN, and SAE algorithms are denoted as \({E}_{{\rm{pred}}\_{\rm{FCN}}}\), Epred_CGCNN, and Epred_SAE, respectively. As shown in Fig. 3d–f, the GNN model based on SAE significantly outperforms those using FCN and CGCNN with the best MAE of 0.107 eV/atom. Among them, the FCN encoding demonstrates the poorest performance, yielding an MAE of 0.223 eV/atom. Additionally, it exhibits an overfitting issue, as evidenced by the MAE in the testing set being 0.022 eV/atom higher than that in the training set. The CGCNN encoding method shows suboptimal MAE of 0.142 eV/atom due to the limited information used to distinguish different atoms, as it relies solely on the atomic number. This limitation results in underfitting, as indicated by the MAE in the testing set being 0.030 eV/atom lower than that in the training set and prevents it from capturing the critical importance of features. Furthermore, the performance of the final descriptors depends on the predictive accuracy of the GNN module. Consequently, the SAE method yields the most accurate results and best robustness with a minimal difference of 0.002 eV/atom between the training and testing sets.

Compared to DL based methods, the interpretability of our SA-GAT-SR model primarily lies in the final derived mathematical expressions. The expression result of the SA-GAT-SR model is composed of material-specific combination feature descriptors (e.g., \(\frac{{B}_{en}}{{A}_{en}}\)) and a neural network-derived descriptor (EP). Notably, the relationship between EP and the initial material features is nonlinear. Changes in the initial features not only affect the final output of the expression but also alter the value of the coupled neural network descriptor EP. Let the feature set comprising the initially selected physical quantities excluding EP be denoted as M0. If we define the mapping relationship between a material’s structure and its properties as \({\mathcal{F}}(\cdot )\), the SA-GAT-SR model result can be represented as follows:

where EP( ⋅ ) represents the function describing the relationship between the GNN module’s predictions and the initial features. The above expression can be directly applied for material’s feature screening tasks. However, when using the model for guiding new material development, it is crucial to understand how the predicted results are influenced by each feature. The predicted value from the GNN model cannot be directly used as a guiding descriptor because it lacks an explicit mathematical relationship with the feature set M0. In most experimental outcomes, the coefficient of EP is typically close to 1. Consequently, the final expression can be interpreted as a sum of the prediction and correction terms.

To ensure that the derived expressions are interpretable and easy to analyze, we constrain the complexity of each descriptor to 1. In the bandgap prediction experiment, Table 3 represents a result that achieves both high accuracy and simplicity.

The expression incorporates both XQ and Gdis, which have high ICs and are factors related to the stability of crystal structure. Among the terms, the coefficient of EP is 1.06, which can be reasonably approximated as 1. The Gdis, which has a value range from −1 to 1, appeares with a small coefficient of −0.13 in the expression, indicating that it has only a minor impact on the predicted value. Therefore, the descriptor has a value range from −0.13 to 0.13. In contrast, XQ has relatively larger coefficient of 0.20, modulating bandgap through ionic effects of the X-site oxidation state. Given that the mean value of XQ across all materials is −1.83, the mean value of the descriptor is the same. The MAE of the derived equation of bandgap property in Table 3 and the corresponding GNN module is 0.159 eV and 0.194 eV, respectively, indicating that the inclusion of XQ as a key factor has a significant impact on the improvement in predictive performance. Both parameters may have a combined influence on the bandgap variation. Notably, −0.13 Gdis reducing the bandgap with increasing distortion. Octahedral distortions in perovskites reduce the bandgap by enhancing orbital overlap or altering d-orbital splitting, thereby raising the valence band maximum (VBM) or lowering the conduction band minimum (CBM) and leading to more significant bandgap narrowing. Based on this model, when designing perovskite materials, we can optimize the bandgap by tuning the lattice distortion (Gdis) and the tolerance factor (XQ). Additional expression results with a descriptor complexity of 2 are provided in Supplementary Table 8. The work by Wang et al. also derived an expression predicting the bandgap property of photovoltaic perovskites based on SISSO31. In contrast to the derived equation of SA-GAT-SR, they use the DFT calculation results based on Perdew-Burke-Ernzerhof (PBE) functional as a feature similar to the Ep in equation. (3), which can be expressed as

where EPBE denotes the PBE bandgap energy. And lX and lB represent the energy levels of lowest-unoccupied molecular orbitals (LUMO) of the atoms in B site and X site, respectively. Given that the PBE functional typically underestimates bandgaps by around 50%, the coefficient of EPBE in equation (3) is accordingly much greater than 1. This equation leverages a less accurate DFT result to learn a correction term that more closely approximates the true value. In comparison, our formulation uses a GNN-predicted value—offering higher accuracy—as the initial approximation and entirely removes the dependency on per-material first-principles calculations, making it highly suitable for fast, scalable property predictions over large materials databases.

Many prior DL based approaches have attempted to develop fully universal models capable of accurately predicting any property using ultra-large-scale datasets. GNN models are often highly sensitive to the training dataset, making it challenging to improve robustness without careful tuning and data selection. In many cases, the primary objective is to identify key descriptors of the target property and leverage them to guide the discovery of new materials. Compared to neural network-based descriptors derived from crystal structures, mathematical descriptors offer greater interpretability and more actionable insights. The derived equation of bandgap property in Table 3 presents a combined descriptor, −0.13Gdis + 0.20XQ, which effectively captures the relationship between structural factors and the target property. The SISSO algorithm generates multiple expression models with comparable predictive performance from a large descriptor space. In our experiments, we set the number of retained expression models to 50. Although the derived equation in Table 3 achieves an MAE of 0.159 eV, it is not the most accurate among the generated models. The MAE of the output models ranges from 0.1563 eV to 0.1723 eV, with the top-ranked models exhibiting similar predictive performance. In certain cases, it is not always necessary to select the expression with the best performance as the final result. A more concise and interpretable expression may be preferred, such as a descriptor with a complexity of 1. When screening materials across large-scale datasets to meet specific requirements, the expression with the best predictive performance is typically applied, as achieving the highest accuracy is paramount in such scenarios. However, in the context of new material development, simplicity and interpretability of the descriptors become more important32. A simpler expression allows for a clearer and more intuitive determination of the optimal composition or structural features of the new material, facilitating the material design process.

Discussion

In this study, we integrated GNN-based deep learning methods with symbolic regression and developed the SA-GAT-SR model, which achieves both high accuracy and interpretability. Compared to previously reported approaches, we propose a novel feature encoding algorithm that enhances automating large-scale feature screening, which reduces the number of SR input features from 31 to 14. This advancement significantly reduces the computation time of SR module achieving at least 23-fold acceleration compared to conventional SR method. Our approach simplifies and optimizes the feature requirements for input data in traditional SR-based methods, making the model more versatile and accessible when constructing and working with new datasets. We find that in predicating various material properties, the SA-GAT-SR model outperforms both the state-of-the-art GNN models and standalone SR models. In most property predictions, our model achieves an R2 of 0.93 or higher across tested systems indicating strong predictive performance and reliability. In addition to the high accuracy of the SA-GAT-SR model, which is well suited for large-scale material screening, our approach also offers strong interpretability by generationg physically meaningful descriptors, marking a significant advancement in both performance and insight. With our method of combining with GNN and SR, the model’s learning objective can be naturally shifted from directly predicting material properties to learning combinations of meaningful descriptors along with their associated coefficients. By embedding interpretability directly into neural architectures, these models enable a more transparent decision-making process and facilitate knowledge extraction from complex datasets. This may potentially foster data fusion bridging experimental and ab initio results that may have different fidelity and origin. Looking forward, the development of self-explanatory neural models will further enable automated and interpretable insights that will drive more efficient material design and discovery.

Methods

Dataset

In this study, we employ the dataset of ABO3 and ABX3 (X=F, Cl, Br, I) type perovskite materials from the JARVIS-DFT (Joint Automated Repository for Various Integrated Simulations)41 database for training and evaluating our model. The JARVIS-DFT database is a widely recognized resource that provides high-quality, density functional theory (DFT) calculated properties for a diverse range of materials. For our analysis, we selected materials that conform to the perovskite crystal structure as the dataset. Due to the presence of lattice distortions, the crystal symmetry of these materials includes not only cubic but also tetragonal, hexagonal, monoclinic, and orthorhombic phases. In total, we curated a subset of 689 perovskite materials for further study. It is worth noting that some materials in the dataset may be missing certain properties due to incomplete data. Given that the JARVIS-DFT database is regularly updated, we chose the specific version of the dataset from 2021.8.18, as it is the most commonly used version in recent studies and ensures consistency with previous research.

Self-adaptable Graph attention networks (SA-GAT)

Our SA-GAT-SR model is a variant architecture based on GAT50 for the specificity of material data, especially the crystal materials. In the following, we define the crystal graph as an undirected graph with N nodes, denoted by \({\mathcal{G}}=(V,E,g)\), where V represents the node set, E represents the edge set, and g denotes the global node vector containing initial global features, which provides the unique representation of the graph. The node with index i is represented as vi, and the feature vector of vi at layer l is denoted by \({h}_{i}^{l}\). The corresponding edge feature in E, which connects nodes vi and vj, is represented by \({e}_{ij}^{l}\). We consider the 12 nodes within a distance of 12 Å from vi as the neighboring nodes of vi and utilize a radial basis function (RBF) to compute the edge feature vectors. Additionally, we define a ranking index matrix \({{\mathcal{R}}}_{g}\in {{\mathbb{Z}}}^{N\times 12}\), which stores the distance ranking of each neighboring node relative to vi. Each row vector of \({{\mathcal{R}}}_{g}\) represents the ranking index of the neighboring nodes of vi.

To start, we convert the material structure into the graph representation using the self-adaptable encoding (SAE) method. Meanwhile, SAE produces the ICs of each feature for the following screening step. Then, the node features of graph are fed into the following message passing layers and iteratively updated. The normal nodes and global node representing atoms and crystal respectively are updated by different algorithms. After passing through all updating layers, we obtain the final global node feature gL, where L represents the total number of layers in the GNN module. The global node has learned the characteristics of the entire material and becomes a unique representation of this material. Finally, the readout function extracts deep information from gL by a feed-forward network and gives out the prediction result, which is represented as:

The specific form of the output \(\hat{y}\) depends on the nature of the prediction task, such as classification or regression. The FFN( ⋅ ) function includes activation functions and regularization operations, tailored to optimize the model performance according to the task requirements.

Atomic nodes and global node updating method

In the GNN module, we incorporate the distance factors of different node pairs by leveraging the ranking matrix. We adopt the encoder architecture of the Transformer51,52,53,54 to update graph node features and employ a novel set2set55 based algorithm to update the global node feature. As shown in Fig. 1b, the orange global node acts as q* vector in the set2set model to represent the state of the node set. The network is composed of multiple serially stacked blocks represented by green rectangle, each comprising a message passing layer and a set2set layer, which update the atomic and global nodes, respectively.

At the start of each layer, the ranking matrix \({{\mathcal{R}}}_{g}\) is embedded into a distance coefficient matrix \({{\mathcal{C}}}_{g}\in {{\mathbb{R}}}^{N\times 12}\), represented by \({{\mathcal{C}}}_{g}={{\rm{Emb}}}_{r}({{\mathcal{R}}}_{g})\). The message passing update algorithm is formulated as follows:

where \({c}_{ij}\in {{\mathcal{C}}}_{g}\) is a scaling factor representing the distance coefficient between vi and vj, and ⊕ denotes the vector concatenation operation. Ni denotes the neighbors of vi in graph \({\mathcal{G}}\), while Wqry, Wkey, Wval, Wedge are learnable embedding matrices. Equations (5) and (6) represent the updates of the node feature hi and edge feature eij connecting vi and vj in layer l + 1.

The core of the GNN module comprises multiple stacked message-passing layers that iteratively update the node and edge features. The readout operation is performed using the global node feature, which is updated at each layer. Inspired by previous work56,57, the global node, sometimes referred to as [VNode] in other models, is an artificially introduced node. Unlike other approaches, our model’s global node, which represents the entire graph is initialized from actual crystal properties via the SAE module. Thus, it acts as a representation of the graph for the readout operation but does not participate in node updates within the graph.

To enhance information retention across layers, we employ an inter-layer set2set method with a GRU58 updating unit. This configuration allows the readout operation to be conducted on the final output global node from the last layer, computed as follows:

where,

In the above equations, \({W}_{qry}^{q},{W}_{key}^{q},{W}_{val}^{q}\) are learnable matrix parameters within the readout module, and \({N}_{i}^{1}\) denotes the 1-hop neighbors of vi in graph \({\mathcal{G}}\). The query feature vector ql+1 in the set2set module is derived from the global node using a GRU function that encapsulates key graph information. The \({e}_{i}^{l+1}\) is computed based on \({x}_{i}^{l+1}\), obtained from node features while accounting for the local environment around each node. The function φ( ⋅ ) represents a feed forward network (FFN) that converts the combined result into the output global node feature.

Data availability

The data utilized in all experiments presented in this study are publicly accessible on GitHub at https://github.com/MustBeOne/SA-GAT-SR/rawdata. We have provided comprehensive datasets, including crystal structure data and their associated distortion information, to enable researchers to replicate the experiments and achieve consistent results.

Code availability

The code and well-trained GNN model, and all configurations used in this work are available on GitHub at https://github.com/MustBeOne/SA-GAT-SR.

References

Zou, Y., Qian, J., Wang, X., Li, S. & Li, Y. Machine learning-assisted prediction and interpretation of electrochemical corrosion behavior in high-entropy alloys. Comput. Mater. Sci. 244, 113259 (2024).

Xu, G., Xue, Y., Geng, X., Hou, X. & Xu, J. A machine learning-based crystal graph network and its application in development of functional materials. Mater. Genome Eng. Adv. 2, e38 (2024).

Okabe, R. et al. Virtual node graph neural network for full phonon prediction. Nat. Comput. Sci. 4, 522–531 (2024).

Chen, C., Ye, W., Zuo, Y., Zheng, C. & Ong, S. P. Graph networks as a universal machine learning framework for molecules and crystals. Chem. Mater. 31, 3564–3572 (2019).

Hwang, D. et al. Comprehensive study on molecular supervised learning with graph neural networks. J. Chem. Inf. Model. 60, 5936–5945 (2020).

Wang, T. et al. Crysformer: An attention-based graph neural network for properties prediction of crystals. Chin. Phys. B 32, 090703 (2023).

Gilmer, J., Schoenholz, S. S., Riley, P. F., Vinyals, O. & Dahl, G. E. Neural message passing for quantum chemistry. In Proc. 34th International Conference on Machine Learning (eds Precup, D. & Teh, Y. W.) vol. 70, 1263–1272 (2017).

Xie, T. & Grossman, J. C. Crystal graph convolutional neural networks for an accurate and interpretable prediction of material properties. Phys. Rev. Lett. 120, 145301 (2018).

Choudhary, K. & DeCost, B. Atomistic line graph neural network for improved materials property predictions. npj Comput. Mater. 7, 185 (2021).

Chen, D., O’Bray, L. & Borgwardt, K. Structure-aware transformer for graph representation learning. In Proc. 39th International Conference on Machine Learning (eds Chaudhuri, K. et al.) vol. 162, 3469–3489 (2022).

Witman, M. D., Goyal, A., Ogitsu, T., McDaniel, A. H. & Lany, S. Defect graph neural networks for materials discovery in high-temperature clean-energy applications. Nat. Comput. Sci. 3, 675–686 (2023).

Jin, L. et al. Transformer-generated atomic embeddings to enhance prediction accuracy of crystal properties with machine learning. Nat. Commun. 16, 1210 (2025).

Lim, J. et al. Predicting drug-target interaction using a novel graph neural network with 3d structure-embedded graph representation. J. Chem. Inf. Model. 59, 3981–3988 (2019).

Dwivedi, V. P., Luu, A. T., Laurent, T., Bengio, Y. & Bresson, X. Graph neural networks with learnable structural and positional representations. International Conference on Learning Representations (2022).

Ma, L., Rabbany, R. & Romero-Soriano, A. Graph attention networks with positional embeddings. In Advances in Knowledge Discovery and Data Mining (eds Karlapalem, K. et al.) 514–527 (Springer International Publishing, 2021).

Chen, Y. et al. Sp-gnn: Learning structure and position information from graphs. Neural Networks 161, 505–514 (2023).

Karamad, M. et al. Orbital graph convolutional neural network for material property prediction. Phys. Rev. Mater. 4, 093801 (2020).

Reiser, P. et al. Graph neural networks for materials science and chemistry. Commun. Mater. 3, 93 (2022).

Doshi, S. & Chepuri, S. P. Graph neural networks with parallel neighborhood aggregations for graph classification. IEEE Trans. Signal Process. 70, 4883–4896 (2022).

Schweidtmann, A. M. et al. Physical pooling functions in graph neural networks for molecular property prediction. Comput. Chem. Eng. 172, 108202 (2023).

Du, H., Wang, J., Hui, J., Zhang, L. & Wang, H. Densegnn: universal and scalable deeper graph neural networks for high-performance property prediction in crystals and molecules. npj Comput. Mater. 10, 292 (2024).

Shi, X., Zhou, L., Huang, Y., Wu, Y. & Hong, Z. A review on the applications of graph neural networks in materials science at the atomic scale. Mater. Genome Eng. Adv. 2, e50 (2024).

Gupta, V. et al. Structure-aware graph neural network-based deep transfer learning framework for enhanced predictive analytics on diverse materials datasets. npj Comput. Mater. 10, 1 (2024).

Li, H., Wang, X., Zhang, Z. & Zhu, W. Ood-gnn: Out-of-distribution generalized graph neural network. IEEE Trans. Knowl. Data Eng. 35, 7328–7340 (2023).

Gao, H., Guo, X.-W., Li, G., Li, C. & Yang, C. Gcpnet: An interpretable generic crystal pattern graph neural network for predicting material properties. Neural Netw. 188, 107466 (2025).

Shang, Y. et al. Materials genome engineering accelerates the research and development of organic and perovskite photovoltaics. Mater. Genome Eng. Adv. 2, e28 (2024).

Li, Q., Fu, N., Omee, S. S. & Hu, J. Md-hit: Machine learning for material property prediction with dataset redundancy control. npj Comput. Mater. 10, 245 (2024).

Omee, S. S., Fu, N., Dong, R., Hu, M. & Hu, J. Structure-based out-of-distribution (ood) materials property prediction: a benchmark study. npj Comput. Mater. 10, 144 (2024).

Fu, N., Omee, S. S. & Hu, J. Physical encoding improves ood performance in deep learning materials property prediction. Comput. Mater. Sci. 248, 113603 (2025).

Li, K. et al. Exploiting redundancy in large materials datasets for efficient machine learning with less data. Nat. Commun. 14, 7283 (2023).

Wang, H., Ouyang, R., Chen, W. & Pasquarello, A. High-quality data enabling universality of band gap descriptor and discovery of photovoltaic perovskites. J. Am. Chem. Soc. 146, 17636–17645 (2024).

Weng, B. et al. Simple descriptor derived from symbolic regression accelerating the discovery of new perovskite catalysts. Nat. Commun. 11, 3513 (2020).

Wang, G., Wang, E., Li, Z., Zhou, J. & Sun, Z. Exploring the mathematic equations behind the materials science data using interpretable symbolic regression. Interdiscip. Mater. 3, 637–657 (2024).

Liu, S., Li, Q., Shen, X., Sun, J. & Yang, Z. Automated discovery of symbolic laws governing skill acquisition from naturally occurring data. Nat. Comput. Sci. 4, 334–345 (2024).

Chen, C. & Ong, S. P. A universal graph deep learning interatomic potential for the periodic table. Nat. Comput. Sci. 2, 718–728 (2022).

Lu, Y. et al. A simple and efficient graph transformer architecture for molecular properties prediction. Chem. Eng. Sci. 280, 119057 (2023).

Ihalage, A. & Hao, Y. Formula graph self-attention network for representation-domain independent materials discovery. Adv. Sci. 9, 2200164 (2022).

Morgan, J. P., Paiement, A. & Klinke, C. Domain-informed graph neural networks: a quantum chemistry case study. Neural Networks 165, 938–952 (2023).

Ouyang, R., Curtarolo, S., Ahmetcik, E., Scheffler, M. & Ghiringhelli, L. M. Sisso: A compressed-sensing method for identifying the best low-dimensional descriptor in an immensity of offered candidates. Phys. Rev. Mater. 2, 083802 (2018).

Ouyang, R., Ahmetcik, E., Carbogno, C., Scheffler, M. & Ghiringhelli, L. M. Simultaneous learning of several materials properties from incomplete databases with multi-task sisso. J. Phys.: Mater. 2, 024002 (2019).

Choudhary, K. et al. The joint automated repository for various integrated simulations (Jarvis) for data-driven materials design. npj Comput. Mater. 6, 173 (2020).

Klimeš, J., Bowler, D. R. & Michaelides, A. Chemical accuracy for the van der Waals density functional. J. Phys.:Condens. Matter 22, 022201 (2009).

Li, X. et al. Computational screening of new perovskite materials using transfer learning and deep learning. Appl. Sci. 9, 5510 (2019).

Tao, Q., Xu, P., Li, M. & Lu, W. Machine learning for perovskite materials design and discovery. npj Comput. Mater. 7, 23 (2021).

Prasanna, R. et al. Band gap tuning via lattice contraction and octahedral tilting in perovskite materials for photovoltaics. J. Am. Chem. Soc. 139, 11117–11124 (2017).

Banik, S. et al. Cegann: Crystal edge graph attention neural network for multiscale classification of materials environment. npj Comput. Mater 9, 23 (2023).

Yan, L. et al. Extending the mxenes to mo enes with emergent quantum phenomena. ACS Nano 19, 30060–30071 (2025).

Yan, L. et al. Thickness-dependent superconductivity and quantum spin hall effects in ti n+ 1 o n (n = 2, 3) moenes. Phys. Rev. B 110, 045421 (2024).

Yan, L. et al. Two dimensional moene: From superconductors to direct semiconductors and weyl fermions. Nano Lett. 22, 5592–5599 (2022).

Schmidt, J., Pettersson, L., Verdozzi, C., Botti, S. & Marques, M. A. Crystal graph attention networks for the prediction of stable materials. Sci. Adv. 7, eabi7948 (2021).

Vaswani, A. et al. Attention is all you need. In Advances in Neural Information Processing Systems (eds Guyon, I. et al.) vol. 30 (Curran, 2017).

Kuang, W., Wang, Z., Wei, Z., Li, Y. & Ding, B. When transformer meets large graphs: An expressive and efficient two-view architecture. IEEE Trans. Knowledge Data Eng. 36, 5440–5452 (2024).

Sun, Y., Zhu, D., Wang, Y., Fu, Y. & Tian, Z. Gtc: Gnn-transformer co-contrastive learning for self-supervised heterogeneous graph representation. Neural Netw. 181, 106645 (2025).

Wang, C. et al. Automatic graph topology-aware transformer. IEEE Trans. Neural Networks Learn. Syst. 36, 8470–8484 (2024).

Vinyals, O., Bengio, S. & Kudlur, M. Order matters: Sequence to sequence for sets. International Conference on Learning Representations (2016).

Ying, C. et al. Do transformers really perform badly for graph representation? In Advances in Neural Information Processing Systems (eds Ranzato, M., Beygelzimer, A., Dauphin, Y., Liang, P. & Vaughan, J. W.) vol. 34, 28877–28888 (Curran, 2021).

Yang, L., Chen, J., Wang, Z. & Shang, F. Subgraph-aware virtual node matching graph attention network for entity alignment. Expert Syst. Appl. 231, 120694 (2023).

Bahdanau, D., Cho, K. & Bengio, Y. Neural machine translation by jointly learning to align and translate. In International Conference on Learning Representations (eds Bengio, Y. & LeCun, Y.) (2015).

Acknowledgements

This work is supported by National Natural Science Foundation of China (No.12374057), and Fundamental Research Funds for the Central Universities. The work (S.T.) at Los Alamos National Laboratory (LANL) was performed at the Center for Integrated Nanotechnologies (CINT), a U.S. Department of Energy, Office of Science user facility at LANL.

Author information

Authors and Affiliations

Contributions

J.L. performed the data analysis and wrote the manuscript; Y.T., S.T., and W.D. performed the validation; S.T., W.D., and L.Z. revised the manuscript. L.Z. conceived and supervised the study. All authors reviewed the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, J., Tang, Y., Tretiak, S. et al. SA-GAT-SR: self-adaptable graph attention networks with symbolic regression for high-fidelity material property prediction. npj Comput Mater 11, 377 (2025). https://doi.org/10.1038/s41524-025-01854-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41524-025-01854-5