Abstract

Effectively compressing transmitted images and reducing the distortion of reconstructed images are challenges in image semantic communication. This paper proposes a novel image semantic communication model that integrates a dynamic decision generation network and a generative adversarial network to address these challenges as efficiently as possible. At the transmitter, features are extracted and selected based on the channel’s signal-to-noise ratio (SNR) using semantic encoding and a dynamic decision generation network. This semantic approach can effectively compress transmitted images, thereby reducing communication traffic. At the receiver, the generator/decoder collaborates with the discriminator network, enhancing image reconstruction quality through adversarial and perceptual losses. The experimental results on the CIFAR-10 dataset demonstrate that our scheme achieves a peak SNR of 26 dB, a structural similarity of 0.9, and a compression ratio (CR) of 81.5% in an AWGN channel with an SNR of 3 dB. Similarly, in the Rayleigh fading channel, the peak SNR is 23 dB, structural similarity is 0.8, and the CR is 80.5%. The learned perceptual image patch similarity in both channels is below 0.008. These experiments thoroughly demonstrate that the proposed semantic communication is a superior deep learning-based joint source-channel coding method, offering a high CR and low distortion of reconstructed images.

Similar content being viewed by others

Introduction

In the realm of traditional communication, Shannon’s information theory1 predominantly focused on minimizing the Bit Error Rate while overlooking the semantic content of the data transmitted. This approach often resulted in significant information redundancy, leading to inefficient use of communication resources, which poses a significant challenge to the future development of communication. Currently, human–machine collaborative communication has become a trend, emphasizing the conveyance of the underlying meaning of data rather than the error-free transmission of symbols. This communication method is called semantic communication. Compared to Shannon’s information theory, Carnap et al.2 proposed a new semantic information theory that measures the normalized information content of sentence content using logical probability functions and developed a method to distinguish between absolute and relative information measurement. Similarly, Bao et al.3 extended the theory of semantic information by employing model theory to quantify the amount of semantic information. Additionally, Ref.4 expended the source encoding principle of classical information theory to achieve a higher compression ratio (CR). These works have provided valuable direction and guidance for the development of semantic communication, but many challenges remain to be addressed.

The advent of deep neural networks has ushered in a new era for encoding source signals5 and has made remarkable achievements in computer vision and communication systems. Specifically, deep convolutional neural networks have emerged as powerful tools for feature extraction6,7 demonstrating their capacity to extract and transmit semantic content from source signals, enabling their wider use in semantic communication systems. References8,9,10,11 proposed a deep learning-based text and speech semantic communication system, which enhanced the performance and semantic similarity of the system through attention mechanisms, optimized wireless resource allocation, and partial semantic information transmission, achieving efficient and robust semantic communication. Although existing technologies such as speech recognition and natural language processing adequately met the transmission needs for speech and text, image data contains richer visual information, and the compression methods previously mentioned can result in significant information redundancy. Therefore, we build on these research findings to design a solution that ensures the effective transmission of core semantic information in images, even with limited bandwidth and computing resources, achieving more efficient and intelligent image semantic communication. Undoubtedly, significant challenges remain in this endeavor.

Today, most communication systems use separate source coding (JPEG, BPG, etc.) and channel coding (LDPC, Polar, etc.) for wireless image transmission. Shannon’s separation theorem demonstrates that, under the infinite asymptotic limit of long source and channel blocks, these two-step source-channel coding approaches are theoretically optimal. However, in scenarios characterized by time-varying channels, bandwidth limitations, or energy constraints, the separation theorem becomes less applicable. Reference6 proposed a joint-source channel coding (JSCC) technique for wireless image transmission. This method directly mapped image pixel values to complex-valued channel input symbols using deep learning techniques and was jointly trained to minimize the mean square error (MSE) of image reconstruction. The method outperformed traditional digital transmission combined with JPEG or JPEG2000 source compression at low signal-to-noise ratio (SNR) and channel bandwidth values, and it also avoided the “cliff effect” typically encountered at low SNR. Consequently, learning-based JSCC can take advantage of the correlation among multi-user sources to surpass the performance of traditional separate source-channel coding.

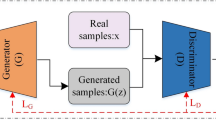

Generating reconstructed images that are visually closer to real images and improving users’ subjective perception quality is extremely important in the JSCC optimization process. In Refs.6,7,12,13,14, JSCC used MSE or structural similarity (SSIM) as distortion metrics. However, these metrics often ignore the semantic similarity between the source signal and the reconstructed signal, failing to accurately reflect human perception judgments. Therefore, it is necessary to introduce generative adversarial networks (GANs) and perceptual loss into the JSCC optimization process to improve image quality and reduce artifacts and distortions. Presently, researchers in image semantic communication primarily focus on improving the fidelity of images reconstituted at the receiver. However, most JSCC techniques are developed and optimized for a singular SNR, and in practical applications, channel conditions may change at any time. Mismatched channel conditions between network training optimization and model deployment can lead to a sharp deterioration in performance. To ensure the system’s robustness to channel changes, it is necessary to train the model at multiple different channel SNRs. This inevitably results in a significant increase in data traffic and requires higher computational and storage resources.

In this study, we aim to integrate a dynamic decision generation network (DDGN) and a GAN to train the JSCC system end-to-end, effectively compressing transmitted data while reducing the distortion of the reconstructed image. The main contributions are as follows:

-

1.

At the transmitter, the added DDGN can flexibly determine the transmission feature groups and the number of active channels based on the feedback SNR in the wireless channel and the features of the source image, thereby improving the CR of the transmitted data. Additionally, we use the Gumbel Softmax to enhance the decision network’s differentiability, facilitating further end-to-end training.

-

2.

At the receiver, the generator network collaborates with the discriminator network for training. This aims to overcome the challenges faced by convolution-based image semantic codecs in extracting global semantic information and mitigate the loss of image details during model training. It helps preserve high-frequency details of the image and improves the quality of image reconstruction.

-

3.

We jointly optimize the network architecture using adversarial loss and perceptual loss, aiming to improve the semantic similarity between the source and the reconstructed images, and reduce image distortion.

Related work

Research indicated in Refs.15,16,17 has shown that semantic communication technology plays a pivotal role in enhancing the efficiency of image transmission in wireless communication systems. In contrast to conventional image communication systems, JSCC based on source signals and channel perception capabilities, demonstrates superior competitive performance. Kurka et al.6 proposed a JSCC framework for wireless image transmission, and introduced an automated encoder with an untrained layer, capable of directly mapping image pixel values to a continuous channel input function. This scheme has achieved significant performance improvements under conditions of low SNR and limited channel bandwidth, unaffected by the ‛cliff effect’ commonly seen in digital communication schemes. Building on this foundation, Kurka proposed another image reconstruction scheme, DeepJSCC-f7, which incorporates channel feedback to enhance the accuracy of the reconstructed image. In Ref.18, three JSCC schemes based on hierarchical deep learning were presented to achieve continuous thinning of image representation. Furthermore, an adaptive JSCC scheme employing a policy network to optimize the equilibrium between data rate and signal fidelity was introduced in Ref.19. To more effectively extract semantic features, Dai et al.20 introduced an advanced class of deep JSCC methods, capable of adapting to the source distribution through nonlinear transformation, termed nonlinear transformation source-channel coding. Additionally, Hu et al.21 introduced an end-to-end semantic system architecture designed to counter semantic noise, enhancing the robustness of semantic communication systems to semantic disturbances and reducing the overhead of transmission. Sun et al.22 also proposed a novel JSCC architecture based on pixel semantics for image transmission.

Although JSCC is deemed effective in surpassing the performance of traditional source-channel coding methods, most JSCC methods have limitations in maintaining semantic similarity between the original signal and the reconstructed signal. This is because prevalent JSCC systems predominantly employed distortion metrics like peak signal-to-noise ratio (PSNR) and SSIM for optimization. These distortion metrics were used as loss terms, favoring pixel-level transmission while neglecting human perception of semantic information, often leading to a significant perceptual quality loss in certain scenarios. Regarding image quality evaluation, Zhang et al.23 designed the learned perceptual image patch similarity (LPIPS) metric, which calculates similarity scores between images using features extracted by neural networks. The results indicate that LPIPS is more effective in human perceptual judgment compared to PSNR and SSIM. In practice, narrowing the distribution differences between the original and the reconstructed images is usually achieved through minimizing perceptual loss, frequently involving GANs. Adversarial loss has been widely applied in image compression to enhance the perceptual quality of the reconstructed images24,25. Huang et al.26 introduced a method for image semantic encoding utilizing GANs, integrating local features and global features via an attention mechanism. Furthermore, Wang et al.27 enhanced the Deep JSCC model with adversarial loss to optimize its capability in capturing global semantic information and local textures, thus elevating both the transmission efficiency and perceptual quality.

In scenarios with limited communication resources, the flexibility of transmission features to adapt according to dynamic SNR and image content is crucial for efficiently conserving communication resources. While most JSCC models have been trained with fixed allocations of communication resources, Kurka et al.28 proposed training a single model with different communication resource allocations. Although this method could adaptively transmit images with dynamic data bandwidth for wireless channels, it struggled to meet the time-varying needs of wireless channels because it relied on a single SNR for training. Therefore, achieving adaptability to dynamic channels and diverse image features has become a crucial challenge in enhancing the performance of learning models and the efficiency of wireless channel utilization.

Drawing inspiration from the aforementioned research, particularly19, we have designed a DDGN that incorporates the generative adversarial concept into its architecture. Concurrently, we employ perceptual loss to assess feature similarity. Together, these strategies contribute to improving the performance of image semantic communication, making it applicable to various scenarios where efficient and high-fidelity image transmission is crucial, such as remote sensing, surveillance, and medical imaging, which require precise and rapid image analysis. Our proposed solution ensures minimal loss of critical information and supports enhanced decision-making and analysis across disciplines.

Deep learning-based JSCC method

System architecture

In this paper, we have meticulously designed a system architecture, illustrated in Fig. 1, which includes a source encoder, a channel encoder, a channel decoder, a source decoder, a discriminator, and a DDGN (P). The source encoder processes the input image \(\:\mathbf{x}\) to extract features \(\:\mathbf{S}\), subsequently encoding these features into \(\:\mathbf{s}\). These image features, \(\:\mathbf{s}\), are then relayed to the channel encoder, which encodes \(\:\mathbf{s}\) and adjusts the view to generate latent features. Subsequently, we introduce the DDGN (P), which generates a decision mask with a probability distribution. This mask is essentially a binary tensor. Next, we preprocess the mask and fill the front of the generated mask with all 1 s. In this way, the complete decision mask contains a part that is always activated and a part that is selectively activated through the decision mask. After channel coding, the mask is multiplied by the latent features to obtain the non-selectively activated feature group \(\:{\mathbf{G}}_{\mathbf{n}}\) and the selectively activated feature group \(\:{\mathbf{G}}_{\mathbf{s}}\). Following selection, all activated feature groups are normalized to produce \(\:{\mathbf{s}}^{{\prime\:}}\), which then traverses a noisy wireless channel, arriving at the decoder end as \(\:\widehat{\varvec{s}}\). The core of our architecture is the deep learning-based joint source-channel decoding process, where the channel decoder and source decoder collaboratively reconstruct the image, denoted as \(\:\widehat{\varvec{x}}\). This process underscores the essence of semantic communication receivers. The discriminator is pivotal in assessing the similarity between the reconstructed image \(\:\widehat{\varvec{x}}\) and the original image \(\:\mathbf{x}\), providing feedback to adjust the model parameters. Furthermore, it calculates a loss function derived from the discrimination results to update the generator. This study highlights the interactive training between the generator and the discriminator, where the adequately trained generator produces images that closely resemble the source images, ensuring \(\:\widehat{\varvec{x}}\) and \(\:\mathbf{x}\) have the same distribution. This approach aims to maximize the discrimination distortion, while the discriminator is continuously fine-tuned to minimize this distortion.

The architecture of an image semantic communication system based on generative joint source-channel coding.

To reduce the scale and computation of the model, our approach designs a strategy of selecting a few key channels within high SNR communication channels for effective feature extraction. In this research, the convolutional layer is configured with 16 output channels. The DDGN produces a binary tensor, P, where “1” indicates an activated channel, and “0” indicates a deactivated channel. By counting the “1” within P, we ascertain the count of activated channels present within the generated features for each sample.

In Fig. 2, at the encoder, the input image is normalized, then processed by three linked consecutive layers with BatchNorm and ReLU activation functions. Subsequently, two residual blocks and the modulation layer are added to reconstruct the output. To ensure that the output of the transmitter meets the average transmission power constraint, a power normalization layer is used before the input channel. The decoder reverses the operations performed by the encoder, and applying the Tanh activation function to the output of the transposed convolutional layer in the final layer can achieve a faster convergence speed than the sigmoid activation function.

The structure diagram of the encoder and decoder, where the parentheses in the convolutional layers indicate the size and number of convolution kernels.

Dynamic decision generation network

The DDGN is adept at adaptive processing or weighing input data in a specific environment, driven by the analysis of image content and the SNR. The architecture of DDGN is shown in Fig. 3. The process commences with the application of average pooling to the image feature Z, which is subsequently cascaded with the SNR. Then, a vector representing the probability distribution for different categories is generated through three fully connected layers. Following this, the Gumbel Softmax function is employed to refine and sample the output vector, thereby yielding a softened probability distribution. This distribution is then converted into a thermometer-coded mask via the One-Hot to Thermal function.

The dynamic decision generation network.

Given the inherent discreteness of sampling and the prerequisite for gradients to be computed in a continuous space, the conventional backpropagation algorithm cannot directly optimize the DDGN. To preserve gradient propagation during the sampling process, the method incorporates the Gumbel distribution.

Set the class probabilities as \(\:{p}_{1}\), \(\:{p}_{2}\),…, \(\:{p}_{k}\), where \(\:k\in\:\mathbf{Z}\), respectively. Assuming z is a categorical variable, based on Gumbel Max and Gumbel noise, z is:

where \(\:{g}_{i}=-\text{log}(-\text{log}\left({U}_{i}\right))\) is a random variable of standard Gumbel distribution with \(\:{U}_{i}\sim \,U\left(\text{0,1}\right)\).

As the argmax is non-differentiable, this study employs a softmax function with temperature control to approximate it.

where the annealing parameter τ > 0. The smaller τ, Eq. (2) is closer to one-hot form, however, the larger τ, Eq. (2) is closer to a uniform distribution.

For the backpropagation algorithm in the network, Eq. (1) to obtain discrete samples, and Eq. (2), i.e. the softmax function, approximates the gradient for backpropagation. In the DDGN, the final output Decision Mask: \(\:\mathbf{M}={\sum\:}_{i=k}^{\varOmega\:}{P}_{i}\), where Ω is the sample space sampled by Gumbel Max.

Discriminator

The discriminator architecture is shown in Fig. 4. This architecture integrates four convolutional layers, which precede a singular output layer. The convolutional layers gradually decrease the feature map’s dimensions and the count of channels, ultimately generating a feature map with dimensions of 1 × 1. Using a convolutional layer, the output layer transforms this feature map into scalar values. These scalar values are then normalized to the interval [0, 1] by applying a sigmoid function, quantitatively indicating the perceived authenticity of the input image.

As elucidated by Ref.29, InstanceNorm normalizes each instance of the feature map independently, which may attenuate the correlation among feature maps, thereby decelerating the convergence rate of the model. To address this issue, InstanceNorm has been replaced with SpectralNorm30 within the discriminator’s architecture. SpectralNorm is designed to regulate the norm and complexity of the model’s weights, which serves to mitigate the risks of overfitting, as well as the explosion or disappearance of gradients.

The architecture of the discriminator, LReLU denotes the leaky ReLU with \(\:\:\alpha\:=0.2\)31.

Loss function

The primary objective of the model is to engineer a pair of codecs capable of ‘deceiving’ the discriminator into classifying reconstructed images as real. Concurrently, we consider the effects of the noisy channel, making a concerted effort to minimize distortion throughout the training process. The employed loss function is articulated as follows:

where \(\:{d}_{\text{M}\text{S}\text{E}}\) represents the regularized MSE distortion function, \(\:{d}_{\text{L}\text{P}\text{I}\text{P}\text{S}}\) represents the perceptual loss function which is the distance in the feature space, and \(\:{\lambda\:}_{d}\), \(\:{\lambda\:}_{m}\) and \(\:{\lambda\:}_{p}\) are the proportion factors for the three losses, respectively. In general, \(\:{d}_{\text{M}\text{S}\text{E}}\) facilitates faster model convergence, \(\:{d}_{\text{L}\text{P}\text{I}\text{P}\text{S}}\:\)is used for assessing image similarity, and \(\:{\lambda\:}_{d}\), \(\:{\lambda\:}_{m}\) and \(\:{\lambda\:}_{p}\) enable the trade-off among the three losses. The initial term is the discriminator loss, while the latter two terms are the distortion losses within the generator.

Learning algorithm

This study implements an image semantic communication based on a JSCC model integrating the DDGN and the discriminator. Crucial steps within the model’s training are outlined in Algorithm 1. The details are described as follows:

-

Line 1 The architecture of the JSCC model integrating the DDGN and the discriminator is designed, including different components such as decision network, codec, discriminator, normalization and channel noise.

-

Line 2 The model parameters are initialized. The parameter set θ includes weights (w), learning rate (α), and temperature parameters (t).

-

Line 3–10 Training the model involves forward and backward propagation methods, employing the stochastic gradient descent optimization algorithm for backward propagation to iteratively update the model parameters32.

-

Line 11–12 Training concludes when the predetermined communication requirements are met, resulting in the generation of a deep learning-based JSCC model.

Training strategy of Optimized joint source-channel coding (JSCC).

Experiment and analysis

Experimental setting

Experimental parameters

In this study, we utilized PyTorch33 to implement our framework and leveraged a Tesla P40 GPU for both the training and evaluation34. The training dataset is compiled from the CIFAR-10 collection, which includes 50,000 images for training and 10,000 for testing, each with dimensions of 32 × 32 pixels. Model training was guided by the Adam optimizer, with \(\:\beta\:1\) set to 0.9 and \(\:\beta\:2\) set to 0.999. To prevent zero division errors during the calculation process, \(\:\epsilon\:\) is set to \(\:1\times\:{10}^{-8}\), as detailed in Ref.35. Firstly, in the data preprocessing stage, the CIFAR-10 dataset was subjected to standardization and data augmentation operations, including random horizontal flipping and cropping with a 50% probability, and the use of reflection filling operations to ensure that the pixel values of each image were within the range of [0, 1], and further standardized to [– 1, 1], in order to improve the training effectiveness and generalization ability of the model. Divide the training plan into three sequential stages during the training phase:

-

Step 1 Warm-up stage. The model quickly converges to a relatively reasonable state as soon as possible under the conditions that epochs are 300 and the learning rate is \(\:5\times\:{10}^{-4}\).

-

Step 2 Fine-tuning stage. Subsequently, the learning rate is diminished to refine the model parameters more delicately and to enhance the data generalization. The current condition is that epochs are 300 and the learning rate is \(\:5\times\:{10}^{-5}\).

-

Step 3 Stabilization stage. The model's capability to complete specific tasks is further augmented by fixing certain modules (encoder Es and decision network P) while fine-tuning others with 200 epochs

Additionally, the batch size is established at 128, with the initial temperature parameter \(\:\tau\:\) set to 5, which then progressively declines at an exponential decay rate of − 0.015. During the training process, we continuously optimize hyperparameters using evolutionary hyperparameter methods. Each input sample undergoes random SNR sampling, with the SNR uniformly distributed between 0 and 20 dB. In contrast, during the testing phase, all input samples are assigned the same SNR.

Metrics

To assess the efficacy of the proposed image semantic communication proposed herein, we conducted simulation tests to assess the fidelity of the reconstructed images and the efficiency of data transmission compression. This evaluation entailed comparisons with several benchmarks, including JSCC-No Discriminator (our proposed JSCC without discriminator), JSCC-MSE (deep JSCC optimized for MSE), BPG + LDPC, and BPG + Capacity. The key performance metrics in this analysis include PSNR, SSIM, LPIPS, and CR. These evaluation metrics are detailed as follows:

where \(\:{\mu\:}_{\mathbf{x}}\) and \(\:{\sigma\:}_{\mathbf{x}}^{2}\) are the mean and variance of x, respectively. And \(\:{\sigma\:}_{\mathbf{x}\widehat{\mathbf{x}}}\) is the covariance between x and \(\:\widehat{\mathbf{x}}\). \(\:{C}_{1}\) and \(\:{C}_{2}\) are stability constants to avoid having a denominator of 0.

LPIPS is trained to learn the inverse mapping from the generated images back to the original images, compelling the generator to learn how to reconstruct the original images from the fictitious images. This ensures that the generator preserves the essential features and details of the original images during image generation. A reduced LPIPS score reflects higher similarity between two images, whereas an elevated score denotes increased dissimilarity.

where in this paper, the data amount of the source image is s, and the data amount of the image deep learning JSCC is \(\:\widehat{\mathbf{s}}\). The CR is an important indicator that characterizes the compression degree of transmitting images in image semantic communication.

Results analysis

Two prevalent channel models were examined: the AWGN channel and the slow-fading channel. The AWGN channel’s transfer function is defined as \(\:\widehat{\mathbf{s}}=W\left(\mathbf{s}\right)=\mathbf{s}+\mathbf{n}\), where each component of the noise n adheres to an independent and identically distributed Gaussian distribution, denoted as \(\:\mathbf{n}\sim\mathcal{C}\mathcal{N}(0,{\sigma\:}^{2}{\mathbf{I}}_{k})\). Here, \(\:{\sigma\:}^{2}\) represents the average noise power. For the slow fading channel, we adopt the Rayleigh fading model, characterized by the transfer function \(\:\widehat{\mathbf{s}}=W\left(\mathbf{s}\right)=\mathbf{h}\mathbf{s}\), where \(\:\mathbf{h}\sim\mathcal{C}\mathcal{N}(0,{\mathbf{H}}_{\mathbf{c}})\) is a complex Gaussian random variable and \(\:{\mathbf{H}}_{\mathbf{c}}\) is the covariance matrix. The real part and imaginary part of the gain h are independent Gaussian random variables with a mean of 0 and a variance of 1/2, respectively. The SNR is converted into a linear SNR for calculating the noise standard deviation. Finally, the noise signal is normalized. In the experiment of the Rayleigh fading channel, we used 10 time steps to simulate the changes in h, reflecting the characteristics of the channel time-varying.

Initially, the efficacy of our scheme proposed, “D-JSCC”, was evaluated on the AWGN channel, and compared with JSCC-No Discriminator, JSCC-MSE, BPG + LDPC, and BPG + Capacity, with the channel utilization rate fixed at 0.5. JSCC-No Discriminator is D-JSCC without GAN. For the JSCC-MSE, the method described in Ref.6 was adopted, and we set \(\:{\text{S}\text{N}\text{R}}_{\text{t}\text{r}\text{a}\text{i}\text{n}}={\text{S}\text{N}\text{R}}_{\text{t}\text{e}\text{s}\text{t}}\). For the BPG+LDPC, BPG36 image codec is used for source encoding, and then combined with LDPC37 for channel encoding. We provided the envelope of the best performance configuration of different LDPC coding rates and modulation combinations at each SNR under ideal capacity, labeled as BPG+Capacity. In Fig. 5, we demonstrated the evaluation performance on three metrics: SSIM, PSNR, and LPIPS, as well as the average number of active channels (PCS) in all generated images by D-JSCC. At 3 dB SNR, the SSIM of the D-JSCC has reached 0.925, which is superior among all comparison schemes. It also has the same outstanding performance in PSNR as the BPG + Capacity, which surpasses most previous JSCC schemes. With the continuous increase of SNR, both the SSIM and the PSNR of D-JSCC are still outstanding. The results indicate that our approach can achieve comparable or even better effects in pixel-level distortion. Interestingly, the LPIPS of the D-JSCC is consistently below 0.02 within the SNRs shown in Fig. 5. A smaller LPIPS means higher similarity between the original image and the generated image. This is consistent with our method, where the proposed generative model delivers higher perceptual quality.

Evaluation performance of structural similarity (SSIM), peak signal-to-noise ratio (PSNR), and learned perceptual image patch similarity (LPIPS) performance metrics of D-JSCC on AWGN channel, and (d,e) respectively illustrate the average number of active channels (Channel) in all generated images by D-JSCC at various SNRs, and the Compression Ratio of channel transmission.

Figure 5d illustrates that at higher channel SNRs, our scheme activates a few key channels to extract effective feature information. Meanwhile, Fig. 5e shows the correspondence between CR and SNRs in the D_JSCC. Obviously, at a certain SNR, the number of active channels and the CR are as follows.

In the low SNRs section, DDGN tends to activate more channels, thereby reducing the CR; Similarly, in the high SNRs section, DDGN tends to reduce active channels, thereby increasing the CR. For example: when SNR = 3 dB, then channels = 7.5PCS, CR\(\:\approx\:\)81.5%; When SNR = 20 dB, then channels = 5 PCS, CR\(\:\approx\:\)93.8%.

We also conducted the same measurements on a Rayleigh fading channel. As shown in Fig. 6, D-JSCC has been trained with uniform sampling between 0 and 20 dB SNR, and our designed model exhibits stronger robustness against channel interference, as detailed below.

Evaluation performance of SSIM, PSNR, and LPIPS performance metrics of D-JSCC on Rayleigh fading channel, and (d,e) respectively illustrate the average number of active channels (Channel) in all generated images by D-JSCC at various SNRs, and the Compression Ratio of channel transmission.

At 3 dB SNR, the PSNR and the SSIM of D-JSCC still show better performance than other schemes in this paper. Moreover, the LPIPS of D-JSCC is consistently below 0.02 within the SNRs shown in Fig. 6. When SNR = 3 dB, then channels = 7.6PCS, and CR 80.5%. These reflect the difference between Rayleigh’s interference and AWGN’s interference in communication. At the same SNR, the Rayleigh channel introduces more interference than the AWGN channel, resulting in a higher number of active channels and a lower CR. At high SNRs, the impact of noise types on communication is minimal and can be largely ignored. Take for example: When SNR = 20 dB, then channels=6.8PCS, and CR\(\:\approx\:\)93.8%. This is almost the same as Fig. 5.

The performance comparison between JSCC-No Discriminator and JSCC-MSE in Figs. 5 and 6 demonstrates the impact of ablating the discriminator and perceptual loss. In the configuration without the discriminator (JSCC-No Discriminator), the PSNR and SSIM are worse compared to D-JSCC at low SNR, proving the effectiveness of GAN in reducing image differences. Similarly, the LPIPS is high at low SNR, indicating that perceptual loss plays an important role in enhancing the human perceptual quality of image reconstruction. Therefore, our proposed D-JSCC system can achieve high-quality image semantic transmission under low channel SNR.

Conclusion

In the study, we propose an image semantic communication system that combines a DDGN and a GAN. The transmitter utilizes the DDGN along with an encoding network for feature extraction, encoding, and compression of the images to be transmitted. At the receiver, a discriminator network, in conjunction with a generator network in JSCC, is used to successfully decode and restore the received images. Additionally, we adopt adversarial loss, perceptual loss, and MSE loss to holistically optimize the network architecture. The experimental results indicate that our image semantic communication scheme achieves a CR exceeding 80%. Compared to the encoding schemes and the JSCC method used for comparison in this paper, our scheme offers comparable or even better performance in PSNR and SSIM, especially demonstrating superior performance in LPIPS. Our future work will focus on designing lightweight encoder and decoder architectures to reduce the demand for computing resources. Additionally, we will incorporate a global attention mechanism from Ref.38 into the model to better capture key information in images and enhance compression performance in wireless communication.

Data availability

The data that support the findings of this study are available from the corresponding author upon reasonable request.

References

Shannon, C. E. A mathematical theory of communication. Bell Syst. Tech. J. 27, 623–656. https://doi.org/10.1002/j.1538-7305.1948.tb01338.x (1948).

Carnap, R. & Bar-Hillel, Y. An outline of a theory of semantic information. Br. J. Philos. Sci. 4, 1 (1953).

Bao, J., Basu, P., Dean, M., Partridge, C. & Swami, A. Towards a theory of semantic communication. IEEE. https://doi.org/10.1109/NSW.2011.6004632 (2011).

Basu, P., Bao, J., Dean, M. & Hendler, J. Preserving quality of information by using semantic relationships. Pervas. Mob. Comput. 11, 188–202. https://doi.org/10.1016/j.pmcj.2013.07.013 (2014).

Kim, S. et al. Fcss: Fully convolutional self-similarity for dense semantic correspondence. IEEE Trans. Pattern Anal. Mach. Intell. https://doi.org/10.1109/TPAMI.2018.2803169 (2017).

Yang, M., Bian, C. & Kim, H. S. Deep joint source channel coding for wireless image transmission with ofdm. IEEE. https://doi.org/10.1109/ICC42927.2021.9500996 (2021).

Kurka, D. B. & Gunduz, D. Deep joint source-channel coding of images with feedback. In ICASSP 2020–2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) 5235–5239. https://doi.org/10.1109/ICASSP40776.2020.9054216 (2020).

Xie, H., Qin, Z., Li, G. Y. & Juang, B. H. Deep learning based semantic communications: An initial investigation. IEEE. https://doi.org/10.1109/GLOBECOM42002.2020.9322296 (2020).

Xie, H., Qin, Z., Li, G. Y. & Juang, B. H. Deep learning enabled semantic communication systems. IEEE Trans. Signal Process. 69, 2663. https://doi.org/10.1109/TSP.2021.3071210 (2020).

Wang, Y. et al. Performance optimization for semantic communications: An attention-based learning approach. In IEEE Glob. Commun. Conf. (GLOBECOM) 1–6. https://doi.org/10.1109/GLOBECOM46510.2021.9685056 (2021).

Weng, Z. & Qin, Z. Semantic communication systems for speech transmission. IEEE J. Sel. Areas Commun. 39, 2434–2444. https://doi.org/10.1109/JSAC.2021.3087240 (2021).

Tung, T. Y. & Gündüz, D. Deepwive: Deep-learning-aided wireless video transmission. IEEE J. Sel. Areas Commun. 40, 2570–2583. https://doi.org/10.1109/JSAC.2022.3191354 (2022).

Yang, M., Bian, C. & Kim, H.-S. Ofdm-guided deep joint source channel coding for wireless multipath fading channels. IEEE Trans. Cogn. Commun. Netw. 8, 584–599. https://doi.org/10.1109/TCCN.2022.3151935 (2022).

Xu, J. et al. Wireless image transmission using deep source channel coding with attention modules. IEEE Trans. Circuits Syst. Video Technol. 32, 2315–2328. https://doi.org/10.1109/TCSVT.2021.3082521 (2022).

Xie, H., Qin, Z. & Li, G. Y. Task-oriented multi-user semantic communications for vqa. IEEE Wirel. Commun. Lett. 11, 553–557. https://doi.org/10.1109/LWC.2024.3417028 (2022).

Wang, J., Duan, Y., Tao, X., Xu, M. & Lu, J. Semantic perceptual image compression with a laplacian pyramid of convolutional networks. IEEE Trans. Image Process. 99, 1. https://doi.org/10.1109/TIP.2021.3065244 (2021).

Li, X., Shi, J. & Chen, Z. Task-driven semantic coding via reinforcement learning. IEEE Trans. Image Process. 99, 1. https://doi.org/10.1109/TIP.2021.3091909 (2021).

Kurka, D. B. & Gündüz, D. Successive refinement of images with deep joint source-channel coding. IEEE. https://doi.org/10.1109/SPAWC.2019.8815416 (2019).

Yang, M. & Kim, H. S. Deep Joint Source-Channel Coding for Wireless Image Transmission with Adaptive Rate Control. https://doi.org/10.48550/arXiv.2110.04456 (2021).

Dai, J. et al. Nonlinear transform source-channel coding for semantic communications. IEEE J. Sel. Areas Commun. 40, 802. https://doi.org/10.1109/JSAC.2022.3180802 (2022).

Hu, Q. et al. Robust Semantic Communications Against Semantic Noise. https://doi.org/10.48550/arXiv.2202.03338 (2022).

Sun, Q., Guo, C., Yang, Y., Tang, R. & Liu, C. Deep joint source-channel coding based on semantics of pixels for wireless image transmission. In 2023 IEEE 34th Annual International Symposium on Personal, Indoor and Mobile Radio Communications (PIMRC) 1–6 (2023).

Zhang, R., Isola, P., Efros, A. A., Shechtman, E. & Wang, O. The unreasonable effectiveness of deep features as a perceptual metric. IEEE 1, 68. https://doi.org/10.1109/CVPR.2018.00068 (2018).

Agustsson, E., Tschannen, M., Mentzer, F., Timofte, R. & Van Gool, L. Generative Adversarial Networks for Extreme Learned Image Compression. https://doi.org/10.48550/arXiv.1804.02958 (2018).

Oyelade, O. N. et al. A generative adversarial network for synthetization of regions of interest based on digital mammograms. Sci. Rep. https://doi.org/10.1038/s41598-022-09929-9 (2024).

Huang, D., Gao, F., Tao, X., Du, Q. & Lu, J. Toward semantic communications: Deep learning-based image semantic coding. IEEE J. Sel. Areas Commun. 41, 55–71 (2022).

Wang, J. et al. Perceptual learned source-channel coding for high-fidelity image semantic transmission. In GLOBECOM 2022–2022 IEEE Global Communications Conference 3959–3964 (IEEE, 2022).

Kurka, D. B. & Gündüz, D. Bandwidth-agile image transmission with deep joint source-channel coding. IEEE Trans. Wirel. Commun. https://doi.org/10.1109/TWC.2021.3090048 (2021).

Ulyanov, D., Vedaldi, A. & Lempitsky, V. Instance Normalization: The Missing Ingredient for Fast Stylization. https://doi.org/10.48550/arXiv.1607.08022 (2016).

Miyato, T., Kataoka, T., Koyama, M. & Yoshida, Y. Spectral Normalization for Generative Adversarial Networks. https://doi.org/10.48550/arXiv.1802.05957 (2018).

Xu, B., Wang, N., Chen, T. & Li, M. Empirical Evaluation of Rectified Activations in Convolutional Network. https://doi.org/10.48550/arXiv.1505.00853 (2015).

Liu, S. et al. A driver fatigue detection algorithm based on dynamic tracking of small facial targets using yolov7. IEICE Trans. Inf. Syst. 106, 1881–1890 (2023).

Paszke, A. et al. An Imperative Style, High-Performance Deep Learning Library. https://doi.org/10.48550/arXiv.1912.01703 (2019).

Liu, S., Wang, Y., Yu, Q., Liu, H. & Peng, Z. Ceam-yolov7: Improved yolov7 based on channel expansion and attention mechanism for driver distraction behavior detection. IEEE Access. 10, 129116–129124. https://doi.org/10.1109/ACCESS.2022.3228331 (2022).

Kingma, D., Ba, J. & Adam, A. Method for Stochastic Optimization. https://doi.org/10.48550/arXiv.1412.6980 (2014).

Bellard, F. Bpg Image Format. https://bellard.org/bpg/.

Gallager, R. G. Low-Density Parity-Check Codes (Springer, 2015).

Yu, X. & Li, D. Phase shift compression for control signaling reduction in IRS-aided wireless systems: Global attention and lightweight design. IEEE Trans. Wirel. Commun. 1, 1 (2024).

Acknowledgements

This work was supported in part by the National Engineering Research Center for Mobile Private Networks, Beijing Jiaotong University (No. BJTU20221102), and in part by the Xiangtan Key Science and Technology Achievement Transformation Project (No. GX-ZD202210012).

Author information

Authors and Affiliations

Contributions

This paper was co-authored by Zhan Peng and Shugang Liu. Shugang Liu contributed the conceptual framework and methodology, while Zhan Peng conducted the experiments and data analysis. Qiangguo Yu and Linan Duan participated in the discussions and analysis. Zhan Peng and Shugang Liu carefully reviewed the manuscript. All authors have contributed to the subsequent revisions of the paper.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, S., Peng, Z., Yu, Q. et al. A novel image semantic communication method via dynamic decision generation network and generative adversarial network. Sci Rep 14, 19636 (2024). https://doi.org/10.1038/s41598-024-70619-9

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-70619-9

Keywords

This article is cited by

-

SwinJSCC-GAN: enhanced joint source-channel coding for semantic communication with swin transformer and generative adversarial networks

EURASIP Journal on Wireless Communications and Networking (2025)