Abstract

This paper proposes a joint multi-innovation fractional gradient descent identification algorithm for fractional order systems. First, the flexibility of fractional calculus is leveraged to design a joint fractional gradient descent algorithm capable of estimating system parameters and unknown orders. The estimated system parameters are used as the initial conditions to identify the unknown order, and the identified order is used as the update conditions for the system parameters. Through the joint iteration of two fractional order gradients, both the identified order and parameters are updated. In addition, multi-innovation theory is applied to extend the joint fractional gradient descent algorithm to a joint multi-innovation fractional gradient descent algorithm, which improves the system identification accuracy. Then, the convergence of the algorithm is theoretically analyzed. Finally, the effectiveness of the algorithm is verified through numerical simulation and an experiment on the identification of an actual flexible linkage system.

Similar content being viewed by others

Introduction

Fractional calculus (FC) was introduced over 300 years ago. FC has been widely used in control science1,2, biology3, optics4, mathematics5and other fields. Compared with integer calculus, the fractional order model established using FC can more precisely describe dynamic change processes in real physical systems6,7, such as the relaxation and creep of viscoelastic materials8, temperature diffusion9, the spread of viruses10, the consumption of battery energy11, and heat conduction of the human head12.

Fractional order system identification technology involves the use of observed input and output data to estimate the unknown parameters and the fractional order of the system, enabling the establishment of precise mathematical models. This step is essential for controller design. Therefore, scholars have conducted substantial research on fractional order system identification methods. Dai et al.13 used modulation functions and numerical approximation methods to process fractional-order differentials and combined the recursive least-squares method to estimate system parameters. Aguilar et al.14 extended the integer order neural network to a fractional order neural network and successfully applied it to fractional order system identification. Victor et al.15 proposed a long memory recursive prediction error method that simultaneously identified model parameters and fractional order. Tian et al.16 estimated the parameters of a system through the least-squares method, combined with the gradient descent algorithm to identify the fractional order. Li et al.17used Haar wavelets to describe input and output signals and converted the fractional order system into an integral equation. They introduced an optimization method to solve integral equation and obtained the system parameters and orders. Gao18 studied a reduced-order Kalman filter to mitigate the impact of measurement noise on system parameters estimation accuracy. Galvao et al.19 converted a fractional-order system into a cubic equation using exponentially modulated signals and determined the fractional orders and system parameters by solving the equation. Wang et al.20 extended the frequency domain subspace parameter estimation method to fractional order systems. Djouambi et al.21 used a recursive least-squares and recursive auxiliary variable method for system identification. Wang et al.22 developed a wavelet integration operational matrix method, using wavelet decomposition and reconstruction to improve the estimation accuracy of system parameters and order coefficients. Li et al.23 proposed a gradient descent method based on forgetting factor to estimate system parameters, but this method could not identify fractional orders. Marzougui et al.24 combined the recursive least-squares and Levenberg–Marquardt algorithms to estimate the parameters and fractional order of a system. Zhang et al.25 proposed block pulse functions and the Gauss–Newton method to identify system parameters. Zhang et al.26converted a system identification problem into a nonlinear least-squares optimization problem and iteratively solved the optimization equation based on the sensitivity function. Moghaddam27combined the evolutionary method and the least-squares algorithm to estimate the parameters and fractional order of a system. Additionally, several intelligent optimization algorithms have been used in fractional order system identification, such as the genetic algorithm28, improved differential evolution algorithm29, and improved quantum bacterial foraging algorithm30.

As seen from the above-mentioned studies, the gradient descent method has been widely used in fractional-order system identification because of its broad application range and easy engineering implementation. However, gradient descent algorithms usually need to be combined with other algorithms to identify fractional order and system parameters separately. Moreover, integer order gradients have low convergence speed and accuracy. Therefore, this study proposes a joint multi-innovation fractional gradient descent identification algorithm.

The main contributions of this study can be summarized as follows:

-

The proposed algorithm uses two fractional gradients to iterate interactively, avoiding the complexity of combining multiple different algorithms for fractional order system identification.

-

The proposed algorithm combines multi-innovation theory and the flexibility of FC to improve the system identification accuracy and identification speed.

-

The effectiveness of the proposed algorithm in engineering applications is verified through an experiment involving the identification of a flexible linkage system.

-

The proposed algorithm can be extended to the identification of fractional order nonlinear systems or fractional order time-delay systems.

The remainder of this paper is organized as follows: Mathematical background and system description are presented in Sect. 2. The joint multi-innovation fractional gradient descent identification algorithm is proposed in Sect. 3. The convergence of the algorithm is analyzed in Sect. 4. A simulation example and an experiment are provided in Sect. 5. Finally, the conclusions are presented in Sect. 6.

Mathematical background and system description

Fractional calculus

FC extends integral or differential from integers to arbitrary order31. The definition of FC is also different from that of integer calculus. There are three most widely used definitions of FC, and each definition represents fractional operators differently. This paper mainly uses fractional order operators defined by Grünwald-Letnikov to describe fractional order systems32

where \(\Delta\) denotes the discrete fractional derivation operator, \(\overline{\alpha }\) is the fractional order of the operator, \(x(kh)\) represents a function of \(t = kh\), k represents the number of sampling times, h denotes the sampling interval, and \(\left( \begin{gathered} {\overline{\alpha }} \hfill \\ j \hfill \\ \end{gathered} \right)\) can be expressed as follows

Define the \(\beta (j) = ( - 1)^{j} \left( \begin{gathered} {\overline{\alpha }} \hfill \\ j \hfill \\ \end{gathered} \right)\). Then, Eq. (1) can be rewritten into the following equation

Under the assumption of h = 1, Eq. (3) can be expressed as follows

Fractional order linear systems

The fractional order linear discrete system is expressed as follows

where \(y(k)\) and \(u(k)\) are the system output and input, respectively; \(v\left( k \right)\) represents white noise with zero mean and finite variance \(\sigma_{v}^{2}\); and \(k = 1,2, \cdots ,N\) denotes the data length. The polynomials \(A(z)\) and \(B(z)\) of the system can be expressed as

where \(z^{ - 1}\) is the fractional backshift operator. \(a_{i}\) and \(b_{j}\) are the polynomial coefficients, \(\alpha_{i}\) and \(\gamma_{j}\) are the fractional orders of the polynomial.

When the fractional order of the polynomials in Eq. (6) and Eq. (7) becomes \(\alpha_{i} = i - \overline{\alpha },\gamma_{j} = j - \overline{\alpha }\), the system is referred to as a same-dimensional fractional order system; that is

According to \(z^{{ - i + \overline{\alpha }}} x(k) = \Delta^{{\overline{\alpha }}} x(k - i)\), Eq. (8) can be rewritten as

Equation (9) can be expressed as

where \(\varphi^{{\text{T}}} (k,\overline{\alpha })\) and \(\theta\) are the information vector and the parameter vector, respectively, which can be expressed as

This paper considers the same-dimensional fractional-order system in Eq. (10). In the following sections, identification methods are proposed to estimate the unknown fractional order \(\overline{\alpha }\) in Eq. (11) and the unknown parameter vectors in Eq. (12).

Joint multi-innovation fractional gradient descent identification algorithm

Compared with the integer order system, the fractional order system (10) introduces an unknown fractional order \(\overline{\alpha }\), and a strong coupling exists between the fractional order and each parameter. Inaccurate identification of the fractional order \(\overline{\alpha }\) or parameters will affect the dynamic performance of the system, which increases the complexity of system modelling. Therefore, this paper proposes the joint multi-innovation fractional gradient descent identification algorithm. The algorithm’s identification process is illustrated in Fig. 1.

Identification process of the joint multi-innovation fractional gradient descent identification algorithm.

The algorithm is divided into two stages: parameter identification and fractional order identification. In the first stage, the fractional gradient of the unknown parameters is established, and the gradient function is continuously iterated according to the length of the observation data to obtain the final parameter identification results. The parameter identification results of the first stage are used as the initial conditions for fractional order identification. In the second stage, the fractional gradient of the system order is established, and the unknown fractional order is also iterated according to the length of the observation data. The fractional order identification results in the second stage are used as the update conditions for parameter estimation. The identification results of the two stages are combined to achieve accurate identification of the fractional order and system parameters through joint iteration.

Identification of fractional order system parameters

This paper leverages the high flexibility of FC to extend the integer gradient into a fractional gradient to enhance algorithm performance. In addition, the use of multi-innovation theory enables the efficient utilization of the observation data information to further improve the identification speed and accuracy of the algorithm.

The output error of the system (10) is defined as

where \(\hat{\alpha }\) and \(\hat{\theta }\) are the estimated values of the fractional order \(\overline{\alpha }\) and system parameters \(\theta\), respectively.

Multi-innovation theory combines current and past time data to form a multi-innovation matrix, which is then used to estimate the current unknown information33,34. In multi-innovation theory, \(y(k),\varphi^{{\text{T}}} (k,\hat{\alpha }),e(k,\hat{\alpha })\) and \(v(k)\) are referred to as single innovations. We expand the single innovation to a p-dimensional multi-innovation matrix.

where p denotes the multi-innovation length. The larger the value of p, the larger the dimension of the matrix composed of data at the past moment and the current moment, and the higher the data utilization rate. According to Eqs. (13) - (15), Eq. (17) can be rewritten as

According to the obtained multi-innovation matrix, the criterion function of the unknown parameters in Eq. (10) is defined as follows

In Eq. (19), \(\Phi^{{\text{T}}} (p,k,\hat{\alpha })\) contains the unknown fractional order \(\hat{\alpha }\). To calculate the extreme value of the \(J_{1} (\hat{\theta })\) and obtain the estimated parameter \(\hat{\theta }\), we provide the initial state of the fractional order, enabling iteration using the fractional-order gradient

where \(\nabla^{\alpha }\)is the fractional order gradient. According to35, the fractional order gradient \(\nabla^{\alpha } f(x)\) of any function \(f(x)\) can be expressed as

where \(0 < \alpha < 2\) is the fractional order of the gradient, \(\varepsilon\) is a small non-negative number, c is the low integral terminal, \(\mu\) is the step size, and \(\Gamma (\alpha )\) is the gamma function. According to Eq. (21), Eq. (20) can be rewritten as

where \(\Xi (\hat{\theta },\alpha ,k) = {\text{diag}}\left\{ {\left[ {\left| {\hat{\theta }_{1} (k - 1) - \hat{\theta }_{1} (k - 2)} \right| + \varepsilon } \right]^{1 - \alpha } ,\left[ {\left| {\hat{\theta }_{2} (k - 1) - \hat{\theta }_{2} (k - 2)} \right| + \varepsilon } \right]^{1 - \alpha } , \cdots ,} \right.\)\(\left. {\left[ {\left| {\hat{\theta }_{l} (k - 1) - \hat{\theta }_{l} (k - 2)} \right| + \varepsilon } \right]^{1 - \alpha } } \right\}\), and l is the number of identification parameters. The step size \(\mu\) can be expressed as

where \(\overline{r}(k)\) is the iteration factor of the step size, and \(\left\| \cdot \right\|\) is the L2-norm. Combining Eqs. (18), (22), and (23), we can obtain the joint multi-innovation fractional gradient descent algorithm.

From Eq. (24), the unknown parameters can be identified through constant iteration, and the identification results \(\hat{\theta }(N)\) can be used as the initial state for identifying the fractional order.

Identification of the system fractional order

At present, some studies assume that the fractional order of the system is known and focus solely on estimating the parameters of the system. Others combine two algorithms, with one algorithm estimating the system parameters and the other identifying the fractional order. These combined methods increase the identification complexity of the system. Therefore, this study proposes a joint gradient identification algorithm. According to the parameter identification results \(\hat{\theta }(N)\), a fractional gradient of fractional order is constructed, and it is used to estimate the fractional order of the system. Through the joint iteration of the two gradients of the system parameters and the fractional order, the unknown parameters and the fractional order are simultaneously identified.

The fractional order identification process involves using the system parameters identified in one stage, combining them with the fractional gradient, and iteratively updating the fractional order.

The criterion function of fractional order is defined as

The fractional gradient of \(J_{2} (\hat{\alpha })\) is expressed as

where \(\mu_{1}\) is the fractional order step size. According to Eq. (15), \(\Phi^{{\text{T}}} (p,k,\hat{\alpha })\hat{\theta }(N)\) in Eq. (26) can be expanded as

The partial derivative of the inner variable \(\varphi^{{\text{T}}} (k,\hat{\alpha })\hat{\theta }(N)\) of Eq. (27) can be expressed as

where \(\kappa \hat{\alpha }(k - 1)\) is a small variation of \(\hat{\alpha }(k - 1)\).

The multi-innovation matrix \(\Phi_{{\hat{\alpha }}} (p,k,\hat{\alpha })\) is defined as

From Eq. (28) and (29), Eq. (26) can be rewritten as

The fractional order gradient iteration equation is expressed as

The fractional order step size \(\mu_{1}\) is taken as follows

According to Eqs. (31) and (32), we can derive the joint multi-innovation fractional gradient for identifying the fractional order.

The fractional order can be identified using Eq. (33). The identification result \(\hat{\alpha }(N)\) is used as the update condition for parameter estimation. Through interactive iteration, joint identification of parameters and fractional order is achieved. The steps of the joint multi-innovation fractional gradient descent identification algorithm are summarised below.

Convergence analysis

Convergence analysis is an important basis for algorithm stability and reliability. Therefore, the convergence of the joint multi-innovation fractional gradient descent identification algorithm is analyzed in this section. Convergence analysis mainly includes two parts, namely parameter convergence and fractional order convergence. To prove the convergence of the algorithm, some lemmas are required.

Lemma 1

36 For fractional order systems (10) and the joint multi-innovation fractional gradient descent identification algorithm, there exist constants \(0 < \chi \le \rho < \infty\) such that the following strong persistent excitation condition holds.

Then, \(\overline{r}(k)\) in Eq. (23) satisfies the inequality

Lemma 2

36 The fractional order \(\hat{\alpha }\) leads to the input fractional order information vector \(\varphi (k,\hat{\alpha })\) of the system satisfying the continuous excitation condition under the assumption that the noise signal \(\left\{ {v(k)} \right\}\) is an independent random signal, and the expectation of the signal satisfies \({\mathbb{E}}\left[ {v(k)} \right] = 0,{\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\mathbb{E}}\left[ {v(k)^{2} } \right] = \sigma_{v}^{2}\).

Lemma 3

37 Under the assumption that the non-negative sequences \(\left\{ {x(t)} \right\},\left\{ {a_{t} } \right\}\) and \(\left\{ {\beta_{t} } \right\}\) satisfy \(x(t + 1) \le (1 - a_{t} )x(t) + \beta_{t}\) and \(a_{t} \in [0,1),\sum\limits_{t = 1}^{\infty } {a_{t} } = \infty ,x(0) < \infty\), then \(\lim {\kern 1pt} {\kern 1pt} {\kern 1pt} \sup x(t{)} \le {\text{lim}}{\kern 1pt} {\kern 1pt} {\kern 1pt} \frac{{\beta_{t} }}{{a_{t} }}\).

Lemma 4

38 Non-negative random variables \(T(n),\beta (n)\) and \(\alpha (n)\) are measurable with respect to a non-decreasing sequence of \(\sigma\) algebra \(F(n - 1)\) if the following inequality is satisfied.

Then, we can obtain \(\sum\nolimits_{n = 1}^{\infty } {\beta (n) < \infty }\) and \(\lim T(n{)} \le T\),where \(T(n)\) is a finite non-negative random variable.

Convergence of identification parameters

The parameter estimation error vector is defined as follows

Equations (10) and (14)-(16) are used to obtain the multi-innovation matrices of the fractional-order system.

According to Eq. (38), by subtracting \(\theta\) from both ends of Eq. (24), we can obtain

Taking the norm of both sides of Eq. (39).

According to the discussion in Ref39. and the definition \(\Xi (\hat{\theta },\alpha ,k)\) in Eq. (22) that \(0 < \hat{\theta }_{i} (k - 1) - \hat{\theta }_{i} (k - 2) < 1,{\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} {\kern 1pt} i = 1,2, \cdots ,l\) holds.

Then, \(\Xi (\hat{\theta },\alpha ,k) = \max \{ \varepsilon^{1 - \alpha } ,\left( {1 + \varepsilon } \right)^{1 - \alpha } \}\) with \(0 < \alpha < 2\). Combining Eq. (41), Lemma 1, and Lemma 2 and considering that p = N, we can obtain

Applying Eq. (42), Eq. (43), and Lemma 1 and taking the expectation of Eq. (40), given that \(r(k) = \overline{r}(k - 1) + \left\| {\Xi (\hat{\theta },\alpha ,k)\Phi (p,k,\hat{\alpha })} \right\|^{2}\), we obtain \(r(k)> \overline{r}(k - 1)\). According to Lemma 2 and considering that \(V(p,k)\) and \(\tilde{\theta }(k - 1),\Phi (p,k,\hat{\alpha }),I_{n} - \frac{{\Phi (p,k,\hat{\alpha })\Xi (\hat{\theta },\alpha ,k)\Phi^{{\text{T}}} (p,k,\hat{\alpha })}}{r(k)\Gamma (2 - \alpha )}\) are not linearly correlated, we have

From Lemma 3, the limit of Eq. (44) is determined

When \(k \to \infty\),\({\mathbb{E}}\left[ {\left\| {\tilde{\theta }} \right\|^{2} } \right] \to 0\), the convergence of the identification parameters is proven. Subsequently, we prove the convergence of the identification order.

Convergence of the identification fractional order

The fractional order estimation error vector is defined.

According to Eqs. (38) and (46), subtracting \(\overline{\alpha }\) from both ends of Eq. (33) results in

Taking the norm of both sides of Eq. (47)

Taking the expectation of Eq. (48)

Equation (49) can be expressed in the same form as Eq. (36) given that \(r_{1} (k) = \overline{r}(k - 1) + \left\| {\Phi_{{\hat{\alpha }}} (p,k,\hat{\alpha })[\left| {\overline{\alpha }(k) - \overline{\alpha }(k - 1)} \right| + \varepsilon )^{1 - \alpha } ]} \right\|^{2}\); therefore, \(r_{1} (k)> \overline{r}(k - 1)\). According to Lemma 1 and Lemma 2, we have

where \(\tilde{\theta }\) is a fixed value, \(\Phi_{{\tilde{\alpha }}} (p,k,\tilde{\alpha })\) and \(\Phi^{{\text{T}}} (p,k,\tilde{\alpha })\) are finite. When \(k \to \infty\), Eq. (50) is convergent. Similarly, we have

When \(k \to \infty\), Eq. (51) is convergent. Using Lemma 4, we can deduce that \({\mathbb{E}}\left[ {\left\| {\tilde{\alpha }(k)} \right\|} \right]\) converges to random numbers. Therefore, the convergence of fractional order is proven.

The comprehensive analysis of Eqs. (45), (50), and (51) confirms the convergence of the joint multi-innovation fractional gradient descent identification algorithm. As k continues to increase, the parameters and fractional order can continue to converge, and the theory proves the stability and reliability of the algorithm.

Simulation and experiment

This paper presents a numerical example and an experiment on identifying a flexible linkage fractional order system. To verify the superiority of the proposed method, it is compared with the stochastic gradient (SG) descent algorithm and the fractional order stochastic gradient (FOSG) descent algorithm. The effects of multi-innovation length and fractional gradient order on the convergence speed and accuracy of the algorithm are analyzed. All simulations and experiment are performed using MATLAB (R2020a) software installed in a computer equipped with an Intel Core i7 12700H CPU with 16 GB RAM.

The system identification accuracy evaluation index is as follows

Numerical simulation

Consider the fractional order systems as follows

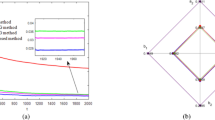

The parameters to be identified are \(\theta = \left[ {a_{1} ,a_{2} ,b_{0} } \right] = \left[ {8,5,3} \right]\) and \(\overline{\alpha } = 1.5\), and the \(\overline{\alpha }\) initial condition is set to 0.5. The system input \(u(k)\) is a persistent excitation signal, and \(v(k)\) is a white noise sequence with zero mean and variance \(\sigma_{v}^{2} = 0.5^{2}\). The proposed algorithm is compared with the SG and FOSG algorithms. In the proposed algorithm and the FOSG algorithm, the fractional order is set to \(\alpha = 1.2\), and the multi-innovation length is set to p = 5. The identification results are presented in Fig. 2 and Table 1.

Comparison of evaluation indices for different identification algorithms: (a) first joint iteration, (b) second joint iteration, (c) third joint iteration.

Figure 2 shows that compared with the SG and FOSG algorithms, the joint multi-innovation fractional gradient descent identification algorithm exhibits higher convergence speed and accuracy. As the number of joint iterations increases, the identification error gradually decreases. By the third joint iteration, the evaluation index decreases from 0.364 to 0.0025. Table 1 shows that the proposed algorithm has higher identification accuracy, and the identification results are closer to the true values. This improvement is due to two main factors: First, FC has high flexibility, which can accelerate the convergence speed and improve the convergence accuracy of the algorithm. Second, the proposed method combines the multi-innovation theory and applies more data in the system identification process; therefore, the identification accuracy can be further improved. Thus, the superiority of the proposed algorithm is verified.

The multi-information length p of the algorithm is a coefficient that can be set flexibly. Different values of p represent different amounts of observation data used for identification. In order to analyze the impact of the p on the performance of the algorithm, p is set to 1, 3, and 5; the algorithm order is set to \(\alpha = 1.2\); and the identification results are recorded in Figs. 3, 4, 5 and Table 2.

Evaluation index of joint iteration number.

Comparison of parameter and fractional order identification errors.

Comparison of the joint iteration process under different multi-innovation lengths: (a) system parameter \(a_{1}\), (b) system parameter \(a_{2}\), (c) system parameter \(b_{0}\), (d) fractional order \(\overline{\alpha }\).

Figure 3 and Table 2 show that as the multi-innovation length increases, the joint iteration error gradually decreases, and the system identification accuracy gradually improves. Notably, shifting from single innovation (p = 1) to multi-innovation (p = 3) increases the amount of information and the information utilization rate, reducing the evaluation index from 0.0083 to 0.0026. As shown in Fig. 4, at p = 5, the system identification error is the smallest. Figure 5 shows that the joint iteration of two multi-innovation fractional order gradients allows for accurate identification of the unknown parameters and fractional order of the system. In addition, as the number of joint iterations increases, the identification error of the system parameter and fractional order gradually decrease and become closer to the true values. Moreover, as shown in Fig. 5d, the convergence speed of the multi-innovation (p = 3 or p = 5) algorithm is greater than that of the single-innovation (p = 1) algorithm, verifying the effectiveness of multi-innovation theory in the proposed method.

The fractional order \(\alpha\) of the algorithm is also a coefficient that can be set flexibly. The impact of different fractional orders on the performance of the algorithm is verified below. p is set to 5, and the fractional orders are set as \(\alpha = 0.5,0.8,1.2,1.5\). The identification results are presented in Fig. 6 and Table 3.

Comparison of evaluation indices for different fractional orders \(\alpha\): (a) first joint iteration, (b) second joint iteration, (c) third joint iteration.

Figure 6a shows that in the first joint iteration, the larger the fractional order, the higher the convergence speed of the algorithm and the smaller the evaluation index. However, in the second (Fig. 6b) and third joint iterations (Fig. 6c), at \(\alpha = 1.5\), the algorithm still converges, but the convergence accuracy is lower than that at other fractional orders. As shown in Table 3, at \(\alpha = 1.5\), the identification error of the system parameters and fractional order are the largest. At \(\alpha = 1.2\), the algorithm exhibits the highest convergence accuracy. At \(\alpha = 0.8\), the convergence accuracy is still high. Therefore, the optimal fractional order range of the proposed algorithm is [0.8, 1.2]. Although an excessively large fractional order reduces the convergence accuracy, the algorithm can still identify the system parameter and the fractional order. Therefore, the effectiveness of the proposed algorithm is verified.

Experiment

A flexible linkage system is utilised for the experiment, which is shown in Fig. 7. This system consists of a flexible linkage, a data acquisition card, a servo motor, an angle sensor, and Quarc real-time control software. The computer inputs the voltage signal and applies it to the servo motor through a real-time data acquisition card, driving the flexible connecting rod to rotate. The deflection angle of the flexible linkage is collected by the angle sensor. Precise control of the drive voltage of the servo motor enables rapid, accurate, and stable deflection of the flexible linkage.

Flexible linkage system experimental device.

The flexible linkage system can be expressed as follows

In Matlab/Simulink, the system input and output measurement units are established using the Quarc database module. The input is a 1 V voltage signal, and the output is the deflection angle of the flexible linkage. The data collection step is 0.004 s, and the collection period is 5 s. Figure 8 shows the experimental data and identified system. The identification result is as follows

Experimental data and identified system.

Figure 8 demonstrates that the proposed algorithm can accurately identify the fractional order and unknown parameters of the flexible linkage system. The identified fractional order system can accurately characterise the dynamic process of the deflection angle of the flexible linkage. Therefore, the flexibility of the joint multi-innovation fractional gradient-descent identification algorithm in practical applications is verified.

Conclusion

This paper proposed a joint multi-innovation fractional gradient descent identification algorithm for fractional order systems. The algorithm identified parameters and fractional orders through the joint iteration of two fractional order gradients. Additionally, multi-innovation theory was applied to extend the joint fractional gradient to a joint multi-innovation fractional gradient. The effectiveness of the algorithm was verified through a simulation example and an experiment. The results indicated that the identification error gradually decreased with each joint iteration. Furthermore, the identification accuracy of the algorithm increased as the multi-innovation length increased. The optimal range for the order value of the joint multi-innovation fractional gradient was [0.8, 1.2], within which the algorithm’s performance was optimal. Finally, the algorithm’s effectiveness and flexibility in practical engineering were confirmed through the identification of a real flexible linkage fractional order system. Overall, the proposed algorithm can accurately and synchronously identify system parameters and fractional order, and it can be extended to the identification of fractional order nonlinear systems or fractional order time-delay systems.

Data availability

This paper completely describes the theoretical research. The collection of data set is random according to different readers, but some codes can be provided by contacting the corresponding author.

References

Zhao, L. D. A note on “Cluster synchronization of fractional-order directed networks via intermittent pinning control”. Physica A 561, 125150 (2021).

Zhao, L. D. Comments on “Finite-Time Control of Uncertain Fractional-Order Positive Impulsive Switched Systems with Mode-Dependent Average Dwell Time”. Circ. Syst. Signal Process. 39, 6394–6397 (2020).

Izadi, M., Yuzbasi, S. & Adel, W. Accurate and efficient matrix techniques for solving the fractional Lotka-Volterra population model. Physica A 600, 127558 (2022).

Guo, T., Deng, J. Q., Mao, Y. & Zhou, X. Improved particle swarm optimization fractional-system identification algorithm for electro-optical tracking system. Fractal Fract. 7, 264 (2023).

Zhao, L. D. & Chen, Y. H. Comments on “a novel approach to approximate fractional derivative with uncertain conditions”. Chaos, Solitons Fractals. 154, 111651 (2022).

Yang, J. P., Li, H. L., Zhang, L., Hu, C. & Jiang, H. J. Synchronization analysis and parameters identification of uncertain delayed fractional-order BAM neural networks. Neural Comput. Appl. 35, 1041–1052 (2023).

Adigintla, S., Aware, M. V. & Arun, N. Fractional order transfer function identification of Six-Phase induction motor using Dual-Chirp signal. IEEE J. Emerg. Select. Topics Power Electron. 11, 5183–5194 (2023).

Han, B. Z., Yin, D. S. & Gao, Y. F. The application of a novel variable-order fractional calculus on rheological model for viscoelastic materials. Mech. Adv. Mater. Struct. 11, 2283126 (2023).

Zhang, R. D., Zou, Q., Cao, Z. X. & Gao, F. R. Design of fractional order modeling based extended non-minimal state space MPC for temperature in an industrial electric heating furnace. J. Process Control 56, 13–22 (2017).

Adel, W., Günerhan, H., Nisar, K. S., Agarwal, P. & El-Mesady, A. Designing a novel fractional order mathematical model for COVID-19 incorporating lockdown measures. Sci. Rep. 14, 2926 (2024).

Wei, Y. & Ling, L. Y. State-of-charge estimation for lithium-Ion batteries based on temperature-based fractional-order model and dual fractional-order Kalman filter. IEEE Access 10, 37131–37148 (2022).

Izadi, M. & Atangana, A. Computational analysis of a class of singular nonlinear fractional multi-order heat conduction model of the human head. Sci. Rep. 14, 3466 (2024).

Dai, Y., Wei, Y. H., Hu, Y. S. & Wang, Y. Modulating function-based identification for fractional order systems. Neurocomputing. 173, 1959–1966 (2016).

Aguilar, C. J. Z., Gómez-Aguilar, J. F., Alvarado-Martínez, V. M. & Romero-Ugalde, H. M. Fractional order neural networks for system identification. Chaos, Solitons Fractals 130, 109444 (2020).

Victor, S., Duhé, J. F., Melchior, P., Abdelmounen, Y. & Roubertie, F. Long-memory recursive prediction error method for identification of continuous-time fractional models. Nonlinear Dyn. 110, 635–648 (2022).

Tian, J. P., Xiong, R., Shen, W. X., Wang, J. & Yang, R. X. Online simultaneous identification of parameters and order of a fractional order battery model. J. Clean Prod. 247, 119147 (2020).

Li, Y. L., Meng, X., Zheng, B. C. & Ding, Y. Q. Parameter identification of fractional order linear system based on Haar wavelet operational matrix. ISA Trans. 59, 79–84 (2016).

Gao, Z. Reduced order Kalman filter for a continuous-time fractional-order system using fractional-order average derivative. Appl. Math. Comput. 338, 72–86 (2018).

Galvao, R. K. H., Teixeira, M. C. M., Assunçao, E., Paiva, H. M. & Hadjiloucas, S. Identification of fractional-order transfer functions using exponentially modulated signals with arbitrary excitation waveforms. ISA Trans. 103, 10–18 (2020).

Wang, L., Cheng, P. & Wang, Y. Frequency domain subspace identification of commensurate fractional order input time delay systems. Int. J. Control, Autom. Syst. 9, 310–316 (2011).

Djouambi, A., Voda, A. & Charef, A. Recursive prediction error identification of fractional order models. Commun. Nonlinear Sci. Numer. Simul. 17, 2517–2524 (2012).

Wang, Z. S., Wang, C. Y., Ding, L. H., Wang, Z. & Liang, S. N. Parameter identification of fractional-order time delay system based on Legendre wavelet. Mech. Syst. Signal Process. 163, 108141 (2021).

Li, J., Zhang, H., Gu, J. & Hua, L. Parameter identification of fractional-order Wiener system based on FF-ESG and GI algorithms. Asian J. Control. 25, 4512–4524 (2023).

Marzougui, S., Bedoui, S. & Abderrahim, K. On the combined estimation of the parameters and the states of fractional-order systems. Process. Inst. Mech. Eng. Part I-J. Syst. Control Eng. 237, 1853–1866 (2023).

Zhang, B., Tang, Y. G., Zhang, J. & Lu, Y. Coefficients and orders identification of fractional order systems based on block pulse functions through two-stage algorithm. J. Dyn. Syst. Measur. Control Trans. ASME. 144, 071001 (2022).

Zhang, T., Lu, Z. R., Liu, J. K., Chen, Y. M. & Liu, G. Parameter estimation of linear fractional-order system from laplace domain data. Appl. Math. Comput. 438, 127522 (2023).

Moghaddam, M. J. Online system identification using fractional-order Hammerstein model with noise cancellation. Nonlinear Dyn. 111, 7911–7940 (2023).

Zhou, S. X., Cao, J. Y. & Chen, Y. Q. Genetic algorithm-based identification of fractional-order systems. Entropy. 15, 1624–1642 (2013).

Yu, W., Liang, H. H., Chen, R., Wen, C. L. & Luo, Y. Fractional-order system identification based on an improved differential evolution algorithm. Asian J. Control. 24, 2617–2631 (2022).

Liu, L., Shan, L., Dai, Y. W., Liu, C. L. & Qi, Z. D. A modified quantum bacterial foraging algorithm for parameters identification of fractional-order system. IEEE Access. 6, 6610–6619 (2018).

Hu, J. B., Zhao, L. D., Lu, G. P. & Zhang, S. B. The stability and control of fractional nonlinear system with distributed time delay. Appl. Math. Model. 40, 3257–3263 (2016).

Zhang, Q., Wang, H. W., Liu, C. L. & Ma, X. J. Multi-innovation identification method for fractional Hammerstein state space model with colored noise. Chaos Solitons Fractals. 173, 113631 (2023).

Mao, Y. & Ding, F. Data filtering-based multi-innovation stochastic gradient algorithm for nonlinear output error autoregressive systems. Circ. Syst. Signal Process. 35, 651–667 (2016).

Ma, P., Ding, F. & Zhu, Q. M. Decomposition-based recursive least squares identification methods for multivariate pseudo-linear systems using the multi-innovation. Int. J. Syst. Sci. 49, 920–928 (2018).

Wei, Y. H., Kang, Y., Yin, W. D. & Wang, Y. Generalization of the gradient method with fractional order gradient direction. J. Franklin Inst. 357, 2514–2532 (2020).

Zhang, Q., Wang, H. W. & Liu, C. L. Hybrid identification method for fractional-order nonlinear systems based on the multi-innovation principle. Appl. Intell. 53, 15711–15726 (2023).

Ding, F. & Chen, T. Performance analysis of multi–innovation gradient type identification methods. Automatica. 43, 1–14 (2007).

Cheng, S. S., Wei, Y. H., Sheng, D. A., Chen, Y. Q. & Wang, Y. Identification for Hammerstein nonlinear ARMAX systems based on multi-innovation fractional order stochastic gradient. Sig. Process. 142, 1–10 (2018).

Cheng, S. S., Wei, Y. H., Chen, Y. Q., Li, Y. & Wang, Y. An innovative fractional order LMS based on variable initial value and gradient order. Sig. Process. 133, 260–269 (2017).

Acknowledgements

The manuscript was supported by the Education Department of Jilin Province (Grant No. JJKH20240314KJ), Jilin Province Science and Technology Development Plan Project (Grant No. YDZJ202401615ZYTS) and Information Perception and Intelligent Control Laboratory.

Funding

The Education Department of Jilin Province,JJKH20240314KJ,Jilin Province Science and Technology Development Plan Project,YDZJ202401615ZYTS

Author information

Authors and Affiliations

Contributions

Zishuo Wang: Methodology, Writing original draft. Beichen Chen: Writingreview and editing. Hongliang Sun: Visualization. Shuning Liang: Validation. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, Z., Chen, B., Sun, H. et al. Fractional order system identification using a joint multi-innovation fractional gradient descent algorithm. Sci Rep 14, 30802 (2024). https://doi.org/10.1038/s41598-024-81423-w

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-81423-w

Keywords

This article is cited by

-

Configuration and reduced-order modeling of a flow system based on experimental data

Scientific Reports (2025)