Abstract

Advanced high strength steels (AHSS) exhibit diverse mechanical properties due to their complex chemical compositions and microstructures. Existing machine learning (ML) studies often focus on specific steel grades, limiting generalizability in predicting and optimizing AHSS properties. Here, an ML framework was presented to predict and optimize the stretch-flangeability of AHSS based on composition-microstructure-property correlations, using datasets from 212 steel conditions. Support vector machine, symbolic regression, and extreme gradient boosting models accurately predicted hole expansion ratio (HER), ultimate tensile strength (UTS), and total elongation (TE). Shapley additive explanations revealed the importance of bainite volume fraction (VB), carbon content (C), and chromium content (Cr) for HER, UTS, and TE, respectively. Multi-objective optimization generated 252 optimized conditions with improved comprehensive mechanical properties. The best optimized chemical compositions (0.12wt.% C-1.10Mn-0.15Si-0.47Cr) along with the carbon equivalent (CE) of 0.44 wt.%, and microstructural features (7.2% ferrite, 44.5% bainite, 40.5% martensite, and 7.8% tempered martensite) yielded HER of 119.8%, UTS of 1013.5 MPa, and TE of 22.7%. This systematic framework enables efficient prediction and optimization of material properties (especially HER), with potential applications across various fields of materials science.

Similar content being viewed by others

Introduction

Advanced high strength steels (AHSS) have gained a widespread application in the automotive industry due to their superior performances in fuel efficiency enhancement, green-house gas emission reduction, crashworthiness improvement, and vehicle weight reduction1. Dual phase (DP) steels, a typical representative of AHSS, are characterized by a soft ferrite matrix with dispersed martensite islands, achieved through carefully selected compositions and strictly controlled processing2. These microstructural features contribute to an optimal balance between strength and ductility. The mechanical properties of DP steels include low yield strength (YS), high ultimate tensile strength (UTS), low yield to tensile strength ratio (YS/UTS), continuous yielding, and high initial work hardening ratio3. Complex phase (CP) steels, as the potential alternative to DP steels, are identified by a ferrite-bainite matrix with a limited amount of martensite4, tempered martensite1,5,6, cementite7or retained austenite8. Regarding mechanical properties, CP steels possess more uniform strain hardening behavior9, superior fatigue resistance10, and better energy absorption capacity10,11 compared to DP steels.

Stretch-flangeability is a crucial parameter in the fabrication of complex-shaped automotive parts, such as door panels, fenders, and body-in-white components, requiring extensive edge stretching during forming operations12. It is evaluated by hole expansion ratio (HER), a significant parameter in assessing the local ductility of DP or CP steels, especially during the complex shape forming process in the automotive industry12,13. HER values are determined by conducting hole expansion tests (HET) following ISO 1663014. During the test, the pre-punched central hole is expanded by a 60° conical punch and the test is stopped until the first through-thickness edge crack is observed on the hole surface. HER results are given by Eq. (1)14,

where, Do and Df represent the initial and final hole diameters before and after HET, respectively. Higher HER values indicate the greater sheared-edge ductility, which are optimum features for DP or CP steels in applications such as stretch flanging and deep drawing.

Earlier literature focused on key factors governing the stretch-flangeability of AHSS. Paul13 summarized that HER is influenced by microstructure features, mechanical properties, hole preparation approaches and punch geometries. For instance, in order to precisely predict HER values without performing HETs, linear or nonlinear correlations were established based on simple uniaxial tensile properties, including normal anisotropy (\(\:\overline{\text{R}}\))15,16, post uniform elongation (PUE)15,17, strain hardening ratio (n)18, and UTS15,17. Also, some other mechanical property, such as initial fracture energy, was found to be closely related to stretch-flangeability12. However, these relationships are only valid for several AHSS grades, such as interstitial free (IF), bake hardened (BH), mild, and high strength low alloy (HSLA) steels, but not for DP or CP steels. Since the complex microstructures of AHSS, often characterized by multiple phases with different resulting mechanical properties, make it difficult to predict their stretch-flangeability using traditional empirical models. These models often fail to attain the complicated relationships between microstructure features and mechanical behavior, leading to inaccurate predictions and limited applicability across different AHSS grades19.

To address this challenge, researchers have been studying the applications of machine learning (ML) techniques to develop predictive models for mechanical properties based on microstructure-property correlations. ML algorithms, such as regression, neural networks, and ensemble methods, have shown great potential in materials science for their ability to learn complex, non-linear relationships from large datasets20,21,22. These algorithms can effectively reveal the correlations between microstructural features and mechanical properties, enabling the development of accurate and generalizable predictive models22,23.

Several studies have successfully applied ML techniques to predict different mechanical properties of metal and alloys. For example, Lee et al.24 predicted the UTS and TE of medium Mn steels, such as a Fe-5.5Mn-0.2 C-0.3Si steel, applying a boosted decision tree regression model. Bhandari et al.25 developed a random forest regression model to predict the high-temperature YS of MoNbTaTiW and HfMoNbTaTiZr high entropy alloys. Ma et al.26 used a random forest algorithm to predict the room-temperature fracture toughness of Nb-Si based alloys based on their chemical compositions. Bao et al.27 employed a support vector regression model to predict the high cycle fatigue life of addictively manufactured Ti-6 Al-4 V alloys based on their defect characteristics. Moreover, Wang et al.28 applied various ML approaches to predict the creep rupture life of Cr-Mo steels. In the investigation, they developed a random forest model which demonstrated the highly precise prediction of Manson-Succop Parameter (MSP).

However, the application of ML to predict the stretch-flangeability of AHSS remains largely unexplored. The limited studies available on predicting stretch-flangeability using ML have primarily focused on several specific AHSS grades. For instance, Lee et al.29 integrated a generative adversarial imputation network with an extra tree regressor model to predict HER results of AHSS based on their experimental tensile properties. However, this study did not consider the microstructural features, which may play a crucial role in determining stretch-flangeability. Li et al.30 tried to evaluate the hole expansion performances of AHSS via different ML approaches according to their alloying elements and experimental microstructure features. While this study demonstrated the potential of ML to predict stretch-flangeability based on microstructure data, it was limited to several AHSS grades.

In this study, 212 datasets from earlier studies15,17,31,32,33,34,35,36,37,38,39,40,41,42,12,43,44,45,46,47,48,49,50,51,52,53,54,55,56,57,58,59,60,61 containing chemical compositions, microstructural characteristics and mechanical properties of AHSS, especially DP and CP steels, were analyzed and processed, completing missing values via multivariate imputation by chained equations (MICE). Then three ML algorithms, such as support vector machine (SVM), symbolic regression (SR) and extreme gradient boosting (XGBoost) were employed to determine correlations between HER, UTS or TE with compositions, including carbon (C), carbon equivalent (CE), manganese (Mn), silicon (Si), and chromium (Cr), and volume fractions of different phases, such as ferrite (VF), bainite (VB), martensite (VM) and tempered martensite (VTM). Here, CE is a measure that quantifies the combined effect of various alloying elements on the hardenability of AHSS, which is assumed to influence HER in this study. CE can be expressed by Eq. (2)62.

where, C, Mn, Cr, Mo, V, Ni, and Cu represent the weight percentages of carbon, manganese, chromium, molybdenum, vanadium, nickel, and copper, respectively. Further, a multiple objective optimization (MOO) approach was utilized to design AHSS with an optimal combination of HER, UTS and TE. This method allows for the appropriate volume percentages of multiple phases. By leveraging the power of ML algorithms and MOO techniques, this study aims to provide valuable insights into the complex composition-structure-property relationships in AHSS and guide the development of new AHSS grades with improved performance.

Results

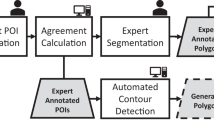

The proposed prediction and optimization framework for the stretch-flangeability of AHSS is illustrated in Fig. 1. A comprehensive four-step approach was implemented, including data collection (Fig. 1a), missing value imputation (Fig. 1b), regression (Fig. 1c), and MOO (Fig. 1d). In the data collection phase (Fig. 1a), 212 steel conditions were investigated, forming datasets containing 29 features including chemical composition content in weight% (iron (Fe), niobium (Nb), Ni, phosphorus (P), nitrogen (N), boron (B), sulfur (S), V, titanium (Ti), Cu, molybdenum (Mo), aluminum (Al), C, CE, Mn, Si, and Cr), microstructure characteristics (ferrite grain size (dF), VF, VB, VM and VTM) and mechanical properties (HER, YS, UTS, UE, PUE, TE, and reduction in area (RA)). Only 28 datasets were complete, highlighting the necessity of missing value analysis and imputation. For missing value imputation (Fig. 1b), MICE was employed. Three MICE methods (multiple linear regression (MLR), Lasso, and Ridge regression) were utilized and evaluated through cross-validation tests. The regression analysis step (Fig. 1c) employed three advanced ML algorithms, SVM with radial basis function kernel, SR using multigene genetic programming, and XGBoost employing gradient boosting decision tree. These methods were chosen for their ability to determine complex, non-linear relationships between chemical compositions and microstructural features with mechanical properties. To enhance interpretability, shapley additive explanations (SHAP) was utilized to explain the impact and importance of each input feature. Finally, a MOO approach (Fig. 1d) was implemented using the reference point based non-dominated sorting genetic algorithm III (R-NSGA-III) to simultaneously optimize HER, UTS, and TE while considering relevant constraints on both chemical compositions and microstructural phase fractions.

Overview of the prediction and optimization framework for the stretch-flangeability of AHSS. (a) Data collection: gathering chemical compositions, microstructural characteristics and mechanical properties from 212 steel conditions. (b) Missing value imputation: employing MICE methods to address incomplete datasets, with missing value analysis and cross-validation for method selection. (c) Regression: utilizing an interpretable ML framework utilizing SVM, SR, or XGBoost algorithm, coupled with SHAP for feature importance interpretation. (d) MOO: implementing R-NSGA-III to simultaneously optimize HER, UTS, and TE while considering compositional and microstructural constraints.

Performance evaluation for MICE

To better illustrate the performance of different imputation approaches in MICE, a 10-fold cross-validation analysis was conducted in this study. Table 1compares the Q2 values for each imputed variable with MLR, Lasso and Ridge. The Ridge regression in MICE demonstrated the best performance in predicting missing values.

Figures 2a-i further illustrates the effectiveness of the Ridge method. Significantly, the 10-fold cross-validation Q2 values for C, CE, Mn, Si, Cr, VF, VB, VM, and VTM were 0.9380, 0.9985, 0.9975, 0.9888, 0.9495, 0.9433, 0.9549, 0.8878, and 0.9460 (Table 1), respectively, with an average of 0.9560. These results indicate that the Ridge regression in MICE can predict unknown values with the superior accuracy across all compositions and microstructure features. Also, the values of frequency for C, CE, Mn, Si, Cr, VB and VTM mainly concentrate around one or few values (Fig. 2a-e, g and i), while those of VF and VM are more diverse (Fig. 2f and h), with VF values having more larger values than VM. According to the shaded regions (points with errors within ± 20%), VM seem to have comparatively more deviation from the real values, which is consistent with higher Q2 values compared to those of others. Overall, the scatter figures show that all imputed values not only fit the features well, but also have small errors with the real values.

Hyperparameter optimization and training process

Three regression methods, including SVM, SR and XGBoost were utilized to investigate the relationships between compositions (C, CE, Mn, Si, and Cr) and microstructural features (VF, VB, VM, and VTM) with mechanical properties (HER, UTS and TE) of AHSS. To optimize the performance of the SVM and XGBoost models, hyperparameter tuning was performed using Bayesian optimization, which aimed to maximize the 10-fold cross validation Q2 values. While to acquire the best SR models, we generated a large number of individuals in the SR population and select the ones possessing the best predicting performance, namely, largest Q2 in the 10% validation set.

Evaluation of Ridge method in MICE for compositions and microstructure features. (a-i) Detailed comparison and error scatter plots, as well as frequency histogram plots illustrating the relationships between imputed values (in purple) and true values (in blue) for individual microstructural characteristics using the Ridge regression method in MICE: (a) C, (b) CE, (c) Mn, (d) Si, (e) Cr, (f) VF, (g) VB, (h) VM, (i) VTM. Note: The shaded regions represent an error range of ± 20%.

For SVM, regularization parameter (C), margin of tolerance (epsilon) and kernel coefficient (gamma) were optimized, while in term of SR, the best symbolic models were tuned with their performances of in validation set. Regarding XGBoost, the number of decision trees (n_estimators), maximum depth of each tree (max_depth), samples’ ratio (subsample), inputs’ ratio (colsample_bytree), and step size at each iteration (learning_rate) were optimized. Additionally, based on prior experience and background information, the kernel function type (kernel) in SVM was set to ‘rbf’. The population size (pop_size), number of generations (num_gen) and mathematical operators (functions.name) in SR were set to 500, 800 and {‘times’, ‘minus’, ‘plus’, ‘neg’, ‘exp’, ‘negexp’, ‘gauss’, ‘sin’, ‘cos’, ‘tan’, ‘sinh’, ‘cosh’, ‘tanh’}, and the base estimator (booster) in XGBoost was set to ‘gbtree’. These adjustments to the hyperparameters were intended to strike a balance between the fitting performance and prediction robustness of the SVM, SR, and XGBoost models.

It is important to note that, even with identical optimized parameters, the results generated by SR using GPTIPS2 were still uncertain. Consequently, the SR results presented for the complete set were the best ones from multiple trials, while the SR results for 10-fold cross-validation were randomly collected.

Prediction of mechanical properties through ML approaches

Figure 3 illustrates the estimating performances of SVM, SR, and XGBoost models for HER, UTS and TE predictions of AHSS. Concerning HER predictions (Fig. 3a, d and g), scatter plots show small deviations from the true values for both the complete set and cross-validation. The frequency distribution plots and shaded regions (representing an error range of ± 20%) indicate that HER predictions from SR and XGBoost are similarly distributed around the shaded regions, although SR’s estimations from the complete set and cross-validation appear to be more coherent. SVM’s estimated scatters are more concentrated around the real values, and the results from the complete set and cross-validation are similar, demonstrating its accurate and robust estimation. Values of R2 for HER predictions are generally larger than 0.82, indicating that the employed ML models can fit the HER values with high accuracy. Moreover, the 10-fold cross-validation Q2 values for all models are larger than 0.74, suggesting good predictive abilities. The XGBoost model demonstrated superior fitting performance on the training set with an R2 of 0.9985, significantly outperforming both SVM and SR models having R2 values of 0.9518 and 0.8255, respectively. However, the cross-validation Q2 values for XGBoost and SR are only 0.8007 and 0.7427, respectively, showing relatively high predictive ability for unknown data. Notably, SVM regression for HER yields a Q2 of 0.8778, which is not only close to its R2 value, but also prominently higher than those of SR and XGBoost. These metrics indicate that SVM provides the best estimations with minimal overfitting bias, and the close alignment between R2 and Q2 underscores its potential for generalization.

For UTS predictions (Fig. 3b, e and h), the scatter distributions show excellent coherence within the shaded regions (errors within ± 20%) for all three ML models, and the distinctions between the complete set and cross-validation are small, indicating good estimating ability. XGBoost’s estimations of UTS are particularly close to the real values. The R2 values for UTS predictions typically exceed 0.82, suggesting that the ML models provide constructive estimations. The 10-fold cross-validation Q2 values are approximately 0.80, demonstrating reliable predictive capability across different datasets. As exhibited in Fig. 3, the R2 values for SVM and SR are 0.9217 and 0.8200, respectively, while the Q2 values are 0.8443 and 0.8012, respectively. Therefore, the estimating accuracy of SVM and SR is similar in cross-validation conditions, with SVM showing an advantage in the complete set and SR superior in less distinction between R2 and Q2. XGBoost’s estimating performance is notably leading in both the complete set and cross-validation, with R2 and Q2 values of 0.9987 and 0.8913, respectively, illustrating the highest regression accuracy and the best ability of predicting unobserved data.

In terms of TE predictions, the scatter plots in Fig. 3c, f and i show that SVM, SR, and XGBoost all have multiple cross-validation points outside the shaded regions (errors within ± 20%), indicating that the predicting performances for TE are not as satisfactory as those for HER and UTS. The scatter and residual plots for the XGBoost model show prominent distinctions between the complete set and cross-validation, which may be related to overfitting issues. SR exhibits the lowest R2 (0.5962) and Q2 (0.4972) values among three ML models for TE predictions. The R2 value indicates that SR cannot fit TE with good accuracy, while the small difference between R2 and Q2 suggests SR does not overfit. The R2 values for TE predictions using SVM and XGBoost are 0.8234 and 0.9874, respectively, which are greater than 0.82, and their Q2 values are 0.7181 and 0.6500, respectively, which are no lower than 0.65. These metrics suggest that SVM and XGBoost can provide more acceptable information on TE. For SVM, though its R2 value is slightly lower than that of XGBoost, its larger Q2 value and less distinction between R2 and Q2 indicates that SVM might have a less severe overfitting problem, which is exactly needed for predicting and optimizing AHSS’s properties.

Performance evaluation of ML models for predicting key mechanical properties of AHSS. (a-c) SVM model performance: (a) HER, (b) UTS, and (c) TE. (d-f) SR model performance: (d) HER, (e) UTS, and (f) TE. (g-i) XGBoost model performance: (g) HER, (h) UTS, and (i) TE. There exist detailed comparison and error scatter plots, as well as frequency histogram plots to illustrate the relationships between true values (in red), predicted values in complete sets (in dark blue) and cross-validation sets (in dark orange). Note: The shaded regions represent an error range of ± 20%.

In summary, SVM demonstrated the best performance for HER predictions, with a balanced R2 (0.9518) and Q2 (0.8778) values, indicating its ability to both fit the data well and predict unseen data samples with the highest accuracy. XGBoost excelled in UTS predictions, with an R2 of 0.9987 and a Q2 of 0.8913, illustrating the highest regression accuracy and the least amount of overfitting. For TE predictions, all models had lower R2 and Q2 values compared to HER and UTS, while SVM, with an R2 of 0.8234 and a Q2 of 0.7181, takes an advantage over others in predicting unknown values. These findings highlight the importance of selecting appropriate ML algorithms for predicting different mechanical properties and the need for careful evaluation of model performance using both R2 and Q2 metrics to assess fitting accuracy and generalization ability. As a result, the ML models with the best predictive performances for HER, UTS and TE, namely, SVM models for HER and TE, as well as XGBoost model for UTS, are applied in the following process of MOO to provide the most reliable virtual samples.

In addition, SR models offer the advantage of generating interpretable mathematical expressions that describe the relationships between compositions (C, CE, Mn, Si, and Cr) and microstructural features (VF, VB, VM and VTM) and mechanical properties (HER, UTS and TE). The SR-derived equations are given in Eqs. (3)-(5),

Interpretation of the trained ML models with SHAP analyses

To better understand the relationship between chemical composition contents (C, CE, Mn, Si, and Cr) and microstructural features (VF, VB, VM, and VTM) with mechanical properties (HER, UTS, and TE), SHAP analysis was employed. This method helps visualize the importance and impact of each input feature on the model’s predictions.

Figure 4 presents SHAP plots for each property across different ML models. The upper scatter plots used color coding (red for high, purple for mean, and blue for low values) to represent feature values, while the position on the x-axis indicates the SHAP value. The lower bar charts display the average SHAP values, indicating overall feature importance.

For HER (Fig. 4a, d and g), all ML models (SVM, SR and XGBoost) identified VB as the most crucial feature. The SVM model, which performed best in regression and prediction tasks, ranks VB as the most important feature, followed by CE. The scatter plot distributions suggest a positive correlation between VB and HER, and a negative correlation between CE and HER. Regarding UTS (Fig. 4b, e and h), SVM and XGBoost models consistently highlight C as the most significant feature, and all ML models indicate that VM also plays a significant role. Given XGBoost’s superior performance in UTS prediction, its emphasis on C and VM appears reliable. The scatter plots for C and VM in SVM, SR and XGBoost models, indicate a positive relationship between C and VM with UTS. In terms of TE (Fig. 4c, f and i), both SVM and SR models consistently identified Cr and CE as the leading important features. The scatter plot distributions across all these three models (SVM, SR and XGBoost) suggest a positive correlation between Cr and TE, and a negative correlation between CE and TE, with these relationships being particularly pronounced in SVM and SR models. Considering SVM is the best predictive model for TE, its indication of Cr as the most important feature is reliable.

SHAP analysis of the importance of compositions and microstructural features for predicting mechanical properties of AHSS using various ML approaches. (a-c) Feature importance derived from SVM models: (a) HER, (b) UTS, and (c) TE. (d-f) Feature importance derived from SR models: (d) HER, (e) UTS, and (f) TE. (g-i) Feature importance derived from XGBoost models: (g) HER, (h) UTS, and (i) TE. Note: For the SHAP scatter plots, the SHAP value measures the contribution value of a certain feature value for the outcome property, while a red scatter indicates a relatively large feature value, while blue scatter indicates a relatively small feature value. For the SHAP bar plots, the features lying on the upper positions, having larger SHAP mean values, are more influential for the outcome property.

Analysis of MOO solutions with ML models

Multi-objective optimization was employed to determine the optimal combinations of compositions and microstructural features (C, CE, Mn, Si, Cr, VF, VB, VM, and VTM) for achieving the superior mechanical property combination of HER, UTS and TE. Using the best ML models (i.e., SVM for HER and TE, as well as XGBoost for UTS) for predicting these properties, Pareto optimal solution points could be determined through the R-NSGA-III algorithm.

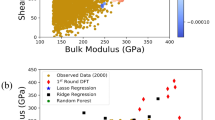

Figure 5 illustrates the optimization results. The optimal points show ranges of 31.0–181.0% for HER, 691.3–1296.1 MPa for UTS, and 5.9–43.0% for TE. Figure 5a reveals conflicting relationships between HER, UTS, and TE in the distribution of optimal solutions. The Pareto Front points in Fig. 5b and c, and 5d demonstrate negative correlations of TE-UTS, HER-TE, and HER-UTS, respectively. The strength of these negative associations varies, with HER and UTS showing the strongest inverse relationship, while HER and TE exhibit the weakest. Notably, the optimal solution points generally outperform the original dataset in terms of HER, UTS, and TE, indicating improved performance over existing steel conditions.

Visualization of optimized and real mechanical property results for AHSS. (a) 3D scatter plot of optimized values versus real data regarding HER, UTS and TE of AHSS. (b-d) 2D projections of the 3D plot, showing detailed insights in specific property correlations: (b) TE and UTS, (c) HER and TE, and (d) HER and UTS.

To identify more practical steel conditions, selection criteria based on industrial experience were applied. The applied criteria included (i) the product of UTS and TE exceeding 22,000 MPa × 100% and (ii) UTS over 980 MPa. As illustrated in Fig. 6, these selections show ranges of 31.0–119.8% for HER, 992.6–1296.1 MPa for UTS, and 20.5–33.8% for TE, with average values of 60.4%, 1096.1 MPa, and 26.8%, respectively. The product of UTS and TE ranges from 22,168.5 to 35,852.8 MPa × %, averaging 29,224.5 MPa × %. The compositions and microstructural features range are listed as follows: C (0.11–0.16 wt%), CE (0.31–0.64 wt%), Mn (0.31–2.43 wt%), Si (0.10–1.61 wt%), Cr (0.42–0.95 wt%), VF (0.3–34.5%), VB (0–70.9%), VM (23.3–97.1%), and VTM (0.1–38.5%), with average values of 0.13 wt%, 0.45 wt%, 0.98 wt%, 0.79 wt%, 0.55 wt%, 14.2%, 17.4%, 59.3%, and 9.0%, respectively.

These selections were further analyzed employing an entropy-weighted technique for order preference by similarity to ideal solution (TOPSIS) ranking method. This approach allowed for evaluating the performance of various steel conditions based on the combination of HER, UTS and TE. Figure 6 illustrates the distributions of the properties for industrially experienced selected (IES) steel conditions, along with the corresponding TOPSIS scores. Higher TOPSIS scores indicate better comprehensive mechanical behaviors. The visualization of the TOPSIS analysis results exhibit a triangular distribution of IES points, the closer to the corners, the larger the point sizes, with this effect being particular obvious on the corner of higher TE values. Therefore, when specific IES steel conditions have larger values in either one of HER, UTS or TE, especically TE, the TOPSIS values of these steel conditions are also larger. Steel conditions with the highest TE values generally achieved the highest TOPSIS scores, indicating superior overall performance. Among the studied steel conditions, those with HER values around 40%, UTS around 1050 MPa, and TE around 33% emerged as the top performers. Considering our strong demand on HER, we select the steel conditions with the highest HER values and a bit lower TOPSIS values as the highest-performing steel conditions. Table 2 presents the TOPSIS scores and rankings for the five highest-performing steel conditions identified through the optimization process. The top five highest-ranking steel conditions with respect to HER values exhibited compositions, including 0.12–0.13 wt.% C, 0.40–0.44 wt.% CE, 0.69–1.17 wt.% Mn, 0.10–0.21 wt.% Si, 0.42–0.47 wt.% Cr, with microstructures consisting of 2.9–12.9% ferrite, 52.7–70.9% bainite, 24.2–32.0% martensite, and 0.1–8.3% tempered martensite. These compositional and microstructural combinations resulted in exceptional mechanical properties, with HER ranging from 110.1% to 119.8%, UTS from 1009.5 MPa to 1032.8 MPa, and TE between 22.6% and 24.2%.

3D scatter plot showing key mechanical properties (HER, UTS and TE) and corresponding TOPSIS scores of industrially experienced selected (IES) steel conditions. Each sphere represents a unique steel condition positioned according to its optimized HER, UTS, and TE values, providing a comprehensive view of the property space occupied by these materials. A blue colormap is employed to represent the TOPSIS score of optimized steel condition. Darker blue indicates higher TOPSIS scores, representing better overall performance across the three properties. Also, the size of each data point is square proportional to its corresponding TOPSIS score, offering an additional visual cue for the overall performance of each condition.

Discussion

Data imputation and quality

This study started with data preparation, particularly focusing on handling missing values. The choice of missing value imputation method is crucial, as it must maximize information extraction from existing data while remaining practical and rational. Specifically, the imputed values for C, CE, Mn, Si, Cr, VF, VB, VM, and VTM must be non-negative, and the inter-relationships between variables must be preserved. Under this circumstance, MICE emerged as the preferred method due to its iterative optimization process and regularization capabilities. To assess the imputation quality of compositions and microstructural features, a 10-fold random cross-validation was conducted on all non-missing values of C, CE, Mn, Si, Cr, VF, VB, VM, and VTM in the original datasets, which allowed for comparing imputed values with actual hidden values.

As shown in Fig. 2, the Ridge regression method in MICE achieved an average Q2 of 0.9560 for imputing C, CE, Mn, Si, Cr, VF, VB, VM, and VTM. This result compares favorably with previous studies. For example, Li et al.30 predicted HER values of AHSS utilizing tensile properties, where the generative adversarial imputation networks (GAIN) method achieved a Q2 of 0.85. However, it’s important to note that the model in this work showed some limitations in accounting for ± 20% ratio deviations when the magnitudes of imputed features, particularly VM and VTM, were small. This observation highlights the need for careful interpretation of imputed values, especially when dealing with low phase percentages of minor phases in the microstructure.

ML models and property prediction

Three ML methods, such as SVM, SR and XGboost, were employed to predict HER, UTS and TE based on the C, CE, Mn, Si, Cr, VF, VB, VM, and VTM. Compared with another two ML models, the SR model provides mathematical expressions of mechanical properties dependent on microstructure characteristics, which allow for further analysis and practical estimations in industrial applications.

To assess the fitting and predictive capabilities of these models, two statistical metrics R2 and Q2 were employed. For the SR model, a 10-fold cross-validation was applied to optimize parameters, using the highest Q2 for function selection. The final models were then developed using the entire datasets, with corresponding R2 calculated. As illustrated in Fig. 5, the best performing models were selected based on their R2 and Q2 values, with Q2 given more weight due to its importance in estimating unknown data. Regarding HER, SVM performed best with R2 = 0.9518 and Q2 = 0.8778. For UTS, XGBoost showed the highest accuracy with R2 = 0.9987 and Q2 = 0.8913. While, in terms of TE, SVM overperformed ML approaches with the lower overall accuracy (R2 = 0.8234, Q2 = 0.7181).

These ML models demonstrate significant achievements, there are still discrepancies between predictions and actual values, particularly for TE. These errors can be attributed to variations in experimental settings, sample dimensions, and material conditions. Additionally, the relatively small number of independent features and inherent limitations of ML models contribute to these discrepancies. The prediction errors in regression models may be partially attributed to variations in the processing parameters of different AHSS. Significant differences in the distribution, sizes and morphologies of phases may be observed between two steel conditions with similar phase volume fractions. Despite these challenges, comparable performance metrics have been achieved by the best ML models for HER, UTS and TE. With R2 values exceeding 0.6 and Q2 values generally above 0.5, it can be concluded that these models are effective and not overfitting. Although exceptionally high predictive accuracy for HER, UTS, and TE (particularly TE) may not have been achieved, reasonably reliable estimates of these properties are provided by prediction models.

Although the SR models didn’t outperform other ML techniques, they provide explicit expressions that offer valuable insights into the relationships between compositional and microstructural features with mechanical properties microstructural features and mechanical properties. The SR-derived equations reveal that the interaction between compositions, phase fractions and HER is complex and nonlinear, rather than being determined by a single factor or following simple monotonic relationships.

Feature importance and structure-property relationships

SHAP was employed to evaluate the influence and importance of each input variable in predicting HER, UTS and TE, as shown in Fig. 4. This analysis helps uncover the relationships between these properties and bainite volume fraction, carbon content (C), and chromium content, providing insights beyond the complex models of SVM and XGBoost, as well as the intricate mathematical expressions of SR.

The SHAP analysis revealed different patterns for each mechanical property. For HER, VB is considered as the most significant promoting factor, in agreement with previous studies63 which suggested that increased VB improved hole expansion performances of multi-phase steels. In contrast, CE and VM were identified as critical depressing factors, consistent with findings64 that higher VM of DP steels can induce cracks and lower HER values due to the difference in deformation capacity between soft ferrite and hard martensite. Regarding UTS, C and VM were found to be the leading promoting factor, corroborating earlier research64 that higher VM led to higher UTS of DP steels. Interestingly, VB appeared as a significant depressing factor for UTS, which, while seemingly contradictory to some studies on ferrite-bainite steels65, may be explained by the complex interactions in multi-phase steels. For TE, Cr was identified as the most significant promoting factor, while CE emerged as the most significant depressing factor.

Multi-objective optimization and practical implications

The MOO of HER, UTS and TE using the R-NSGA-III algorithm offered opportunity for enhancing comprehensive mechanical behavior of AHSS. The selection of aspiration points based on initial R-NSGA-III results proved crucial in generating superior optimal solutions. Further refinement through domain knowledge and entropy-weighted TOPSIS analysis enhanced the practicality and comprehensiveness of the final solutions. Figure 5a demonstrates the effectiveness of the optimization approach, with optimized solutions (red points) showing consistently higher values for HER, UTS, and TE compared to the original data. However, the distributions in Figs. 5b-d reveal important trade-offs among these properties. The negative relationships observed, particularly strong between HER and UTS, moderate between TE and UTS, and weak between HER and TE, underscore the challenges in simultaneously maximizing all three properties. The inverse relationship between HER and UTS can be attributed to the reduced energy absorption capacity of steels with higher UTS, leading to lower HER values66. This trade-off is a critical consideration in AHSS design.

Guided by industrially experienced selected (IES) steel conditions (UTS × TE ≥ 22,000 MPa × % and UTS ≥ 980 MPa), 60 optimized steel conditions (Fig. 6) were identified, which met these criteria. These steel conditions generally exhibited lower HER values compared to the broader set of optimized points, consistent with the observed property trade-offs. Notably, most of these steel conditions featured low bainite (VB) content and high carbon equivalent (CE) content, aligning with the SHAP analysis results shown in Fig. 4b, e and h.

Further refinement using entropy-weighted TOPSIS allowed for identifying the five representative optimal steel conditions, as shown in Table 2. These conditions exhibited a trade-off relationship between values of HER and TE. Specifically, the optimzied steel conditions have the highest HER from 110.1 to 119.8%, and much lower UTS from 1009.5 MPa to 1032.8 MPa, as well as much lower TE from 22.6 to 24.2%, respectively. These steel conditions have much lower concentration of Si and relatively lower Cr. Their major phase is bainite, followed by martensite and ferrite. These phase percentages largely conform to the composition- microstructure-property relationship revealed by SHAP analysis.

Method

Data collection

In this work, 212 steel conditions were investigated, forming 212 datasets which are listed in Table S1. These datasets were obtained from earlier research. Each dataset contained 29 features, including compositions, microstructure characteristics and mechanical properties. The compositions include Fe, Nb, Ni, P, N, B, S, V, Ti, Cu, Mo, Al, C, CE, Mn, Si and Cr. The microstructural features consisted of dF, VF, VB, VM, and VTM. Concerning mechanical properties, HER, yield strength (YS), UTS, uniform elongation (UE), post uniform elongation (PUE), and TE were collected. Among the 212 datasets, only 28 were complete datasets, while others had varying degrees of missing data. The values of HER, UTS and TE were provided in each dataset. The presence of missing data in the majority of datasets highlights the significance of employing missing data analysis and imputation methods to ensure the robustness and reliability of the derived models.

Missing value imputation

Missing value analysis

The missing value analysis was performed on the acquired experimental data using the missing value analysis function in Statistical Package for the Social Sciences (SPSS) to identify the percentages of variables (features, such as microstructure characteristics and mechanical properties), datasets (steel conditions), and values containing missing values, as seen in Fig. 7a. From Fig. 7a, the missing rates of variables, datasets and values are 89.66%, 86.79% and 24.46%, respectively.

Additionally, the md.pattern() function from the multivariate imputation by chained equations (MICE) package in R was employed to analyze the missing value patterns. Figure 7b show the percentage of missing values in each variable and the missing value patterns. From Fig. 7b, the missing values follow non-monotone patterns, since some missing value blocks are above non-missing value blocks, indicating that missingness of certain variables does not lead to the missingness of all subsequent variables.

The theory of missing value mechanisms was derived from the classification framework developed by Rubin67. Missing value mechanisms include missing completely at random (MCAR), missing at random missing (MAR), and missing not at random (MNAR)68. Specifically, MCAR indicates that the probability of missingness is unrelated to both observed and unobserved values in the datasets. MAR means that the probability of missingness depends only on observed values, but not on unobserved values. Furthermore, MNAR arises when the probability of missingness is associated with unobserved values. In order to explore the missing value mechanism of the datasets in this study, the expectation maximum (EM) function in the SPSS multiple imputation options was chosen, generating Little’s MCAR chi-square test which helps determine whether the missing value is completely at random. In this work, the significance value of the MCAR test was 0.00, which was lower than 0.05, indicating that the missing data was not completely at random and may be related to other observed or unobserved values69 in the 212 datasets. However, distinguishing between MAR and MNAR is not feasible through statistical tests alone, and several suggestions on this case were provide by the literature70,71. Cummings70 suggested that the assumption of MAR could be reasonable if the datasets are properly sampled. Also, Pedersen et al.71 proposed that the assumption of MAR is more plausible when more variables are integrated into the imputation model than the analysis model. Therefore, the missing value mechanism for current 212 datasets can be considered MAR.

Missing value analysis. (a) Percentage of variables, datasets and values with missing values. (b) Percentage of missing values for each variable, arranged in ascending order along with patterns of missing data across the dataset, illustrating the distribution and co-occurrence of incomplete information.

Multivariate imputation by chained equations (MICE)

MICE was employed to handle missing data in the datasets consisting of 212 steel conditions. Figure 8represents the schematic illustration of MICE algorithm. In this iterative method, each missing value was initially filled with the mean of its corresponding variable (feature). Then, the imputed values for one variable were temporarily reset to missing. A prediction model was trained using the observed values of this variable as the dependent variable and all other features as independent variables. After that, the missing values of the variable were replaced with estimates from the prediction model. These steps were repeated for all features containing missing values, completing one iteration. The entire process was repeated for multiple iterations to achieve stable imputations72.

Three MICE methods were utilized, such as MLR, Lasso, and Ridge regression. The termination criterion for these methods was set as a convergence level where the variation between prior and posterior estimations became smaller than 10−3 times the variation of the corresponding variables within the complete (pre-existing) values. Given that microstructural features and mechanical properties are inherently positive, a post-processing step was implemented to convert any negative estimates to zero after each iteration.

Cross-validation tests were conducted to determine the optimal MICE method. Specifically, the observed values in the target features containing missing values were randomly divided into 10 groups. Ten distinct datasets were generated by sequentially replacing one group of observed values with missing values. Each of three MICE methods was applied to these 10 artificially incomplete datasets. The imputed values corresponding to the intentionally hidden observed values were compared with the real values, quantified by Q2 metric. To filter the most valuable features for further ML prediction, we tried cross-validation tests on all variables, and select the variables with highest Q2, which indicates that these variables are highly associated. As a result, 5 compositions, C, CE, Mn, Si and Cr, and four microstructural features, VF, VB, VM, and VTM, are selected to conduct further MICE cross validation and ML fitting.

Upon selection and application of the best-performing MICE method to the 212 steel datasets, a complete dataset suitable for subsequent regression analysis was obtained.

Schematic illustration of MICE algorithm for a dataset with features A, B, and C. The process begins with mean imputation to fill missing values (step i). Next, missing values in feature A are imputed using a regression model (A ~ B + C) to predict and replace them (steps ii and iii). This process is repeated for features B and C (step iv). The algorithm iterates through these steps until a convergence criterion is met, after which the imputation process terminates (step v).

Regression

Support vector machine (SVM)

SVM is a powerful ML algorithm used for both classification and regression tasks73. Figure 9 shows the schematic representation of the SVM structure. For regression, SVM is known as support vector regression (SVR) and this algorithm aims to find a function f(x) that minimizes \(\:\epsilon\:\)deviations from actual target values for all training data, while maintaining simplicity74. The linear SVR function can be expressed by Eq. (6)75,

where, w, x and b denote the weight vector, input vector and bias term, respectively. SVM offers advantages over traditional neural networks, particularly in achieving globally optimal results76. While SVM may not provide explicit interpretations of underlying mechanisms, it offers a robust approach for the regression task in materials science. The performance of SVR relies heavily on parameter optimization75. Key factors include the selection of kernel function type and its parameters, which determine the quality of data projections and regression75. Additionally, the regulation parameter C and error magnitude ε play crucial roles in defining the optimal function75. The optimization problem for SVR can be formulated as Eq. (7)75,

where, \(\xi_{\text{I}}\) and \(\xi_{\text{i}}^{\text{*}}\) are slack variables.

Common kernel functions include linear, polynomial, radial basis function (RBF), and sigmoid, with nonlinear functions often better suited to capture complex interactions in real-world problems76. In this study, the RBF was employed as the kernel function for our SVM model. Since it was chosen for its constrained complexity, which enhances the model’s generalization performance76. The corresponding SVM regression f and its RBF kernel K is given by Eq. (8)77,

where, \(\:{{\upalpha\:}}_{\text{I}}\) are Lagrangian multipliers and \(\:{\upalpha\:}\:=\:{\left[{{\upalpha\:}}_{1},\:{{\upalpha\:}}_{2},\:\cdots\:,\:{{\upalpha\:}}_{\text{n}}\right]}^{\text{T}}\). Moreover, this selection is based on a thorough parameter optimization process, ensuring the best fit for the specific problem of predicting stretch-flangeability of AHSS.

Schematic representation of the SVM structure. The architecture includes an input layer, a hidden layer, and an output layer. The input layer accepts variables X₁, X₂, …, Xn. In the hidden layer, kernel functions K(X₁, X), K(X₂, X), …, K(Xn, X) map the input variables to a higher-dimensional space. The output layer combines the kernel results through weighted linear combinations and a bias term (b), producing the final output as the sum of all weighted kernel functions and the bias.

Symbolic regression (SR)

SR is an advanced ML method that explores explicit mathematical relationships between variables. The schematic illustration of a SR architecture with multiple layers was indicated in Fig. 10. Unlike traditional approaches such as random forest, SR provides researchers with optimal combinations of mathematical operators, variables, and constants to characterize data78. The general form of an SR model can be expressed as Eq. (9):

where, y is dependent variable (i.e., HER, UTS and TE), and x1, x2, …, xnare independent variables (microstructure features). SR is further enhanced through the application of genetic programming (GP), a technique inspired by biological evolution. GP in SR employs strategies of reproduction, mutation, and survival of the fittest to generate and refine populations of mathematical formulas79.

In this work, the Genetic Programming Toolbox for the Identification of Physical Systems, version 2 (GPTIPS2), developed on the MATLAB platform, was utilized. GPTIPS2 implements a Multigene Genetic Programming (MGGP) algorithm, which offers significant advantages over simple SR in terms of flexibility and predictive performance80. The MGGP model can be expressed as Eq. (10)80,

where, a0 represents a bias term, ai denotes the ith scaling coefficient, and gi(x) denotes the ith nonlinear transformation of independent variables (genes). Each gene is an independent SR model, the typically of the form is given by Eq. (11)80,

where, f is a nonlinear function discovered by the GP process, and k<< n(the number of independent variables). GPTIPS2 can identify relatively important variables when presented with a large number of independent variables, contributing to both predictive accuracy and expression simplicity80. The importance of each variable can be quantified using techniques such as frequency of occurrence in the best models or sensitivity analysis. These features make GPTIPS2 particularly suitable for the complex materials science application, where multiple microstructural characteristics may influence stretch-flangeability in non-linear ways.

Schematic representation of a Symbolic Regression (SR) architecture with multiple layers. The structure includes an input layer, several hidden layers, and an output layer. The input layer takes features X₁, X₂, …, Xn, along with a constant term C. The hidden layers perform mathematical operations such as addition, subtraction, logarithms, and identity functions on the input features or outputs from previous layers. The output layer combines the results from the final hidden layer to produce the final prediction.

Extreme gradient boosting (XGBoost)

XGBoos is an advanced gradient boosting framework well-known for its efficiency and wide applicability among ML approaches81. Figure 11shows the schematic illustration of the XGBoost algorithm. XGBoost is fundamentally based on ensemble learning, especially decision tree ensembles, where the final prediction consists of multiple tree outputs. The algorithm’s core strength stems from its utilization of a second-order Taylor expansion of the loss function82. This approach, known as gradient boosting, iteratively refines the model by introducing new trees that address residual errors from previous iterations82. The objective function of XGBoost, which the algorithm seeks to minizine, is given by Eq. (12)81,

where, yi represents the ith observed dependent variable, \(\:{\widehat{{\text{y}}_{\text{i}}}}^{\text{(t-1)}}\) denotes the ith prediction from the previous iteration, xi is the ith independent variable, l means the loss function quantifying prediction error between yi and \(\:{\widehat{{\text{y}}_{\text{i}}}}^{\text{(t-1)}}\), ft is greedy function to minimize the objective, and Ω represents the penalty function for reducing the complexity of the model. Also, the second order Taylor expansion function for \(L\varnothing\) is formulated as Eq. (13)81,82,

where, gi and hi are the first and second derivatives of the loss function with respect to \(\:{\widehat{{\text{y}}_{\text{i}}}}^{\text{(t-1)}}\), respectively.

XGBoost’s advanced features make it particularly suitable for complex materials science applications. XGBoost’s ability to handle sparse datasets is particularly valuable through the integration of tree nodes’ indication, allowing the algorithm to efficiently process and learn from incomplete datasets often encountered in materials characterization. To enhance model generalization and prevent overfitting, XGBoost implements a penalty on model complexity. Furthermore, XGBoost employs cache-aware strategies to optimize memory usage and reduce computation time81. A key strength of XGBoost lies in its ability to approach target values both exactly and approximately81. This flexibility enhances its predictive capabilities across various AHSS compositions and processing conditions, capturing both linear and non-linear relationships between microstructural features and stretch-flangeability.

Schematic illustration of the XGBoost algorithm. This process begins with an initial prediction of \(\:{\widehat{\text{y}}}^{\left(0\right)}=0\), and sequentially builds decision trees to minimize the loss function \(\:\text{L}\), which combines prediction error \(\:\text{l}\)(from \(\:\text{l}({\text{y}}^{\left(0\right)},\:{\widehat{\text{y}}}^{\left(0\right)}),\:\text{l}({\text{y}}^{\left(1\right)},\:{\widehat{\text{y}}}^{\left(1\right)})\:\)… to \(\:\text{l}({\text{y}}^{\left(\text{K}\right)},\:{\widehat{\text{y}}}^{\left(\text{K}\right)})\)) and a regularization term Ω. Each tree \(\:{\text{f}}_{\text{k}}\left({\text{x}}_{\text{i}}\right)\) is built to predict the residuals of the previous iteration, with the final output being the summation of contributions from all trees. This iterative process would continue until convergence, with validation of test set ensuring the model’s generalization.

Shapley additive explanations (SHAP)

ML models, including SVM, SR, and XGBoost, have excellent capability of extracting effective information from known data and predicting unexplored data. However, SVM and XGBoost have obscure internal structures and low interpretability, and SR models typically produce complex nonlinear mathematical expressions. To address this limitation, SHAP was utilized in this study. SHAP is an effective approach to explain the impact and importance of each input feature and outcome property. Specifically, SHAP employs a linear addition of input features to interpret the attributions of each feature across all data samples in Eq. (14)83.

where, \(\:\text{f}\left(\text{x}\right)\)indicates the original model, \(\:{x}^{{\prime\:}}\) means the binary simplification of input features x, where 1 indicates the feature is used and 0 indicates it is not, and \(\:g\left({x}^{{\prime\:}}\right)\) represents the explanation model approaching the output of \(\:\text{f}\left(\text{x}\right)\), p is the number of input features, \(\:{{\upphi\:}}_{0}\) represents a constant, and \(\varphi_{\text{I}}\) indicates the contribution of feature \(\:{\text{x}}_{\text{i}}\). The contribution of each feature xi towards the model f(xi) is denoted by \(\:{{\upphi\:}}_{\text{I}}\), calculated by Eq. (15)83,

where, \(f_x(z^{\prime})\) is equal to \(\:\text{f}\text{(z)}\), with \(\:\text{z}\)’ being the binary simplification of z. The resulting \(\:{{\upphi\:}}_{\text{I}}\)values equivalent to Shapley values in game theory, representing each input feature’s contribution to the outcome property, when multiple features are involved. SHAP assesses the importance of each input feature by altering input values and calculating the sensitivity of predicted outcomes’ errors. Particularly, features that cause larger deviations in prediction errors are considered more important84,85.

Multi-objective optimization (MOO)

A MOO approach was also employed in this study to address the challenge of simultaneously optimizing multiple, often conflicting material properties of AHSS86. This approach is particularly relevant in materials science, where improving one property may come at the expense of another. For instance, maximizing both UTS and TE in DP steels often involves trade-offs.

The MOO framework developed in this study incorporated estimates for HER, UTS and TE, Specifically, the ML models with the best predicting performance are selected in MOO, namely, SVM model for HER and TE, and XGBoost model for UTS. The relevant constraints are taken into consideration. These constraints ensured all variables were non-negative, the acceptable ranges for C, CE, Mn, Si, and Cr are 0.05–0.25 wt.%, 0–0.8 wt.%, 0.3–3 wt.%, 0.1–2 wt.% and 0.4–1wt.%, respectively, and that the sum of volume percentages of different phases (ferrite (VF), bainite (VB), martensite (VM), and tempered martensite (VTM)) equaled 100%, with tolerance limit of ± 0.1%. The optimization process aimed to identify a Pareto front, comprising non-dominated solutions while improving one objective necessarily compromised another87.

To achieve this, the R-NSGA-III, an advanced evolutionary algorithm designed for MOO, was applied. R-NSGA-III is developed based on NSGA-III, with a distinct reference points generation strategy that based on aspersion that user provides88. In this study, the aspersion points (ref_points) were developed based on the 212 real steel conditions collected from literature, with population size per reference point (pop_per_ref_point) of 153, selection scale (mu) of 0.9, and maximum generation of 100 to ensure better MOO performances.

The R-NSGA-III process started with a randomly generated population. Then it calculated the intercepts of the unit hyperplane with the normalized aspiration points. After that, the Das and Dennis points were generated on the unit hyperplane, which were subsequently adjusted to create reference points. The iterative process involves normalizing the population, assigning solutions to reference directions, selecting solutions, performing genetic operations, and generating offspring. This iteration continues until a threshold condition is met, at which point the final optimal points are determined88.

Regression model evaluation metrics

In order to evaluate the performance of the regression models, several critical metrics were employed, such as the total summation of variances (TSS), summation of residual squares (RSS) and summation of squared predictive residuals (PRESS). These metrics were calculated using real values (yi), predicted values in the training set (\(\:{\widehat{\text{y}}}_{\text{i}}\)) and predicted values in the test set \(\:{\widehat{\text{y}}}_{\text{i}\text{p}}\), and the mean value (\(\:\stackrel{\text{-}}{\text{y}}\)) of the dependent variable. The coefficient of determination, R2, measures how well the statistical model fits the data, which is given by Eq. (16)89,

R² represents the proportion of variance in the dependent variable that is predictable from the independent variables, ranging from\(-\infty\)to 189. Therefore, a higher R² value indicates a better fit of the regression model to the data, with R2 larger than 0.6 can be considered ideal90. Additionally, Q was employed to assess the model’s predictive performance. Q2 is calculated similarly to R² but used the predicted values from the test set. The value of Q² can be calculated by Eq. (17)91,

Q² is ranging from \(-\infty\)to 1, and a higher Q² value suggests better predictive capability of the model for new, unseen data91. Q² can be interpreted as a cross-validated R² and is particularly useful for assessing the generalizability of models. Q2 larger than 0 means that the model has predictive power, and Q2 larger than 0.5 can be considered as an indication of high predictive ability92. To ensure accurate evaluation of models’ predictive abilities, a 10-fold cross-validation strategy was applied. This approach provides a robust assessment of the model’s performance across different subsets of the data, helping to mitigate overfitting and providing a more reliable estimate of the model’s predictive power93.

Conclusions

In this study, a novel ML framework was established, enabling accurate prediction and optimization of AHSS stretch-flangeability based on composition-microstructure-property correlations. The significant findings of our work are listed as follows.

-

1.

A diverse dataset from 212 steel conditions were collected, ensuring the generalizability of our findings across various AHSS grades. The dataset was carefully analyzed, and missing values were imputed using MICE with Ridge regression, ensuring high imputation quality (average Q2 of 0.9560).

-

2.

Advanced ML models were employed in this work, including SVM, SR and XGBoost, to predict key mechanical properties such as HER, UTS and TE. The best-performing models achieved R2 values of 0.9518, 0.9987, and 0.8234 and Q2 of 0.8778, 0.8913, and 0.7181 for HER, UTS, and TE, respectively, demonstrating their ability to obtain complex composition-microstructure-property relationships.

-

3.

Interpretable insights into the importance of different microstructural features were provided using SHAP analysis. This analysis revealed that bainite volume fraction (VB) is the most significant promoting factor for HER; carbon content (C) and martensite volume fraction (VM) are the most critical factor for UTS; and chromium content (Cr) is the most influential factor for TE.

-

4.

Multi-objective optimization was conducted using the reference point based non-dominated sorting genetic algorithm III (R-NSGA-III), generating 252 optimized steel conditions with improved comprehensive mechanical properties. The optimization results revealed important trade-offs among HER, UTS, and TE, particularly a strong inverse relationship between HER and UTS.

-

5.

Five representative optimal steel conditions were determined using industrially relevant selection criteria, entropy-weighted TOPSIS and objective-wise analysis. These conditions are characterized by a composition of 0.12–0.13 wt.% C, 0.42–0.47 wt.% Cr, 0.69–1.17 wt.% Mn, and 0.10–0.21 wt.% Si, with a carbon equivalent of 0.40–0.44 wt.%. The microstructural features predominantly consist of bainite (52.7–70.9%) and martensite (24.2–32.0%), with smaller proportions of ferrite (2.9–12.9%) and tempered martensite (0.1–8.3%). These optimized conditions demonstrate a desirable combination of mechanical properties, including HER ranging from 110.1% to 119.8%, UTS from 1009.5 MPa to 1032.8 MPa, and TE from 22.6% to 24.2%, providing valuable guidelines for industrial applications.

Data availability

The data used in this study, consisting of 212 AHSS datasets collected from previously published literature, have been compiled and made available in the Supplementary File. The source data underlying visualizations presented in Figs. 2a-i, 3a-i, 4a-i and 5a-d, and 6, have been deposited in a Figshare repository under https://doi.org/10.6084/m9.figshare.27894048.

Code availability

The codes utilized in this work are available from the corresponding author upon reasonable request.

References

Cui, J., Li, K., Yang, Z., Wu, Z. & Wu, Y. Investigation of effects of aluminum additions on microstructural evolution and mechanical properties of Ultra-High strength and vanadium bearing Dual-Phase steels. J. Mater. Res. Technol. 31, 1117–1131 (2024).

Matlock, D. K. & Speer, J. G. Third generation of AHSS: microstructure design concepts. in Microstruct. Texture Steels Other Mater. 185–205 (2008).

Rashid, M. S. Dual phase steels. Annu. Rev. Mater. Sci. 11, 245–266 (1981).

Chang, Y. et al. Revealing the relation between microstructural heterogeneities and local mechanical properties of Complex-Phase steel by correlative Electron microscopy and nanoindentation characterization. Mater. Des. 203, 109620 (2021).

Wu, Y., Uusitalo, J. & DeArdo, A. J. Investigation of effects of processing on stretch-flangeability of the ultra-high strength, vanadium-bearing dual-phase steels. Mater. Sci. Engineering: A. 797, 140094 (2020).

Wu, Y., Uusitalo, J. & DeArdo, A. J. Investigation of the critical factors controlling sheared edge stretching of ultra-high strength dual-phase steels. Mater. Sci. Engineering: A. 828, 142070 (2021).

Li, X., Ramazani, A., Prahl, U. & Bleck, W. Quantification of Complex-Phase steel microstructure by using combined EBSD and EPMA measurements. Mater. Charact. 142, 179–186 (2018).

Gong, Y., Hua, M., Uusitalo, J. & Deardo, A. J. Processing factors that influence the microstructure and properties of high-strength dual- phase steels produced using CGL simulations. in AISTech 2016 Proceedings 2779–2790 (2016).

Pathak, N., Butcher, C., Worswick, M., Bellhouse, E. & Gao, J. Damage evolution in Complex-Phase and Dual-Phase steels during edge stretching. Materials 10, 346 (2017).

Kim, S., Song, T., Sung, H. & Kim, S. Effect of Pre-Straining on high cycle fatigue and fatigue crack propagation behaviors of complex phase steel. Met. Mater. Int. 27, 3810–3822 (2021).

Erice, B., Roth, C. C. & Mohr, D. Stress-state and strain-rate dependent ductile fracture of dual and complex phase steel. Mech. Mater. 116, 11–32 (2018).

Yoon, J. I. et al. Key factors of stretch-flangeability of sheet materials. J. Mater. Sci. 52, 7808–7823 (2017).

Paul, S. K. A critical review on hole expansion ratio. Mater. (Oxf). 9, 100566 (2020).

ISO/TS 16630. Metallic Materials-Method of Hole Expanding Test (ISO, 2003).

Paul, S. K. Non-linear correlation between uniaxial tensile properties and shear-edge hole expansion ratio. J. Mater. Eng. Perform. 23, 3610–3619 (2014).

Comstock, R. J., Scherrer, D. K. & Adamczyk, R. D. Hole expansion in a variety of sheet steels. J. Mater. Eng. Perform. 15, 675–683 (2006).

Terrazas, O. R., Findley, K. O. & Van Tyne, C. J. Influence of martensite morphology on Sheared-Edge formability of Dual-Phase steels. ISIJ Int. 57, 937–944 (2017).

Chen, L. et al. Stretch-flangeability of high Mn TWIP steel. Steel Res. Int. 81, 552–568 (2010).

Sutton, C. et al. Identifying domains of applicability of machine learning models for materials science. Nat. Commun. 11, (2020).

Ramprasad, R., Batra, R., Pilania, G., Mannodi-Kanakkithodi, A. & Kim, C. Machine learning in materials informatics: recent applications and prospects. NPJ Comput. Mater. 3, 54 (2017).

Morgan, D. & Jacobs, R. Opportunities and challenges for machine learning in materials science. Annu. Rev. Mater. Res. 50, 71–103 (2020).

Wei, J. et al. Mach. Learn. Mater. Sci. InfoMat 1, 338–358 (2019).

Xiong, J., Zhang, T. & Shi, S. Machine learning of mechanical properties of steels. Sci. China Technol. Sci. 63, 1247–1255 (2020).

Lee, J. Y., Kim, M. & Lee, Y. K. Design of high strength Medium-Mn steel using machine learning. Mater. Sci. Engineering: A. 843, 143148 (2022).

Bhandari, U., Rafi, M. R., Zhang, C. & Yang, S. Yield strength prediction of High-Entropy alloys using machine learning. Mater. Today Commun. 26, 101871 (2021).

Ma, E., Shin, S. H., Choi, W., Byun, J. & Hwang, B. Machine learning approach for predicting the fracture toughness of Nb-Si based alloys. Int. J. Refract. Met. Hard Mater. 117, 106420 (2023).

Bao, H. et al. A Machine-Learning fatigue life prediction approach of additively manufactured metals. Eng. Fract. Mech. 242, 107508 (2021).

Wang, J., Fa, Y., Tian, Y. & Yu, X. A. Machine-Learning approach to predict creep properties of Cr–Mo steel with Time-Temperature parameters. J. Mater. Res. Technol. 13, 635–650 (2021).

Lee, J. A. et al. Influence of tensile properties on hole expansion ratio investigated using A generative adversarial imputation network with explainable artificial intelligence. J. Mater. Sci. 58, 4780–4794 (2023).

Li, W., Vittorietti, M., Jongbloed, G. & Sietsma, J. Microstructure–Property relation and machine learning prediction of hole expansion capacity of High-Strength steels. J. Mater. Sci. 56, 19228–19243 (2021).

Wu, Y. Processing-Microstructure-Property Relationship in the High Strength Dual-Phase Steels (University of Pittsburgh, 2021).

Gong, Y. The Mechanical Properties and Microstructures of Vanadium Bearing High Strength Dual Phase Steels Processed with Continuous Galvanizing Line Simulations (University of Pittsburgh, 2015).

Noder, J. et al. A comparative evaluation of Third-Generation advanced High-Strength steels for automotive forming and crash applications. Materials 14, 4970 (2021).

Taylor, M. D., De Moor, E., Speer, J. G. & Matlock, D. K. Effects of constituent hardness on formability of dual phase steels. Steel Res. Int. 92, 1–13 (2021).

Madrid, M. et al. Effects of testing method on Stretch-Flangeability of Dual-Phase 980/1180 steel grades. JOM 70, 918–923 (2018).

Kumar Akela, A., Satish Kumar, D. & Balachandran, G. Hole expansion test and characterization of High-Strength Hot-Rolled steel strip. Trans. Indian Inst. Met. 75, 625–633 (2022).

Park, J., Won, C., Lee, H. J. & Yoon, J. Integrated machine vision system for evaluating hole expansion ratio of advanced High-Strength steels. Materials 15, 553 (2022).

Kim, J. H., Lee, T. & Lee, C. S. Microstructural influence on stretch flangeability of Ferrite–Martensite Dual-Phase steels. Cryst. (Basel). 10, 1022 (2020).

Suppan, C., Hebesberger, T., Pichler, A., Rehrl, J. & Kolednik, O. On the microstructure control of the bendability of advanced high strength steels. Mater. Sci. Engineering: A. 735, 89–98 (2018).

Kim, J. H. et al. Prediction of hole expansion ratio for various steel sheets based on uniaxial tensile properties. Met. Mater. Int. 24, 187–194 (2018).

Yoon, J. I., Jung, J., Lee, H. H., Kim, J. Y. & Kim, H. S. Relationships between Stretch-Flangeability and Microstructure-Mechanical properties in Ultra-High-Strength Dual-Phase steels. Met. Mater. Int. 25, 1161–1169 (2019).

Shih, H. C. & Shi, M. F. An innovative shearing process for AHSS edge stretchability improvements. J. Manuf. Sci. Eng. 133, (2011).

Narayanan, A., Abedini, A., Khameneh, F. & Butcher, C. An experimental methodology to characterize the uniaxial fracture strain of sheet metals using the conical hole expansion test. J. Mater. Eng. Perform. 32, 4456–4482 (2023).

Takahashi, Y., Kawano, O., Tanaka, Y., Ohara, M. & Ushioda, K. Analysis of governing factors of stretch Flange-Ability of Hot-Rolled high strength steels on the basis of fracture mechanics. Tetsu-to-Hagane 98, 378–387 (2012).

Pathak, N., Butcher, C. & Worswick, M. Assessment of the critical parameters influencing the edge stretchability of advanced High-Strength steel sheet. J. Mater. Eng. Perform. 25, 4919–4932 (2016).

Taylor, M. D. et al. Correlations between nanoindentation hardness and macroscopic mechanical properties in DP980 steels. Mater. Sci. Engineering: A. 597, 431–439 (2014).

Yoon, J. I., Jung, J., Ryu, J. H., Lee, K. & Kim, H. S. Development of methodology with excellent reproducibility for evaluating Stretch-Flangeability using a Sheared-Edge tensile test. Exp. Mech. 57, 1349–1358 (2017).

Lee, J., Lee, S. J. & De Cooman, B. C. Effect of micro-alloying elements on the stretch-flangeability of dual phase steel. MATERIALS SCIENCE AND ENGINEERING A-STRUCTURAL MATERIALS PROPERTIES MICROSTRUCTURE AND PROCESSING 536, 231–238 (2012).

Lee, J., Lee, M., Do, H., Kim, S. & Kang, N. Effect of the tempered martensite matrix and granular bainite on Stretch-Flangeability for 980 mpa Hot-Rolled steel. Korean J. Met. Mater. 52, 113–121 (2014).

Matsuda, H. et al. Effects of Auto-Tempering behaviour of martensite on mechanical properties of ultra high strength steel sheets. J. Alloys Compd. 577, S661–S667 (2013).

Apimonton, C., Sungthong, C., Luksanayam, S., Suranuntchai, S. & Uthaisangsuk, V. Effects of bainitic phase on mechanical properties of Bainite – Aided multiphase steels. Steel Res. Int. 88, 1–12 (2017).

Hasegawa, K., Kawamura, K., Urabe, T. & Hosoya, Y. Effects of microstructure on Stretch-flange-formability of 980 mpa grade Cold-rolled ultra high strength steel sheets. ISIJ Int. 44, 603–609 (2004).

Qian, J. & Yue, Y. Factors influencing dual phase steel flanging limit punching. J. Iron. Steel Res. Int. 21, 1124–1128 (2014).

Casellas, D. et al. Fracture toughness to understand Stretch-Flangeability and edge cracking resistance in AHSS. Metall. Mater. Trans. A. 48, 86–94 (2017).

Hourman, T. Press forming of high strength steels and their use for safety parts. Revue De Métallurgie. 96, 121–132 (1999).

Miranda, S. et al. Assessment of scatter on material properties and its influence on formability in hole expansion. Proc. Institution Mech. Eng. Part. L: J. Materials: Des. Appl. 235, 1262–1270 (2021).

Behrens, B. A. et al. Improving hole expansion ratio by parameter adjustment in abrasive water jet operations for DP800. SAE Int. J. Mater. Manuf. 11 (11-), 05 (2018).

Hance, B. M. Practical application of the hole expansion test. SAE Int. J. Engines 10, (2017). 2017-01-0306.

Shih, H. C., Zhou, D. & Konopinski, B. Effects of punch configuration on the AHSS edge stretchability. SAE Int. J. Engines. 10, 2017–2001 (2017).

Dlouhy, J., Podany, P. & Dzugan, J. Copper-Induced strengthening in 0.2 C bainite steel. Materials 14, 1962 (2021).

Graux, A. et al. Design and development of complex phase steels with improved combination of strength and Stretch-Flangeability. Met. (Basel). 10, 824 (2020).

Talaş, Ş. The assessment of carbon equivalent formulas in predicting the properties of steel weld metals. Mater. Des. (1980–2015). 31, 2649–2653 (2010).

Fonstein, N., Jun, H. J., Huang, G., Sriram, S. & Yan, B. Effect of bainite on mechanical properties of multiphase ferrite-bainite-martensite steels. in Materials Science and Technology Conference and Exhibition MS&T’11 vol. 1 634–641 (2011). (2011).

Davies, R. G. Influence of martensite composition and content on the properties of dual phase steels. Metall. Trans. A. 9, 671–679 (1978).

Zhang, X., Gao, H., Zhang, X. & Yang, Y. Effect of volume fraction of bainite on microstructure and mechanical properties of X80 pipeline steel with excellent deformability. Mater. Sci. Engineering: A. 531, 84–90 (2012).

Chen, X., Jiang, H., Cui, Z., Lian, C. & Lu, C. Hole expansion characteristics of ultra high strength steels. Procedia Eng. 81, 718–723 (2014).

RUBIN, D. B. Inference and missing data. Biometrika 63, 581–592 (1976).

Lin, W. C. & Tsai, C. F. Missing value imputation: A review and analysis of the literature (2006–2017). Artif. Intell. Rev. 53, 1487–1509 (2020).

Li, C. Little’s test of missing completely at random. Stata Journal: Promoting Commun. Stat. Stata. 13, 795–809 (2013).

van Buuren, S. & Groothuis-Oudshoorn, K. MICE: multivariate imputation by chained equations in R. J. Stat. Softw. 45, 1–67 (2011).

Pedersen, A. et al. Missing data and multiple imputation in clinical epidemiological research. Clin. Epidemiol. Volume. 9, 157–166 (2017).

Schuetz, C. G. Using neuroimaging to predict relapse to smoking: role of possible moderators and mediators. Int. J. Methods Psychiatr Res. 17 (Suppl 1), S78–S82 (2008).

Vapnik, V. N. The Nature of Statistical Learnig Theory (Spriner, 1995).

Smola, A. Regression Estimation with Support Vector Learning Machines (Technische Universitat Munchen, 1996).

Onyelowe, K. C., Gnananandarao, T. & Ebid, A. M. Estimation of the erodibility of treated unsaturated lateritic soil using support vector machine-polynomial and -radial basis function and random forest regression techniques. Clean. Mater. 3, 100039 (2022).

Ramedani, Z., Omid, M., Keyhani, A., Shamshirband, S. & Khoshnevisan, B. Potential of radial basis function based support vector regression for global solar radiation prediction. Renew. Sustain. Energy Rev. 39, 1005–1011 (2014).

Wang, J., Chen, Q. & Chen, Y. RBF Kernel Based Support Vector Machine with Universal Approximation and Its Application. in Advances in Neural Networks - International Symposium on Neural Networks 2004 (eds. Yin, F., Wang, J. & Guo, C.) vol. 3173 512–517Springer, Berlin Heidelberg, Germany, (2004).

Wang, Y., Wagner, N. & Rondinelli, J. M. Symbolic regression in materials science. MRS Commun. 9, 793–805 (2019).

Koza, J. R. Genetic programming as a means for programming computers by natural selection. Stat. Comput. 4, 87–112 (1994).

Searson, D. P. GPTIPS 2: an Open-Source software platform for symbolic data mining. in Handbook of Genetic Programming Applications (eds Gandomi, A. H., Alavi, A. H. & Ryan, C.) 551–573 (Springer, doi:https://doi.org/10.1007/978-3-319-20883-1. (2015).

Chen, T., Guestrin, C. & XGBoost: A Scalable Tree Boosting System. in Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining vol. 42 785–794ACM, New York, NY, USA, (2016).

Zhong, J. et al. XGBFEMF: an XGBoost-Based framework for essential protein prediction. IEEE Trans. Nanobiosci. 17, 243–250 (2018).

Lundberg, S. M. & Lee, S. I. A Unified Approach to Interpreting Model Predictions. in 31st Conference on Nuural Information Processing System (NIPS 4768–4777 (Curran Associates Inc., Long Beach, CA, USA, 2017). (2017).

Shapley, L. A value for n-Person games. Contrib. Theory Games. 2, 307–317 (1953).

Roth, A. E. Introduction to the Shapley value. In The Shapley Value: Essays in Hour of Lloyd S. Shpely (ed. Roth, A. E.) 1–27 (Cambridge University Press, 1988).

Gunantara, N. A review of multi-objective optimization: methods and its applications. Cogent Eng. 5, 1502242 (2018).

Simon, D. Evolutionary Optimization Algorithms: Biologically-Inspired and Population-Based Approaches To Computer Intelligence (WILEY, 2013).

Vesikar, Y., Deb, K. & Blank, J. Reference Point Based NSGA-III for Preferred Solutions. in IEEE Symposium Series on Computational Intelligence (SSCI) 1587–1594 (IEEE, 2018). (2018). https://doi.org/10.1109/SSCI.2018.8628819

Chicco, D., Warrens, M. J. & Jurman, G. The coefficient of determination R-squared is more informative than SMAPE, MAE, MAPE, MSE and RMSE in regression analysis evaluation. PeerJ Comput. Sci. 7, e623 (2021).

Veerasamy, R. et al. Validation of QSAR Models-Strategies and importance. Int. J. Drug Des. Discovery 2, (2011).

Consonni, V., Ballabio, D. & Todeschini, R. Comments on the definition of the Q2 parameter for QSAR validation. J. Chem. Inf. Model. 49, 1669–1678 (2009).

Consonni, V., Ballabio, D. & Todeschini, R. Evaluation of model predictive ability by external validation techniques. J. Chemom. 24, 194–201 (2010).

Berrar, D. & Cross-Validation Encyclopedia Bioinf. Comput. Biology 1–3, 542–545 (2019).

Acknowledgements

This work was supported by Sichuan Science and Technology Program (grant numbers 2023ZYD0136, 2024 NSFSC0952).

Author information

Authors and Affiliations

Contributions