Abstract

Deep learning has been used for automatic planning of radiotherapy targets, such as inferring the clinical target volume (CTV) for a given new patient. However, previous deep learning methods mainly focus on predicting CTV from CT images without considering the rich prior knowledge. This limits the usability of such methods and prevents them from being generalized to larger clinical scenarios. We propose an automatic CTV delineation method for cervical cancer based on prior knowledge of anatomical relationships. This prior knowledge involves the anatomical position relationship between Organ-at-risk (OAR) and CTV, and the relationship between CTV and psoas muscle. First, our model proposes a novel feature attention module to integrate the relationship between nearby OARs and CTV to improve segmentation accuracy. Second, we propose a width-driven attention network to incorporate the relative positions of psoas muscle and CTV. The effectiveness of our method is verified by conducting a large number of experiments in private datasets. Compared to the state-of-the-art models, our method has obtained the Dice of 81.33%±6.36% and HD95 of 9.39mm±7.12mm, and ASSD of 2.02mm±0.98mm, which has proved the superiority of our method in cervical cancer CTV delineation. Furthermore, experiments on subgroup analysis and multi-center datasets also verify the generalization of our method. Our study can improve the efficiency of automatic CTV delineation and help the implementation of clinical applications.

Similar content being viewed by others

Introduction

Cervical cancer is a major health problem for women worldwide, ranking among the top three in morbidity and mortality among all female cancers1. Radiotherapy, especially intensity-modulated radiation therapy (IMRT), is critical for the treatments of cervical cancer2. For patients with adverse pathologic findings, adding radiotherapy helps to reduce the risk of biochemical recurrence, local recurrence, and clinical progression of cancer over surgery alone. For radiotherapy of cervical cancer, precisely contouring the clinical target volume (CTV) to be irradiated is challenging3. CTV is a virtual volume encompassing areas that may contain microscopic tumor cells, not an expansion of a macroscopic or visible tumor volume. Consequently, segmenting the CTV is much more challenging than segmenting typical organs or gross target volumes (GTVs). According to the published consensus guidelines, the CTV’s borders are usually based on anatomical landmarks4. Physicians must consider not only published guidelines, but individual patient characteristics, variability in anatomy, and co-morbidities, when segmenting the CTV. Accurately segmenting the CTV is crucial for irradiating the microscopic spread of tumor cells sufficiently while mitigating the side effects of radiation therapy to surrounding Organs-at-risk (OARs)5.

In recent years, a large number of works have been proposed for CTV segmentation6,7,8,9. These methods can be divided into traditional methods based on hand-crafted features and deep learning methods based on Convolutional Neural Networks (CNN). Among traditional image segmentation methods, atlas-based methods are considered the suited for solving this problem10. However, these methods have not produced satisfactory results6. Atlas-based methods were observed to be effective for segmenting high-contrast organs on CT but insufficient for a structure like CTV that mainly consists of soft tissues11. Some deep learning-based studies have been also proposed for CTV segmentation7,8,9,12,13,14,15. However, these representative works only directly used deep neural networks such as U-Net to segment CTV without introducing their unique characteristics. CTV is a kind of virtual volume whose boundaries are not defined by the grayscale variation in the images, and the CTV is not an expansion of the GTV, leading to unclear boundaries. When CNN encounters the CTV areas without obvious boundaries, the CNN-based CTV segmentation methods will not follow the anatomical structure, resulting in a significant decrease in CTV segmentation performance9,16. For the delineation of CTV, it is necessary to consider the introduction of some prior anatomical knowledge.

For cervical cancer, the delineation of CTV is closely related to the location of the OARs, following the consensus guidelines of the Radiation Therapy Oncology Group (RTOG)4. For cervical cancer, anatomical structures such as the bladder, pelvis, pelvic bone marrow, rectum, and small intestine are the representative OARs. In clinical practice, the surrounding OARs should not be covered. Senior radiation oncologists also consider clinical prior knowledge of the psoas margin when delineating the CTV. For the delineation of the para-aortic lymph node CTV, it should extend laterally from the aorta to the medial edge of the left psoas muscle, covering the visible parts in this area, and the left edge is usually located 1-2 cm outside the aorta. Therefore, it is necessary to effectively model the dependency between these anatomical knowledge and CTV boundaries to improve the performance of CTV segmentation. Figure 1 (a) show OARs, psoas and CTV. The CTV boundaries and other OARs are highly correlated.

(a) OARs, CTV and psoas in CT slices of cervical cancer. The red curve represents CTV, the blue curve represents the psoas muscle, and the remaining curves represent the OARs. (b) The columns in different locations have different category distributions. Red is the CTV boundary, and blue is the psoas boundary. The segmentation results from the leftmost end to the middle of CT slice are background, psoas + background, CTV +psoas +background, CTV+ background.

To address these challenges, we proposed anatomical knowledge-based dual prior network (KDPN) for automatic segmentation of CTV. This method aims to incorporate prior anatomical knowledge (such as the relative position relationship between CTV and OAR, and the relative position relationship between CTV and psoas) into the deep learning network to improve the accuracy of CTV segmentation. It mainly consists of two modules, a spatial constraint network (SCN) based on the relative anatomical relationship between CTV and OAR, and a width-driven attention network (WAN) based on the locations of psoas muscle and CTV segmentation area.

First, optimal radiotherapy entails uniform full-dose coverage of the radiation target with a sharp dose fall-off around it. This necessitates precise model the relationship of both the radiation target and the nearby OARs at risk of radiation damage. Due to the characteristics of local operation, CNN-based methods usually fail to model the explicit long-range relationship between CTV and clinical prior knowledge17. To overcome the inherent local limitation, we design a novel relational transformer, the spatial constrained network, to model the dependency. Different from existing methods, SCN consists of two main parts: a dual-branch module (DBM) and a feature-correlation module (FCM). For DBM, the CT image is first sent to two independent branches to extract features from two streams. Then, local edge features and OAR features are further input into FCM. The transformer-based self-attention mechanism of FCM promotes the combination of corresponding OARs and CTV, thereby effectively modeling the relationship in space. Second, in the CT images of cervical cancer, the positions of the psoas muscle and CTV area are relatively fixed. We can observe that the corresponding category distribution has significant dependency on the horizontal direction. As shown in Fig. 1 (b), in the case of three-class segmentation including CTV, psoas muscle, and background, some columns contain psoas muscle and background. Some columns contain psoas muscle and CTV and background, and other columns contain CTV and background. That is, the left and right parts of the image will present the fixed anatomical locations. Motivated by these observations, we innovatively proposed a width-driven attention networks (WAN) as the general addon module for incorporating common structural priors depending on the spatial position. To our best knowledge, there is no work incorporating such clinical prior knowledge into deep learning for accurately CTV segmentation. We evaluate our framework on two available datasets (in-hospital and another center dataset). Our framework outperforms the state-of-the-art approaches, bringing significant improvements by incorporating the clinical knowledge.

Our contributions are summarized as follows: (1) By integrating the clinical anatomical knowledge into the deep segmentation network, we innovatively proposed the anatomical knowledge-based dual prior network for automatic segmentation of CTV by integrating the prior knowledge of nearby OARs and psoas muscle. To the best of our knowledge, we are the first one to incorporate such guidelines into deep learning CTV delineation. (2) Considering the relatively fixed spatial position relationship between OARs and CTV, we innovatively propose the spatial constraint network to implicitly integrate the relative position relationship by self-attention relationship transformer. (3) Based on the anatomical prior knowledge of psoas muscle and CTV segmentation area, we innovatively designed a width-driven attention network by class distribution to further correct the CTV delineation results. (4) Extensive experiments are conducted on two clinical radiotherapy datasets (in-hospital and another center dataset), verifying that our approach is superior to the state-of-the-art deep learning models.

Related works

CTV segmentation of cervical cancer

Conventional auto-segmentation methods of cervical cancer used atlas-based auto-segmentation (ABAS) algorithms, which mainly used deformable image alignment to create contours18. This process transforms voxels from a deformable image set to match those of a target image set, forming a deformation vector field. ABAS software struggles with variability in constructing a universal atlas, leading to suboptimal outcomes, particularly for regions with unclear edges like CTV19.

Deep learning has achieved significant success for the domain of medical image segmentation. Liu et al.8 developed DpnUNet, a variant of U-Net for cervical cancer CTV segmentation, which uses three adjacent CT slices as input to capture spatial context while keeping low computational cost. Unlike organ segmentation, CTV contains potential tumor spread tissue or subclinical disease tissue, resulting in poorly defined edge interfaces and irregular shapes. To this end, Shi et al.9 proposed RA-CTVNet, a region-aware reweighting strategy that assigned different weights to different slices, and developed a new recursive refinement strategy to address the edge challenge. Jiang et al.13 collected CT images of 200 cervical cancer patients undergoing radical radiotherapy. A RefineNet-based deep learning scheme was employed to segment the CTVs of 3D Brachytherapy for cervical cancer, which also improved segmentation efficiency. Similarly, Ju et al.14 evaluated the Dense V-Net for predicting CTV of cervical cancer patients. Dense V-Net is a dense and fully connected convolutional network that can learn appropriate features from limited samples and automatically delineate the CTV of cervical cancer patients based on CT images. Ma et al.15 proposed a V-net-based neural network for cervical cancer patients who received definitive radiotherapy and postoperative radiotherapy. For the definitive radiotherapy group, the CTV of the pelvic lymphatic drainage area (dCTV1) and the paravaginal area (dCTV2) were delineated; for the postoperative radiotherapy group, the pelvic lymphatic drainage area (pCTV1) was delineated. Further, using enhanced CT scans and multiple CT scans, they proposed a three-channel adaptive automatic segmentation network20 for CTV segmentation.

However, CTV is a kind of virtual volume whose boundaries are not defined by the grayscale variation in the images, and the CTV is not an expansion of the GTV, leading to unclear boundaries. When CNN encounters the CTV areas without obvious boundaries, the CNN-based CTV segmentation methods will not follow the anatomical structure, resulting in a significant decrease in CTV segmentation performance9.

Incorporating anatomical constraints for segmentation

Recently, some prior knowledge has been introduced into the medical image segmentation task and achieved promising performances21. Prior knowledge refers to the knowledge or experience used by medical experts in their daily diagnosis process, such as anatomical constraints. They incorporated domain knowledge at different stages, such as preprocessing, model designing and postprocessing stages.

During the preprocessing stage, considering the prior knowledge that pixels around multiple sclerosis lesions contain lesion-level information, Kats et al.22 constructed soft masks through 3D morphological dilation of binary segmentation masks to improve the accuracy of segmentation. Peng et al.23 first identified the fissure region of interest using lung anatomical knowledge and then segmented the lung lobes in CT images based on the segmentation framework.

During model designing stage, some scholars have attempted to add anatomical constraints by designing new models. Some works modify the loss functions to enhance medical image segmentation. To address the challenge of utilizing domain constraints, such as anatomical knowledge about inter-region relationships, Ganaye et al.24 introduced an algorithm to eliminate segmentation inconsistencies with non-adjacent constraints for multi-object medical image segmentation. This approach introduced an auxiliary training loss based on adjacency graphs to enhance the consistency of region labeling by penalizing outputs with anatomically incorrect adjacent relationships. Considering segmentation models were prone to errors due to interference from similar anatomical structures, Gao et al.25 designed an anatomically aware loss by calculating the distance between the activated areas of predicted segmentation and the true values. This loss function forced the model to distinguish anatomical structures similar to tumors, thereby making correct predictions. In order to incorporate anatomical prior information such as organ shape and position into CNN, Oktay et al.26 introduced a novel regularization function that followed the overall anatomical structure characteristics by learning a nonlinear representation of shape. Some works apply multi-task networks to incorporate the prior knowledge. Multi-task methods typically refer to incorporating various tasks (such as edge segmentation and predicted distance maps) into the same deep neural network to improve the final segmentation performance. For example, Navarro et al.27 improved CT multi-organ segmentation by introducing distance map regression and contour map detection, boosting Dice scores significantly. Ganaye et al.28 introduced a method integrating spatial constraints into a CNN architecture for brain MRI segmentation, using 2D and 3D patches at multiple scales to incorporate contextual information and reduce prediction inconsistencies through DistBranch and ProbBranch. For CTV segmentation after radiotherapy for prostate cancer, Balagopal et al.29 used a multi-task network to predict distance maps to help CTV boundary delineation.

Some researchers emphasize anatomical consistency or incorporate anatomical knowledge during post-processing. For example, Mlynarski et al.30 applied methods like triple hole-filling in the post-processing step to enforce anatomical consistency of the results. For segmenting prostate 3D MRI scans, Da et al.31 used a 3D active contour model for finer segmentation after coarse segmentation, obtaining the final prostate surface. Similar methods in the post-processing stage have also been applied to the work of segmenting the liver and its surroundings from abdominal computed tomography scans32. Specifically, they adopted robust bottleneck detection with neighboring contour constraints to remove nodule regions that were over-segmented due to ambiguous tissue separation to maintain the correct anatomical structure.

In conclusion, deep learning-based methods for automatic CTV segmentation in cervical cancer have predominantly focused on U-Net and its variants. The manual CTV delineation process generally involves outlining the visible cancer volume as the GTVs, guided by clinical insights, cancer pathology, and diagnostic images. Clinicians then expand the volume by adding margins to delineate CTVs, considering the potential for cancer spread. Unlike the clear boundaries of OARs, CTV delineation is more challenging due to its dependence on surrounding organs, resulting in indistinct boundaries. Directly applying deep learning segmentation algorithms to CTV tasks without considering the unique characteristics of CTV segmentation and its dependence on surrounding organs is unreasonable. The contour of CTV is determined by the surrounding tissues and organs of each specific anatomical region. Therefore, the integration of anatomical prior knowledge into the network can obtain more reasonable CTV segmentation and reduce the probability of catastrophic prediction. In contrast, unlike existing KBP models that rely more on general prior knowledge, this study specifically integrates clinical knowledge that can improve the accuracy of the cervical cancer CTV segmentation task. Different from previous studies, our work implicitly incorporates the relationship between CTVs and OARs and the relationship between CTVs and the psoas muscle into the CTV segmentation network, based on the references that physicians use when manually outlining CTVs, i.e., OARs and the psoas muscle.

Method

According to the RTOG consensus guidelines, the delineation of CTV is closely related to the location of the OARs. For cervical cancer, anatomical structures such as the bladder, pelvis, pelvic bone marrow, rectum, and small intestine are the representative OARs. Senior radiation oncologists also consider clinical prior knowledge of the psoas margin when delineating the CTV. For the delineation of the para-aortic lymph node CTV, it should extend laterally from the aorta to the medial edge of the left psoas muscle, covering the visible parts in this area, and the left edge is usually located 1-2 cm outside the aorta. In this study, we proposed anatomical knowledge-based dual prior network for automatic segmentation of cervical cancer CTV. This method aims to incorporate prior medical knowledge and integrate the relative position relationship between CTV and OAR into the deep learning network to improve the accuracy of cervical cancer CTV segmentation. It mainly consists of two modules, namely, a spatial constraint network based on the relative anatomical relationship between CTV and OARs and a width-driven attention network based on the medial edge of the psoas muscle being located in the CTV segmentation area. To the best of our knowledge, our work is the first study to propose incorporating clinical anatomical prior knowledge for cervical cancer CTV segmentation.

Spatial constraint network

The CTV in this case is a virtual volume whose boundaries are not defined by the grayscale variations in the images, and the CTV is not an expansion of the GTV. In this section, we propose the spatial constraint network (SCN). SCN aims to integrate the prior positional relationship between OARs and CTV into the network, and guide CTV segmentation by learning the relative positional relationship of anatomy. It consists of the dual-branch main network and feature correlation module. The former contains a self-predictor module and an OAR feature module. To preserve the original features of cervical cancer CTV, the self-predictor is used to estimate CTV pre-segmentation results from CT scans. The OAR feature module extracts OAR-related features of cervical cancer by learning the five OARs: bladder, pelvis, pelvic bone marrow, rectum, and small intestine. The influence of OAR on CTV segmentation is explicitly learned through the transformer-based feature correlation module.

Dual-branch main network

Based on the fixed relative position relationship between CTV and OAR, we use the query-key mechanism to learn the attention weights for cervical cancer CTV segmentation and perform weighted aggregation on contextual features. Specifically, inspired by transformer33, the feature maps extracted by the decoders of CTV and OAR are expanded into sequence vectors as key and query respectively. Cross-attention is used to calculate the similarity between the query and the corresponding key. The positional relationships among various features are learned by combining position embedding. The attention function is a mapping from a query and a set of key-value pairs to the output, where query, key, value and output are all vectors. The self-predictor and OAR feature modules share the same encoder but not the same decoder. The query comes from the feature map in the OAR decoder, and the key and value come from the feature map in the CTV decoder.

Taking nnU-Net34 as the backbone, the network structure diagram is dual-branch main network, which is shown in Figure 2(a). The dual-branch network consists of the self-predictor for extracting CTV features and OAR feature module for extracting OAR features. The encoder has four resolution steps. Each layer contains two \(3\times 3\times 3\) convolutions each followed by max pooling. For the decoder, each layer also contains convolution blocks and up-sampling operators. The parameters of the encoder parts are shared in two branches (self-predictor and OAR feature module). We use I to denote the input image, use \(F_E\) to denote the shared encoder operation, and use M to denote the intermediate feature obtained after encoder. Then, we can obtain \(\textbf{M}=F_\textrm{E}(\textbf{I})\). Furthermore, in order to take into account both global features and local features, we use the features (\(X_C\) and \(X_O\)) from the second layer of decoder for constructing the FCM module. This maybe because the second layer usually captures more complex and detailed features that are critical for the CTV delineation.

(a) The illustration of our SCN architecture, which consists of dual-branch main network (self-predictor and OAR feature module) and feature correlation module. CT volumes are fed into the dual-branch main network, which extracts features from two different branches respectively. Further, feature correlation module fuses two features \(X_C\) and \(X_O\) from dual-branch main network. Finally, the output feature of the feature correlation module is concatenated with the features came from the self-predictor decoder to obtain the final segmentation result. (b) The illustration of feature correlation module. \(X_C\) and \(X_O\) represent the features from the second layers of the decoder of the self-predictor and OAR feature model, respectively. Q, K, and V represent query, key, and value. \(\bigoplus\) denote the element-wise addition. The ellipsis indicates that different groups of parallel cross-attention heads are concatenated together.

After restoring the shape of the feature map output by the FCM, it is concatenated with the feature map of the self-predictor. After passing through the convolution layer and the sigmoid, the final segmentation result is the output. In Figure 2(a), the box of CTV pre-segmentation represents the coarse segmentation result of the CTV directly predicted by the self-predictor. The CTV final segmentation represents the CTV jointly predicted by the self-predictor and the OAR feature module through the FCM. The two prediction paths are correlated and will be optimized simultaneously.

Feature correlation module

In this subsection, we describe how to use our feature correlation module to model the relationship between the CTV feature space and the OAR feature space in a unified framework. Due to the inherent locality limitation of the convolution operation, we apply the transformer-based33 feature correlation module to fuse the features extracted from the dual-branch main network.

More specifically, the FCM is the core component of the network. The operation of the FCM can be divided into several steps to ensure the module’s effectiveness and clarity as depicted in Fig. 2(b). First, the inputs to the FCM include the features extracted from two branch networks: CTV features \(X_C\) and OAR features \(X_o\). These features are processed using patch embedding and position encoding to segment the 3D image feature maps into block sequences, with each block assigned a position encoding to retain its spatial information. Then, these block sequences are further fed into linear layers to generate keys (K), values (V), and queries (Q) for the multi-head cross-attention mechanism. Here, K and V correspond to the CTV features \(X_C\), while Q corresponds to the OAR features \(X_o\) . The attention formula is shown as follows:

where \(d_k\) is the dimension of the key according to33.

Finally, the FCM combines the weighted features with the original feature map from the CTV predictor, thereby integrating anatomical knowledge with the outputs of the deep learning model. Through this approach, the FCM not only models the relationship between CTV and OAR in the feature space but also strengthens the network’s segmentation capability for clinical target volumes, laying the foundation for improved segmentation accuracy.

In fact, our SCN obeys the framework of multi-task learning, where one task is CTV segmentation and the other is OAR segmentation. Different from traditional multi-tasks learning, we use features \(X_c\) and \(X_o\) to construct feature correlation module. The FCM obeys the idea of transformer. \(X_c\) corresponds the key and value of transformer, while \(X_o\) corresponds to the query of transformer. With the help of multi-head cross-attention mechanism, we incorporate the prior knowledge of OARs. During training, we use three loss functions to supervise the network. For the CTV pre-segmentation, OAR segmentation and CTV final segmentation, the dice losses are all applied to conduct the supervised learning.

Width-driven attention network

This section exploits the intrinsic features of CTV segmentation of cervical cancer and proposes a general add-on module for incorporating the unique characteristics. Depending on the anatomical knowledge that the margo medialis of the psoas muscle is in the segmentation area of CTV, each column of the image of the CT volumes has different statistics in terms of category distribution. When focusing on the three categories of CTV, psoas muscle, and background, as shown in Fig. 1(b), some columns in the image contain psoas muscle and background, some columns contain psoas muscle, CTV, and background, and some columns contain CTV and background. From left to right, different columns correspond to different contexts.

Motivated by these observations, we propose WAN for incorporating the prior knowledge. Specifically, we propose WA-block as a general add-on module for 3D medical image segmentation. Given an input feature map, WA-block computes width-driven attention weights to implicitly extracts width-wise contextual information. In this section, we first describe WA-block as a general plug-in module, and then merge WA-block into the trained dual-branch main network, which constructs WAN (Fig. 3(a)). WAN fine-tunes the trained two-branch main network by adding additional classification tasks to incorporate the prior knowledge of the psoas and CTV locations. WA-block extracts informative features using the wide-wise attention mechanism (Fig. 3(b)). These features are not used as input to the two-branch main network. Instead, these features are incorporated into the WAN network for more accurate CTV delineation.

More specifically, for our 3D width-wise medical image segmentation, let \(X_l\in R^{C_l\times D_l{\times H}_l\times W_l}\) and \({X_h\in R}^{C_h\times D_h{\times H}_h\times W_h}\) denote the lower and higher-level features in the semantic segmentation network, representatively. C is the number of channels, and D, H, W are the depth, the height and the width of the input tensor. Given the feature mapping \(X_l\), WA-block generates the channel-wise attention mapping \(A\in R^{C_h\times D_h\times 1{\times W}_h}\). This is done with a series of steps: height-wise pooling, interpolation for coarse attention, and computing width-driven attention map.

First, we perform a height-wise pooling operation. We first aggregate the input representations \(X_l\) using a height-grouping operation to extract the contextual information by height from each column to obtain a channel-wise attention map Z (\(C_l\times D_l\times 1{\times W}_l\)). Specifically, height-wise pooling performs global average pooling on the feature map along the height direction, and compresses the features of each spatial column into a single value in the height dimension.

(a) The overview of the proposed WAN. \(X_l\):lower-level feature map, A: the attention map, \({\widetilde{X}}_h\): transformed new feature map. \(\odot\) represents the element-wise multiplication. The above line is the trained self-predictor in the first stage, which is used to directly predict the CTV segmentation. (b) WA-block. It is inserted in the decoder stage to conduct classification. The purpose of inserting WA-block is to enhance the result of CTV segmentation by multiple tasks.

Second, we calculate the coarse attention interpolation. After the pooling operation, the model generates the matrix \(Z\in R^{C_l\times D_l\times 1{\times W}_l}\). However, only some of the columns of the matrix Z are necessary to compute the effective attention map. In our experiment, some columns have only one class (e.g., CTV) while others have two classes (e.g., CTV and psoas muscle). Therefore, we interpolate the matrix Z into \(\hat{Z} (C_l\times D_l\times 1\times \hat{W})\) by down-sampling. Constructed from down-sampled representations, the attention map is coarse.

Third, we compute the width-driven attention map. The width-driven channel-direction attention map A is obtained by means of a convolutional layer that takes \(\hat{Z}\) as the input. Some works utilize the channel-wise attention by fully connected layers since they generate the channel-wise attention for an entire image. In our study, in order to reflect the width information, we adopt convolutional layers to let the relationship between adjacent rows be considered while estimating the attention map since each row is related to adjacent rows. Through a series of convolutional operation and sigmoid function, we can obtain the attention map A (\(C_h\times D_h\times 1{\times W}_h\)). Then, through the element-wise multiplication of A and \(X_h\), we can obtain the feature expression that integrates width prior knowledge. The attention map A indicates which channels are critical at each individual column. In the last layer, each column can be associated with one to three labels (background/psoas + background/CTV + psoas + background/CTV + background). These operations consisting of N convolutional layers and sigmoid function.

Our proposed method WAN, has strong connections to the channel-wise attention approach, which exploits the inter-channel relationship of features and scales the feature map according to the importance of each channel. SENet35 captures the global context of the entire image using global average pooling and predicts per-channel scaling factors to extract informative features. It exploits the global context of the entire image and is widely used for image classification. However, the CTV medical image dataset shows relatively fixed anatomical locations, which means that different images share similar class statistics. Therefore, the global context between images is relatively similar. Our work is also similar to the work of36, who proposed a highly driven attention network to improve urban scene segmentation. Different from their work, our WAN is a variant that adopts width prior information and is designed specifically for 3D CTV segmentation.

Training process

Our framework is the two-stage training network, which trains SCN in the first stage and then take it as the backbone to further train WAN in the second stage. The former incorporates the prior knowledge of the relative anatomical relationship between CTV and OARs, and the latter considers the knowledge of medial edge of the psoas muscle being located in the CTV segmentation area. In our experiment, only a small portion of the psoas muscle data is labeled, therefore, it is used to fine-tune the SCN network.

In the first stage, the training loss \(L_{SCN}\) comprises three components. The first part is the Dice loss function of the self-predictor and it is represented by \(L_{self}\). The second part is the Dice loss function of OAR feature model predicting OARs segmentation, which is represented by \(L_{OAR}\). The third part is to connect the output of the feature correlation module with the output feature map of the backbone segmentation network. Then the final CTV segmentation result is obtained through the convolution layer, and the loss function is calculated using Dice loss, which is represented by \(L_{FCM}\) in the following equation.

In the second stage, the training loss \(L_{Final}\) comprises two parts. Similar to the first stage, the first part is the Dice loss function of the self-predictor, and the second part \(L_{WA}\) is the cross-entropy loss function of inserting WA-block.

Experiments configuration

Datasets

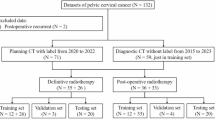

Data was collected from patients undergoing radical or postoperative radiotherapy for cervical cancer at the Radiotherapy Department of the First Affiliated Hospital of Anhui Medical University from June 2017 and May 2019. The study adhered to the principles of the Declaration of Helsinki and received approval from the hospital’s Ethics Committee. Informed consent was obtained from all subjects. This study was a retrospective analysis to evaluate the effectiveness of our proposed dual attention segmentation model by examining patient history data. There were a total of 621 cases in this study, of which 497 are used as training sets and 124 are used as test sets.

Following the consensus definition by the RTOG, the CTV for cervical cancer encompasses the vaginal stump, the upper 3 cm of the vagina, and the paravaginal pelvic lymphatic drainage areas (internal iliac, external iliac, obturator, part of presacral, and common iliac). Para-abdominal aortic lymphatic drainage area in those with risk factors. CT images were obtained in all patients using a SIEMEMS Somatom Definition AS with software Version of “syngo CT VA48A” and protocol name was “1_RT_Abdomen”. The scanning range was from the top of the liver to the lower perineum. The number of layers was 85-120 and the layer thickness was 5 mm. The scanning parameters were tube voltage of 120 KV, tube current of 400 mAs and reconstructed voxel values of \(512\times 512\times k\) (k stands for the number of CT slices). During CT scanning, the patients were set up in the supine position and immobilized with a thermoplastic body mold. The layer thickness was 3mm.

In addition, we also used a multi-center dataset to validate the model. The multi-center dataset includes 38 cases from Taihe Hospital of Wannan Medical College, which was collected using CT imaging equipment from Philips and Neusoft Medical, and manually annotated by two experts with 20 years of experience.

Implementation details

The implementation is based on the public PyTorch platform in Ubuntu 16.04, using a single NVIDIA GPU (GeForce GTX 1080Ti). The input images are cropped to reduce the interference of the signal-free areas in the image on the network and facilitate subsequent processing. To reduce the risk of overfitting and improve the robustness of medical image segmentation, the dataset is augmented by random horizontal flipping, vertical flipping, and rotation with a certain probability of 0.5. In addition, we randomly adjust the contrast of the image. During training, the batch size is set to 2. Batch normalization is performed, and the Adam optimization algorithm with a weight decay of 0.0005 is applied. The initial learning rate is 0.0002, and multiple learning rate schedules are applied9,36. The network is trained for 1000 epochs in the first stage and then 300 epochs in the second stage for fine-tuning.

Evaluation metrics

To make a quantitative comparison between the performance of different CTV delineation methods, Dice coefficient (Dice), 95th percentile Hausdorff distance (HD95) and Average Symmetric Surface Distance (ASSD) are calculated. Dice describes the relative overlap between the predicted results and the ground truth. The range is between 0 and 1. The equation is shown as follow:

where TP, TN, FP and FN represent the number of true positives, true negatives, false positives and false negatives, respectively.

Hausdorff distance (HD) is used to quantify the 3D distance between two segmented surface A and B. h(A, B) calculates the minimum distance from each point in point set A to point set B, and then takes the maximum from it. d(a, b) means the distance between point a and point b. The same is true for h(B, A). To reduce the effect of outliers, HD95 is the 95th percentile across all these distances.

Average Symmetric Surface Distance (ASSD) is also an evaluation metric for calculating the distance between surface A and surface B. To find the ASSD between A and B: 1) for each point on A, find its minimum distance from B, and then sum these distances; 2) for each point on B, find its minimum distance from A, and then sum these distances. The sum of the last two terms obtained in 1) and 2) is divided by the total number of midpoints in A and B.

Results and discussion

Comparison with state-of-the-art methods

We compare our method with the state-of-the-art models. The selected baselines are shown as follows. (1) 3D-UNet37: it is a deep learning medical image segmentation model consisting of an encoder and a decoder, which can effectively process 3D medical image data such as CT and MRI. (2) nnFormer38: a medical image segmentation model that combines Transformers and convolutional neural networks. It captures global information using Transformers and extracts local features with convolutional neural networks. (3) UNETR39: it is a model that uses Transformer encoder to extract features and U-Net decoder for image segmentation. (4) 3D-UXNet40: a deep learning model for 3D medical image segmentation that combines the hierarchical feature extraction of U-Net with the global attention mechanism of Transformers to capture both local and global information to achieve higher segmentation accuracy in complex 3D structures. (5) nnU-Net: an automated and versatile medical image segmentation framework designed to adapt to various segmentation tasks without manual adjustments. It achieves efficient segmentation of different types of medical image data by adaptively configuring network architecture and training strategies.

We performed quantitative analysis of the segmentation results. The experimental results are evaluated with Dice, HD95 and ASSD, which are shown in Table 1. Based on the experimental results, we can observe that our method shows the best performance compared with other baselines.

Compared to the original 3D-UNet, our method improves the performance by 11.88% from 69.45% ± 10.80% to 81.33% ± 6.36%. Compared to nnU-Net, our method improves the performance by 3.17% on Dice (from 78.16% ± 6.10% to 81.33% ± 6.36%). In terms of HD95 and ASSD, our method also achieves a new state-of-the-art performance. Compared to nnU-Net, the average HD95 value is reduced from 13.40mm ±7.28mm to about 9.39mm ± 7.12mm. Meanwhile, our method also performs well on surface distance measure. ASSD is reduced from about 2.49mm ± 1.12mm to 2.02mm ± 0.98mm.

Figure 4 shows the box plots for Dice, HD95 and ASSD in order to compare the distribution of results for these three statistics in different networks. Figure 4(a), Fig. 4(b), and Fig. 4(c) represent the statistics of the test images Dice, HD95 and ASSD, respectively. As we can see from Fig. 4(a), our method has improved the minimum, first quartile (Q1), median (Q2), third quartile (Q3), and maximum values of the Dice values compared to the baselines. From Fig. 4(b), we can see that compared with nnU-Net, our method reduces the five basic statistics of the HD95 box plot and also has lower variance. Observing Fig. 4(c), in addition to reducing the five basic statistics of the box plot, our method also has fewer outliers. There are also some outliers. These outliers are mainly concentrated in cases with smaller CTV foreground areas, such as postoperative cases. In the Baseline 3D-UNet, there are more deviated outliers. This is because 3D-UNet mainly recognizes pixels and does not add additional knowledge constraints. In comparison, our method is more concentrated in Dice, HS95, and ASSD indicators, with fewer outliers and smaller deviations.

The statistics of the test images about Dice, HD95 and ASSD are represented in (a-c) respectively. The five basic statistics of the box plot include: minimum, first quartile (Q1), median (Q2), third quartile (Q3) and maximum.

For integrating anatomical knowledge, our method incorporates the relationship between OARs and CTV based on spatial attention, which is different from traditional weighted methods (such as RA-CTVNet9). RA-CTVNet applies area-aware reweight strategy which assigns different weights for different slices. The weights are calculated with foreground area proportion. In contrast, our KDPN adopts a weighted method based on the transformer attention mechanism, implicitly representing the weight of the feature through the transformer’s K/Q/V. Specifically, the feature map \(X_c\) extracted by the decoder of CTV is expanded into sequence vectors as key and value. The feature map \(X_o\) extracted by the decoder of OAR is used as query. Cross-attention is used to calculate the similarity between the query and the corresponding key, which outputs the weighted features. This specific attention mechanism is used to learn the spatial-anatomical features of CTV and OAR. Furthermore, WAN module focuses on learning the width attention between CTV and the lumbar muscles through pre-training and fine-tuning strategy. These modules utilize attention mechanisms rather than traditional reweighting strategy.

Visualization

We randomly selected samples and visualized the prediction results in Fig. 5. It can be seen that the baseline network can identify the general area of cervical cancer CTV, but the segmentation performance at the edge is poor, which is consistent with our hypothesis. As shown in Fig. 5(a), 3D-UNet performs poorly at the upper boundary, where the distance outlined along the abdominal aorta is short and the outer edge of the uterine body morphology at the front boundary is not obvious. In contrast, our method shows significant improvement at the segmentation edge. The possible reason is that SCN learns the relative position relationship between the OARs and CTV, providing meaningful guidance for the segmentation of CTV. WAN helps to further improve the segmentation accuracy of the network by learning the anatomical prior knowledge between the psoas muscle and CTV.

Comparison of different methods for segmenting CTV. (a) 3D-UNet, (b) nnFormer, (c) UNETR, (d) 3D-UXNet, (e) nnU-Net, (f) Our method, (g) Ground-truth.

Ablation studies

In this section, we conduct a series of ablation studies to validate the effectiveness and contributions of each component within our proposed method for image segmentation. To assess the incremental benefit of incorporating the WAN into the segmentation framework, we compare the performance of the SCN alone with the combined SCN+WAN model (i.e. our KDPN method). As shown in Table 2, the experimental results clearly demonstrate that the combination of the WAN and SCN substantially improves segmentation accuracy of the model. It is worth noting that the SCN+WAN model is closer to the ground-truth, and the Dice, HD95 and ASSD are significantly improved compared with the SCN model alone. For the Dice value, SCN achieved a 1.6% improvement (from 78.16% to 79.76%). Furthermore, SCN+WAN improved it by another 1.57%.

Comparing a1 and a2, s1 and s2, D1 and D2 in Fig. 6, we found that SCN has greatly improved the overall contour segmentation of the backbone, especially the front boundary of CTV. However, there will be some over-segmentation at the upper boundary of CTV, as shown in s2 and c2 in Fig. 6, which also leads to the unsatisfactory Dice of SCN. This may be because the SCN model is used alone without adding the location constraint of OARs, resulting in over-segmentation. WAN can effectively suppress this, as shown in s3 and c3 in Fig. 6. This shows that the addition of the WAN module not only makes the segmentation network perform better in the width direction, as shown in s4 and s5 in Fig. 6, but also is very effective for segmentation in the height direction (a2 vs a3). In general, our ablation study fully demonstrates that the combination of SCN and WAN has better segmentation performance than SCN alone. Besides, we also show the statistical boxes in Fig. 7 to show the effectiveness of our method.

Comparison of backbone, SCN, Our method (SCN+WAN) for segmenting CTV. From top to bottom, axial plane(a), sagittal plane(s), coronal(c), 3D segmentation(D). From left to right, the output results of different models are shown: backbone, SCN, our method (SCN+WAN), and ground truth.

Dice, HD95, ASSD values of Backbone, SCN and our method.

Subgroup analysis

In order to evaluate and compare the segmentation accuracy and generalization performance of our model, we conducted subgroup analysis in two different treatment-based subgroups. This involved evaluating the performance of the models in accurately segmenting medical images from both radical and adjuvant treatment settings using the same metrics of Dice, HD95, and ASSD.

The dataset was divided into two main subgroups based on the treatment strategy adopted for the patients for whom the medical images were acquired. The first subgroup, called the adjuvant group, included images of patients who received adjuvant therapy after primary treatment. The goal of adjuvant therapy is to eliminate residual lesions and reduce the risk of recurrence. This subgroup was allocated 168 cases for training and 41 for testing. Adjuvant therapy is typically used in cases where there is a high risk of disease spread or recurrence, requiring additional therapeutic measures beyond the primary treatment. The second subgroup, called the radical treatment group, consists of images of patients who received radical treatment, such as surgery. This group was further divided into a training set of 329 cases and a test set of 82 cases. Radical treatment usually aims to eradicate the disease and is suitable for localized disease or disease with clear boundaries around the disease. After treatment, the CTV area is relatively small.

As can be seen from Table 3, our method is effective for both the auxiliary group and the radical group. In the radical group, our method is 3.01% higher than the backbone group on Dice. The auxiliary group has less data, only 168 cases for training, and our method performs 3.97% higher than the backbone group. Our method also achieves better results for HD95 and ASSD.

Our analysis showed that the model exhibited different performances when applied to the two subgroups, with the adjuvant group having better results than the radical group. These differences can be attributed to differences in disease representation and treatment effects captured in medical images. Images in the radical group may show fewer residual lesions, presenting a smaller CTV area, which makes it challenging to directly delineate the CTV target.

Multicenter evaluation

To verify the generalization of the method, we conduct further experiments on the multi-center dataset. We use the same process established for the initial dataset to process each case from the multi-center dataset. This includes preprocessing steps, model inference, and post-processing to ensure consistency in the way the data is processed. As in the previous experiments, Dice, HD95, and ASSD are calculated to verify the effectiveness of our method. The results are shown in Table 4. We can observe that despite the small amount of data, the multicenter data still shows better test results compared with Baseline of nnU-Net.

Considering that datasets acquired by different devices may have large differences in various parameters such as layer thickness and contrast, our model can also achieve satisfactory results on datasets acquired by other imaging devices. By testing our model on a multi-center dataset, we can evaluate the adaptability of the model to changes in data distribution and demonstrate its potential for clinical application. Experimental results show that our proposed model is helpful in developing an automatic delineation tool for cervical cancer CTV.

Besides, one benefit of automatically outlining the CTV based on KDPN is that it can reduce the time doctors spend outlining the CTV. After discussing with a senior oncologist with more than ten years of experience, it usually takes 20 to 40 minutes to delineate the CTV considering clinical structural prior information (such as the relationship among the CTV, OARs and the psoas muscle). However, the deep learning-based method we proposed can complete this process within one minute. This comparison highlights the efficiency and clinical applicability of our method.

Conclusion

Clinical target volume (CTV) segmentation is a key part of radiotherapy for cervical cancer. CTV segmentation poses a complex challenge to medical image analysis due to the fuzzy boundaries and irregular shapes of CTV in cervical cancer CT images. Manual delineation of CTV boundaries relies heavily on anatomical expertise. First, we propose a spatial constraint network (SCN) that introduces an OAR feature module in the encoder-decoder segmentation architecture to establish a connection between CTV segmentation and OARs. This modification allows the network to learn prior knowledge of OARs while providing valuable guidance for CTV segmentation. The five selected OARs include bladder, pelvis, pelvic bone marrow, rectum, and small intestine. In addition, the psoas muscle has a unique and clear relative position relationship with the CTV. To adapt to this prior knowledge, we propose a width-driven attention network (WAN) to refine the previous segmentation results and thus improve the accuracy of CTV delineation. Extensive experiments, ablation studies, group analysis and multicenter validation demonstrate that our approach significantly improves the performance of CTV segmentation in a cervical cancer abdominal CT dataset.

Data availibility

The datasets used and analyzed during the current study are available from the corresponding author on reasonable request.

References

Siegel, R. L., Miller, K. D., Wagle, N. S. & Jemal, A. Cancer statistics, 2023. CA: a cancer journal for clinicians 73, 17–48 (2023).

Yamada, T. et al. The current state and future perspectives of radiotherapy for cervical cancer.. Journal of Obstetrics and Gynaecology Research 50, 84–94 (2024).

Han, F. et al. Development and validation of an automated tomotherapy planning method for cervical cancer. Radiation Oncology 19, 88 (2024).

Jr Small, W. et al. Consensus guidelines for delineation of clinical target volume for intensity-modulated pelvic radiotherapy in postoperative treatment of endometrial and cervical cancer.. Int. J. Radiat. Oncol. Biol. Phys. 71, 428–434 (2008).

Tian, M. et al. Delineation of clinical target volume and organs at risk in cervical cancer radiotherapy by deep learning networks. Medical Physics 50, 6354–6365 (2023).

Sykes, J. Reflections on the current status of commercial automated segmentation systems in clinical practice 61(3), 131–134 (2014).

Wang, J., Chen, Y., Xie, H., Luo, L. & Tang, Q. Evaluation of auto-segmentation for ebrt planning structures using deep learning-based workflow on cervical cancer. Scientific Reports 12, 13650 (2022).

Liu, Z. et al. Development and validation of a deep learning algorithm for auto-delineation of clinical target volume and organs at risk in cervical cancer radiotherapy. Radiotherapy and Oncology 153, 172–179 (2020).

Shi, J. et al. Automatic clinical target volume delineation for cervical cancer in ct images using deep learning. Medical Physics 48, 3968–3981 (2021).

Powell, K. A. et al. Atlas-based segmentation of temporal bone surface structures. International Journal of Computer Assisted Radiology and Surgery 14, 1267–1273 (2019).

Ortiz, C. G. & Martel, A. Automatic atlas-based segmentation of the breast in mri for 3d breast volume computation. Medical physics 39, 5835–5848 (2012).

Rigaud, B. et al. Automatic segmentation using deep learning to enable online dose optimization during adaptive radiation therapy of cervical cancer.. Int. J. Radiat. Oncol. Biol. Phys. 109, 1096–1110 (2021).

Jiang, X., Wang, F., Chen, Y. & Yan, S. Refinenet-based automatic delineation of the clinical target volume and organs at risk for three-dimensional brachytherapy for cervical cancer. Annals of Translational Medicine 9(23), 1721 (2021).

Ju, Z. et al. Ct based automatic clinical target volume delineation using a dense-fully connected convolution network for cervical cancer radiation therapy. BMC cancer 21, 1–10 (2021).

Ma, C.-Y. et al. Deep learning-based auto-segmentation of clinical target volumes for radiotherapy treatment of cervical cancer. Journal of Applied Clinical Medical Physics 23, e13470 (2022).

Mao, X. et al. Deep learning with skip connection attention for choroid layer segmentation in oct images. In 2020 42nd Annual international conference of the IEEE engineering in medicine & biology society (EMBC), 1641–1645 (IEEE, 2020).

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical image computing and computer-assisted intervention–MICCAI 2015: 18th international conference, Munich, Germany, October 5-9, 2015, proceedings, part III 18, 234–241 (Springer, 2015).

Kim, N., Chang, J. S., Kim, Y. B. & Kim, J. S. Atlas-based auto-segmentation for postoperative radiotherapy planning in endometrial and cervical cancers. Radiation Oncology 15, 1–9 (2020).

Ju, Z. et al. Ct based automatic clinical target volume delineation using a dense-fully connected convolution network for cervical cancer radiation therapy. BMC cancer 21, 1–10 (2021).

Ma, C.-Y. et al. Clinical evaluation of deep learning-based clinical target volume three-channel auto-segmentation algorithm for adaptive radiotherapy in cervical cancer. BMC Medical Imaging 22, 123 (2022).

Jiao, R. et al. Learning with limited annotations: a survey on deep semi-supervised learning for medical image segmentation. Computers in Biology and Medicine 169, 107840 (2024).

Kats, E., Goldberger, J. & Greenspan, H. Soft labeling by distilling anatomical knowledge for improved ms lesion segmentation. In 2019 IEEE 16th International Symposium on Biomedical Imaging (ISBI 2019), 1563–1566 (IEEE, 2019).

Peng, Y. et al. Pulmonary lobe segmentation in ct images based on lung anatomy knowledge. Mathematical Problems in Engineering 2021, 5588629 (2021).

Ganaye, P.-A., Sdika, M., Triggs, B. & Benoit-Cattin, H. Removing segmentation inconsistencies with semi-supervised non-adjacency constraint. Medical image analysis 58, 101551 (2019).

Gao, Y., Dai, Y., Liu, F., Chen, W. & Shi, L. An anatomy-aware framework for automatic segmentation of parotid tumor from multimodal mri. Computers in Biology and Medicine 161, 107000 (2023).

Oktay, O. et al. Anatomically constrained neural networks (acnns): application to cardiac image enhancement and segmentation. IEEE transactions on medical imaging 37, 384–395 (2017).

Navarro, F. et al. Shape-aware complementary-task learning for multi-organ segmentation. In Machine Learning in Medical Imaging: 10th International Workshop, MLMI 2019, Held in Conjunction with MICCAI 2019, Shenzhen, China, October 13, 2019, Proceedings 10, 620–627 (Springer, 2019).

Ganaye, P.-A., Sdika, M. & Benoit-Cattin, H. Towards integrating spatial localization in convolutional neural networks for brain image segmentation. In 2018 IEEE 15th International Symposium on Biomedical Imaging (ISBI 2018), 621–625 (IEEE, 2018).

Balagopal, A. et al. A deep learning-based framework for segmenting invisible clinical target volumes with estimated uncertainties for post-operative prostate cancer radiotherapy. Medical image analysis 72, 102101 (2021).

Mlynarski, P., Delingette, H., Alghamdi, H., Bondiau, P.-Y. & Ayache, N. Anatomically consistent cnn-based segmentation of organs-at-risk in cranial radiotherapy. Journal of Medical Imaging 7, 014502–014502 (2020).

da Silva, G. L. et al. Superpixel-based deep convolutional neural networks and active contour model for automatic prostate segmentation on 3d mri scans. Medical & Biological Engineering & Computing 58, 1947–1964 (2020).

Le, D. C., Chinnasarn, K., Chansangrat, J., Keeratibharat, N. & Horkaew, P. Semi-automatic liver segmentation based on probabilistic models and anatomical constraints. Scientific Reports 11, 6106 (2021).

Vaswani, A. Attention is all you need. Advances in Neural Information Processing Systems (2017).

Isensee, F., Jaeger, P. F., Kohl, S. A., Petersen, J. & Maier-Hein, K. H. nnu-net: a self-configuring method for deep learning-based biomedical image segmentation. Nature methods 18, 203–211 (2021).

Hu, J., Shen, L. & Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, 7132–7141 (2018).

Choi, S., Kim, J. T. & Choo, J. Cars can’t fly up in the sky: Improving urban-scene segmentation via height-driven attention networks. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 9373–9383 (2020).

Çiçek, Ö., Abdulkadir, A., Lienkamp, S. S., Brox, T. & Ronneberger, O. 3d u-net: learning dense volumetric segmentation from sparse annotation. In Medical Image Computing and Computer-Assisted Intervention–MICCAI 2016: 19th International Conference, Athens, Greece, October 17-21, 2016, Proceedings, Part II 19, 424–432 (Springer, 2016).

Zhou, H.-Y. et al. nnformer: Volumetric medical image segmentation via a 3d transformer. IEEE Transactions on Image Processing 32, 4036–4045 (2023).

Hatamizadeh, A. et al. Unetr: Transformers for 3d medical image segmentation. In Proceedings of the IEEE/CVF winter conference on applications of computer vision, 574–584 (2022).

Lee, H. H., Bao, S., Huo, Y. & Landman, B. A. 3d ux-net: A large kernel volumetric convnet modernizing hierarchical transformer for medical image segmentation. arXiv preprint arXiv:2209.15076 (2022).

Acknowledgements

This research was supported by Noncommunicable Chronic Diseases-National Science and Technology Major Project (2023ZD0506502); Guangdong Basic and Applied Basic Research Foundation (2023A1515110721); Brain Science and Brain-Inspired Intelligence Technology Major Project (2021ZD0200500); The Key Research and Development Program of NingXia (2023BEG02060); The Fundamental Research Funds for the Central Universities (FRF-TP-22-050A1).

Author information

Authors and Affiliations

Contributions

J.S and X.M implemented the algorithms, designed the experiments, performed the data analysis and wrote the manuscript. J.S received the funding. Y.Y performed the data analysis and revised the manuscript. S.L and Z.H performed the data analysis. W.Z, S.Z and W.L provided the data. Y.Y and W.L designed and supervised the research.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Electronic supplementary material

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Shi, J., Mao, X., Yang, Y. et al. Prior knowledge of anatomical relationships supports automatic delineation of clinical target volume for cervical cancer. Sci Rep 15, 23898 (2025). https://doi.org/10.1038/s41598-025-08586-y

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-08586-y