Abstract

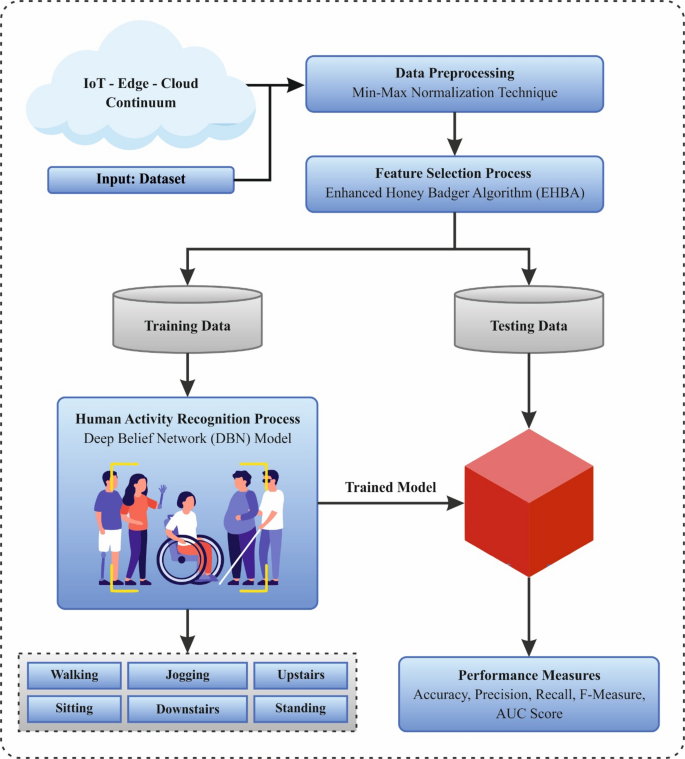

Aging is associated with a reduced ability to perform everyday activities and a decline in physical activity, which affects physical and mental health. A human activity recognition (HAR) system can be a valuable tool for elderly individuals or patients, as it monitors their activities and detects significant changes in behavior or events. When integrated with the Internet of Things (IoT), such a system enables individuals to live independently while ensuring their well-being. The IoT-edge-cloud framework enhances this by processing data as close to the source as possible, either on edge devices or directly on the IoT devices themselves. However, the massive number of activity constellations and sensor configurations makes the HAR problem challenging to solve deterministically. HAR involves collecting sensor data to classify diverse human activities and is a rapidly growing field. It offers valuable insights into the health, fitness, and overall wellness of individuals outside of hospital settings. Therefore, machine learning (ML) models are widely used in HAR systems to learn patterns of human activity from sensor data. In this manuscript, an Intelligent Deep Learning Technique for Human Activity Recognition of Persons with Disabilities using the Sensors Technology (IDLTHAR-PDST) is proposed. The purpose of the IDLTHAR-PDST technique is to efficiently recognize and interpret activities by leveraging sensor technology within a smart IoT-Edge-Cloud continuum. Initially, the IDLTHAR-PDST technique utilizes a min-max normalization-based data pre-processing model to optimize sensor data consistency and enhance model performance. For feature subset selection, the enhanced honey badger algorithm (EHBA) is used to reduce dimensionality while retaining critical activity-related features. Finally, the deep belief network (DBN) model is employed for HAR. To demonstrate the improved performance of the proposed IDLTHAR-PDST model, a comprehensive simulation study is conducted. The performance validation of the IDLTHAR-PDST model showed a superior accuracy of 98.75% over existing techniques.

Introduction

The monitoring and recognition of human activities enables safety-critical applications, for example, monitoring disabled or elderly people living alone who may need clinical attention, and the emergence of low-cost sensing has opened up ubiquitous possibilities for HAR in everyday applications1. Various context-aware applications have been developed, such as efficiency monitoring, factory floor monitoring, and workout tracking. Classically, human activity has been inferred from either ambient sensors, such as cameras, or wearable sensors, such as smartwatches with inertial measurement units (IMUs)2. Although wearables have proven to be effective for HAR, it is not practical to assume that all subjects in a space will use wearables that are compatible with the inference method. HAR is the automatic understanding of actions performed by individuals or groups of people3. It is employed in many sectors and devices, including health, security, cars, governments, commercial organizations, games, tablets, and smartphones. Most of the sensors mentioned earlier are incorporated into a smartphone4. Recently, smartphones have been preferred for HAR owing to the increasing precision of their built-in sensors, low cost, wireless connectivity, and popularity. Thus, smartphones are opening a new horizon for applications that understand users’ activities and their wider context5. HAR has become a popular topic in recent years owing to its significance in many areas, such as interactive gaming, sports, medical care, and general-purpose monitoring. In addition, the aging population has become one of the world’s main concerns6.

To monitor the functional, physical, and cognitive health of older adults in their homes, HAR is emerging as a powerful tool. HAR aims to identify human activities in both controlled and uncontrolled settings. HAR data processing is shifting toward a new architectural paradigm that goes beyond the cloud by taking emerging edge requirements into account7. This shift includes, but is not restricted to, edge computing, which is required for real-time IoT applications. The rapid development of the IoT has expanded the possibilities for detecting human activities using diverse sensor readings8. In most applications, the sensors are worn by users as portable devices or embedded in household items. The sensor readings are then gathered and interpreted for activity detection9. Compared with classical activity detection approaches, ML and deep learning (DL) models are well suited to processing time-series data such as audio and video signals and natural language10. These approaches are also directly affected by the nature of the data produced by different sensory sources and modalities, for example, body-mounted sensors, accelerometers, multi-touch screens, or wearable sensors such as data gloves used to investigate human behavior. Continuous and reliable monitoring is crucial for individuals with disabilities, particularly in environments where timely assistance is critical. Advancements in sensor-based IoT and edge computing offer promising ways to enable intelligent, non-intrusive activity recognition. There is a growing need for systems that can detect subtle behavioral changes and emergency events with high accuracy, driven by the demand for personalized care. Unlike conventional methods that depend heavily on user compliance or infrastructure-heavy setups, modern sensor-based models can deliver real-time insights while maintaining user comfort. 
This creates an opportunity to bridge the gap between assistive healthcare and intelligent automation through adaptive DL models. Figure 1 depicts the layered architecture of IoT and cloud integration for HAR in individuals with disabilities.

Layered architecture of IoT and cloud integration for HAR.

In this manuscript, an Intelligent Deep Learning Technique for Human Activity Recognition of Persons with Disabilities using the Sensors Technology (IDLTHAR-PDST) is proposed. The purpose of the IDLTHAR-PDST technique is to efficiently recognize and interpret activities by leveraging sensor technology within a smart IoT-Edge-Cloud continuum. Initially, the IDLTHAR-PDST technique utilizes a min-max normalization-based data pre-processing model to optimize sensor data consistency and enhance model performance. For feature subset selection, the enhanced honey badger algorithm (EHBA) is used to reduce dimensionality while retaining critical activity-related features. Finally, the deep belief network (DBN) model is employed for HAR. To demonstrate the improved performance of the proposed IDLTHAR-PDST model, a comprehensive simulation study is conducted. The key contributions of the IDLTHAR-PDST model are listed below.

- The IDLTHAR-PDST approach utilizes min-max normalization to scale sensor data, ensuring that all features are within a consistent range. This assists in eliminating discrepancies caused by varying feature magnitudes, improving the performance of the DL model. By standardizing the data, the model can learn more effectively, resulting in an improved accuracy in HAR.

- The EHBA is used to perform effectual feature selection, mitigating the dimensionality of large and complex datasets. By detecting the most relevant features, EHBA improves the accuracy of the model and simplifies the computational process. This results in a more efficient and effective HAR system, particularly in real-time applications.

- A DBN technique is utilized for classifying human activities, efficiently capturing complex patterns in sensor data. This allows the system to recognize and distinguish activities with high accuracy in real-time. The use of DBN significantly enhances the performance and reliability of HAR in dynamic environments.

- The IDLTHAR-PDST model presents a novel approach by integrating min-max normalization, EHBA-based feature selection, and DBN-based classification. This combination ensures real-time, resource-efficient HAR for individuals with disabilities. The novelty is in the seamless integration of these techniques, optimizing both performance and computational efficiency in dynamic environments.

The structure of the article is as follows: Section “Related works” provides a review of the literature, Section “Methodology” describes the proposed method, Section “Performance validation” presents the evaluation of results, and Section “Conclusion” offers the study’s conclusions.

Related works

Wazwaz et al.11 utilized wearable sensors and smartphones to collect accelerometer and gyroscope data from multiple body positions, using dynamic model selection and the light gradient boosting machine (LightGBM) approach for efficient and accurate HAR. By integrating smart IoT devices, edge computing, and cloud resources, the approach achieves high prediction accuracy while minimizing latency and computational load across diverse environments. Arokiaraj and Viswanathan12 introduced ensemble capsule gated (ECG) networks, an ensemble of capsule networks (CN) and an extreme learning machine (ELM) with modified gated recurrent units (MGRU), for efficient classification of data collected through IoT. This method utilizes CNs for feature extraction and MGRU for temporal feature extraction. Mohsin et al.13 analyzed a HAR model by examining signals gathered from real-world activity detection. This technique employs a DBN trained in an unsupervised, layer-wise manner on statistical features extracted from multi-sensor smartphone data. Data from the sensors are collected via mobile devices and then analyzed by a DL method. Hafeez et al.14 introduced a feature-based fusion descriptor solution. Initially, denoising methods are applied for preprocessing. Background is then removed from the frames, which are subsequently used for feature extraction. Next, time and frequency features are extracted. Lastly, the feature sets are combined and passed to a zero-order optimization technique, after which logistic regression (LR) is employed for recognition. Bouazizi et al.15 proposed a model that utilizes multiple 2D Lidar sensors to capture comprehensive indoor activity data, which are then converted to image-like formats. A convolutional long short-term memory (LSTM) neural network is used to classify activities, fall events, and unsteady gait patterns, achieving high accuracy in elderly monitoring scenarios. 
This approach leverages non-intrusive sensing and DL to improve real-time hazard detection. Alonazi et al.16 introduced a Symbiotic Organism Search with a Deep Convolutional NN-based HAR (SOSDCNN-HAR) method. The Wiener filtering (WF) model is used for pre-processing, a RetinaNet-based model is employed for feature extraction, the SOS process is implemented for optimization, and a GRU model is used as the classifier to assign the appropriate classes. Djenouri et al.17 presented a Home-based Monitoring (HM) approach for enhanced care and security. Spatio-temporal visual Learning for HM (STVL-HM) is a methodology that analyzes sensory information using a hybrid technique based on a CNN and Transformers. Yadav, Rani, and Verma18 improved HAR accuracy and computational efficiency using DL and ML models. The technique utilizes VGG16, VGG19, and MobileNetV2 for feature extraction, integrated with four classifiers, namely support vector machine (SVM), random forest (RF), multilayer perceptron (MLP), and a fuzzy classifier, for effective activity recognition.

Kumar and Sumathi19 improved the accuracy and efficiency of HAR by converting IMU data into spectrograms using the short-time Fourier transform (STFT) and analyzing them with a ghost neural network (GNet). Feature selection is optimized using the fire-hawk optimizer (FHO), enabling robust global optimization and effective handling of complex signal patterns across diverse datasets. Thukral, Haresamudram, and Ploetz20 proposed a model to improve sensor-based HAR in real-world, low-label scenarios by utilizing a teacher-student self-training framework. The cross-domain HAR methodology utilizes transfer learning (TL) from publicly available datasets and adapts to variations in sensor type, location, and activity through effective cross-domain knowledge transfer. Sharen et al.21 aimed to improve the accuracy of multi-class HAR by introducing WISNet, a custom 1D-CNN model. The method employs convolved normalized pooled (CNPM) blocks for early feature extraction, identity and basic (IDBN) blocks for learning sequential dependencies, and channel and spatial attention (CASb) blocks to emphasize crucial features. Sassi Hidri et al.22 improved HAR by optimizing DL methods, particularly LSTM recurrent neural networks (LSTM RNN), to capture temporal dependencies in smartphone accelerometer data. The approach utilizes automated feature learning through CNNs, autoencoders, and LSTM RNNs to improve classification accuracy for real-time applications. Mohsen23 improved the accuracy of HAR by implementing a GRU-based DL model, which is trained and optimized using hyperparameter tuning within the TensorFlow framework. Rajeswari et al.24 enabled real-time and accurate health monitoring by integrating wearable IoT sensors with a CNN for feature extraction, combined with edge computing to process data locally. Mazhar et al.25 aimed to strengthen IoT security by using ML and DL techniques to detect and analyze cyberattack patterns from unstructured data. 
By integrating AI-driven models, the approach enables intelligent, adaptive, and up-to-date protection for next-generation IoT systems against evolving threats. Ma, Zhang, and Liang26 improved badminton action recognition by optimizing spatiotemporal feature extraction and multimodal data fusion using DL approaches such as spatial-temporal graph convolutional network (ST-GCN), vision-attention transformer for real-time motion recognition (VATRM), and multi-modal network for sports action recognition (MM-Net). This approach enhances recognition accuracy and computational efficiency. Ghadi et al.27 improved privacy and efficiency in IoT applications by employing federated learning (FL), which enables decentralized AI training on local IoT devices without sharing raw data. The approach facilitates collaborative model improvement while preserving data privacy across diverse smart environments such as healthcare, transportation, and smart cities. Table 1 describes the comparison of methods, datasets, and performance metrics for various HAR and monitoring systems.

Despite crucial advancements in HAR through the integration of DL with sensor-based IoT systems across edge and cloud computing, various research gaps still exist. Most DL models are computationally intensive and inappropriate for real-time execution on resource-constrained edge devices, making model optimization for both accuracy and efficiency crucial. Data heterogeneity across users, environments, and devices limits model generalization, while privacy concerns arise when transmitting sensitive health data to the cloud. Although edge computing assists in reducing latency and privacy risks, maintaining performance without compromising data security is challenging. Moreover, achieving real-time HAR with multi-sensor fusion and large datasets demands low-latency models, and the requirement for energy-efficient architectures is critical for wearable deployment. These challenges highlight the ongoing research gap in developing robust, adaptive, and privacy-aware HAR systems.

Methodology

In this manuscript, an IDLTHAR-PDST technique is presented. The purpose of the IDLTHAR-PDST technique is to efficiently recognize and interpret activities by leveraging sensor technology within a smart IoT-Edge-Cloud continuum. To accomplish this, the IDLTHAR-PDST approach comprises three sequential processes, namely data preprocessing, EHBA-based feature selection, and DBN-based classification, as represented in Fig. 2.

Overall process of IDLTHAR-PDST approach.

Data preprocessing

Firstly, the IDLTHAR-PDST technique utilizes a min-max normalization-based data pre-processing model to optimize sensor data consistency and enhance model performance28. This method is chosen because it scales the data to a fixed range, usually between 0 and 1, which places the input features on a common scale. This is particularly important in DL models, where features with widely differing magnitudes can harm the learning process by causing the model to favor features with larger values. By normalizing the data, the model can learn more efficiently and converge faster. Min-max normalization is also computationally inexpensive and preserves the relationships between the data points, making it well suited to sensor data in HAR. Furthermore, this technique works well within the IoT-Edge-Cloud continuum, where real-time data from diverse sensors is processed.

Data pre-processing is important for enhancing the model’s precision and minimizing computational complexity. Initially, irrelevant and redundant features are removed from the datasets. Then, min-max normalization is used to scale the feature values to the range \([0,1]\), following Eq. (1):

\[{x}^{\prime}=\frac{x-\text{min}\left(x\right)}{\text{max}\left(x\right)-\text{min}\left(x\right)}\quad (1)\]

where \({x}^{\prime}\) refers to the new (normalized) value, \(x\) is the original feature value, and \(\text{max}\left(x\right)\) and \(\text{min}\left(x\right)\) represent the maximum and minimum feature values, respectively. This transformation improves model performance by placing all features on a common scale.
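The min-max scaling described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the function name `min_max_normalize` and the handling of constant columns (mapped to 0 to avoid division by zero) are assumptions made here.

```python
import numpy as np

def min_max_normalize(X, eps=1e-12):
    """Scale each feature (column) of X to [0, 1] using its own min and max."""
    X = np.asarray(X, dtype=float)
    col_min = X.min(axis=0)
    col_max = X.max(axis=0)
    span = col_max - col_min
    # Assumption: a constant column has zero span, so it is mapped to 0
    # instead of triggering a division by zero.
    safe_span = np.where(span > eps, span, 1.0)
    return (X - col_min) / safe_span

# Two features on very different scales end up on the same [0, 1] range.
X = np.array([[1.0, 200.0],
              [2.0, 400.0],
              [3.0, 600.0]])
X_scaled = min_max_normalize(X)
```

In a train/test setting, the minimum and maximum would normally be computed on the training split only and reused for the test split, so that no information from the test data leaks into the scaling.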

EHBA-based feature selection

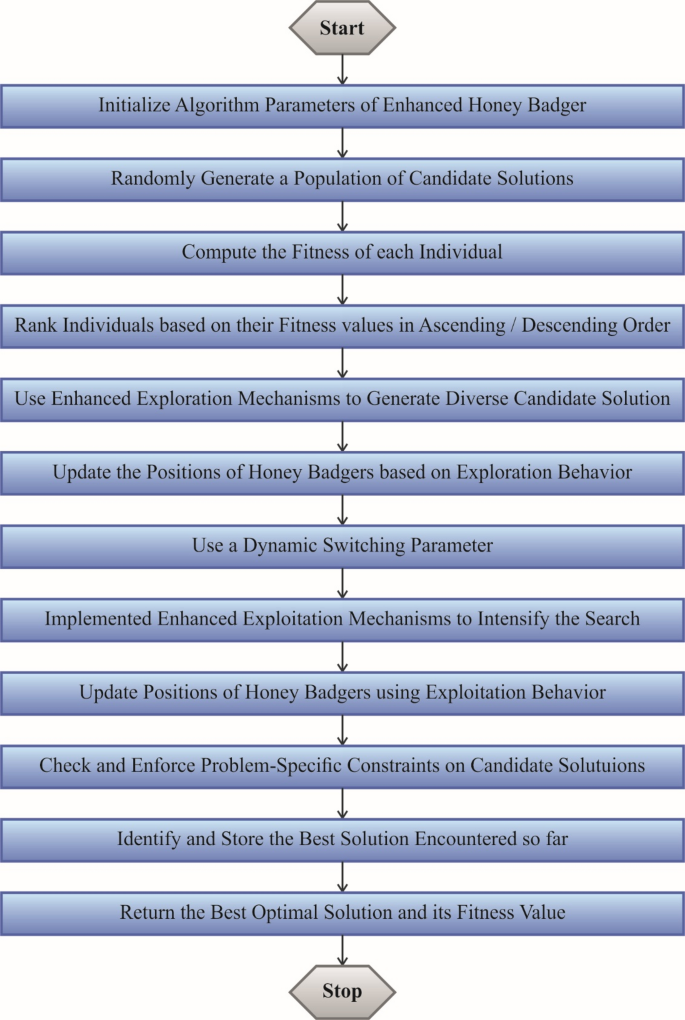

For feature subset selection, the EHBA is used to effectively reduce dimensionality while retaining critical activity-related features29. This technique is chosen due to its robustness and effectiveness in handling complex, high-dimensional datasets. EHBA replicates the aggressive foraging behavior of honey badgers, allowing it to explore the feature space effectively and choose the most relevant features while avoiding overfitting. This is specifically valuable in HAR, where the data is often noisy and contains redundant or irrelevant features. Compared to other techniques, EHBA presents a balance between exploration and exploitation, ensuring optimal feature subsets with improved classification performance. Furthermore, the ability of the EHBA model to handle dynamic, real-time data from IoT sensors makes it appropriate for HAR applications in smart environments. Figure 3 illustrates the steps involved in the EHBA model.

Steps involved in the EHBA method.

EHBA selects the top features from the extracted features. Feature selection aims to classify activities with higher precision while reducing dimensionality, overfitting, and complexity. Inspired by the foraging behavior of honey badgers, the algorithm excels at optimization problems with complicated search spaces. In this method, the Levy flight model is used to initialize the population in order to improve the exploration and exploitation abilities of EHBA. In other words, EHBA combines the Levy flight method with the HBA. A good balance and quick convergence between exploration and exploitation are its two main benefits. The process of EHBA is explained below.

Initialization

The Levy flight model is used to configure the parameters of EHBA, such as the maximum feature size, the number of iterations, and the archive size (N), and to select the best features. Equation (2) is applied to choose the features based on the upper and lower limits of the population (KO):

where random1 refers to a random number in \((0,1)\); \(ad\) signifies the input data in the \({i}^{th}\) iteration; and \(ur{b}_{i}\) and \(lr{b}_{i}\) describe the upper and lower bounds of the search area. The improved HBA method applies the LF model starting from the empty archive \({K}_{f}\). The time step is represented by \(f\), and when \(f=0\), the initial population is generated as formulated in Eq. (3).

Here, entry-wise multiplication is indicated by the symbol \(\oplus\), the distribution parameter of the Levy flight \(L\left(\delta \right)\) is denoted by \(\delta\), and the random step size is denoted by \(\beta\). Then, at the \({i}^{th}\) iteration, the features are selected based on the initial location of the EHBA.
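A hedged sketch of a Levy-flight-perturbed initialization is given below. Several points are assumptions rather than details confirmed by the text: the uniform draw between the bounds follows the standard HBA initialization, the Levy step \(L(\delta)\) is sampled with Mantegna's algorithm, and the way the step size `beta` scales the perturbation is only one plausible reading of the description. The names `levy_step` and `init_population` are hypothetical.

```python
import math
import numpy as np

def levy_step(shape, delta=1.5, rng=None):
    """Draw Levy-distributed steps with Mantegna's algorithm (assumed sampler)."""
    rng = np.random.default_rng() if rng is None else rng
    sigma_u = (math.gamma(1 + delta) * math.sin(math.pi * delta / 2)
               / (math.gamma((1 + delta) / 2) * delta * 2 ** ((delta - 1) / 2))
               ) ** (1 / delta)
    u = rng.normal(0.0, sigma_u, size=shape)
    v = rng.normal(0.0, 1.0, size=shape)
    return u / np.abs(v) ** (1 / delta)

def init_population(n_agents, lrb, urb, beta=0.01, delta=1.5, rng=None):
    """Uniform positions inside [lrb, urb], perturbed entry-wise by a Levy step."""
    rng = np.random.default_rng() if rng is None else rng
    lrb = np.asarray(lrb, dtype=float)
    urb = np.asarray(urb, dtype=float)
    # Standard HBA-style draw: lower bound + random1 * (upper - lower).
    base = lrb + rng.random((n_agents, lrb.size)) * (urb - lrb)
    # Assumption: the Levy step is scaled by beta and by the search range.
    perturbed = base + beta * levy_step(base.shape, delta, rng) * (urb - lrb)
    return np.clip(perturbed, lrb, urb)   # keep every agent inside the bounds

pop = init_population(5, lrb=[0, 0, 0], urb=[1, 1, 1],
                      rng=np.random.default_rng(0))
```

The heavy tail of the Levy distribution occasionally produces a large jump, which is the usual motivation for using it to spread an initial population more widely than a purely uniform draw.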

Random generation

To search for the optimum solution, the density factor of EHBA is generated randomly according to the intensity of the search behavior.

Objective function

After the candidate features are selected, the fitness function (FF) is evaluated in conjunction with the density factor of EHBA. The Levy flight enhancement is then combined with the HBA approach to identify the optimal features in fewer iterations and with high classification precision. To improve classification precision, two FFs are used: the first (FF1) minimizes the error rate, while the second (FF2) selects the appropriate features, as given in Eqs. (4)–(5):

Here, \({M}_{error}\) denotes the number of incorrect predictions and \({M}_{AI}\) the number of instances in the dataset. The second FF is stated below.

Here, \(F\) denotes the primary features generated by the density factor.
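The two fitness terms can be illustrated with a small sketch. The first function follows the definitions above, reading FF1 as the ratio of \({M}_{error}\) to \({M}_{AI}\), which is an inference from those definitions. The exact form of FF2 is not given here, so the second function is a hypothetical stand-in commonly used in wrapper feature selection, the fraction of features kept. Both function names are illustrative.

```python
import numpy as np

def error_rate(y_true, y_pred):
    """FF1 (as read from the definitions): wrong predictions / total instances."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    return float(np.mean(y_true != y_pred))

def selected_ratio(mask):
    """Hypothetical FF2 stand-in: share of features kept by a binary mask."""
    mask = np.asarray(mask, dtype=bool)
    return float(mask.sum() / mask.size)

# One wrong label out of four, and two of four features kept.
ff1 = error_rate([0, 1, 2, 2], [0, 1, 1, 2])
ff2 = selected_ratio([1, 0, 1, 0])
```

A lower value is better for both terms, so a candidate subset that keeps fewer features without raising the error rate would be preferred.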

Updating the density factor of EHBA to choose the best features

The ideal features are selected using the density factor of EHBA. A smooth transition from exploration to exploitation is ensured by the density factor \(\alpha\). Equation (6) is applied to gradually reduce randomness and regulate the density factor, which reduces the number of iterations.

Equation (12) is replaced by Eq. (7) when the randomly generated value is lower than 0.5:

where the best position is denoted by \(a{d}_{prey}\); the foraging ability of the honey badger is denoted by \(\alpha\); the distance between the \({i}^{th}\) honey badger and the prey is denoted by \(h{b}_{i}\); random 3, 4, 5, and 7 are random numbers in \((0,1)\); and \(Dr\) represents the search direction. Equations (8)–(9) are applied to select the top features, and Eqs. (6) and (7) to improve the error rate:

Here, the parameter \(G\) is a constant with a default value of 2, subject to \(G\ge 1\); random 6 is a random number in \((0,1)\); and \({f}_{maximum}\) is the maximum number of iterations. For the population \({K}_{f+1}\), the best features are selected with Eqs. (8)–(9). The iteration counter is then updated as \(i=i+1\), and the FF is evaluated again.

End.

By reducing complexity in this way, the EHBA model selects the top features from the extracted features in an optimal manner. The model then repeats steps 3 and 4 until the termination conditions are fulfilled.
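The update step described above matches the structure of the standard honey badger algorithm, so a sketch under that assumption is shown below. The density factor uses the standard form \(\alpha = G\,e^{-f/{f}_{maximum}}\), and the two moves are the usual digging and honey phases. In the standard algorithm the foraging ability is a separate constant (default 6), called `ability` here, whereas the text reuses \(\alpha\) for it. The element-wise intensity and the binarisation threshold in `to_feature_mask` are simplifying assumptions, and all function names are hypothetical.

```python
import numpy as np

def density_factor(f, f_max, G=2.0):
    """Density factor alpha = G * exp(-f / f_max); shrinks as iteration f grows."""
    return G * np.exp(-f / f_max)

def hba_update(ad_i, ad_next, ad_prey, f, f_max, G=2.0, ability=6.0, rng=None):
    """One candidate move for a single honey badger (standard HBA form, assumed)."""
    rng = np.random.default_rng() if rng is None else rng
    alpha = density_factor(f, f_max, G)
    hb_i = ad_prey - ad_i                       # distance to the prey (best position)
    strength = (ad_i - ad_next) ** 2            # source strength between neighbours
    intensity = rng.random() * strength / (4 * np.pi * hb_i ** 2 + 1e-12)
    Dr = 1.0 if rng.random() <= 0.5 else -1.0   # search direction flag
    if rng.random() < 0.5:                      # digging phase
        r3, r4, r5 = rng.random(3)
        wobble = abs(np.cos(2 * np.pi * r4) * (1 - np.cos(2 * np.pi * r5)))
        return (ad_prey + Dr * ability * intensity * ad_prey
                + Dr * r3 * alpha * hb_i * wobble)
    r7 = rng.random()                           # honey phase
    return ad_prey + Dr * r7 * alpha * hb_i

def to_feature_mask(position, threshold=0.5):
    """Hypothetical binarisation: keep feature j when position[j] > threshold."""
    mask = np.asarray(position) > threshold
    if not mask.any():                          # never return an empty subset
        mask[int(np.argmax(position))] = True
    return mask

rng = np.random.default_rng(0)
ad = rng.random((4, 6))                         # 4 badgers, 6 candidate features
prey = ad[0]                                    # pretend the first badger is the best
new_pos = np.clip(hba_update(ad[1], ad[2], prey, f=10, f_max=100, rng=rng), 0.0, 1.0)
mask = to_feature_mask(new_pos)
```

Because the density factor decays exponentially with the iteration count, early moves are large (exploration) and late moves stay close to the best position found so far (exploitation).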

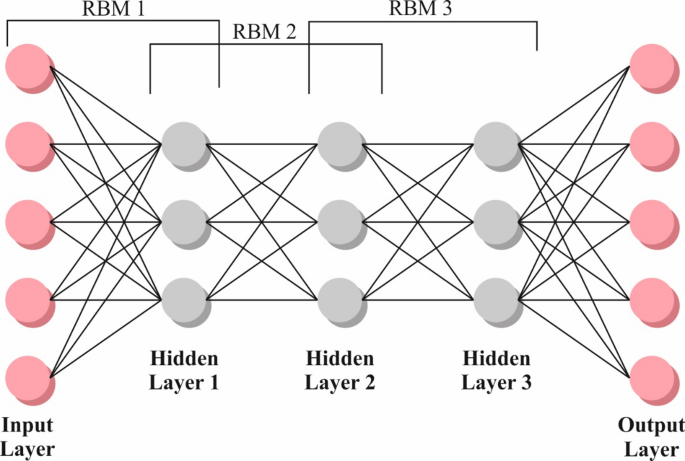

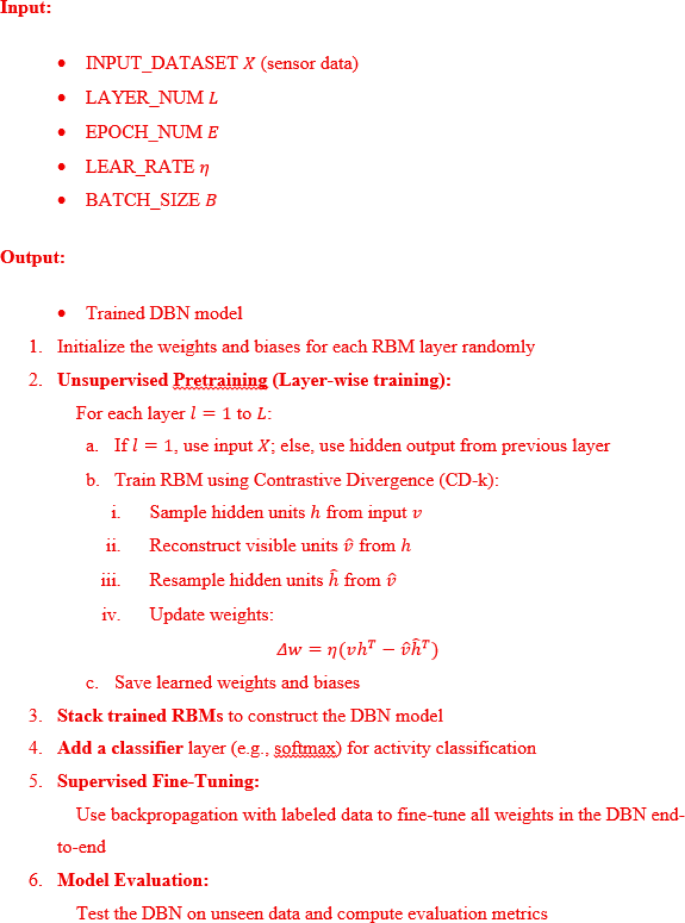

DBN-based classification process

Finally, the DBN model is employed for recognition of human activities30. This technique is chosen for its capability to model complex hierarchical features from large, high-dimensional datasets. DBNs comprise multiple layers of Restricted Boltzmann Machines (RBMs) that can learn feature representations without extensive feature engineering, making them well suited to HAR tasks. DBNs excel at capturing the complex patterns and dependencies in sensor data, which is often noisy and unstructured. Their deep architecture enables the learning of abstract features, improving classification accuracy compared to shallow models. Additionally, DBNs can handle the large volumes of data generated by IoT sensors in real time, making them appropriate for smart IoT-edge-cloud systems in HAR applications. Figure 4 represents the architecture of the DBN.

DBN architecture.

DBNs are neural networks (NNs) designed to enhance generalization and manage the flow of information in data. They focus on estimating the underlying data distribution while reducing dependence on BMs, leading to more precise models. DBNs use a multilayered training approach that integrates RBMs at every layer, which are trained layer by layer. Backpropagation-based methods then refine the training procedure, fine-tuning the weights to improve performance and minimize overfitting. DBNs are particularly proficient at extracting higher-level features from the training data and capturing the relations among the various class labels. This layered RBM training yields a detailed representation of the data. DBNs have shown their efficiency in different areas, such as language processing and image recognition, owing to their ability to model complex data distributions.

To determine the joint distribution of the input (visible) and hidden layers, the energy function is applied as in Eq. (10):

Here, \({E}_{(v,h)}\) specifies the RBM’s energy function and is calculated by the equation shown below:

where the weight between the visible and hidden units is denoted by \({w}_{ij}\), and the biases of the visible and hidden nodes are denoted by \({b}_{j}\) and \({a}_{i}\).
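For reference, the standard RBM energy for binary units is the negative sum of the visible-bias term, the hidden-bias term, and the pairwise weight term. The sketch below computes it together with the hidden-unit activation probability. It assumes the standard binary RBM form, and uses the argument names `vis_bias` and `hid_bias` instead of the symbols in the text to avoid committing to a particular index convention.

```python
import numpy as np

def rbm_energy(v, h, W, vis_bias, hid_bias):
    """Standard RBM energy: E(v, h) = -v.vis_bias - h.hid_bias - v^T W h."""
    return float(-(v @ vis_bias) - (h @ hid_bias) - (v @ W @ h))

def sigm(x):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + np.exp(-x))

def p_hidden_given_visible(v, W, hid_bias):
    """P(h_j = 1 | v) for every hidden unit j."""
    return sigm(hid_bias + v @ W)

# Tiny example: 2 visible units, 1 hidden unit.
v = np.array([1.0, 0.0])
h = np.array([1.0])
W = np.array([[0.5], [-0.3]])
vis_bias = np.array([0.1, 0.2])
hid_bias = np.array([-0.4])

energy = rbm_energy(v, h, W, vis_bias, hid_bias)   # -0.1 + 0.4 - 0.5 = -0.2
p_h = p_hidden_given_visible(v, W, hid_bias)       # sigm(-0.4 + 0.5)
```

Lower energy corresponds to higher probability, since the joint distribution is proportional to \(e^{-E(v,h)}\).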

In this work, stochastic gradient descent on the log-probability is applied to train the model efficiently on the data patterns. This is achieved by choosing the parameters \(a\) and \(b\) appropriately for the RBM. Given a training instance, the probability can be rewritten in the following way:

To derive the gradient of the log-probability, the stochastic gradient is applied,

Now,

and

where \(n\) denotes the number of hidden nodes, \(\vartheta\) the learning rate, and \(sigm\) the logistic sigmoid function. The momentum and weight decay are denoted by \(\alpha .\) The optimization is carried out by combining gradient descent with backpropagation. Given the difficulty of handling the variations and error function of NP problems, conventional techniques are often used. Performance is mostly optimized by minimizing the mean square error (MSE), which measures the squared difference between the expected value and the NN’s output, as shown in Eq. (18):
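The text does not name the approximation used for the log-probability gradient. A common choice is one-step contrastive divergence (CD-1), and the sketch below assumes it. The learning rate `lr` plays the role of \(\vartheta\), while momentum and weight decay are omitted for brevity. The function name `cd1_step` and the toy dimensions are illustrative.

```python
import numpy as np

def sigm(x):
    """Logistic sigmoid."""
    return 1.0 / (1.0 + np.exp(-x))

def cd1_step(v0, W, vis_bias, hid_bias, lr=0.1, rng=None):
    """One CD-1 parameter update for a binary RBM on a single sample v0."""
    rng = np.random.default_rng() if rng is None else rng
    ph0 = sigm(hid_bias + v0 @ W)                     # P(h = 1 | v0)
    h0 = (rng.random(ph0.shape) < ph0).astype(float)  # sampled hidden states
    pv1 = sigm(vis_bias + h0 @ W.T)                   # reconstruction of the visible layer
    ph1 = sigm(hid_bias + pv1 @ W)                    # hidden probabilities for the reconstruction
    # Positive phase minus negative phase, scaled by the learning rate.
    W_new = W + lr * (np.outer(v0, ph0) - np.outer(pv1, ph1))
    vis_new = vis_bias + lr * (v0 - pv1)
    hid_new = hid_bias + lr * (ph0 - ph1)
    return W_new, vis_new, hid_new

rng = np.random.default_rng(0)
W = 0.01 * rng.standard_normal((4, 3))                # 4 visible, 3 hidden units
vis_bias = np.zeros(4)
hid_bias = np.zeros(3)
v0 = np.array([1.0, 0.0, 1.0, 0.0])
W1, vb1, hb1 = cd1_step(v0, W, vis_bias, hid_bias, lr=0.1, rng=rng)
```

In a DBN, this update would be repeated over the training data for the first RBM, whose hidden activations then serve as the visible input for the next RBM in the stack.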

Here, \(T\) denotes the number of data samples and \(N\) the number of output nodes. \({D}_{j}\left(i\right)\) is the value of the \({j}^{th}\) DBN output node at time \(i\), and \({Y}_{j}\left(i\right)\) is the \({j}^{th}\) component of the desired output. This procedure is applied to the DBN and repeated until the stopping condition is reached. Algorithm 1 outlines the DBN technique.
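The MSE criterion can be written compactly as below. The normalisation by both the number of samples \(T\) and the number of output nodes \(N\) is an assumption, since only the symbols are defined above, and the function name `mse` is illustrative.

```python
import numpy as np

def mse(D, Y):
    """Mean squared error over T samples (rows) and N output nodes (columns)."""
    D = np.asarray(D, dtype=float)   # network outputs, shape (T, N)
    Y = np.asarray(Y, dtype=float)   # desired outputs, shape (T, N)
    return float(np.mean((D - Y) ** 2))

# Two samples, two output nodes; only one entry is off by 0.5.
loss = mse([[1.0, 0.0], [0.0, 1.0]],
           [[0.5, 0.0], [0.0, 1.0]])
```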

DBN method.

Performance validation

The performance of the IDLTHAR-PDST approach is validated on the WISDM dataset31 (https://www.cis.fordham.edu/wisdm/dataset.php; doi: https://doi.org/10.1145/1964897.1964918). This dataset contains 12,000 instances across 6 classes, as depicted in Table 2.

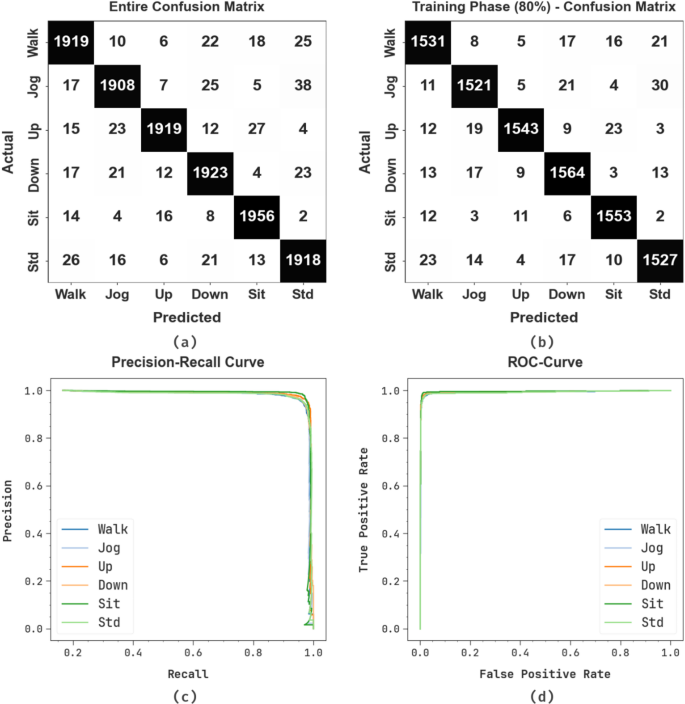

Figure 5 shows the confusion matrices produced by the IDLTHAR-PDST approach under 80:20 and 70:30 splits of the training phase (TRPH) and testing phase (TSPH). The results indicate that the IDLTHAR-PDST approach accurately detects and identifies all 6 classes.

Confusion matrices of (a, c) TRPH of 80% and 70% and (b, d) TSPH of 20% and 30%.

Table 3 describes the HAR results of the IDLTHAR-PDST method under 80% and 70% TRPH and 20% and 30% TSPH. The outcomes suggest that the IDLTHAR-PDST method correctly recognized the samples. With 80% TRPH, the IDLTHAR-PDST method provides average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l},\) \({F1}_{measure}\) and \(AU{C}_{score}\) of 98.75%, 96.24%, 96.24%, 96.24%, and 97.74%, respectively. With 20% TSPH, the IDLTHAR-PDST method gives average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l},\) \({F1}_{measure}\) and \(AU{C}_{score}\) of 98.67%, 96.00%, 95.98%, 95.98%, and 97.59%, respectively. Additionally, with 70% TRPH, the IDLTHAR-PDST methodology presents average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l},\) \({F1}_{measure}\) and \(AU{C}_{score}\) of 96.67%, 90.07%, 90.05%, 90.01%, and 94.03%, respectively. Finally, with 30% TSPH, the IDLTHAR-PDST methodology provides average \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l},\) \({F1}_{measure}\) and \(AU{C}_{score}\) of 96.73%, 90.19%, 90.09%, 90.09%, and 94.07%, respectively.
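The reported average \(acc{u}_{y}\) is noticeably higher than the average \(rec{a}_{l}\), which is not possible if both are computed in the usual multi-class way on balanced classes. One reading that fits the numbers, offered here as an inference rather than something stated in the text, is that accuracy is computed per class in a one-vs-rest fashion and then averaged. For \(K\) balanced classes with macro recall \(r\), this average equals \(1-2(1-r)/K\); with \(K=6\) and \(r=0.9624\) it gives about 0.9875, matching the 80% TRPH row. The sketch below computes these averages from a confusion matrix; the function name and the toy matrix are illustrative.

```python
import numpy as np

def one_vs_rest_metrics(cm):
    """Macro-averaged metrics from a square confusion matrix (rows = true class)."""
    cm = np.asarray(cm, dtype=float)
    total = cm.sum()
    tp = np.diag(cm)                     # correctly classified samples per class
    fn = cm.sum(axis=1) - tp             # samples of the class predicted as another class
    fp = cm.sum(axis=0) - tp             # other classes predicted as this class
    tn = total - tp - fn - fp
    accuracy = (tp + tn) / total         # per-class one-vs-rest accuracy
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return {
        "accu_y": accuracy.mean(),
        "prec_n": precision.mean(),
        "reca_l": recall.mean(),
        "F1": f1.mean(),
        "overall_accuracy": tp.sum() / total,
    }

# Toy 3-class example with 10 samples per class.
cm = [[9, 1, 0],
      [1, 8, 1],
      [0, 1, 9]]
m = one_vs_rest_metrics(cm)
```

In the toy example the overall fraction of correct predictions is 26/30, while the averaged one-vs-rest accuracy is 82/90, which shows how the averaged figure can sit above recall.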

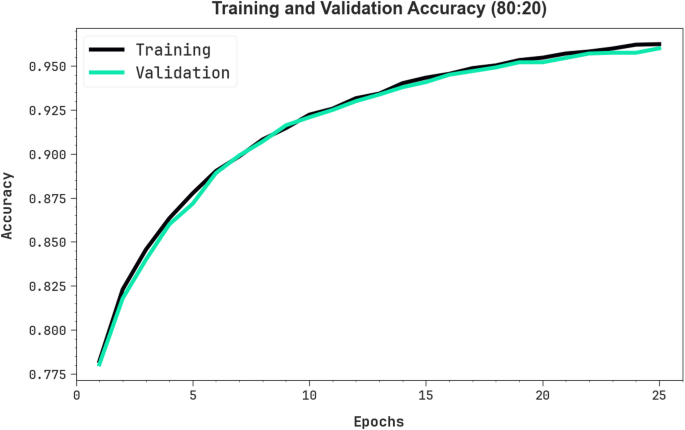

In Fig. 6, the training (TRA) \(acc{u}_{y}\) and validation (VAL) \(acc{u}_{y}\) results of the IDLTHAR-PDST approach under 80% TRPH and 20% TSPH are illustrated. The \(acc{u}_{y}\) values are calculated over 0–25 epochs. The figure shows that the TRA and VAL \(acc{u}_{y}\) values exhibit rising trends, indicating that the performance of the IDLTHAR-PDST approach improves over successive iterations. Additionally, the TRA and VAL \(acc{u}_{y}\) remain close across the epochs, which indicates reduced overfitting and suggests consistent prediction on unseen samples.

\(Acc{u}_{y}\) curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH

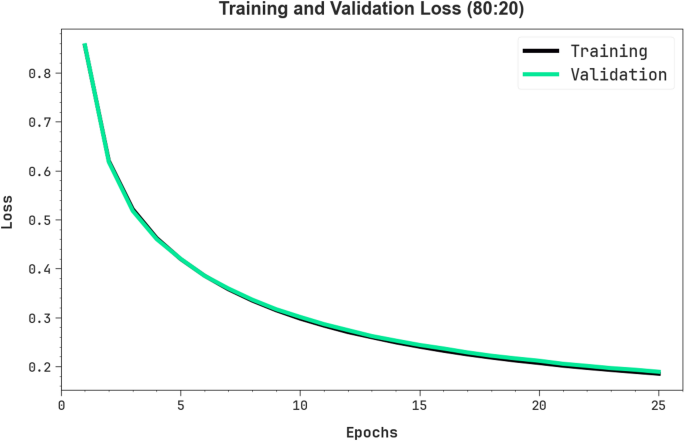

In Fig. 7, the TRA loss (TRALOS) and VAL loss (VALLOS) curves of the IDLTHAR-PDST approach under 80% TRPH and 20% TSPH are shown. The loss values are computed over 0–25 epochs. The TRALOS and VALLOS values exhibit declining trends, indicating the ability of the IDLTHAR-PDST technique to balance generalization and data fitting. The continual decline in loss values also indicates improved performance of the IDLTHAR-PDST technique and gradual refinement of the prediction outcomes.

Loss curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

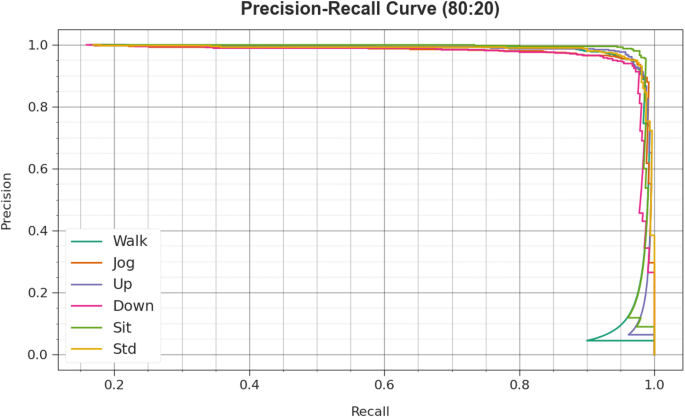

In Fig. 8, the precision-recall (PR) analysis of the IDLTHAR-PDST method under 80% TRPH and 20% TSPH gives insight into its performance by plotting precision against recall for all class labels. The figure shows that the IDLTHAR-PDST approach consistently achieves high PR values across the classes, indicating its ability to maintain a large share of true positives among all positive predictions (precision) while also capturing a large proportion of actual positives (recall). The consistently high PR results for each class demonstrate the competence of the IDLTHAR-PDST technique in classification.

PR curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

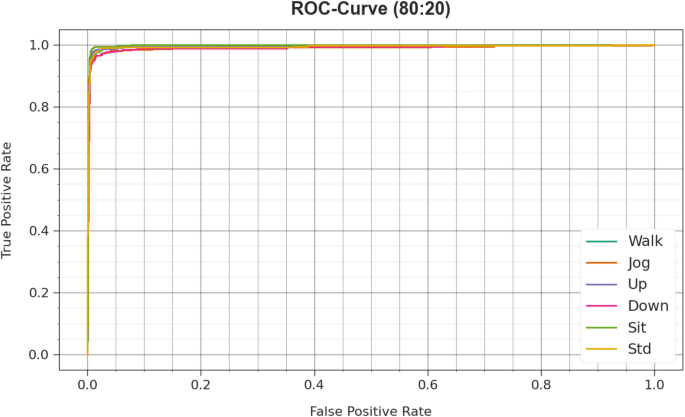

In Fig. 9, the ROC analysis of the IDLTHAR-PDST approach is examined. The results indicate that the IDLTHAR-PDST technique under 80% TRPH and 20% TSPH achieves high ROC results across all classes, signifying strong proficiency in differentiating the class labels. This consistent trend of high ROC values across the classes reflects the effectiveness of the IDLTHAR-PDST technique in predicting classes and the robustness of the classification process.

ROC curve of IDLTHAR-PDST model under 80%TRPH and 20%TSPH.

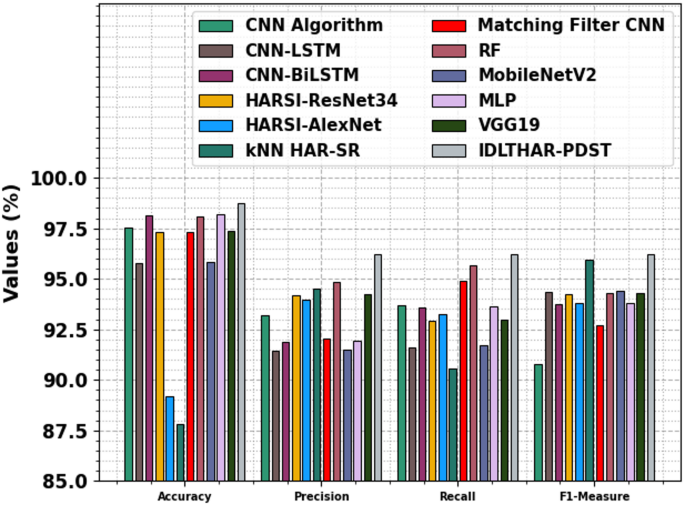

Table 4 and Fig. 10 present the comparative results of the IDLTHAR-PDST technique against recent methodologies18,32,33,34. The results show that the CNN, CNN-LSTM, CNN-BiLSTM, HARSI-ResNet34, HARSI-AlexNet, kNN HAR-SR, Matching Filter CNN, MobileNetV2, MLP, and VGG19 methodologies achieved lower performance. Meanwhile, the RF technique achieved the closest results, with \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), and \({F1}_{measure}\) of 98.06%, 94.84%, 95.69%, and 94.32%, respectively. The IDLTHAR-PDST approach achieved the best performance, with maximum \(acc{u}_{y}\), \(pre{c}_{n}\), \(rec{a}_{l}\), and \({F1}_{measure}\) of 98.75%, 96.24%, 96.24%, and 96.24%, respectively.

Comparative analysis of IDLTHAR-PDST model with existing approaches.

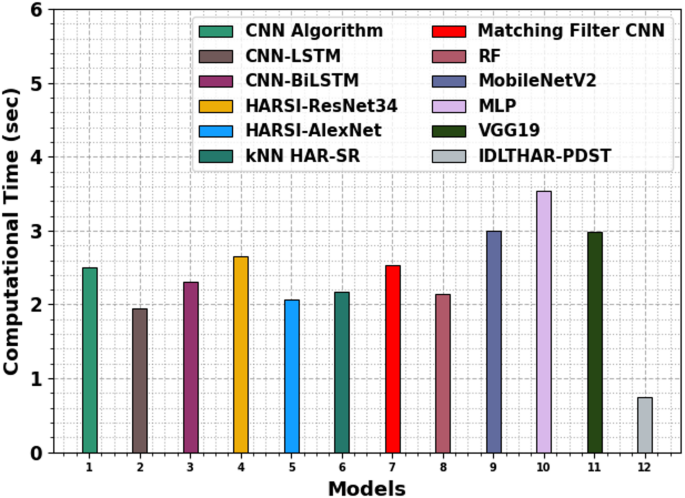
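
For readers who want to reproduce metrics of this kind, the sketch below derives accuracy, precision, recall, and F1 from a multi-class confusion matrix. It is a minimal illustration under stated assumptions: macro averaging over classes is assumed, both an overall accuracy and a mean per-class (one-vs-rest) accuracy are returned because this section does not state which definition the reported \(acc{u}_{y}\) uses, and the function name `macro_metrics` and the toy matrix are hypothetical.

```python
from typing import Dict, List


def macro_metrics(cm: List[List[int]]) -> Dict[str, float]:
    """Macro-averaged metrics from a confusion matrix.

    cm[i][j] is the number of samples of true class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    precisions, recalls, f1s, class_accs = [], [], [], []

    for k in range(n):
        tp = cm[k][k]
        fp = sum(cm[r][k] for r in range(n)) - tp  # predicted k, true class differs
        fn = sum(cm[k]) - tp                       # true k, predicted otherwise
        tn = total - tp - fp - fn
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f1)
        class_accs.append((tp + tn) / total)       # one-vs-rest accuracy for class k

    return {
        "overall_accuracy": sum(cm[k][k] for k in range(n)) / total,
        "mean_class_accuracy": sum(class_accs) / n,
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,
    }


# Toy 3-class confusion matrix (hypothetical counts).
toy_cm = [[8, 1, 1],
          [2, 6, 2],
          [0, 0, 10]]
for name, value in macro_metrics(toy_cm).items():
    print(f"{name}: {value:.4f}")
```

The two accuracy figures differ whenever errors exist, because the one-vs-rest variant also credits each class with the true negatives of all other classes; comparisons across papers are only meaningful when the same definition is used.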

Table 5 and Fig. 11 present the computational time (CT) analysis of the IDLTHAR-PDST model against existing approaches. The CNN model takes 2.51 s, while the CNN-LSTM model is faster at 1.95 s, and the CNN-BiLSTM model has a CT of 2.31 s. Of the two HARSI variants, HARSI-ResNet34 is slower at 2.66 s, while HARSI-AlexNet takes 2.06 s. The kNN HAR-SR model has a CT of 2.17 s, and the Matching Filter CNN takes 2.53 s. The RF, MobileNetV2, MLP, and VGG19 models have CTs of 2.14, 3.00, 3.54, and 2.99 s, respectively, making MLP the slowest of the compared methods. The IDLTHAR-PDST model records the lowest CT of 0.75 s.

CT analysis of IDLTHAR-PDST model with existing approaches.

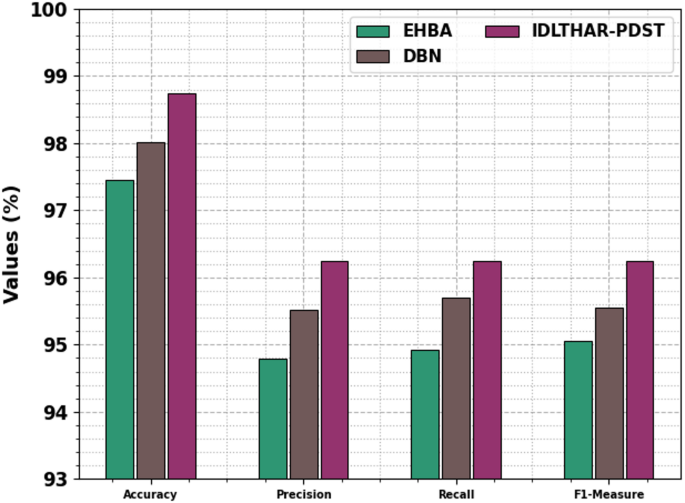
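
To put the reported times in relative terms, the short sketch below divides each baseline's CT by the 0.75 s reported for IDLTHAR-PDST. The CT values are those quoted in the text; the dictionary and the helper name `speedups` are hypothetical conveniences introduced for illustration.

```python
from typing import Dict

# Computational times in seconds, as reported in the CT analysis.
CT_SECONDS: Dict[str, float] = {
    "CNN": 2.51,
    "CNN-LSTM": 1.95,
    "CNN-BiLSTM": 2.31,
    "HARSI-ResNet34": 2.66,
    "HARSI-AlexNet": 2.06,
    "kNN HAR-SR": 2.17,
    "Matching Filter CNN": 2.53,
    "RF": 2.14,
    "MobileNetV2": 3.00,
    "MLP": 3.54,
    "VGG19": 2.99,
    "IDLTHAR-PDST": 0.75,
}


def speedups(ct: Dict[str, float], proposed: str = "IDLTHAR-PDST") -> Dict[str, float]:
    """Ratio of each baseline's CT to the proposed model's CT."""
    base = ct[proposed]
    return {name: t / base for name, t in ct.items() if name != proposed}


# Print the baselines from the largest to the smallest ratio.
for name, ratio in sorted(speedups(CT_SECONDS).items(), key=lambda kv: -kv[1]):
    print(f"{name}: {ratio:.2f}x")
```

On these figures the ratio ranges from about 2.6 (CNN-LSTM) to about 4.7 (MLP).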

Table 6 and Fig. 12 present the ablation study of the IDLTHAR-PDST technique. The EHBA model achieved an \(acc{u}_{y}\) of 97.46%, \(pre{c}_{n}\) of 94.79%, \(rec{a}_{l}\) of 94.93%, and an \({F1}_{measure}\) of 95.05%. The DBN technique showed improved outcomes with an \(acc{u}_{y}\) of 98.02%, \(pre{c}_{n}\) of 95.52%, \(rec{a}_{l}\) of 95.70%, and an \({F1}_{measure}\) of 95.55%. The full IDLTHAR-PDST methodology outperformed both with an \(acc{u}_{y}\) of 98.75%, \(pre{c}_{n}\) of 96.24%, \(rec{a}_{l}\) of 96.24%, and an \({F1}_{measure}\) of 96.24%. These results indicate that combining the components in the IDLTHAR-PDST methodology improves overall performance. The improvement is consistent across all four metrics, which supports the robustness of the final model in multi-class classification scenarios.

Result analysis of the ablation study of IDLTHAR-PDST technique.

Conclusion

In this manuscript, an IDLTHAR-PDST technique was presented. The purpose of the IDLTHAR-PDST technique is to efficiently recognize and interpret activities by leveraging sensor technology within a smart IoT-Edge-Cloud continuum. The technique comprises data pre-processing, EHBA-based feature selection, and DBN-based classification. Initially, the IDLTHAR-PDST technique utilized min-max normalization-based data pre-processing to optimize sensor data consistency and enhance model performance. For feature subset selection, the EHBA was used to reduce dimensionality while retaining critical activity-related features. Finally, the DBN model was employed for the recognition of human activities. To demonstrate the improved performance of the proposed IDLTHAR-PDST model, a comprehensive simulation study was carried out. The performance validation of the IDLTHAR-PDST model showed a superior accuracy value of 98.75% over existing techniques.

The limitations of the IDLTHAR-PDST model include its reliance on sensor data, which may be affected by noise and inaccuracies that degrade the performance of the system in real-world scenarios. Additionally, the capability of the model to generalize across diverse environments and varying conditions remains a challenge, as it may not perform optimally in unfamiliar settings or with diverse populations. The computational complexity of the approach could also limit its deployment on resource-constrained devices. Furthermore, the accuracy of the system depends on the quality and quantity of labeled training data, which may not always be readily available. Future work may concentrate on improving the robustness of the model to environmental variability, optimizing computational efficiency, and integrating real-time adaptation to improve overall performance in diverse settings.
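
The min-max normalization step named in the pipeline can be sketched as follows. This is a minimal illustration that assumes scaling each feature to the range [0, 1] with minimum and maximum values estimated on the training portion only; the target range, the helper names `fit_min_max` and `apply_min_max`, and the toy data are assumptions, not details taken from the paper.

```python
from typing import List, Tuple


def fit_min_max(rows: List[List[float]]) -> Tuple[List[float], List[float]]:
    """Return per-feature minimum and maximum values from the training rows."""
    columns = list(zip(*rows))
    return [min(c) for c in columns], [max(c) for c in columns]


def apply_min_max(rows: List[List[float]],
                  lo: List[float],
                  hi: List[float]) -> List[List[float]]:
    """Scale each feature with x' = (x - min) / (max - min).

    A constant feature (max == min) is mapped to 0.0 to avoid division by zero.
    Values outside the training range are not clipped.
    """
    return [
        [(x - l) / (h - l) if h > l else 0.0 for x, l, h in zip(row, lo, hi)]
        for row in rows
    ]


# Toy sensor readings (hypothetical): two features, three training samples.
train = [[0.0, 10.0], [5.0, 10.0], [10.0, 10.0]]
lo, hi = fit_min_max(train)
print(apply_min_max(train, lo, hi))
```

Estimating the minimum and maximum on the training split and reusing them on the test split keeps test information out of the pre-processing step.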

Data availability

The authors confirm that the data supporting the findings of this study are available within the article31.

References

Dhiman, C. & Vishwakarma, D. K. A review of state-of-the-art techniques for abnormal human activity recognition. Eng. Appl. Artif. Intell. 77, 21–45 (2019).

Schweizer, P. Neutrosophy for physiological data compression: In particular by neural Nets using deep learning. Int. J. Neutrosophic Sci. 1 (2), 74–80 (2020).

Du, Y., Lim, Y. & Tan, Y. A novel human activity recognition and prediction in smart home based on interaction. Sensors 19(20), 4474 (2019).

Subasi, A., Fllatah, A., Alzobidi, K., Brahimi, T. & Sarirete, A. Smartphone-based human activity recognition using bagging and boosting. Procedia Comput. Sci. 163, 54–61 (2019).

Inoue, M., Inoue, S. & Nishida, T. Deep recurrent neural network for mobile human activity recognition with high throughput. Artif. Life Rob. 23, 173–185 (2018).

Islam, N. et al. A blockchain-based fog computing framework for activity recognition as an application to e-Healthcare services. Future Gener. Comput. Syst. 100, 569–578 (2019).

Avci, A., Bosch, S., Marin-Perianu, M., Marin-Perianu, R. & Havinga, P. Activity recognition using inertial sensing for healthcare, wellbeing and sports applications: A survey. In 2010 23rd International Conference on Architecture of Computing Systems (ARCS) 1–10 (VDE, 2010).

Lentzas, A. & Vrakas, D. Non-intrusive human activity recognition and abnormal behavior detection on elderly people: A review. Artif. Intell. Rev. 53 (3), 1975–2021 (2020).

Wang, Y., Cang, S. & Yu, H. A survey on wearable sensor modality centred human activity recognition in health care. Expert Syst. Appl. 137, 167–190 (2019).

Abdelaziz, A. & Mahmoud, A. N. Clustered IoT based data fusion model for smart healthcare systems. J. Intell. Syst. Internet Things 2, 22 – 2 (2022).

Wazwaz, A., Amin, K., Semary, N. & Ghanem, T. Dynamic and distributed intelligence over smart devices, internet of things edges, and cloud computing for human activity recognition using wearable sensors. J. Sensor Actuator Netw. 13(1), 5 (2024).

Arokiaraj, S. & Viswanathan, N. ECG-NETS–A novel integration of capsule networks and extreme gated recurrent neural network for IoT based human activity recognition. J. Intell. Fuzzy Syst. 44 (5), 8219–8229 (2023).

Mohsin, S. K. et al. Artificial intelligence-assisted pattern discovery for narrow domains of human activity using a heuristic-optimised approach. In 2024 International Conference on Smart Systems for Electrical, Electronics, Communication and Computer Engineering (ICSSEECC) 648–653 (IEEE, 2024).

Hafeez, S., Alotaibi, S. S., Alazeb, A., Al Mudawi, N. & Kim, W. Multi-Sensor-Based action monitoring and recognition via hybrid descriptors and logistic regression. IEEE Access. 11, 48145–48157 (2023).

Bouazizi, M., Mora, A. L., Feghoul, K. & Ohtsuki, T. Activity detection in indoor environments using multiple 2D lidars. Sensors 24(2) (2024).

Alonazi, M. et al. Deep convolutional neural network with symbiotic organism search-based human activity recognition for cognitive health assessment. Biomimetics 8(7), 554 (2023).

Djenouri, Y., Belbachir, A. N., Cano, A. & Belhadi, A. Spatio-temporal visual learning for home-based monitoring. Inf. Fusion 101, 101984 (2024).

Yadav, D., Rani, D. & Verma, O. P. Optimizing human activity recognition using stacked CNN with ensemble learning. Procedia Comput. Sci. 258, 1598–1607 (2025).

Kumar, A. R. & Sumathi, D. GNet-FHO: A light weight deep neural network for monitoring human health and activities. IEEE Access (2024).

Thukral, M., Haresamudram, H. & Ploetz, T. Cross-domain HAR: Few-shot transfer learning for human activity recognition. ACM Trans. Intell. Syst. Technol. 16(1), 1–35 (2025).

Sharen, H. et al. WISNet: A deep neural network based human activity recognition system. Expert Syst. Appl. 258, 124999 (2024).

Sassi Hidri, M., Hidri, A., Alsaif, S. A., Alahmari, M. & AlShehri, E. Enhancing sensor-based human physical activity recognition using deep neural networks. J. Sensor Actuator Netw. 14(2), 42 (2025).

Mohsen, S. Recognition of human activity using GRU deep learning algorithm. Multimed. Tools Appl. 82 (30), 47733–47749 (2023).

Rajeswari, P., Gobinath, A., Kumar, N. S. & Anandan, M. IoT-based smart health monitoring with convolutional neural network (CNN) using edge computing. In Edge AI for Industry 5.0 and Healthcare 5.0 Applications 198–208 (Auerbach, 2025).

Mazhar, T. et al. Analysis of IoT security challenges and its solutions using artificial intelligence. Brain Sci. 13(4), 683 (2023).

Ma, H., Zhang, F. & Liang, N. Development and training strategy of badminton action recognition system under the background of artificial intelligence. IEEE Access (2025).

Ghadi, Y. Y. et al. Integration of federated learning with IoT for smart cities applications, challenges, and solutions. PeerJ Comput. Sci. 9, e1657 (2023).

Bensaoud, A. & Kalita, J. Optimized detection of cyber-attacks on Iot networks via hybrid deep learning models.

Maddu, M. & Rao, Y. N. Res2Net-ERNN: Deep learning based cyberattack classification in software defined network. Cluster Comput. 1–19 (2024).

Wang, S., Zhang, H. J., Wang, T. T. & Hossain, S. Simulating runoff changes and evaluating under climate change using CMIP6 data and the optimal SWAT model: A case study. Sci. Rep. 14(1), 23228 (2024).

Kwapisz, J. R., Weiss, G. M. & Moore, S. A. Activity recognition using cell phone accelerometers. ACM SigKDD Explor. Newsl. 12, 74–82 (2011).

Nafea, O., Abdul, W., Muhammad, G. & Alsulaiman, M. Sensor-based human activity recognition with spatio-temporal deep learning. Sensors 21(6), 2141 (2021).

Alexan, A. I., Alexan, A. R. & Oniga, S. Real-time machine learning for human activities recognition based on wrist-worn wearable devices. Appl. Sci. 14(1), 329 (2023).

Cavalcante, A. F. et al. Deep learning in the recognition of activities of daily living using smartwatch data. Sensors 23(17), 7493 (2023).

Acknowledgements

The author extends his appreciation to the King Salman Center for Disability Research for funding this work through Research Group no. KSRG-2024-269.

Author information

Contributions

All contributions were made by Dr. Mohammed Maray.

Ethics declarations

Competing interests

The author declares no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Maray, M. Intelligent deep learning for human activity recognition in individuals with disabilities using sensor based IoT and edge cloud continuum. Sci Rep 15, 29640 (2025). https://doi.org/10.1038/s41598-025-09514-w
