Abstract

Corrosion-induced bond strength degradation between reinforcing bars and concrete significantly compromises the durability and structural performance of reinforced concrete (RC) systems. Accurate prediction of bond behavior under corrosion is critical for assessing residual capacity and informing rehabilitation strategies. This study proposes a data-driven framework for predicting both Ultimate Bond Strength (UBS) and Relative Bond Strength (RBS) of corroded reinforcement using a multi-output machine learning (ML) approach. A comprehensive dataset was compiled from experimental studies, and six ML algorithms, Multi-Layer Perceptron (MLP), Support Vector Regression (SVR), Decision Tree (DT), Random Forest (RF), Gradient Boosting (GBoost), and Extreme Gradient Boosting (XGBoost), were trained to forecast UBS and RBS simultaneously. Among them, XGBoost exhibited the highest predictive performance, achieving R2 values of 0.977 for UBS and 0.966 for RBS, with corresponding mean absolute percentage errors (MAPE) of 9.6% and 7.0%, respectively. Feature importance was evaluated using SHapley Additive exPlanations (SHAP), which revealed that Corrosion Level, Compressive Strength, and Yield Strength were the most influential factors for both targets. The results underscore the potential of explainable ML tools as efficient alternatives to traditional laboratory testing for evaluating bond degradation in corrosion-affected RC structures.

Similar content being viewed by others

Introduction

Corrosion of reinforcing steel is one of the most critical durability issues in reinforced concrete (RC) structures, leading to the deterioration of mechanical properties and eventual system failure. Among the various structural performance parameters affected by corrosion, The Bond Strength between steel reinforcement and surrounding concrete plays a pivotal role in maintaining the integrity of the composite action. Bond strength is primarily governed by three mechanisms: chemical adhesion, frictional resistance at the interface, and mechanical interlock due to surface deformations on the steel bar1,2,3. However, these mechanisms can be compromised by corrosion, particularly in environments with high humidity, chloride exposure, or carbonation. As corrosion progresses, expansive products form around the steel surface, causing internal cracking and reducing the effective contact area and mechanical interlocking between concrete and steel, thereby compromising the bond4,5,6,7,8.

Over the past decades, numerous experimental studies have investigated the deterioration of bond strength due to corrosion using various test methods such as pull-out, beam-end, and direct tension tests9,10,11. Some of these studies provide comprehensive insights into the mechanisms by which corrosion degrades the bond behavior in reinforced concrete elements12,13,14,15,16,17,18. Recent advancements in materials such as ultra-high-performance concrete (UHPC) and the incorporation of supplementary materials have also been shown to influence bond behavior under corrosion conditions18,19,20. While these studies have yielded important insights, they are often time-consuming, costly, and limited in scope. Moreover, comprehensive quantitative models that generalize across different material properties and environmental conditions remain lacking.

Recent machine learning (ML) advances offer promising alternatives for predicting complex material behaviors using experimental data. ML algorithms have been widely applied in structural engineering for estimating properties such as compressive strength, flexural capacity, and durability21,22,23,24,25,26,27. In particular, tree-based ensemble methods such as Random Forest (RF), Gradient Boosting (GBoost), and eXtreme Gradient Boosting (XGBoost) have demonstrated high accuracy and robustness in regression tasks related to structural materials28,29,30. Recent developments also include the application of advanced XGBoost-based frameworks to predict bond strength and failure modes in UHPC or UHPSSC systems31, as well as deep learning models leveraging natural language processing and transfer learning for bond prediction across diverse concrete-bar configurations32.

Several studies have employed ML techniques for modeling bond strength under various conditions. For example, Mahmoudian et al.33 applied tree-based models, including DT, RF, AdaBoost, and XGBoost, to predict the flexural bond strength of GFRP bars, using SHapley Additive exPlanations (SHAP) for feature analysis. Similarly, Sun et al.34 used SVR, RF, and XGBoost to estimate the bond strength of ribbed stainless-steel rebars embedded in concrete, incorporating Partial Dependence Plots (PDP) and SHAP to interpret model behavior.

Building on this, recent cutting-edge contributions have explored novel data-driven strategies and explainability methods in bond strength and durability modeling. For instance, the application of augmentation and SHAP-based interpretation to predict bond strength at ultra-high-performance concrete–normal concrete (UHPC–NC) interfaces has demonstrated improved generalization and insight35. In parallel, explainable ML frameworks have also been used to predict compressive strength in FRP-confined concrete columns, highlighting the growing relevance of model transparency in structural design contexts36.

Despite these advancements, few studies have explicitly focused on the combined prediction of Ultimate Bond Strength (UBS) and Relative Bond Strength (RBS) for corroded steel rebars, using a unified multi-feature input space. RBS is also referred to in literature as Bond Strength Ratio, or Ratio of Bond Stress13,14. Furthermore, limited attention has been paid to explaining input features’ physical and mechanistic relevance in ML-based predictions, which is essential for practical and interpretable engineering applications. Understanding the contributions of factors such as corrosion level, bar diameter, bond length, and concrete cover thickness is crucial for advancing durability design and assessment37,38.

In this paper, a set of six popular ML algorithms—Multilayer Perceptron (MLP), Support Vector Regression (SVR), Decision Tree (DT), Random Forest (RF), Gradient Boosting (GBoost), and Extreme Gradient Boosting (XGBoost)—were applied to predict both UBS and RBS of corroded rebars in an integrated model using a comprehensive dataset compiled from experimental studies based on six key features: Concrete Compressive Strength, Minimum Concrete Cover, Steel Bar Diameter, Bond Length, Corrosion Level, and Yield Strength of Steel Bar. Given the nonlinear interactions among these features and the complexity of corrosion-induced deterioration, ML methods provide a promising alternative to empirical or mechanistic models. Unlike traditional regression techniques, ML models can capture complex, high-order relationships between variables without assuming a specific functional form. The objectives of this study are to:

-

Develop accurate and interpretable ML models for UBS and RBS prediction,

-

Analyze the relative importance of input features using SHAP values,

-

Compare and evaluate model performance through error metrics and visual inspection,

-

Provide engineering insight into the predictive mechanisms revealed by the ML models.

This work aims to provide a data-driven framework for assessing the residual performance of corroded RC structures by integrating cutting-edge ML techniques with structural knowledge.

Data collection

This study employed a comprehensive dataset compiled from multiple experimental investigations on the bond behavior of corroded reinforced concrete. The initial dataset included 350 data points sourced from seven peer-reviewed studies12,13,14,15,16,17,18, covering various material properties, corrosion levels, and testing configurations. All data were extracted from standardized test methods—primarily pull-out tests, beam-end tests, and direct tension tests—ensuring methodological consistency across the dataset.

To ensure data integrity and suitability for machine learning modeling, a structured data preparation process was conducted. This included cross-verification of numerical consistency, removal of irrational or ambiguous entries, and standardization of units across all input parameters. Data points exhibiting inconsistencies, or values outside reasonable engineering bounds (like high ratio values or non-physical bond behavior) were excluded. Following this cleaning process, the refined dataset consisted of 255 valid entries, which were then used for model training and evaluation.

This rigorous preparation not only enhanced the reliability of the input–output relationships but also ensured that the dataset reflected realistic and physically meaningful bond behavior under corrosion, addressing potential concerns about data precision and reproducibility.

Input parameters

Six independent parameters were selected as input parameters based on their physical significance and demonstrated influence on bond behavior:

-

Concrete compressive strength (MPa): Indicates the strength of the concrete matrix, a key factor affecting bond performance. Stronger concrete generally enhances mechanical interlock and adhesion.

-

Minimum concrete cover (mm): Refers to the shortest distance from the reinforcing bar’s surface to the concrete’s outer surface. Cover thickness influences crack propagation and corrosion ingress.

-

Steel bar diameter (mm): The nominal diameter of the reinforcing steel bar used. Larger diameters are associated with different bond mechanisms due to variations in surface area and confinement effects.

-

Bond length (mm): The embedded length of the steel bar within the concrete. Bond length directly affects the bond capacity and the distribution of stresses along the rebar.

-

Corrosion level (% loss of mass): This critical parameter quantifies the severity of steel deterioration due to corrosion. It is typically calculated as the percentage mass loss of the steel bar relative to its original mass, following cleaning and measurement:

$$Corrosion\;level\left( \% \right) = \frac{{W_{0} - W_{c} }}{{W_{0} }} \times 100$$(1)

Where \(W_{0}\) is the original mass of the rebar, and \(W_{c}\) is the cleaned corroded mass after the removal of rust15.

-

Yield Strength of Steel Bar (MPa): Represents the tensile strength at which the steel bar begins to deform plastically. Higher yield strength can influence bond performance by affecting the stress transfer between steel and concrete.

Output parameters

The model was trained to predict two output parameters representing the bond strength of corroded rebars:

-

Ultimate Bond Strength (UBS, MPa): The peak bond stress recorded during testing. This value reflects the maximum capacity of the concrete–rebar interface before failure.

-

Relative Bond Strength (RBS or Ratio of Bond Strength): Defined as the ratio of the bond strength of a corroded specimen to that of an identical uncorroded specimen under similar test conditions. It can also be referred to as the bond strength ratio or the ratio of bond stress:

$$RBS = \frac{{\tau_{corroded} }}{{\tau_{reference} }}$$(2)

Where \(\tau\) represents bond stress. This dimensionless ratio provides a normalized metric for comparing bond degradation across different corrosion levels and concrete types14,16.

Data sources and range

The dataset was derived from the following experimental studies: Shayanfar et al.12, Chung et al.13, Yalciner et al.14, Coccia et al.15, Ma et al.16, Bidari et al.17, and Khaksefidi et al.18. These studies investigated the effects of various parameters on bond behavior through controlled laboratory testing. Table 1 delineates the characteristics of the dataset employed.

Table 1 presents the input parameters, including Concrete Compressive Strength (CS), Minimum Concrete Cover (Cmin), Diameter of Steel Bar (db), Bond Length (Ld), Corrosion Level (Cw), and Yield Strength of the Steel Bar (YS), as well as the output parameters—Ultimate Bond Strength (UBS) and Relative Bond Strength (RBS). A matrix illustrating the correlations between the inputs and outputs used in the research is shown in Fig. 1, where the shading indicates the intensity of the correlation.

Multi-correlation matrix of the input parameters and outputs.

Figure 1 highlights the critical influence of Cw on both UBS and RBS, with strong negative correlations of − 0.65 and − 0.73, respectively. This confirms the well-documented degradation of bond behavior due to corrosion-induced cracking and disruption of steel–concrete interaction. CS exhibits a moderate positive correlation with UBS (r = 0.36), suggesting that higher concrete strength enhances resistance to pull-out, though its impact on RBS is minimal (r = –0.12). Ld and YS show modest associations with UBS (r = –0.47 and r = 0.35), db and Cmin demonstrate relatively weak correlations. A similar pattern holds for RBS, where only Cw maintains a strong correlation, and all other features display coefficients below ± 0.25. Notably, some inter-feature correlations, such as CS and YS (r = 0.52), and Cmin and YS (r = 0.69), indicate moderate multicollinearity that may affect model interpretability. These linear correlations provide an initial understanding but do not capture the full complexity of feature interactions. Therefore, further nonlinear modeling and interpretability analysis (like SHAP) are necessary to reveal the actual influence of each parameter. Overall, the matrix confirms Cw as the most influential linear predictor, while suggesting the need for advanced modeling tools to explore the subtle, non-linear roles of other features.

All data was digitized and compiled into a unified format. Before model development, the data was normalized using Min–Max scaling to enhance algorithm performance and eliminate unit-based biases across features.

While bond behavior is influenced by factors such as chemical adhesion, friction, and mechanical interlock, the surface treatment of the reinforcing bars was not included as an input feature because all the referenced studies utilized deformed (ribbed) steel bars without any special coatings or surface modifications. Consequently, the bond mechanism evaluated in each study is governed by the naturally occurring surface conditions that arise from corrosion and rib geometry. The omission of surface treatment as a parameter is justified, as there was no variation in this factor throughout the dataset. These experiments emphasized quantifying the bond degradation caused by corrosion under otherwise standard conditions, rendering the dataset homogeneous concerning bar surface treatment12,13,14,15,16,17,18. This aligns with conventional testing standards such as ASTM A615/A615M39, specifying deformed steel bars as the default reinforcement for mechanical anchorage and bond-related evaluations.

Methods

This section presents the methodology employed in this study, including the selection of ML algorithms, evaluation metrics, and the approach used for model interpretability. A multi-output regression framework was adopted to simultaneously predict the Ultimate Bond Strength (UBS) and Relative Bond Strength (RBS), which are influenced by the same set of input features.

Algorithms

Multi-Output is a ML technique employed to address regression challenges in which the objective is to forecast multiple target parameters concurrently. Within conventional regression scenarios, a singular target parameter is typically present, and the objective is to identify a model that most effectively captures the association between the input characteristics and that specific target39. To address the multi-output regression task, several supervised ML algorithms were implemented, namely DT, RF, MLP, SVR, GBoost, and XGBoost. DTs operate by recursively splitting data based on input features to form a tree-like structure for decision-making. RF enhances this approach through ensemble averaging of multiple DTs, improving accuracy and robustness. MLP is a type of artificial neural network composed of multiple layers of interconnected neurons, capable of modeling complex nonlinear relationships. SVR adapts the principles of support vector machines for regression tasks by fitting a function within a specified error margin, with kernel functions enabling flexible handling of nonlinear data. GBoost builds predictive models stage-wise by minimizing errors through gradient descent, while XGBoost extends this technique by incorporating regularization, scalability, and efficient handling of sparse data. These algorithms were selected based on their strong performance in regression problems, adaptability to multi-output tasks, and proven effectiveness in engineering applications40,41,42,43,44,45,46,47,48,49,50,51,52.

Performance evaluation indices

The predictive performance of each model was assessed using four standard regression metrics: the coefficient of determination (R2), root mean squared error (RMSE), mean absolute error (MAE), and mean absolute percentage error (MAPE). These indices are defined as follows:

In these equations, \(y_{i}^{*}\) and \(y_{i}\) represent the predicted and observed values, respectively; \(\overline{y}\) is the mean of the observed values; and \(n\) is the number of samples. Together, these metrics provide a comprehensive evaluation of model accuracy and generalization.

SHAP values for model interpretability

To enhance interpretability of the machine learning models, SHapley Additive exPlanations (SHAP) were employed. SHAP is a model-agnostic approach grounded in cooperative game theory, wherein each feature is assigned an importance value representing its average marginal contribution to the model’s output53,54. The method decomposes predictions into additive contributions from each input feature, enabling transparent explanation of complex model behavior. Mathematically, a prediction \(f_{\left( x \right)}\) is expressed as:

where \(\varphi_{0}\) is the baseline prediction (expected value), and \(\varphi_{i}\) is the SHAP value corresponding to the feature \(x_{i}^{\prime }\)55. These values are computed by averaging the differences in model predictions across all possible feature subsets that include or exclude \(x_{i}^{\prime }\) , as shown below:

wherein |z′| signifies the count of non-zero entries within z′, and z′ ⊆ x′ encompasses all z′ vectors such that the non-zero entries constitute a subset of the non-zero entries present in x′56,57. This approach was applied after model training to identify the most influential features contributing to UBS and RBS, thereby improving the interpretability and transparency of the multi-output regression models. Figure 2 illustrates the operational mechanics of the SHAP values methodology.

Workflow of the SHAP values method.

As demonstrated in Fig. 2, the model’s functionality is explained from training to visualizing the results of SHAP values. Figure 3 elucidates the characteristics of the SHAP values.

SHAP values attributes.

As shown in Fig. 3, various visual representations, including summary plots, feature importance graphs, and mean value distributions, are utilized to illustrate the SHAP values within a dataset. The interpretation of a machine learning model’s output is expressed through SHAP values as the aggregation of the influences of each feature included in a conditional expectation.

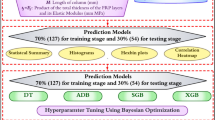

Numerical results

This section presents the outcomes derived from the proposed ML models, following the methodology outlined in the preceding sections. The available dataset was partitioned using an 80:20 split, where 80% of the data was used for training and model development, while the remaining 20% was reserved for independent testing. The performance metrics and visual results discussed herein exclusively pertain to this test subset, enabling an unbiased evaluation of the models’ generalization capabilities.

The MLP architecture was optimized through a parametric study involving various configurations. The best-performing structure comprised four hidden layers, each containing ten neurons. The predictive performance of the six employed ML algorithms—MLP, SVR, DT, RF, GBoost, and XGBoost—is illustrated in Fig. 4, which compares the actual and predicted values for the two target outputs: UBS and RBS.

Comparison of actual and predicted values of ML models (a-MLP, b-SVR, c-DT, d-RF, e-GBoost, f-XGBoost) for two outputs, represented by blue and red bars, represent UBS predictions; green and yellow bars represent RBS predictions.

Figure 4 demonstrates that most models were closely aligned with the actual values, particularly for the majority of test samples. The DT, RF, and GBoost models (Fig. 4c, d, e) showed excellent agreement with the actual data, while the SVR model (Fig. 4b) exhibited the weakest predictive match.

To further assess prediction fidelity, Fig. 5 provides direct comparisons between predicted and observed values from the test set. Each algorithm organizes this comparison:

Actual versus predicted values for UBS and RBS across the six ML models on the testing dataset. Blue dots correspond to UBS, and orange dots correspond to RBS.

Notably, the XGBoost model (Fig. 5f) yielded predictions with near-perfect linear alignment to actual values, demonstrating its robust predictive power. The DT model (Fig. 5c) followed closely in accuracy, while the MLP model (Fig. 5a) showed the highest level of deviation from empirical data.

To analyze the prediction errors, residual plots for all machine learning models are presented in Fig. 6. The left plot shows the residuals for UBS predictions, while the right plot represents those for RBS. In both cases, residuals are defined as the difference between actual and predicted values.

Residual distributions for all ML models: (a) Prediction residuals for UBS, (b) Prediction residuals for RBS.

For UBS (Fig. 6a), the XGBoost model displays the narrowest residual distribution, suggesting the highest predictive precision. In contrast, SVR, MLP, and DT exhibit broader residual spreads, indicating lower accuracy and higher variability. RF and GBoost maintain moderate residual dispersion, but some outliers are noticeable across all models—especially within SVR, MLP, and DT.

For RBS (Fig. 6b), the RF, GBoost, and XGBoost models again show tightly clustered residuals near zero, reinforcing their reliability. SVR and MLP exhibit more widely dispersed residuals and noticeable outliers, indicating inconsistent performance. DT demonstrates average performance, with slightly higher variability than MLP while still maintaining relatively compact clustering.

These findings collectively highlight the robust predictive ability of XGBoost and DT for both target parameters. To quantitatively validate these visual assessments, Table 2 summarizes the performance metrics.

The tabulated results in Table 2 facilitate a comprehensive comparison of model performance based on four key evaluation metrics (R2, MAE, RMSE, and MAPE) for both UBS and RBS. For the UBS prediction, the SVR model displayed the lowest coefficient of determination (R2), indicating the weakest overall fit. The MLP model produced the highest MAE, suggesting substantial inconsistencies between predicted and actual values. GBoost recorded a relatively high RMSE, indicating greater dispersion in its prediction errors. Meanwhile, RF exhibited the highest MAPE, reflecting reduced accuracy in terms of relative percentage error.

In the case of RBS prediction, MLP again demonstrated the poorest performance in terms of R2, confirming its limited ability to capture the underlying patterns. SVR produced the highest MAE, implying less reliable pointwise predictions. GBoost continued to show a comparatively higher RMSE, while RF exhibited the highest MAPE, indicating weaker proportional accuracy. These trends collectively reaffirm the superior predictive capability and stability of the XGBoost and DT models, particularly in managing both target outputs simultaneously.

In summary, although all models reached acceptable performance levels, the XGBoost and DT algorithms excelled across all evaluation metrics for both UBS and RBS. The XGBoost model, in particular, consistently outperformed the others, making it the most reliable and robust candidate for future applications in predicting the bond strength of corroded reinforcement bars.

Discussion

To interpret the inner workings of the trained ML models, SHAP was utilized to quantify the contribution of each input feature to the predicted outcomes. Figure 7 presents the mean absolute SHAP values for both target parameters: (a) UBS and (b) RBS, highlighting the most influential features in the XGBoost model.

Sensitivity analysis related to (a) UBS and (b) RBS.

In Fig. 7a, Cw stands out as the most significant feature influencing UBS, followed by CS and YS. In contrast, Cmin, Ld, and db exert relatively less influence. The high importance of Cw confirms that bond degradation due to corrosion is a key driver of UBS variation. Additionally, the strength characteristics of concrete and steel play a crucial role in overall bond performance.

Figure 7b, which corresponds to RBS, also identifies Cw and CS as the top-ranking features, with YS closely following. Once again, db, Ld, and Cmin show limited relative impact. This consistent trend across both targets reaffirms the significance of material degradation and concrete integrity in governing bond behavior.

Figure 8 further illustrates the detailed distribution of SHAP values across the dataset, offering insights into how variations in each feature influence the model predictions. In each plot, feature impact is displayed along the horizontal axis, while feature values (high or low) are color-coded from red to blue. The vertical axis ranks the features from most to least influential.

The distribution plots of SHAP for UBS (a) and RBS (b).

In Fig. 8a, UBS increases significantly with higher values of CS and YS, as indicated by the dense concentration of red points on the positive SHAP side. Conversely, an increase in Cw results in substantial reductions in UBS, as shown by the abundance of red points on the negative SHAP side. Features like db, Cmin, and Ld exhibit much smaller and more dispersed SHAP values, suggesting limited or case-specific influence.

For RBS in Fig. 8b, a similar pattern emerges. Higher Cw values are associated with negative SHAP values, confirming their detrimental effect on relative bond performance. Meanwhile, greater CS and YS lead to improved RBS. Once again, db, Ld, and Cmin show only modest or inconsistent contributions to the outcome.

Overall, the SHAP analysis confirms that the most critical factors affecting both UBS and RBS are Corrosion Level (Cw), Compressive Strength (CS), and Steel Yield Strength (YS). These findings are consistent with known mechanical and chemical mechanisms underlying bond degradation in corroded reinforced concrete58. Less dominant parameters, such as Minimum Cover (Cmin), Bond Length(Ld), and Bar Diameter (db), exhibit more localized effects but are still relevant in specific configurations.

Conclusion

This study presents a machine learning (ML)-based framework for predicting the bond strength behavior of corroded reinforcing bars embedded in concrete. Both the Ultimate Bond Strength (UBS) and the Relative Bond Strength (RBS), defined as the ratio of the bond strength of corroded to uncorroded specimens, are simultaneously estimated using a multi-output regression approach. A total of six ML algorithms are evaluated and compared, and the SHapley Additive exPlanations (SHAP) method is used to interpret the influence of input features. Based on the results, the following conclusions can be drawn:

-

1.

Among the six ML models implemented (MLP, SVR, DT, RF, GBoost, and XGBoost), the Extreme Gradient Boosting (XGBoost) algorithm provided the most accurate performance in predicting both UBS and RBS. Specifically, it achieved R2 values of 0.977 for UBS and 0.966 for RBS, with mean absolute percentage errors (MAPE) of 9.6% and 7.0%, respectively, on the test dataset.

-

2.

The simultaneous prediction of UBS and RBS using multi-output ML models has proven effective, providing a more comprehensive understanding of bond degradation mechanisms in corroded reinforced concrete.

-

3.

SHAP-based feature importance analysis revealed that Corrosion Level (Cw), Compressive Strength (CS), and Yield Strength (YS) were the most influential parameters affecting both UBS and RBS. Corrosion Level had a consistently negative impact, while Compressive and Yield Strength positively contributed to bond capacity.

-

4.

Secondary factors such as Bar Diameter (db), Minimum Cover (Cmin), and Bond Length (Ld) were found to have a smaller yet still notable influence, especially in UBS prediction. Their interactions with more dominant features require further investigation.

-

5.

Despite promising results, the study acknowledges limitations related to the size and diversity of the dataset. Enhancing the dataset with a wider variety of experimental conditions, such as variations in stirrup configurations, concrete types, and corrosion patterns, could increase the generalizability of the model.

-

6.

From a practical standpoint, the results demonstrate the potential of ML models to serve as efficient alternatives to traditional laboratory testing, providing rapid and cost-effective assessments of bond strength deterioration due to corrosion. These tools could be valuable for structural assessment, durability forecasting, and maintenance planning for aging infrastructure.

-

7.

Future studies should investigate the integration of image-based or sensor-derived corrosion indicators, along with more comprehensive datasets, to create real-time predictive tools suitable for a broader range of structural conditions.

In summary, this work confirms that data-driven ML techniques, particularly XGBoost, can accurately predict bond strength degradation in corroded reinforced concrete members. These findings support the broader adoption of explainable ML models in structural engineering research and practice, especially for durability diagnostics and residual capacity evaluation in corrosion-affected reinforced concrete systems.

Data availability

The data set used during the current study are available from the first or corresponding author on reasonable request.

References

Bond, A. Development of Straight Reinforcing Bars in Tension (aci 408r-03) (American Concrete Institute Committee, 2003).

Okelo, R. & Yuan, R. L. Bond strength of fiber reinforced polymer rebars in normal strength concrete. J. Compos. Constr. 9(3), 203–213 (2005).

Aslani, F. Residual bond between concrete and reinforcing GFRP rebars at elevated temperatures. Proc. Inst. Civ. Eng.-Struct. Build. 172(2), 127–140 (2019).

Al-Sulaimani, G., Kaleemullah, M. & Basunbul, I. Influence of corrosion and cracking on bond behavior and strength of reinforced concrete members. Struct. J. 87(2), 220–231 (1990).

Austin, S., Robins, P. & Pan, Y. Tensile bond testing of concrete repairs. Mater. Struct. 28, 249–259 (1995).

Takewaka, K., Yamaguchi, T. & Maeda, S. Simulation model for deterioration of concrete structures due to chloride attack. J. Adv. Concr. Technol. 1(2), 139–146 (2003).

Sæther, I. Bond deterioration of corroded steel bars in concrete. Struct. Infrastruct. Eng. 7(6), 415–429 (2011).

Lin, H. et al. State-of-the-art review on the bond properties of corroded reinforcing steel bar. Constr. Build. Mater. 213, 216–233 (2019).

Molina, F. J., Alonso, C. & Andrade, C. Cover cracking as a function of rebar corrosion: Part 2—Numerical model. Mater. Struct. 26, 532–548 (1993).

Vidal, T., Castel, A. & François, R. Analyzing crack width to predict corrosion in reinforced concrete. Cem. Concr. Res. 34(1), 165–174 (2004).

Banić, D., Grandić, D. & Bjegović, D. Bond characteristics of corroding reinforcement in concrete beams. In Application of Codes, Design and Regulations: Proceedings of the International Conference held at the University of Dundee, Scotland, UK on 5–7 July 2005 203–210 (Thomas Telford Publishing, 2005).

Shayanfar, M., Ghalehnovi, M. & Safiey, A. Corrosion effects on tension stiffening behavior of reinforced concrete. Comput. Concrete 4(5) (2007).

Chung, L., Kim, J.-H.J. & Yi, S.-T. Bond strength prediction for reinforced concrete members with highly corroded reinforcing bars. Cement Concr. Compos. 30(7), 603–611 (2008).

Yalciner, H., Eren, O. & Sensoy, S. An experimental study on the bond strength between reinforcement bars and concrete as a function of concrete cover, strength and corrosion level. Cem. Concr. Res. 42(5), 643–655 (2012).

Coccia, S., Imperatore, S. & Rinaldi, Z. Influence of corrosion on the bond strength of steel rebars in concrete. Mater. Struct. 49, 537–551 (2016).

Ma, Y., Guo, Z., Wang, L. & Zhang, J. Experimental investigation of corrosion effect on bond behavior between reinforcing bar and concrete. Constr. Build. Mater. 152, 240–249 (2017).

Bidari, O., Singh, B. K. & Maheshwari, R. Effect of corrosion on bond between reinforcement and concrete-an experimental study. Discov. Civ. Eng. 1(1), 67 (2024).

Khaksefidi, S., Ghalehnovi, M. & Rahdar, H. Factors affecting the bond behavior of corroded rebars embedded in ultra-high-performance concrete. In Structures vol. 66 106875 (Elsevier, 2024).

Ghalehnovi, M., Rahdar, H., Ghorbanzadeh, M., Roshan, N. & Ghalehnovi, S. Effect of marble waste powder and silica fume on the bond behavior of corroded reinforcing bar embedded in concrete. J. Mater. Civ. Eng. 35(3), 04022460 (2023).

Khaksefidi, S., Ghalehnovi, M. & Rahdar, H. Bond-slip relationship between deformed rebar and ultra-high-performance concrete with corrosion effect. Case Stud. Constr. Mater. 21, e03585 (2024).

El-Chabib, H. & Nehdi, M. Effect of mixture design parameters on segregation of self-consolidating concrete. ACI Mater. J. 103(5), 374 (2006).

Duan, Z.-H., Kou, S.-C. & Poon, C.-S. Prediction of compressive strength of recycled aggregate concrete using artificial neural networks. Constr. Build. Mater. 40, 1200–1206 (2013).

Köroğlu, M. A. Artificial neural network for predicting the flexural bond strength of FRP bars in concrete. Sci. Eng. Compos. Mater. 26(1), 12–29 (2019).

Zhang, F. et al. Prediction of FRP-concrete interfacial bond strength based on machine learning. Eng. Struct. 274, 115156 (2023).

Pakzad, S. S., Ghalehnovi, M. & Ganjifar, A. A comprehensive comparison of various machine learning algorithms used for predicting the splitting tensile strength of steel fiber-reinforced concrete. Case Stud. Constr. Mater. 20, e03092 (2024).

Najmoddin, A., Etemadfard, H. & Ghalehnovi, M. Multi-output machine learning for predicting the mechanical properties of BFRC. Case Stud. Constr. Mater. 20, e02818 (2024).

Zhu, Y., Taffese, W. Z. & Chen, G. Enhancing FRP-concrete interface bearing capacity prediction with explainable machine learning: A feature engineering approach and SHAP analysis. Eng. Struct. 319, 118831 (2024).

Tuerxunmaimaiti, Y. et al. Predicting fatigue slip and fatigue life of FRP rebar-concrete bonds using tree-based and theory-informed learning models. Int. J. Fatigue 193, 108816 (2025).

Zhang, P.-F., Iqbal, M., Zhang, D., Zhao, X.-L. & Zhao, Q. Bond strength prediction of FRP bars to seawater sea sand concrete based on ensemble learning models. Eng. Struct. 302, 117382 (2024).

Zhao, X., Zhang, P.-F., Zhang, D., Zhao, Q. & Tuerxunmaimaiti, Y. Prediction of interlaminar shear strength retention of FRP bars in marine concrete environments using XGBoost model. J. Build. Eng. 105, 112466 (2025).

Zhang, P.-F. et al. Prediction of bond strength and failure mode of FRP bars embedded in UHPC or UHPSSC utilising extreme gradient boosting technique. Compos. Struct. 346, 118437 (2024).

Zhang, P. F. et al. Natural language processing-based deep transfer learning model across diverse tabular datasets for bond strength prediction of composite bars in concrete. Comput.-Aided Civ. Infrastruct. Eng. 40(7), 917–939 (2025).

Mahmoudian, A., Tajik, N., Taleshi, M. M., Shakiba, M. & Yekrangnia, M. Ensemble machine learning-based approach with genetic algorithm optimization for predicting bond strength and failure mode in concrete-GFRP mat anchorage interface. In Structures vol. 57 105173 (Elsevier, 2023).

Sun, Y. Forecasting ultimate bond strength between ribbed stainless steel bar and concrete using explainable machine learning algorithms. Multidiscip. Model. Mater. Struct. 20(3), 401–416 (2024).

Hu, T., Zhang, H., Khodadadi, N., Taffese, W. Z. & Nanni, A. Enhancing bond strength prediction at UHPC-NC interface: A data-driven approach with augmentation and explainability. Constr. Build. Mater. 451, 138757 (2024).

Hu, T., Zhang, H., Cheng, C., Li, H. & Zhou, J. Explainable machine learning: Compressive strength prediction of FRP-confined concrete column. Mater. Today Commun. 39, 108883 (2024).

Yan, F., Lin, Z., Wang, X., Azarmi, F. & Sobolev, K. Evaluation and prediction of bond strength of GFRP-bar reinforced concrete using artificial neural network optimized with genetic algorithm. Compos. Struct. 161, 441–452 (2017).

Su, M., Zhong, Q., Peng, H. & Li, S. Selected machine learning approaches for predicting the interfacial bond strength between FRPs and concrete. Constr. Build. Mater. 270, 121456 (2021).

Borchani, H., Varando, G., Bielza, C. & Larranaga, P. A survey on multi-output regression. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 5(5), 216–233 (2015).

Bishop, C. M. Neural Networks for Pattern Recognition (Oxford University Press, 1995).

Friedman, J. H. Greedy function approximation: A gradient boosting machine. Ann. Stat. 1189–1232 (2001).

Ghosh, D. & Cabrera, J. Enriched random forest for high dimensional genomic data. IEEE/ACM Trans. Comput. Biol. Bioinf. 19(5), 2817–2828 (2021).

Goodfellow, I. Deep learning (MIT Press, 2016).

Hastie, T. The Elements of Statistical Learning: Data Mining, Inference, and Prediction (Springer, 2009).

Lin, S. D., Chen, L. & Chen, W. Thermal face recognition under different conditions. BMC Bioinform. 22, 1–17 (2021).

Quinlan, J. R. Induction of decision trees. Mach. Learn. 1, 81–106 (1986).

Sandhu, A. K. & Batth, R. S. Software reuse analytics using integrated random forest and gradient boosting machine learning algorithm. Softw. Pract. Exp. 51(4), 735–747 (2021).

Tian, Y., Shi, Y. & Liu, X. Recent advances on support vector machines research. Technol. Econ. Dev. Econ. 18(1), 5–33 (2012).

Wang, K. L. et al. Machine learning to estimate the bond strength of the corroded steel bar-concrete. Struct. Concr. 25(1), 696–715 (2024).

Wang, S., Dong, P. & Tian, Y. A novel method of statistical line loss estimation for distribution feeders based on feeder cluster and modified XGBoost. Energies 10(12), 2067 (2017).

Zhang, F. & O’Donnell, L. J. Support vector regression. In Machine learning 123–140 (Elsevier, 2020).

Zhao, C. et al. BoostTree and BoostForest for ensemble learning. IEEE Trans. Pattern Anal. Mach. Intell. 45(7), 8110–8126 (2022).

Lundberg, S. M. & Lee, S.-I. A unified approach to interpreting model predictions. Adv. Neural Inf. Process. Syst. 30 (2017).

Shapley, L. S. A value for n-person games. (1953).

Doostmohamadi, A., Karamloo, M., Oskouei, A. V., Shakiba, M. & Kheyroddin, A. Enhancement of punching strength in GFRP reinforced single footings by means of handmade GFRP shear bands. Eng. Struct. 262, 114349 (2022).

Mangalathu, S., Hwang, S.-H. & Jeon, J.-S. Failure mode and effects analysis of RC members based on machine-learning-based SHapley Additive exPlanations (SHAP) approach. Eng. Struct. 219, 110927 (2020).

Mangalathu, S., Shin, H., Choi, E. & Jeon, J.-S. Explainable machine learning models for punching shear strength estimation of flat slabs without transverse reinforcement. J. Build. Eng. 39, 102300 (2021).

Syll, A. S. & Kanakubo, T. Impact of corrosion on the bond strength between concrete and rebar: A systematic review. Materials 15(19), 7016 (2022).

Author information

Authors and Affiliations

Contributions

Alireza Hosseinzadeh Kashani: Software, Formal analysis, Data curation, Visualization, Writing—Original Draft. Hossein Etemadfard: Conceptualization, Methodology, Validation, Supervision, Writing—Editing. Mansour Ghalehnovi: Supervision, Project administration.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Kashani, A.H., Ghalehnovi, M. & Etemadfard, H. ML modeling of ultimate and relative bond strength for corroded rebars based on concrete and steel properties. Sci Rep 15, 26830 (2025). https://doi.org/10.1038/s41598-025-09532-8

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-09532-8