Abstract

Powder properties, particularly their morphology (which includes size, shape, surface roughness, etc.), play a critical role in the quality of cold spray additively manufactured materials. A change in feedstock powder morphology can impact flowability and deposition quality, deposition efficiency, and porosity. Image analysis can be used to quantify a powder’s morphology, but performing this analysis with manual visual inspection can be laborious and time-consuming. Alternatively, computer vision techniques have shown promise in automating powder morphology analysis, reducing the work and time required to quantify a powder’s morphology. However, the capabilities of these models are limited to the quality of their training data, which can be equally difficult and expensive to collect and annotate. Thus, this work presents DualSight, a novel multi-stage computer vision framework that improves metallic powder segmentation quality without requiring additional data or model training. With this framework, powder morphology can be extracted more accurately from scanning electron microscope images, enabling a more informed manufacturing process.

Similar content being viewed by others

Introduction

Additive manufacturing techniques such as cold spray (CSAM)1,2, powder bed fusion (PBF)3,4, and powder-based directed energy deposition (DED)5 utilize metallic powder to manufacture parts with complex geometries and unique properties among other qualities. Of these techniques, CSAM is unique, utilizing kinetic energy to plastically deform powder rather than relying on thermal energy. As a result, parts can be manufactured with reduced thermal stress and preservation of material properties, such as maintaining the original phases and microstructure of the powder. To achieve enough kinetic energy, powder is sprayed from the deposition nozzle at high velocities. As a result, powder morphology is crucial to designing, selecting, and optimizing powders for CSAM.

Commercial options to extract powder morphology, such as Microtrac Sync System6, do exist, which use Laser Diffraction (LD) and Dynamic Image Analysis (DIA). However, these characterization approaches have certain limitations concerning an automated system for characterizing powder morphology. First, many of these systems are not open-source. As a result, the outputs are limited to the predefined parameters set by the manufacturer. As the field of analyzing powder morphology grows and develops, the ability to compute and extract additional metrics is particularly useful, especially in research settings. Additionally, advanced characterization, such as identifying satellites or agglomerations and comparing surface textures, can be quite difficult with this system.

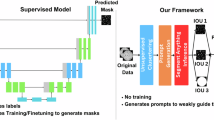

Alternatively, image analysis can be used to quantify a powder’s morphology with much higher precision, but it is time-consuming and laborious to do by hand. Computer vision can automate this process, reducing the cost and time required to analyze a powder7,8,9. However, computer vision models are limited to the quality of their training data, which can be difficult and expensive to collect and annotate, resulting in imprecise annotations. For example, as shown in Fig. 1A, the particle segmentations (in blue) frequently have long, straight edges that under-segment the boundary of their respective particle. Thus, this work presents DualSight, a novel multi-stage framework that enhances computer vision model performance, as shown in Fig. 1B, without requiring any additional data or model training.

Improved segmentation quality through DualSight enabling enhanced calculation of powder morphology.

Using foundational models like the SAM (Segment Anything Model), outputs from models such as YOLO (You Only Look Once)10, Mask R-CNN (Mask Region-Based Convolutional Neural Network)11, or U-Net (Unit Conversion Network)12 can be processed and refined to extract significantly more precise shape data. YOLOv8 outputs, as shown in Fig. 1A, were used as a starting point. These results often correctly identified a particle’s location and general size but lacked the precision to extract morphological characteristics accurately. DualSight processes these outputs to be fed into SAM, resulting in significantly more accurate segmentations.

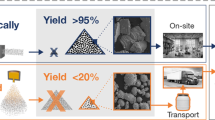

In addition to developing a high-performance instance segmentation framework, this project aims to achieve generalizability and accessibility. Training a model to segment a specific powder does offer benefits. However, the model might perform differently when using a powder with a different size, shape, or texture8. Thus, models in this study were trained on a diverse dataset containing 13 powder types across five different image magnifications. Including this variety in the training data should help the models generalize across different powder types, even those previously unseen. Additionally, as computer vision techniques have advanced in recent years, the requirement for data and computational resources has also increased. For example, SAM was trained using 11 million images and 17,408 GPU hours13. DualSight was developed to overcome these limitations. Thus, the methodologies presented here can be utilized on a personal laptop, requiring no advanced GPU clusters. Additionally, in support of improving accessible solutions, particularly for powder analysis, the dataset used throughout this study has been made publicly available. This dataset may serve as a benchmark moving forward to test the performance, generalizability, and robustness of computer vision techniques for segmenting powder particles.

Contributions

The contributions of this work are as follows:

-

1.

Developed DualSight, a novel framework to improve segmentation accuracy without requiring additional data or training.

-

2.

Demonstrated DualSight’s ability to automatically extract morphology across various shapes, sizes, and magnifications.

-

3.

Highlighted DualSight’s lightweight nature, using a personal laptop without any additional hardware or GPU clusters.

-

4.

Provided open-access to one of the largest datasets of annotated powder, including 13 powder types and five magnifications, to serve as a benchmark, in addition to all code required to reproduce results: https://github.com/sprice134/DualSight.

Results

This work builds on the performance of two models, YOLOv814 and SAM13, and capitalizes on their unique abilities and addresses their limitations. Thus, this work presents DualSight, a novel multi-stage framework that integrates the two, yielding higher-quality segmentations in this experiment than either model produced individually. To evaluate performance, three metrics were chosen: intersection-over-union (IoU), precision, and recall. These results were collected using a dataset of 132 images, broken into a training set (79 images), a validation set (33 images), and a held-out test set (20 images).

Challenges with YOLOv8 and SAM model performance

YOLOv8 and SAM utilize very distinct architectures to segment images. YOLOv8 is built on a CSPDarknet53 backbone that frequently must be fine-tuned to a specific use case for maximum performance14. By contrast, SAM is a foundational model that has been trained once on a vast dataset and requires no additional training13. As a result, segmentations made by SAM are precise but can lack an understanding of the desired parameter space.

Due to these distinct architectures and techniques, each model has different strengths and weaknesses, which the presented framework, DualSight, addresses. Specifically, SAM generates extremely crisp segmentations, as shown in Fig. 2B, E, and H. However, it lacks a fundamental understanding of the nature of a particle, limiting its ability to accurately detect its edges. For example, as highlighted in Fig. 2B, SAM is particularly sensitive to shadows, identifying a single particle as multiple. In contrast, as shown in Fig. 2A, YOLOv8 correctly identifies the highlighted particle as a single particle but does not have the same level of precision as SAM. Similarly, SAM identifies small deformations on particles’ surfaces (known as satellites) as independent objects, as shown in Fig. 2E. Due to its fine-tuning, YOLOv8 learned these should be segmented together and correctly identifies this as a single particle but still creates a less precise segmentation, as shown in Fig. 2D. While some applications exist that require treating satellites independently8, in most situations, these satellites should be treated as a part of their parent particle. Occasionally, YOLOv8 segmentations are particularly imprecise, as shown in Fig. 2G, labeling the boundary of a particle as a series of jagged edges instead of a fluid curve. On the same particle, SAM again creates a much more precise boundary, but misidentified this particle as two particles, as shown in Fig. 2H.

Visualizing performance of three distinct computer vision techniques for particle segmentation. (A), (D) and (G) (left column) highlight YOLOv8’s poor segmentation on the perimeter of particles. (B), (E), and (H) (center column) highlight SAM’s sensitivity to shadows and satellites, incorrectly segmenting single particles as multiple. (C), (F), and (I) (right column) highlight our approach, DualSight, with improved perimeter segmentation accuracy of YOLOv8 outputs and reduced sensitivity to shadows and satellites compared to SAM.

Additionally, SAM is a semantic segmentation model that identifies and segments every component of an image, even the background. These annotations are semantically ambiguous, meaning that there is no way of determining if it is a particle, a component of a particle, or not a particle. As a result, additional steps are required to filter the outputs from SAM to remove segmentations of the background. These techniques have proven useful in specific applications, but often require fine-tuning to their parameter space, filtering based on shape, color, or brightness thresholds15. This work is designed to be a generalizable tool that can be implemented across various powder shapes, sizes, and colors. Additionally, the brightness or contrast of an SEM may be inconsistent between different machines or even different users on the same machine. With these considerations, standardized thresholding techniques cannot be relied on, requiring the final solution to be able to distinguish particles from their surroundings.

Improving model performance with DualSight framework

Using YOLOv8 and SAM for particle segmentation revealed four key challenges that must be addressed for a successful model.

-

The model must be robust enough to handle shadows and small variations of light.

-

The model must have the domain knowledge to know that satellites are a part of the particle instead of a separate entity.

-

The model must be able to create precise segmentations that accurately fit the particle border.

-

The model must be an instance segmentation model, only identifying particles and not their surroundings

Thus, DualSight joins the domain knowledge of YOLOv8 gained from fine-tuning with the precision of SAM to produce high-quality segmentations, addressing all four challenges.

As shown in Fig. 2C, DualSight produced a single particle annotation, unaffected by the shadow, as SAM was, and significantly more accurate than the initial YOLOv8 segmentation. Similarly, as shown in Fig. 2F, DualSight accurately identified a singular particle containing the larger parent particle as well as its conjoined satellite. Additionally, as shown in Fig. 2I, in cases where YOLOv8 struggled with precision, DualSight produces a much cleaner and more accurate segmentation. Lastly, the annotations produced by DualSight are distinct instance segmentations, requiring no additional processing to differentiate from the background or environment. Thus, by using DualSight to join the strengths of YOLOv8 and SAM, higher-quality annotations can be produced, leveraging the domain knowledge of YOLOv8 with the improved accuracy of SAM.

Quantitative comparison of performance improvement

In pursuit of addressing the limitations of YOLOv8 and SAM, DualSight showed improvement across all three performance metrics. Evaluated on a held-out test set of 20 images containing 1,520 unique particles, as shown in Table 1, DualSight increased the mean IoU by 0.059, mean precision by 0.005, and mean recall by 0.060 for the YOLOv8 model, in addition to raising the minimum and maximum value for all three metrics. Compared to SAM, DualSight achieved a mean IoU improvement of 0.268, a mean precision improvement of 0.049, and a mean recall improvement of 0.268. These results highlight how, without any additional data or model training, DualSight improved segmentation performance across all three recorded metrics on the held-out test set compared to YOLOv8. Additionally, by providing domain knowledge from an initial model, DualSight was able to far exceed SAM’s performance on its own.

These results indicate an average improvement in performance. A more detailed analysis further highlights the robustness of these improvements. As shown in Fig. 3, DualSight resulted in a consistent average increase in performance. Of the 20 images in the held-out test set, DualSight scored a higher IoU score than YOLOv8 on all 20, ranging from 0.011 to 0.155. Considering that IoU has a maximum of 1.0, a range from 0.011 to 0.155 is quite large. This indicates that the degree of improvement DualSight offers may be dependent on the powder, morphology, and magnification. Despite this variable degree of impact, some form of improvement can still be expected. Additional information regarding direct image-to-image comparison of IoU, precision, and recall can be found in Supplementary Materials A.

Comparison of IoU scores from raw YOLOv8 (blue) and DualSight (red) highlighting the consistent improvement in segmentation accuracy across the entire held-out test set. For more details, refer to Supplementary Materials A.

Evaluating model generalizability

DualSight was designed to be an accessible framework, providing high-quality segmentation capabilities without requiring a large computer or a complex model. As a result, the smallest YOLOv8 model (nano) was selected from the suite of available YOLOv8 models (nano, small, medium, large, x-large). By using the smallest model, training can be completed faster with fewer resources, requiring less than 2 hours to train on a personal laptop and less than 15 minutes on a GPU. Furthermore, inference can be completed approximately 5 times faster on the YOLOv8 Nano than on the much larger YOLOv8 X-Large. However, a smaller model with fewer parameters, while faster, may not perform with as high accuracy16. To ensure the improvements presented here were not a result of using a model that was too small or dependent on a specific architecture, YOLOv8 X-Large and Mask R-CNN models were also evaluated.

For this experiment, paired t-tests were conducted to calculate the mean IoU improvement, as well as the statistical significance of this improvement, as shown in Table 2. Comparing each model’s IoU scores individually, before and after DualSight refinement, YOLOv8 Nano performed the best initially, scoring a mean IoU of 0.778, whereas YOLOv8 X-Large scored 0.749 and Mask R-CNN scored 0.761. After refinement with DualSight, YOLOv8 Nano and X-Large both had an average increase of 0.069, and Mask R-CNN had an increase of 0.102. Each model’s improvement had a p-score less than 0.0001, with 95% confidence intervals at 0.052–0.087, 0.053–0.087, and 0.085–0.121 for YOLOv8 Nano, X-Large, and Mask R-CNN, respectively.

Interpreting these results, DualSight offers statistically significant improvements for segmentation accuracy that exceed increasing parameter size or switching model architecture. By enabling high-accuracy segmentations with small models, DualSight can be applied to a broader set of use cases. For example, in cases of edge computing17, extending to a significantly larger model might not be possible. Alternatively, in cases where there is limited data, increasing the number of parameters can decrease performance, resulting in a model overfitting the dataset and losing its ability to generalize to unseen data. In this case, DualSight offers the benefits of a small model to learn the domain, and the precision of a larger model. Alternatively, when resources or data are not an issue, this framework can still be applied to large models to yield even further improvements.

Applications of DualSight: performance metrics in context

Throughout this study, intersection-over-union (IoU), precision, and recall were used to evaluate performance, as is common in the field of computer vision. However, these metrics can be challenging to interpret and contextualize from a morphological perspective. Therefore, the performance impact of DualSight was also measured in the context of specific morphological characteristics. These characteristics include area, convex area, eccentricity, equivalent diameter, feret diameter, major axis length, minor axis length, perimeter, and solidity. To assess the impact of using DualSight to predict these characteristics, the mean absolute percent error (MAPE) was measured for each particle. A decrease in MAPE indicates a smaller error, and therefore closer prediction to the ground truth for that characteristic.

Evaluated on YOLOv8 Nano, X-Large, and Mask R-CNN, as shown in Table 3, these results demonstrate a consistent improvement. Specifically, in the 27 experiments (nine characteristics on three models each), the refined segmentation always had a reduced MAPE score compared to the initial segmentation. Bulk morphological characteristics such as area and convex area had the largest improvement, with an average MAPE score decreasing from 18.24% to 12.20% (area) and 20.26% to 14.08% (convex area). While more fine-grained metrics had a smaller effect, such as solidity decreasing from an average of 3.88% to 3.14% and perimeter decreasing from 14.10% to 10.09%, they still have a reduced error. This highlights DualSight’s ability to improve both large-scale and small-scale morphological characteristics, leading to improved segmentations.

These results confirm that optimizing traditional computer vision metrics, such as IoU, precision, and recall, yields improved predictions for morphological characteristics as well. Additionally, they demonstrate that DualSight is capable of producing high-quality segmentations of particles across numerous materials and magnifications. For example, major axis length, minor axis length, equivalent diameter, perimeter, and solidity all scored a MAPE less than 10%, and area, convex area, and eccentricity were close, scoring 11.38, 13.14, and 11.35%, respectively, for YOLOv8 Nano.

DualSight limitations: overlapping objects

When analyzing these results, an important distinction must be made between identification accuracy and segmentation accuracy. DualSight’s ability to identify particles and distinguish them from the background was nearly perfect, as shown in Figs. 1 and 2C, F, and I. Furthermore, by aggregating every DualSight prediction into a single mask and evaluating each pixel to see if it was correctly identified as a particle or non-particle, DualSight scored an IoU of 0.960, a precision of 0.973 and a recall of 0.987. These results are detailed further in Supplemental Materials C. This increase in performance when evaluating at a pixel level after aggregating every particle together hints at DualSight’s key limitation. When particles overlap, it will correctly identify the particles with a high level of accuracy, but may struggle to distinguish where one particle ends and the other begins. In support of this, the highest measured IoU of 0.929 was recorded on an image with easily distinguishable particles, as shown in Fig. 4A. The lowest recorded IoU score of 0.592 was recorded on an image with a high amount of overlap that was much harder to distinguish particle boundaries, even to the human eye, as shown in Fig. 4B. It is also important to note that these experiments were conducted exclusively on powder particles, and further testing is required to fully assess this framework’s generalizability to new domains.

Images with best and worst measured IoU scores from DualSight.

Discussion

Particle morphology is a crucial factor in the performance of powder-based AM processes such as CSAM. This morphology, including size, shape, and surface roughness characteristics, is heavily influenced by the production process utilized and varies greatly between commonly used production methods. These powder production methods include gas and water atomization, melting and crushing, sintering and crushing, milling, mechanical alloying, spray drying, calcination, and cladding techniques. This makes the selection or identification of the powder production methods essential to effectively understand the effects of powder morphology on the deposition process. Once a powder is selected, its morphological characteristics impact many components of the CSAM process. A particle’s in-flight aerodynamics, drag forces, heat fluxes, and motion dynamics within a gas stream are all dependent on morphology. Morphology directly impacts the resultant microstructure and mechanical properties of the deposited material. For example, properties such as tensile strength, ductility, hardness, wear resistance, adhesion strength, and toughness are all impacted by morphology. Additionally, the flowability of feedstock powder within the feeding mechanisms of the cold spray process is significantly influenced by the morphology18. Spherical or round-shaped particles are typically selected for use in cold spray due to their superior flowability, predictability/controllability, and deformation characteristics when compared to similarly sized irregularly shaped powders18,19. However, irregularly shaped particles offer advantages over spherically shaped particles as well, such as a higher critical and impact velocity and an increased deposition efficiency18,19. For example, Wong et al. and Ning et al. both reported higher in-flight particle velocities and heterogenous impact velocities when depositing irregularly shaped powders due to the larger specific surface areas20,21. Overall, spherical morphologies tend to provide improved coating-to-substrate adhesion, while irregular morphologies affect the stress distribution and can cause non-uniform contact at the substrate interface18. These powder morphology-dependent factors directly impact the resultant microstructure and mechanical properties of cold spray deposits, including properties such as tensile strength, ductility, hardness, wear resistance, adhesion strength, and toughness.

As a result, a thorough accounting of feedstock powder’s morphology is essential for tailoring the cold spray process to achieve desired deposit characteristics for application-specific use cases. To quickly extract these characteristics, computer vision provides a viable, high-precision analysis technique. Results from this work highlight DualSight’s ability to improve the segmentation accuracy of commonly used models without requiring additional data or model training. Analyzing performance scores from the raw YOLOv8 model and DualSight reveals that DualSight had a substantially larger improvement in recall than precision. Specifically, on the held-out test set, using DualSight resulted in a 12-times larger improvement for recall than precision. This difference is indicative of the particular challenges addressed by DualSight. For example, YOLOv8 had a tendency to under-segment particles, leaving parts of the borders unidentified. As a result, there was more room for improvement in recall, leading to the increase from 0.799 to 0.859. In contrast, YOLOv8 rarely over-segmented particles, resulting in a high precision score. Thus, when refining segmentations with DualSight, while precision did improve, it only improved from 0.922 to 0.926. As a result, IoU presents a more comprehensive metric and highlights these improvements, increasing from 0.757 to 0.816.

Powder particles exist in a variety of shapes, sizes, and textures, including large and small particles, particles with and without satellites, spherical and jagged particles, irregularly shaped particles, and textured particles, as shown in Fig. 5. When training a model, these variations are quite important to consider. If trained on a single powder or a small subset of powders, it will likely struggle to accurately segment the boundaries of unseen powders with different morphologies8. As a result, models in this study were trained on thirteen morphologies across five different magnifications, ensuring that DualSight has high accuracy and can generalize to unseen powders.

A diverse set of powder morphologies included in training and evaluation.

When using computer vision to analyze and detect powder particles, nondeterminism has been found to be an issue22 impacting the reproducibility of results. Specifically, two identical model architectures trained on the same machine using the same dataset can yield drastically different results. Here, the YOLOv8 was configured to be deterministic, consistently yielding identical results. However, two sources of nondeterminism are present in the DualSight framework. Mainly, a pseudo-random sub-sampling process is implemented to improve run-time. Additionally, SAM uses a nondeterministic architecture13. Despite these sources, minimal changes were noted between different runs, indicating a negligible impact of nondeterminism.

Results presented in this study highlight DualSight’s strong capabilities to extract morphological characteristics from SEM micrographs. However, they also highlight the limitations of computer vision for segmenting overlapping particles. While still able to identify particles and distinguish them from the surrounding background, these models particularly struggle to separate particles from each other when they overlap. However, the overlapping particles that present an issue to these models are also challenging for humans to distinguish, resulting in contradictory segmentations when annotated by multiple individuals. As a result, increasing the size of training data and developing a more accurate model may yield higher performance scores but will likely not fully solve this problem, especially for irregular powder morphologies.

Methods

Segment anything model (SAM) inputs

As a foundational model, SAM has two main functionalities: semantic and instance segmentation. Semantic segmentation can be performed automatically but is limited to the underlying training of SAM and is difficult to tailor to specific use cases. Alternatively, instance segmentation can be performed when paired with user-prompting. However, this requires specific prompts to instruct SAM approximately what object should be annotated. For instance segmentation, SAM accepts prompts in multiple formats: inclusion points, exclusion points, and bounding boxes. To make a preliminary annotation, SAM requires at least one inclusion point or a bounding box. This annotation can then be iteratively refined by introducing additional inclusion points, a bounding box (if not already included), and exclusion points.

Primary model predictions

DualSight utilizes a multi-stage approach in which a lightweight model is tuned to learn the specific parameter space. These predictions are then processed and fed into SAM to be refined. The objective of this study was to develop a high-performing model that could be used with minimal hardware requirements to be as accessible as possible. In pursuit of this, the YOLOv8 Nano segmentation model from Ultralytics was selected. This model required six gigabytes of memory for training and two gigabytes of memory for inference and was trained on a personal laptop in less than two hours.

Since the outputs from the primary model are refined and improved in the next stage, they do not need to be perfect. For these purposes, the YOLOv8 Nano performed well enough to feed the SAM model. However, in more difficult applications where a more complex model may be necessary, the primary model can be easily replaced. This framework was designed to be ambiguous to the architecture or specifications of the primary model. As long as the model is a segmentation model and produces an output that can be converted into polygons or a binary mask, it can easily be inserted into this framework.

Transforming model outputs into inputs

As a foundational model trained on a vast dataset, SAM is the state-of-the-art for instance segmentation and is capable of generating highly precise segmentations. However, requiring prompting makes it challenging to use in an automated system. Thus, an additional model must be used to automatically make initial predictions that may guide SAM. Once the primary model makes an initial prediction, they are processed and fed into the secondary model (SAM) to be refined. Intelligently processing these predictions into inputs to SAM for improved segmentation accuracy is DualSight’s primary contribution.

To accomplish this, initial predictions must be made by the primary model, identifying the approximate location, shape, and size of the desired particle, as shown in Fig. 6B. This prediction is then stored as a binary mask. This mask is then fed through an optimization algorithm, which will extract a few key points, referred to as points of interest (POIs), as shown in Fig. 6C. The POIs are then fed into SAM as prompts, resulting in higher-quality segmentations while still remaining automated, as shown in Fig. 6D, and described in Algorithm 1. When selecting POIs, the primary objective is to identify a few key points that represent a particle’s shape. Thus, the optimization algorithm, as defined in Algorithm 1 searches for the set of points with the largest collective distance from each other. By maximizing distance, these points are most likely to represent the full particle, especially in cases of abnormal shapes. For each particle, it was identified that three POIs were enough to encapsulate a particle’s shape for both simple and complex morphologies, as shown in Fig. 7.

Step-by-step breakdown of DualSight (A) taking an unlabeled particle, (B) creating a preliminary segmentation (with YOLOv8 or Mask R-CNN), (C) selecting optimal points-of-interest (POIs) from the initial segmentation, and (D) obtaining a refined segmentation from SAM.

Visualizing coverage of particle shape using three POIs (points of interest) on various shapes.

This approach of sampling a series of points and selecting POIs as those with the largest distance worked quite well for images with no overlapping particles. However, this is not the case in many SEM images. By maximizing distance, these POIs were almost always on the edge of a particle. In cases where particles were overlapping, POIs located on the boundary between two particles had a tendency to result in SAM segmenting the two particles as one, even if the primary model detected them as distinct. To remedy this, the selection process was refined in two key ways. First, a buffer zone was introduced, sampling points from the inner 95% of a particle instead of the entire particle, as shown in Fig. 6C. As a result, POIs located on the boundary of two overlapping particles became much less frequent. Second, in addition to prompting SAM with the POIs, SAM was also constrained by a bounding box. This bounding box was similar to the bounding box of the primary model’s annotation but slightly expanded to account for missed regions. By adding the box as a constraint, segmentation precision can still be improved with SAM, but final annotations remain closer to the domain knowledge learned by fine-tuning.

DualSight Refinement Algorithm

Exhaustively searching all points for POI selection, as described in Algorithm 1, can be quite computationally heavy, especially when particles represent a large portion of an image. Specifically, this algorithm has a run time of \(O\left( \left( {\begin{array}{c}S\\ k\end{array}}\right) \left( {\begin{array}{c}k\\ 2\end{array}}\right) \right)\), where S is the number of available points for selection, and k is the number of POIs to be selected. Thus, this approach leverages random sampling to search for POIs from a much smaller set of points than the exhaustive list while still representing the entire shape. In this work, 50 points were randomly sampled for each particle, resulting in a run time of \(O\left( \left( {\begin{array}{c}50\\ 3\end{array}}\right) \left( {\begin{array}{c}3\\ 2\end{array}}\right) \right)\), or 58,800 combinations. When computed in parallel, these computations were found to have a negligible impact on run time. However, if used in other applications, increasing or decreasing the number of sampled points or final POIs may improve results, but there is a direct link between increasing resolution and increasing run-time.

Evaluating model performance

To evaluate model performance, three metrics commonly used, for instance segmentation, were utilized: intersection-over-union, precision, and recall23,24,25. While similar, each of these metrics targets a specific aspect of performance. IoU measures the overlap between the predicted segmentation mask and the ground truth, calculated as a ratio from 0 to 1. Alternatively, precision calculates the percentage of a predicted particle’s mask that is correct. In contrast, recall calculates what percentage of a particle’s ground truth was correctly predicted. As a result, IoU is a holistic measure, whereas precision is a measure of positive prediction accuracy, and recall is a measure of the proportion of actual positives correctly identified. The combination of these metrics enables a broad comparison of each model’s overall performance.

Configuring DualSight for alternative applications

DualSight is a general framework designed to improve the quality of segmentations from a computer vision model. Here, it is utilized specifically for powder segmentation. However, this framework is data-ambiguous. By re-training the primary model on a different dataset, this framework could easily be utilized for alternative tasks. Additionally, while YOLOv8 was selected in this case, this is not a requirement. The key contribution of this work is how to process a model’s outputs so that they can be fed into SAM. Alternative models, such as a U-Net or Mask R-CNN, could be implemented as well. Ultimately, any model that produces binary masks or similar outputs that can be converted into binary masks can be utilized with this framework.

Conclusion

This work presents a novel multi-stage framework for refining powder particle segmentations without requiring any additional data or training. By leveraging a foundational model as a secondary step, powder particles were annotated with consistently improved IoU, precision, and recall, enabling a more accurate extraction of powder morphology from SEM images in this experiment. Furthermore, high-quality image analysis of powders can be performed automatically, enabling improved characterization to facilitate additive manufacturing processes such as CSAM. The work presented here was specifically designed to be accessible. In pursuit of this, results here were collected using YOLOv8 Nano as a preliminary model, the smallest of YOLOv8 models. Using DualSight, results were found to be comparable when using either YOLOv8 Nano or X-Large as a preliminary step, despite the difference in computational resources required. Lastly, this work provides open access to one of the largest datasets of annotated powders to serve as a benchmark moving forward, as well as the code required to implement DualSight.

Data availability

Necessary data is provided within the manuscript, supplementary materials, and https://github.com/sprice134/DualSight.

References

Gärtner, F., Stoltenhoff, T., Schmidt, T. & Kreye, H. The cold spray process and its potential for industrial applications. J. Therm. Spray Technol. 15, 223–232 (2006).

Moridi, A., Hassani-Gangaraj, S. M., Guagliano, M. & Dao, M. Cold spray coating: Review of material systems and future perspectives. Surf. Eng. 30, 369–395 (2014).

King, W. E. et al. Laser powder bed fusion additive manufacturing of metals; physics, computational, and materials challenges. Appl. Phys. Rev. 2, 041304 (2015).

Sun, S., Brandt, M. & Easton, M. Powder bed fusion processes: An overview. In Laser Additive Manufacturing 55–77 (2017).

Svetlizky, D. et al. Laser-based directed energy deposition of advanced materials. Mater. Sci. Eng. A 840, 142967 (2022).

Haverland, R., Hendricks, D. & Knisei, V. Microtrac particle-size analyzer: An alternative particle. Size determination method for sediment and soils. Science 138 (1984).

Cohn, R. et al. Instance segmentation for direct measurements of satellites in metal powders and automated microstructural characterization from image data. JOM 73, 2159–2172 (2021).

Price, S. E., Gleason, M. A., Cote, D. L. & Neamtu, R. Automated and refined application of convolutional neural network modeling to metallic powder particle satellite detection. Integr. Mater. Manuf. Innov. 10, 661–676 (2021).

Rettenberger, L. et al. Uncertainty-aware particle segmentation for electron microscopy at varied length scales. npj Comput. Mater. 10, 124 (2024).

Redmon, J., Divvala, S., Girshick, R. & Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 779–788 (2016).

He, K., Gkioxari, G., Dollár, P. & Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision 2961–2969 (2017).

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015: 18th International Conference, Munich, Germany, October 5–9, 2015, Proceedings, Part III 18 234–241 (Springer, 2015).

Kirillov, A. et al. Segment anything. In Proceedings of the IEEE/CVF International Conference on Computer Vision 4015–4026 (2023).

Ultralytics. Yolov8 architecture explained: Exploring the yolov8 architecture. Accessed 07 July 2024 (2023).

Yamagiwa, H. et al. Zero-shot edge detection with scesame: Spectral clustering-based ensemble for segment anything model estimation. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision 541–551 (2024).

Zhao, W. X. et al. A survey of large language models. arXiv preprint arXiv:2303.18223 (2023).

Cob-Parro, A. C., Losada-Gutiérrez, C., Marrón-Romera, M., Gardel-Vicente, A. & Bravo-Muñoz, I. Smart video surveillance system based on edge computing. Sensors 21, 2958 (2021).

Nouri, A. & Sola, A. Powder morphology in thermal spraying. J. Adv. Manuf. Process. 1, e10020 (2019).

Palodhi, L., Das, B. & Singh, H. Effect of particle size and morphology on critical velocity and deformation behavior in cold spraying. J. Mater. Eng. Perform. 30, 8276–8288 (2021).

Ning, X.-J., Jang, J.-H. & Kim, H.-J. The effects of powder properties on in-flight particle velocity and deposition process during low pressure cold spray process. Appl. Surf. Sci. 253, 7449–7455 (2007).

Wong, W. et al. Effect of particle morphology and size distribution on cold-sprayed pure titanium coatings. J. Therm. Spray Technol. 22, 1140–1153 (2013).

Price, S. & Neamtu, R. Identifying, evaluating, and addressing nondeterminism in mask R-CNNs. In International Conference on Pattern Recognition and Artificial Intelligence 3–14 (Springer, 2022).

Tian, Y., Su, D., Lauria, S. & Liu, X. Recent advances on loss functions in deep learning for computer vision. Neurocomputing 497, 129–158 (2022).

Pont-Tuset, J. & Marques, F. Supervised evaluation of image segmentation and object proposal techniques. IEEE Trans. Pattern Anal. Mach. Intell. 38, 1465–1478 (2015).

Voigtlaender, P., Luiten, J., Torr, P. H. & Leibe, B. Siam R-CNN: Visual tracking by re-detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 6578–6588 (2020).

Author information

Authors and Affiliations

Contributions

Software Development: S.P.; Model Training & Inference: S.P.; SEM Data Acquisition and analysis: K.J., K.T.; Project Supervision: R.N., K.T., D.L.C.; Initial Manuscript Draft: S.P., K.J.; Manuscript Revisions: All Authors. All authors have approved this manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Price, S., Judd, K., Tsaknopoulos, K. et al. DualSight: multi-stage instance segmentation framework for improved precision. Sci Rep 15, 27521 (2025). https://doi.org/10.1038/s41598-025-09642-3

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-09642-3