Abstract

Mild cognitive impairment (MCI) and dementia pose significant health challenges in aging societies, emphasizing the need for accessible, cost-effective, and noninvasive diagnostic tools. Electroencephalography (EEG) is a promising biomarker, but traditional systems are limited by size, cost, and the need for skilled technicians. This study proposes a deep-learning-based approach using data from a portable EEG device to distinguish healthy volunteers (HVs) from patients with dementia-related conditions. We analyzed EEG data from 233 participants, including 119 HVs and 114 patients, and transformed the signals into frequency-domain features using a short-time Fourier transform. A customized transformer-based model was trained and evaluated using 10-fold cross-validation and a holdout dataset. In the cross-validation, the model achieved an area under the curve (AUC) of 0.872 and a balanced accuracy (bACC) of 80.8% in distinguishing HVs from patients. Subgroup analyses were conducted for HVs versus patients stratified by dementia severity and by clinical diagnosis, yielding AUCs ranging from 0.812 to 0.898 and bACCs from 74.9 to 86.4%. Comparable results were obtained in the holdout dataset. These findings suggest that portable EEG data combined with deep learning may serve as a practical tool for the early detection and classification of dementia-related conditions.


Introduction

Dementia affects more than 55 million people worldwide, with the associated costs estimated at $1.3 trillion annually. The number of patients and related expenses are expected to continue increasing1. In Japan, as of 2020, approximately 6.02 million people have dementia, and the prevalence of mild cognitive impairment (MCI) among those aged ≥ 65 is 13%, affecting approximately 4.71 million individuals2. Establishing a regional medical care system for dementia is essential; however, Japan has only approximately 2,000 dementia specialists3. Similar problems have been reported in the United States4, suggesting that this is a global issue. Consequently, in areas with a shortage of specialists, the early diagnosis of dementia is challenging, leading to delays in diagnosis and therapeutic intervention.

The early diagnosis of dementia at a mild stage allows pharmacological and non-pharmacological interventions, potentially slowing disease progression5. Additionally, practical support for future planning and the establishment of a living environment that maintains an individual’s quality of life can reduce the burden of caregiving6.

In addition, an accurate differential diagnosis of dementia diseases is essential to optimize clinical care: for instance, by carefully avoiding medications with anticholinergic properties and promoting aggressive identification of therapeutic targets, such as orthostatic hypotension and constipation7. The differential diagnosis of dementia largely depends on clinical features, neuropsychological profiles, and various biomarkers, including structural magnetic resonance imaging (MRI), single-photon emission computed tomography (SPECT), amyloid positron emission tomography (PET), cerebrospinal fluid (CSF) markers, meta-iodobenzylguanidine (MIBG) myocardial scintigraphy, dopamine transporter (DAT) uptake in the basal ganglia, and polysomnography (PSG)-based confirmation of rapid eye movement (REM) sleep without atonia8. However, these investigations can be invasive, costly, require specialized skills, and are typically conducted at a limited number of institutions equipped with cutting-edge technology9.

Owing to the global increase in the number of individuals with dementia1, a strong demand exists for the development of noninvasive, inexpensive, and widely accessible diagnostic methods across numerous facilities.

Electroencephalography (EEG) is a diagnostic method that can address these needs. EEG is widely available, noninvasive, and inexpensive, making it a valuable tool in clinical practice, especially when compared to the high-precision biomarkers mentioned earlier. Because brain activity primarily involves neuronal electrical activity, EEG and other electrophysiological approaches are considered highly reflective of brain function, making EEG a promising biomarker for dementia management10.

EEG has recently been used as a promising tool to screen for and assist in the diagnosis of dementia11, and neurophysiological findings associated with neurodegenerative diseases have accumulated10. Recent studies have reported that the application of advanced machine learning12,13 and deep learning techniques11,14 to clinical EEG data significantly improves the accuracy of dementia identification.

We previously reported that deep learning models applied to clinical EEG data can accurately distinguish between multiple dementia-related diseases15. Furthermore, we demonstrated that deep learning models trained on the clinical EEG data of patients with dementia at one institute could accurately identify patients with dementia at an early MCI level, as well as those from other facilities16.

These results suggest that EEG can serve as an auxiliary technology for the differential diagnosis of dementia. However, conventional multi-channel clinical EEG systems have several disadvantages: they are expensive, large, and heavy (weighing 100 kg); require specialized medical personnel; and require accurate placement of electrodes and paste on the scalp, which can be physically burdensome to participants. Additionally, participants must move to the hospital’s examination room for EEG measurement, which involves logistical efforts and preparation time for the technician, posing barriers to screening in a large patient population.

The broader adoption of portable EEG devices is expected to address the limitations of clinical EEGs. As previously discussed, recent studies have advanced the applications of deep learning for dementia classification using multi-channel EEG11,17,18. A recent review emphasized quantitative EEG as a promising noninvasive biomarker for dementia disorders19. Although our previous work demonstrated the feasibility of portable EEG with machine learning20,21, the use of transformer-based models with portable systems remains underexplored. Our earlier research revealed that data obtained from portable EEG devices and analyzed using deep learning techniques could effectively differentiate between patients with Alzheimer’s disease (AD) and healthy volunteers, albeit with a relatively small sample size22. Building on these findings, this study aimed to develop a transformer-based model using a larger dataset to facilitate dementia diagnosis and classify dementia severity. Given the global increase in the number of patients with dementia, this approach could serve as a noninvasive and accessible screening tool for the early identification and classification of dementia-related conditions, potentially offering significant clinical benefits.

The specific objectives of this study were as follows: (1) to evaluate whether a transformer-based deep learning model applied to portable EEG data could accurately distinguish healthy volunteers from patients with dementia-related conditions, and (2) to assess the model’s performance in differentiating healthy volunteers from patients stratified by dementia severity and clinical diagnosis.

Methods and materials

Study population

Portable EEG data were obtained from 294 participants (122 healthy volunteers (HVs) and 172 patients) at the Osaka University Hospital.

The HVs were community-dwelling older adults and selected based on the following inclusion criteria: (1) no history of neurological or psychiatric diseases, (2) no history of severe head injury or alcohol/drug abuse, and (3) no impairment of daily living or global cognitive impairment (Mini-Mental State Examination (MMSE)23 score ≥ 27), as in our previous study15.

All patients underwent baseline assessments, including demographic, cognitive, and neuropsychiatric assessments; brain structure assessments using MRI or computed tomography; and laboratory measurements (e.g., blood cell count, blood chemistry measurements, thyroid hormone levels, vitamin B1, B12, and folic acid). The recruitment period was from April 2021 to February 2024. The following examinations were optionally performed according to the attending physician’s judgement: SPECT, CSF markers, MIBG myocardial scintigraphy, DAT uptake in the basal ganglia, and PSG. Based on these examinations, the patients were evaluated through expert conferences to determine their clinical diagnoses based on international criteria, and subsequent treatment plans. As in our previous study15, this study included three dementia-related diseases: AD, Lewy body disease (LBD), and idiopathic normal-pressure hydrocephalus (iNPH). The patient details are described below.

Patients with AD were diagnosed according to international criteria24,25, those with LBD based on standard diagnostic consensus criteria8,26, and those with iNPH met standard criteria27. The diagnoses of LBD and iNPH were prioritized over those of AD in patients considered to have LBD or iNPH with comorbid AD24. In addition, as a consequence of the expert conference, patients in whom a specific neurodegenerative or dementia-related disease could not be identified as the underlying pathology were included as “non-specific.”

Regarding cognitive function, this study enrolled patients with MCI and dementia. Dementia severity was assessed based on the clinical dementia rating (CDR)28. In subsequent analyses, we defined MCI as a CDR of 0.5, following a previous study29, and mild and moderate dementia as CDRs of 1 and 2, respectively28.

Under these conditions, the final analysis included 119 HVs and 114 patients. When categorized by severity, the 114 patients comprised 45 with MCI, 48 with mild dementia, and 21 with moderate dementia; when categorized by clinical diagnosis, they comprised 53 with AD, 32 with LBD, 22 with iNPH, and 7 with non-specific MCI. The demographic and clinical information of the HVs and patients is summarized by severity in Table 1 and by clinical diagnosis in Table 2.

Ethics

This study was conducted in accordance with the principles of the Declaration of Helsinki, approved by the Ethics Review Committee of Osaka University Hospital, and registered in the UMIN Clinical Trial Registry (UMIN 000042903). Before enrollment, each participant received both written and verbal explanations of the study objectives, procedures, potential risks and benefits, and the measures for data protection and privacy. Written informed consent was obtained for participation, and the participants were informed that their participation was voluntary and that they could withdraw their consent at any point without any impact on their care.

A patch-type portable EEG sensor

EEG measurements were performed using a portable, patch-type EEG device, HARU-1 (Supplementary Fig. 1 (S1); PGV Inc., Tokyo, Japan). The HARU-1 has received medical approval from Japan’s Pharmaceuticals and Medical Devices Agency (PMDA) and has been evaluated using the same standards as traditional clinical EEGs (Certification Number: 302AFBZX00079000, class II). The EEG signals were measured using three channels, ChZ (center), ChR (right), and ChL (left), at a sampling frequency of 250 Hz. The specifications of the wireless sensing device and the electrode sheet are listed in Supplementary Tables 1 (S2) and 2 (S3), respectively.

The device was lightweight, weighing only 27 g, and had a curved shape designed to fit the user’s forehead comfortably. Its Li-ion battery, with a capacity of 200 mAh, was chargeable via a micro-USB connector and lasted for approximately 12 h in recording mode. The wireless communication interface was based on the Bluetooth Low Energy (BLE) protocol, which provides easy device control. The HARU-1 device boasted a high voltage resolution of up to 24 bits (22 nV/LSB) and low input-referred noise of 1 µVpp.

The disposable electrode sheets (Notification Number: 13B2X10421000001, class I) of the device had a thickness of < 50 μm, stretchability of up to 200%, and a moisture permeability of 2700 g/m²/day. These sheets were manufactured using a screen-printing process with a biocompatible gel on an elastic base and a silver-based material. The biocompatibility of the conductive and nonconductive gels used in these electrode sheets was assessed in accordance with the ISO 10993 standards for skin sensitization, irritation, and in vitro cytotoxicity.

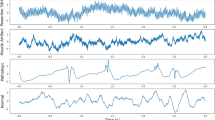

EEG preprocessing

The analysis used resting-state EEG data recorded with the eyes closed. As part of the data preprocessing, a 0.5–95 Hz band-pass filter was applied to capture a broad range of clinically relevant EEG frequencies, from the delta to gamma bands, while reducing slow drifts and high-frequency noise. Given the variations in local power supply frequencies, notch filters at 50 and 60 Hz were included to attenuate power line interference. The filtered EEG data were subjected to a short-time Fourier transform (STFT) to analyze the frequency content over time30. The STFT was computed using the following parameters: Hamming window, segment length (\(\:{n}_{perseg}\)) of 8 s (2000 samples), overlap (\(\:{n}_{overlap}\)) of 7 s (1750 samples), and FFT length (\(\:{n}_{fft}\)) of 2048 points.

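The STFT was defined as follows:

\[\:STFT\left\{x\left(t\right)\right\}\left(\tau\:,f\right)={\int\:}_{-\infty\:}^{\infty\:}x\left(t\right)\,w\left(t-\tau\:\right)\,{e}^{-j2\pi\:ft}\,dt\]

where \(\:x\left(t\right)\) represents the signal, \(\:w\left(t\right)\) is the Hamming window function, \(\:\tau\:\) is the time shift of the window, \(\:t\) is the time, and \(\:f\) is the frequency. The Hamming window helps minimize spectral leakage.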

In this study, the segment length was set to 8 s, allowing for a detailed frequency analysis while maintaining adequate time resolution. An overlap of 7 s ensured a high degree of temporal continuity between the segments, further enhancing the resolution of transient events in the EEG data. An FFT length of 2048 points provided a high-resolution frequency spectrum, facilitating the detection of subtle changes in EEG signals.

After applying the STFT, the axes were rearranged and the absolute values of the amplitude spectra were obtained. Because the frequency features are given by \(\:{n}_{fft}/2\) +1, this results in 1025 frequency bins. Finally, the 2-min EEG data for each participant were transformed into frequency features with dimensions of 108 × 1025 × 3, where 108 is the number of 8-s epochs, 1025 is the number of frequency bins, and 3 is the number of channels.
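The preprocessing and STFT steps described above can be sketched with SciPy as follows. This is a minimal illustration and not the authors' code: the filter order, the notch quality factor, the zero-phase filtering, and the edge handling (`boundary=None`, `padded=False`) are assumptions, and the input is synthetic noise standing in for a recording. Only the sampling rate, pass band, notch frequencies, window type, segment length, overlap, and FFT length come from the text.

```python
import numpy as np
from scipy import signal

FS = 250          # sampling frequency in Hz
N_PERSEG = 2000   # 8-s segment
N_OVERLAP = 1750  # 7-s overlap (1-s hop)
N_FFT = 2048      # FFT length


def preprocess(eeg):
    """Filter EEG of shape (channels, samples) along the time axis."""
    # Assumed: 4th-order zero-phase Butterworth band-pass, 0.5-95 Hz.
    sos = signal.butter(4, [0.5, 95.0], btype="bandpass", fs=FS, output="sos")
    out = signal.sosfiltfilt(sos, eeg, axis=-1)
    # Assumed: IIR notch filters with quality factor 30 at 50 and 60 Hz.
    for f0 in (50.0, 60.0):
        b, a = signal.iirnotch(f0, 30.0, fs=FS)
        out = signal.filtfilt(b, a, out, axis=-1)
    return out


def stft_features(eeg):
    """Return amplitude spectra of shape (segments, frequency bins, channels)."""
    _, _, zxx = signal.stft(
        eeg, fs=FS, window="hamming", nperseg=N_PERSEG,
        noverlap=N_OVERLAP, nfft=N_FFT, boundary=None, padded=False,
    )
    # zxx has shape (channels, frequency bins, segments); reorder the axes.
    return np.abs(zxx).transpose(2, 1, 0)


rng = np.random.default_rng(0)
synthetic_eeg = rng.standard_normal((3, 120 * FS))  # placeholder for 2 min of 3-channel EEG
features = stft_features(preprocess(synthetic_eeg))
print(features.shape)
```

The second axis has \(\:{n}_{fft}/2\) + 1 = 1025 frequency bins, as stated above. The number of segments depends on the recording length and on the edge handling, so this sketch need not reproduce the 108 epochs reported in the text.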

Data splitting

First, the dataset was divided into cross-validation and holdout datasets at a 0.9 to 0.1 ratio. As a result, the holdout dataset included 12 HVs and 12 patients, whereas the cross-validation dataset included 107 HVs and 102 patients. Within the cross-validation dataset, the data were further split into training and validation sets at a 0.9 to 0.1 ratio. A 10-fold cross-validation method was employed, using stratified k-fold splitting31 to ensure that the distribution of diseases and CDR was consistent across all folds, thus minimizing bias.
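A participant-level split of this kind could be sketched with scikit-learn as shown below. The stratification label used here (severity group only), the random seeds, and the variable names are illustrative assumptions; the study stratified on both disease and CDR, and the exact procedure is not given in the text.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, train_test_split

# Hypothetical participant-level strata: 0 = HV, 1 = MCI, 2 = mild, 3 = moderate.
strata = np.repeat([0, 1, 2, 3], [119, 45, 48, 21])
participant_ids = np.arange(strata.size)

# Hold out 10% of the participants, preserving the group proportions.
cv_ids, holdout_ids = train_test_split(
    participant_ids, test_size=0.1, stratify=strata, random_state=0
)

# Ten stratified folds over the remaining participants.
skf = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
folds = [
    (cv_ids[train_idx], cv_ids[val_idx])
    for train_idx, val_idx in skf.split(cv_ids, strata[cv_ids])
]

for fold_no, (train_ids, val_ids) in enumerate(folds, start=1):
    print(fold_no, len(train_ids), len(val_ids))
```

Splitting by participant rather than by epoch keeps all epochs of one person in the same partition, which avoids leakage between the training and validation sets.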

Model architecture

A customized transformer-encoder-based model was used for the classification task; its architecture is illustrated in Fig. 1. Several modifications were made to the standard transformer encoder32 to facilitate the extraction of features from the 3-channel EEG data, as follows:

Model Architecture. This figure illustrates the architecture of the EEG classification model built using a customized transformer encoder. (a) Overview of the classification pipeline. Resting-state EEG data recorded with the eyes closed were preprocessed by applying bandpass and notch filters to remove low-frequency drifts and power line noise. Short-time Fourier transform (STFT) was subsequently applied to extract the time-frequency features. The resulting spectral features were input into the model, which outputted the class probabilities corresponding to the cognitive status (i.e., healthy or patients). (b) The internal structure of the transformer encoder. The model employs three parallel transformer encoder blocks, each consisting of a multi-head attention mechanism followed by a convolutional feedforward network using 1D convolutional layers. Dropout was applied at multiple stages to prevent overfitting, and layer normalization was applied to stabilize the training. ‘N’ denotes the batch size.

First, three parallel transformer encoder blocks were used instead of a single transformer encoder. This parallelization allows the model to capture diverse features from the input data, thereby enhancing its robustness and accuracy. In addition, the traditional feedforward network was replaced with a convolutional feedforward network using Conv1D layers33. This change enhances the ability of the model to capture local dependencies in the data, making it particularly useful for learning inter-channel relationships in time-series data. Furthermore, to prevent overfitting, enhanced dropout regularization34 was applied at multiple stages within each transformer encoder block. This improved the generalization performance of the model for unseen data. Finally, as in the conventional architecture, layer normalization was applied after both the multi-head attention mechanism and the feedforward network. This step is crucial for stabilizing the training process and ensuring faster convergence. Using these customizations, the model was effectively adapted to extract features from 3-channel EEG data.
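The modifications listed above can be illustrated with a small Keras sketch. Everything specific here is an assumption made for illustration: the layer sizes, number of heads, dropout rates, the choice of frequency bins as the sequence axis, the way the three branches are merged, and the function names `encoder_block` and `build_model`. It does not reproduce the authors' model or its parameter count.

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras import layers


def encoder_block(x, num_heads=2, key_dim=8, ff_dim=16, dropout=0.2):
    """One encoder block: self-attention, then a Conv1D feedforward network."""
    attn = layers.MultiHeadAttention(
        num_heads=num_heads, key_dim=key_dim, dropout=dropout
    )(x, x)
    attn = layers.Dropout(dropout)(attn)
    x = layers.LayerNormalization(epsilon=1e-6)(layers.Add()([x, attn]))

    # Convolutional feedforward network in place of the usual dense layers.
    ff = layers.Conv1D(ff_dim, kernel_size=1, activation="relu")(x)
    ff = layers.Dropout(dropout)(ff)
    ff = layers.Conv1D(x.shape[-1], kernel_size=1)(ff)
    return layers.LayerNormalization(epsilon=1e-6)(layers.Add()([x, ff]))


def build_model(n_freq=1025, n_channels=3, n_blocks=3):
    """Three parallel encoder blocks applied to one epoch of STFT features."""
    inputs = layers.Input(shape=(n_freq, n_channels))
    branches = [encoder_block(inputs) for _ in range(n_blocks)]
    x = layers.Concatenate()(branches)       # assumed merge strategy
    x = layers.GlobalAveragePooling1D()(x)
    x = layers.Dropout(0.3)(x)
    outputs = layers.Dense(2, activation="softmax")(x)  # healthy vs. patient
    return tf.keras.Model(inputs, outputs)


model = build_model()
dummy_batch = np.zeros((2, 1025, 3), dtype="float32")
probs = model(dummy_batch).numpy()
print(probs.shape)
```

Each branch receives the same input, so the branches can only learn different features through their independent random initialization and dropout masks.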

Model training

We conducted a 10-fold cross-validation, resulting in the creation of 10 models35. In each fold, 90% of the dataset was used for training, and the remaining 10% was used for validation. An Adam36 optimizer with a learning rate of 0.0001 was used in each fold. To prevent overfitting, an early stopping mechanism was introduced. Specifically, if no improvement in the validation metrics was observed for 50 consecutive epochs, training was halted and the best weights observed during training were restored37.

In each fold, 108 epochs of features were generated per participant. The epoch-wise features of the entire training dataset were concatenated, randomly shuffled to avoid order dependency during training, and fed into the model using a batch size of 32. This approach ensured the integrity and reliability of the data. To maintain consistency, the data were not shuffled during the calculation of the validation metrics.

The transformer model comprises 199,995 trainable parameters. Dropout regularization was applied after each encoder block, and early stopping with a patience of 50 epochs was applied to prevent overfitting. Weight decay was additionally applied through the optimizer. Furthermore, data augmentation using the MixUp method (α = 0.6, probability = 0.8)38 was applied during training.
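As an illustration of the MixUp augmentation mentioned above, the following NumPy sketch mixes a batch with a shuffled copy of itself. It assumes that "probability = 0.8" means the chance that a given batch is mixed, that one mixing coefficient is drawn per batch from a Beta(α, α) distribution, and that labels are one-hot encoded; none of these details is stated in the text, and `mixup_batch` is a hypothetical helper name.

```python
import numpy as np


def mixup_batch(x, y, alpha=0.6, prob=0.8, rng=None):
    """Return a MixUp-augmented copy of (x, y), or the inputs unchanged.

    x: features with the batch on axis 0; y: one-hot labels of shape (batch, classes).
    """
    rng = np.random.default_rng() if rng is None else rng
    if rng.random() >= prob:
        return x, y                      # skip augmentation for this batch
    lam = rng.beta(alpha, alpha)         # mixing coefficient in (0, 1)
    perm = rng.permutation(len(x))       # partner sample for every item
    x_mixed = lam * x + (1.0 - lam) * x[perm]
    y_mixed = lam * y + (1.0 - lam) * y[perm]
    return x_mixed, y_mixed


rng = np.random.default_rng(0)
x_batch = rng.standard_normal((32, 1025, 3))
y_batch = np.eye(2)[rng.integers(0, 2, size=32)]
x_aug, y_aug = mixup_batch(x_batch, y_batch, rng=rng)
print(x_aug.shape, y_aug.shape)
```

Because the labels are mixed with the same coefficient as the features, each row of the augmented label matrix still sums to 1.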

The main libraries and their versions used in the training process were numpy39 version 1.24.3, scipy40 version 1.12.0, and TensorFlow41 version 2.13.0.

The performance of the model was comprehensively evaluated using this setup, and its robustness and reliability were confirmed.

Model inference

Model outputs were generated per epoch, and the epoch-wise results were averaged for each participant to obtain participant-level results. In the evaluation of the 10-fold cross-validation dataset, the results for each participant in the validation dataset of each fold were aggregated to assess the overall performance. An ensemble method was adopted to evaluate the holdout dataset by averaging the outputs of the ten models trained through cross-validation. Specifically, we averaged the epochwise results for each participant and further averaged the output results of each of the 10-fold models to obtain the results for the holdout participants.
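The two averaging steps described above can be sketched in NumPy as follows. The function names and the toy probabilities are hypothetical; the sketch assumes that each model emits one patient probability per epoch and that a participant identifier is available for every epoch.

```python
import numpy as np


def participant_scores(epoch_probs, participant_ids):
    """Average epoch-level patient probabilities within each participant."""
    epoch_probs = np.asarray(epoch_probs, dtype=float)
    participant_ids = np.asarray(participant_ids)
    ids = np.unique(participant_ids)
    means = np.array([epoch_probs[participant_ids == pid].mean() for pid in ids])
    return ids, means


def ensemble_scores(per_model_epoch_probs, participant_ids):
    """Average the participant-level scores of several fold models."""
    ids = np.unique(participant_ids)
    per_model = [
        participant_scores(probs, participant_ids)[1]
        for probs in per_model_epoch_probs
    ]
    return ids, np.mean(per_model, axis=0)


# Toy example: two participants with two epochs each, two fold models.
epoch_owner = np.array([0, 0, 1, 1])
model_a = [0.2, 0.4, 0.8, 0.6]
model_b = [0.4, 0.6, 0.6, 0.8]
ids, holdout_scores = ensemble_scores([model_a, model_b], epoch_owner)
print(ids, holdout_scores)
```

In the cross-validation setting only `participant_scores` is needed, because each validation participant is scored by a single fold model; the ensemble step applies to the holdout participants.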

Evaluation metrics

For the binary classification task of distinguishing between the HVs and patients, we employed several evaluation metrics to comprehensively assess the performance of our model. The selected metrics included the sensitivity, specificity, balanced accuracy (bACC), and area under the receiver operating characteristic (ROC) curve (AUC)42,43. bACC was calculated as:

\[\:bACC=\frac{sensitivity+specificity}{2}\]

The bACC is the mean of the sensitivity and specificity, providing a single metric that considers both types of classification errors. This is particularly useful for imbalanced datasets44.

Using these evaluation metrics, we comprehensively assessed the ability of our model to distinguish between HVs and patients. Each metric provides valuable insights into the different aspects of model performance, contributing to a robust evaluation framework.
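These metrics can be computed from participant-level scores as in the sketch below. The decision threshold of 0.5 and the helper name `binary_metrics` are assumptions; the AUC is obtained from the rank-sum (Mann-Whitney) identity, which is equivalent to the area under the ROC curve.

```python
import numpy as np
from scipy.stats import rankdata


def binary_metrics(y_true, scores, threshold=0.5):
    """Sensitivity, specificity, balanced accuracy, and AUC for binary labels.

    y_true: 1 for patients, 0 for healthy volunteers; scores: patient probability.
    """
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    pred = scores >= threshold

    sensitivity = np.mean(pred[y_true])        # true-positive rate
    specificity = np.mean(~pred[~y_true])      # true-negative rate
    bacc = (sensitivity + specificity) / 2.0

    # AUC via the rank-sum identity; ties receive average ranks.
    ranks = rankdata(scores)
    n_pos, n_neg = y_true.sum(), (~y_true).sum()
    auc = (ranks[y_true].sum() - n_pos * (n_pos + 1) / 2.0) / (n_pos * n_neg)

    return {
        "sensitivity": float(sensitivity),
        "specificity": float(specificity),
        "bacc": float(bacc),
        "auc": float(auc),
    }


labels = [0, 0, 0, 1, 1, 1]
scores = [0.1, 0.4, 0.6, 0.3, 0.7, 0.9]
metrics = binary_metrics(labels, scores)
print(metrics)
```

In this toy example, two of three patients and two of three healthy volunteers are classified correctly, so the sensitivity, specificity, and bACC all equal 2/3, and 7 of the 9 patient-volunteer pairs are ranked correctly, giving an AUC of 7/9.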

Statistical analyses

To compare the ages of the HVs and patients, a one-way analysis of variance (ANOVA) was conducted across all groups. Post-hoc pairwise comparisons were subsequently performed using t-tests with the Bonferroni correction. Sex distribution was assessed using Fisher’s exact test. In a post-hoc power analysis, assuming a two-tailed test with an effect size of 0.5, the power was calculated to be 96.8% for the sample sizes of HVs and patients. All tests were two-tailed, and the significance level was set at p < 0.05. Statistical analyses were conducted using Python (version 3.11.9) and the SciPy library (version 1.14.1).
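The tests named above map onto SciPy roughly as in the following sketch. The ages and the sex counts are synthetic placeholders and not study data. The power calculation assumes a two-sample t-test with Cohen's d = 0.5 and the final group sizes (119 and 114); the text does not state which test the power refers to, so this is only one plausible reading. Fisher's exact test is shown for a 2 × 2 table only.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# Synthetic ages (placeholders) for the HV group and three severity groups.
ages = {
    "HV": rng.normal(72, 6, 119),
    "MCI": rng.normal(77, 6, 45),
    "mild": rng.normal(78, 6, 48),
    "moderate": rng.normal(78, 6, 21),
}

# One-way ANOVA across all groups.
f_stat, p_anova = stats.f_oneway(*ages.values())

# Pairwise t-tests with Bonferroni correction (p multiplied by the number of pairs).
names = list(ages)
pairs = [(a, b) for i, a in enumerate(names) for b in names[i + 1:]]
p_bonferroni = {
    (a, b): min(1.0, stats.ttest_ind(ages[a], ages[b]).pvalue * len(pairs))
    for a, b in pairs
}

# Fisher's exact test on a made-up 2 x 2 table (rows: groups, columns: male/female).
sex_table = np.array([[50, 69], [52, 62]])
_, p_sex = stats.fisher_exact(sex_table, alternative="two-sided")

# Post-hoc power of a two-tailed two-sample t-test, d = 0.5, alpha = 0.05.
n_hv, n_pt, d, alpha = 119, 114, 0.5, 0.05
dof = n_hv + n_pt - 2
ncp = d * np.sqrt(n_hv * n_pt / (n_hv + n_pt))   # noncentrality parameter
t_crit = stats.t.ppf(1 - alpha / 2, dof)
power = stats.nct.sf(t_crit, dof, ncp) + stats.nct.cdf(-t_crit, dof, ncp)

print(p_anova, p_sex, round(power, 3))
```

Under these assumptions, the computed power can be compared directly with the 96.8% reported in the text.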

Results

Demographic and clinical data analysis

When comparing the HVs and patients categorized by severity, a significant difference (p = 4.19 × \(\:{10}^{-10}\)) in age was observed. Post hoc analysis revealed significant differences between the HVs and each patient group (HVs vs. MCI: p = 2.74 × \(\:{10}^{-5}\), HVs vs. mild dementia: p = 2.17 × \(\:{10}^{-7}\), HVs vs. moderate dementia: p = 4.00 × \(\:{10}^{-4}\)), while no significant differences were detected among the patient groups themselves. When comparing the HVs and patients categorized by clinical diagnosis, a significant difference (p = 1.30 × \(\:{10}^{-11}\)) in age was observed. Post hoc analysis revealed significant differences in age between the HVs and each patient group (HVs vs. AD: p = 6.51 × \(\:{10}^{-4}\), HVs vs. LBD: p = 2.11 × \(\:{10}^{-9}\), HVs vs. iNPH: p = 1.79 × \(\:{10}^{-8}\)); however, no significant difference was observed between the HVs and non-specific MCI.

In the comparison of the sex distribution (male-to-female ratio) between the HVs and patient groups according to the severity and clinical diagnosis, no significant differences were found (p = 0.745 and p = 0.677, respectively).

Differentiating between HVs and patients using a deep-learning model

The model achieved an AUC of 0.872 and a bACC of 80.8% in distinguishing the HVs from the patients. Comparisons between the HVs and patients across severity levels resulted in an AUC of 0.862 and a bACC of 79.1% (HVs vs. MCI), an AUC of 0.888 and a bACC of 81.1% (HVs vs. mild dementia), and an AUC of 0.858 and a bACC of 83.7% (HVs vs. moderate dementia) (Table 3). These results are summarized in the ROC curves for the four classifications (Fig. 2).

Receiver operating characteristic curves for classifications based on severity and clinical diagnosis. Left: Receiver operating characteristic (ROC) curves for classifying healthy volunteers versus patients stratified by dementia severity (MCI, mild dementia, and moderate dementia). Right: ROC curves for classifying healthy volunteers versus patients grouped by clinical diagnosis. The curves illustrate the model performance across subgroups, with consistent discrimination ability observed in both stratification approaches. AD, Patients with Alzheimer’s disease; AUC, Area under the receiver operating characteristic curve; HV, Healthy volunteers; iNPH, Patients with idiopathic normal pressure hydrocephalus; LBD, Patients with Lewy body disease; MCI, Patients with mild cognitive impairment.

Additionally, comparisons between HVs and patients classified by clinical diagnosis yielded an AUC of 0.866 and bACC of 77.8% (HVs vs. AD), an AUC of 0.898 and bACC of 86.4% (HVs vs. LBD), an AUC of 0.867 and bACC of 81.6% (HVs vs. iNPH), and an AUC of 0.812 and bACC of 74.9% (HVs vs. non-specific MCI) (Table 4). These results are summarized in the ROC curves for the four classifications (Fig. 2).

The holdout analysis demonstrated an AUC of 0.882 and a bACC of 87.5% between the HVs and patients (Table 5). The sensitivities in the holdout set based on clinical diagnoses were 83.3% for patients with AD, 100.0% for patients with LBD, 50.0% for patients with iNPH, and 0.0% for patients with non-specific MCI; the latter result was based on only one participant (Table 6).

Age-adjusted sub-analyses

We further conducted a sub-analysis to assess the impact of age on the differentiation process. Given that the number of HVs was greater than that of each subgroup (MCI, mild dementia, and moderate dementia), we adjusted the number of HVs to match that of each subgroup. The adjustment method involved the random selection of the same number of HVs as those in each subgroup. This random selection was repeated until no significant age difference was observed between each subgroup and selected HVs. The threshold for no significant difference was defined as p ≥ 0.05 based on independent t-tests comparing the mean ages between each subgroup and the sampled HVs. The detailed subgroup sizes, age distributions, and p-values are presented in Supplementary Table 3 (S4).
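The repeated random selection described above can be sketched as follows. The ages are synthetic, and the cap on the number of attempts (`max_tries`) and the helper name `age_matched_sample` are additions made for this illustration; the text specifies only the resampling rule and the p ≥ 0.05 criterion from independent t-tests.

```python
import numpy as np
from scipy import stats


def age_matched_sample(hv_ages, patient_ages, alpha=0.05, max_tries=10_000, rng=None):
    """Draw as many HVs as patients until the mean ages do not differ (p >= alpha).

    Returns the indices of the selected HVs and the p-value of the accepted draw.
    """
    rng = np.random.default_rng() if rng is None else rng
    hv_ages = np.asarray(hv_ages, dtype=float)
    patient_ages = np.asarray(patient_ages, dtype=float)
    n = len(patient_ages)
    for _ in range(max_tries):
        idx = rng.choice(len(hv_ages), size=n, replace=False)
        p_value = stats.ttest_ind(hv_ages[idx], patient_ages).pvalue
        if p_value >= alpha:
            return idx, p_value
    raise RuntimeError("no age-matched subsample found within max_tries")


rng = np.random.default_rng(0)
hv_ages = rng.normal(73, 7, 119)        # synthetic HV ages
subgroup_ages = rng.normal(75, 7, 21)   # synthetic subgroup ages (e.g., 21 patients)
selected, p_value = age_matched_sample(hv_ages, subgroup_ages, rng=rng)
print(len(selected), round(p_value, 3))
```

A non-significant t-test shows only that the mean ages are not detectably different at this sample size; it does not guarantee that the age distributions match.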

Consequently, for the HVs vs. MCI, the bACC was 78.7% (79.1% before adjustment), for the HVs vs. mild dementia it was 76.7% (81.1% before adjustment), and for the HVs vs. moderate dementia it was 84.2% (83.7% before adjustment), with an average decrease in bACC of 1.4% after adjustment.

We subsequently examined the impact of age on the classification by clinical diagnosis, using the same method as that used for the analysis based on the severity of dementia. Consequently, for the HVs vs. AD the bACC was 80.8% (77.8% before adjustment), for the HVs vs. LBD it was 79.3% (86.4% before adjustment), for the HVs vs. iNPH it was 75.0% (81.6% before adjustment), and for the HVs vs. non-specific MCI it was 83.3% (74.9% before adjustment), with an average increase in bACC of 0.5% after adjustment.

Discussion

In the present study, we developed a deep-learning model that uses data from a portable EEG device to differentiate HVs from patients with dementia-related conditions. Our cross-validation analysis yielded an AUC of 0.872 and bACC of 80.8% for distinguishing HVs from patients. Severity-based comparisons produced AUCs ranging from 0.858 to 0.888 and bACCs ranging from 79.1 to 83.7%, whereas clinical diagnosis-based comparisons produced AUCs ranging from 0.812 to 0.898 and bACCs ranging from 74.9 to 86.4%. These results confirmed that a high classification accuracy was achieved in all identification tasks.

A prior study, which applied deep learning to EEG data from 19 channels45, reported an AUC of 0.86 and an accuracy of 0.79 for distinguishing HVs from patients with MCI or dementia, including multiple clinical diagnoses, as in our study. While their results are comparable with ours, our model demonstrated a slightly superior performance despite using only a portable 3-channel EEG device. This highlights the practicality of the proposed approach, which offers a competitive accuracy with fewer channels.

Additionally, our results indicated that the classification accuracy, as measured by the bACC, increased with dementia severity. This trend is consistent with the findings of Fan et al.46, who applied machine learning to 19-channel EEG data to differentiate HVs from patients with AD based on CDR scores. However, this prior study was limited to patients with AD, whereas our study included a broader range of clinical diagnoses. In the study by Fan et al.46, the accuracy ranged from 42 to 56% for HVs vs. MCI, 59–70% for HVs vs. mild dementia, and 58–79% for HVs vs. moderate dementia. Notably, in our study, even when distinguishing MCI, where Fan et al.46 reported lower accuracy, we achieved higher accuracy, suggesting that our model is better at detecting early-stage dementia.

When comparing our findings with those of other studies that focused on MCI vs. HV classification, deep learning models applied to 19-channel EEG data have shown accuracies ranging from 75%17 to 92%11,14. Although the accuracy of our model (79%) exceeded that reported by Kim et al.17, it remained lower than that of higher-performing models11,14. However, our model uses only three frontal channels, which offers significant practical advantages in terms of accessibility and feasibility for widespread use.

In the results based on clinical diagnosis, previous studies employing machine learning and deep learning algorithms for EEG-based AD classification have reported varying degrees of success, with accuracies ranging from 80 to 95%18,47,48. However, these studies were limited by relatively small sample sizes, which may have constrained the generalizability and robustness of their results. A machine learning study that enrolled more than 100 participants and compared AD with HVs13 achieved an AUC of 0.76; our model exceeded this value (AUC of 0.866 for HVs vs. AD) despite using fewer EEG channels. Compared with Fan et al.46, who reported an accuracy in the high 60% range for distinguishing HVs from patients with AD, even when averaging the best results across multiple models for different disease severity levels, our study achieved an approximately 10% higher accuracy in this classification task. However, compared with the studies by Morabito et al.14 and Ieracitano et al.11, which reported accuracies of 85% and 91%, respectively, our model lagged behind in terms of accuracy. This discrepancy may be attributed to the reduced information available from the portable 3-channel EEG used in our study, which could limit further improvements in classification accuracy.

Limited research has been conducted on the application of machine-learning or deep-learning techniques to EEG data from patients with LBD. One exception was the study by Dauwan et al.12, who applied machine learning to clinical EEG data and achieved a discrimination rate of approximately 90% between HVs and patients with LBD. Our findings are consistent with this, as the highest classification accuracy in our study was observed in distinguishing LBD, likely because of the presence of distinct neurophysiological disturbances commonly associated with LBD, as outlined in the international clinical diagnostic criteria8. These disturbances can be effectively captured by our deep learning model, highlighting its ability to identify characteristic patterns specific to LBD.

To our knowledge, no prior studies, aside from our own, have applied machine learning or deep learning to EEG data from patients with idiopathic normal pressure hydrocephalus (iNPH). Moreover, our previous work was the only study that attempted to distinguish between AD, LBD, and iNPH using EEG data. Compared with our earlier studies15,16, the classification accuracy in this study was 4–15% lower across clinical diagnoses, likely because of the limited number of EEG channels used. This suggests that although our model has made advancements, the reduction in available data when using a portable EEG device presents challenges in achieving the same level of accuracy as models using 19-channel clinical EEG systems. Despite this reduction in accuracy, the model nevertheless demonstrated a strong discrimination performance relative to prior studies. As discussed above, these findings further emphasize that our model achieves competitive performance even when focusing on early-stage dementia classification, despite relying on a lightweight, portable 3-channel EEG system. This demonstrates the potential for broader clinical implementation without requiring complex or large-scale EEG equipment and highlights that comparable accuracy can be obtained relative to prior studies using multi-channel clinical EEG.

To the best of our knowledge, this study is the first to apply a customized transformer-based deep learning model to portable EEG data for dementia classification. Unlike prior studies, which primarily used convolutional neural networks or traditional machine learning with multi-channel EEG systems, our approach integrates multi-head self-attention mechanisms and parallel encoder structures optimized for limited-channel portable recordings. In addition, we evaluated classification performance not only for differentiating HVs from patients but also across multiple dementia severities and diagnostic categories, demonstrating the broad applicability of the model.

This study has several limitations. First, we extracted specific groups with defined clinical diagnoses from consecutively measured portable EEG cases collected during the study period. Consequently, both the cognitive function levels and the sample sizes varied across clinical diagnoses, and the results should be interpreted with caution.

Second, there was an age gap between the HV and patient groups. In the analysis adjusted for age differences, the bACC for distinguishing HVs from patients at each dementia severity level and with each clinical diagnosis fluctuated by no more than 2%, suggesting that factors other than age contributed to the observed classification performance. Nevertheless, residual confounding owing to age differences may have been present. Although a subsampling approach was implemented to match the subgroup sizes and ensure that there were no statistically significant age differences, random sampling alone may not fully eliminate the influence of age-related EEG variability. Future studies should therefore consider recruiting age-matched cohorts or applying statistical modeling techniques that incorporate age as a covariate to further control for this confounding factor.

Third, the EEG recordings were obtained under different procedural contexts for patients and controls, which may have introduced systematic differences unrelated to disease status.

Fourth, regarding model evaluation, although a combination of 10-fold cross-validation and a holdout set was applied, this strategy may not fully prevent optimistic bias owing to potentially favorable data splits. Nested cross-validation would offer a more robust estimate of model generalizability but was not implemented here because of computational constraints. Future research should consider incorporating nested cross-validation and evaluating the model on independent external datasets to further validate its performance.

Fifth, we employed a transformer-based architecture with approximately 200,000 trainable parameters (199,995). While dividing EEG recordings into multiple epochs increased the number of training instances, these segments were not fully independent, so the model may have been prone to overfitting relative to the true sample size at the participant level. Although dropout, early stopping, weight decay, and MixUp-based data augmentation were applied to reduce this risk, additional strategies, such as further data augmentation or simpler model architectures, should be incorporated in future research to improve generalizability.

Sixth, the complexity of the transformer-based model hinders a straightforward interpretation of the feature contributions. Although attention mechanisms can sometimes provide partial insights, the combination of parallel encoders and convolutional layers complicates the direct mapping to specific EEG features. Future studies should apply dedicated explainability approaches, such as SHapley Additive exPlanations (SHAP) or saliency maps, to improve transparency and support clinical trust in the model outputs.

Finally, the hardware used in this study has inherent limitations. While a portable 3-channel EEG device offers significant advantages in ease of use and accessibility, it provides lower spatial resolution than conventional multi-channel EEG systems, which may have reduced the ability to capture detailed spatial patterns of neural activity. However, this tradeoff was considered acceptable to prioritize feasibility and scalability in clinical and community settings.

Future research building on this study should integrate complementary biomarkers, such as neuroimaging and fluid-based measures, to improve the robustness of EEG-based classification. In addition, validating the model in larger and more diverse populations across multiple institutions is essential to confirm its generalizability and clinical utility. Prospective studies with external validation cohorts should be conducted to strengthen the evidence for practical implementation.

Conclusions

In this study, we developed a deep-learning model to differentiate HVs from patients with dementia-related conditions using a patch-type portable EEG device. Our model demonstrated high accuracy in distinguishing HVs from patients, as well as in classifying dementia according to severity and clinical diagnosis. With the mobility of a portable EEG device, the model holds promise for clinical use, particularly in facilitating early detection and preliminary evaluation in a variety of clinical settings.

Data availability

Data are provided within the manuscript or supplementary information files.

Code availability

The code related to the model architecture is available at the following GitHub repository: https://github.com/mhata-lab/dementia-transformer-eeg.

Change history

30 October 2025

A Correction to this paper has been published: https://doi.org/10.1038/s41598-025-25176-0

References

Alzheimer’s Disease International. World Alzheimer Report. (2023). Available at: https://www.alzint.org/resource/world-alzheimer-report-2023/

Ministry of Health, Labour and Welfare. Comprehensive Research Project on Dementia: Prevalence of Dementia and Responses to Functional Impairment in Daily Living in Urban Areas (FY 2011–2012 Comprehensive Research Report). (2013).

Japan Dementia Society. List of dementia specialists. Available at: https://square.umin.ac.jp/dementia/g1.html

Liu, J. L., Baker, L., Chen, A. Y. A. & Wang, J. J. Geographic variation in shortfalls of dementia specialists in the United States. Health Aff Sch. 2, qxae088 (2024).

Alzheimer’s Association. Alzheimer’s disease facts and figures. Alzheimers Dement. 15, 321–387 (2019).

Mittelman, M. S., Haley, W. E., Clay, O. J. & Roth, D. L. Improving caregiver well-being delays nursing home placement of patients with Alzheimer disease. Neurology 67, 1592–1599 (2006).

Taylor, J. P. et al. New evidence on the management of Lewy body dementia. Lancet Neurol. 19, 157–169 (2020).

McKeith, I. G. et al. Diagnosis and management of dementia with Lewy bodies: fourth consensus report of the DLB consortium. Neurology 89, 88–100 (2017).

Malek, N., Baker, M. R., Mann, C. & Greene, J. Electroencephalographic markers in dementia. Acta Neurol. Scand. 135, 388–393 (2017).

Nardone, R. et al. Usefulness of EEG techniques in distinguishing frontotemporal dementia from Alzheimer’s disease and other dementias. Dis. Markers 6581490 (2018).

Ieracitano, C., Mammone, N., Bramanti, A., Hussain, A. & Morabito, F. C. A convolutional neural network approach for classification of dementia stages based on 2D-spectral representation of EEG recordings. Neurocomputing 323, 96–107 (2019).

Dauwan, M. et al. Random forest to differentiate dementia with Lewy bodies from Alzheimer’s disease. Alzheimers Dement. (Amst). 4, 99–106 (2016).

Farina, F. R. et al. A comparison of resting state EEG and structural MRI for classifying Alzheimer’s disease and mild cognitive impairment. Neuroimage 215, 116795 (2020).

Morabito, F. C. et al. Deep convolutional neural networks for classification of mild cognitive impaired and Alzheimer’s disease patients from scalp EEG recordings. In 2016 IEEE 2nd International Forum on Research and Technologies for Society and Industry Leveraging a Better Tomorrow (RTSI), Bologna, Italy, 1–6 (2016).

Hata, M. et al. Precise discrimination for multiple etiologies of dementia cases based on deep learning with electroencephalography. Neuropsychobiology 82, 81–90 (2023).

Watanabe, Y. et al. A deep learning model for the detection of various dementia and MCI pathologies based on resting-state electroencephalography data: A retrospective multicentre study. Neural Netw. 171, 242–250 (2024).

Kim, M. J., Youn, Y. C. & Paik, J. Deep learning-based EEG analysis to classify normal, mild cognitive impairment, and dementia: algorithms and dataset. Neuroimage 272, 120054 (2023).

Bi, X. & Wang, H. Early Alzheimer’s disease diagnosis based on EEG spectral images using deep learning. Neural Netw. 114, 119–135 (2019).

Smailovic, U. & Jelic, V. Neurophysiological markers of Alzheimer’s disease: quantitative EEG approach. Neurol. Ther. 8 (Suppl 2), 37–55 (2019).

Hata, M. et al. Predicting postoperative delirium after cardiovascular surgeries from preoperative portable electroencephalography oscillations. Front. Psychiatry. 14, 1287607 (2023).

Hata, M. et al. Screening of Aβ and phosphorylated Tau status in the cerebrospinal fluid through machine learning analysis of portable electroencephalography data. Sci. Rep. 15, 2067 (2025).

Hata, M. et al. Utilizing portable electroencephalography to screen for pathology of Alzheimer’s disease: A methodological advancement in diagnosis of neurodegenerative diseases. Front. Psychiatry. 15, 1392158 (2024).

Folstein, M. F., Folstein, S. E. & McHugh, P. R. Mini-mental state. A practical method for grading the cognitive state of patients for the clinician. J. Psychiatr Res. 12, 189–198 (1975).

McKhann, G. M. et al. The diagnosis of dementia due to Alzheimer’s disease: recommendations from the National Institute on Aging-Alzheimer’s Association workgroups on diagnostic guidelines for Alzheimer’s disease. Alzheimers Dement. 7, 263–269 (2011).

Albert, M. S. et al. The diagnosis of mild cognitive impairment due to Alzheimer’s disease: recommendations from the National Institute on Aging-Alzheimer’s Association workgroups on diagnostic guidelines for Alzheimer’s disease. Alzheimers Dement. 7, 270–279 (2011).

McKeith, I. G. et al. Research criteria for the diagnosis of prodromal dementia with Lewy bodies. Neurology 94, 743–755 (2020).

Nakajima, M. et al. Guidelines for management of idiopathic normal pressure hydrocephalus (Third Edition): Endorsed by the Japanese Society of Normal Pressure Hydrocephalus. Neurol. Med. Chir. (Tokyo). 61, 63–97 (2021).

Hughes, C. P., Berg, L., Danziger, W. L., Coben, L. A. & Martin, R. L. A new clinical scale for the staging of dementia. Br. J. Psychiatry. 140, 566–572 (1982).

Ganguli, M. et al. Mild cognitive impairment that does not progress to dementia: A population-based study. J. Am. Geriatr. Soc. 67, 232–238 (2019).

Griffin, D. W. & Lim, J. S. Signal estimation from modified short-time Fourier transform. IEEE Trans. Acoust. Speech Signal. Process. 32, 236–243 (1984).

Pedregosa, F. et al. Scikit-learn: machine learning in Python. JMLR 12, 2825–2830 (2011). https://www.researchgate.net/publication/51969319_Scikit-learn_Machine_Learning_in_Python

Vaswani, A. et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 30 (2017).

Gehring, J., Auli, M., Grangier, D., Yarats, D. & Dauphin, Y. N. Convolutional sequence to sequence learning. arXiv:1705.03122 (2017).

Srivastava, N. et al. Dropout: A simple way to prevent neural networks from overfitting. JMLR 15, 1929–1958 (2014).

Kohavi, R. A study of cross-validation and bootstrap for accuracy estimation and model selection. IJCAI 14, 1137–1145 (1995).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv:1412.6980 (2014).

Prechelt, L. Early stopping-but when? In Neural Networks: Tricks of the Trade 55–69 (Springer, Berlin, Heidelberg, 1998).

Zhang, H., Cisse, M., Dauphin, Y. N. & Lopez-Paz, D. Mixup: Beyond empirical risk minimization. arXiv:1710.09412v2 (2018).

Harris, C. R. et al. Array programming with NumPy. Nature 585, 357–362 (2020).

Virtanen, P. et al. SciPy 1.0: fundamental algorithms for scientific computing in Python. Nat. Methods. 17, 261–272 (2020).

Abadi, M. et al. TensorFlow: A system for large-scale machine learning. In 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), 265–283 (2016).

Powers, D. M. Evaluation: from precision, recall and F-measure to ROC, informedness, markedness and correlation. JMLT 2, 37–63 (2011).

Fawcett, T. An introduction to ROC analysis. Pattern Recognit. Lett. 27, 861–874 (2006).

Brodersen, K. H., Ong, C. S., Stephan, K. E. & Buhmann, J. M. The balanced accuracy and its posterior distribution. In 20th International Conference on Pattern Recognition, Istanbul, Turkey (2010).

Kim, D. & Kim, K. Detection of early stage Alzheimer’s disease using EEG relative power with deep neural network. Annu. Int. Conf. IEEE Eng. Med. Biol. Soc. 2018, 352–355 (2018).

Fan, M., Yang, A. C., Fuh, J. L. & Chou, C. A. Topological pattern recognition of severe Alzheimer’s disease via regularized supervised learning of EEG complexity. Front. Neurosci. 12, 685 (2018).

Pritchard, W. S. et al. EEG-based, neural-net predictive classification of Alzheimer’s disease versus control subjects is augmented by non-linear EEG measures. Electroencephalogr. Clin. Neurophysiol. 91, 118–130 (1994).

Trambaiolli, L. R. et al. Improving Alzheimer’s disease diagnosis with machine learning techniques. Clin. EEG Neurosci. 42, 160–165 (2011).

Acknowledgements

We would like to thank all the study participants and supporting personnel for their help and interest in this study. In addition, we thank the staff of the Biochemistry and Neuropsychology laboratories who collected the specimens and performed the clinical tests.

Funding

This study was supported by PGV Inc., Tokyo, Japan and JSPS KAKENHI Grant Number JP25K10813.

Author information

Contributions

MH and TY were responsible for the study conceptualization and design, drafting of the manuscript, and all statistical analyses. YM, HO, AH, YN, ME, KY, HK, ST, and MI collected and interpreted the data. BN, SY, and KE conducted the model building, training, and testing. All the authors provided critical feedback on the analysis and the manuscript. All the authors have read and approved the final version of the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The original online version of this Article was revised: The original version of this Article contained errors in Table 1 and Table 2. Full information regarding the corrections made can be found in the correction for this Article.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Hata, M., Yanagisawa, T., Miyazaki, Y. et al. Accurate deep-learning model to differentiate dementia severity and diagnosis using a portable electroencephalography device. Sci Rep 15, 26304 (2025). https://doi.org/10.1038/s41598-025-12526-1
